Submitted: 18 July 2025

Posted: 21 July 2025


Abstract

Keywords:

- Pretraining: In this phase, models learn general language patterns by predicting masked or next tokens over large amounts of unlabeled text. They use objectives like masked language modeling (e.g., BERT) or causal language modeling (e.g., GPT) [10,27]. This stage is computationally demanding and often requires distributed training on GPUs or TPUs while handling billions of tokens.

- Fine-tuning: This step involves supervised training on labeled datasets designed for specific tasks such as question answering, summarization, or code generation. This improves accuracy for those tasks [15].
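The two pretraining objectives named above differ mainly in how training targets are derived from raw text. The following is a minimal sketch, not a production data pipeline: the `[MASK]` placeholder, the function names, and the toy sentence are assumptions made for illustration, and the masking step is simplified (real BERT-style masking also sometimes substitutes random tokens or leaves the selected token unchanged).

```python
import random

MASK = "[MASK]"  # placeholder token; the name is an assumption for this sketch


def causal_lm_examples(tokens):
    """Causal LM (GPT-style): every prefix is used to predict the next token."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


def masked_lm_example(tokens, mask_prob=0.15, seed=0):
    """Masked LM (BERT-style, simplified): hide random tokens, predict them back.

    Returns the corrupted sequence and a dict mapping each masked
    position to the original token the model should recover.
    """
    rng = random.Random(seed)
    corrupted, labels = list(tokens), {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            corrupted[i] = MASK
            labels[i] = tok
    return corrupted, labels


tokens = ["the", "model", "predicts", "the", "next", "token"]
pairs = causal_lm_examples(tokens)
corrupted, labels = masked_lm_example(tokens, mask_prob=0.3)

print(pairs[0])           # first (context, target) pair
print(corrupted, labels)  # masked sequence and the tokens to recover
```

In both cases the labels come from the text itself, which is why pretraining needs no human annotation, whereas fine-tuning relies on task-specific labeled pairs.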

Research Problem:

Significance:

- Provide a detailed overview of LLM architectures, training techniques, and alignment methods.

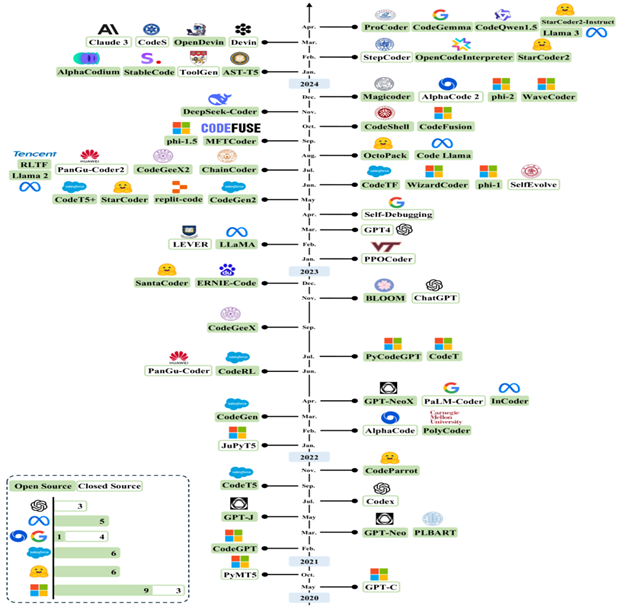

- Trace the development of LLMs across general-purpose, code-focused, and cybersecurity-oriented models.

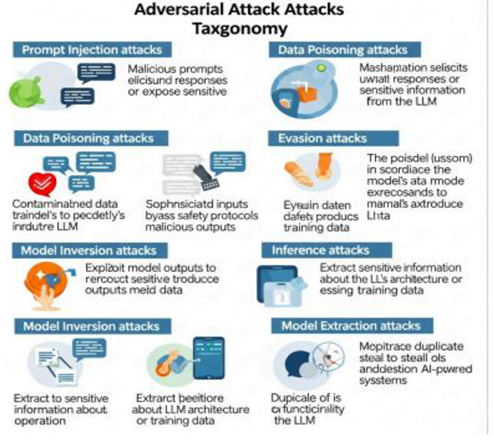

- Classify known vulnerabilities, attack vectors, and defense strategies targeting LLM systems.

- Evaluate the impact of LLMs on software engineering workflows, from requirement analysis to code deployment.

- Analyze the role of LLMs in improving cybersecurity threat detection, response, and automation.

- Discuss ethical, privacy, and operational challenges in deploying LLMs securely and responsibly.

Contributions:

II. Related Work

III. Research Outcome And Review

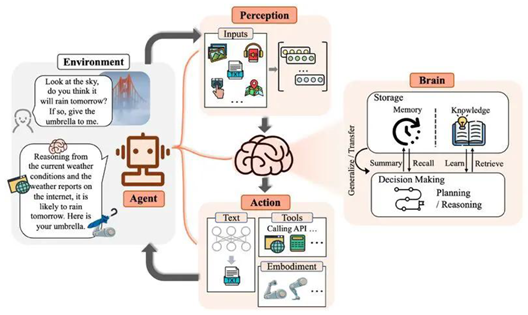

IV. The Large Language Model

A. How LLMs Work

Tokenization and Embedding in Large Language Models (LLMs)

Embedding

Adding Positional Encoding

Passing Through Transformer Layers

Contextual Representation

Next Token Prediction

Generating Output

Training (Self-Supervised Learning)


Fine-Tuning & Deployment

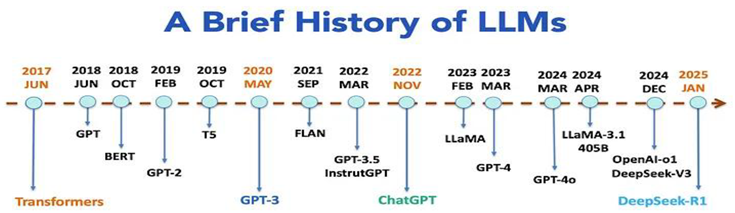

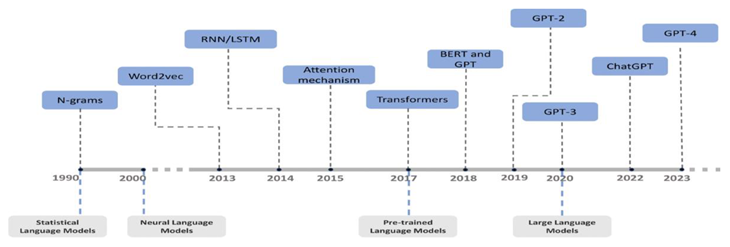

V. The Evolution of LLM

A. Early Language Models Before 2017

- Slow training times due to lack of parallelism.

- Difficulty capturing long-range dependencies, especially in very long text sequences.

- Vanishing gradient problems, making optimization harder over long time steps.
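The vanishing gradient problem listed above can be illustrated with a toy calculation. The sketch below assumes a scalar recurrence `h_t = tanh(w * h_{t-1})`, a deliberate simplification of a real recurrent network, and multiplies the per-step derivatives to obtain the gradient of the final state with respect to the initial state. The weight `0.9` and the starting value `0.5` are arbitrary illustrative choices.

```python
import math


def gradient_through_time(w, h0, steps):
    """Return |d h_T / d h_0| for the scalar recurrence h_t = tanh(w * h_{t-1})."""
    h, grad = h0, 1.0
    for _ in range(steps):
        h = math.tanh(w * h)
        # Chain rule: d tanh(w * h_prev) / d h_prev = w * (1 - h_t ** 2)
        grad *= w * (1.0 - h * h)
    return abs(grad)


grads = [gradient_through_time(w=0.9, h0=0.5, steps=n) for n in (10, 50, 100)]
for n, g in zip((10, 50, 100), grads):
    print(f"steps={n:3d}  gradient magnitude={g:.3e}")
```

Because every factor in the product is smaller than one in magnitude here, the gradient shrinks roughly geometrically with sequence length, so early tokens contribute almost nothing to the weight update.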

- C.

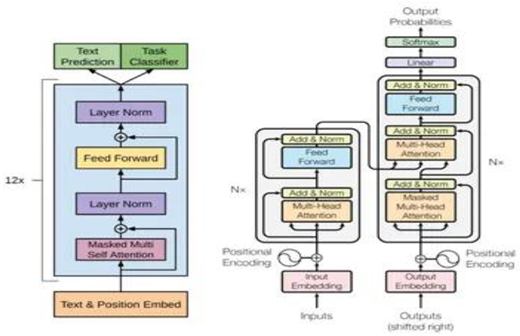

- Core Components of the Transformer

- Multi-Head Self-Attention: Instead of using a single attention mechanism, the Transformer uses multiple attention heads in parallel. This allows the model to learn different types of relationships in the data simultaneously, such as syntactic and semantic dependencies.

- Positional Encoding: Since the model lacks recurrence, it cannot inherently understand the order of tokens. To compensate, positional encodings are added to the input embeddings, allowing the model to take sequence order into account.

- Feed-Forward Networks: Each layer of the Transformer includes position-wise feed-forward neural networks that add non-linearity and increase model capacity.

- Layer Normalization and Residual Connections: These additions stabilize training and allow gradients to flow more effectively through deep networks.

- BERT (Bidirectional Encoder Representations from Transformers) - which used the encoder.

- GPT (Generative Pretrained Transformer) - which used only the decoder.

- T5 (Text-to-Text Transfer Transformer) - which used the full encoder-decoder stack.
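The components and model families listed above can be tied together in a small sketch. It uses only the Python standard library and makes several simplifying assumptions: a single attention head with no learned projections, sinusoidal positional encodings, and made-up 4-dimensional embeddings. The `causal` flag is a hypothetical parameter name; it shows the difference between encoder-style attention (every token sees the whole sequence, as in BERT) and decoder-style attention (each token sees only earlier positions, as in GPT).

```python
import math


def positional_encoding(seq_len, d_model):
    """Sinusoidal encodings: sine on even dimensions, cosine on odd ones."""
    pe = [[0.0] * d_model for _ in range(seq_len)]
    for pos in range(seq_len):
        for i in range(0, d_model, 2):
            angle = pos / (10000 ** (i / d_model))
            pe[pos][i] = math.sin(angle)
            if i + 1 < d_model:
                pe[pos][i + 1] = math.cos(angle)
    return pe


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values, causal=False):
    """Scaled dot-product attention over lists of vectors (one head)."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if causal and j > i:
                scores.append(float("-inf"))  # hide future positions
            else:
                scores.append(sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k))
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(w[j] * values[j][c] for j in range(len(values)))
                        for c in range(len(values[0]))])
    return outputs, weights


# Made-up embeddings for a three-token sequence (illustrative values only).
embeddings = [[0.1, 0.2, 0.3, 0.4], [0.5, 0.1, 0.0, 0.2], [0.3, 0.3, 0.1, 0.0]]
pe = positional_encoding(len(embeddings), 4)
x = [[e + p for e, p in zip(row, pe_row)] for row, pe_row in zip(embeddings, pe)]

_, enc_weights = attention(x, x, x, causal=False)  # encoder-style
_, dec_weights = attention(x, x, x, causal=True)   # decoder-style
print(dec_weights[0])  # the first token can only attend to itself
```

A multi-head layer would run several such attention computations in parallel on separately projected copies of the input and concatenate the results; that step is omitted here for brevity.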

E. Industry Adoption and Research Momentum

D. BERT and Bidirectional Representations (2018)

2.15 The GPT Series: Autoregressive Growth

- Autoregressive modeling can achieve strong performance in language understanding and generation.

- Transfer learning works in both directions; models trained for generation can also be used for understanding tasks.

- It showed that increasing model size and data volume leads to new behaviors, such as coherent multi-paragraph text generation, zero-shot learning, and in-context reasoning.

- OpenAI initially withheld the full model due to concerns over misuse, including disinformation, spam, and automation of fake content. This sparked one of the first major ethical debates about generative models. The model was assessed in both zero-shot and few-shot contexts, where tasks like translation, summarization, and comprehension were attempted without specific training data. GPT-2’s performance in these areas indicated the early signs of general-purpose language ability, although it still fell short compared to fine-tuned models on benchmarks.
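The zero-shot and few-shot settings mentioned above differ only in what is placed in the prompt, not in the model weights. The sketch below assembles both kinds of prompt as plain strings; the `Input:`/`Output:` layout, the function name, and the translation pairs are illustrative assumptions rather than a format prescribed by any particular model.

```python
def build_prompt(task, query, examples=()):
    """Assemble a prompt: instruction, optional worked examples, then the query."""
    lines = [task, ""]
    for source, target in examples:
        lines += [f"Input: {source}", f"Output: {target}", ""]
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)


task = "Translate English to French."

# Zero-shot: the model sees only the instruction and the query.
zero_shot = build_prompt(task, "cheese")

# Few-shot: a handful of solved examples precede the query.
few_shot = build_prompt(
    task,
    "cheese",
    examples=[("sea otter", "loutre de mer"), ("peppermint", "menthe poivrée")],
)

print(zero_shot)
print("---")
print(few_shot)
```

In either case the model is expected to continue the text after the final `Output:`, so no gradient update or task-specific training data is involved.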

2.15 GPT-3: Emergence of Few-Shot Learning

- Arithmetic and logic puzzles

- Translation and summarization

- Programming and code completion

- Question answering and essay writing

- Lack of consistent reasoning

- Susceptibility to prompt sensitivity and incorrect information

- Difficulty verifying facts or performing reliable multi-step logic

- Greater factual accuracy

- Fewer hallucinations

- Better support for multiple languages

- More stable behavior across different prompts

F. The Rise of Open-Source Language Models

- GPT-Neo and GPT-J (2021) by EleutherAI: These were some of the first serious attempts to create open GPT-3-style models. GPT-J reached 6 billion parameters and displayed impressive zero-shot capabilities.

- GPT-NeoX-20B (2022): This 20-billion-parameter model was trained with open tools and datasets. It matched or outperformed GPT-3 on certain benchmarks while staying freely accessible.

- OPT (Open Pre-trained Transformer, 2022) by Meta AI: Meta released a group of decoder-only language models with up to 175 billion parameters to allow researchers to study the behavior of large-scale LLMs. This included transparent training logs and evaluation metrics, marking a significant achievement in open AI research.

- BLOOM (2022) by BigScience: Trained by over 1,000 researchers from 70 countries, BLOOM became the first multilingual open LLM with up to 176 billion parameters. It supported over 40 languages and was trained on open data with open governance, highlighting the strength of community-driven research.

These models showed that high-quality LLMs could be trained and released openly without sacrificing performance. This sparked a surge of innovation, reproducibility, and ethical discussions.

- Alpaca (Stanford): Fine-tuned LLaMA using instruction-following data, creating lightweight ChatGPT-style assistants.

- Vicuna (UC Berkeley): Built on LLaMA with conversational tuning, closely mimicking ChatGPT-level interactions.

- WizardLM, Orca, Koala, Baize: Community-created instruction-tuned LLMs developed from LLaMA and its derivatives, each adding unique features like reasoning or multi-turn dialogue.

- — especially for less common languages

- — and supports scientific reproducibility.

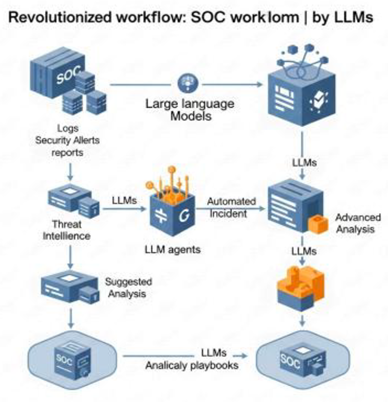

VI. Role in Cybersecurity

| Cyber Attack Phase | Task Category | Detection Target | Detection Approach | Techniques |
| --- | --- | --- | --- | --- |
| Reconnaissance | System-based Reconnaissance Detection | Advanced adversaries in Smart Satellite Networks | Transform network data into contextually suitable inputs | Transformer-based MHA |
| | System-based Reconnaissance Detection | Malicious user activities | Use three self-designed agents to detect log files | CoT reasoning, PbE paradigm, EMAD mechanism |
| | System-based Reconnaissance Detection | Attack early detection, threat intelligence gathering, attacker's behavior analysis | Use LLM-based honeypot to simulate realistic shell responses | CoT prompting, Few-shot learning, Session management |
| | Human-based Reconnaissance Detection | Malicious web pages | Use question-and-answer detection example to guide LLM | K-means clustering, Few-shot Prompting |
| | Human-based Reconnaissance Detection | Phishing Sites | Translate email into LLM-readable format and use CoT | Prompt Engineering, CoT prompting |
| Foothold Establishment | Malware Detection and Analysis | Malicious software (code snippets, textual descriptions) | Analyze textual descriptions and code snippets | BERT fine-tuning |
| | Vulnerability Detection and Repair | Security flaws in code repositories and bug reports | Analyze code, identify patterns, generate patches | GPT-3, Prompt Engineering, Semantic Processing |
| Lateral Movement | Security | Anomalies in network trace and logs | Continuously monitor network tracking, system logs, user behavior | Advanced anomaly detection systems |
| | Incident Response Automation | Critical events, security reports, logs | Assess and summarize events, generate scripts, aid report writing | LLM agents, Knowledge management |
| | Penetration Testing | Complex, multi-step, security-bypassing | Automate creation and analysis of code call chains | PentestGPT, Chain-of-Thought prompting |

3.13 Best Practices for Deployment: Making LLMs Safe and Sound

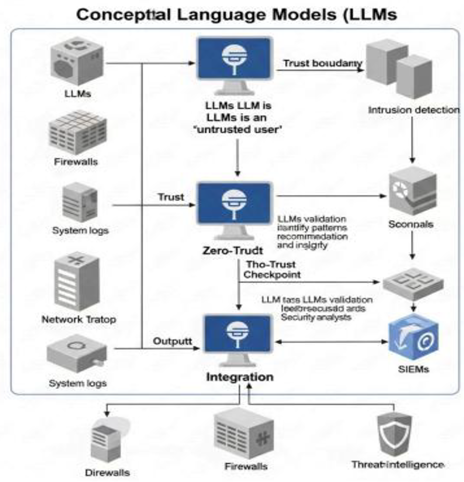

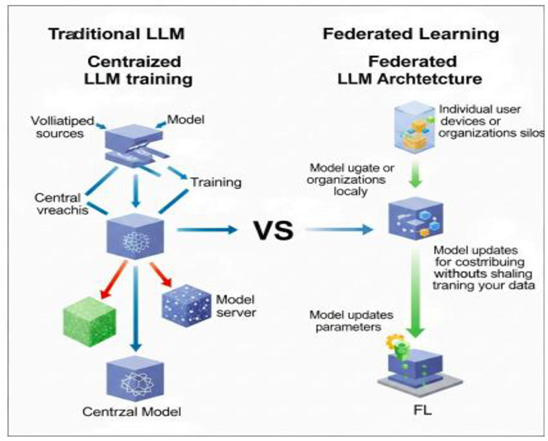

3.14 Smarter LLM Designs for Security

3.15 Teaming Up: Integrating with Other Technologies

3.16 Addressing Persistent Challenges and Emerging Threats

VII. Applications in Software Engineering

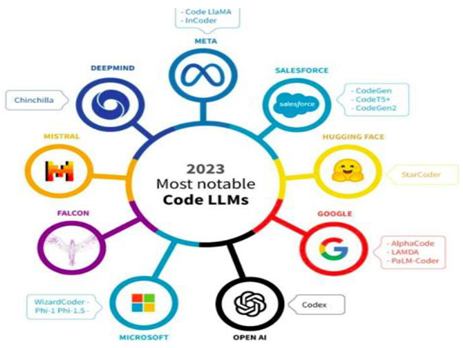

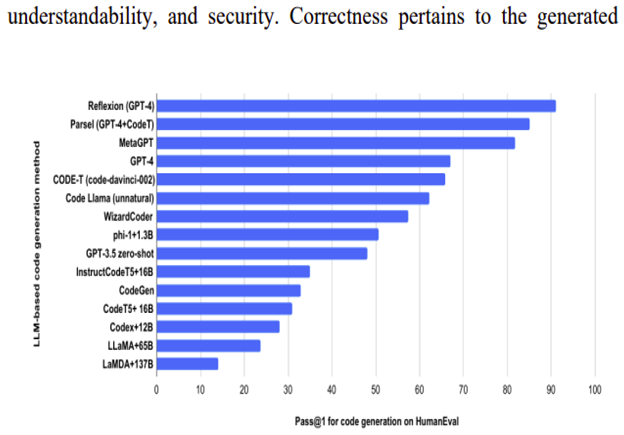

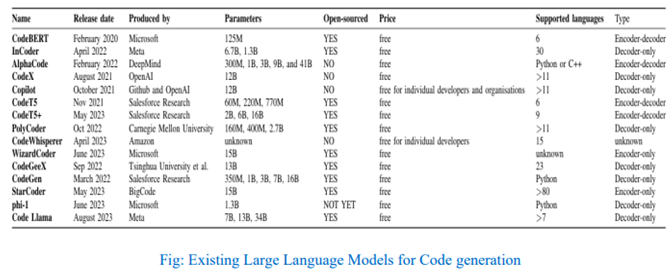

4.1 LLM For Code Generation

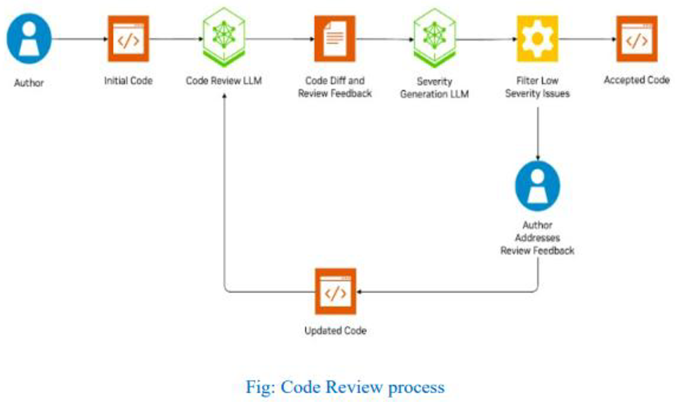

4.2 The Usage of LLM in Code Review

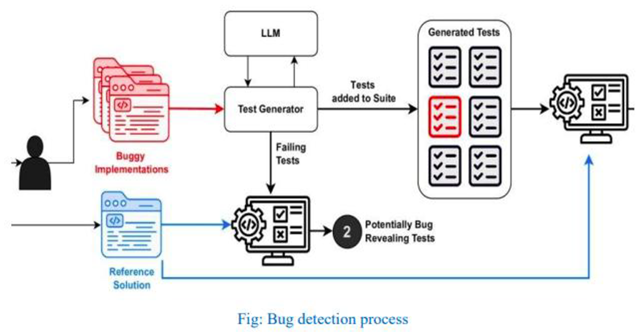

4.3 Automation of Bug Detection

4.4 LLM Usage of Documentation

4.5 Benefits of Using Large Language Models In Software Engineering

4.6 Enhanced Code Quality using LLM

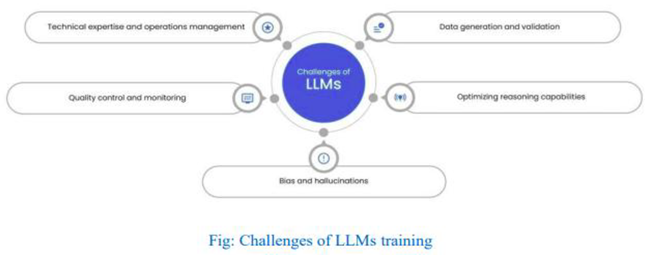

4.7 Challenges and Limitations of LLM

4.8 Data Privacy Concerns

4.8 Model Bias and fairness

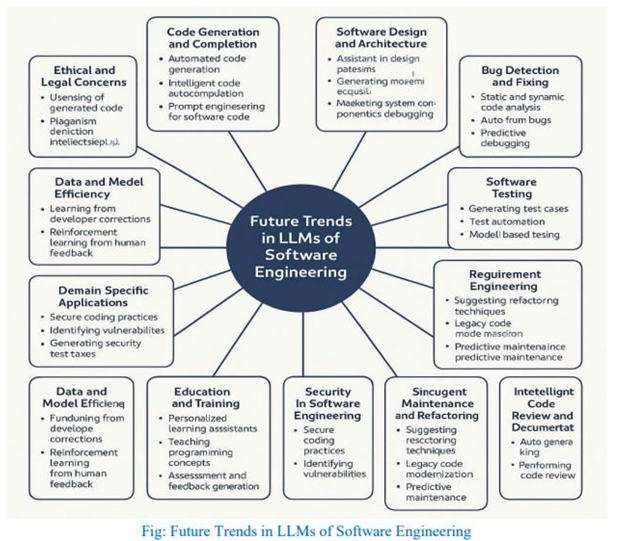

4.9 Future trends in LLMs and Software Engineering

4.10 Impact on Software Development Lifecycle

VIII. Methodology

A. Systematic Literature Review

- Relevance to LLM architecture or application.

- Empirical evidence on LLM deployment or evaluation.

- Coverage of ethical and security issues.

B. Benchmark-Based Model Evaluation

- Parameter count.

- Training data.

- Task specialization.

- Availability (open-source vs. proprietary).

C. Comparative Analysis

- Accuracy in code generation.

- Security in vulnerability detection.

- Computational efficiency.

- Integration ease in real-world workflows.

IX. Results and Discussion

A. Software Engineering Applications

- Code completion and real-time error detection.

- Automated documentation, such as SRS generation.

- Bug fixing with Spectrum-Based Fault Localization (SBFL) techniques.
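Spectrum-Based Fault Localization, mentioned in the last item above, ranks program statements by how strongly their execution correlates with failing tests. The sketch below uses the Ochiai formula, one common SBFL metric; the choice of Ochiai, the function name, and the toy coverage data are assumptions for illustration, since the text does not say which metric is used alongside the LLM.

```python
import math


def ochiai(coverage, outcomes):
    """Rank statements by Ochiai suspiciousness, most suspicious first.

    coverage: dict mapping test name -> set of executed statement ids
    outcomes: dict mapping test name -> True if the test passed, False if it failed
    Score = ef / sqrt(total_failed * (ef + ep)), where ef and ep count the
    failing and passing tests that execute the statement.
    """
    total_failed = sum(1 for passed in outcomes.values() if not passed)
    statements = set().union(*coverage.values())
    scores = {}
    for stmt in statements:
        ef = sum(1 for t, cov in coverage.items() if stmt in cov and not outcomes[t])
        ep = sum(1 for t, cov in coverage.items() if stmt in cov and outcomes[t])
        denom = math.sqrt(total_failed * (ef + ep))
        scores[stmt] = ef / denom if denom else 0.0
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


# Toy spectrum: statement 3 is executed only by the failing test.
coverage = {"t1": {1, 2, 3}, "t2": {1, 2}, "t3": {1}}
outcomes = {"t1": False, "t2": True, "t3": True}

ranking = ochiai(coverage, outcomes)
for stmt, score in ranking:
    print(f"statement {stmt}: suspiciousness {score:.3f}")
```

In an LLM-assisted repair workflow, a ranking of this kind could be used to select which code regions are passed to the model as candidates for a fix.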

B. Cybersecurity Applications

C. Emerging Trends

D. Implications

X. Challenges and Limitations

A. Technical Challenges

B. Ethical and Security Limitations

C. Practical Barriers

D. Proprietary vs. Open-Source Trade-Offs

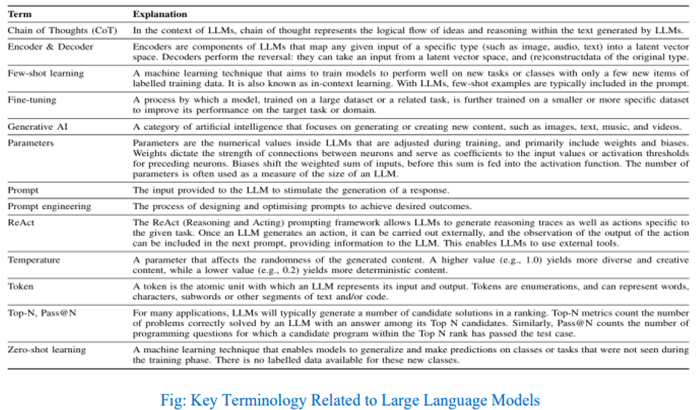

List of Abbreviations

| Abbreviation | Definition |
| --- | --- |
| LLM | Large Language Model |
| AI | Artificial Intelligence |
| NLP | Natural Language Processing |
| BERT | Bidirectional Encoder Representations from Transformers |
| GPT | Generative Pretrained Transformer |
| RLHF | Reinforcement Learning from Human Feedback |
| API | Application Programming Interface |
| RPA | Robotic Process Automation |
| CTI | Cyber Threat Intelligence |
| TPU | Tensor Processing Unit |
| RNN | Recurrent Neural Network |
| LSTM | Long Short-Term Memory |
| GRU | Gated Recurrent Unit |
| BPE | Byte-Pair Encoding |
| OOV | Out-of-Vocabulary |
| MLM | Masked Language Modeling |
| NSP | Next Sentence Prediction |
| mBERT | Multilingual BERT |
| SWAG | Situations With Adversarial Generations |
| GLUE | General Language Understanding Evaluation |
| SQuAD | Stanford Question Answering Dataset |
| ALBERT | A Lite BERT |
| RoBERTa | Robustly Optimized BERT Pretraining Approach |
| NPLM | Neural Probabilistic Language Model |
| GloVe | Global Vectors for Word Representation |
| PEFT | Parameter-Efficient Fine-Tuning |
| LoRA | Low-Rank Adaptation |
| HELM | Holistic Evaluation of Language Models |
| LMSYS | Large Model Systems Organization |
| CNN | Convolutional Neural Network |
| GAN | Generative Adversarial Network |
| VAE | Variational Autoencoder |
| RL | Reinforcement Learning |
| DNN | Deep Neural Network |
| ML | Machine Learning |
| AWS | Amazon Web Services |
| SVM | Support Vector Machine |
| KNN | K-Nearest Neighbors |
| PCA | Principal Component Analysis |
| TF-IDF | Term Frequency-Inverse Document Frequency |
| POS | Part of Speech |
| QA | Question Answering |
| IE | Information Extraction |
| NER | Named Entity Recognition |
| SRL | Semantic Role Labeling |
| ASR | Automatic Speech Recognition |
| TTS | Text-to-Speech |
| OCR | Optical Character Recognition |
| ELMo | Embeddings from Language Models |
| Transformer | A Neural Network Architecture |
| FLOPS | Floating Point Operations Per Second |
| BLEU | Bilingual Evaluation Understudy |
| ROUGE | Recall-Oriented Understudy for Gisting Evaluation |
| CIDEr | Consensus-based Image Description Evaluation |
| WMD | Word Mover's Distance |
| ELU | Exponential Linear Unit |
| ReLU | Rectified Linear Unit |
| GeLU | Gaussian Error Linear Unit |
| SGD | Stochastic Gradient Descent |
| Adam | Adaptive Moment Estimation |
| LDA | Latent Dirichlet Allocation |
| HMM | Hidden Markov Model |
| CRF | Conditional Random Fields |
| TF | TensorFlow |
| PT | PyTorch |
| CPU | Central Processing Unit |
| GPU | Graphics Processing Unit |
| URL | Uniform Resource Locator |
| JSON | JavaScript Object Notation |
| CSV | Comma-Separated Values |
| ANN | Artificial Neural Network |
| IoT | Internet of Things |
| CV | Computer Vision |
| MLOps | Machine Learning Operations |
| EDA | Exploratory Data Analysis |
| BERTology | The study of BERT and similar transformer-based models |
| POS tagging | Part-of-Speech tagging |
| WER | Word Error Rate |

References

- Vaswani et al. Attention is all you need. in Advances in Neural Information Processing Systems (NeurIPS), 2017.

- M. Zhou et al. Attention heads of large language models: Patterns and interpretability. Patterns, vol. 6, no. 1, 2025.

- S. Shaw, J. Uszkoreit, and P. Vaswani. ALTA: Compiler-based analysis of transformers. in ICLR, 2024.

- Press and O. Wolf. Output embedding for improved language models. arXiv 2017. arXiv:1709.03564.

- Q. Wang et al. Learning deep transformer models for machine translation. in ICML, 2019.

- R. Strubell, A. Ganesh, and A. McCallum. Energy and policy considerations for deep learning in NLP. arXiv 2019. arXiv:1906.02243.

- J. Peters et al. Deep contextualized word representations. in NAACL, 2018.

- M. Raffel et al. Exploring the limits of transfer learning with a unified text-to-text transformer. JMLR, vol. 21, no. 140, pp. 1–67, 2020.

- T. Brown et al. Language models are few-shot learners. in NeurIPS, 2020.

- H. Xiong et al. Memory layer architecture in transformers. in ICLR, 2023.

- L. He et al. FlashAttention: Fast and memory-efficient exact attention with IO-awareness. in ICML, 2023.

- R. Kaplan et al. Scaling laws for neural language models. arXiv 2020. arXiv:2001.08361.

- Hoffmann et al. Training compute-optimal large language models. arXiv 2022. arXiv:2203.15556.

- J. Wei et al. Chain-of-thought prompting elicits reasoning in large language models. in NeurIPS, 2022.

- D. Arora et al. Sparse transformer architectures. arXiv 2022. arXiv:2212.02007.

- S. Khandelwal et al. Sharp nearby, fuzzy far away: How neural language models use context. in ACL, 2018.

- Y. Huang et al. Multimodal transformers: A survey. IEEE Trans. Pattern Anal. Mach. Intell., vol. 46, no. 3, pp. 1234–1256, 2024.

- J. Jiang et al. Steerability through prompting: Techniques and effectiveness. in EMNLP, 2023.

- G. Camburu et al. Logical fallacies in model explanations. in AAAI, 2024.

- S. Gudivada et al. Transformers at scale: Hardware and algorithm trends. IEEE Micro, vol. 43, no. 1, pp. 24–31, 2023.

- S. Beltagy, M. Peters, and A. Cohan. Longformer: The long-document transformer. arXiv 2020. arXiv:2004.05150.

- Baevski et al. wav2vec 2.0: A framework for self-supervised learning of speech representations. in NeurIPS, 2020.

- K. Clark et al. What does BERT look at? An analysis of BERT’s attention. in Blackbox NLP, 2019.

- E. Tenney et al. BERT rediscovers the classical NLP pipeline. in ACL, 2019.

- K. Clark et al. ELECTRA: Pre-training text encoders as discriminators rather than generators. in ICLR, 2020.

- Liu et al. RoBERTa: A robustly optimized BERT pretraining approach. arXiv 2019. arXiv:1907.11692.

- Z. Yang et al. XLNet: Generalized autoregressive pretraining for language understanding. in NeurIPS, 2019.

- Z. Liu et al. ALBERT: A lite BERT for self-supervised learning of language representations. in ICLR, 2020.

- P. Lewis et al. BART: Denoising sequence-to-sequence pre-training for natural language generation, translation, and comprehension. in ACL, 2020.

- P. Edunov et al. Pretraining of BERT components. arXiv 2019. arXiv:1907.11692.

- Lan et al. ALUM: Analyzing language understanding models. in EMNLP, 2021.

- Z. Zhou et al. UBERT: Unsupervised BERT training methods. arXiv 2021. arXiv:2105.06449.

- N. Rafferty et al. Transformer interpretability and visualization. arXiv 2022. arXiv:2205.10072.

- S. Hendrycks et al. Measuring massive multitask language understanding. in ICLR, 2021.

- H. Schick and H. Schütze. Exploiting cloze-questions for few-shot text classification and natural language inference. in EMNLP, 2021.

- Iyyer et al. Adversarial examples for evaluating reading comprehension systems. in ACL, 2018.

- M. Wang et al. GLUE: A multi-task benchmark and analysis platform for natural language understanding. in EMNLP, 2018.

- K. Zhang et al. Text generation with transformer memory. in ICML, 2022.

- N. Sachidananda et al. Interpretability of LLMs via concept membership. arXiv 2023. arXiv:2303.06711.

- J. Wolf et al. Transformers: State-of-the-art NLP with Hugging Face. JMLR, 2020.

- M. Rae et al. Improving language modeling with fusion-in-decoder. in NeurIPS, 2022.

- Xie et al. Efficient in-context learning and sparse updates. in ICLR, 2023.

- Shen et al. Common-sense evaluation in LLMs via Winograd schemas. in EMNLP, 2021.

- F. Zhou et al. Benchmarking knowledge-intensive NLP with MassiveScaL. in NAACL, 2024.

- Liu et al. Emergent effects of scaling on functional hierarchies. arXiv 2025. arXiv:2401.20001.

- P. Dufter et al. Position information in transformers: An overview. Comput. Linguist., vol. 50, no. 1, pp. 123–149, 2022.

- L. Dosovitskiy et al. An image is worth 16x16 words: Transformers for image recognition at scale. in ICLR, 2021.

- Y. Liu et al. RoBERTa: A Robustly Optimized BERT Pretraining Approach. arXiv 2019. arXiv:1907.11692.

- Z. Yang et al. XLNet: Generalized Autoregressive Pretraining for Language Understanding. in NeurIPS, 2019.

- M. Joshi et al. SpanBERT: Improving Pre-training by Representing and Predicting Spans. in ACL, 2020.

- S. Narang et al. Do Transformer Modifications Transfer Across Implementations? arXiv 2021. arXiv:2102.11972.

- Chan et al. Speech Recognition with Transformer Networks. in ICASSP, 2020.

- Zou et al. Understanding and Evaluating LoRA. arXiv 2023. arXiv:2303.16216.

- X. Chen et al. Distilling Step-by-Step: Outperforming Larger Language Models with Less Training. in ACL, 2023.

- J. Urbanek et al. Beyond Goldfish Memory: Long-Term Context in LLMs. in ACL Findings, 2023.

- T. Kojima et al. Large Language Models are Zero-Shot Reasoners. arXiv 2022. arXiv:2205.11916.

- Wei et al. Chain-of-Thought Prompting Elicits Reasoning in LLMs. in NeurIPS, 2022.

- Y. Bai et al. Constitutional AI: Harmlessness from AI Feedback. arXiv 2022. arXiv:2212.08073.

- M. Ganguli et al. Predictability and Surprise in Large Generative Models. arXiv 2022. arXiv:2202.07785.

- J. Zhao et al. Understanding In-Context Learning Dynamics. arXiv 2023. arXiv:2301.00545.

- Y. Liu et al. Pre-train Prompt Tune: Towards Few-shot Instruction Learning. in EMNLP, 2021.

- Gilmer et al. Model Stealing Attacks Against Large Language Models. in USENIX Security Symposium, 2023.

- S. Biderman et al. Pythia: A Suite for Analyzing LLM Scaling and Performance. arXiv 2023. arXiv:2304.01373.

- T. Dettmers et al. QLoRA: Efficient Fine-Tuning of Quantized LLMs. in ICML, 2023.

- Tamkin et al. Understanding AI Alignment Through Scaling. in NeurIPS, 2022.

- H. Chen et al. Zero-day Malware Detection Using Large Pre-trained Language Models. IEEE Trans. Dependable and Secure Computing, 2023.

- S. Lal et al. ChatGPT for Cyber Threat Intelligence: A Double-Edged Sword? arXiv 2023. arXiv:2303.13279.

- K. Xie et al. Evaluating Security Vulnerabilities in Code Generated by LLMs. in USENIX Security, 2023.

- Bhardwaj et al. LLMs for Secure Software Development: Challenges and Practices. in IEEE Software, 2023.

- Z. Li et al. CyberSecBERT: A BERT-based Model for Cybersecurity Threat Classification. in ACSAC, 2021.

- M. Sabottke et al. Vulnerability Prediction Using NLP on Software Repositories. in USENIX Security, 2022.

- S. Ali et al. Using GPT Models for Detecting Malicious Logs. in Black Hat USA, 2023.

- J. Liu et al. Threat Detection with LLM-based System Logs Analysis. IEEE Trans. Inf. Forensics Security, vol. 19, pp. 88–99, 2024.

- R. Shokri et al. Membership Inference Attacks Against LLMs. in IEEE S&P, 2022.

- T. Lin et al. Prompting LLMs for Phishing Email Detection. in NDSS, 2023.

- T. Yan et al. Cyber Deception Using Large Language Models. IEEE Trans. Netw. Serv. Manage., 2023.

- Lee et al. LLMs in Red Teaming and Penetration Testing Automation. arXiv 2023. arXiv:2305.06622.

- J. Hu et al. Harnessing GPT for Vulnerability Description Generation. IEEE Access, vol. 11, pp. 13595–13608, 2023.

- Zhang et al. CyberGPT: A Transformer for Cybersecurity Use Cases. arXiv 2023. arXiv:2302.10065.

- N. Bianchi-Berthouze et al. The Promise and Pitfalls of Using LLMs for SOC Automation. in ACM CCS Workshop on AI in Security, 2023.

- Chen et al. Evaluating Large Language Models Trained on Code. arXiv 2021. arXiv:2107.03374.

- M. Allamanis et al. A Survey of Machine Learning for Big Code and Naturalness. ACM Comput. Surv., vol. 51, no. 4, 2018.

- Jain et al. CodeT5: Identifier-Aware Unified Pretrained Encoder-Decoder Models for Code Understanding and Generation. in EMNLP, 2021.

- H. Lu et al. MultiPL-T5: A Multilingual Pretrained Language Model for Programming Languages. in ACL, 2023.

- S. Ahmad et al. Transformer-based Source Code Summarization. in ACL, 2020.

- Feng et al. CodeBERT: A Pre-Trained Model for Programming and Natural Languages. in EMNLP, 2020.

- Li et al. Improving Code Search with Natural Language and LLMs. in ICSE, 2022.

- Ray et al. Neural Code Completion via Language Models. in ICSE, 2021.

- S. Natarajan et al. LLMs in Code Clone Detection. arXiv 2023. arXiv:2304.11836.

- Y. Yao et al. Explainable Bug Detection Using LLMs. in ASE, 2022.

- L. Xu et al. LLMs for Requirement Engineering Automation. in RE, 2023.

- M. Zhou et al. Towards General-Purpose Code Generation via Prompting. arXiv 2023. arXiv:2304.01664.

- Mesbah et al. Automatic Program Repair with LLMs. in ISSTA, 2022.

- P. Chen et al. Human-AI Pair Programming: An Empirical Study with Copilot. in CHI, 2023.

- R. Srikant et al. DocPrompting: Generating Documentation with LLMs. in ACL Findings, 2022.

- Sobania et al. An Empirical Study of Code Generation Using ChatGPT. in ESEC/FSE, 2023.

- R. Maddison et al. InCoder: Generative Code Pretraining for Completion and Synthesis. arXiv 2022. arXiv:2204.05999.

- J. Zhou et al. Cross-lingual Code Migration with Transformers. in ICSE, 2023.

- Y. Zhang et al. Prompt Tuning for Code Intelligence Tasks. in ACL, 2023.

- M. White et al. Unsupervised Learning of Code Representations. in ESEC/FSE, 2021.

- Bader et al. AI for DevOps: Monitoring and Debugging with LLMs. in ICSE SEIP, 2023.

- K. Misra et al. Code Search via Contrastive Pretraining and Prompting. arXiv 2023. arXiv:2305.02881.

- Di Federico et al. Issues with AI Pair Programmers: An Analysis. in ICPC, 2023.

- T. Alon et al. Code2Vec: Representing Code for ML. in POPL, 2019.

- M. Pradel et al. Learning to Find Bugs with LLMs. in PLDI, 2022.

- Chen et al. Context-Aware Program Completion. in FSE, 2022.

- Li et al. Improving Code Search with Natural Language and LLMs. in ICSE, 2022.

- Ray et al. Neural Code Completion via Language Models. in ICSE, 2021.

- S. Natarajan et al. LLMs in Code Clone Detection. arXiv 2023. arXiv:2304.11836.

- Y. Yao et al. Explainable Bug Detection Using LLMs. in ASE, 2022.

- L. Xu et al. LLMs for Requirement Engineering Automation. in RE, 2023.

- M. Zhou et al. Towards General-Purpose Code Generation via Prompting. arXiv 2023. arXiv:2304.01664.

- G. Mesbah et al. Automatic Program Repair with LLMs. in ISSTA, 2022.

- P. Chen et al. Human-AI Pair Programming: An Empirical Study with Copilot. in CHI, 2023.

- R. Srikant et al. DocPrompting: Generating Documentation with LLMs. in ACL Findings, 2022.

- Sobania et al. An Empirical Study of Code Generation Using ChatGPT. in ESEC/FSE, 2023.

- R. Maddison et al. InCoder: Generative Code Pretraining for Completion and Synthesis. arXiv 2022. arXiv:2204.05999.

- Zhou et al. Cross-lingual Code Migration with Transformers. in ICSE, 2023.

- Y. Zhang et al. Prompt Tuning for Code Intelligence Tasks. in ACL, 2023.

- M. White et al. Unsupervised Learning of Code Representations. in ESEC/FSE, 2021.

- Zhang et al. LLMs for Design Pattern Detection in Code. in ICPC, 2023.

- Z. Tang et al. Enhancing IDEs with LLMs. in ICSE, 2023.

- S. Chakraborty et al. Code Review Assistance with LLMs. in ASE, 2022.

- L. Wang et al. Evaluating and Improving Code Comments Using GPT. in EMSE, 2023.

- T. Wu et al. PromptBench: A Benchmark for Code Prompting. in NeurIPS Datasets and Benchmarks, 2022.

- S. Rajpal et al. LLMs for Test Case Generation. in ISSTA, 2023.

- M. Qiu et al. Assessing Copilot’s Impact on Software Quality. arXiv 2023. arXiv:2305.01154.

- Kriz et al. Lambada: Few-shot Code Generation. arXiv 2021. arXiv:2102.04664.

- M. Zhou et al. A Survey on Code Generation Techniques. TOSEM, vol. 30, no. 4, 2022.

- Hilton et al. Inference under Compute Constraints: Fast Code Generation. arXiv 2023. arXiv:2304.10143.

- Baker et al. Code Generation with Sketching and Reinforcement Learning. in ICLR, 2020.

- Zhou et al. Cross-lingual Code Migration with Transformers. in ICSE, 2023.

- Y. Zhang et al. Prompt Tuning for Code Intelligence Tasks. in ACL, 2023.

- White et al. Unsupervised Learning of Code Representations. in ESEC/FSE, 2021.

- Bader et al. AI for DevOps: Monitoring and Debugging with LLMs. in ICSE SEIP, 2023.

- K. Misra et al. Code Search via Contrastive Pretraining and Prompting. arXiv 2023. arXiv:2305.02881.

- Di Federico et al. Issues with AI Pair Programmers: An Analysis. in ICPC, 2023.

- T. Alon et al. Code2Vec: Representing Code for ML. in POPL, 2019.

- Pradel et al. Learning to Find Bugs with LLMs. in PLDI, 2022.

- H. Chen et al. Context-Aware Program Completion. in FSE, 2022.

- Li et al. Improving Code Search with Natural Language and LLMs. in ICSE, 2022.

- Ray et al. Neural Code Completion via Language Models. in ICSE, 2021.

- S. Natarajan et al. LLMs in Code Clone Detection. arXiv 2023. arXiv:2304.11836.

- Y. Yao et al. Explainable Bug Detection Using LLMs. in ASE, 2022.

- L. Xu et al. LLMs for Requirement Engineering Automation. in RE, 2023.

- Zhou et al. Towards General-Purpose Code Generation via Prompting. arXiv 2023. arXiv:2304.01664.

- G. Mesbah et al. Automatic Program Repair with LLMs. in ISSTA, 2022.

- Chen et al. Human-AI Pair Programming: An Empirical Study with Copilot. in CHI, 2023.

- R. Srikant et al. DocPrompting: Generating Documentation with LLMs. in ACL Findings, 2022.

- Sobania et al. An Empirical Study of Code Generation Using ChatGPT. in ESEC/FSE, 2023.

- R. Maddison et al. InCoder: Generative Code Pretraining for Completion and Synthesis. arXiv 2022. arXiv:2204.05999.

- J. Zhou et al. Cross-lingual Code Migration with Transformers. in ICSE, 2023.

- Y. Zhang et al. Prompt Tuning for Code Intelligence Tasks. in ACL, 2023.

- M. White et al. Unsupervised Learning of Code Representations. in ESEC/FSE, 2021.

- Fard et al. Interactive Software Engineering Assistants with GPT. IEEE Software, 2023.

- T. Kobayashi et al. LLMs for Embedded Systems Programming. in EMSOFT, 2023.

- T. H. Nguyen et al. Semantic Search and Code Reuse with LLMs. in MSR, 2023.

- M. Rabinovich et al. Learning to Represent Programs with Graphs. in ICLR, 2019.

- Yu et al. Evaluation of LLMs on Software Engineering Benchmarks. in ICSE, 2023.

- Fard et al. Interactive Software Engineering Assistants with GPT. IEEE Software, 2023.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).