This section presents the results of the statistical analyses conducted to evaluate the effectiveness of health applications. It begins with descriptive statistics summarizing key features of the apps. Subsequently, the performance of each classification model (K-Nearest Neighbors, Naive Bayes, and Logistic Regression) is assessed using confusion matrices, ROC curves, and key performance metrics, including accuracy, precision, recall, F1 score, and AUC. In addition, logistic regression coefficients are examined to evaluate how well application features predict user ratings.

3.1. Distribution of Application Features

The distribution of the variables is presented in Table 1. The majority of applications were AI-based (n = 328, 57.7%) and primarily categorized under Health & Fitness (n = 360, 63.4%). The effective Health & Fitness applications filtered in this study were mostly developed by Leap Fitness Group. According to [7], AI algorithms can predict individual choices, preferences, geographic behaviors, and patterns by analyzing user data. This enables mobile apps to deliver tailored content, recommendations, and notifications, creating a more engaging and personalized user experience. Furthermore, [11] states that fitness apps provide various feature sets to support individuals’ physical activity (e.g., running, cycling, working out, and swimming). For example, a data management feature set allows users to collect and manage their exercise data, such as steps, running routes, calories burned, and heart rate. A considerable proportion of applications had a low number of user reviews (n = 411, 72.4%), indicating limited user engagement or relatively new releases. In terms of effectiveness, applications were evenly distributed between those with high ratings (n = 284, 50%) and low ratings (n = 284, 50%), which supports the binary outcome modeling in the subsequent machine learning analysis.

Furthermore, most apps were developed by small developers (n = 530, 93.3%), which may reflect the increasing participation of independent developers in the health app market. Recently updated apps comprised the majority (n = 480, 84.5%), showing that developers actively maintain and improve their applications. Regarding versioning, older versions were most common (n = 375, 66.0%), possibly due to compatibility or maintenance constraints. Almost half of the applications were released earlier (n = 276, 48.6%), indicating a longer presence on the market.

Table 1. Distribution of Application Features.

| Variable | Level | Frequency | Percentage |
|---|---|---|---|
| Classification | AI | 328 | 57.7 |
| Classification | Non-AI | 240 | 42.2 |
| Category | Health & Fitness | 360 | 63.4 |
| Category | Medical | 208 | 36.6 |
| Reviews | High | 42 | 7.4 |
| Reviews | Medium | 115 | 20.2 |
| Reviews | Low | 411 | 72.4 |
| Ratings | High Ratings | 284 | 50 |
| Ratings | Low Ratings | 284 | 50 |
| Developer | Big Developer | 38 | 6.7 |
| Developer | Small Developer | 530 | 93.3 |
| Recent Update | Old | 88 | 15.5 |
| Recent Update | Recent | 480 | 84.5 |
| Version | High | 43 | 7.6 |
| Version | Medium | 150 | 26.4 |
| Version | Old | 375 | 66.0 |
| Release Year | Old | 276 | 48.6 |
| Release Year | Mid | 197 | 34.7 |
| Release Year | Recent | 95 | 16.7 |
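As a brief illustration of how the percentages in Table 1 follow from the frequencies, the sketch below recomputes the Category shares. The function name `percentages` is a hypothetical helper introduced here, not part of the original analysis.

```python
def percentages(counts):
    """Return each level's share of the total, in percent, rounded to one decimal."""
    total = sum(counts.values())
    return {level: round(100 * n / total, 1) for level, n in counts.items()}

# Category frequencies as listed in Table 1 (568 applications in total).
category = {"Health & Fitness": 360, "Medical": 208}
category_pct = percentages(category)
print(category_pct)
```

The same helper can be applied to any other variable in the table by passing its level frequencies.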

3.2. Health Application Effectiveness

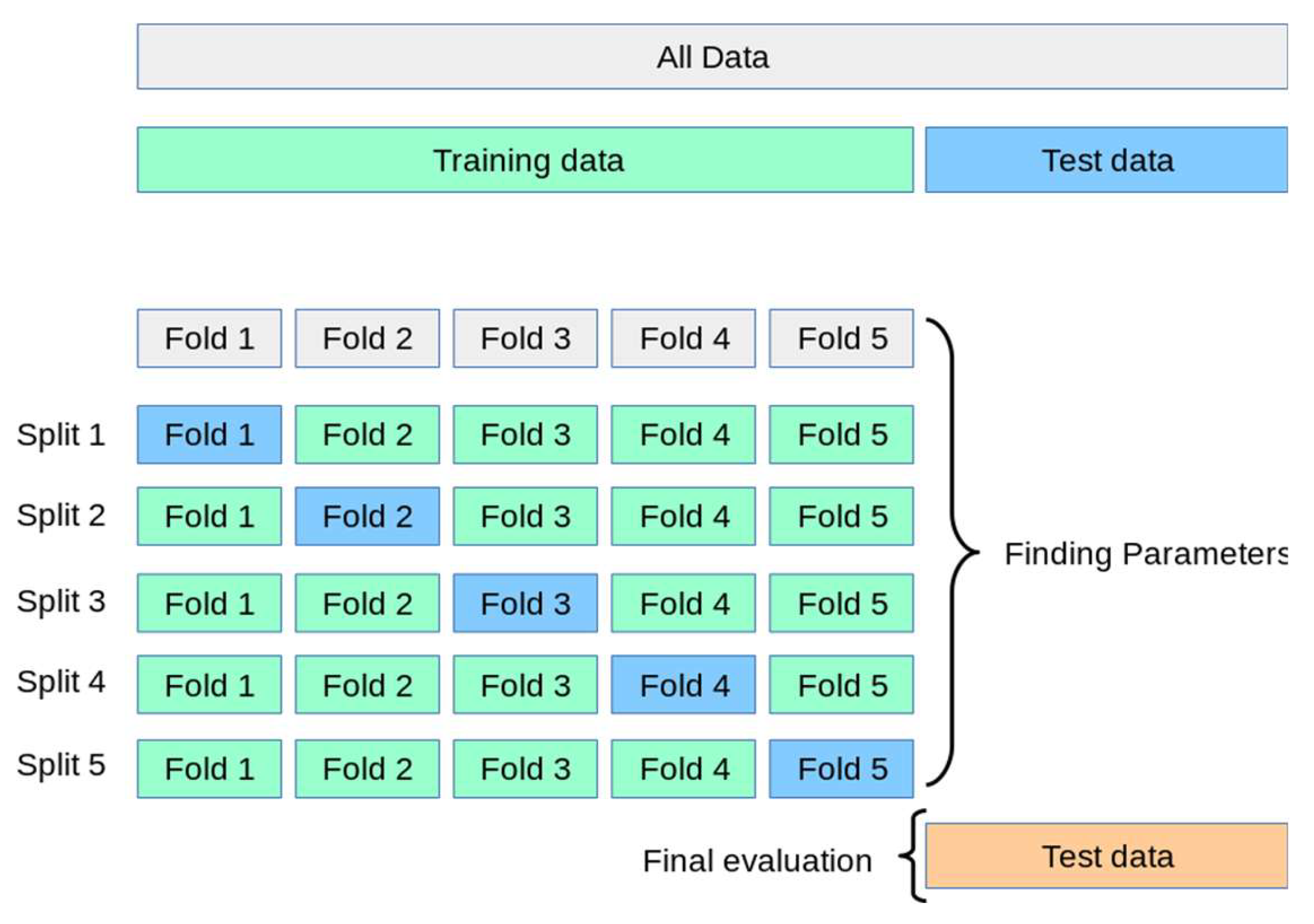

A Naive Bayes classifier was applied to evaluate the effectiveness of health applications based on user ratings [Table 2]. The model achieved an overall accuracy of 57.06%, with a 95% confidence interval of [49.26%, 64.61%]. This was not significantly higher than the No Information Rate of 92.94% (p = 1.00), indicating that the model did not perform better than simply predicting the majority class. The agreement between predicted and actual outcomes was weak, with a Cohen’s Kappa of κ = 0.14 suggesting only slight agreement beyond chance.

The confusion matrix revealed that the model successfully identified all apps that were actually rated highly by users, resulting in a recall of 1.000 (100%). However, it also incorrectly classified 73 low-rated apps as highly rated, producing a low precision of 14.12% [Table 3]. In other words, while the model was sensitive to identifying effective apps, most of its predictions of “high rating” were incorrect. The combined effect of high recall and low precision led to an F1 score of 0.25, indicating a weak overall balance between correctly identifying and over-predicting highly rated apps. The model’s specificity was 53.80%, reflecting limited ability to correctly identify low-rated apps. The balanced accuracy, averaging performance across both classes, was 76.90%.

Crucially, McNemar’s Test was highly significant (p < .001), confirming that the model’s two types of misclassification were not balanced. Specifically, the model produced many more false positives (73) than false negatives (0), suggesting a strong bias toward predicting high ratings, even when apps were not actually rated highly. In summary, although the Naive Bayes model demonstrated perfect sensitivity in detecting highly rated apps, its very low precision and classification imbalance limit its practical usefulness. The tendency to over-predict effectiveness makes it unsuitable for applications where recommending low-quality health apps must be avoided.

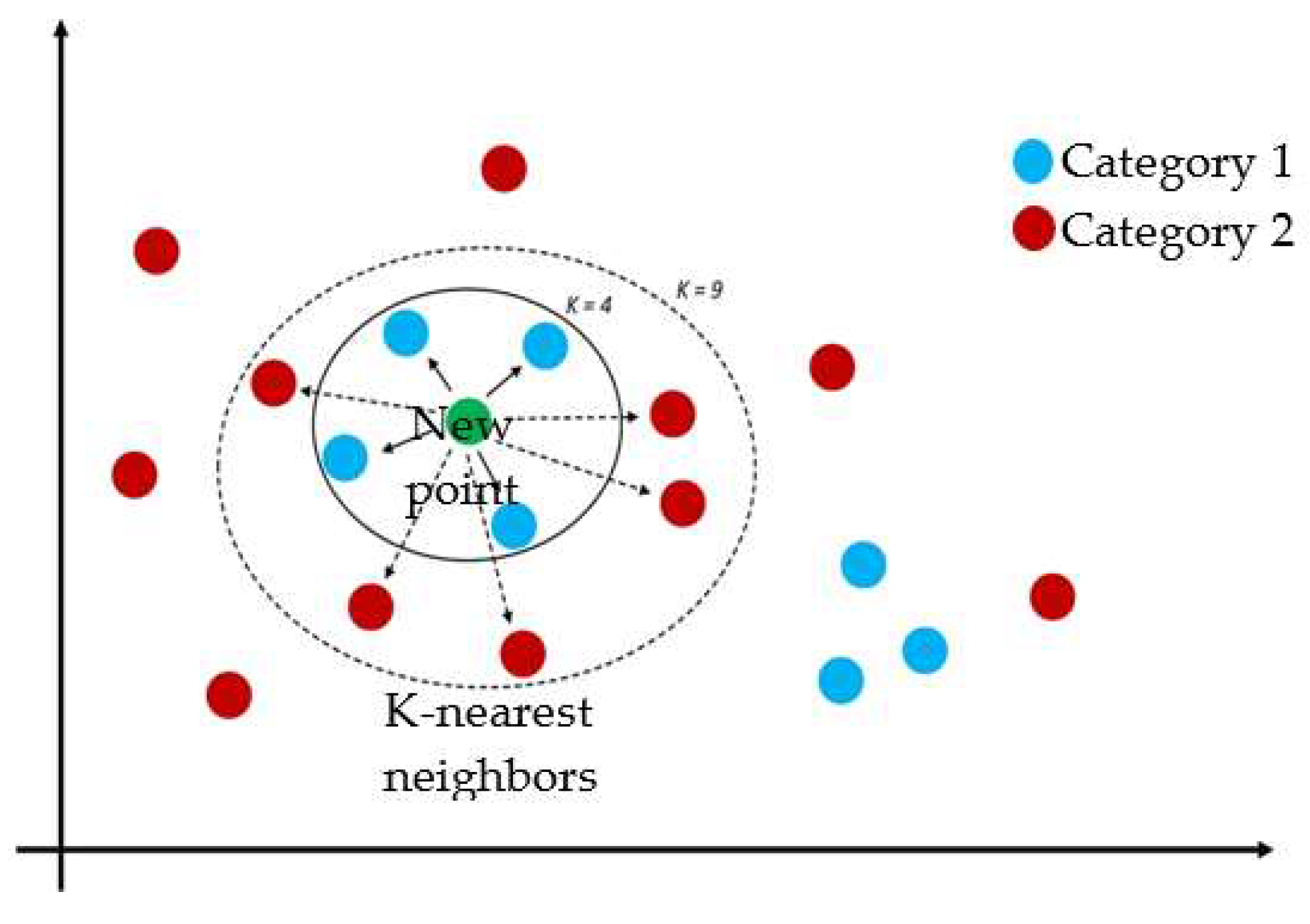

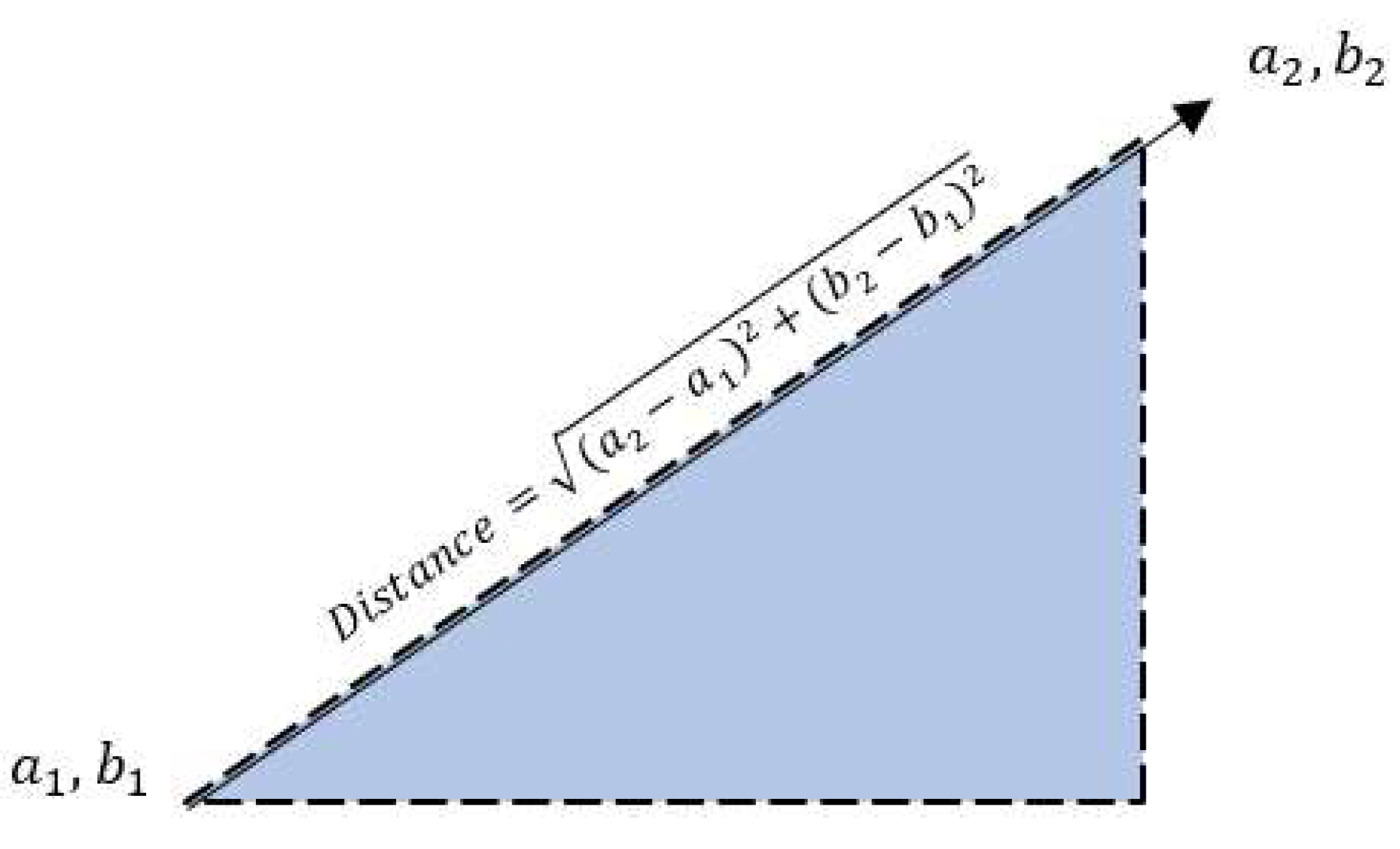

A K-Nearest Neighbors (KNN) classification model was also employed to evaluate the effectiveness of health applications based on user ratings [Table 4]. The model achieved an overall accuracy of 75.89%, with a 95% confidence interval of [66.90%, 83.47%], significantly higher than the No Information Rate of 50% (p < .001). The Cohen’s Kappa coefficient (κ = 0.52) indicated moderate agreement between predicted and actual class labels.
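One common way to compare accuracy against the No Information Rate is a one-sided exact binomial test. The sketch below applies it to the KNN counts reported in this section (37 + 48 correct out of 112). It assumes this is the test behind the reported p value, which the text does not state, and `binom_upper_tail` is a hypothetical helper.

```python
from math import comb

def binom_upper_tail(successes, n, p):
    """P(X >= successes) for X ~ Binomial(n, p)."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(successes, n + 1))

# KNN test-set counts from the confusion matrix: 37 true positives + 48 true negatives.
correct, total = 37 + 48, 112
nir = 0.5  # No Information Rate: share of the largest class

accuracy = correct / total
p_value = binom_upper_tail(correct, total, nir)  # H1: accuracy > NIR
print(f"accuracy = {accuracy:.4f}, p = {p_value:.2e}")
```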

The confusion matrix showed that the model correctly classified 37 highly rated apps (true positives) and 48 lower-rated apps (true negatives), while misclassifying 19 high-rated apps (false negatives) and 8 lower-rated apps (false positives) [Table 5]. The model yielded a recall (sensitivity) of 66.07%, meaning it correctly identified two-thirds of truly effective apps. The precision (positive predictive value) was 82.22%, indicating that most apps predicted to be highly rated were indeed so. These values resulted in an F1 score of 0.7326, reflecting a strong balance between recall and precision. The specificity was 85.71%, and the negative predictive value was 71.64%, suggesting reliable identification of both effective and ineffective apps. The balanced accuracy was equal to the overall accuracy (75.89%), as expected when both classes are equally represented in the test set (56 apps each).
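The metrics above can be recomputed directly from the four confusion-matrix counts. The following is a minimal sketch using the KNN counts reported in the text. The function name `classification_metrics` is a hypothetical helper, not the software used in the study.

```python
def classification_metrics(tp, fp, fn, tn):
    """Standard binary classification metrics from confusion-matrix counts."""
    recall = tp / (tp + fn)            # sensitivity
    specificity = tn / (tn + fp)
    precision = tp / (tp + fp)         # positive predictive value
    npv = tn / (tn + fn)               # negative predictive value
    return {
        "accuracy": (tp + tn) / (tp + fp + fn + tn),
        "recall": recall,
        "specificity": specificity,
        "precision": precision,
        "npv": npv,
        "f1": 2 * precision * recall / (precision + recall),
        "balanced_accuracy": (recall + specificity) / 2,
    }

# KNN counts reported in the text: 37 TP, 8 FP, 19 FN, 48 TN.
knn = classification_metrics(tp=37, fp=8, fn=19, tn=48)
for name, value in knn.items():
    print(f"{name}: {value:.4f}")
```

A classifier with no predicted positives or no actual positives would cause a division by zero here, so a production version would need to guard those cases.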

Although McNemar’s Test approached significance (p = .0543), it did not reach the conventional threshold (α = .05), indicating that the difference between the number of false positives and false negatives was not statistically significant. Therefore, the model does not exhibit a strong bias toward one type of misclassification over the other. These findings suggest that the KNN model can effectively classify health applications based on user ratings, offering both sensitivity in detecting highly rated apps and precision in its positive predictions.
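McNemar’s test depends only on the two off-diagonal counts of the confusion matrix. The sketch below uses the continuity-corrected chi-square form, which for the KNN counts (19 false negatives, 8 false positives) gives a p value close to the reported .0543. Whether the original analysis used this exact variant is an assumption, and `mcnemar_corrected` is a hypothetical helper.

```python
from math import erfc, sqrt

def mcnemar_corrected(b, c):
    """Continuity-corrected McNemar statistic and p value (chi-square, 1 df).

    b and c are the two discordant counts (here: false negatives and false positives).
    Requires b + c > 0.
    """
    stat = (abs(b - c) - 1) ** 2 / (b + c)
    # Survival function of a chi-square with 1 df, written via the error function.
    p_value = erfc(sqrt(stat / 2))
    return stat, p_value

stat_knn, p_knn = mcnemar_corrected(b=19, c=8)   # KNN: 19 FN, 8 FP
stat_lr, p_lr = mcnemar_corrected(b=27, c=0)     # logistic regression: 27 FN, 0 FP
print(f"KNN: chi2 = {stat_knn:.3f}, p = {p_knn:.4f}")
print(f"Logistic regression: chi2 = {stat_lr:.3f}, p = {p_lr:.2e}")
```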

Lastly, a binomial logistic regression model was applied to assess health app effectiveness based on user ratings [Table 6]. The model achieved an overall accuracy of 76.32%, with a 95% confidence interval of [67.44%, 83.78%], which was significantly greater than the No Information Rate of 50% (p < .001). The Cohen’s Kappa coefficient (κ = 0.53) indicated moderate agreement between predicted and actual classifications.

According to the confusion matrix, the model correctly identified 30 highly rated apps (true positives) and 57 low-rated apps (true negatives), while it missed 27 highly rated apps (false negatives) and made no false positive errors [Table 7]. This yielded a recall (sensitivity) of 52.63%, meaning the model identified just over half of the truly high-rated apps. However, the precision (positive predictive value) was perfect at 1.000, indicating that every app predicted to be highly rated was indeed highly rated. These values produced an F1 score of 0.6897. The model also demonstrated perfect specificity (1.000) and a balanced accuracy of 76.32%, which equals the overall accuracy because the two classes were equally represented in the test set (57 apps each), not because the model identified both classes equally well.
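Cohen’s Kappa compares observed agreement with the agreement expected by chance from the row and column totals. The sketch below recomputes it from the logistic regression counts reported in the text. `cohens_kappa` is a hypothetical helper, not the study’s own code.

```python
def cohens_kappa(tp, fp, fn, tn):
    """Cohen's kappa for a 2x2 confusion matrix."""
    n = tp + fp + fn + tn
    observed = (tp + tn) / n
    # Chance agreement: product of predicted and actual marginals, summed over classes.
    expected = ((tp + fp) * (tp + fn) + (fn + tn) * (fp + tn)) / n**2
    return (observed - expected) / (1 - expected)

# Logistic regression counts reported in the text: 30 TP, 0 FP, 27 FN, 57 TN.
kappa_lr = cohens_kappa(tp=30, fp=0, fn=27, tn=57)
print(f"kappa = {kappa_lr:.4f}")
```

With equally sized classes the chance agreement is 0.5, so Kappa reduces to twice the accuracy minus one.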

However, McNemar’s Test was highly significant (p < .001), revealing a notable imbalance in classification errors. Specifically, the model showed a strong tendency toward false negatives, failing to detect many truly high-rated apps while avoiding false positives entirely. This pattern suggests that the model was highly conservative in predicting high-rated apps, prioritizing precision over sensitivity. In summary, the binomial logistic regression model provided reliable and cautious predictions of app effectiveness. Its perfect precision makes it useful in contexts where false recommendations must be avoided, though its lower sensitivity indicates it may overlook some truly effective apps.

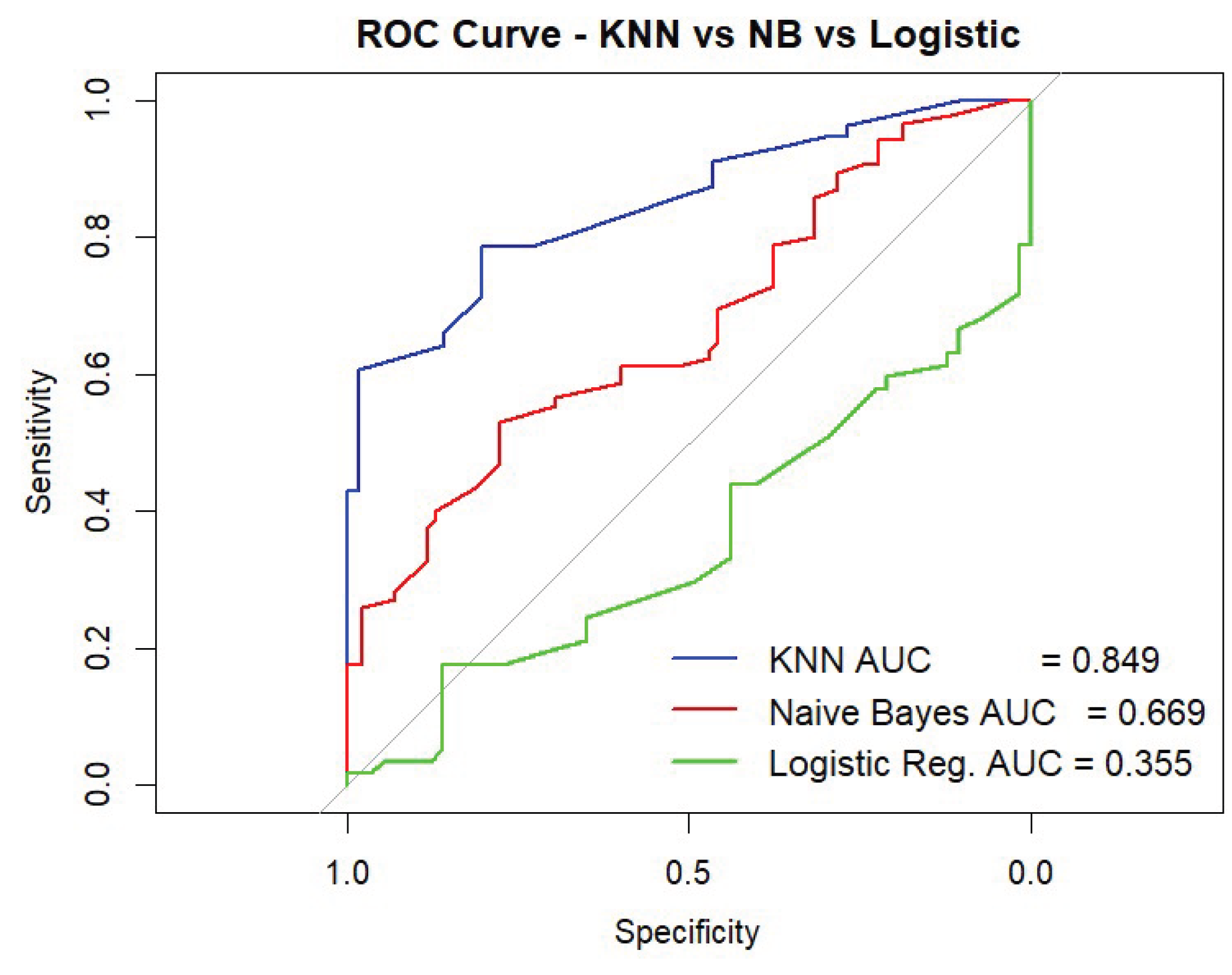

3.3. Performance Metric of the 3 Classification Models in Predicting Highly Effective Applications

Three machine learning models were evaluated in predicting whether health-related mobile applications were perceived by users as highly effective (positive class = 1) or not (class = 2), as shown in Table 8. The K-Nearest Neighbors (KNN) model performed best overall, with an accuracy of 75.89%, high precision (82.22%), and balanced sensitivity (66.07%) and specificity (85.71%). Its F1 Score was 73.26%, and the AUC of 0.849 indicated excellent discriminative ability. The Naive Bayes model, while achieving perfect recall (100%) for identifying highly effective apps, had very low precision (14.12%), resulting in an F1 Score of 24.74%. This suggests that it overclassified apps as highly effective, yielding many false positives.

The Binomial Logistic Regression model had the highest accuracy (76.32%) and precision (100%), but its recall was lower (52.63%), meaning nearly half of the truly effective apps were missed. Its F1 Score was 68.97%. However, its AUC based on predicted probabilities was surprisingly low (0.355, below the chance level of 0.5) [Figure 6], indicating weak ranking ability across thresholds despite solid classification at the default cutoff. Therefore, the K-Nearest Neighbors (KNN) model demonstrated the most reliable and balanced performance in identifying health apps rated highly (1) by users, making it the most suitable model for this classification task.
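AUC can be read as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one. The sketch below computes it by pairwise comparison on purely hypothetical scores (not the study’s data) and shows that reversing the score direction turns an AUC of a into 1 - a. An AUC below 0.5 may therefore be worth checking against which class the predicted probabilities refer to. This is offered as a possible check, not as an explanation of the reported 0.355.

```python
def auc_rank(scores, labels):
    """AUC as the share of (positive, negative) pairs ranked correctly; ties count half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical labels and scores, for illustration only.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.2]

auc = auc_rank(scores, labels)
auc_flipped = auc_rank([1 - s for s in scores], labels)  # scores for the other class
print(f"AUC = {auc:.3f}, flipped = {auc_flipped:.3f}")
```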

3.4. Predicting (1) High App Ratings on Health Application Features

A binomial logistic regression was conducted to assess whether specific application features significantly predicted the likelihood of receiving high user ratings. The model included seven predictors: Classification (AI vs. Non-AI), Category (Health & Fitness vs. Medical), Reviews (continuous), Developer Type, Recent Update (binary), Version, and Release Year. The overall model was statistically significant, χ²(7) = 65.09, p < .001, indicating that the set of predictors reliably distinguished between high- and low-rated applications. The model showed a reduction in deviance from 629.38 (null) to 564.29 (residual), with an Akaike Information Criterion (AIC) of 580.29, suggesting improved model fit. The Nagelkerke R² value was 0.176, indicating that the predictors explained approximately 17.6% of the variation in user ratings [Table 9].
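The likelihood-ratio chi-square and the AIC reported above follow arithmetically from the two deviances. The sketch below reproduces them, assuming the model has seven predictor coefficients plus an intercept and that the residual deviance equals -2 times the log-likelihood, as it does for ungrouped binary data.

```python
null_deviance = 629.38
residual_deviance = 564.29
n_predictors = 7  # one coefficient per predictor (assumption), plus an intercept

# Likelihood-ratio test statistic: drop in deviance, with df = number of predictors.
lr_chi2 = null_deviance - residual_deviance

# AIC = -2 log-likelihood + 2 * (number of estimated parameters).
aic = residual_deviance + 2 * (n_predictors + 1)

print(f"chi2({n_predictors}) = {lr_chi2:.2f}, AIC = {aic:.2f}")
```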

Among the predictors in Table 10, Category emerged as a significant factor (β = 0.75, SE = 0.21, z = 3.48, p < .001), with apps in the Health & Fitness category having about twice the odds of receiving high ratings compared with those in the Medical category (OR = 2.11). According to Shaw [21], many fitness apps now market themselves both as a resource for on-demand fitness content and as a personalized service with the same hands-on, dedicated approach one would receive from a personal trainer or gym class.

Also, [14] lists several effective health and fitness applications for training at home, including Centr, Nike Training Club, Fiit, Apple Fitness Plus, Sweat, Body Coach, Strava, and Home Workout No Equipment. Furthermore, Recent Update was also a statistically significant predictor (β = 0.66, SE = 0.31, z = 2.14, p = .032), with recently updated apps having nearly twice the odds of receiving high ratings (OR = 1.93). According to the survey in [3] of the top 20 trending Health & Fitness apps on Google Play as of July 9, 2025, apps such as HealthifyMe, Replika, Catzy, and others were trending with user ratings ranging from 4.2 to 4.8 stars, indicating both active use and high satisfaction. This is consistent with the finding that recently updated health apps on Google Play tend to be highly rated, and with the trend that top-performing health apps combine frequent maintenance with strong user approval.

Classification (AI vs. Non-AI) showed a marginally significant positive association (β = 0.39, SE = 0.21, z = 1.87, p = .062), indicating that AI-based apps had 1.47 times the odds of receiving high ratings. A study by [13] found that reviews of AI health apps were largely positive: 6700 of 7929 reviews (84.50%) gave the app a 5-star rating, and 2676 (33.75%) explicitly described the app as “helpful” or said that it “helped.” Of the 7929 reviews, 251 (3.17%) gave fewer than 3 stars and were classed as negative. Similarly, Version approached statistical significance (β = 0.34, SE = 0.18, z = 1.95, p = .052), suggesting that newer app versions may be associated with higher ratings (OR = 1.41). For instance, the recently updated version of MyFitnessPal (Android build 25.26.0), released on July 2, 2025, holds a 4.7 rating with over 2,751,560 downloads on the Google Play Store. According to Tim Holley [10], Chief Product Officer at MyFitnessPal, “The 2025 Winter Release underscores MyFitnessPal's commitment to supporting our members as they advance the way they approach nutrition and habit development.” Holley added in the post, “Integrating tools like Voice Log and Weekly habits, gives members effective solutions to streamline tracking, while reinforcing the importance of progress over perfection in building lasting habits, because true success in nutrition comes from consistency, not perfection.”

In contrast, Reviews (β = -0.06, p = .748), Developer Type (β = 17.59, p = .981), and Release Year (β = 0.04, p = .803) were not statistically significant predictors of high ratings. Notably, the extremely large coefficient and standard error for Developer Type may indicate model instability or data sparsity in that category. Overall, the findings suggest that application category, recent updates, and possibly AI classification and versions are relevant features associated with higher user ratings.
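The odds ratios above are the exponentiated coefficients, and the z and p values follow from the Wald statistic β / SE. The sketch below recomputes them from the rounded coefficients given in the text, so results may differ slightly from the reported values, which were presumably derived from unrounded estimates. The 95% Wald intervals are an illustrative addition, not figures from the original analysis, and `wald_summary` is a hypothetical helper.

```python
from math import erfc, exp, sqrt

def wald_summary(beta, se, z_crit=1.96):
    """Odds ratio, approximate 95% Wald interval, z statistic, and two-sided p value."""
    z = beta / se
    return {
        "or": exp(beta),
        "ci": (exp(beta - z_crit * se), exp(beta + z_crit * se)),
        "z": z,
        "p": erfc(abs(z) / sqrt(2)),  # two-sided normal tail probability
    }

# Rounded coefficients and standard errors as reported in the text.
coefficients = {
    "Category": (0.75, 0.21),
    "Recent Update": (0.66, 0.31),
    "Classification": (0.39, 0.21),
    "Version": (0.34, 0.18),
}
results = {name: wald_summary(b, se) for name, (b, se) in coefficients.items()}
for name, r in results.items():
    lo, hi = r["ci"]
    print(f"{name}: OR = {r['or']:.2f} [{lo:.2f}, {hi:.2f}], z = {r['z']:.2f}, p = {r['p']:.3f}")
```

An interval that includes 1, as for Classification and Version here, corresponds to a predictor that does not reach significance at the .05 level.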