Submitted: 19 July 2025

Posted: 21 July 2025

Abstract

Keywords:

1. Introduction

2. Problem Statement

3. Literature Review

3.1. Image Segmentation Techniques for Urban Environments

3.2. Advancements in Deep Learning for Urban Scene Analysis

3.3. Utilization of Attention Mechanisms in Urban Scene Segmentation

3.4. Context-Aware Segmentation Approaches

4. Problem Formulation

4.1. Dataset Replacement

4.2. Introduction of a Competitive Layer

4.3. Model Light-Weighting

4.4. Accuracy Preservation Amidst Model Reduction

4.5. Optimization Goal

5. Analysis

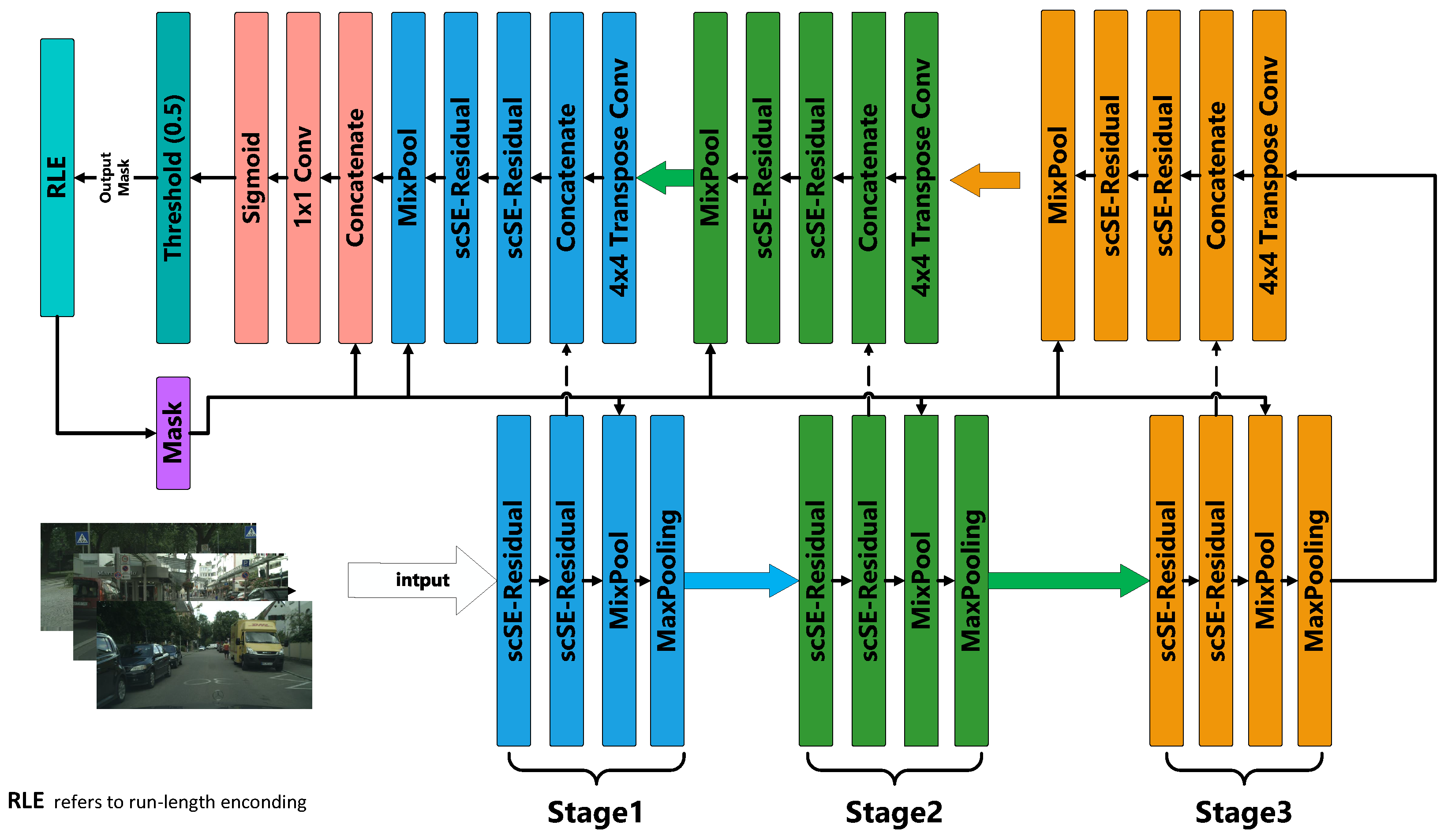

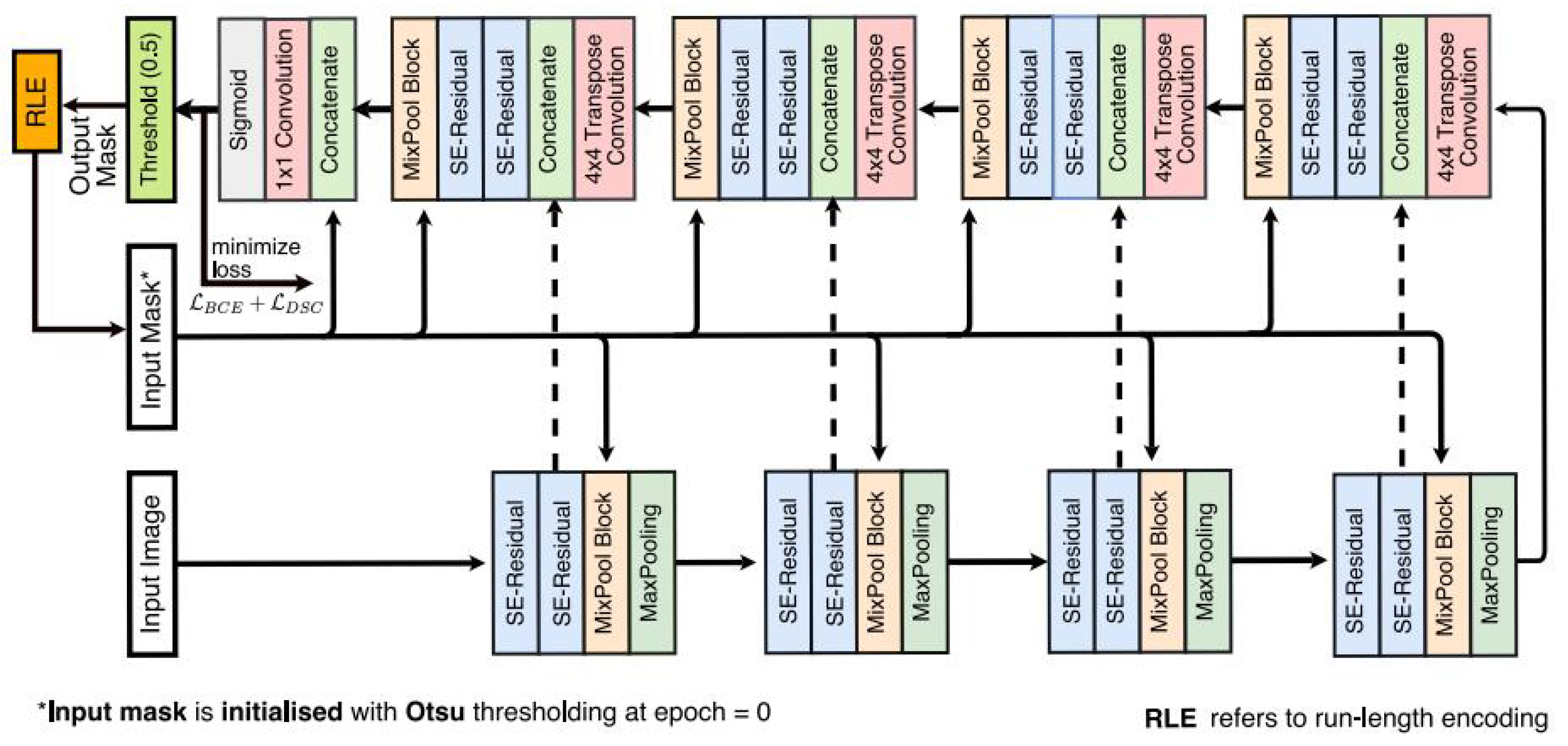

5.1. Original FANet Model: Principles and Application

5.2. Improved FANet Model with New Dataset "Cityscapes"

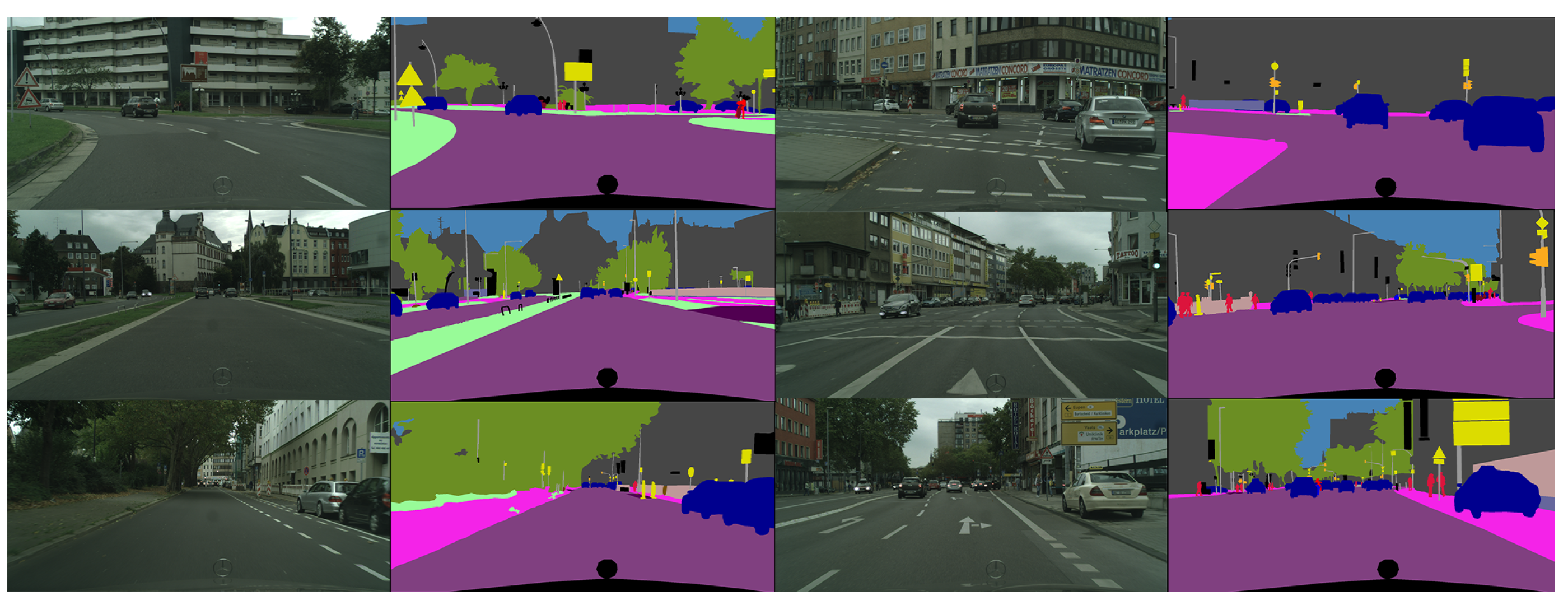

5.2.1. Dataset Description

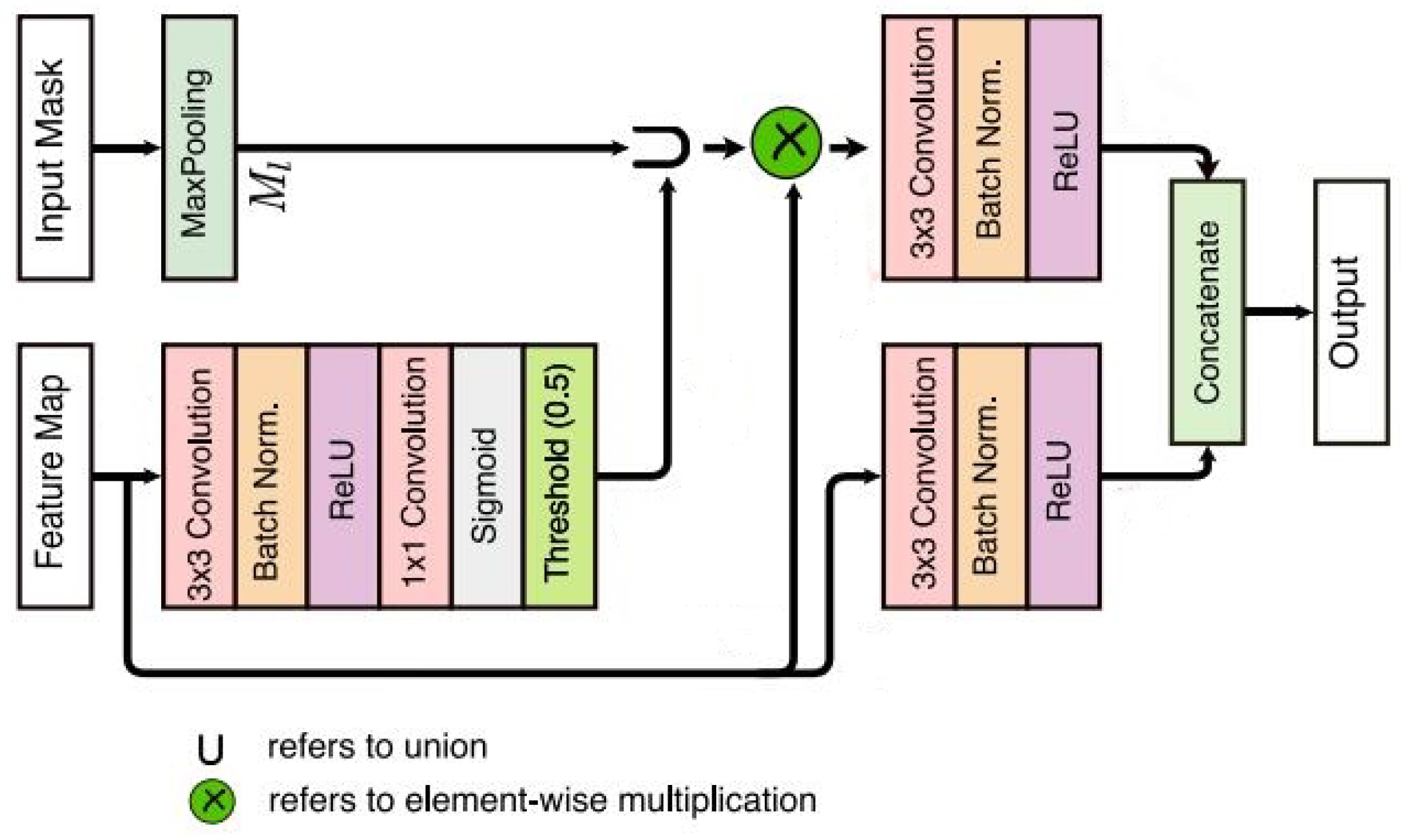

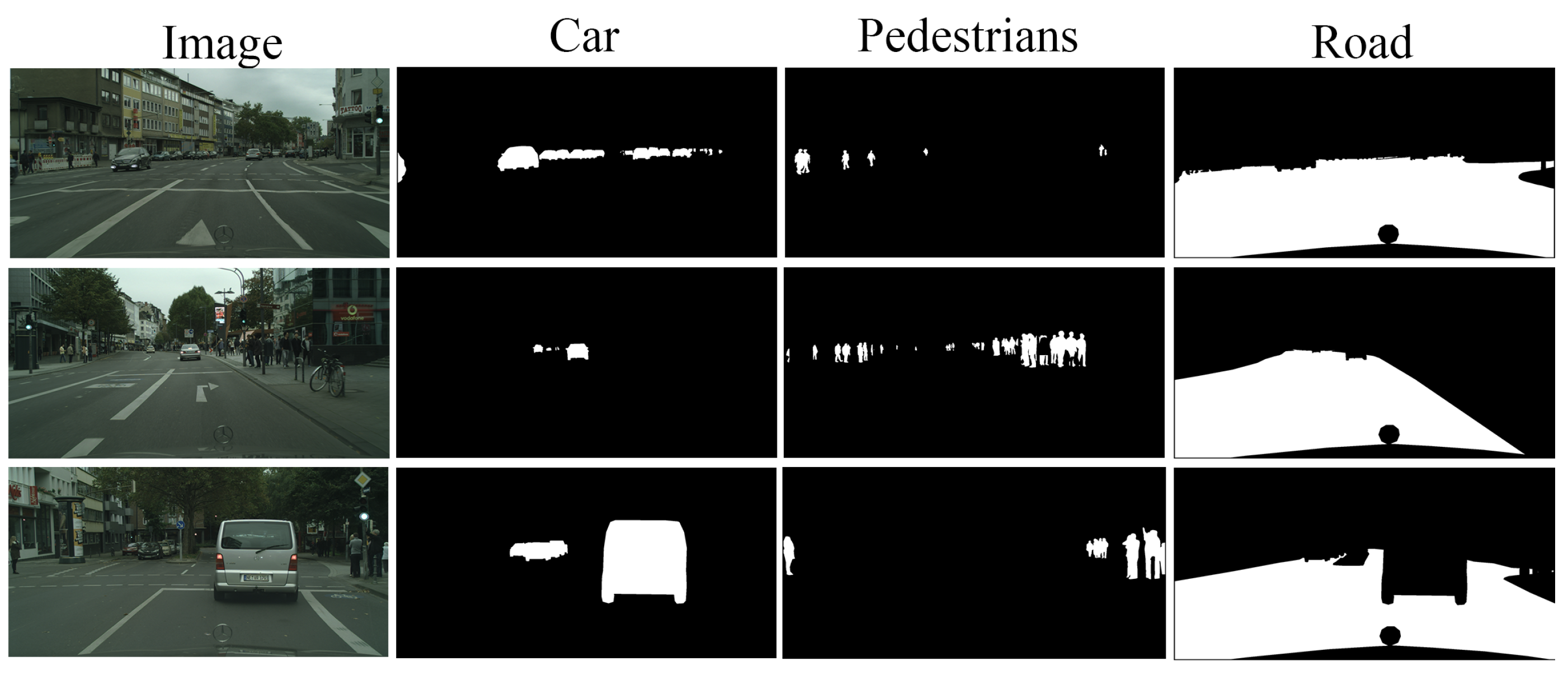

5.2.2. Adaptation to Cityscapes Dataset by Introducing a Competitive Layer

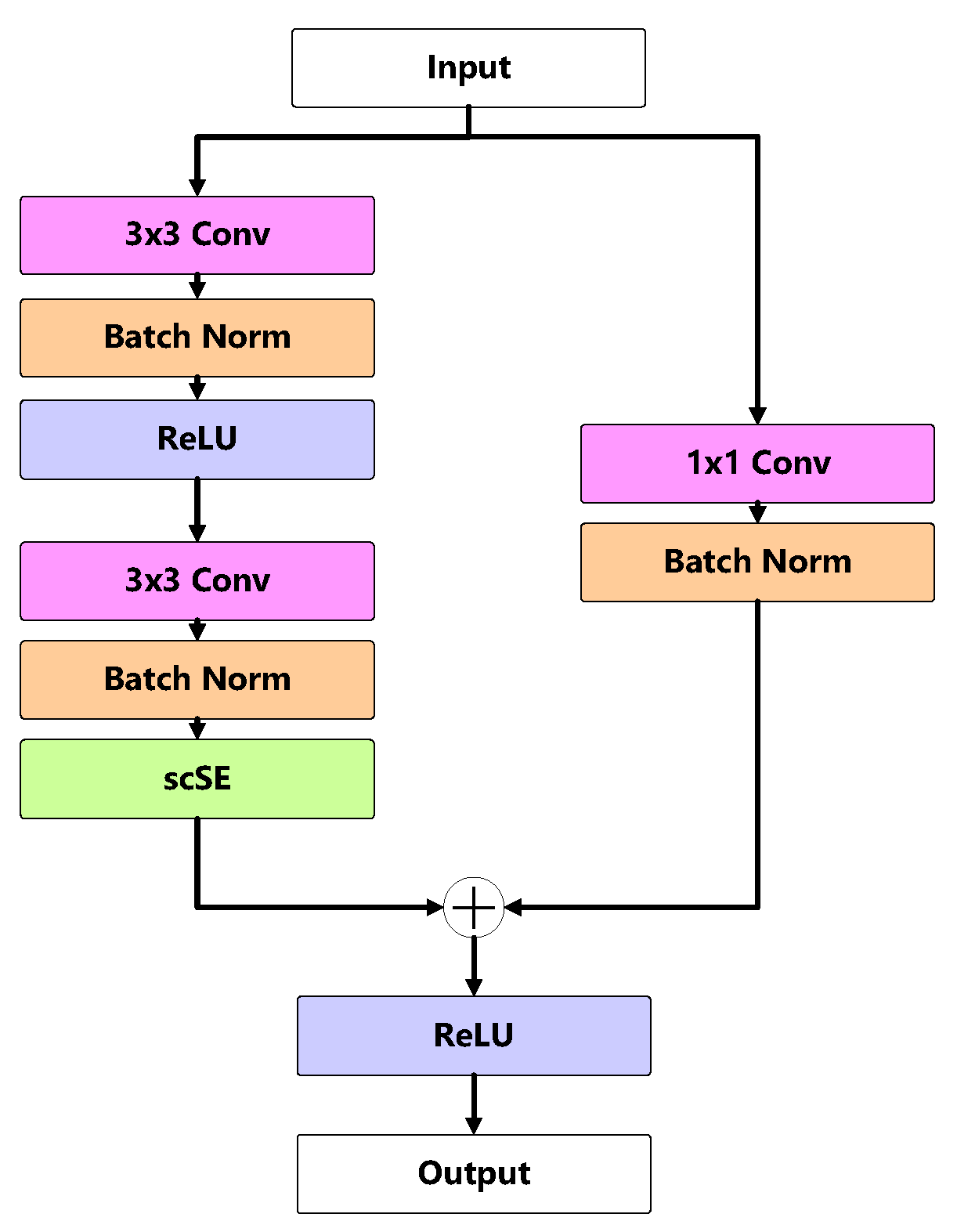

5.2.3. Model Light-Weighting

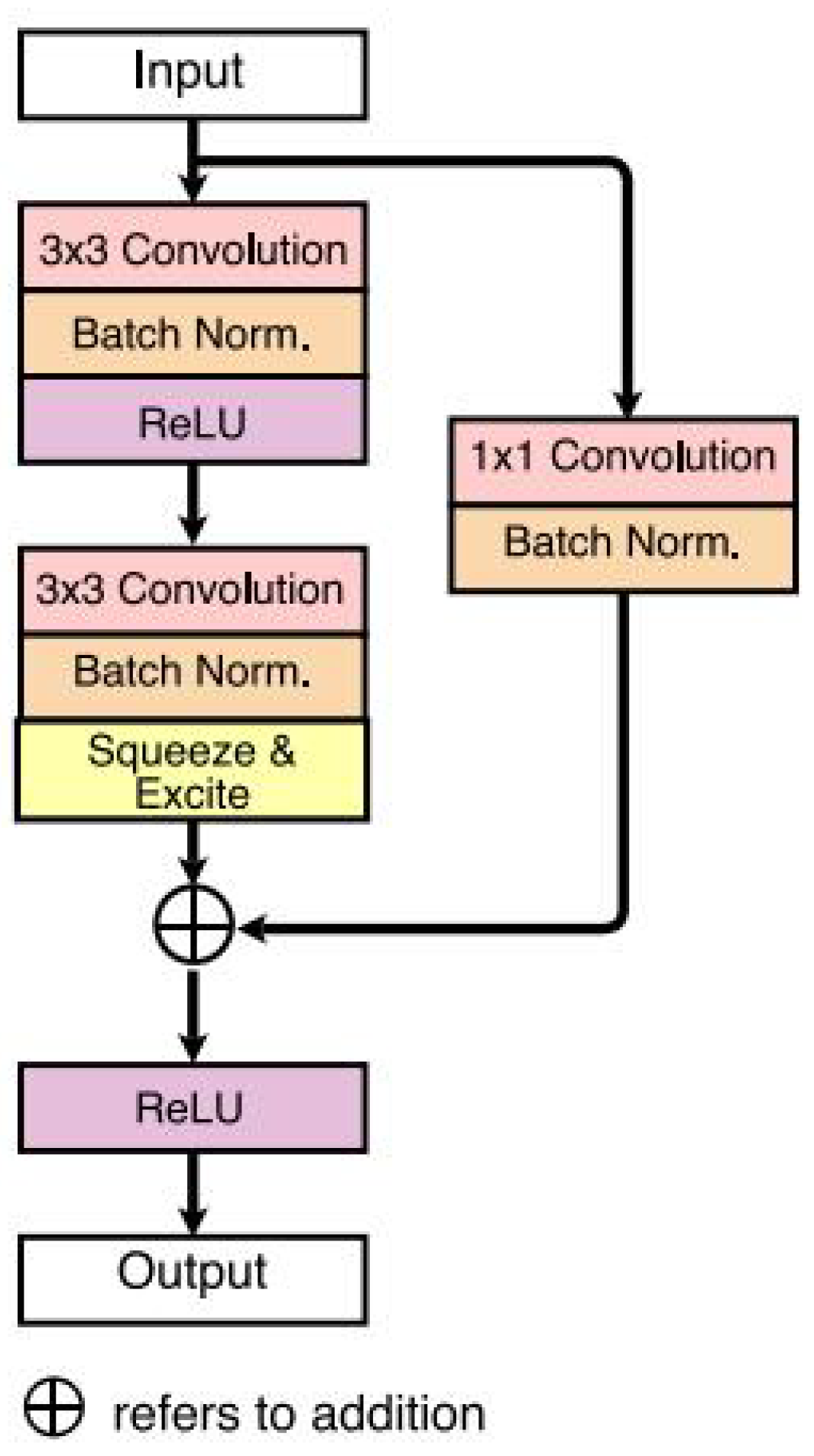

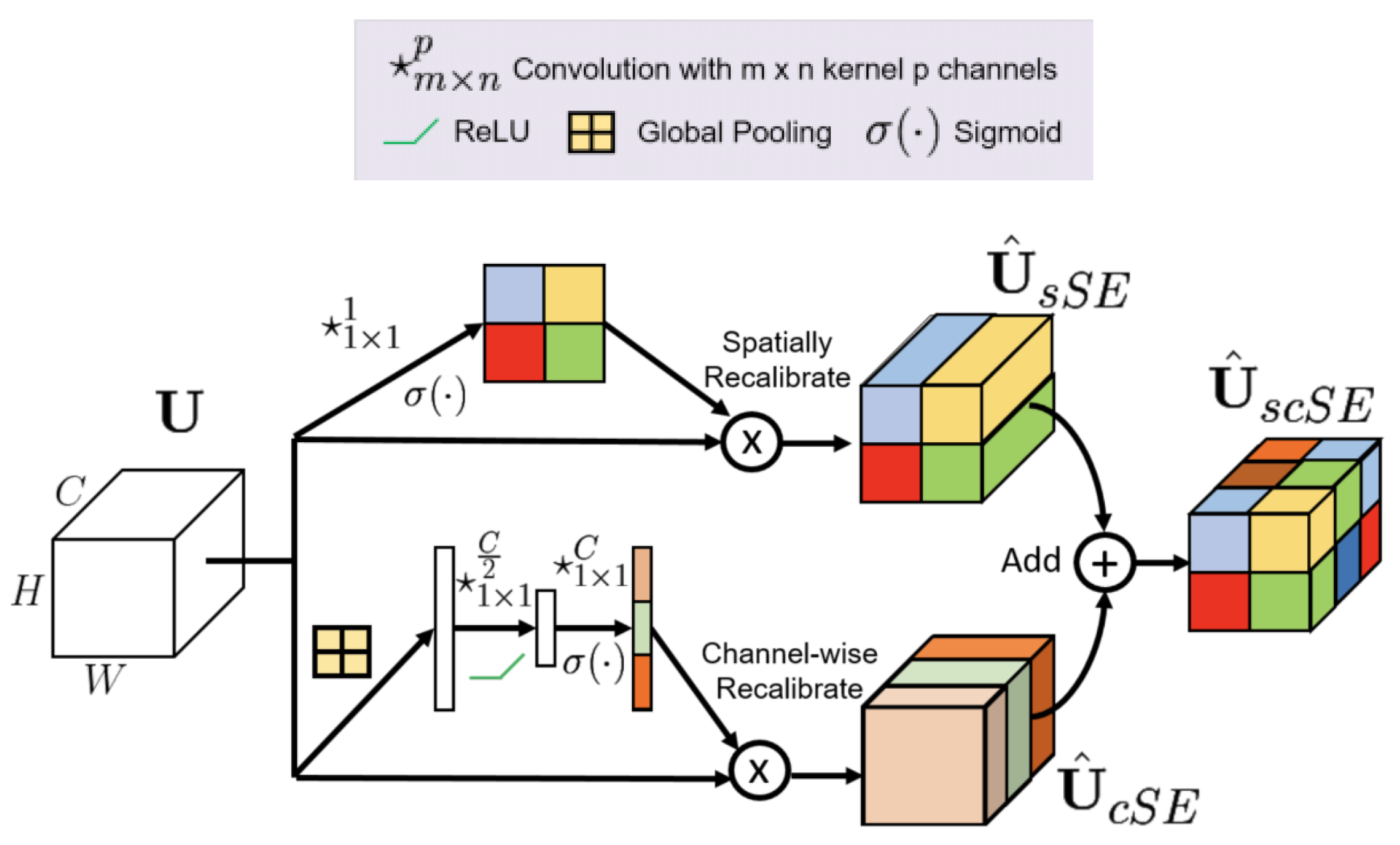

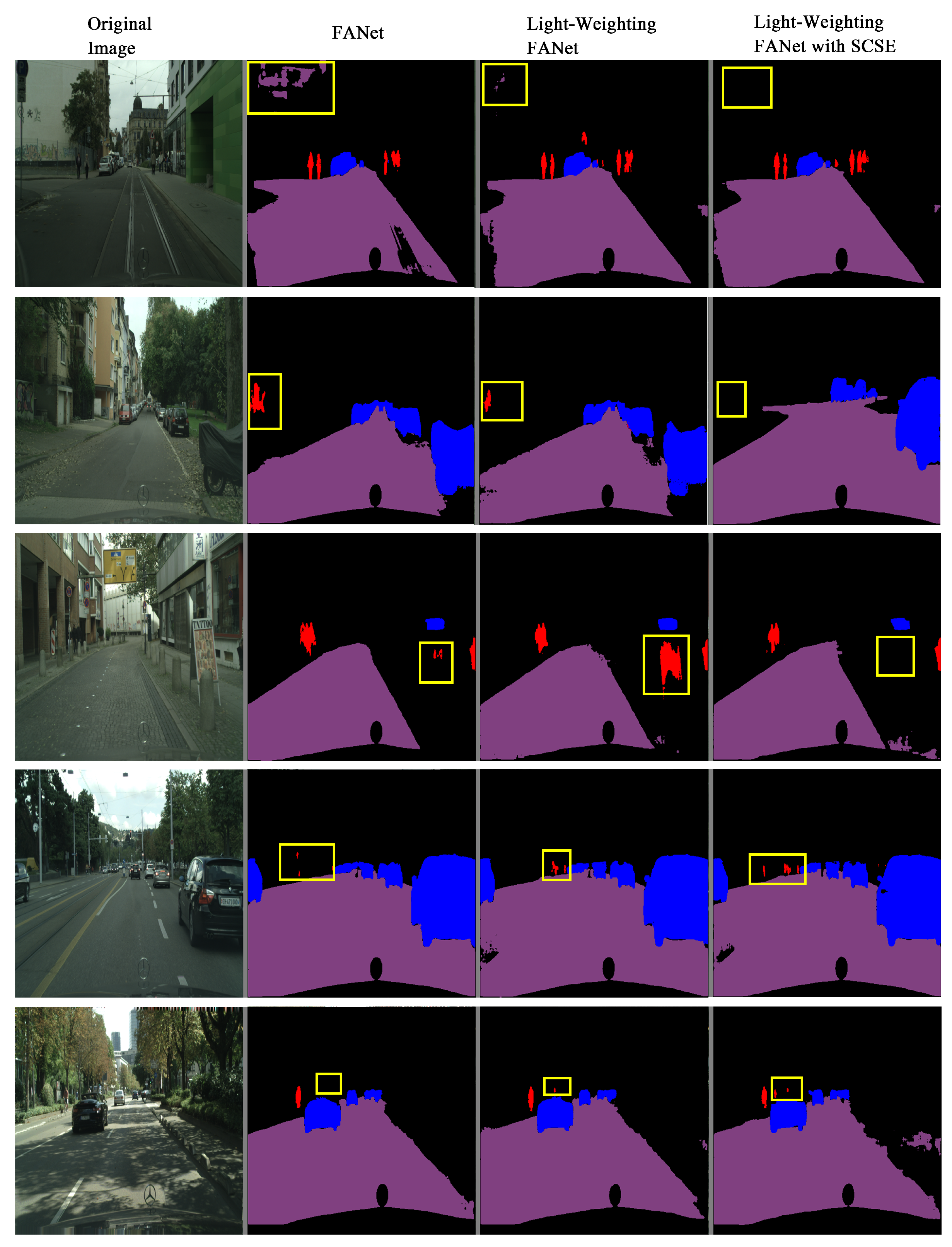

5.2.4. Optimization with Attention Mechanism Modules

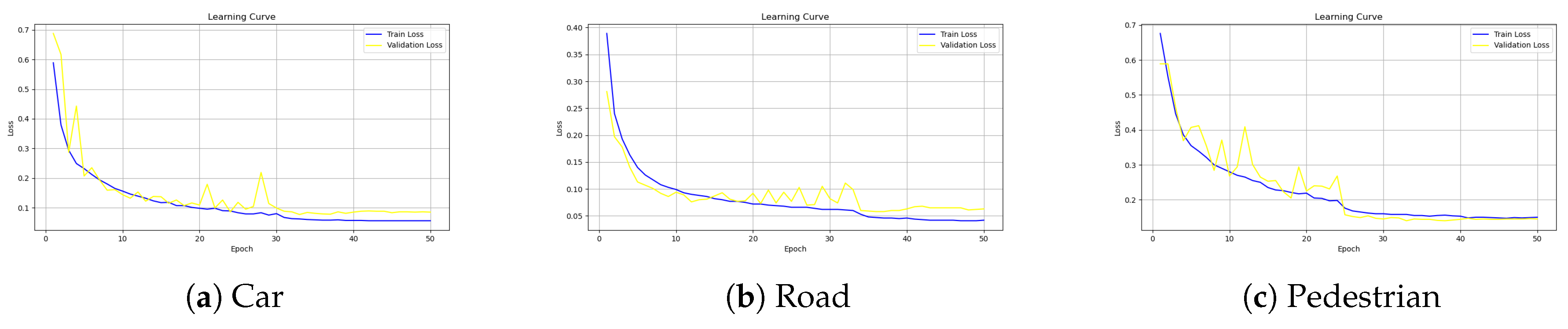

6. Experimental Results

6.1. Implementation Details

6.2. Results and Analysis

7. Discussion

8. Conclusions


| Method | Category | Jaccard | F1 | Recall | Specificity | Accuracy | F2 | mIoU | Size (MB) |
|---|---|---|---|---|---|---|---|---|---|
| Original | car | 0.0263 | 0.0322 | 0.1682 | 0.9046 | 0.915596 | 0.037733 | 0.4614 | 30 |
| Light-weighting | car | 0.0246 | 0.029 | 0.1641 | 0.905 | 0.905954 | 0.034053 | 0.4606 | 7.6 |
| Light-weighting+scSE | car | 0.0267 | 0.0329 | 0.1669 | 0.9047 | 0.915748 | 0.038513 | 0.4612 | 7.8 |
| Original | people | 0.4528 | 0.5533 | 0.572 | 0.9176 | 0.995089 | 0.549075 | 0.684 | 30 |
| Light-weighting | people | 0.4939 | 0.6054 | 0.6823 | 0.9169 | 0.995032 | 0.62651 | 0.7048 | 7.6 |
| Light-weighting+scSE | people | 0.5413 | 0.6495 | 0.6709 | 0.9184 | 0.996241 | 0.645008 | 0.7292 | 7.8 |
| Original | path | 0.0006 | 0.0012 | 0.1094 | 0.6625 | 0.655846 | 0.002224 | 0.3286 | 30 |
| Light-weighting | path | 0.0005 | 0.001 | 0.1082 | 0.6719 | 0.644969 | 0.001973 | 0.3326 | 7.6 |
| Light-weighting+scSE | path | 0.0004 | 0.0008 | 0.1088 | 0.6638 | 0.656963 | 0.001557 | 0.3282 | 7.8 |
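For readers who want to relate the columns of the table to pixel counts, the following is a minimal sketch of the standard per-class definitions of Jaccard, F1, recall, specificity, accuracy, and F2, with one class treated as foreground and everything else as background. It is not the evaluation code used for the table: the names `BinaryCounts`, `count_pixels`, `f_beta`, and `segmentation_metrics` are hypothetical, and the toy masks are made up for illustration. The mIoU column is left out because the averaging convention behind it is not stated here. If the reported values were averaged per image, they need not satisfy the identities that hold for pooled counts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BinaryCounts:
    """Pixel counts for one class treated as foreground vs. everything else."""
    tp: int  # predicted foreground, truly foreground
    fp: int  # predicted foreground, truly background
    fn: int  # predicted background, truly foreground
    tn: int  # predicted background, truly background


def count_pixels(pred, target):
    """Tally TP/FP/FN/TN from two equal-length sequences of 0/1 labels."""
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same length")
    tp = fp = fn = tn = 0
    for p, t in zip(pred, target):
        if p and t:
            tp += 1
        elif p:
            fp += 1
        elif t:
            fn += 1
        else:
            tn += 1
    return BinaryCounts(tp, fp, fn, tn)


def _safe_div(num, den):
    """Return num / den, or 0.0 when the denominator is zero."""
    return num / den if den else 0.0


def f_beta(c, beta):
    """F-beta score; beta=1 gives F1, beta=2 weights recall more (F2)."""
    precision = _safe_div(c.tp, c.tp + c.fp)
    recall = _safe_div(c.tp, c.tp + c.fn)
    b2 = beta * beta
    return _safe_div((1 + b2) * precision * recall, b2 * precision + recall)


def segmentation_metrics(c):
    """Standard binary segmentation metrics computed from pixel counts."""
    total = c.tp + c.fp + c.fn + c.tn
    return {
        "jaccard": _safe_div(c.tp, c.tp + c.fp + c.fn),
        "f1": f_beta(c, 1.0),
        "recall": _safe_div(c.tp, c.tp + c.fn),
        "specificity": _safe_div(c.tn, c.tn + c.fp),
        "accuracy": _safe_div(c.tp + c.tn, total),
        "f2": f_beta(c, 2.0),
    }


# Toy example: flattened 8-pixel prediction and ground-truth masks.
counts = count_pixels([1, 1, 0, 0, 1, 0, 0, 0], [1, 0, 0, 0, 1, 1, 0, 0])
metrics = segmentation_metrics(counts)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Because true negatives dominate when a class covers few pixels, specificity and accuracy can stay high while Jaccard and F1 are close to zero, which is the pattern in the car and path rows above.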

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).