2. Materials and Methods

2.1. Dataset description

The dataset used for this research was collected from March to December 2024 as part of the University of Georgia’s 4D farm (Digital and Data-Driven Demonstration Farm) at the Abraham Baldwin Agricultural College (ABAC) D.A.T.A. (Demonstrating Applied Technology in Agriculture) farm, located in Tifton, Georgia, USA (GPS coordinates: 31.485043, –83.543575). IoT sensors were installed on the farm to measure soil moisture content and weather conditions in the fields, as shown in Table 1.

The farm is divided into four fields (Front Field, North Pivot, South Pivot, and West Field) with a total of fourteen watermark soil moisture sensors (Realm5 Inc.) named 4D 01 to 4D 14. The Front Field contains two soil moisture sensors (4D 01 and 4D 02), while the other fields contain four sensors each: North Pivot (4D 03 to 4D 06), South Pivot (4D 07 to 4D 10), and West Field (4D 11 to 4D 14).

This study focuses exclusively on the South Pivot field. For each timestamp, the soil moisture value used in the analysis was calculated as the average of the four active sensors located in that field. Each soil moisture device records soil moisture and temperature at three depths: 8 inches, 16 inches, and 24 inches, measured in kilopascals (kPa) and Celsius (C), respectively. A single on-site weather station records the following atmospheric parameters: air temperature, dew point, humidity, solar radiation, rainfall, wind speed, wind direction, wind gust, and air pressure.

2.2. Data acquisition and preprocessing

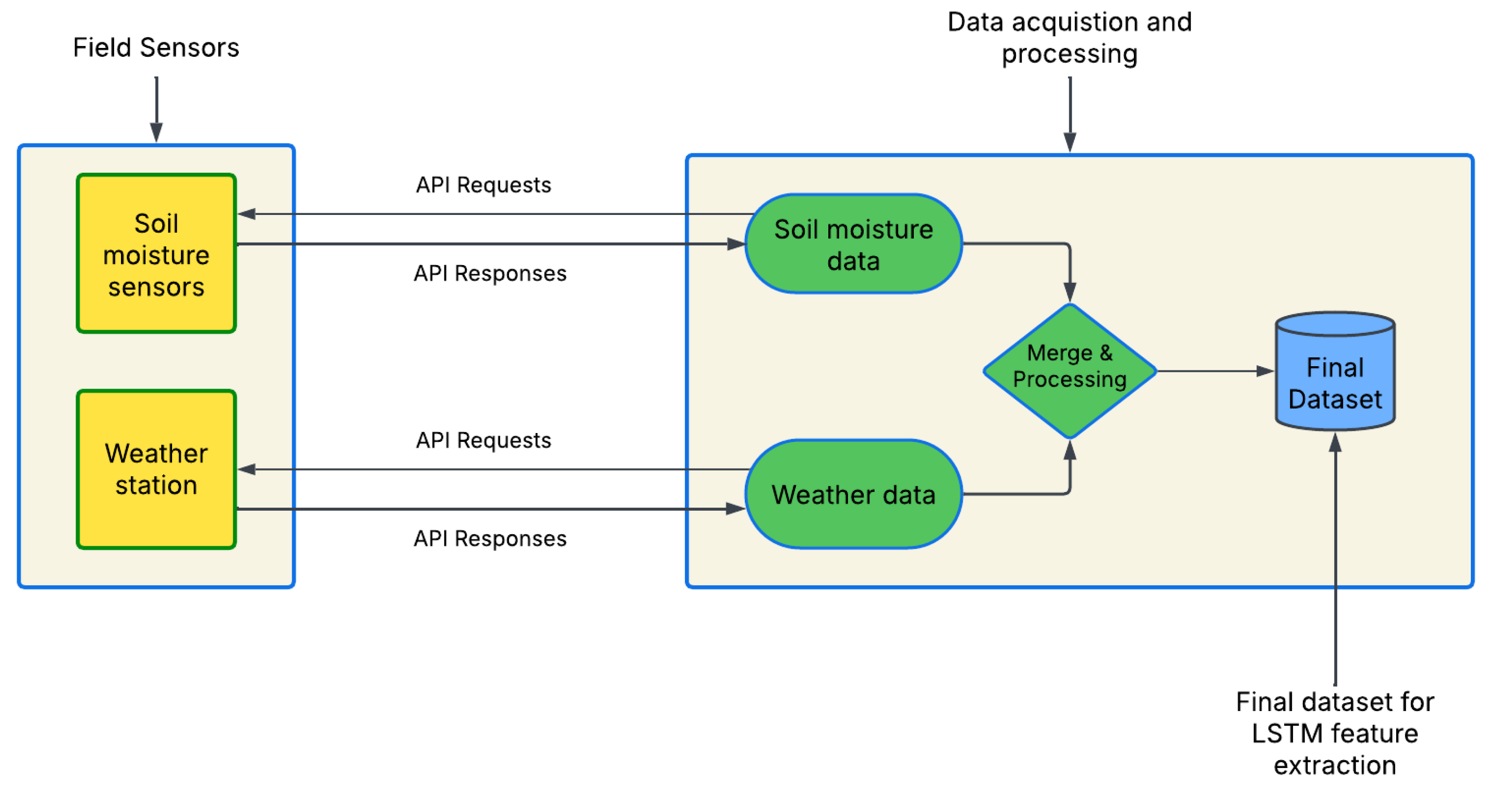

First, the soil moisture and weather measurements were retrieved from two separate web API endpoints using the Python requests library. The two streams are recorded at different intervals (weather every 15 minutes, soil moisture every hour) and were merged into a single CSV file. The distribution of the merged features is shown in Figure 2.

Figure 1 shows the data acquisition and preprocessing pipeline that generates the final dataset for model training and testing. Because weather readings are recorded at 15-minute intervals and soil moisture is recorded hourly, there are four weather readings for each soil moisture reading; the two sources were therefore merged on their timestamps.
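The merging step can be sketched with pandas as follows. This is a minimal illustration, not the study's code: the column names, the sample values, the choice to floor each 15-minute reading to its hour, and the aggregation functions (mean for temperature, sum for rainfall) are all assumptions made for the example.

```python
import pandas as pd

# Hypothetical 15-minute weather readings (column names are illustrative).
weather = pd.DataFrame({
    "timestamp": pd.date_range("2024-03-01 00:00", periods=8, freq="15min"),
    "air_temp_c": [10.0, 10.4, 10.8, 11.2, 12.0, 12.4, 12.8, 13.2],
    "rainfall_in": [0.0, 0.1, 0.0, 0.1, 0.0, 0.0, 0.2, 0.0],
})

# Hypothetical hourly soil moisture readings (kPa) from the four South Pivot sensors.
sensor_cols = ["4D 07", "4D 08", "4D 09", "4D 10"]
soil = pd.DataFrame({
    "timestamp": pd.date_range("2024-03-01 00:00", periods=2, freq="60min"),
    "4D 07": [30.0, 28.0],
    "4D 08": [32.0, 30.0],
    "4D 09": [34.0, 32.0],
    "4D 10": [36.0, 34.0],
})

# Average the four sensors per timestamp, as described for the South Pivot field.
soil["soil_moisture_kpa"] = soil[sensor_cols].mean(axis=1)

# Assumption: the four weather readings within an hour are aggregated to one row.
weather_hourly = (
    weather.assign(timestamp=weather["timestamp"].dt.floor("60min"))
    .groupby("timestamp", as_index=False)
    .agg(air_temp_c=("air_temp_c", "mean"), rainfall_in=("rainfall_in", "sum"))
)

# Join the two sources on their (now hourly) timestamps.
merged = soil[["timestamp", "soil_moisture_kpa"]].merge(
    weather_hourly, on="timestamp", how="inner"
)
```

An inner join keeps only the hours present in both sources, which is one way to avoid rows with missing weather or soil values at this stage.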

First, the timestamp column was converted to a datetime type to ensure proper handling of time-dependent data. To capture temporal dependencies in soil moisture, lagged features were created from the average moisture readings at several intervals (LSTM window): 1, 6, 12, 24, 48, and 96 hours before the current reading. These lagged features help the model learn how past moisture measurements influence current and future soil moisture levels.
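A minimal sketch of the lagged-feature construction is given below. It assumes the merged data has exactly one row per hour with no gaps, so that shifting by k rows equals shifting by k hours; the helper name `add_lag_features` and the column names are hypothetical.

```python
import pandas as pd

LAGS_HOURS = [1, 6, 12, 24, 48, 96]

def add_lag_features(df, column="soil_moisture_kpa", lags=LAGS_HOURS):
    """Add one column per lag holding the value observed `lag` rows (hours) earlier."""
    out = df.copy()
    for lag in lags:
        out[f"{column}_lag_{lag}h"] = out[column].shift(lag)
    return out

# Synthetic hourly series: the value at hour i is simply i.
hourly = pd.DataFrame({
    "timestamp": pd.date_range("2024-03-01", periods=120, freq="60min"),
    "soil_moisture_kpa": [float(i) for i in range(120)],
})

lagged = add_lag_features(hourly)

# The first 96 rows lack a 96-hour history, so they contain NaN and are dropped.
clean = lagged.dropna().reset_index(drop=True)
```

With the longest lag at 96 hours, the first four days of any series are lost when incomplete rows are removed.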

Missing values in the dataset are addressed by removing any rows with incomplete data, ensuring that only valid, clean data is used for model training. The data is then normalized using the MinMaxScaler, which scales the features to a range between 0 and 1, improving the convergence speed of the machine learning models and ensuring that no feature dominates due to its scale. Data is split into 60% training, 20% validation and 20% testing.
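The cleaning, scaling, and splitting steps can be sketched in NumPy as below. Two choices are assumptions that the text does not specify: the split is chronological (no shuffling), and the minimum and maximum are taken from the training portion only, a common precaution against information leaking from the validation and test periods. The scaling formula matches the default 0 to 1 range of MinMaxScaler; the function names are illustrative.

```python
import numpy as np

def chronological_split(data, train_frac=0.6, val_frac=0.2):
    """Split rows in time order into training, validation, and test parts."""
    n = len(data)
    n_train = int(round(n * train_frac))
    n_val = int(round(n * val_frac))
    return data[:n_train], data[n_train:n_train + n_val], data[n_train + n_val:]

def fit_min_max(train):
    """Per-feature minimum and range, guarding against constant columns."""
    lo, hi = train.min(axis=0), train.max(axis=0)
    span = np.where(hi > lo, hi - lo, 1.0)
    return lo, span

def apply_min_max(data, lo, span):
    return (data - lo) / span

# Synthetic two-feature data with one incomplete row.
raw = np.column_stack([np.arange(11.0), np.arange(11.0) * 2 + 5])
raw[3, 1] = np.nan

# Remove any row that contains a missing value.
clean = raw[~np.isnan(raw).any(axis=1)]

train, val, test = chronological_split(clean)
lo, span = fit_min_max(train)
train_s, val_s, test_s = (apply_min_max(part, lo, span) for part in (train, val, test))
```

When the scaler is fitted on the training portion only, later values can fall slightly outside the 0 to 1 range, as happens with the synthetic test rows here.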

Figure 1. Data acquisition and processing workflow.

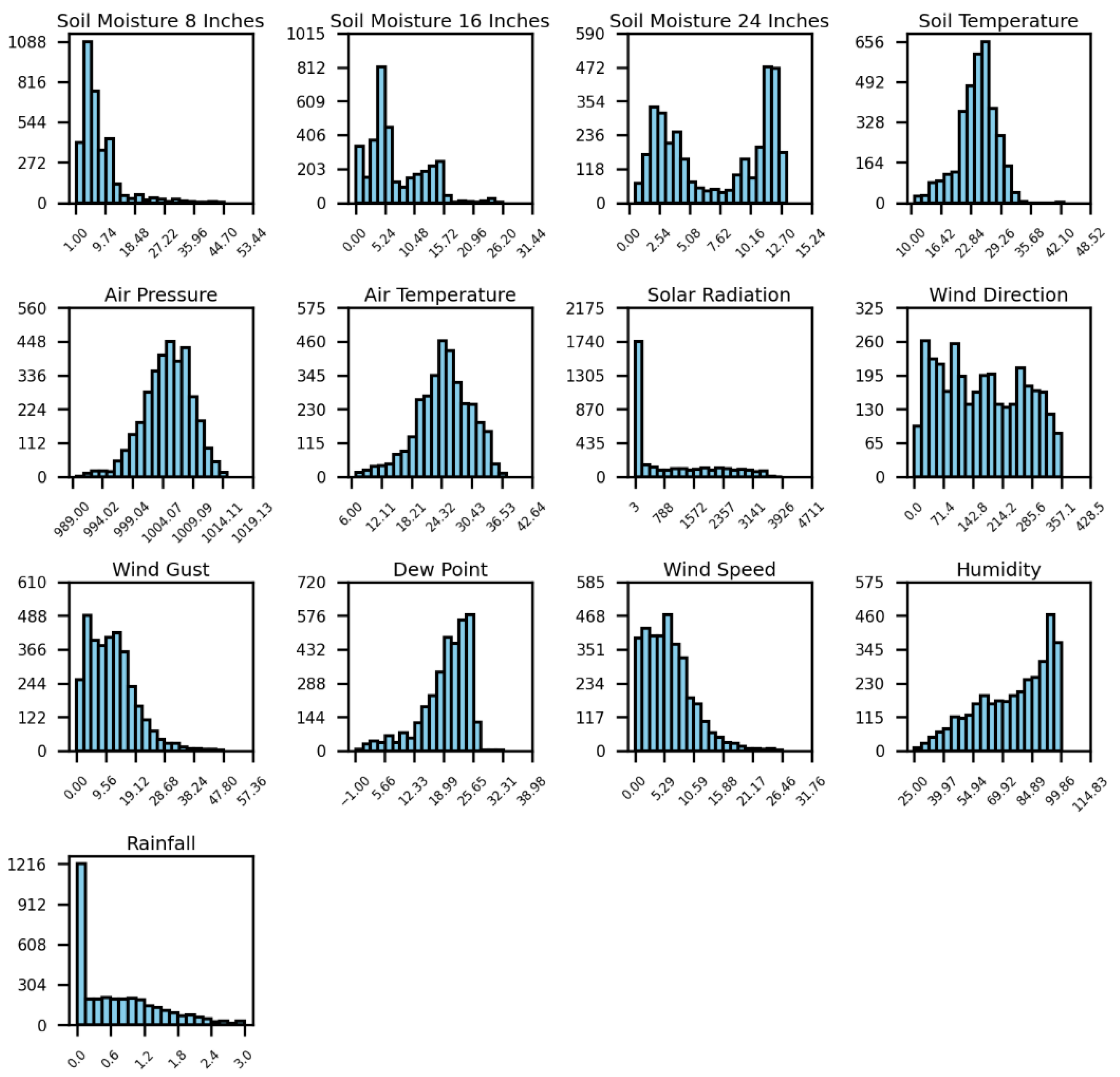

Figure 2 shows the distribution of each feature over the ten months (March 2024 to December 2024) in the South Pivot field. In the merged dataset, soil moisture is recorded at three depths (8, 16, and 24 inches), and the average value is used as the target variable for prediction. Soil temperature, measured by the same IoT sensor device, is another parameter considered. The remaining features are measured by the weather station sensors: air temperature, air pressure (Pa), solar radiation (W/m²), wind direction (degrees), wind gust (kph), wind speed (kph), humidity (%), dew point temperature (°C), and rainfall (in.).

Figure 2. Feature distribution for model inputs during 2024 in the South Pivot field.

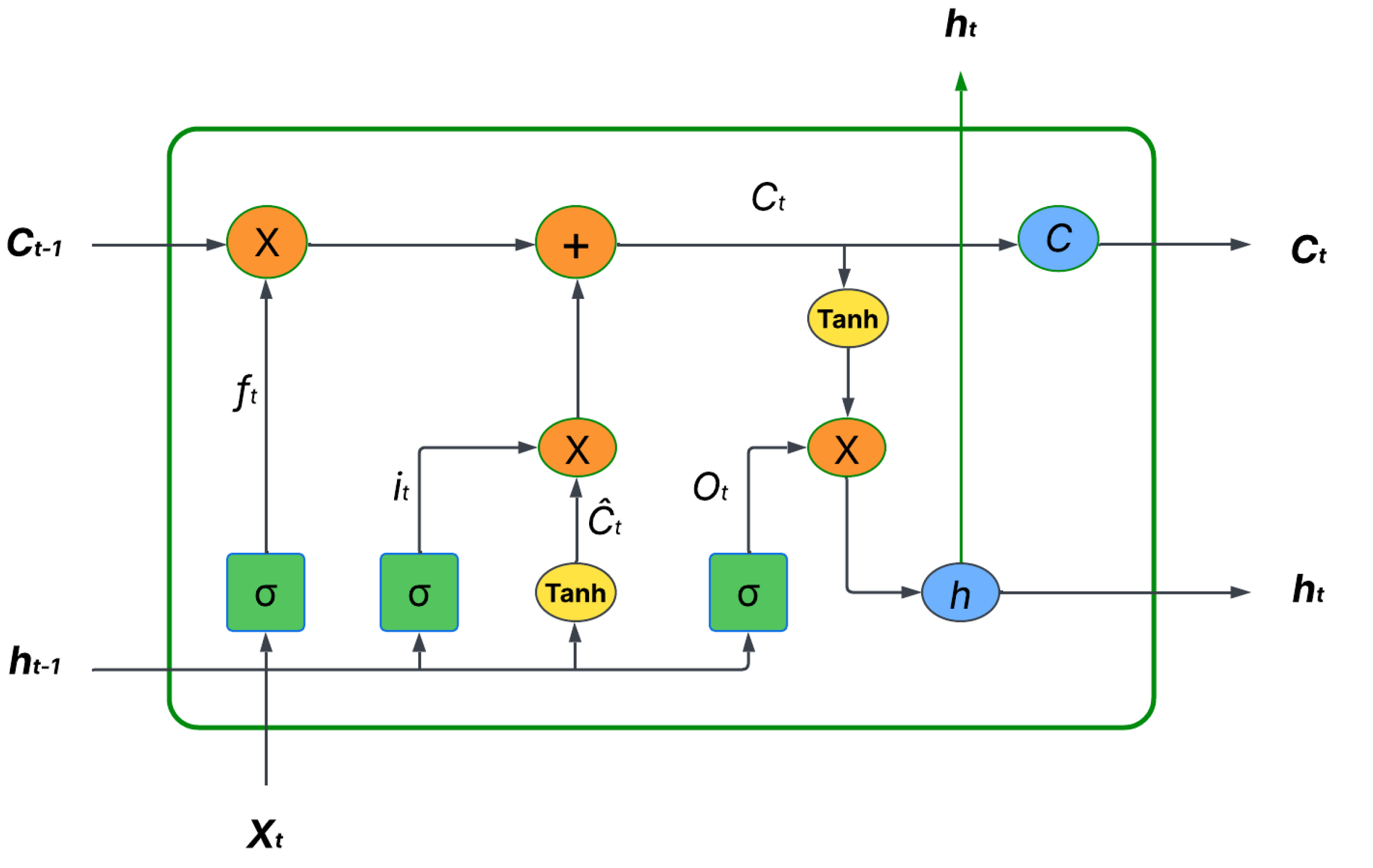

2.3. General LSTM architecture

An LSTM is a variant of the recurrent neural network (RNN) designed to overcome the vanishing and exploding gradient issues that arise in time-series forecasting. It contains memory cells that store long-term dependencies, making it particularly effective for soil moisture prediction, where past moisture conditions influence future values. A standard LSTM unit consists of an input gate, which updates the cell state with new information; a forget gate, which decides what information from previous states should be dropped; and an output gate, which determines the final output based on the cell state. Figure 3 shows a general LSTM architecture.

Figure 3. General LSTM architecture with input gate, forget gate and output gate [28].

The standard LSTM block is presented by equations (1) to (6):
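For reference, a standard formulation of the LSTM block, using the symbols described in the following paragraph, can be written as below. The candidate cell state $\tilde{c}_t$, the cell state $c_t$, and the parameters $W_c$ and $b_c$ are names assumed here for illustration, and the order of the six relations is not necessarily that of equations (1) to (6).

```latex
\begin{aligned}
i_t &= \sigma\left(W_i\,[h_{t-1}, x_t] + b_i\right) \\
f_t &= \sigma\left(W_f\,[h_{t-1}, x_t] + b_f\right) \\
o_t &= \sigma\left(W_o\,[h_{t-1}, x_t] + b_o\right) \\
\tilde{c}_t &= \tanh\left(W_c\,[h_{t-1}, x_t] + b_c\right) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
```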

The input gate, denoted $i_t$, determines which new information from the current input $x_t$ and the previous hidden state $h_{t-1}$ should be added to the cell state. Its activation is calculated using the sigmoid function $\sigma$, applied to a weighted sum of the concatenated previous hidden state and input $[h_{t-1}, x_t]$, with its associated weight matrix $W_i$ and bias $b_i$. Similarly, the forget gate, denoted $f_t$, decides what information to discard from the cell state based on its own weight matrix $W_f$, bias $b_f$, and sigmoid activation $\sigma$. The output gate, $o_t$, controls what information from the current cell state is output as the hidden state $h_t$. It also uses the sigmoid function $\sigma$, applied to a weighted combination of $[h_{t-1}, x_t]$ with its weight matrix $W_o$ and bias $b_o$. The symbol $\odot$ represents element-wise multiplication, the operation the LSTM uses to selectively filter and combine information. The hyperbolic tangent function, tanh, produces outputs in the range (−1, 1) and is applied when calculating the candidate values and the final hidden state.
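The gate computations described above can be sketched as a single time step in NumPy. This is an illustrative implementation of the standard LSTM cell, not the study's code; the dictionary layout of the weights, the key "c" for the candidate-state parameters, and the toy dimensions are assumptions.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, b):
    """One LSTM time step.

    W[k] has shape (hidden, hidden + n_in) and b[k] has shape (hidden,)
    for k in "i" (input), "f" (forget), "o" (output), "c" (candidate).
    """
    z = np.concatenate([h_prev, x_t])        # [h_{t-1}, x_t]
    i_t = sigmoid(W["i"] @ z + b["i"])       # input gate
    f_t = sigmoid(W["f"] @ z + b["f"])       # forget gate
    o_t = sigmoid(W["o"] @ z + b["o"])       # output gate
    c_hat = np.tanh(W["c"] @ z + b["c"])     # candidate cell values
    c_t = f_t * c_prev + i_t * c_hat         # element-wise update of the cell state
    h_t = o_t * np.tanh(c_t)                 # new hidden state
    return h_t, c_t

# Run the cell over a toy 24-step sequence with 3 input features.
rng = np.random.default_rng(0)
n_in, hidden = 3, 4
W = {k: rng.normal(scale=0.1, size=(hidden, hidden + n_in)) for k in "ifoc"}
b = {k: np.zeros(hidden) for k in "ifoc"}

h, c = np.zeros(hidden), np.zeros(hidden)
for x_t in rng.normal(size=(24, n_in)):
    h, c = lstm_step(x_t, h, c, W, b)
```

Because the hidden state is a sigmoid-gated tanh of the cell state, every component of `h` stays strictly between -1 and 1 regardless of the input scale.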

2.4. Proposed LSTM architecture

The proposed LSTM model consists of three sequential LSTM layers followed by a dropout layer and two fully connected (dense) layers, as summarized in Table 2. The input to the model is a 24-hour time series, where 24 is the number of time steps and 32 is the batch size. The first LSTM layer outputs a sequence of 256 features for each time step, which is passed to the second LSTM layer, reducing it to 128 features per time step. The third LSTM layer outputs only the final hidden state of size 64, capturing the summarized temporal representation. A dropout layer is applied for regularization. The output is then passed through a dense layer with 256 neurons (output features) and a final dense layer with 168 neurons, which predicts soil moisture values for the next 168 hours (7-day forecast).
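The input and output shapes imply that each training sample pairs a 24-step window of features with the 168 soil moisture values that follow it. A minimal NumPy sketch of such a windowing step is given below; the function name `make_windows`, the one-hour stride between consecutive windows, and the synthetic data are assumptions, since the text does not describe how the windows were built.

```python
import numpy as np

def make_windows(features, target, n_in=24, n_out=168):
    """Build (input window, forecast target) pairs from an hourly series.

    features: array of shape (T, F); target: array of shape (T,).
    Returns X of shape (N, n_in, F) and Y of shape (N, n_out).
    """
    X, Y = [], []
    last_start = len(target) - n_in - n_out
    for start in range(last_start + 1):
        X.append(features[start:start + n_in])                  # past 24 hours
        Y.append(target[start + n_in:start + n_in + n_out])     # next 168 hours
    return np.asarray(X), np.asarray(Y)

# Synthetic example: 300 hourly rows with 5 features; the first column is the target.
rng = np.random.default_rng(0)
features = rng.normal(size=(300, 5))
target = features[:, 0]

X, Y = make_windows(features, target)
```

With 300 rows, 300 - 24 - 168 + 1 = 109 windows are produced, so `X` has shape (109, 24, 5) and `Y` has shape (109, 168); batches of 32 such samples would then be fed to the network.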

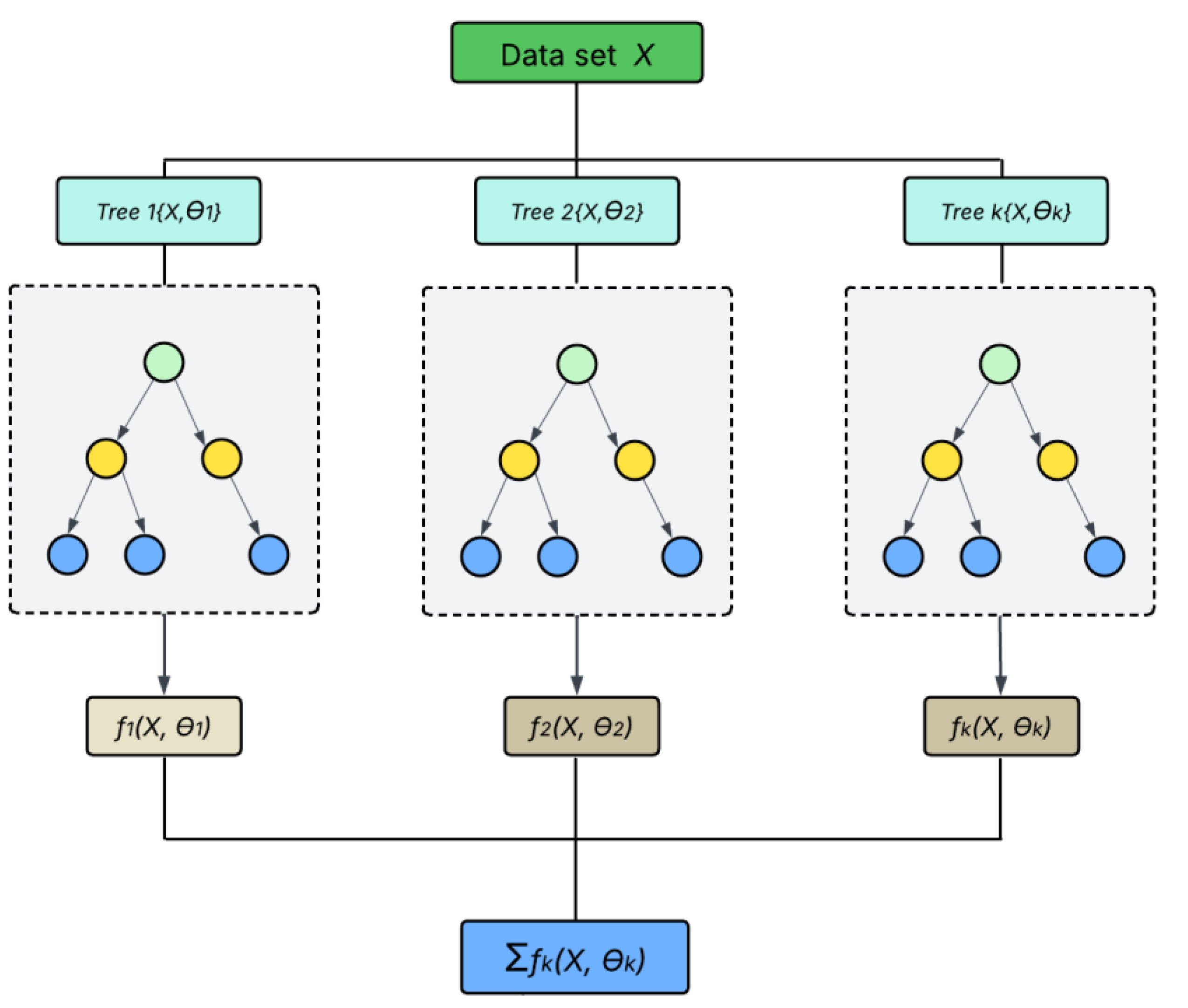

2.5. General XGBoost architecture

XGBoost is an ensemble learning method derived from gradient boosting. It uses decision trees as base learners, built sequentially so that each tree corrects the errors made by the trees before it, a process called boosting. This makes it a suitable choice for time series data. Figure 4 presents the general XGBoost workflow.
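To make the boosting idea concrete, the sketch below repeatedly fits a one-split regression stump to the residuals of the running prediction. It is plain gradient boosting on squared error written in NumPy, not XGBoost itself, which additionally uses regularization and second-order gradient information. The function names and the synthetic sine data are assumptions for illustration.

```python
import numpy as np

def fit_stump(x, residual):
    """Best single-split stump on a 1-D feature (needs at least 2 distinct x values)."""
    best = None
    for threshold in np.unique(x)[:-1]:
        left = x <= threshold
        left_val, right_val = residual[left].mean(), residual[~left].mean()
        sse = ((residual[left] - left_val) ** 2).sum() + ((residual[~left] - right_val) ** 2).sum()
        if best is None or sse < best[0]:
            best = (sse, threshold, left_val, right_val)
    return best[1:]

def predict_stump(stump, x):
    threshold, left_val, right_val = stump
    return np.where(x <= threshold, left_val, right_val)

def boost(x, y, n_rounds=50, learning_rate=0.1):
    """Each round fits a stump to the current residuals and adds a damped correction."""
    base = y.mean()
    pred = np.full_like(y, base, dtype=float)
    stumps, history = [], []
    for _ in range(n_rounds):
        residual = y - pred                      # errors left by the previous trees
        stump = fit_stump(x, residual)
        pred = pred + learning_rate * predict_stump(stump, x)
        stumps.append(stump)
        history.append(float(np.mean((y - pred) ** 2)))
    return base, stumps, history

rng = np.random.default_rng(0)
x = np.linspace(0.0, 6.0, 200)
y = np.sin(x) + rng.normal(scale=0.1, size=x.size)

base, stumps, history = boost(x, y)
```

The `history` list records the training MSE after each round; it never increases, because every stump is the least-squares fit to the current residuals and the learning rate only damps the correction.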

Figure 4. General XGBoost architecture [29].

2.6. Proposed XGBoost architecture

The proposed XGBoost model architecture is configured with key hyperparameters optimized for stability and performance, as shown in Table 3. It uses 500 boosting rounds (n_estimators = 500) to iteratively improve model accuracy, with a learning rate of 0.05 to control the step size and reduce the risk of overfitting. The maximum tree depth is set to 5 to balance model complexity and generalization. A fixed random seed (random_state = 42) ensures reproducibility of results across runs.
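These settings map directly onto a parameter dictionary. The sketch below assumes the scikit-learn style `XGBRegressor` wrapper from the `xgboost` package; the text does not state which interface was used, so the commented usage lines are illustrative only, and any further settings listed in Table 3 are not repeated here.

```python
# Hyperparameters named in the text; anything not listed is assumed
# to stay at the library default.
xgb_params = {
    "n_estimators": 500,     # number of boosting rounds
    "learning_rate": 0.05,   # shrinkage applied to each tree's contribution
    "max_depth": 5,          # maximum depth of each tree
    "random_state": 42,      # fixed seed for reproducibility
}

# Assumed usage (requires the xgboost package):
# from xgboost import XGBRegressor
# model = XGBRegressor(**xgb_params)
```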

2.7. Experiment setup

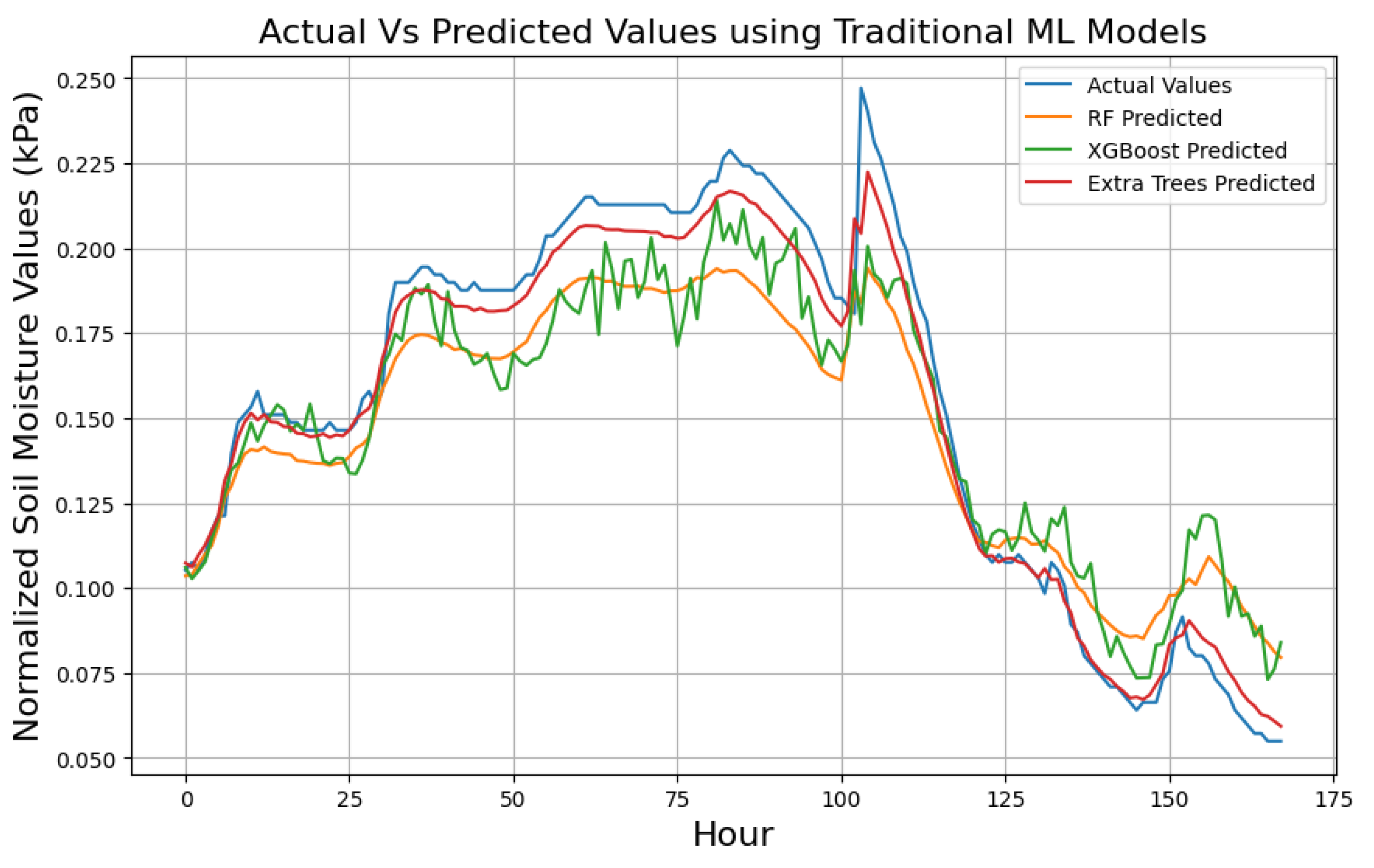

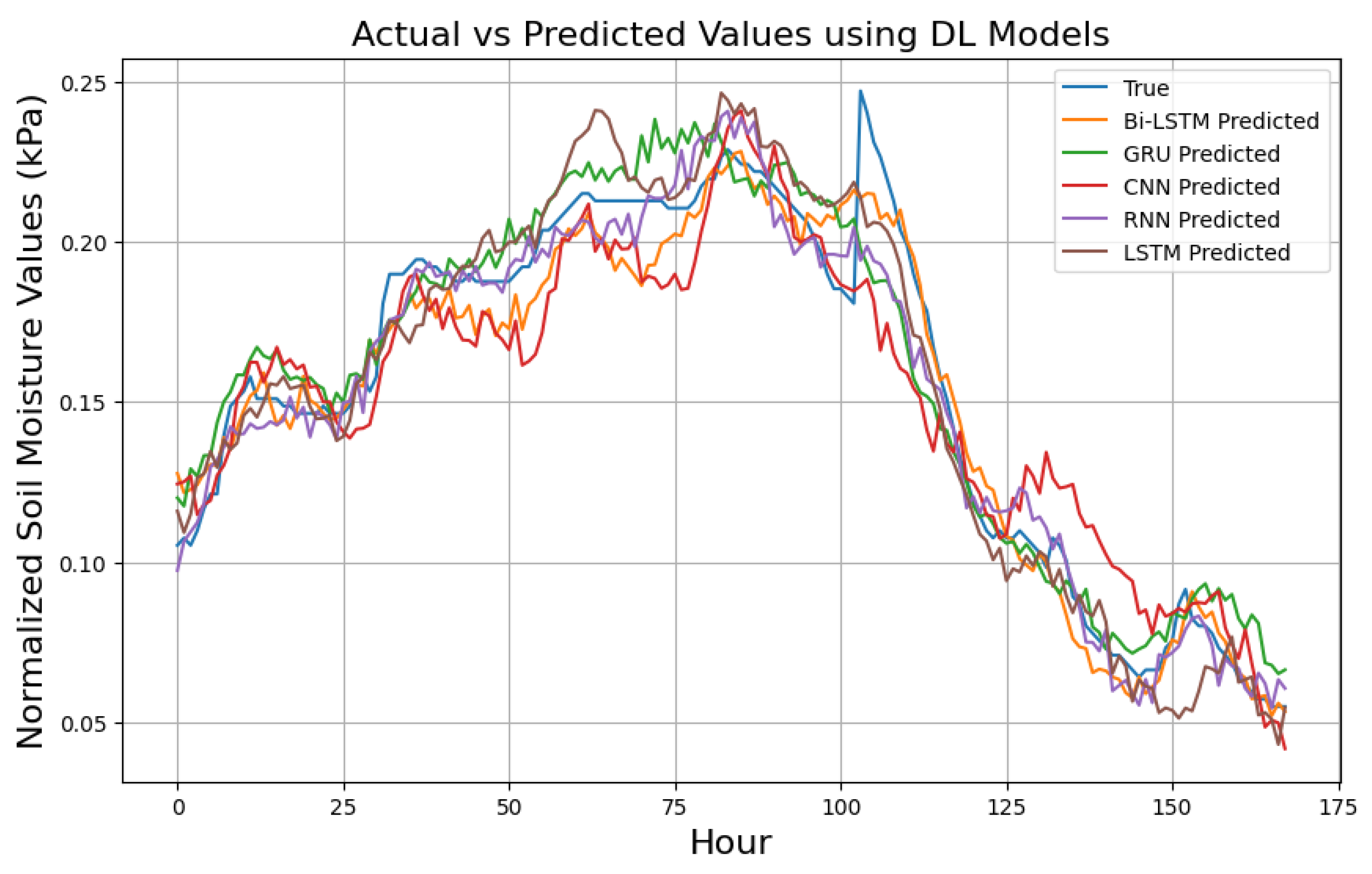

The experiments in this study evaluated standalone ML models, standalone DL models, and hybrid ML-DL models for predicting soil moisture trends over 24-hour, 72-hour, and 168-hour horizons. First, the ML models tested were XGBoost, RF, GB, SVR, LightGBM, LR, ElasticNet, CatBoost, DT, MLP, AdaBoost, and KNN. Second, five DL architectures were tested: GRU, CNN, RNN, Bi-LSTM, and LSTM. The LSTM model comprised three stacked LSTM layers followed by a dropout layer and a dense layer, allowing it to model long-term temporal dependencies.

The GRU model followed this structure but used GRU units, offering comparable performance with reduced computational overhead. The CNN model consisted of a sequence of 1D convolutional (Conv1D) and max-pooling layers to extract localized temporal features, followed by a flattening operation and dense layer for prediction. The RNN model used three recurrent layers and a dense output layer, providing a baseline for evaluating short-term dependency modeling. The Bi-LSTM model integrated three bidirectional LSTM layers, enabling the network to process input sequences in both forward and backward directions and capture richer contextual information. These DL architectures are summarized in Table 4.

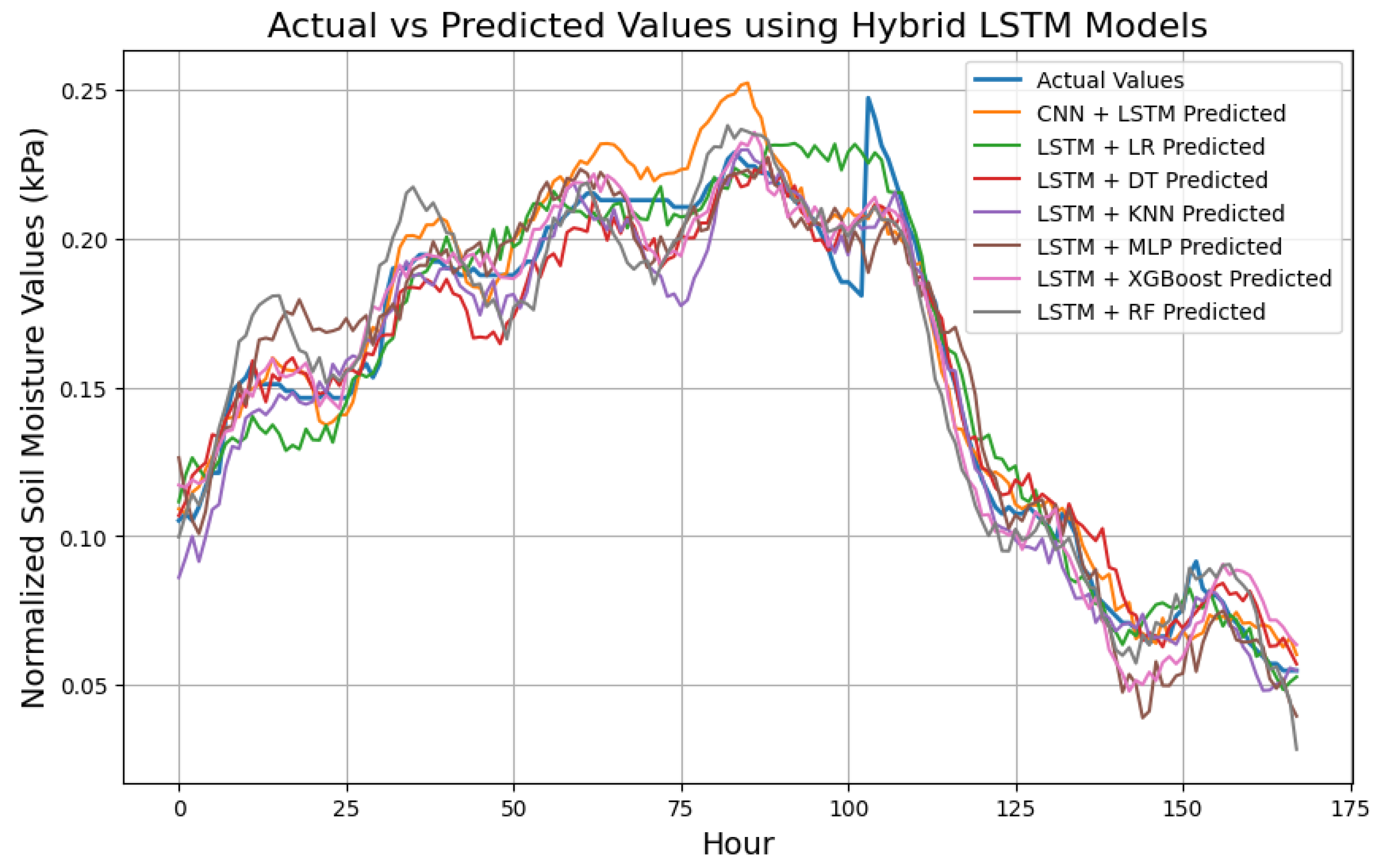

Lastly, beyond standalone models, we also developed hybrid architectures that combined LSTM-based temporal feature extraction with machine learning regressors for final prediction. These hybrid models paired LSTM with RF, LR, XGBoost, KNN, MLP, DT, and CNN. In most of these hybrids, the LSTM extracted features that were then passed to a regressor for the final prediction; in the CNN + LSTM model, by contrast, the CNN layers performed the initial feature extraction before passing the sequence to the LSTM layers for further temporal modeling and prediction.

Finally, we proposed a hybrid model combining LSTM for feature extraction with XGBoost for the final prediction task. This approach capitalizes on the temporal learning capabilities of LSTM and the robust, non-linear decision-making strength of XGBoost. All models were evaluated across the same multi-step horizons (24, 72, and 168 hours) to ensure consistent comparison.

Table 4 presents the DL model architectures used in the study along with their structural details.

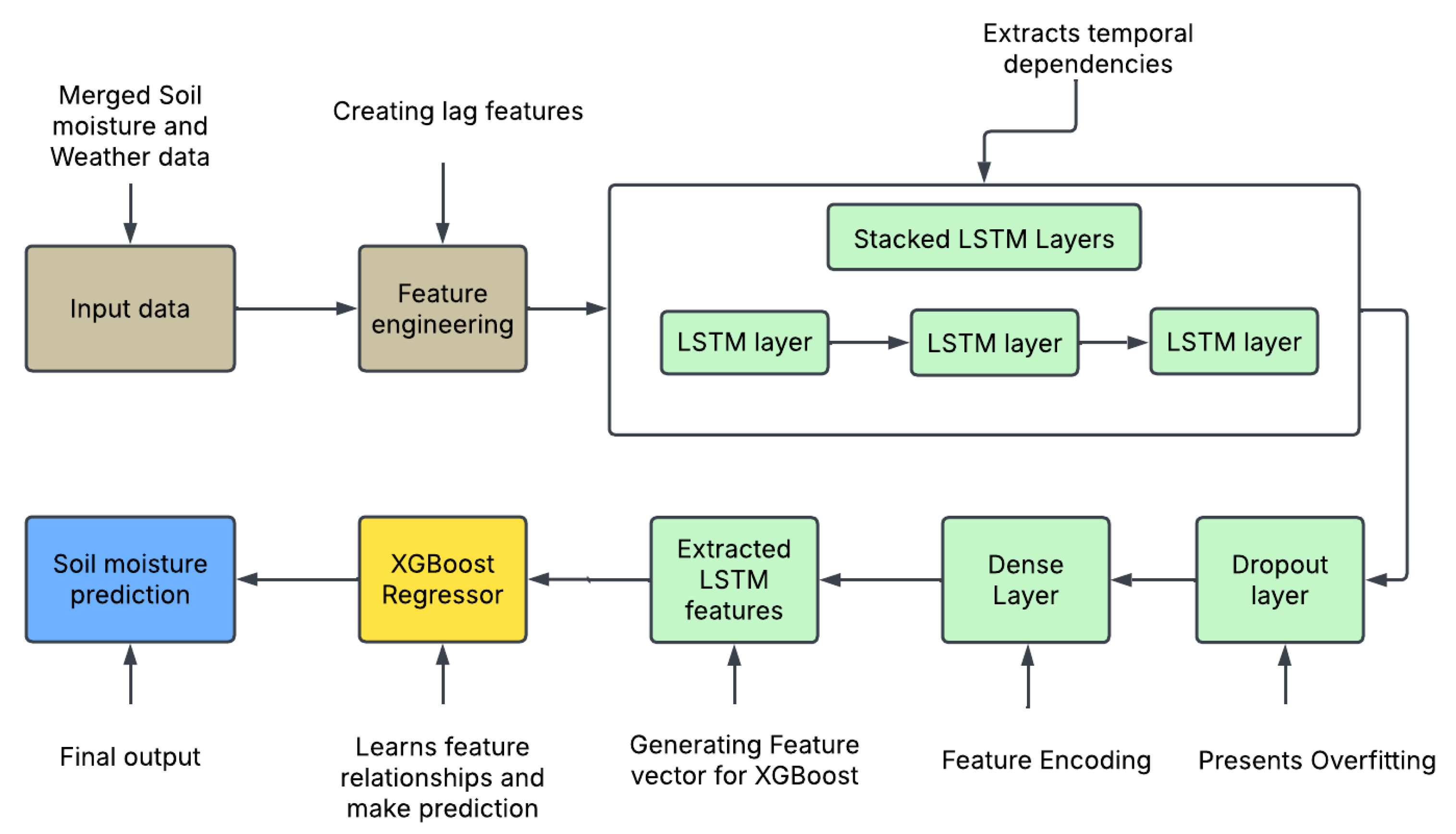

2.8. Proposed method architecture

The proposed hybrid method (LSTM + XGBoost) consists of three sequentially stacked LSTM layers for temporal feature extraction, followed by dropout and dense layers for regularization and feature encoding, and finally an XGBoost regressor for the soil moisture prediction. The LSTM architecture begins with a 256-unit layer, followed by 128-unit and 64-unit LSTM layers. A dropout layer with a rate of 0.2 is applied after the final LSTM layer to mitigate the risk of overfitting by randomly deactivating neurons during training. The extracted temporal features are then passed through a dense layer with 64 units, which serves as a compact encoded representation of the sequence. This feature vector is used as input to the XGBoost model, which performs the final multi-step soil moisture prediction. The LSTM model is trained using the Adam optimizer, ReLU activation function, and MSE loss over 100 epochs, using an 80%/20% train-test split with 20% of the training data reserved for validation. The workflow for the proposed framework is shown in Figure 5.

Figure 5. Proposed method architecture.

2.9. Model Performance Evaluation Metrics

We evaluated model performance using three common metrics: the coefficient of determination (R²), mean squared error (MSE), and mean absolute error (MAE). R² measures the proportion of variance in the dependent variable explained by the model. It is at most 1 and typically lies between 0 and 1, although it becomes negative when a model fits worse than predicting the mean; the closer R² is to 1, the better the model fits the data. MSE measures the average squared difference between predicted and actual values, so a lower MSE indicates better prediction accuracy. MAE calculates the average magnitude of the errors without considering their direction. It is less sensitive to outliers than MSE, making it suitable for cases where large errors should not dominate model evaluation. Equations (7), (8), and (9) present R², MAE, and MSE, respectively. In these equations, n is the number of samples, $y_i$ is the actual value for the i-th sample, $\hat{y}_i$ is the predicted value for the i-th sample, and $\bar{y}$ is the mean of the actual values.

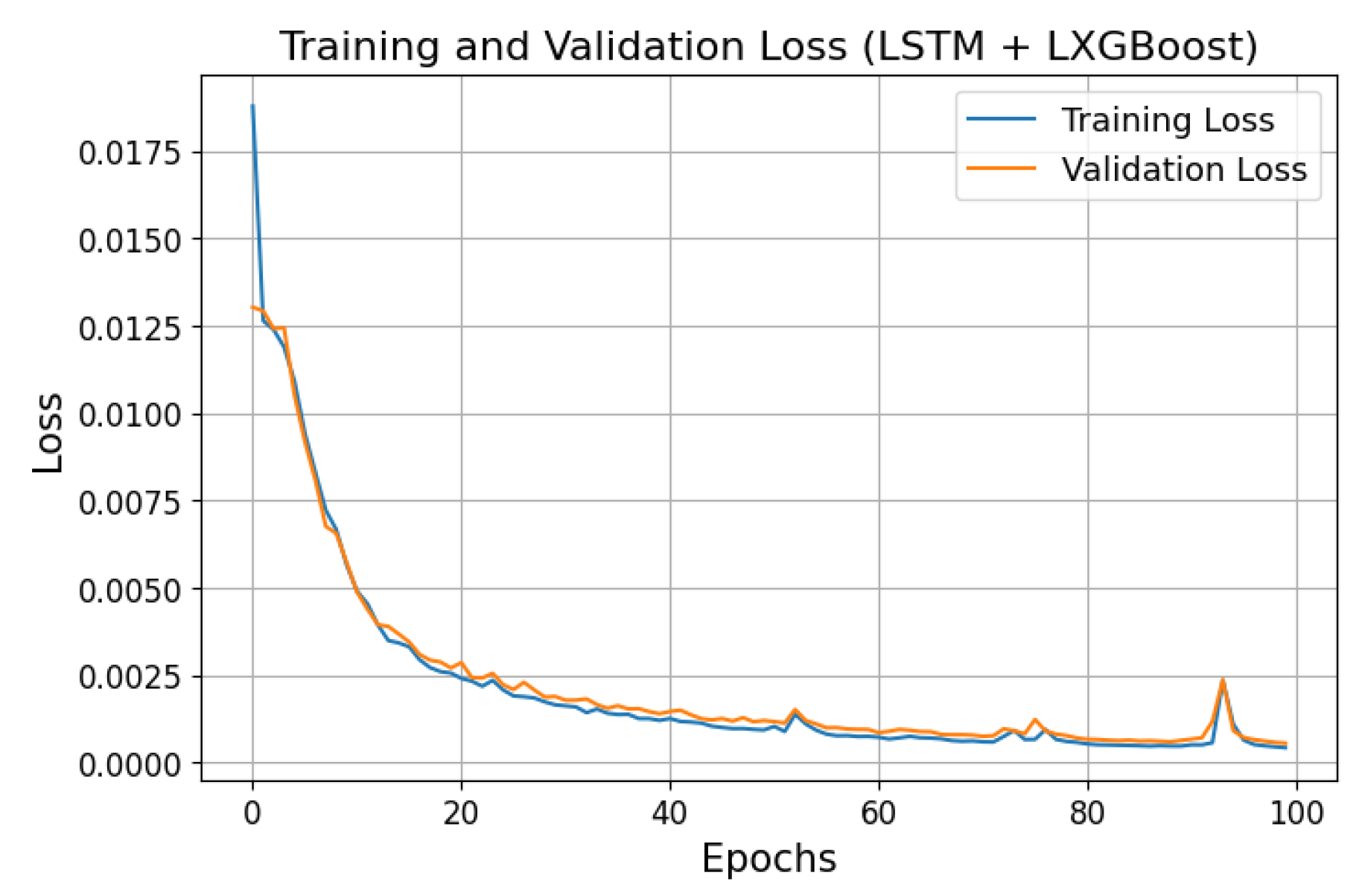

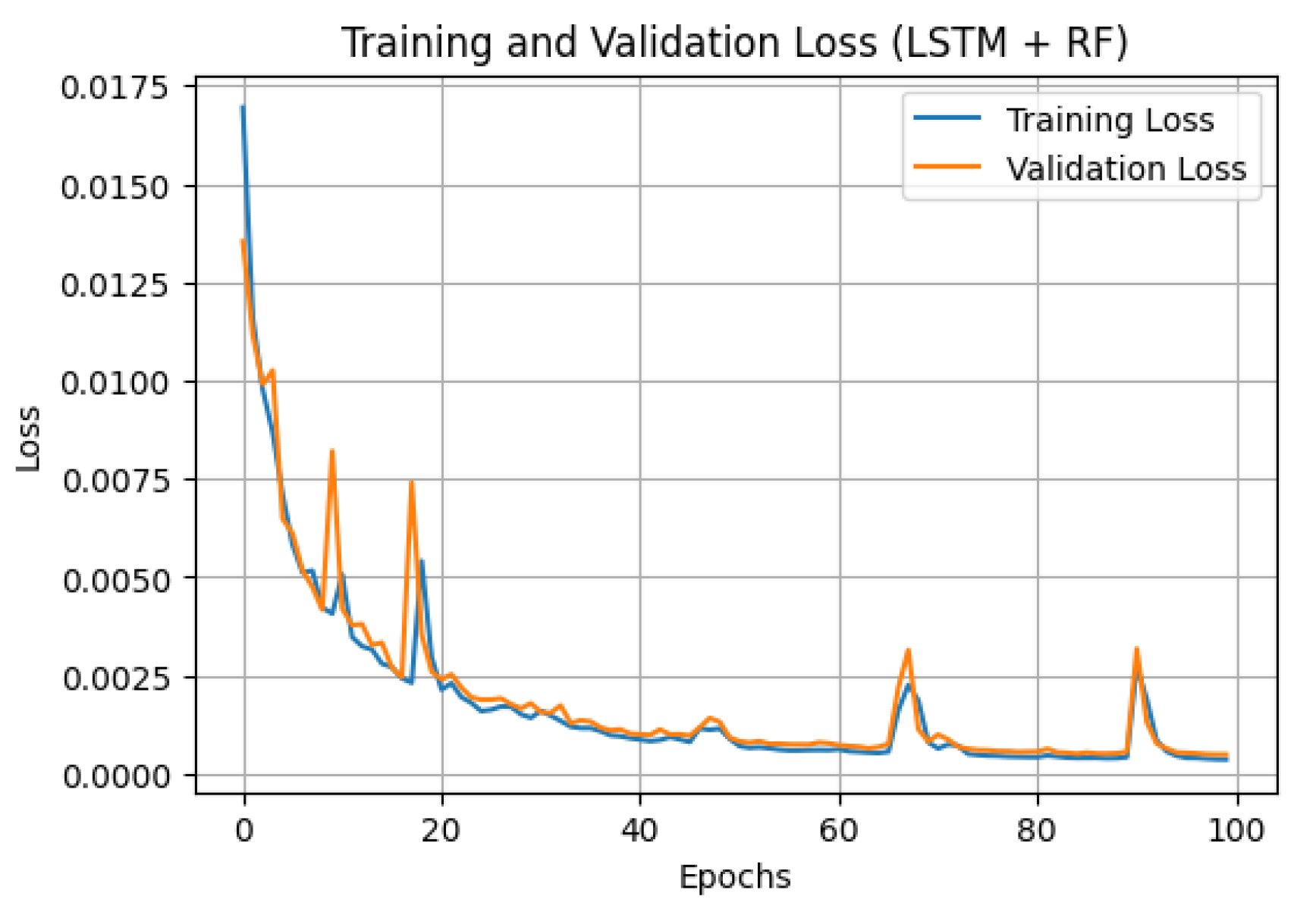

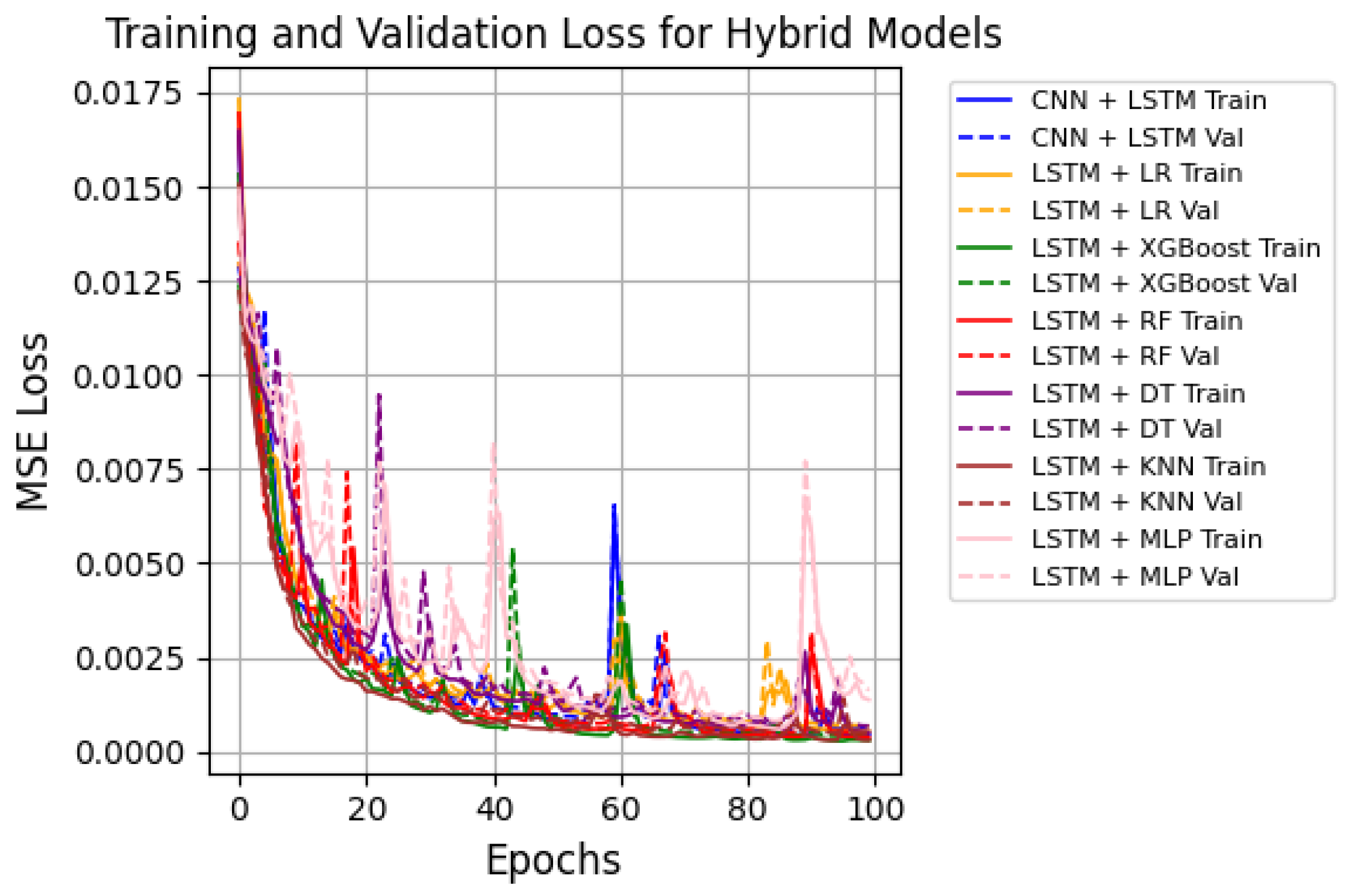
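As a minimal sketch, the three metrics can be computed with NumPy as follows, assuming the standard definitions: R² is one minus the ratio of the residual sum of squares to the total sum of squares, MAE is the mean of the absolute errors, and MSE is the mean of the squared errors. The function names are illustrative and are not taken from the study's code.

```python
import numpy as np

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

def mean_absolute_error(y_true, y_pred):
    """Average magnitude of the errors, ignoring their sign."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.mean(np.abs(y_true - y_pred)))

def mean_squared_error(y_true, y_pred):
    """Average squared error; large errors are weighted more heavily."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.mean((y_true - y_pred) ** 2))

# Small worked example with errors of -0.5, 0, 0.5, and 0.
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.0, 2.5, 4.0]

r2 = r2_score(y_true, y_pred)
mae = mean_absolute_error(y_true, y_pred)
mse = mean_squared_error(y_true, y_pred)
```

For this example the residual sum of squares is 0.5 and the total sum of squares is 5, giving R² = 0.9, MAE = 0.25, and MSE = 0.125.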

2.10. Model training history

All DL and hybrid models were trained with a batch size of 32 for 100 epochs. They generalized and converged well, with a sharp decrease in MSE loss and an increase in R². Figure 6 and Figure 7 display the training and validation MSE loss curves for the proposed model and for LSTM + RF, which recorded the second-best results and therefore serves as a useful benchmark for comparison. All models learned effectively, with the error decreasing from approximately 0.0175 to near 0.0001. LSTM + XGBoost showed a smooth learning curve with minimal fluctuations, while LSTM + RF exhibited some loss variations but eventually converged. The training history of all hybrid models is summarized in Figure 8.

Figure 6. MSE loss curve for LSTM + XGBoost.

Figure 7. MSE loss curve for LSTM + RF.

Figure 8. MSE loss curves for all hybrid models.