Submitted: 19 June 2025

Posted: 20 June 2025


Abstract

Keywords:

Contents

| 1. Preface . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 2 |

| 2. Problem . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 4 |

| 2.1. Marketplace . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 4 |

| 2.2. Trustless Verification Complexities . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 4 |

| 2.3. Identity Gap in N-Creation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 4 |

| 2.4. Neutral Evaluation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 4 |

| 2.5. Limitations of Conventional AI Benchmarking . . . . . . . . . . . . . . . . . . . . . . . . | 5 |

| 2.6. Related Work and Gaps . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 5 |

| 3. Solution . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 5 |

| 3.1. Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 5 |

| 3.1.1. Layers of I Cubed . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 6 |

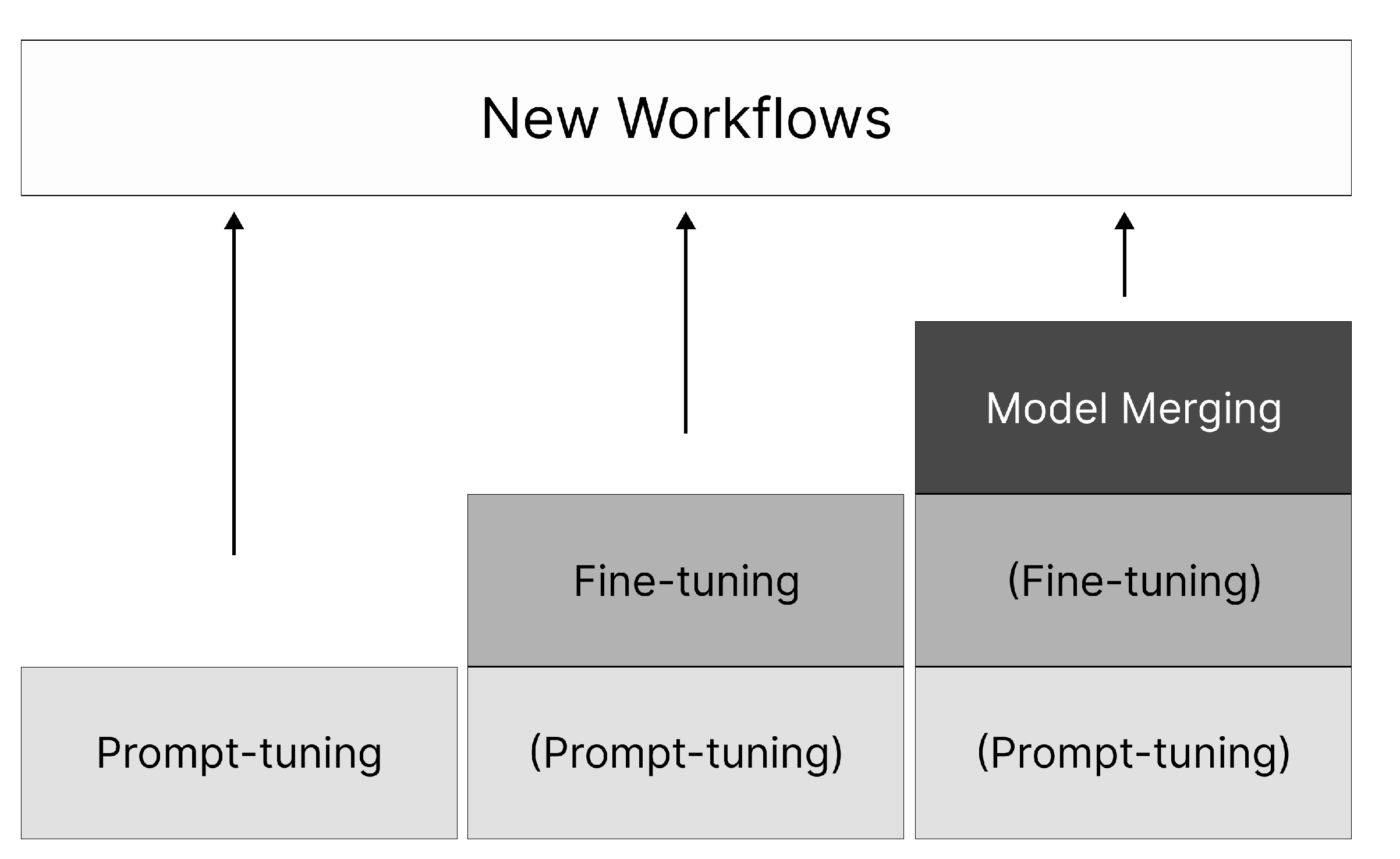

| 3.1.2. Key Workflows . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 6 |

| 3.2. Decentralized AI modelverse . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 7 |

| 3.2.1. Architecture . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 7 |

| 3.2.2. From Open-Source Visibility to Incentive-Aligned Value Creation . . . . . . . . . | 8 |

| 3.3. Initial Model Offering . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 9 |

| 3.3.1. Process . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 9 |

| 3.3.2. Model Valuation Recommendation Engine . . . . . . . . . . . . . . . . . . . . . . . | 10 |

| 3.3.3. Anti-Rollback & Lock-in Rules . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 10 |

| 3.3.4. Post-IMO Market Dynamics: Equity-like Ownership & Liquidity . . . . . . . . . . | 10 |

| 3.4. Proof of Intelligence . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 10 |

| 3.5. Neutral Evaluation & Democratic Pricing . . . . . . . . . . . . . . . . . . . . . . . . . . . | 11 |

| 3.5.1. Model-Peer Benchmark: A Three-Tier Ecosystem Valuation Framework . . . . . . | 12 |

| 4. Decentralization . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 13 |

| 4.1. Why Web3 Is the Only Viable Path for Create-to-Earn . . . . . . . . . . . . . . . . . . . . | 13 |

| 4.2. Decentralization as an Economic and Moral Imperative . . . . . . . . . . . . . . . . . . . | 13 |

| 4.3. Rebuilding the Order of the AI Industry . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 14 |

| 5. Discussion: Fostering AI Co-Creation in the I Cubed Modelverse . . . . . . . . . . . . . . . | 14 |

| 6. Community Building . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 15 |

| 7. Future Ecosystem . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 15 |

| 7.1. Hardware Membership . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 15 |

| 7.2. Decentralized Compute Power Integration . . . . . . . . . . . . . . . . . . . . . . . . . . . | 16 |

| 7.3. Inference Endpoints and Public Demo Spaces . . . . . . . . . . . . . . . . . . . . . . . . . | 16 |

| 8. Contributors . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 16 |

| 9. References . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 16 |

1. Preface

2. Problem

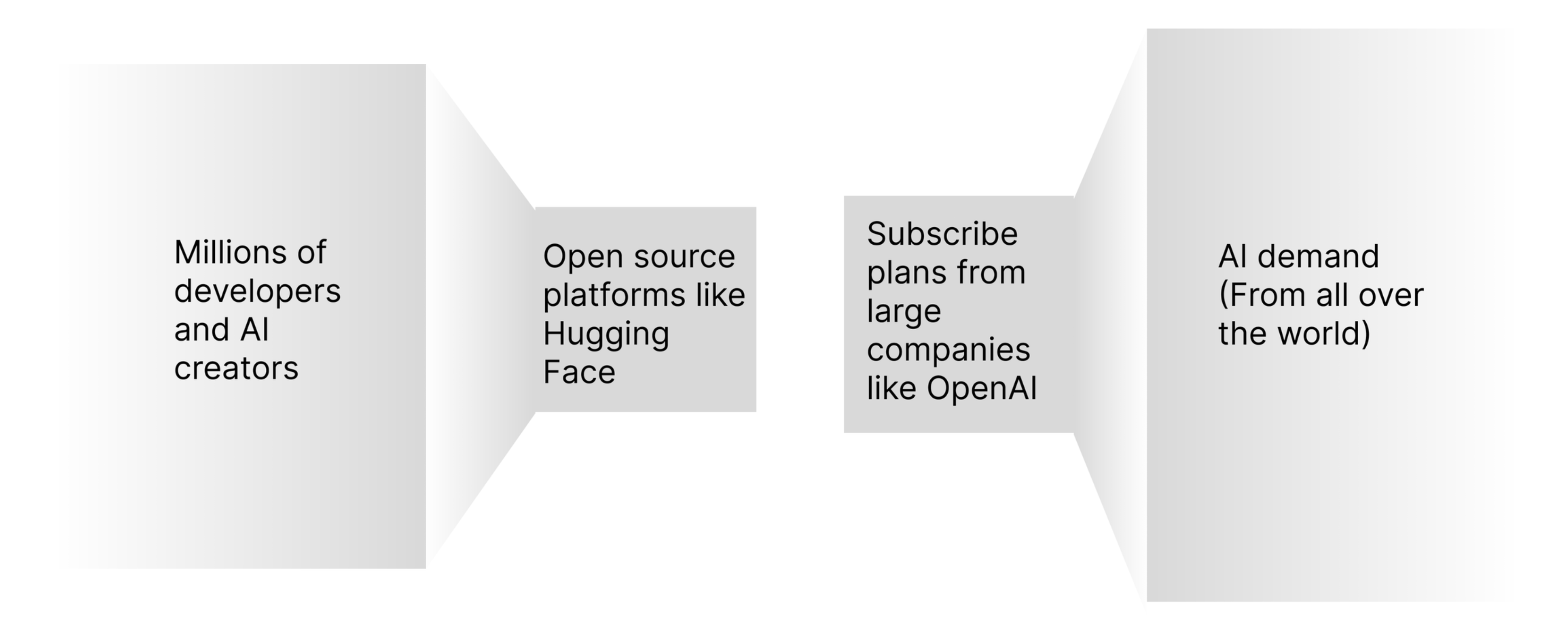

2.1. Marketplace

2.2. Trustless Verification Complexities

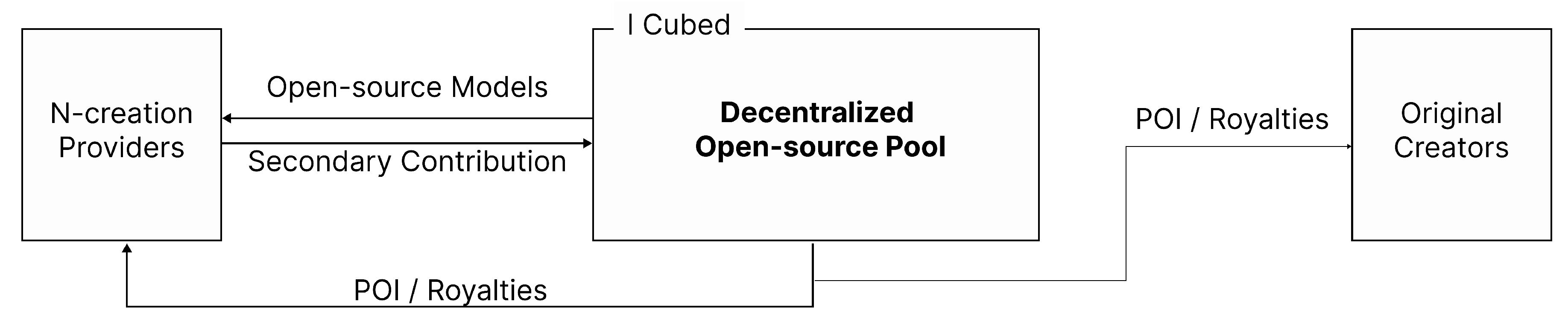

2.3. Identity Gap in N-Creation

2.4. Neutral Evaluation

2.5. Limitations of Conventional AI Benchmarking

2.6. Related Work and Gaps

3. Solution

3.1. Overview

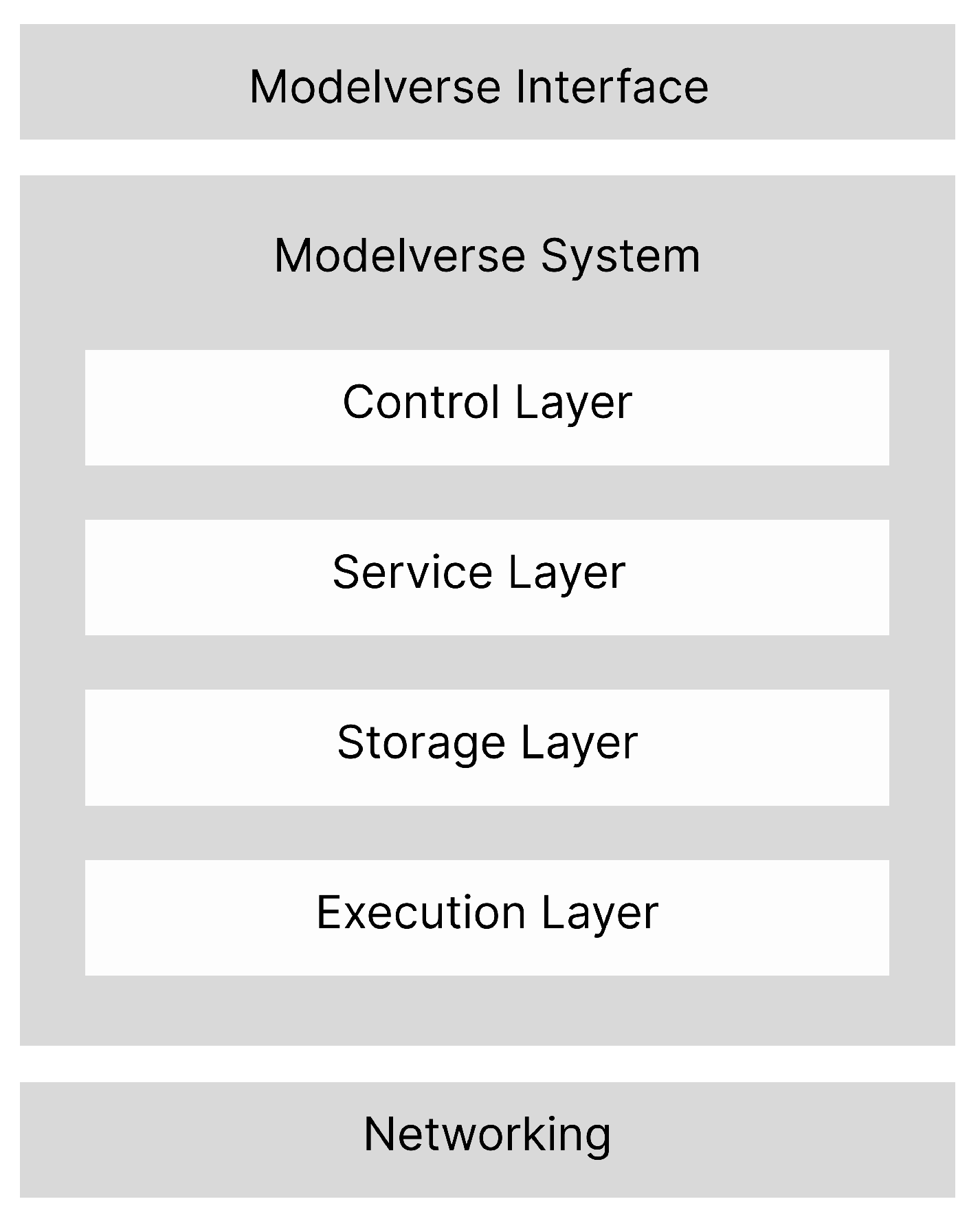

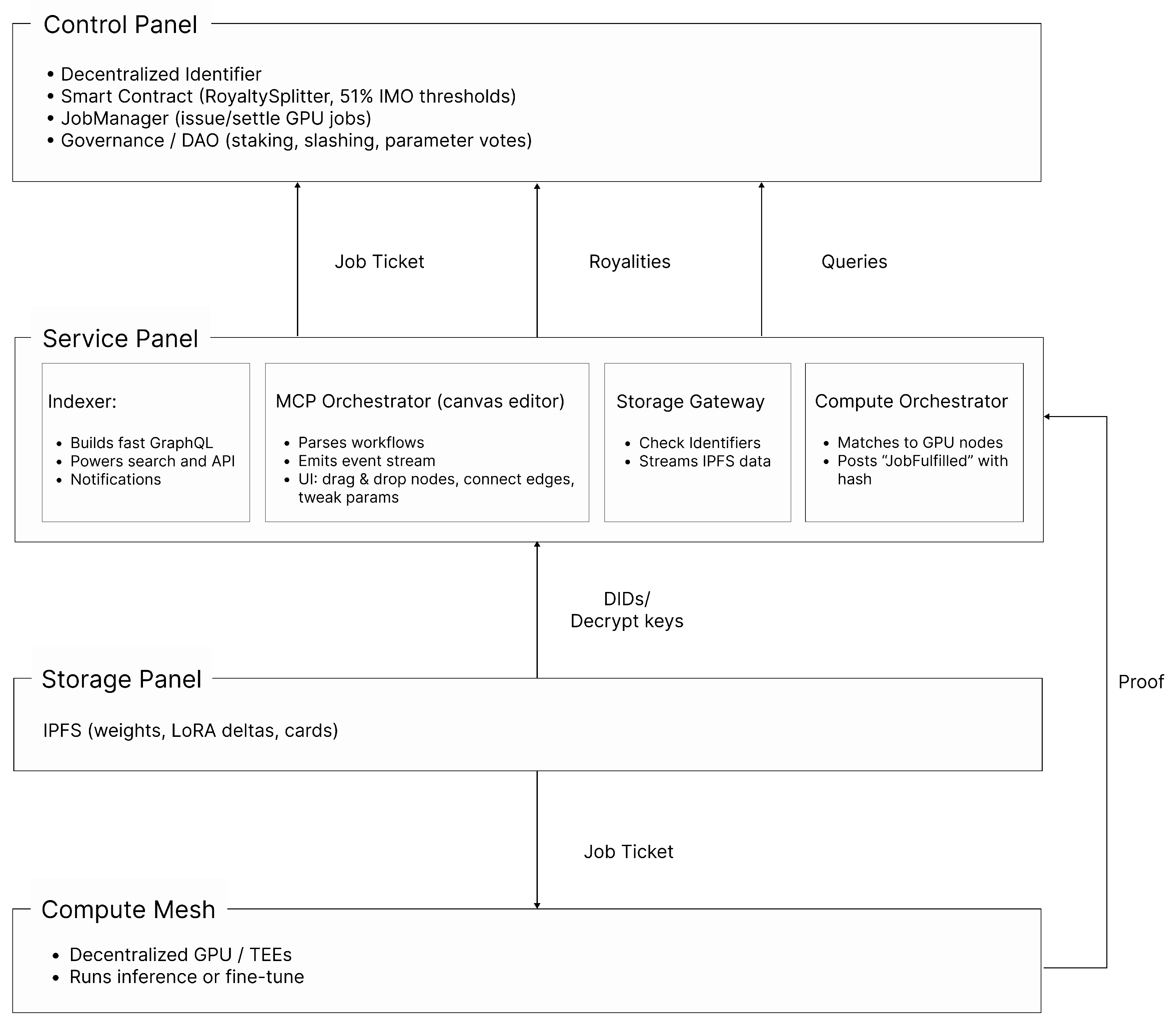

3.1.1. Layers of I Cubed

3.1.2. Key Workflows

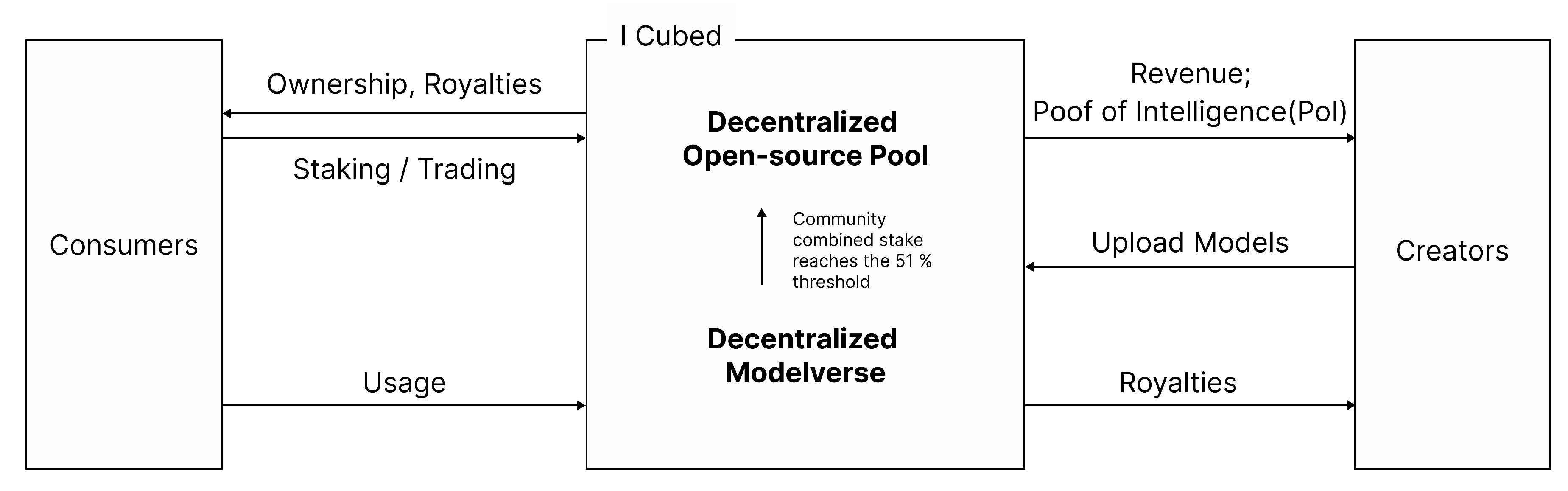

3.2. Decentralized AI Modelverse

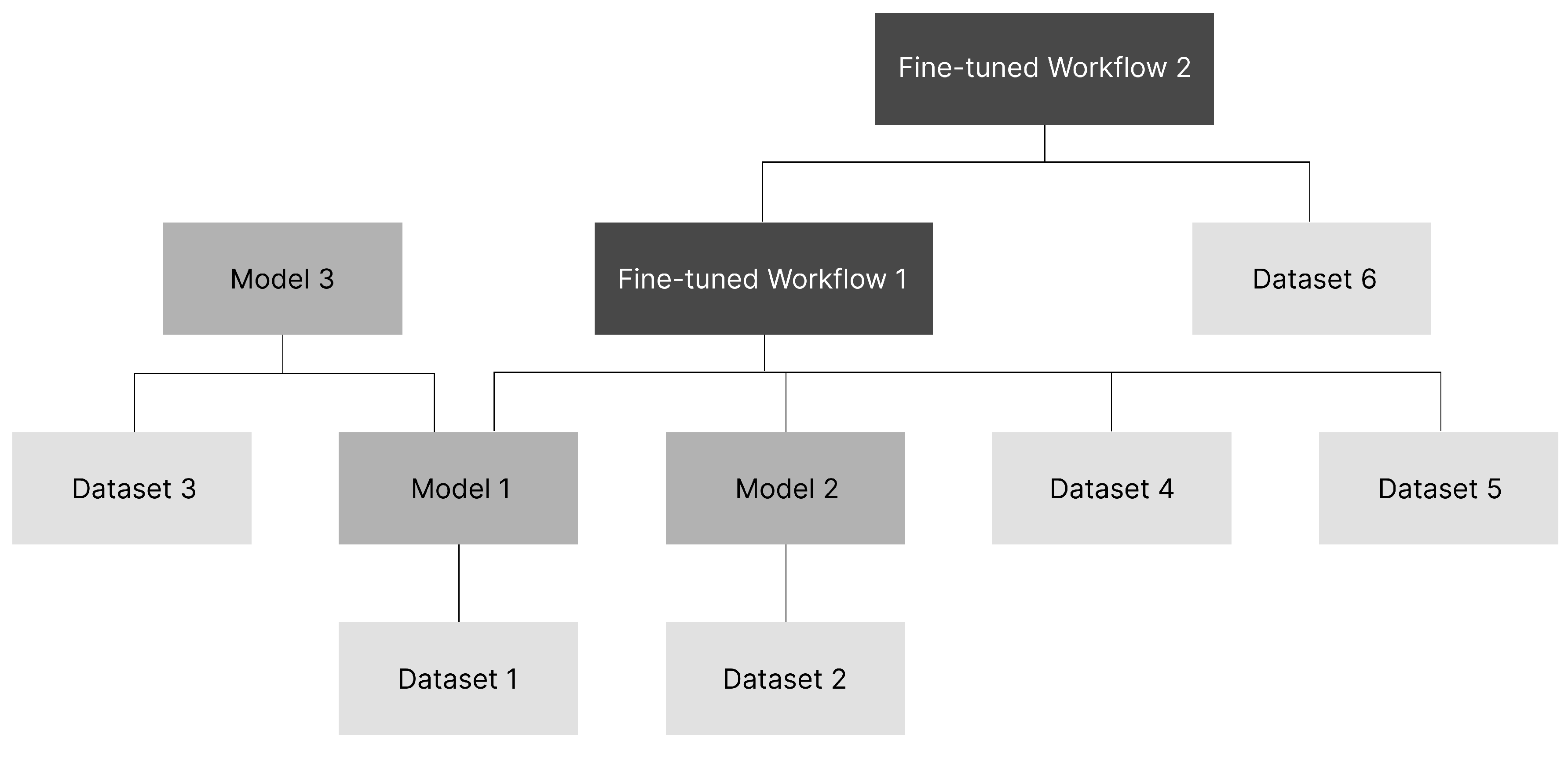

3.2.1. Architecture

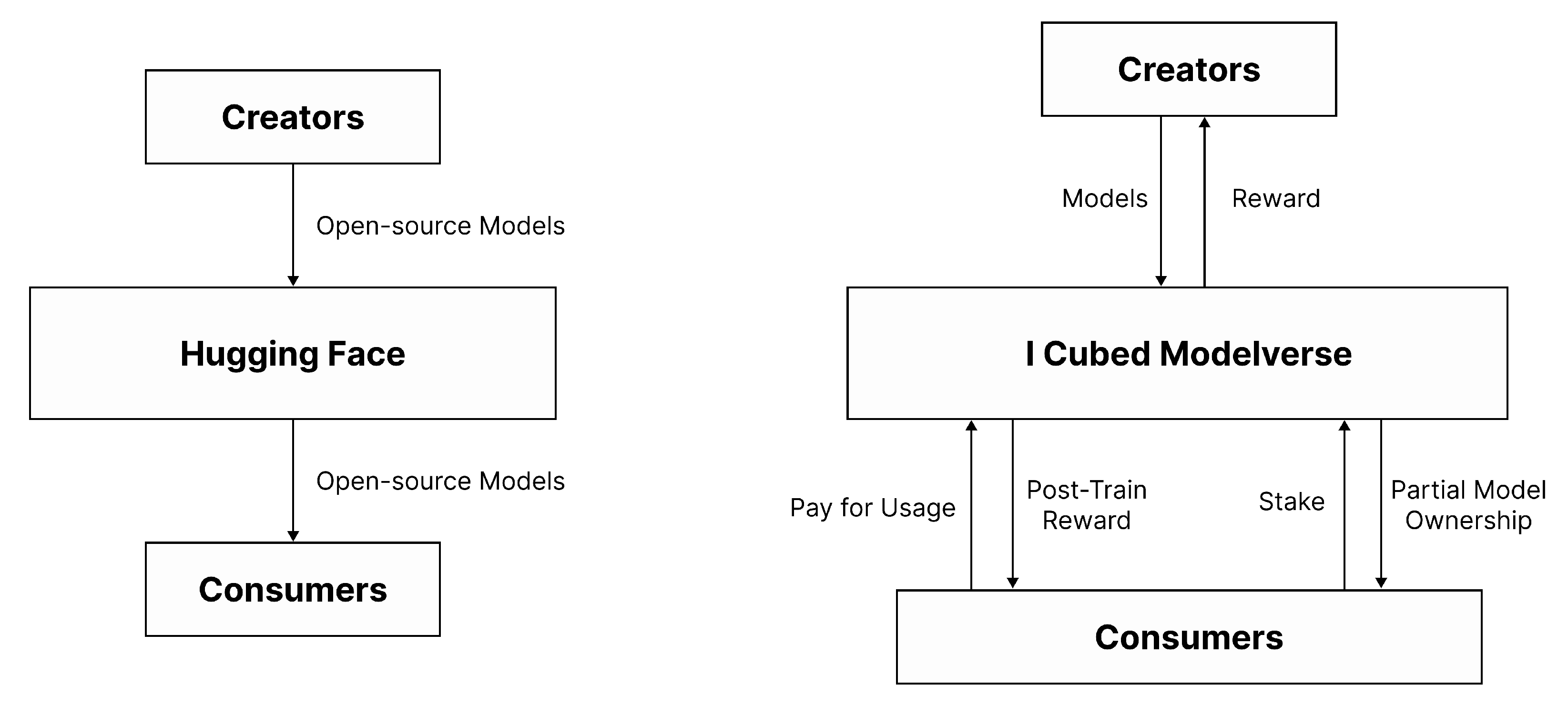

3.2.2. From Open-Source Visibility to Incentive-Aligned Value Creation

| Feature | Hugging Face | I Cubed Modelverse |
| --- | --- | --- |
| Incentivization | Developers often open-source without direct rewards | Developers and creators earn from usage, staking, and remixing activities |
| Ownership | No native ownership mechanism | Blockchain-based model ownership and transferable token stakes via IMO |
| Developer Value | Exposure and community feedback | Royalties, usage fees, and community recognition via on-chain tracking |
| Consumer Value | Free access but limited incentive feedback loop | Pay-per-use pricing and potential post-train rewards for high-impact data contributions |
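The comparison above says that I Cubed developers and creators earn from pay-per-use fees, staking and remixing, but the revenue split itself is not specified here. The sketch below shows one plausible way to divide a single usage fee among the developer, token stakers (pro rata by stake) and the creators of upstream models that were remixed. The names `FeeSplit` and `split_usage_fee`, the 60/30/10 shares, the equal upstream split and the rule that rounding dust goes to the developer are all hypothetical assumptions, not the platform's actual parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeeSplit:
    """Hypothetical revenue shares in basis points (10_000 = 100%)."""
    developer_bps: int = 6_000
    staker_bps: int = 3_000
    upstream_bps: int = 1_000


def split_usage_fee(
    fee: int,
    stakes: dict[str, int],
    upstream: list[str],
    cfg: FeeSplit = FeeSplit(),
) -> dict[str, int]:
    """Split one pay-per-use fee (integer base units) among the developer,
    stakers (pro rata by stake) and upstream models this model remixes."""
    if cfg.developer_bps + cfg.staker_bps + cfg.upstream_bps != 10_000:
        raise ValueError("shares must sum to 10_000 bps")
    payouts: dict[str, int] = {}
    paid = 0

    # Staker pool is divided pro rata; integer division leaves rounding dust.
    staker_pool = fee * cfg.staker_bps // 10_000
    total_stake = sum(stakes.values())
    if total_stake > 0:
        for holder, stake in stakes.items():
            amount = staker_pool * stake // total_stake
            payouts[holder] = payouts.get(holder, 0) + amount
            paid += amount

    # Upstream pool is shared equally among remixed source models (assumption).
    if upstream:
        upstream_pool = fee * cfg.upstream_bps // 10_000
        for creator in upstream:
            amount = upstream_pool // len(upstream)
            payouts[creator] = payouts.get(creator, 0) + amount
            paid += amount

    # Developer gets its own share plus any unallocated pool or rounding dust.
    payouts["developer"] = payouts.get("developer", 0) + fee - paid
    return payouts


print(split_usage_fee(1_000, {"alice": 3, "bob": 1}, ["base-model"]))
# -> {'alice': 225, 'bob': 75, 'base-model': 100, 'developer': 600}
```

Working in integer base units and basis points mirrors how token contracts usually avoid floating-point rounding; any remainder is assigned explicitly so that payouts always sum to the fee.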

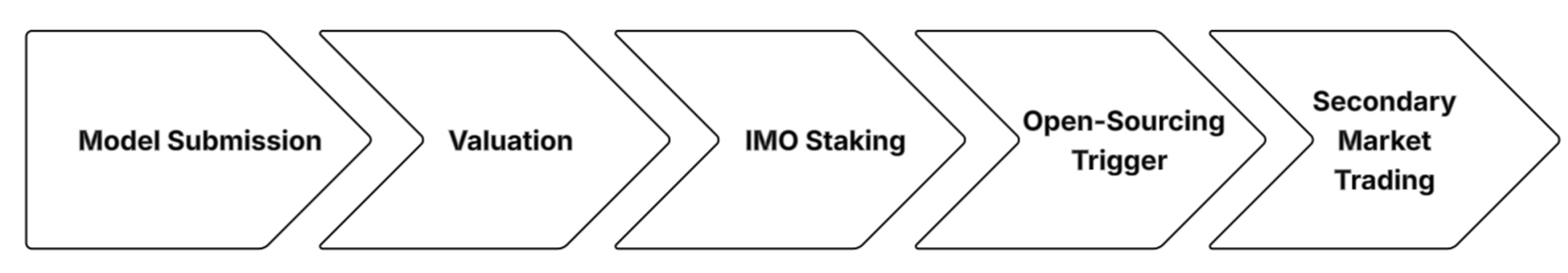

3.3. Initial Model Offering

3.3.1. Process

3.3.2. Model Valuation Recommendation Engine

- : predicted pre-IMO demand (inferred from page views, API trials, and wishlist activity),

- : the average token price of functionally similar models, and

- : the creator’s historical reputation or track record of successful model launches.
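The three inputs listed above (predicted pre-IMO demand, the average token price of similar models, and creator reputation) suggest a recommendation that anchors on peer pricing and then adjusts for demand and track record. The sketch below is one hypothetical way to combine them. The engagement weights, the square-root demand elasticity, the `peer_demand` reference level and the mapping of reputation to a ±20% multiplier are illustrative assumptions, not the engine's published formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImoSignals:
    page_views: int
    api_trials: int
    wishlist_adds: int
    peer_avg_price: float  # average token price of functionally similar models
    reputation: float      # creator track record, normalized to [0, 1]


# Hypothetical weights: an API trial or wishlist add is assumed to signal
# more purchase intent than a page view.
W_VIEW, W_TRIAL, W_WISH = 0.1, 1.0, 2.0


def demand_score(s: ImoSignals) -> float:
    """Predicted pre-IMO demand as a weighted sum of engagement signals."""
    return W_VIEW * s.page_views + W_TRIAL * s.api_trials + W_WISH * s.wishlist_adds


def recommend_price(s: ImoSignals, peer_demand: float, elasticity: float = 0.5) -> float:
    """Anchor on the peer average price, then scale by relative demand and reputation.

    `peer_demand` is an assumed reference demand score for comparable models.
    """
    if peer_demand <= 0 or not 0.0 <= s.reputation <= 1.0:
        raise ValueError("peer_demand must be positive and reputation in [0, 1]")
    demand_ratio = demand_score(s) / peer_demand
    demand_factor = demand_ratio ** elasticity        # dampened: 4x demand -> 2x price
    reputation_factor = 0.8 + 0.4 * s.reputation      # maps [0, 1] to [0.8, 1.2]
    return s.peer_avg_price * demand_factor * reputation_factor


signals = ImoSignals(page_views=1_000, api_trials=100, wishlist_adds=50,
                     peer_avg_price=10.0, reputation=0.5)
print(demand_score(signals), recommend_price(signals, peer_demand=75.0))
```

A sub-linear elasticity keeps a short burst of pre-IMO hype from multiplying the launch price proportionally, which is one way to hedge against inflated wishlist activity.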

3.3.3. Anti-Rollback & Lock-in Rules

3.3.4. Post-IMO Market Dynamics: Equity-like Ownership & Liquidity

3.4. Proof of Intelligence

3.5. Neutral Evaluation & Democratic Pricing

- whether YES or NO will be chosen;

- the value of M if YES is chosen (otherwise 0);

- the value of M if NO is chosen (otherwise 0).
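The three markets listed above match the conditional decision-market structure of futarchy (Robin Hanson's "vote values, but bet beliefs" proposal): one asset pays 1 if YES is chosen, and two assets pay M only under YES or only under NO. The sketch below shows how the conditional forecasts can be read off those prices and turned into a decision. It assumes risk-neutral pricing and treats M as whatever outcome metric the market forecasts; the names `DecisionMarketPrices`, `conditional_expectations` and `decide`, and the tie-breaking rule in favor of NO, are illustrative choices rather than the platform's specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionMarketPrices:
    """Current prices of the three assets described in the text."""
    p_yes: float     # pays 1 if YES is chosen, else 0 (price ~ P(YES))
    m_if_yes: float  # pays M if YES is chosen, else 0
    m_if_no: float   # pays M if NO is chosen, else 0


def conditional_expectations(p: DecisionMarketPrices) -> tuple[float, float]:
    """Recover E[M | YES] and E[M | NO] from the asset prices.

    Under risk-neutral pricing, price("M if YES") = P(YES) * E[M | YES],
    so dividing by P(YES) isolates the conditional expectation; likewise for NO.
    """
    if not 0.0 < p.p_yes < 1.0:
        raise ValueError("p_yes must lie strictly between 0 and 1")
    return p.m_if_yes / p.p_yes, p.m_if_no / (1.0 - p.p_yes)


def decide(p: DecisionMarketPrices) -> str:
    """Choose the branch the markets expect to produce the higher M (ties -> NO)."""
    e_yes, e_no = conditional_expectations(p)
    return "YES" if e_yes > e_no else "NO"


prices = DecisionMarketPrices(p_yes=0.25, m_if_yes=20.0, m_if_no=45.0)
print(conditional_expectations(prices), decide(prices))  # (80.0, 60.0) YES
```

Note that the raw price of "M if NO" (45) is higher than that of "M if YES" (20) only because NO is judged more likely; normalizing by the probability of each branch is what reveals that YES is expected to yield the higher M.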

3.5.1. Model-Peer Benchmark: A Three-Tier Ecosystem Valuation Framework

- : user rating

- : usage volume

- : social signal or citation frequency
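The three signals above (user rating, usage volume, and social signal or citation frequency) appear to feed a community-level score, but their symbols and weights did not survive in this version. A common way to combine such heterogeneous signals is to normalize each across models and take a weighted sum, as sketched below. The weights, the min-max normalization and the log scaling of usage are assumptions chosen for illustration, not the paper's formula.

```python
import math

# Hypothetical weights for the three community signals (not given in the text).
WEIGHTS = {"rating": 0.4, "usage": 0.4, "social": 0.2}


def min_max(values: list[float]) -> list[float]:
    """Rescale values to [0, 1]; identical inputs map to a neutral 0.5."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.5 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def community_scores(models: dict[str, dict[str, float]]) -> dict[str, float]:
    """Combine user rating, usage volume and social signal into one score per model."""
    if not models:
        return {}
    names = list(models)
    rating = min_max([models[n]["rating"] for n in names])
    # Usage is log-scaled (assumption) so a few very popular models do not dominate.
    usage = min_max([math.log1p(models[n]["usage"]) for n in names])
    social = min_max([models[n]["social"] for n in names])
    return {
        n: WEIGHTS["rating"] * r + WEIGHTS["usage"] * u + WEIGHTS["social"] * s
        for n, r, u, s in zip(names, rating, usage, social)
    }


scores = community_scores({
    "model-a": {"rating": 4.8, "usage": 120_000, "social": 35},
    "model-b": {"rating": 4.1, "usage": 9_000, "social": 60},
    "model-c": {"rating": 3.5, "usage": 400, "social": 5},
})
print(scores)
```

Normalizing per signal before weighting keeps a metric measured in the hundreds of thousands (usage) from swamping one measured on a five-point scale (rating).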

- : Final Peer-Workflow Compatibility score for model i.

- : Model i’s conventional benchmark score (Tier 1).

- : Fraction of workflows involving model i that complete end-to-end tasks, derived from MCP logs.

- : Observed lift in business KPIs (e.g., conversion or throughput) when model i is included in a pipeline.
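The definitions above describe a Peer-Workflow Compatibility score built from a model's Tier 1 benchmark score, the fraction of its workflows that complete end to end, and the observed KPI lift when it is included in a pipeline. The sketch below shows how the completion fraction could be computed from workflow records and blended with the other two terms. `WorkflowRecord` is a hypothetical summary assumed to be aggregated from MCP logs (the Model Context Protocol does not itself define such a record), and the linear blend, its weights and the clipping of KPI lift to [0, 1] are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowRecord:
    """Hypothetical per-workflow summary assumed to be aggregated from MCP logs."""
    models: frozenset[str]   # models invoked anywhere in the workflow
    completed: bool          # True if the workflow finished its task end to end


def completion_rate(model: str, logs: list[WorkflowRecord]) -> float:
    """Fraction of workflows involving `model` that completed end to end."""
    involved = [w for w in logs if model in w.models]
    if not involved:
        return 0.0
    return sum(w.completed for w in involved) / len(involved)


def pwc_score(
    benchmark: float,
    completion: float,
    kpi_lift: float,
    weights: tuple[float, float, float] = (0.3, 0.4, 0.3),
) -> float:
    """Weighted blend of the Tier 1 benchmark score, completion rate and KPI lift.

    Inputs are assumed pre-normalized to [0, 1]; KPI lift is clipped to that range.
    """
    w_bench, w_comp, w_kpi = weights
    lift = min(max(kpi_lift, 0.0), 1.0)
    return w_bench * benchmark + w_comp * completion + w_kpi * lift


logs = [
    WorkflowRecord(frozenset({"m1", "m2"}), True),
    WorkflowRecord(frozenset({"m1"}), False),
    WorkflowRecord(frozenset({"m1", "m3"}), True),
]
c_m1 = completion_rate("m1", logs)  # 2 of 3 workflows involving m1 completed
print(pwc_score(benchmark=0.7, completion=c_m1, kpi_lift=0.12))
```

Weighting completion most heavily reflects the section's premise that a model's value in a peer ecosystem depends on whether the pipelines it joins actually finish, not only on its standalone benchmark result.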

4. Decentralization

4.1. Why Web3 Is the Only Viable Path for Create-to-Earn

4.2. Decentralization as an Economic and Moral Imperative

4.3. Rebuilding the Order of the AI Industry

5. Discussion: Fostering AI Co-Creation in the I Cubed Modelverse

6. Community Building

7. Future Ecosystem

7.1. Hardware Membership

7.2. Decentralized Compute Power Integration

7.3. Inference Endpoints and Public Demo Spaces

8. Contributors

- Fernando Jia

- Rebekah Jia

- Florence Li

- Tianqin Li

- Jade Zheng


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).