Submitted: 16 June 2025

Posted: 17 June 2025

Abstract

Keywords:

1. Introduction

- A comprehensive threat model and taxonomy of unsafe tool use patterns in LLM systems

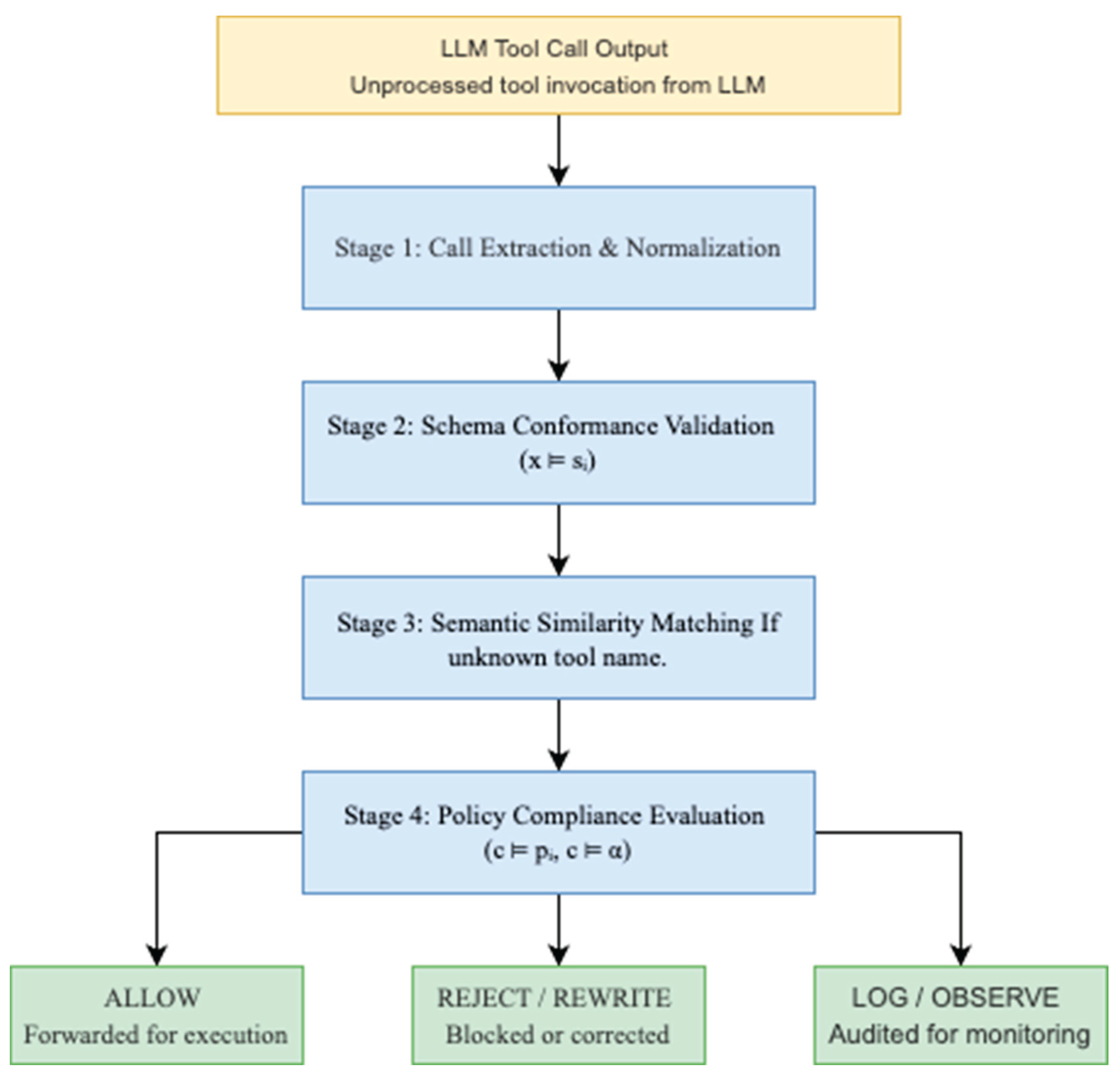

- A novel multi-stage validation pipeline incorporating schema validation, fuzzy matching, and policy enforcement

- An empirical evaluation showing 98% overall accuracy and a median validation latency under 10 ms in detecting unsafe tool calls

- An open-source implementation suitable for integration with existing LLM frameworks

2. Related Work

2.1. AI Safety and Alignment

2.2. Tool Use in Language Models

2.3. Security in LLM Applications

2.4. Middleware Systems for AI Safety

3. Problem Formulation

3.1. Threat Model

- A schema sᵢ, which specifies the expected structure and types of input parameters.

- A policy pᵢ, which defines usage constraints and access control rules.

- tool_name ∈ 𝕋 is a string identifying the intended tool.

- x is the input parameter object.
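The notation above can be made concrete with a small data model. This is a minimal sketch, not the paper's implementation: the class names `ToolSpec` and `ToolCall`, the dictionary encodings of the schema and policy, and the example tool `get_weather` are all assumptions introduced for illustration.

```python
from dataclasses import dataclass, field
from typing import Any, Dict


@dataclass
class ToolSpec:
    """One registry entry: a tool together with its schema s_i and policy p_i."""
    name: str
    # Assumed encoding: parameter name -> expected Python type.
    schema: Dict[str, type]
    # Assumed encoding: free-form usage constraints and access rules.
    policy: Dict[str, Any] = field(default_factory=dict)


@dataclass
class ToolCall:
    """A proposed call: tool_name plus the input parameter object x."""
    tool_name: str
    x: Dict[str, Any]


def is_registered(call: ToolCall, registry: Dict[str, ToolSpec]) -> bool:
    """Return True when tool_name belongs to the set of registered tools."""
    return call.tool_name in registry


# Hypothetical registry with a single tool.
registry = {"get_weather": ToolSpec(name="get_weather", schema={"city": str})}
call = ToolCall(tool_name="get_weather", x={"city": "Basel"})
```

Keying the registry by tool name makes the membership test for tool_name a constant-time dictionary lookup.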

3.2. Design Objectives

- Safety: Block or correct unsafe tool calls with accuracy > 95%

- Performance: Maintain validation latency < 50ms per request

- Scalability: Support throughput > 1000 requests/second

- Usability: Integrate with existing LLM frameworks with minimal configuration

- Auditability: Provide comprehensive logging for compliance and debugging

4. Method

4.1. Approach Overview

4.2. Multi-Stage Validation Pipeline

4.3. Policy Framework

- REJECT: Terminate execution with diagnostic feedback

- REWRITE: Apply parameter corrections while preserving intent

- MONITOR: Allow execution with enhanced logging and alerting

- ALLOW: Grant unconditional execution approval
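The four actions above can be combined with the three stages named in the contributions (schema validation, fuzzy matching, policy enforcement) roughly as follows. This is a hedged sketch under stated assumptions: the registry contents, the stage ordering, the 0.8 similarity cutoff, and the function name `validate` are hypothetical, and fuzzy matching is done here with the standard-library `difflib` rather than whatever matcher the paper uses.

```python
import difflib
from enum import Enum
from typing import Any, Dict, Optional, Tuple


class Action(Enum):
    REJECT = "reject"
    REWRITE = "rewrite"
    MONITOR = "monitor"
    ALLOW = "allow"


# Hypothetical registry: tool name -> (schema, action applied to well-formed calls).
REGISTRY: Dict[str, Tuple[Dict[str, type], Action]] = {
    "get_weather": ({"city": str}, Action.ALLOW),
    "transfer_funds": ({"account": str, "amount": float}, Action.MONITOR),
}


def validate(tool_name: str, x: Dict[str, Any]) -> Tuple[Action, str, Optional[str]]:
    """Return (action, resolved tool name, diagnostic message or None)."""
    resolved, corrected = tool_name, False

    # Stage 1: registry lookup, falling back to fuzzy matching on the name.
    if tool_name not in REGISTRY:
        close = difflib.get_close_matches(tool_name, list(REGISTRY), n=1, cutoff=0.8)
        if not close:
            return Action.REJECT, tool_name, f"unknown tool: {tool_name}"
        resolved, corrected = close[0], True

    schema, policy_action = REGISTRY[resolved]

    # Stage 2: schema validation (exact parameter names, then types).
    if set(x) != set(schema):
        return Action.REJECT, resolved, "parameter names do not match schema"
    for key, expected in schema.items():
        if not isinstance(x[key], expected):
            return Action.REJECT, resolved, f"{key} should be {expected.__name__}"

    # Stage 3: policy enforcement.
    if policy_action is Action.REJECT:
        return Action.REJECT, resolved, "blocked by policy"
    if corrected:
        return Action.REWRITE, resolved, f"tool name corrected from {tool_name}"
    return policy_action, resolved, None
```

In this sketch a misspelled name such as `get_wether` resolves to `get_weather` and yields REWRITE, while a name with no close match is rejected outright.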

4.4. Tool Schema Management

5. Experimental Design

5.1. Evaluation Framework

- 42 valid tool calls spanning diverse domains (weather, finance, travel, utilities)

- 35 invalid tool calls exhibiting specific threat patterns

- 18 contextually unsafe calls requiring policy intervention

- 5 tool calls with deliberate naming errors for fuzzy matching evaluation

- Accuracy: Overall proportion of correct validation decisions

- Precision: Threat detection accuracy (TP / (TP + FP))

- Recall: Threat coverage (TP / (TP + FN))

- Latency: Per-call validation processing time

- Throughput: System capacity under load
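The accuracy, precision, and recall definitions above translate directly into code. The sketch below assumes that a "positive" is an unsafe call flagged by the validator; the `Confusion` class and the example counts are made up for illustration and are not the paper's results.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    tp: int  # unsafe calls correctly flagged
    fp: int  # safe calls incorrectly flagged
    tn: int  # safe calls correctly passed
    fn: int  # unsafe calls incorrectly passed


def accuracy(c: Confusion) -> float:
    """Overall proportion of correct validation decisions."""
    return (c.tp + c.tn) / (c.tp + c.fp + c.tn + c.fn)


def precision(c: Confusion) -> float:
    """TP / (TP + FP); defined as 0.0 when nothing was flagged."""
    flagged = c.tp + c.fp
    return c.tp / flagged if flagged else 0.0


def recall(c: Confusion) -> float:
    """TP / (TP + FN); defined as 0.0 when there were no unsafe calls."""
    unsafe = c.tp + c.fn
    return c.tp / unsafe if unsafe else 0.0


# Illustrative counts only.
example = Confusion(tp=50, fp=2, tn=45, fn=3)
```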

6.2. Safety Effectiveness Analysis

| Validation Metric | Performance |
| --- | --- |
| Overall Accuracy | 98.0% |
| Precision | 96% |
| Recall | 94.7% |
| False Positive Rate | 1.8% |
| False Negative Rate | 2.1% |

- Phantom Invocation Detection: Perfect identification of non-existent tools (15/15 cases), demonstrating effective registry lookup mechanisms

- Parameter Hallucination Detection: 96.7% accuracy (29/30 cases) in identifying schema violations across diverse parameter types

- Policy Violation Prevention: 94.4% effectiveness (17/18 cases) in blocking contextually inappropriate calls

- Fuzzy Matching Accuracy: 100% success rate (5/5 cases) in providing appropriate tool name corrections
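The per-category percentages above follow from the reported case counts, assuming each rate is the simple ratio of correct cases to total cases rounded to one decimal place. The short check below recomputes them; the dictionary and function names are illustrative.

```python
from typing import Dict, Tuple

# (correct cases, total cases) as reported in the list above.
CATEGORY_COUNTS: Dict[str, Tuple[int, int]] = {
    "Phantom invocation detection": (15, 15),
    "Parameter hallucination detection": (29, 30),
    "Policy violation prevention": (17, 18),
    "Fuzzy matching": (5, 5),
}


def rate_percent(correct: int, total: int) -> float:
    """Success rate as a percentage, rounded to one decimal place."""
    return round(100.0 * correct / total, 1)


for name, (correct, total) in CATEGORY_COUNTS.items():
    print(f"{name}: {correct}/{total} = {rate_percent(correct, total):.1f}%")
```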

6.3. Computational Efficiency Results

- Median validation time: 6.2ms

- 95th percentile: 14.8ms

- 99th percentile: 28.1ms

- Maximum observed: 45.3ms

- Single-thread capacity: 5,247 validations/second

- Multi-threaded peak: 12,150 validations/second

- Memory utilization: 45MB average, 78MB peak

- Scaling behavior: Linear throughput scaling with consistent latency profiles
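Latency figures of the kind listed above (median, 95th and 99th percentile, maximum) and a single-thread throughput estimate can be derived from per-call timings. This is one possible measurement harness, not the paper's benchmark code: the function names are hypothetical, and the percentile method is the default of `statistics.quantiles`.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latencies_ms(fn: Callable[[], object], runs: int = 1000) -> List[float]:
    """Time `runs` sequential invocations of fn and return latencies in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize(samples_ms: List[float]) -> Dict[str, float]:
    """Summarize latency samples; throughput assumes calls ran back to back."""
    cuts = statistics.quantiles(samples_ms, n=100)  # 99 cut points: cuts[k-1] is the k-th percentile
    total_s = sum(samples_ms) / 1000.0
    return {
        "median_ms": statistics.median(samples_ms),
        "p95_ms": cuts[94],
        "p99_ms": cuts[98],
        "max_ms": max(samples_ms),
        "throughput_per_s": len(samples_ms) / total_s if total_s > 0 else float("inf"),
    }
```

Passing the validator call as `fn`, for example `summarize(measure_latencies_ms(lambda: my_validate(call)))` with a hypothetical `my_validate`, gives a summary in the same shape as the figures above.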

6.4. Generalizability Assessment

- LLM Compatibility: Successfully validated tool calls from multiple language models including GPT-4, Claude, and open-source alternatives. This demonstrates format-agnostic processing capabilities.

- Tool Domain Coverage: Effective validation across heterogeneous tool categories (APIs, databases, file systems, external services) without domain-specific customization.

- Integration Complexity: Framework integration required minimal code modifications (2-15 lines) across popular LLM frameworks, supporting practical adoption.
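To illustrate how an integration of only a few lines might look, the sketch below wraps a tool function in a decorator that consults a validator before executing it. Everything here is an assumption for illustration: the decorator `guarded`, the exception `ToolCallRejected`, and the toy validator are hypothetical and do not represent HGuard's actual API or any specific framework's hooks.

```python
import functools
from typing import Any, Callable, Dict


class ToolCallRejected(Exception):
    """Raised when the (hypothetical) validator blocks a call."""


def guarded(validator: Callable[[str, Dict[str, Any]], bool]):
    """Decorator factory: run validator(tool_name, params) before the tool."""
    def decorate(tool: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(tool)
        def wrapper(**params: Any) -> Any:
            if not validator(tool.__name__, params):
                raise ToolCallRejected(f"{tool.__name__} blocked")
            return tool(**params)
        return wrapper
    return decorate


def only_known_cities(tool_name: str, params: Dict[str, Any]) -> bool:
    """Toy validator: accept calls only for an allow-listed set of cities."""
    return params.get("city") in {"Basel", "Zurich"}


@guarded(only_known_cities)
def get_weather(city: str) -> str:
    return f"forecast for {city}"
```

With this shape, adding validation to an existing tool costs one decorator line per function, which is in the range the bullet above reports.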

7. Discussion

7.1. Key Findings

7.2. Limitations and Challenges

- Schema Maintenance: Tool schemas require regular updates to remain synchronized with backend API changes. This maintenance burden could become significant in environments with frequently evolving APIs.

- Context Limitations: The current implementation does not incorporate full conversation history or user permission models in policy decisions, so it may miss context-dependent safety issues.

- Policy Complexity: While YAML-based policies provide accessibility, complex business logic may require more sophisticated policy languages or custom validation functions.

7.3. Comparison with Existing Approaches

- Real-time Validation: Unlike post-hoc analysis tools, our system provides immediate feedback and prevention capabilities.

- Tool-specific Focus: While general-purpose content filters exist, HGuard specifically addresses the unique challenges of tool use validation.

- Policy Flexibility: A configurable policy engine supports diverse organizational requirements without code changes.

8. Future Work

8.1. Advanced Policy Languages

- Temporal Logic: Support for time-based constraints and workflows

- Probabilistic Policies: Risk-based decision making with uncertainty quantification

- Learning Policies: Adaptive policies that evolve based on observed patterns

8.2. Context-Aware Validation

- Conversation History: Full context consideration in validation decisions

- User Modeling: Personalized safety thresholds based on user profiles

- Intent Recognition: Deeper understanding of user goals to improve validation accuracy

8.3. Machine Learning Integration

- Anomaly Detection: ML-based identification of unusual tool use patterns

- Semantic Validation: Understanding parameter semantics beyond syntactic validation

- Feedback Learning: Improvement of validation accuracy through user feedback

9. Conclusion

Acknowledgments

References

- Schick, T., Dwivedi-Yu, J., Dessì, R., Raileanu, R., Lomeli, M., Zettlemoyer, L., ... & Scialom, T. (2023). Toolformer: Language models can teach themselves to use tools. arXiv preprint arXiv:2302.04761.

- Qin, Y., Liang, S., Ye, Y., Zhu, K., Yan, L., Lu, Y., ... & Sun, M. (2023). ToolLLM: Facilitating large language models to master 16000+ real-world APIs. arXiv preprint arXiv:2307.16789.

- Ji, Z., Lee, N., Frieske, R., Yu, T., Su, D., Xu, Y., ... & Fung, P. (2023). Survey of hallucination in natural language generation. ACM Computing Surveys, 55(12), 1-38.

- Zhang, Y., Li, Y., Cui, L., Cai, D., Liu, L., Fu, T., ... & Shi, S. (2023). Siren's song in the AI ocean: a survey on hallucination in large language models. arXiv preprint arXiv:2309.01219.

- Greshake, K., Abdelnabi, S., Mishra, S., Endres, C., Holz, T., & Fritz, M. (2023). Not what you've signed up for: Compromising real-world LLM-integrated applications with indirect prompt injection. arXiv preprint arXiv:2302.12173.

- Liu, Y., Deng, G., Xu, Z., Li, Y., Zheng, Y., Zhang, Y., ... & Zhang, T. (2023). Jailbreaking ChatGPT via prompt engineering: an empirical study. arXiv preprint arXiv:2305.13860.

- Perez, F., Ribeiro, I., Malmaud, J., Soares, C., Lyu, R., Zheng, Y., ... & Sharma, A. (2022). Red teaming language models with language models. arXiv preprint arXiv:2202.03286.

- Bai, Y., Kadavath, S., Kundu, S., Askell, A., Kernion, J., Jones, A., ... & Kaplan, J. (2022). Constitutional AI: Harmlessness from AI feedback. arXiv preprint arXiv:2212.08073.

- Hendrycks, D., Burns, C., Basart, S., Zou, A., Mazeika, M., Song, D., & Steinhardt, J. (2021). Measuring massive multitask language understanding. arXiv preprint arXiv:2009.03300.

- Ganguli, D., Lovitt, L., Kernion, J., Askell, A., Bai, Y., Kadavath, S., ... & Kaplan, J. (2022). Red teaming language models to reduce harms: Methods, scaling behaviors, and lessons learned. arXiv preprint arXiv:2209.07858.

- Yao, S., Zhao, J., Yu, D., Du, N., Shafran, I., Narasimhan, K., & Cao, Y. (2022). ReAct: Synergizing reasoning and acting in language models. arXiv preprint arXiv:2210.03629.

- OpenAI. (2023). Function calling and other API updates. Retrieved from https://openai.com/blog/function-calling-and-other-api-updates.

- OWASP Foundation. (2023). OWASP Top 10 for Large Language Model Applications. Retrieved from https://owasp.org/www-project-top-10-for-large-language-model-applications/.

- Wei, A., Haghtalab, N., & Steinhardt, J. (2023). Jailbroken: How does LLM safety training fail? arXiv preprint arXiv:2307.02483.

- Zou, A., Wang, Z., Kolter, J. Z., & Fredrikson, M. (2023). Universal and transferable adversarial attacks on aligned language models. arXiv preprint arXiv:2307.15043.

- Carlini, N., Ippolito, D., Jagielski, M., Lee, K., Tramer, F., & Zhang, C. (2023). Quantifying memorization across neural language models. arXiv preprint arXiv:2202.07646.

- Gehman, S., Gururangan, S., Sap, M., Choi, Y., & Smith, N. A. (2020). Realtoxicityprompts: Evaluating neural toxic degeneration in language models. arXiv preprint arXiv:2009.11462.

- Welbl, J., Glaese, A., Uesato, J., Dathathri, S., Mellor, J., Hendricks, L. A., ... & Irving, G. (2021). Challenges in detoxifying language models. arXiv preprint arXiv:2109.07445.

- Solaiman, I., Brundage, M., Clark, J., Askell, A., Herbert-Voss, A., Wu, J., ... & Wang, J. (2019). Release strategies and the social impacts of language models. arXiv preprint arXiv:1908.09203.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).