Submitted: 12 June 2025

Posted: 13 June 2025


Abstract

Keywords:

1. Introduction

1.1. Background

1.2. Research Status

1.3. Purpose and Structure of the Paper

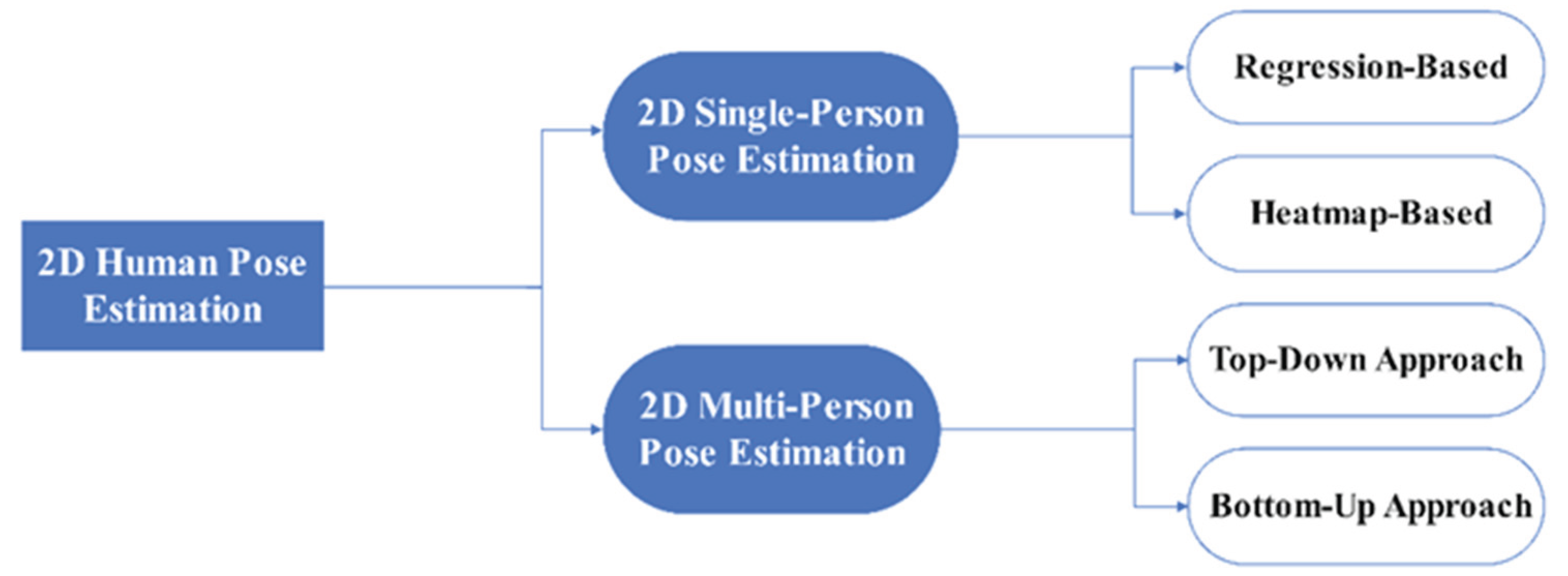

2. 2D Human Pose Estimation

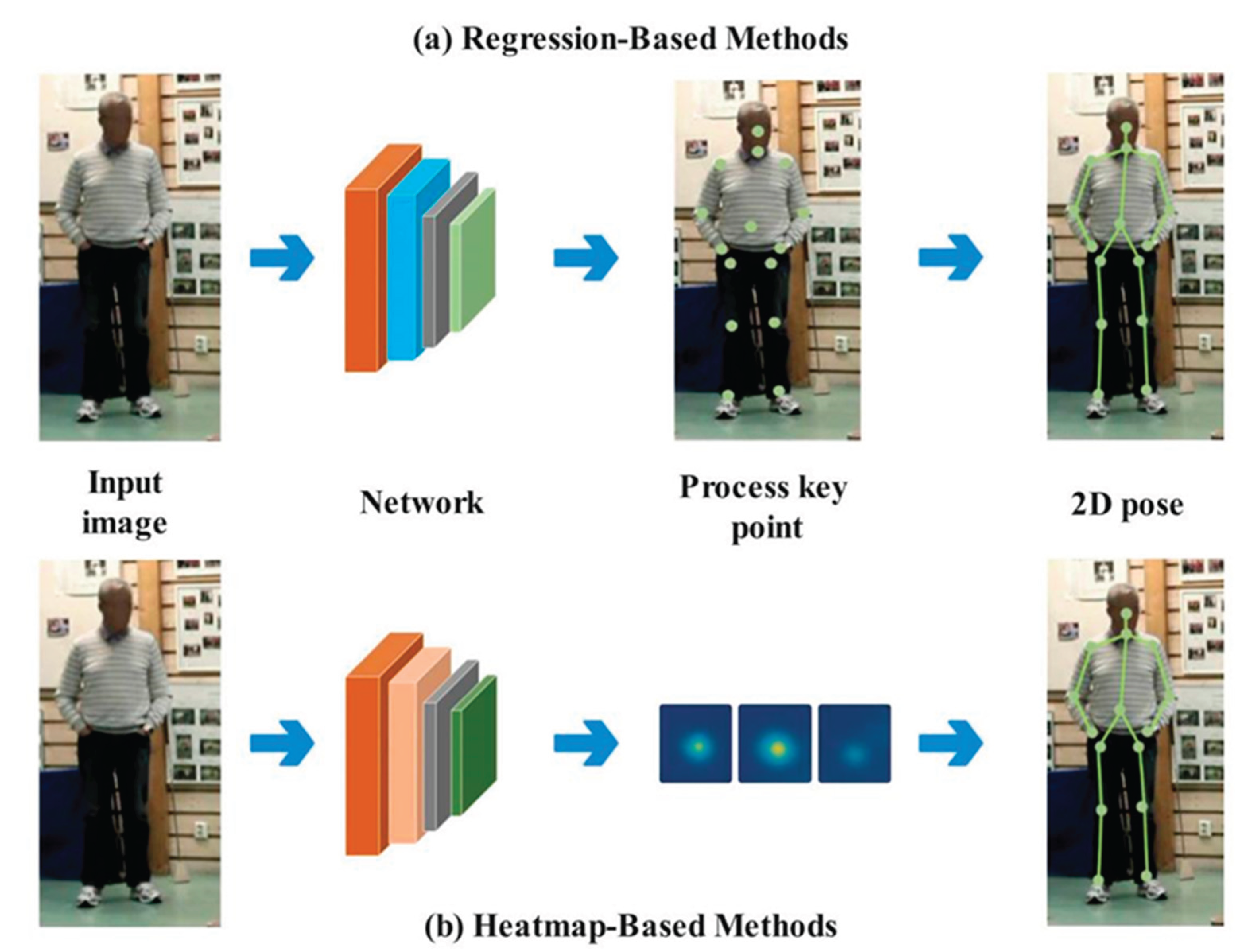

2.1. 2D Single-Person Pose Estimation

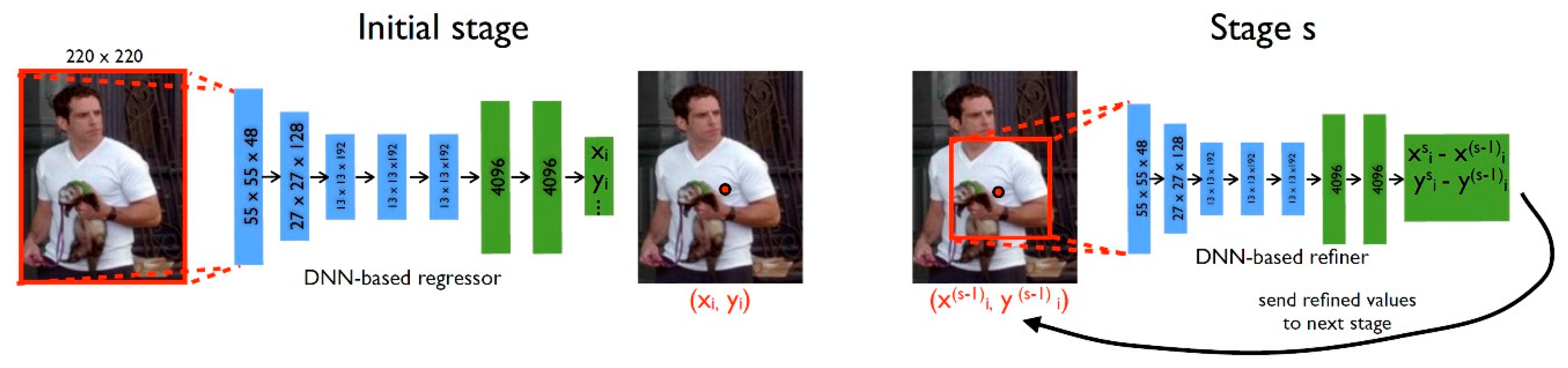

2.1.1. Regression-Based Pose Estimation

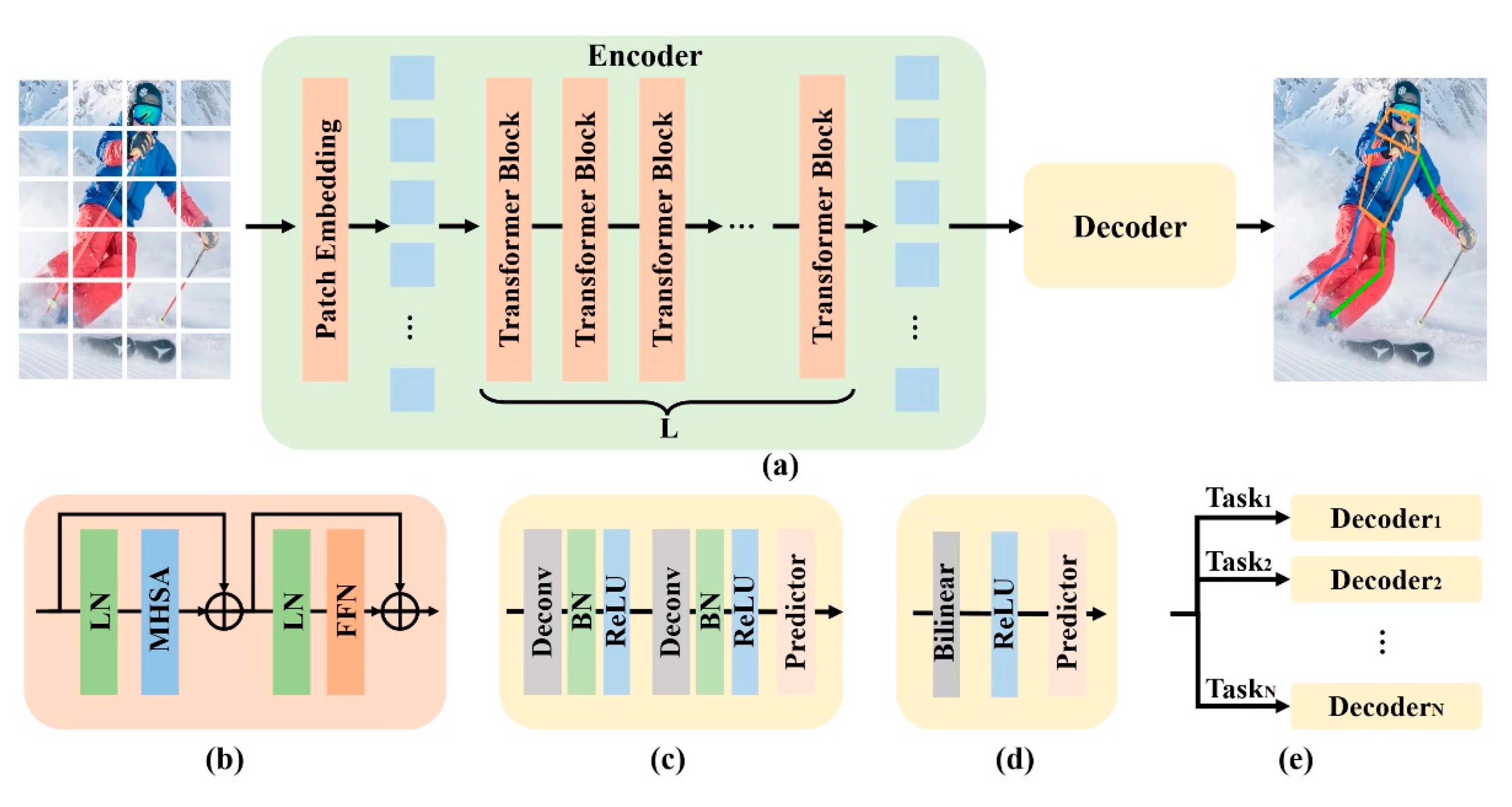

2.1.2. Heatmap-Based Pose Estimation

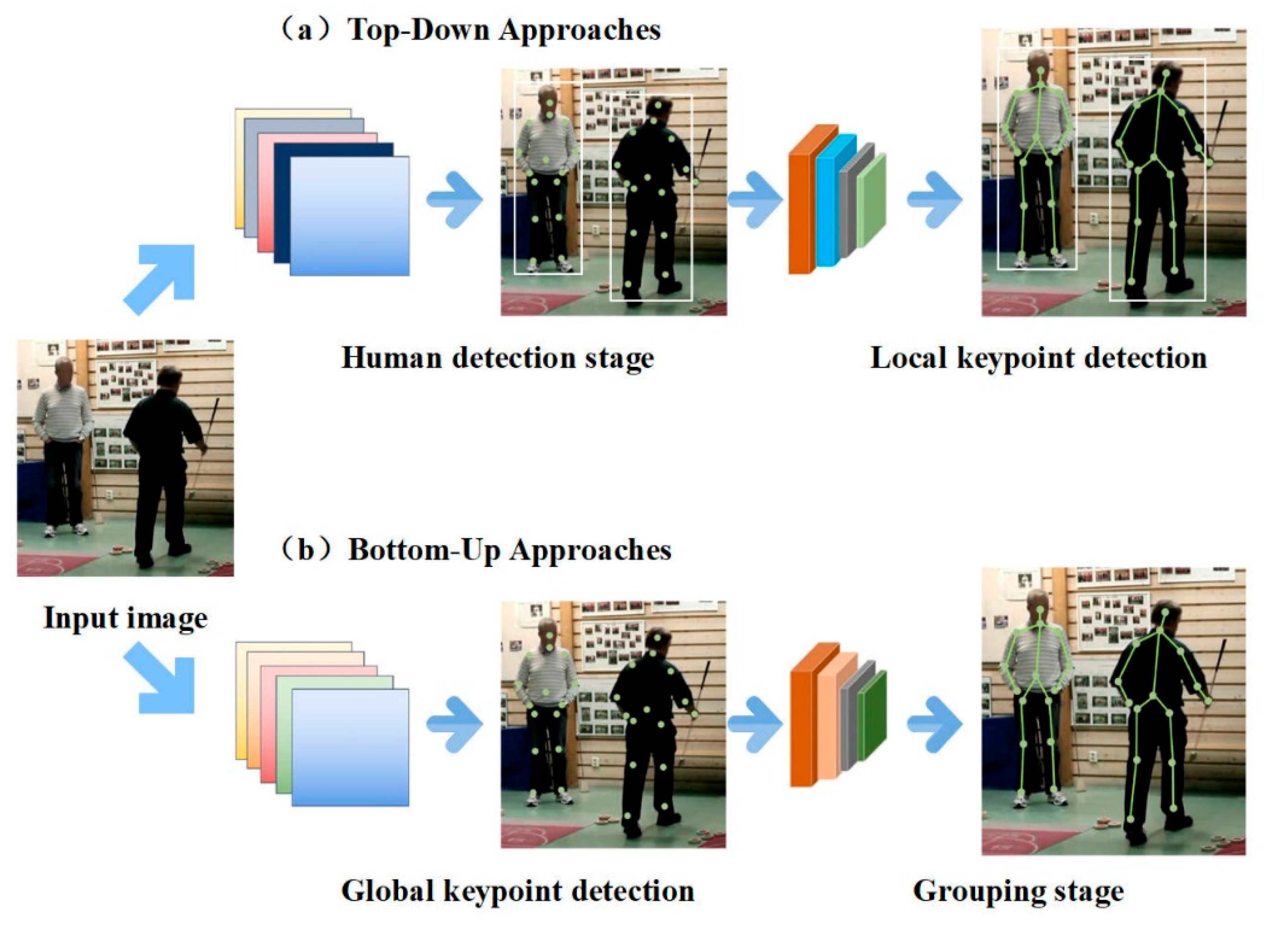

2.2. 2D Multi-Person Pose Estimation

2.2.1. Top-Down Approach of 2D Multi-Person Pose Estimation

2.2.2. Bottom-Up Approach of 2D Multi-Person Pose Estimation

3. Evaluation Metrics and Benchmark Datasets

3.1. Evaluation Metrics

3.2. Benchmark Datasets

3.2.1. Max Planck Institute for Informatics (MPII)

3.2.2. Common Objects in Context (COCO)

3.2.3. Leeds Sports Pose (LSP) and LSP Extended (LSPE)

3.2.4. Occluded Human Dataset (OCHuman)

3.2.5. CrowdPose Dataset

4. Top-Performing Models on Benchmark Datasets

4.1. Top-Performing Models on MPII Dataset

4.2. Top-Performing Models on COCO Test-Dev Dataset

| Model | Year | Backbone | AP | Input size | Characteristic |
| --- | --- | --- | --- | --- | --- |
| ViTPose [33] | 2022 | ViTAE-G | 80.9 | 256×192 | ViTPose is built on a streamlined ViT architecture, offering a compelling combination of high performance, scalability, and strong transferability. |
| UDP-Pose-PSA [56] | 2021 | HRNet-W48 | 79.5 | 384×288 | UDP-Pose-PSA integrates HRNet-W48 with polarized self-attention to enhance long-range dependency modeling, thereby improving the accuracy and fine-grained performance of keypoint detection. |
| 4×RSN-50 (ensemble) [52] | 2020 | ResNet-50 | 79.2 | 256×192 | 4×RSN-50 (ensemble) integrates four multi-branch Residual Steps Networks to effectively fuse intra-layer features. |
| CCM+ [57] | 2020 | HRNet-W48 | 78.9 | 384×288 | CCM+ significantly improves keypoint detection accuracy through cascaded contextual fusion, sub-pixel localization, and an efficient training strategy. |
| UDP-Pose-PSA [56] | 2021 | HRNet-W48 | 78.9 | 256×192 | UDP-Pose-PSA achieves an AP of 78.9 at an input resolution of 256×192, which is slightly lower than the 79.5 obtained at 384×288. |

4.3. Top-Performing Models on LSP Dataset

| Model | Year | Backbone | PCK | Input size | Characteristic |

| Soft-gated Skip Connections [59] | 2020 | HourGlassand U-Net | 94.8% | 256×256 | A channel scaling factor is introduced to optimize feature fusion, enhancing both model accuracy and computational efficiency. |

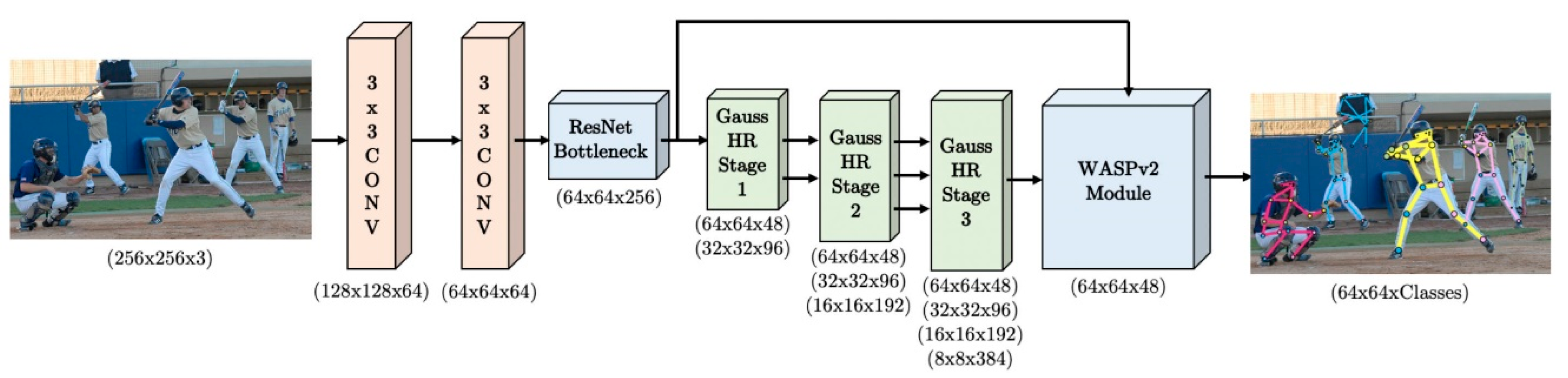

| UniPose [60] | 2020 | ResNet-101 | 94.5% | 1280×720 | UniPose integrates a ResNet backbone with the WASP module to enable efficient end-to-end pose estimation and bounding box detection. |

| Residual Hourglass + ASR + AHO [61] | 2018 | Stacked Hourglass | 94.5% | 256×256 | Residual Hourglass combines residual structures with adversarial enhancement to improve multi-scale feature modeling and the accuracy of keypoint localization. |

| SAHPE-Network [62] | 2017 | Stacked Hourglass Network | 94% | 256×256 | This approach introduces a discriminator to learn structural constraints, thereby enhancing the accuracy and plausibility of pose estimation. |

| PRMs [25] | 2017 | stacked Hourglass network | 93.9% | 256×256 | PRMs employs multi-branch convolutions to extract multi-scale features, enhancing the adaptability and accuracy of pose estimation under varying scales. |

4.4. Top-Performing Models on OCHuman Dataset

| Model | Year | Backbone | Test AP | Input size | Characteristic |

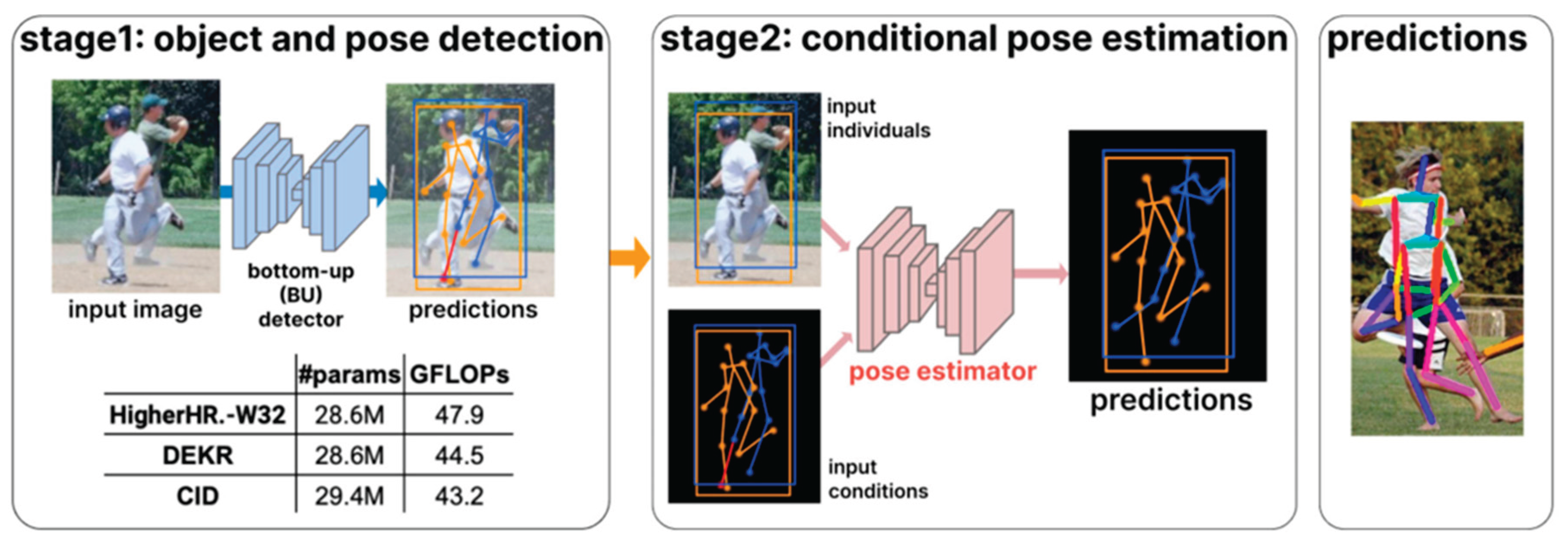

| BUCTD [63] | 2023 | CID-W32 | 47.2 | 256×192 | BUCTD leverages bottom-up pose estimation as a conditional input to enhance accuracy and robustness in crowded and occluded scenarios. |

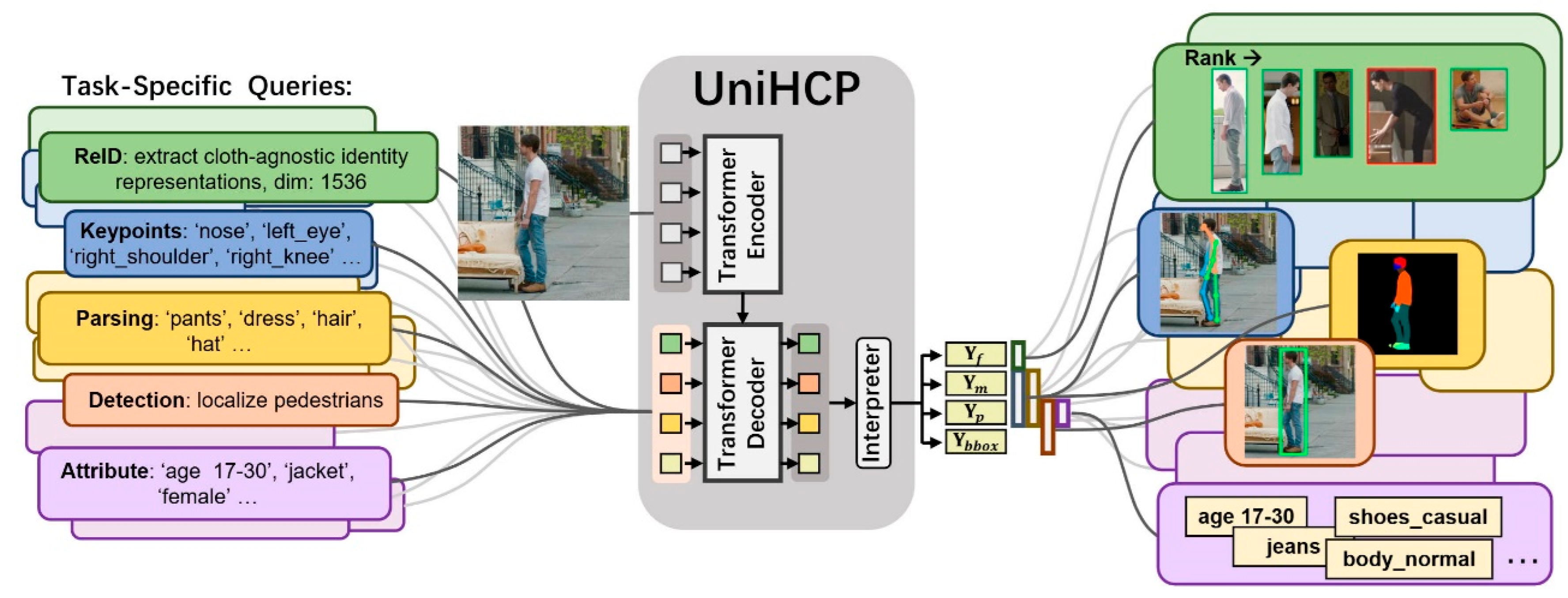

| HQNet [64] | 2024 | ViT-L | 45.6 | 512×512 | HQNet enables unified modeling of multi-task human understanding by sharing a Transformer decoder and a human query mechanism. |

| CID [32] | 2022 | HRNet-W48 | 45.0 | 512x512 | CID introduces a context-instance decoupling mechanism that employs spatial and channel attention to extract instance-aware features for keypoint estimation. |

| MIPNet [62] | 2021 | HRNet-W48 | 42.5 | 256×256 | MIPNet introduces a Multi-Instance Modulation Block (MIMB) that enables efficient prediction of multiple pose instances within a single detection box. |

| HQNet [64] | 2024 | ResNet-50 | 40.0 | 512×512 | Built on a ResNet-50 backbone, the model offers strong spatial locality modeling, high computational efficiency, and a lightweight structure. |

4.5. Top-Performing Models on CrowdPose Dataset

| Model | Year | Backbone | AP | Input size | Characteristic |
| --- | --- | --- | --- | --- | --- |
| ViTPose [33] | 2022 | ViTAE-G | 78.3 | 256×192 | ViTPose is built on a streamlined ViT architecture, offering a compelling combination of high performance, scalability, and strong transferability. |
| BUCTD [63] | 2023 | HRNet-W48 | 76.7 | 384×288 | The BUCTD model integrates conditional inputs inspired by the PETR framework. |
| SwinV2-L 1K-MIM [66] | 2022 | Swin Transformer | 75.5 | 384×384 | By employing self-supervised Masked Image Modeling (MIM) pretraining on the ImageNet-1K dataset, the model is able to learn rich and generalizable representations. |
| SwinV2-B 1K-MIM [66] | 2022 | Swin Transformer | 74.9 | 224×224 | Self-supervised pretraining with Masked Image Modeling (MIM) significantly enhances the model's performance on geometric and motion-related tasks. |
| BUCTD [63] | 2023 | ResNet-50 | 72.9 | 384×288 | The BUCTD model uses the CoAM-W48 configuration without employing any sampling strategy. |

5. Conclusions and Future Perspectives

- Develop unified end-to-end frameworks that integrate object detection, keypoint localization, and post-processing. These frameworks should emphasize the use of Transformer architectures to capture long-range spatial and semantic dependencies;

- Improve the robustness of keypoint prediction under occlusion. This can be achieved by incorporating generative models and self-supervised learning techniques;

- Design compact models that are efficient during inference. These models should be suitable for deployment on resource-constrained platforms such as mobile phones and wearable devices;

- Enhance model adaptability across different datasets, environments, and population groups. This can be achieved by leveraging cross-domain generalization techniques such as domain adaptation and transfer learning;

- Build more diverse and densely annotated datasets. Additionally, refine evaluation protocols so that pose estimation can be extended to high-level tasks, such as action recognition and semantic behavior analysis.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Sun, R., Lin, Z., Leng, S., Wang, A., & Zhao, L. (2025). An In-Depth Analysis of 2D and 3D Pose Estimation Techniques in Deep Learning: Methodologies and Advances. Electronics, 14(7), 1307.

- Krizhevsky, A., Sutskever, I., & Hinton, G. E. (2012). Imagenet classification with deep convolutional neural networks. Advances in neural information processing systems, 25.

- Kipf, T. N., & Welling, M. (2016). Semi-supervised classification with graph convolutional networks. arXiv preprint arXiv:1609.02907.

- Tian, L., Wang, P., Liang, G., & Shen, C. (2021). An adversarial human pose estimation network injected with graph structure. Pattern Recognition, 115, 107863.

- Felzenszwalb, P. F., & Huttenlocher, D. P. (2005). Pictorial structures for object recognition. International journal of computer vision, 61, 55-79.

- Felzenszwalb, P., McAllester, D., & Ramanan, D. (2008, June). A discriminatively trained, multiscale, deformable part model. In 2008 IEEE conference on computer vision and pattern recognition (pp. 1-8). IEEE.

- Cao, Z., Simon, T., Wei, S. E., & Sheikh, Y. (2017). Realtime multi-person 2d pose estimation using part affinity fields. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 7291-7299).

- He, K., Gkioxari, G., Dollár, P., & Girshick, R. (2017). Mask r-cnn. In Proceedings of the IEEE international conference on computer vision (pp. 2961-2969).

- Xiao, B., Wu, H., & Wei, Y. (2018). Simple baselines for human pose estimation and tracking. In Proceedings of the European conference on computer vision (ECCV) (pp. 466-481).

- Wang, J., Sun, K., Cheng, T., Jiang, B., Deng, C., Zhao, Y., ... & Xiao, B. (2020). Deep high-resolution representation learning for visual recognition. IEEE transactions on pattern analysis and machine intelligence, 43(10), 3349-3364.

- Newell, A., Huang, Z., & Deng, J. (2017). Associative embedding: End-to-end learning for joint detection and grouping. Advances in neural information processing systems, 30.

- Cheng, B., Xiao, B., Wang, J., Shi, H., Huang, T. S., & Zhang, L. (2020). Higherhrnet: Scale-aware representation learning for bottom-up human pose estimation. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 5386-5395).

- Ma, X., Su, J., Wang, C., Ci, H., & Wang, Y. (2021). Context modeling in 3d human pose estimation: A unified perspective. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 6238-6247).

- Li, Y., Zhang, S., Wang, Z., Yang, S., Yang, W., Xia, S. T., & Zhou, E. (2021). Tokenpose: Learning keypoint tokens for human pose estimation. In Proceedings of the IEEE/CVF International conference on computer vision (pp. 11313-11322).

- Yuan, Y., Fu, R., Huang, L., Lin, W., Zhang, C., Chen, X., & Wang, J. (2021). Hrformer: High-resolution transformer for dense prediction. arXiv preprint arXiv:2110.09408.

- Yu, C., Xiao, B., Gao, C., Yuan, L., Zhang, L., Sang, N., & Wang, J. (2021). Lite-hrnet: A lightweight high-resolution network. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 10440-10450).

- Hou, T., Ahmadyan, A., Zhang, L., Wei, J., & Grundmann, M. (2020). MobilePose: Real-time pose estimation for unseen objects with weak shape supervision. arXiv preprint arXiv:2003.03522.

- Toshev, A., & Szegedy, C. (2014). Deeppose: Human pose estimation via deep neural networks. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 1653-1660).

- Carreira, J., Agrawal, P., Fragkiadaki, K., & Malik, J. (2016). Human pose estimation with iterative error feedback. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 4733-4742).

- Sun, X., Xiao, B., Wei, F., Liang, S., & Wei, Y. (2018). Integral human pose regression. In Proceedings of the European conference on computer vision (ECCV) (pp. 529-545).

- Moon, G., Chang, J. Y., & Lee, K. M. (2019). Posefix: Model-agnostic general human pose refinement network. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 7773-7781).

- Tompson, J. J., Jain, A., LeCun, Y., & Bregler, C. (2014). Joint training of a convolutional network and a graphical model for human pose estimation. Advances in neural information processing systems, 27.

- Wei, S. E., Ramakrishna, V., Kanade, T., & Sheikh, Y. (2016). Convolutional pose machines. In Proceedings of the IEEE conference on Computer Vision and Pattern Recognition (pp. 4724-4732).

- Newell, A., Yang, K., & Deng, J. (2016). Stacked hourglass networks for human pose estimation. In Computer Vision–ECCV 2016: 14th European Conference, Amsterdam, The Netherlands, October 11-14, 2016, Proceedings, Part VIII 14 (pp. 483-499). Springer International Publishing.

- Yang, W., Li, S., Ouyang, W., Li, H., & Wang, X. (2017). Learning feature pyramids for human pose estimation. In proceedings of the IEEE international conference on computer vision (pp. 1281-1290).

- Chu, X., Yang, W., Ouyang, W., Ma, C., Yuille, A. L., & Wang, X. (2017). Multi-context attention for human pose estimation. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 1831-1840).

- Zhang, F., Zhu, X., Dai, H., Ye, M., & Zhu, C. (2020). Distribution-aware coordinate representation for human pose estimation. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 7093-7102).

- Huang, J., Zhu, Z., Guo, F., & Huang, G. (2020). The devil is in the details: Delving into unbiased data processing for human pose estimation. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 5700-5709).

- Li, K., Wang, S., Zhang, X., Xu, Y., Xu, W., & Tu, Z. (2021). Pose recognition with cascade transformers. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 1944-1953).

- Wang, J., Long, X., Gao, Y., Ding, E., & Wen, S. (2020). Graph-pcnn: Two stage human pose estimation with graph pose refinement. In Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part XI 16 (pp. 492-508). Springer International Publishing.

- Kocabas, M., Karagoz, S., & Akbas, E. (2018). Multiposenet: Fast multi-person pose estimation using pose residual network. In Proceedings of the European conference on computer vision (ECCV) (pp. 417-433).

- Khirodkar, R., Chari, V., Agrawal, A., & Tyagi, A. (2021). Multi-instance pose networks: Rethinking top-down pose estimation. In Proceedings of the IEEE/CVF International conference on computer vision (pp. 3122-3131).

- Xu, Y., Zhang, J., Zhang, Q., & Tao, D. (2022). Vitpose: Simple vision transformer baselines for human pose estimation. Advances in neural information processing systems, 35, 38571-38584.

- Cao, Z., Hidalgo, G., Simon, T., Wei, S. E., & Sheikh, Y. (2019). Openpose: Realtime multi-person 2d pose estimation using part affinity fields. IEEE transactions on pattern analysis and machine intelligence, 43(1), 172-186.

- Papandreou, G., Zhu, T., Chen, L. C., Gidaris, S., Tompson, J., & Murphy, K. (2018). Personlab: Person pose estimation and instance segmentation with a bottom-up, part-based, geometric embedding model. In Proceedings of the European conference on computer vision (ECCV) (pp. 269-286).

- Li, M., Zhou, Z., Li, J., & Liu, X. (2018, August). Bottom-up pose estimation of multiple person with bounding box constraint. In 2018 24th international conference on pattern recognition (ICPR) (pp. 115-120). IEEE.

- Jin, S., Ma, X., Han, Z., Wu, Y., Yang, W., Liu, W., ... & Ouyang, W. (2017, October). Towards multi-person pose tracking: Bottom-up and top-down methods. In ICCV posetrack workshop (Vol. 2, No. 3, p. 7).

- Li, J., Su, W., & Wang, Z. (2020, April). Simple pose: Rethinking and improving a bottom-up approach for multi-person pose estimation. In Proceedings of the AAAI conference on artificial intelligence (Vol. 34, No. 07, pp. 11354-11361).

- Geng, Z., Sun, K., Xiao, B., Zhang, Z., & Wang, J. (2021). Bottom-up human pose estimation via disentangled keypoint regression. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 14676-14686).

- Shi, D., Wei, X., Li, L., Ren, Y., & Tan, W. (2022). End-to-end multi-person pose estimation with transformers. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 11069-11078).

- Barron, J. L., Fleet, D. J., & Beauchemin, S. S. (1994). Performance of optical flow techniques. International journal of computer vision, 12, 43-77.

- Ferrari, V., Marin-Jimenez, M., & Zisserman, A. (2008, June). Progressive search space reduction for human pose estimation. In 2008 IEEE Conference on Computer Vision and Pattern Recognition (pp. 1-8). IEEE.

- Yang, Y., & Ramanan, D. (2012). Articulated human detection with flexible mixtures of parts. IEEE transactions on pattern analysis and machine intelligence, 35(12), 2878-2890.

- Andriluka, M., Pishchulin, L., Gehler, P., & Schiele, B. (2014). 2d human pose estimation: New benchmark and state of the art analysis. In Proceedings of the IEEE Conference on computer Vision and Pattern Recognition (pp. 3686-3693).

- Sun, X., Shang, J., Liang, S., & Wei, Y. (2017). Compositional human pose regression. In Proceedings of the IEEE international conference on computer vision (pp. 2602-2611).

- Lin, T. Y., Maire, M., Belongie, S., Hays, J., Perona, P., Ramanan, D., ... & Zitnick, C. L. (2014). Microsoft coco: Common objects in context. In Computer vision–ECCV 2014: 13th European conference, zurich, Switzerland, September 6-12, 2014, proceedings, part v 13 (pp. 740-755). Springer International Publishing.

- Everingham, M., Van Gool, L., Williams, C. K., Winn, J., & Zisserman, A. (2010). The pascal visual object classes (voc) challenge. International journal of computer vision, 88, 303-338.

- Johnson, S., & Everingham, M. (2010, August). Clustered pose and nonlinear appearance models for human pose estimation. In bmvc (Vol. 2, No. 4, p. 5).

- Zhang, S. H., Li, R., Dong, X., Rosin, P., Cai, Z., Han, X., ... & Hu, S. M. (2019). Pose2seg: Detection free human instance segmentation. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 889-898).

- Li, J., Wang, C., Zhu, H., Mao, Y., Fang, H. S., & Lu, C. (2019). Crowdpose: Efficient crowded scenes pose estimation and a new benchmark. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 10863-10872).

- Geng, Z., Wang, C., Wei, Y., Liu, Z., Li, H., & Hu, H. (2023). Human pose as compositional tokens. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 660-671).

- Cai, Y., Wang, Z., Luo, Z., Yin, B., Du, A., Wang, H., ... & Sun, J. (2020, August). Learning delicate local representations for multi-person pose estimation. In European conference on computer vision (pp. 455-472). Cham: Springer International Publishing.

- Artacho, B., & Savakis, A. (2020). Unipose: Unified human pose estimation in single images and videos. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 7035-7044).

- Li, W., Wang, Z., Yin, B., Peng, Q., Du, Y., Xiao, T., ... & Sun, J. (2019). Rethinking on multi-stage networks for human pose estimation. arXiv preprint arXiv:1901.00148.

- Zhang, H., Ouyang, H., Liu, S., Qi, X., Shen, X., Yang, R., & Jia, J. (2019). Human pose estimation with spatial contextual information. arXiv preprint arXiv:1901.01760.

- Liu, H., Liu, F., Fan, X., & Huang, D. (2021). Polarized self-attention: Towards high-quality pixel-wise regression. arXiv preprint arXiv:2107.00782.

- Zhang, J., Chen, Z., & Tao, D. (2021). Towards high performance human keypoint detection. International Journal of Computer Vision, 129(9), 2639-2662.

- Artacho, B., & Savakis, A. (2021). Omnipose: A multi-scale framework for multi-person pose estimation. arXiv preprint arXiv:2103.10180.

- Bulat, A., Kossaifi, J., Tzimiropoulos, G., & Pantic, M. (2020, November). Toward fast and accurate human pose estimation via soft-gated skip connections. In 2020 15th IEEE international conference on automatic face and gesture recognition (FG 2020) (pp. 8-15). IEEE.

- Artacho, B., & Savakis, A. (2020). Unipose: Unified human pose estimation in single images and videos. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 7035-7044).

- Peng, X., Tang, Z., Yang, F., Feris, R. S., & Metaxas, D. (2018). Jointly optimize data augmentation and network training: Adversarial data augmentation in human pose estimation. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 2226-2234).

- Chou, C. J., Chien, J. T., & Chen, H. T. (2018, November). Self adversarial training for human pose estimation. In 2018 Asia-Pacific Signal and Information Processing Association Annual Summit and Conference (APSIPA ASC) (pp. 17-30). IEEE.

- Zhou, M., Stoffl, L., Mathis, M. W., & Mathis, A. (2023). Rethinking pose estimation in crowds: overcoming the detection information bottleneck and ambiguity. In Proceedings of the IEEE/CVF International Conference on Computer Vision (pp. 14689-14699).

- Jin, S., Li, S., Li, T., Liu, W., Qian, C., & Luo, P. (2024, September). You only learn one query: learning unified human query for single-stage multi-person multi-task human-centric perception. In European Conference on Computer Vision (pp. 126-146). Cham: Springer Nature Switzerland.

- Wang, D., & Zhang, S. (2022). Contextual instance decoupling for robust multi-person pose estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 11060-11068).

- Xie, Z., Geng, Z., Hu, J., Zhang, Z., Hu, H., & Cao, Y. (2023). Revealing the dark secrets of masked image modeling. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 14475-14485).

| Metric Name | Full Name | Normalized | Structure Considered | Confidence Considered | Applicable Datasets |
| --- | --- | --- | --- | --- | --- |
| PCP [42] | Percentage of Correct Parts | √ | √ | × | LSP, LSP-Extended |
| PCK [43] | Percentage of Correct Keypoints | √ | × | × | MPII, LSP, AI Challenger |
| PCKh [44] | Head-Normalized PCK | √ | × | × | MPII |
| AUC [45] | Area Under Curve | √ | × | × | AI Challenger |
| OKS [46] | Object Keypoint Similarity | √ | √ | √ | COCO, PoseTrack |
| AP@OKS [46] | Average Precision based on OKS | √ | √ | √ | COCO, CrowdPose |
| AR@OKS [46] | Average Recall based on OKS | √ | √ | √ | COCO, CrowdPose |
| mAP@OKS [46] | Mean Average Precision based on OKS | √ | √ | √ | COCO, PoseTrack, OCHuman |
| IoU [47] | Intersection over Union | √ | × | × | COCO |

| Metric Name | Computation Principle | Advantages | Limitations |
| --- | --- | --- | --- |
| PCP [42] | A body part is considered correct if both predicted endpoints fall within a tolerance distance of the ground truth endpoints. | Captures structural correctness; accounts for limb connectivity. | Sensitive to limb length; ineffective for small parts; rarely used in modern benchmarks. |
| PCK [43] | A keypoint prediction is correct if it lies within α × reference length of the ground truth point. | Simple and intuitive; widely adopted in early pose estimation research. | Threshold-dependent; not scale-invariant; ignores keypoint visibility. |
| PCKh [44] | Similar to PCK but uses head segment length as the normalization factor. | Better normalization for human scale; standard for the MPII dataset. | Depends on accurate head annotation; not suitable for multi-person scenarios. |
| AUC [45] | Computes the area under the PCK curve across varying thresholds. | Aggregates performance across multiple thresholds; reduces threshold sensitivity. | Less interpretable; inherits limitations of PCK. |
| OKS [46] | Measures keypoint similarity using a Gaussian penalty based on Euclidean distance, normalized by object scale and keypoint-specific constants. | Scale-invariant; considers keypoint visibility and relative importance. | Sensitive to hyperparameters (e.g., σ); requires manual calibration; complex to compute. |
| AP@OKS [46] | Computes average precision across multiple OKS thresholds (0.50 to 0.95) using confidence-ranked predictions. | Official COCO benchmark; evaluates both localization and detection confidence. | High computational cost; strongly dependent on confidence ranking; penalizes slight deviations. |
| AR@OKS [46] | Computes average recall at a fixed OKS threshold under varying detection limits (e.g., maxDets=20). | Measures detection completeness; useful in dense or occluded scenes. | Does not reflect precision; prone to false positives; affected by maxDets setting. |
| mAP@OKS [46] | Mean of AP@OKS scores across 10 OKS thresholds (0.50–0.95, step size 0.05). | Standard benchmark for multi-person pose estimation; balances precision and recall across scales and occlusion. | Sensitive to confidence calibration; penalizes invisible or slightly off keypoints; computationally intensive. |
| IoU [47] | Ratio of overlap area to union area between predicted and ground truth bounding boxes. | Intuitive geometric measure; standard in object detection. | Inapplicable to keypoint evaluation; insensitive to pose structure or semantics. |
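To make the PCK and OKS rows above concrete, the following is a minimal NumPy sketch of both computations for a single person instance. The function names `pck` and `oks`, their parameters, and the toy inputs are illustrative assumptions rather than part of any benchmark toolkit. The OKS form assumed here is the commonly used COCO-style one, in which the squared distance of each labeled keypoint is divided by 2 · area · (2σ)² before the Gaussian is applied; passing the head segment length as `ref_length` would turn `pck` into PCKh.

```python
import numpy as np


def pck(pred, gt, ref_length, alpha=0.5, visible=None):
    """Fraction of keypoints lying within alpha * ref_length of the ground truth.

    pred, gt: arrays of shape (K, 2). ref_length is the normalization length
    (assumption: e.g. head segment length for PCKh, torso size for PCK).
    """
    pred = np.asarray(pred, dtype=float)
    gt = np.asarray(gt, dtype=float)
    dist = np.linalg.norm(pred - gt, axis=-1)
    if visible is None:
        visible = np.ones(len(gt), dtype=bool)
    visible = np.asarray(visible, dtype=bool)
    if not visible.any():
        return 0.0
    return float(np.mean(dist[visible] <= alpha * ref_length))


def oks(pred, gt, visibility, area, sigmas):
    """Object Keypoint Similarity for one instance (assumed COCO-style form).

    visibility: per-keypoint flags, > 0 means the keypoint is labeled.
    area: object area used as the squared scale.
    sigmas: per-keypoint constants controlling the Gaussian falloff.
    """
    pred = np.asarray(pred, dtype=float)
    gt = np.asarray(gt, dtype=float)
    labeled = np.asarray(visibility) > 0
    if not labeled.any():
        return 0.0
    d2 = np.sum((pred - gt) ** 2, axis=-1)              # squared distances
    k2 = (2.0 * np.asarray(sigmas, dtype=float)) ** 2   # (2 * sigma_i)^2
    e = d2 / (2.0 * area * k2 + np.finfo(float).eps)
    return float(np.mean(np.exp(-e[labeled])))


# Toy example with three keypoints (values are made up for illustration).
gt = [[0, 0], [10, 0], [0, 10]]
pred = [[0, 0], [10, 3], [0, 20]]
print(pck(pred, gt, ref_length=10, alpha=0.5))
print(oks(pred, gt, visibility=[2, 2, 2], area=100.0, sigmas=[0.1, 0.1, 0.1]))
```

In this sketch a perfect prediction gives an OKS of 1, and the score decays smoothly with distance, whereas PCK only counts whether each keypoint falls inside the threshold. AP@OKS would then be obtained by thresholding such OKS values over confidence-ranked predictions, which is omitted here.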

| Model | Year | Backbone | PCKh@0.5 | Input size | Characteristic |

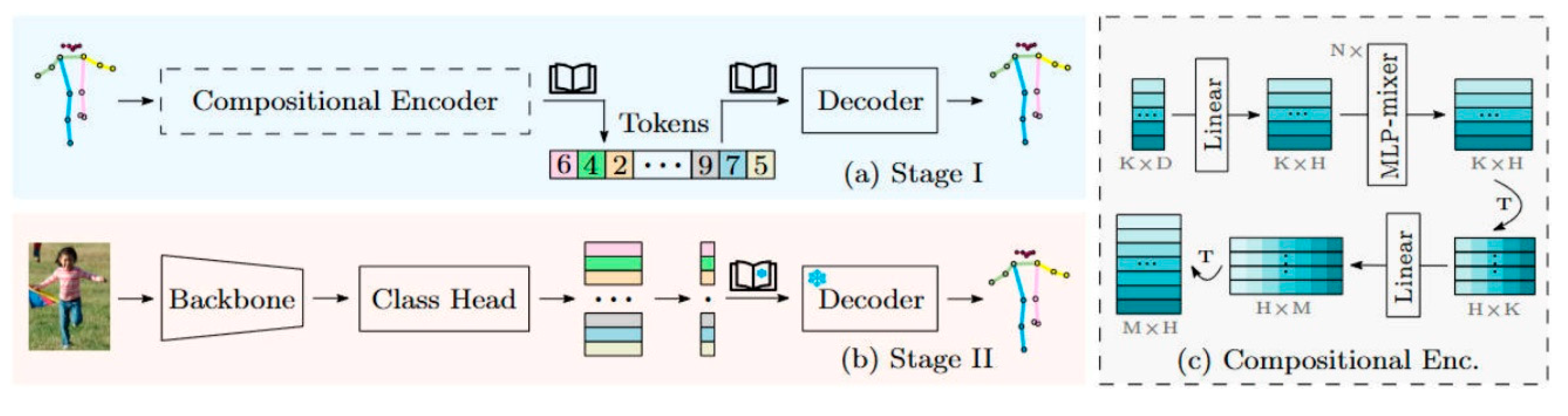

| PCT[51] | 2023 | swin-base | 93.8% | 256 × 256 | PCT enhances dependency modeling and occlusion-robust inference by decomposing human pose into structured, discrete symbolic subcomponents. |

| 4xRSN-50[52] | 2020 | ResNet-50 | 93.0% | 256×192 | 4×RSN-50 enhances keypoint localization accuracy and efficiency by stacking Residual Steps Blocks (RSBs) to integrate fine-grained local features. |

| UniPose[53] | 2020 | ResNet-101 | 92.7% | 1280×720 | By integrating the ResNet architecture with the WASP multi-scale module, the model achieves efficient and accurate single-stage pose estimation and bounding box detection. |

| MSPN[54] | 2019 | ResNet-50 | 92.6% | 384×288 | MSPN improves both accuracy and efficiency by optimizing single-stage modules, feature aggregation mechanisms, and supervision strategies. |

| Spatial Context[55] |

2019 | ResNet-50 | 92.5% | 256×256 | The Spatial Context model integrates multi-stage prediction with joint graph structures to accurately capture spatial relationships between human joints. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).