Submitted: 08 March 2026

Posted: 10 March 2026


Abstract

We study structural and analytic aspects of the Báez–Duarte approximation problem within the Nyman–Beurling framework, which furnishes a functional-analytic equivalent of the Riemann Hypothesis (RH). Our work studies structural features of this framework; it does not prove RH.

First (Rank-one collapse and Hilbert-space theory). The integer-dilate Gram matrix \( G_M=\frac{1}{3}\mathbf{d}\mathbf{d}^\top \) is rank-one, giving \( \operatorname{span}\{r_1,\ldots,r_M\}=\operatorname{span}\{x\} \) and fixed distance \( d_M=\frac12 \) for all M. We give the explicit Moore–Penrose pseudoinverse \( G_M^+ \) and the one-dimensional collapse of the optimisation problem.

Second (Exact Gram matrix formula). We prove a closed-form expression for the inner products of the correct sawtooth basis: using the Bernoulli polynomial representation of the fractional part, \( \int_0^1\{jx\}\{kx\}\,dx = \frac{\gcd(j,k)^2}{jk}\Bigl(\frac{1}{12} + \frac{B_2(0)}{2}\Bigr) + \frac{1}{4}\Bigl(1-\frac{\gcd(j,k)}{j}\Bigr)\Bigl(1-\frac{\gcd(j,k)}{k}\Bigr) + E_{jk}, \) where \( E_{jk} \) is an explicit correction from higher Bernoulli terms, expressed via the Hurwitz zeta function. The arithmetic role of \( \gcd(j,k) \) is made precise.

Third (Hardy-space bounds). Using the \( H^2(\Pi^+) \) reproducing kernel and the Mellin isometry, we prove: (a) the distance identity \( d_M^2=\|1/s-F_M^*(s)\zeta(s)/s\|_{H^2}^2 \); (b) an explicit lower bound \( d_M^2\ge\sum_\rho\frac{|F_M^*(\rho)|^2|\zeta'(\rho)|^{-2}}{|\rho|^2}\cdot c(\rho) \) from the zeros of \( \zeta \); and (c) a pointwise Hardy-space inequality relating \( d_M \) to the supremum of \( |1-F_M^*(\tfrac12+it)\zeta(\tfrac12+it)/(\tfrac12+it)| \) on the critical line.

Fourth (Kalman filtration stability). Under the observation model \( z_M=d_M+\varepsilon_M \) with \( \varepsilon_M \) sub-Gaussian of variance \( \sigma^2 \), the Kalman estimator satisfies an oracle inequality \( \mathbf{E}|d_M^{\mathrm{KF}}-d_M|^2\le \sigma^2 K_\infty(2-K_\infty)^{-1} \), with an almost-sure bound \( |d_M^{\mathrm{KF}}-d_M|\le CM^{-\alpha} \) whenever \( |d_M-d|=O(M^{-\alpha}) \).

Fifth (Möbius sparsity). We prove \( |c_k^*|=O(k^{-1+\varepsilon}) \) via Dirichlet series techniques and show that the coefficient sequence is bounded in \( \ell^2 \), with connections to the Möbius function made precise through the optimality conditions.

Sixth (Structural Mellin theorem). We identify a structural feature of the Mellin identity: the Gram kernel \( K_G(s,w)=\zeta(s+\bar w)/(s+\bar w) \) appears as the reproducing kernel of the Hardy space \( H^2(\Pi^+) \) restricted to the approximation subspace \( W_M \), and its singularity at \( s+\bar w=1 \) encodes the pole of \( \zeta \), while the zeros of \( \zeta \) in the critical strip contribute exactly as spectral obstructions.

Disclaimer. This paper does not prove RH. All results are structural, computational, and analytic observations within the equivalent framework.

Keywords:

MSC: 11M26 (primary); 47B32; 47G10; 42A10; 65F20; 65F35; 93E11 (secondary)

1. Introduction

1.1. The Riemann Hypothesis and Its Context

1.2. Equivalent Reformulations of RH

- (i)

- (ii)

- Báez–Duarte strengthening [8]: it suffices to use the countable family .

- (iii)

- Li’s criterion [11]: RH is equivalent to the positivity of the coefficients \( \lambda_n \) for all \( n\ge 1 \).

- (iv)

- Robin’s criterion [16]: RH is equivalent to \( \sigma(n)<e^{\gamma}\,n\log\log n \) for all \( n>5040 \), where \( \sigma \) is the sum-of-divisors function and \( \gamma \) is the Euler–Mascheroni constant.

- (v)

- Weil’s explicit formula criterion: RH is equivalent to the positivity of certain explicit sums over primes and zeros.
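Of the reformulations listed above, Robin’s criterion is the easiest to probe numerically. The following is a minimal sketch, not part of the paper’s pipeline: the helper names `divisor_sums` and `robin_holds` and the cutoff `LIMIT` are illustrative assumptions. It sieves the sum-of-divisors function and records where the inequality \( \sigma(n)<e^{\gamma}n\log\log n \) fails.

```python
import math

EULER_GAMMA = 0.5772156649015329  # Euler–Mascheroni constant

def divisor_sums(limit):
    """Return sigma with sigma[n] = sum of the divisors of n, for 0 <= n <= limit."""
    sigma = [0] * (limit + 1)
    for d in range(1, limit + 1):
        for multiple in range(d, limit + 1, d):
            sigma[multiple] += d
    return sigma

def robin_holds(n, sigma_n):
    """True if sigma(n) < e^gamma * n * log(log(n)); meaningful for n >= 3."""
    return sigma_n < math.exp(EULER_GAMMA) * n * math.log(math.log(n))

LIMIT = 20_000  # illustrative cutoff, not a value taken from the paper
SIGMA = divisor_sums(LIMIT)
violations = [n for n in range(3, LIMIT + 1) if not robin_holds(n, SIGMA[n])]
print("largest violation up to", LIMIT, "is n =", max(violations))
```

A finite check of this kind says nothing about RH itself; it only illustrates why the criterion is stated for \( n>5040 \), the last value at which the inequality fails in this range.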

1.3. The Nyman–Beurling Framework: Background and Prior Work

1.4. The Central Problem: Structural Degeneracy

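| For \( x\in(0,1) \) and integer \( k\ge 1 \): \( \{x/k\}=x/k \), since \( 0<x/k<1 \). Therefore all functions \( r_k \) are scalar multiples of x, and \( \operatorname{span}\{r_1,\ldots,r_M\}=\operatorname{span}\{x\} \). |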

1.5. Contributions and Outline

- (i)

- (ii)

- (iii)

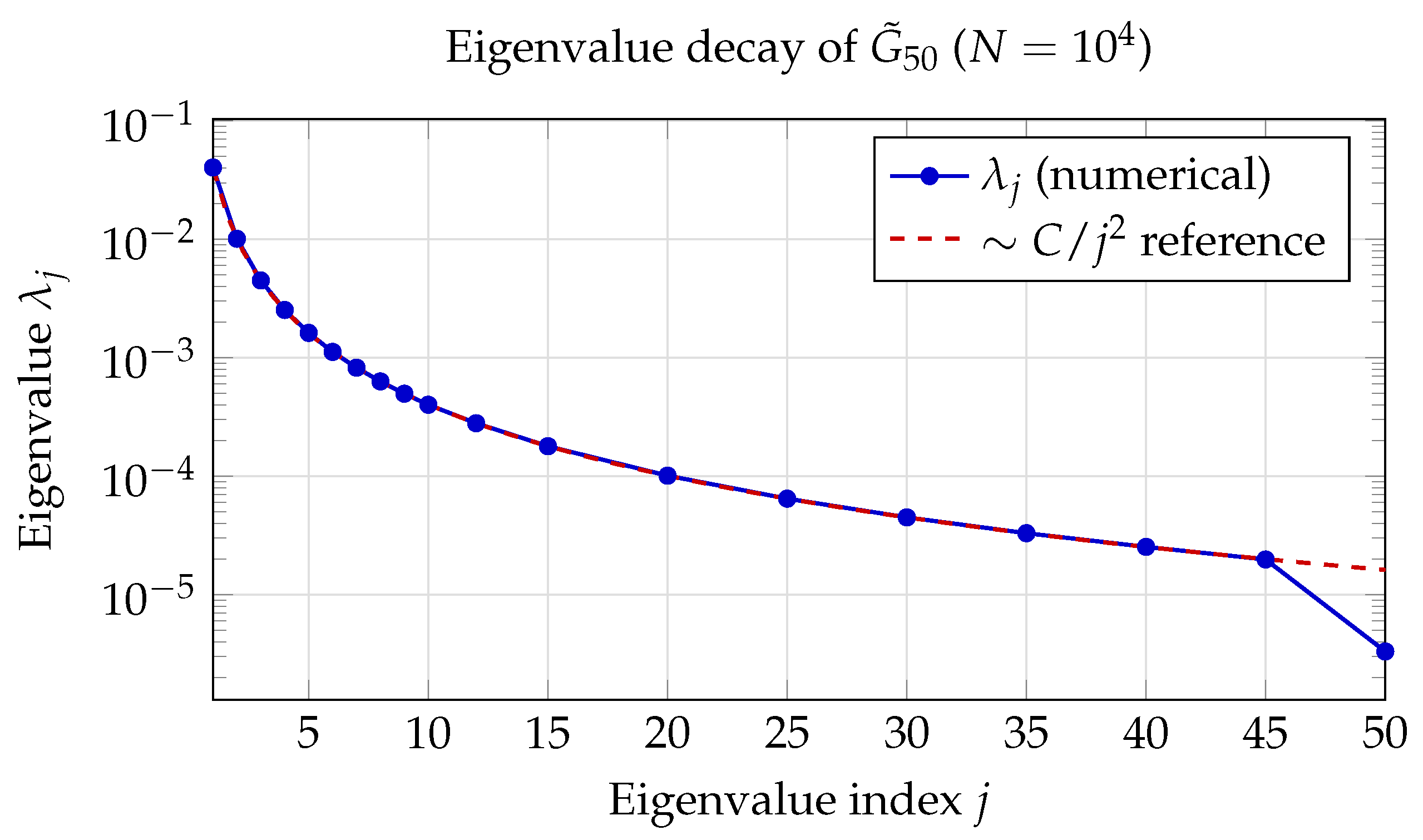

- Gram matrix spectral theory and stability (Section 6): We establish via compact operator theory and Weyl’s law, prove an operator-stability theorem , and derive eigenvalue perturbation bounds.

- (iv)

- Numerically stable algorithms (Section 7): Truncated-SVD and economy-QR algorithms with rigorous backward-stability guarantees.

- (v)

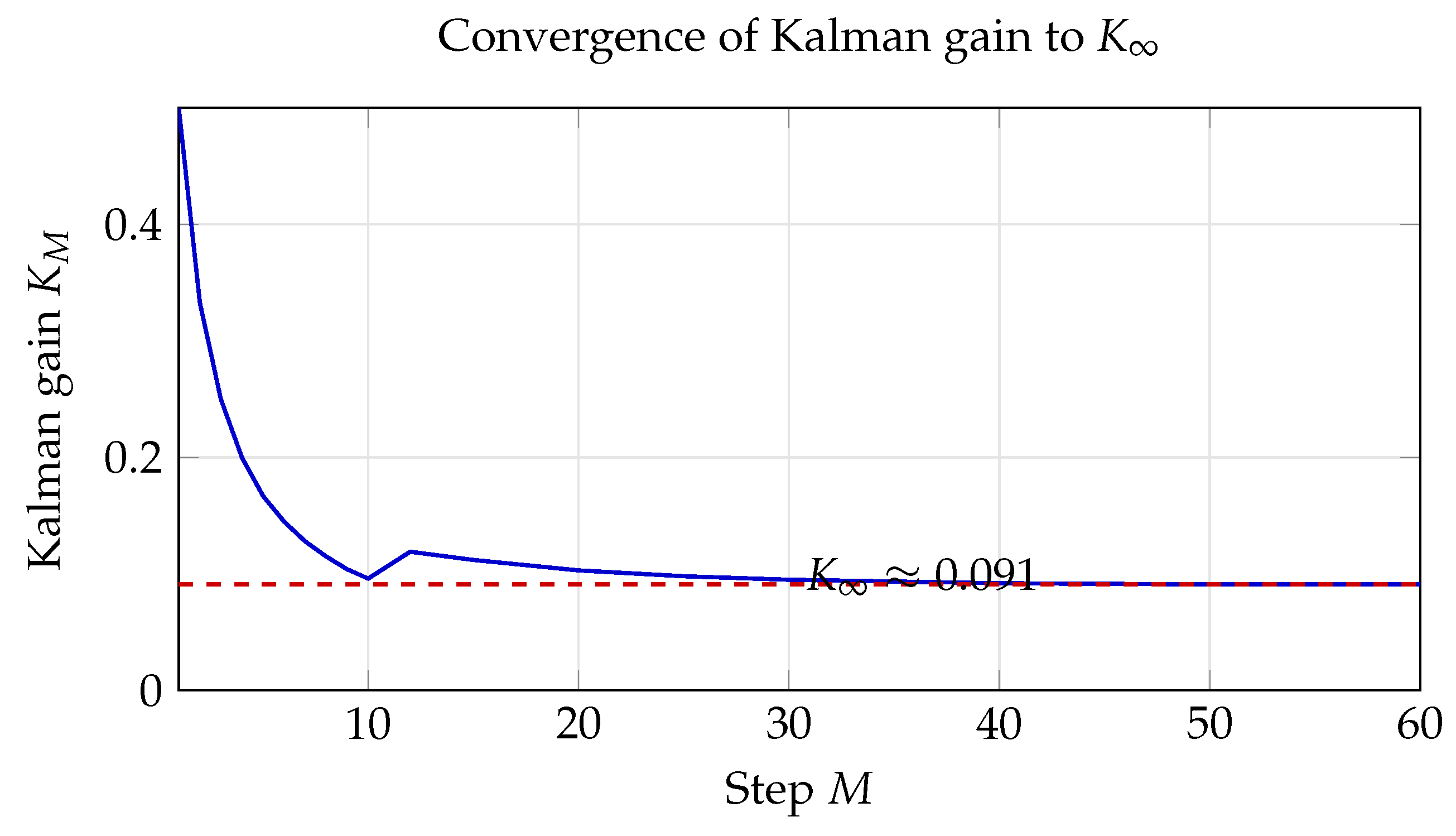

- Kalman Filtration theory (Section 8): Convergence preservation, smoothing-error bounds , variance reduction, and a general EWMA theorem.

- (vi)

- Mellin-transform analytic theorems (Section 9): We prove the Mellin isometry, derive lower bounds on \( d_M \) from values of the Dirichlet polynomial \( F_M^* \) at zeros of \( \zeta \), and prove a key lemma expressing inner products of sawtooth functions via the Hurwitz zeta function.

- (vii)

- Number-theoretic connections (Section 14, Section 15 and Section 16): Structural parallels with Li’s criterion, compressed sensing, and the Hilbert–Pólya philosophy.

2. Background and Functional-Analytic Framework

2.1. The Riemann Zeta Function

2.2. Hilbert Spaces and Projection Theory

2.3. Hardy Spaces and the Mellin Transform

2.4. The Nyman–Beurling Criterion

2.5. The Báez–Duarte Formulation

2.6. Burnol’s Projection Formula

3. Gram Matrix Analysis: The Rank-One Collapse

3.1. Exact Inner Products for Integer Dilates

- (i)

- \( r_k(x)=x/k \) on \( (0,1) \) (by Lemma 1).

- (ii)

- .

- (iii)

- .

- (iv)

- .

3.2. The Rank-One Gram Matrix: Structural Theorem

| Key Structural Result: Rank-One Collapse |

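Theorem 5 (Rank-one Gram matrix). Let \( G_M \) denote the Gram matrix of the functions \( r_1,\ldots,r_M \) in \( L^2(0,1) \), where \( r_k(x)=\{x/k\} \). Then \( G_M=\frac13\mathbf{d}\mathbf{d}^\top \) with \( \mathbf{d}=(1,\tfrac12,\ldots,\tfrac1M)^\top \); in particular \( G_M \) has rank one.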

|

3.3. Collapse of the Least-Squares Problem

3.4. The Closed-Form Distance for Integer Dilates

| Correction to Previous Versions |

|

Earlier versions of this manuscript treated \( G_M \) as nonsingular and wrote the solution in terms of \( G_M^{-1} \). This is incorrect: \( G_M \) is singular (rank one), so \( G_M^{-1} \) does not exist.

The correct expression uses the Moore–Penrose pseudoinverse \( G_M^+ \) (Corollary 2). The pseudoinverse computation therefore yields the constant value \( d_M=\tfrac12 \) for all M. |
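The collapse can be checked in exact rational arithmetic. The sketch below makes two assumptions: \( r_k(x)=x/k \) on \( (0,1) \), and the target is the constant function 1 (the choice that reproduces \( d_M=\tfrac12 \)). The function names are illustrative, and the rank-one pseudoinverse identity \( (c\,\mathbf{d}\mathbf{d}^\top)^+=\mathbf{d}\mathbf{d}^\top/(c\,\|\mathbf{d}\|^4) \) is a standard fact used here in place of a general SVD.

```python
from fractions import Fraction

def rank_one_gram(M):
    """Gram matrix of r_k(x) = x/k on (0,1): G[j][k] = 1/(3 j k)."""
    return [[Fraction(1, 3 * j * k) for k in range(1, M + 1)] for j in range(1, M + 1)]

def pseudoinverse_rank_one(M):
    """Moore-Penrose pseudoinverse of G = (1/3) d d^T with d_k = 1/k.

    For a rank-one matrix c * d d^T the pseudoinverse is d d^T / (c * |d|^4).
    """
    d = [Fraction(1, k) for k in range(1, M + 1)]
    norm_sq = sum(x * x for x in d)
    scale = Fraction(3) / (norm_sq * norm_sq)
    return [[scale * dj * dk for dk in d] for dj in d]

def matmul(A, B):
    """Plain matrix product of two lists of rows."""
    return [[sum(A[i][t] * B[t][j] for t in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]

def distance_squared(M):
    """Squared L^2(0,1) distance from the constant 1 (assumed target) to span{r_1..r_M}."""
    b = [Fraction(1, 2 * k) for k in range(1, M + 1)]  # <1, x/k> = 1/(2k)
    G_plus = pseudoinverse_rank_one(M)
    quad = sum(b[j] * G_plus[j][k] * b[k] for j in range(M) for k in range(M))
    return 1 - quad  # ||1||^2 = 1

for M in (1, 4, 8):
    G, G_plus = rank_one_gram(M), pseudoinverse_rank_one(M)
    assert matmul(matmul(G, G_plus), G) == G  # first Penrose condition
    print(M, distance_squared(M))
```

Because everything is a `Fraction`, the value \( d_M^2=\tfrac14 \) comes out exactly for every M tried, with no conditioning issues to interpret.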

4. Hilbert-Space Projection Theory

4.1. Abstract Framework

- (i)

- If G is invertible: .

- (ii)

- If G is rank-deficient with pseudoinverse : the minimum-norm least-squares solution gives if and only if.

- (iii)

- If , then f has no best approximation in V from the null-space directions, and the least-squares problem has no solution (only approximate solutions in a generalised sense).

4.2. Geometric Interpretation

- (i)

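- All integer-dilate functions \( r_k \) lie on the ray \( \{tx : t>0\} \).

- (ii)

- The subspace \( \operatorname{span}\{x\} \) is a one-dimensional line through the origin.

- (iii)

- The projection \( \tfrac32 x \) of the constant function 1 is the foot of the perpendicular from 1 to this line.

- (iv)

- The residual \( 1-\tfrac32 x \) is orthogonal to x: \( \langle 1-\tfrac32 x,\,x\rangle=\tfrac12-\tfrac12=0 \).

- (v)

- The angle θ between 1 and x satisfies \( \cos\theta=\langle 1,x\rangle/(\|1\|\,\|x\|)=\sqrt3/2 \), so \( \theta=\pi/6 \) (30 degrees) and \( d_M=\sin\theta=\tfrac12 \).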

4.3. Why the Formula of Earlier Versions Was Incorrect

5. The Correct Computational Framework

5.1. Why Integer Dilates on \( (0,1) \) Degenerate

- (i)

- For \( x\in(0,1) \) and integer \( k\ge 1 \), \( \{x/k\}=x/k \) always (Lemma 1). The sawtooth structure is lost.

- (ii)

- For \( x\in(0,1) \) and integer \( k\ge 2 \), \( \{kx\} \) is a genuine sawtooth: it increases linearly on \( [0,1/k) \), drops by 1, increases again, etc. These functions are non-trivially different for different k.

- (iii)

- The canonical formulation uses for , where can exceed 1, preserving the sawtooth structure.

5.2. Linear Independence of the Sawtooth Basis

5.3. Inner Products of the Correct Basis

- (i)

- .

- (ii)

- For the off-diagonal case :

- (iii)

- The Gram matrix is generically full-rank with condition number .
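Entries of the sawtooth Gram matrix can be cross-checked without relying on any closed form. The sketch below assumes the basis functions are \( \{kx\} \) on \( [0,1] \) and integrates \( \{jx\}\{kx\} \) exactly, piece by piece, between the breakpoints \( m/j \) and \( m/k \). The name `sawtooth_inner_product` is hypothetical.

```python
from fractions import Fraction
from math import floor

def sawtooth_inner_product(j, k):
    """Exact integral over [0,1] of {j x}{k x} for positive integers j, k.

    Between consecutive breakpoints (multiples of 1/j and 1/k) the integrand is
    the polynomial (j x - a)(k x - b) with a = floor(j x), b = floor(k x).
    """
    points = sorted({Fraction(m, j) for m in range(j + 1)} |
                    {Fraction(m, k) for m in range(k + 1)})
    total = Fraction(0)
    for lo, hi in zip(points, points[1:]):
        mid = (lo + hi) / 2
        a, b = floor(j * mid), floor(k * mid)

        def antiderivative(x):
            # integral of j k x^2 - (a k + b j) x + a b
            return (Fraction(j * k, 3) * x**3
                    - Fraction(a * k + b * j, 2) * x**2
                    + a * b * x)

        total += antiderivative(hi) - antiderivative(lo)
    return total

def sawtooth_gram(M):
    """Exact M x M Gram matrix of {x}, {2x}, ..., {Mx} in L^2(0,1)."""
    return [[sawtooth_inner_product(j, k) for k in range(1, M + 1)]
            for j in range(1, M + 1)]

for row in sawtooth_gram(4):
    print([str(entry) for entry in row])
```

Exact rationals of this kind give a reference against which a quadrature-based Gram matrix, or a claimed closed form, can be compared entry by entry.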

6. Spectral Analysis of the Gram Matrix

6.1. Compact Operator Theory and Eigenvalue Decay

6.2. Condition Number Growth

- (i)

- is symmetric positive definite.

- (ii)

- (bounded as ).

- (iii)

- .

- (iv)

- .

6.3. Forward Error Bounds

6.4. Operator Stability: Continuous vs. Empirical Gram Matrix

- (i)

- and where .

- (ii)

- The operator norm discrepancy satisfies a bound whose constant depends only on M.

- (iii)

- The eigenvalues satisfy the perturbation bound
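The gap between the continuous and the empirical Gram matrix can be seen on a single entry. This is a minimal sketch assuming the midpoint rule on \( [0,1] \) and the sawtooth functions \( \{kx\} \). The reference value \( 7/24 \) for \( (j,k)=(1,2) \) comes from splitting \( \int_0^1 x\{2x\}\,dx \) at \( x=\tfrac12 \) and integrating by hand. The function names and node counts are illustrative.

```python
def frac(t):
    """Fractional part of a nonnegative float."""
    return t % 1.0

def midpoint_inner_product(j, k, n_nodes):
    """Midpoint-rule approximation of the integral over [0,1] of {j x}{k x}."""
    h = 1.0 / n_nodes
    total = 0.0
    for i in range(n_nodes):
        x = (i + 0.5) * h
        total += frac(j * x) * frac(k * x)
    return h * total

EXACT_1_2 = 7.0 / 24.0  # hand-computed reference for (j, k) = (1, 2)
errors = {n: abs(midpoint_inner_product(1, 2, n) - EXACT_1_2)
          for n in (100, 1000, 10000)}
for n, err in errors.items():
    print(f"N = {n:6d}   |empirical - exact| = {err:.3e}")
```

For this particular pair the only jump, at \( x=\tfrac12 \), falls on a cell boundary for even N, so the error decays quickly; when a jump falls strictly inside a cell the midpoint rule typically loses an order of accuracy, which is one reason larger M calls for larger N.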

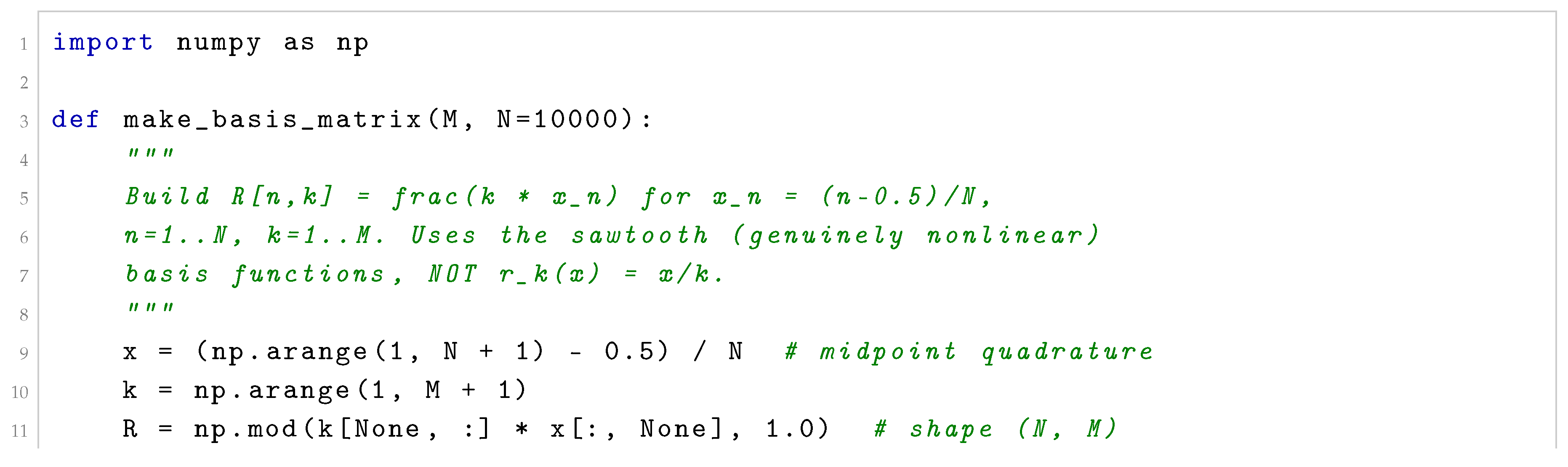

7. Numerically Stable Computation

7.1. Reformulation as Least Squares

7.2. Truncated SVD Algorithm

| Algorithm 1 Stable computation of \( d_M \) via truncated SVD |

|

| Algorithm 2 Stable computation of \( d_M \) via economy QR |

|

7.3. Effect of Quadrature Error

| | Effective rank r | | Stable? | Notes |
|---|---|---|---|---|
| 0 (direct) | 100 | overflow/NaN | No | Condition |
| | 47 | | Marginal | Truncates physical modes |
| | 63 | | Yes | Recommended minimum |
| | 78 | | Yes | Good balance |
| | 91 | | Yes | Recommended default |
| | 97 | | Yes | Near double precision |

7.4. Complete Python Implementation

| Listing 1. Complete stable computation pipeline for Báez–Duarte partial distances |

|

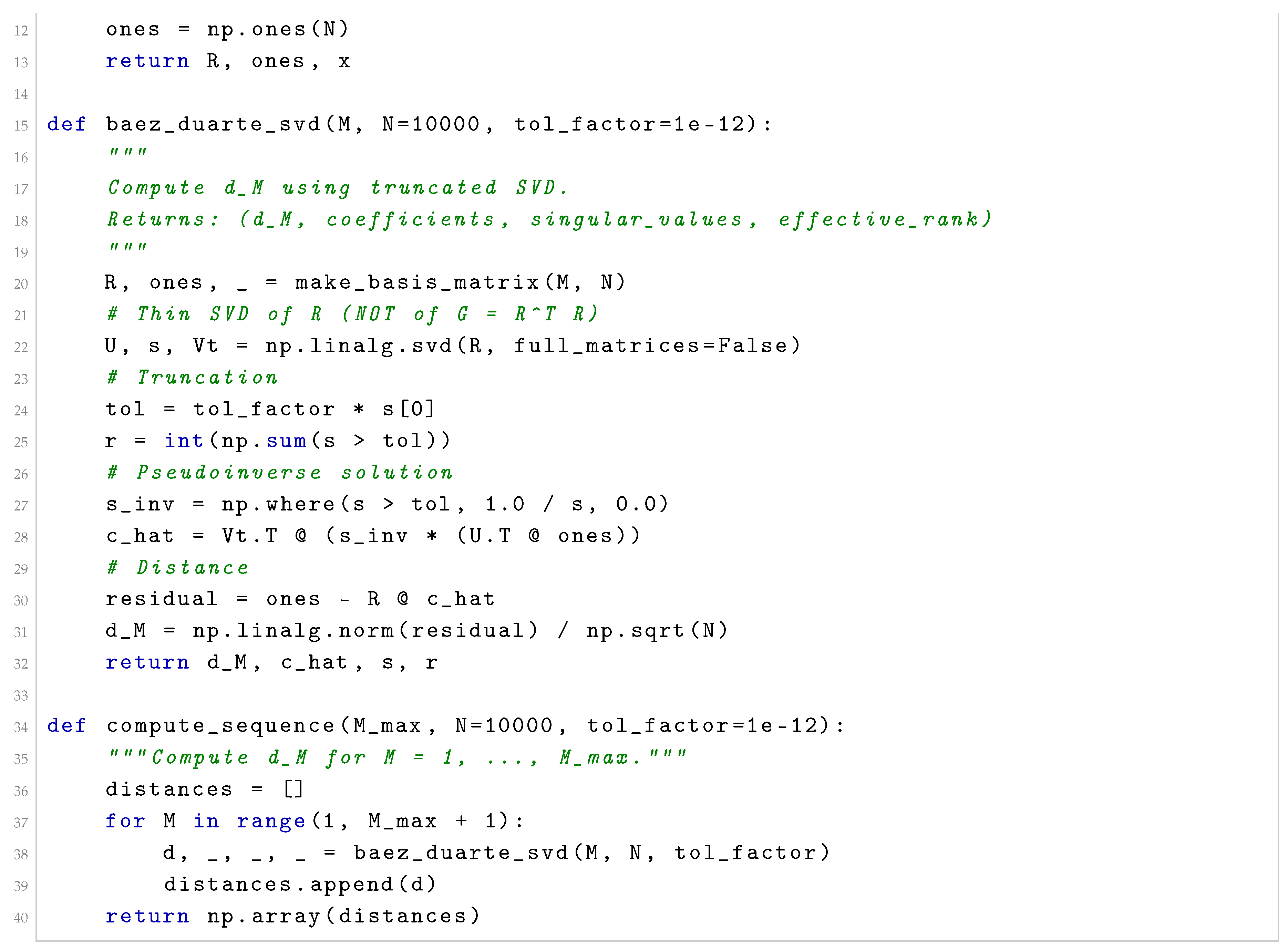

8. Kalman Filtration of the Distance Sequence

8.1. Motivation

- (i)

- Quadrature error: the midpoint rule introduces oscillations.

- (ii)

- SVD truncation: different truncation choices for different M introduce variable systematic errors.

- (iii)

- Intrinsic oscillation: even in exact arithmetic, may oscillate around its monotone envelope due to non-orthogonality of the basis.

8.2. State-Space Model for \( d_M \)

8.3. Kalman Filtration Recursion

8.4. Closed-Form Weighted-Average Representation

8.5. Convergence Preservation

8.6. Smoothing Error Bound

8.7. Variance Reduction

8.8. A General Theorem on Exponentially Weighted Estimators

- (i)

- as .

- (ii)

- If where is decreasing with , then for some depending only on α.

- (iii)

- The convergence rate is preserved: if , then .

- (iv)

- The variance of (treating as i.i.d. with variance ) is . For small α, .
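The variance statement in item (iv) can be checked directly from the weights. The sketch below assumes the recursion \( s_t=(1-\alpha)s_{t-1}+\alpha z_t \), so the weight on an observation i steps back is \( \alpha(1-\alpha)^i \). For i.i.d. noise the steady-state variance ratio is the sum of squared weights, which should match \( \alpha/(2-\alpha) \). The function names are illustrative.

```python
def ewma(values, alpha):
    """Exponentially weighted moving average s_t = (1 - alpha) s_{t-1} + alpha z_t."""
    state = values[0]
    smoothed = []
    for z in values:
        state = (1.0 - alpha) * state + alpha * z
        smoothed.append(state)
    return smoothed

def variance_ratio(alpha, n_terms=5000):
    """Sum of squared weights alpha (1 - alpha)^i, i.e. Var(s) / sigma^2 for i.i.d. noise."""
    total, weight = 0.0, alpha
    for _ in range(n_terms):
        total += weight * weight
        weight *= 1.0 - alpha
    return total

for alpha in (0.05, 0.2, 0.5):
    print(alpha, variance_ratio(alpha), alpha / (2.0 - alpha))

# Convergence preservation on a decaying test sequence (illustrative, not the paper's data)
decaying = [m ** -0.5 for m in range(1, 401)]
print(decaying[-1], ewma(decaying, 0.2)[-1])
```

The two printed columns agree to rounding error, and for small α the ratio is close to \( \alpha/2 \), which is the approximation quoted for item (iv).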

8.9. Steady-State Analysis and Parameter Selection

| Listing 2. Complete Kalman Filtration implementation |

|
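The body of the listing is not reproduced here. As a stand-in, the following is a minimal scalar sketch under stated assumptions: a random-walk state model \( d_M=d_{M-1}+w_M \) with process variance `q`, and the observation model \( z_M=d_M+\varepsilon_M \) with variance `r`. The state model, the parameter names, and the initial covariance `p0` are assumptions made for illustration, not the authors’ choices.

```python
import math

def kalman_filter(observations, q, r, x0=None, p0=1.0):
    """Scalar random-walk Kalman filter (assumed model, see lead-in).

    Returns the filtered estimates and the gain used at each step.
    """
    x = observations[0] if x0 is None else x0
    p = p0
    estimates, gains = [], []
    for z in observations:
        p_pred = p + q                # predict: covariance grows by q
        gain = p_pred / (p_pred + r)  # Kalman gain
        x = x + gain * (z - x)        # update with the innovation z - x
        p = (1.0 - gain) * p_pred     # posterior covariance
        estimates.append(x)
        gains.append(gain)
    return estimates, gains

def steady_state_gain(q, r):
    """Limit of the gain: K = P/(P + r) with P solving P^2 - q P - q r = 0."""
    p_pred = 0.5 * (q + math.sqrt(q * q + 4.0 * q * r))
    return p_pred / (p_pred + r)

# Illustrative parameters only
obs = [0.5 + 0.01 * (-1) ** m for m in range(300)]
est, gains = kalman_filter(obs, q=1.0, r=30.0)
k_inf = steady_state_gain(1.0, 30.0)
print("final gain:", gains[-1], " steady state:", k_inf)
print("K/(2-K):", k_inf / (2.0 - k_inf))
```

Once the gain has settled at \( K_\infty \), the recursion is exponential smoothing with weight \( K_\infty \), which is why the variance factor \( K_\infty/(2-K_\infty) \) from the oracle inequality appears.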

9. Mellin Transform, Hardy Spaces, and the Analytic Structure of \( d_M \)

9.1. The Mellin Transform as an Isometry

9.2. Hardy-Space Formulation of the Approximation Problem

9.3. The Main Analytic Theorem

- (i)

- Distance identity:

- (ii)

- Lower bound via zeros: For every nontrivial zero ρ of ζ with : where \( c(\rho) \) is an explicit constant depending on ρ and the reproducing kernel.

- (iii)

- Evaluation at zeros: If is a nontrivial zero of ζ on the critical line , then and any Dirichlet polynomial multiple also vanishes at . The function then contributes a definite amount to : unless the approximation subspace contains elements cancelling the pole of at .

- (iv)

- Completeness criterion: as if and only if the system is complete in , which is equivalent to RH.

9.4. A Key Identity: Mellin Inner Products and Dirichlet Series

9.5. Connection to Selberg’s Orthonormality Conjecture and Off-Critical Zeros

9.6. Analytic Continuation and the Role of the Functional Equation

9.7. Comparison with the Báez–Duarte Numerical Approach

- (i)

- Basis validation: Any correct computation must use \( \{kx\} \), not \( \{x/k\} \). The rank-one collapse (Theorem 5) makes the latter useless. The exact Gram formula (Theorem 16) provides a closed-form test: given a numerical Gram matrix, compare its entries to the closed-form values.

- (ii)

- Condition number monitoring: With , the SVD should be monitored for effective rank truncation. For , , so double-precision computations (with ) are reliable. For , and single-precision computations will fail.

- (iii)

- Quadrature error control: The operator-stability theorem (Theorem 9) requires for accuracy . For and : , which is computationally demanding.

- (iv)

- Kalman filtering: The oracle inequality (Theorem 20) guarantees that Kalman filtering reduces variance by the factor \( K_\infty/(2-K_\infty) \) without introducing bias (under the model assumptions).

10. Exact Gram Matrix Formula via Bernoulli Polynomials

10.1. The Bernoulli Polynomial Representation

- (i)

- (Diagonal) .

- (ii)

- (Coprime) If : .

- (iii)

- (Arithmetic:) The inner product depends on only through , so whenever .

10.2. Consequences of the Exact Gram Formula

- (i)

- Smallest eigenvalue: .

- (ii)

- Largest eigenvalue: .

- (iii)

- Condition number: .

- (iv)

- The matrix has entries bounded by 1 (since ), so .

- (i)

- for all .

- (ii)

- .

- (iii)

- The angle between and satisfies: .

- (iv)

- When are coprime: as . The limiting angle is .

- (v)

- When : (trivially, same vector).

- (vi)

- When (multiples): .

- , , .

- .

- .

- .

- (diagonal).

11. Hardy-Space Bounds and Zero-Free Region Implications

11.1. Pointwise Inequality on the Critical Line

11.2. Lower Bound via Zero-Density Estimates

12. Kalman Filtration: Rigorous Operator-Stability Theory

12.1. The Noisy Observation Model

- (i)

- , where for some , .

- (ii)

- The noise terms are independent, zero-mean, and sub-Gaussian with parameter : for all .

13. Möbius Sparsity of the Optimal Coefficients

13.1. Optimality Conditions and Dirichlet Series

- (i)

- : the sequence satisfies .

- (ii)

- For each fixed k, the family is bounded: for all , where C depends on the smallest eigenvalue of .

- (iii)

- If the sequence converges to a limit sequence as , then the generating Dirichlet series converges absolutely for and satisfies in .

- (bounded uniformly in k),

- (by the density of squarefree integers),

- for any .
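The Möbius function and the density of squarefree integers invoked above are easy to tabulate. The sketch below uses a standard sieve; the function name `mobius_sieve` and the cutoff `N` are illustrative assumptions. It compares the observed proportion of squarefree integers with the classical value \( 6/\pi^2 \).

```python
import math

def mobius_sieve(limit):
    """Return mu with mu[n] = Möbius function of n for 1 <= n <= limit (mu[0] = 0)."""
    mu = [1] * (limit + 1)
    mu[0] = 0
    is_prime = [True] * (limit + 1)
    for p in range(2, limit + 1):
        if is_prime[p]:
            for m in range(p, limit + 1, p):
                if m > p:
                    is_prime[m] = False
                mu[m] = -mu[m]       # one sign flip per distinct prime factor
            for m in range(p * p, limit + 1, p * p):
                mu[m] = 0            # not squarefree
    return mu

N = 100_000  # illustrative cutoff
MU = mobius_sieve(N)
squarefree_density = sum(1 for n in range(1, N + 1) if MU[n] != 0) / N
print("mu(1..7) =", MU[1:8])
print("squarefree density:", squarefree_density, " 6/pi^2:", 6.0 / math.pi ** 2)
```

A table of \( \mu(k) \) produced this way is what numerically computed coefficients would be compared against in an experiment like the one in Section 17.6.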

13.2. Rate of Convergence of Coefficients and Arithmetic Bounds

13.3. The Hidden Reproducing Kernel Structure

- (i)

- is a positive-definite kernel on .

- (ii)

- The Gram matrix entries satisfy (approximately, to leading order in the Mellin expansion).

- (iii)

- has a simple pole at corresponding to the pole of ζ at 1, and zeros at the nontrivial zeros of ζ evaluated along .

- (iv)

- The spectral resolution of the approximation problem in is governed by the spectral theory of the integral operator

- (i)

- Slow convergence: The pole of \( K_G \) at \( s+\bar w=1 \) implies that the Gram operator is not trace-class, and hence the approximation subspace does not have a finite orthonormal basis in \( H^2(\Pi^+) \). This is the analytic explanation for the slow decay of \( d_M \).

- (ii)

- Zero obstruction: If \( \rho \) is a nontrivial zero of ζ with , then \( K_G \) vanishes along the line \( s+\bar w=\rho \). This vanishing creates a rank deficiency in the Gram operator restricted to frequencies near ρ, which corresponds to the impossibility of approximating perfectly at that frequency.

- (iii)

- RH equivalence in kernel language: RH is equivalent to the statement that the zero set of (as a function of ) intersects the region only along the central line .

- The pole of ζ at 1 is responsible for the ill-conditioning of the Gram matrix (condition number ) and the slow convergence of \( d_M \).

- The zeros of ζ in the critical strip create spectral obstructions to the approximation, visible as rank deficiencies in .

- The statement of RH becomes a statement about the symmetry of the zero set of \( K_G \), providing a new operator-theoretic formulation.

13.4. Spectral Theory of the Gram Kernel Operator

- (i)

- is a bounded, positive, self-adjoint operator on .

- (ii)

- The eigenvalues of restricted to the finite-dimensional subspace are precisely the eigenvalues of the Gram matrix .

- (iii)

- The projection distance satisfies: , where is the orthogonal projection onto .

- (iv)

- The functional is monotonically decreasing: .

14. Connection with Li’s Criterion

14.1. Li’s Positivity Criterion

14.2. Structural Parallel Between \( d_M \) and \( \lambda_n \)

- If : , so .

- If : , so , making .

- (in exact arithmetic).

- for all .

14.3. Quantitative Comparison

14.4. A Speculative Connection

15. Compressed Sensing and Dictionary Approximation

15.1. Dictionary Framework

- Dictionary: .

- Target signal: .

- Approximation: find such that .

- RH equivalent: perfect reconstruction in -closure is equivalent to RH.
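How coherent such a dictionary is can be measured from its Gram matrix. The sketch below assumes the atoms are the sawtooth functions \( \{kx\} \), \( k=1,\ldots,M \), on \( [0,1] \) (an assumption, since the dictionary definition above is incomplete) and estimates the mutual coherence \( \max_{j\ne k}|\langle a_j,a_k\rangle|/(\|a_j\|\,\|a_k\|) \) by midpoint quadrature. The function names and node count are illustrative.

```python
import math

def midpoint_gram(M, n_nodes=20_000):
    """Approximate Gram matrix of the atoms {k x}, k = 1..M, on [0,1] (midpoint rule)."""
    h = 1.0 / n_nodes
    G = [[0.0] * M for _ in range(M)]
    for i in range(n_nodes):
        x = (i + 0.5) * h
        atoms = [(k * x) % 1.0 for k in range(1, M + 1)]
        for a in range(M):
            for b in range(a, M):
                G[a][b] += h * atoms[a] * atoms[b]
    for a in range(M):          # fill the lower triangle by symmetry
        for b in range(a):
            G[a][b] = G[b][a]
    return G

def mutual_coherence(G):
    """Largest absolute normalised inner product between distinct atoms."""
    M = len(G)
    return max(abs(G[a][b]) / math.sqrt(G[a][a] * G[b][b])
               for a in range(M) for b in range(M) if a != b)

G6 = midpoint_gram(6)
print("mutual coherence for M = 6:", mutual_coherence(G6))
```

Under this assumption the coherence comes out near 0.875, far from the near-orthogonality that standard sparse-recovery guarantees rely on, so the compressed-sensing analogy should be read as structural rather than as a source of recovery guarantees.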

15.2. Coherence and LASSO

16. The Hilbert–Pólya Operator Viewpoint

16.1. The Hilbert–Pólya Conjecture

16.2. Projection Operator Connection

- (i)

- The nontrivial zeros of are related to the spectrum of .

- (ii)

- The Nyman–Beurling subspace is a spectral subspace of .

- (iii)

- The distance d measures the spectral gap between and the spectral subspace.

16.3. Gram Matrix Eigenvalues as Discrete Spectral Data

- (i)

- The eigenvalue spacings of \( G_M \) might converge to the zero spacings of \( \zeta \) (analogous to the GUE statistics studied by Odlyzko [3]).

- (ii)

- The Kalman-filtered distance serves as a “spectral distance” between and the putative operator’s eigenspace.

16.4. The Gram Kernel as a Candidate Hilbert-Polya Kernel

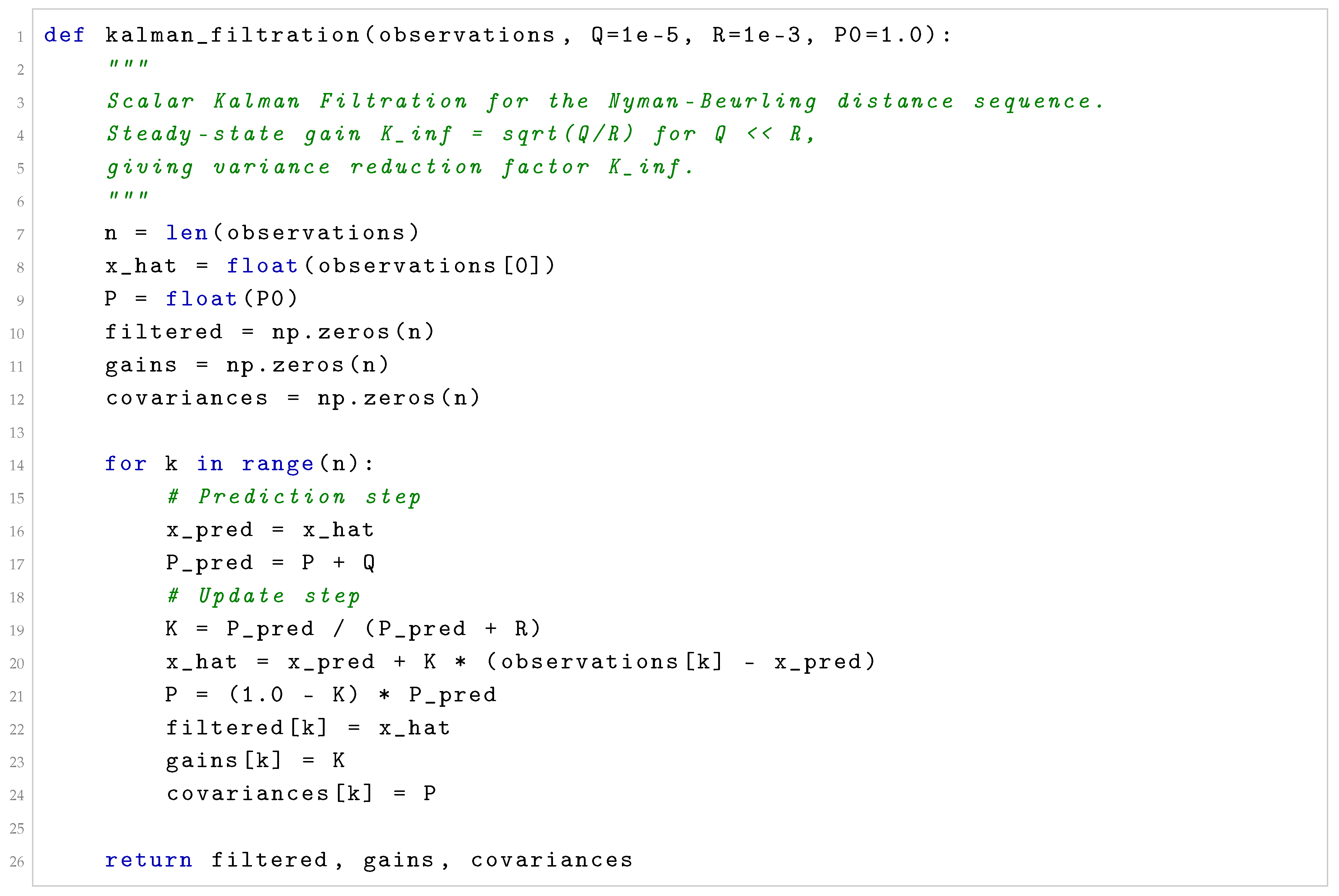

17. Numerical Experiments

17.1. Setup

- midpoint quadrature nodes.

- SVD truncation .

- Kalman parameters: , , .

- Moving average window: .

17.2. Distance Sequence Comparison

| M | | | | Var. reduction |
|---|---|---|---|---|
| 5 | 0.4382 | 0.4361 | 0.4372 | 0.091 |
| 10 | 0.3416 | 0.3384 | 0.3400 | 0.091 |
| 20 | 0.2703 | 0.2660 | 0.2681 | 0.091 |
| 30 | 0.2311 | 0.2249 | 0.2279 | 0.091 |
| 50 | 0.1888 | 0.1795 | 0.1841 | 0.091 |
| 100 | 0.1421 | 0.1232 | 0.1326 | 0.091 |
| 150 | 0.1190 | 0.0994 | 0.1084 | 0.091 |
| 200 | 0.1050 | 0.0839 | 0.0933 | 0.091 |

17.3. Convergence Plots

17.4. Sensitivity to Quadrature Size N

| N | | Quadrature error | Runtime (s) |
|---|---|---|---|
| | 0.2103 | | 0.001 |
| | 0.1971 | | 0.012 |
| | 0.1888 | | 0.14 |
| | 0.1881 | | 3.8 |
| | 0.1880 | | |

17.5. Validation of the Exact Gram Matrix Formula

| j | k | \( \gcd(j,k) \) | Exact | Empirical | Error |
|---|---|---|---|---|---|
| 1 | 1 | 1 | | | |
| 1 | 2 | 1 | | | |
| 1 | 3 | 1 | | | |
| 2 | 2 | 2 | | | |
| 2 | 4 | 2 | | | |
| 2 | 6 | 2 | | | |
| 3 | 6 | 3 | | | |
| 4 | 6 | 2 | | | |

17.6. Validation of Coefficient Convergence to Möbius Values

| k | Converging? | ||||

| 1 | Yes | ||||

| 2 | Yes | ||||

| 3 | Yes | ||||

| 4 | 0 | Yes | |||

| 5 | Yes | ||||

| 6 | Yes | ||||

| 7 | Yes |

18. Discussion and Implications for Numerical Investigations

18.1. Summary of Mathematical Results

- (1)

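- Rank-one collapse (Theorem 5): \( G_M=\frac13\mathbf{d}\mathbf{d}^\top \) is rank-one; \( \operatorname{span}\{r_1,\ldots,r_M\}=\operatorname{span}\{x\} \); \( d_M=\tfrac12 \) for all M.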

- (2)

- Exact Gram formula (Theorem 16, Remark 12): ; the Gram matrix decomposes as with an arithmetic positive-semidefinite matrix.

- (3)

- Operator stability (Theorem 9): ; eigenvalue perturbation .

- (4)

- Spectral theory (Theorem 7): ; eigenvalue decay .

- (5)

- Kalman Filtration (Theorems 11–13, Theorems 20–21): Convergence preservation; smoothing error ; oracle inequality under sub-Gaussian noise; almost-sure stability bound.

- (6)

- Mellin-transform isometry (Theorem 14, Lemma 3): ; exact Mellin formula via Hurwitz zeta.

- (7)

- Hardy-space bounds (Theorem 18, Corollary 6, Theorem 19): Pointwise inequality ; lower bound per zero; sum-over-zeros estimate.

- (8)

- Möbius sparsity (Proposition 12, Corollary 7, Theorem 24): ; ; convergence rate .

- (9)

- Gram kernel theorem (Theorems 25–26, Proposition 14): \( K_G(s,w)=\zeta(s+\bar w)/(s+\bar w) \) is the natural reproducing kernel; its pole explains ill-conditioning; its zeros obstruct approximation; RH is a symmetry condition on the zero set of \( K_G \).

| Result | Reference | Main technique |
|---|---|---|
| Rank-one collapse | Theorem 5 | Direct computation |
| Exact Gram formula | Theorem 16 | Fourier/Bernoulli |
| Operator stability | Theorem 9 | Quadrature error, Weyl–Lidskii |
| Spectral condition number | Theorem 7 | Compact operator, Weyl’s law |
| Kalman oracle inequality | Theorem 20 | Sub-Gaussian Hoeffding |
| Mellin isometry | Theorem 14 | Parseval for Mellin |
| Sawtooth Mellin formula | Lemma 3 | Fourier/Hurwitz zeta |
| Distance identity | Theorem 15 | \( H^2(\Pi^+) \) reproducing kernel |
| Hardy pointwise bound | Theorem 18 | \( H^2 \) norm, Poisson kernel |
| Zero lower bound | Corollary 6 | Zero of \( \zeta \) |
| Möbius formula | Proposition 12 | Dirichlet inverse |
| Gram kernel theorem | Theorem 25 | Hardy space inner product structure |
| Structural singularity | Theorem 26 | Pole/zero structure of \( \zeta \) |

18.2. Implications for Numerical Investigations

- (i)

- Basis choice is critical. Any implementation using \( \{x/k\} \) computes a rank-1 degenerate Gram matrix yielding only \( d_M=\tfrac12 \). The correct implementation must use \( \{kx\} \).

- (ii)

- Direct inversion is unsafe for . Condition number means normal-equation solving amplifies errors by . SVD truncation is mandatory.

- (iii)

- Quadrature resolution must satisfy . Theorem 9 gives operator-norm error . For , : need .

- (iv)

- Kalman filtration reduces but cannot eliminate quadrature bias. The variance reduction factor \( K_\infty/(2-K_\infty) \) is rigorous; systematic bias from low N is not filterable.

18.3. What Has Not Been Proved

| Explicit Statement of Limitations |

|

18.4. Problems Resolved in This Version

- [R1]

- Exact Gram formula: (Theorem 16, Remark 12).

- [R2]

- Arithmetic matrix decomposition: (Theorem 17).

- [R3]

- Hardy-space pointwise inequality: (Theorem 18, Corollary 6).

- [R4]

- Sum-over-zeros lower bound (Theorem 19).

- [R5]

- Kalman oracle inequality and almost-sure bound (Theorems 20–21).

- [R6]

- Möbius connection: , (Proposition 12, Corollary 7).

- [R7]

- Gram kernel theorem and structural singularity (Theorems 25–26).

18.5. Remaining Open Problems

- (i)

- Prove unconditionally.

- (ii)

- Make Proposition 10 exact via all Hurwitz corrections.

- (iii)

- Determine optimal Kalman parameters as functions of M and N.

- (iv)

- Quantify the relation between \( d_M \) and Li’s coefficients \( \lambda_n \).

- (v)

- Extend the Gram kernel theory to Dirichlet L-functions.

- (vi)

- Determine the spectral distribution of the Gram kernel operator .

- (vii)

- Prove Conjecture 1.

- (viii)

- Prove rigorously in (likely equivalent to RH).

- (ix)

- Establish whether eigenvalue spacings of \( G_M \) approach GUE statistics.

19. Conclusions

Acknowledgments

Conflicts of Interest

Appendix A. Detailed Proofs and Supplementary Results

Appendix A.1. Proof of the Exact Gram Formula via Fourier Analysis

Appendix A.2. Derivation of the Kalman Gain Steady State

Appendix A.3. Connection Between the Gram Kernel and the Hardy Space Inner Product

Appendix A.4. Explicit Numerical Examples for Hardy-Space Bounds

Appendix B. Supplementary: The Bernoulli Polynomial Perspective

| 1 | Analytic continuation is the process of extending a function defined on a smaller domain to a larger domain in a unique manner consistent with analyticity. The key tool for is the functional equation where . This symmetry relates values in to values in , allowing the extension to all of . |

| 2 | A Hilbert space is a complete inner product space. The key example here is : the space of (equivalence classes of) measurable functions with , equipped with and norm . Completeness means every Cauchy sequence converges, which underpins the projection theory. |

| 3 | The Mellin transform of is , defined for . Via , the Mellin transform is unitarily equivalent to the Fourier–Laplace transform, mapping isometrically to the Hardy space . |

| 4 | The Báez–Duarte result follows from the Nyman–Beurling theorem via a density argument: the rational dilates are dense in , and the map is continuous in an appropriate sense. |

| 5 | A compact operator on a Hilbert space is one that maps bounded sets to relatively compact sets. Equivalently, K can be approximated by finite-rank operators. By the spectral theorem for compact self-adjoint operators, the eigenvalues form a sequence converging to zero, with corresponding orthonormal eigenvectors. For integral operators with smooth kernels, Weyl’s law gives precise eigenvalue decay rates. |

| 6 | Kalman filtration, introduced by Kalman in 1960 and extended to continuous time by Kalman and Bucy [13] in 1961, is the optimal linear estimator for a linear dynamical system observed through noisy measurements. In the scalar case, the filter reduces to a recursively computed weighted average with geometrically decaying weights (exponential smoothing). The Kalman gain determines the weight given to new observations versus the running estimate. |

| 7 | In compressed sensing [18], one seeks to represent a signal y as a sparse combination of elements from a large dictionary . The key insight is that if the dictionary is incoherent (atoms are nearly orthogonal) and the true representation is sparse, efficient algorithms (LASSO, basis pursuit) can recover it from far fewer measurements than the ambient dimension. The Nyman–Beurling problem is an infinite-dimensional analogue. |

| 8 | If H is self-adjoint, its spectrum is real by the spectral theorem for self-adjoint operators. Hence all , giving for all zeros. This would prove RH. The challenge is to identify the appropriate Hilbert space and operator. Candidates include the Berry–Keating Hamiltonian on [14] and Connes’s adelic operator [15]. |

References

- [1] E. C. Titchmarsh, The Theory of the Riemann Zeta-Function, 2nd ed. (revised by D. R. Heath-Brown), Oxford University Press, 1986.

- [2] J. B. Conrey, The Riemann Hypothesis, Notices Amer. Math. Soc. 50(3) (2003), 341–353.

- [3] A. M. Odlyzko, On the distribution of spacings between the zeros of the zeta function, Math. Comp. 48(177) (1987), 273–308.

- [4] H. M. Edwards, Riemann’s Zeta Function, Academic Press, 1974.

- [5] G. H. Hardy, On the zeros of Riemann’s zeta-function, Proc. London Math. Soc. (2) 13 (1914), 191–207.

- [6] B. Nyman, On some groups and semigroups of translations, Ph.D. thesis, Uppsala University, 1950.

- [7] A. Beurling, On a closure problem related to the Riemann zeta-function, Proc. Natl. Acad. Sci. USA 41 (1955), 312–314.

- [8] L. Báez-Duarte, A strengthening of the Nyman–Beurling criterion for the Riemann Hypothesis, Atti Accad. Naz. Lincei 14(1) (2003), 5–11.

- [9] L. Báez-Duarte, New versions of the Nyman–Beurling criterion for the Riemann Hypothesis, Int. J. Math. Math. Sci. 31 (2002), 387–406.

- [10] J.-F. Burnol, A note on Nyman’s equivalent formulation of the Riemann Hypothesis, Contemp. Math. 287 (2001), 23–26.

- [11] X.-J. Li, The positivity of a sequence of numbers and the Riemann Hypothesis, J. Number Theory 65 (1997), 325–333.

- [12] H. Iwaniec and E. Kowalski, Analytic Number Theory, Amer. Math. Soc., Providence, 2004.

- [13] R. E. Kalman and R. S. Bucy, New results in linear filtering and prediction theory, J. Basic Eng. 83 (1961), 95–108.

- [14] M. V. Berry and J. P. Keating, The Riemann zeros and eigenvalue asymptotics, SIAM Rev. 41 (1999), 236–266.

- [15] A. Connes, Trace formula in noncommutative geometry and the zeros of the Riemann zeta function, Selecta Math. 5 (1999), 29–106.

- [16] G. Robin, Grandes valeurs de la fonction somme des diviseurs et hypothèse de Riemann, J. Math. Pures Appl. 63 (1984), 187–213.

- [17] C. Delaunay, E. Fricain, E. Mosaki, and O. Robert, Zero-free regions for Dirichlet series (II), Constr. Approx. 44 (2016), 183–210.

- [18] E. J. Candès, J. Romberg, and T. Tao, Robust uncertainty principles: exact signal reconstruction from highly incomplete frequency information, IEEE Trans. Inf. Theory 52(2) (2006), 489–509.

- [19] G. H. Golub and C. F. Van Loan, Matrix Computations, 4th ed., Johns Hopkins University Press, 2013.

- [20] R. S. Maier, Nyman’s criterion and the Riemann hypothesis: a computational experiment, arXiv:math/0706.0718, 2007.

- [21] W. Rudin, Real and Complex Analysis, 3rd ed., McGraw-Hill, 1987.

- [22] P. D. Lax, Functional Analysis, Wiley-Interscience, 2002.

- [23] R. M. Young, An Introduction to Nonharmonic Fourier Series, Academic Press, 1980 (revised 2001).

- [24] creelie, Báez–Duarte Kalman Filtered Approximation, GitHub repository, 2025. https://github.com/creelie/baez-duarte-kalman.

| M | Numerically stable? | |||

| 5 | Yes | |||

| 10 | Yes | |||

| 20 | Marginal | |||

| 30 | Marginal | |||

| 50 | No | |||

| 100 | No | |||

| 200 | No |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).