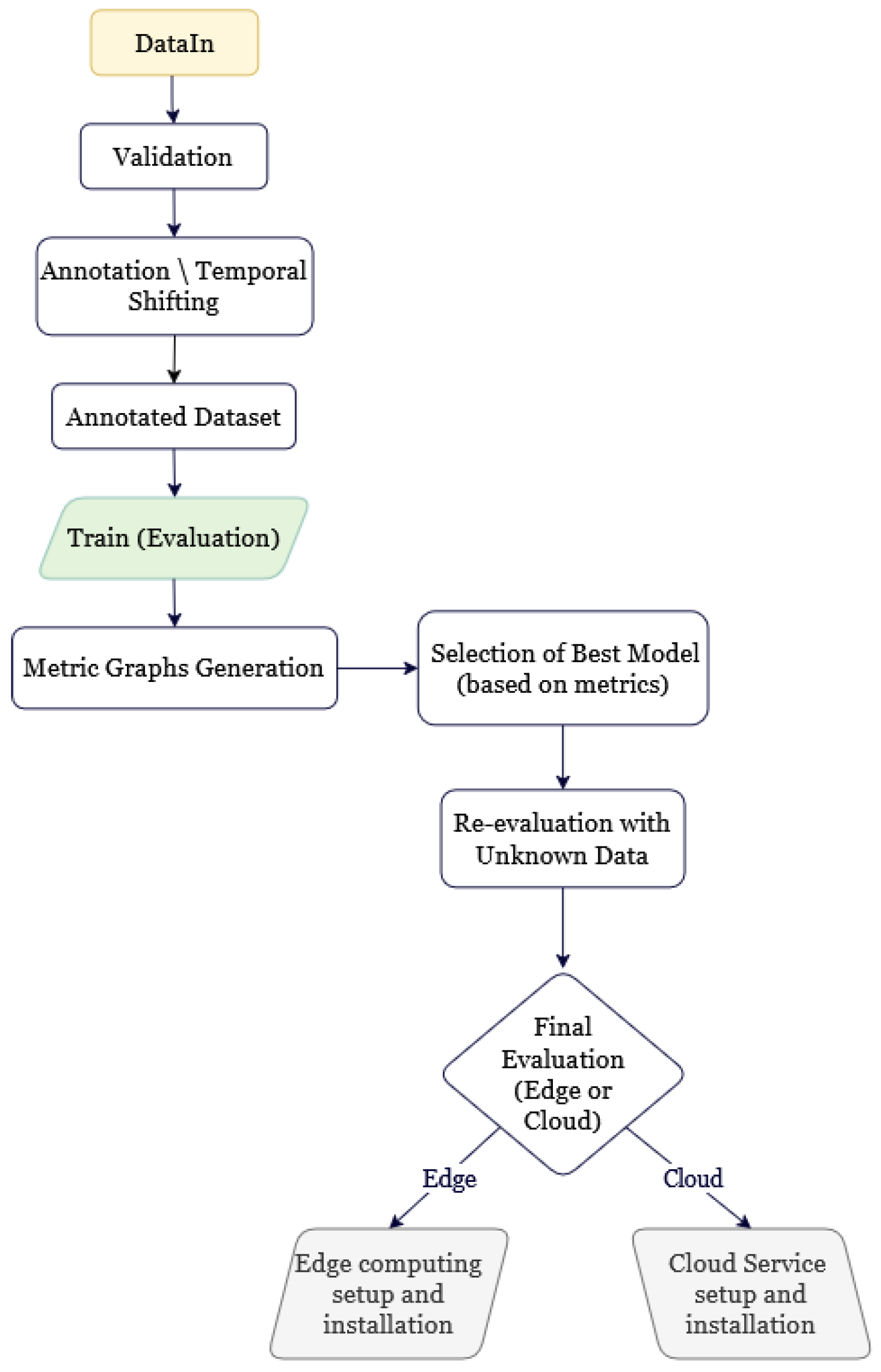

3. Experimental Scenarios

The authors conducted controlled experiments using NN and recurrent NN models to evaluate and compare their proposed framework. Each model was trained separately with adjusted hyperparameters and architectural choices in an attempt to investigate performance improvements under different data and model configurations. Two distinct deep learning architectures were implemented and analyzed:

A feedforward neural network, referred to as slideNN.

A gated recurrent unit-based architecture (GRU).

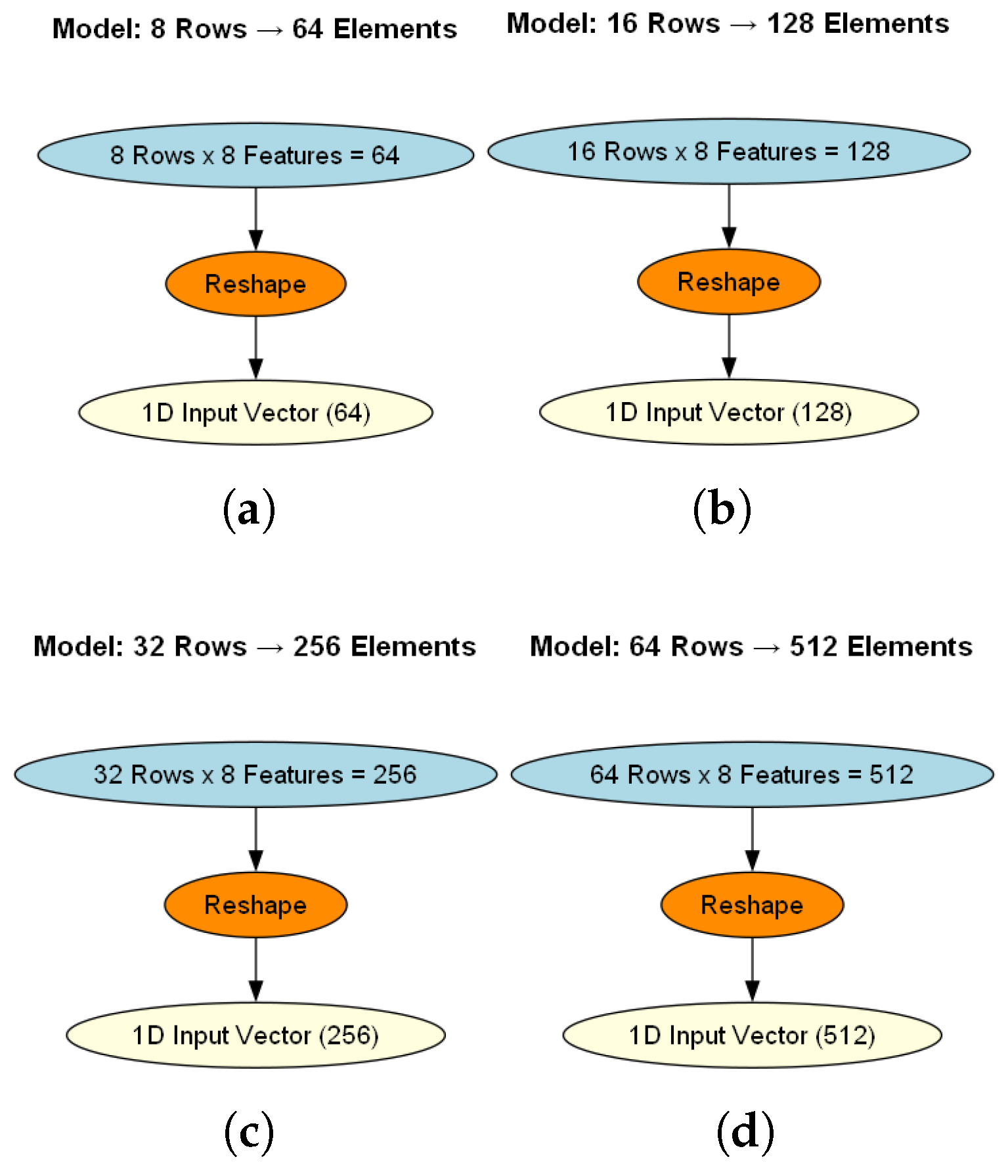

Both models were evaluated under four input-output configurations, using time windows of 64, 128, 256, and 512 hours as input, with corresponding prediction horizons of 2, 4, 8, and 16 hours of AQI values, respectively. This allowed for a consistent AQI forecasting comparison, revealing how varying the amount of historical input data and forecast length affects model performance. The experiments were performed under identical preprocessing conditions, and both architectures were trained using standardized AQI data to ensure consistency and fairness in comparison.
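The four input-output configurations can be illustrated with a minimal windowing sketch. It assumes a one-dimensional hourly AQI series and a stride of one hour between consecutive samples; the function name `make_windows` and the stride are illustrative assumptions, not details taken from the experiments.

```python
# Input/output window lengths in hours, as listed in the text.
CONFIGS = [(64, 2), (128, 4), (256, 8), (512, 16)]


def make_windows(series, n_in, n_out):
    """Slice an hourly series into (input, target) pairs.

    Each sample uses n_in consecutive hours as input and the n_out hours
    that immediately follow as the forecasting target. A stride of one
    hour between samples is an assumption made for this sketch.
    """
    n_samples = len(series) - n_in - n_out + 1
    if n_samples <= 0:
        raise ValueError("series is too short for the requested windows")
    inputs = [series[i:i + n_in] for i in range(n_samples)]
    targets = [series[i + n_in:i + n_in + n_out] for i in range(n_samples)]
    return inputs, targets


if __name__ == "__main__":
    hourly_aqi = list(range(1000))  # synthetic stand-in for real AQI data
    for n_in, n_out in CONFIGS:
        X, y = make_windows(hourly_aqi, n_in, n_out)
        print(n_in, n_out, len(X), len(X[0]), len(y[0]))
```

Longer windows yield fewer training samples from the same series, which is worth keeping in mind when comparing configurations trained on a small dataset.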

The experiments were also split according to the framework's two main computational deployment cases: Edge Computing and Cloud Computing. Each scenario is designed to reflect realistic use cases, accounting for constraints in computational resources and inference requirements.

Edge Computing: This scenario simulates environments with limited hardware capabilities, such as embedded systems or mobile devices. Both architectures, slideNN (a feedforward neural network) and a GRU-based model, were tested under the four input-output configurations described above (input windows of 64, 128, 256, and 512 hours with outputs of 2, 4, 8, and 16 hours). The variable-length GRU model was adjusted using a small number of cells (e.g., 8, 16, 32, 64) to match the parameter count of the corresponding slideNN sub-model. This enables a fair and direct comparison of their performance on the same resource-constrained platform.

Cloud Computing: This scenario represents high-resource environments where model complexity and real-time inference are not limiting factors. Only the GRU-based model was tested in this case, using a larger number of cells (specifically 1280) to exploit its full representational capacity. For each of the four input-output configurations mentioned above, the GRU layer was followed by a dense NN sub-network, forming a hybrid architecture that combines deep temporal recurrent modeling with deep-layered neural network processing.

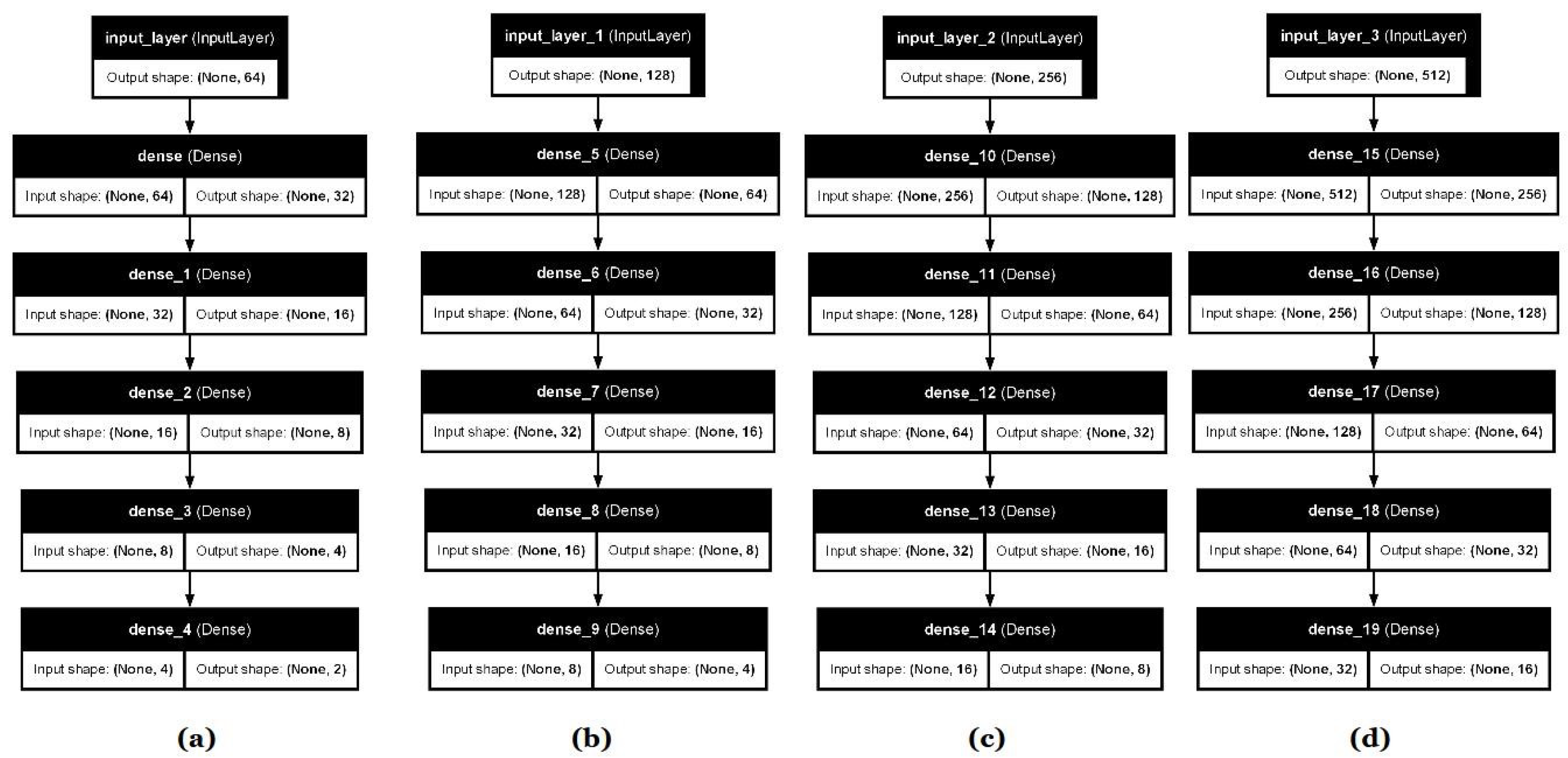

3.1. Scenario I: Edge Case Evaluation (slideNN vs. GRU)

In this scenario, we focused on environments with limited computational resources, where smaller models are preferable (Edge and real-time AI). For this reason, the GRU model was tested with a small number of recurrent units (cells). The experiment showed that the performance difference between using 8 and 16 GRU cells was negligible. As such, they are not treated as distinct cases but are instead grouped into a single category representing the smallest cell configuration.

To ensure a fair comparison, the number of trainable parameters in the Edge GRU model was matched to that of the corresponding slideNN model for each input-output configuration. Specifically, four submodels of both GRU and slideNN were created and trained for input sizes 64, 128, 256, and 512, and their performance was evaluated using Root Mean Squared Error (RMSE).

Below is a table providing each configuration’s information:

Table 4.

Model configurations with parameter count and the corresponding memory size.

| Configuration | Input/Output Size | Parameters (p) | Memory (KB) |
|---|---|---|---|
| 1 | 64 / 2 | | |
| 2 | 128 / 4 | | |
| 3 | 256 / 8 | | |
| 4 | 512 / 16 | | |

Each configuration was tested independently to compare how well each architecture performs in resource-constrained settings, both in terms of training convergence and forecasting accuracy.

3.2. Scenario I: Experimental Results

The results of the Edge GRU models were compared with those of the slideNN models for the same input-output configurations. Performance was measured using RMSE, allowing direct comparison between recurrent and feedforward approaches at matched model capacities. To initiate the evaluation process, we conducted experiments on the feedforward neural network architecture, referred to as slideNN. The model was trained and tested independently for the four input-output configurations, namely 64-2, 128-4, 256-8, and 512-16, corresponding to the number of hours used for input and prediction, respectively.
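For reference, the two error metrics reported in this section are related by RMSE = sqrt(MSE). The sketch below computes both from plain Python lists; the function names are illustrative and do not reflect the authors' implementation.

```python
import math


def mse(y_true, y_pred):
    """Mean squared error between two equally long sequences."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean squared error, i.e. the square root of the MSE."""
    return math.sqrt(mse(y_true, y_pred))
```

Because the models are trained on standardized AQI data, the resulting RMSE values are expressed in units of standard deviations rather than raw AQI points.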

Despite the fairly small size of the training dataset, each submodel was trained for 400 epochs. This choice was empirically justified, as the models required an extended number of training cycles to begin converging toward meaningful patterns in the data. Preliminary experiments using fewer epochs, larger batch sizes, or higher learning rates (e.g., greater than the chosen value of 0.0008) consistently resulted in suboptimal performance, where the network failed to learn or showed unstable loss behavior. This is an indication that the model benefits from a gradual learning process with smaller batch updates and a low learning rate (slow temporal learner), particularly when the input data volume is limited.

The resulting predictions were not highly accurate in absolute terms. However, the experiments did reveal a consistent, inductive pattern of improvement across the submodels. Specifically, as the output size increased from 2 to 16, each subsequent configuration produced better results than the previous one.

The slideNN model's performance improves progressively as more output steps are introduced, likely due to its capacity to capture broader temporal dependencies when given more extensive target horizons. To showcase the performance of each submodel within the slideNN architecture, Table 5 presents the loss (RMSE) and the respective MSE for each configuration.

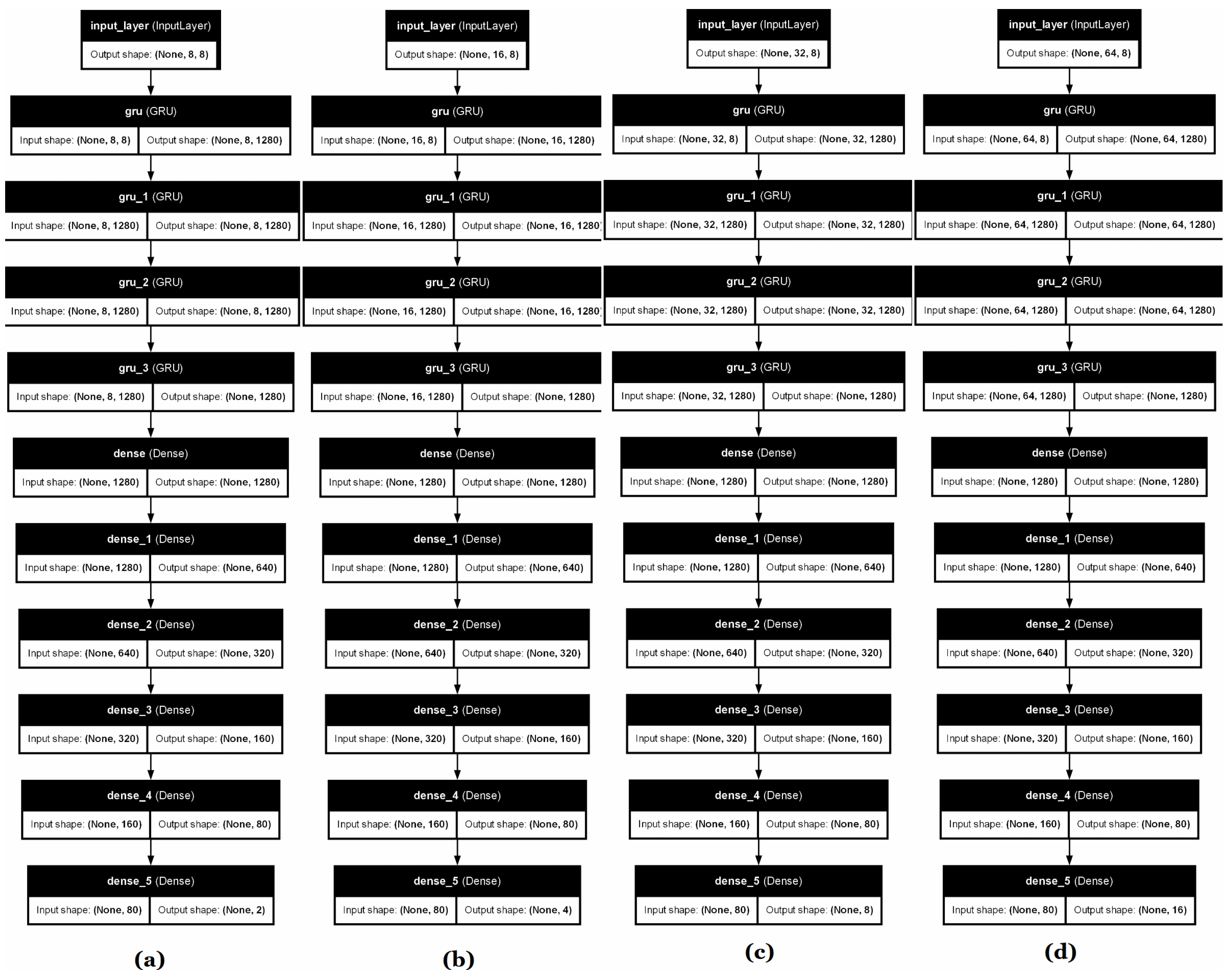

Following the experiments on the slideNN architecture, we conducted a corresponding series of evaluations with a recurrent architecture, the GRU-based model. GRUs were selected because they capture long-range dependencies better than plain RNNs and are less prone to vanishing gradients, while using fewer gates than LSTMs (two instead of three, and no separate cell state). This leads to faster inference and lower memory usage, both of which matter on edge computing devices.

As with the previous case, four distinct input-output configurations were employed (64-2, 128-4, 256-8, and 512-16), ensuring direct comparability between the two architectures. In addition to input and output window sizes, the GRU model introduced two more key hyperparameters: the number of GRU cells and the number of internal layers. For each configuration, the number of cells was selected so that the total number of trainable parameters closely matched that of its slideNN counterpart. The selected values were 16, 32, 64, and 128 cells for the respective input-output pairs. Regarding the internal GRU layers, this hyperparameter was fixed at 2 layers across all configurations in the resource-constrained edge computing scenario. This design choice was also driven by the need to maintain a parameter count comparable to that of the corresponding slideNN models, ensuring a fair and consistent basis for comparison.
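The parameter matching described above can be approximated with a short counting sketch. It assumes the common GRU formulation with three weight blocks (update gate, reset gate, candidate state) and two bias vectors per block, as used by default in several deep learning frameworks; the number of features per timestep (`input_dim`) and 32-bit weights are assumptions, so the figures it produces are illustrative and not the values of Table 4.

```python
def gru_layer_params(input_dim, units, biases_per_gate=2):
    """Trainable parameters of a single GRU layer.

    Three blocks (update gate, reset gate, candidate state), each with an
    input kernel (units x input_dim), a recurrent kernel (units x units),
    and biases_per_gate bias vectors of length units.
    """
    return 3 * (units * input_dim + units * units + biases_per_gate * units)


def stacked_gru_params(input_dim, units, layers=2, biases_per_gate=2):
    """Total parameters of `layers` stacked GRU layers of equal width."""
    total = 0
    layer_input = input_dim
    for _ in range(layers):
        total += gru_layer_params(layer_input, units, biases_per_gate)
        layer_input = units  # deeper layers read the previous layer's output
    return total


def memory_kb(n_params, bytes_per_param=4):
    """Approximate weight memory in KB, assuming 32-bit floats by default."""
    return n_params * bytes_per_param / 1024


if __name__ == "__main__":
    # Hypothetical example: 8 features per timestep, 2 stacked layers.
    for units in (16, 32, 64, 128):
        n = stacked_gru_params(input_dim=8, units=units, layers=2)
        print(units, n, round(memory_kb(n), 2))
```

Note that the sequence length does not appear in the formula: GRU weights are shared across timesteps, so the parameter count is driven by the number of cells and layers rather than by the window size.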

Training was performed over 50 epochs with a batch size of 32 and a learning rate of 0.001. Compared to slideNN, the GRU architecture required significantly fewer epochs to converge, largely due to its recurrent structure, which is inherently more capable of capturing temporal dependencies in sequential data. The slightly higher learning rate (0.001 versus 0.0008 for slideNN) reflects the model's greater stability and ability to generalize from time-correlated features, allowing it to update its weights more aggressively without compromising convergence.

Maintaining the same parameter settings across both sub-scenarios, it becomes evident that the GRU-based architecture consistently achieves better results than slideNN, regardless of the input-output configuration. A clear progressive improvement is still observed in prediction performance as both the number of timesteps and GRU cells increase. This trend is reflected in the gradual reduction of error metrics. These findings indicate that the GRU architecture benefits substantially from increased complexity, improving its ability to capture long-term dependencies and patterns within the data. The performance results of each configuration of the GRU architecture for edge computing are shown in Table 6 below:

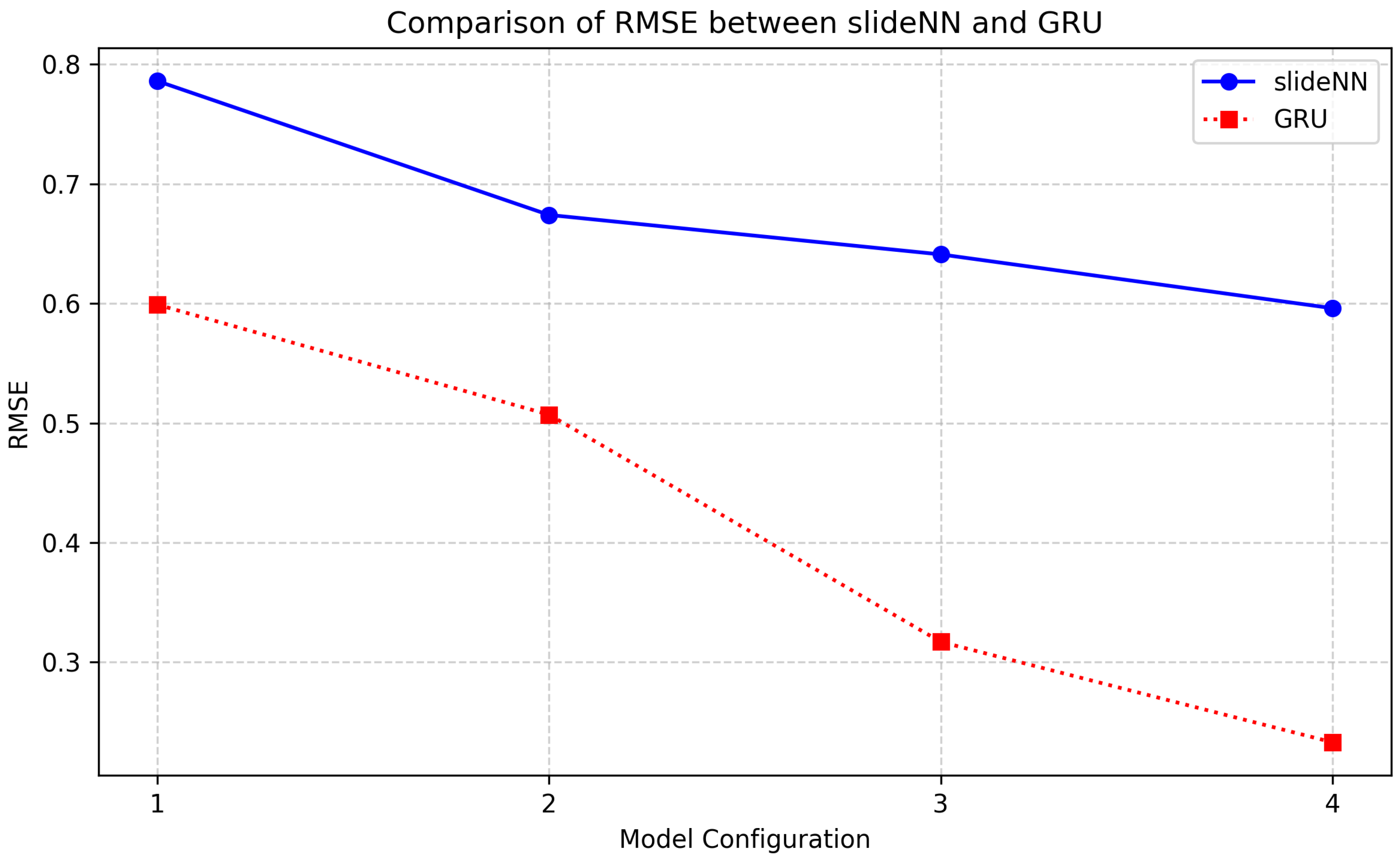

A comparative plot of the RMSE values was constructed to visually assess the relative performance of the two architectures across different input-output configurations.

Figure 6 illustrates how the prediction error, as measured by RMSE, evolves for each configuration (1 through 4, meaning the four different input and output windows discussed previously) for both the feedforward slideNN and the recurrent GRU model. Each point on the curves corresponds to a specific model setup, with the x-axis representing increasing input and output size and the y-axis showing the corresponding RMSE. This comparison highlights the general trend of performance improvement in both models as the amount of historical data increases while also showcasing the consistent superiority of the GRU architecture in minimizing prediction error across all scenarios.

As shown in Figure 6, the GRU models outperformed all slideNN models in terms of RMSE, using the same dataset, data transformations, and training parameters. For configuration 1, with 8 vectorized timestep inputs of environmental measurements, the variable GRU model showed 25% less loss than the slideNN model. A similar profile holds for configuration 2, with 16 timestep inputs. For the configurations with 32 and 64 temporal inputs, the GRU models outperformed the slideNN models by an even wider margin, offering 50% and 80% less loss, respectively. Furthermore, to reach the slideNN losses reported here (expressed as RMSE), the model had to be trained for 400 epochs, compared with 50 epochs for the GRU models, using an early-stopping condition with a patience of three epochs and a minimum delta of 0.001 to qualify as an improvement. This indicates that GRU models distinguish temporal patterns better than plain NN models and train much faster. In terms of training epochs, GRU model training is at least 8 times faster than slideNN training.
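The stopping condition mentioned above (patience of three epochs, minimum improvement of 0.001) can be sketched framework-independently as follows. The sketch assumes the monitored quantity is a per-epoch loss where lower is better; which loss the authors monitored is not stated, and the function name is hypothetical.

```python
def early_stop_epoch(losses, patience=3, min_delta=0.001):
    """Return the 1-based epoch at which training would stop.

    An epoch counts as an improvement only if the loss drops by more than
    min_delta below the best value seen so far. Training stops after
    `patience` consecutive epochs without improvement, or when the list
    of recorded losses is exhausted.
    """
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(losses, start=1):
        if best - loss > min_delta:
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(losses)
```

With such a rule, a 50-epoch budget acts as an upper bound: training ends earlier whenever the loss stalls for three consecutive epochs.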

In conclusion, for similar-sized models with the same number of parameters and memory sizes, GRU models performed at least 25% better than NN models for small temporal timesteps and at least 50% better for medium temporal timesteps. Inference times were similar for both models in their corresponding configurations, with no significant delays.

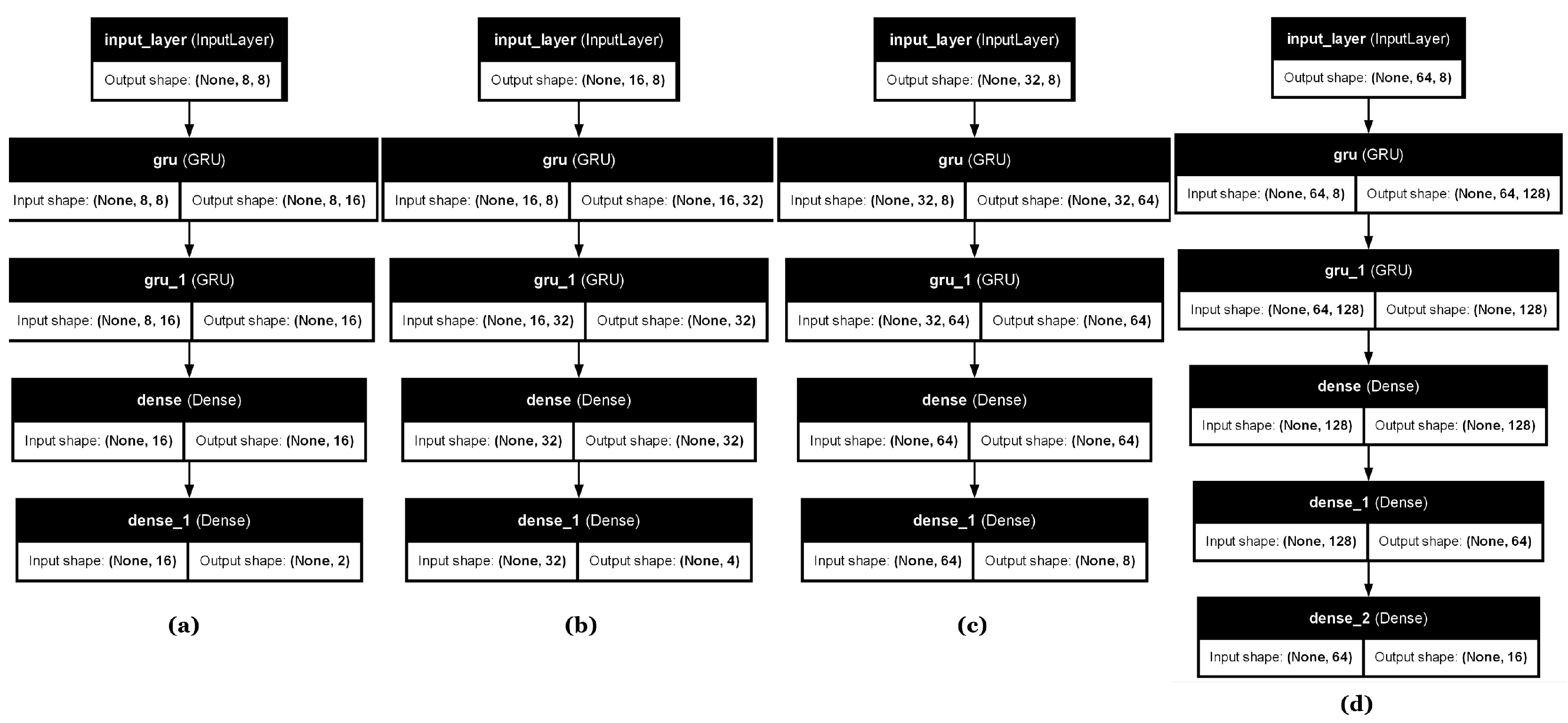

3.3. Scenario II: Cloud Case Evaluation

Building on the cloud case of the framework and on the better results achieved by the GRU models in the previous scenario, we carried out experiments centered on significantly larger parameter sets, deeper internal layers, and a more complex hybrid GRU-NN architecture. These configurations are better suited to cloud computing resources, which provide the memory and processing power needed to support training, loading, and short inference intervals. Only the GRU architecture was employed in this scenario, since it outperformed the NN models in Scenario I, maintaining lower loss across variable timesteps, and proved better suited to long temporal sequences and complex sequential dependencies. The variable GRU architecture was evaluated across all four input-output window configurations (64–2, 128–4, 256–8, and 512–16) to examine how it performs under varying temporal resolutions and forecasting horizons when deployed in a cloud-based environment.

The transition to a Cloud GRU architecture, with larger memory requirements for model instantiation and close to real-time inference, allowed for a substantial increase in the number of trainable parameters compared to the edge computing scenarios. This increase is attributed to the higher number of GRU cells and the deeper, more complex network structure employed in these experiments. In cloud-based model deployment, a widely accepted threshold for qualifying a model as appropriate for cloud inference is a parameter memory size exceeding 100 MB, as mentioned in [52]. To illustrate this difference, Table 7 below summarizes the number of parameters and their corresponding memory size for the two configurations used in this scenario. All models maintain nearly the same parameter sizes while increasing timestep depth and forecasting length in the same way as the slideNN and edge-GRU models of the edge computing scenarios.

3.4. Scenario II: Experimental Results

The Cloud GRU model’s performance was examined using RMSE, focusing on its ability to produce accurate long-range AQI forecasts. Unlike the Edge scenario, the model size was not constrained here, enabling the architecture to fully exploit the available computational resources. Furthermore, it was configured with 1280 GRU cells and connected to a sub-network with decreasing neuron counts, forming a hybrid recurrent-dense architecture. This design aims to combine the temporal learning capability of GRUs with dense layers’ hierarchical feature abstraction strengths. The dense sub-network used here mirrors the layer structure of the corresponding slideNN models: fully connected layers with neuron counts decreasing by a factor of 2 at each step.
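The halving layer structure of the dense sub-network can be sketched as below. The starting width and the rule for ending the chain at the forecast length are assumptions made for illustration; the text only states that neuron counts decrease by a factor of 2 at each step.

```python
def halving_widths(first_width, output_size):
    """Layer widths that halve at each step, ending at output_size.

    Hypothetical stopping rule: keep halving while the width is still
    larger than the output size, then append the output layer itself.
    """
    if output_size < 1 or first_width < output_size:
        raise ValueError("first_width must be >= output_size >= 1")
    widths = []
    width = first_width
    while width > output_size:
        widths.append(width)
        width //= 2
    widths.append(output_size)
    return widths


def dense_params(input_dim, widths):
    """Weights plus biases of a fully connected stack with these widths."""
    total = 0
    previous = input_dim
    for width in widths:
        total += previous * width + width
        previous = width
    return total
```

For example, under these assumptions `halving_widths(64, 2)` gives the chain 64, 32, 16, 8, 4, 2, so most of the dense parameters sit in the first one or two layers.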

This scenario treated the number of internal GRU layers as a key hyperparameter, as deeper architectures increase model complexity and are better suited for cloud-based experimentation. An initial configuration with two layers was tested but later discarded, as it failed to fully utilize the computational advantages of the cloud environment. Consequently, configurations with three and four layers were selected to explore the benefits of increased model depth, and eventually four layers proved ideal for these experimental cases. The number of training epochs also varied with the model setup, ranging between 25 and 40, because training was terminated conditionally once the learning rate reached a small value. Performance outcomes were compared with those of the best-performing Edge GRU configurations. Across all configurations evaluated in the cloud-based setting, architectural and training modifications were applied to scale the models appropriately beyond their edge-based counterparts. A key adjustment involved significantly increasing the number of GRU cells, as it became evident that timestep size alone contributed relatively little to the total parameter count compared to other hyperparameters, which can also be inferred from the difference in memory size between the edge configurations (Table 4). The GRU cell size was scaled up for each configuration to ensure the models reached a substantial memory footprint suitable for cloud experimentation [52]. In line with this approach, the internal architecture was also deepened by increasing the number of GRU layers, typically favoring setups with three or more layers to leverage the higher capacity and representational power available in cloud environments.
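To give a sense of scale for the 100 MB threshold cited from [52], the following sketch converts between parameter count and weight memory. It assumes 32-bit floating-point weights and 1 MB = 1024 × 1024 bytes; both are assumptions, since the storage format is not stated in the text.

```python
import math


def param_memory_mb(n_params, bytes_per_param=4):
    """Weight memory in MB for a given number of parameters."""
    return n_params * bytes_per_param / (1024 ** 2)


def min_params_for_mb(threshold_mb, bytes_per_param=4):
    """Smallest parameter count whose weights reach threshold_mb."""
    return math.ceil(threshold_mb * (1024 ** 2) / bytes_per_param)


if __name__ == "__main__":
    print(min_params_for_mb(100))
```

Under these assumptions a model needs roughly 26 million parameters to reach 100 MB, which is consistent with the need to scale up both cell count and depth rather than the window size alone.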

While training duration varied slightly across configurations, most models were trained for approximately 25 to 40 epochs. However, the training histories indicated that the validation loss plateaued well before the final epoch. This suggests that the models had already captured the most relevant patterns earlier in training, implying that fewer iterations could achieve satisfactory performance. The same behavior was observed consistently across the configurations: in the first two setups, the validation performance plateaued around the 30th to 35th epoch, allowing the number of training epochs to be safely reduced to 25 without loss in model quality. Similarly, in the two larger configurations, performance stabilized by the 40th epoch, which justified reducing training to approximately 35 epochs, thereby improving training efficiency and maintaining predictive accuracy (minimal RMSE loss).

As expected, the Cloud GRU models outperformed their edge-based counterparts, as summarized in Table 8.

The Cloud-based GRU models consistently outperformed their Edge counterparts across all input-output configurations while using the same datasets, data preprocessing, and training setups. In the smallest setup (64 → 2), the Cloud GRU achieved an RMSE of 0.468, representing a 21.9% improvement over the Edge model’s 0.599. As the sequence length increased, the performance advantage of the Cloud GRUs became even more apparent. For the 128 → 4 configuration, the RMSE was 34.1% lower, and for 256 → 8, the reduction remained significant at 32.8%. Even in the largest configuration (512 → 16), where gains typically diminish, the Cloud GRU model still achieved a 13.7% reduction in RMSE compared to the Edge model. These results emphasize GRU models’ scalability and increased effectiveness when more computational resources and memory are available. The Cloud GRUs learned more robust temporal patterns due to their greater capacity.
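The percentage improvements quoted above are relative RMSE reductions with the Edge model as the baseline. A minimal sketch of the calculation, using the only pair of RMSE values stated explicitly in the text (64 → 2 configuration):

```python
def relative_reduction(baseline, improved):
    """Percentage by which `improved` is lower than `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (baseline - improved) / baseline


if __name__ == "__main__":
    edge_rmse, cloud_rmse = 0.599, 0.468  # values quoted for 64 -> 2
    print(round(relative_reduction(edge_rmse, cloud_rmse), 1))
```

Rounded to one decimal place, this reproduces the 21.9% figure reported for the smallest configuration.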

In conclusion, the Cloud-based GRUs clearly outperformed Edge GRUs in terms of predictive accuracy across all tested configurations, offering up to 34% lower RMSE in mid-range settings and still delivering gains even in high-timestep conditions, with comparable inference times across both environments.