Submitted: 06 June 2025

Posted: 09 June 2025

Abstract

Keywords:

1. Introduction

2. Related Work

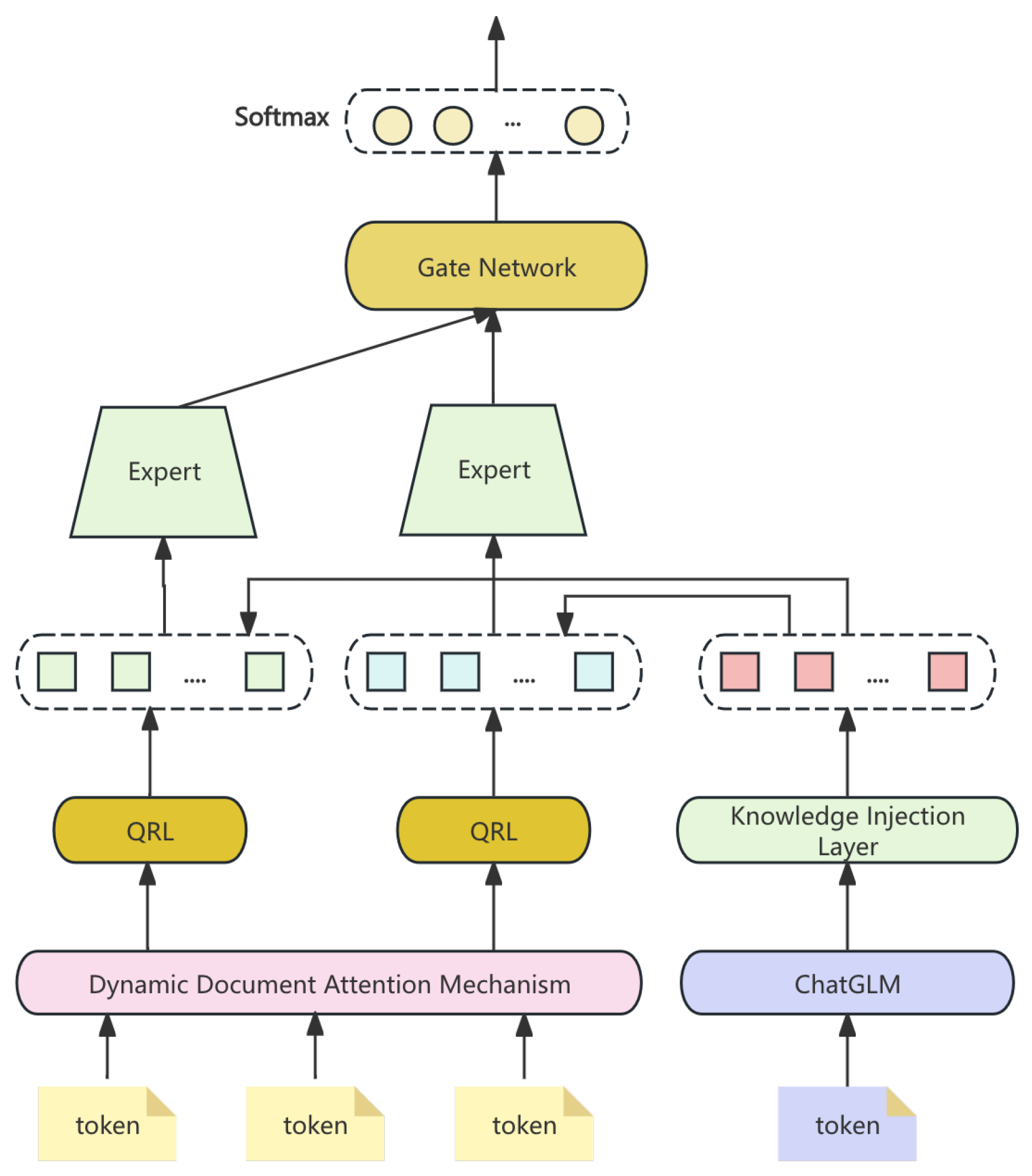

3. Methodology

3.1. Dynamic Document Attention Mechanism

3.2. Query Refinement Layer

3.3. Knowledge Injection Layer

3.4. Multi-Task Learning Framework

3.4.1. Task-Specific Gated Networks

3.4.2. Expert Networks for Task Specialization

3.4.3. Joint Training of Shared and Task-Specific Modules

3.4.4. Task-Aware Attention Mechanism

3.4.5. Adaptive Task Allocation During Inference

3.5. Loss Function

3.5.1. Task-Specific Loss Functions

3.5.2. Composite Loss Function

3.5.3. Gradient Flow for Multi-Task Optimization

3.6. Data Preprocessing

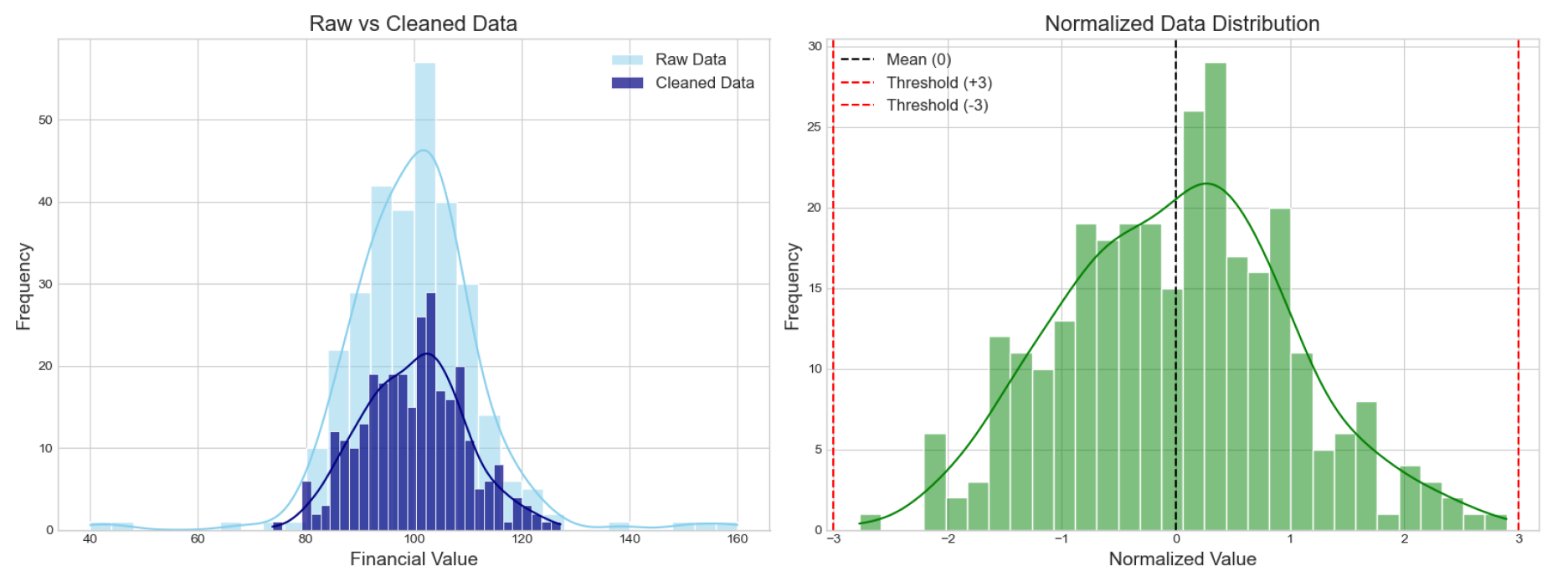

3.6.1. Data Cleaning and Normalization

- Text Cleaning: Removing headers and disclaimers.

- Numerical Normalization: Applying standardization:
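The two cleaning steps above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: it assumes that "standardization" means the usual transform x' = (x − μ) / σ with the population standard deviation. The names `BOILERPLATE_PATTERNS`, `clean_text`, and `standardize` are hypothetical, as are the regular expressions used to recognise headers and disclaimers.

```python
import re
import statistics

# Hypothetical patterns: the source does not say which headers or
# disclaimers are removed, so these are placeholders for illustration.
BOILERPLATE_PATTERNS = [
    re.compile(r"^\s*page \d+( of \d+)?\s*$", re.IGNORECASE),
    re.compile(r"^\s*(disclaimer|confidential)\b.*$", re.IGNORECASE),
]


def clean_text(document: str) -> str:
    """Drop lines matching a boilerplate pattern and collapse whitespace."""
    kept = []
    for line in document.splitlines():
        if any(pattern.match(line) for pattern in BOILERPLATE_PATTERNS):
            continue
        collapsed = " ".join(line.split())
        if collapsed:
            kept.append(collapsed)
    return "\n".join(kept)


def standardize(values: list[float]) -> list[float]:
    """Return (x - mean) / std for each value, using the population std."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        # A constant column carries no spread; map every value to 0.
        return [0.0 for _ in values]
    return [(x - mean) / std for x in values]
```

After standardization a column has zero mean and unit variance, which keeps numerical features on a comparable scale regardless of their original units.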

3.6.2. Tokenization and Embedding

3.6.3. Handling Missing Data and Outliers

- Imputation: Filling missing values with mean or median.

- Outlier Detection: Using z-score:
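As a rough sketch of the two steps above, the snippet below fills missing entries with the column mean or median and flags values whose absolute z-score exceeds a threshold. The function names `impute` and `zscore_outliers` are hypothetical, and the threshold of 3.0 is an assumed, commonly used default; the source does not state which cutoff was applied.

```python
import math
import statistics
from typing import Optional


def impute(values: list[Optional[float]], strategy: str = "median") -> list[float]:
    """Replace None/NaN entries with the mean or median of the observed values."""
    observed = [v for v in values if v is not None and not math.isnan(v)]
    if not observed:
        raise ValueError("cannot impute a column with no observed values")
    if strategy == "mean":
        fill = statistics.fmean(observed)
    elif strategy == "median":
        fill = statistics.median(observed)
    else:
        raise ValueError(f"unknown strategy: {strategy!r}")
    return [fill if (v is None or math.isnan(v)) else v for v in values]


def zscore_outliers(values: list[float], threshold: float = 3.0) -> list[int]:
    """Return the indices of values with |z| > threshold (assumed cutoff)."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return []  # no spread, so nothing can be an outlier
    return [i for i, x in enumerate(values) if abs((x - mean) / std) > threshold]
```

The median is usually the safer fill value when a column is skewed, since a few extreme observations can pull the mean away from typical values. For the same reason, it can make sense to impute before computing z-scores only if the imputed values are excluded from the outlier statistics.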

4. Evaluation Metrics

5. Experiment Results

6. Conclusion


| Model | QA Accuracy (%) | FTE MSE | DC MAP |
|---|---|---|---|
| FinGLM-6B | 91.3 | 0.045 | 0.837 |
| BERT | 85.6 | 0.063 | 0.752 |
| GPT-3 | 88.1 | 0.052 | 0.813 |

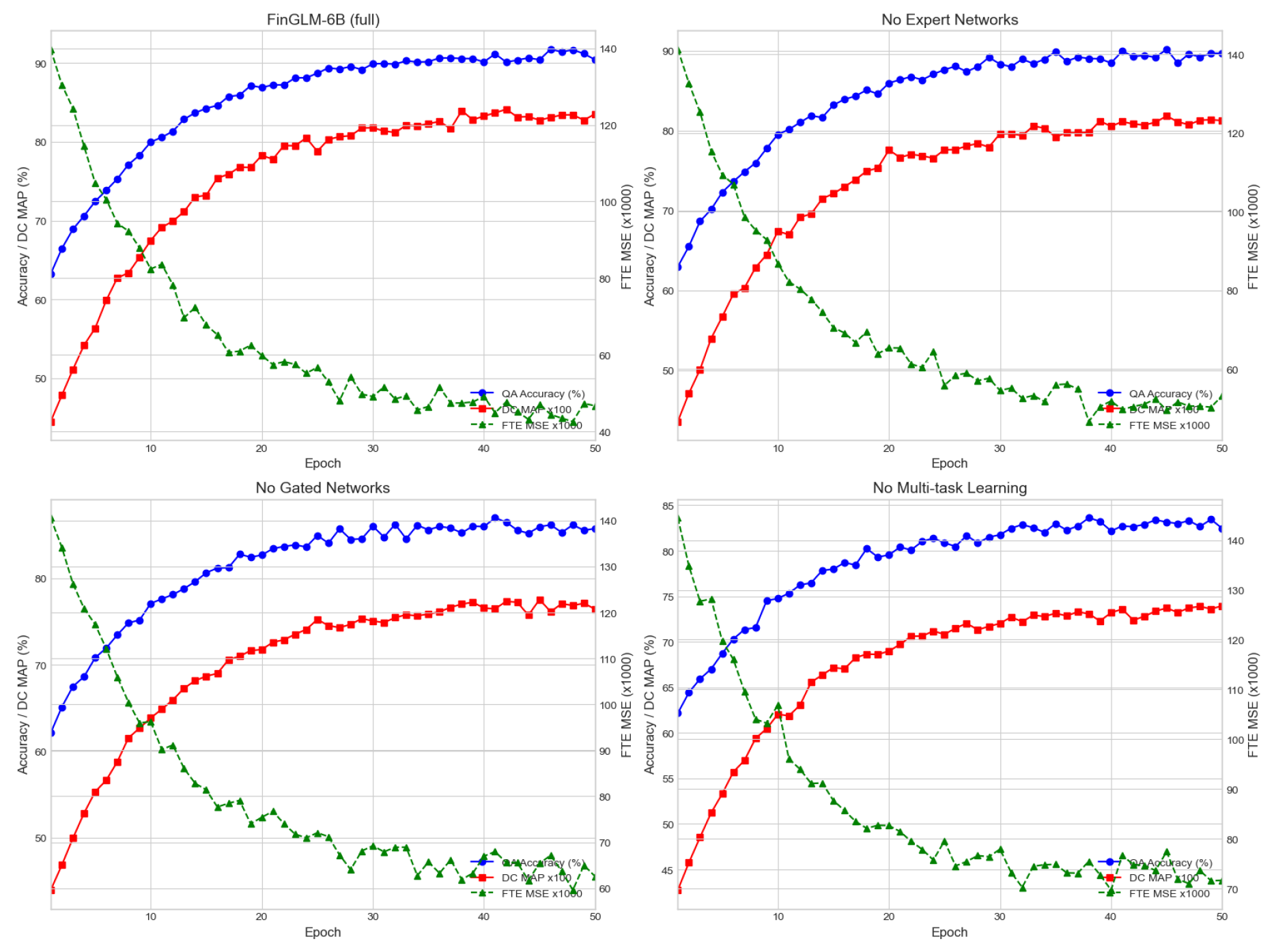

| Model Variant | QA Accuracy (%) | FTE MSE | DC MAP |
|---|---|---|---|
| FinGLM-6B (full) | 91.3 | 0.045 | 0.837 |
| No Expert Networks | 89.7 | 0.051 | 0.812 |
| No Gated Networks | 86.4 | 0.063 | 0.772 |
| No Multi-task Learning | 83.1 | 0.072 | 0.740 |
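To make the ablation table easier to read, the following sketch computes each variant's change relative to the full model. The numbers are copied from the table. The dictionary `ABLATION` and the helper `deltas_vs_full` are hypothetical names introduced for illustration, and the sketch assumes that lower MSE is better while higher accuracy and MAP are better.

```python
# Values copied from the ablation table: (QA accuracy %, FTE MSE, DC MAP).
ABLATION = {
    "FinGLM-6B (full)": (91.3, 0.045, 0.837),
    "No Expert Networks": (89.7, 0.051, 0.812),
    "No Gated Networks": (86.4, 0.063, 0.772),
    "No Multi-task Learning": (83.1, 0.072, 0.740),
}


def deltas_vs_full(table: dict, baseline: str = "FinGLM-6B (full)") -> dict:
    """Return (d_accuracy, d_mse, d_map) for each variant minus the baseline."""
    base_acc, base_mse, base_map = table[baseline]
    result = {}
    for name, (acc, mse, map_score) in table.items():
        if name == baseline:
            continue
        result[name] = (
            round(acc - base_acc, 1),        # percentage points
            round(mse - base_mse, 3),        # positive means worse
            round(map_score - base_map, 3),  # negative means worse
        )
    return result


if __name__ == "__main__":
    for variant, delta in deltas_vs_full(ABLATION).items():
        print(variant, delta)
```

Read this way, removing multi-task learning costs the most on every metric (8.2 accuracy points), followed by the gated networks (4.9 points) and the expert networks (1.6 points).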

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).