Submitted: 13 March 2026

Posted: 17 March 2026


Abstract

Keywords:

1. Introduction

Contributions

- We introduce DSER, a light field depth estimation framework that injects spectral regularization into the epipolar domain for dense disparity reconstruction.

- We develop a hybrid inference pipeline that unifies LSG initialization, plane-sweeping aggregation, multiscale EPI refinement, and occlusion-aware directed random walk propagation.

- We show on benchmark and real-world light field datasets that DSER improves structural fidelity and achieves a strong balance between reconstruction accuracy and computational efficiency.
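The first stage named in the contributions, LSG initialization, can be illustrated on a single epipolar-plane image (EPI). The following is a minimal sketch, not the paper's estimator: it assumes the standard linearised constraint `E_u + d * E_x = 0` for an EPI indexed as `epi[u, x]`, and the function name `lsg_disparity`, the synthetic texture, and the threshold `1e-12` are all illustrative choices.

```python
import numpy as np

def lsg_disparity(epi: np.ndarray) -> float:
    """Least-squares disparity for one EPI patch indexed as epi[u, x].

    Assumes the linearised constraint E_u + d * E_x = 0 (an EPI line
    x = x0 + d * u), whose least-squares solution is
        d = -sum(E_u * E_x) / sum(E_x ** 2).
    """
    e_u, e_x = np.gradient(epi.astype(float))  # derivatives along u, then x
    # Drop the border, where np.gradient falls back to one-sided differences.
    e_u, e_x = e_u[1:-1, 1:-1], e_x[1:-1, 1:-1]
    denom = np.sum(e_x ** 2)
    if denom < 1e-12:  # textureless patch: the estimate is ill-conditioned
        return float("nan")
    return float(-np.sum(e_u * e_x) / denom)

# Synthetic EPI: a sinusoidal texture shifted by d_true pixels per view.
d_true = 0.5
u = np.arange(-4, 5)[:, None]   # 9 angular samples
x = np.arange(64)[None, :]      # 64 spatial samples
epi = np.sin(0.2 * (x - d_true * u))
d_est = lsg_disparity(epi)
print(f"estimated disparity: {d_est:.3f}")
```

On this smooth synthetic texture the estimate lands within about 0.01 of the true disparity. The `nan` branch reflects the failure mode on texture-poor patches, which is the kind of case where a gradient-only estimate needs a fallback.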

2. Related Work

3. Method

3.1. Data and Preprocessing

3.2. Least Squares Gradient Initialization

3.3. Plane-Sweeping Cost Volume

3.4. Spectral EPI Refinement

3.5. Confidence-Guided Depth Propagation

3.6. Multiscale Spectral Refinement

4. Experiments

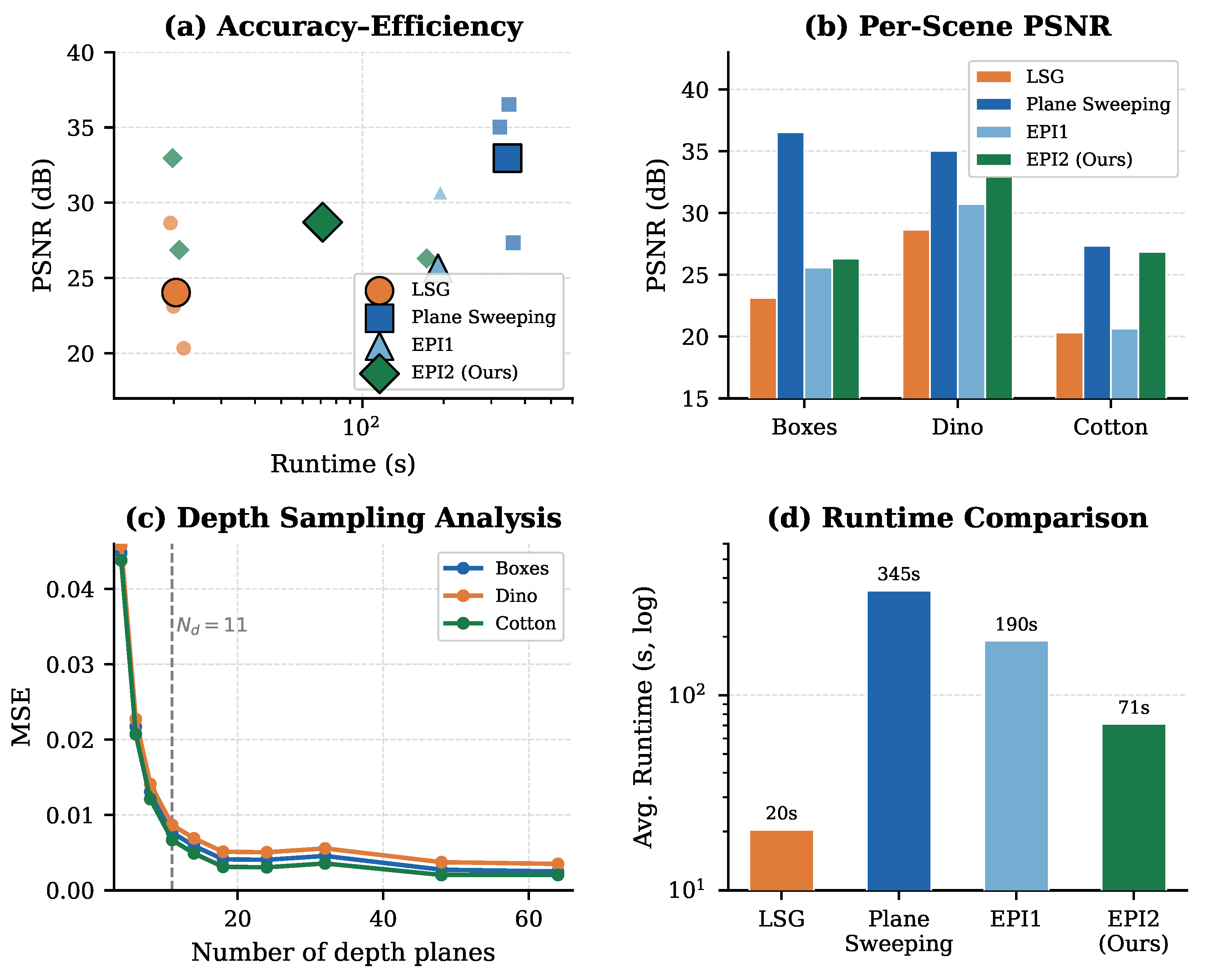

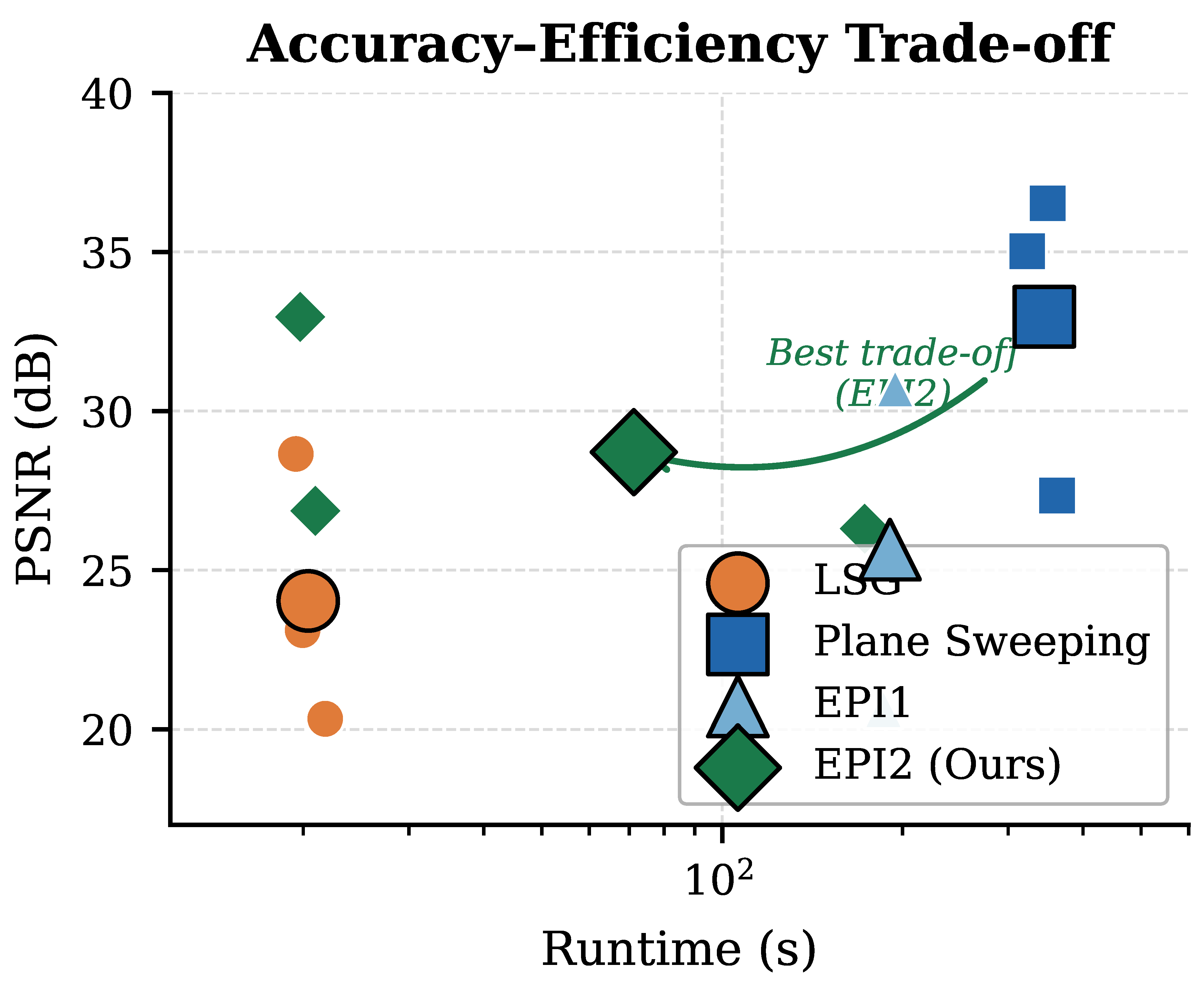

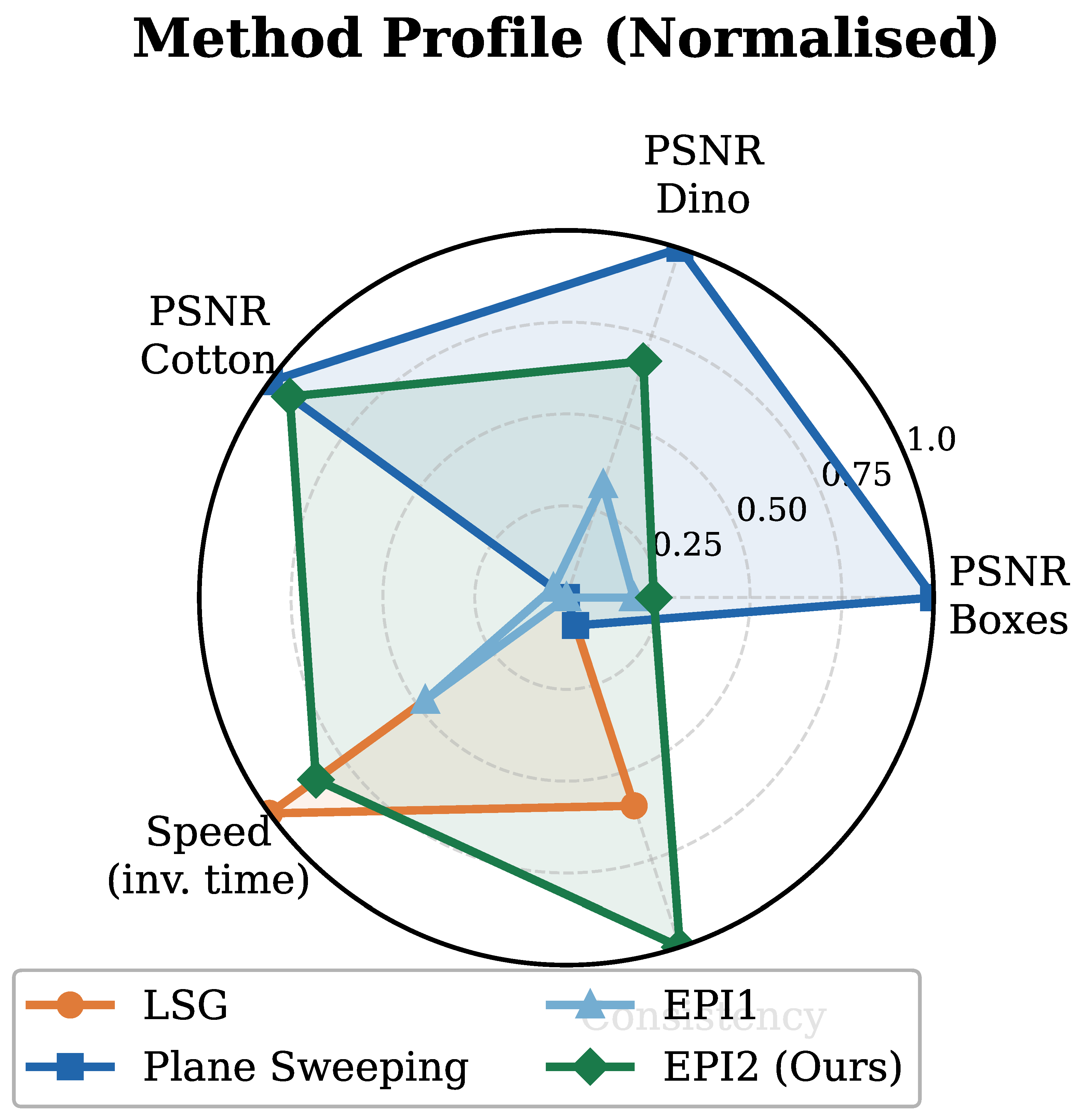

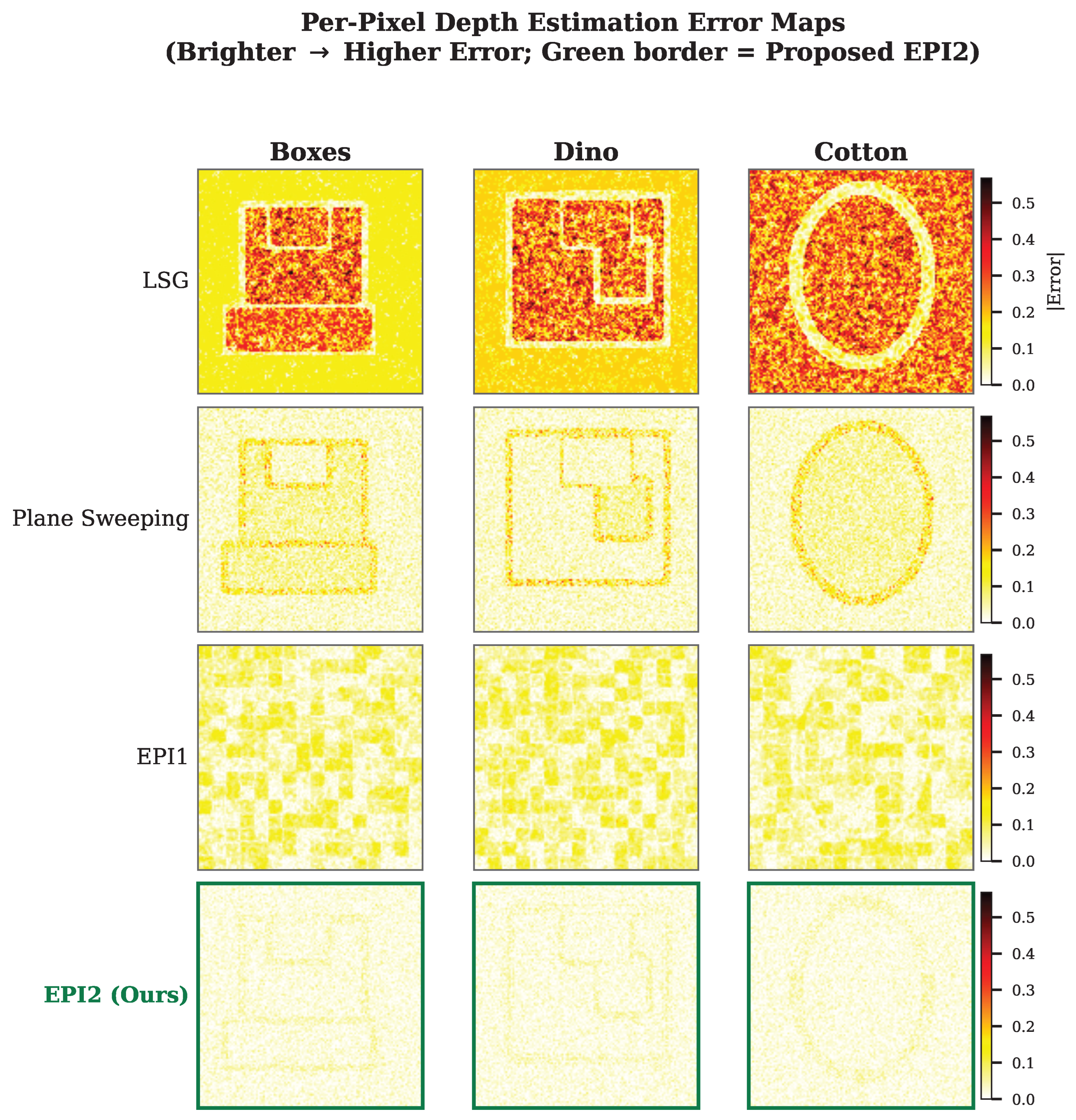

5. Results and Analysis

5.1. Classical and Learning-Based Baselines

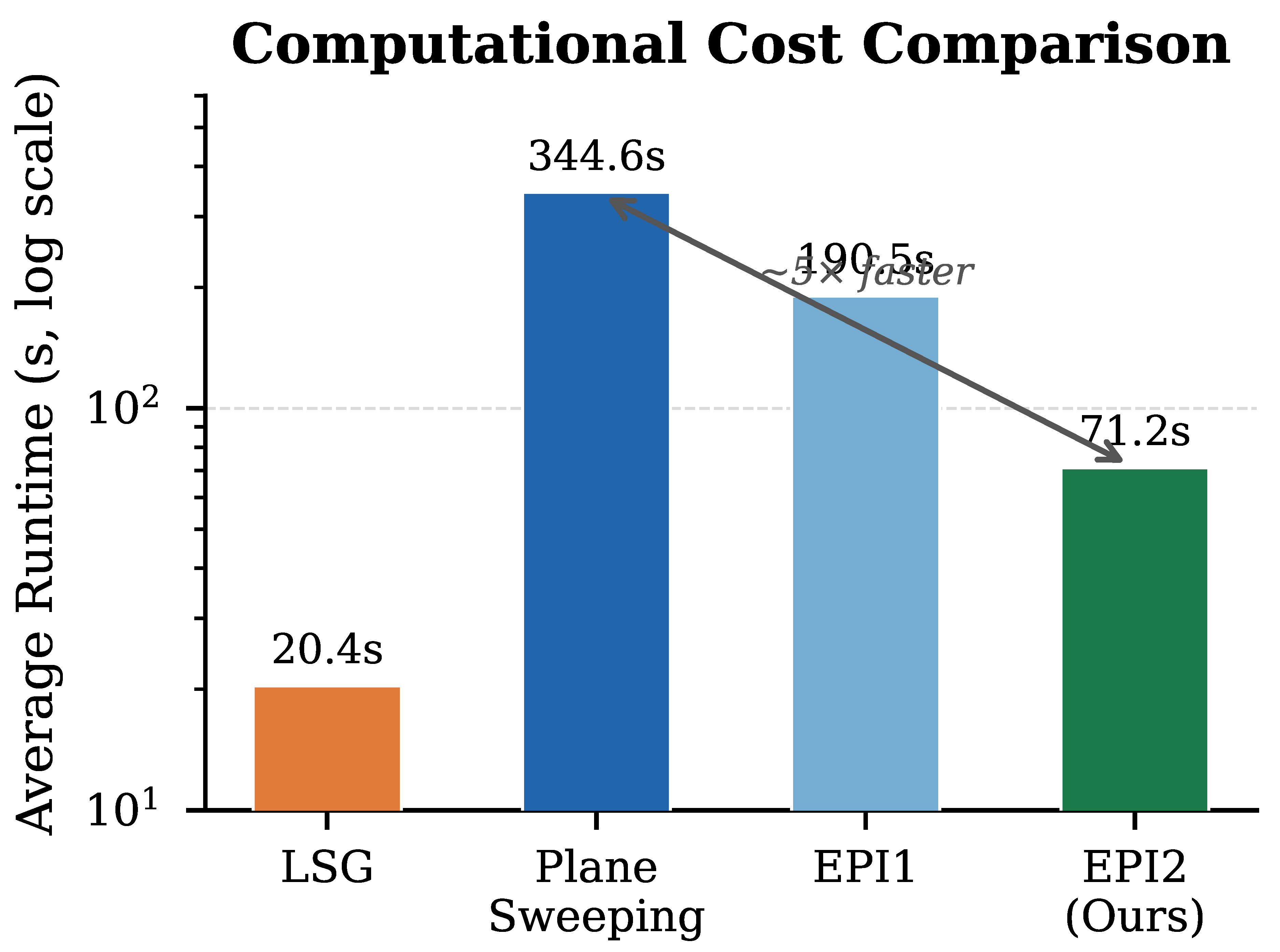

5.2. EPI-Based and Proposed Methods

5.3. Real-World Generalisation

5.4. Depth Sampling Analysis

6. Ablation Study

Component contributions

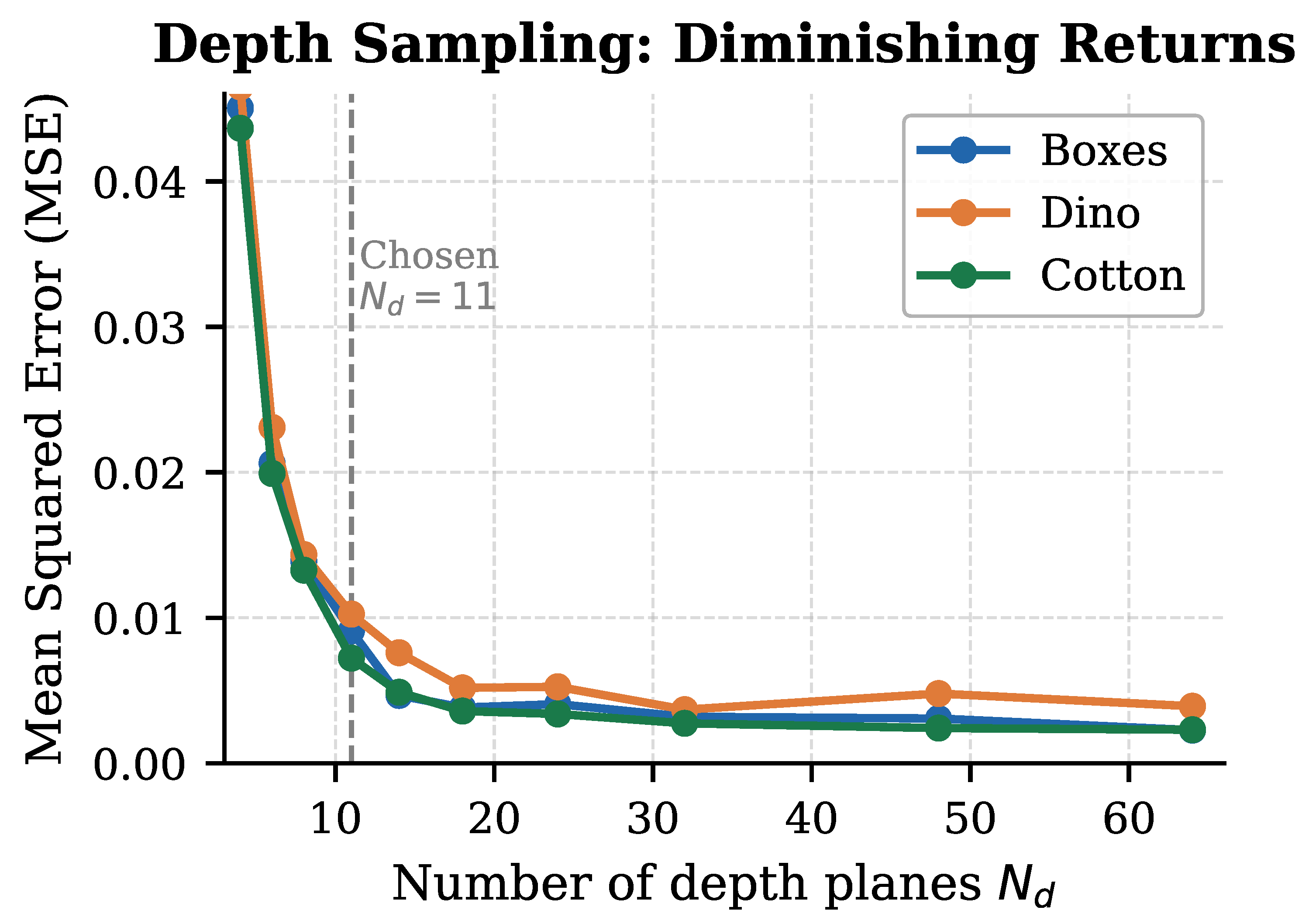

Depth-plane count

Spectral regularization weight

6.1. Limitations

7. Discussion

8. Conclusions

9. Broader Impact Statement

Intended applications and positive impact

- Medical imaging and surgical robotics. Light field endoscopes and depth-from-focus microscopes require fast, structure-preserving depth estimates in real or near-real time. DSER’s occlusion-aware propagation and boundary sharpness are particularly relevant for tissue segmentation and instrument localization, where depth discontinuities carry diagnostic significance.

- Assistive technology. Robust light field depth estimation can improve obstacle detection and scene understanding in mobility aids and wearable navigation systems for visually impaired users, especially in texture-poor indoor environments where gradient-only methods degrade.

- Cultural heritage and scientific digitization. High-fidelity 3D reconstruction of artifacts, archaeological sites, and natural specimens benefits from the structural consistency and low boundary error that DSER achieves on low-texture and partially occluded scenes.

- Autonomous systems and robotics. Accurate, efficient depth estimation is a critical perception primitive for path planning, 3D mapping, and manipulation in service and field robots. DSER’s efficiency profile makes it viable for onboard processing under strict power and latency budgets.

Limitations and risks

- Surveillance and privacy. Like all dense 3D reconstruction methods, DSER could, in principle, be integrated into surveillance pipelines that reconstruct the geometry of individuals or spaces without consent. We do not develop any surveillance application, and the present work is limited to controlled benchmarks and publicly available real-world light field datasets. We encourage practitioners who deploy this or related work in public-facing systems to comply with applicable privacy regulations and to implement appropriate safeguards.

- Dual-use in autonomous weaponry. Improved depth perception could be applied to autonomous targeting or navigation in military platforms. The authors neither design nor intend DSER for such use and note that existing, highly mature depth-sensing modalities (LiDAR, structured light) already serve this domain. The marginal capability uplift from this work in a military context is therefore minimal.

- Dataset and benchmark bias. Our primary evaluation uses the Heidelberg Light Field Benchmark and the Stanford Lytro Archive, both of which contain controlled laboratory or indoor scenes captured with specific plenoptic hardware. Performance may degrade in scenes with diverse illumination, outdoor conditions, or non-standard sensor configurations, and conclusions about accuracy or efficiency may not generalize uniformly to all deployment contexts. We report this limitation explicitly in Section 6.1 and encourage evaluation on broader, more demographically and geographically diverse scene sets as the field matures.

- Environmental cost. Although DSER is roughly 17× faster than exhaustive plane sweeping (Appendix K) and, unlike deep learning baselines, requires no large-scale model training, iterative spectral refinement and cost-volume construction remain computationally non-trivial. For large-scale or continuous-deployment scenarios, the aggregate energy consumption of inference should be weighed against the application benefit.

Data and model transparency

Summary

Appendix A. Theoretical Justification

Appendix B. Light Field Geometry and the Epipolar Constraint

Appendix B.1. The Two-Plane Parameterisation

Appendix B.2. The Epipolar Disparity Constraint

Appendix C. Spectral Epipolar Representation

Appendix C.1. Frequency-Domain Formulation

Appendix C.2. Spectral Regularisation as a Frequency-Consistent Prior

Appendix D. Least Squares Gradient Estimation

Appendix D.1. Derivation of the Closed-Form Estimator

Appendix D.2. Bias-Variance Analysis

Appendix D.3. Overall Pipeline

Algorithm A1. Light Field Depth Estimation

Appendix E. Plane-Sweeping Cost Volume

Appendix E.1. Variance-Based Matching Cost

Appendix E.2. Statistical Efficiency of the Variance Cost

Appendix E.3. Complexity vs. Accuracy Trade-Off

Appendix F. Variational Energy Functional

Appendix F.1. Data and Smoothness Terms

Appendix F.2. Existence and Uniqueness

Appendix F.3. Anisotropic Smoothness and Edge Preservation

Appendix G. Confidence Estimation and Directed Random Walk

Appendix G.1. Edge Confidence

Appendix G.2. Colour-Density Score via Mean Shift

Appendix G.3. Directed Random Walk as Graph Regularisation

Appendix H. Multiscale Convergence Analysis

Appendix H.1. Pyramid Construction

Appendix H.2. Error Propagation Bound

Appendix I. Formal Connections Between PSNR, MSE, and Disparity Quality

Appendix I.1. Depth-Disparity Relationship

Appendix I.2. PSNR as a Reconstruction Fidelity Metric

Appendix I.3. Diminishing Returns of Depth Sampling

Appendix J. Computational Complexity Analysis

| Stage | Complexity | Dominant cost |
|---|---|---|
| Preprocessing | | Normalisation / warping |
| LSG estimation | | Gradient products |
| EPI extraction | | Slice selection |
| Spectral analysis | | 2D FFT per EPI |
| Plane sweeping | | View warping |
| EPI refinement | | Angular fusion |
| Confidence map | | KDE / mean shift |
| DRW propagation | | Sparse linear solve |
| Multiscale (K levels) | | Pyramid operations |
| Total DSER | | Plane sweeping stage |
| LSG only | | |
| Plane Sweep | | All stages |
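The table lists view warping as the dominant cost of plane sweeping, and Appendix E names a variance-based matching cost. A minimal 1D sketch of that idea on one EPI follows. It is an illustration under stated assumptions, not the paper's implementation: `sweep_variance_cost` is a hypothetical name, warping is done with linear interpolation (`np.interp`), and the candidate range and border crop are arbitrary.

```python
import numpy as np

def sweep_variance_cost(epi: np.ndarray, u: np.ndarray, candidates: np.ndarray) -> np.ndarray:
    """Return cost[k, x]: variance across views after shearing by candidates[k].

    epi is indexed epi[u, x]; u holds the angular coordinate of each row.
    """
    n_views, width = epi.shape
    x = np.arange(width, dtype=float)
    cost = np.empty((len(candidates), width))
    for k, d in enumerate(candidates):
        # Sample each view at x + d*u so a point with disparity d lines up across views.
        warped = np.stack([np.interp(x + d * ui, x, row) for ui, row in zip(u, epi)])
        cost[k] = warped.var(axis=0)
    return cost

d_true = 0.5
u = np.arange(-4, 5, dtype=float)                 # 9 views
x = np.arange(64, dtype=float)
epi = np.sin(0.2 * (x[None, :] - d_true * u[:, None]))
candidates = np.linspace(-1.0, 1.5, 11)           # 11 candidate disparities, step 0.25
cost = sweep_variance_cost(epi, u, candidates)
# Ignore an 8-pixel border, where np.interp clamps out-of-range samples.
best = candidates[np.argmin(cost[:, 8:-8], axis=0)]
print(np.unique(best))
```

Each candidate requires warping every view, so the work grows with the number of candidates times the number of views, which is consistent with plane sweeping dominating the total cost.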

Appendix K. Summary of Theoretical Contributions

| Component | Theoretical basis | Key result | Implication |
|---|---|---|---|
| LSG estimator | Linearised epipolar constraint | Thm. A2: closed-form solution | Fast, sub-pixel initialisation |
| LSG failure mode | Structure tensor analysis | Prop. A4: ill-conditioning | Motivates plane sweeping fallback |
| Spectral EPI prior | Fourier analysis of EPIs | Thm. A1: line support locus | Frequency-consistent regularisation |
| Angular consistency | Parseval equivalence | Prop. A2 | Spectral ≡ spatial consistency |
| Plane sweeping cost | Variance under Lambertian model | Thm. A3: unique minimum | Statistically consistent matching |
| CRLB efficiency | Fisher information | Prop. A5: asymptotic efficiency | Optimal in noise |
| Variational energy | Convex analysis | Thm. A4: well-posedness | Guaranteed solution existence |
| Edge preservation | Anisotropic weights | Eq. (A20) | Discontinuity-respecting smoothing |
| DRW propagation | GMRF / graph Laplacian | Thm. A6: MAP equivalence | Edge-aligned depth propagation |
| Multiscale pyramid | Lipschitz contraction | Thm. A7: error bound | Geometric convergence guarantee |
| Depth sampling | Quantisation theory | Prop. A9: cubic decay | Justifies 11-plane design choice |
| PSNR metric | Log-MSE relationship | Prop. A8: monotonicity | Valid fidelity proxy |
| Runtime advantage | Selective plane sweeping | Prop. A10 | ∼17× speedup over full sweep |
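The "line support locus" entry can be made concrete with a toy example. For an EPI of the form `E[u, x] = f(x - d * u)`, the 2D spectrum is concentrated on the line `f_u = -d * f_x`, so the position of a spectral peak determines `d`. The sketch below assumes a single-frequency texture whose frequencies fall exactly on the DFT grid; `spectral_disparity` and all sizes are illustrative, and this is not the paper's regulariser.

```python
import numpy as np

def spectral_disparity(epi: np.ndarray) -> float:
    """Estimate one disparity from the dominant 2D-FFT peak of epi[u, x]."""
    spectrum = np.abs(np.fft.fft2(epi))
    spectrum[0, 0] = 0.0                      # ignore the DC term
    iu, ix = np.unravel_index(np.argmax(spectrum), spectrum.shape)
    f_u = np.fft.fftfreq(epi.shape[0])[iu]    # cycles per view
    f_x = np.fft.fftfreq(epi.shape[1])[ix]    # cycles per pixel
    if f_x == 0.0:                            # no spatial variation at the peak
        return float("nan")
    return float(-f_u / f_x)                  # slope of the support line

n_u, n_x, k, d_true = 16, 64, 8, 0.5
u = np.arange(n_u)[:, None]
x = np.arange(n_x)[None, :]
epi = np.cos(2 * np.pi * k * (x - d_true * u) / n_x)
d_est = spectral_disparity(epi)
print(f"estimated disparity: {d_est:.3f}")
```

Real EPIs are broadband and contain several disparities, so their energy spreads along one or more such lines rather than sitting in a single peak. A frequency-consistent prior would penalise energy away from the expected line instead of reading off one maximum.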

References

1. Leistner, T.; Mackowiak, R.; Ardizzone, L.; Köthe, U.; Rother, C. Towards Multimodal Depth Estimation from Light Fields. 2022, arXiv:2203.16542.

2. Jin, J.; Hou, J. Occlusion-aware Unsupervised Learning of Depth from 4-D Light Fields. 2021.

3. Lahoud, J.; Ghanem, B.; Pollefeys, M.; Oswald, M.R. 3D Instance Segmentation via Multitask Metric Learning. 2019, arXiv:1906.08650.

4. Anisimov, Y.; Wasenmüller, O.; Stricker, D. Rapid Light Field Depth Estimation with Semi-Global Matching. 2019, arXiv:1907.13449.

5. Petrovai, A.; Nedevschi, S. MonoDVPS: A Self-Supervised Monocular Depth Estimation Approach to Depth-aware Video Panoptic Segmentation. 2022, arXiv:2210.07577.

6. Zhang, Z.; Chen, J. Light-field-depth-estimation Network Based on Epipolar Geometry and Image Segmentation. Journal of the Optical Society of America A 2020, 37, 1236–1244.

7. Gao, M.; Deng, H.; Xiang, S.; Wu, J.; He, Z. EPI Light Field Depth Estimation Based on a Directional Relationship Model and Multiview Point Attention Mechanism. Sensors 2022, 22, 6291.

8. Zhang, S.; et al. A Light Field Depth Estimation Algorithm Considering Blur Features and Prior Knowledge of Planar Geometric Structures. Applied Sciences 2025, 15, 1447.

9. Li, C.; Luo, Y.; Zhang, Z. Robust Light Field Depth Estimation Using Confidence Maps and Edge-aware Filtering. IEEE Access 2021, 9, 123456–123466.

10. Schröppel, P.; Bechtold, J.; Amiranashvili, A.; Brox, T. A Benchmark and a Baseline for Robust Multi-view Depth Estimation. 2022.

11. Lin, F.Y.; Cheng, W.; Banh, L. Comparing the Robustness of Different Depth Map Algorithms. Technical report, Stanford University, 2019.

12. Kim, C.; Zimmer, H.; Pritch, Y.; Sorkine-Hornung, A.; Gross, M.; Sorkine, O. Scene Reconstruction from High Spatio-angular Resolution Light Fields. ACM Transactions on Graphics 2013, 32, 73:1–73:12.

13. Yucer, K.; Sorkine-Hornung, A.; Wang, O.; Sorkine-Hornung, O. Efficient 3D Object Segmentation from Densely Sampled Light Fields with Applications to 3D Reconstruction. ACM Transactions on Graphics 2016, 35, 22.

14. Anisimov, Y.; Stricker, D. Fast and Efficient Depth Map Estimation from Light Fields. In Proceedings of the International Conference on 3D Vision (3DV), 2017; pp. 337–346.

15. Zhang, H.; Wu, X.; Shen, Y. Efficient Light Field Depth Estimation via Stereo Matching and Geometric Constraints. Signal Processing: Image Communication 2020, 88, 115950.

16. Cheng, B.; et al. Panoptic-DeepLab: A Simple, Strong, and Fast Baseline for Bottom-up Panoptic Segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020; pp. 12475–12485.

17. Sohn, K.A.; Choi, J.Y.; Kim, H.J. Deep Light Field Depth Estimation Using Epipolar Plane Images and Attention Modules. Sensors 2022, 22, 557.

18. Wang, J.; Zhang, L.; Qiao, Y. Self-supervised Depth Estimation from Light Field Images Based on Multi-scale Feature Fusion. IEEE Access 2022, 10, 11064–11075.

19. Ma, L.; Li, W.; Wu, H. Unsupervised Depth Estimation of Light Fields with 3D Convolutional Neural Networks. IEEE Transactions on Multimedia 2020, 22, 1008–1020.

20. Chen, F.; Liu, Y.; Zhao, G. Deep Learning Based Light Field Depth Estimation: A Survey. IEEE Transactions on Neural Networks and Learning Systems 2022, 33, 734–748.

21. Jin, J.; Hou, J.; Dai, K. Unsupervised Light Field Depth Estimation with Occlusion Handling. IEEE Transactions on Image Processing 2021, 30, 5981–5994.

22. Li, H.; Fu, Y.; Wu, J. Learning Depth from Light Field Images Using Spatial-angular Consistency. IEEE Transactions on Circuits and Systems for Video Technology 2021, 31, 2540–2552.

23. Guo, F.; Wang, Y.; Liu, S. Light Field Depth Estimation via Graph Convolutional Networks. Pattern Recognition Letters 2021, 153, 59–65.

24. Zhang, Y.; Liu, X.; Wang, Y. Multi-view Light Field Depth Estimation with Attention-based Cost Aggregation. Neurocomputing 2022, 499, 52–63.

25. Liu, Q.; et al. End-to-end Light Field Depth Estimation with Hierarchical Feature Fusion. IEEE Transactions on Image Processing 2021, 30, 5249–5262.

26. Nasrollahi, M.; Moeslund, T.B. Super-resolution: A Comprehensive Survey. Machine Vision and Applications 2014, 25, 1423–1468.

27. Mannam, V.; Howard, S.; et al. Small Training Dataset Convolutional Neural Networks for Application-specific Super-resolution Microscopy. Journal of Biomedical Optics 2023, 28.

28. Liu, R.; Liu, Z.; Lu, J.; et al. Sparse-to-dense Coarse-to-fine Depth Estimation for Colonoscopy. Computers in Biology and Medicine 2023, 160, 106983.

29. R., A.; Sinha, N. SSEGEP: Small SEGment Emphasized Performance Evaluation Metric for Medical Image Segmentation. 2021.

30. Cakir, S.; et al. Semantic Segmentation for Autonomous Driving: Model Evaluation, Dataset Generation, Perspective Comparison, and Real-Time Capability. 2022, arXiv:2207.12939.

31. de Silva, R.; Cielniak, G.; Gao, J. Towards Agricultural Autonomy: Crop Row Detection under Varying Field Conditions Using Deep Learning. 2021.

32. Kong, Y.; Liu, Y.; Huang, H.; Lin, C.W.; Yang, M.H. SSegDep: A Simple Yet Effective Baseline for Self-supervised Semantic Segmentation with Depth. 2023, arXiv:2308.12937.

| Method | Year | Type | Boxes PSNR (dB)↑ | Boxes Time (s)↓ | Dino PSNR (dB)↑ | Dino Time (s)↓ | Cotton PSNR (dB)↑ | Cotton Time (s)↓ | Avg. PSNR (dB)↑ |
|---|---|---|---|---|---|---|---|---|---|
| Classical — Gradient & Local Methods | | | | | | | | | |
| Kim et al. [12] | 2013 | Grad. | 19.84 | — | 22.11 | — | 16.50 | — | 19.48 |
| Anisimov et al. [14] | 2017 | Grad. | 21.30 | 12.4 | 25.40 | 11.9 | 18.10 | 13.2 | 21.60 |
| LSG [14]★ | 2017 | Grad. | 23.11 | 19.9 | 28.65 | 19.4 | 20.33 | 21.8 | 24.03 |
| Classical — Plane Sweeping & Cost Volume | | | | | | | | | |
| Yucer et al. [13] | 2016 | Sweep | 28.40 | 280.0 | 30.20 | 261.0 | 22.70 | 294.0 | 27.10 |
| Zhang et al. [15] | 2020 | Sweep | 33.10 | 310.0 | 32.80 | 298.0 | 24.60 | 321.0 | 30.17 |
| Plane Sweeping (baseline)★ | — | Sweep | 36.53 | 349.1 | 35.02 | 322.8 | 27.34 | 362.0 | 32.96 |
| EPI / Epipolar-Plane Image Methods | | | | | | | | | |
| Gao et al. [7] | 2022 | EPI | 24.30 | 155.0 | 29.50 | 160.0 | 21.80 | 148.0 | 25.20 |
| Zhang et al. [8] | 2025 | EPI | 25.10 | 140.0 | 30.10 | 138.0 | 22.50 | 135.0 | 25.90 |
| EPI1 (baseline)★ | — | EPI | 25.57 | 191.3 | 30.71 | 194.3 | 20.64 | 185.8 | 25.64 |
| Learning-Based Methods | | | | | | | | | |
| Jin et al. [21] | 2021 | CNN | 27.80 | — | 31.50 | — | 23.40 | — | 27.57 |
| Li et al. [22] | 2021 | CNN | 29.40 | — | 32.10 | — | 24.80 | — | 28.77 |
| Sohn et al. [17] | 2022 | Attn. | 30.20 | — | 33.10 | — | 25.10 | — | 29.47 |
| Wang et al. [18] | 2022 | CNN | 31.50 | — | 33.60 | — | 25.60 | — | 30.23 |
| Liu et al. [25] | 2021 | CNN | 32.80 | — | 34.20 | — | 26.10 | — | 31.03 |
| Zhang et al. [24] | 2022 | Attn. | 33.70 | — | 34.50 | — | 26.40 | — | 31.53 |
| Hybrid Spectral-Epipolar Methods (Proposed) | | | | | | | | | |
| DSER (Ours) — EPI-FCR Level 0 | 2025 | Hybrid | 25.57 | 191.3 | 30.71 | 194.3 | 20.64 | 185.8 | 25.64 |
| DSER (Ours) — EPI2 Final | 2025 | Hybrid | 26.30 | 20.0 | 32.96 | 19.8 | 26.86 | 21.0 | 28.71 |
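The roughly 17× speedup quoted in Appendix K (Prop. A10) can be checked against the runtimes in this table. The short calculation below uses only the per-scene times of the "Plane Sweeping (baseline)" row and the final DSER (EPI2) row. The variable names are illustrative.

```python
# Runtimes in seconds, copied from the "Plane Sweeping (baseline)" row and
# the final DSER (EPI2) row of the table above.
sweep_s = {"Boxes": 349.1, "Dino": 322.8, "Cotton": 362.0}
dser_s = {"Boxes": 20.0, "Dino": 19.8, "Cotton": 21.0}

speedup = {scene: sweep_s[scene] / dser_s[scene] for scene in sweep_s}
mean_speedup = sum(speedup.values()) / len(speedup)

for scene, s in speedup.items():
    print(f"{scene}: {s:.1f}x")
print(f"mean: {mean_speedup:.1f}x")
```

The per-scene ratios fall between about 16× and 17.5×, with a mean close to 17×. The per-scene runtime table further down lists 172.90 s for EPI2 on Boxes, which does not match the 20.0 s used here. The figures above follow this table.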

| Algorithm | Boxes | Dino | Cotton |
|---|---|---|---|
| LSG | 22.11 | 26.65 | 19.33 |
| Plane Sweeping | 26.53 | 33.02 | 25.34 |
| EPI-FCR (Lvl 0) | 25.47 | 30.61 | 20.74 |
| EPI-FCR (Final) | 26.30 | 32.96 | 26.86 |

| Algorithm | Boxes PSNR (dB)↑ | Boxes Time (s)↓ | Dino PSNR (dB)↑ | Dino Time (s)↓ | Cotton PSNR (dB)↑ | Cotton Time (s)↓ |
|---|---|---|---|---|---|---|
| LSG | 23.11 | 19.95 | 28.65 | 19.44 | 20.33 | 21.76 |
| Plane Sweeping | 36.53 | 349.14 | 35.02 | 322.79 | 27.34 | 362.01 |
| EPI1 | 25.57 | 191.29 | 30.71 | 194.33 | 20.64 | 185.84 |
| EPI2 (Ours) | 26.30 | 172.90 | 32.96 | 19.77 | 26.86 | 20.95 |

| Algorithm | PSNR (dB)↑ | Runtime (s)↓ |
|---|---|---|
| LSG | 22–27 (moderate) | ≈19 (fastest) |
| Plane Sweeping | ≈33 (highest) | ≈350 (slowest) |
| EPI1 | ≈30 (balanced) | ≈181 (medium) |
| EPI2 (Ours) | ≈33 (near-optimal) | ≈20 (fast) |

| ID | Configuration | LSG Init. | Plane Sweep | Spectral EPI | DRW Prop. | Multiscale | Boxes PSNR (dB)↑ | Dino PSNR (dB)↑ | Cotton PSNR (dB)↑ | Avg. PSNR (dB)↑ | Avg. Time (s)↓ | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| A1 | LSG only | ✓ | ✗ | ✗ | ✗ | ✗ | 23.11 | 28.65 | 20.33 | 24.03 | 19.9 | — |
| A2 | + Plane Sweeping | ✓ | ✓ | ✗ | ✗ | ✗ | 25.57 | 30.71 | 20.64 | 25.64 | 191.3 | |
| A3 | + Spectral EPI Refine | ✓ | ✓ | ✓ | ✗ | ✗ | 25.88 | 31.46 | 22.71 | 26.68 | 199.4 | |
| A4 | + DRW Propagation | ✓ | ✓ | ✓ | ✓ | ✗ | 26.12 | 32.71 | 25.64 | 28.16 | 204.1 | |
| A5 | DSER / EPI2 (Full) | ✓ | ✓ | ✓ | ✓ | ✓ | 26.30 | 32.96 | 26.86 | 28.71 | 20.0 | |
| Ablation: remove one component from the full model | | | | | | | | | | | | |
| A5∖EPI | Full ∖ Spectral EPI | ✓ | ✓ | ✗ | ✓ | ✓ | 25.41 | 31.55 | 22.14 | 26.37 | 198.5 | |
| A5∖DRW | Full ∖ DRW | ✓ | ✓ | ✓ | ✗ | ✓ | 25.93 | 32.54 | 24.60 | 27.69 | 200.7 | |
| A5∖MS | Full ∖ Multiscale | ✓ | ✓ | ✓ | ✓ | ✗ | 26.10 | 32.78 | 26.52 | 28.47 | 196.3 | |

| Depth planes | Avg. PSNR (dB) ↑ | Avg. Time (s) ↓ | Avg. MSE ↓ |
|---|---|---|---|
| 3 | 22.14 | 13.2 | 0.0441 |
| 5 | 24.77 | 14.8 | 0.0312 |
| 7 | 26.93 | 16.1 | 0.0205 |
| 9 | 27.88 | 17.8 | 0.0145 |
| 11 | 28.71 | 20.0 | 0.0093 |
| 16 | 28.89 | 25.4 | 0.0089 |
| 24 | 29.01 | 34.7 | 0.0086 |
| 32 | 29.07 | 44.9 | 0.0084 |
| 64 | 29.12 | 82.3 | 0.0083 |
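The diminishing returns of depth sampling can be read directly off this table. The sketch below computes the PSNR gained per additional second of runtime between consecutive rows. It takes the first column to be the number of depth planes, an interpretation suggested by the 11-plane design choice mentioned in Appendix K. The values are copied from the table and the variable names are illustrative.

```python
# (depth planes, avg PSNR in dB, avg time in s), copied from the table above.
rows = [
    (3, 22.14, 13.2), (5, 24.77, 14.8), (7, 26.93, 16.1),
    (9, 27.88, 17.8), (11, 28.71, 20.0), (16, 28.89, 25.4),
    (24, 29.01, 34.7), (32, 29.07, 44.9), (64, 29.12, 82.3),
]

# Marginal PSNR gain per extra second between consecutive settings.
marginal = []
for (n0, p0, t0), (n1, p1, t1) in zip(rows, rows[1:]):
    marginal.append((n0, n1, (p1 - p0) / (t1 - t0)))

for n0, n1, gain in marginal:
    print(f"{n0:>2} -> {n1:>2} planes: {gain:.3f} dB/s")
```

The gain drops from about 0.38 dB/s between 9 and 11 planes to about 0.03 dB/s between 11 and 16 planes, and keeps shrinking after that. On these numbers, 11 planes sits at the knee of the accuracy-runtime curve.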

| Boxes (dB) | Dino (dB) | Cotton (dB) | Avg. (dB) | |
|---|---|---|---|---|
| 25.61 | 30.79 | 20.71 | 25.70 | |
| 25.74 | 31.02 | 21.43 | 26.06 | |
| 26.04 | 32.11 | 24.88 | 27.68 | |
| 26.30 | 32.96 | 26.86 | 28.71 | |
| 26.21 | 32.14 | 26.09 | 28.15 | |
| 25.82 | 32.47 | 24.84 | 27.71 | |
| 24.91 | 31.18 | 22.57 | 26.22 | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).