Submitted: 31 May 2025

Posted: 03 June 2025

Abstract

Keywords:

I. Introduction

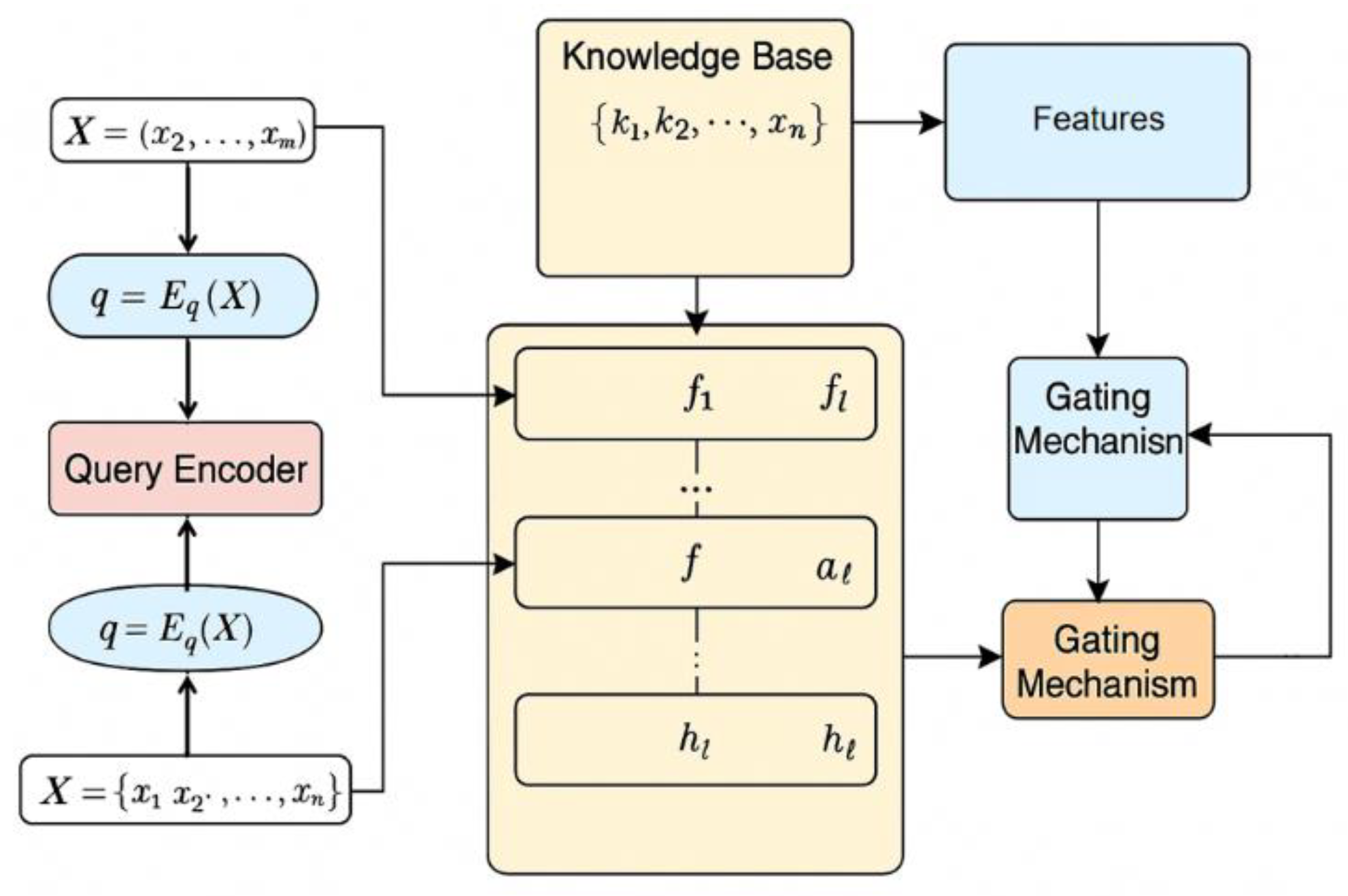

II. Method

III. Experiment

A. Datasets

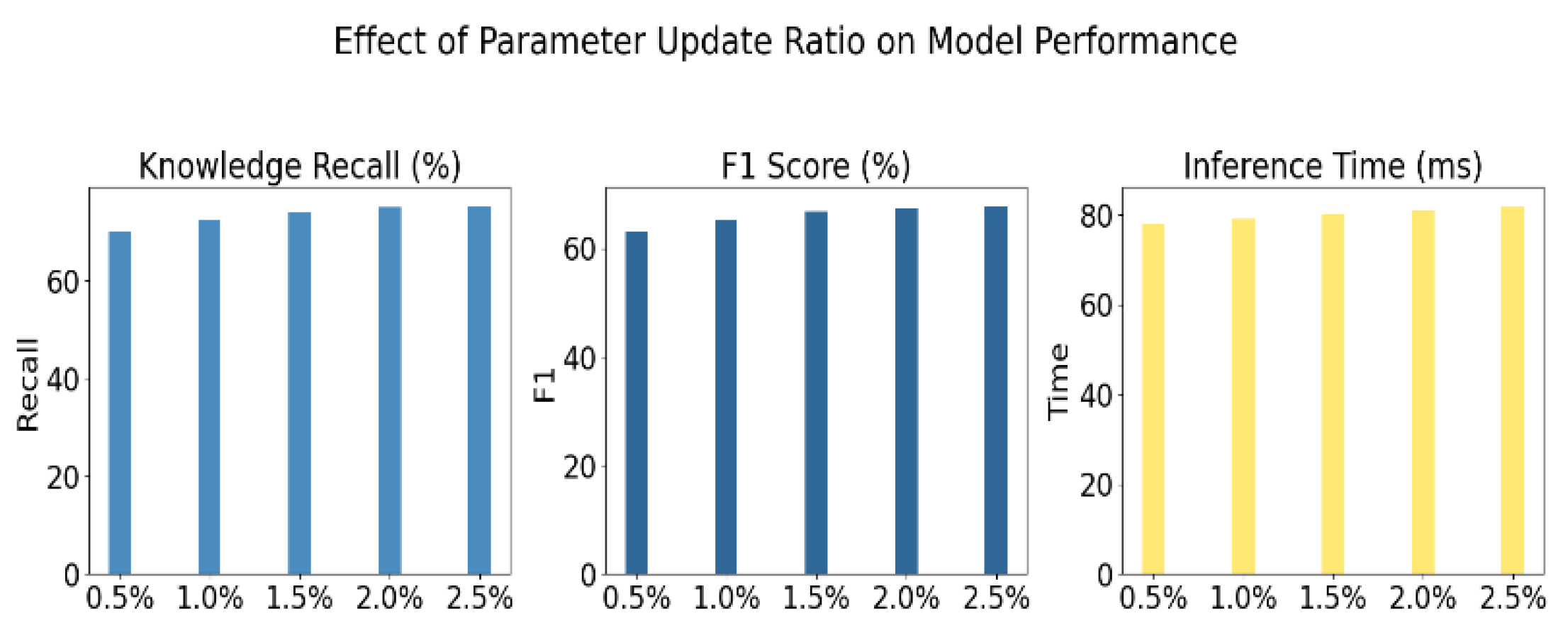

B. Experimental Results

| Method | Knowledge Recall (%) | F1 Score (%) | Inference Time (ms) |
| --- | --- | --- | --- |
| T5-base + Full Fine-tuning [20] | 68.4 | 55.1 | 82 |
| T5-base + Adapter Tuning [21] | 71.3 | 60.5 | 76 |
| T5-base + Prefix Tuning [22] | 73.6 | 63.2 | 79 |
| T5-base + LoRA [23] | 70.8 | 58.7 | 88 |
| T5-base + Ours | 75.4 | 67.9 | 81 |
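As a reading aid for the table above, the following minimal sketch computes the margin between the proposed method and the strongest baseline on each metric. The numbers are copied from the table; the dictionary layout, the short method labels, and the helper name `margin_over_best_baseline` are illustrative assumptions and are not part of the original work.

```python
# Values copied from the results table (all rows use T5-base);
# the dictionary layout and key names are illustrative only.
results = {
    "Full Fine-tuning": {"recall": 68.4, "f1": 55.1, "time_ms": 82},
    "Adapter Tuning": {"recall": 71.3, "f1": 60.5, "time_ms": 76},
    "Prefix Tuning": {"recall": 73.6, "f1": 63.2, "time_ms": 79},
    "LoRA": {"recall": 70.8, "f1": 58.7, "time_ms": 88},
    "Ours": {"recall": 75.4, "f1": 67.9, "time_ms": 81},
}


def margin_over_best_baseline(metric, higher_is_better=True):
    """Return (best baseline name, Ours minus that baseline) for one metric.

    Hypothetical helper: for time_ms a positive margin means Ours is slower.
    """
    baselines = {name: row[metric] for name, row in results.items() if name != "Ours"}
    pick = max if higher_is_better else min
    best_name = pick(baselines, key=baselines.get)
    return best_name, round(results["Ours"][metric] - baselines[best_name], 1)


recall_margin = margin_over_best_baseline("recall")
f1_margin = margin_over_best_baseline("f1")
time_margin = margin_over_best_baseline("time_ms", higher_is_better=False)

print("Knowledge Recall vs best baseline:", recall_margin)
print("F1 Score vs best baseline:", f1_margin)
print("Inference Time vs fastest baseline:", time_margin)
```

Under these figures, the proposed method leads Prefix Tuning by 1.8 points in knowledge recall and 4.7 points in F1, while running 5 ms slower than the fastest baseline, Adapter Tuning.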

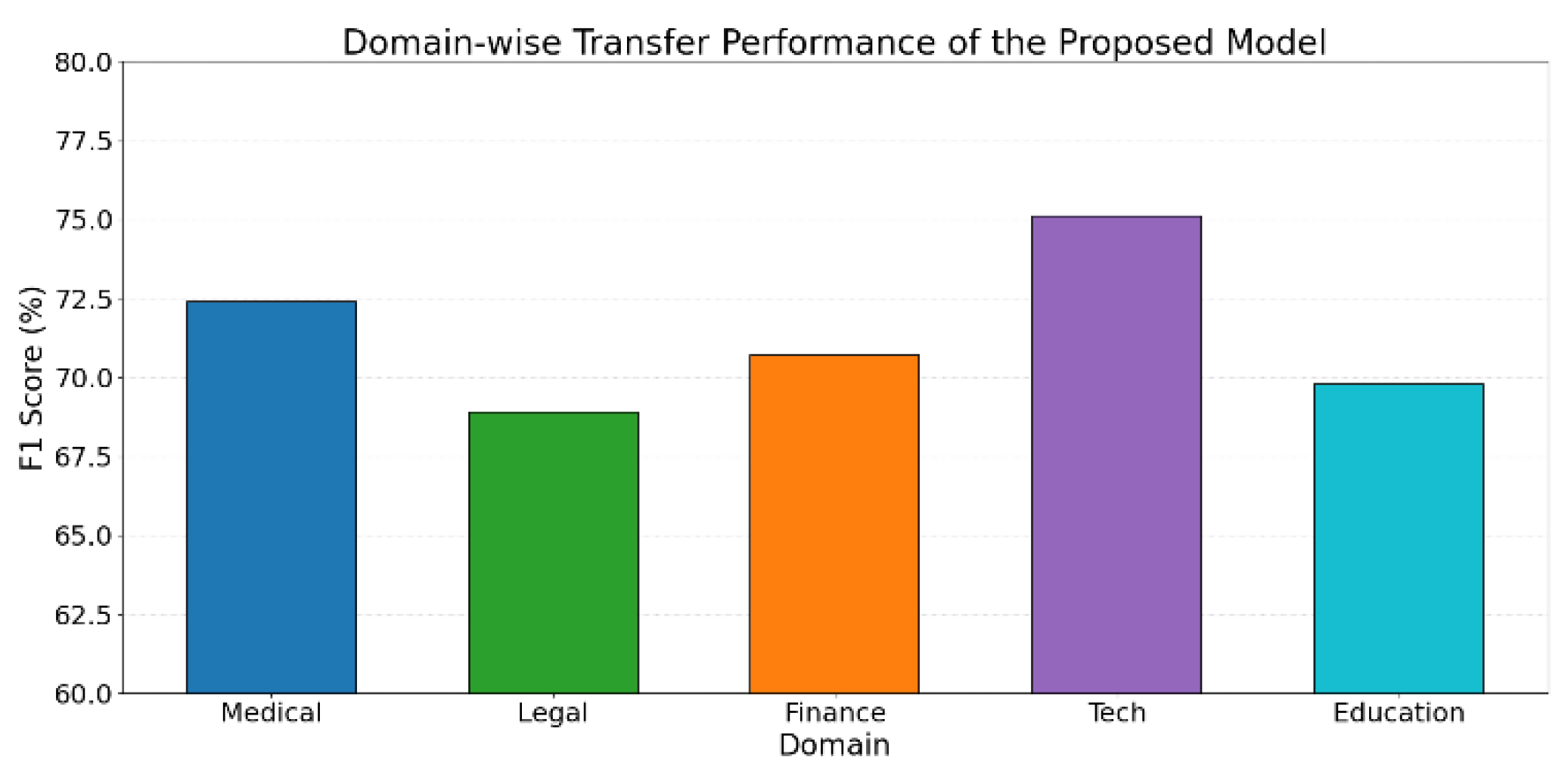

IV. Conclusion

References

- T. Susnjak et al., “Automating research synthesis with domain-specific large language model fine-tuning,” ACM Transactions on Knowledge Discovery from Data, vol. 19, no. 3, pp. 1–39, 2025.

- R. Pan et al., “LISA: layerwise importance sampling for memory-efficient large language model fine-tuning,” Advances in Neural Information Processing Systems, vol. 37, pp. 57018–57049, 2024.

- Y. Zhang, J. Liu, J. Wang, L. Dai, F. Guo and G. Cai, “Federated Learning for Cross-Domain Data Privacy: A Distributed Approach to Secure Collaboration,” arXiv preprint. 2025; arXiv:2504.00282.

- J. Zhan, “Single-Device Human Activity Recognition Based on Spatiotemporal Feature Learning Networks,” Transactions on Computational and Scientific Methods, vol. 5, no. 3, 2025.

- X. Du, “Financial Text Analysis Using 1D-CNN: Risk Classification and Auditing Support,” 2025.

- . Wang, R. Zhang, J. Du, R. Hao and J. Hu, “A Deep Learning Approach to Interface Color Quality Assessment in Human–Computer Interaction,” arXiv preprint. 2025; arXiv:2502.09914.

- Y. Duan, L. Yang, T. Zhang, Z. Song and F. Shao, “Automated User Interface Generation via Diffusion Models: Enhancing Personalization and Efficiency,” arXiv preprint. 2025; arXiv:2503.20229.

- W. Zhang et al., “Fine-tuning large language models for chemical text mining,” Chemical Science, vol. 15, no. 27, pp. 10600–10611, 2024.

- R. Zhang et al., “LLaMA-adapter: Efficient fine-tuning of large language models with zero-initialized attention,” in Proceedings of the Twelfth International Conference on Learning Representations, pp. 106–1115, 2024.

- B. Weng, “Navigating the landscape of large language models: A comprehensive review and analysis of paradigms and fine-tuning strategies,” arXiv preprint. 2024; arXiv:2404.09022.

- L. Xia et al., “Leveraging error-assisted fine-tuning of large language models for manufacturing excellence,” Robotics and Computer-Integrated Manufacturing, vol. 88, p. 102728, 2024.

- Y. Deng, “A Reinforcement Learning Approach to Traffic Scheduling in Complex Data Center Topologies,” Journal of Computer Technology and Software, vol. 4, no. 3, 2025.

- J. Liu, Y. Zhang, Y. Sheng, Y. Lou, H. Wang and B. Yang, “Context-Aware Rule Mining Using a Dynamic Transformer-Based Framework,” arXiv preprint. 2025; arXiv:2503.11125.

- X. Wang, “Medical Entity-Driven Analysis of Insurance Claims Using a Multimodal Transformer Model,” Journal of Computer Technology and Software, vol. 4, no. 3, 2025.

- Y. Deng, “A Hybrid Network Congestion Prediction Method Integrating Association Rules and Long Short-Term Memory for Enhanced Spatiotemporal Forecasting,” Transactions on Computational and Scientific Methods, vol. 5, no. 2, 2025.

- L. Wu, J. Gao, X. Liao, H. Zheng, J. Hu and R. Bao, “Adaptive Attention and Feature Embedding for Enhanced Entity Extraction Using an Improved BERT Model,” in Proceedings of the 2024 Fourth International Conference on Communication Technology and Information Technology, pp. 702–705, December 2024.

- Z. Yu, S. Wang, N. Jiang, W. Huang, X. Han and J. Du, “Improving Harmful Text Detection with Joint Retrieval and External Knowledge,” arXiv preprint. 2025; arXiv:2504.02310.

- G. Cai, J. Gong, J. Du, H. Liu and A. Kai, “Investigating Hierarchical Term Relationships in Large Language Models,” Journal of Computer Science and Software Applications, vol. 5, no. 4, 2025.

- J. Gong, Y. Wang, W. Xu and Y. Zhang, “A Deep Fusion Framework for Financial Fraud Detection and Early Warning Based on Large Language Models,” Journal of Computer Science and Software Applications, vol. 4, no. 8, 2024.

- K. Lv et al., “Full Parameter Fine-Tuning for Large Language Models with Limited Resources,” arXiv preprint. 2023; arXiv:2306.09782.

- R. He et al., “On the Effectiveness of Adapter-Based Tuning for Pretrained Language Model Adaptation,” arXiv preprint. 2021; arXiv:2106.03164.

- X. L. Li and P. Liang, “Prefix-Tuning: Optimizing Continuous Prompts for Generation,” arXiv preprint. 2021; arXiv:2101.00190.

- E. J. Hu et al., “LoRA: Low-Rank Adaptation of Large Language Models,” in Proceedings of the International Conference on Learning Representations, Article no. 3, 2022.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).