Submitted: 22 May 2025

Posted: 23 May 2025

Abstract

Keywords:

I. Introduction

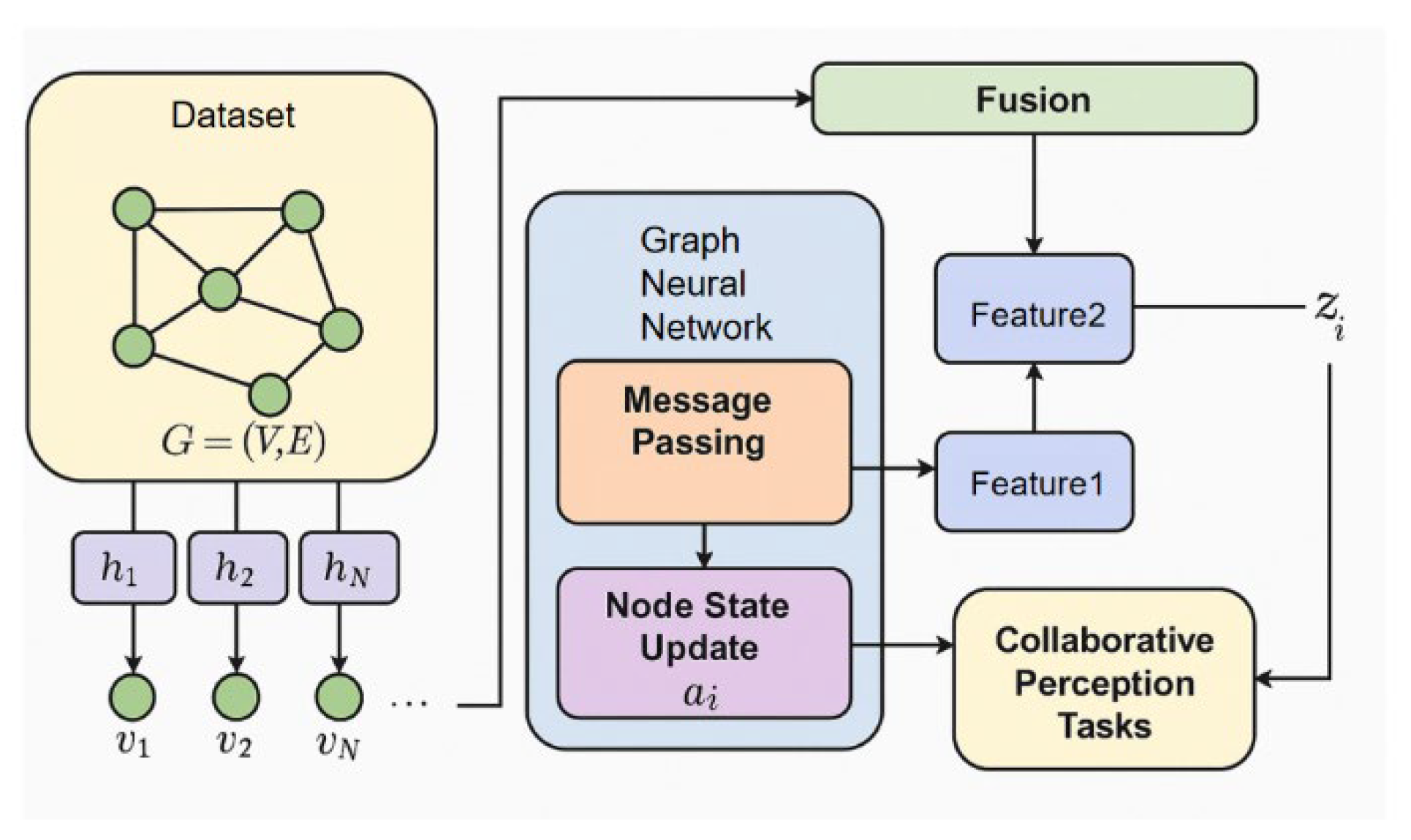

II. Background and Fundamentals

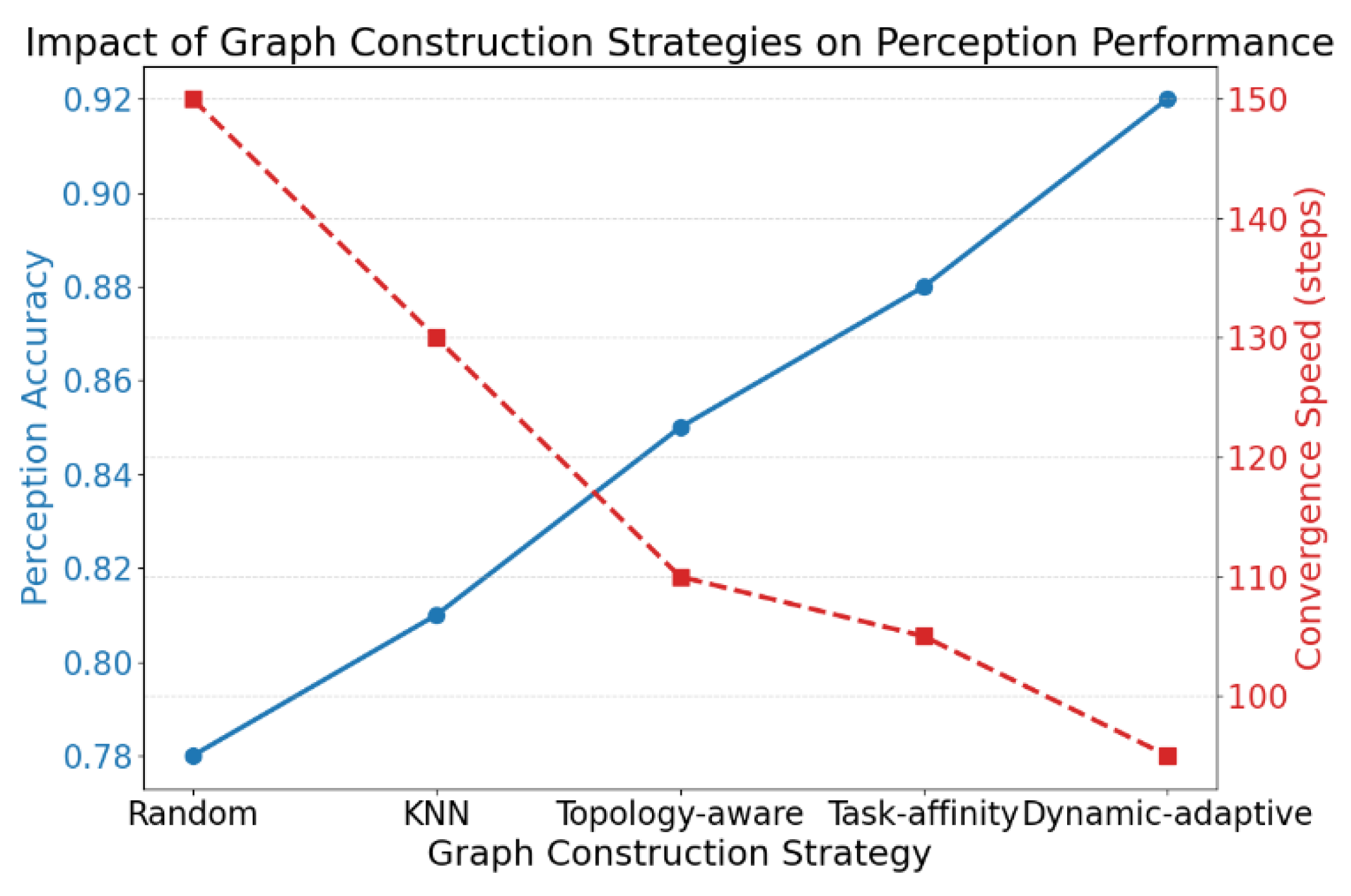

III. Frameworks

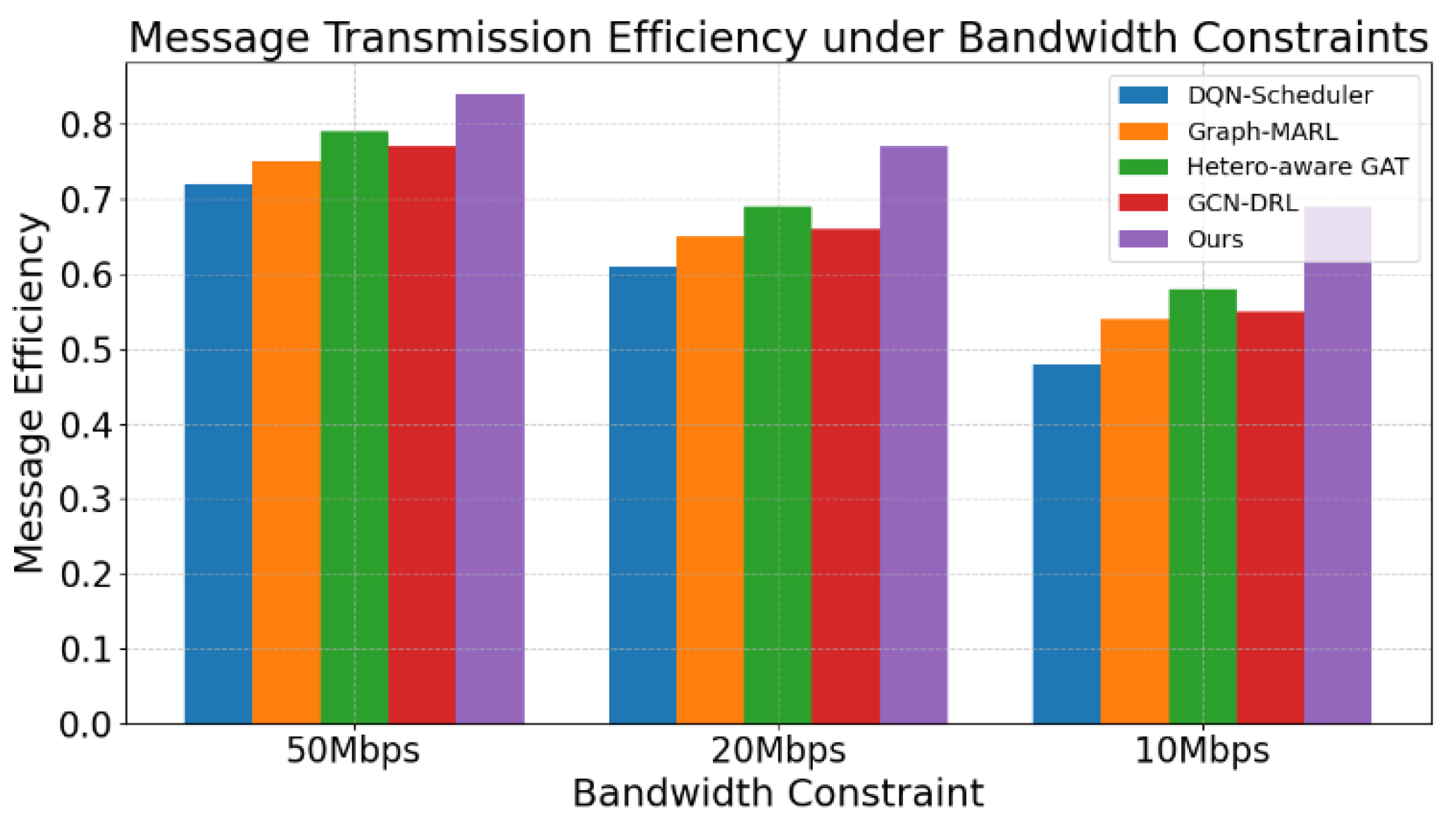

IV. Experiment

A. Datasets

B. Experimental Results

V. Conclusion

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).