Submitted: 20 May 2025

Posted: 21 May 2025

Abstract

Keywords:

1. Introduction

- The MAML technique is designed to enable anomaly detection in 14 different scenarios (highway, shop, front door, office, parking lot, pedestrian street, street highview, warehouse, road, park, mall, train, restaurant, and sidewalk) using a small number of normal and anomalous samples, for settings where data collection is limited.

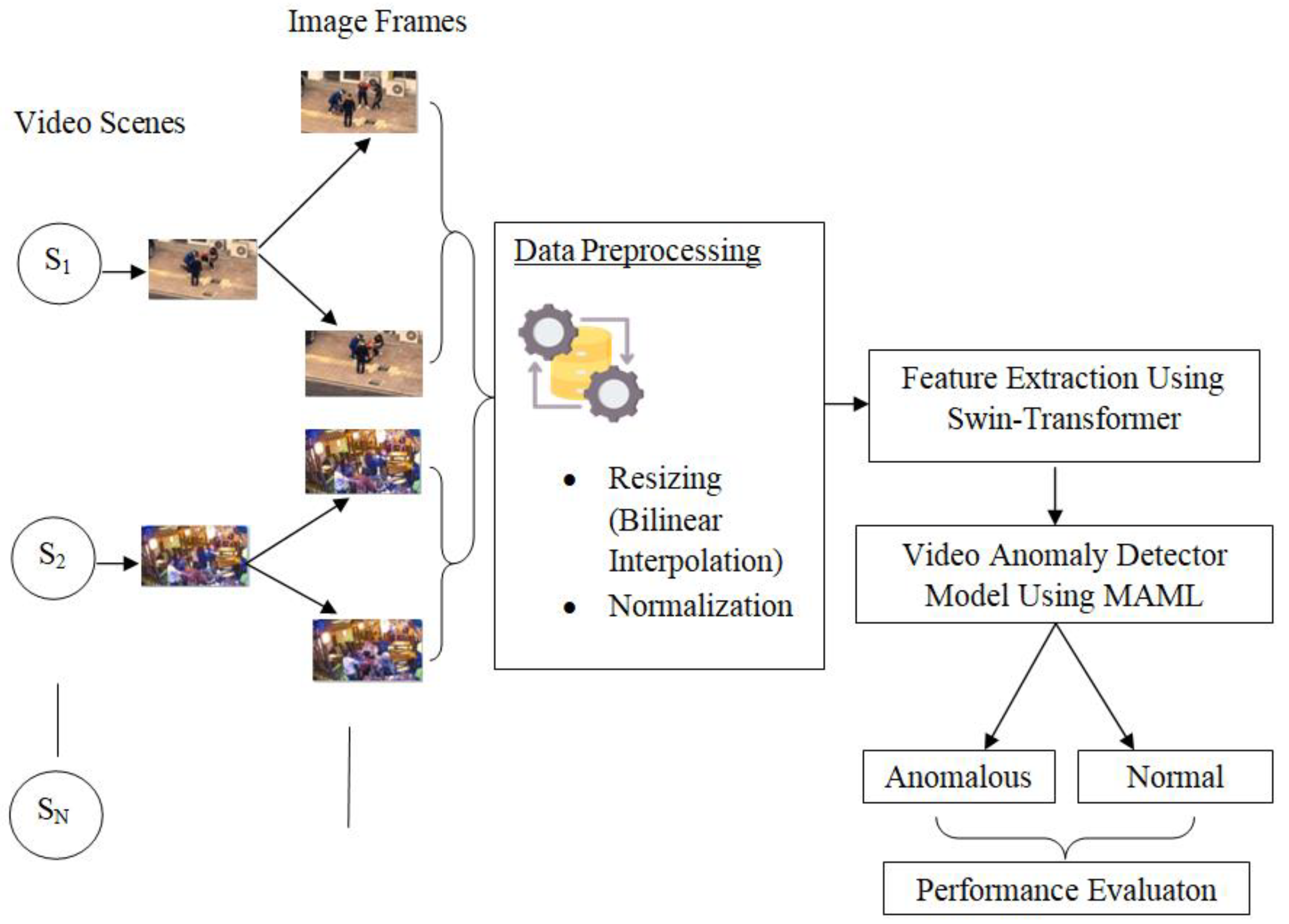

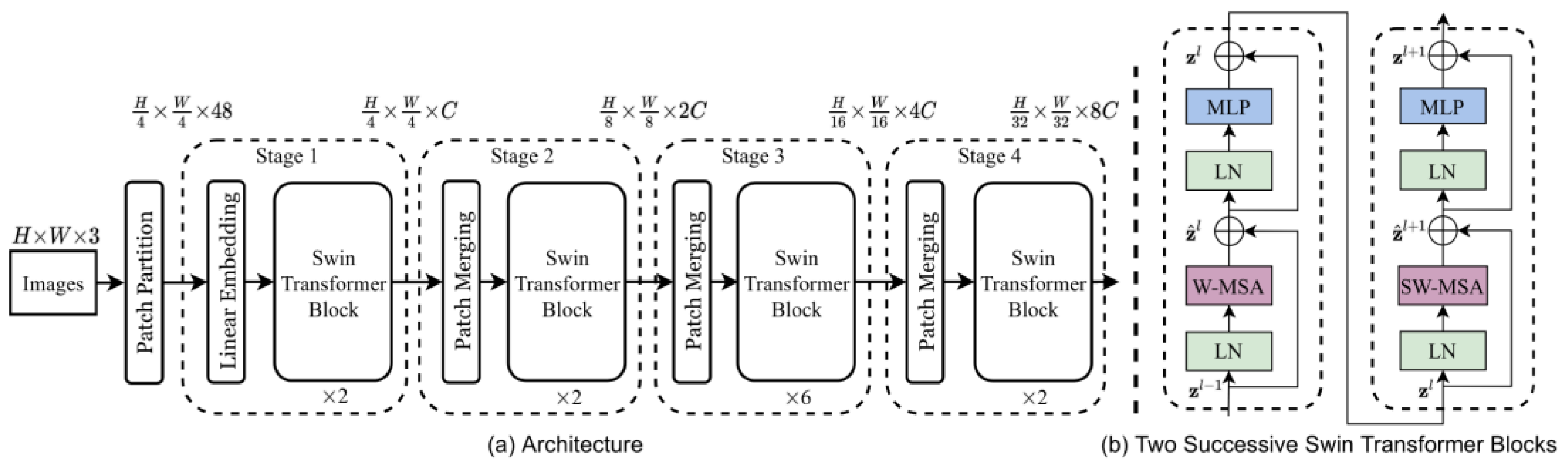

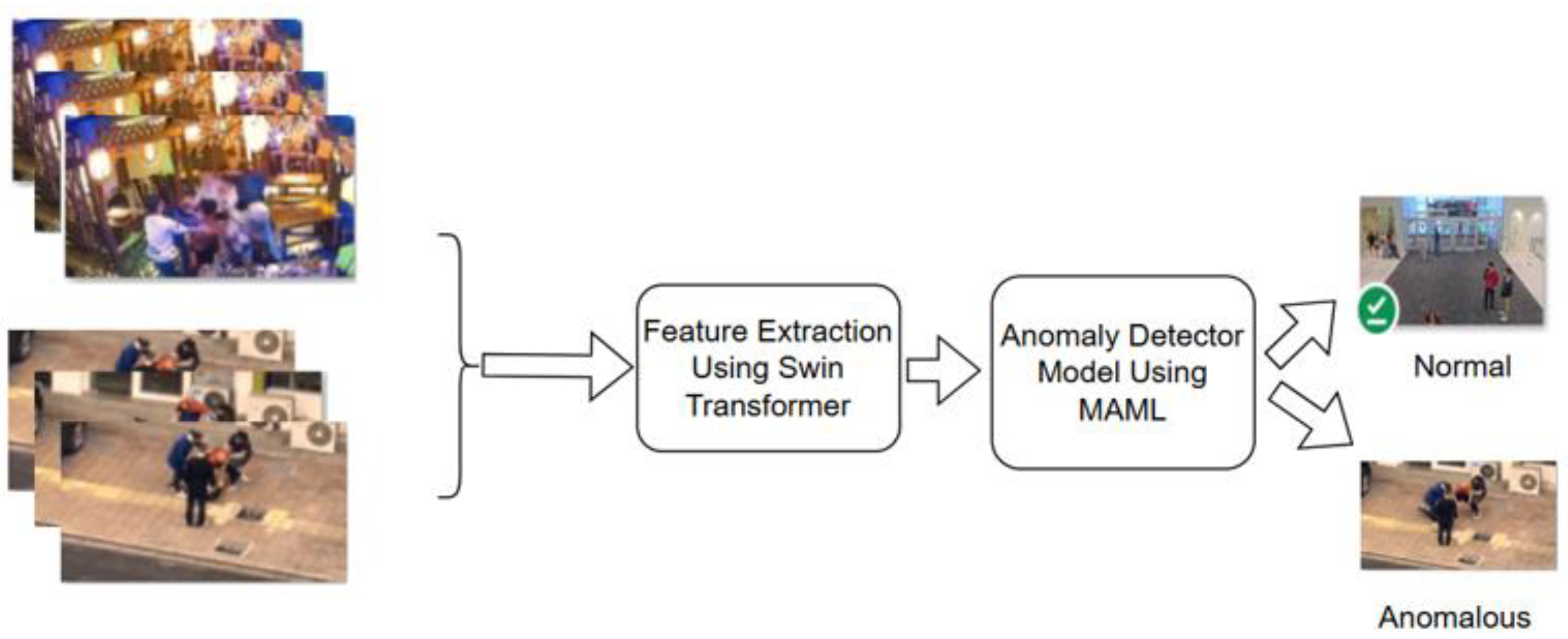

- Our method leverages metadata and Swin Transformers to extract spatial features from the video frames of different target scenes, thereby enhancing adaptability to different scenarios.

- By training anomaly detector models with MAML to distinguish normal from abnormal data, the method achieves better anomaly detection accuracy across different scenarios when only limited data is available from the target domain.

2. Related Works

3. Methodology

3.1. Dataset

3.2. Data Preprocessing

3.3. Data Preprocessing

3.4. Data Preprocessing

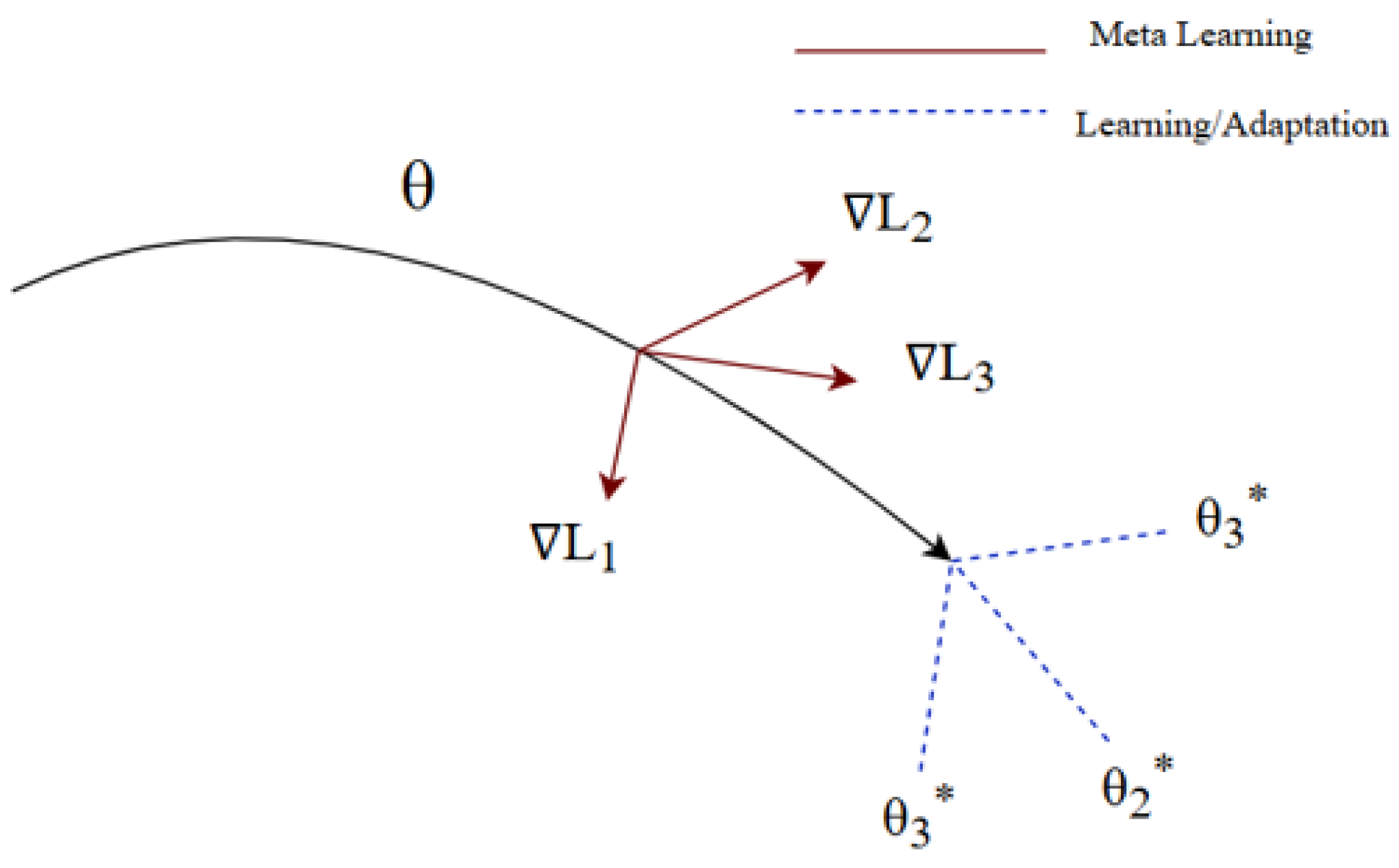

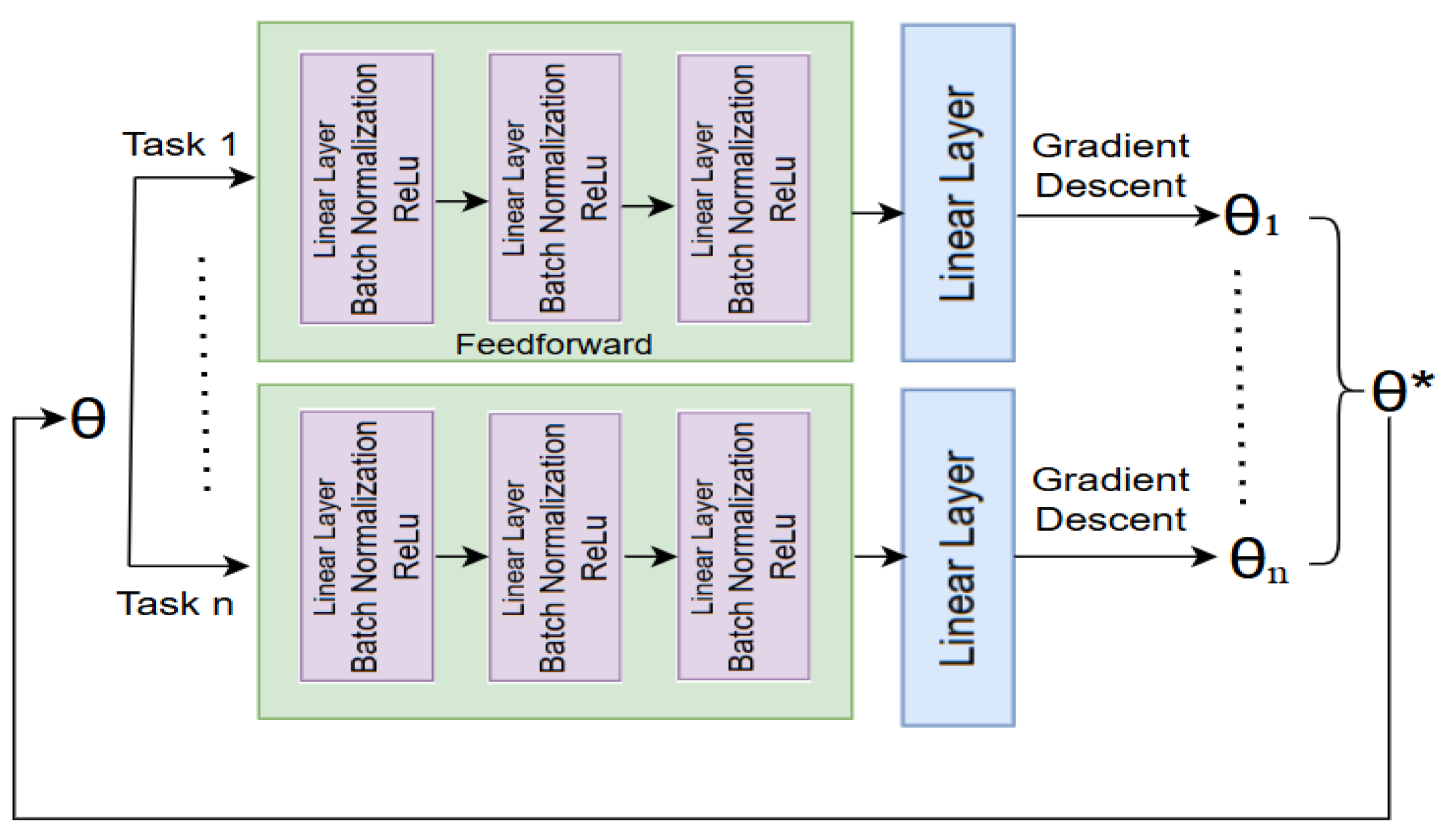

3.4.1. Meta Training on Dataset

- Task Sampling: The task-sampling method generated a dataset sample for one anomaly detection task. It first selected a random scenario from a predefined list. Within that scenario, it identified normal and anomalous images by intersecting the scenario-specific indices with the label-specific indices. It then randomly selected a fixed number (k_shot + k_query) of normal samples and the same number of anomalous samples. The selected images were split into two sets: the support set, consisting of k_shot normal and k_shot anomalous images for training, and the query set, containing k_query normal and k_query anomalous images for evaluation. Each image was assigned its label, either normal or anomalous. To avoid ordering bias, the method shuffled both the support and query sets before returning them. The result was a balanced, structured task suitable for meta-learning, in particular for detecting anomalies within a specific scenario.

- Inner Loop: The method began by creating a temporary copy of the model so that updates did not affect the original model. The copied model was placed in training mode, and a binary cross-entropy-with-logits loss was defined for classification. A series of inner-loop updates was then performed for the configured number of inner steps. In each iteration, the method made predictions on the support set, calculated the loss, and computed gradients with respect to the model’s parameters. Instead of using an optimizer, it updated each parameter manually with a stochastic gradient descent (SGD) step, subtracting the gradient scaled by the inner learning rate. The adapted model, now fine-tuned to the support set, was returned. The purpose of this method was to let the model adapt quickly to a new task from only a few examples. The approach maintained the computational graph so that the outer update could backpropagate through the inner adaptation steps.

- Outer Loop: The meta-update function implemented one meta-learning step of the Model-Agnostic Meta-Learning (MAML) approach. It processed multiple tasks, each consisting of a support set (used for inner-loop adaptation) and a query set (used for evaluation). First, it initialized the loss function, the optimizer, and storage for query labels and predictions. It then iterated over the tasks, moving the data to the appropriate device and performing an inner-loop update in which the model adapted to the task using the support set. After adaptation, the model made predictions on the query set, and the binary cross-entropy loss was computed. The predicted probabilities and true query labels were stored for later analysis. The meta-loss was averaged across tasks, and the outer-loop optimization was performed by backpropagating this loss. Finally, the main model’s weights were updated using the adapted model, and the function returned the final loss together with the stored query labels and predictions. This process helped the model learn a generalizable initialization that can adapt quickly to new tasks with minimal updates.

- Meta Validate: The meta-learning model was then evaluated by iterating over a set of tasks, each containing a support set for fine-tuning and a query set for evaluation. The function first initialized the total loss and prepared storage for true labels and predictions. As it looped through the tasks, it moved the data to the appropriate device (CPU or GPU) and fine-tuned a copy of the model on the support set using the inner-loop update function. After adaptation, it made predictions on the query set, applied a sigmoid activation to convert logits into probabilities, and stored both the true and predicted labels. It computed the binary cross-entropy loss for each task, accumulated the losses, and averaged them over all tasks. Finally, it invoked an early-stopping mechanism, which decided from the meta-loss trend whether the model should be saved or training should halt. The function returned the averaged loss, the true query labels, the predicted labels, and the early-stopping decision.
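
The task-sampling step described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `sample_task`, the parallel lists `scenarios` and `labels`, the label encoding (0 = normal, 1 = anomalous), and the `rng` argument are all hypothetical, and images are represented only by their indices.

```python
import random


def sample_task(scenarios, labels, scenario_list, k_shot, k_query, rng=random):
    """Sample one balanced few-shot task from a single random scenario.

    scenarios[i] and labels[i] describe image i (assumed: 0 = normal, 1 = anomalous).
    Returns the chosen scenario plus shuffled support and query lists of
    (image_index, label) pairs.
    """
    scenario = rng.choice(scenario_list)

    # Cross-reference scenario membership with the label of each image.
    in_scenario = [i for i, s in enumerate(scenarios) if s == scenario]
    normal = [i for i in in_scenario if labels[i] == 0]
    anomalous = [i for i in in_scenario if labels[i] == 1]

    # Draw k_shot + k_query distinct images per class.
    need = k_shot + k_query
    normal_pick = rng.sample(normal, need)
    anomalous_pick = rng.sample(anomalous, need)

    # First k_shot of each class go to the support set, the rest to the query set.
    support = [(i, 0) for i in normal_pick[:k_shot]]
    support += [(i, 1) for i in anomalous_pick[:k_shot]]
    query = [(i, 0) for i in normal_pick[k_shot:]]
    query += [(i, 1) for i in anomalous_pick[k_shot:]]

    # Shuffle so that class order carries no information.
    rng.shuffle(support)
    rng.shuffle(query)
    return scenario, support, query


# Toy usage: 20 image indices across two scenarios, alternating labels.
scenarios = ["office"] * 10 + ["mall"] * 10
labels = [i % 2 for i in range(20)]
scenario, support, query = sample_task(
    scenarios, labels, ["office", "mall"], k_shot=2, k_query=1
)
```

With k_shot = 2 and k_query = 1, each call returns a support set of four labeled indices and a query set of two, all drawn from the same scenario. `rng.sample` raises a `ValueError` if a scenario has fewer than k_shot + k_query images of either class, which a real pipeline would need to handle.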

3.4.2. Meta Testing on Dataset

3.5. Data Preprocessing

3.5.1. Dataset Meta Training Phase

3.5.2. Meta Testing Phase

3.5.3. Loss Function for Anomaly Detection

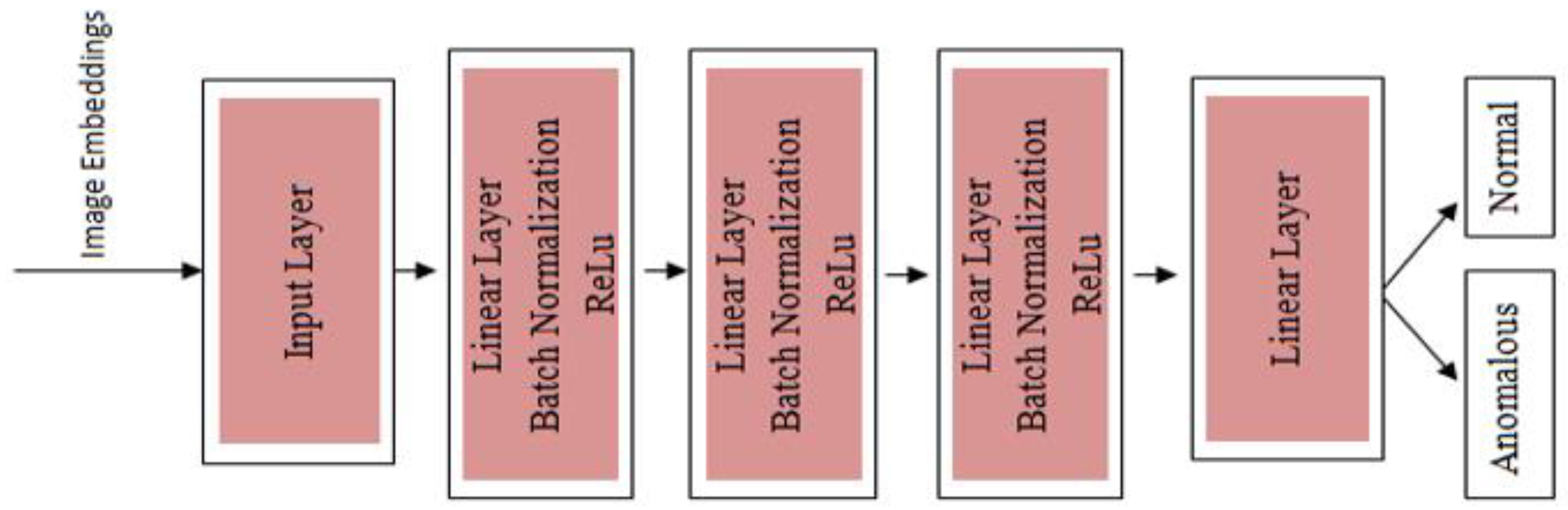
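
Section 3.4.1 names a binary cross-entropy-with-logits loss for both the inner and outer loops. Assuming that is the loss this section refers to, its standard per-sample definition for a logit z and a label y ∈ {0, 1} (1 = anomalous is an assumed encoding) is given below, together with the algebraically equivalent form that is usually evaluated in practice because it avoids overflow for large |z|.

```latex
\ell(z, y) = -\Big[\, y \log \sigma(z) + (1 - y) \log\big(1 - \sigma(z)\big) \Big],
\qquad \sigma(z) = \frac{1}{1 + e^{-z}}

\ell(z, y) = \max(z, 0) - z\,y + \log\!\big(1 + e^{-|z|}\big)
```

The task loss is the mean of this quantity over the samples in the support set (inner loop) or the query set (outer loop).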

3.5.4. Model Agnostic Meta Learning Architecture

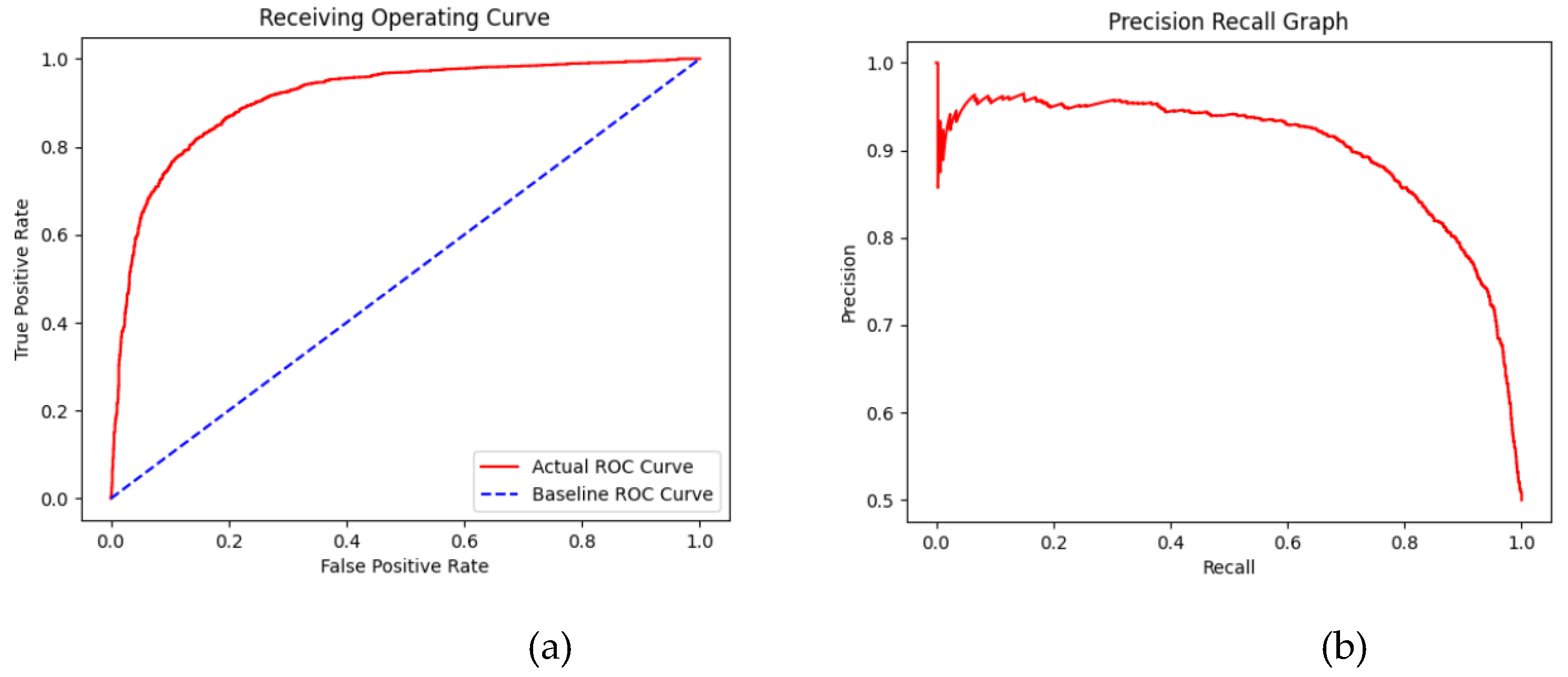
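
The inner and outer loops described in Section 3.4.1 can be illustrated with a small, dependency-free sketch. It is not the authors' implementation and makes several simplifying assumptions: a linear classifier stands in for the Swin-based model, the outer step is plain gradient descent rather than Adam, and the meta-gradient uses the first-order approximation (the query-set gradient at the adapted parameters) instead of backpropagating through the inner steps as the text describes. The names `loss_and_grad`, `inner_update`, and `meta_update` and the toy tasks are hypothetical; the learning rates 0.1 and 0.01 are the inner and outer rates reported for the model.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def loss_and_grad(params, xs, ys):
    """Mean BCE-with-logits loss and its gradient for a linear classifier."""
    w, b = params
    n = len(xs)
    loss, grad_w, grad_b = 0.0, [0.0] * len(w), 0.0
    for x, y in zip(xs, ys):
        z = sum(wi * xi for wi, xi in zip(w, x)) + b
        loss += (max(z, 0.0) - z * y + math.log1p(math.exp(-abs(z)))) / n
        err = sigmoid(z) - y  # derivative of the loss with respect to the logit
        grad_w = [g + err * xi / n for g, xi in zip(grad_w, x)]
        grad_b += err / n
    return loss, (grad_w, grad_b)


def inner_update(params, support, inner_lr, inner_steps):
    """Adapt a copy of the parameters to the support set with manual SGD."""
    w, b = list(params[0]), params[1]  # copy, so the meta-parameters stay untouched
    xs, ys = support
    for _ in range(inner_steps):
        _, (gw, gb) = loss_and_grad((w, b), xs, ys)
        w = [wi - inner_lr * g for wi, g in zip(w, gw)]
        b -= inner_lr * gb
    return w, b


def meta_update(params, tasks, inner_lr, outer_lr, inner_steps):
    """One outer step: average the query gradients of the adapted models."""
    w, b = params
    meta_loss, meta_gw, meta_gb = 0.0, [0.0] * len(w), 0.0
    for support, query in tasks:
        adapted = inner_update(params, support, inner_lr, inner_steps)
        loss, (gw, gb) = loss_and_grad(adapted, *query)
        meta_loss += loss / len(tasks)
        meta_gw = [m + g / len(tasks) for m, g in zip(meta_gw, gw)]
        meta_gb += gb / len(tasks)
    new_w = [wi - outer_lr * g for wi, g in zip(w, meta_gw)]
    return (new_w, b - outer_lr * meta_gb), meta_loss


# Two toy tasks, each a (support, query) pair of (features, labels).
task_a = (([[1.0, 0.0], [-1.0, 0.0]], [1, 0]), ([[0.8, 0.1], [-0.9, 0.2]], [1, 0]))
task_b = (([[0.0, 1.0], [0.0, -1.0]], [1, 0]), ([[0.1, 0.7], [-0.2, -0.8]], [1, 0]))

params = ([0.0, 0.0], 0.0)
for _ in range(20):
    params, meta_loss = meta_update(
        params, [task_a, task_b], inner_lr=0.1, outer_lr=0.01, inner_steps=3
    )
```

A second-order variant would differentiate the query loss with respect to the initial parameters through every inner step, which requires an automatic-differentiation framework; the loop structure stays the same.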

4. Result and Analysis

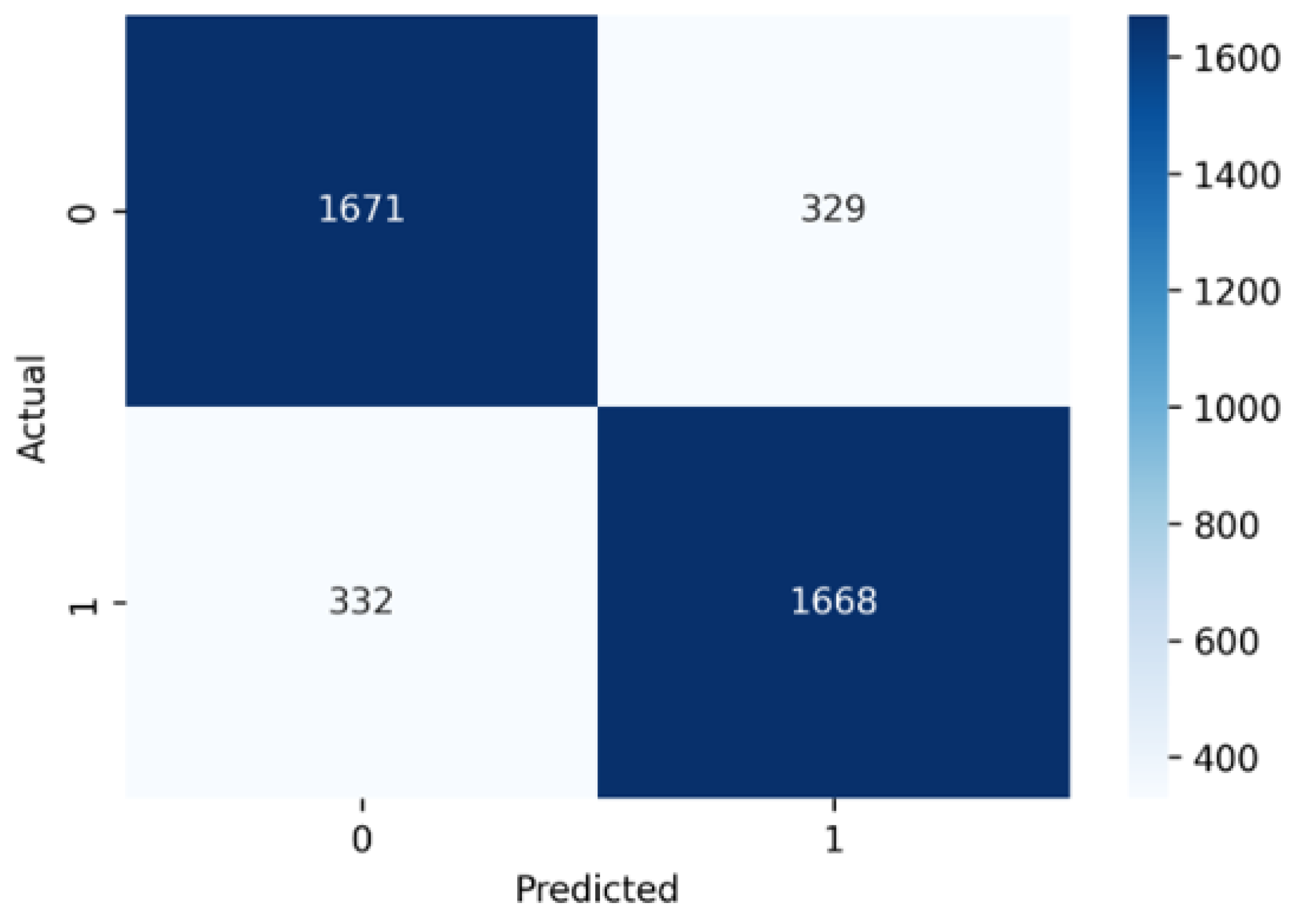

4.1. Confusion Matrix

- Accuracy = (TP + TN)/(TP + TN + FP + FN) = 3339/4000 ≈ 0.835

- Precision = TP/(TP + FP) = 1668/(1668 + 329) = 1668/1997 ≈ 0.835

- Recall = TP/(TP + FN) = 1668/(1668 + 332) = 1668/2000 = 0.834

- F1-Score = 2 × (Precision × Recall)/(Precision + Recall) ≈ 0.835
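
The four metrics can be recomputed directly from the confusion-matrix counts, which is a quick way to check the arithmetic. In the sketch below, TP = 1668 and FP = 329 are taken from the text, while TN = 1671 and FN = 332 are inferred (an assumption) from the stated totals TP + TN = 3339, TP + FN = 2000, and 4000 samples overall. The function name `classification_metrics` is hypothetical.

```python
def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall, and F1 from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return accuracy, precision, recall, f1


# TP and FP are quoted in the text; TN and FN are inferred (assumption) from
# TP + TN = 3339, TP + FN = 2000, and a total of 4000 samples.
accuracy, precision, recall, f1 = classification_metrics(
    tp=1668, tn=1671, fp=329, fn=332
)
print(f"accuracy={accuracy:.5f} precision={precision:.5f} "
      f"recall={recall:.5f} f1={f1:.5f}")
```

The function does not guard against empty classes; a zero denominator would raise `ZeroDivisionError`.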

4.2. Learning Curve

4.3. Model Comparison

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

| Hyperparameter | Value |
| --- | --- |
| Epochs | 100 |
| K-Shot | 10 |
| K-Query | 1 |
| Optimizer | Adam |
| N-Way | 5 |
| Inner Learning Rate | 0.1 |
| Outer Learning Rate | 0.01 |
| Batchsz | 2000 |
| Input Image Size | 224 × 224 |
| No. of Layers | 3 |
| Feature Embedding Size | 768 |
| Device | CUDA |

| Model | AUC |
| --- | --- |
| SAAD | 0.69 |
| RFTM | 0.867 |
| MGFN | 0.85 |
| MLP + MAML + Swin (our model) | 0.91 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).