Submitted: 17 May 2025

Posted: 20 May 2025

Abstract

Keywords:

1. Introduction

- Agentic, multi-stage KG construction. We design and implement a hierarchical, agent-based extraction pipeline that processes text, tables, code snippets, and images to automatically build and update a knowledge graph capturing entities, relations, and attributes.

- Hybrid retrieval fusion. We develop a unified mechanism that merges graph-based traversal with semantic search at query time, so that relevant, structurally grounded information informs the LLM’s prompt.

- Grounded hallucination mitigation. We introduce a post-generation refinement step that cross-verifies initial LLM outputs against the knowledge graph and iteratively corrects inconsistencies to reduce factual errors.

- Plug-and-play modularity. Our framework accommodates diverse LLMs and retrieval modules, enabling seamless component swapping and straightforward extension to new domains without retraining.
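
As a rough illustration of the hybrid retrieval fusion idea in the list above, the following sketch merges the result of a graph traversal with the result of an embedding-based search into one ranked context list. Everything in it is an assumption made for illustration: the toy graph, the hand-written 2-d embeddings, the function names `graph_neighbors`, `semantic_search`, and `fuse`, and the use of reciprocal rank fusion as the merging rule. The source does not state which fusion rule the framework applies.

```python
from collections import deque
from math import sqrt

# Toy knowledge graph (hypothetical): entity -> directly related entities.
GRAPH = {
    "buffer_pool": ["page_size", "checkpoint"],
    "checkpoint": ["wal"],
    "page_size": [],
    "wal": [],
}

# Toy chunk embeddings (hypothetical 2-d vectors keyed by chunk name).
CHUNKS = {
    "buffer_pool": (0.9, 0.1),
    "wal": (0.7, 0.3),
    "replication": (0.1, 0.9),
}


def graph_neighbors(start, max_hops=2):
    """Breadth-first traversal: entities within max_hops, nearest first."""
    seen, order, queue = {start}, [], deque([(start, 0)])
    while queue:
        node, depth = queue.popleft()
        order.append(node)
        if depth < max_hops:
            for nxt in GRAPH.get(node, []):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append((nxt, depth + 1))
    return order


def cosine(a, b):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b)))


def semantic_search(query_vec, top_k=2):
    """Rank chunks by cosine similarity to the query vector."""
    ranked = sorted(CHUNKS, key=lambda c: cosine(CHUNKS[c], query_vec), reverse=True)
    return ranked[:top_k]


def fuse(*rankings, k=60):
    """Reciprocal rank fusion (assumed rule): sum 1 / (k + rank) per item."""
    scores = {}
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] = scores.get(item, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for determinism.
    return sorted(scores, key=lambda item: (-scores[item], item))


context = fuse(graph_neighbors("buffer_pool"), semantic_search((1.0, 0.0)))
print(context)
```

Items returned by both retrievers accumulate score from each ranking, so in this toy data `buffer_pool` and `wal` rise above entities found by the graph traversal alone.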

2. Preliminary and Related Work

2.1. Preliminary

2.2. Related Work

3. Approach

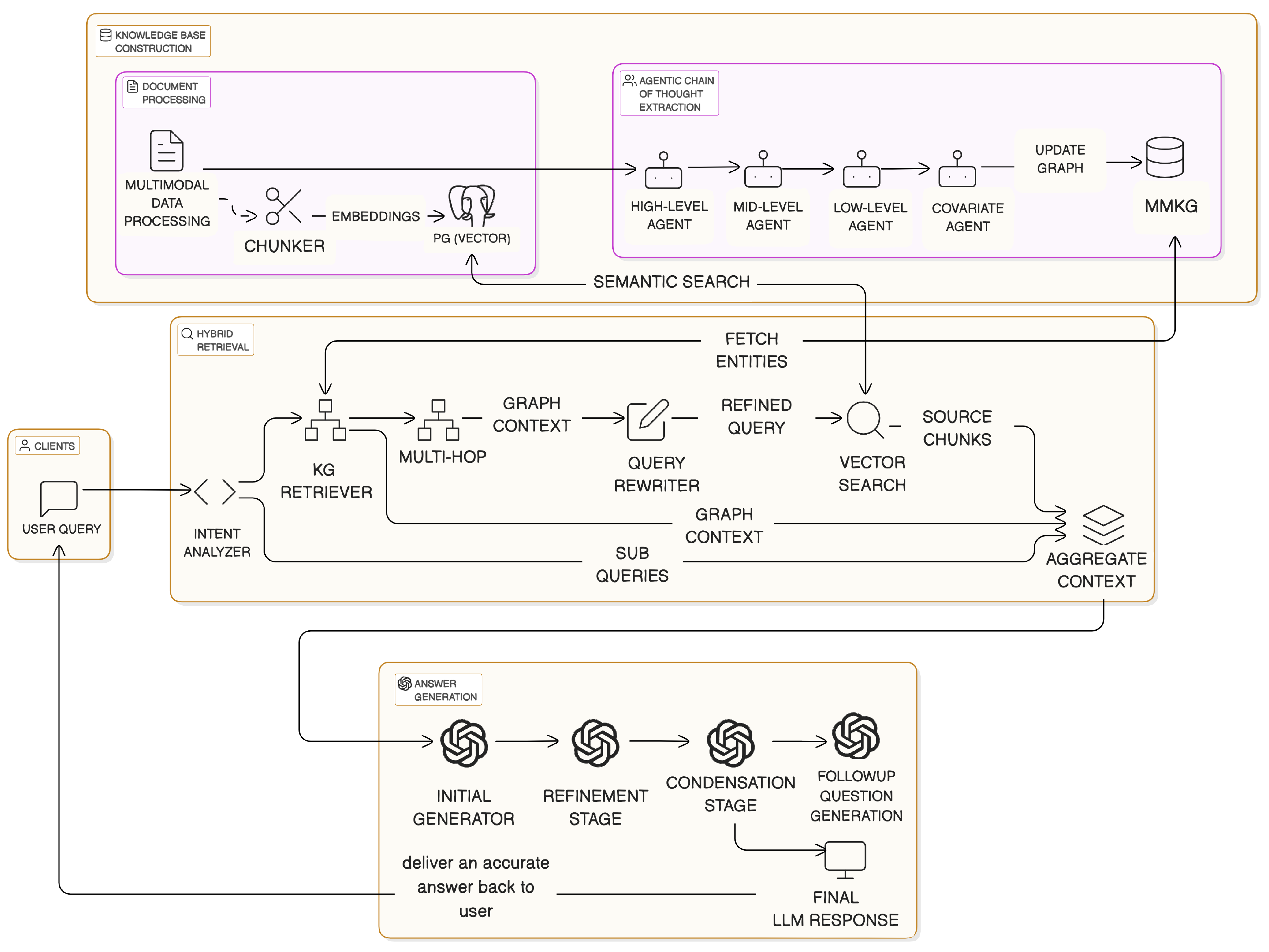

3.1. System Overview

3.2. Knowledge Base Construction

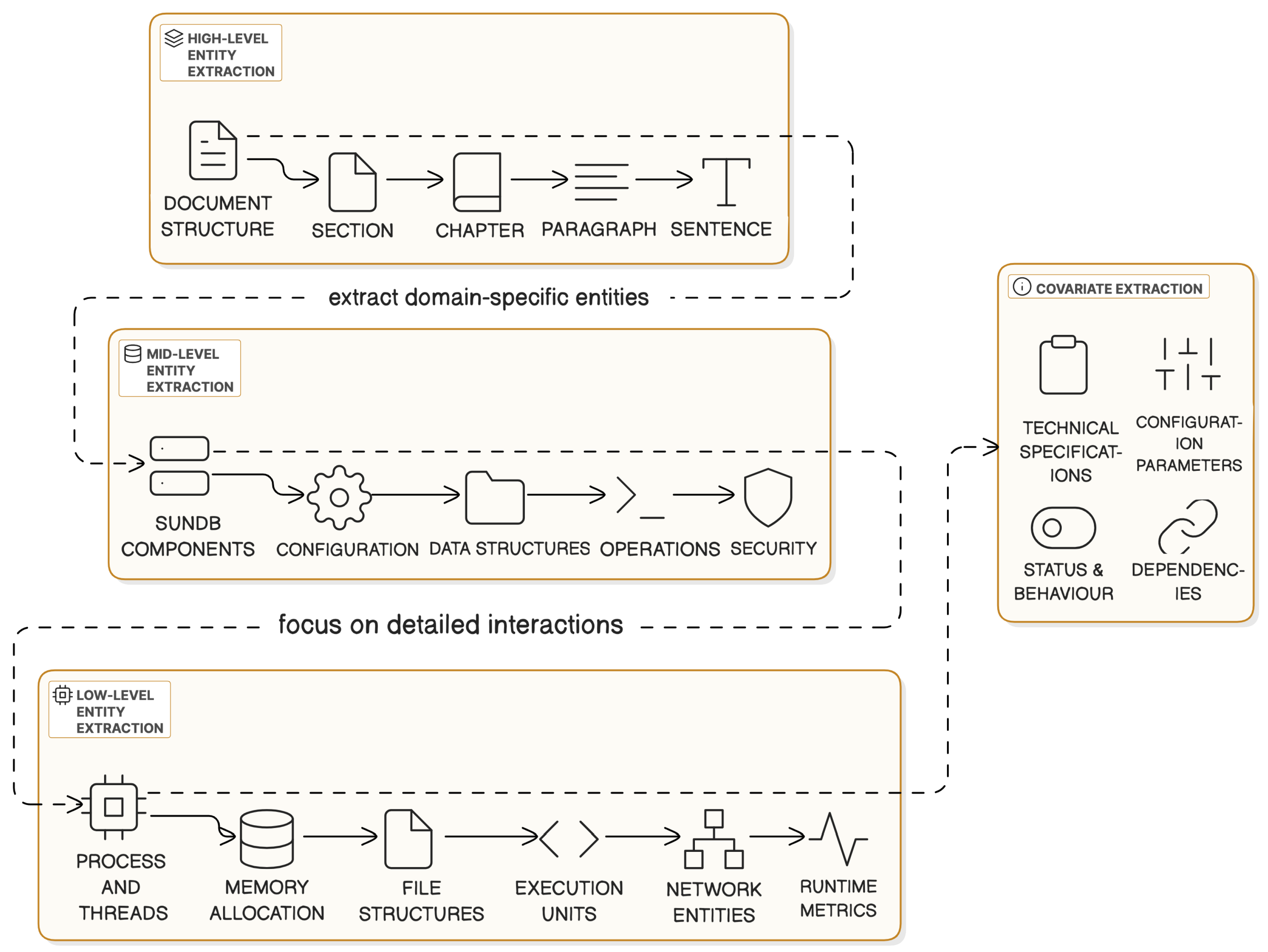

- High-Level Agent: Identifies structural elements (e.g., chapters, sections, paragraphs).

- Mid-Level Agent: Extracts domain-specific entities such as system components, APIs, and parameters.

- Low-Level Agent: Captures fine-grained operational relationships like thread behavior or error propagation.

- Covariate Agent: Attaches attributes (e.g., default values, performance impact) to existing nodes.
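
The division of labour among the four agents above can be sketched as a sequence of passes over one document, each writing into a shared graph. This is only a minimal sketch: the regular expressions stand in for the LLM-driven agents, and the `KnowledgeGraph` class, the sample document, and all function names are hypothetical rather than taken from the source.

```python
import re
from dataclasses import dataclass, field


@dataclass
class KnowledgeGraph:
    nodes: dict = field(default_factory=dict)  # name -> {"type": ..., "attrs": {...}}
    edges: set = field(default_factory=set)    # (source, relation, target)

    def add_node(self, name, node_type):
        self.nodes.setdefault(name, {"type": node_type, "attrs": {}})

    def add_edge(self, source, relation, target):
        self.edges.add((source, relation, target))


# Hypothetical sample document.
DOC = """# Connection Pool
The parameter max_connections limits the pool. Default value of max_connections is 100.
max_connections affects worker_threads.
"""


def high_level_agent(doc, kg):
    """Structural elements: here, only '# ' headings become section nodes."""
    for title in re.findall(r"^# (.+)$", doc, flags=re.MULTILINE):
        kg.add_node(title, "section")


def mid_level_agent(doc, kg):
    """Domain entities: here, snake_case identifiers stand in for parameters."""
    for name in set(re.findall(r"\b[a-z]+_[a-z_]+\b", doc)):
        kg.add_node(name, "parameter")


def low_level_agent(doc, kg):
    """Operational relationships: here, the literal pattern 'X affects Y'."""
    for source, target in re.findall(r"(\w+) affects (\w+)", doc):
        kg.add_edge(source, "affects", target)


def covariate_agent(doc, kg):
    """Attributes on existing nodes: here, 'Default value of X is N'."""
    for name, value in re.findall(r"Default value of (\w+) is (\d+)", doc):
        if name in kg.nodes:  # only annotate nodes created by earlier agents
            kg.nodes[name]["attrs"]["default"] = int(value)


def build_graph(doc):
    """Run the agents from coarse to fine, all writing into one graph."""
    kg = KnowledgeGraph()
    for agent in (high_level_agent, mid_level_agent, low_level_agent, covariate_agent):
        agent(doc, kg)
    return kg


kg = build_graph(DOC)
print(kg.nodes)
print(kg.edges)
```

Running the covariate pass last reflects the description above: it attaches attributes only to nodes that earlier agents have already created.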

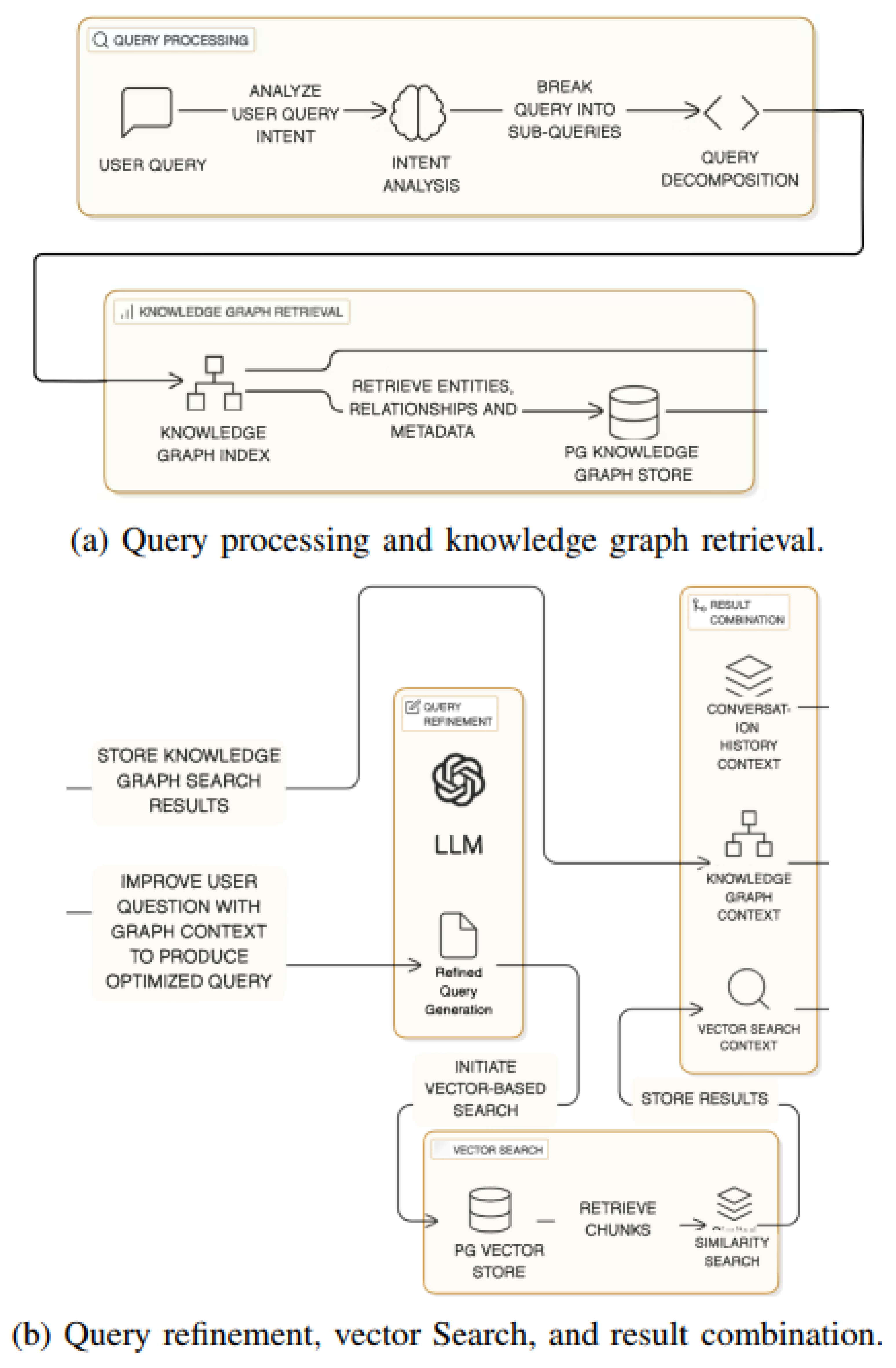

3.3. Hybrid Retrieval and Query Decomposition

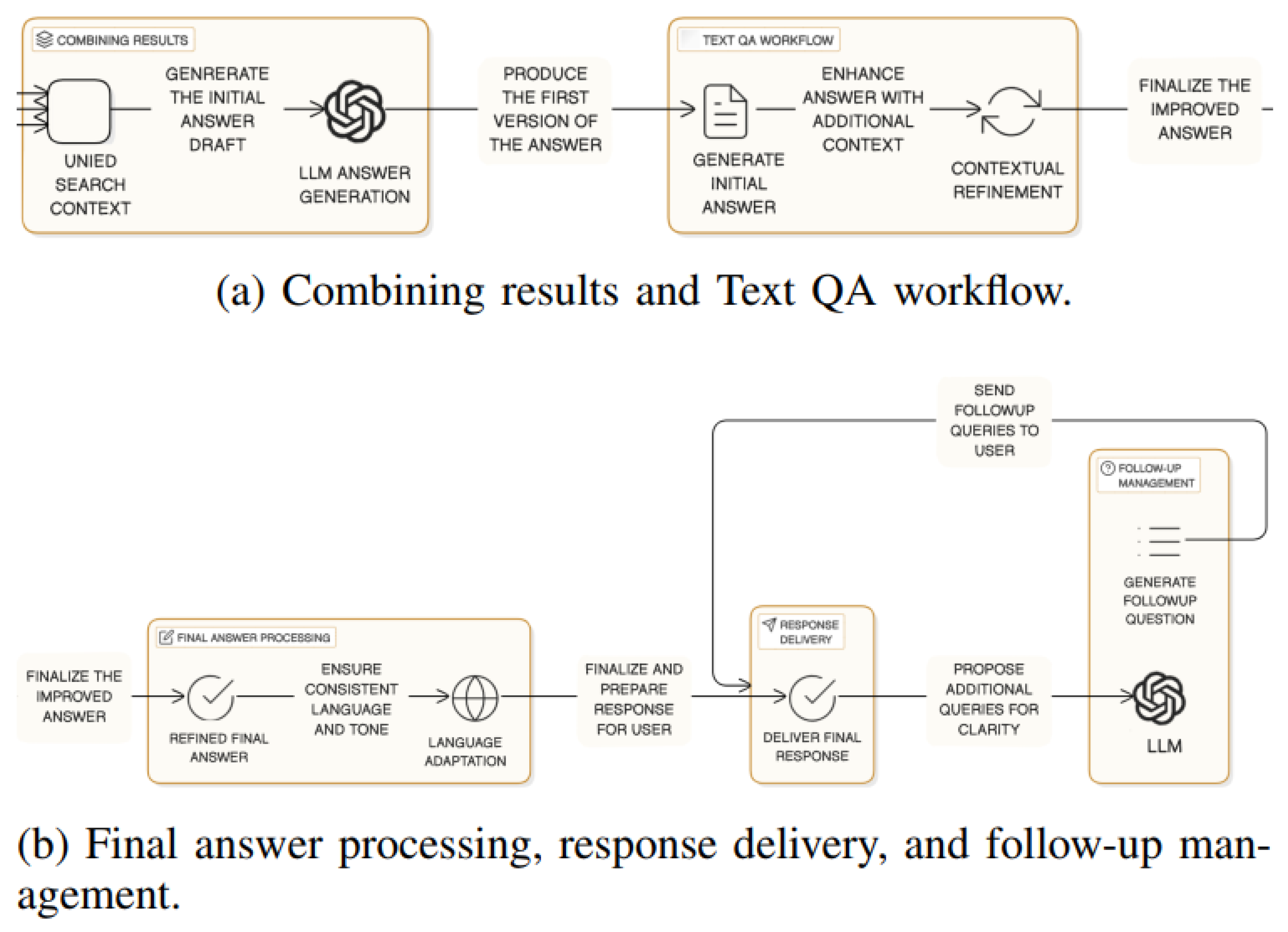

3.4. Grounded Answer Generation and Delivery

4. Experiments

4.1. Experimental Setup

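- Answer Relevancy (AR): Measures alignment between the query and the generated answer, computed as $AR = \frac{1}{N}\sum_{i=1}^{N}\cos(E_{g_i}, E_o)$, where $\cos(E_{g_i}, E_o) = \frac{E_{g_i} \cdot E_o}{\lVert E_{g_i} \rVert \, \lVert E_o \rVert}$, $E_{g_i}$ is the embedding of the i-th generated answer, and $E_o$ is the query embedding.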

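- Contextual Recall (CR): Evaluates the retrieval of all relevant information: $CR = \frac{\text{number of reference claims supported by the retrieved context}}{\text{total number of reference claims}}$.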

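- Contextual Precision (CP): Assesses the accuracy of retrieved context, excluding irrelevant data: $CP@K = \frac{\sum_{k=1}^{K} \text{Precision@}k \cdot v_k}{\text{number of relevant items in the top } K}$, where $\text{Precision@}k = \frac{\text{TP@}k}{\text{TP@}k + \text{FP@}k}$, and $v_k \in \{0, 1\}$ indicates relevance at rank $k$.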

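- Faithfulness (F): Measures how accurately the answer reflects the retrieved context: $F = \frac{\text{number of answer claims supported by the retrieved context}}{\text{total number of answer claims}}$.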
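
The four metrics listed above can be sketched in plain Python. The formulas used here are assumptions modelled on the commonly documented Ragas-style definitions (mean cosine similarity, supported-claim ratios, and rank-weighted precision). In practice the claim and relevance judgements would come from an LLM judge, whereas this sketch takes them as ready-made inputs. All function names are hypothetical.

```python
from math import sqrt


def cosine(a, b):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b)))


def answer_relevancy(generated_embeddings, query_embedding):
    """Mean cosine similarity between each generated embedding and the query."""
    sims = [cosine(e, query_embedding) for e in generated_embeddings]
    return sum(sims) / len(sims)


def contextual_recall(reference_claims_supported):
    """Fraction of reference claims that the retrieved context supports."""
    return sum(reference_claims_supported) / len(reference_claims_supported)


def contextual_precision(relevance):
    """Rank-weighted precision; relevance[k - 1] is 1 if the chunk at rank k is relevant."""
    hits, total = 0, 0.0
    for k, v in enumerate(relevance, start=1):
        hits += v
        total += (hits / k) * v  # Precision@k counts only at relevant ranks
    return total / hits if hits else 0.0


def faithfulness(answer_claims_supported):
    """Fraction of answer claims that the retrieved context supports."""
    return sum(answer_claims_supported) / len(answer_claims_supported)


ar = answer_relevancy([(1.0, 0.0), (0.0, 1.0)], (1.0, 0.0))
cr = contextual_recall([True, True, False, True])
cp = contextual_precision([1, 0, 1])
f = faithfulness([True, False])
print(ar, cr, cp, f)
```

In the precision example, the relevant chunks sit at ranks 1 and 3, so the score is (1/1 + 2/3) / 2; moving the second relevant chunk up to rank 2 would raise it to 1.0, which is the ranking sensitivity this metric is meant to capture.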

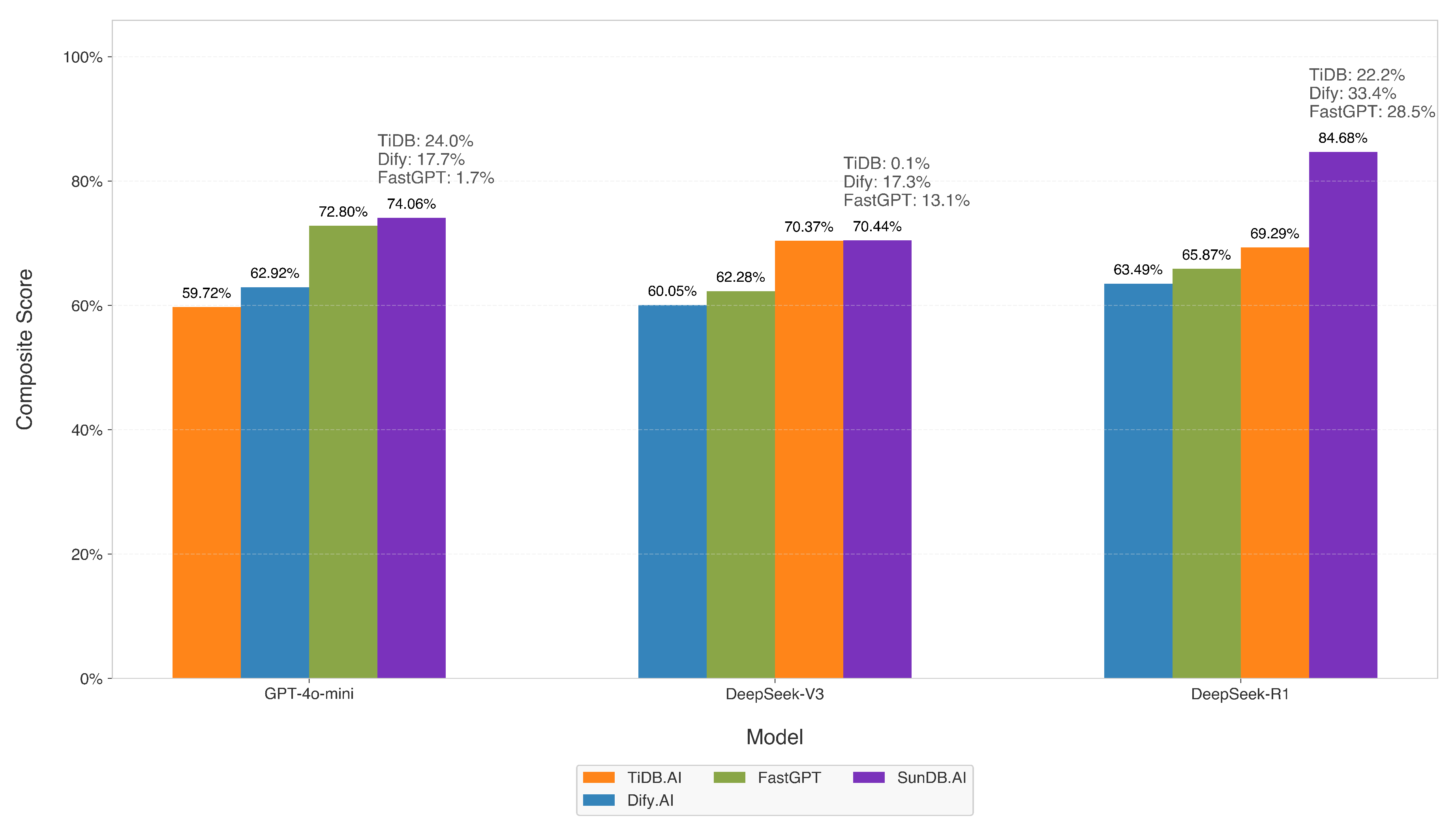

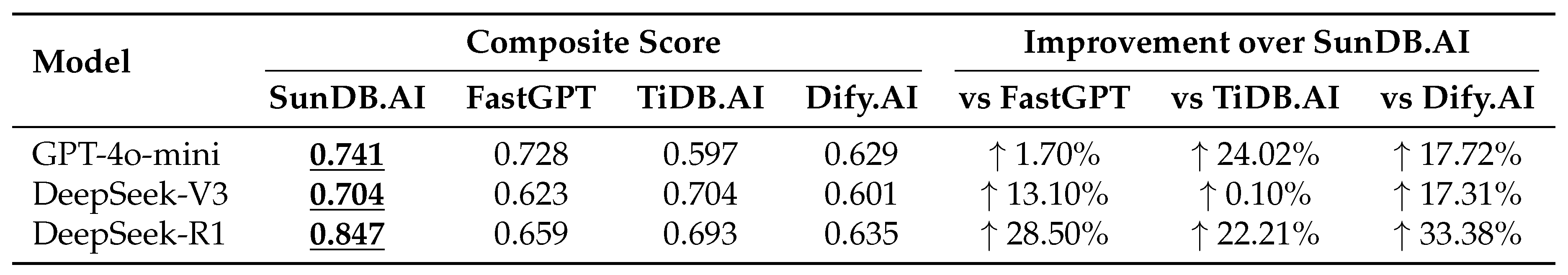

4.2. Results

4.3. Discussion

5. Conclusions


| Metric | DeepSeek-R1 (Baseline) | DeepSeek-R1 (+KG) | DeepSeek-V3 (Baseline) | DeepSeek-V3 (+KG) | KG Impact (R1) | KG Impact (V3) |
|---|---|---|---|---|---|---|
| Answer Relevancy | 0.851 | 0.820 | 0.871 | 0.921 | ↓ 3.6% | ↑ 5.7% |
| Contextual Recall | 0.964 | 1.000 | 0.977 | 1.000 | ↑ 3.7% | ↑ 2.4% |
| Contextual Precision | 0.907 | 0.918 | 0.919 | 0.943 | ↑ 1.2% | ↑ 2.6% |
| Faithfulness | 0.718 | 0.678 | 0.711 | 0.714 | ↓ 5.6% | ↑ 0.4% |
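
The KG Impact columns in the table above appear to be relative changes of the +KG score with respect to the baseline. The short check below recomputes them under that assumption; the function name `relative_change` and the `SCORES` layout are illustrative only. Rounded to one decimal place, the recomputed values agree with the arrows and percentages reported in the table.

```python
def relative_change(baseline, with_kg):
    """Percentage change of the +KG score relative to the baseline."""
    return (with_kg - baseline) / baseline * 100.0


# (baseline, +KG) pairs copied from the table above.
SCORES = {
    ("Answer Relevancy", "DeepSeek-R1"): (0.851, 0.820),
    ("Answer Relevancy", "DeepSeek-V3"): (0.871, 0.921),
    ("Contextual Recall", "DeepSeek-R1"): (0.964, 1.000),
    ("Contextual Recall", "DeepSeek-V3"): (0.977, 1.000),
    ("Contextual Precision", "DeepSeek-R1"): (0.907, 0.918),
    ("Contextual Precision", "DeepSeek-V3"): (0.919, 0.943),
    ("Faithfulness", "DeepSeek-R1"): (0.718, 0.678),
    ("Faithfulness", "DeepSeek-V3"): (0.711, 0.714),
}

impact = {key: round(relative_change(*pair), 1) for key, pair in SCORES.items()}

for (metric, model), pct in impact.items():
    print(f"{metric:22s} {model:12s} {pct:+.1f}%")
```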

| Model | Answer Relevancy | Contextual Recall | Contextual Precision (ragas) | Faithfulness |
|---|---|---|---|---|
| DeepSeek-R1 | 0.820407 | 1.000000 | 0.918463 | 0.677958 |
| DeepSeek-V3 | 0.920967 | 1.000000 | 0.942694 | 0.714305 |
| GPT-4o | 0.944203 | 0.979167 | 0.950279 | 0.770946 |
| GPT-4o mini | 0.939535 | 0.977273 | 0.921744 | 0.842160 |
| Grok 3 | 0.898365 | 1.000000 | 0.953021 | 0.836704 |

| Model | Answer Relevancy | Contextual Recall | Contextual Precision (ragas) | Faithfulness |
|---|---|---|---|---|
| DeepSeek-V3 | 0.965342 | 1.000000 | 0.919427 | 0.912089 |
| GPT-4o | 0.975000 | 1.000000 | 0.930203 | 0.870054 |
| GPT-4o mini | 0.958386 | 1.000000 | 0.913326 | 0.943798 |
| O3 mini | 0.949360 | 1.000000 | 0.888545 | 0.880241 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).