Submitted: 18 May 2025

Posted: 19 May 2025


Abstract

Keywords:

1. Introduction

2. Materials and Methods

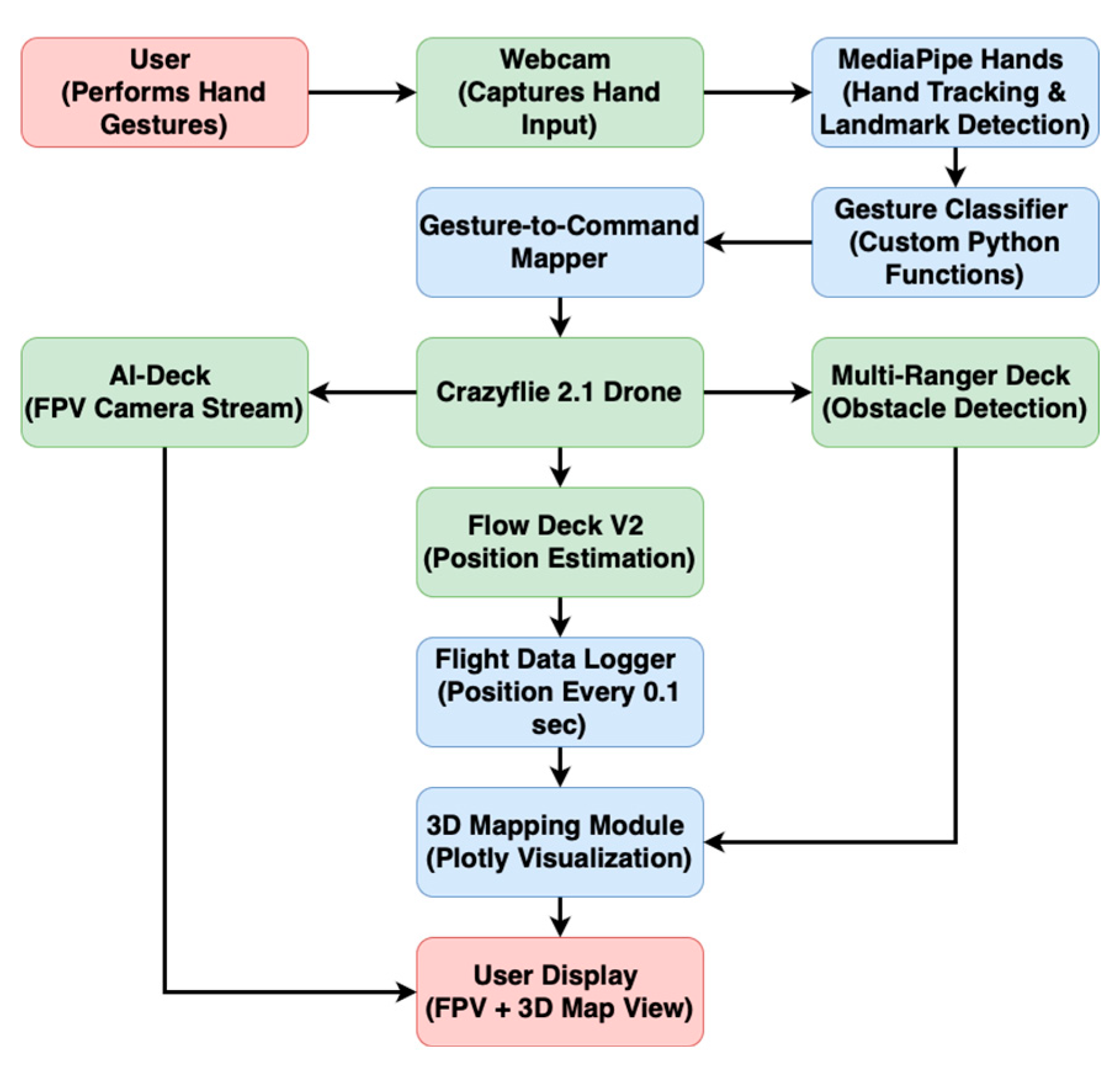

2.1. Integrated System Architecture and Workflow

2.2. Hardware and Software Components

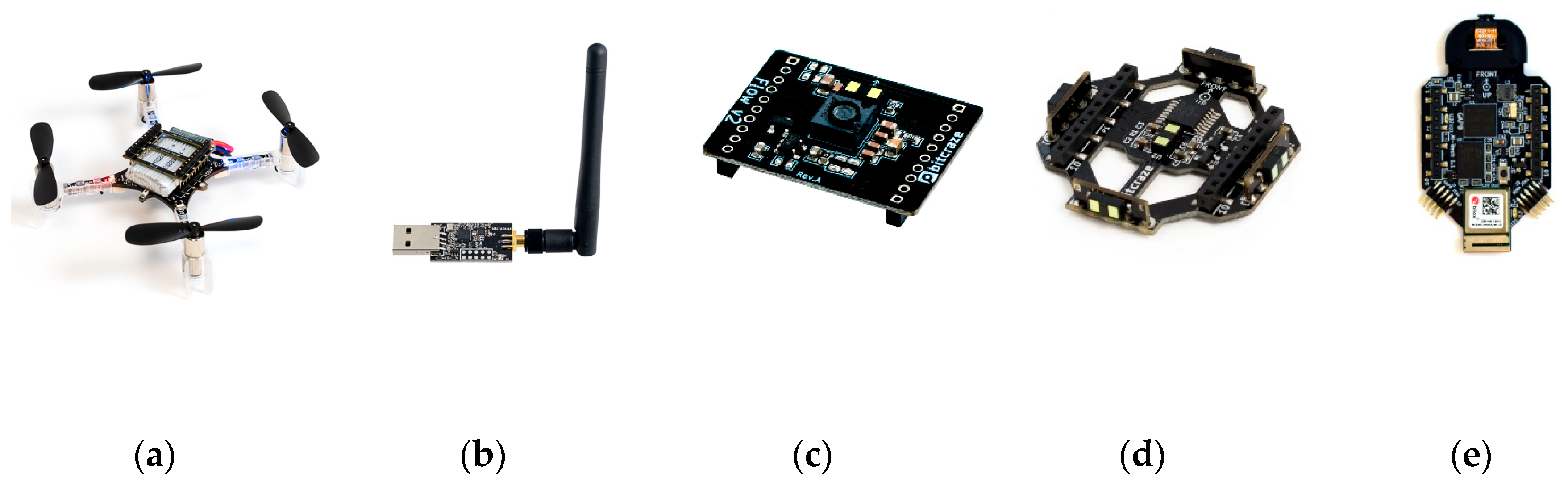

- Crazyflie 2.1 Drone: Figure 2a illustrates the main aerial platform for this research. It is a lightweight, open-source nano quadcopter developed by Bitcraze, equipped with an STM32F405 microcontroller, built-in IMU, and expansion deck support via an easy-to-use pin header system [22]. Its modular design allows seamless integration of multiple decks for advanced sensing and control functionalities.

- Crazyradio PA: Figure 2b shows the Crazyradio PA, a 2.4 GHz radio dongle used to establish wireless communication between the host computer and the Crazyflie 2.1 drone [23]. The PA (Power Amplifier) version enhances signal strength and communication reliability, particularly in environments with interference or extended range requirements.

- AI-Deck 1.1: Figure 2e shows the AI-Deck 1.1, which features a camera module for video streaming, allowing users to navigate the drone beyond their direct line of sight [26]. The AI-Deck includes a GAP8 RISC-V multi-core processor, enabling onboard image processing and low-latency streaming to a ground station. A Linux system is required for setting up and flashing the AI-Deck, as the software tools provided by Bitcraze, such as the GAP8 SDK, are optimized for Linux-based environments.

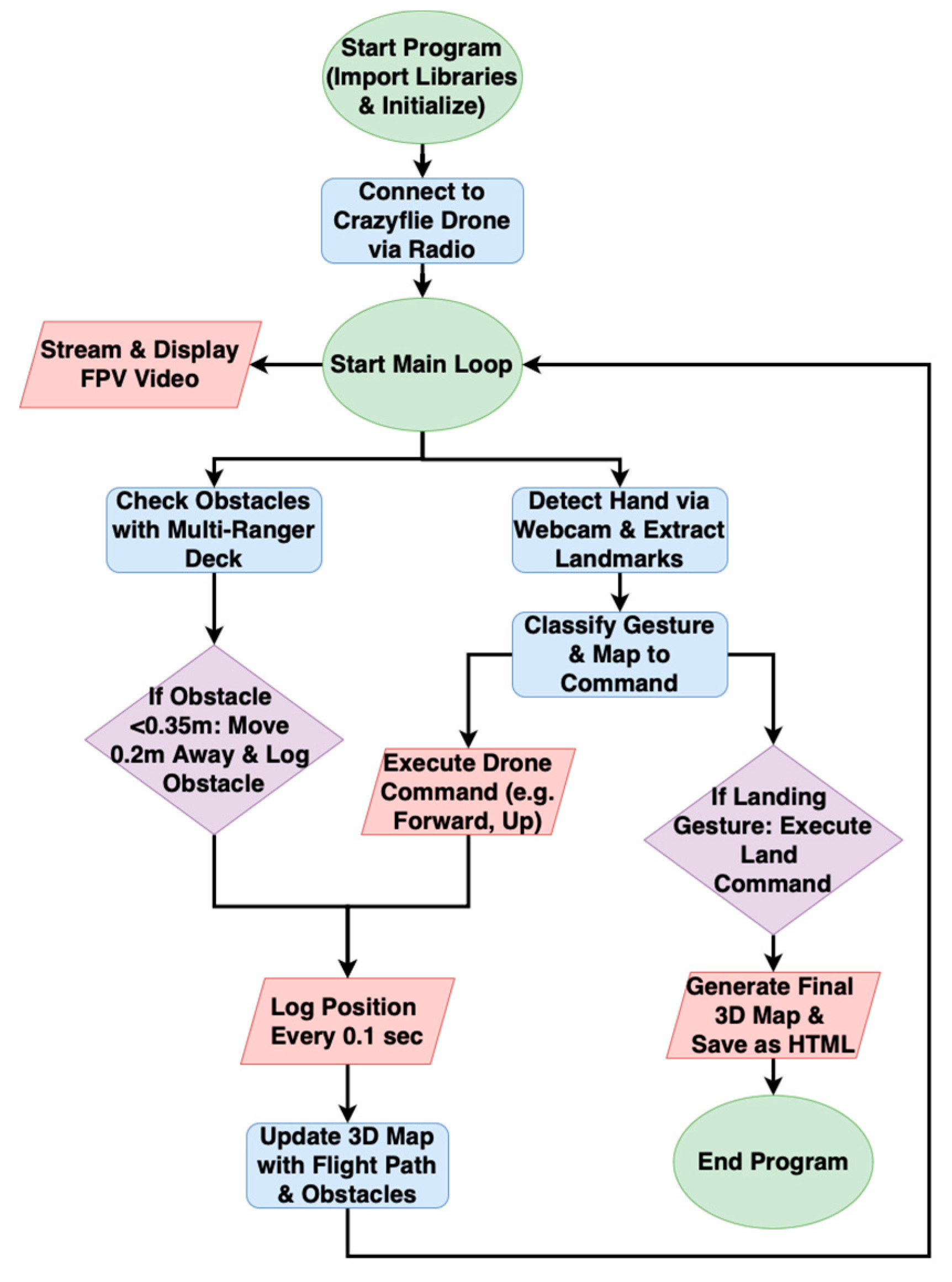

2.3. Code Implementation and System Logic

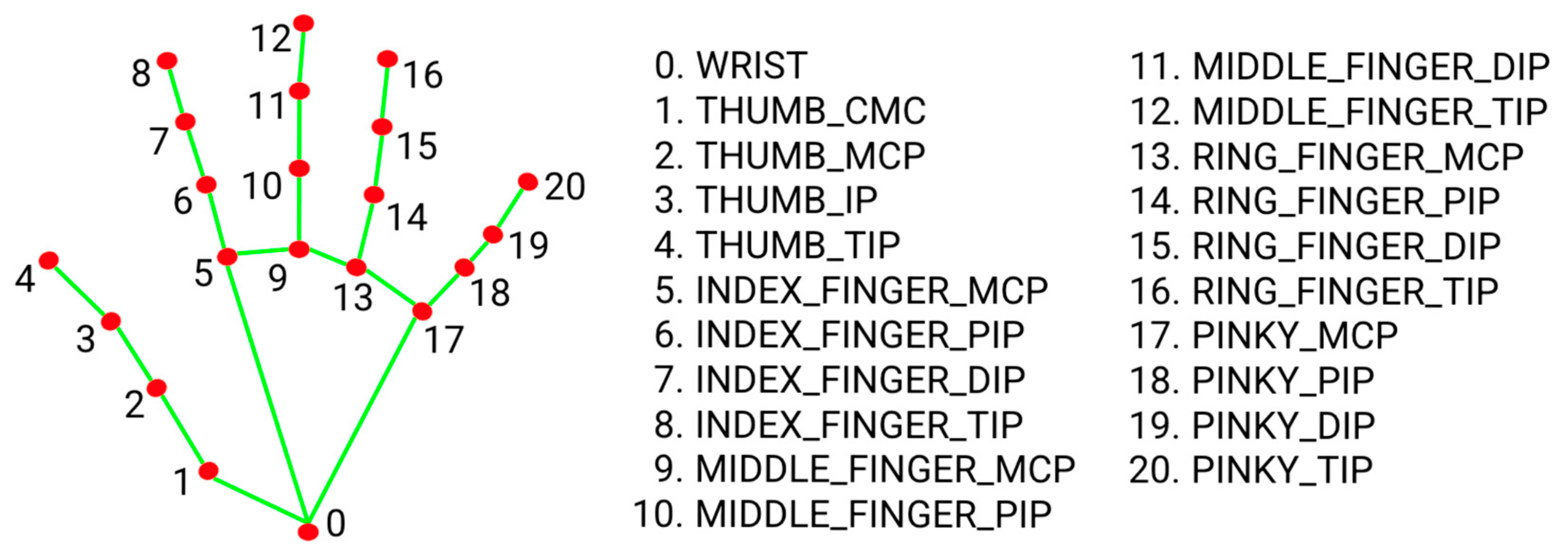

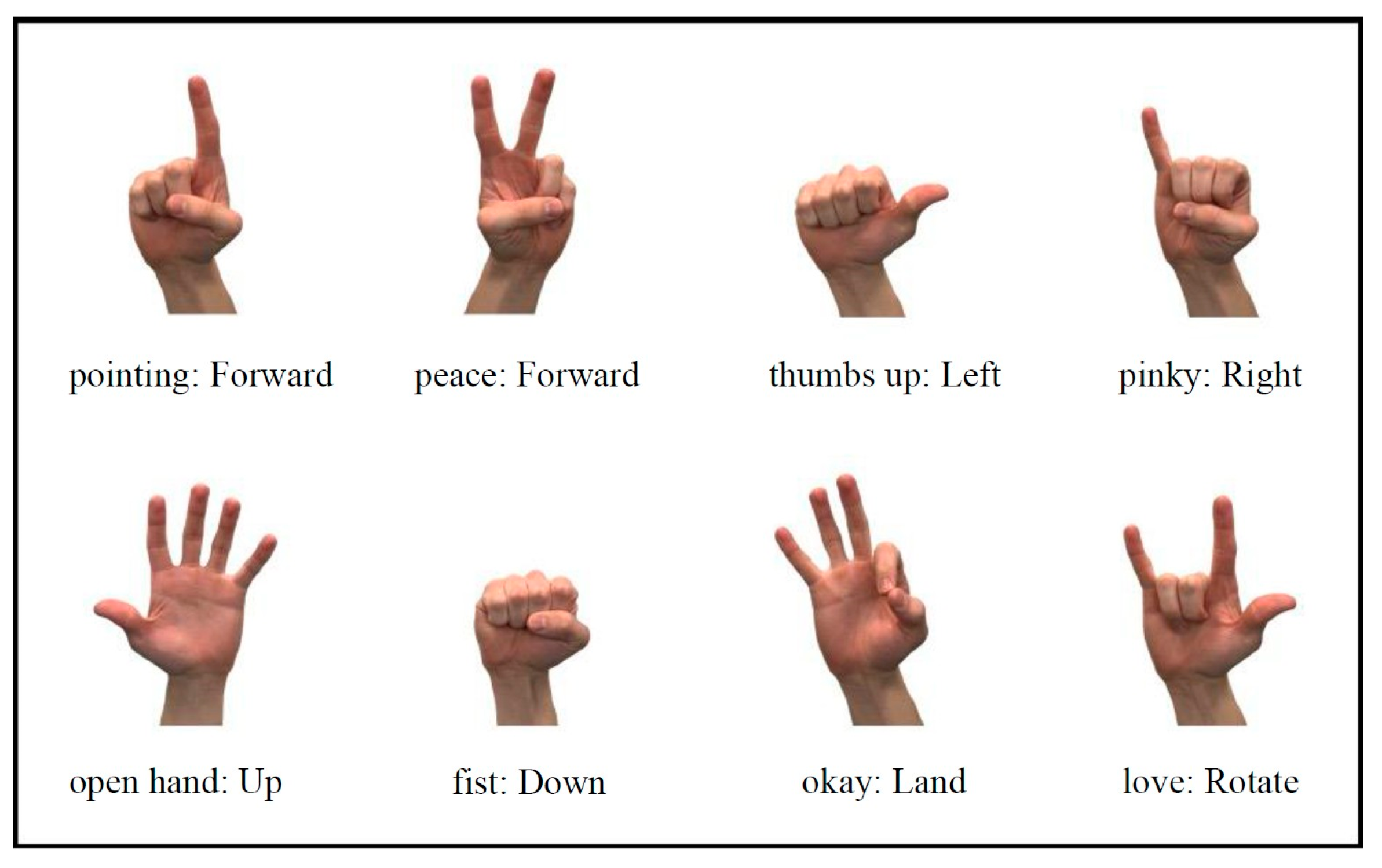

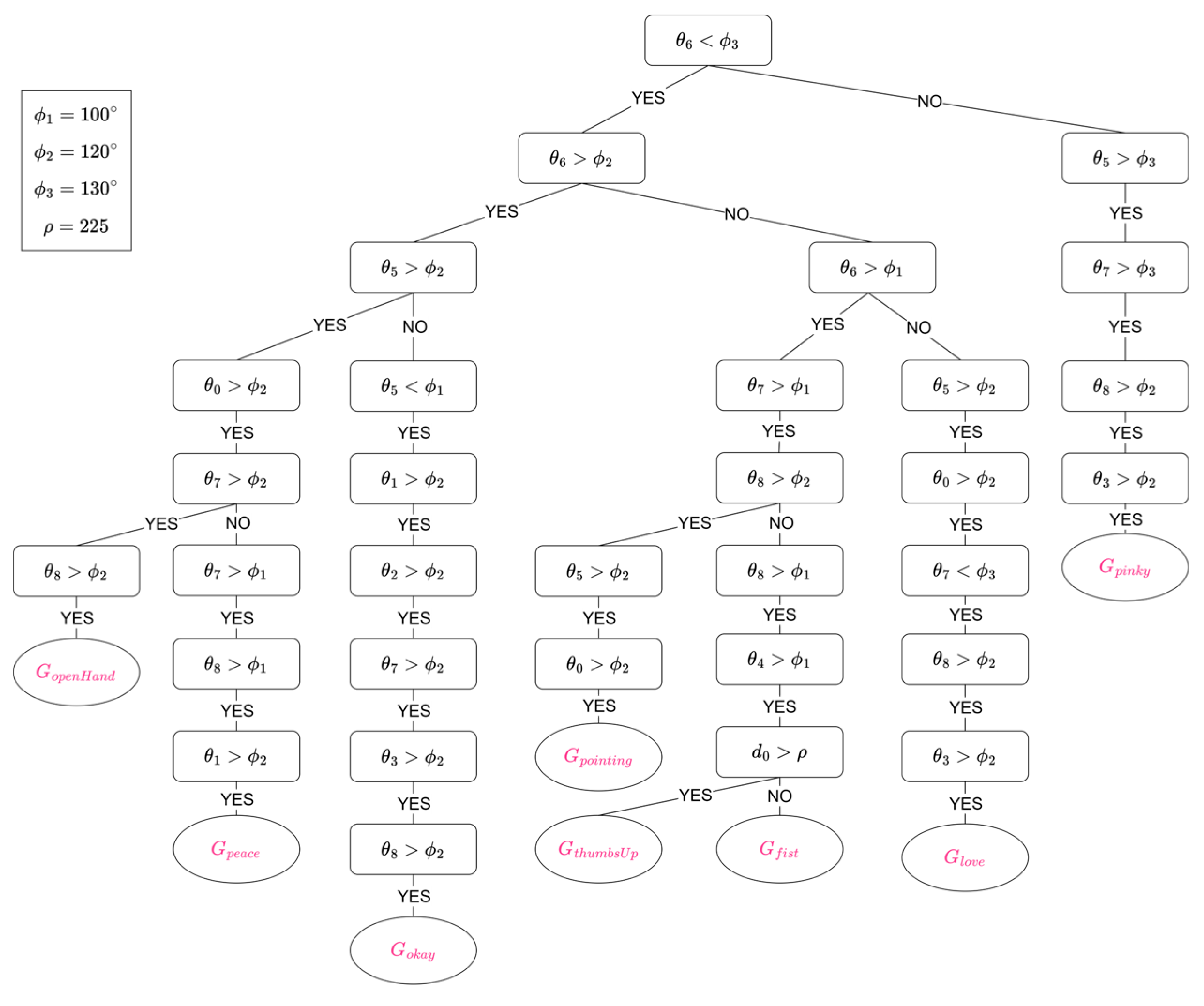

2.4. Hand-Gesture Recognition and Control

- A “pointing” gesture moves the drone forward.

- A “peace” gesture moves the drone backward.

- A “thumbs up” gesture moves the drone to the left.

- A “pinky” gesture moves the drone to the right.

- An “open hand” gesture triggers the drone to ascend (up).

- A “fist” gesture triggers the drone to descend (down).

- An “okay” gesture triggers the drone to land.

- A “love” gesture triggers the drone to rotate.
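The gesture-to-command mapping listed above can be sketched as a simple lookup table. This is a minimal illustration, not the authors' implementation: the label strings, the `Command` type, the speed values, and the sign convention (x forward, y left, z up) are all assumptions introduced here, and the source does not state the rotation direction for the "love" gesture.

```python
from typing import NamedTuple, Optional


class Command(NamedTuple):
    """Hypothetical velocity setpoint (assumed frame: x forward, y left, z up)."""
    vx: float        # m/s, forward (+) / backward (-)
    vy: float        # m/s, left (+) / right (-)
    vz: float        # m/s, up (+) / down (-)
    yaw_rate: float  # deg/s, rotation
    land: bool = False


SPEED = 0.2      # assumed linear speed in m/s, not taken from the paper
YAW_RATE = 45.0  # assumed yaw rate in deg/s, not taken from the paper

HOVER = Command(0.0, 0.0, 0.0, 0.0)

# One entry per gesture in the list above; label strings are hypothetical.
GESTURE_COMMANDS = {
    "pointing": Command(SPEED, 0.0, 0.0, 0.0),          # forward
    "peace": Command(-SPEED, 0.0, 0.0, 0.0),            # backward
    "thumbs_up": Command(0.0, SPEED, 0.0, 0.0),         # left
    "pinky": Command(0.0, -SPEED, 0.0, 0.0),            # right
    "open_hand": Command(0.0, 0.0, SPEED, 0.0),         # ascend
    "fist": Command(0.0, 0.0, -SPEED, 0.0),             # descend
    "okay": Command(0.0, 0.0, 0.0, 0.0, land=True),     # land
    "love": Command(0.0, 0.0, 0.0, YAW_RATE),           # rotate
}


def command_for(gesture: Optional[str]) -> Command:
    """Return the command for a recognized gesture, or hover if none matches."""
    if gesture is None:
        return HOVER
    return GESTURE_COMMANDS.get(gesture, HOVER)
```

Falling back to a hover setpoint for unrecognized input is a design choice made for this sketch; it keeps the drone stationary when no labeled gesture is detected.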

2.5. Obstacle Avoidance System

2.6. 3D Mapping and Flight Data Logging

2.7. Experimental Setup and Testing Procedure

- Hand-Gesture Control Test: Users performed predefined hand gestures in front of a webcam to verify accurate classification and execution of drone commands. Each gesture was tested multiple times to assess recognition accuracy and command responsiveness.

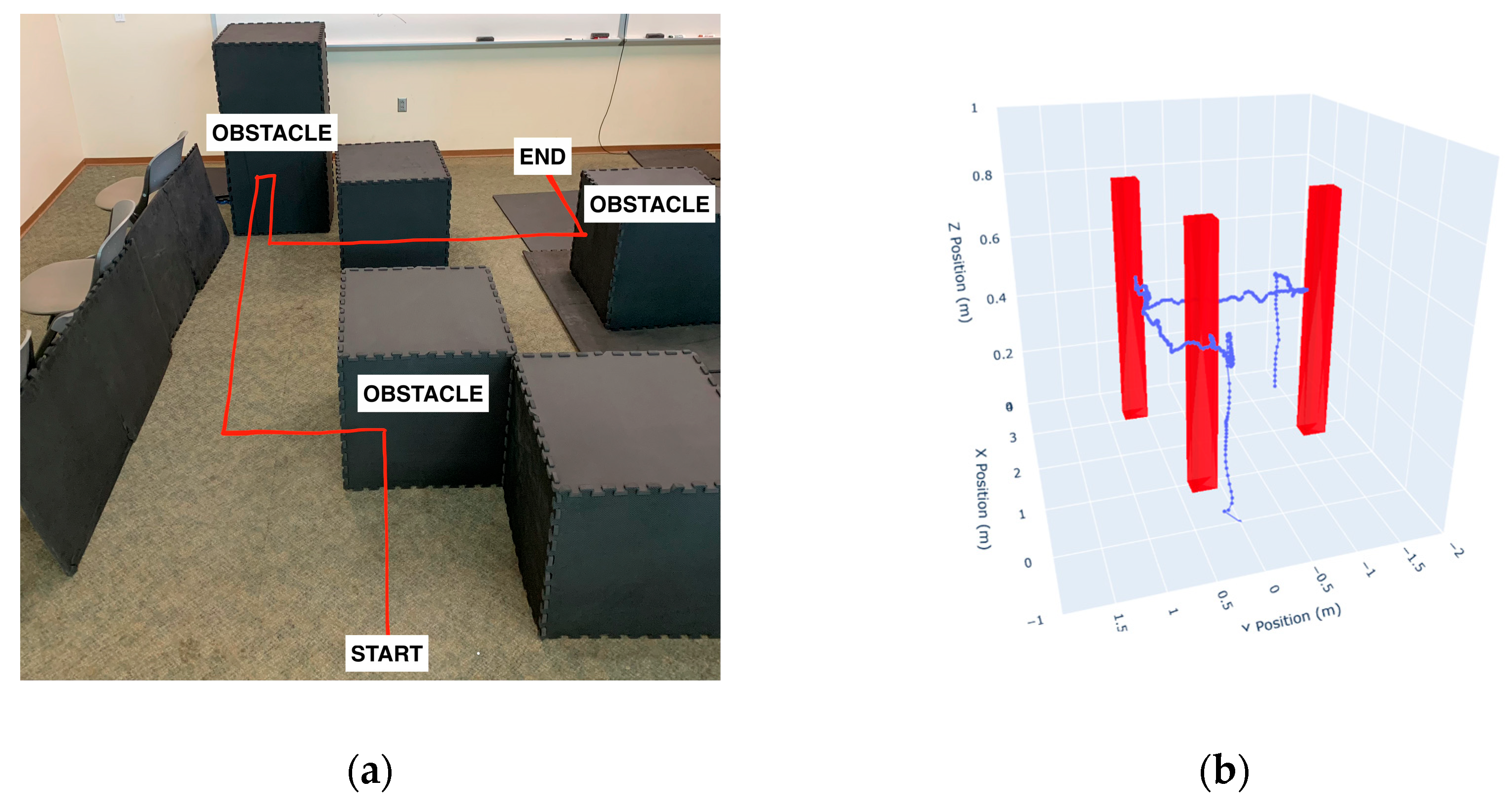

- Obstacle Avoidance Test: The drone was commanded to move forward while obstacles were placed in randomized positions along its path. The system’s ability to detect and avoid obstacles within the 0.35-meter threshold was analyzed, recording the reliability of avoidance maneuvers and any unintended collisions.

- 3D Mapping Validation: To assess mapping accuracy, the recorded flight path and obstacle locations were compared to real-world measurements. Errors in obstacle placement and discrepancies in flight path representation were quantified.
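The 0.35-meter threshold used in the obstacle avoidance test can be illustrated with a small decision function. This is a sketch under stated assumptions rather than the authors' code: the direction keys, the `retreat_speed` parameter, and the "move away from the blocked side" response are hypothetical, and range readings are assumed to arrive in meters with `None` meaning no valid measurement.

```python
from typing import Dict, Optional, Tuple

THRESHOLD_M = 0.35  # detection threshold from the test procedure, in meters


def avoid(
    velocity: Tuple[float, float],
    ranges: Dict[str, Optional[float]],
    retreat_speed: float = 0.2,  # assumed value in m/s, not from the paper
) -> Tuple[float, float]:
    """Override a commanded (vx, vy) when an obstacle is closer than the threshold.

    Assumed frame: +x is forward, +y is left.
    """
    def blocked(direction: str) -> bool:
        distance = ranges.get(direction)
        return distance is not None and distance < THRESHOLD_M

    def resolve(v: float, positive_blocked: bool, negative_blocked: bool) -> float:
        if positive_blocked and negative_blocked:
            return 0.0             # boxed in on this axis: hold position
        if positive_blocked:
            return -retreat_speed  # back away from the obstacle
        if negative_blocked:
            return retreat_speed
        return v                   # nothing nearby: keep the commanded velocity

    vx, vy = velocity
    return (
        resolve(vx, blocked("front"), blocked("back")),
        resolve(vy, blocked("left"), blocked("right")),
    )
```

For example, a forward command of 0.2 m/s with a front reading of 0.30 m would be replaced by a backward retreat, while the same command with a front reading of 0.50 m would pass through unchanged.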

2.8. Data Availability

3. Results

3.1. Obstacle Avoidance Performance

3.1.1. Obstacle Detection and Response

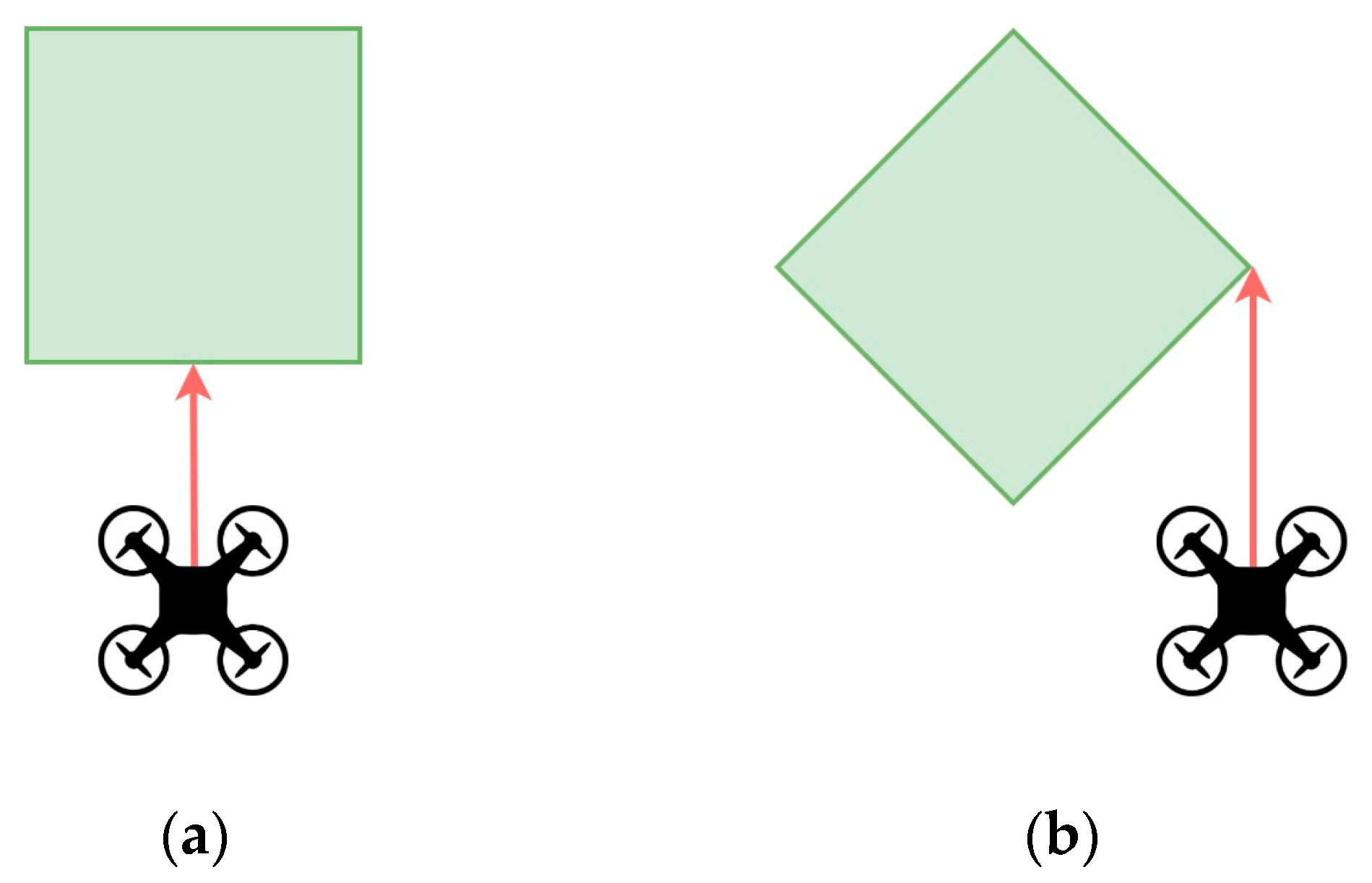

3.1.2. Obstacle Response During Perpendicular vs. Angled Approaches

3.2. Hand-Gesture Recognition Performance

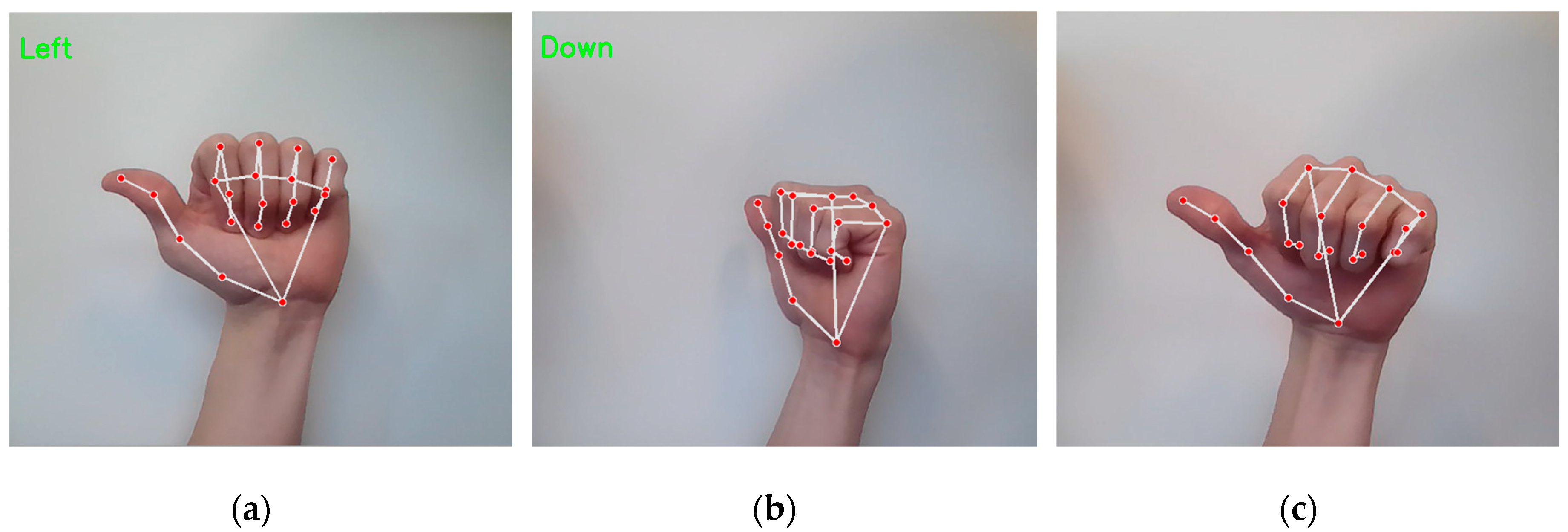

3.2.1. Gesture Recognition Accuracy

- Gesture Misclassification: The system occasionally misinterpreted one gesture as another (Figure 8b), especially when switching between gestures, leading to unintended drone movements. Also, if a gesture was presented at an unfavorable angle or partially obscured, it sometimes failed to register as any labeled gesture (Figure 8c), resulting in no response from the drone.

- Lighting Conditions: Bright, even lighting improved recognition, while dim or uneven lighting sometimes led to unstable tracking of landmarks.

- External Hand Interference: If another person’s hand entered the camera’s field of view, the system sometimes detected it as an input, disrupting the control process.

3.2.2. Gesture-to-Command Latency

3.2.3. User Experience and Usability

- Memorizing multiple gestures and associating them with their corresponding drone commands.

- Maintaining proper hand positioning in front of the camera to ensure accurate recognition.

- Balancing attention between their hand gestures and the drone’s movement, particularly when not relying on the AI-Deck’s streamed FPV footage.

3.3.1. Accuracy of 3D Map Generation

3.4. Integrated System Performance: Gesture Control and Obstacle Avoidance

3.4.1. Interaction Between Gesture Control and Obstacle Avoidance

3.4.2. AI-Deck Streaming and Remote Navigation

3.5. Comparison with Existing Gesture-Controlled Drone Systems

4. Discussion

- Internal Validity: The gesture recognition and drone control were tested under controlled indoor conditions with stable lighting and minimal distractions. Performance may decline in less controlled, real-world environments where lighting, background activity, or camera positioning vary significantly.

- Construct Validity: The predefined gestures selected for this study may not be universally intuitive for all users, potentially influencing usability outcomes. Furthermore, users’ hand size, motion speed, and articulation could impact gesture recognition reliability.

- External Validity: The findings are based on a specific hardware setup (Crazyflie 2.1, MediaPipe Hands, and AI-Deck). Results may not generalize to other drone models or hardware configurations without significant reengineering.

- Reliability Threats: System performance may degrade over prolonged use due to sensor drift, thermal effects, or battery limitations. Additionally, the gesture classification system may face reduced robustness in multi-user settings or when exposed to unintended hand movements.

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CMC | Carpometacarpal |
| DIP | Distal Interphalangeal |
| FOV | Field of View |
| FPV | First-Person View |
| IMU | Inertial Measurement Unit |
| MCP | Metacarpophalangeal |
| PA | Power Amplifier |
| PIP | Proximal Interphalangeal |
| SLAM | Simultaneous Localization and Mapping |
| ToF | Time-of-Flight |
| UAV | Unmanned Aerial Vehicle |

References

- Amicone, D., et al., A smart capsule equipped with artificial intelligence for autonomous delivery of medical material through drones. Applied Sciences 2021, 11, 7976.

- Hu, D., et al., Automating building damage reconnaissance to optimize drone mission planning for disaster response. Journal of Computing in Civil Engineering 2023, 37, 04023006.

- Nooralishahi, P., F. López, and X.P. Maldague, Drone-enabled multimodal platform for inspection of industrial components. IEEE Access 2022, 10, 41429–41443.

- Nwaogu, J.M., et al., Enhancing drone operator competency within the construction industry: Assessing training needs and roadmap for skill development. Buildings 2024, 14, 1153.

- Tezza, D., D. Laesker, and M. Andujar. The learning experience of becoming a FPV drone pilot. in Companion of the 2021 ACM/IEEE international conference on human-robot interaction. 2021.

- Lawrence, I.D. and A.R.R. Pavitra, Voice-controlled drones for smart city applications. Sustainable Innovation for Industry 6.0, 2024: p. 162-177.

- Shin, S.-Y., Y.-W. Kang, and Y.-G. Kim. Hand gesture-based wearable human-drone interface for intuitive movement control. in 2019 IEEE international conference on consumer electronics (ICCE). 2019. IEEE.

- Naseer, F., et al. Deep learning-based unmanned aerial vehicle control with hand gesture and computer vision. in 2022 13th Asian Control Conference (ASCC). 2022. IEEE.

- Hayat, A., et al. Gesture and Body Position Control for Lightweight Drones Using Remote Machine Learning Framework. International Conference on Electrical and Electronics Engineering. Springer, 2024.

- Bae, S. and H.-S. Park, Development of immersive virtual reality-based hand rehabilitation system using a gesture-controlled rhythm game with vibrotactile feedback: an fNIRS pilot study. IEEE Transactions on Neural Systems and Rehabilitation Engineering 2023, 31, 3732–3743.

- Manikanavar, A.R. and S.B. Shirol. Gesture controlled assistive device for deaf, dumb and blind people using Raspberry-Pi. in 2022 International Conference on Smart Technologies and Systems for Next Generation Computing (ICSTSN). 2022. IEEE.

- Paterson, J. and A. Aldabbagh. Gesture-controlled robotic arm utilizing opencv. in 2021 3rd International Congress on Human-Computer Interaction, Optimization and Robotic Applications (HORA). 2021. IEEE.

- Seidu, I. and J.O. Lawal, Personalized Drone Interaction: Adaptive Hand Gesture Control with Facial Authentication. Int J Sci Res Sci Eng Technol 2024, 11, 43–60.

- Alanezi, M.A., et al., Obstacle avoidance-based autonomous navigation of a quadrotor system. Drones 2022, 6, 288.

- Xue, Z. and T. Gonsalves, Vision based drone obstacle avoidance by deep reinforcement learning. AI 2021, 2, 366–380.

- Ostovar, I., Nano-drones: enabling indoor collision avoidance with a miniaturized multi-zone time of flight sensor. 2022, Politecnico di Torino.

- Courtois, H., et al., OAST: Obstacle avoidance system for teleoperation of UAVs. IEEE Transactions on Human-Machine Systems 2022, 52, 157–168.

- Bouwmeester, R.J., F. Paredes-Vallés, and G.C. De Croon. Nanoflownet: Real-time dense optical flow on a nano quadcopter. in 2023 IEEE International Conference on Robotics and Automation (ICRA). 2023. IEEE.

- Khoza, N., P. Owolawi, and V. Malele. Drone Gesture Control using OpenCV and Tello. in 2024 Conference on Information Communications Technology and Society (ICTAS). 2024. IEEE.

- Zhang, N., et al., End-to-end nano-drone obstacle avoidance for indoor exploration. Drones 2024, 8, 33.

- Telli, K., et al., A comprehensive review of recent research trends on unmanned aerial vehicles (UAVs). Systems 2023, 11, 400.

- Bitcraze. Crazyflie 2.1. 2024 [cited 18 April 2025]; Available from: https://www.bitcraze.io/products/old-products/crazyflie-2-1/.

- Bitcraze. Crazyradio PA. 2025 [cited 18 April 2025]; Available from: https://www.bitcraze.io/products/crazyradio-pa/.

- Bitcraze. Flow deck v2. 2025 [cited 14 April 2025]; Available from: https://store.bitcraze.io/products/flow-deck-v2.

- Bitcraze. Multi-ranger deck. 2025 [cited 14 April 2025]; Available from: https://store.bitcraze.io/products/multi-ranger-deck.

- Bitcraze. AI-deck 1.1. 2025 [cited 14 April 2025]; Available from: https://store.bitcraze.io/products/ai-deck-1-1.

- Google. Hand landmarks detection guide. 2025 [cited 4 May 2025]; Available from: https://ai.google.dev/edge/mediapipe/solutions/vision/hand_landmarker.

- Bitcraze. Multi-ranger deck. 2025 [cited 17 April 2025]; Available from: https://www.bitcraze.io/products/multi-ranger-deck/.

- Yun, G., H. Kwak, and D.H. Kim, Single-Handed Gesture Recognition with RGB Camera for Drone Motion Control. Applied Sciences 2024, 14, 10230.

- Lee, J.-W. and K.-H. Yu, Wearable drone controller: Machine learning-based hand gesture recognition and vibrotactile feedback. Sensors 2023, 23, 2666.

- Natarajan, K., T.-H.D. Nguyen, and M. Mete. Hand gesture controlled drones: An open source library. in 2018 1st International Conference on Data Intelligence and Security (ICDIS). 2018. IEEE.

- Begum, T., I. Haque, and V. Keselj. Deep learning models for gesture-controlled drone operation. in 2020 16th International Conference on Network and Service Management (CNSM). 2020. IEEE.

- Khaksar, S., et al., Design and Evaluation of an Alternative Control for a Quad-Rotor Drone Using Hand-Gesture Recognition. Sensors 2023, 23, 5462.

- Moffatt, A., et al. Obstacle detection and avoidance system for small UAVs using a LiDAR. in, 2020.

- Karam, S., et al., Microdrone-based indoor mapping with graph slam. Drones 2022, 6, 352.

- Krul, S., et al., Visual SLAM for indoor livestock and farming using a small drone with a monocular camera: A feasibility study. Drones 2021, 5, 41.

- Backman, K., D. Kulić, and H. Chung, Reinforcement learning for shared autonomy drone landings. Autonomous Robots 2023, 47, 1419–1438.

- Schwalb, J., et al. A study of drone-based AI for enhanced human-AI trust and informed decision making in human-AI interactive virtual environments. in 2022 IEEE 3rd International Conference on Human-Machine Systems (ICHMS). 2022. IEEE.

- Sacoto-Martins, R., et al. Multi-purpose low latency streaming using unmanned aerial vehicles. in 2020 12th International Symposium on Communication Systems, Networks and Digital Signal Processing (CSNDSP). 2020. IEEE.

- Truong, N.Q., et al., Deep learning-based super-resolution reconstruction and marker detection for drone landing. IEEE Access 2019, 7, 61639–61655.

- Lee, S.H., Real-time edge computing on multi-processes and multi-threading architectures for deep learning applications. Microprocessors and Microsystems 2022, 92, 104554.

- Elbamby, M.S., et al., Toward low-latency and ultra-reliable virtual reality. IEEE Network 2018, 32, 78–84.

- Hu, B. and J. Wang, Deep learning based hand gesture recognition and UAV flight controls. International Journal of Automation and Computing 2020, 17, 17–29.

- Lyu, H. Detect and avoid system based on multi sensor fusion for UAV. in 2018 International Conference on Information and Communication Technology Convergence (ICTC). 2018. IEEE.

- Habib, Y., Monocular SLAM densification for 3D mapping and autonomous drone navigation. 2024, Ecole nationale supérieure Mines-Télécom Atlantique.

- Gao, J., et al., SwarmCVT: Centroidal Voronoi Tessellation-Based Path Planning for Very-Large-Scale Robotics. arXiv 2024, arXiv:2410.02510.

- Zhu, P., C. Liu, and S. Ferrari, Adaptive online distributed optimal control of very-large-scale robotic systems. IEEE Transactions on Control of Network Systems 2021, 8, 678–689.

- Zhu, P., C. Liu, and P. Estephan, A Novel Multivariate Skew-Normal Mixture Model and Its Application in Path-Planning for Very-Large-Scale Robotic Systems, in 2024 American Control Conference (ACC). 2024, IEEE: Toronto, Canada. pp. 3783-3790.

| Obstacle Location | Avoidance Success Rate (%) |
|---|---|
| Forward | 100 |
| Backward | 100 |
| Left | 100 |
| Right | 100 |
| Angled | 100 |

| Hand Gesture | Accuracy (%) |
|---|---|
| Pointing | 100 |
| Peace | 88 |
| Thumbs Up | 96 |
| Pinky | 100 |
| Open Hand | 100 |
| Fist | 100 |
| Okay | 100 |
| Love | 100 |

| Component | Deviation (cm) |
|---|---|
| Flight Path | 0 |
| Obstacle 1 | 11 |
| Obstacle 2 | 5 |
| Obstacle 3 | 4 |
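A deviation like those in the table above could be computed as the straight-line distance between a mapped position and its measured real-world counterpart. The sketch below assumes this Euclidean definition and coordinates in meters; the source does not state how deviation was calculated, and the function name and example coordinates are hypothetical.

```python
import math
from typing import Sequence


def deviation_cm(mapped_m: Sequence[float], measured_m: Sequence[float]) -> float:
    """Euclidean distance between a mapped and a measured position, in centimeters.

    Both positions are coordinate tuples in meters with the same dimension.
    """
    return 100.0 * math.dist(mapped_m, measured_m)


# Hypothetical coordinates for illustration only (not data from the paper).
mapped = (1.00, 0.00, 0.50)
measured = (1.03, 0.04, 0.50)
print(f"{deviation_cm(mapped, measured):.1f} cm")
```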

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).