Submitted:

19 May 2025

Posted:

19 May 2025


Abstract

Keywords:

1. Introduction

- Focused Temporal Analysis of rPPG: Establishes foundational insights into the capabilities and limitations of using exclusively temporal physiological information, providing a rigorous benchmark.

- Multi-scale Temporal Dynamics Encoder (MTDE): Effectively captures physiologically meaningful ANS responses across multiple timescales, addressing complexity in subtle temporal emotional signals.

- Adaptive Sparse Attention: Precisely identifies transient, emotionally relevant physiological segments amidst noisy rPPG data, significantly enhancing robustness.

- Gated Temporal Pooling: Aggregates emotional information across temporal chunks while mitigating noise and irrelevant features.

- Curriculum Learning Strategy: Systematically addresses learning complexities associated with weak labels, noise, and temporal sparsity, ensuring robust, stable model learning.

2. Related Work

2.1. The Physiological Signals for Emotion Recognition

2.2. Remote PPG Signal Extraction and Denoising

2.3. Emotion Recognition from rPPG/PPG

2.4. Comparison and Key Differences

- Temporal-only Focus: Clarifies inherent temporal limitations and potentials, establishing foundational benchmarks.

- Robust Aggregation Strategy: Gated pooling methodically filters noise, prioritizing emotionally informative temporal segments.

- Generalizable, Rigorous Evaluation: Utilizing weighted F1 metrics and unseen test validation enhances objective performance assessments, overcoming methodological shortcomings of previous studies [9].

3. Methodology

3.1. Dataset and Preprocessing

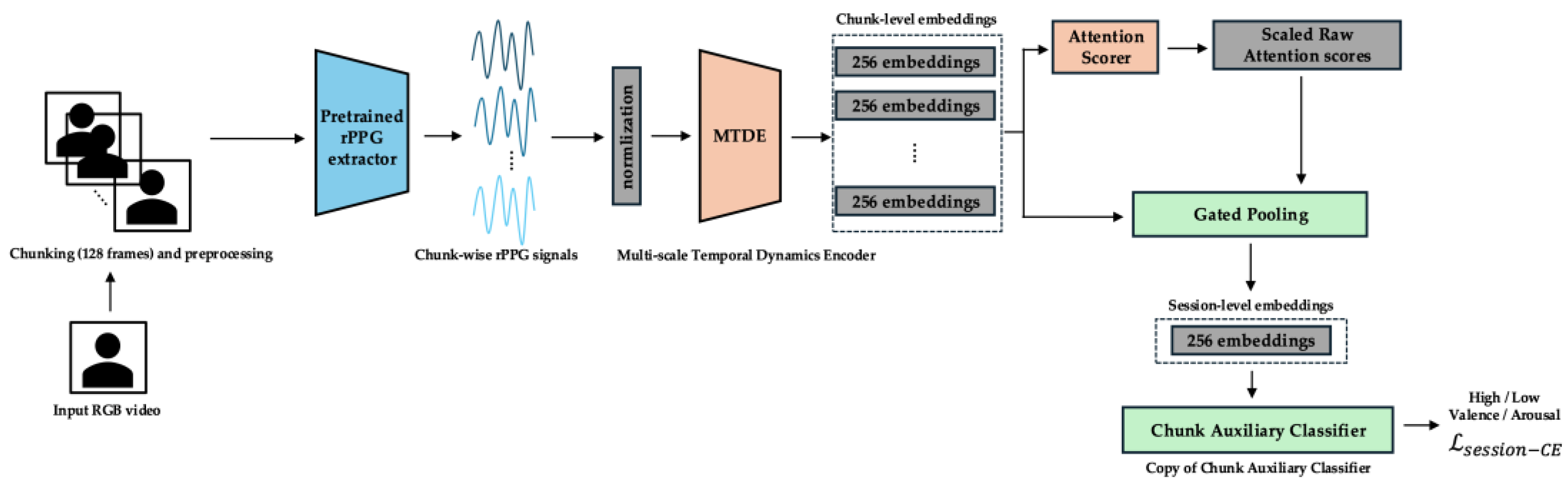

3.2. Overall Framework

3.3. Training Modules

3.3.2. Multi-scale Temporal Dynamics Encoder (MTDE)

3.3.3. AttnScorer

3.3.4. Auxiliary Components

3.3.5. GatedPooling

3.3.6. Main Classifier

3.4. Training Curriculum

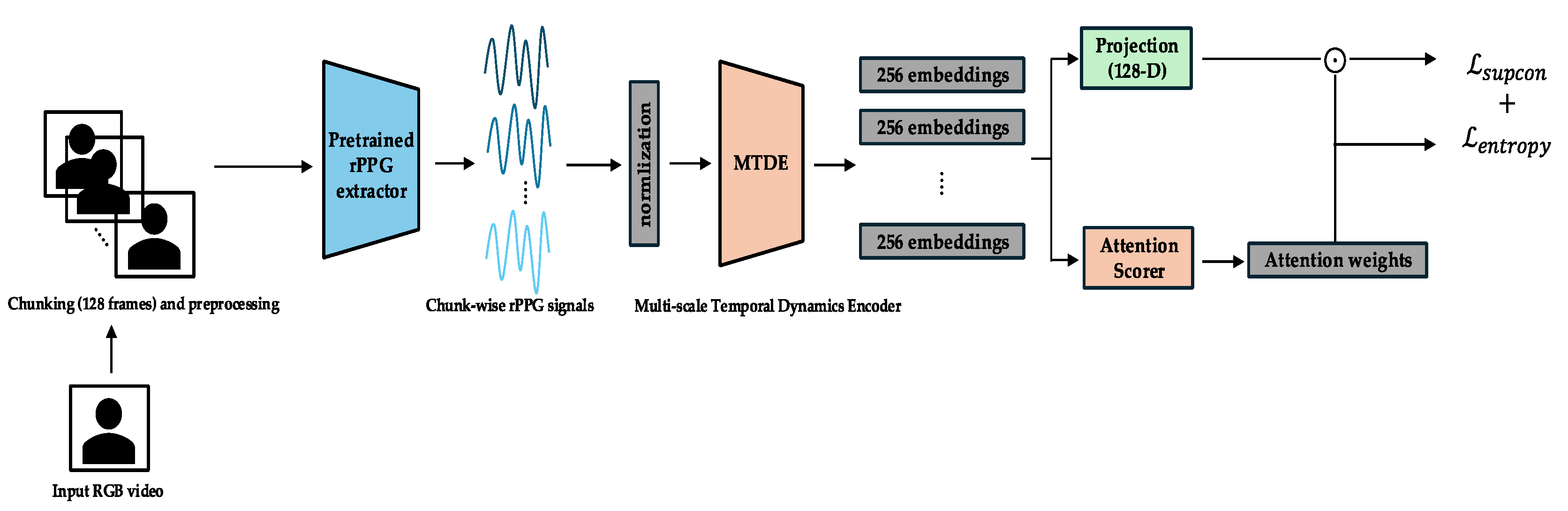

3.4.1. Phase 0 (Epochs 0–14): Exploration and Representation Learning.

- Physiological/Cognitive Link: This initial phase serves as an analogy to broad, unguided sensory exploration in biological systems. Before specific pattern recognition, a system first captures a wide array of sensory inputs to build a general understanding of the feature space. Similarly, the model focuses on encoding diverse physiological patterns within the rPPG signal across different time scales, irrespective of the final emotional labels, aiming to structure the embedding space based on inherent data characteristics and label proximity.

- Objective: The primary objective is to train the MTDE and related components (AttnScorer, ChunkProjection) to produce robust and diverse embedding representations for individual temporal chunks. During this phase, the GatedPooling module and the Main Classifier are not used for the primary loss computation.

- Primary Losses: The total loss in Phase 0 combines a Supervised Contrastive Loss with an Entropy Regularization Loss. The Supervised Contrastive Loss ($\mathcal{L}_{\mathrm{SupCon}}$) [12] is applied to the normalized embeddings from the ChunkProjection. It encourages embeddings of chunks originating from the same session (sharing the same label) to be closer in the representation space, while pushing embeddings from different sessions apart. This helps structure the embedding space according to emotional labels and promotes representation diversity:

  $$\mathcal{L}_{\mathrm{SupCon}} = \sum_{i \in I} \frac{-1}{|P(i)|} \sum_{p \in P(i)} \log \frac{\exp(z_i \cdot z_p / \tau)}{\sum_{a \in A(i)} \exp(z_i \cdot z_a / \tau)}$$

  Here, $I$ is the set of anchor indices in the batch, $A(i)$ is the set of all indices in the batch except $i$, $P(i)$ is the set of indices of positive samples (same label) as $i$, $z$ represents the normalized embedding vectors, and $\tau$ is the temperature parameter. The Entropy Regularization Loss ($\mathcal{L}_{\mathrm{Ent}}$) is applied to the AttnScorer's internal Softmax attention output. Weighted by a coefficient detailed in Appendix C, this loss encourages the initial attention distribution to be more uniform across chunks, promoting broader exploration of temporal features by the MTDE. The temperature parameter $\tau$ for $\mathcal{L}_{\mathrm{SupCon}}$ is adaptively scheduled (Appendix C) based on the complexity of learned attention distributions, facilitating effective contrastive learning alongside exploration. The overall loss for this phase is the weighted sum of $\mathcal{L}_{\mathrm{SupCon}}$ and $\mathcal{L}_{\mathrm{Ent}}$.
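The Phase 0 objective can be illustrated with a minimal, dependency-free sketch. It follows the supervised contrastive formulation of [12], averaged over anchors that have at least one positive, and treats the entropy regularizer as the negative entropy of the attention weights. The function names, the averaging, the sign convention, and the default values of `tau` and `entropy_weight` are assumptions made for illustration, not the authors' implementation.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit Euclidean norm."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def supcon_loss(embeddings, labels, tau=0.1):
    """Supervised contrastive loss, averaged over anchors with >= 1 positive."""
    z = [l2_normalize(v) for v in embeddings]
    n = len(z)
    total, n_anchors = 0.0, 0
    for i in range(n):
        others = [a for a in range(n) if a != i]                    # A(i)
        positives = [p for p in others if labels[p] == labels[i]]   # P(i)
        if not positives:
            continue
        sims = {a: sum(x * y for x, y in zip(z[i], z[a])) / tau for a in others}
        # Log-sum-exp over A(i), shifted by the max for numerical stability.
        m = max(sims.values())
        log_denom = m + math.log(sum(math.exp(s - m) for s in sims.values()))
        total += -sum(sims[p] - log_denom for p in positives) / len(positives)
        n_anchors += 1
    return total / n_anchors if n_anchors else 0.0

def attention_entropy(weights, eps=1e-12):
    """Shannon entropy of an attention distribution over chunks."""
    return -sum(w * math.log(w + eps) for w in weights)

def phase0_loss(embeddings, labels, attn_weights, tau=0.1, entropy_weight=0.1):
    # Assumption: the regularizer is the negative entropy, so minimizing the
    # total loss pushes the attention distribution toward uniform.
    entropy_reg = -attention_entropy(attn_weights)
    return supcon_loss(embeddings, labels, tau) + entropy_weight * entropy_reg
```

With embeddings that cluster by label, `supcon_loss` is close to zero; assigning the same embeddings labels that cut across the clusters yields a much larger value, which is the behaviour the contrastive term is meant to penalize.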

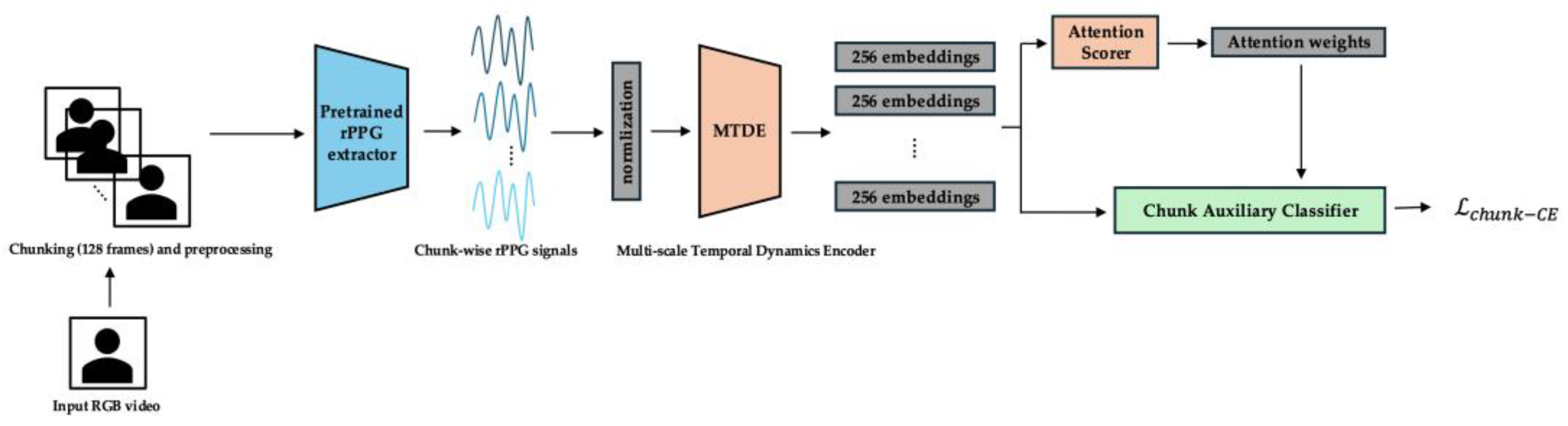

3.4.2. Phase 1 (Epochs 15–29): Chunk-level Discrimination and Attentional Refinement.

- Physiological/Cognitive Link: This phase simulates the development of selective attention and fine discrimination. After initial broad exploration, a biological system learns to differentiate between stimuli and focus processing on the most relevant or challenging aspects. In this phase, the model refines its ability to discriminate between emotional classes specifically at the chunk level, learning to focus its attention on the temporal segments that are most informative or difficult to classify amidst noise.

- Objective: To significantly enhance the discriminative capacity of the individual chunk embeddings and to refine the AttnScorer's ability to identify emotionally salient temporal segments. During this phase, the ChunkProjection module and its loss are frozen. The MTDE, AttnScorer, and ChunkAuxClassifier are actively trained. The GatedPooling module's parameters also begin training from epoch 25, preparing for the final session-level task.

- Primary Losses: The total loss in Phase 1 combines a Chunk-level Cross-Entropy Loss with a gradually introduced Session-level Cross-Entropy Loss. The Chunk-level Cross-Entropy Loss ($\mathcal{L}_{\mathrm{Chunk}}$) is computed with the ChunkAuxClassifier. To handle potential class imbalance at the chunk level and to focus learning on challenging examples, we employ Focal Loss [41] with focusing parameter $\gamma$ (Appendix C):

  $$\mathcal{L}_{\mathrm{Chunk}} = -(1 - p_t)^{\gamma} \log(p_t)$$

  where $p_t$ is the predicted probability of the true class for a chunk.
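As a small illustration of why Focal Loss [41] shifts the emphasis toward hard chunks, the sketch below evaluates the basic form $-(1 - p_t)^{\gamma} \log(p_t)$ for single predictions. The value `gamma=2.0` is only an illustrative default and is not taken from this paper; the function names are hypothetical.

```python
import math

def focal_loss(p_true, gamma=2.0):
    """Focal loss for one chunk, given the predicted probability of its true class.

    gamma = 0 recovers the ordinary cross-entropy; larger gamma down-weights
    chunks that are already classified with high confidence.
    """
    p = min(max(p_true, 1e-12), 1.0)  # clamp to avoid log(0)
    return -((1.0 - p) ** gamma) * math.log(p)

def mean_focal_loss(p_true_batch, gamma=2.0):
    """Average focal loss over a batch of chunk predictions."""
    return sum(focal_loss(p, gamma) for p in p_true_batch) / len(p_true_batch)

# An easy chunk (p_t = 0.9) contributes far less than a hard one (p_t = 0.3).
easy = focal_loss(0.9)
hard = focal_loss(0.3)
```

In this example the easy chunk's loss is scaled by $(1 - 0.9)^2 = 0.01$ relative to cross-entropy, while the hard chunk keeps roughly half of its cross-entropy value, so gradient signal concentrates on the difficult segments.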

3.4.3. Phase 2 (Epochs ≥ 30): Session-level Exploitation and Fine-tuning.

- Physiological/Cognitive Link: This final phase is analogous to integrating filtered and relevant information to make a final decision or judgment. The system leverages its refined chunk representations and attentional mechanisms to consolidate evidence from the most salient and informative features identified across time, leading to the final emotional inference.

- Objective: To optimize the entire end-to-end pipeline for the final session-level emotion recognition task. In this phase, the ChunkAuxClassifier and its associated loss are removed. The Main Classifier is initialized using the trained weights from the ChunkAuxClassifier at the start of epoch 30. The MTDE, AttnScorer, GatedPooling, and the Main Classifier are all actively trained. The full pipeline shown in Figure 1 is operational.

- Primary Loss Function: The sole objective function in Phase 2 is the Session-level Cross-Entropy Loss ($\mathcal{L}_{\mathrm{Session}}$), applied to the output of the Main Classifier, which operates on the GatedPooling session embedding. Its weight ramps up from 0.5 (at epoch 30) towards 1.0 (schedule in Appendix C) to become the primary focus:

  $$\mathcal{L}_{\mathrm{Session}} = -\sum_{c=1}^{C} y_c \log \hat{y}_c$$

  Here, $y$ is the one-hot encoded ground truth label for the session, $\hat{y}$ is the predicted probability distribution over classes from the Main Classifier, and $C$ is the number of classes. During this phase, the AttnScorer is fine-tuned at a reduced learning rate (scaling factor in Appendix C). Additionally, the $\alpha$ value of the α-Entmax transformation within the GatedPooling module is annealed from 1.5 (at epoch 30) to 1.8 (at epoch 50) (schedule in Appendix C). This increases the sparsity of the temporal attention applied during aggregation, further refining the focus on the most crucial temporal segments and their gated features for the final prediction.
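The Phase 2 quantities described above can be sketched as follows. The cross-entropy is the standard form for a one-hot label; the linear shape of the α annealing between epochs 30 and 50 is an assumption, since the text gives only the endpoints, and the helper names are hypothetical.

```python
import math

def linear_ramp(epoch, start_epoch, end_epoch, start_value, end_value):
    """Linearly interpolate a scheduled value, clamped outside the ramp."""
    if epoch <= start_epoch:
        return start_value
    if epoch >= end_epoch:
        return end_value
    frac = (epoch - start_epoch) / (end_epoch - start_epoch)
    return start_value + frac * (end_value - start_value)

def gated_pooling_alpha(epoch):
    """Alpha for the alpha-Entmax in GatedPooling: 1.5 at epoch 30, 1.8 at epoch 50.

    Assumption: the interpolation between the two stated endpoints is linear.
    """
    return linear_ramp(epoch, 30, 50, 1.5, 1.8)

def session_cross_entropy(probs, one_hot, eps=1e-12):
    """Cross-entropy between a one-hot session label and predicted probabilities."""
    return -sum(y * math.log(max(p, eps)) for y, p in zip(one_hot, probs))

# Example: a two-class session whose true class is predicted with probability 0.8.
loss = session_cross_entropy([0.2, 0.8], [0, 1])
```

Larger α values make the Entmax output sparser, so a schedule of this kind gradually concentrates the pooled representation on fewer chunks as training proceeds.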

3.5. Evaluation Metrics

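- Accuracy: Defined as the proportion of correctly classified sessions out of the total number of sessions in the test set: $\mathrm{Accuracy} = \dfrac{\text{number of correctly classified sessions}}{\text{total number of sessions}}$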

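- Weighted F1-score: This metric is calculated based on the Precision ($P_c$), Recall ($R_c$), and F1-score ($F1_c$) for each individual class $c$. The formulas for these class-specific metrics are:

  $$P_c = \frac{TP_c}{TP_c + FP_c}, \qquad R_c = \frac{TP_c}{TP_c + FN_c}, \qquad F1_c = \frac{2 \, P_c \, R_c}{P_c + R_c}$$

  where $TP_c$, $FP_c$, and $FN_c$ are the numbers of true positives, false positives, and false negatives for class $c$. The weighted F1-score averages $F1_c$ over classes, weighting each class by its share of sessions in the test set.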
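The two metrics can be computed directly from a confusion matrix. The sketch below uses the valence confusion matrix reported in Appendix D as input; the function name and the row/column convention (rows are actual classes, columns are predicted classes) are choices made for this illustration.

```python
def metrics_from_confusion(cm):
    """Return (accuracy, per-class F1 list, weighted F1) from a confusion matrix.

    cm[i][j] is the number of sessions whose actual class is i and whose
    predicted class is j.
    """
    n_classes = len(cm)
    total = sum(sum(row) for row in cm)
    accuracy = sum(cm[c][c] for c in range(n_classes)) / total
    f1_per_class = []
    weighted_f1 = 0.0
    for c in range(n_classes):
        tp = cm[c][c]
        fp = sum(cm[r][c] for r in range(n_classes)) - tp
        fn = sum(cm[c]) - tp
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        f1_per_class.append(f1)
        weighted_f1 += (sum(cm[c]) / total) * f1  # weight by class support
    return accuracy, f1_per_class, weighted_f1

# Valence confusion matrix from Appendix D: rows = actual (Low, High).
valence_cm = [[13, 10], [10, 20]]
acc, f1s, wf1 = metrics_from_confusion(valence_cm)
print(f"accuracy={acc:.4f}, F1(high)={f1s[1]:.4f}, weighted F1={wf1:.4f}")
# prints: accuracy=0.6226, F1(high)=0.6667, weighted F1=0.6226
```

These values agree with the valence accuracy (62.26%), positive-class F1 (66.67%), and weighted F1 (62.26%) reported in the paper.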

3.6. Baseline

3.7. Experimental Setup

4. Results

4.1. Main Results

- Accuracy: 66.04% vs. 61.31%

- Positive-class F1-score: 74.29% vs. 50.96%

- Weighted F1-score: 61.97% vs. 59.46%

- Physiological limitations: Arousal is closely associated with autonomic nervous system (ANS) activity—particularly sympathetic arousal—which is effectively captured through heart rate and HRV patterns inherent in rPPG signals [3, 22, 30, 39]. In contrast, valence is more intricately tied to subtle physiological cues, such as facial muscle activity (e.g., EMG) or cortical patterns, which are not sufficiently reflected in peripheral cardiovascular dynamics [39].

- Modality constraints: The use of spatially averaged 1D temporal rPPG precludes access to fine-grained spatial information, such as facial blood flow asymmetries, which have been shown to correlate with valence [34, 27].

- Data imbalance: A notable class imbalance in valence labels, both in the overall dataset and particularly within the test set, may contribute to biased predictions and hinder generalization performance.

4.2. Ablation Studies

4.2.1. Ablation Study on Pooling and Attention Mechanisms (Arousal)

4.2.2. Ablation Study on the Training Curriculum (Arousal)

- Phase 0 (Contrastive learning) significantly enhances the diversity and expressiveness of learned representations.

- Phase 1 (Chunk-level weak supervision) improves the model’s ability to localize and distinguish emotionally salient segments.

- Phase 2 (Session-level classification) yields optimal results only when preceded by these preparatory stages.

4.3. Computational Efficiency

5. Discussion

5.1. Limitations

5.2. Future work

6. Conclusions

7. Patents

Code Availability

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
| --- | --- |
| Acc | Accuracy |
| ANS | Autonomic Nervous System |
| AttnScorer | Attention Scorer |
| BVP | Blood Volume Pulse |
| CE | Cross-Entropy |
| CM | Confusion Matrix |
| CNN | Convolutional Neural Network |
| EDA | Electrodermal Activity |
| HRV | Heart Rate Variability |
| MIL | Multiple Instance Learning |
| MTDE | Multi-scale Temporal Dynamics Encoder |
| rPPG | remote Photoplethysmography |
| WF1 | Weighted F1-score |

Appendix A. Architecture Details of MTDE

A.1. Architecture Overview

A.2. Physiological Rationale & Receptive Fields

| Branch | Kernel | Dilation | Effective RF | Approx. Duration | Physiological Role |
| --- | --- | --- | --- | --- | --- |
| Short | 3 | 3 | 6 | ∼0.2 s | Pulse upslope, sympathetic ramp |
| Medium | 5 | 8 | 66 | ∼2.2 s | High-frequency HRV |
| Long | 3 | 32 | 129 | ∼4.3 s | Multi-cycle ANS modulation |

A.3. Pooling Layer: SoftmaxPool

Appendix B. Attention Modules: AttnScorer and GatedPooling

B.1. AttnScorer: Phase-aware Attention Scoring

B.1.1. Architecture

- 2-layer MLP: Linear(D, D/2) → GELU → Linear(D/2, 1)

- Zero-mean score normalization, with scaling by an EMA-based adjustment

- Raw score scaling
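The scoring path listed above can be sketched without any deep learning framework. The example applies a Linear(D, D/2) → GELU → Linear(D/2, 1) mapping to each chunk embedding and then subtracts the mean score across chunks. The random initialization, the embedding size `D = 8`, and the helper names are assumptions for illustration, and the EMA-based scaling is omitted because its exact form is not given here.

```python
import math
import random

def gelu(x):
    """Exact GELU activation using the Gaussian error function."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

def linear(x, weight, bias):
    """Dense layer: weight has shape (out_dim, in_dim), bias has shape (out_dim,)."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weight, bias)]

def init_linear(rng, in_dim, out_dim):
    """Hypothetical uniform initialization; any standard scheme would do."""
    scale = 1.0 / math.sqrt(in_dim)
    weight = [[rng.uniform(-scale, scale) for _ in range(in_dim)] for _ in range(out_dim)]
    return weight, [0.0] * out_dim

def score_chunks(chunks, params):
    """Score every chunk with the 2-layer MLP, then zero-center the scores."""
    (w1, b1), (w2, b2) = params
    raw = []
    for x in chunks:
        hidden = [gelu(h) for h in linear(x, w1, b1)]
        raw.append(linear(hidden, w2, b2)[0])
    mean = sum(raw) / len(raw)
    return [s - mean for s in raw]

rng = random.Random(0)
D = 8  # illustrative embedding size, not the paper's value
params = (init_linear(rng, D, D // 2), init_linear(rng, D // 2, 1))
chunks = [[rng.gauss(0.0, 1.0) for _ in range(D)] for _ in range(5)]
scores = score_chunks(chunks, params)
```

Zero-centering keeps the scores comparable across sessions regardless of any constant offset learned by the MLP, which matters when the same scores are later passed through Softmax or Entmax.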

B.1.2. Phase-dependent Attention Mechanism

| Phase | Epoch Range | Attention Type | Notes |
| --- | --- | --- | --- |
| 0 | 0–14 | Softmax (with temperature τ) | Encourages diversity |
| 1 | 15–29 | α-Entmax (adaptive α) | Sharp, sparse, differentiable Top-K |
| 2 | ≥30 | Raw scores only | Passed to GatedPooling |
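To make the phase-dependent behaviour concrete, the sketch below implements α-Entmax with a simple bisection search for the threshold and dispatches between the three attention types by epoch. It relies on the standard definition $p_i = [(\alpha - 1) s_i - \tau]_+^{1/(\alpha - 1)}$ with $\tau$ chosen so that the outputs sum to one. The function names, the fixed iteration count, and the default `alpha` and `temperature` values are assumptions, not the authors' code.

```python
import math

def softmax(scores, temperature=1.0):
    """Temperature-scaled softmax, shifted by the max for stability."""
    m = max(scores)
    exps = [math.exp((s - m) / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def entmax_bisect(scores, alpha=1.5, n_iter=60):
    """Alpha-Entmax (alpha > 1) via bisection on the threshold tau."""
    if alpha <= 1.0:
        raise ValueError("alpha must be > 1; alpha -> 1 corresponds to softmax")
    n = len(scores)
    z = [(alpha - 1.0) * s for s in scores]
    exponent = 1.0 / (alpha - 1.0)

    def probs(tau):
        return [max(zi - tau, 0.0) ** exponent for zi in z]

    # At lo the largest entry alone contributes 1, so the sum is >= 1;
    # at hi every entry is at most 1/n, so the sum is <= 1.
    lo = max(z) - 1.0
    hi = max(z) - (1.0 / n) ** (alpha - 1.0)
    for _ in range(n_iter):
        mid = 0.5 * (lo + hi)
        if sum(probs(mid)) >= 1.0:
            lo = mid
        else:
            hi = mid
    p = probs(lo)
    total = sum(p)
    return [pi / total for pi in p]

def attention_for_epoch(scores, epoch, temperature=1.0, alpha=1.5):
    """Select the attention type according to the phase boundaries in the table."""
    if epoch < 15:            # Phase 0: dense softmax attention
        return softmax(scores, temperature)
    if epoch < 30:            # Phase 1: sparse alpha-Entmax attention
        return entmax_bisect(scores, alpha)
    return list(scores)       # Phase 2: raw scores, passed on to GatedPooling

weights = entmax_bisect([1.0, 0.0, -3.0], alpha=1.5)
```

In the example call, the lowest-scoring chunk receives exactly zero weight, whereas Softmax would assign it a small positive weight; this exact sparsity is what allows Entmax to act like a differentiable Top-K selection.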

B.1.3. Entmax Scheduling during Phase 1.

B.2. GatedPooling: Sparse Temporal Aggregation

Appendix C. Phase-wise Training Schedule

| Phase | Epochs | Objective | Active Modules |
| --- | --- | --- | --- |
| 0 | 0–14 | Embedding diversity (SupCon) | MTDE, AttnScorer, ChunkProjection |
| 1 | 15–29 | Chunk-level discrimination | + ChunkAuxClassifier, GatedPooling (E ≥ 25) |
| 2 | 30–49 | Session-level classification | GatedPooling, Classifier |

| Epoch | Top-K Ratio | SupCon weight | CE weight | Entropy weight | τ (Temp) | α (AttnScorer) | α (Gated) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 0 | 0.0 | 1.00 | 0.0 | 0.1 | 1.2 | — | — |
| 14 | 0.0 | 0.44 | 0.0 | 0.1 | 0.7 | — | — |
| 15 | 0.6 | 0.0 | 0.5 | 0.0 | 1.0 | 1.0 | — |
| 25 | 0.3 | 0.0 | 0.5 | 0.0 | 1.0 | 1.7 | start = 1.7 |
| 30 | — | 0.0 | 0.7 | 0.0 | 1.0 | raw only | 1.7 → 2.0 |
| 50 | — | 0.0 | 1.0 | 0.0 | 1.0 | raw only | 2.0 |

Appendix D. Confusion Matrices

| | Predicted Low | Predicted High |
| --- | --- | --- |
| Actual Low | 9 | 18 |
| Actual High | 0 | 26 |

- Accuracy: 66.04%

- Weighted F1-score: 61.97%

- The model shows strong sensitivity to high arousal states (recall: 100%), with most misclassifications occurring in the low-arousal category.

| | Predicted Low | Predicted High |
| --- | --- | --- |
| Actual Low | 13 | 10 |
| Actual High | 10 | 20 |

- Accuracy: 62.26%

- Weighted F1-score: 62.26%

- The model demonstrates relatively balanced performance but reveals confusion between low and high valence categories, indicating the nuanced nature of valence detection from unimodal temporal signals.

References

1. Calvo, R.A.; D'Mello, S. Affective computing and education: Learning about feelings. IEEE Trans. Affect. Comput. 2010, 1, 161–164.

2. Picard, R.W. Affective Computing; MIT Press: Cambridge, MA, USA, 1997.

3. Kreibig, S.D. Autonomic nervous system activity in emotion: A review. Biol. Psychol. 2010, 84, 394–421.

4. Cacioppo, J.T.; Gardner, W.L.; Berntson, G.G. The affect system has parallel and integrative processing components: Form follows function. J. Pers. Soc. Psychol. 1999, 76, 839–855.

5. Posada, F.; Russell, J.A. The affective core of emotion. Annu. Rev. Psychol. 2005, 56, 807–838.

6. Elman, J.L. Learning and development in neural networks: The importance of starting small. Cognition 1993, 48, 71–99.

7. Dietterich, T.G.; Lathrop, R.H.; Lozano-Pérez, T. Solving the multiple instance problem with axis-parallel rectangles. Artif. Intell. 1997, 89, 31–71.

8. Itti, L.; Koch, C.; Niebur, E. A model of saliency-based visual attention for rapid scene analysis. IEEE Trans. Pattern Anal. Mach. Intell. 1998, 20, 1254–1259.

9. Mellouk, W.; Handouzi, W. Deep Learning-Based Emotion Recognition Using Contactless PPG Signals. Appl. Sci. 2023, 13, 7009.

10. Mauss, I.B.; Robinson, M.D. Measures of emotion: A review. Cogn. Emot. 2009, 23, 209–237.

11. Svanberg, J. Emotion recognition from physiological signals. Master's Thesis, KTH Royal Institute of Technology, Stockholm, Sweden, 2019.

12. Khosla, P.; Teterwak, P.; Wang, C.; Xiao, Y.; Anand, A.; Zhu, H.; Wang, Y. Supervised contrastive learning. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Virtual Conference, 6–12 December 2020; Volume 33, pp. 1602–1613.

13. Li, X.; Wang, X. Gated convolution networks for semantic segmentation. arXiv 2018, arXiv:1804.01033.

14. Liu, X.; Yu, Z.; Zhang, J.; Zhao, Y.; Zhou, J. RPPG-Toolbox: A Benchmark for Remote PPG Methods. IEEE Trans. Biomed. Eng. 2022, 70, 605–615.

15. McDuff, D.; Estepp, M.; Piasecki, N.; Blackford, E. Remote physiological measurement: Opportunities and challenges. IEEE Consum. Electron. Mag. 2017, 6, 62–70.

16. Peters, J.F.; Martins, A. Sparse attentive backtracking and the alpha-entmax. arXiv 2019, arXiv:1905.05055.

17. Agrafioti, F.; Mayosi, B.; Tarassenko, L. Robust emotion recognition from physiological signals using spectral features. In Proceedings of the IEEE International Conference on Multimedia and Expo (ICME), Melbourne, VIC, Australia, 9–13 July 2012; pp. 562–567.

18. Greene, D.; Tarassenko, L. Capturing the Multi-Scale Temporal Dynamics of Physiological Signals using a Temporal Convolutional Network for Emotion Recognition. In Proceedings of the Affective Computing and Intelligent Interaction Workshops (ACIIW), Cambridge, UK, 3–6 September 2019.

19. Corbetta, M.; Shulman, G.L. Control of goal-directed and stimulus-driven attention in the brain. Nat. Rev. Neurosci. 2002, 8, 201–215.

20. Xu, J.; Li, Z.; Wu, F.; Ding, Y.; Liu, T. Gated pooling for convolutional neural networks. IEEE Trans. Circuits Syst. Video Technol. 2020, 30, 2201–2213.

21. Soleymani, M.; Lichtenauer, J.; Pun, T.; Pantic, M. A multimodal database for affect recognition in response to movies. IEEE Trans. Affect. Comput. 2012, 3, 269–284.

22. Akselrod, S.; Gordon, D.; Ubel, J.B.; Shannon, D.C.; Berger, A.C.; Cohen, R.J. Power spectrum analysis of heart rate fluctuation: a quantitative probe of beat-to-beat cardiovascular control. Science 1981, 213, 220–222.

23. Vantriglia, F.; Morabito, F.C. A Survey on Remote Photoplethysmography and Its Applications. Sensors 2021, 21, 4071.

24. Wang, W.; den Brinker, A.C.; Stuijk, S.; de Haan, G. Algorithmic principles of remote PPG. IEEE Trans. Biomed. Eng. 2017, 64, 2757–2768.

25. Yu, Z.; Li, X.; Zhao, Y.; Chen, R.; Zhou, J. PhysNet: A Deep Learning Framework for Remote Physiological Measurement From Face Videos. IEEE Trans. Circuits Syst. Video Technol. 2020, 30, 2852–2863.

26. Zhou, W.; Zhang, J.; Hu, J.; Han, H.; Zhao, Y. Emotion Recognition from Remote Photoplethysmography: A Review. ACM Trans. Multimed. Comput. Commun. Appl. 2023, 19, 1–21.

27. Zhu, X.; Yu, Z.; Wang, Y.; Chen, R.; Liu, X.; Zhou, J. PhysFormer++: Robust Remote Physiological Measurement Guided by Multi-scale Fusion and Noise Modeling. IEEE Trans. Pattern Anal. Mach. Intell. 2024, 46, 603–619.

28. Zhu, X.; Yu, Z.; Wang, Y.; Chen, R.; Liu, X.; Zhou, J. PhysFormer: Robust Remote Physiological Measurement via Transformer. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 45, 8686–8704.

29. Zhou, K.; Schinle, M.; Stork, W. Dimensional emotion recognition from camera-based PRV features. Methods 2023, 218, 224–232.

30. Shaffer, F.; Ginsberg, J.P. An Overview of Heart Rate Variability Metrics and Norms. Front. Public Health 2017, 5, 258.

31. Marín-Morales, J.; Guixeres, J.; Cebrián, M.; Alcañiz, M.; Chicchi Giglioli, I.A. Affective Computing in Virtual Reality: Emotion Recognition from Physiological Signals Using Machine Learning and a Novel Open Dataset. Sensors 2021, 21, 1207.

32. Luo, C.; Xie, Y.; Yu, Z. PhysMamba: Efficient Remote Physiological Measurement with SlowFast Temporal Difference Mamba. arXiv 2024, arXiv:2409.12031.

33. Valenza, G.; Lanata, A.; Padgett, L.; Thayer, J.F.; Scilingo, E.P. Instantaneous autonomic evaluation during affective elicitation. Psychol. Sci. 2011, 22, 847–852.

34. Talala, F.; Bazi, Y.; Al Rahhal, M.M.; Al-Jandan, B. Emotion Classification Based on Pulsatile Images Extracted from Short Facial Videos via Deep Learning. Sensors 2024, 24, 2620.

35. Lee, C.Y.; Gallagher, P.; Tu, Z. Generalizing Pooling Functions in CNNs: Mixed, Gated, and Tree. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 372–385.

36. Yu, Z.; Zhou, X.; Chen, R.; Wang, Y.; Liu, X.; Zhou, J. RhythmFormer: Extracting rPPG Signals Based on Hierarchical Temporal Periodic Transformer. arXiv 2024, arXiv:2402.12788.

37. Briggs, F.; Mangun, G.R.; Usrey, W.M. Attention enhances Synaptic Efficacy and Signal-to-Noise in Neural Circuits. Nature 2013, 499, 476–480.

38. Bengio, Y.; Louradour, J.; Collobert, R.; Weston, J. Curriculum learning. In Proceedings of the 26th Annual International Conference on Machine Learning (ICML), Montreal, QC, Canada, 14–18 June 2009; pp. 41–48.

39. Sato, W.; Kochiyama, T. Dynamic relationships of peripheral physiological activity with subjective emotional experience. Front. Psychol. 2022, 13, 837085.

40. Li, X.; Chen, X.; Arslan, O.; Bilgin, G.; Al Machot, F. Emotion recognition from physiological signals: a review. In Proceedings of the International Conference on Intelligent Human Computer Interaction, Halifax, NS, Canada, 5–7 December 2018; pp. 223–233.

41. Lin, T.Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal Loss for Dense Object Detection. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 2980–2988.

| Method | Accuracy (%) | F1 of Positive (%) | Weighted F1 (%) |
| --- | --- | --- | --- |
| CNN-LSTM [9] | 61.31 | 50.96 | 59.46 |
| Ours* | 66.04* | 74.29* | 61.97* |

| Method | Accuracy (%) | F1 of Positive (%) | Weighted F1 (%) |
| --- | --- | --- | --- |
| CNN-LSTM [9] | 73.50 | 76.23 | 73.14 |
| Ours* | 62.26* | 66.67* | 62.26* |

| Method | Accuracy (%) | Weighted F1 (%) |
| --- | --- | --- |
| Ours (MTDE + Gated Pooling)* | 66.04* | 61.97* |
| MTDE + Attention Pooling | 50.94 | 47.56 |
| MTDE + Average Pooling | 50.94 | 39.07 |

| Method | Accuracy (%) | Weighted F1 (%) |
| --- | --- | --- |
| Full Curriculum (Phase 0→2)* | 66.04* | 61.97* |
| Phase 1 → Phase 2 | 61.22 | 57.46 |
| Phase 2 → Phase 2 (Init from Aux) | 54.72 | 45.88 |
| Phase 2 (Direct training) | 50.94 | 36.34 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).