Submitted: 14 May 2025

Posted: 14 May 2025

Abstract

Keywords:

1. Introduction

2. Literature Survey

2.1. Study On Human And Pedestrian Behaviour

2.2. Machine Learning Based Modeling

2.3. Deep Learning Based Modeling

2.4. Reinforcement Learning Based Modelling

2.5. Imitation Learning Based Modelling

3. Methodology

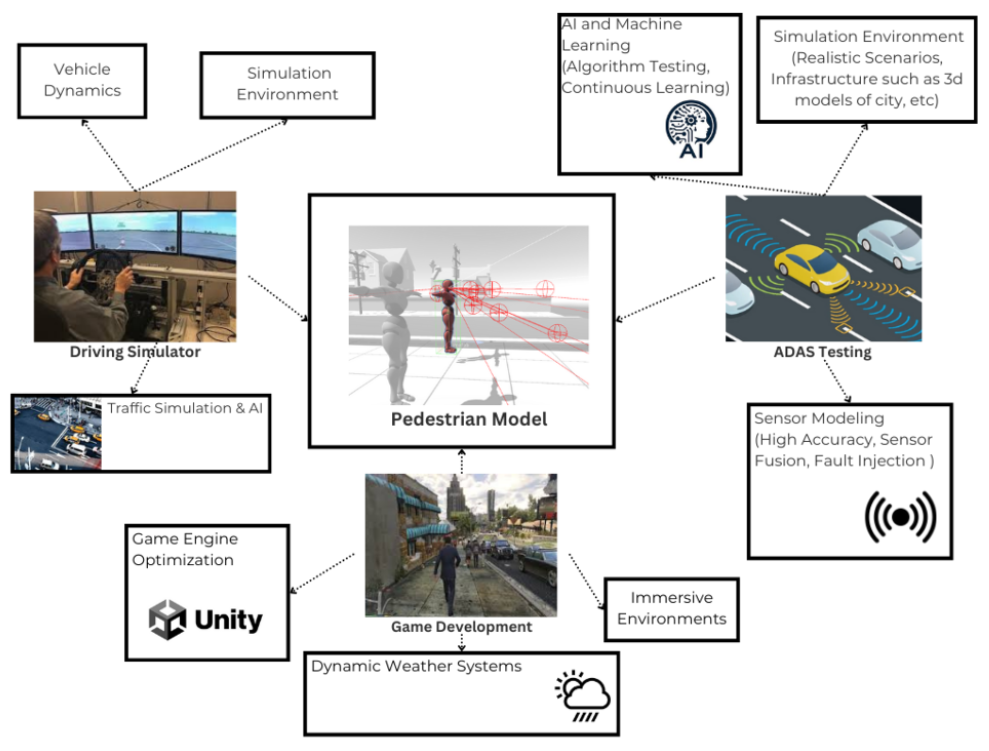

3.1. Overview

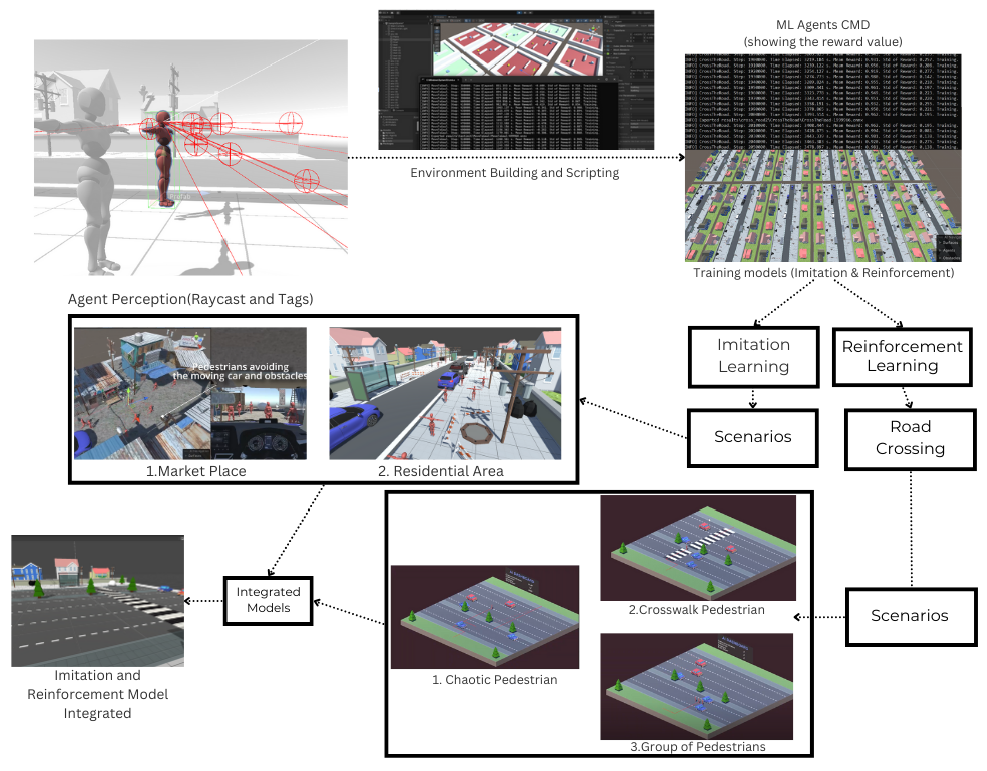

3.2. Model Development

3.2.1. Behavioural Cloning (BC)

3.2.1.1. Loss Function:

3.2.2. Generative Adversarial Imitation Learning (GAIL)

3.2.2.1. Equations:

3.2.3. Proximal Policy Optimization (PPO)

Working Mechanism:

Equations:
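
For reference, the clipped surrogate objective of PPO in its standard published form is reproduced here; the notation is the conventional one and may differ from the authors' own presentation. Here $r_t(\theta)$ is the probability ratio between the updated and the previous policy, $\hat{A}_t$ is the advantage estimate, and $\epsilon$ is the clipping range (the Epsilon hyperparameter, listed as 0.2 in the training table).

$$
r_t(\theta) = \frac{\pi_\theta(a_t \mid s_t)}{\pi_{\theta_{\text{old}}}(a_t \mid s_t)}, \qquad
L^{\text{CLIP}}(\theta) = \hat{\mathbb{E}}_t\left[\min\left(r_t(\theta)\,\hat{A}_t,\ \operatorname{clip}\left(r_t(\theta),\, 1-\epsilon,\, 1+\epsilon\right)\hat{A}_t\right)\right]
$$

With $\epsilon = 0.2$, the ratio is effectively confined to the interval $[0.8, 1.2]$, which limits how far a single update can move the policy.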

Analysis:

3.2.4. Hybrid Learning Model for Pedestrian Navigation

Key Features:

- Adaptive Learning: For routine navigation tasks, the agent relied on imitation learning, and it switched to reinforcement learning to handle dynamic traffic conditions.

- Reward System: A reinforcement-learning reward system rewarded fast, successful road crossings and penalized accidents and incorrect behaviours. Through repeated experience, the agent could progressively improve its strategy.
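
The two features above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `StepOutcome`, `select_policy`, and `step_reward`, the scalar `traffic_density` with its threshold, and every reward magnitude are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class StepOutcome:
    """What happened during one simulation step (hypothetical fields)."""
    reached_target: bool = False
    collided: bool = False
    left_walkable_area: bool = False


def select_policy(traffic_density: float, threshold: float = 0.5) -> str:
    """Pick the controller for the current situation.

    Assumption: one scalar in [0, 1] summarises how dynamic the traffic is.
    The 0.5 threshold is illustrative only.
    """
    return "reinforcement" if traffic_density >= threshold else "imitation"


def step_reward(outcome: StepOutcome, time_penalty: float = 0.001) -> float:
    """Reward fast, successful crossings; penalise accidents and rule breaks.

    All magnitudes are placeholders, not values from the paper.
    """
    reward = -time_penalty  # small per-step cost favours fast crossings
    if outcome.reached_target:
        reward += 1.0
    if outcome.collided:
        reward -= 1.0
    if outcome.left_walkable_area:
        reward -= 0.5
    return reward


if __name__ == "__main__":
    print(select_policy(0.2), select_policy(0.8))
    print(step_reward(StepOutcome(reached_target=True)))
```

Keeping the switch and the reward as separate functions means the switching rule can be changed without touching the reward shaping, and vice versa.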

3.2.5. Agent Perception And Deception

3.3. Recording The Demonstration

- Human-like Movements: The expert demonstrations showed how a pedestrian adjusts speed and direction in response to approaching objects (vehicles).

- Training Objectives: The recordings were analyzed to establish behaviours for the agent, such as stopping before crossing or waiting for a safe gap in traffic.

3.4. Scenario Design

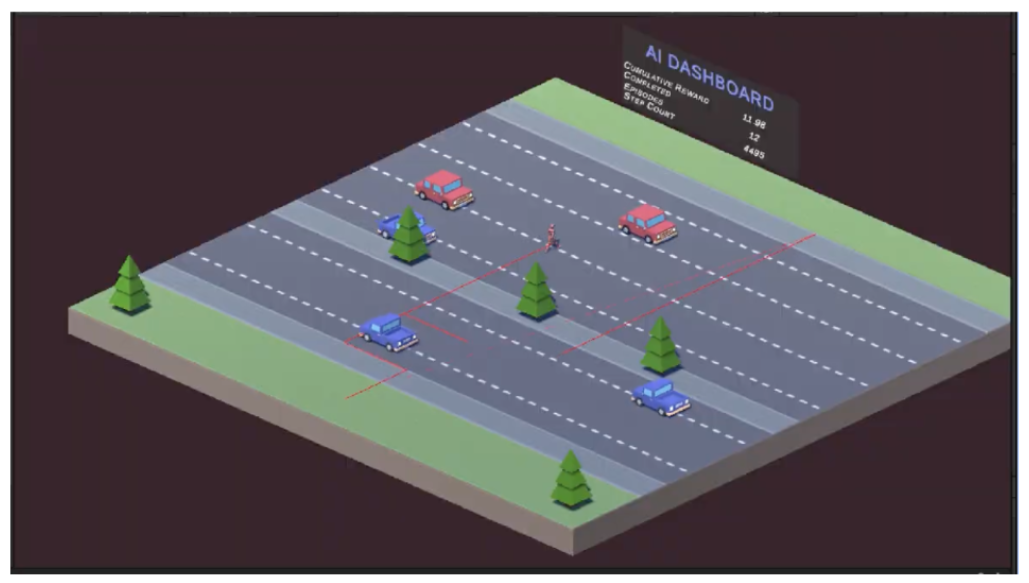

- Scene 1: In the scenario depicted in Figure 4, individuals cross the road without following any traffic laws. This scene assessed the agent’s ability to react quickly and safely when forced to make a decision in a dangerous situation.

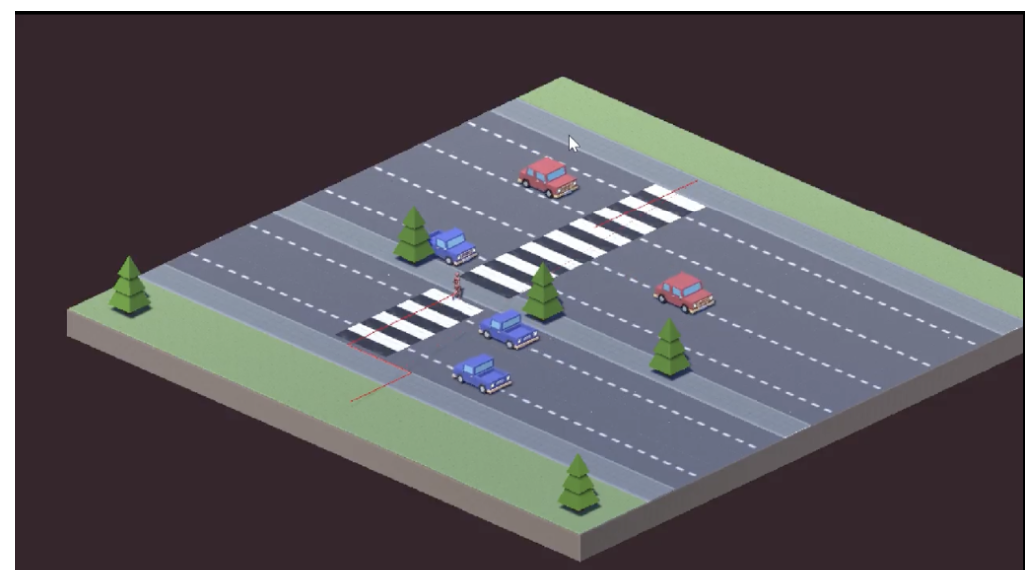

- Scene 2: As seen in Figure 5, the agent was required to detect and use the closest crosswalk, emphasizing the importance of structured, rule-based crossings.

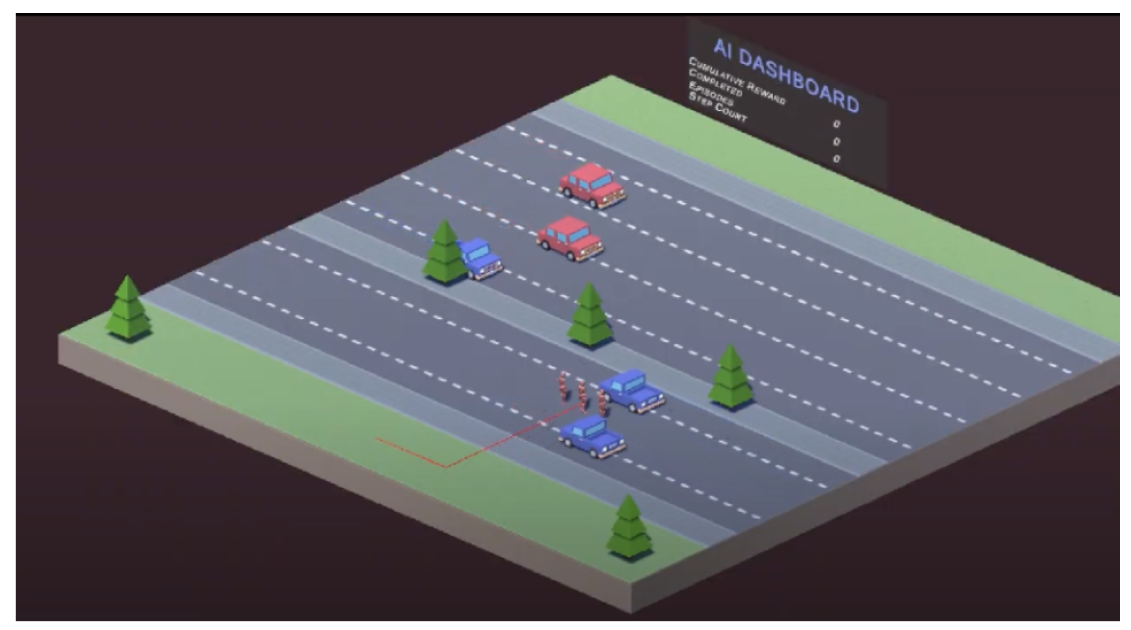

- Scene 3: Multiple agents cross the road at the same time, as shown in Figure 6, creating crowd dynamics and testing the agent’s social navigation abilities.

- Scene 4: The agent demonstrated its adaptability by switching from imitation learning to reinforcement learning when approaching a busy road for crossing, ensuring it could handle various situations effectively, as seen in Figure 7.

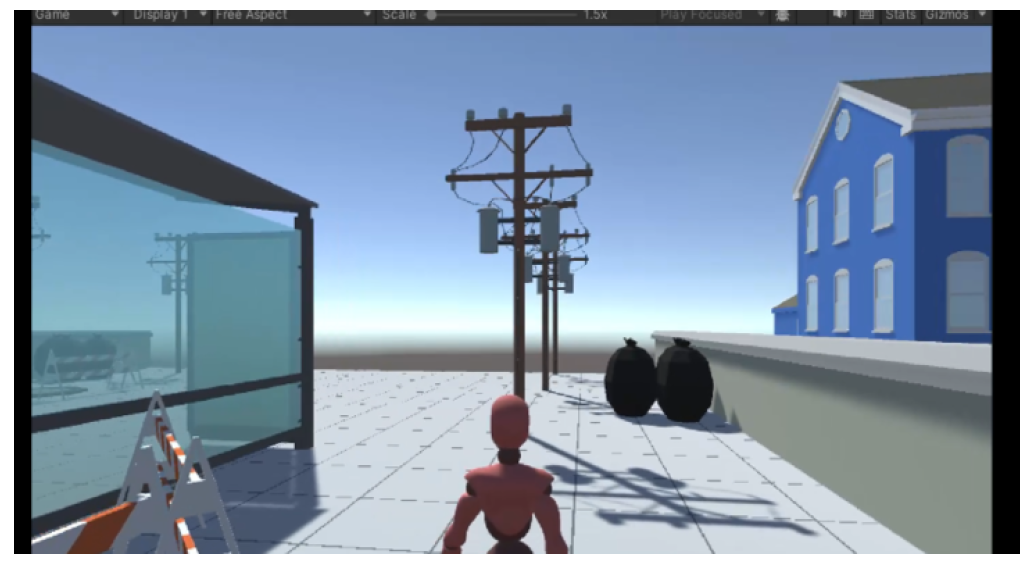

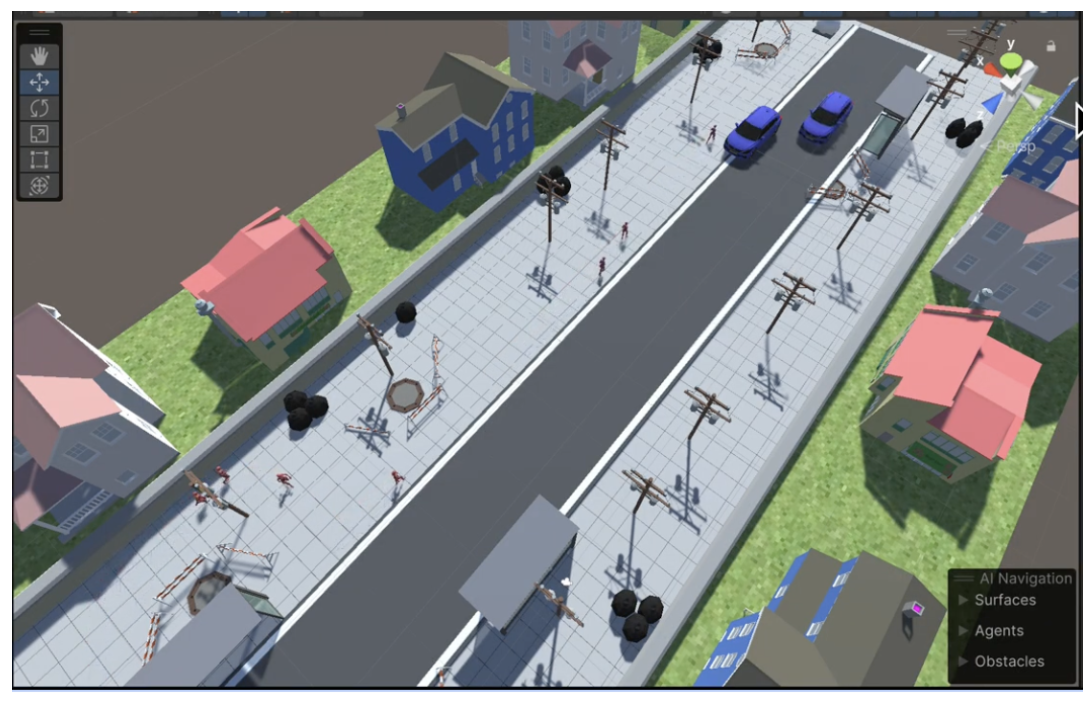

- Scene 5: This scenario models a realistic residential street with footpaths, houses, streetlights, and scattered obstacles, as shown in Figure 8. As the agents move, they must avoid these static obstacles and follow pedestrian rules for safe crossing. The scene simulates typical weekday pedestrian behaviour in a residential environment.

- Scene 6: The setting depicted in Figure 9 is that of a bustling marketplace filled with pedestrians meandering through numerous kiosks, vehicles, and barriers. The simulation provides an urban experience that reflects real-world conditions, testing the agent’s decision-making in a high-traffic area.

3.5. 3D Environment Design

- Flat Surface (Ground): A plane that serves as the base of the environment.

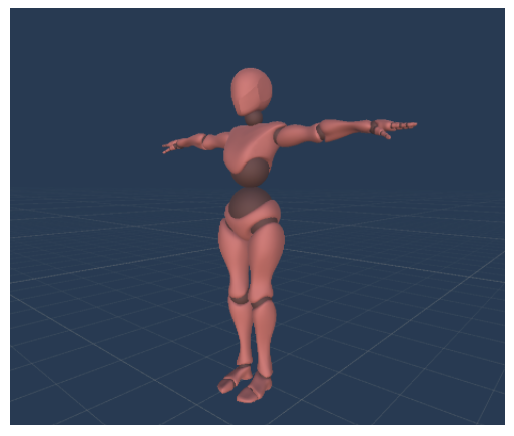

- Controllable Character (Figure 10): The agent-controlled character that learns to navigate the road safely through training.

- Surrounding Barriers (Walls): Walls confine the agent within the environment and stand in for real-world boundaries such as buildings or barriers.

- Designated Destination (Target Point): The point across the street that the agent must reach for a crossing to count as successful.

- Dynamic Obstacles (Vehicles and Roadblocks): Car models generate traffic, with adjustable parameters such as speed and density that allow the agent to be assessed.

3.6. Hyperparameters For Training

4. Results

4.1. Monte Carlo Simulation

4.1.1. Introduction

4.1.2. Inference

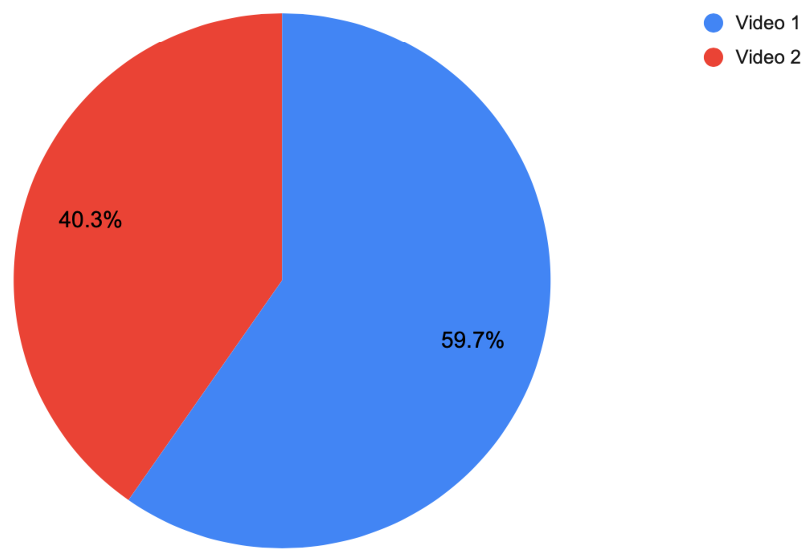

4.2. Turing Test

4.2.1. Introduction

4.2.2. Inference

| Age Group | AI-Controlled (Video 1) | Human-Controlled (Video 2) |
|---|---|---|
| 18-25 | 25 | 15 |
| 26-40 | 11 | 9 |
| 40+ | 5 | 5 |
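
The column totals and per-group shares follow directly from the table. The short sketch below only does that arithmetic; it assumes each cell is a count of responses, and it does not interpret what a response in either column means, since the table alone does not say. The variable names are illustrative.

```python
# Counts copied from the table: (Video 1 column, Video 2 column).
responses = {
    "18-25": (25, 15),
    "26-40": (11, 9),
    "40+": (5, 5),
}

video1_total = sum(v1 for v1, _ in responses.values())
video2_total = sum(v2 for _, v2 in responses.values())
total = video1_total + video2_total

# Share of responses falling in the Video 1 column, overall and per group.
video1_share = video1_total / total
share_by_group = {
    group: v1 / (v1 + v2) for group, (v1, v2) in responses.items()
}

if __name__ == "__main__":
    print(video1_total, video2_total, total)  # 41 29 70
    print(round(video1_share, 3))             # 0.586
    print(share_by_group)
```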

4.3. Replicating A Real-World Scenario

5. Discussion and Future Work

| Category | Opportunities |
|---|---|
| Data-related | Collecting extensive pedestrian motion data across different Indian cities; Incorporating real-time sensor data for dynamic model updates; Including seasonal and time-of-day variations. |
| Model-related | Adding additional pedestrian behaviours such as distraction, indecision, and reactions to noise; Enhancing interaction models between pedestrians and autonomous vehicles; Expanding diversity of pedestrians (children, elderly, etc.). |
| Simulation-related | Building more advanced environments with varied terrain features, traffic control systems, and unpredictable obstacles such as stray animals; Improving efficiency through GPU-cloud processing; Developing immersive AR/VR settings for training and urban development programs. |

5.1. Conclusion

Author Contributions

Acknowledgments

Conflicts of Interest


| Parameter | Road Crossing Scenario | Footpath Walking Scenario |
|---|---|---|
| Trainer Type | PPO (RL) | BC (IL) |
| Batch Size | 2048 | 128 |
| Buffer Size | 20480 | 2048 |
| Learning Rate | 0.0004 | 0.0003 |
| Beta | 0.00003 | 0.01 |
| Epsilon | 0.2 | 0.2 |
| Lambda | 0.95 | 0.95 |
| Num Epochs | 3 | 3 |
| Learning Rate Schedule | Linear | - |
| Network Normalization | True | False |
| Hidden Units | 256 | 512 |
| Num Layers | 2 | - |
| Reward Signal (Extrinsic) | Gamma: 0.99, Strength: 1.0 | Gamma: 0.99, Strength: 1.0 |
| Max Steps | 2,000,000 | |
| Time Horizon | 64 | 128 |
| Summary Frequency | 10,000 | 10,000 |
| Keep Checkpoints | 5 | 5 |
| Checkpoint Interval | 500,000 | 500,000 |
| Reward Signal (Curiosity) | - | Gamma: 0.99, Strength: 0.02 |
| GAIL Strength | - | 0.01 |
| GAIL Gamma | - | 0.99 |
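
As an illustration of how the Road Crossing column might be held in code, the sketch below stores it as a nested dictionary and derives two quantities from it. The key names and nesting are an assumption modelled on the layout of Unity ML-Agents trainer configuration files; the table itself does not name a toolkit. The helpers `updates_per_buffer` and `linear_lr` are hypothetical, and the linear schedule is assumed to decay to zero at Max Steps.

```python
# Road Crossing Scenario column, values copied from the table.
# Key names and grouping are assumed, not taken from the paper.
road_crossing = {
    "trainer_type": "ppo",
    "hyperparameters": {
        "batch_size": 2048,
        "buffer_size": 20480,
        "learning_rate": 4e-4,
        "beta": 3e-5,
        "epsilon": 0.2,
        "lambd": 0.95,
        "num_epoch": 3,
        "learning_rate_schedule": "linear",
    },
    "network_settings": {"normalize": True, "hidden_units": 256, "num_layers": 2},
    "reward_signals": {"extrinsic": {"gamma": 0.99, "strength": 1.0}},
    "max_steps": 2_000_000,
    "time_horizon": 64,
    "summary_freq": 10_000,
    "keep_checkpoints": 5,
    "checkpoint_interval": 500_000,
}


def updates_per_buffer(config: dict) -> int:
    """Number of full mini-batches contained in one experience buffer."""
    hp = config["hyperparameters"]
    return hp["buffer_size"] // hp["batch_size"]


def linear_lr(step: int, config: dict) -> float:
    """Learning rate at a given step, assuming plain linear decay to zero."""
    lr0 = config["hyperparameters"]["learning_rate"]
    fraction_left = max(0.0, 1.0 - step / config["max_steps"])
    return lr0 * fraction_left


if __name__ == "__main__":
    print(updates_per_buffer(road_crossing))    # 10
    print(linear_lr(1_000_000, road_crossing))  # half of the initial rate
```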

| Trial | Lane 1 Speed | Lane 2 Speed | Lane 3 Speed | Lane 4 Speed | Lane 5 Speed | Player Speed | Success % | Scenario |
|---|---|---|---|---|---|---|---|---|
| 1 | 14.4 | 28.8 | 25.2 | 21.6 | 18.0 | 36.0 | 88% | Group of 3 pedestrians |
| 2 | 3.6 | 10.8 | 7.2 | 39.6 | 36.0 | 21.6 | 63% | Group of 3 pedestrians |
| 3 | 43.2 | 32.4 | 36.0 | 10.8 | 7.2 | 28.8 | 81% | Group of 3 pedestrians |
| 4 | 3.6 | 10.8 | 7.2 | 39.6 | 36.0 | 36.0 | 82% | Multiple pedestrians |
| 5 | 54.0 | 36.0 | 18.0 | 43.2 | 10.8 | 28.8 | 67% | Multiple pedestrians |
| 6 | 126.0 | 126.0 | 126.0 | 126.0 | 126.0 | 18.0 | 33% | Multiple pedestrians |
| 7 | 14.4 | 25.2 | 43.2 | 28.8 | 18.0 | 10.8 | 74% | Single pedestrian |
| 8 | 39.6 | 14.4 | 25.2 | 28.8 | 36.0 | 28.8 | 72% | Single pedestrian |
| 9 | 39.6 | 14.4 | 25.2 | 28.8 | 36.0 | 21.6 | 70% | Single pedestrian |
| 10 | 18.0 | 25.2 | 43.2 | 14.4 | 57.6 | 21.6 | 85% | Single pedestrian |
| 11 | 126.0 | 126.0 | 126.0 | 126.0 | 126.0 | 14.4 | 88% | Crosswalk |
| 12 | 36.0 | 43.2 | 36.0 | 18.0 | 25.2 | 21.6 | 80% | Crosswalk |
| 13 | 54.0 | 43.2 | 36.0 | 28.8 | 18.0 | 18.0 | 70% | Crosswalk |
| 14 | 54.0 | 43.2 | 36.0 | 28.8 | 18.0 | 21.6 | 65% | Crosswalk |
| 15 | 28.8 | 36.0 | 18.0 | 21.6 | 25.2 | 14.4 | 86% | Crosswalk |
| 16 | 28.8 | 36.0 | 18.0 | 21.6 | 25.2 | 21.6 | 76% | Crosswalk |
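
The per-scenario averages implied by the trial table can be computed as below. This is a rough summary rather than a result reported by the authors: it takes an unweighted mean of the Success % column, which assumes every trial contributes equally (the number of runs per trial is not given in the table).

```python
from statistics import mean

# Success % per trial, grouped by the Scenario column of the table.
success_by_scenario = {
    "Group of 3 pedestrians": [88, 63, 81],
    "Multiple pedestrians": [82, 67, 33],
    "Single pedestrian": [74, 72, 70, 85],
    "Crosswalk": [88, 80, 70, 65, 86, 76],
}

# Unweighted mean success rate for each scenario.
mean_by_scenario = {
    scenario: mean(rates) for scenario, rates in success_by_scenario.items()
}

# Unweighted mean over all 16 trials.
all_rates = [r for rates in success_by_scenario.values() for r in rates]
overall_mean = mean(all_rates)

if __name__ == "__main__":
    for scenario, value in mean_by_scenario.items():
        print(f"{scenario}: {value:.2f}%")
    print(f"Overall: {overall_mean:.2f}%")  # Overall: 73.75%
```

The "Multiple pedestrians" average is pulled down by trial 6, where every lane speed is 126.0 and the success rate is 33%, so the spread within a scenario is worth reading alongside the mean.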

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).