Submitted: 12 May 2025

Posted: 12 May 2025


Abstract

Keywords:

1. Introduction

- Density-Based Grid Decimation Algorithm: A novel preprocessing method that dynamically adjusts grid sizes based on point density, addressing imbalanced sampling and improving computational efficiency compared to traditional grid-based approaches.

- Attention-Based Modules: Two new modules—Local Attention Aggregation (LAA) and Attention Residual (AR)—are designed to efficiently capture both local and global features, reducing memory consumption and computational overhead.

- RT-Net Architecture: A new segmentation network that integrates the density-based grid decimation algorithm with the proposed attention-based modules. RT-Net outperforms existing methods, especially on small-sized object categories, as demonstrated on large-scale benchmark datasets.

2. Related Works

2.1. Deep Learning Methods for Extracting Point Cloud Features

2.2. Preprocessing of Point Clouds Before Training

3. Methodology

3.1. Network Architecture

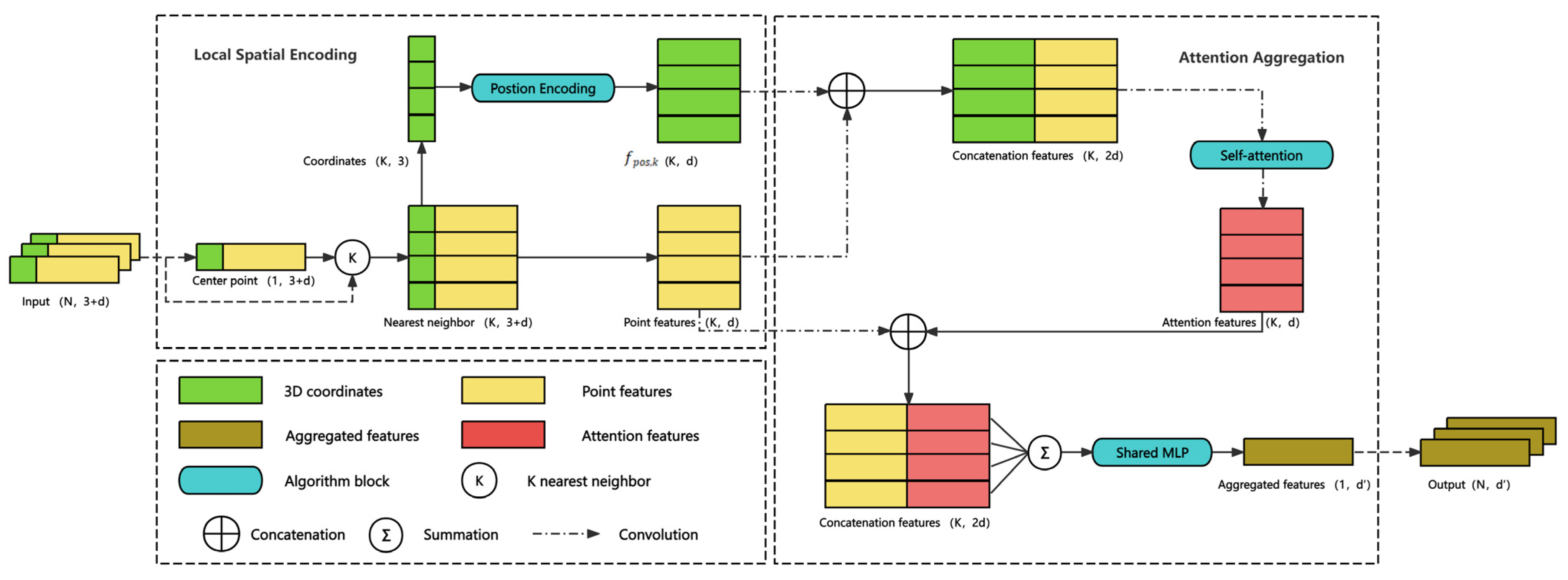

3.2. Local Attention Aggregation

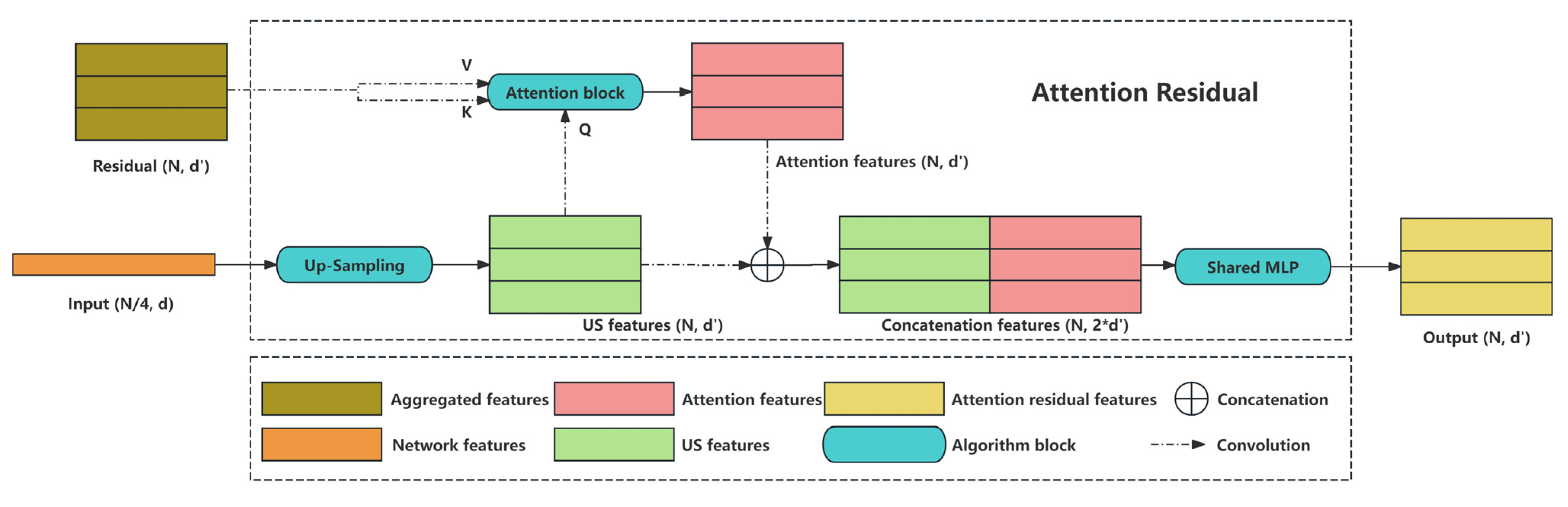

3.3. Attention Residual

3.4. Density-Based Grid Decimation

- Compute the upper and lower bounds of the 3D coordinates in the original point cloud set.

- Specify the grid size and calculate the grid count per dimension.

- Determine the three-dimensional grid index of every point in the point set from its coordinates, the lower bounds, and the grid size.

- Group the points by the indices calculated in the previous step: points sharing the same index belong to the same grid. Unlike traditional grid-based decimation, where the grid size is fixed, our approach considers the density of points and dynamically adjusts the grid size accordingly. If the point count in a grid exceeds a preset threshold, the grid is subdivided into four equal-sized sub-grids. This process repeats until the number of points in every grid no longer exceeds the preset threshold. Subsequently, one point is retained at random in each grid and the rest are discarded, which completes the density-based grid decimation and yields the decimated point set.
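The steps above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `density_grid_decimate`, the parameter names, and the `max_depth` safety cap are hypothetical, and the split into four sub-grids is assumed to halve the cell along x and y only, since the text does not say which axes are divided.

```python
import numpy as np


def density_grid_decimate(points, grid_size, max_points, rng=None, max_depth=16):
    """Return sorted indices of the points kept, one per final grid cell.

    points:     (N, 3+) array whose first three columns are x, y, z.
    grid_size:  initial cell edge length (same unit as the coordinates).
    max_points: a cell holding more points than this is subdivided.
    max_depth:  hypothetical safety cap so duplicate points cannot recurse forever.
    """
    rng = np.random.default_rng() if rng is None else rng
    xyz = np.asarray(points, dtype=np.float64)[:, :3]

    # Step 1: lower and upper bounds of the coordinates.
    lower, upper = xyz.min(axis=0), xyz.max(axis=0)
    # Step 2: number of cells along each dimension for the chosen grid size.
    n_cells = np.floor((upper - lower) / grid_size).astype(np.int64) + 1
    # Step 3: integer 3D cell index of every point, flattened to one key.
    cell = np.floor((xyz - lower) / grid_size).astype(np.int64)
    key = (cell[:, 0] * n_cells[1] + cell[:, 1]) * n_cells[2] + cell[:, 2]

    # Step 4a: group point indices that share a key.
    order = np.argsort(key, kind="stable")
    sorted_key = key[order]
    starts = np.flatnonzero(sorted_key[1:] != sorted_key[:-1]) + 1
    groups = np.split(order, starts)

    kept = []

    def subdivide(idx, origin_xy, size, depth):
        # Step 4c: a sufficiently sparse cell keeps one random point.
        if len(idx) <= max_points or depth >= max_depth:
            kept.append(int(rng.choice(idx)))
            return
        # Step 4b: split the cell into four equal sub-cells (assumed: in x-y).
        half = size / 2.0
        bits = (xyz[idx, :2] - origin_xy >= half).astype(np.int64)
        quadrant = bits[:, 0] * 2 + bits[:, 1]
        for q in range(4):
            sub = idx[quadrant == q]
            if len(sub) > 0:
                offset = half * np.array([q // 2, q % 2], dtype=np.float64)
                subdivide(sub, origin_xy + offset, half, depth + 1)

    for idx in groups:
        origin_xy = lower[:2] + cell[idx[0], :2] * grid_size
        subdivide(idx, origin_xy, grid_size, 0)

    return np.sort(np.array(kept, dtype=np.int64))
```

With a very large `max_points` the function reduces to fixed-size grid decimation (one point per occupied cell), so the two behaviours can be compared on the same input; dense regions keep more points once subdivision is enabled.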

4. Experiments and Results

4.1. Density-Based Grid Decimation

4.2. Density-Based Grid Decimation

4.3. Efficiency of Density-Based Grid Decimation

4.4. Ablation of RT-Net Framework

- Removal of Self-Attention Pooling: This structure facilitates the aggregation of features from neighboring points in the point clouds. Upon its removal, we replaced it with standard max/mean/sum pooling for the local feature encoding.

- Removal of Attention Residual: This structure enhances the effectiveness of residual adversarial networks by emphasizing feature values through attention mechanisms. Upon its removal, we used a standard residual connection instead.
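To make the first ablation concrete, the sketch below contrasts attention-weighted pooling over a point's neighbours with the fixed max/mean/sum pooling that replaces it. The exact form of the self-attention pooling is not given in this section, so the code assumes the softmax-weighted sum popularized by RandLA-Net; the function names and the single weight matrix (standing in for a shared MLP) are hypothetical.

```python
import numpy as np


def softmax(x, axis):
    """Numerically stable softmax along one axis."""
    shifted = x - x.max(axis=axis, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=axis, keepdims=True)


def attentive_pool(neigh_feats, weight):
    """Attention-weighted sum over the K neighbours of each point.

    neigh_feats: (N, K, C) features of the K neighbours of N points.
    weight:      (C, C) learnable matrix (hypothetical; stands in for a shared MLP).
    """
    scores = softmax(neigh_feats @ weight, axis=1)   # (N, K, C), sums to 1 over K
    return (scores * neigh_feats).sum(axis=1)        # (N, C)


def standard_pool(neigh_feats, mode="max"):
    """Fixed pooling used when the attention pooling is removed."""
    reducers = {"max": np.max, "mean": np.mean, "sum": np.sum}
    return reducers[mode](neigh_feats, axis=1)       # (N, C)
```

With an all-zero weight matrix the attention scores are uniform and the result equals mean pooling, which shows that the fixed poolings are special or limiting cases of the learned weighting.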

4.5. Ablation of AR

- RT-Net with Residual: Uses a standard residual connection module.

- RT-Net with Attention Residual: Uses an attention residual connection module that merges features by addition.

- RT-Net with Attention Residual (Concatenation): Employs our complete attention residual module with concatenation.
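The three variants differ only in how the skip path and the transformed features are merged. The sketch below illustrates that difference under loud assumptions: the sigmoid gate, the weight matrices `w_att` and `w_out`, and the function names are all hypothetical stand-ins, since this section does not specify how the attention in the AR module is computed.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def residual(x, f):
    """Standard residual connection: skip input plus transformed features."""
    return x + f


def attention_residual_add(x, f, w_att):
    """Attention-gated features merged with the skip input by addition."""
    gate = sigmoid(f @ w_att)                        # (N, C) weights in (0, 1)
    return x + gate * f


def attention_residual_concat(x, f, w_att, w_out):
    """Attention-gated features merged with the skip input by concatenation."""
    gate = sigmoid(f @ w_att)                        # (N, C)
    fused = np.concatenate([x, gate * f], axis=-1)   # (N, 2C)
    return fused @ w_out                             # project back to (N, C)
```

Concatenation keeps the skip input and the gated features in separate channels and lets the output projection learn how to mix them, whereas addition fixes the mix to a one-to-one sum.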

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| GPU | Graphics processing unit |
| LAA | Local attention aggregation |
| AR | Attention residual |
| FC | Fully connected |
| US | Up sampling |
| MLP | Multi-layer perceptron |
| KNN | K-nearest neighbor |
| IoU | Intersection over union |
| mIoU | Mean intersection over union |

References

- Charles, R.Q.; Su, H.; Kaichun, M.; Guibas, L.J. PointNet: Deep Learning on Point Sets for 3D Classification and Segmentation. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Honolulu, HI, July, 2017; pp. 77–85.

- Weinmann, M.; Jutzi, B.; Hinz, S.; Mallet, C. Semantic Point Cloud Interpretation Based on Optimal Neighborhoods, Relevant Features and Efficient Classifiers. ISPRS Journal of Photogrammetry and Remote Sensing 2015, 105, 286–304.

- Schnabel, R.; Wahl, R.; Klein, R. Efficient RANSAC for Point-Cloud Shape Detection. Computer Graphics Forum 2007, 26, 214–226.

- Strom, J.; Richardson, A.; Olson, E. Graph-Based Segmentation for Colored 3D Laser Point Clouds. In Proceedings of the 2010 IEEE/RSJ International Conference on Intelligent Robots and Systems; IEEE: Taipei, October, 2010; pp. 2131–2136.

- Jiang, X.Y.; Meier, U.; Bunke, H. Fast Range Image Segmentation Using High-Level Segmentation Primitives. In Proceedings of the Third IEEE Workshop on Applications of Computer Vision (WACV’96); IEEE Comput. Soc. Press: Sarasota, FL, USA, 1996; pp. 83–88.

- Qi, C.R.; Yi, L.; Su, H.; Guibas, L.J. PointNet++: Deep Hierarchical Feature Learning on Point Sets in a Metric Space. In Proceedings of the Advances in Neural Information Processing Systems 30 (NIPS 2017), Long Beach, CA, USA, 2017; Vol. 30.

- Li, Y.; Bu, R.; Sun, M.; Wu, W.; Di, X.; Chen, B. PointCNN: Convolution On X-Transformed Points. In Proceedings of the Advances in Neural Information Processing Systems 31 (NeurIPS 2018); Montréal, Canada, 2018; Vol. 31.

- Wang, Y.; Sun, Y.; Liu, Z.; Sarma, S.E.; Bronstein, M.M.; Solomon, J.M. Dynamic Graph CNN for Learning on Point Clouds. ACM Trans. Graph. 2019, 38, 1–12.

- Fan, S.; Dong, Q.; Zhu, F.; Lv, Y.; Ye, P.; Wang, F.-Y. SCF-Net: Learning Spatial Contextual Features for Large-Scale Point Cloud Segmentation. In Proceedings of the 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Nashville, TN, USA, June, 2021; pp. 14499–14508.

- Zhao, H.; Jiang, L.; Jia, J.; Torr, P.; Koltun, V. Point Transformer. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Montreal, QC, Canada, October, 2021; pp. 16239–16248.

- Hu, Q.; Yang, B.; Xie, L.; Rosa, S.; Guo, Y.; Wang, Z.; Trigoni, N.; Markham, A. Learning Semantic Segmentation of Large-Scale Point Clouds with Random Sampling. IEEE Trans. Pattern Anal. Mach. Intell. 2021, 1–1.

- Li, H.; Guan, H.; Ma, L.; Lei, X.; Yu, Y.; Wang, H.; Delavar, M.R.; Li, J. MVPNet: A Multi-Scale Voxel-Point Adaptive Fusion Network for Point Cloud Semantic Segmentation in Urban Scenes. International Journal of Applied Earth Observation and Geoinformation 2023, 122, 103391.

- Liu, T.; Ma, T.; Du, P.; Li, D. Semantic Segmentation of Large-Scale Point Cloud Scenes via Dual Neighborhood Feature and Global Spatial-Aware. International Journal of Applied Earth Observation and Geoinformation 2024, 129, 103862.

- Zeng, Z.; Xu, Y.; Xie, Z.; Tang, W.; Wan, J.; Wu, W. Large-Scale Point Cloud Semantic Segmentation via Local Perception and Global Descriptor Vector. Expert Systems with Applications 2024, 246, 123269.

- Landrieu, L.; Simonovsky, M. Large-Scale Point Cloud Semantic Segmentation with Superpoint Graphs. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Salt Lake City, UT, June, 2018; pp. 4558–4567.

- Park, J.; Kim, C.; Kim, S.; Jo, K. PCSCNet: Fast 3D Semantic Segmentation of LiDAR Point Cloud for Autonomous Car Using Point Convolution and Sparse Convolution Network. Expert Systems with Applications 2023, 212, 118815.

- Akwensi, P.H.; Wang, R.; Guo, B. PReFormer: A Memory-Efficient Transformer for Point Cloud Semantic Segmentation. International Journal of Applied Earth Observation and Geoinformation 2024, 128, 103730.

- Wu, X.; Lao, Y.; Jiang, L.; Liu, X.; Zhao, H. Point Transformer V2: Grouped Vector Attention and Improved Sampling – Supplementary Material. In Proceedings of the Advances in Neural Information Processing Systems 35 (NeurIPS 2022); 2022; Vol. 35; pp. 33330–33342.

- Wu, X.; Jiang, L.; Wang, P.-S.; Liu, Z.; Liu, X.; Qiao, Y.; Ouyang, W.; He, T.; Zhao, H. Point Transformer V3: Simpler, Faster, Stronger. 2024.

- Boulch, A.; Guerry, J.; Saux, B.L.; Audebert, N. SnapNet: 3D Point Cloud Semantic Labeling with 2D Deep Segmentation Networks. Computers & Graphics 2018, 71, 189–198.

- Lang, A.H.; Vora, S.; Caesar, H.; Zhou, L.; Yang, J.; Beijbom, O. PointPillars: Fast Encoders for Object Detection From Point Clouds. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Long Beach, CA, USA, June, 2019; pp. 12689–12697.

- Yang, B.; Luo, W.; Urtasun, R. PIXOR: Real-Time 3D Object Detection from Point Clouds. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Salt Lake City, UT, USA, June, 2018; pp. 7652–7660.

- Lyu, Y.; Huang, X.; Zhang, Z. EllipsoidNet: Ellipsoid Representation for Point Cloud Classification and Segmentation. In Proceedings of the 2022 IEEE/CVF Winter Conference on Applications of Computer Vision (WACV); IEEE: Waikoloa, HI, USA, January, 2022; pp. 256–266.

- Tchapmi, L.; Choy, C.; Armeni, I.; Gwak, J.; Savarese, S. SEGCloud: Semantic Segmentation of 3D Point Clouds. In Proceedings of the 2017 International Conference on 3D Vision (3DV); IEEE: Qingdao, October, 2017; pp. 537–547.

- Zhou, Y.; Tuzel, O. VoxelNet: End-to-End Learning for Point Cloud Based 3D Object Detection. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Salt Lake City, UT, USA, June, 2018; pp. 4490–4499.

- Meng, H.-Y.; Gao, L.; Lai, Y.-K.; Manocha, D. VV-Net: Voxel VAE Net With Group Convolutions for Point Cloud Segmentation. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Seoul, Korea (South), October, 2019; pp. 8499–8507.

- Liu, Z.; Tang, H.; Lin, Y.; Han, S. Point-Voxel CNN for Efficient 3D Deep Learning. In Proceedings of the Advances in Neural Information Processing Systems 32 (NeurIPS 2019); Vancouver, Canada, 2019; Vol. 32.

- Chen, Y.; Liu, S.; Shen, X.; Jia, J. Fast Point R-CNN. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Seoul, Korea (South), October, 2019; pp. 9774–9783.

- Fan, H.; Yang, Y. PointRNN: Point Recurrent Neural Network for Moving Point Cloud Processing. 2019.

- Hu, Q.; Yang, B.; Xie, L.; Rosa, S.; Guo, Y.; Wang, Z.; Trigoni, N.; Markham, A. RandLA-Net: Efficient Semantic Segmentation of Large-Scale Point Clouds. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Seattle, WA, USA, June, 2020; pp. 11105–11114.

- Xu, Y.; Tang, W.; Zeng, Z.; Wu, W.; Wan, J.; Guo, H.; Xie, Z. NeiEA-NET: Semantic Segmentation of Large-Scale Point Cloud Scene via Neighbor Enhancement and Aggregation. International Journal of Applied Earth Observation and Geoinformation 2023, 119, 103285.

- Rethage, D.; Wald, J.; Sturm, J.; Navab, N.; Tombari, F. Fully-Convolutional Point Networks for Large-Scale Point Clouds. In Computer Vision – ECCV 2018; Ferrari, V., Hebert, M., Sminchisescu, C., Weiss, Y., Eds.; Lecture Notes in Computer Science; Springer International Publishing: Cham, 2018; Volume 11208, pp. 625–640. ISBN 978-3-030-01224-3.

- Tatarchenko, M.; Park, J.; Koltun, V.; Zhou, Q.-Y. Tangent Convolutions for Dense Prediction in 3D. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Salt Lake City, UT, USA, June, 2018; pp. 3887–3896.

- Chen, S.; Niu, S.; Lan, T.; Liu, B. PCT: Large-Scale 3D Point Cloud Representations Via Graph Inception Networks with Applications to Autonomous Driving. In Proceedings of the 2019 IEEE International Conference on Image Processing (ICIP); IEEE: Taipei, Taiwan, September, 2019; pp. 4395–4399.

- Thomas, H.; Qi, C.R.; Deschaud, J.-E.; Marcotegui, B.; Goulette, F.; Guibas, L. KPConv: Flexible and Deformable Convolution for Point Clouds. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Seoul, Korea (South), October, 2019; pp. 6410–6419.

- Tan, W.; Qin, N.; Ma, L.; Li, Y.; Du, J.; Cai, G.; Yang, K.; Li, J. Toronto-3D: A Large-Scale Mobile LiDAR Dataset for Semantic Segmentation of Urban Roadways. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), June 2020; pp. 797–806.

- Hackel, T.; Savinov, N.; Ladicky, L.; Wegner, J.D.; Schindler, K.; Pollefeys, M. Semantic3D.net: A New Large-Scale Point Cloud Classification Benchmark. ISPRS Ann. Photogramm. Remote Sens. Spatial Inf. Sci. 2017, IV-1/W1, 91–98.

- Behley, J.; Garbade, M.; Milioto, A.; Quenzel, J.; Behnke, S.; Stachniss, C.; Gall, J. SemanticKITTI: A Dataset for Semantic Scene Understanding of LiDAR Sequences. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Seoul, Korea (South), October, 2019; pp. 9296–9306.

- Li, Y.; Ma, L.; Zhong, Z.; Cao, D.; Li, J. TGNet: Geometric Graph CNN on 3-D Point Cloud Segmentation. IEEE Trans. Geosci. Remote Sensing 2020, 58, 3588–3600.

- Wan, J.; Zeng, Z.; Qiu, Q.; Xie, Z.; Xu, Y. PointNest: Learning Deep Multiscale Nested Feature Propagation for Semantic Segmentation of 3-D Point Clouds. IEEE J. Sel. Top. Appl. Earth Observations Remote Sensing 2023, 16, 9051–9066.

- Yoo, S.; Jeong, Y.; Jameela, M.; Sohn, G. Human Vision Based 3D Point Cloud Semantic Segmentation of Large-Scale Outdoor Scenes. In Proceedings of the 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW); IEEE: Vancouver, BC, Canada, June, 2023; pp. 6577–6586.

- Boulch, A.; Saux, B.L.; Audebert, N. Unstructured Point Cloud Semantic Labeling Using Deep Segmentation Networks. 3dor@ eurographics 2017, 3, 1–8.

- Contreras, J.; Denzler, J. Edge-Convolution Point Net for Semantic Segmentation of Large-Scale Point Clouds. In Proceedings of the IGARSS 2019 - 2019 IEEE International Geoscience and Remote Sensing Symposium; IEEE: Yokohama, Japan, July, 2019; pp. 5236–5239.

- Truong, G.; Gilani, S.Z.; Islam, S.M.S.; Suter, D. Fast Point Cloud Registration Using Semantic Segmentation. In Proceedings of the 2019 Digital Image Computing: Techniques and Applications (DICTA); IEEE: Perth, Australia, December, 2019; pp. 1–8.

- Liu, C.; Zeng, D.; Akbar, A.; Wu, H.; Jia, S.; Xu, Z.; Yue, H. Context-Aware Network for Semantic Segmentation Toward Large-Scale Point Clouds in Urban Environments. IEEE Trans. Geosci. Remote Sensing 2022, 60, 1–15.

- Zeng, Z.; Xu, Y.; Xie, Z.; Tang, W.; Wan, J.; Wu, W. LEARD-Net: Semantic Segmentation for Large-Scale Point Cloud Scene. International Journal of Applied Earth Observation and Geoinformation 2022, 112, 102953.

- Yin, F.; Huang, Z.; Chen, T.; Luo, G.; Yu, G.; Fu, B. DCNet: Large-Scale Point Cloud Semantic Segmentation With Discriminative and Efficient Feature Aggregation. IEEE Trans. Circuits Syst. Video Technol. 2023, 33, 4083–4095.

- Luo, L.; Lu, J.; Chen, X.; Zhang, K.; Zhou, J. LSGRNet: Local Spatial Latent Geometric Relation Learning Network for 3D Point Cloud Semantic Segmentation. Computers & Graphics 2024, 124, 104053.

- Xu, J.; Zhang, R.; Dou, J.; Zhu, Y.; Sun, J.; Pu, S. RPVNet: A Deep and Efficient Range-Point-Voxel Fusion Network for LiDAR Point Cloud Segmentation.

- Hou, Y.; Zhu, X.; Ma, Y.; Loy, C.C.; Li, Y. Point-to-Voxel Knowledge Distillation for LiDAR Semantic Segmentation.

| Methods | mIoU | road | road marking | natural | building | utility line | pole | car | fence |
|---|---|---|---|---|---|---|---|---|---|
| PointNet++ [6] | 41.81 | 89.27 | 0.00 | 69.06 | 54.16 | 43.78 | 23.30 | 52.00 | 2.95 |
| DGCNN [8] | 61.79 | 93.88 | 0.00 | 91.25 | 80.39 | 62.40 | 62.32 | 88.26 | 15.81 |
| KPFCNN [35] | 69.11 | 94.62 | 0.06 | 96.07 | 91.51 | 87.68 | 81.56 | 85.66 | 15.81 |
| TGNet [39] | 61.34 | 93.54 | 0.00 | 90.93 | 81.57 | 65.26 | 62.98 | 88.73 | 7.85 |
| RandLA-Net [11] | 81.77 | 96.69 | 64.21 | 96.62 | 94.24 | 88.06 | 77.84 | 93.37 | 42.86 |
| PointNest [40] | 74.7 | 91.0 | 27.9 | 96.2 | 89.5 | 88.3 | 78.6 | 91.1 | 35.1 |
| MVPNet [12] | 84.14 | 98.00 | 76.36 | 97.34 | 94.77 | 87.69 | 84.61 | 94.63 | 39.74 |
| EyeNet [41] | 81.13 | 96.98 | 65.02 | 97.83 | 93.51 | 86.77 | 84.86 | 94.02 | 30.01 |
| PReFormer [17] | 75.8 | 96.8 | 65.4 | 92.4 | 84.6 | 82.0 | 68.3 | 85.5 | 31.2 |
| DG-Net [13] | 82.1 | 97.1 | 65.3 | 97.2 | 92.6 | 88.1 | 84.2 | 93.6 | 38.7 |
| RandLA-Net (Ours rep.) | 76.64 | 93.10 | 55.23 | 94.45 | 93.35 | 76.21 | 73.37 | 80.24 | 47.18 |
| RandLA-Net (Ours w/ density-grid) | 81.55 | 94.77 | 60.85 | 96.25 | 95.31 | 80.66 | 79.28 | 86.99 | 54.64 |
| Ours (w/ RGB w/o density-grid) | 80.85 | 94.95 | 63.72 | 96.00 | 95.01 | 81.30 | 80.34 | 86.06 | 49.42 |
| Ours (w/ RGB and density-grid) | 86.79 | 92.28 | 81.22 | 95.03 | 89.96 | 86.97 | 90.45 | 88.06 | 70.29 |

| Methods | mIoU | man-made terrain | natural terrain | high vegetation | low vegetation | buildings | hard scape | scanning artefacts | cars |
|---|---|---|---|---|---|---|---|---|---|
| PointNet++ [6] | 63.1 | 81.9 | 78.1 | 64.3 | 51.7 | 75.9 | 36.4 | 43.7 | 72.6 |
| SPGraph [15] | 76.2 | 91.5 | 75.6 | 78.3 | 71.7 | 94.4 | 56.8 | 52.9 | 88.4 |
| ConvPoint [42] | 76.5 | 92.1 | 80.6 | 76.0 | 71.9 | 95.6 | 47.3 | 61.1 | 87.7 |
| EdgeConv [43] | 64.4 | 91.1 | 69.5 | 65.0 | 56.0 | 89.7 | 30.0 | 43.8 | 69.7 |
| RGNet [44] | 72.0 | 86.4 | 70.3 | 69.5 | 68.0 | 96.9 | 43.4 | 52.3 | 89.5 |
| RandLA-Net [11] | 77.8 | 97.4 | 93.0 | 70.2 | 65.2 | 94.4 | 49.0 | 44.7 | 92.7 |
| SCF-Net [9] | 77.6 | 97.1 | 91.8 | 86.3 | 51.2 | 95.3 | 50.5 | 67.9 | 80.7 |
| CAN [45] | 74.7 | 97.9 | 94.1 | 70.8 | 64.3 | 94.0 | 48.5 | 38.8 | 89.2 |
| LEARD-Net [46] | 74.5 | 97.5 | 92.7 | 74.6 | 61.0 | 93.2 | 40.2 | 44.2 | 92.2 |
| DCNet [47] | 74.1 | 97.9 | 86.5 | 72.9 | 64.6 | 96.2 | 48.7 | 35.3 | 90.4 |
| LSGRNet [48] | 77.5 | 97.2 | 91.2 | 84.4 | 52.2 | 94.8 | 51.6 | 70.1 | 78.5 |
| RandLA-Net (Ours rep.) | 71.80 | 91.71 | 86.81 | 87.51 | 55.07 | 91.93 | 31.26 | 54.07 | 76.03 |
| RandLA-Net (Ours w/ density-grid) | 76.22 | 90.13 | 87.65 | 87.29 | 57.96 | 93.52 | 55.75 | 62.84 | 74.59 |
| Ours (w/ RGB w/o density-grid) | 74.58 | 90.20 | 86.67 | 83.34 | 61.70 | 92.44 | 52.76 | 59.30 | 70.28 |
| Ours (w/ RGB and density-grid) | 79.88 | 92.41 | 86.91 | 90.35 | 63.32 | 95.04 | 60.48 | 67.55 | 82.96 |

| Methods | mIoU | Road | Sidewalk | Parking | Other-ground | Building | Car | Truck | Bicycle | Motorcycle | Other-vehicle | Vegetation | Trunk | Terrain | Person | Bicyclist | Motorcyclist | Fence | Pole | Traffic sign |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| PointNet++ [6] | 20.1 | 72.0 | 41.8 | 18.7 | 5.6 | 62.3 | 53.7 | 0.9 | 1.9 | 0.2 | 0.2 | 46.5 | 13.8 | 30.0 | 0.9 | 1.0 | 0.0 | 16.9 | 6.0 | 8.9 |
| KPConv [35] | 58.8 | 90.3 | 72.7 | 61.3 | 31.5 | 90.5 | 95.0 | 33.4 | 30.2 | 42.5 | 44.3 | 84.8 | 69.2 | 69.1 | 61.5 | 61.6 | 11.8 | 64.2 | 56.4 | 47.4 |
| RandLA-Net [11] | 55.9 | 90.5 | 74.0 | 61.8 | 24.5 | 89.7 | 94.2 | 43.9 | 29.8 | 32.2 | 39.1 | 83.8 | 63.6 | 68.6 | 48.4 | 47.4 | 9.4 | 60.4 | 51.0 | 50.7 |
| RPVNet [49] | 70.3 | 93.4 | 80.7 | 70.3 | 33.3 | 93.5 | 97.6 | 44.2 | 68.4 | 68.7 | 61.1 | 86.5 | 75.1 | 71.7 | 75.9 | 74.4 | 43.4 | 72.1 | 64.8 | 61.4 |
| PVKD [50] | 71.2 | 91.8 | 70.9 | 77.5 | 41.0 | 92.4 | 97.0 | 67.9 | 69.3 | 53.5 | 60.2 | 86.5 | 73.8 | 71.9 | 75.1 | 73.5 | 50.5 | 69.4 | 64.9 | 65.8 |
| RandLA-Net (Ours rep.) | 51.2 | 88.7 | 72.4 | 62.1 | 22.1 | 85.1 | 89.7 | 38.9 | 27.6 | 33.0 | 33.0 | 81.1 | 63.2 | 66.8 | 44.6 | 42.1 | 8.3 | 54.8 | 47.5 | 45.2 |
| RandLA-Net (Ours w/ density-grid) | 53.6 | 84.3 | 80.2 | 63.3 | 37.6 | 91.3 | 91.7 | 41.8 | 45.6 | 59.2 | 32.8 | 84.9 | 68.5 | 70.5 | 64.5 | 49.9 | 20.8 | 68.2 | 60.4 | 59.3 |
| Ours (w/ RGB w/o density-grid) | 70.2 | 89.9 | 76.8 | 59.3 | 40.1 | 91.6 | 96.8 | 57.9 | 43.5 | 53.5 | 58.8 | 80.2 | 72.8 | 70.9 | 60.5 | 66.4 | 47.3 | 69.8 | 61.5 | 59.8 |
| Ours (w/ RGB and density-grid) | 69.9 | 88.9 | 86.9 | 67.4 | 50.8 | 93.4 | 97.4 | 57.6 | 72.6 | 70.2 | 60.4 | 83.4 | 71.9 | 60.4 | 52.4 | 60.8 | 46.9 | 80.8 | 72.8 | 74.1 |

| Model | mIoU (%) |
|---|---|
| Full RT-Net with grid-based decimation (grid size 0.01) | 76.66 |
| Full RT-Net with grid-based decimation (grid size 0.06) | 80.85 |
| Full RT-Net with density-based grid decimation (initial grid size 1.0) | 86.91 |
| Full RT-Net with density-based grid decimation (initial grid size 10) | 86.79 |

| Model | mIoU (%) |
|---|---|
| Removing self-attention pooling | 65.66 |
| Removing attention residual | 74.81 |
| The full framework (RT-Net) | 86.79 |

| Model | mIoU (%) |
|---|---|
| RT-Net with residual | 74.81 |
| RT-Net with attention residual | 79.96 |
| RT-Net with attention residual (concatenation) | 83.00 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).