Submitted:

06 May 2025

Posted:

07 May 2025

Abstract

Keywords:

1. Introduction

2. Explainability and Hallucinations in LLMs

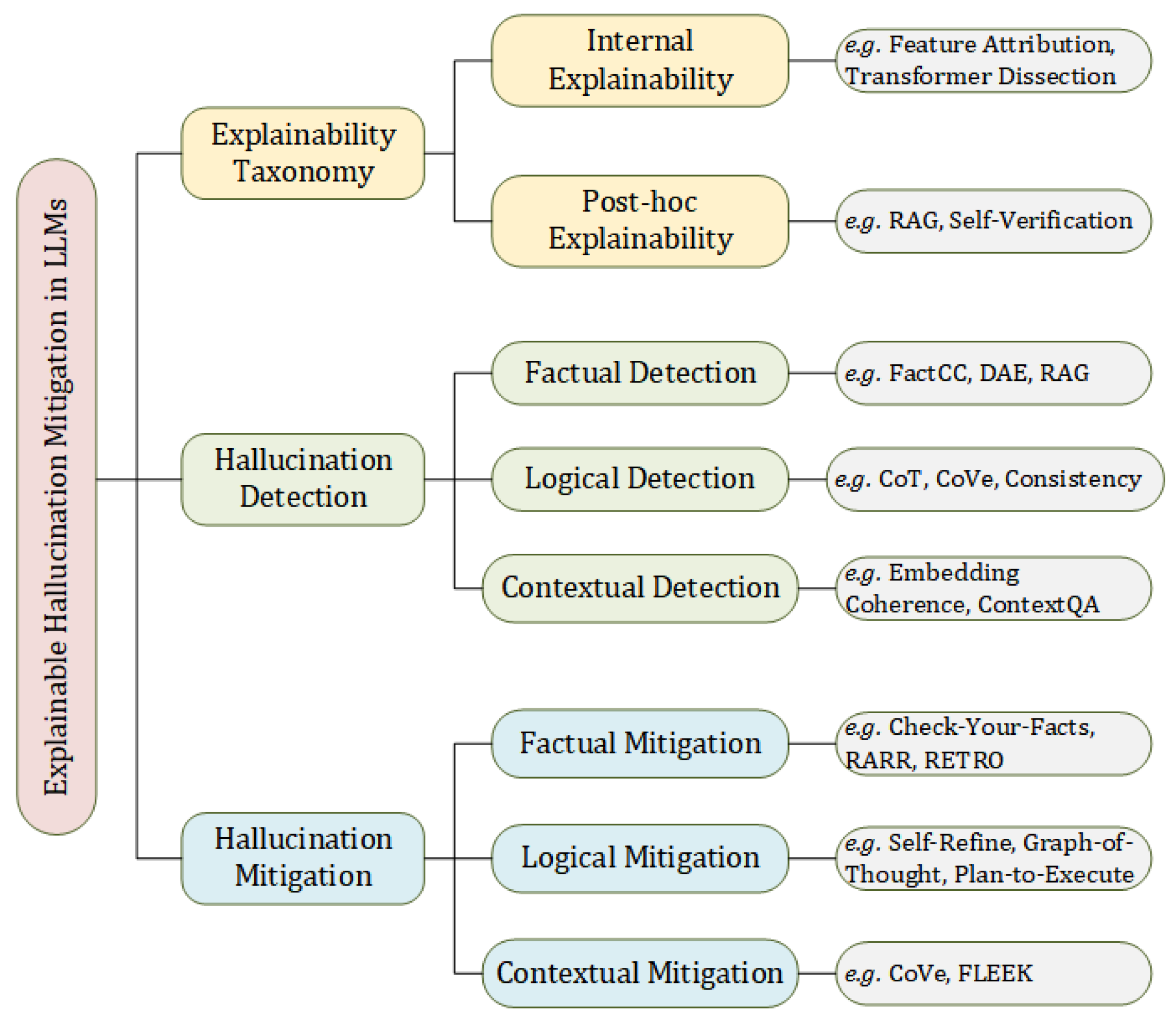

2.1. Typology of Explainability Methods

2.1.1. Internal Explainability

Feature Attribution

- Perturbation-based methods assess feature importance by removing, masking, or altering input components and observing the resulting changes in the output [20,21]. Although intuitive, these methods often assume feature independence and may overlook interactions. They can also produce unreliable results for nonsensical inputs [22], prompting efforts to improve robustness and efficiency [23].

- Vector-based methods represent input features in high-dimensional embedding spaces and analyze their relationships to model outputs, using metrics such as cosine similarity or Euclidean distance [27,28]. These techniques account for inter-feature dependencies but are sensitive to embedding quality and representation space [29].
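The perturbation-based idea described above can be sketched as a leave-one-out loop: mask one token at a time and record how far the model's score moves. This is a minimal illustration, not the procedure of any cited work. The names `leave_one_out_attribution`, `toy_score`, and the `[MASK]` placeholder are assumptions made for the example, and `toy_score` is a hypothetical stand-in for a real model's output score.

```python
from typing import Callable, List, Tuple


def leave_one_out_attribution(
    tokens: List[str],
    score_fn: Callable[[List[str]], float],
    mask_token: str = "[MASK]",
) -> List[Tuple[str, float]]:
    """Attribute to each token the drop in score observed when it is masked."""
    baseline = score_fn(tokens)
    attributions = []
    for i, token in enumerate(tokens):
        # Replace exactly one token and leave the rest of the input unchanged.
        perturbed = tokens[:i] + [mask_token] + tokens[i + 1:]
        attributions.append((token, baseline - score_fn(perturbed)))
    return attributions


# Hypothetical stand-in for a model: a keyword-based sentiment score.
POSITIVE = {"great", "amazing"}
NEGATIVE = {"boring"}


def toy_score(tokens: List[str]) -> float:
    return float(sum((t in POSITIVE) - (t in NEGATIVE) for t in tokens))


result = leave_one_out_attribution("the film was amazing".split(), toy_score)
for token, importance in result:
    print(f"{token:>8}: {importance:+.1f}")
```

Because each token is masked in isolation, the sketch inherits the limitation noted above: it treats features as independent and cannot reveal interactions between tokens.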

Dissecting Transformer Blocks

- Multi-head self-attention (MHSA) sublayers capture dependencies between input tokens. The input X is projected into queries (Q), keys (K), and values (V), with attention computed as $\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(QK^{\top}/\sqrt{d_k}\right)V$, where $d_k$ is the key dimension. Outputs from all attention heads are concatenated. Interpretability is typically achieved through attention weight visualization [30] or gradient-based attribution methods [31].

- MLP sublayers consist of linear transformations and non-linear activation functions. A typical MLP computes $h = \sigma(W_1 x + b_1)$ and $y = W_2 h + b_2$, where $\sigma$ is the non-linear activation. These components are thought to function as memory units that retain semantic content across layers [32]. Notably, MLPs often contain more parameters than attention sublayers, underscoring their importance for feature abstraction [31].
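The two sublayers described above can be made concrete with a small pure-Python sketch. It assumes the standard scaled dot-product form softmax(QKᵀ/√d_k)V for a single attention head and a two-layer MLP with a ReLU activation. The ReLU choice, the row-vector convention, the identity projections in the usage lines, and all function names are assumptions for illustration, not details taken from the paper.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product of two row-major matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(a: Matrix) -> Matrix:
    return [list(col) for col in zip(*a)]


def softmax_rows(a: Matrix) -> Matrix:
    """Row-wise softmax, shifted by the row maximum for numerical stability."""
    out = []
    for row in a:
        peak = max(row)
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out


def attention(q: Matrix, k: Matrix, v: Matrix) -> Tuple[Matrix, Matrix]:
    """Single-head scaled dot-product attention; returns (output, weights)."""
    d_k = len(k[0])
    scores = matmul(q, transpose(k))
    scaled = [[s / math.sqrt(d_k) for s in row] for row in scores]
    weights = softmax_rows(scaled)
    return matmul(weights, v), weights


def mlp(x: Matrix, w1: Matrix, b1: List[float], w2: Matrix, b2: List[float]) -> Matrix:
    """Two-layer feed-forward block: linear, ReLU (assumed), linear."""
    hidden = [[max(0.0, h + b) for h, b in zip(row, b1)] for row in matmul(x, w1)]
    return [[o + b for o, b in zip(row, b2)] for row in matmul(hidden, w2)]


# Usage: two tokens with 2-dimensional embeddings and identity projections (Q = K = V = x).
identity = [[1.0, 0.0], [0.0, 1.0]]
x = [[1.0, 0.0], [0.0, 1.0]]
attn_out, attn_weights = attention(x, x, x)
mlp_out = mlp(attn_out, identity, [0.0, 0.0], identity, [0.0, 0.0])
```

The returned `attn_weights` matrix is the quantity that attention-visualization approaches typically inspect: each row is a probability distribution over the input tokens.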

2.1.2. Post-Hoc Explainability

Before Generation

During Generation

After Generation

2.2. Typology of Hallucinations Under Limited Explainability

2.2.1. Factual Hallucinations

- Erroneous Training Data: Outputs influenced by misinformation or low-quality content present in the training data can result in factually incorrect generations [18].

- Knowledge Boundaries: The model may lack access to domain-specific or current knowledge, leading to outdated or incomplete responses [35].

- Fabricated Content: In the absence of verifiable knowledge, the model may generate plausible-sounding but entirely fabricated information, especially in response to ambiguous prompts [15].

2.2.2. Logical Hallucinations

- Insufficient Reasoning Ability: The model fails to apply basic logical rules or computational procedures, resulting in conclusions that are either incorrect or unjustified [40].

2.2.3. Contextual Hallucinations

3. Analyzing Hallucinations via Explainability

3.1. Explainability-Oriented Hallucination Detection Methods

3.1.1. Factual Hallucinations Detection

3.1.2. Logical Hallucinations Detection

3.1.3. Contextual Hallucinations Detection

3.2. Explainability-Driven Hallucination Mitigation Strategies

3.2.1. Factual Hallucination Mitigation

3.2.2. Logical Hallucination Mitigation

3.2.3. Contextual Hallucination Mitigation

4. Methodological Outlook and Theoretical Reflections

4.1. Methodological Outlook

4.2. Explainability-Driven Prompt Optimization

- Multimodal Explainable Prompting: Developing structured, interpretable prompts that maintain logical coherence across text, image, and audio modalities.

- Interactive User-Driven Prompt Refinement: Building interfaces that provide real-time, interpretable feedback during prompt crafting, enabling users to dynamically adjust prompts to minimize hallucination risks.

- Attribution-Guided Prompt Search and Optimization: Leveraging attribution techniques to automatically identify and refine critical prompt components, creating self-explaining and hallucination-resilient prompts at scale.

4.3. The Value of Hallucination: A Theoretical Reflection from the Perspective of Explainability

5. Conclusion

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Yu, W.; Zhu, C.; Li, Z.; Hu, Z.; Wang, Q.; Ji, H.; Jiang, M. A survey of knowledge-enhanced text generation. ACM Computing Surveys 2022, 54, 1–38.

- Poibeau, T. Machine translation; MIT Press, 2017.

- Zhao, W.X.; Zhou, K.; Li, J.; Tang, T.; Wang, X.; Hou, Y.; Min, Y.; Zhang, B.; Zhang, J.; Dong, Z.; et al. A survey of large language models. arXiv preprint arXiv:2303.18223, 2023.

- Zhou, G.; Kwashie, S.; et al. FASTAGEDS: fast approximate graph entity dependency discovery. In Proceedings of the International Conference on Web Information Systems Engineering; Springer, 2023; pp. 451–465.

- Wu, T.; He, S.; Liu, J.; Sun, S.; Liu, K.; Han, Q.L.; Tang, Y. A brief overview of ChatGPT: The history, status quo and potential future development. IEEE/CAA Journal of Automatica Sinica 2023, 10, 1122–1136.

- Achiam, J.; Adler, S.; Agarwal, S.; Ahmad, L.; Akkaya, I.; Aleman, F.L.; Almeida, D.; Altenschmidt, J.; Altman, S.; Anadkat, S.; et al. Gpt-4 technical report. arXiv:2303.08774 2023.

- Huang, L.; Yu, W.; Ma, W.; Zhong, W.; Feng, Z.; Wang, H.; Chen, Q.; Peng, W.; Feng, X.; Qin, B.; et al. A survey on hallucination in large language models: Principles, taxonomy, challenges, and open questions. ACM Transactions on Information Systems 2025, 43, 1–55.

- Thirunavukarasu, A.J.; Ting, D.S.J.; Elangovan, K.; Gutierrez, L.; Tan, T.F.; Ting, D.S.W. Large language models in medicine. Nature Medicine 2023, 29, 1930–1940.

- Almeida, G.F.; Nunes, J.L.; Engelmann, N.; Wiegmann, A.; de Araújo, M. Exploring the psychology of LLMs’ moral and legal reasoning. Artificial Intelligence 2024, 333, 104145.

- Li, Y.; Wang, S.; Ding, H.; Chen, H. Large language models in finance: A survey. In Proceedings of the Fourth ACM International Conference on AI in Finance, 2023, pp. 374–382.

- Kasneci, E.; Seßler, K.; Küchemann, S.; Bannert, M.; Dementieva, D.; Fischer, F.; Gasser, U.; Groh, G.; Günnemann, S.; Hüllermeier, E.; et al. ChatGPT for good? On opportunities and challenges of large language models for education. Learning and Individual Differences 2023, 103, 102274.

- Zhao, H.; Chen, H.; Yang, F.; Liu, N.; Deng, H.; Cai, H.; Wang, S.; Yin, D.; Du, M. Explainability for large language models: A survey. ACM Transactions on Intelligent Systems and Technology 2024, 15, 1–38.

- Hassija, V.; Chamola, V.; Mahapatra, A.; Singal, A.; Goel, D.; Huang, K.; Scardapane, S.; Spinelli, I.; Mahmud, M.; Hussain, A. Interpreting black-box models: a review on explainable artificial intelligence. Cognitive Computation 2024, 16, 45–74.

- Hagendorff, T.; Fabi, S.; Kosinski, M. Human-like intuitive behavior and reasoning biases emerged in large language models but disappeared in ChatGPT. Nature Computational Science 2023, 3, 833–838.

- Zhang, Y.; Li, Y.; Cui, L.; Cai, D.; Liu, L.; Fu, T.; Huang, X.; Zhao, E.; Zhang, Y.; Chen, Y.; et al. Siren’s song in the AI ocean: a survey on hallucination in large language models. arXiv:2309.01219 2023.

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; Yih, W.t.; Rocktäschel, T.; et al. Retrieval-augmented generation for knowledge-intensive NLP tasks. Advances in Neural Information Processing Systems 2020, 33, 9459–9474.

- Varshney, N.; Yao, W.; Zhang, H.; Chen, J.; Yu, D. A stitch in time saves nine: Detecting and mitigating hallucinations of llms by validating low-confidence generation. arXiv:2307.03987 2023.

- McKenna, N.; Li, T.; Cheng, L.; Hosseini, M.J.; Johnson, M.; Steedman, M. Sources of hallucination by large language models on inference tasks. arXiv preprint arXiv:2305.14552.

- Lei, D.; Li, Y.; Hu, M.; Wang, M.; Yun, V.; Ching, E.; Kamal, E. Chain of natural language inference for reducing large language model ungrounded hallucinations. arXiv preprint arXiv:2310.03951.

- Li, J.; Monroe, W.; Jurafsky, D. Understanding neural networks through representation erasure. arXiv preprint arXiv:1612.08220.

- Lundberg, S.M.; Lee, S.I. A unified approach to interpreting model predictions. Advances in Neural Information Processing Systems 2017, 30.

- Feng, S.; Wallace, E.; Grissom II, A.; Iyyer, M.; Rodriguez, P.; Boyd-Graber, J. Pathologies of neural models make interpretations difficult. arXiv preprint arXiv:1804.07781.

- Atanasova, P. A diagnostic study of explainability techniques for text classification. In Accountable and Explainable Methods for Complex Reasoning over Text; Springer, 2024; pp. 155–187.

- Kindermans, P.J.; Hooker, S.; Adebayo, J.; Alber, M.; Schütt, K.T.; Dähne, S.; Erhan, D.; Kim, B. The (un) reliability of saliency methods. Explainable AI: Interpreting, explaining and visualizing deep learning 2019, pp. 267–280.

- Sikdar, S.; Bhattacharya, P.; Heese, K. Integrated directional gradients: Feature interaction attribution for neural NLP models. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), 2021, pp. 865–878.

- Enguehard, J. Sequential integrated gradients: a simple but effective method for explaining language models. arXiv preprint arXiv:2305.15853.

- Ferrando, J.; Gállego, G.I.; Costa-Jussà, M.R. Measuring the mixing of contextual information in the transformer. arXiv preprint arXiv:2203.04212.

- Modarressi, A.; Fayyaz, M.; Yaghoobzadeh, Y.; Pilehvar, M.T. GlobEnc: Quantifying global token attribution by incorporating the whole encoder layer in transformers. arXiv preprint arXiv:2205.03286.

- Kobayashi, G.; Kuribayashi, T.; Yokoi, S.; Inui, K. Analyzing feed-forward blocks in transformers through the lens of attention maps. arXiv preprint arXiv:2302.00456.

- Jaunet, T.; Kervadec, C.; Vuillemot, R.; Antipov, G.; Baccouche, M.; Wolf, C. VisQA: X-raying vision and language reasoning in transformers. IEEE Transactions on Visualization and Computer Graphics 2021, 28, 976–986.

- Luo, H.; Specia, L. From understanding to utilization: A survey on explainability for large language models. arXiv preprint arXiv:2401.12874.

- Makantasis, K.; Georgogiannis, A.; Voulodimos, A.; Georgoulas, I.; Doulamis, A.; Doulamis, N. Rank-R FNN: A tensor-based learning model for high-order data classification. IEEE Access 2021, 9, 58609–58620.

- Peng, B.; Galley, M.; He, P.; Cheng, H.; Xie, Y.; Hu, Y.; Huang, Q.; Liden, L.; Yu, Z.; Chen, W.; et al. Check your facts and try again: Improving large language models with external knowledge and automated feedback. arXiv preprint arXiv:2302.12813.

- Li, X.; Zhao, R.; Chia, Y.K.; Ding, B.; Joty, S.; Poria, S.; Bing, L. Chain-of-knowledge: Grounding large language models via dynamic knowledge adapting over heterogeneous sources. arXiv preprint arXiv:2305.13269.

- Vu, T.; Iyyer, M.; Wang, X.; Constant, N.; Wei, J.; Wei, J.; Tar, C.; Sung, Y.H.; Zhou, D.; Le, Q.; et al. Freshllms: Refreshing large language models with search engine augmentation. arXiv preprint arXiv:2310.03214.

- Kamesh, R. Think Beyond Size: Dynamic Prompting for More Effective Reasoning. arXiv preprint arXiv:2410.08130.

- Fu, R.; Wang, H.; Zhang, X.; Zhou, J.; Yan, Y. Decomposing complex questions makes multi-hop QA easier and more interpretable. arXiv preprint arXiv:2110.13472.

- Shi, Z.; Sun, W.; Gao, S.; Ren, P.; Chen, Z.; Ren, Z. Generate-then-ground in retrieval-augmented generation for multi-hop question answering. arXiv preprint arXiv:2406.14891.

- Kang, H.; Ni, J.; Yao, H. Ever: Mitigating hallucination in large language models through real-time verification and rectification. arXiv preprint arXiv:2311.09114.

- Dhuliawala, S.; Komeili, M.; Xu, J.; Raileanu, R.; Li, X.; Celikyilmaz, A.; Weston, J. Chain-of-verification reduces hallucination in large language models. arXiv preprint arXiv:2309.11495.

- Gao, L.; Dai, Z.; Pasupat, P.; Chen, A.; Chaganty, A.T.; Fan, Y.; Zhao, V.Y.; Lao, N.; Lee, H.; Juan, D.C.; et al. Rarr: Researching and revising what language models say, using language models. arXiv preprint arXiv:2210.08726.

- Pan, L.; Saxon, M.; Xu, W.; Nathani, D.; Wang, X.; Wang, W.Y. Automatically correcting large language models: Surveying the landscape of diverse self-correction strategies. arXiv preprint arXiv:2308.03188.

- Rawte, V.; Chakraborty, S.; Pathak, A.; Sarkar, A.; Tonmoy, S.; Chadha, A.; Sheth, A.; Das, A. The troubling emergence of hallucination in large language models-an extensive definition, quantification, and prescriptive remediations. 2023.

- Zhang, T.; Qiu, L.; Guo, Q.; Deng, C.; Zhang, Y.; Zhang, Z.; Zhou, C.; Wang, X.; Fu, L. Enhancing uncertainty-based hallucination detection with stronger focus. arXiv preprint arXiv:2311.13230.

- Wang, X.; Wei, J.; Schuurmans, D.; Le, Q.; Chi, E.; Narang, S.; Chowdhery, A.; Zhou, D. Self-consistency improves chain of thought reasoning in language models. arXiv preprint arXiv:2203.11171.

- Zhang, T.; Kishore, V.; Wu, F.; Weinberger, K.Q.; Artzi, Y. Bertscore: Evaluating text generation with bert. arXiv preprint arXiv:1904.09675.

- Wang, A.; Cho, K.; Lewis, M. Asking and answering questions to evaluate the factual consistency of summaries. arXiv preprint arXiv:2004.04228.

- Kryściński, W.; McCann, B.; Xiong, C.; Socher, R. Evaluating the factual consistency of abstractive text summarization. arXiv preprint arXiv:1910.12840.

- Goyal, T.; Durrett, G. Evaluating factuality in generation with dependency-level entailment. arXiv preprint arXiv:2010.05478.

- Borgeaud, S.; Mensch, A.; Hoffmann, J.; Cai, T.; Rutherford, E.; Millican, K.; Van Den Driessche, G.B.; Lespiau, J.B.; Damoc, B.; Clark, A.; et al. Improving language models by retrieving from trillions of tokens. In Proceedings of the International Conference on Machine Learning; PMLR, 2022; pp. 2206–2240.

- Thorne, J.; Vlachos, A.; Christodoulopoulos, C.; Mittal, A. FEVER: a large-scale dataset for fact extraction and VERification. arXiv preprint arXiv:1803.05355, 2018.

- Rashkin, H.; Nikolaev, V.; Lamm, M.; Aroyo, L.; Collins, M.; Das, D.; Petrov, S.; Tomar, G.S.; Turc, I.; Reitter, D. Measuring attribution in natural language generation models. Computational Linguistics 2023, 49, 777–840.

- Gekhman, Z.; Herzig, J.; Aharoni, R.; Elkind, C.; Szpektor, I. Trueteacher: Learning factual consistency evaluation with large language models. arXiv preprint arXiv:2305.11171, 2023.

- Lin, S.; Hilton, J.; Evans, O. Truthfulqa: Measuring how models mimic human falsehoods. arXiv preprint arXiv:2109.07958, 2021.

- Manakul, P.; Liusie, A.; Gales, M.J. Selfcheckgpt: Zero-resource black-box hallucination detection for generative large language models. arXiv preprint arXiv:2303.08896, 2023.

- Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Xia, F.; Chi, E.; Le, Q.V.; Zhou, D.; et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in Neural Information Processing Systems 2022, 35, 24824–24837.

- Kojima, T.; Gu, S.S.; Reid, M.; Matsuo, Y.; Iwasawa, Y. Large language models are zero-shot reasoners. Advances in Neural Information Processing Systems 2022, 35, 22199–22213.

- Dziri, N.; Kamalloo, E.; Milton, S.; Zaiane, O.; Yu, M.; Ponti, E.M.; Reddy, S. Faithdial: A faithful benchmark for information-seeking dialogue. Transactions of the Association for Computational Linguistics 2022, 10, 1473–1490.

- Wang, L.; Xu, W.; Lan, Y.; Hu, Z.; Lan, Y.; Lee, R.K.W.; Lim, E.P. Plan-and-solve prompting: Improving zero-shot chain-of-thought reasoning by large language models. arXiv preprint arXiv:2305.04091, 2023.

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.; Cao, Y. React: Synergizing reasoning and acting in language models. In Proceedings of the International Conference on Learning Representations (ICLR), 2023.

- Madaan, A.; Tandon, N.; Gupta, P.; Hallinan, S.; Gao, L.; Wiegreffe, S.; Alon, U.; Dziri, N.; Prabhumoye, S.; Yang, Y.; et al. Self-refine: Iterative refinement with self-feedback. Advances in Neural Information Processing Systems 2023, 36, 46534–46594.

- Besta, M.; Blach, N.; Kubicek, A.; Gerstenberger, R.; Podstawski, M.; Gianinazzi, L.; Gajda, J.; Lehmann, T.; Niewiadomski, H.; Nyczyk, P.; et al. Graph of thoughts: Solving elaborate problems with large language models. In Proceedings of the AAAI Conference on Artificial Intelligence, 2024, Vol. 38, pp. 17682–17690.

- Sato, S.; Akama, R.; Suzuki, J.; Inui, K. A Large Collection of Model-generated Contradictory Responses for Consistency-aware Dialogue Systems. arXiv preprint arXiv:2403.12500, 2024.

- He, J.; Pan, K.; Dong, X.; Song, Z.; Liu, Y.; Sun, Q.; Liang, Y.; Wang, H.; Zhang, E.; Zhang, J. Never Lost in the Middle: Mastering Long-Context Question Answering with Position-Agnostic Decompositional Training. arXiv preprint arXiv:2311.09198, 2023.

- Khandelwal, U.; Levy, O.; Jurafsky, D.; Zettlemoyer, L.; Lewis, M. Generalization through memorization: Nearest neighbor language models. arXiv preprint arXiv:1911.00172, 2019.

- Wu, Q.; Lan, Z.; Qian, K.; Gu, J.; Geramifard, A.; Yu, Z. Memformer: A memory-augmented transformer for sequence modeling. arXiv preprint arXiv:2010.06891, 2020.

- Lee, S.; Park, S.H.; Jo, Y.; Seo, M. Volcano: mitigating multimodal hallucination through self-feedback guided revision. arXiv preprint arXiv:2311.07362, 2023.

- Cui, P.; Hu, L. Sliding selector network with dynamic memory for extractive summarization of long documents. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2021, pp. 5881–5891.

- Li, J.; Cheng, X.; Zhao, W.X.; Nie, J.Y.; Wen, J.R. Halueval: A large-scale hallucination evaluation benchmark for large language models. arXiv preprint arXiv:2305.11747, 2023.

- Cui, L.; Wu, Y.; Liu, S.; Zhang, Y.; Zhou, M. MuTual: A dataset for multi-turn dialogue reasoning. arXiv preprint arXiv:2004.04494, 2020.

- Tonmoy, S.; Zaman, S.; Jain, V.; Rani, A.; Rawte, V.; Chadha, A.; Das, A. A comprehensive survey of hallucination mitigation techniques in large language models. arXiv preprint arXiv:2401.01313, 2024.

- Ji, Z.; Lee, N.; Frieske, R.; Yu, T.; Su, D.; Xu, Y.; Ishii, E.; Bang, Y.J.; Madotto, A.; Fung, P. Survey of hallucination in natural language generation. ACM Computing Surveys 2023, 55, 1–38.

- He, Q.; Zeng, J.; He, Q.; Liang, J.; Xiao, Y. From complex to simple: Enhancing multi-constraint complex instruction following ability of large language models. arXiv preprint arXiv:2404.15846, 2024.

- Ling, Z.; Fang, Y.; Li, X.; Huang, Z.; Lee, M.; Memisevic, R.; Su, H. Deductive verification of chain-of-thought reasoning. Advances in Neural Information Processing Systems 2023, 36, 36407–36433.

- Bayat, F.F.; Qian, K.; Han, B.; Sang, Y.; Belyi, A.; Khorshidi, S.; Wu, F.; Ilyas, I.F.; Li, Y. Fleek: Factual error detection and correction with evidence retrieved from external knowledge. arXiv preprint arXiv:2310.17119, 2023.

- Pearl, J. Causality; Cambridge university press, 2009.

- Cheng, D.; Li, J.; Liu, L.; Liu, J.; Le, T.D. Data-driven causal effect estimation based on graphical causal modelling: A survey. ACM Computing Surveys 2024, 56, 1–37.

- Yao, S.; Yu, D.; Zhao, J.; Shafran, I.; Griffiths, T.; Cao, Y.; Narasimhan, K. Tree of thoughts: Deliberate problem solving with large language models. Advances in Neural Information Processing Systems 2023, 36, 11809–11822.

- Joshi, I.; Shahid, S.; Venneti, S.M.; Vasu, M.; Zheng, Y.; Li, Y.; Krishnamurthy, B.; Chan, G.Y.Y. CoPrompter: User-Centric Evaluation of LLM Instruction Alignment for Improved Prompt Engineering. In Proceedings of the 30th International Conference on Intelligent User Interfaces, 2025, pp. 341–365.

- Sriramanan, G.; Bharti, S.; Sadasivan, V.S.; Saha, S.; Kattakinda, P.; Feizi, S. Llm-check: Investigating detection of hallucinations in large language models. Advances in Neural Information Processing Systems 2024, 37, 34188–34216.

- Rudolph, J.; Tan, S.; Tan, S. ChatGPT: Bullshit spewer or the end of traditional assessments in higher education? Journal of Applied Learning and Teaching 2023, 6, 342–363.

| Type | Sub-Causes | User Input | Model Output | Explanation |
|---|---|---|---|---|
| Factual Hallucination | Data Source Error | What is the capital city of China? | The capital city of China is Shanghai. | The model incorrectly states Shanghai as the capital of China, likely due to exposure to inaccurate or biased training data. |
| | Knowledge Boundary | What is the primary treatment for CJD? | The primary treatment for CJD is antibiotic therapy. | The model generates medically incorrect content, reflecting limitations in domain-specific or up-to-date knowledge acquisition. |
| | Factual Fabrication | When did Japan surrender in WWII? | Japan surrendered in December 1944. | The model fabricates a historical fact in the absence of verifiable knowledge or source attribution. |
| Logical Hallucination | Insufficient Reasoning | Solve | 2 | The model demonstrates a failure in symbolic reasoning by producing a mathematically incorrect solution. |
| | Broken Reasoning Chain | Mary is 4 years older than John (age 12). How old is Mary? | Mary is 16. Next year, she will be 5 years older than John. | The model fails to preserve logical continuity, producing a temporally inconsistent and unjustified conclusion. |
| Contextual Hallucination | Insufficient Context Tracking | (round-1): I watched The Lord of the Rings yesterday. It was amazing! (round-2): Yes, The Lord of the Rings is a classic. Who’s your favorite character? | (round-1): I like Gandalf the most. His wisdom and power really impressed me. Do you know who played him? (round-2): The director of The Lord of the Rings is Peter Jackson. | The model fails to maintain topic continuity across dialogue turns, resulting in a contextually misaligned response. |
| | Poor Information Integration | (round-1): I love Iron Man movies, he’s my favorite superhero. (round-2): Yes, I really admire his intelligence. When did he first appear? (round-3): I also like Captain America. Have Iron Man and Captain America ever worked together? | (round-1): Iron Man is indeed a key figure in the Marvel Universe. His real name is Tony Stark, a wealthy inventor. (round-2): Iron Man first appeared in 1963, and the Iron Man movie was released in 2008. Do you like any other superheroes? (round-3): Iron Man and Captain America have never appeared in the same movie. | The model fails to integrate prior contextual cues, resulting in a factually inaccurate and inconsistent conclusion across turns. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).