4.2.1. Neural Network Architectures

In our model design, an age estimation network pre-trained on an unoccluded dataset serves as the teacher model that guides the student model’s learning. We use three models that perform well on the age estimation task as teachers: CORAL, DLDL, and MWR.

The CORAL (Consistent Rank Logits) model exploits the ordinal relationship between age labels by converting the age estimation task into a sequence of binary classification problems. For $K$ age levels, CORAL contains $K-1$ binary classifiers, where the $k$-th binary task determines whether the sample’s age reaches or exceeds the threshold $r_k$. All classifiers share the same weight parameters but have independent bias terms, which ensures the consistency and monotonicity of the predictions. The loss function for CORAL over $N$ training samples is defined as:

$$L_{\mathrm{CORAL}} = -\sum_{i=1}^{N}\sum_{k=1}^{K-1}\left[ y_i^{(k)} \log p_i^{(k)} + \left(1 - y_i^{(k)}\right) \log\left(1 - p_i^{(k)}\right) \right]$$

where $y_i^{(k)}$ is the true label of sample $i$ in the $k$-th binary classification task, indicating whether the sample’s age reaches the threshold $r_k$, and $p_i^{(k)}$ is the probability predicted by the model for sample $i$ in the $k$-th task, calculated by the Sigmoid function:

$$p_i^{(k)} = \sigma\left(g(x_i, W) + b_k\right)$$

where $g(x_i, W)$ is the output of the shared weight layer, and $b_k$ is the bias for the $k$-th task. To ensure ordering consistency, the CORAL model requires:

$$b_1 \ge b_2 \ge \dots \ge b_{K-1}$$

ensuring that the predicted probabilities for each task satisfy monotonicity:

$$p_i^{(1)} \ge p_i^{(2)} \ge \dots \ge p_i^{(K-1)}$$
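As an illustration, the cumulative binary encoding, the shared-logit probabilities, and the summed binary cross-entropy can be sketched in a few lines of plain Python. This is a minimal sketch for a single sample, not the implementation used in the experiments; the function names, the 0-based rank index, and the 0.5 decision threshold are assumptions made for the example.

```python
import math

def coral_levels(rank, num_ranks):
    """Encode a 0-based rank as K-1 binary targets (1 if the rank reaches threshold k)."""
    return [1 if rank >= k else 0 for k in range(1, num_ranks)]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def coral_probs(shared_logit, biases):
    """One probability per binary task: shared logit plus a task-specific bias."""
    return [sigmoid(shared_logit + b) for b in biases]

def coral_loss(shared_logit, biases, levels, eps=1e-12):
    """Sum of binary cross-entropies over the K-1 tasks for one sample."""
    loss = 0.0
    for p, y in zip(coral_probs(shared_logit, biases), levels):
        loss -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return loss

def coral_predict(shared_logit, biases):
    """Predicted 0-based rank: number of tasks whose probability exceeds 0.5."""
    return sum(p > 0.5 for p in coral_probs(shared_logit, biases))

# Example with K = 5 ranks and non-increasing biases (illustrative values).
biases = [2.0, 1.0, 0.0, -1.0]
levels = coral_levels(2, 5)           # [1, 1, 0, 0]
probs = coral_probs(-0.5, biases)     # non-increasing because the biases are
```

Because every task adds its bias to the same shared logit, non-increasing biases directly give non-increasing probabilities, which is the monotonicity property described above.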

DLDL (Deep Label Distribution Learning) transforms the age estimation task into label distribution learning by constructing a probability distribution over age labels to capture the uncertainty of age. The output layer of DLDL contains one probability per age label, together representing a probability distribution over ages. By predicting a probability distribution instead of a single age value, DLDL reflects the ambiguity in age estimation, which leads to higher robustness on real-world data, especially when age labels are vague or hard to annotate accurately. The label distribution is modeled using a Gaussian distribution, with the distribution center at the true age $\mu$ and standard deviation $\sigma$, defined as follows:

$$y_k = \frac{1}{Z}\exp\left(-\frac{(k-\mu)^2}{2\sigma^2}\right)$$

where $Z = \sum_{j}\exp\left(-\frac{(j-\mu)^2}{2\sigma^2}\right)$ normalizes the distribution so that $\sum_k y_k = 1$.

The optimization goal of DLDL is to minimize the Kullback-Leibler (KL) divergence between the predicted age distribution $\hat{y}$ and the true label distribution $y$, so that the predicted distribution is as close as possible to the true label distribution:

$$L_{\mathrm{DLDL}} = \sum_{k} y_k \log\frac{y_k}{\hat{y}_k}$$

where $y_k$ is the true label distribution probability at age label $k$, and $\hat{y}_k$ is the probability predicted by the model. By learning probability distributions over neighboring ages, DLDL better captures the ambiguity of age, thus improving robustness in the presence of sparse data or imprecise labels.
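The construction of the Gaussian label distribution and the KL objective can be illustrated with a short sketch. It assumes a discrete age range of 0 to 100 and a standard deviation of 2, neither of which is specified in the text, and the function names are hypothetical.

```python
import math

def gaussian_label_distribution(true_age, sigma, ages):
    """Discrete Gaussian centered on the true age, normalized to sum to one."""
    weights = [math.exp(-((k - true_age) ** 2) / (2 * sigma ** 2)) for k in ages]
    total = sum(weights)
    return [w / total for w in weights]

def kl_divergence(target, predicted, eps=1e-12):
    """KL(target || predicted) over the age labels; eps guards against log(0)."""
    return sum(
        t * math.log((t + eps) / (p + eps))
        for t, p in zip(target, predicted)
        if t > 0
    )

ages = list(range(0, 101))                      # assumed label range
target = gaussian_label_distribution(30, 2.0, ages)
uniform = [1.0 / len(ages)] * len(ages)         # an uninformative prediction
loss_uniform = kl_divergence(target, uniform)   # positive: prediction is far from target
loss_perfect = kl_divergence(target, target)    # zero: prediction matches target
```

A prediction concentrated near the true age yields a small divergence even when its peak is off by a year or two, which is how the soft target tolerates imprecise labels.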

MWR (Moving Window Regression) is an ordinal regression algorithm that estimates ordered data precisely by predicting relative ranks ($\rho$-rank). Traditional ordinal regression typically predicts the absolute value of the target, whereas MWR uses the concept of $\rho$-rank, transforming the prediction task into estimating the relative position with respect to reference points. The definition of $\rho$-rank is as follows:

$$\rho(x, y_1, y_2) = \frac{\theta(x) - \mu(y_1, y_2)}{\tau(y_1, y_2)}$$

where $\theta(x)$ is the absolute rank of the input instance $x$, $\mu(y_1, y_2) = \frac{\theta(y_1) + \theta(y_2)}{2}$ is the mean rank of reference points $y_1$ and $y_2$, and $\tau(y_1, y_2) = \frac{\theta(y_2) - \theta(y_1)}{2}$ is half the difference in their ranks. By predicting the $\rho$-rank, the model can reconstruct the absolute rank of the input using the following formula:

$$\theta(x) = \rho(x, y_1, y_2)\,\tau(y_1, y_2) + \mu(y_1, y_2)$$

The MWR algorithm first selects several reference points using a nearest-neighbor approach and calculates their average rank as the initial estimate. Then, using this initial estimate as the center, it forms a search window by iteratively selecting two reference points, optimizing the $\rho$-rank, and progressively approaching the target value. Because the window is centered on the current estimate, $\mu$ equals that estimate, and in the $t$-th iteration the update rule is:

$$\hat{\theta}^{(t+1)}(x) = \hat{\theta}^{(t)}(x) + \tau\,\hat{\rho}^{(t)}$$

where $\hat{\rho}^{(t)}$ is the predicted $\rho$-rank in the $t$-th iteration, and $\tau$ is the window size. This optimization process continues until the predicted value converges or the maximum number of iterations is reached.

The loss function for MWR is defined as the squared error of the $\rho$-rank:

$$L_{\mathrm{MWR}} = \left(\hat{\rho} - \rho\right)^2$$

where $\hat{\rho}$ is the $\rho$-rank predicted by the model, and $\rho$ is the true value.
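The relative-rank computation and the moving-window refinement can be sketched as follows. The sketch replaces the trained network with an oracle predictor that returns the exact relative rank clipped to [-1, 1]; the clipping, the stopping tolerance, and all function names are assumptions made for illustration.

```python
def rho_rank(theta_x, theta_y1, theta_y2):
    """Relative rank of x with respect to two reference ranks (theta_y1 < theta_y2)."""
    mu = (theta_y1 + theta_y2) / 2.0
    tau = (theta_y2 - theta_y1) / 2.0
    return (theta_x - mu) / tau

def reconstruct_rank(rho, theta_y1, theta_y2):
    """Invert rho_rank: recover the absolute rank from the relative rank."""
    mu = (theta_y1 + theta_y2) / 2.0
    tau = (theta_y2 - theta_y1) / 2.0
    return rho * tau + mu

def squared_rho_loss(rho_hat, rho):
    return (rho_hat - rho) ** 2

def moving_window_refine(predict_rho, initial_rank, tau, max_iters=10, tol=1e-3):
    """Shift a window of half-width tau toward the target until the estimate settles."""
    estimate = initial_rank
    for _ in range(max_iters):
        rho_hat = predict_rho(estimate - tau, estimate + tau)
        updated = estimate + tau * rho_hat
        if abs(updated - estimate) < tol:
            return updated
        estimate = updated
    return estimate

# Oracle stand-in for the trained predictor (hypothetical): exact rho, clipped to [-1, 1].
true_age = 37.0
oracle = lambda lo, hi: max(-1.0, min(1.0, rho_rank(true_age, lo, hi)))
refined = moving_window_refine(oracle, initial_rank=25.0, tau=5.0)
```

With the initial estimate of 25 and a half-width of 5, the window moves to 30, then 35, and the third update lands on the target once it falls inside the window.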

4.2.2. Implementation Details

The architectures of the three age estimation models consist of an encoder and a regression prediction module. To avoid introducing empirical bias by designing our own CNN architecture for comparing ordinal regression methods, we unify the encoders of all three models as the standard ResNet-34 architecture [42] and retain each model’s original regression module structure and loss function to train the teacher network. During training, we use a batch size of 128, train for 50 epochs, and optimize with the Adam optimizer, with an initial learning rate of

, which is gradually reduced based on the validation set performance. During the first 20 epochs, we freeze the parameters of the feature extraction network and optimize only the output layer for the age estimation task; after 20 epochs, we unfreeze these parameters and optimize the entire network, allowing the encoder to learn features useful for the output layer’s age estimation. The best model is selected based on its MAE on the validation set.
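The two-phase teacher schedule can be summarized with a small helper. This is a framework-agnostic sketch: the function names and the 0-based epoch convention are assumptions, and a real training loop would translate the returned flags into the framework’s own freezing mechanism.

```python
def teacher_trainable_parts(epoch, freeze_epochs=20):
    """Which parts of the teacher are optimized at a given 0-based epoch."""
    return {"encoder": epoch >= freeze_epochs, "output_layer": True}

def select_best_epoch(val_mae_per_epoch):
    """Index of the epoch with the lowest validation MAE."""
    return min(range(len(val_mae_per_epoch)), key=val_mae_per_epoch.__getitem__)

# Epochs 0-19 train only the output layer; epochs 20-49 train the whole network.
schedule = [teacher_trainable_parts(epoch) for epoch in range(50)]
```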

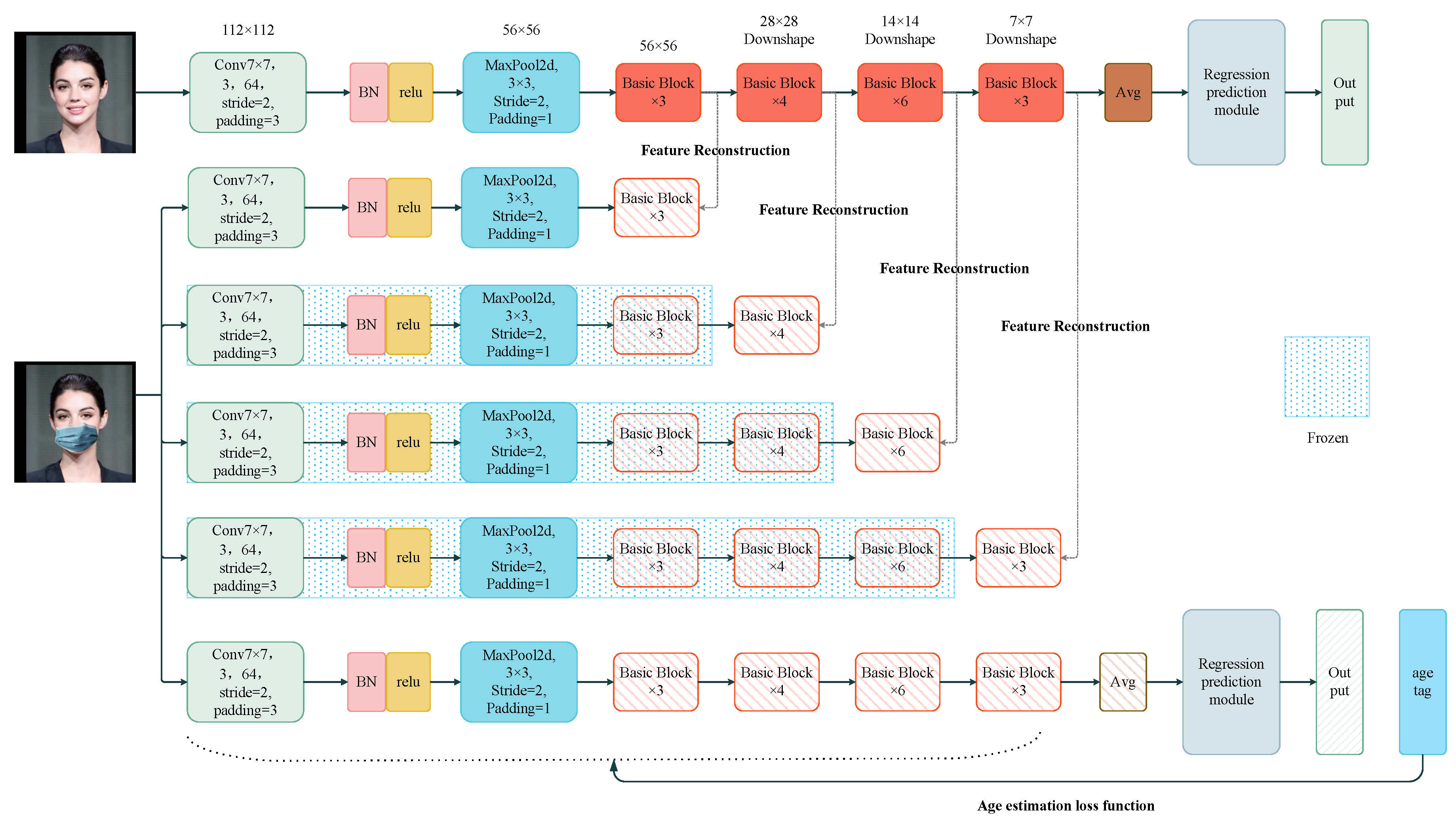

When training the student model, the student extracts features from the occluded dataset, while the feature maps from each layer of the teacher model serve as supervisory signals that guide the student in optimizing the same layer of the network. Because the core task of this study is to reconstruct the features of occluded images rather than to compress the model, the student and teacher networks keep the same structure during training. Training follows a layer-wise approach: once a layer of the student model has been learned, its parameters are frozen and the next layer is learned, until the features of all hidden layers are reconstructed.

Initially, only the student model’s bottom feature layer (layer1) is unfrozen, with the remaining three residual blocks frozen. L2 loss is used to bring the student model’s low-level feature map closer to that of the teacher model, trained for 40 epochs with a learning rate of . Next, the second feature layer (layer2) is unfrozen, while the others remain frozen, and L2 loss is used to optimize feature differences, trained for 40 epochs with a learning rate of . Then, the middle (layer3) and high (layer4) layers are unfrozen in turn, each trained for 40 epochs, continuing to optimize feature differences with L2 loss, with the learning rate gradually reduced to and . Through this sequential unfreezing, the student model approximates the teacher model’s feature representation layer by layer, starting with simple low-level features and gradually building up to complex high-level features, ultimately achieving high-quality feature reconstruction.
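The stage plan for the student can be written down as plain data, together with the feature-matching loss. This is a sketch under stated assumptions: the learning rate of each stage is left as a user-supplied value, the L2 loss is taken as the mean squared difference over a flattened feature map, and the names are hypothetical.

```python
STAGES = ["layer1", "layer2", "layer3", "layer4"]

def build_layerwise_schedule(stages=STAGES, epochs_per_stage=40, learning_rates=None):
    """One stage per residual block: only that block is trainable, the rest are frozen."""
    learning_rates = learning_rates or [None] * len(stages)  # to be supplied by the user
    return [
        {
            "train": name,
            "frozen": [other for other in stages if other != name],
            "epochs": epochs_per_stage,
            "lr": lr,
        }
        for name, lr in zip(stages, learning_rates)
    ]

def l2_feature_loss(student_features, teacher_features):
    """Mean squared difference between two flattened feature maps of equal length."""
    if len(student_features) != len(teacher_features):
        raise ValueError("feature maps must have the same number of elements")
    squared = [(s - t) ** 2 for s, t in zip(student_features, teacher_features)]
    return sum(squared) / len(squared)

schedule = build_layerwise_schedule()
total_epochs = sum(stage["epochs"] for stage in schedule)  # 4 stages of 40 epochs each
```

Keeping the schedule as data makes it easy to check that exactly one block is trainable per stage before any training code runs.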

However, features reconstructed through this method may still retain some meaningless or even harmful noisy features. Therefore, the final step of training involves unfreezing all network layers and performing global fine-tuning for 50 epochs using the original age labels to further eliminate noisy features and retain valuable ones. During the global fine-tuning process, the loss function should remain consistent with the teacher model’s loss function during pre-training to ensure the student model learns the teacher model’s feature expression to the greatest extent.

4.2.3. Ablation Study

We assess the accuracy of age estimation with the mean absolute error (MAE), the most commonly used metric [43,44], defined in Equation (1):

$$\mathrm{MAE} = \frac{1}{N}\sum_{i=1}^{N}\left|y_i - \hat{y}_i\right| \tag{1}$$

where $N$ is the total number of samples, $y_i$ represents the actual age, and $\hat{y}_i$ denotes the predicted age. A lower MAE value indicates better performance in age estimation.
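For concreteness, the metric can be computed as in the short sketch below; the function name and the sample ages are illustrative only.

```python
def mean_absolute_error(true_ages, predicted_ages):
    """Average absolute difference between actual and predicted ages."""
    if len(true_ages) != len(predicted_ages) or not true_ages:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [abs(y - y_hat) for y, y_hat in zip(true_ages, predicted_ages)]
    return sum(errors) / len(errors)

# Absolute errors of 2, 1, and 4 years average to 7/3 years.
mae = mean_absolute_error([20, 30, 40], [22, 29, 44])
```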

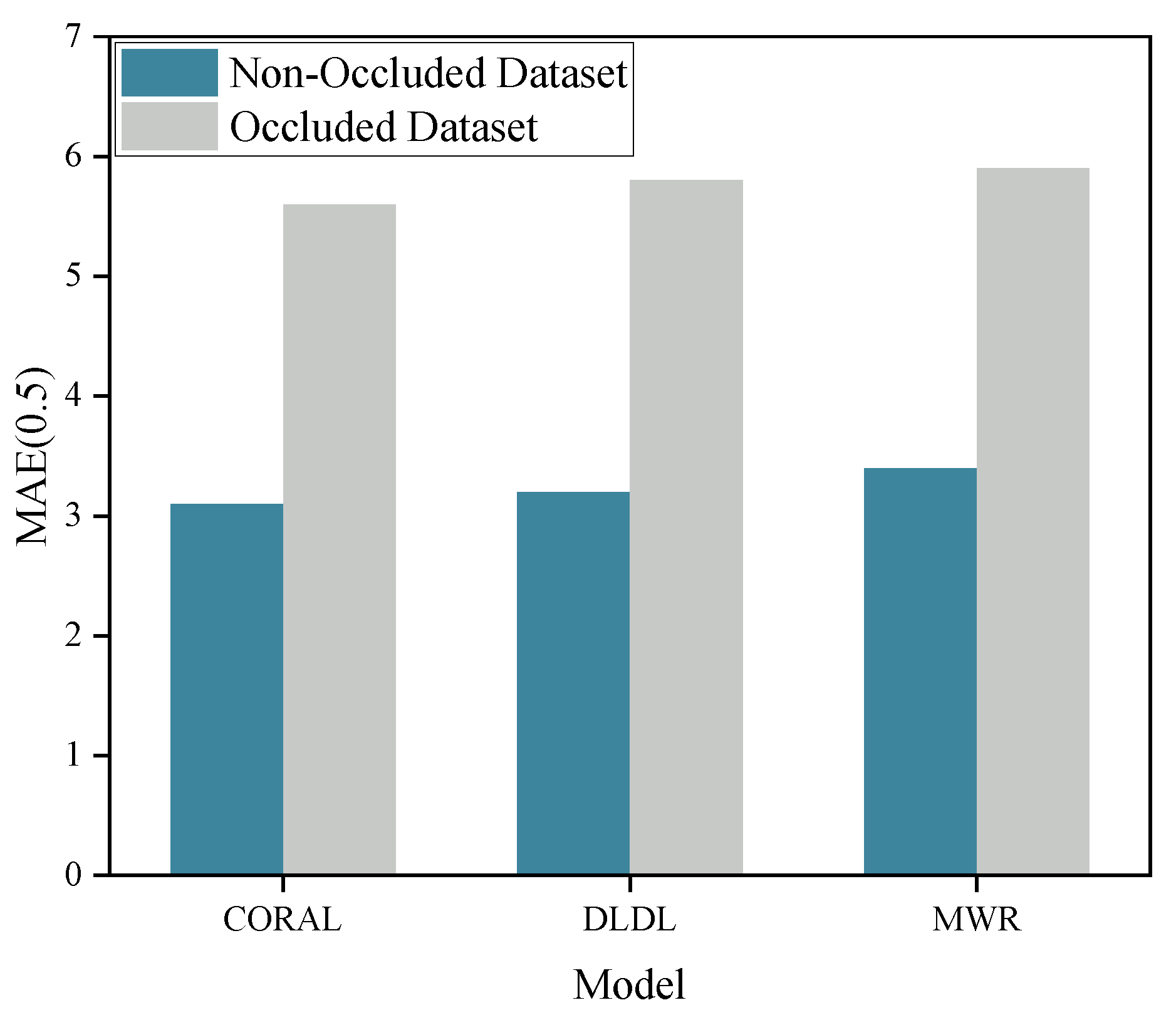

The MAE values for the CORAL, DLDL, and MWR models, when directly tested on the unoccluded dataset, were 3.12, 3.23, and 3.39, respectively. However, upon testing on a dataset with 50% randomly occluded images, the MAE values increased to 5.62, 5.82, and 5.89, respectively. While all three models exhibited strong performance on the unoccluded dataset, the tests on the dataset with random occlusions revealed a substantial increase in the MAE for all models, indicating that facial occlusion significantly impairs the models’ ability to estimate age accurately.

Figure 5 visually illustrates the extent of the effect of random occlusion on model performance.

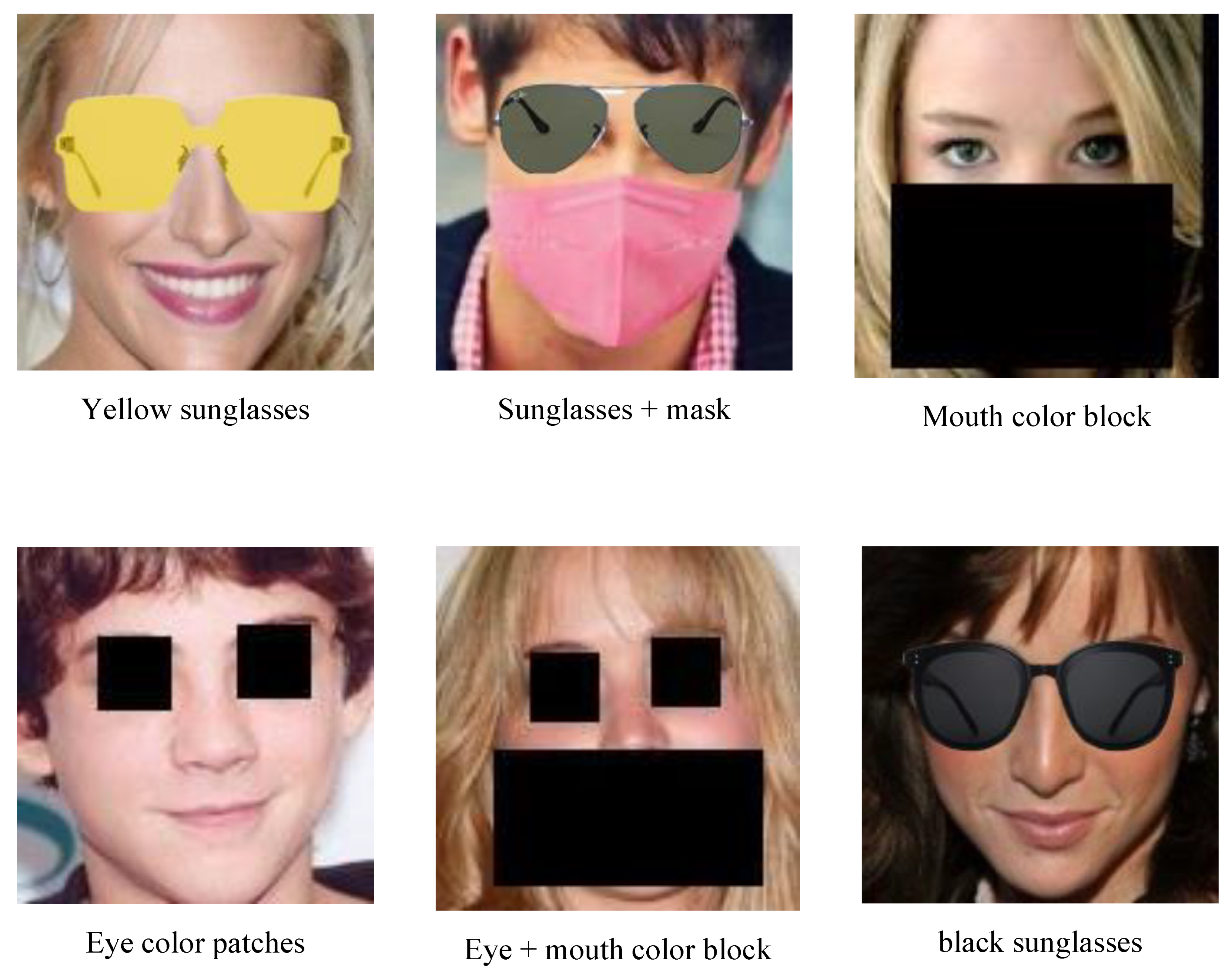

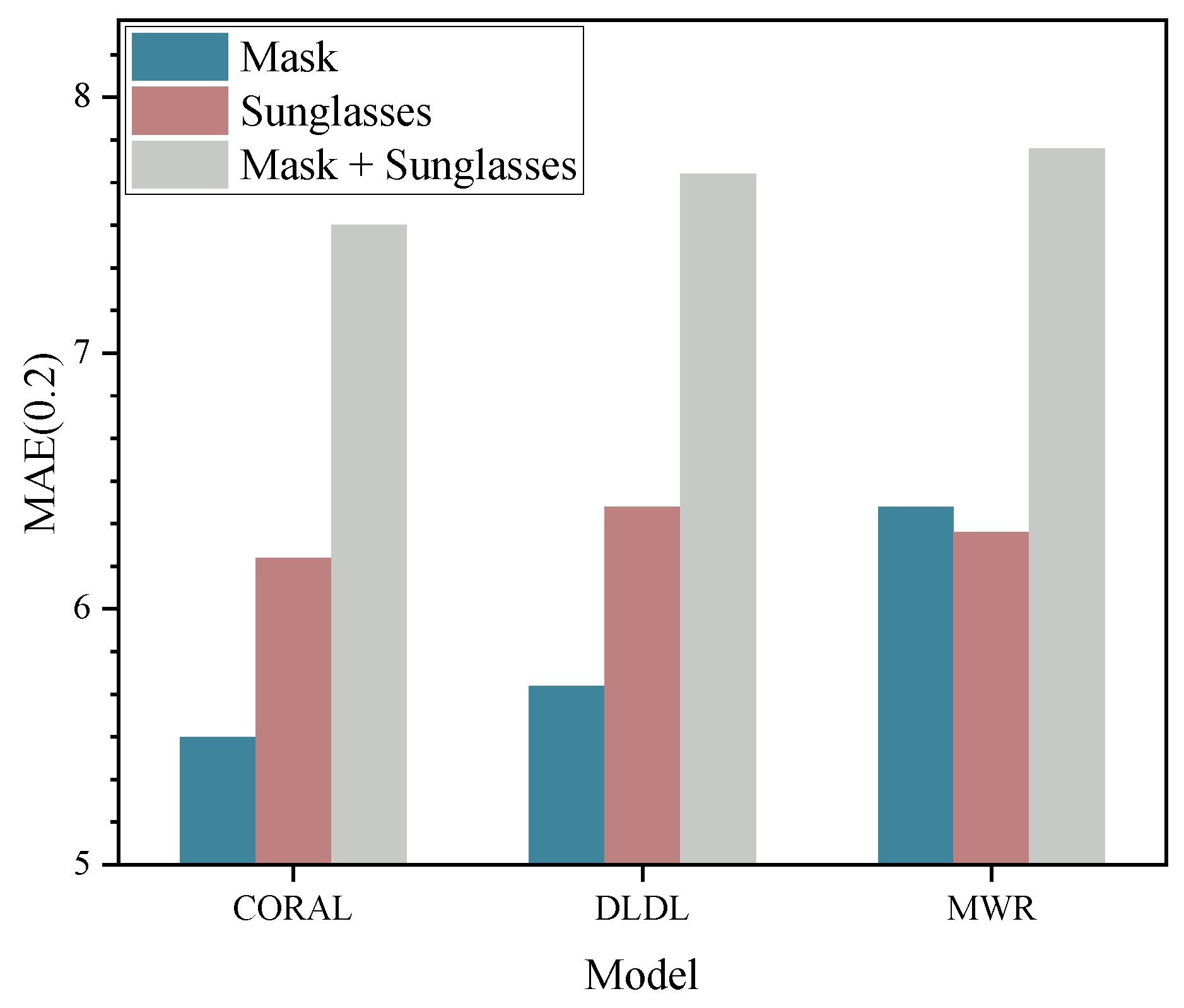

To analyze the specific impact of different occlusion methods on model accuracy, we evaluated the three pre-trained baseline models under various occlusion conditions. For single occlusions, the MAE values with a mask were 5.51 (CORAL), 5.72 (DLDL), and 6.44 (MWR), and with sunglasses they were 6.21 (CORAL), 6.44 (DLDL), and 6.32 (MWR). When a mask and sunglasses were worn simultaneously, the MAE values increased to 7.52 (CORAL), 7.71 (DLDL), and 7.82 (MWR). For CORAL and DLDL, occlusion of the eyes had a more pronounced impact than occlusion of the mouth and nose, whereas the reported MWR values show the opposite ordering. When both types of occlusion were present, the performance of all three models declined markedly.

Figure 6 presents the impact of various occlusion methods on model performance. Furthermore, we examined the effect of using color blocks as a direct occlusion technique instead of physical occlusion objects. Color block occlusion also reduced model accuracy, although slightly less severely than physical occlusion objects. Our analysis suggests that color blocks introduce less complexity, whereas diverse physical occlusion objects, with varying colors, textures, and shapes, introduce greater uncertainty and therefore more complex noise.

Table 3 provides a comparison of the impact of all occlusion methods on model performance.

To validate the impact of layer-wise feature reconstruction on the performance of age estimation models, we tested the MAE values of three baseline models on the MORPH-2 dataset with 100% random occlusion. The experimental results show that after layer-wise feature reconstruction, all three models achieved varying degrees of MAE reduction. This demonstrates that reconstructing occluded features provides age estimation models with more informative facial features, thereby effectively improving the accuracy of age estimation.

Table 4 shows the effect of feature reconstruction on model accuracy.

To further validate the impact of using original age labels on the accuracy of age estimation models, we compared the accuracy of the three baseline models after layer-wise feature reconstruction, both before and after fine-tuning with age labels. Table 5 presents the MAE values of the models after global fine-tuning. The results indicate that all three models achieved further accuracy improvements, demonstrating that fine-tuning better adapts the models to the age distribution within the dataset, enabling more accurate estimations across different age groups.

Table 4. MAE of the models with and without layer-wise feature reconstruction on the MORPH-2 dataset with random occlusion.

| Model | MAE (Baseline) | MAE (Layer-wise Feature Reconstruction) |
| --- | --- | --- |
| CORAL | 6.62 | 4.59 (↓ 2.03) |
| DLDL | 6.88 | 4.91 (↓ 1.97) |
| MWR | 6.85 | 5.08 (↓ 1.77) |

Although feature reconstruction enhances the model’s capability, it still retains some noisy features. By incorporating age labels for global fine-tuning, the models effectively eliminate these noise features, resulting in more precise final estimations. Therefore, the fine-tuning process not only improves model performance but also significantly enhances the model’s adaptability to complex data characteristics, thereby boosting overall model accuracy.

Table 5. MAE of the models before and after global fine-tuning with original age labels.

| Model | MAE (Layer-wise Feature Reconstruction) | MAE (Layer-wise Feature Reconstruction + Age Label Fine-tuning) |
| --- | --- | --- |
| CORAL | 4.59 | 4.27 (↓ 0.32) |
| DLDL | 4.91 | 4.43 (↓ 0.48) |
| MWR | 5.08 | 4.87 (↓ 0.21) |
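The two ablation stages can be combined into an overall reduction per model with a few lines of arithmetic. The sketch below uses only the MAE values reported in Tables 4 and 5 and assumes that both tables were measured on the same occluded test set; the relative percentages are derived here and are not reported in the tables.

```python
# (baseline, after feature reconstruction, after fine-tuning), from Tables 4 and 5
RESULTS = {
    "CORAL": (6.62, 4.59, 4.27),
    "DLDL": (6.88, 4.91, 4.43),
    "MWR": (6.85, 5.08, 4.87),
}

def overall_reduction(baseline, final):
    """Absolute and relative (percent) MAE reduction from baseline to final model."""
    absolute = baseline - final
    return round(absolute, 2), round(100.0 * absolute / baseline, 1)

summary = {
    model: overall_reduction(baseline, final)
    for model, (baseline, _, final) in RESULTS.items()
}
# summary["CORAL"] is (2.35, 35.5): 2.03 from reconstruction plus 0.32 from fine-tuning
```

Under that assumption, most of the improvement comes from the reconstruction stage, with fine-tuning contributing a smaller additional gain.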

4.2.4. Comparison Experiment

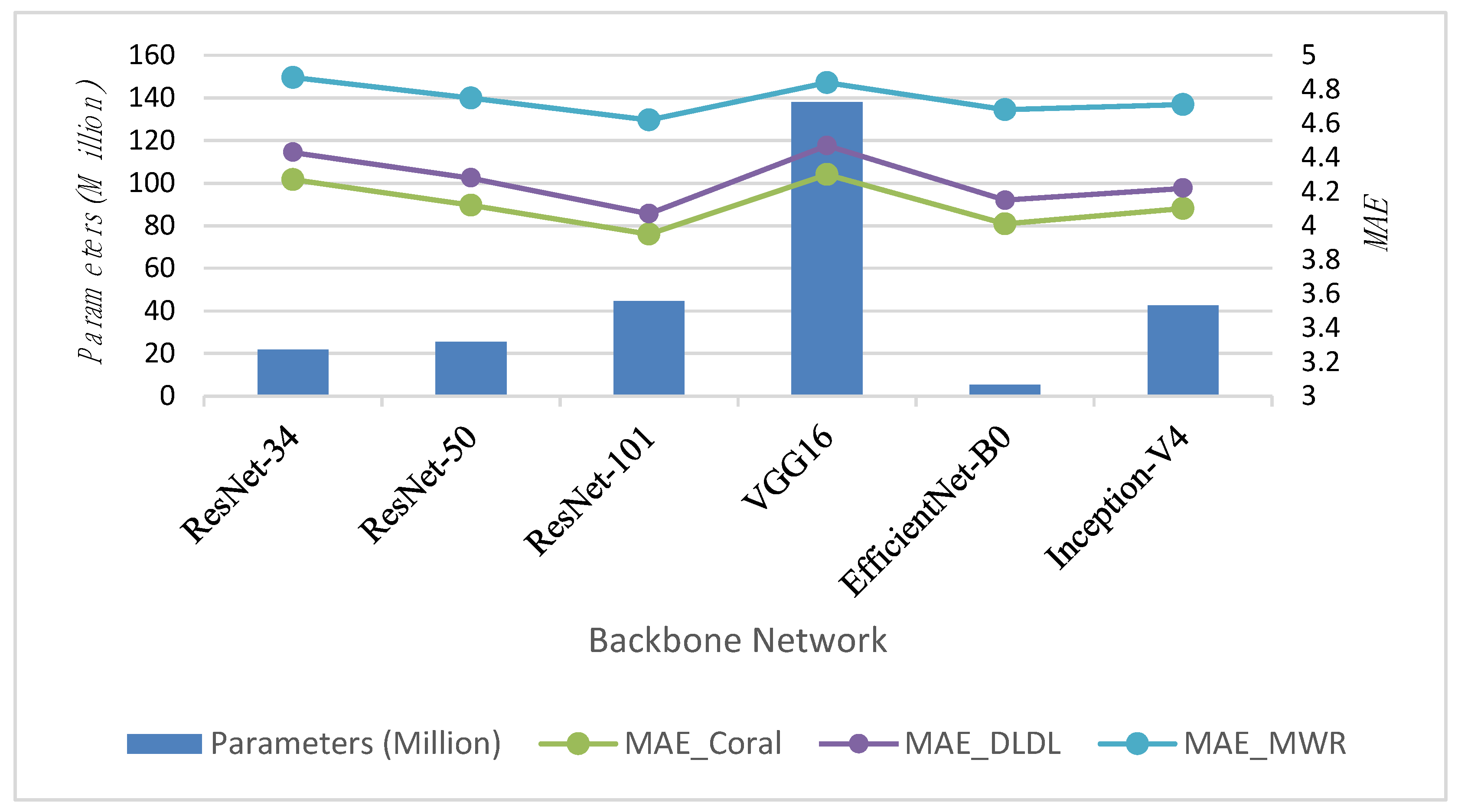

To test the impact of different backbone networks on model performance, we tested six backbone networks: ResNet-34, ResNet-50, ResNet-101, VGG16 [45], EfficientNet-B0 [46], and InceptionV4 [47], evaluating their performance after layer-wise feature reconstruction and age label fine-tuning, as shown in Table 6. EfficientNet employs a compound scaling method. By simultaneously adjusting the network’s width, depth, and resolution, it balances model accuracy and computational efficiency. The design goal of EfficientNet is to achieve more efficient utilization of parameters and computational resources, so it generally delivers better performance with lower computational resources compared to the ResNet series architectures. However, when computational resources are abundant, ResNet networks can achieve better performance as the network depth increases. Although VGG16 performs well in traditional classification tasks, age estimation is a regression task rather than a simple classification task. Its relatively simple structure may not be sufficient to capture the complex features needed, and the excessive number of fully connected layers makes the VGG16 network parameter-heavy, resulting in lower computational efficiency and requiring more computational resources during training.

Figure 7 visually demonstrates the impact of different backbone networks on the performance of the age estimation task.

To fully demonstrate the effectiveness of the layer-by-layer distillation approach, we directly trained and fine-tuned three baseline models on the MORPH-2 dataset with multiple occlusion types. The occlusion types in the dataset are shown in Table 7, and we compare the experimental results with those of our student model.

The results in Table 8 show that the layer-wise feature reconstruction combined with fine-tuning significantly outperforms the baseline model trained directly on the occluded dataset. This is because the model trained directly on the occluded dataset relies solely on visible features, failing to fully exploit the relationship between the occluded and unoccluded regions, which results in suboptimal feature representations. Additionally, the model learns a substantial amount of noise features introduced by occlusions, further degrading its performance. In contrast, layer-wise feature reconstruction allows the model to progressively reconstruct features from low-level to high-level, ensuring that it can learn more informative and accurate facial features while gradually mitigating the impact of noise features.

Table 6. Comparison of Models with Parameters, Layers, and MAE under different regression methods.

| Model | Parameters (Millions) | Layers | MAE (CORAL) | MAE (DLDL) | MAE (MWR) |
| --- | --- | --- | --- | --- | --- |
| ResNet-34 | 21.8 | 34 | 4.27 | 4.43 | 4.87 |
| ResNet-50 | 25.6 | 50 | 4.12 | 4.28 | 4.75 |
| ResNet-101 | 44.5 | 101 | 3.95 | 4.07 | 4.62 |
| VGG16 [45] | 138.0 | 16 | 4.30 | 4.47 | 4.84 |
| EfficientNet-B0 [46] | 5.3 | 237 | 4.01 | 4.15 | 4.68 |
| InceptionV4 [47] | 42.5 | 48 | 4.10 | 4.22 | 4.71 |

Figure 7. Performance comparison of six backbone networks (ResNet-34, ResNet-50, ResNet-101, VGG16, EfficientNet-B0, and InceptionV4) on three age estimation tasks: CORAL, DLDL, and MWR.

Table 7. Proportion of Six Occlusion Types in the MORPH-2 Dataset

| Occlusion Type | Proportion (%) |
| --- | --- |
| Mask | 15 |
| Sunglasses | 15 |
| Mask + Sunglasses | 20 |
| Mouth Color Block | 15 |
| Eyes Color Block | 15 |
| Mouth + Eyes Color Block | 20 |
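One way to realize the proportions in Table 7 is to draw an occlusion type for each image with the listed weights. The sketch below assumes independent random assignment with a fixed seed; the text does not state whether the split was sampled or exact, and the identifiers are hypothetical.

```python
import random

# Proportions from Table 7, expressed as fractions.
OCCLUSION_PROPORTIONS = {
    "mask": 0.15,
    "sunglasses": 0.15,
    "mask+sunglasses": 0.20,
    "mouth_color_block": 0.15,
    "eyes_color_block": 0.15,
    "mouth+eyes_color_block": 0.20,
}

def assign_occlusions(num_images, seed=0):
    """Draw one occlusion type per image, weighted by the Table 7 proportions."""
    rng = random.Random(seed)
    types = list(OCCLUSION_PROPORTIONS)
    weights = list(OCCLUSION_PROPORTIONS.values())
    return rng.choices(types, weights=weights, k=num_images)

assignments = assign_occlusions(20000)
mask_fraction = assignments.count("mask") / len(assignments)  # close to 0.15
```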

4.2.5. Cross-Dataset Comparison with Multiple Advanced Models

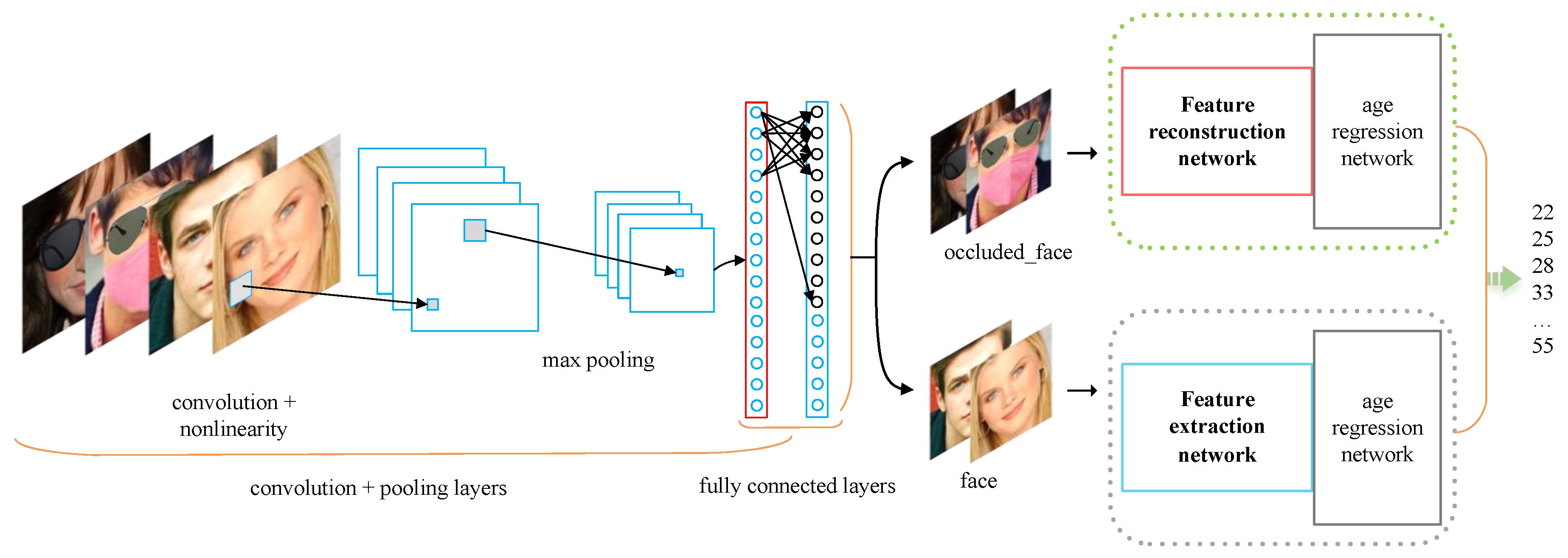

To ensure the model can correctly route the images, we trained a binary classification convolutional neural network for occlusion detection. The model’s class labels were set to “face” and “occluded_face” to allow the model to effectively distinguish between faces with and without occlusions. In the network architecture, the input layer accepts color face images of size 224x224 pixels. Features are then extracted through multiple convolutional and pooling layers, followed by a global average pooling layer that converts the feature map into a one-dimensional vector. The output layer contains two neurons, using the Sigmoid activation function to output the probability for each class.

During training, binary cross-entropy was used as the loss function to optimize the model’s ability to distinguish between the two classes. The optimizer was Adam with an initial learning rate of 0.001, a training batch size of 32, and 50 epochs. To enhance the model’s generalization ability, we applied various data augmentations, including random rotations, flips, and color jittering. The performance on the validation set was monitored during training, and an early stopping strategy was applied to prevent overfitting. In this way, the trained detector was able to accurately identify whether the face region in the input image had occlusion, achieving good classification results.
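The role of the detector in routing can be illustrated with a small sketch. It assumes that images flagged as occluded are sent to the student model and all others to the teacher model, with a decision threshold of 0.5 on the “occluded_face” probability; the callables below are stand-ins for the trained networks, and all names are hypothetical.

```python
import math

def binary_cross_entropy(probability, label, eps=1e-12):
    """Loss for one prediction: label is 1 for occluded_face, 0 for face."""
    return -(label * math.log(probability + eps)
             + (1 - label) * math.log(1 - probability + eps))

def route_and_estimate(image, detector, teacher, student, threshold=0.5):
    """Send the image to the student if it looks occluded, otherwise to the teacher."""
    p_occluded = detector(image)
    if p_occluded >= threshold:
        return "student", student(image)
    return "teacher", teacher(image)

# Stand-in models for illustration only.
detector = lambda image: image["p_occluded"]
teacher = lambda image: 30.0
student = lambda image: 32.0

branch, age = route_and_estimate({"p_occluded": 0.9}, detector, teacher, student)
```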

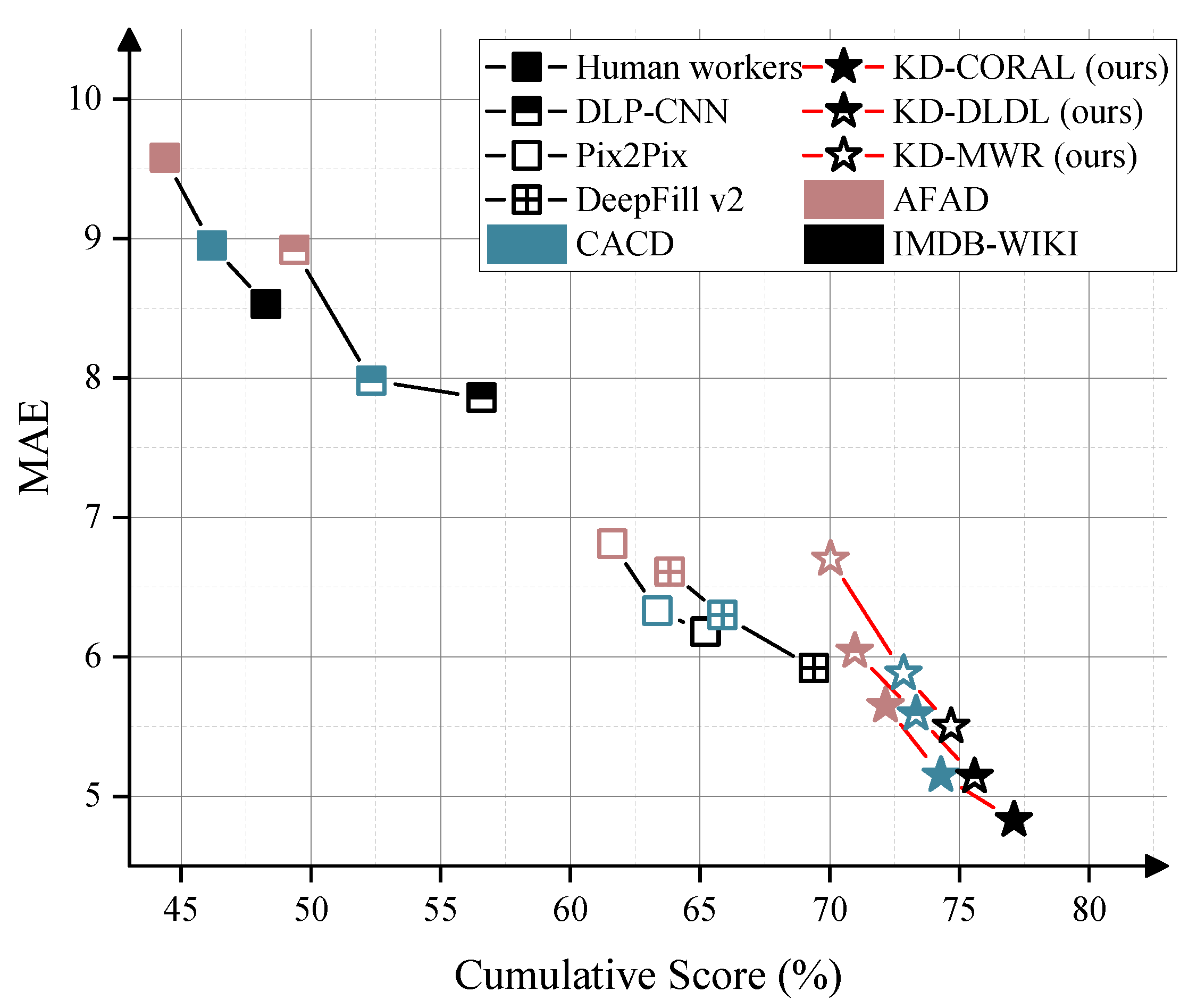

To validate the cross-dataset performance of the model, we conducted random occlusion tests on the AFAD, CACD, and IMDB-WIKI datasets, comparing the results with several mainstream occluded face age estimation models. A group of human workers, who manually estimated ages from occluded facial images, served as the baseline for the age regression task. We compared the performance of human workers, DLP-CNN [48], Pix2Pix [49], DeepFill v2 [50], LCA-GAN [1], MCGRL [6], and our methods (using CORAL, DLDL, and MWR as the underlying models) for occlusion-aware facial age estimation in terms of MAE and CS (%). All comparison models were retrained on the MORPH-2 dataset, with the occlusion types divided according to Table 7, ensuring consistency in the training conditions. For all methods, we performed tests on different datasets where the test set randomly applied various mixed occlusions to 50% of the facial images, while the other 50% remained unchanged.
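The CS metric is the cumulative score commonly used in age estimation: the percentage of test samples whose absolute error does not exceed a tolerance in years. A minimal sketch follows; the tolerance is a parameter because the value used in the experiments is not stated here, and the function name and sample ages are illustrative.

```python
def cumulative_score(true_ages, predicted_ages, tolerance):
    """Percentage of samples with absolute age error at most `tolerance` years."""
    if len(true_ages) != len(predicted_ages) or not true_ages:
        raise ValueError("inputs must be non-empty and of equal length")
    hits = sum(
        abs(y - y_hat) <= tolerance
        for y, y_hat in zip(true_ages, predicted_ages)
    )
    return 100.0 * hits / len(true_ages)

# Absolute errors are 2, 6, 1, and 0 years; three of four fall within 5 years.
cs = cumulative_score([20, 30, 40, 50], [22, 36, 41, 50], tolerance=5)
```

Unlike MAE, a higher CS indicates better performance, so the two metrics should be read in opposite directions.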

Table 9 shows that the KD-CORAL model, using CORAL as the age regressor, achieves the best performance in our experiments across multiple datasets. Additionally, we observed significant differences in MAE and CS performance among the various models across the three datasets. For all methods, the overall performance on different datasets follows the order: AFAD > CACD > IMDB-WIKI. We attribute this to the fact that the CACD dataset includes some low-quality images (e.g., 20×20 pixels) and has a wide age range (14–62 years), requiring the model to consider a broader age span. The IMDB-WIKI dataset, on the other hand, uses an automated labeling process, resulting in lower annotation quality and significant label noise. We found many photos where the apparent age did not match the labeled age, and some images even lacked faces. Additionally, there is a data imbalance issue, with a severe lack of images in younger age groups. As a result, model performance declined noticeably when tested on this dataset. Because LCA-GAN employs a more complex attention mechanism for pixel-level de-occlusion reconstruction, it exhibited the best performance on the high-quality AFAD dataset. However, its performance degraded significantly on the lower-quality CACD and IMDB-WIKI datasets. Experimental results show that our method still performs well under lower image quality, as the layer-by-layer feature reconstruction allows the model to focus on different feature dimensions of the image, which improves the model’s robustness.