Submitted: 04 April 2025

Posted: 07 April 2025

Abstract

Keywords:

1. Introduction

2. Related Work

3. Methodology (Further Abbreviated)

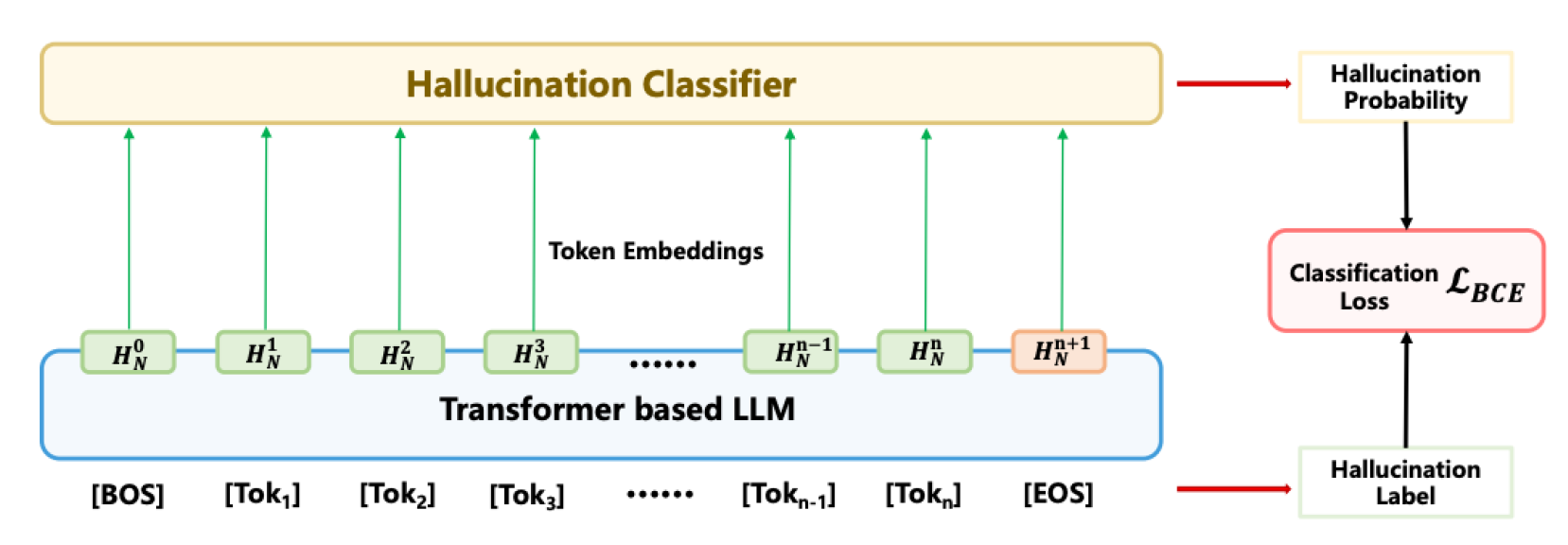

3.1. Model Network (Further Abbreviated)

3.2. Transformer Encoder Layer

3.3. Hierarchical Attention Mechanism (Abbreviated)

3.4. Multi-level Self-Attention Weighting

3.5. LoRA-Based Fine-Tuning (Abbreviated)

- scales LoRA’s impact on transforming hidden states.

- is the previous layer’s hidden state, updated by a low-rank transformation.

- is trained on hallucination detection data, distinguishing between genuine and hallucinatory responses.
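The three quantities described in the list above (a scaling factor, the previous layer's hidden state, and the trained low-rank update) can be illustrated with a minimal sketch. It assumes the standard LoRA formulation, `h = W0·h_prev + (alpha / r)·B·A·h_prev`, because the section's own equation and symbols did not survive. The names `W0`, `A`, `B`, `alpha`, `r`, and `lora_forward` are illustrative assumptions rather than the authors' notation, and the toy dimensions are hypothetical.

```python
import random


def matvec(M, v):
    """Multiply matrix M (a list of rows) by vector v."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in M]


def lora_forward(W0, A, B, h_prev, alpha):
    """Return W0 @ h_prev + (alpha / r) * B @ (A @ h_prev).

    W0 is the frozen pre-trained weight (d x d); A (r x d) and B (d x r)
    are the only trainable matrices; alpha scales the low-rank update.
    """
    r = len(A)  # rank = number of rows of A
    base = matvec(W0, h_prev)
    low_rank = matvec(B, matvec(A, h_prev))
    scale = alpha / r
    return [b + scale * d for b, d in zip(base, low_rank)]


# Hypothetical toy sizes: hidden size 4, rank 2.
random.seed(0)
d, r, alpha = 4, 2, 8.0
W0 = [[random.gauss(0, 1) for _ in range(d)] for _ in range(d)]    # frozen
A = [[random.gauss(0, 0.02) for _ in range(d)] for _ in range(r)]  # trainable
B = [[0.0] * r for _ in range(d)]                                  # trainable, zero-initialised
h_prev = [1.0, -0.5, 0.25, 2.0]  # previous layer's hidden state

h_frozen = matvec(W0, h_prev)
h_lora = lora_forward(W0, A, B, h_prev, alpha)

# With B initialised to zero, the adapted layer starts out identical
# to the frozen pre-trained layer.
print(h_lora == h_frozen)  # True
```

Initialising `B` to zero is the usual LoRA choice: fine-tuning starts from the pre-trained model's behaviour, and only `A` and `B` move as the model is trained on hallucination detection data.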

3.5.1. Benefits of LoRA in Hallucination Detection (Abbreviated)

- Reduced Memory Footprint: Only a small parameter subset is updated, reducing memory usage.

- Preserved Generalization: Retaining pre-trained parameters keeps general linguistic abilities while enhancing hallucination detection.

- Increased Adaptability: LoRA facilitates targeted updates in transformer layers, especially within self-attention and cross-attention, where prompt-response correlations are analyzed.
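The memory claim in the list above can be made concrete with a rough parameter count. The sketch below assumes a single square projection matrix with RoBERTa-base's hidden size of 768 and a LoRA rank of 8. The rank is an illustrative assumption, since the section does not state the value used, and `lora_param_counts` is a hypothetical helper.

```python
def lora_param_counts(d_out, d_in, rank):
    """Return (full, lora) trainable-parameter counts for one weight matrix.

    Full fine-tuning updates all d_out * d_in entries; LoRA trains only
    A (rank x d_in) and B (d_out x rank).
    """
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return full, lora


# Assumed sizes: hidden size 768 (RoBERTa-base), rank 8 (hypothetical).
full, lora = lora_param_counts(768, 768, 8)
print(full)                   # 589824
print(lora)                   # 12288
print(f"{lora / full:.2%}")   # 2.08%
```

Under these assumptions, each adapted matrix trains roughly 2% of the parameters that full fine-tuning would update, while the pre-trained weights stay frozen.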

3.5.2. Layer-Specific Adaptation Strategy (Abbreviated)

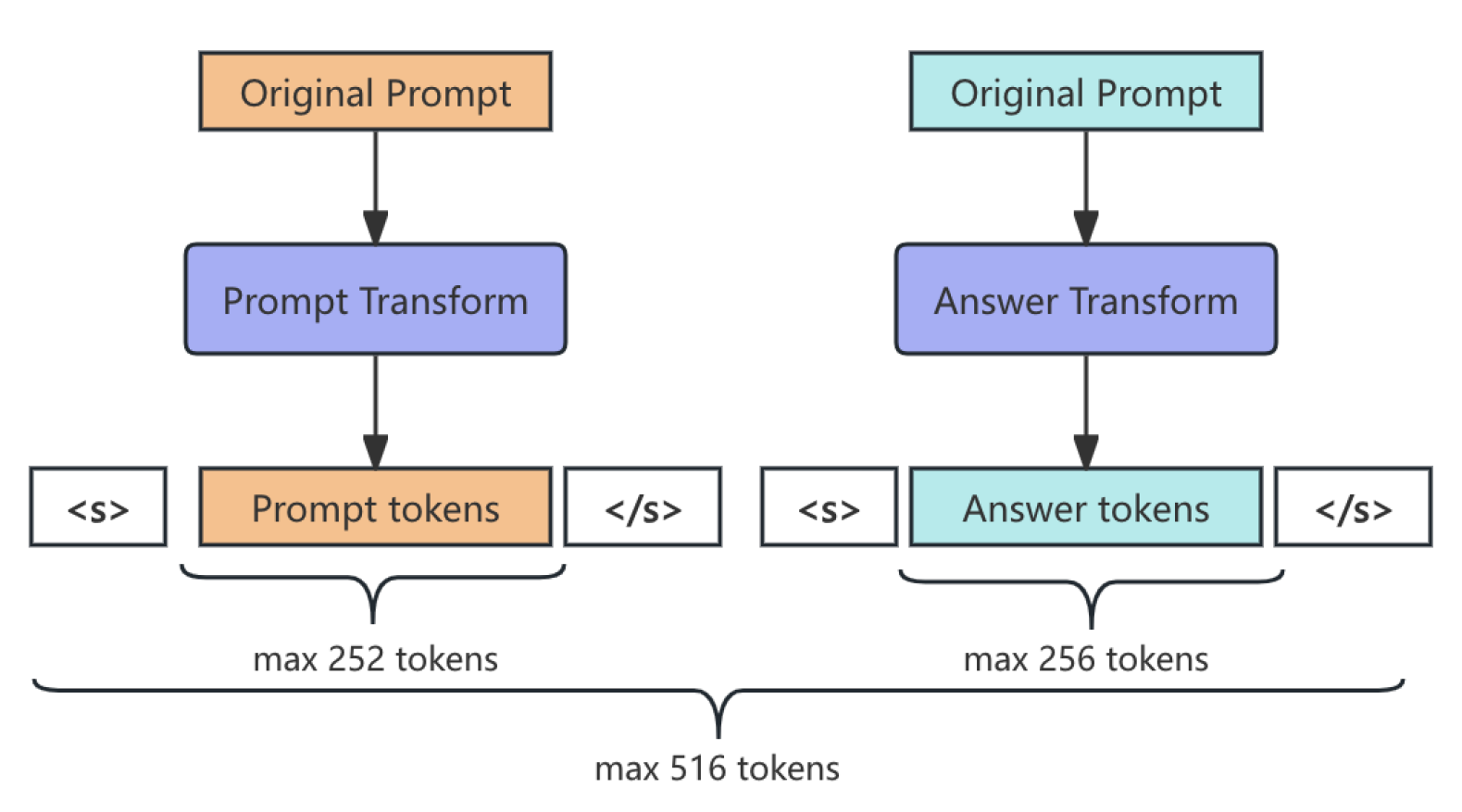

3.6. Prompt-Answer Token Segmentation

3.7. Function Definitions

4. Evaluation Metrics (Abbreviated)

4.1. Accuracy (Abbreviated)

4.2. Precision and Recall (Abbreviated)

4.3. F1 Score

4.4. Area Under the ROC Curve (AUC-ROC) (Abbreviated)

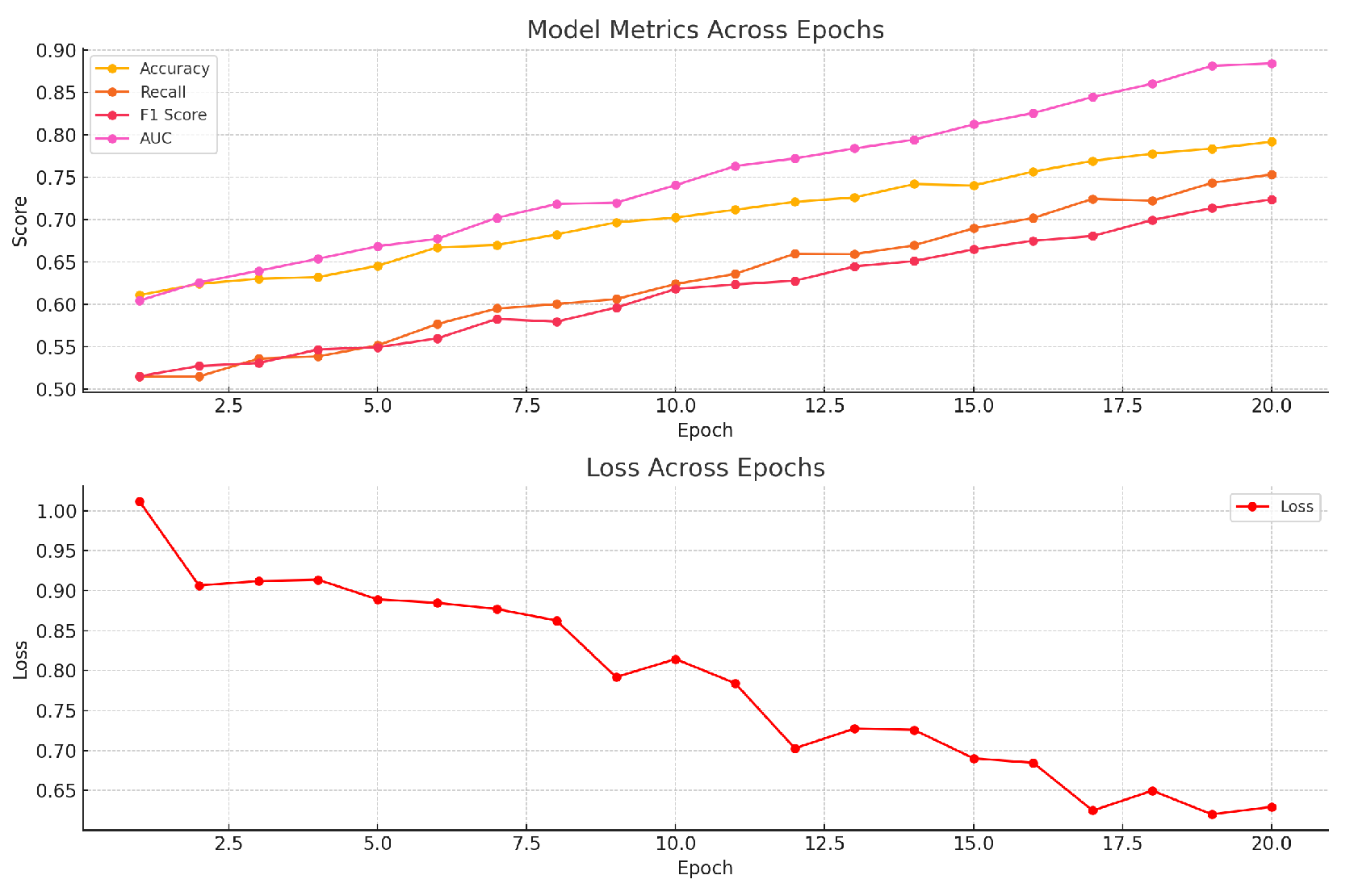
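As a reference for the metrics named in this section, the following sketch computes accuracy, precision, recall, F1, and AUC-ROC with their standard definitions. The labels and scores are made-up toy values, not the paper's data. Treating label 1 as "hallucinated" and using a 0.5 decision threshold are both assumptions, and the function names are hypothetical.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    accuracy = (tp + tn) / len(y_true)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


def roc_auc(y_true, scores):
    """AUC-ROC as the probability that a random positive example is scored
    above a random negative one (ties count as one half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy data (hypothetical): 1 = hallucinated response, 0 = genuine response.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.1, 0.7, 0.2]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed 0.5 threshold

metrics = classification_metrics(y_true, y_pred)
auc = roc_auc(y_true, scores)
print(metrics)
print(auc)  # 0.9375
```

Accuracy, precision, recall, and F1 depend on the chosen threshold, whereas AUC-ROC is computed from the raw scores and summarises ranking quality across all thresholds.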

5. Experiment Results

6. Conclusion (Abbreviated)

| Model | Accuracy | Recall | F1 Score | AUC |
|---|---|---|---|---|
| Our Model (LoRA-RoBERTa) | 91.5% | 0.86 | 0.87 | 0.94 |
| BERT-base | 84.2% | 0.75 | 0.77 | 0.82 |
| GPT-2 | 82.9% | 0.73 | 0.74 | 0.81 |
| T5-small | 83.5% | 0.74 | 0.75 | 0.83 |
| RoBERTa-base (without LoRA) | 85.8% | 0.78 | 0.79 | 0.85 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).