Submitted: 30 March 2025

Posted: 31 March 2025


Abstract

Keywords:

1. Introduction

2. Literature Review

2.1. “Traditional” Topic Detection Algorithms

2.1.1. Bag-of-Words Based

2.1.2. Embedding-Based

2.2. Using Large Language Models (LLMs)

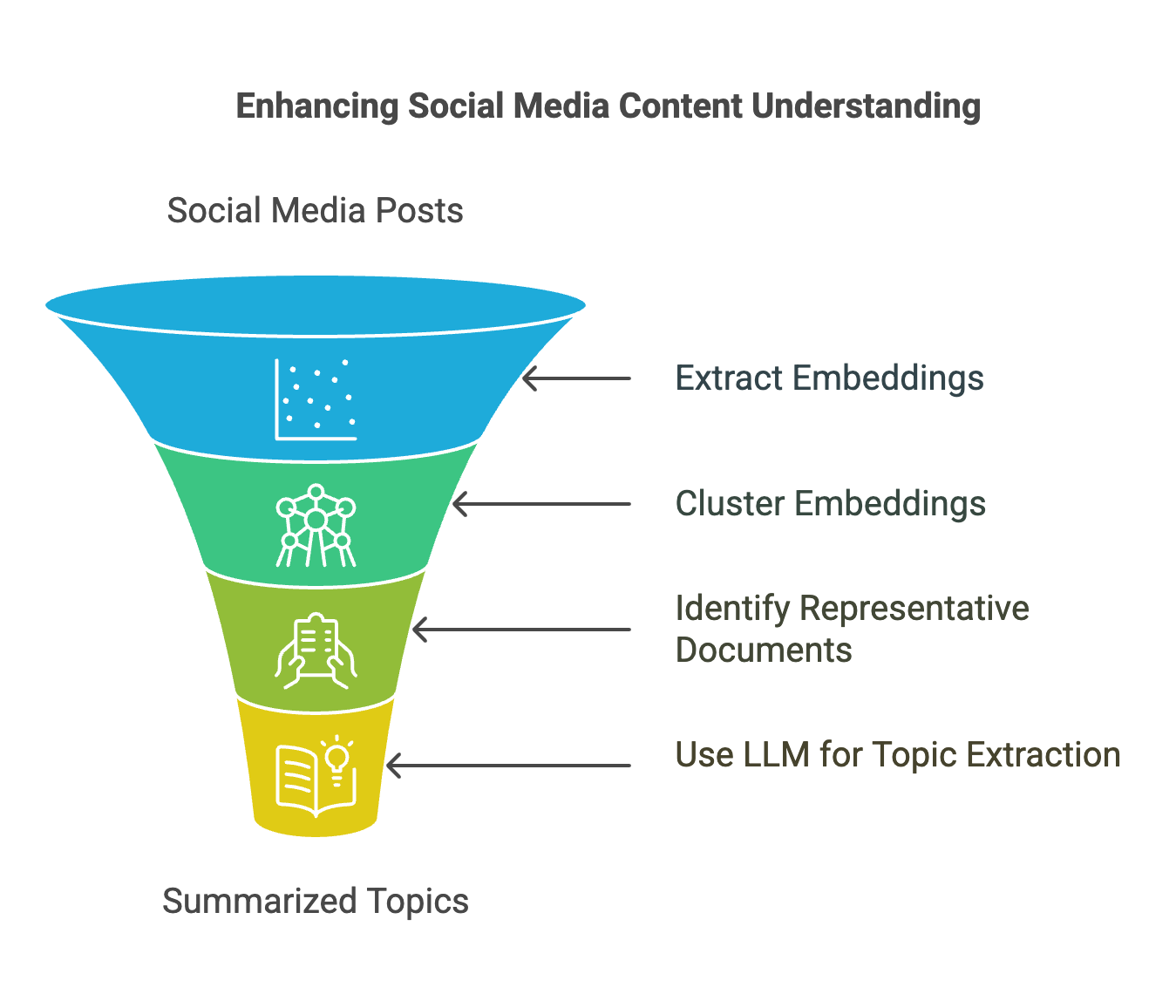

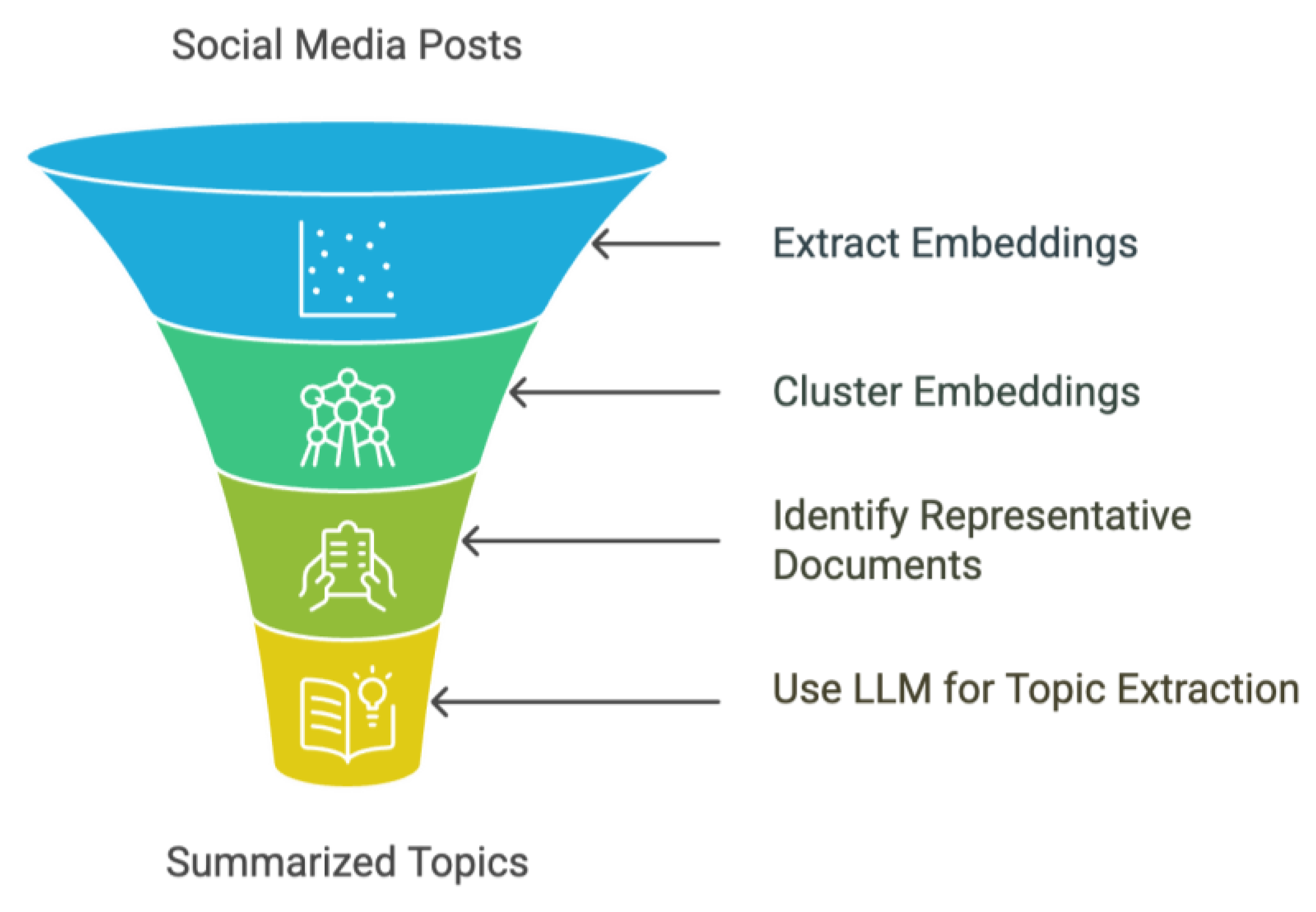

3. Proposed Solution

- Extract embeddings from web and social media documents (posts).

- Cluster the embeddings.

- Select representative social media documents from each cluster.

- Send the representatives to an LLM to extract and summarise their topics.

1. Extract Embeddings
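This section does not name the embedding model. For illustration only, the following minimal Python sketch uses a hashed bag-of-words vector as a dependency-free stand-in for a sentence encoder. The function `embed` and the 64-dimension size are hypothetical choices; a pretrained embedding model would normally take its place.

```python
import math
import re
import zlib


def embed(text: str, dim: int = 64) -> list[float]:
    """Hypothetical stand-in for a sentence encoder: a hashed bag-of-words vector."""
    vec = [0.0] * dim
    # Lowercase the post and split it into alphanumeric tokens.
    for token in re.findall(r"[a-z0-9']+", text.lower()):
        # crc32 gives the same bucket on every run, unlike Python's salted hash().
        vec[zlib.crc32(token.encode("utf-8")) % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    # L2-normalise so that a dot product between two vectors equals their cosine similarity.
    return [v / norm for v in vec] if norm else vec


posts = ["Flight delayed again", "Great cheap flight to Rome"]
vectors = [embed(p) for p in posts]
```

Normalising the vectors means that later distance or similarity computations compare the direction of two posts rather than their length.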

2. Cluster Embeddings

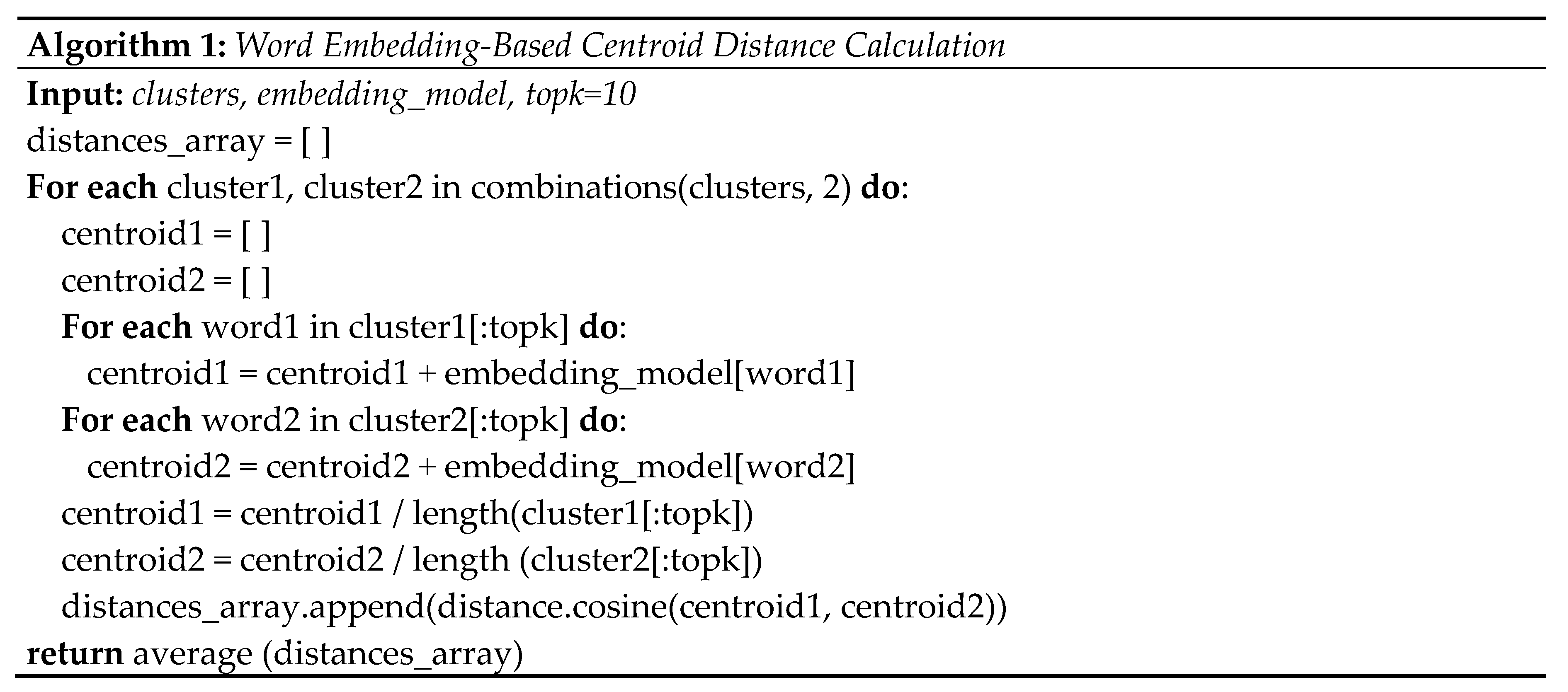
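The clustering algorithm is not stated in the text of this section. As an assumed stand-in, the sketch below applies plain k-means (Lloyd's algorithm) to the embedding vectors; the helper names and the first-k initialisation are illustrative simplifications, not the authors' configuration.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, iterations=20):
    """Lloyd's k-means; centroids are initialised from the first k points (a simplification)."""
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        labels = [min(range(k), key=lambda c: squared_distance(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the previous centroid if a cluster becomes empty
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids


points = [[0.0, 0.1], [5.0, 5.1], [0.2, 0.0], [5.2, 4.9]]
labels, centroids = kmeans(points, k=2)
```

k-means requires the number of clusters in advance. Density-based algorithms such as DBSCAN do not, and they can leave outlying posts unassigned as noise.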

3. Select the Representative Documents
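The selection rule is not given in this section. One common heuristic, assumed here, keeps the posts whose embeddings lie closest to their cluster centroid. The function `representatives` and its `per_cluster` parameter are hypothetical.

```python
def representatives(docs, vectors, labels, centroids, per_cluster=2):
    """For each cluster, return the `per_cluster` documents nearest its centroid (assumed rule)."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    reps = {}
    for c, centroid in enumerate(centroids):
        members = [i for i, lab in enumerate(labels) if lab == c]
        # Rank the cluster's members by distance to the centroid and keep the closest.
        members.sort(key=lambda i: sq_dist(vectors[i], centroid))
        reps[c] = [docs[i] for i in members[:per_cluster]]
    return reps


docs = ["late flight", "very late flight", "lost bag", "bag lost again", "flight late"]
vectors = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9], [0.6, 0.4]]
labels = [0, 0, 1, 1, 0]
centroids = [[0.9, 0.1], [0.0, 1.0]]
reps = representatives(docs, vectors, labels, centroids)
```

Sending only a few central posts per cluster keeps the LLM input short while still reflecting each cluster's core content.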

4. Send Cluster Representatives to the LLM

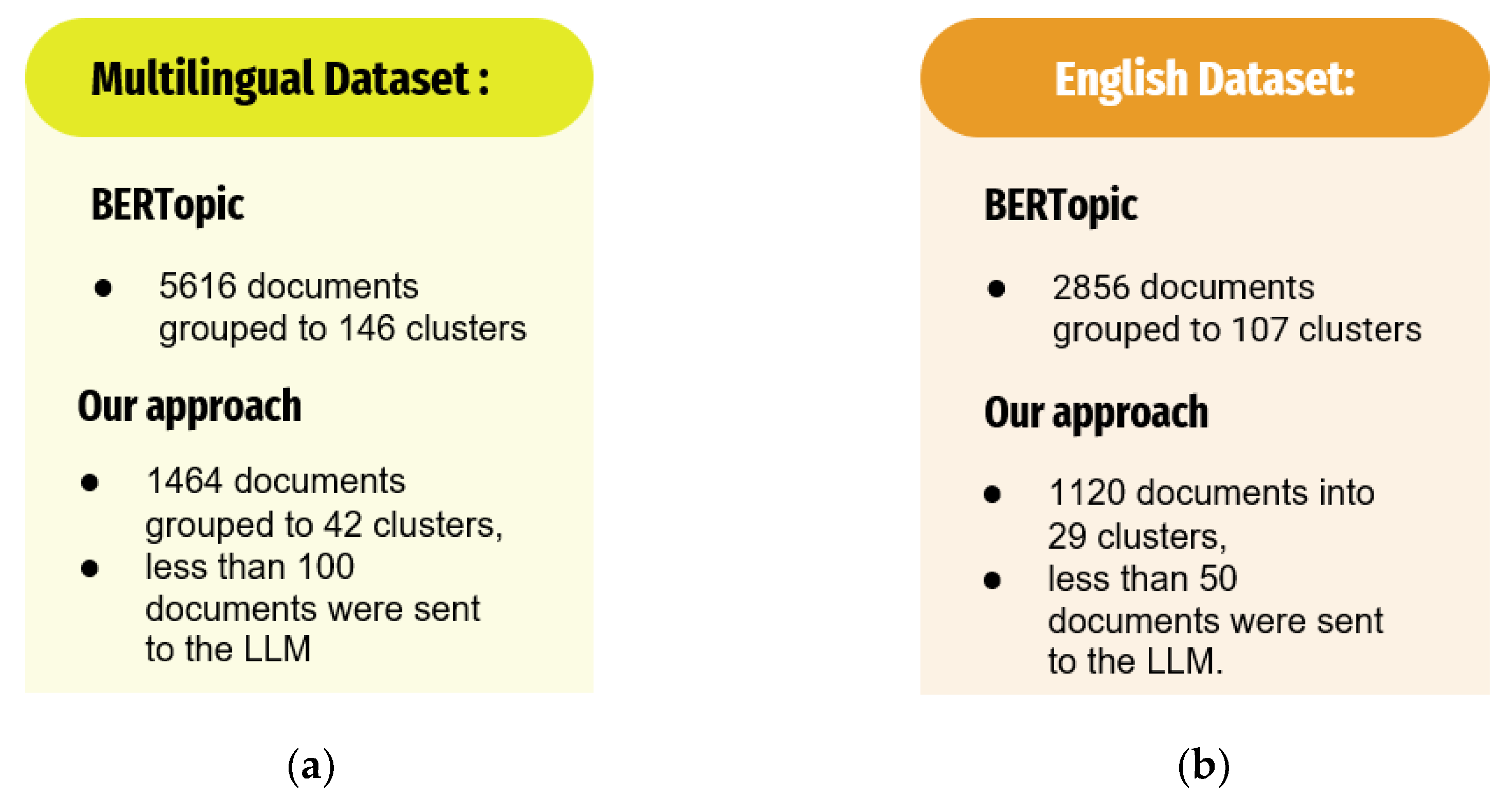

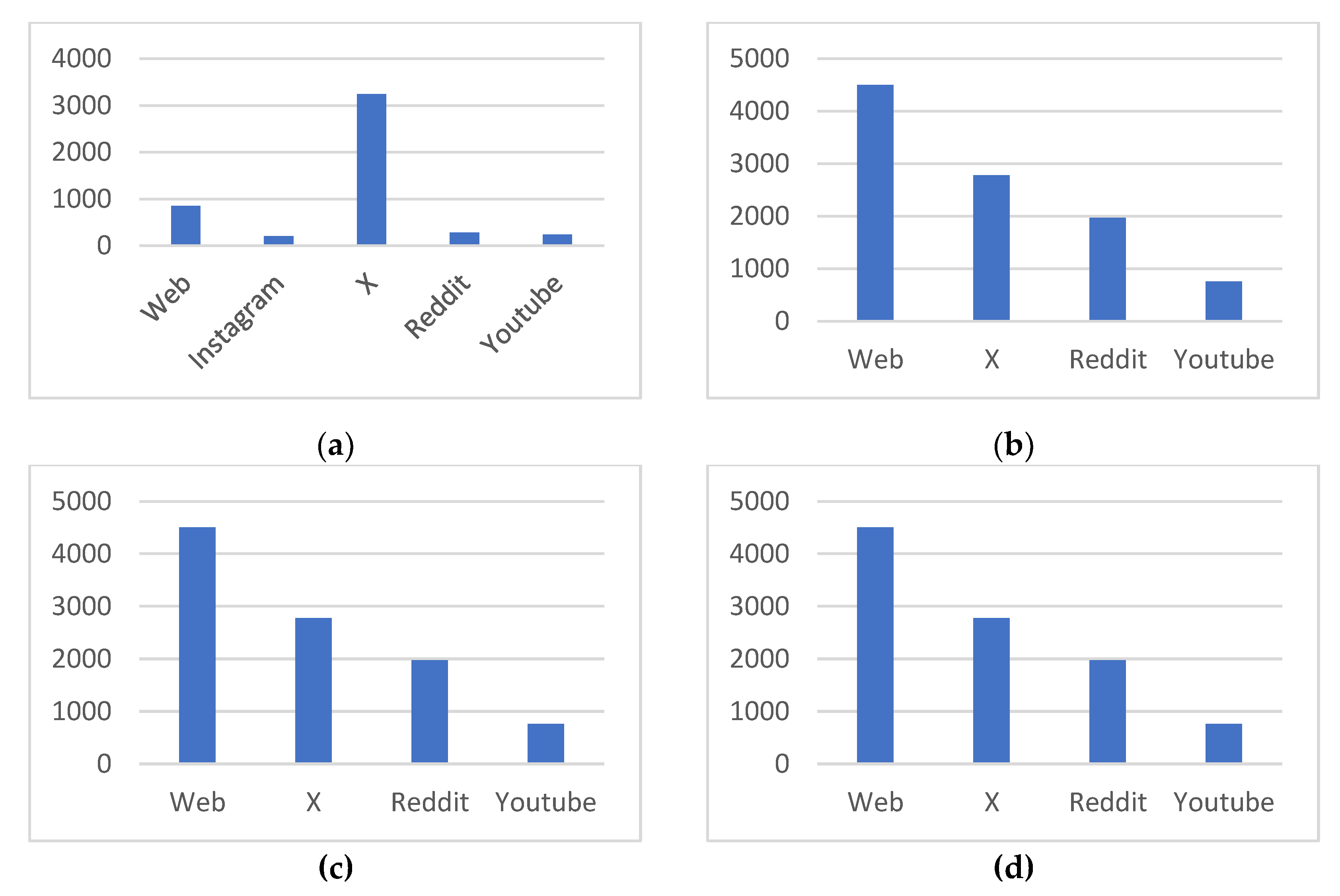
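The prompt wording and the specific LLM are not given in this section. The sketch below only shows how a cluster's representative posts might be packed into one topic-extraction prompt. `build_topic_prompt` and its wording are hypothetical, and the request itself would go through whichever LLM client the pipeline uses.

```python
def build_topic_prompt(cluster_id: int, posts: list[str]) -> str:
    """Assemble a topic-extraction prompt for one cluster (hypothetical wording)."""
    # Number the posts so the model can refer to individual examples.
    numbered = "\n".join(f"{i}. {post}" for i, post in enumerate(posts, start=1))
    return (
        f"The following social media posts belong to cluster {cluster_id}.\n"
        f"{numbered}\n\n"
        "Identify the main topic they share, give it a short label, "
        "and summarise the discussion in two sentences."
    )


prompt = build_topic_prompt(0, ["very late flight", "late flight"])
# The prompt would then be sent to the chosen LLM through its own client library.
```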

4. Evaluation

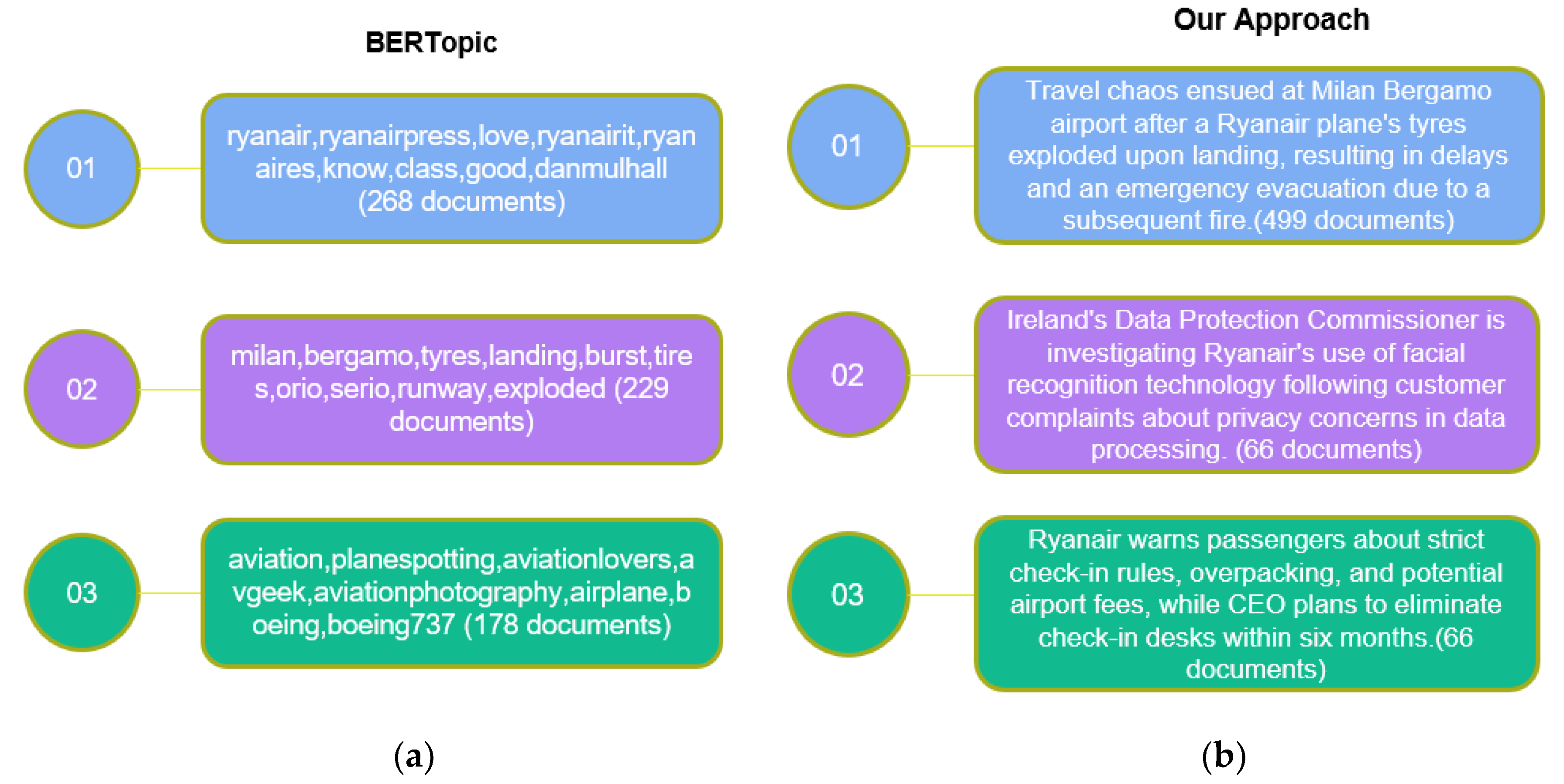

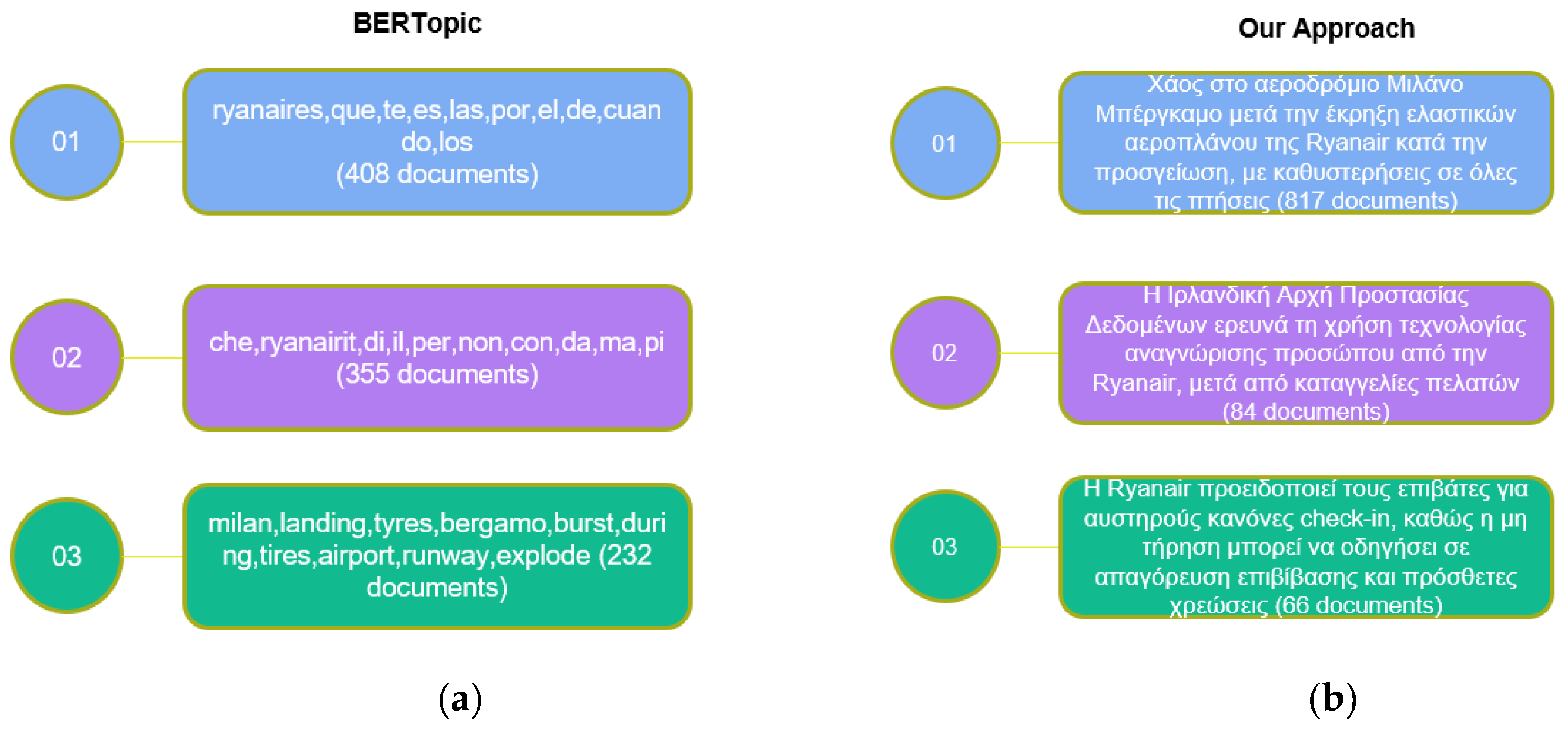

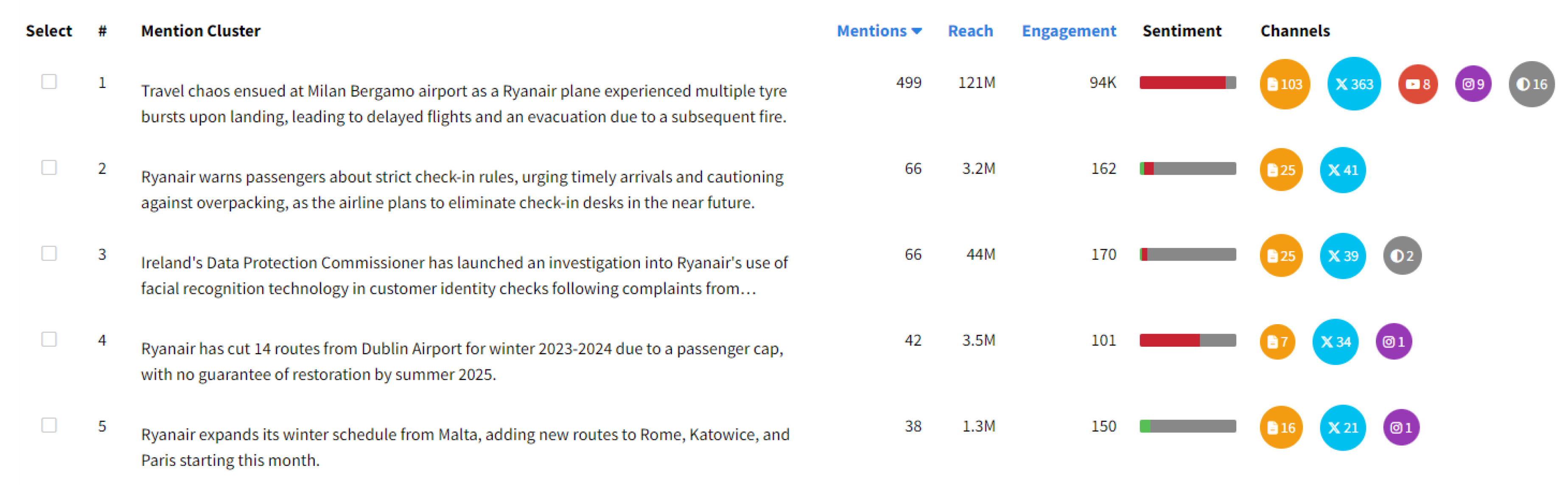

4.1. Qualitative Evaluation

4.2. Quantitative Evaluation

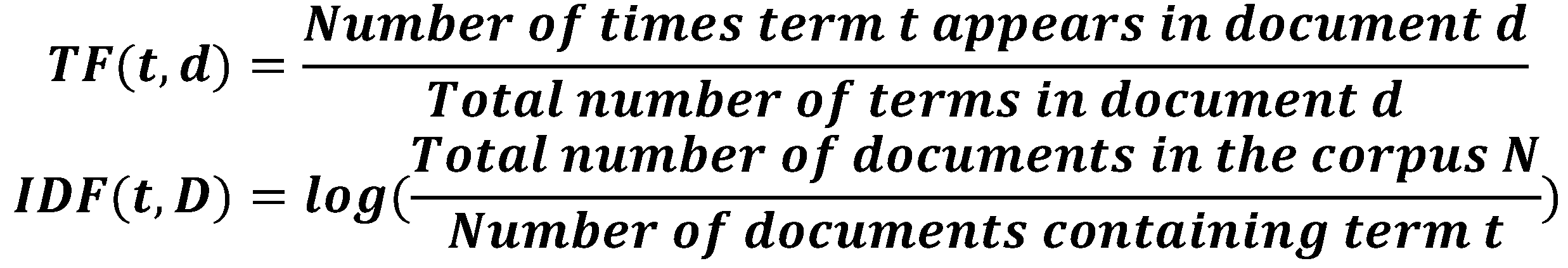

Topic Coherence
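The specific coherence measure is not stated in this section. A widely used choice is normalised pointwise mutual information (NPMI) averaged over all pairs of a topic's top words. The sketch below assumes document-level co-occurrence and whitespace tokenisation, both simplifications, and a topic with at least two words. NPMI ranges from -1 (the words never co-occur) to 1 (they always co-occur).

```python
import math
from itertools import combinations


def npmi_coherence(top_words, documents):
    """Average NPMI over all pairs of a topic's top words (needs at least two words)."""
    doc_sets = [set(doc.lower().split()) for doc in documents]
    n = len(doc_sets)

    def prob(*words):
        # Fraction of documents that contain every given word.
        return sum(all(w in d for w in words) for d in doc_sets) / n

    scores = []
    for w1, w2 in combinations(top_words, 2):
        p12 = prob(w1, w2)
        if p12 == 0.0:
            scores.append(-1.0)  # the pair never co-occurs: NPMI's lower bound
        elif p12 == 1.0:
            scores.append(1.0)   # both words appear in every document
        else:
            pmi = math.log(p12 / (prob(w1) * prob(w2)))
            scores.append(pmi / -math.log(p12))
    return sum(scores) / len(scores)


docs = ["late flight delay", "flight delay again", "lost bag", "bag lost at airport"]
score = npmi_coherence(["flight", "delay"], docs)
```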

Topic Diversity

| Method | Metric | Ryanair | Easyjet | Trello | Asana |
|---|---|---|---|---|---|
| AI Mention Clustering | Unique Keywords | 78% | 68% | 67% | 68% |
| AI Mention Clustering | Centroid Distance | 0.56 | 0.55 | 0.54 | 0.57 |
| BERTopic | Unique Keywords | 52% | 52% | 48% | 53% |
| BERTopic | Centroid Distance | 0.56 | 0.55 | 0.53 | 0.58 |
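The table does not define its two diversity metrics. Assuming that "Unique Keywords" is the share of distinct words across all topics' keyword lists and that "Centroid Distance" is the mean pairwise cosine distance between cluster centroids, both could be computed as in this sketch; the function names are hypothetical.

```python
import math
from itertools import combinations


def unique_keyword_ratio(topics):
    """Share of distinct keywords across all topics' keyword lists (higher = more diverse)."""
    all_words = [w for topic in topics for w in topic]
    return len(set(all_words)) / len(all_words)


def mean_centroid_distance(centroids):
    """Mean pairwise cosine distance between cluster centroids (assumed definition)."""
    def cosine_distance(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return 1.0 - dot / norm

    pairs = list(combinations(centroids, 2))
    return sum(cosine_distance(a, b) for a, b in pairs) / len(pairs)


topics = [["flight", "delay", "late"], ["bag", "lost", "delay"]]
ratio = unique_keyword_ratio(topics)  # 5 distinct words out of 6
```

Under this reading, repeated keywords across topics (here "delay") lower the ratio, which is why a higher percentage indicates less overlap between topics.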

Davies-Bouldin Index
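The Davies-Bouldin index averages, over the k clusters, the worst-case ratio (s_i + s_j) / d(c_i, c_j), where s_i is the mean distance of cluster i's members to its centroid c_i and d is the Euclidean distance between two centroids. Lower values indicate more compact, better-separated clusters. A minimal sketch, assuming Euclidean distance and at least two clusters:

```python
import math


def davies_bouldin(points, labels):
    """Davies-Bouldin index; lower values mean tighter, better-separated clusters."""
    clusters = sorted(set(labels))
    centroids, scatter = {}, {}
    for c in clusters:
        members = [p for p, lab in zip(points, labels) if lab == c]
        centroids[c] = [sum(col) / len(members) for col in zip(*members)]
        # s_i: mean Euclidean distance of the cluster's members to their centroid.
        scatter[c] = sum(math.dist(p, centroids[c]) for p in members) / len(members)
    total = 0.0
    for i in clusters:
        # For each cluster, take its largest similarity ratio against any other cluster.
        total += max(
            (scatter[i] + scatter[j]) / math.dist(centroids[i], centroids[j])
            for j in clusters if j != i
        )
    return total / len(clusters)


points = [[0.0, 0.0], [0.0, 2.0], [10.0, 0.0], [10.0, 2.0]]
dbi = davies_bouldin(points, [0, 0, 1, 1])
```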

5. Discussion & Future Work

Data Availability Statement

Conflicts of Interest


| Dataset | Ryanair | Easyjet | Trello | Asana |
|---|---|---|---|---|
| Size (documents) | 5600 | 5216 | 9470 | 10004 |
| Date Range | 28/9 – 4/10 | 1/12 – 18/12 | 1/12 – 15/1 | 1/11 – 10/1 |
| Language | English | English | English | English |
| AI Mention Clustering (% of total documents) | 29 (20%) | 57 (19%) | 68 (18%) | 75 (18%) |
| BERTopic Clustering (% of total documents) | 107 (51%) | 125 (74%) | 156 (60%) | 159 (63%) |

| Method | Ryanair | Easyjet | Trello | Asana |
|---|---|---|---|---|
| AI Mention Clustering | 0.46 | 0.40 | 0.37 | 0.38 |
| BERTopic | 0.41 | 0.37 | 0.35 | 0.36 |

| Method | Ryanair | Easyjet | Trello | Asana |
|---|---|---|---|---|
| AI Mention Clustering | 1.8460 | 1.7529 | 1.6616 | 1.6689 |
| BERTopic | 3.0871 | 3.1058 | 3.4994 | 3.4099 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).