Submitted: 18 March 2025

Posted: 18 March 2025

Abstract

Keywords:

1. Introduction

2. Methods

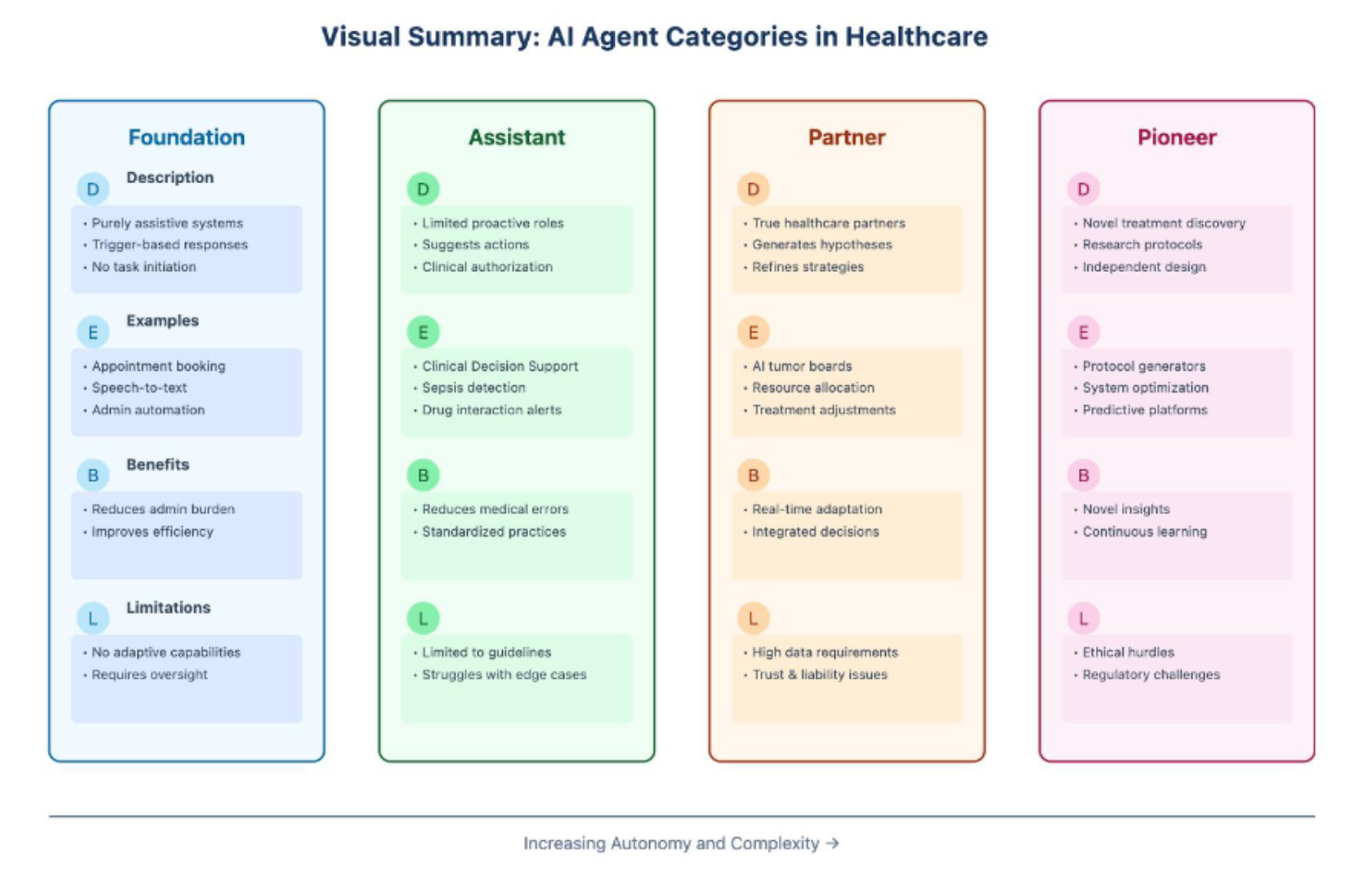

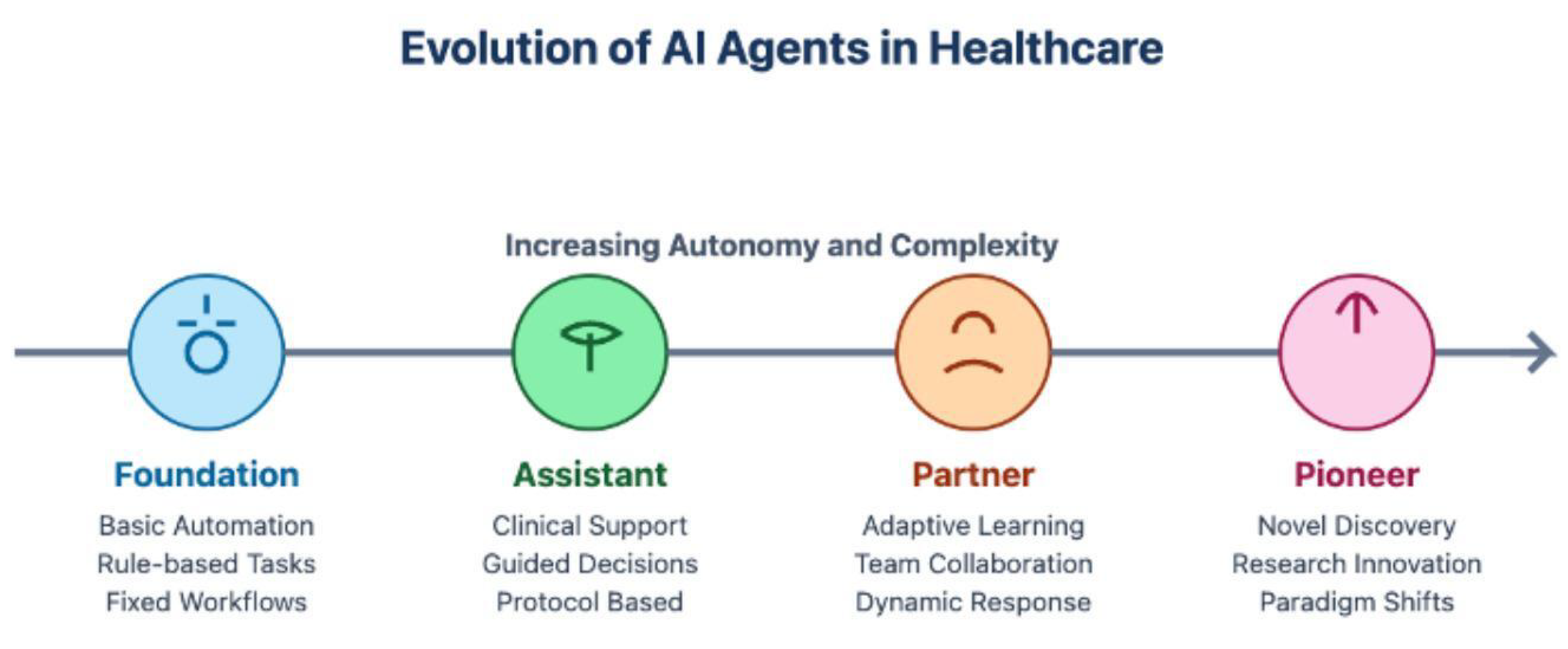

3. Types of AI Agents in Healthcare

3.1. Foundation Agents

3.2. Assistant Agents

3.3. Partner Agents

3.4. Pioneer Agents

3.5. Practical Implications for Healthcare

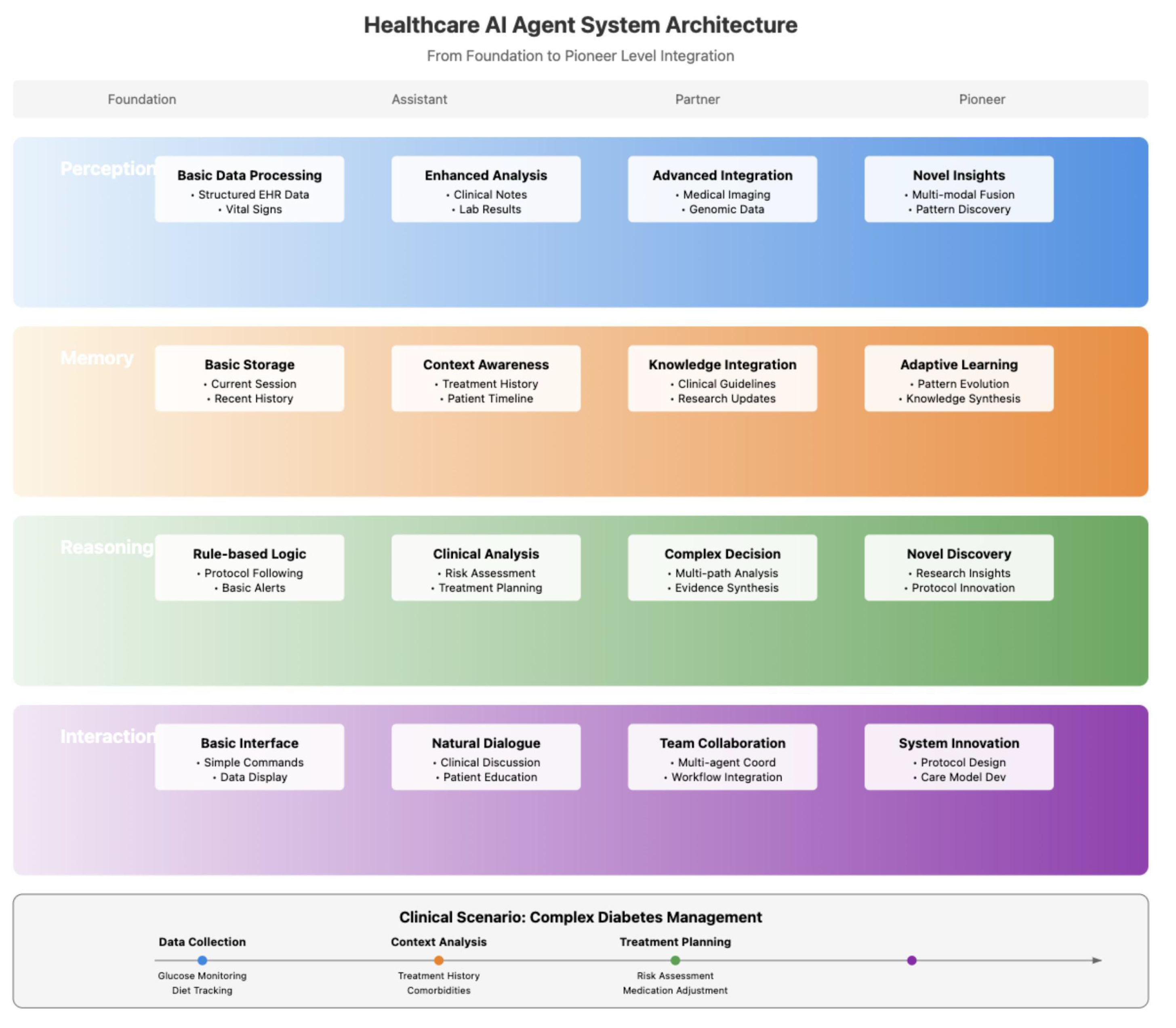

4. Roadmap For Building AI Agents In Healthcare

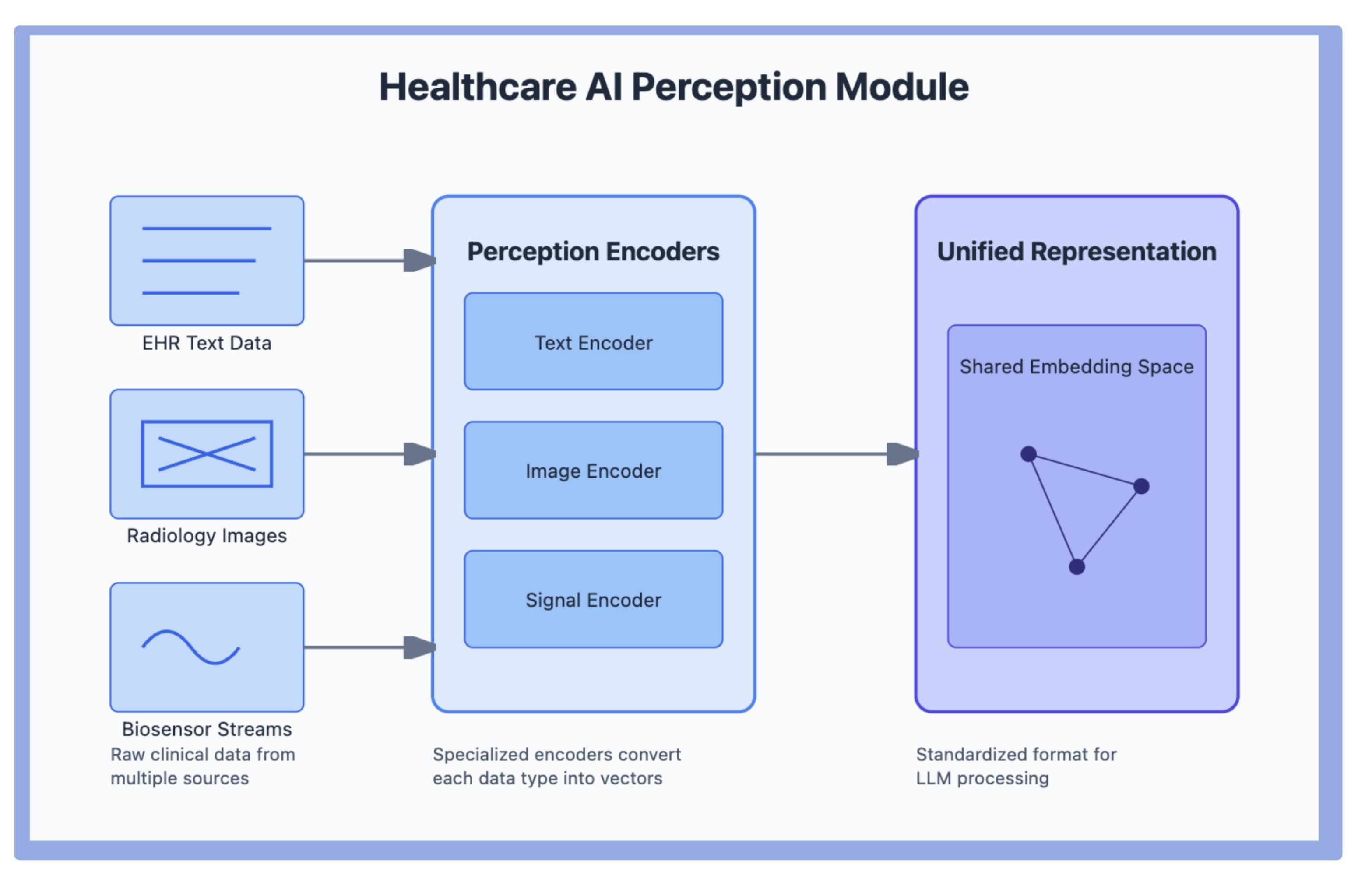

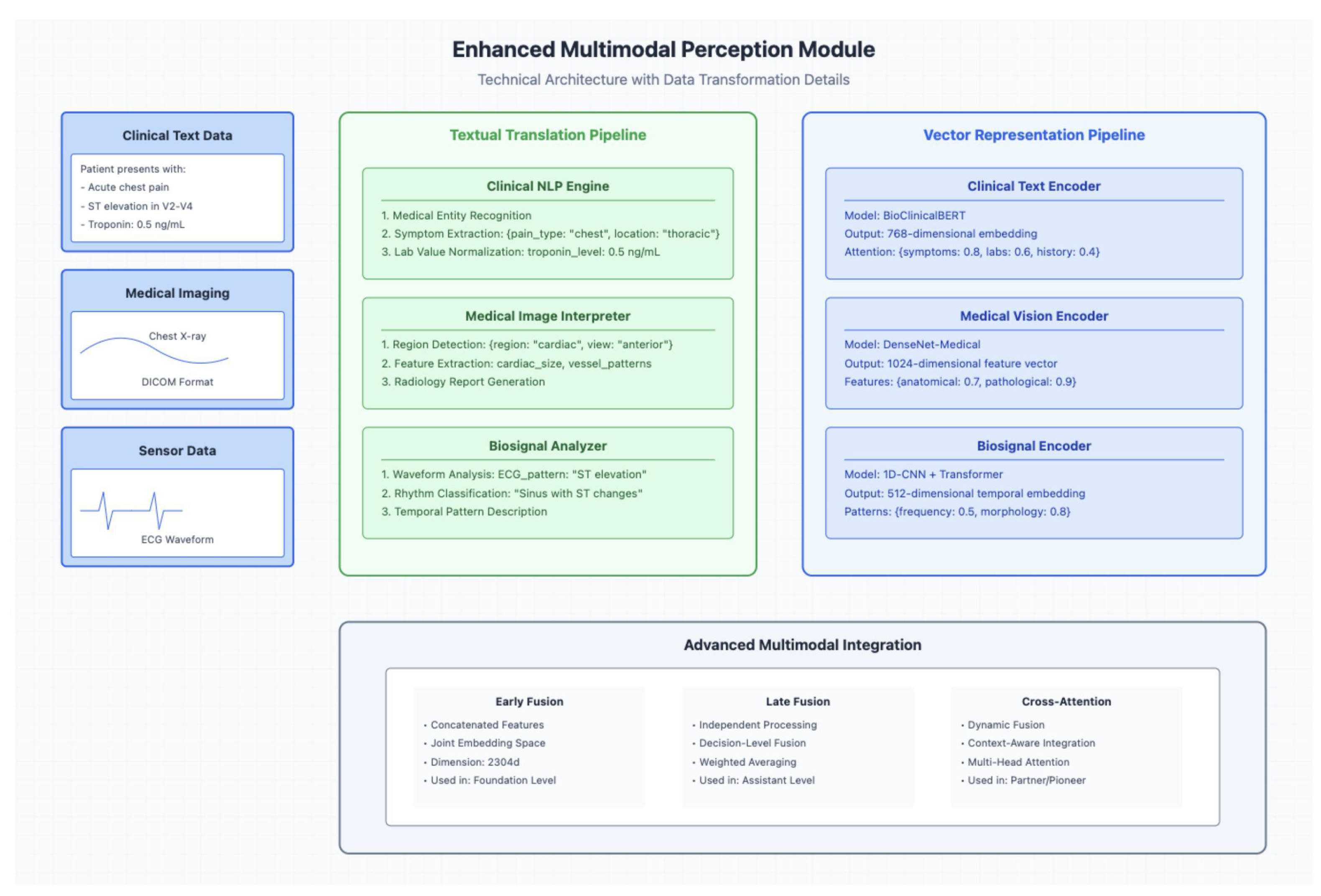

4.1. Perception Modules

4.1.1. Multimodal Perception Modules

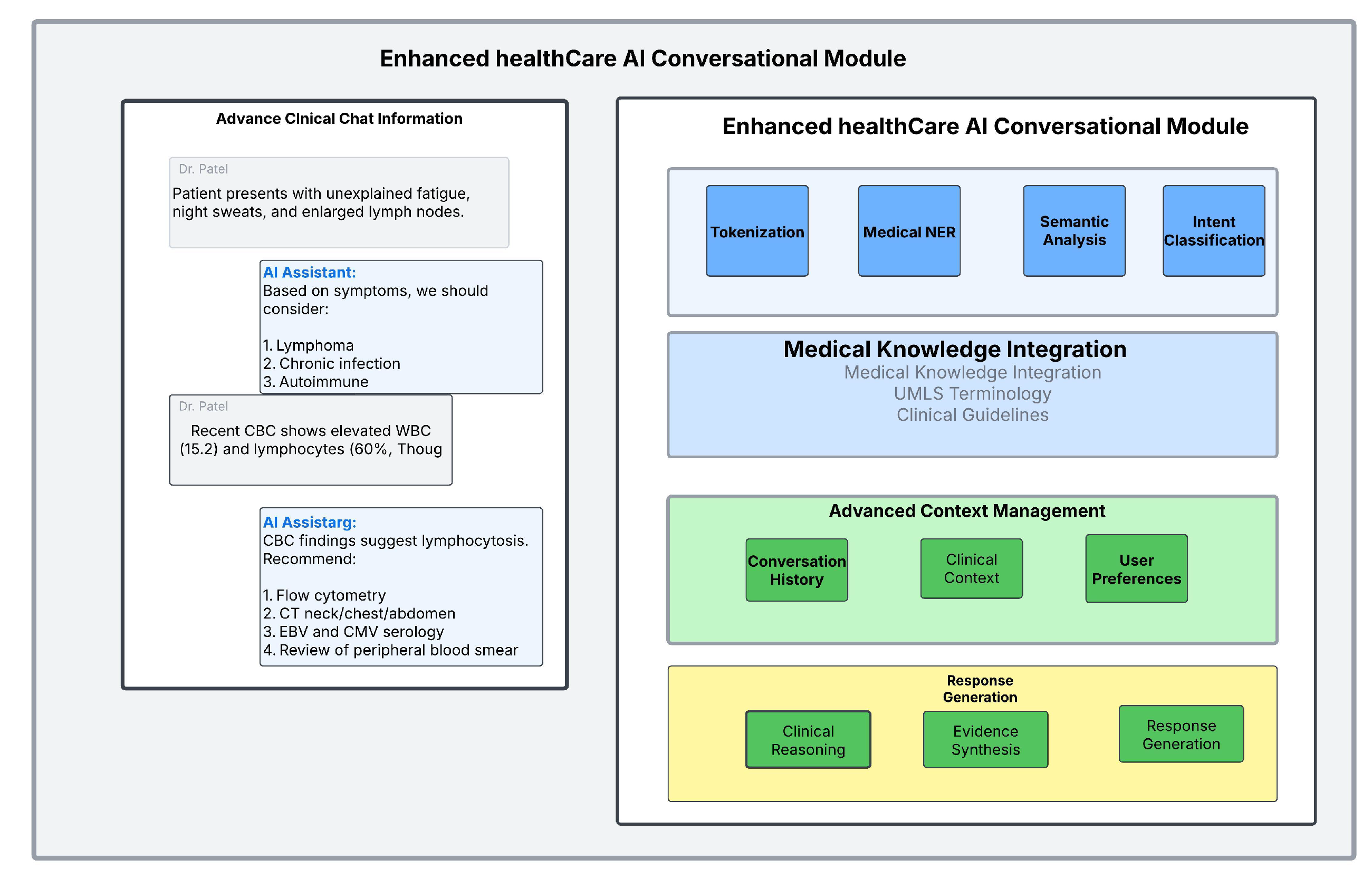

4.2. Conversational Modules

4.3. Interaction Modules

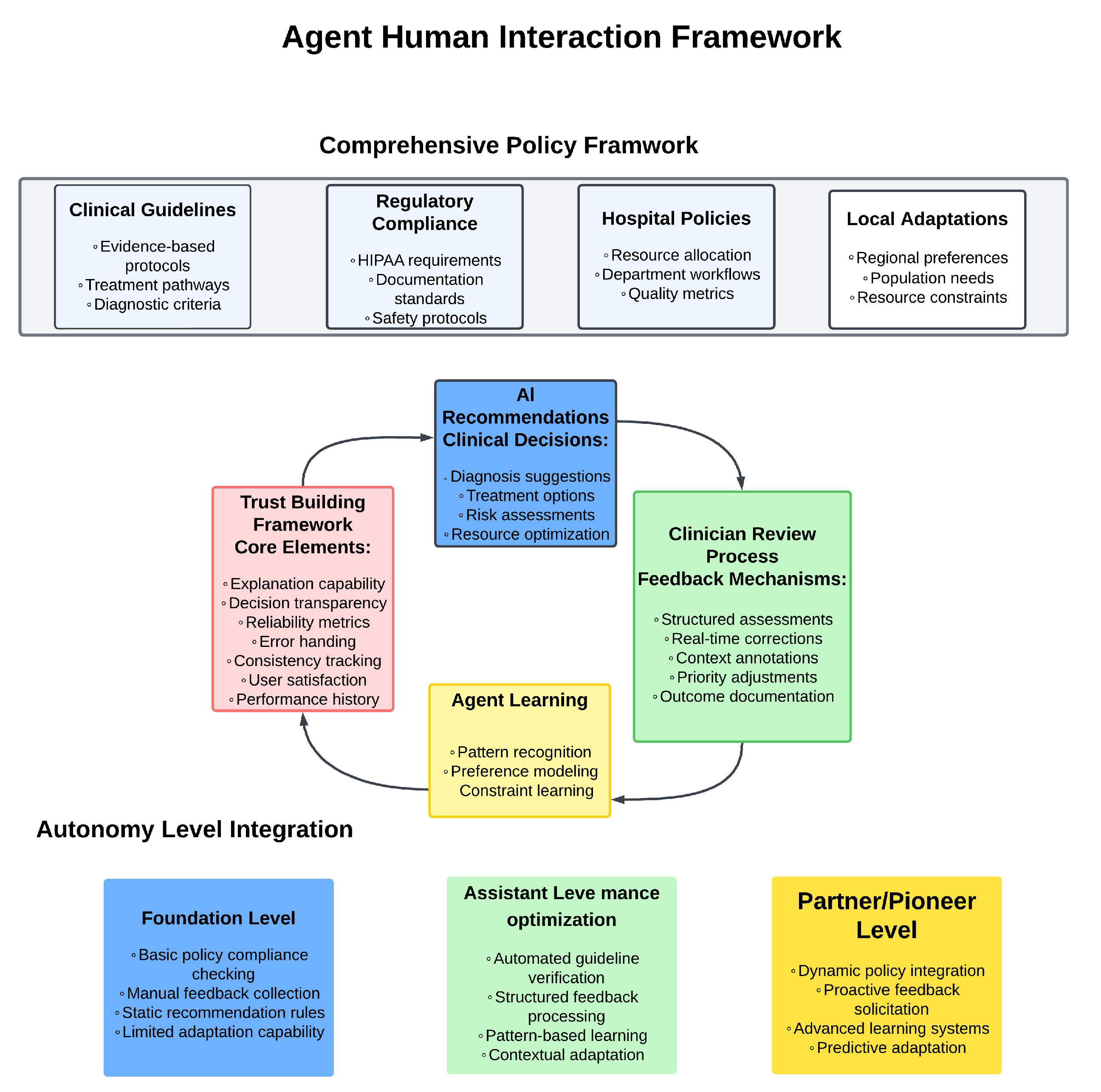

4.3.1. Agent–Human Interaction Modules

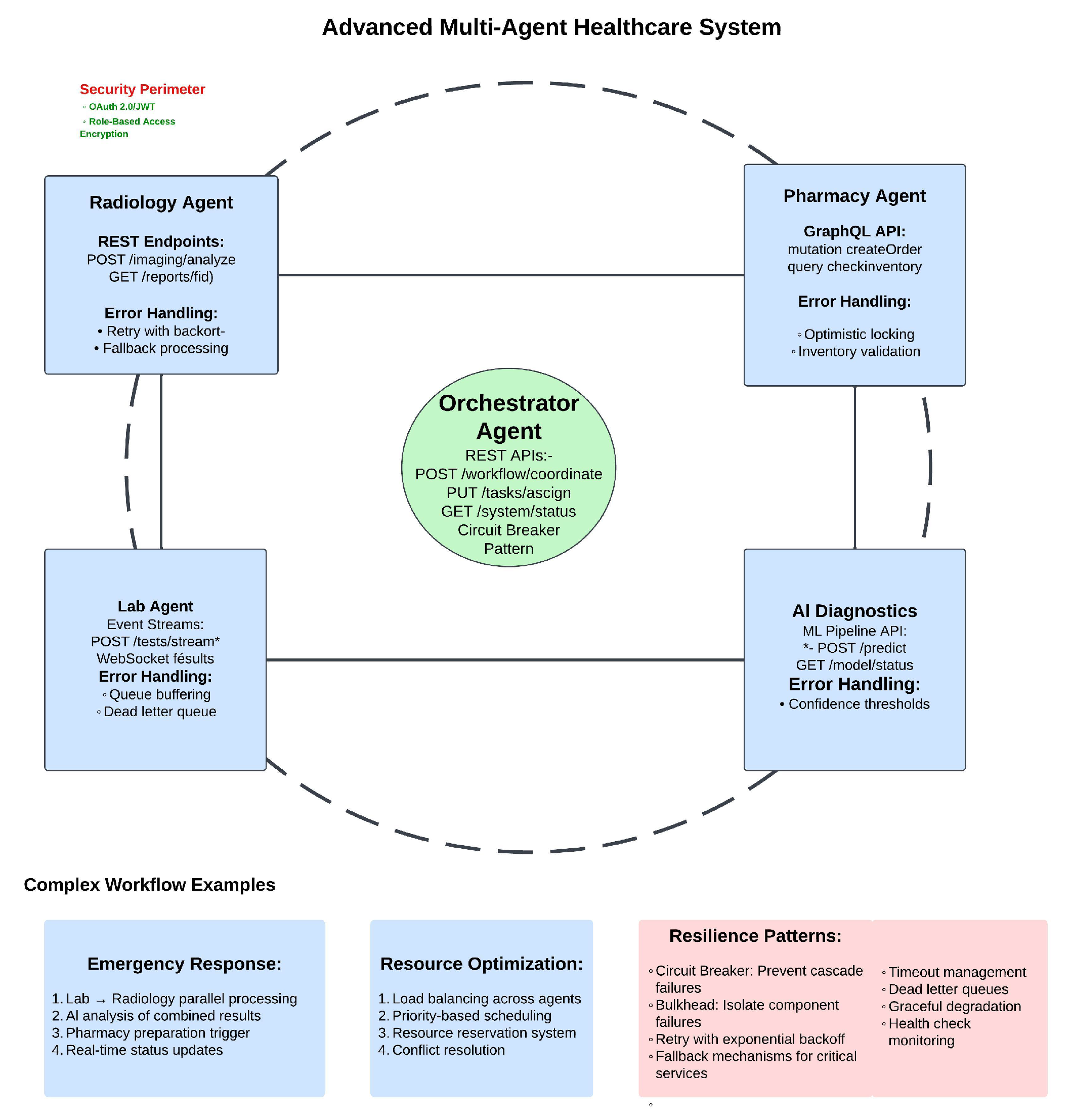

4.3.2. Multi-Agent Interaction

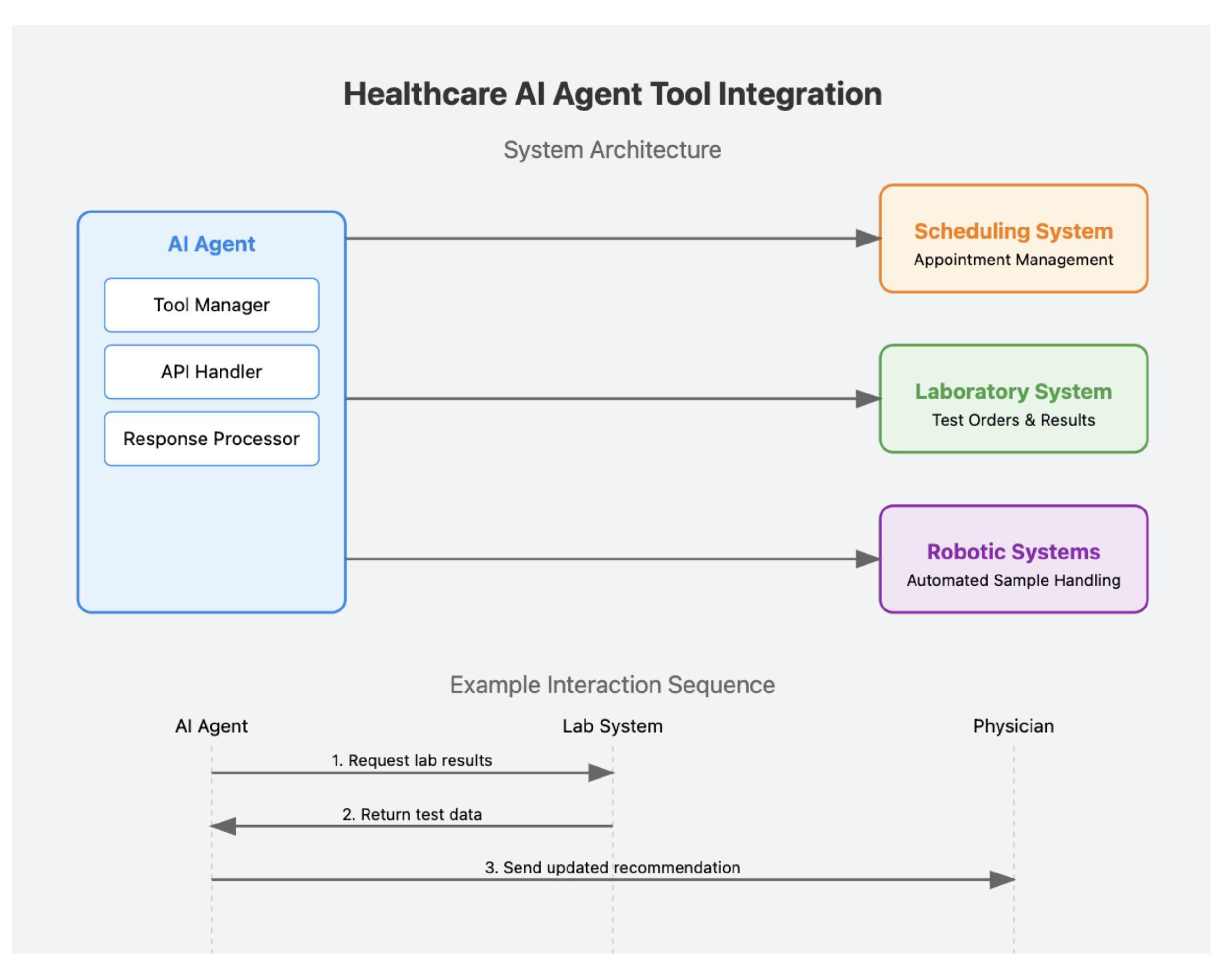

4.4. Tool Use

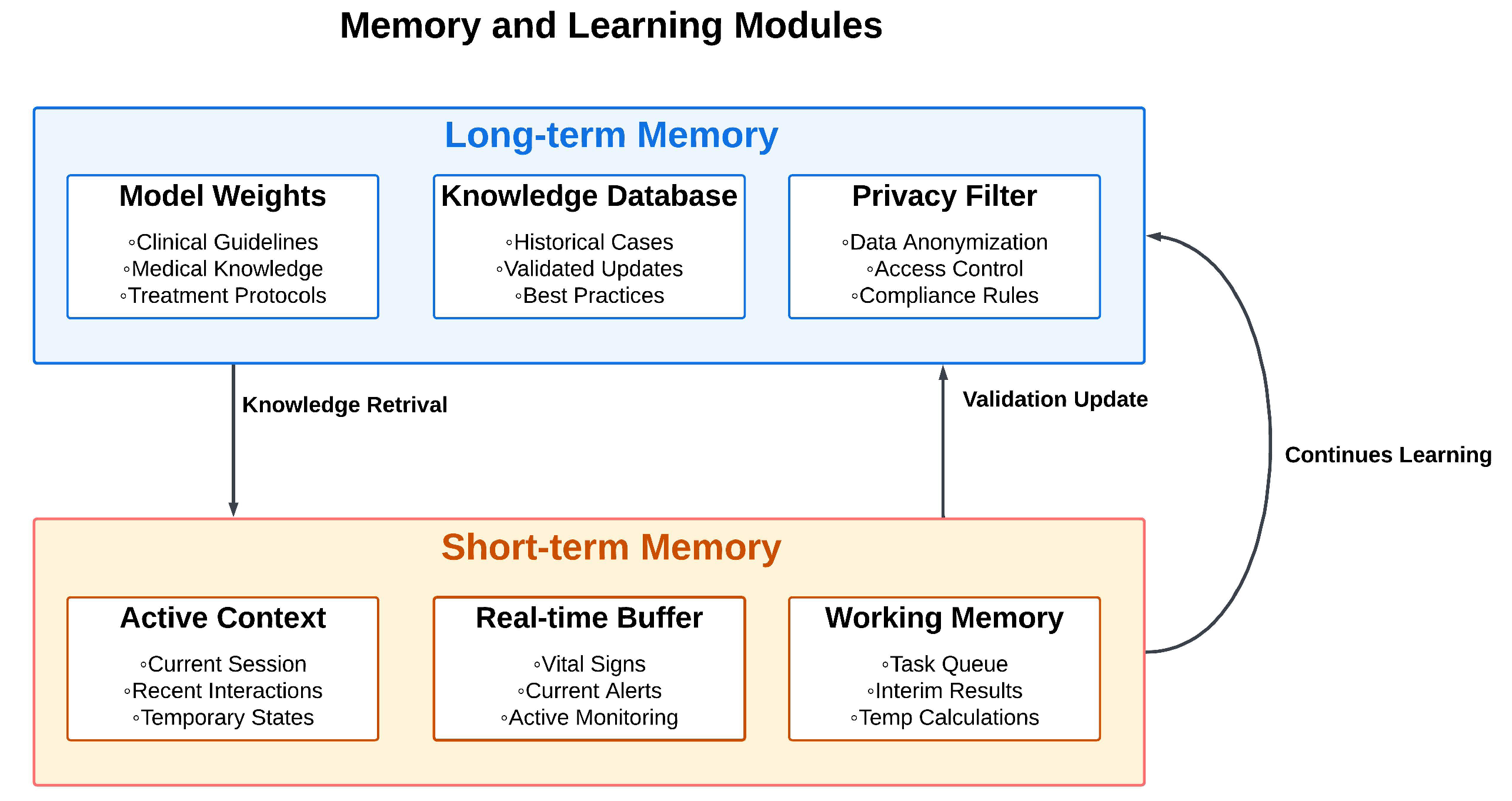

4.5. Memory and Learning Modules

4.6. Reasoning Modules

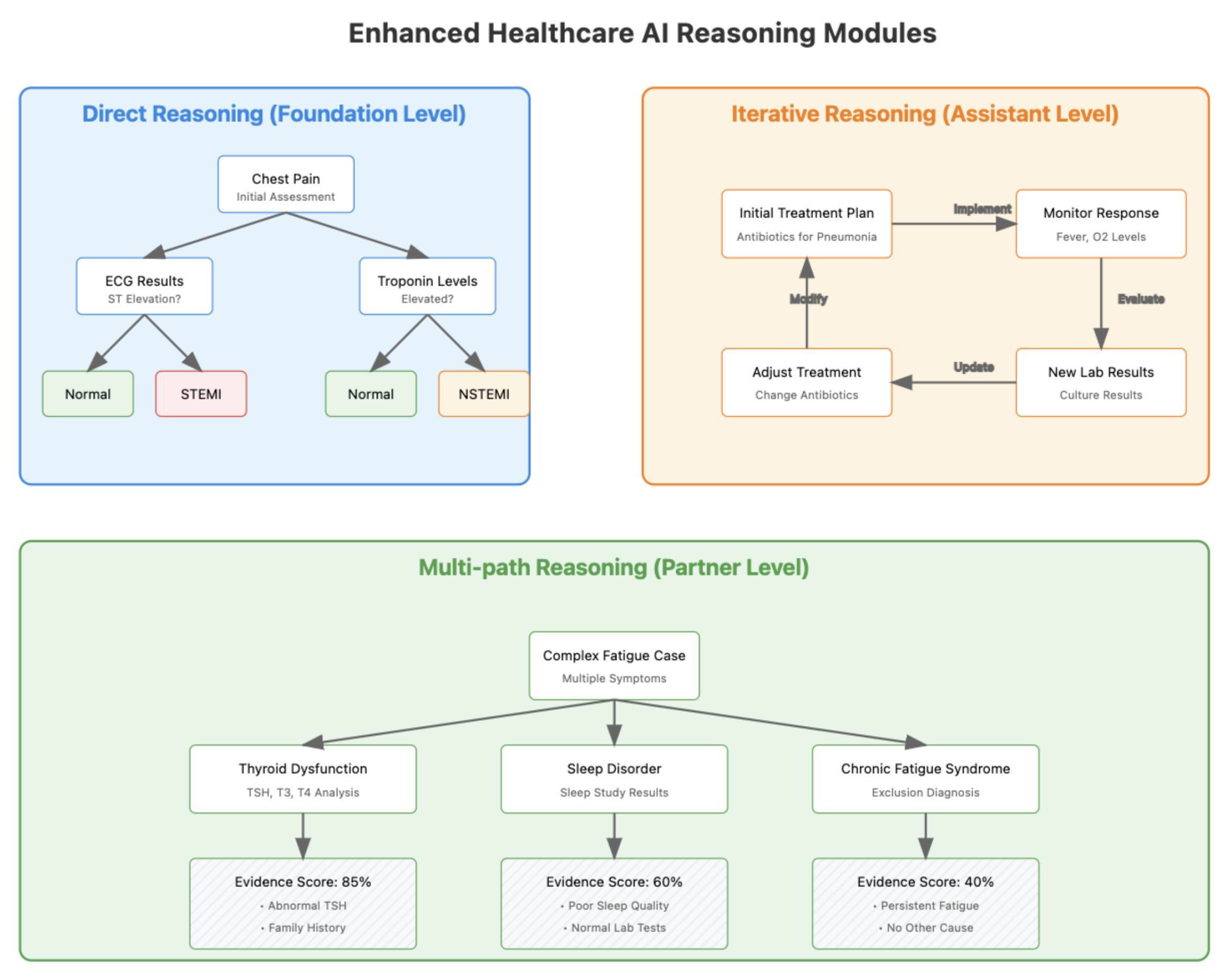

4.6.1. Direct Reasoning Modules

4.6.2. Reasoning with Feedback

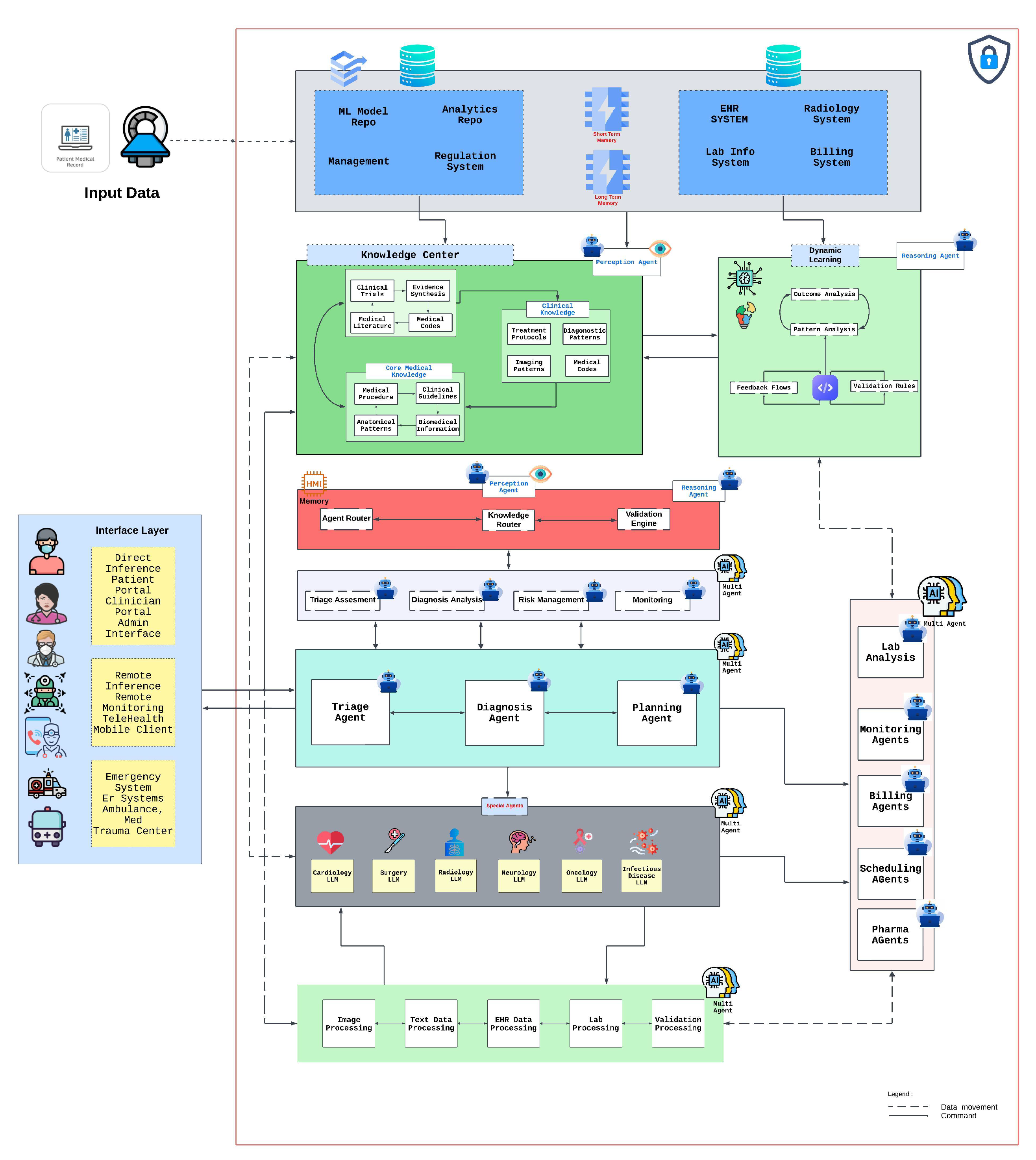

5. Integrated AI Healthcare Architecture

5.1. System Architecture and Knowledge Integration

5.2. Multi-Agent Integration and Clinical Workflow

5.3. Implementation Framework and Validation

6. Healthcare-Specific Requirements

6.1. Data Considerations

6.2. System Requirements

6.3. Integration Frameworks

6.3.1. Healthcare Systems

6.3.2. Technical Infrastructure

6.4. Performance Metrics

6.4.1. Technical Metrics

6.4.2. Healthcare Metrics

7. Challenges and Limitations

7.1. Robustness and Reliability Challenges

7.2. Healthcare-Specific Barriers

7.3. Ethical and Regulatory Challenges

7.4. Evaluation Protocols and Dataset Generation

7.5. Robustness and Reliability Concerns

7.6. Governance and Risk Management

8. Future Directions

8.1. Emerging Technologies

8.1.1. Technical Advancements

8.1.2. Healthcare Applications

8.2. Research Directions

8.2.1. Technical Research

8.2.2. Healthcare Research

8.3. Implementation Roadmap

8.3.1. Development Path

8.3.2. Success Indicators

9. Conclusion

| Category | Description | Examples | Benefits | Limitations |
|---|---|---|---|---|
| Foundation Agent | | | | |
| Assistant Agent | | | | |
| Partner Agent | | | | |
| Pioneer Agent | | | | |

| Challenge Category | Specific Challenges | Technical Solutions | Operational Solutions | Governance Solutions |
|---|---|---|---|---|
| 7.1 Robustness & Reliability | - Data scarcity (esp. rare conditions)<br>- System integration<br>- Real-time processing constraints<br>- Hallucination or reasoning errors in LLMs | - Ensemble models & fallback<br>- Auto error detection with confidence scoring<br>- Transfer learning & synthetic data<br>- Efficient MLOps | - Staff training on AI outputs<br>- Staged pilot deployments<br>- Clinical KPI audits<br>- Multi-disciplinary oversight teams | - Mandatory safety certifications<br>- Incident reporting systems<br>- Healthcare-specific AI guidelines |
| 7.2 Healthcare-Specific | - High-stakes settings (surgery, ED)<br>- Clinical validation gap<br>- Limited time windows<br>- Staff acceptance & workflow disruption | - Specialized model optimization<br>- Rapid-inference architectures<br>- Simulation-based testing | - Change management<br>- Clear clinical protocols<br>- Streamlined user interfaces<br>- Cross-functional training modules | - Implementation guidelines<br>- Real-world pilot standards<br>- Post-approval monitoring |
| 7.3 Ethical & Regulatory | - Data privacy (HIPAA, GDPR)<br>- Bias & underrepresented groups<br>- Black-box reasoning<br>- Continuous-learning certification | - Homomorphic encryption<br>- Fairness constraints in training<br>- Explainable-AI modules | - Ethics committees & IRBs<br>- Bias & drift monitoring<br>- Periodic re-check of model outputs | - FDA/EMA re-approval for model updates<br>- Transparent logs & accountability<br>- Inclusive data policies |
| 7.4 Evaluation & Dataset Generation | - Non-stationary disease distributions<br>- Inconsistent data formats<br>- Lack of standardized workflows<br>- Rare disease representation | - Federated/multicenter data<br>- Synthetic data for edge cases<br>- Continuous or online learning | - Cross-institution data-sharing<br>- Longitudinal performance tracking<br>- Real-world usage metrics | - Regulatory frameworks for data usage<br>- Collaborative consortia<br>- International standardization initiatives |
| 7.5 Implementation & Adoption | - Workflow disruptions<br>- User training burdens<br>- Maintenance & updates<br>- Provider trust & acceptance | - User-centered design<br>- Automated maintenance tools<br>- Gentle ramp-up deployment | - Training simulators<br>- Staff buy-in & involvement<br>- Performance dashboards | - Maintenance & upgrade protocols<br>- Reimbursement policies<br>- Liability frameworks |
| 7.6 Governance & Risk Management | - Multi-agent error cascades<br>- Cybersecurity threats<br>- Unclear autonomy boundaries<br>- Accountability & transparency | - Secure agent gating protocols<br>- Communication standards<br>- Auditable logs<br>- Autonomy control | - Human-in-the-loop for critical tasks<br>- Routine safety drills<br>- Crisis simulation & fallback strategies | - Oversight committees & licensing<br>- Binding best practices<br>- Data stewardship & compliance |
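Several of the solutions listed in the table above (ensemble models with fallback, automatic error detection with confidence scoring, and human-in-the-loop review for critical tasks) can be combined in a single gating step. The following is a minimal sketch of that idea, not the authors' implementation. The names `Model`, `Decision`, and `triage`, and the thresholds `min_confidence` and `max_spread`, are hypothetical and chosen only for illustration; real thresholds would need clinical validation.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Sequence

# Hypothetical type: a model maps a case description to a probability in [0, 1].
Model = Callable[[str], float]


@dataclass
class Decision:
    score: float   # mean probability across the ensemble
    spread: float  # max minus min probability, a crude disagreement signal
    route: str     # "auto" or "human_review"


def triage(case: str, models: Sequence[Model],
           min_confidence: float = 0.9, max_spread: float = 0.15) -> Decision:
    """Average an ensemble and escalate uncertain cases to a clinician.

    The thresholds are illustrative assumptions, not validated values.
    """
    probs = [m(case) for m in models]
    score = mean(probs)
    spread = max(probs) - min(probs)
    # Confidence is the distance from the 0.5 decision boundary, rescaled to [0, 1].
    confidence = abs(score - 0.5) * 2
    confident = confidence >= min_confidence and spread <= max_spread
    # Fallback: anything not clearly confident goes to human review.
    return Decision(score, spread, "auto" if confident else "human_review")


# Toy stand-ins for trained models (illustrative only).
agreeing = [lambda c: 0.97, lambda c: 0.95, lambda c: 0.99]
disagreeing = [lambda c: 0.9, lambda c: 0.4, lambda c: 0.95]

print(triage("case-001", agreeing).route)
print(triage("case-002", disagreeing).route)
```

In this sketch the ensemble handles a case automatically only when its members agree and the averaged score is far from the decision boundary. Every other case falls back to a clinician, which keeps the default behaviour conservative.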

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).