Submitted: 14 March 2025

Posted: 17 March 2025


Abstract

Keywords:

MSC: 91G70; 91B84

1. Introduction

1.1. Research Contributions

- A first-of-its-kind hybrid DRL trading framework integrating IMCA, which dynamically adjusts model selection weights in response to real-time market conditions, addressing the rigidity and inefficiencies of static DRL ensemble models.

- Application of IMCA-DRL to multi-market trading across the US, Australia, Europe, Thailand, and cryptocurrency markets, providing new insights into cross-market adaptability—an area underexplored in financial AI research.

- A comparative evaluation of multiple DRL models (A2C, PPO, DDPG, TD3, SAC) within an ensemble structure, demonstrating how dynamically optimizing model combinations enhances performance under different market conditions.

2. Literature Review

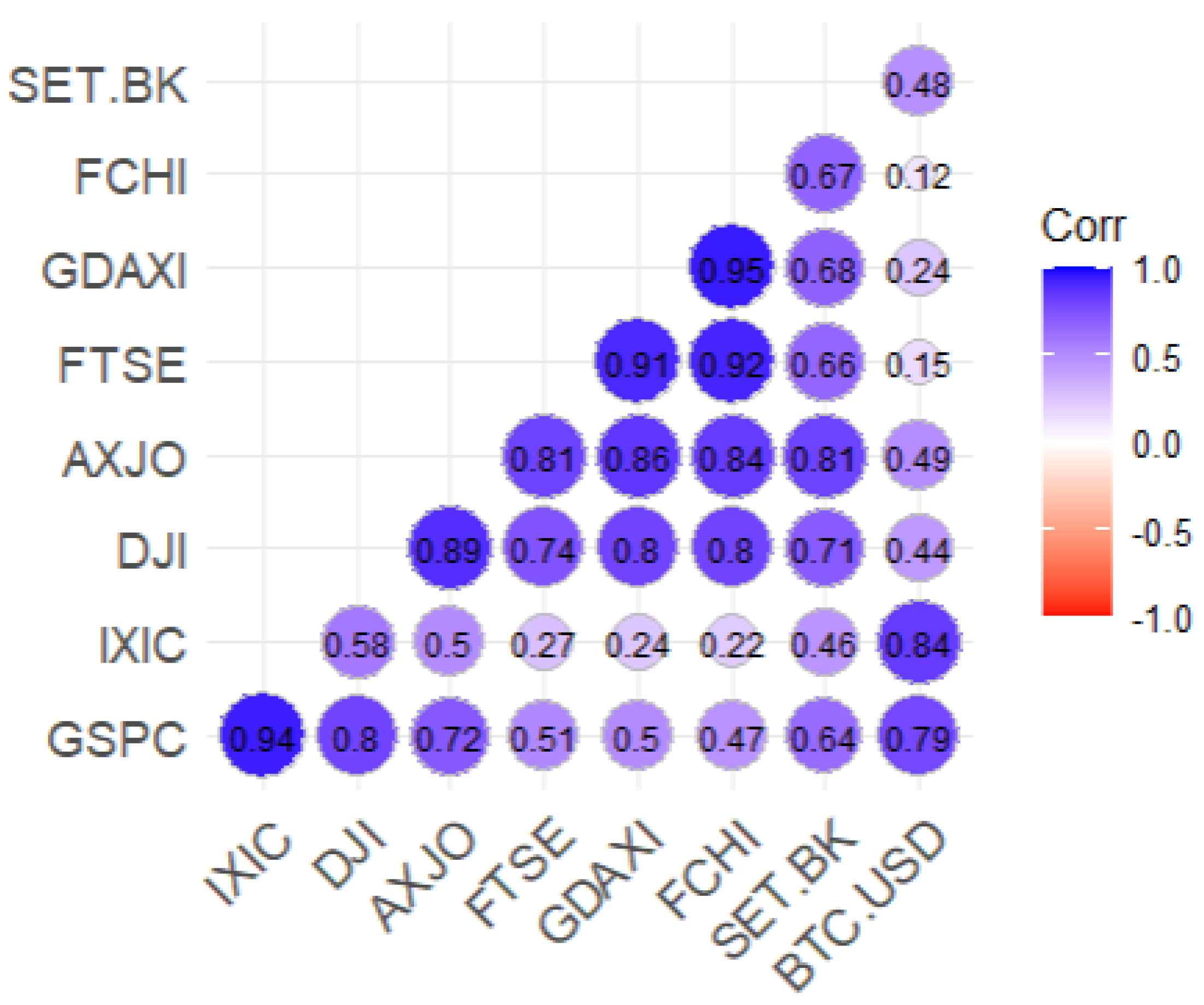

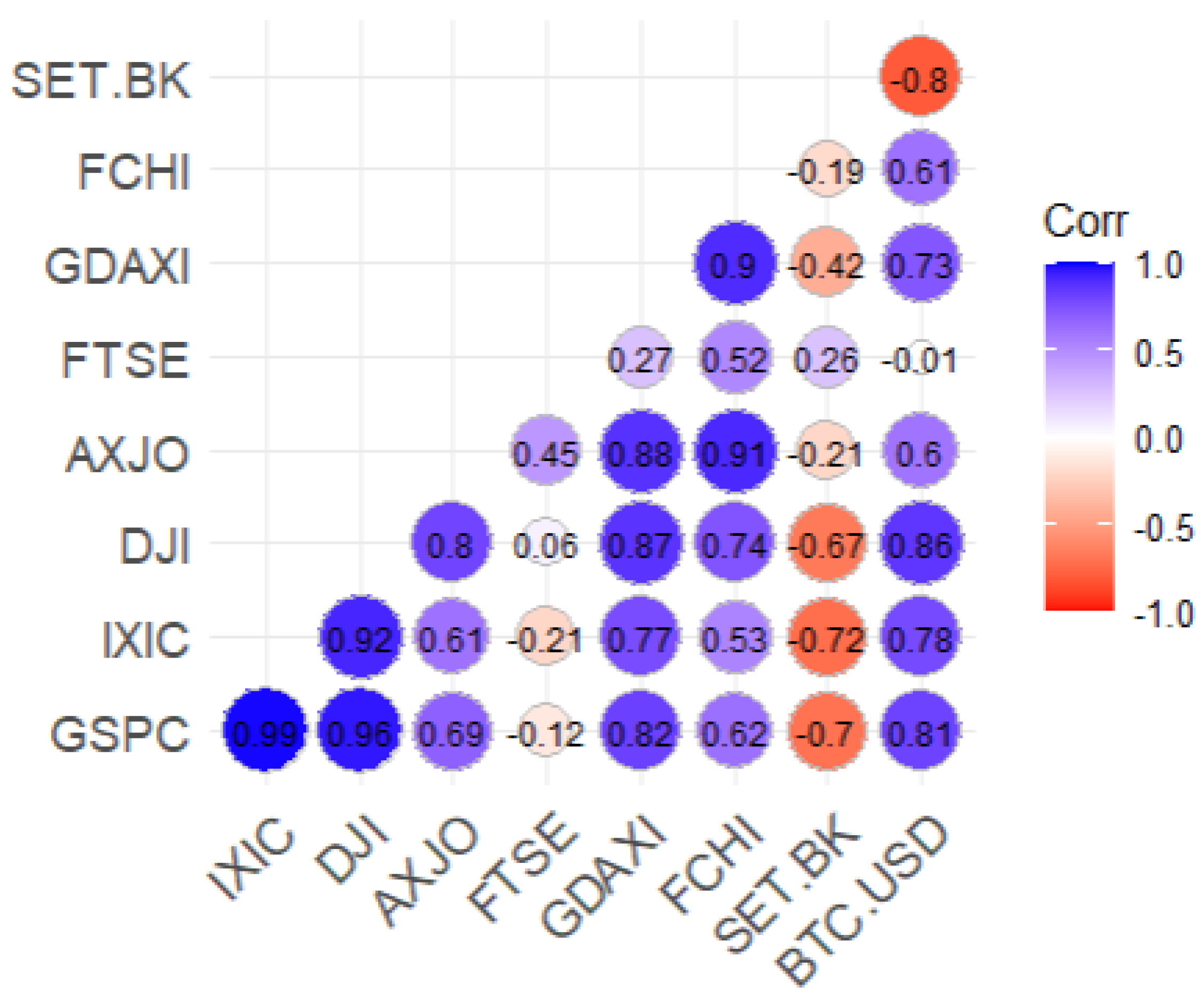

2.1. Ensemble Learning in Global Multi-Asset Trading

2.2. Deep Reinforcement Learning for Cross-Market Trading

2.3. Emerging Paradigms in Algorithmic Trading

2.4. Innovations and Advancements of IMCA

2.5. Risk Management and Portfolio Diversification

3. Methodology

3.1. Reinforcement Learning Algorithms and Experimental Setup

- U.S. Market (10 stocks): Apple Inc. (AAPL), Microsoft Corporation (MSFT), Alphabet Inc. (GOOGL), Amazon.com Inc. (AMZN), Berkshire Hathaway Inc. Class B (BRK-B), Tesla Inc. (TSLA), JPMorgan Chase & Co. (JPM), Johnson & Johnson (JNJ), NVIDIA Corporation (NVDA), Visa Inc. (V)

- Australian Market (10 stocks): Commonwealth Bank of Australia (CBA.AX), BHP Group Limited (BHP.AX), Westpac Banking Corporation (WBC.AX), CSL Limited (CSL.AX), Woolworths Group Limited (WOW.AX), Telstra Group Limited (TLS.AX), National Australia Bank Limited (NAB.AX), Fortescue Metals Group Ltd (FMG.AX), Rio Tinto Limited (RIO.AX), Wesfarmers Limited (WES.AX)

- European Market (UK & Germany) (10 stocks): Shell plc (SHEL.L), HSBC Holdings plc (HSBA.L), Unilever plc (ULVR.L), BP plc (BP.L), GSK plc (GSK.L), Diageo plc (DGE.L), AstraZeneca plc (AZN.L), Rio Tinto plc (RIO.L), SAP SE (SAP.DE), Lloyds Banking Group plc (LLOY.L)

- Thai Market (9 stocks): PTT Public Company Limited (PTT.BK), CP All Public Company Limited (CPALL.BK), Advanced Info Service Public Company Limited (ADVANC.BK), Kasikornbank Public Company Limited (KBANK.BK), The Siam Cement Public Company Limited (SCC.BK), Bangkok Dusit Medical Services Public Company Limited (BDMS.BK), Airports of Thailand Public Company Limited (AOT.BK), Charoen Pokphand Foods Public Company Limited (CPF.BK), Electricity Generating Public Company Limited (EGCO.BK)

- Cryptocurrency (1): Bitcoin to US Dollar (BTC-USD)

- Training Data: Daily adjusted closing prices with derived technical indicators, including Moving Average Convergence Divergence (MACD), Relative Strength Index (RSI), and Simple Moving Averages (SMA), which are widely recognized for capturing momentum, trend strength, and market conditions.

- Training Period: Spans from January 2010 to February 2022, ensuring sufficient exposure to diverse market conditions. The out-of-sample testing period extends from March 2022 to December 2024, enabling robust performance evaluation.

- Learning Rate: Initially set at 0.0003 for most models and fine-tuned based on validation performance to ensure convergence stability without overshooting optimal solutions.

- Episodes: 1000 episodes were used to ensure the models achieve convergence while capturing diverse trading patterns.

- Batch Size: 64 observations per batch, balancing computational efficiency with stable gradient updates.

- Discount Factor (γ): Set at 0.99 to prioritize long-term rewards while ensuring short-term fluctuations do not overly influence decisions.

- Exploration Rate: Initialized at 1.0 and gradually decayed for epsilon-greedy policies to ensure sufficient exploration in early stages, transitioning to exploitation as learning progresses.

- Optimization Method: Grid search was employed for hyperparameter tuning, including learning rates, discount factors, and batch sizes, ensuring optimal performance across varied trading scenarios.

- Computational Resources: Training was performed on an NVIDIA RTX 3090 GPU with 24 GB memory, enabling efficient parallel processing and accelerated model convergence.

- Framework: TensorFlow and PyTorch were used for algorithm implementation, leveraging their flexibility and scalability for deep learning applications.

- Optimizer: The Adam optimizer was employed across all models due to its adaptive learning rate and efficient handling of sparse gradients, which is critical for achieving faster convergence and improved performance in complex, high-dimensional financial environments.
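The technical indicators named in the training-data item can be derived from a closing-price series as in the minimal sketch below. The window lengths (12/26/9 for MACD, 14 for RSI) and the simple-average RSI variant are common defaults assumed here for illustration; the source does not state which settings were used, and the function names are hypothetical.

```python
from typing import List, Optional, Tuple


def sma(prices: List[float], window: int) -> List[Optional[float]]:
    """Simple moving average; None until `window` prices are available."""
    out: List[Optional[float]] = []
    for i in range(len(prices)):
        if i + 1 < window:
            out.append(None)
        else:
            out.append(sum(prices[i + 1 - window : i + 1]) / window)
    return out


def ema(values: List[float], span: int) -> List[float]:
    """Exponential moving average with factor 2 / (span + 1), seeded with the first value."""
    alpha = 2.0 / (span + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(alpha * v + (1.0 - alpha) * out[-1])
    return out


def macd(prices: List[float], fast: int = 12, slow: int = 26,
         signal: int = 9) -> Tuple[List[float], List[float]]:
    """Return (MACD line, signal line); the periods are assumed defaults."""
    fast_ema = ema(prices, fast)
    slow_ema = ema(prices, slow)
    line = [f - s for f, s in zip(fast_ema, slow_ema)]
    return line, ema(line, signal)


def rsi(prices: List[float], window: int = 14) -> List[Optional[float]]:
    """RSI from simple averages of gains and losses over the last `window` changes."""
    out: List[Optional[float]] = [None] * len(prices)
    changes = [b - a for a, b in zip(prices, prices[1:])]
    for i in range(window, len(prices)):
        recent = changes[i - window : i]
        gain = sum(c for c in recent if c > 0) / window
        loss = sum(-c for c in recent if c < 0) / window
        # With no losses in the window the ratio is undefined; 100 is used by convention here.
        out[i] = 100.0 if loss == 0 else 100.0 - 100.0 / (1.0 + gain / loss)
    return out
```

Each function returns a list aligned with the input prices, so the outputs can be appended as extra feature columns next to the adjusted close.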

3.1.1. Experimental Workflow

- Data Acquisition and Preprocessing: Market data is retrieved from multiple sources, including Yahoo Finance and cryptocurrency exchanges, which takes approximately two minutes. Feature engineering follows, including the addition of technical indicators, taking an additional three minutes.

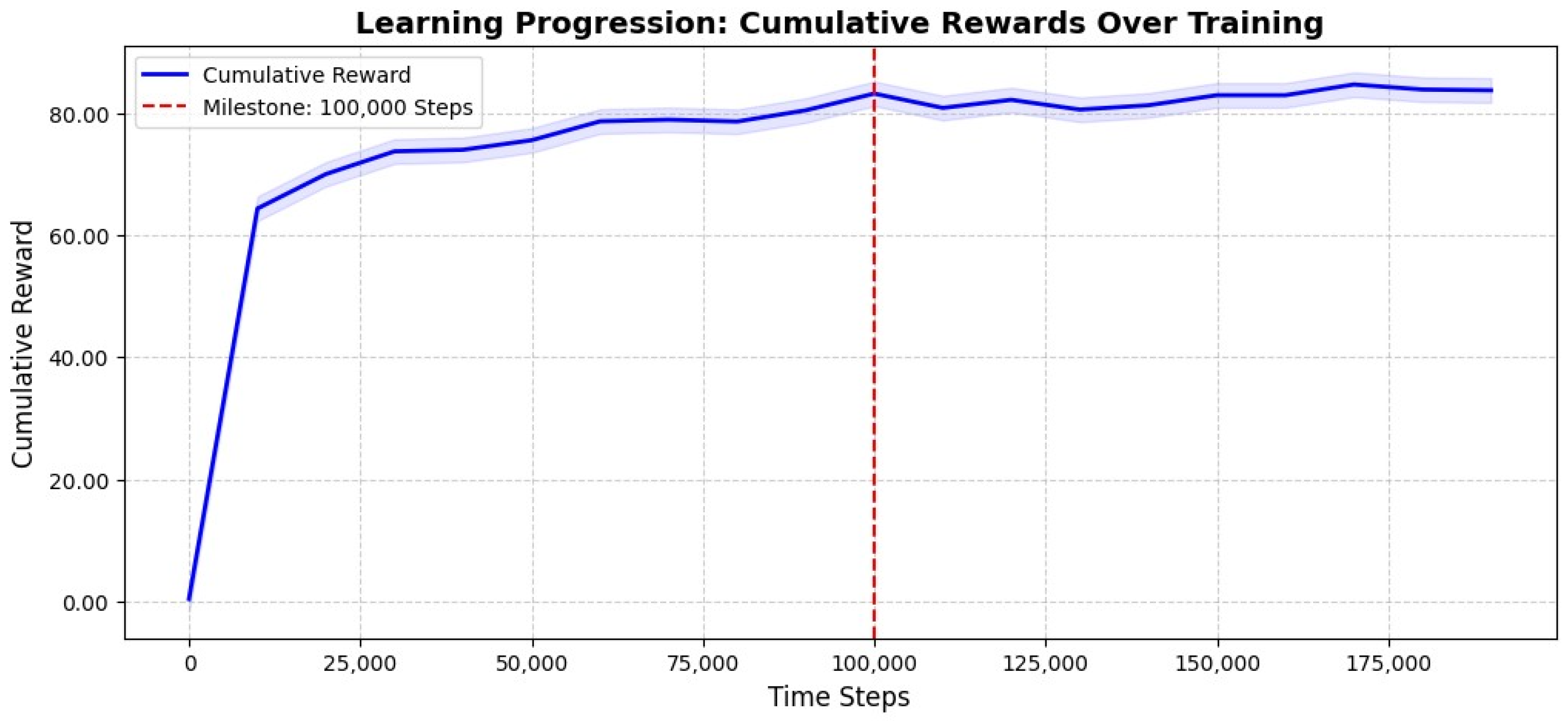

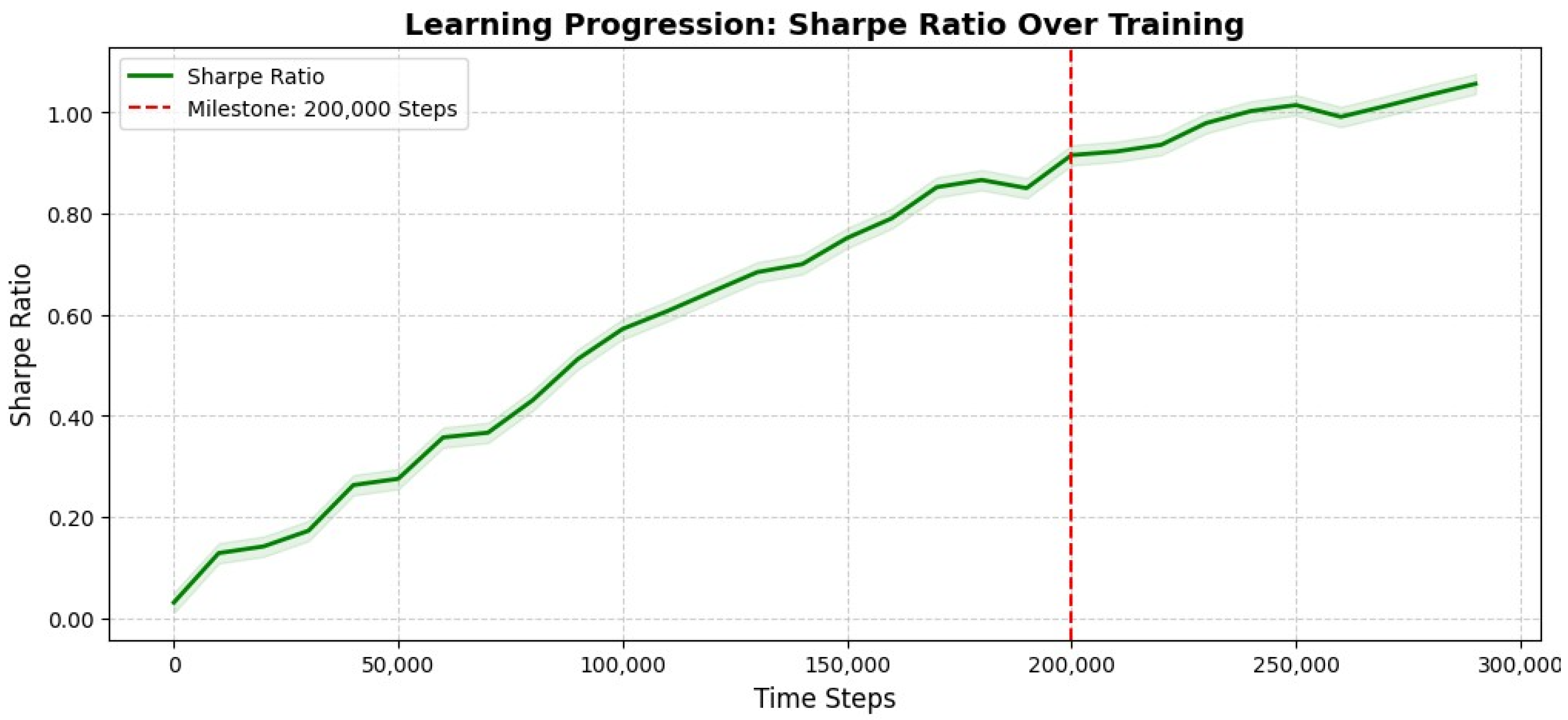

- Training and Hyperparameter Optimization: Each model undergoes extensive training for 100,000 timesteps, with training durations varying by model complexity.

- Evaluation and Performance Assessment: Models are evaluated on the out-of-sample test dataset, measuring key performance metrics such as cumulative returns, Sharpe ratios, and maximum drawdowns.
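The out-of-sample evaluation relies on a strictly chronological split between the training and testing periods given earlier. A minimal sketch follows; the exact boundary days are an assumption (the source gives only months), and `chronological_split` is a hypothetical helper rather than the authors' code.

```python
from datetime import date
from typing import List, Tuple

# Month boundaries taken from the stated periods; the specific days are assumed.
TRAIN_START = date(2010, 1, 1)
TRAIN_END = date(2022, 2, 28)
TEST_START = date(2022, 3, 1)
TEST_END = date(2024, 12, 31)

Row = Tuple[date, float]  # (trading day, adjusted close)


def chronological_split(rows: List[Row]) -> Tuple[List[Row], List[Row]]:
    """Split rows into (train, test) by date, with no shuffling and no overlap."""
    train = [r for r in rows if TRAIN_START <= r[0] <= TRAIN_END]
    test = [r for r in rows if TEST_START <= r[0] <= TEST_END]
    return train, test
```

Because every training date precedes every test date, no information from the evaluation window can leak into model fitting.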

3.1.2. Advantage Actor-Critic (A2C)

3.1.3. Proximal Policy Optimization (PPO)

3.1.4. Deep Deterministic Policy Gradient (DDPG)

3.1.5. Soft Actor-Critic (SAC)

3.1.6. Twin Delayed Deep Deterministic Policy Gradient (TD3)

3.1.7. Iterative Model Combining Algorithm (IMCA)

3.1.8. Steps in IMCA

3.1.9. Performance Evaluation Metrics

- Cumulative Return: Measures total investment growth over the evaluation period [68].

- Annual Return: Represents the average yearly portfolio growth, enabling comparisons across strategies [68].

- Annualized Volatility: Quantifies the variability of returns on an annual basis, indicating portfolio risk [60].

- Sharpe Ratio: Evaluates risk-adjusted returns by measuring excess returns per unit of risk [24].

- Maximum Drawdown: Captures the largest peak-to-trough decline, assessing downside risk resilience [63].
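The five metrics above can be computed from a series of daily portfolio returns as in the sketch below. It assumes 252 trading days per year, a zero risk-free rate by default, and a Sharpe ratio built from the annualized compound return; none of these conventions is stated in the source, so they should be read as illustrative choices.

```python
import math
from typing import List

TRADING_DAYS = 252  # assumed annualization factor


def cumulative_return(returns: List[float]) -> float:
    """Total growth over the period: product of (1 + r) minus 1."""
    growth = 1.0
    for r in returns:
        growth *= 1.0 + r
    return growth - 1.0


def annual_return(returns: List[float], periods: int = TRADING_DAYS) -> float:
    """Compound annual growth rate implied by the cumulative return."""
    years = len(returns) / periods
    return (1.0 + cumulative_return(returns)) ** (1.0 / years) - 1.0


def annual_volatility(returns: List[float], periods: int = TRADING_DAYS) -> float:
    """Sample standard deviation of per-period returns, scaled by sqrt(periods)."""
    mean = sum(returns) / len(returns)
    var = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(var) * math.sqrt(periods)


def sharpe_ratio(returns: List[float], risk_free: float = 0.0,
                 periods: int = TRADING_DAYS) -> float:
    """Excess annual return per unit of annual volatility (NaN if volatility is zero)."""
    vol = annual_volatility(returns, periods)
    if vol == 0.0:
        return float("nan")
    return (annual_return(returns, periods) - risk_free) / vol


def max_drawdown(returns: List[float]) -> float:
    """Largest peak-to-trough decline of the equity curve, as a negative fraction."""
    value, peak, worst = 1.0, 1.0, 0.0
    for r in returns:
        value *= 1.0 + r
        peak = max(peak, value)
        worst = min(worst, value / peak - 1.0)
    return worst
```

For example, the return sequence `[0.10, -0.50, 0.20]` ends 34% below its starting value and has a maximum drawdown of 50%, since the equity curve falls from 1.10 to 0.55 before partially recovering.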

4. Estimation Results

4.1. Cumulative Return Trends

4.2. Comparative Performance of IMCA and Traditional Strategies

4.3. Overall Performance Metrics

- Among the DRL-based models, PPO delivers a strong cumulative return of 29.3% with an annual return of 6.04%, achieving a competitive balance between risk and profitability. Its Sharpe ratio of 0.7475 suggests an efficient risk-return tradeoff, making it one of the top-performing reinforcement learning models.

- The IMCA model outperforms all DRL strategies in terms of risk-adjusted returns, achieving the highest Sharpe ratio of 0.8293. It maintains a strong cumulative return of 29.5% with an annual return of 6.80%, consistent with its adaptability in portfolio allocation. Its maximum drawdown of -13.50% is in line with the DRL models (-12.96% to -13.85%), with only DDPG drawing down further, so the gain in risk-adjusted return does not come with a materially deeper drawdown.

- The traditional Min-Variance strategy remains the most stable, exhibiting the lowest annual volatility of 5.99% and the smallest maximum drawdown of -10.96%. However, it produces the lowest cumulative return of only 0.75%, with an annual return of 0.17%, reflecting its conservative nature and limited growth potential.

- The CAPM model carries the most risk, with the highest annual volatility of 17.17% and the largest maximum drawdown of -50.97%. Despite achieving a cumulative return of 16.1%, its Sharpe ratio of only 0.2033 is well below those of IMCA and the DRL models, indicating that its returns compensate poorly for the risk taken.
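As a quick consistency check on the figures quoted above, the reported Sharpe ratios can be compared with the ratio of annual return to annual volatility. The values below are copied from the results table; the reading that the Sharpe ratio was computed with a zero risk-free rate is an inference from these numbers, not something the source states.

```python
# model: (annual return %, annual volatility %, reported Sharpe ratio)
REPORTED = {
    "A2C": (5.72, 8.11, 0.7053),
    "PPO": (6.04, 8.08, 0.7475),
    "DDPG": (5.35, 8.26, 0.6477),
    "SAC": (5.10, 7.70, 0.6623),
    "TD3": (6.07, 8.32, 0.7296),
    "Min Variance": (0.17, 5.99, 0.0284),
    "CAPM": (3.49, 17.17, 0.2033),
    "IMCA": (6.80, 8.20, 0.8293),
}


def implied_sharpe(annual_return_pct: float, annual_vol_pct: float) -> float:
    """Return-to-volatility ratio, i.e. a Sharpe ratio with zero risk-free rate."""
    return annual_return_pct / annual_vol_pct


def sharpe_ranking() -> list:
    """Model names ordered from highest to lowest reported Sharpe ratio."""
    return sorted(REPORTED, key=lambda m: REPORTED[m][2], reverse=True)


def max_abs_gap() -> float:
    """Largest difference between the implied and the reported Sharpe ratio."""
    return max(abs(implied_sharpe(r, v) - s) for r, v, s in REPORTED.values())
```

Under this assumption every implied ratio agrees with the reported value to within rounding, and the ranking places IMCA first and Min Variance last.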

4.4. Advanced Robustness and Cross-Market Adaptability

4.4.1. Evaluating Learning Progression of IMCA

4.5. Discussion

4.5.1. Economic Policy Implications

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Deng, Y.; Bao, F.; Kong, Y.; Ren, Z.; Dai, Q. Deep direct reinforcement learning for financial signal representation and trading. IEEE Trans. Neural Networks Learn. Syst. 2016, 28, 653–664.

- Haarnoja, T.; Zhou, A.; Abbeel, P.; Levine, S. Soft Actor-Critic: Off-Policy Maximum Entropy Deep Reinforcement Learning with a Stochastic Actor. arXiv Preprint 2018. Available online: https://arxiv.org/abs/1801.01290.

- Jiang, Z.; Xu, D.; Liang, J. A deep reinforcement learning framework for the financial portfolio management problem. arXiv Preprint 2017. Available online: https://arxiv.org/abs/1706.10059.

- Schulman, J.; Wolski, F.; Dhariwal, P.; Radford, A.; Klimov, O. Proximal Policy Optimization Algorithms. arXiv Preprint 2017. Available online: https://arxiv.org/abs/1707.06347.

- Lu, C.I. Evaluation of Deep Reinforcement Learning Algorithms for Portfolio Optimization. arXiv Preprint 2023. Available online: https://arxiv.org/abs/2307.07694.

- Vishal, M.; Vadlamani, R.; Ramanuj, L. Ensemble Deep Reinforcement Learning for Financial Trading. In Machine Learning Approaches in Financial Analytics; Maglaras, L.A., Das, S., Tripathy, N., Patnaik, S., Eds.; Springer Nature Switzerland: Cham, Switzerland, 2024.

- Yang, H.; Liu, X.-Y.; Zhong, S.; Walid, A. Deep Reinforcement Learning for Automated Stock Trading: An Ensemble Strategy. SSRN Preprint 2020. Available online: https://ssrn.com/abstract=3690996.

- Nassirtoussi, A.K.; Aghabozorgi, S.; Wah, T.Y.; Ngo, D.C.L. Text mining for market prediction: A systematic review. Expert Syst. Appl. 2014, 41, 7653–7670.

- Rajendran, H.; Kayal, P.; Maiti, M. Is the U.S. Energy Independence and Security Act of 2022 associated with stock market volatility? Util. Policy 2024, 90, 101813.

- Yang, J.; Li, P.; Cui, Y.; Han, X.; Zhou, M. Multi-Sensor Temporal Fusion Transformer for Stock Performance Prediction: An Adaptive Sharpe Ratio Approach. Sensors 2025, 25.

- Aggrawal, N.; Rathi, M.; Kansal, R.; Jamwal, A.; Agarwal, S. QuantForecast-Navigating the Financial Future. ResearchSquare 2024.

- Shahsafi, S.; Naderkhani, F. Enhancing Stock Trading Performance with Deep Q-Learning by Addressing Noisy Data through Advanced Denoising Techniques. In Proceedings of the 27th International Conference on Information Fusion (FUSION); Venice, Italy, 2024; pp. 1–7.

- Yadav, P.; Giri, J.N. Challenges and Opportunities in Price Forecasting for Commodities: A Study of Technical Indicators in the NCR Region. Eur. Econ. Lett. 2025, 15, 1079–1088.

- Liu, A.; Chen, J.; Yang, S.Y.; Hawkes, A.G. The Flow of Information in Trading: An Entropy Approach to Market Regimes. Entropy 2020, 22, 1064.

- Calefariu Giol, E.; Panazan, O.; Gheorghe, C. Cyber, Geopolitical, and Financial Risks in Rare Earth Markets: Drivers of Market Volatility. Risks 2025, 13, 46.

- Sahut, J.M.; Hajek, P.; Olej, V.; Hikkerova, L. The Role of News-Based Sentiment in Forecasting Crude Oil Price During the Covid-19 Pandemic. Ann. Oper. Res. 2025, 345, 861–884.

- Jagirdar, S.S.; Gupta, P.K. Charting the financial odyssey: a literature review on history and evolution of investment strategies in the stock market (1900–2022). China Account. Finance Rev. 2024, 25, 277–307.

- Mohammadshafie, A.; Mirzaeinia, A.; Jumakhan, H.; Mirzaeinia, A. Deep Reinforcement Learning Strategies in Finance: Insights into Asset Holding, Trading Behavior, and Purchase Diversity. arXiv Preprint 2024.

- Panya, T.; Khamkong, M. Deep Reinforcement Learning for Automated of Asian Stocks Trading. In Applications of Optimal Transport to Economics and Related Topics; Kreinovich, V., Yamaka, W., Leurcharusmee, S., Eds.; Springer Nature Switzerland: Cham, Switzerland, 2024.

- Vetrin, D.; Koberg, M. Deep Reinforcement Learning in High-Frequency Trading. arXiv Preprint 2024. Available online: https://arxiv.org/abs/2404.09876.

- Huang, G.; Zhou, X.; Song, Q. A Deep Reinforcement Learning Framework for Dynamic Portfolio Optimization: Evidence from China’s Stock Market. arXiv 2025, arXiv:2412.18563. Available online: https://arxiv.org/abs/2412.18563.

- Zhong, X.; Wei, J.; Li, S.; Xu, Q. Deep reinforcement learning for dynamic strategy interchange in financial markets. Appl. Intell. 2024, 55.

- Markowitz, H. Portfolio selection. J. Finance 1952, 7, 77–91.

- Sharpe, W.F. The Sharpe Ratio. J. Portf. Manag. 1994, 21, 49–58.

- Breiman, L. Bagging predictors. Mach. Learn. 1996, 24, 123–140.

- Freund, Y.; Schapire, R.E. A Decision-Theoretic Generalization of On-Line Learning and an Application to Boosting. J. Comput. Syst. Sci. 1997, 55, 119–139.

- Friedman, J.H. Greedy function approximation: A gradient boosting machine. Ann. Stat. 2001, 29, 1189–1232.

- Schapire, R.E. A brief introduction to boosting. IJCAI Int. Joint Conf. Artif. Intell. 1999, 2, 1401–1406.

- Wang, M.; Yu, S. Application of Bayesian network and genetic algorithm enhanced Monte Carlo method in urban transportation infrastructure investment. J. Comput. Methods Sci. Eng. 2025, 0.

- Reifschneider, D. US Monetary Policy and the Recent Surge in Inflation. Peterson Inst. Int. Econ. Work. Pap. 2024, 24-13.

- Shu, M.; Song, R. Real-time Bubble Status of US Stock Market After the 2020 Stock Market Crash. In 2024 JSM Proc.; American Statistical Association: Alexandria, VA, USA, 2024; Available online: https://ssrn.com/abstract=5075507.

- Thongkairat, S.; Yamaka, W. A Combined Algorithm Approach for Optimizing Portfolio Performance in Automated Trading: A Study of SET50 Stocks. Mathematics 2025, 13.

- Wang, Y.; Zhang, Y.; Zou, J.; Ravishanker, N. Online structural break detection in financial durations. Stat. Comput. 2025, 35.

- Xiong, Z. Ensemble RL through Classifier Models: Enhancing Risk-Return Trade-offs in Trading Strategies. arXiv 2025, arXiv:2502.17518. Available online: https://arxiv.org/abs/2502.17518.

- Breiman, L. Random forests. Mach. Learn. 2001, 45, 5–32.

- Rezaei, A.; Abdellatif, I.; Umar, A. Towards Economic Sustainability: A Comprehensive Review of Artificial Intelligence and Machine Learning Techniques in Improving the Accuracy of Stock Market Movements. Int. J. Financ. Stud. 2025, 13, 1–36.

- Bollerslev, T. Generalized autoregressive conditional heteroskedasticity. J. Econometrics 1986, 31, 307–327.

- Engle, R.F. Dynamic Conditional Correlation: A Simple Class of Multivariate GARCH Models. J. Bus. Econ. Stat. 2002, 20, 339–350.

- Goodell, J.W. COVID-19 and finance: Agendas for future research. Finance Res. Lett. 2020, 35, 101512.

- Mukherjee, A.; Singhal, R.; Shroff, G. Numin: Weighted-Majority Ensembles for Intraday Trading. In Proc. 5th ACM Int. Conf. AI Finance (ICAIF ’24); ACM, 2024; pp. 703–710.

- Orra, A.; Bhambu, A.; Choudhary, H.; Thakur, M. Dynamic Reinforced Ensemble using Bayesian Optimization for Stock Trading. In Proc. 5th ACM Int. Conf. AI Finance (ICAIF ’24); ACM, 2024; pp. 361–369.

- Balijepalli, N.S.S.; Thangaraj, V. Prediction of cryptocurrency’s price using ensemble machine learning algorithms. Eur. J. Manag. Bus. Econ. 2025.

- Dong, Y.; Huang, H.; Zhang, G.; Jin, J. Adaptive Transit Signal Priority Control for Traffic Safety and Efficiency Optimization: A Multi-Objective Deep Reinforcement Learning Framework. Mathematics 2024, 12, 3994.

- Fujimoto, S.; van Hoof, H.; Meger, D. Addressing Function Approximation Error in Actor-Critic Methods. In Proc. 35th Int. Conf. Mach. Learn. (ICML) 2018, 1582–1591.

- Buehler, H.; Gonon, L.; Teichmann, J.; Wood, B. Deep Hedging. Quant. Finance 2019, 19, 1271–1291.

- Gao, H.; Kou, G.; Liang, H.; Zhang, H.; Chao, X.; Li, C.C.; Dong, Y. Machine learning in business and finance: A literature review and research opportunities. Financ. Innov. 2024, 10, 86.

- Sangve, S.; Kohad, N.D.; Khot, P.C.; Khobragrade, S.D.; Kumbhar, S.S. ProfitPulse: Reinforcement Learning-Driven Trading Strategy. Cureus J. Comput. Sci. 2025, 2, es44389–024.

- Bai, X.; Zhuang, S.; Xie, H.; Guo, L. Leveraging Generative Artificial Intelligence for Financial Market Trading Data Management and Prediction. Preprints 2024.

- Zhang, Z.; Zohren, S.; Roberts, S. Deep reinforcement learning for trading. J. Financ. Data Sci. 2020, 2, 25–40.

- Narayana, M.L.; Kartha, A.J.; Mandal, A.K.; et al. Ensemble time series models for stock price prediction and portfolio optimization with sentiment analysis. J. Intell. Inf. Syst. 2025.

- Vojtko, R.; Dujava, C. Using Inflation Data for Systematic Gold and Treasury Investment Strategies. SSRN 2025.

- Li, W. The Study on the Application of Machine Learning Algorithms for Stock Prices Prediction During Special Periods. In Proc. Int. Workshop Navigating Digit. Bus. Frontier Sustain. Financ. Innov. (ICDEBA 2024); Atlantis Press, 2025; pp. 656–663.

- Shahzad, S.J.H.; Raza, N.; Balcilar, M.; Ali, S.; Shahbaz, M. Can economic policy uncertainty and investors sentiment predict commodities returns and volatility? Resour. Policy 2017, 53, 208–218.

- Kumar, A.; Ji, T. CryptoPulse: Short-Term Cryptocurrency Forecasting with Dual-Prediction and Cross-Correlated Market Indicators. arXiv 2025, arXiv:2502.19349. Available online: https://arxiv.org/abs/2502.19349.

- Mohammed, K.S.; Obeid, H.; Oueslati, K.; Kaabia, O. Investor sentiments, economic policy uncertainty, US interest rates, and financial assets: Examining their interdependence over time. Financ. Res. Lett. 2023, 57, 104180.

- Qureshi, S.; Saeed, A.; Ahmad, F.; Khattak, A.; Almotiri, S.; Al Ghamdi, M.; Rukh, M. Evaluating machine learning models for predictive accuracy in cryptocurrency price forecasting. PeerJ Comput. Sci. 2025, 11, e2626.

- Zúñiga-Cedillo, S.Y.; Jiménez-Preciado, A.L.; Cruz-Aké, S.; Venegas-Martínez, F. Behavioral Economics and Stock Market Sentiments in Investment Decisions in Mexico: Web Scraping, Natural Language Processing, and Pearson Correlation of Scores. Int. J. Econ. Financ. Issues 2025, 15, 344–354.

- Jorion, P. Value at Risk: The New Benchmark for Managing Financial Risk, 3rd ed.; McGraw Hill Professional: New York, NY, USA, 2006.

- Rockafellar, R.; Uryasev, S. Optimization of conditional value-at-risk. J. Risk 2000, 2, 21–41.

- Alexander, C. Market Risk Analysis, Quantitative Methods in Finance; John Wiley & Sons: Hoboken, NJ, USA, 2008.

- Hull, J. Risk Management and Financial Institutions, 4th ed.; John Wiley & Sons: Hoboken, NJ, USA, 2015.

- Celestin, M.; Kumar, D.A.; Asamoah, P. Applications of GARCH Models for Volatility Forecasting in High-Frequency Trading Environments. Zenodo 2025, 10, 12–21.

- Tsay, R.S. Analysis of Financial Time Series, 2nd ed.; John Wiley & Sons: Hoboken, NJ, USA, 2005.

- Enenkel, M.; Engle, N.L.; Svoboda, M. A major blind spot in drought risk financing: water services in low-income countries. Front. Clim. 2024. Available online: https://api.semanticscholar.org/CorpusID:270682748.

- Pagano, M.S.; Schwartz, R.A. A closing call’s impact on market quality at Euronext Paris. J. Financ. Econ. 2003, 68, 439–484.

- Rodríguez Cuadro, D.; Pérez-Plaza, S.; Castaño-Martínez, A.; Fernández-Palacín, F. A Study of the Colombian Stock Market with Multivariate Functional Data Analysis (FDA). Mathematics 2025, 13.

- Koutmos, G.; Booth, G.G. Asymmetric volatility transmission in international stock markets. J. Int. Money Finance 1995, 14, 747–762.

- Damodaran, A. Investment Valuation: Tools and Techniques for Determining the Value of Any Asset; John Wiley & Sons: Hoboken, NJ, USA, 2012.

- Dong, J. Silicon Valley Bank Bankruptcy—Liquidity Risk Analysis Based on Financial Statements. SHS Web Conf. 2024. [Google Scholar] [CrossRef]

- Majnoni, G.; Martinez Peria, M.; Blaschke, W.; Jones, M. Stress Testing of Financial Systems: An Overview of Issues, Methodologies, and FSAP Experiences. IMF Work. Pap. 2001, 01.

- Sorge, M. Stress-Testing Financial Systems: An Overview of Current Methodologies. BIS Working Paper No. 165. 2004.

- Sutton, R.S.; Barto, A.G. Reinforcement Learning: An Introduction, 2nd ed.; The MIT Press: Cambridge, MA, USA, 2018.

- Hamilton, J.D. A New Approach to the Economic Analysis of Nonstationary Time Series and the Business Cycle. Econometrica 1989, 57, 357–384.

- Ooi, K.L. Modern Behavioural Finance Theories. In Theories to Contemporary Applications and Future Perspectives; Springer, 2025. Available online: https://link.springer.com/chapter/10.1007/978-981-96-2690-8_4.

- Hachaïchi, Y.; Lanwer, A. Benchmarking Reinforcement Learning (RL) Algorithms for Portfolio Optimization. RG 2024.

- Benhamou, E. Can Deep Reinforcement Learning solve the portfolio allocation problem? Université Paris Sciences et Lettres 2023. Available online: https://tel.archives-ouvertes.fr/tel-04397754.

- Sattar, A.; Sarwar, A.; Gillani, S.; Bukhari, M.; Rho, S.; Faseeh, M. A Novel RMS-Driven Deep Reinforcement Learning for Optimized Portfolio Management in Stock Trading. IEEE Access 2025, 13, 42813–42835.

- Scaletta, G. Deep Reinforcement Learning for Portfolio Optimization. Politec. Torino 2024, Master’s Thesis, Corso di Laurea Magistrale in Ingegneria Informatica (Computer Engineering).

| Model | Hyperparameters | Timesteps | Training Time |
|---|---|---|---|
| A2C | "n_steps": 10,000, "ent_coef": 0.01, "learning_rate": 0.001 | 200,000 | 8–18 min |
| PPO | "n_steps": 10,000, "ent_coef": 0.005, "learning_rate": 0.001, "batch_size": 256 | 200,000 | 12–20 min |
| DDPG | "batch_size": 256, "buffer_size": 1,000,000, "learning_rate": 0.001 | 200,000 | 120–130 min |
| SAC | "batch_size": 256, "buffer_size": 1,000,000, "learning_rate": 0.001, "learning_starts": 0.01, "ent_coef": "auto_0.1" | 200,000 | 130–140 min |
| TD3 | "batch_size": 256, "buffer_size": 1,000,000, "learning_rate": 0.001 | 200,000 | 115–130 min |

| Model | Annual Return (%) | Cumulative Returns (%) | Annual Volatility (%) | Sharpe Ratio | Max Drawdown (%) | Daily VaR (%) |
|---|---|---|---|---|---|---|
| A2C | 5.72 | 27.6 | 8.11 | 0.7053 | -13.20 | -1.00 |
| PPO | 6.04 | 29.3 | 8.08 | 0.7475 | -13.10 | -1.00 |
| DDPG | 5.35 | 25.4 | 8.26 | 0.6477 | -13.85 | -1.02 |
| SAC | 5.10 | 24.4 | 7.70 | 0.6623 | -12.98 | -0.95 |
| TD3 | 6.07 | 29.4 | 8.32 | 0.7296 | -12.96 | -1.02 |
| Min Variance | 0.17 | 0.75 | 5.99 | 0.0284 | -10.96 | -0.75 |
| CAPM | 3.49 | 16.1 | 17.17 | 0.2033 | -50.97 | -2.14 |
| IMCA | 6.80 | 29.5 | 8.20 | 0.8293 | -13.50 | -1.02 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).