Submitted: 14 March 2025

Posted: 17 March 2025


Abstract

Keywords:

1. Introduction

The principal contributions of this research are as follows:

- Dynamic time-weighted Rényi entropy (DTWRE) is introduced as a quantitative metric that captures both the temporal dynamics and the intrinsic structural complexity of network dissemination.

- A dual-level Rényi entropy framework is devised at the local node scale and the global time-step scale, so that structural complexity at both levels can be modeled jointly with propagation dynamics.

- The temporal complexity of propagation is combined with graph topology to build a comprehensive spatiotemporal fusion model.

- The model's generalizability is validated on multiple real-world public opinion datasets, contributing new methods for key node detection and propagation pathway prediction.

2. Methods

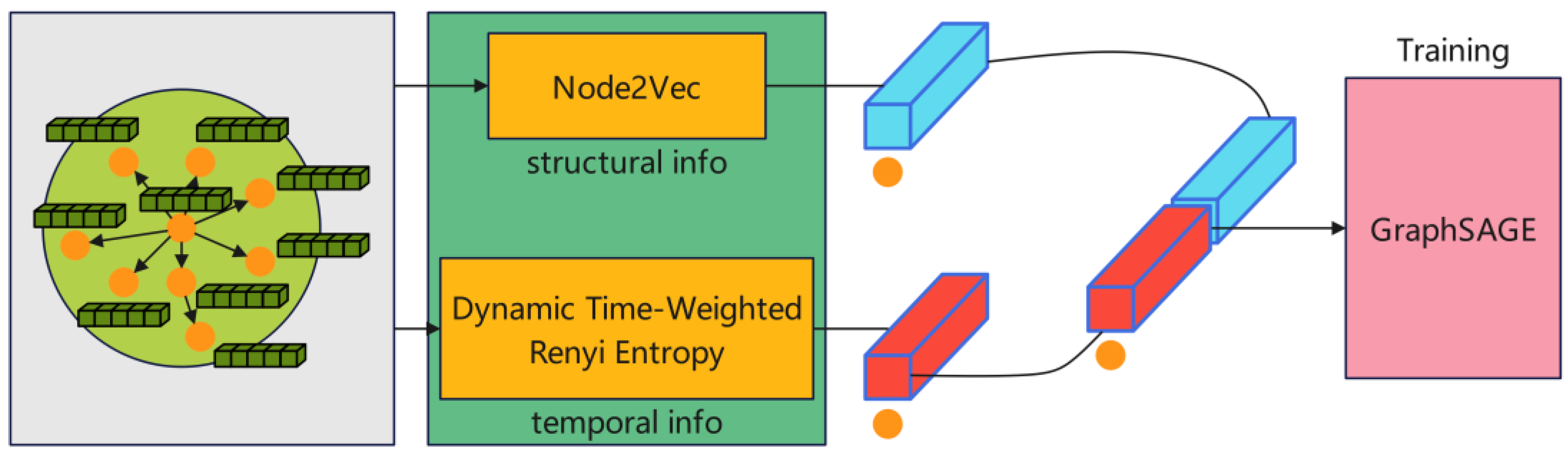

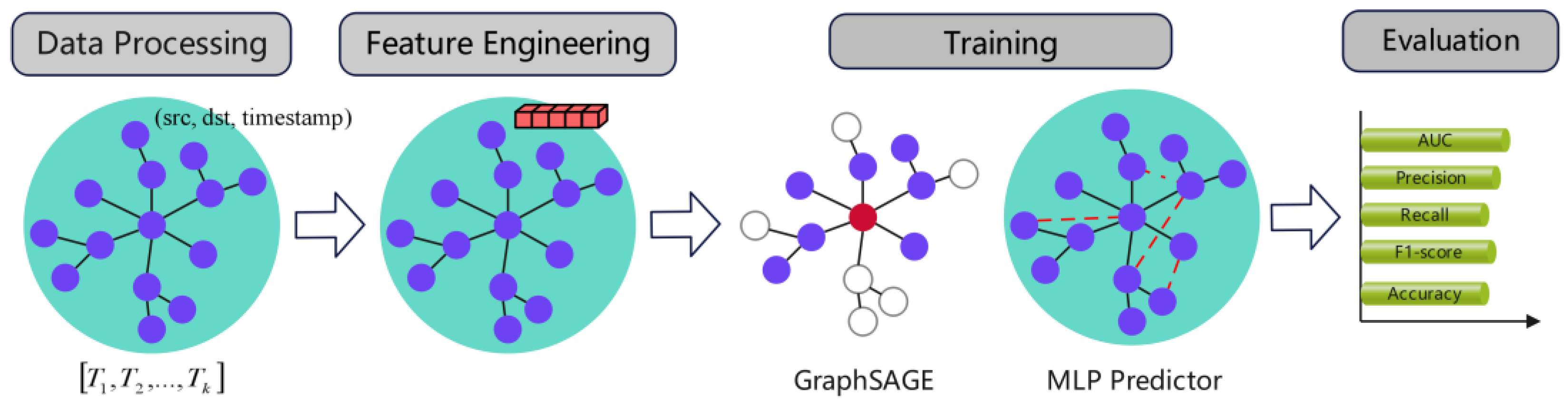

2.1. Spatiotemporal Fusion Modeling

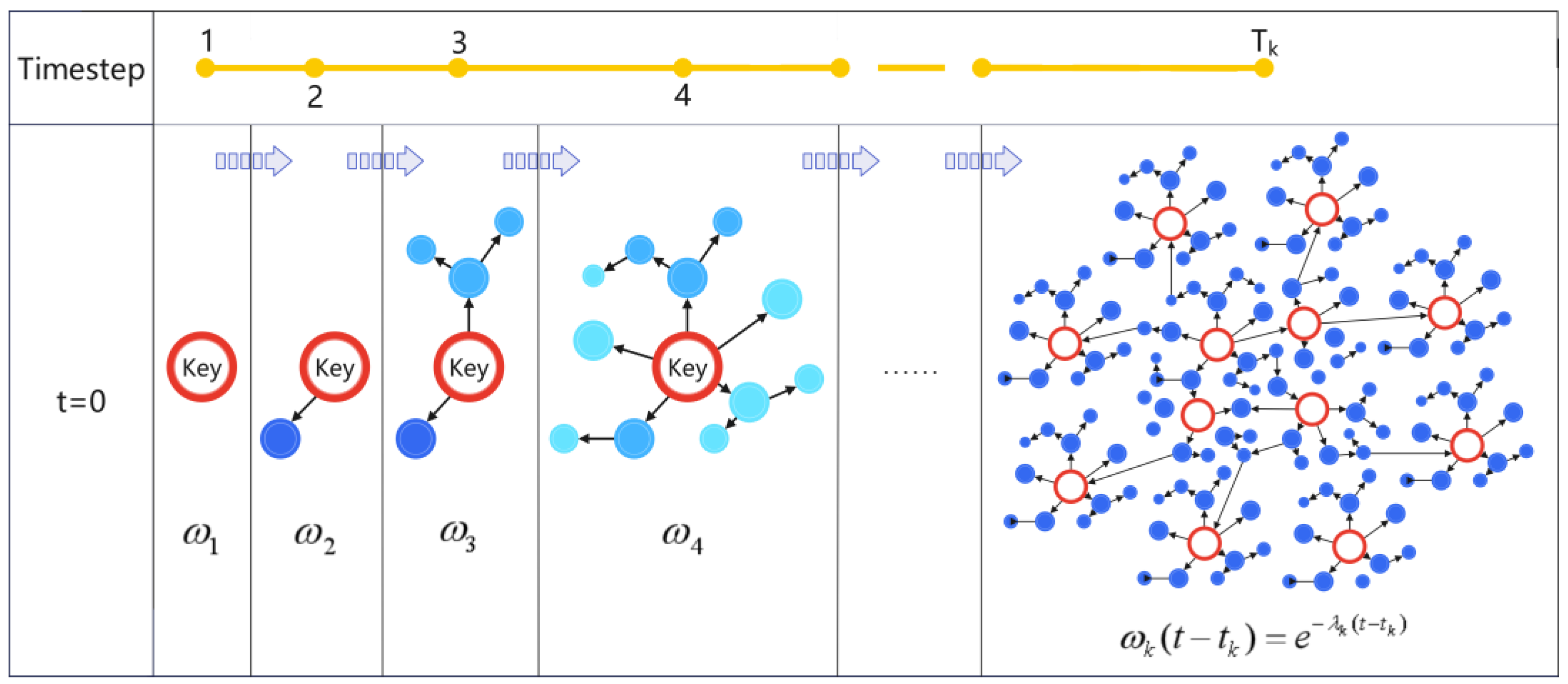

2.2. Definition of Dynamic Time-Weighted Rényi Entropy

| Algorithm 1: DTWRE based on time weighting and time step segmentation. |
|---|
| Input: a directed graph G = (V, E) with a timestamp on each edge; LNE order α; decay factor λ for time weighting; maximum time step T obtained by dividing the timestamps by a chosen time window. |
| Output: a trained GraphSAGE-based link prediction model. |
| 1: T_i ← split the timestamps into time steps |
| 2: for v ∈ V do |
| p(v) ← Node_degree(v) / Σ_i Node_degree(v_i) |
| for each t ∈ [0, …, T] do |
| LNE_t ← ComputeLNE(Subgraphs[t], p, α) |
| Global_Entropy_t ← Sum(LNE_t) |
| Weighted_Entropy ← ComputeDTWRE(Global_Entropy_t, λ) |
| end for |
| end for |
| 3: Combined_features ← Concatenate(Weighted_Entropy, Node2Vec_embeddings) |
| 4: GraphSAGE-Entropy-based link prediction method(Combined_features) |
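Algorithm 1 names ComputeLNE and ComputeDTWRE without spelling them out, so the Python sketch below fills them in under explicit assumptions; it is not the authors' implementation. It uses the standard Rényi entropy H_α(P) = (1/(1 − α)) log Σ p_i^α, with the Shannon limit at α = 1. The following choices are hypothetical: degrees count both endpoints of every interaction; the LNE of node v at step t is the Rényi entropy of p restricted to, and renormalised over, v's neighbours in that step's subgraph; the global entropy of a step is the sum of its LNE values; and the time weight is an exponential decay w_t = exp(−λ(T − t)), so recent steps count more.

```python
import math
from collections import defaultdict

def renyi_entropy(probs, alpha):
    """Rényi entropy of order alpha (natural log); alpha == 1 gives Shannon entropy."""
    probs = [p for p in probs if p > 0]
    if not probs:
        return 0.0
    if math.isclose(alpha, 1.0):
        return -sum(p * math.log(p) for p in probs)
    return math.log(sum(p ** alpha for p in probs)) / (1.0 - alpha)

def degree_shares(edges):
    """p(v) = deg(v) / sum of all degrees, computed once on the whole graph."""
    deg = defaultdict(int)
    for u, v, _ in edges:
        deg[u] += 1
        deg[v] += 1
    total = sum(deg.values())
    return {n: d / total for n, d in deg.items()}

def compute_lne(step_edges, p, alpha):
    """Hypothetical LNE: Rényi entropy of p renormalised over each node's neighbours in one step."""
    nbrs = defaultdict(set)
    for u, v in step_edges:
        nbrs[u].add(v)
        nbrs[v].add(u)
    lne = {}
    for n, nb in nbrs.items():
        mass = sum(p[m] for m in nb)
        lne[n] = renyi_entropy([p[m] / mass for m in nb], alpha)
    return lne

def compute_dtwre(edges, window, alpha, lam):
    """Split (u, v, timestamp) edges into steps of `window` seconds and time-weight the entropies."""
    p = degree_shares(edges)
    t0 = min(ts for _, _, ts in edges)
    steps = defaultdict(list)
    for u, v, ts in edges:
        steps[int((ts - t0) // window)].append((u, v))
    T = max(steps)
    num, den, global_h = defaultdict(float), defaultdict(float), []
    for t in range(T + 1):
        lne = compute_lne(steps.get(t, []), p, alpha)
        global_h.append(sum(lne.values()))  # global entropy of time step t
        w = math.exp(-lam * (T - t))         # assumed exponential decay weight
        for n, h in lne.items():
            num[n] += w * h
            den[n] += w
    # per-node weighted average over the steps in which the node is active
    node_dtwre = {n: num[n] / den[n] for n in num}
    return node_dtwre, global_h
```

The per-node values play the role of Weighted_Entropy in step 3 of Algorithm 1, and the per-step sums give the global time-step view; a one-week window corresponds to `window=604800`.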

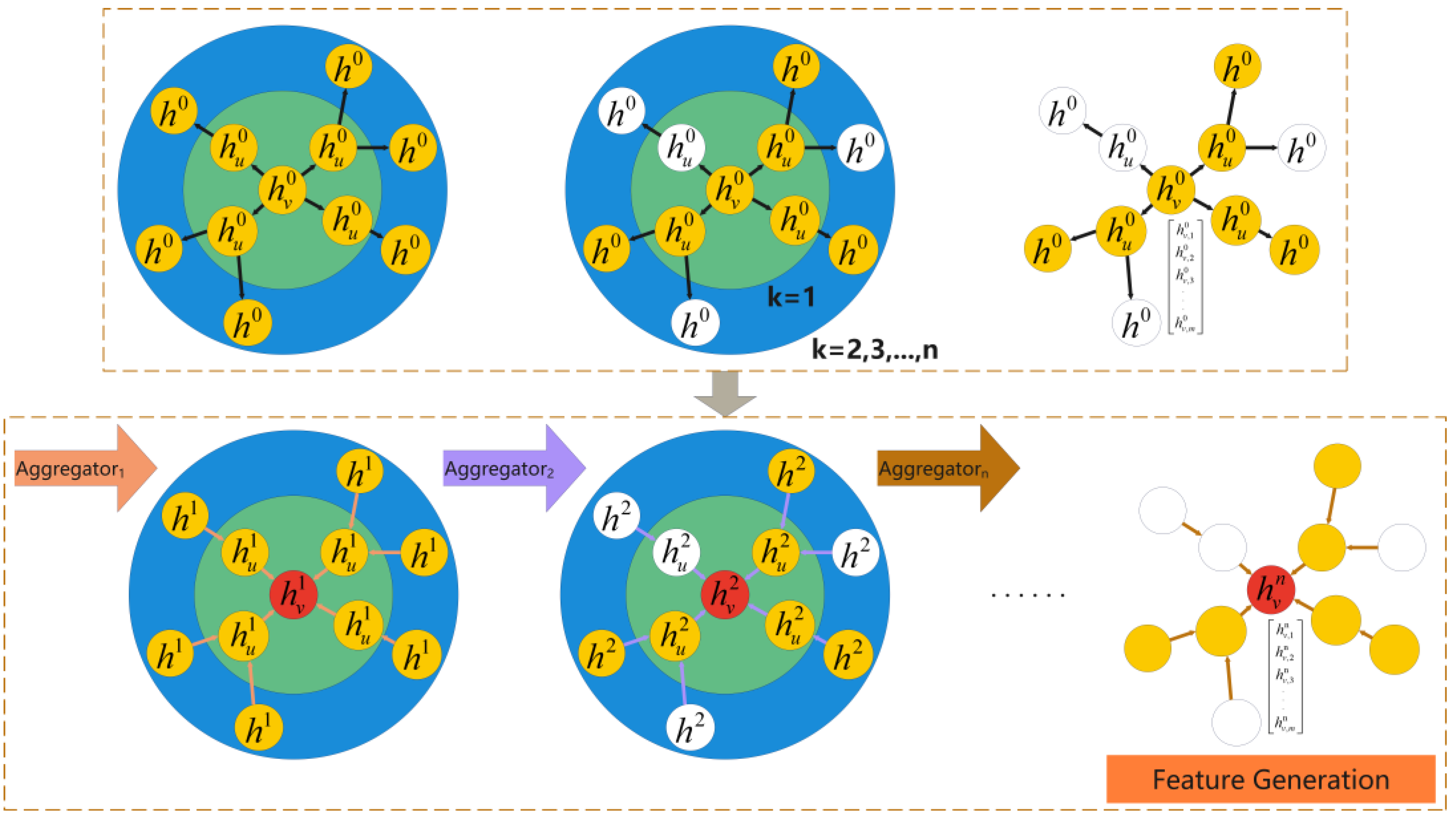

2.3. GraphSAGE for Public Sentiment Prediction

- Neighbor Sampling: Because social networks are typically large-scale, sparse graphs, GraphSAGE randomly samples a fixed number of neighbors for each node to reduce computational cost, an essential strategy for handling large graph datasets.

- Feature Aggregation: For each node u, GraphSAGE aggregates features from its neighboring nodes and updates its own representation accordingly, as delineated in the following formulation:
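For orientation, the sketch below implements one layer of the standard mean-aggregator GraphSAGE update of Hamilton et al., h_u ← σ(W · [h_u ∥ mean{h_v : v ∈ S(u)}]) followed by L2 normalisation, where S(u) is a fixed-size uniform neighbour sample. It assumes NumPy, an adjacency list `adj`, ReLU for σ, and sampling with replacement when a node has fewer than k neighbours; the aggregator, activation and layer sizes used in the paper may differ.

```python
import numpy as np

def sample_neighbors(adj, node, k, rng):
    """Uniformly sample a fixed number k of neighbours (with replacement if there are fewer)."""
    nbrs = adj[node]
    if not nbrs:
        return []
    return list(rng.choice(nbrs, size=k, replace=len(nbrs) < k))

def sage_layer(h, adj, W, k, rng):
    """One GraphSAGE-mean layer: h_u' = ReLU(W [h_u || mean of sampled h_v]), then L2-normalise."""
    out = []
    for u in range(h.shape[0]):
        sampled = sample_neighbors(adj, u, k, rng)
        agg = h[sampled].mean(axis=0) if sampled else np.zeros(h.shape[1])
        z = np.maximum(W @ np.concatenate([h[u], agg]), 0.0)  # concat, linear map, ReLU
        norm = np.linalg.norm(z)
        out.append(z / norm if norm > 0 else z)
    return np.stack(out)
```

Stacking two such layers gives each node a view of its sampled two-hop neighbourhood, while the fixed sample size k bounds the per-node cost regardless of degree.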

3. Experiments

3.1. Datasets and Data Preprocessing

- Time labels were standardized by converting them into timestamp format.

- Data augmentation procedures were applied, taking into account the large scale of the real-world social network dataset.

- Isolated nodes, which do not participate in information dissemination, were removed to eliminate disconnected singleton nodes and to uphold the validity of the Rényi entropy computations.

- The real-world social network dataset contains the content of rumor-related Weibo posts, their publishers, records of reposts and comments, and interaction timestamps. From it, the rumor originators and their interaction relationships (comments and reposts) were extracted to construct a public opinion propagation network.

- Temporal window segmentation partitioned the dissemination data into multiple time windows based on timestamps; each window corresponds to one time step, and successive time steps carry gradually varying weights. Each dataset was segmented into time steps according to empirical criteria, as illustrated in Figure 3.
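A minimal Python sketch of the timestamp standardisation, interaction extraction, isolated-node removal and window segmentation steps is given below. The record layout `(publisher, user, action, time_label)`, the action names `"comment"` and `"repost"`, the time-label format and the UTC time zone are all hypothetical assumptions; data augmentation is not shown.

```python
from datetime import datetime, timezone

def to_timestamp(label, fmt="%Y-%m-%d %H:%M:%S"):
    """Standardise a time label into a Unix timestamp in seconds (UTC assumed)."""
    return int(datetime.strptime(label, fmt).replace(tzinfo=timezone.utc).timestamp())

def build_network(records):
    """Keep only comment/repost interactions as timestamped edges publisher -> user."""
    return [(src, dst, to_timestamp(t)) for src, dst, action, t in records
            if action in ("comment", "repost")]

def remove_isolated(nodes, edges):
    """Drop nodes that take part in no interaction."""
    active = {u for u, _, _ in edges} | {v for _, v, _ in edges}
    return [n for n in nodes if n in active]

def segment(edges, window):
    """Assign each edge to a time step of `window` seconds, counted from the first interaction."""
    t0 = min(ts for _, _, ts in edges)
    return [(u, v, (ts - t0) // window) for u, v, ts in edges]
```

With `window=604800` (one week in seconds), an interaction seven days after the first one falls into time step 1.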

3.2. Feature Engineering

3.2.1. Rényi Entropy Feature

3.2.2. Node2Vec Embedding Features

- Graph Conversion: Transform the graph constructed with DGL into NetworkX format and convert it into an undirected graph to satisfy the requirements of Node2Vec.

- Embedding Computation: Perform random walk sampling on the network using the Node2Vec model, and generate continuous vector representations for nodes via the Skip-Gram model, with the embedding dimension set to 64.

- Normalization: To eliminate scale discrepancies, normalize the generated node embeddings using MinMaxScaler and convert them into Tensor format to ensure consistency with other features.
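The random-walk and normalisation steps above can be sketched in plain Python as follows. The walk follows the second-order node2vec scheme of Grover and Leskovec: from the current node, returning to the previous node has weight 1/p, moving to a neighbour of the previous node has weight 1, and moving further away has weight 1/q. The values of p, q and the walk length are assumptions, since the text does not state them; the DGL-to-NetworkX conversion and the Skip-Gram training that turns walks into 64-dimensional embeddings are omitted. `min_max` mirrors the default column-wise [0, 1] behaviour of a MinMaxScaler, mapping constant columns to 0.

```python
import random

def node2vec_walk(adj, start, length, p, q, rng):
    """One biased node2vec walk on an undirected graph (adj: node -> list of neighbours)."""
    walk = [start]
    while len(walk) < length:
        nbrs = adj[walk[-1]]
        if not nbrs:
            break
        if len(walk) == 1:
            walk.append(rng.choice(nbrs))  # first step is uniform
            continue
        prev = walk[-2]
        # unnormalised weights: 1/p to return, 1 to stay near prev, 1/q to move outward
        weights = [1.0 / p if x == prev else 1.0 if x in adj[prev] else 1.0 / q
                   for x in nbrs]
        walk.append(rng.choices(nbrs, weights=weights, k=1)[0])
    return walk

def min_max(rows):
    """Column-wise min-max scaling of a list of feature rows to [0, 1]."""
    cols = list(zip(*rows))
    lo = [min(c) for c in cols]
    hi = [max(c) for c in cols]
    return [[(x - l) / (h - l) if h > l else 0.0 for x, l, h in zip(r, lo, hi)]
            for r in rows]
```

Setting q < 1 biases walks outward (closer to depth-first exploration), while q > 1 keeps them near the start node.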

3.2.3. Feature Fusion

3.3. Model Construction and Training

- Model Architecture: The proposed model comprises two principal components:

  - (1) Feature Input Layer: This layer takes the extracted node features (both entropy-based and embedding features) as input.

  - (2) Graph Neural Network Layer: Using GraphSAGE, this layer aggregates features from neighboring nodes to update node representations, generating node embeddings for each time window.

- Predictor and Loss Function: A multilayer perceptron (MLP) composed of several fully connected layers serves as the link predictor. It captures nonlinear relationships among node features and outputs a score that quantifies the likelihood of a link forming. The predicted link probabilities for node pairs are optimized with the binary cross-entropy loss: given a node pair (u, v), the model predicts whether the two nodes will establish a connection within a future time window. The formulation is as follows:

- 3.

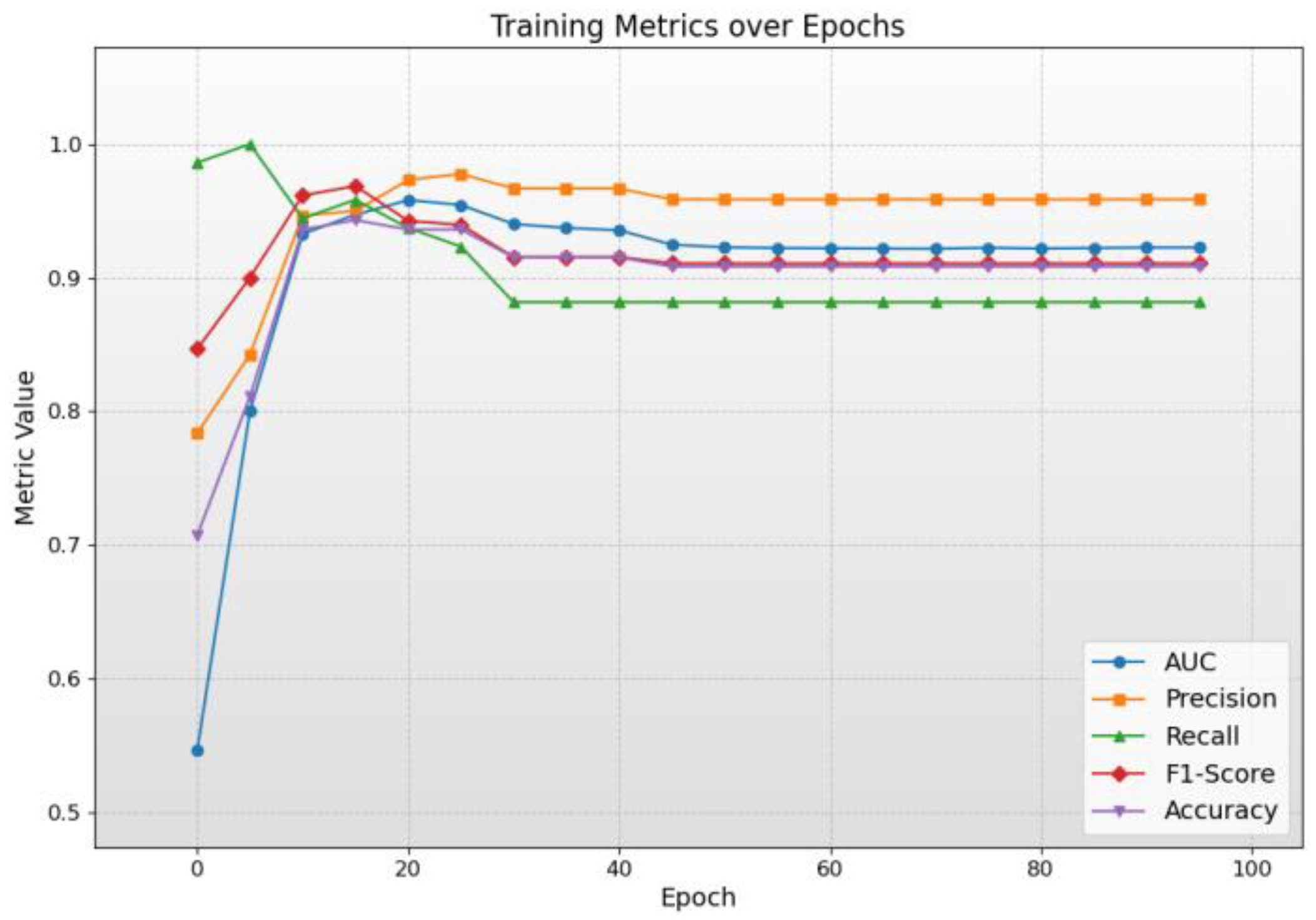

- Training Process: The training phase employs the Adam optimizer—recognized for its adaptive learning rate and rapid convergence—with hyperparameters refined via cross-validation. The model is trained over 100 epochs to minimize the loss function, thereby achieving optimal performance.
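A minimal NumPy sketch of the predictor and loss is shown below. It assumes that a node pair is represented by concatenating the two GraphSAGE embeddings [h_u ∥ h_v], that the MLP has a single ReLU hidden layer with a sigmoid output, and it uses the standard binary cross-entropy L = −(1/N) Σ [y log ŷ + (1 − y) log(1 − ŷ)]. The layer sizes and the pair representation are hypothetical, and the Adam training loop is omitted.

```python
import numpy as np

def mlp_score(h_u, h_v, W1, b1, w2, b2):
    """MLP link predictor: probability that a link forms between u and v."""
    x = np.concatenate([h_u, h_v])          # assumed pair representation
    hidden = np.maximum(W1 @ x + b1, 0.0)   # ReLU hidden layer
    logit = w2 @ hidden + b2
    return 1.0 / (1.0 + np.exp(-logit))     # sigmoid

def bce_loss(y_true, y_prob, eps=1e-12):
    """Mean binary cross-entropy over labelled node pairs (1 = link forms)."""
    y_true = np.asarray(y_true, dtype=float)
    y_prob = np.clip(np.asarray(y_prob, dtype=float), eps, 1 - eps)  # avoid log(0)
    return float(-np.mean(y_true * np.log(y_prob) + (1 - y_true) * np.log(1 - y_prob)))
```

Positive pairs are future links and negative pairs are sampled non-links; their ratio is the quantity studied in Section 4.2.3.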

3.4. Evaluation Metrics
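Assuming the standard definitions of the metrics reported in the results tables (AUC, precision, recall, F1-score and accuracy), they can be computed as in the plain-Python sketch below. AUC is computed through its rank interpretation: the probability that a randomly chosen positive pair scores higher than a randomly chosen negative pair, with ties counted as one half.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall) if precision + recall else 0.0

def binary_metrics(y_true, y_pred):
    """Precision, recall, F1 and accuracy for binary link labels (1 = link forms)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"precision": precision, "recall": recall,
            "f1": f1_score(precision, recall), "accuracy": (tp + tn) / len(pairs)}

def roc_auc(y_true, scores):
    """AUC as P(score of a random positive > score of a random negative), ties = 1/2."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if a > b else 0.5 if a == b else 0.0 for a in pos for b in neg)
    return wins / (len(pos) * len(neg))
```

As a consistency check, `f1_score(0.9259, 0.9144)` is about 0.92011, which rounds to the F1-score of 0.9201 reported for DTWRE.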

3.5. Experimental Parameter Setting

4. Results

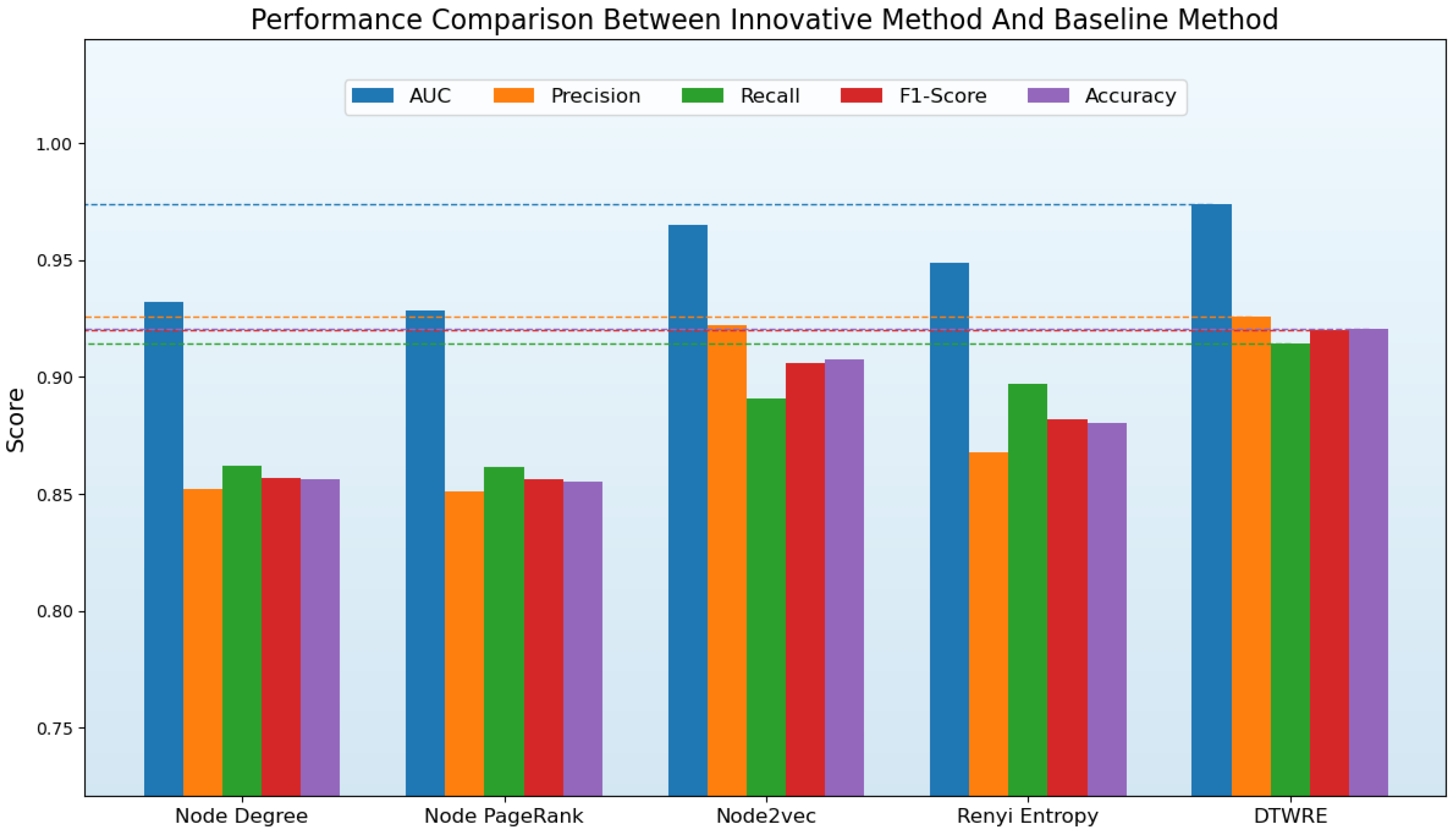

4.1. Model Performance Comparison

4.2. Model Parameter Analysis

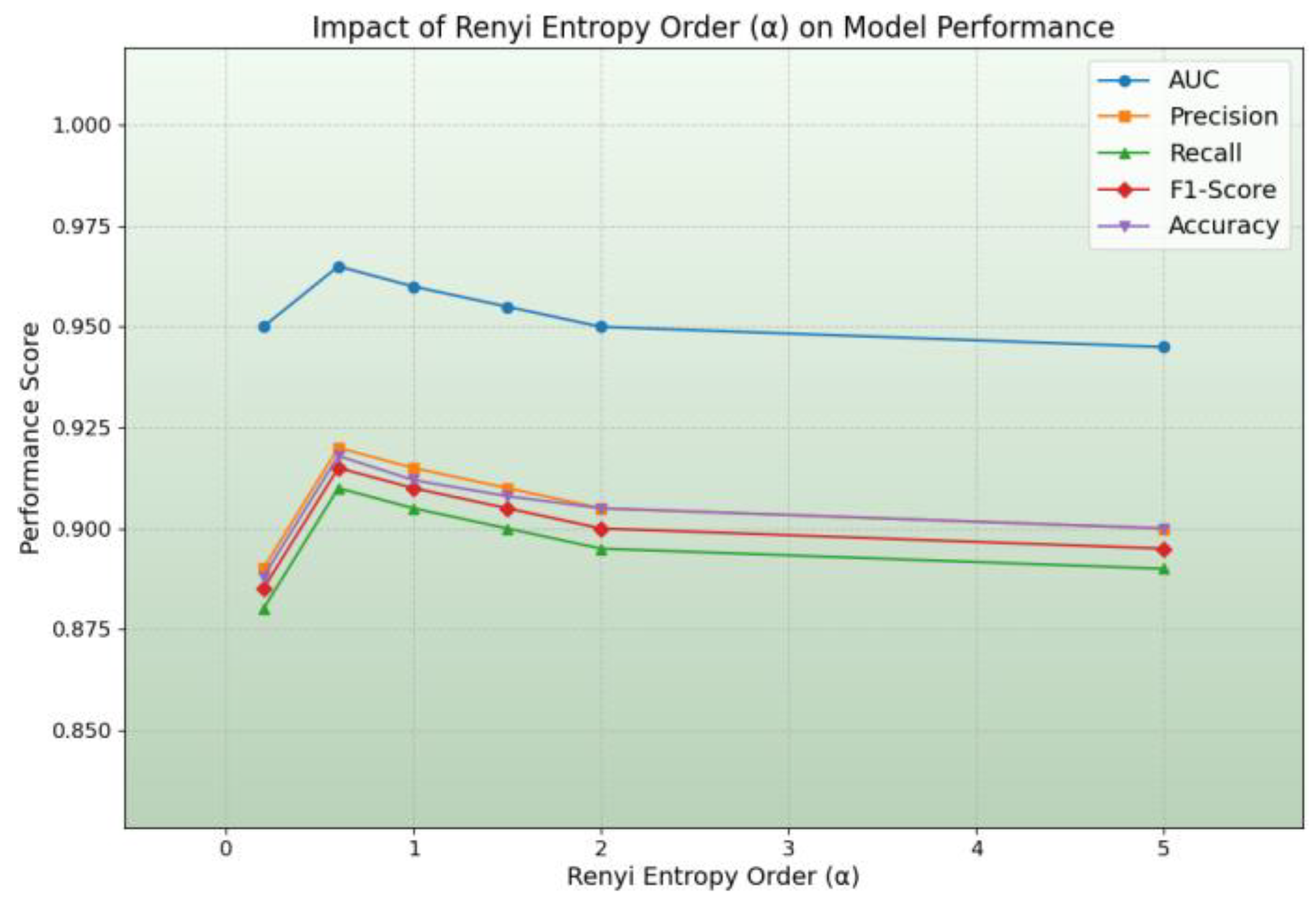

4.2.1. Impact of DTWRE Order α

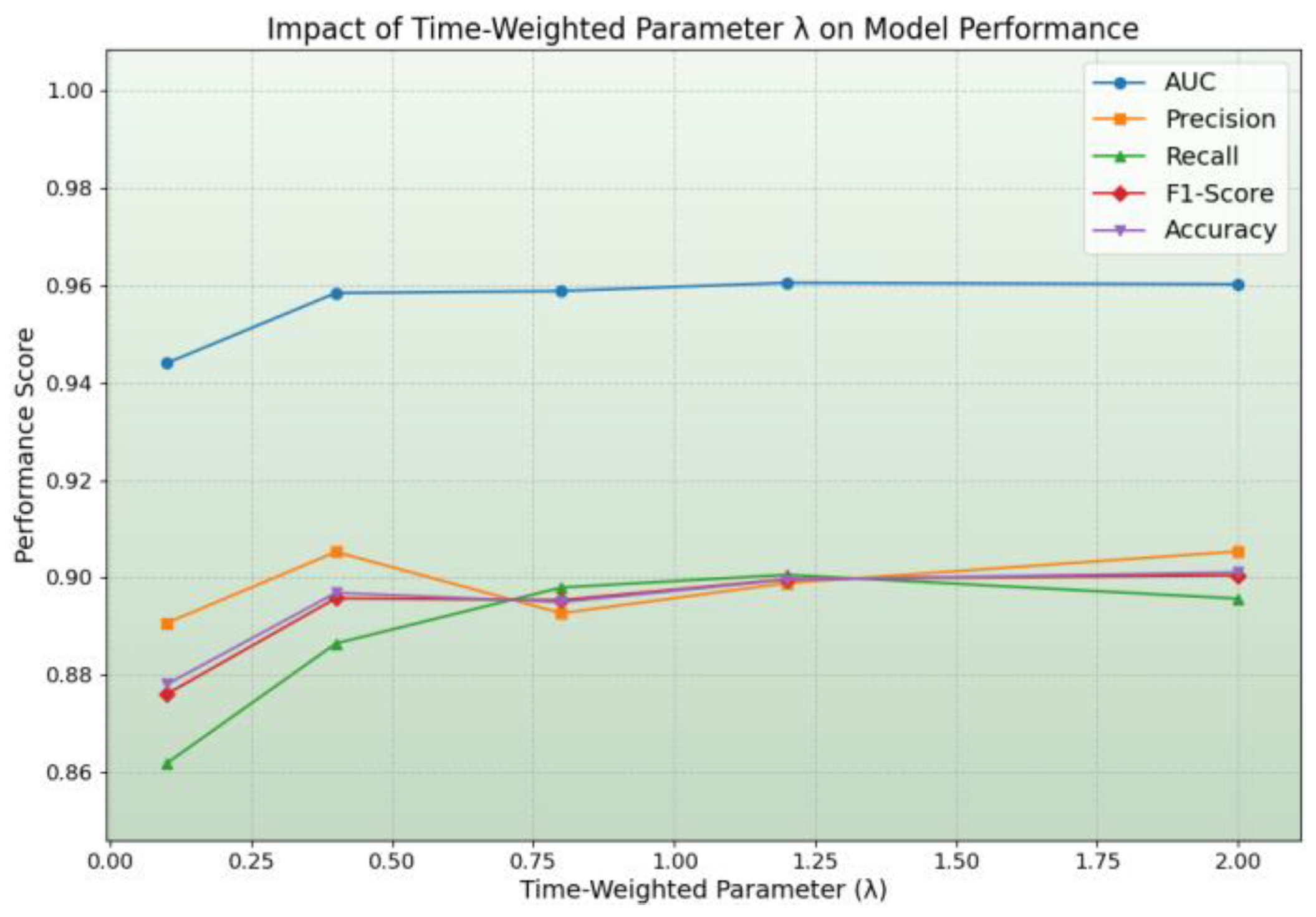

4.2.2. Impact of the Temporal Weighting Parameter λ

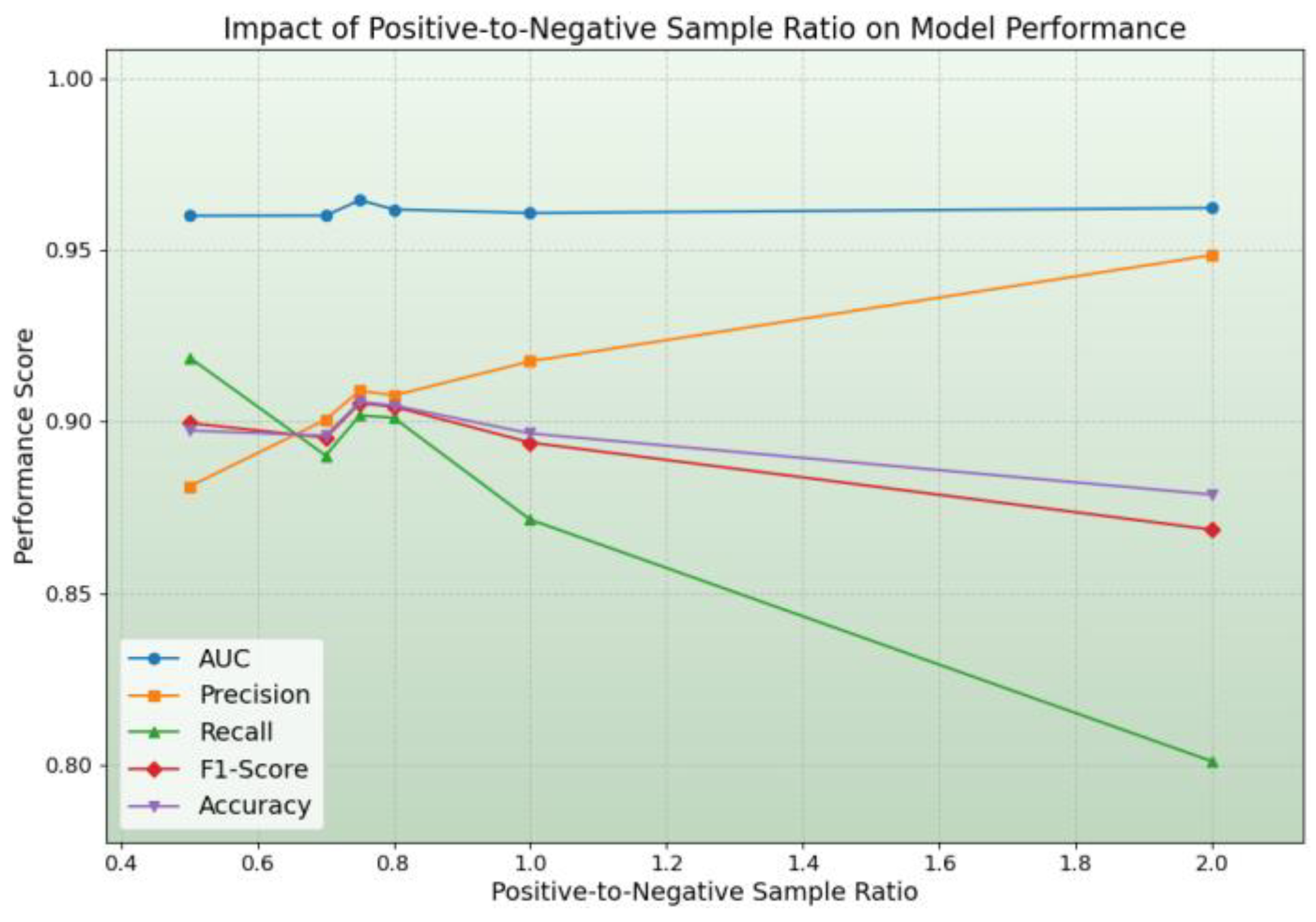

4.2.3. Impact of the Positive-to-Negative Sample Ratio

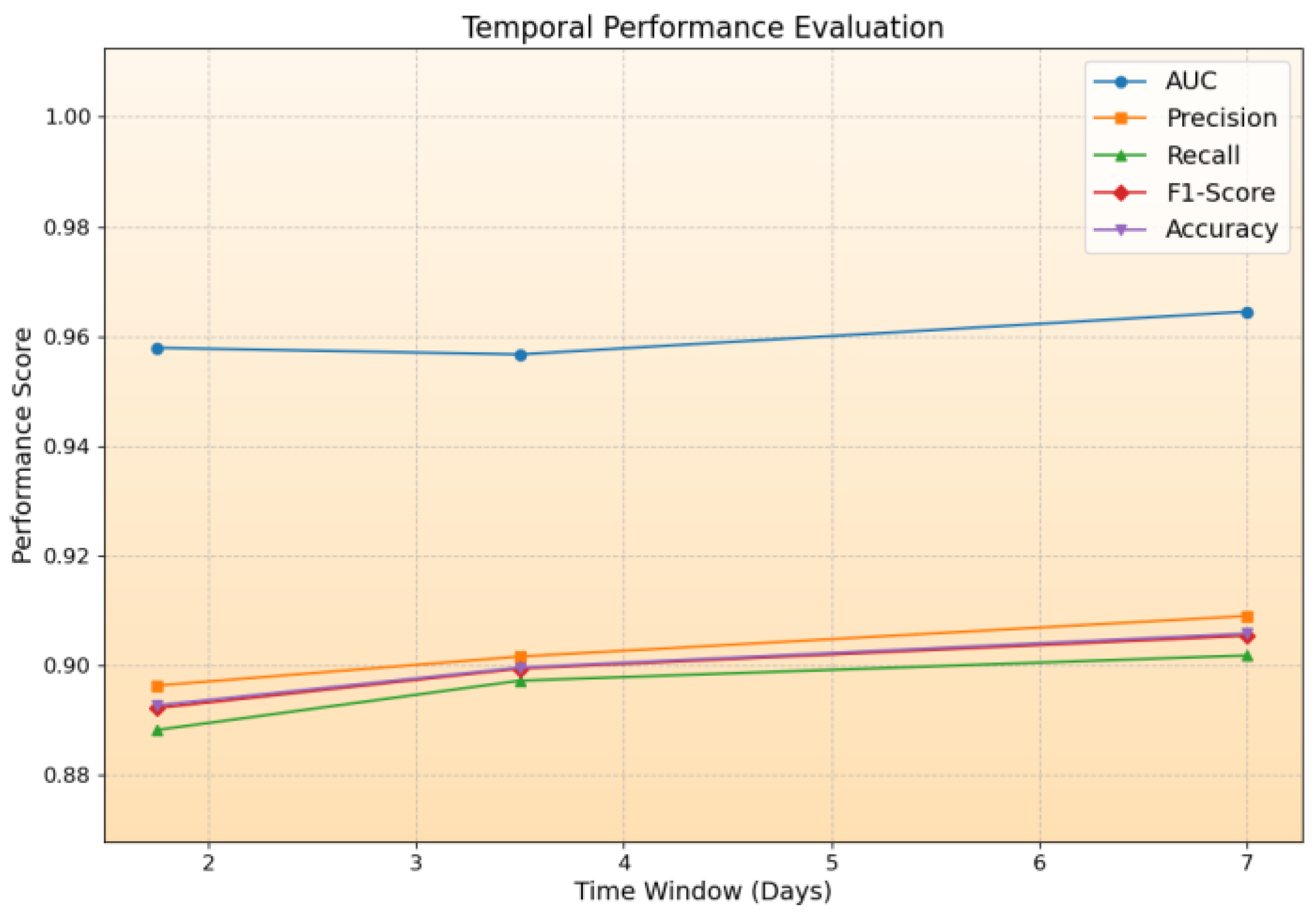

4.2.4. Temporal Performance Evaluation

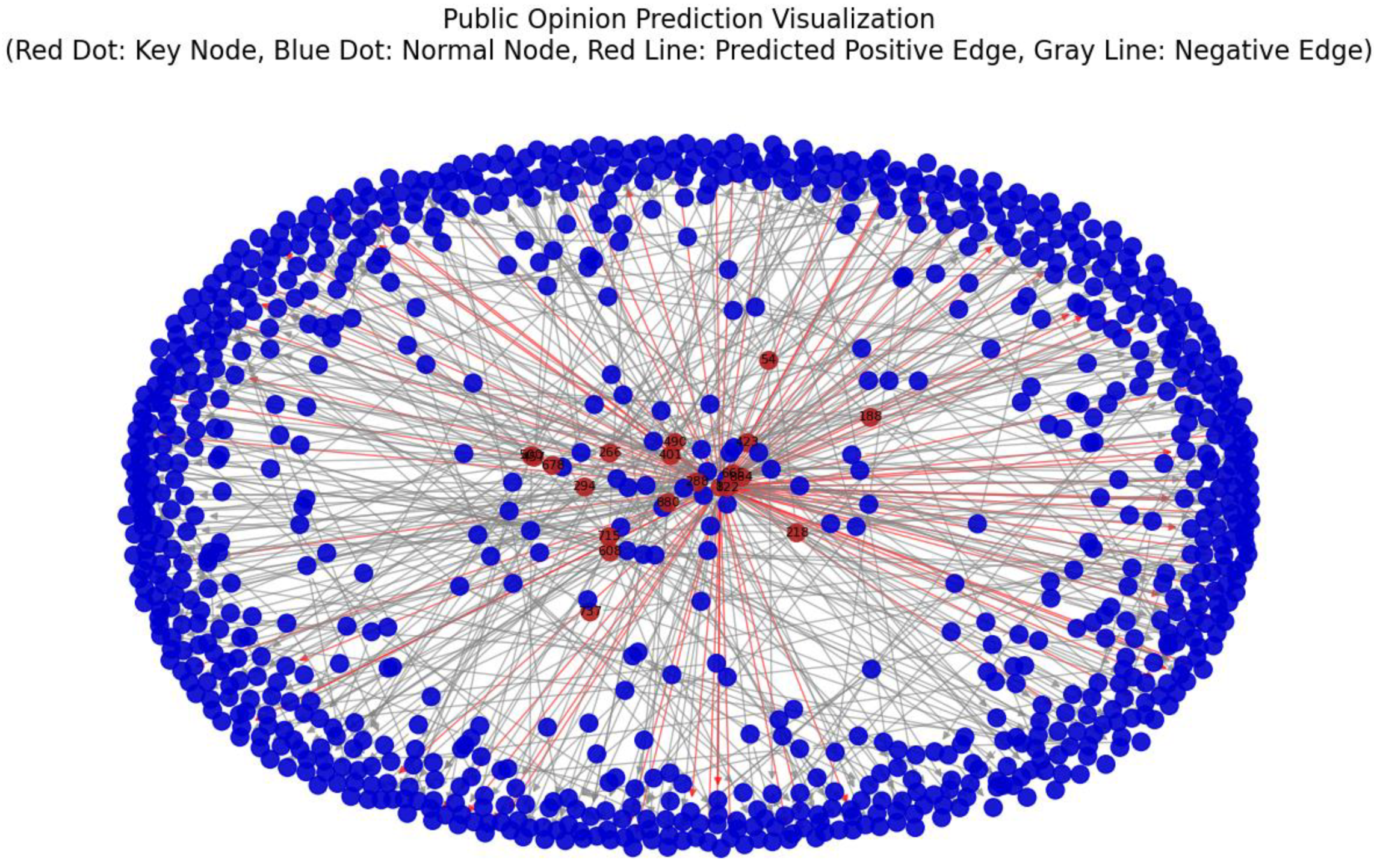

4.3. Prediction of Real Social Network Public Opinion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| DTWRE | Dynamic Time-Weighted Rényi Entropy |

| LNE | Local Node Entropy |


| Baseline Methods | AUC | Precision | Recall | F1-Score | Accuracy |
|---|---|---|---|---|---|
| ① | 0.9323 | 0.8522 | 0.8618 | 0.8570 | 0.8562 |
| ② | 0.9285 | 0.8509 | 0.8613 | 0.8561 | 0.8552 |
| ③ | 0.9649 | 0.9221 | 0.8909 | 0.9062 | 0.9078 |
| ④ | 0.9487 | 0.8677 | 0.8970 | 0.8821 | 0.8802 |
| DTWRE | 0.9742 | 0.9259 | 0.9144 | 0.9201 | 0.9207 |

| Time step length (s) | AUC | Precision | Recall | F1-Score | Accuracy |
|---|---|---|---|---|---|
| 604800 | 0.9680 | 0.9159 | 0.9044 | 0.9101 | 0.9107 |
| 302400 | 0.9567 | 0.9016 | 0.8972 | 0.8994 | 0.8996 |
| 151200 | 0.9579 | 0.8963 | 0.8882 | 0.8922 | 0.8927 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).