Submitted:

25 February 2025

Posted:

25 February 2025

You are already at the latest version

Abstract

Keywords:

1. Introduction

2. Literature Review

- Few-shots prompting – which involves providing some examples of the desired input-output pairs to the LLM, so that it can adapt and provide the response based on the pattern observed in the examples provided [24];

- Contextual prompting – which requires providing a context or background information (a few lines describing the area, domain, why it is required to generate the output) to the LLM so that it can base the response on the context provided [25];

- Dynamic / interactive prompting – which refers to the practice of modifying prompts in real time based on previous responses, so that performance improves [26];

- Zero-shot prompting – which requires asking the model to perform the task without providing any examples and relying solely on the instructions within the prompt [27];

- Instruction-Based Prompting – which refers to crafting clear, direct instructions in the prompt to specify the task that needs to be performed; it is usually used in combination with few-shot or zero-shot techniques [28];

- Chain-of-Thought Prompting – which requires the user to encourage the model to think through its reasoning process step by step and to explain its thought process, rather than just providing the final output, which might lead to more accurate and logical outputs [29].

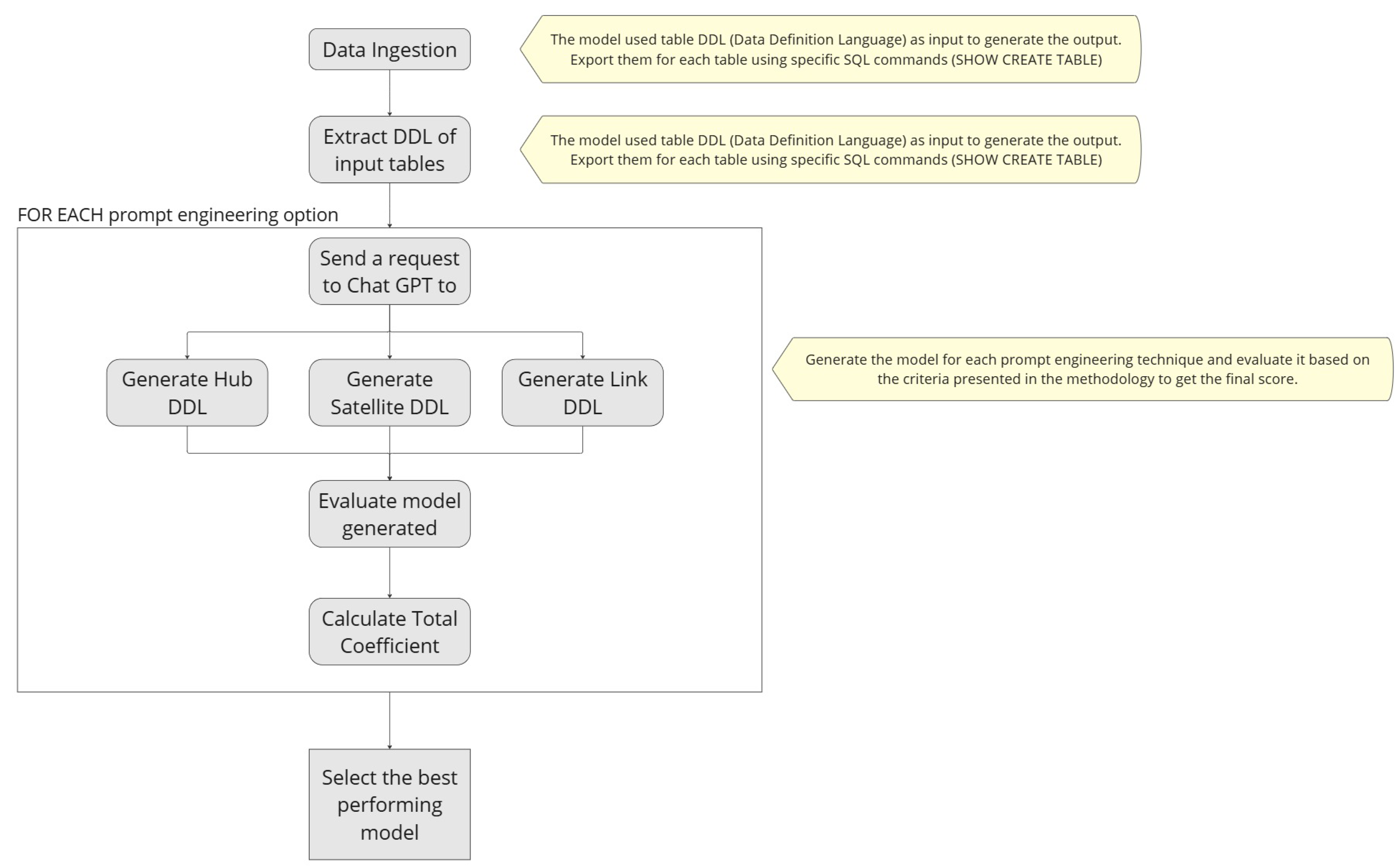

3. Methodology

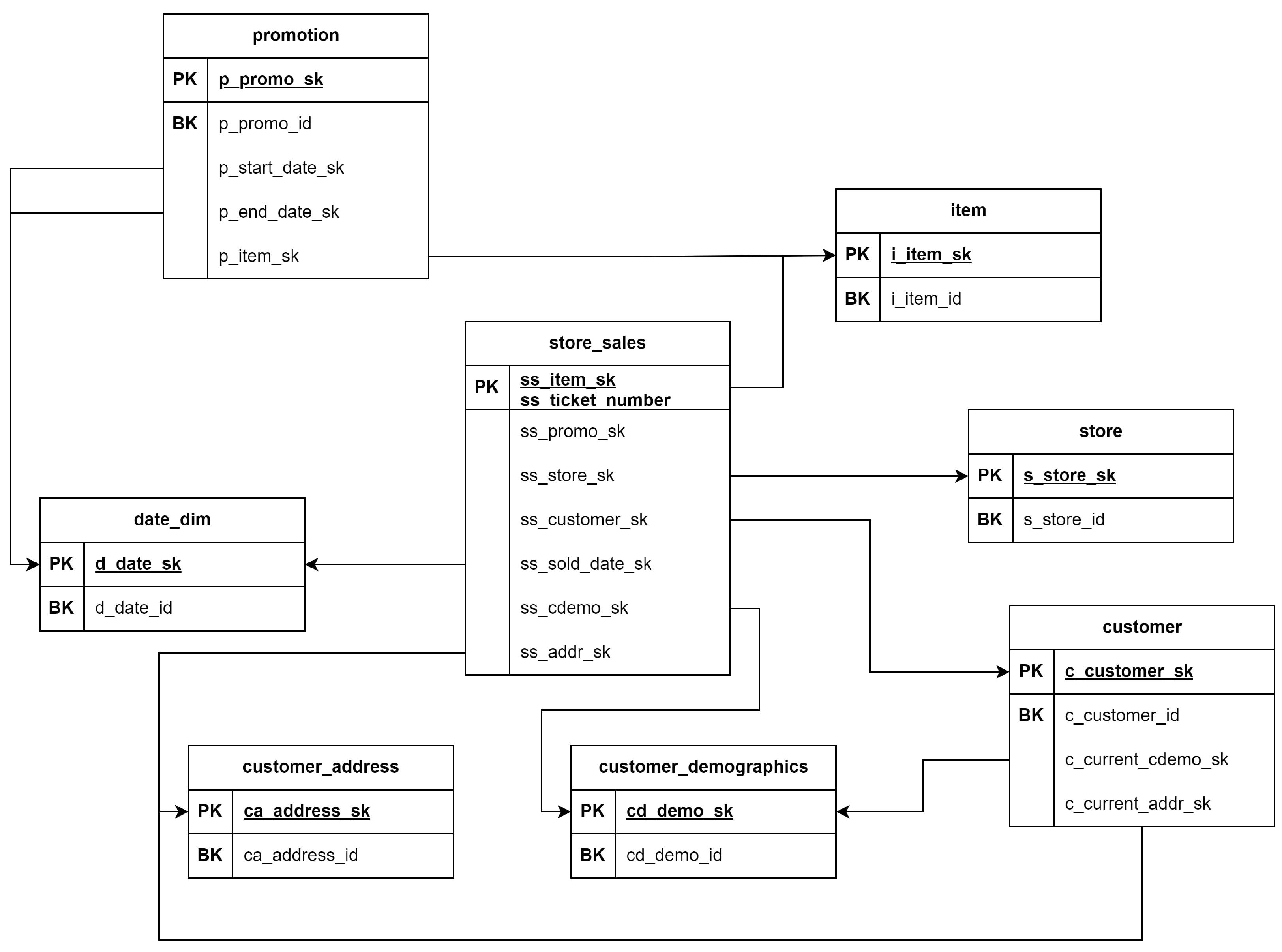

3.1. Data Set Preparation

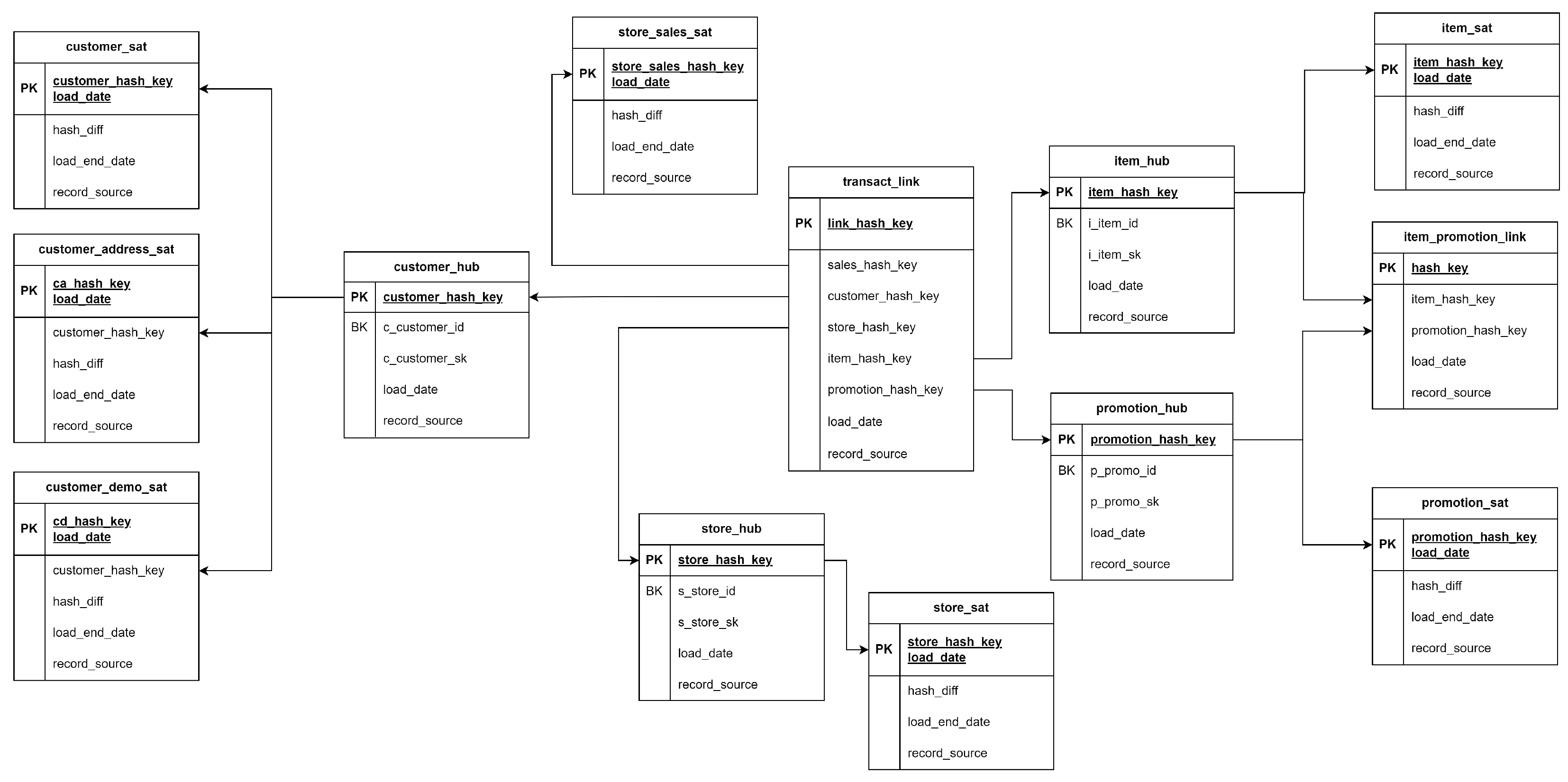

3.2. Data Vault Model Implementation

- 4 hub tables for each core business entity - customer, store, promotion, item

- 7 satellite tables - 3 satellites for customer entity (including demographics and address sources), one table for stores, one for promotion, one for item and one for sales data attributes.

- 2 link tables - one between promotion and item and one between all entities which are directly connected with the sales satellite as well.

3.3. Prompt Engineering Techniques

-

Option 1 - No Prompt EngineeringCreate a Data Vault 2.0 model by using the below DDL as input.

-

Option 2 - Contextual PromptingYou are an expert in Data Vault modeling. I have a TPC-DS data set represented by DDL scripts. Your task is to generate a Data Vault schema that accurately represents this data set. You need to use Data Vault 2.0 principles to generate the output.

-

Option 3 - Few-shot promptingCreate a Data Vault model by using the below DDL as input. Provide the new model DDLs into a single script.Please use this example as reference where there are 2 input tables - bronze_product & bronze_product_details.The output of the provided example will have 1 hub table (hub_product) and 2 satellite tables (sat_product and sat_product_details).The output of the provided example will not return 2 hubs (hub_product and hub_product_details) because the bronze_product_details is related to the product (which is the core business entity) using a foreign key from the bronze_product table.Please provide the DDL for the input model.

-

Option 4 - Dynamic promptingPrompt 1:You are a Data Vault modeling expert. You will need to step-by-step create a Data Vault 2.0 model by using the bronze tables DDL as input.The output should be a new set of DDL.This is the first step of the iterative process. Please provide the DDL of hub tables, which are the core business entities.Be careful to only return the business entities, some tables might not represent business entities, they can be satellites or link or reference tables.Prompt 2:That is great, thank you for your response!Please return the DDL for the satellite tables, which contain all the attributes from the bronze tables (one bronze table will be the source for one satellite).Be aware that there can be multiple satellites associated with the same hub entity and satellites can be associated with either hubs, links or reference tables.Prompt 3:The outputs provided earlier for hubs & satellites are amazing!Let’s go to the final step of the process – link tables. Based on the hub tables generated earlier, please return the DDL for the link tables associated.You do not need to provide links between other tables that are not the hubs or suggest other potential new hub and satellite tables in this step of the process.

-

Option 5 - Instruction-based promptingYou are a Data Vault modeling expert.Task: Generate a comprehensive Data Vault schema based on the provided TPC-DS data set DDL statements.Instructions:1. Identify Hubs:- They represent core business entities (e.g. customers, products)- Each Hub only includes the business key, the generated hash key, effective_datetime when the record is loaded and record_source.2. Identify Satellites:- For each Hub, create one or more Satellite tables to store descriptive attributes related to that business entity.- There can be more satellites for the same hub and they can come from different tables (if they are related to the same business entity).- Each Satellite should include the attributes related to the business entity/hub.3. Identify Links:- For relationships between the entities created previously, create Link tables.- Each Link should include the hash key, foreign keys linked the related tables.

3.4. Validation and Evaluation Criteria

- Model accuracy – this criterion includes comments around adherence to Data Vault principles, checking that the surrogate keys are generated appropriately, and the relationships between entities are captured correctly. This directly addresses the research question regarding ChatGPT’s ability to create efficient and accurate models.

- Efficiency – this criterion includes discussions around the number of tables generated and the effort required to implement the respective solution. It provides insights into how ChatGPT can streamline the traditional labor-intensive process of Data Vault modeling.

- Manual review – this criterion provides a comparison with the manual-crafted model presented in the section above to ensure that automated outputs meet industry standards for accuracy and completeness.

- Adaptability – this criterion includes comments around model scalability to handle changes when a new source is integrated into existing models, a critical requirement for scalable and flexible data warehousing solutions.

- Stability – this criterion is being used to validate if the output provided by the LLM model is consistent and repeatable through multiple iterations, ensuring its reliability for real-world data engineering tasks.

4. Results and Discussions

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Golightly, L.; Chang, V.; Xu, Q. A.; Gao, X.; Liu, B. S. C. (2022). Adoption of cloud computing as innovation in the organization. In International Journal of Engineering Business Management, 14. [CrossRef]

- Vines, A.; Tanasescu, L. (2023). An overview of ETL cloud services: An empirical study based on user’s experience. In Proceedings of the International Conference on Business Excellence, 17(1), 2085–2098. [CrossRef]

- Clissa, L.; Lassnig, M.; Rinaldi, L. (2023). How Big is Big Data? A comprehensive survey of data production, storage, and streaming in science and industry, In Frontiers in Big Data, 6:1271639.

- Linstedt, D. (2016). Building a Scalable Data Warehouse with Data Vault 2.0, Morgan Kaufmann, 1st ed., Boston, MA, USA.

- Vines, A.; Tănăsescu, L. (2024). Data Vault Modeling: Insights from Industry Interviews, In Proceedings of the International Conference on Business Excellence, vol. 18, no. 1, pp. 3597–3605, 2024.

- El-Sappagh, S.; Hendawi, A.; El-Bastawissy, A. (2011). A proposed model for data warehouse ETL processes, Journal of King Saud University - Computer and Information Sciences, 23, 91–104.

- Fan, L.; Lee, C.-H.; Su, H.; Feng, S.; Jiang, Z.; Sun, Z. (2024). A New Era in Human Factors Engineering: A Survey of the Applications and Prospects of Large Multimodal Models, In arXiv. https://arxiv.org/abs/2405.13426.

- Ege, D. N.; Øvrebø; H. H.; Stubberud, V.; Berg, M. F.; Elverum, C.; Steinert, M.; Vestad, H. (2024). ChatGPT as an inventor: Eliciting the strengths and weaknesses of current large language models against humans in engineering design, In arXiv. https://arxiv.org/abs/2404.18479.

- Choi, S.; Gazeley, W. (2024). When Life Gives You LLMs, Make LLM-ADE: Large Language Models with Adaptive Data Engineering, In arXiv preprint, arXiv:2404.13028. https://arxiv.org/abs/2404.13028.

- Mantri, A. (2024). Intelligent Automation of ETL Processes for LLM Deployment: A Comparative Study of Dataverse and TPOT, European Journal of Advances in Engineering and Technology, 11(4), 154–158.

- .

- Yessad, L.; Labiod, A. (2016). Comparative study of data warehouses modeling approaches: In-mon, Kimball, and Data Vault, In 2016 International Conference on System Reliability and Science (ICSRS), Paris, France, pp. 95–99.

- Naamane, Z.; Jovanovic, V. (2016). Effectiveness of Data Vault compared to Dimensional Data Marts on Overall Performance of a Data Warehouse System, In International Journal of Computer Science Issues, 13(1).

- Vines, A. (2024). Performance Evaluation of Data Vault and Dimensional Modeling: Insights from TPC-DS data set Analysis, In Proceedings of 23rd International Conference on Informatics in Economy (IE 2024).

- Helskyaho, H.; Ruotsalainen, L.; Männistö, T. (2024). Defining Data Model Quality Metrics for Data Vault 2.0 Model Evaluation, Inventions, 9, 21.

- Inmon, W. H.; Zachman, J. A.; Geiger, J. G. (2008). Data stores, data warehousing, and the Zachman framework: Managing enterprise knowledge, McGraw-Hill.

- Kimball, R.; Ross, M.; Thornthwaite, W.; Mundy, J.; Becker, B. (2008). Data Warehouse Lifecycle Toolkit: Practical Techniques for Building Data Warehouse and Business Intelligence Systems (2nd ed.), New York: Wiley.

- Smith, J.; Elshnoudy, I. A. (2003). A Comparative Analysis of Data Warehouse Design Methodologies for Enterprise Big Data and Analytics, Emerging Trends in Machine Intelligence and Big Data, 16–29.

- Giebler, C.; Gröger, C.; Hoos, E.; Schwarz, H.; Mitschang, B. (2019). Modeling Data Lakes with Data Vault: Practical Experiences, Assessment, and Lessons Learned, In Proceedings of the 38th Conference on Conceptual Modeling (ER 2019), vol. 38, no. 1, pp. 1–10, 2019.

- Vines, A.; Samoila, A. (2023). An Overview of Data Vault Methodology and Its Benefits, Informatica Economica, 27(2), 11–20.

- Helskyaho, H. (2023). Towards Automating Database Designing, In Proceedings of the 34th Conference of Open Innovations Association (FRUCT).

- Ggaliwango, M.; Nakayiza, H. R.; Jjingo, D.; Nakatumba-Nabende, J. (2024). Prompt Engineering in Large Language Models.

- Lo, L. S. (2023). The CLEAR Path: A Framework for Enhancing Information Literacy Through Prompt Engineering. The Journal of Academic Librarianship, 49(4), 102720.

- Ahmed, T.; Pai, K.; Devanbu, P.; Barr, E. (2023). Improving Few-Shot Prompts with Relevant Static Analysis Products, In Proceedings of the 2023 International Conference on Software Engineering (ICSE), 2023.

- Khattak, M. U.; Rasheed, H., Maaz, M.; Khan, S.; Khan, F. S. (2023). MaPLe: Multi-modal Prompt Learning, In Proceedings of the 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 19113–19122. IEEE.

- Wang, B.; Deng, X.; Sun, H. (2022). Iteratively Prompt Pre-trained Language Models for Chain of Thought, arXiv preprint, arXiv:2203.08383. https://arxiv.org/abs/2203.08383.

- Kojima, T.; Gu, S. S.; Reid, M.; Matsuo, Y.; Iwasawa, Y. (2023). Large Language Models are Zero-Shot Reasoners, In arXiv preprint, arXiv:2205.11916.

- Alhindi, T.; Chakrabarty, T.; Musi, E.; Muresan, S. (2023). Multitask Instruction-based Prompting for Fallacy Recognition, arXiv preprint, arXiv:2301.09992.

- Diao, S.; Wang, P.; Lin, Y.; Pan, R.; Liu, X.; Zhang, T. (2024). Active Prompting with Chain-of-Thought for Large Language Models, In arXiv preprint, arXiv:2302.12246.

- Hegde, C. (2022). Anomaly Detection in Time Series Data using Data-Centric AI, In Proceedings of the 2022 IEEE International Conference on Electronics, Computing and Communication Technologies (CONECCT), pp. 1–6.

- Chai, C.; Tang, N.; Fan, J.; Luo, Y. (2023). Demystifying Artificial Intelligence for Data Preparation, In Proceedings of the Companion of the 2023 International Conference on Management of Data (SIGMOD ’23), pp. 13–20.

- Zhao, H.; Ye, X. (2013). A Practice of TPC-DS Multidimensional Implementation on NoSQL Database Systems, In Proceedings of the 2013 ACM SIGMOD International Conference on Management of Data, 93–108.

- Al-Kateb, M.; Crolotte, A.; Ghazal, A.; Rose, L. (2013). Adding a Temporal Dimension to the TPC-H Benchmark, In: Nambiar, R., Poess, M. (eds) Selected Topics in Performance Evaluation and Benchmarking. TPCTC 2012. Lecture Notes in Computer Science, vol 7755, Springer, Berlin, Heidelberg.

- Chen, G.; Johnson, T.; Cilimdzic, M. (2022). Quantifying Cloud Data Analytic Platform Scalability with Extended TPC-DS Benchmark. In: Nambiar, R., Poess, M. (eds) Performance Evaluation and Benchmarking. TPCTC 2021. Lecture Notes in Computer Science(), vol 13169. Springer, Cham.

- Databricks Documentation (n.d.). https://docs.databricks.com/en/introduction/index.html.

| Option Identifier | Number of tables | Hub tables | Satellite tables | Link tables |

|---|---|---|---|---|

| 1 | 17 | hub_customer hub_customer_address hub_customer_demographics hub_store hub_promotion hub_item | sat_customer sat_customer_address sat_customer_demographics sat_store sat_promotion sat_item sat_sales | lnk_customer_address lnk_customer_demographics lnk_sales_data lnk_promotion_item |

| 2 | 16 | hub_customer hub_customer_address hub_customer_demographics hub_store hub_promotion hub_item | sat_customer sat_customer_address sat_customer_demographics sat_store sat_promotion sat_item sat_sales | lnk_customer_address lnk_customer_demographics lnk_sales_data |

| 3 | 11 | hub_customer hub_store hub_promotion hub_item | sat_customer sat_customer_address sat_customer_demographics sat_store sat_promotion sat_item | lnk_sales_data |

| 4 | 13 | hub_customer hub_store hub_promotion hub_item | sat_customer sat_customer_address sat_customer_demographics sat_store sat_promotion sat_item sat_sales | lnk_sales_data lnk_promotion_item |

| 5 | 15 | hub_customer hub_customer_address hub_customer_demographics hub_store hub_promotion hub_item | sat_customer sat_customer_address sat_customer_demographics sat_store sat_promotion sat_item | lnk_customer_address lnk_customer_demographics lnk_sales_data |

| Note: The font size for this table has been adjusted for compactness. | ||||

| Option Identifier | Model accuracy | Efficiency | Manual Review | Adaptability | Stability | Total |

|---|---|---|---|---|---|---|

| 1 | 5 | 3 | 3 | 5 | 5 | 21 |

| 2 | 4 | 3 | 3 | 5 | 5 | 20 |

| 3 | 4 | 5 | 4 | 3 | 5 | 21 |

| 4 | 5 | 5 | 5 | 5 | 2 | 22 |

| 5 | 4 | 3 | 2 | 5 | 5 | 19 |

| Number of tables | Running time | Total size of data (GB) | |

|---|---|---|---|

| 6 business entities | 17 | 15m 12s | 26.205 |

| Manual model (4 business entities) | 13 | 10m 17s | 18.075 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).