Submitted: 19 February 2025

Posted: 20 February 2025

Abstract

Large Language Models (LLMs) have driven rapid advances in natural language processing, automated content generation, and many other applications. However, their growing scale and computational requirements make energy consumption a significant challenge. This paper reviews power consumption in LLMs, focusing on key factors such as model size, hardware dependencies, and optimization techniques. We analyze the power demands of several state-of-the-art models, compare their efficiency across hardware architectures, and explore strategies for reducing energy consumption without compromising performance. We also discuss the environmental impact of large-scale AI computation and propose future research directions for sustainable AI development. Our findings aim to inform researchers, engineers, and policymakers about the growing energy demand of LLMs.

Keywords:

1. Introduction

2. Power Consumption in Large Language Models

2.1. Energy Usage in Training vs. Inference

2.2. Factors Influencing Power Consumption

2.3. Energy Consumption Across Different LLMs

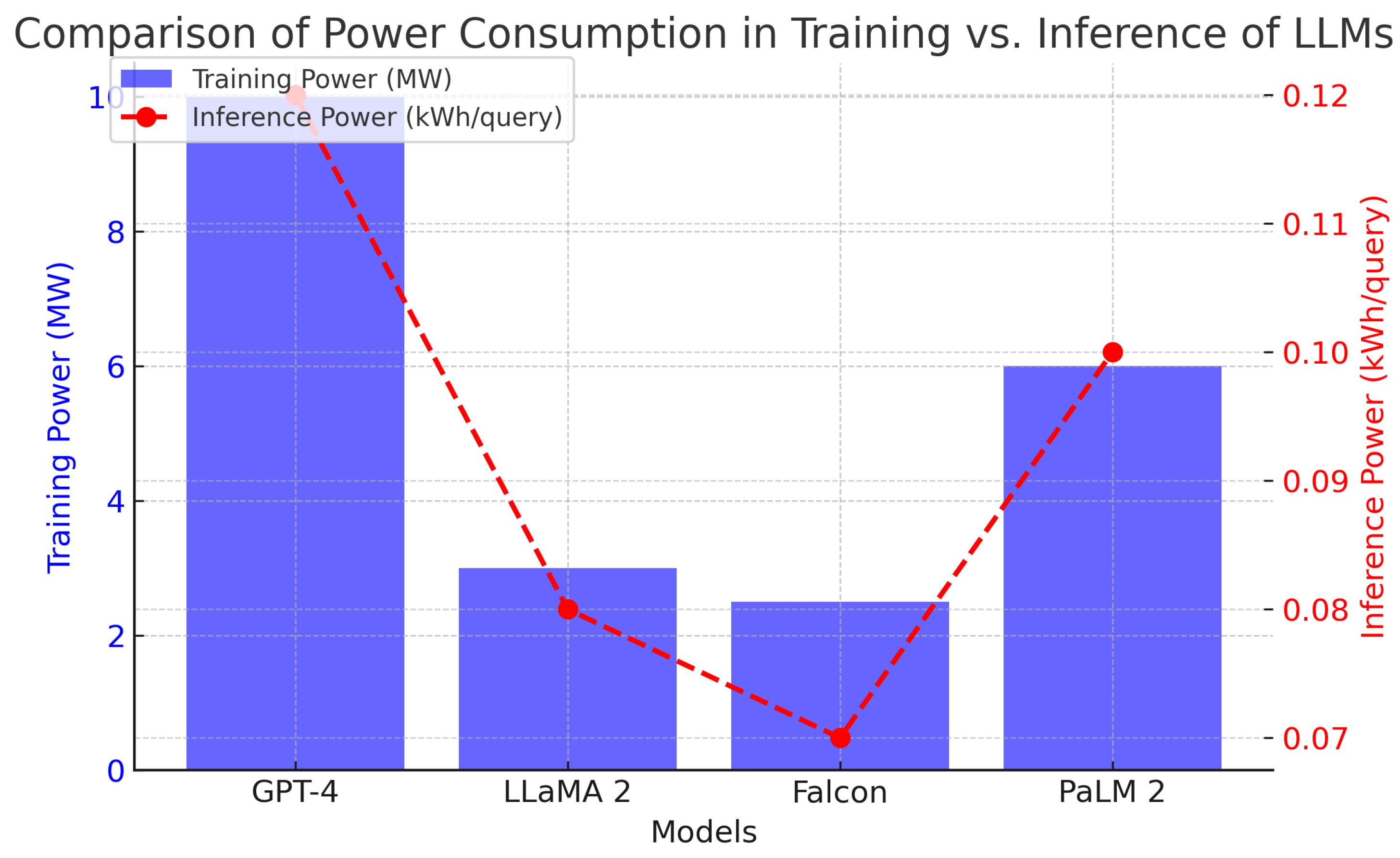

| Model | Parameters | Training Power (MW) | Inference Energy (kWh/query) |
|---|---|---|---|
| GPT-4 | 1.7T | 10 | 0.12 |
| LLaMA 2 | 65B | 3 | 0.08 |
| Falcon | 40B | 2.5 | 0.07 |
| PaLM 2 | 540B | 6 | 0.10 |
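The table pairs an average power draw during training (MW) with a per-query figure in kWh, which is a unit of energy. A minimal sketch of how the two relate to total energy follows. The 30-day training duration and the one million queries per day are hypothetical inputs chosen only for illustration; they are not taken from the paper or its sources.

```python
# Illustrative arithmetic only: the duration and query volume are assumed values.

HOURS_PER_DAY = 24


def training_energy_mwh(avg_power_mw: float, days: float) -> float:
    """Energy (MWh) = average power (MW) x elapsed time (h)."""
    return avg_power_mw * days * HOURS_PER_DAY


def daily_inference_energy_mwh(kwh_per_query: float, queries_per_day: int) -> float:
    """Daily inference energy in MWh, from a per-query energy figure in kWh."""
    return kwh_per_query * queries_per_day / 1000.0


# (training power in MW, inference energy in kWh/query), as listed in the table
MODELS = {
    "GPT-4": (10.0, 0.12),
    "LLaMA 2": (3.0, 0.08),
    "Falcon": (2.5, 0.07),
    "PaLM 2": (6.0, 0.10),
}

ASSUMED_TRAINING_DAYS = 30            # hypothetical
ASSUMED_QUERIES_PER_DAY = 1_000_000   # hypothetical

if __name__ == "__main__":
    for name, (power_mw, kwh_per_query) in MODELS.items():
        train = training_energy_mwh(power_mw, ASSUMED_TRAINING_DAYS)
        infer = daily_inference_energy_mwh(kwh_per_query, ASSUMED_QUERIES_PER_DAY)
        print(f"{name}: training {train:.0f} MWh, inference {infer:.0f} MWh/day")
```

Under these assumed inputs, the GPT-4 row works out to 7,200 MWh for training and about 120 MWh per day for inference, so cumulative inference energy would match the training energy after roughly 60 days. The crossover point depends entirely on the assumed duration and traffic.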

4. Comparative Analysis of Energy Efficiency in LLMs

4.1. Performance per Watt

4.2. Optimizations for Energy Efficiency

5. Emerging Techniques and Hardware Advancements for Energy-Efficient AI

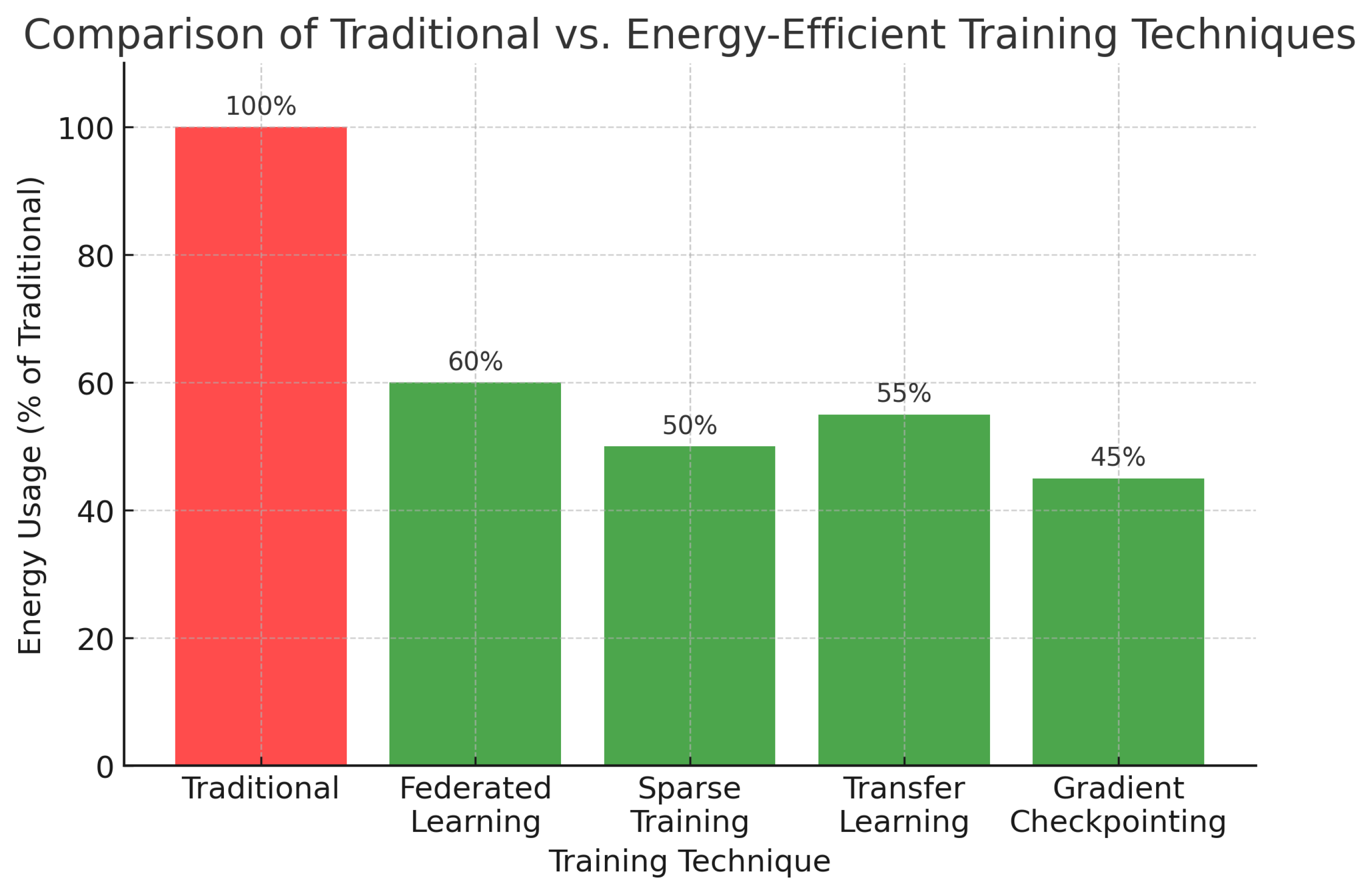

5.1. Efficient Training Strategies

5.2. Advancements in AI Hardware

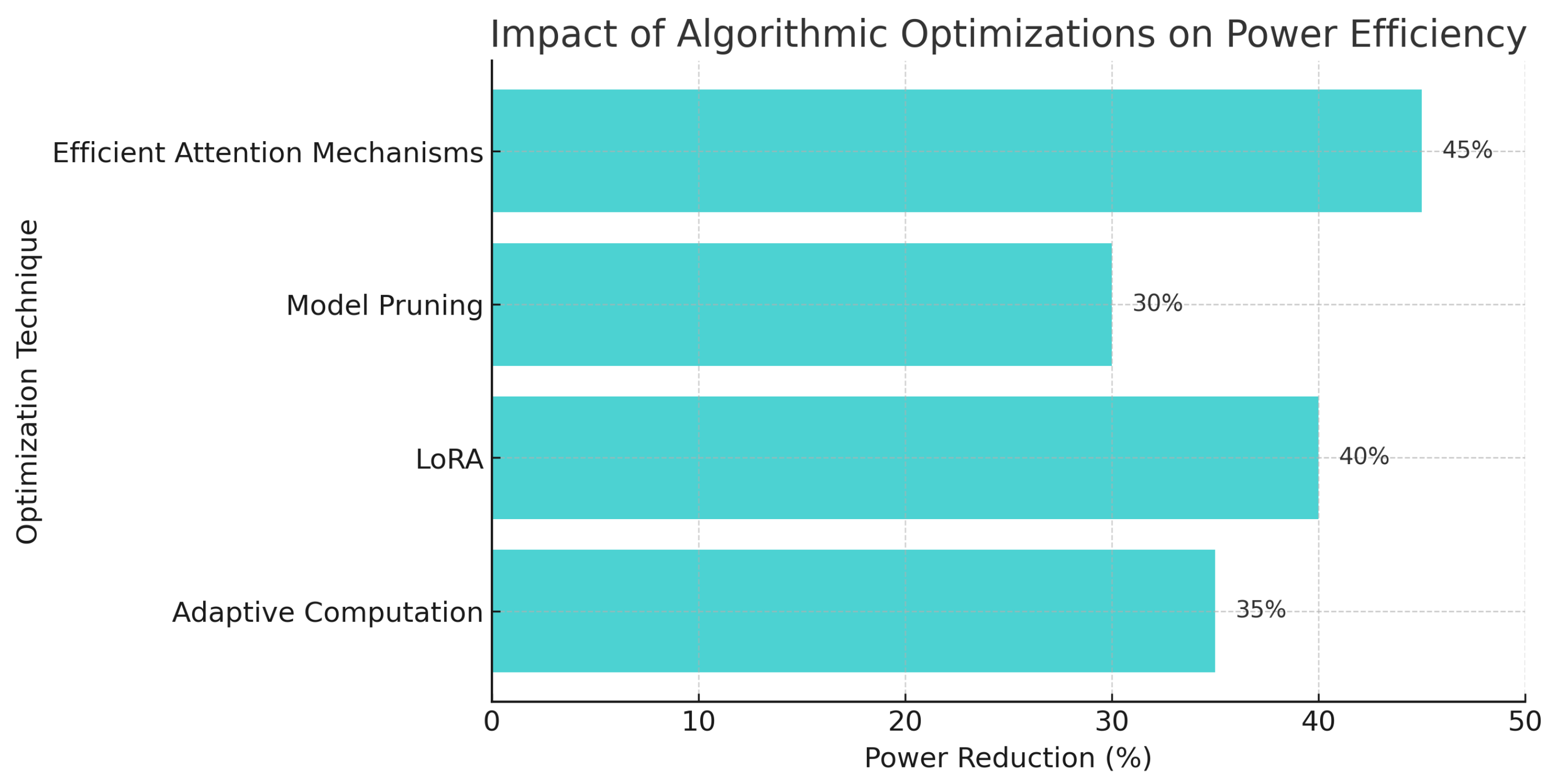

5.3. Algorithmic Enhancements for Power Reduction

6. Challenges and Open Research Problems in Sustainable AI

6.1. Lack of Standardized Energy Metrics

| Metric | Measurement Method | Limitations |
|---|---|---|
| Power Usage Effectiveness (PUE) | Data center-wide measurement | Lacks per-model granularity |
| Floating Point Operations per Second (FLOPS) per Watt | Model-level efficiency | Does not account for data movement energy |
| Carbon Footprint per Training Run | CO2 emissions estimation | Difficult to verify across platforms |
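Each metric in the table reduces to a simple ratio or product. The sketch below shows one common way to compute them. The function names and sample values are hypothetical, and the carbon estimate uses the widely used approximation of IT energy multiplied by PUE and by grid carbon intensity, which the paper itself does not specify.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def flops_per_watt(sustained_flops: float, avg_power_w: float) -> float:
    """Sustained floating point operations per second delivered per watt drawn."""
    if avg_power_w <= 0:
        raise ValueError("average power must be positive")
    return sustained_flops / avg_power_w


def training_co2e_kg(it_energy_kwh: float, pue_value: float,
                     grid_kg_co2e_per_kwh: float) -> float:
    """Estimated emissions: IT energy x PUE x grid carbon intensity."""
    return it_energy_kwh * pue_value * grid_kg_co2e_per_kwh


if __name__ == "__main__":
    # All inputs below are made-up sample values.
    facility_pue = pue(total_facility_kwh=1200.0, it_equipment_kwh=1000.0)
    efficiency = flops_per_watt(sustained_flops=1e14, avg_power_w=400.0)
    emissions = training_co2e_kg(1000.0, facility_pue, grid_kg_co2e_per_kwh=0.4)
    print(f"PUE={facility_pue:.2f}, FLOPS/W={efficiency:.2e}, CO2e={emissions:.0f} kg")
```

The limitations column shows up directly in the inputs: PUE needs facility-level meters, FLOPS per watt ignores memory and interconnect traffic, and the carbon estimate is only as reliable as the grid intensity figure supplied.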

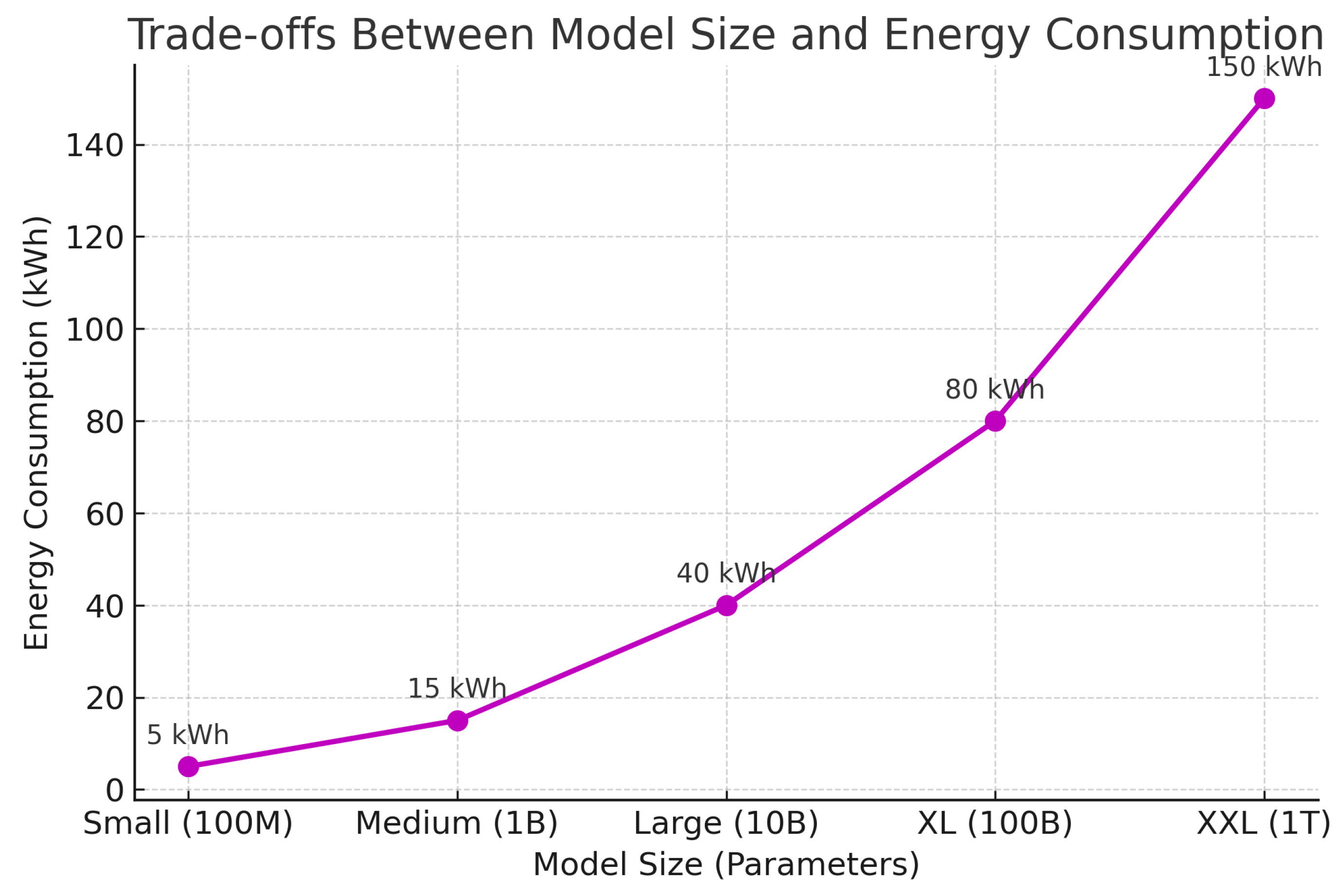

6.2. Trade-Offs Between Model Size and Energy Consumption

6.3. Sustainable AI Infrastructure

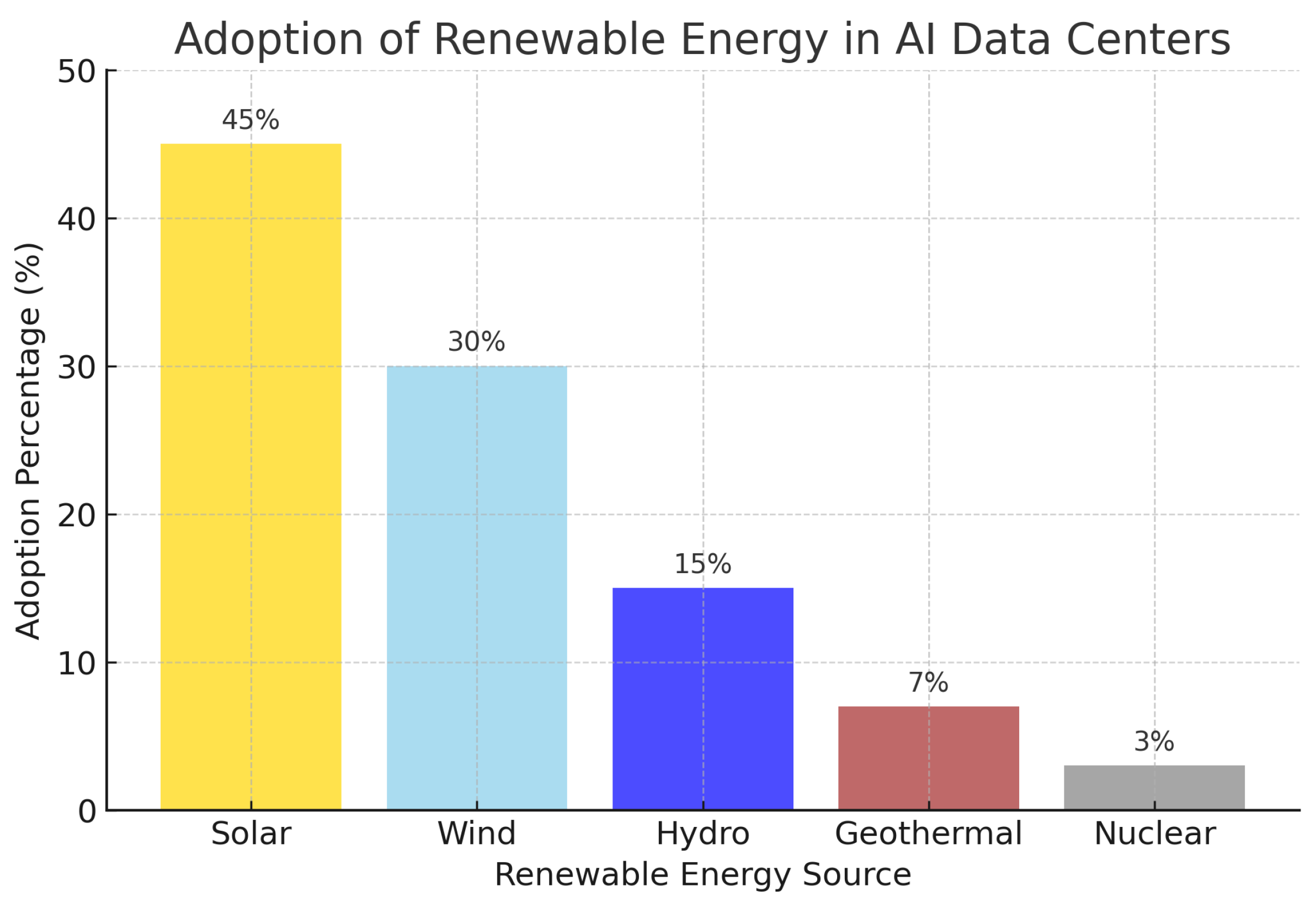

- Green Data Centers: Adoption of renewable energy-powered data centers can significantly lower the carbon footprint of AI computations.

- Energy-Aware Scheduling: Dynamically adjusting workloads to leverage periods of lower electricity demand can optimize energy use.

- Decentralized Computing: Distributing AI workloads across multiple edge devices can reduce reliance on energy-intensive central servers.
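Energy-aware scheduling, as described in the list above, can be sketched as a search for the quietest contiguous window in an hourly forecast. The forecast values and the function name `best_start_hour` are hypothetical; a real scheduler would also have to respect deadlines, preemption, and hardware availability.

```python
from typing import Sequence


def best_start_hour(forecast: Sequence[float], job_hours: int) -> int:
    """Return the start index of the contiguous window of `job_hours`
    entries with the lowest total forecast value (earliest wins on ties)."""
    if job_hours <= 0 or job_hours > len(forecast):
        raise ValueError("job_hours must be between 1 and len(forecast)")

    # Sliding-window sum: O(n) instead of recomputing each window.
    window = sum(forecast[:job_hours])
    best_sum, best_start = window, 0
    for start in range(1, len(forecast) - job_hours + 1):
        window += forecast[start + job_hours - 1] - forecast[start - 1]
        if window < best_sum:
            best_sum, best_start = window, start
    return best_start


if __name__ == "__main__":
    # Hypothetical hourly grid demand (arbitrary units) for the next 8 hours.
    demand = [50, 40, 30, 20, 25, 45, 60, 70]
    print(best_start_hour(demand, job_hours=3))
```

The same routine works with a carbon-intensity forecast in place of demand, which shifts the objective from relieving the grid to lowering emissions.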

6.4. Ethical Considerations and Policy Frameworks

| Policy | Region | Scope |
|---|---|---|
| EU AI Act | Europe | AI sustainability standards |
| US Executive Order on AI | USA | Ethical AI and power usage |
| China AI Energy Efficiency Plan | China | Green AI infrastructure development |

7. Future Directions and Breakthroughs in Sustainable AI

7.1. Next-Generation Energy-Efficient Architectures

7.2. Renewable Energy-Powered AI Data Centers

7.3. Advancements in AI Model Compression Techniques

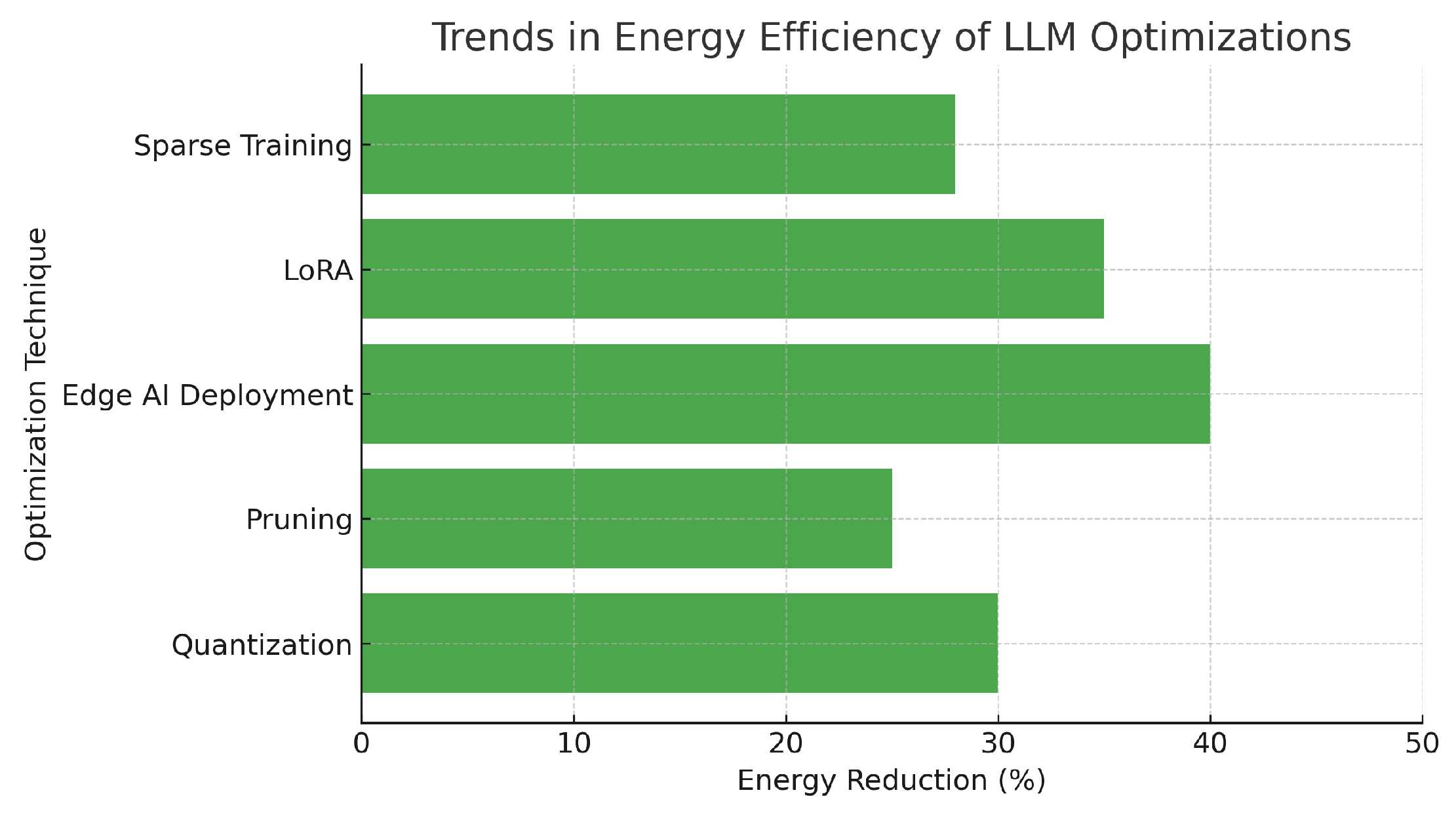

| Optimization Technique | Energy Reduction (%) | Reference |
|---|---|---|
| Quantization | 30 | [4] |
| Pruning | 25 | [1] |
| Edge AI Deployment | 40 | [7] |
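To make the first two rows concrete, the following is a minimal pure-Python sketch of symmetric int8 quantization and magnitude pruning on a flat list of weights. It illustrates the mechanisms only and does not reproduce the percentages in the table; actual energy savings depend on whether the target hardware exploits low-precision arithmetic and sparsity. All function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization to the int8 range [-127, 127].

    Returns the integer codes and the scale needed to dequantize them.
    """
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Map int8 codes back to approximate floating point weights."""
    return [c * scale for c in codes]


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by ascending absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


if __name__ == "__main__":
    w = [0.5, -1.27, 0.01, 0.9]  # made-up weights
    codes, scale = quantize_int8(w)
    restored = dequantize(codes, scale)
    worst = max(abs(a - b) for a, b in zip(w, restored))
    print("int8 codes:", codes, "max round-trip error:", worst)
    print("50% pruned:", magnitude_prune(w, 0.5))
```

Storing one byte per weight instead of four cuts memory traffic, and pruned weights can be skipped entirely when the runtime supports sparse kernels; both effects are the usual route from these techniques to lower energy use.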

8. Conclusions

- Understanding Power Consumption: LLMs demand substantial energy for training and inference, with factors such as model size and hardware efficiency playing a crucial role.

- Comparative Energy Analysis: Different LLM architectures exhibit varying power efficiency, highlighting the need for optimized hardware and algorithmic solutions.

- Energy-Efficient Techniques: Advances in model pruning, quantization, and adaptive computation offer promising pathways for reducing AI’s energy footprint.

- Renewable Energy Integration: AI data centers powered by renewable energy sources, along with dynamic workload scheduling, can significantly reduce carbon emissions.

- Future Research Directions: Emerging trends such as neuromorphic computing, edge AI deployment, and hybrid AI models present exciting opportunities for sustainable AI development.

References

1. Brown, T., Mann, B., Ryder, N., et al. (2020). Language models are few-shot learners. Advances in Neural Information Processing Systems, 33, 1877–1901.
2. Bommasani, R., et al. (2021). On the opportunities and risks of foundation models. arXiv preprint arXiv:2108.07258.
3. Patterson, D., et al. (2021). Carbon emissions and large neural network training. arXiv preprint arXiv:2104.10350.
4. Henderson, P., et al. (2020). Towards the systematic reporting of the energy and carbon footprints of machine learning. Journal of Machine Learning Research, 21, 1–43.
5. Strubell, E., Ganesh, A., McCallum, A. (2019). Energy and policy considerations for deep learning in NLP. Proceedings of ACL.
6. Bender, E. M., Gebru, T., McMillan-Major, A., Shmitchell, S. (2021). On the dangers of stochastic parrots: Can language models be too big? Proceedings of ACM FAccT.
7. Li, Y., et al. (2020). Train big, then compress: Rethinking model size for efficient training and inference. arXiv preprint arXiv:2002.11794.
8. Touvron, H., et al. (2023). Efficient LLM training with parameter-efficient fine-tuning methods. arXiv preprint arXiv:2302.05442.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).