Submitted: 16 February 2025

Posted: 17 February 2025


Abstract

Keywords:

1. Introduction

2. Related Work

3. System Model and Assumptions

3.1. Cloud Environment and Elasticity Properties

3.2. Performance and Economic Metrics

3.3. Workload Characterization and Model Inputs

3.4. Scaling Decisions and Control Intervals

3.5. Predictive Modeling Assumptions

3.6. Constraints and Service-Level Agreements

3.7. Geographic and Network Considerations

4. Proposed Method

4.1. Overview

- Predictive Workload Modeling: A machine learning-based module that forecasts workload intensity over a short-term prediction window.

- Multi-Tier Autoscaling: A hierarchical scaling mechanism that adjusts resources across multiple tiers, including application servers, caching systems, and load balancers, based on workload predictions.

- Cost-Aware Optimization: An optimization framework that determines resource allocation configurations to minimize cost while adhering to service-level agreements (SLAs).
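The three components above can be pictured as one control step: forecast the load, size each tier, and price the result. The following is a minimal sketch under stated assumptions, not the paper's algorithm. Exponential smoothing stands in for the machine learning forecaster, each tier is sized independently from an assumed per-instance capacity, and all names (`Tier`, `forecast_next`, `plan_allocation`) and numbers are hypothetical.

```python
from dataclasses import dataclass
from math import ceil


@dataclass(frozen=True)
class Tier:
    """Hypothetical tier description; every field is an illustrative assumption."""
    name: str
    capacity_per_instance: float  # requests/s one instance is assumed to serve within the SLA
    cost_per_instance: float      # cost units per control interval
    min_instances: int
    max_instances: int


def forecast_next(history, alpha=0.5):
    """Exponential smoothing, a simple stand-in for the ML-based forecaster."""
    level = history[0]
    for observed in history[1:]:
        level = alpha * observed + (1 - alpha) * level
    return level


def plan_allocation(tiers, predicted_load):
    """Return (instances per tier, total cost) for a predicted load.

    Tiers are sized independently, so the cheapest count for each tier is the
    smallest one whose capacity covers the load, clamped to the tier's bounds.
    If the upper bound is hit, the load may not be fully covered.
    """
    plan = {}
    total_cost = 0.0
    for tier in tiers:
        needed = ceil(predicted_load / tier.capacity_per_instance)
        count = max(tier.min_instances, min(tier.max_instances, needed))
        plan[tier.name] = count
        total_cost += count * tier.cost_per_instance
    return plan, total_cost


if __name__ == "__main__":
    tiers = [
        Tier("application", 50.0, 2.0, 1, 10),
        Tier("cache", 200.0, 5.0, 1, 4),
    ]
    predicted = forecast_next([100.0, 120.0, 140.0])
    print(predicted, plan_allocation(tiers, predicted))
```

A real deployment would likely couple the tiers (for example, cache size affects database load), in which case the per-tier sizing would need to be replaced by a joint optimization.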

4.2. Predictive Workload Modeling

4.3. Multi-Tier Autoscaling

- Resource Utilization: Monitor real-time utilization of resources at each tier.

- Latency Constraints: Ensure that end-to-end request latency remains below the SLA-defined threshold.

- Scalability Constraints: Keep the number of resource instances within a feasible range.
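One way to read the three considerations above is as a per-tier scaling step that reacts to utilization and latency and then clamps the result to the feasible range. The sketch below is an illustration only: the watermark values (`high`, `low`), the one-instance step size, and the function names are assumptions, not parameters taken from the paper.

```python
def adjust_instances(current, utilization, latency_ms, latency_sla_ms,
                     n_min, n_max, high=0.8, low=0.3):
    """One scaling step for a single tier.

    Scale out by one instance on an SLA violation or high utilization,
    scale in by one when utilization is low and latency is within the SLA,
    then clamp to the feasible range [n_min, n_max].
    """
    if latency_ms > latency_sla_ms or utilization > high:
        proposed = current + 1
    elif utilization < low:
        proposed = current - 1
    else:
        proposed = current
    return max(n_min, min(n_max, proposed))


def adjust_all(tiers, latency_ms, latency_sla_ms):
    """Apply the step to every tier.

    `tiers` maps a tier name to (current, utilization, n_min, n_max).
    """
    return {
        name: adjust_instances(cur, util, latency_ms, latency_sla_ms, lower, upper)
        for name, (cur, util, lower, upper) in tiers.items()
    }


if __name__ == "__main__":
    state = {"app": (4, 0.85, 1, 10), "cache": (2, 0.5, 1, 4)}
    print(adjust_all(state, latency_ms=100, latency_sla_ms=200))
```

Checking the latency condition before the scale-in branch means a tier is never shrunk while the SLA is being violated, even if its own utilization is low.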

4.4. Cost-Aware Optimization

4.5. Proposed Algorithm

Algorithm 1: Proposed Resource Management Framework

4.6. Control and Feedback Mechanisms

5. Experimental Evaluation

5.1. Experimental Setup

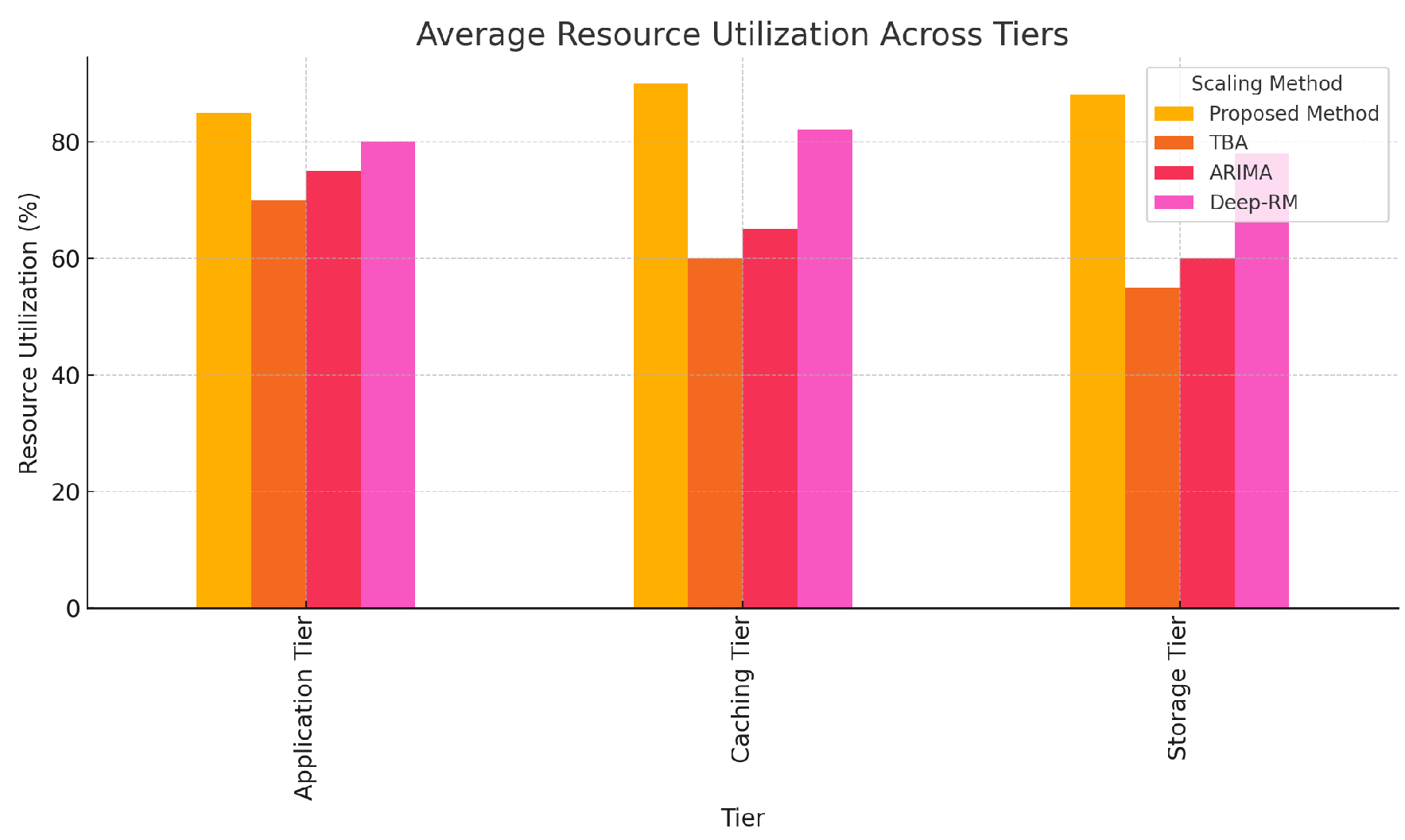

- Application Tier: Stateless microservices managed by Kubernetes, supporting horizontal scaling.

- Caching Tier: Redis in-memory key-value stores for reducing latency.

- Storage Tier: PostgreSQL database clusters configured for autoscaling.

5.2. Models for Comparison

- Threshold-Based Autoscaling (TBA): A reactive method, as used by the Kubernetes Horizontal Pod Autoscaler (HPA), in which scaling decisions are triggered by CPU and memory utilization thresholds.

- ARIMA-Based Predictive Autoscaling (ARIMA): A workload prediction approach using AutoRegressive Integrated Moving Average models, focusing on single-tier scaling.

- RL-Based Resource Management (Deep-RM): A reinforcement learning (RL) approach as proposed in [24], which optimizes resource allocation decisions based on workload and cost feedback.

- Hybrid Autoscaling (Hybrid): A method combining reactive scaling with a heuristic optimizer to balance resource allocation and performance.
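For orientation, the reactive TBA baseline can be summarized by the replica rule documented for the Kubernetes HPA: the desired replica count is the ceiling of the current count times the ratio of the observed metric to its target, with no change when that ratio is within a tolerance of 1 (0.1 by default). The sketch below reproduces only this rule. The function name is hypothetical, and the real controller also handles missing metrics, pod readiness, and stabilization windows, none of which is modeled here.

```python
from math import ceil


def hpa_desired_replicas(current_replicas, current_metric, target_metric, tolerance=0.1):
    """Replica rule: ceil(current_replicas * current_metric / target_metric).

    When the ratio is within `tolerance` of 1.0, the replica count is left unchanged.
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return ceil(current_replicas * ratio)


if __name__ == "__main__":
    # 4 replicas at 100% CPU against a 50% target: scale out.
    print(hpa_desired_replicas(4, 100, 50))
    # 4 replicas at 52% against a 50% target: within tolerance, no change.
    print(hpa_desired_replicas(4, 52, 50))
```

Because the rule uses only the current metric value, it cannot act before load arrives, which is the gap the predictive methods in this comparison aim to close.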

5.3. Evaluation Metrics

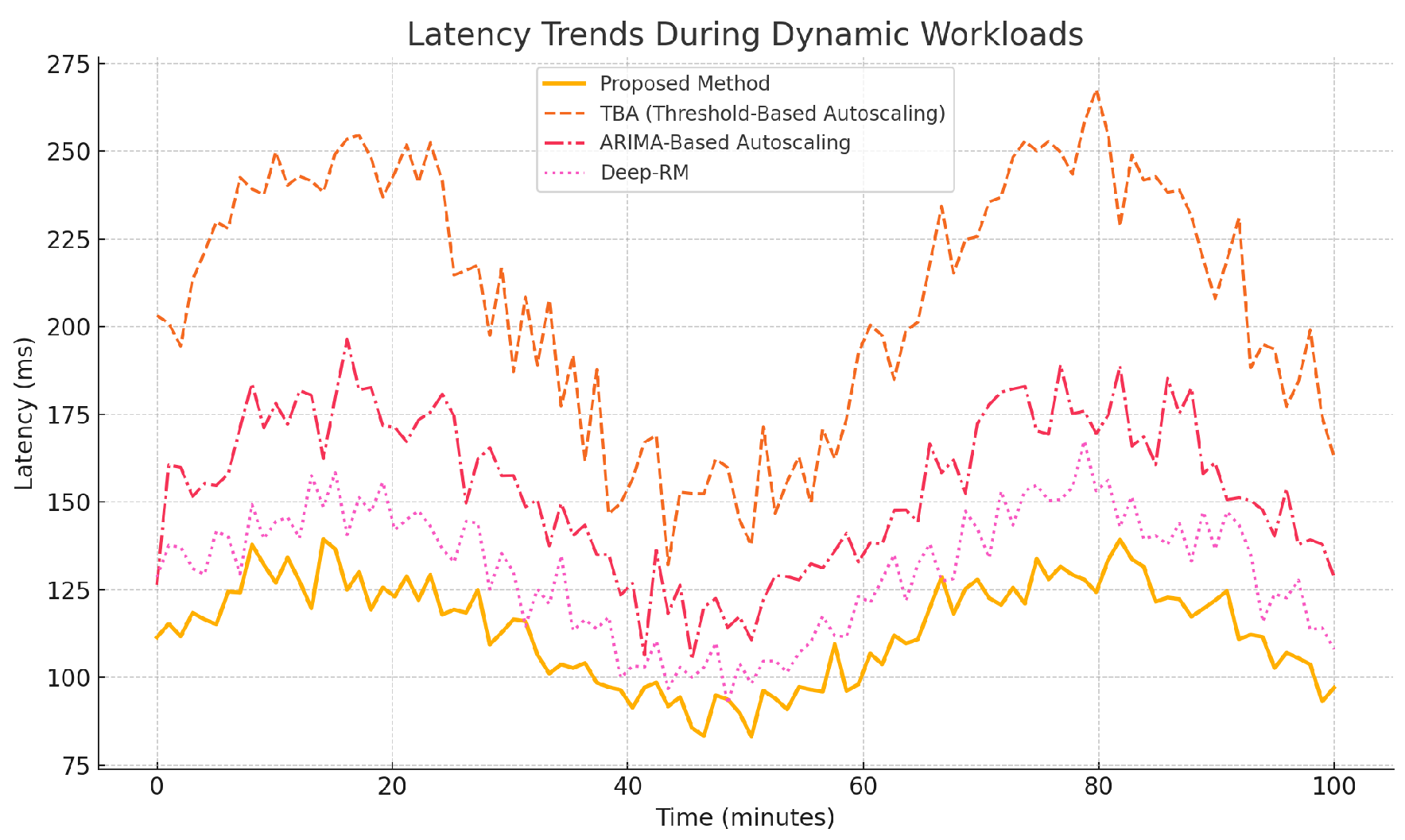

- Latency: Average and 95th percentile latency during peak and steady-state workloads.

- Cost Efficiency: Total cost of allocated resources over the experimental duration.

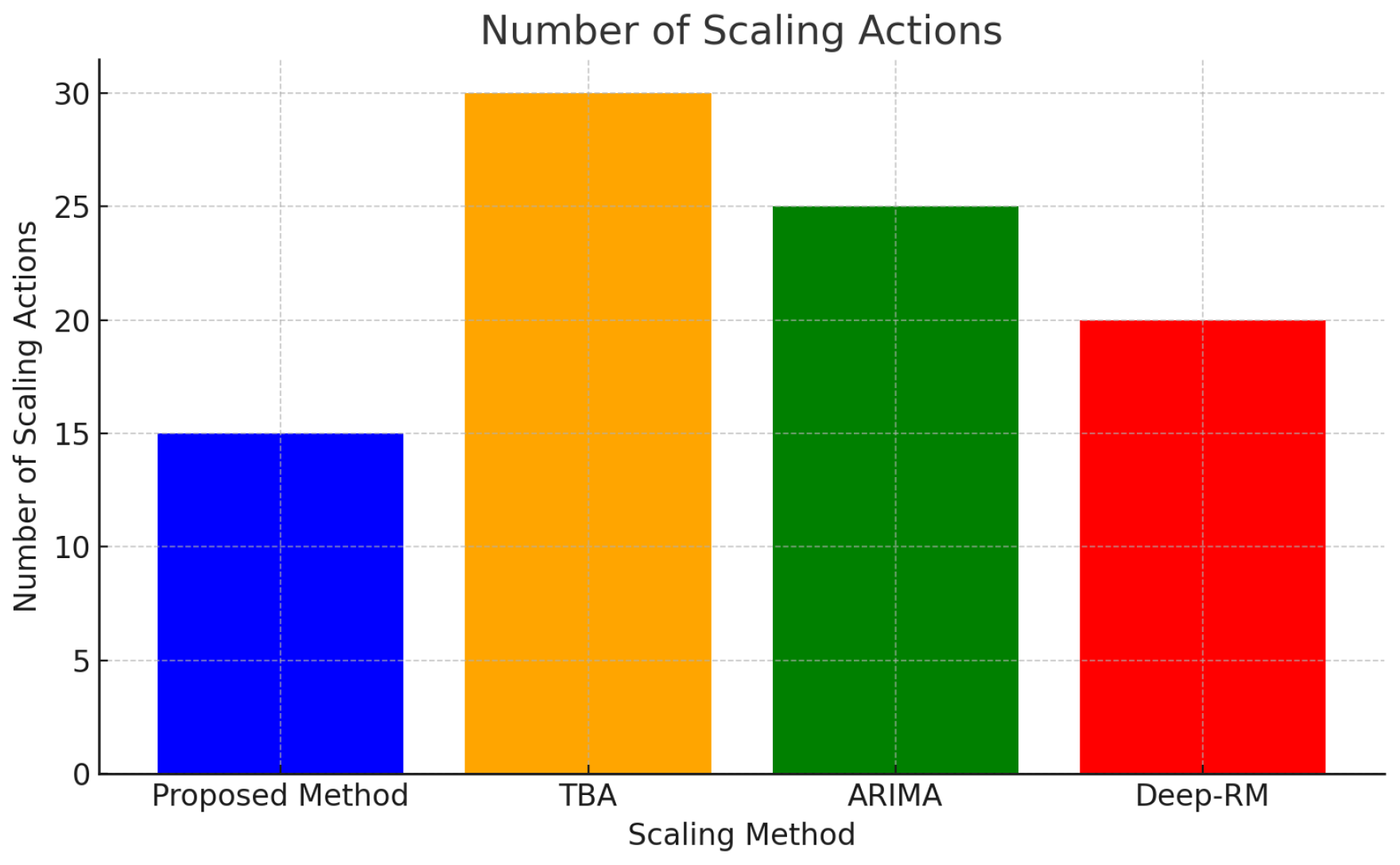

- Scalability: Number of scaling actions and their impact on performance stability.

- Resource Utilization: Average CPU and memory utilization across all tiers.
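The metrics listed above could be computed from raw measurements roughly as follows. This is a minimal sketch: the nearest-rank percentile definition, the record layouts, and the function names are assumptions, since the measurement procedure is not specified here.

```python
from statistics import mean


def percentile_nearest_rank(samples, pct):
    """Nearest-rank percentile for an integer `pct` in 1..100."""
    if not samples:
        raise ValueError("samples must be non-empty")
    ordered = sorted(samples)
    # Integer arithmetic for ceil(pct * n / 100) avoids floating-point rounding.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


def latency_summary(latencies_ms):
    """Average and 95th percentile latency over a list of samples."""
    return {"mean": mean(latencies_ms), "p95": percentile_nearest_rank(latencies_ms, 95)}


def total_cost(usage):
    """Sum instances * price_per_hour * hours over (instances, price_per_hour, hours) records."""
    return sum(n * price * hours for n, price, hours in usage)


def scaling_actions(instance_counts):
    """Count control intervals in which the instance count changed."""
    return sum(1 for a, b in zip(instance_counts, instance_counts[1:]) if a != b)


if __name__ == "__main__":
    print(latency_summary(list(range(1, 21))))
    print(total_cost([(3, 0.5, 2), (2, 1.0, 1)]))
    print(scaling_actions([2, 2, 3, 3, 2]))
```

Other percentile definitions (for example, linear interpolation) give slightly different values on small samples, so the definition used should be reported alongside the results.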

5.4. Results and Analysis

5.4.1. Latency and Performance

5.4.2. Cost Efficiency

5.4.3. Scalability and Stability

5.4.4. Resource Utilization

6. Discussion

6.1. Performance and Cost Trade-offs

6.2. Scalability and Stability

6.3. Generalizability and Adaptability

6.4. Limitations and Challenges

6.5. Broader Implications

6.6. Future Directions

- Multi-Cloud and Geo-Distributed Environments: Extend the framework to optimize resource allocation across multiple cloud providers and geographic regions, considering inter-region latency and cost trade-offs.

- Dynamic Pricing Models: Incorporate spot and reserved instance pricing to further reduce costs while maintaining reliability.

- Enhanced Predictive Modeling: Explore hybrid and ensemble forecasting models to improve prediction accuracy, particularly under irregular workload patterns.

- Reinforcement Learning Integration: Investigate the use of reinforcement learning to enable adaptive and continuous improvement of scaling policies.

7. Conclusion

References

- A. Lorido-Botran, J. Miguel-Alonso, and J. A. Lozano, "A review of auto-scaling techniques for elastic applications in cloud environments," Journal of Grid Computing, vol. 12, no. 4, pp. 559–592, Dec. 2014.

- Q. Le, R. N. Calheiros, and R. Buyya, "A taxonomy and survey on auto-scaling of resources in cloud computing," ACM Computing Surveys, vol. 51, no. 4, pp. 1–33, 2019.

- M. Shahrad, B. Cutuşu, K. Wang, J. Zhang, A. Ghodsi, C. Kozyrakis, and J. Wilkes, "Serverless in the Wild: Characterizing and Optimizing the Serverless Workload at a Large Cloud Provider," in 2020 USENIX Annual Technical Conference (USENIX ATC 20), 2020, pp. 205–218.

- X. Chen, L. Liu, L. Shang, C. Wu, and S. Guo, "CoTuner: Coordinating On-Demand Container Resource Tuning at Run-Time," IEEE Transactions on Cloud Computing, 2019.

- W. Chen, M. Chen, Y. C. Hu, and Y. Jiang, "Workload prediction of virtual machines for horizontal auto-scaling via CPU utilization histogram," IEEE Transactions on Services Computing, vol. 12, no. 4, pp. 625–637, 2018.

- H. Jamali, A. Karimi, and M. Haghighizadeh, "A new method of Cloud-based Computation Model for Mobile Devices: Energy Consumption Optimization in Mobile-to-Mobile Computation Offloading," in Proceedings of the 6th International Conference on Communications and Broadband Networking, Singapore, 2018, pp. 32–37.

- S. Islam, J. Keung, K. Lee, and A. Liu, "Empirical prediction models for adaptive resource provisioning in the cloud," Future Generation Computer Systems, vol. 28, no. 1, pp. 155–162, 2012.

- A. Gambi and C. Franzago, "A Systematic Review of Performance Testing in the Cloud," IEEE Transactions on Services Computing, 2021.

- A. Quiroz, H. Kim, M. Parashar, N. Gnanasambandam, and N. Sharma, "Towards autonomic workload provisioning for enterprise grids and clouds," in 10th IEEE/ACM International Conference on Grid Computing, 2009, pp. 50–57.

- E. Caron, F. Desprez, and A. Muresan, "Predicting the Profit of Cloud Computing Offerings," in 2015 IEEE International Conference on Cloud Engineering, 2015, pp. 47–52.

- H. Jamali, S. M. Dascalu, and F. C. Harris, "Fostering Joint Innovation: A Global Online Platform for Ideas Sharing and Collaboration," in ITNG 2024: 21st International Conference on Information Technology-New Generations, S. Latifi, Ed., Advances in Intelligent Systems and Computing, vol. 1456, Cham: Springer, 2024.

- Q. Zhu, W. Zhu, and T. Y. Liu, "Multi-Objective Resource Management for Latency-Critical Applications in the Cloud," IEEE Transactions on Parallel and Distributed Systems, vol. 31, no. 4, pp. 754–768, 2020.

- T. Jiang, K. Ye, W. Huang, S. Wu, R. Ranjan, and Q. Wang, "EFAs: An energy-efficient and fairness-aware scheduler to mitigate resource contention in cloud datacenters," IEEE Transactions on Cloud Computing, 2017.

- Q. Zhang, L. Cherkasova, and E. Smirni, "A regression-based analytic model for dynamic resource provisioning of multi-tier applications," in Proc. of the 4th International Conference on Autonomic Computing (ICAC), 2007, pp. 27–27.

- M. Mao, J. Li, and M. Humphrey, "Cloud auto-scaling with deadline and budget constraints," in Proc. of the 11th IEEE/ACM International Conference on Grid Computing, 2010, pp. 41–48.

- B. Addis, D. Ardagna, B. Panicucci, M. Trubian, and L. Zhang, "Autonomic management of cloud service centers with availability guarantees," IEEE Transactions on Network and Service Management, vol. 10, no. 1, pp. 3–14, 2013.

- K. Appleby, S. Fakhouri, L. Fong, G. Goldszmidt, M. Kalantar, D. Karla, S. Pampu, J. Pershing, and D. Rosenberg, "Oceano - SLA-based management of a computing utility," in Integrated Network Management Proceedings. IFIP/IEEE Eighth International Symposium on Integrated Network Management, 2001, pp. 855–868.

- H. Jamali, S. M. Dascalu, and F. C. Harris, "AI-Driven Analysis and Prediction of Energy Consumption in NYC’s Municipal Buildings," in Proceedings of the 2024 IEEE/ACIS 22nd International Conference on Software Engineering Research, Management and Applications (SERA), Honolulu, HI, USA, 2024, pp. 277–283.

- N. Bobroff, A. Kochut, and K. Beaty, "Dynamic placement of virtual machines for managing SLA violations," in Proc. 10th IFIP/IEEE Int. Symp. on Integrated Network Management, 2007, pp. 119–128.

- R. Nathuji and K. Schwan, "VirtualPower: Coordinated Power Management in Virtualized Enterprise Systems," in ACM SIGOPS Operating Systems Review, vol. 41, no. 6, pp. 265–278, 2007.

- W. Wang, D. Niu, and B. Li, "Cost-effective resource management in multi-clouds via randomized auctions," IEEE Transactions on Parallel and Distributed Systems, vol. 27, no. 5, pp. 1473–1486, 2016.

- X. Dutreilh, A. Moreau, J. Malenfant, N. Rivierre, and I. Truong, "From data center resource allocation to control theory and back," in Proc. IEEE 3rd International Conference on Cloud Computing, 2010, pp. 410–417.

- A. Ali-Eldin, M. Kihl, J. Tordsson, and E. Elmroth, "Elastic management of cloud services for improved performance," in IEEE Network Operations and Management Symposium, 2012, pp. 327–334.

| Metric | Proposed | TBA | ARIMA | Deep-RM |
|---|---|---|---|---|
| Mean Latency | 110 | 200 | 150 | 125 |
| 95th Percentile Latency | 240 | 400 | 320 | 270 |

| Metric | Proposed | TBA | ARIMA | Deep-RM |
|---|---|---|---|---|
| Total Cost | 5,000 | 7,000 | 6,500 | 5,800 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).