Submitted: 22 January 2025

Posted: 22 January 2025

Abstract

Keywords:

1. Introduction

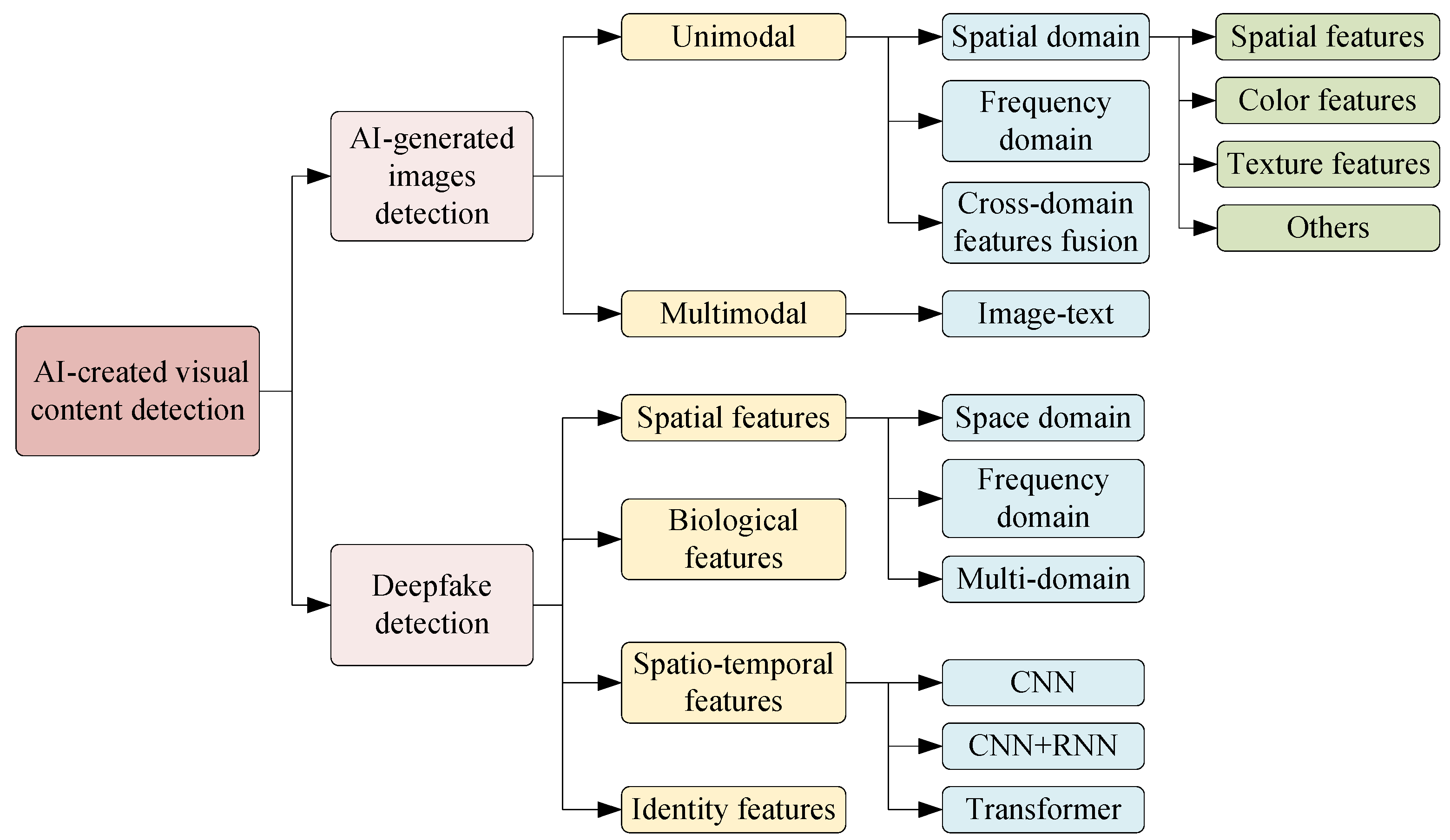

2. AI-Created Visual Content Detection Techniques

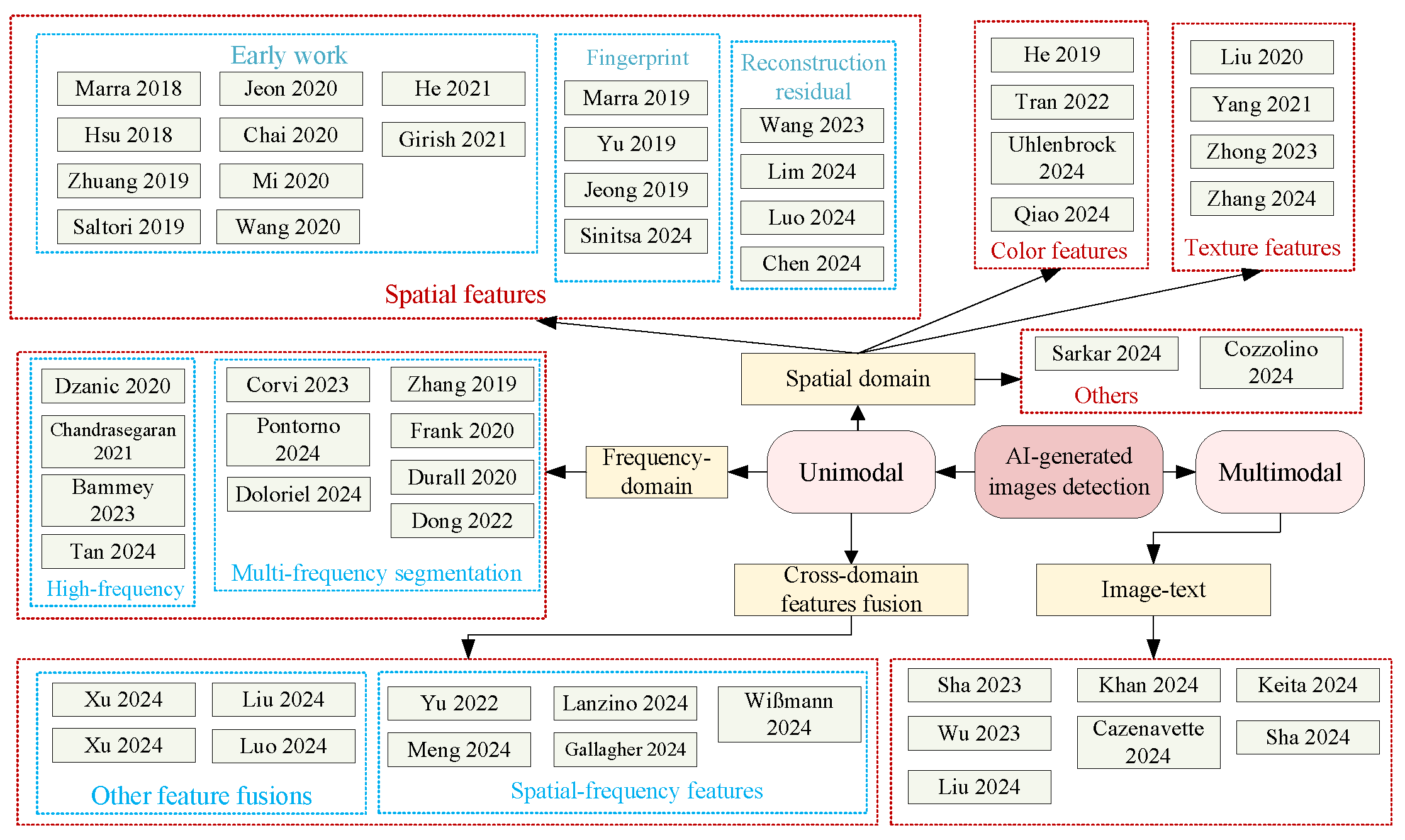

2.1. AI-Generated Images Detection

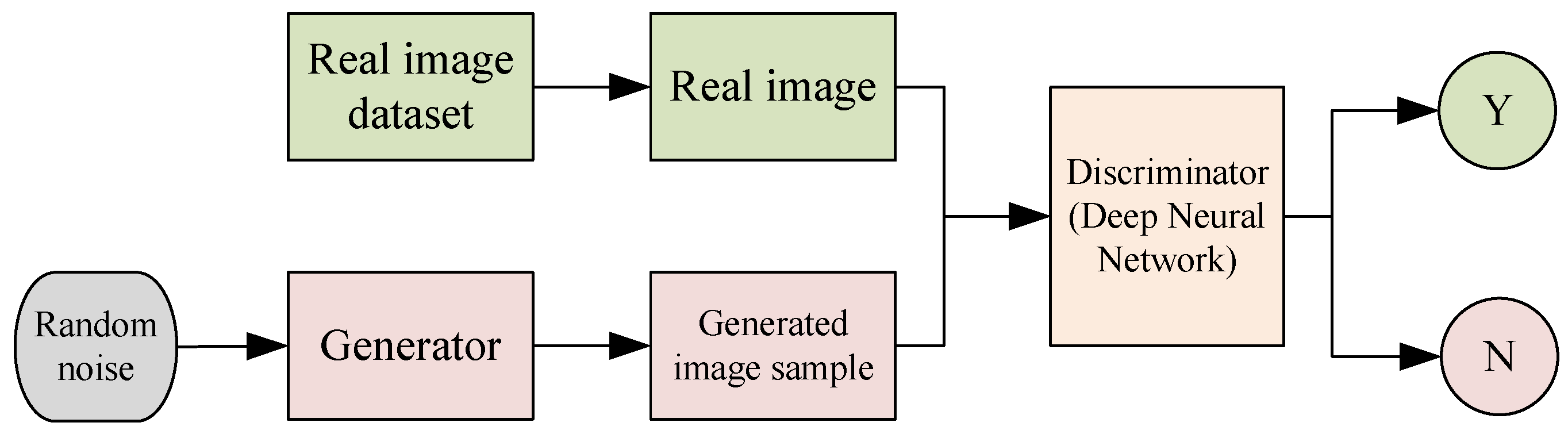

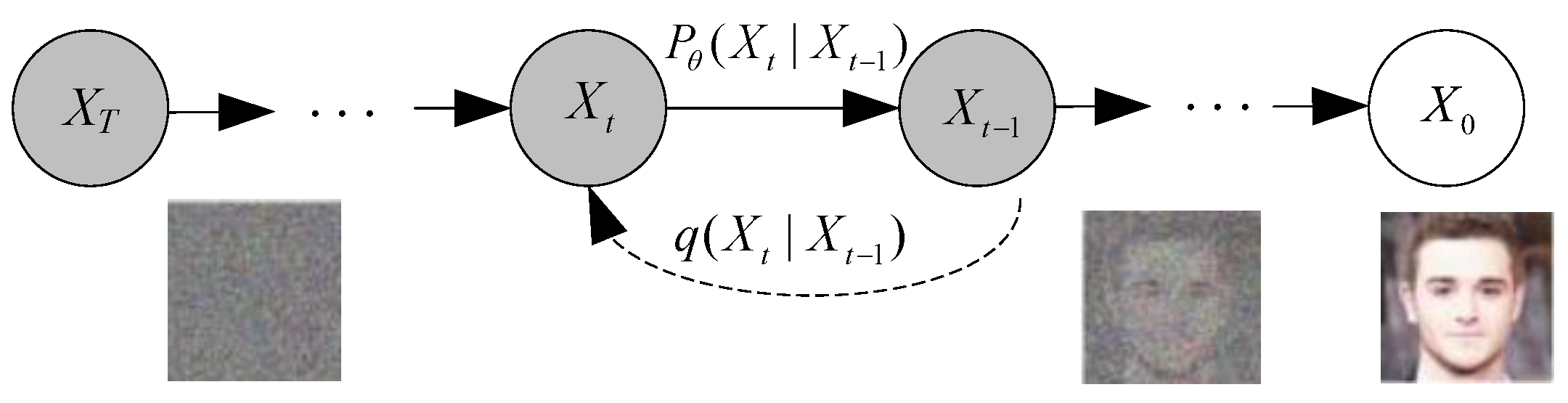

2.1.1. Generative Models for Images

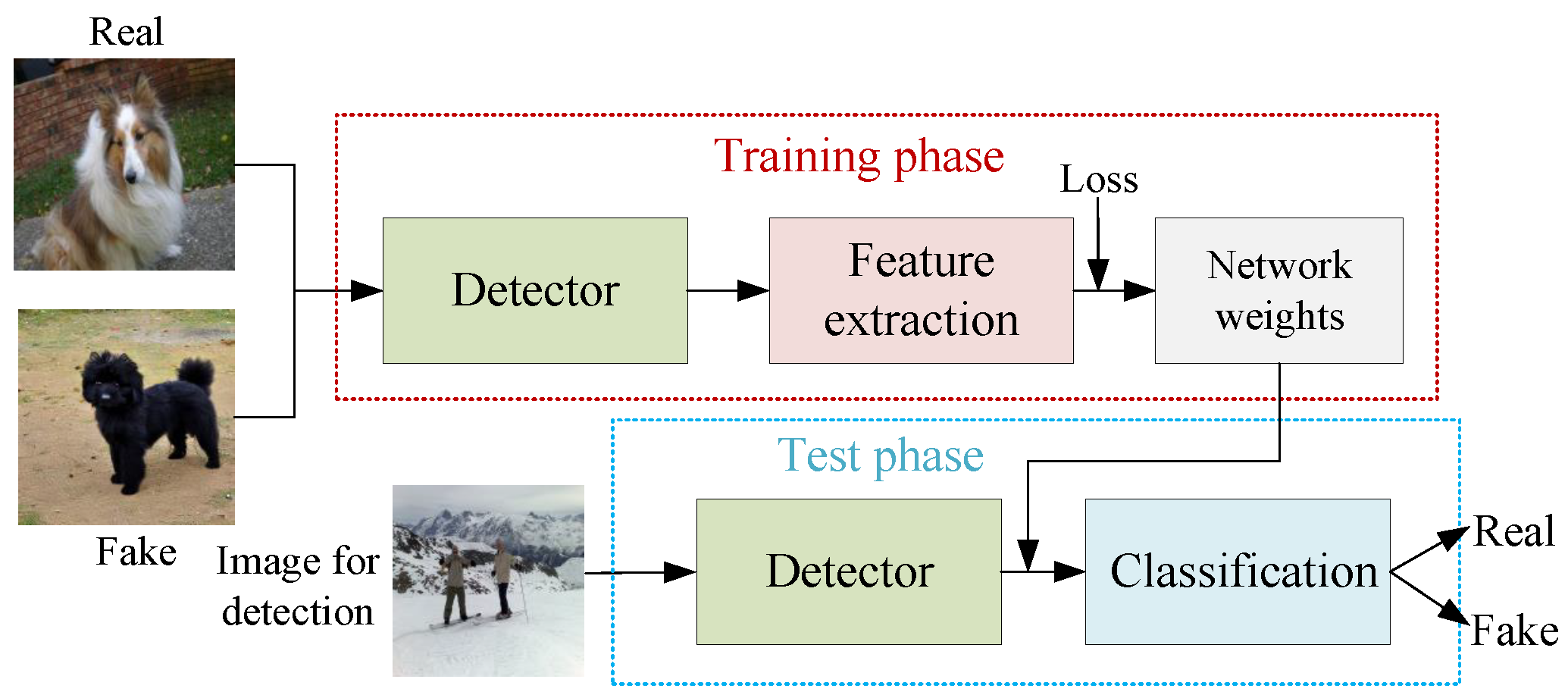

2.1.2. Basic Framework of AI-Generated Images Detection

2.1.3. Datasets for AI-Generated Images Detection

2.2. Deepfake Generation and Detection

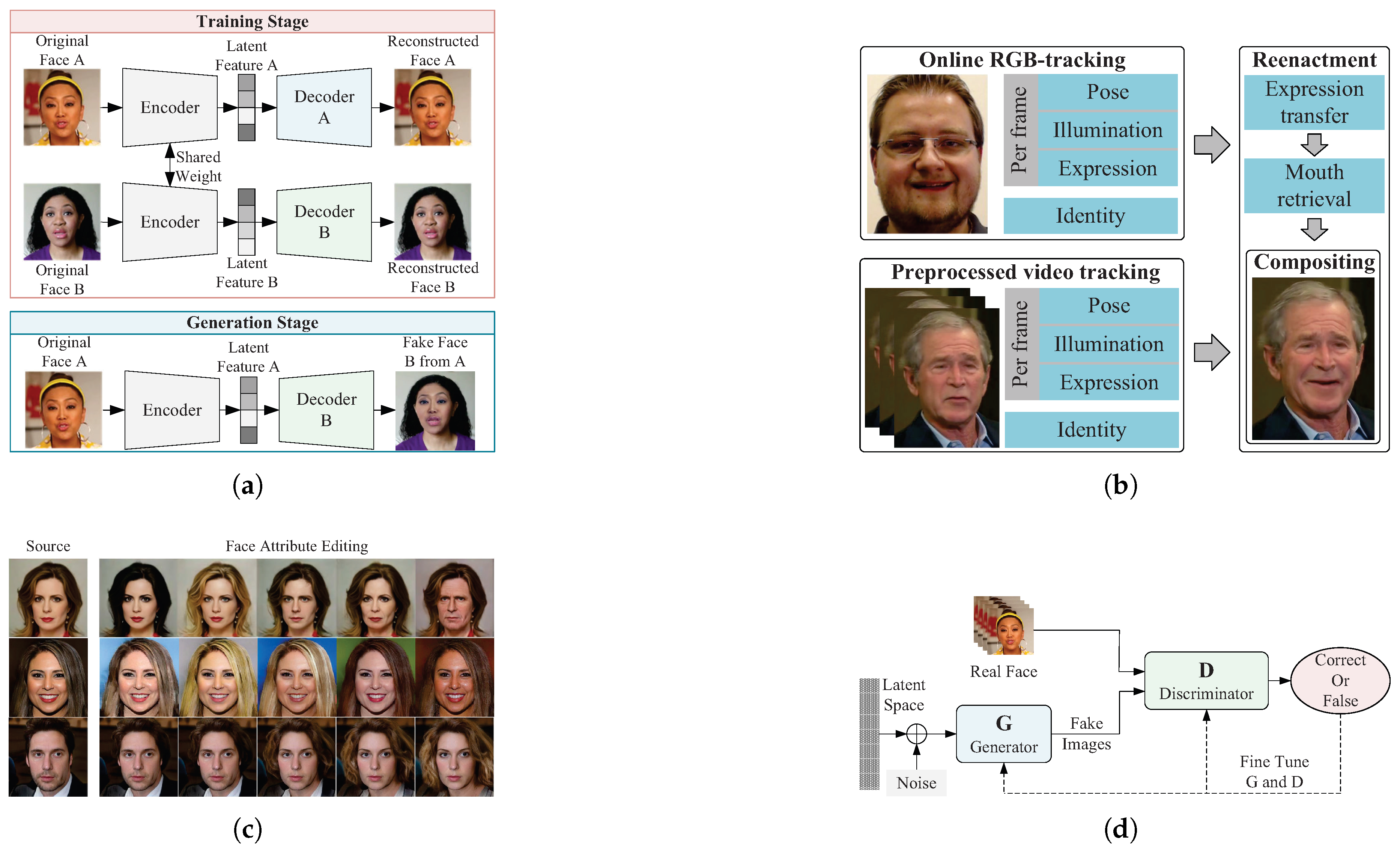

2.2.1. Deepfake Generation

2.2.2. Existing Deepfake Datasets

2.2.3. Evaluation Metrics

3. AI-Generated Images Detection Methods Based on Deep Learning

3.1. Spatial Domain-Based

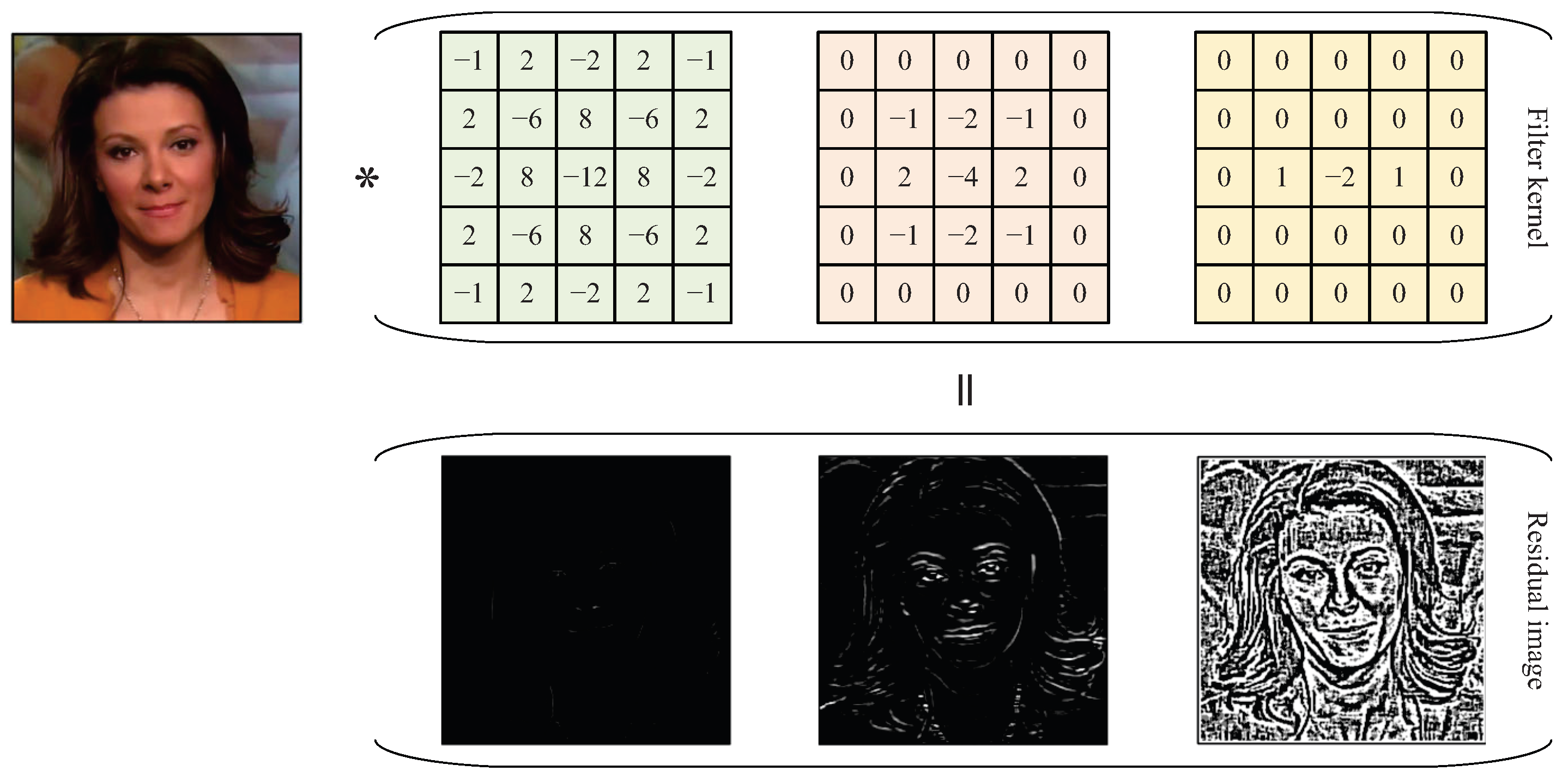

3.1.1. Spatial Features

3.1.2. Color Features

3.1.3. Texture Features

3.1.4. Others

3.2. Frequency Domain-Based

3.3. Cross-Domain Features Fusion-Based

3.4. Image-Text

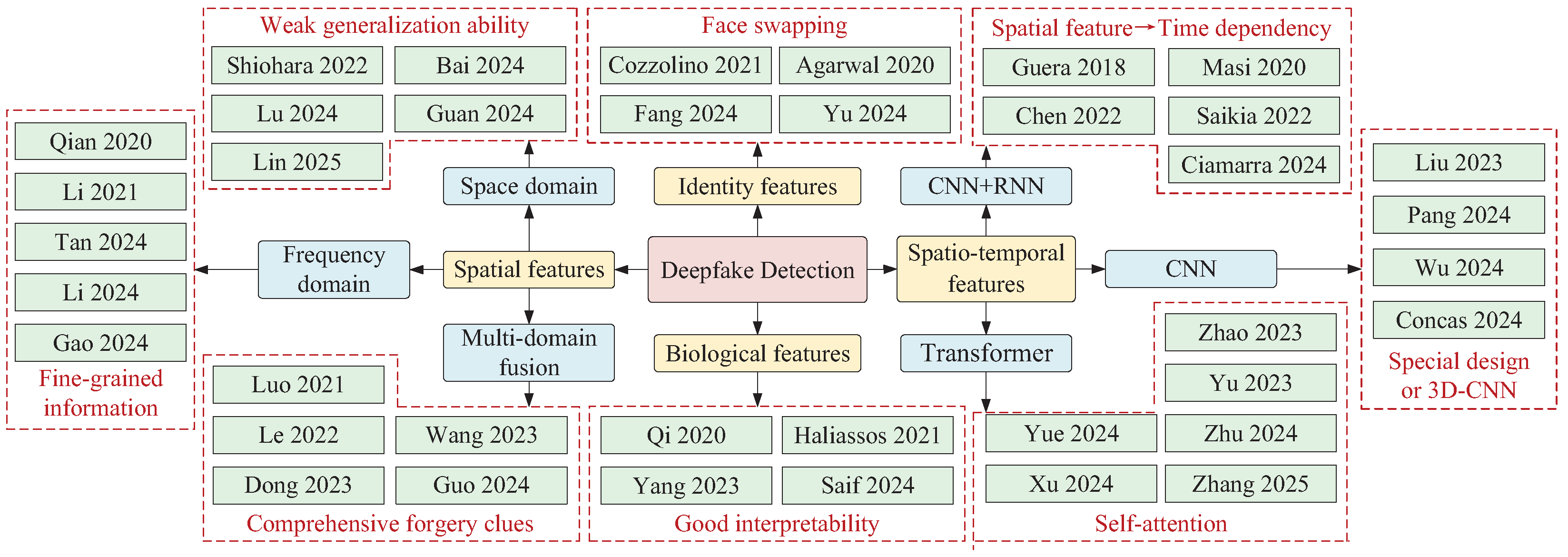

4. Deepfake Detection Based on Feature Selection

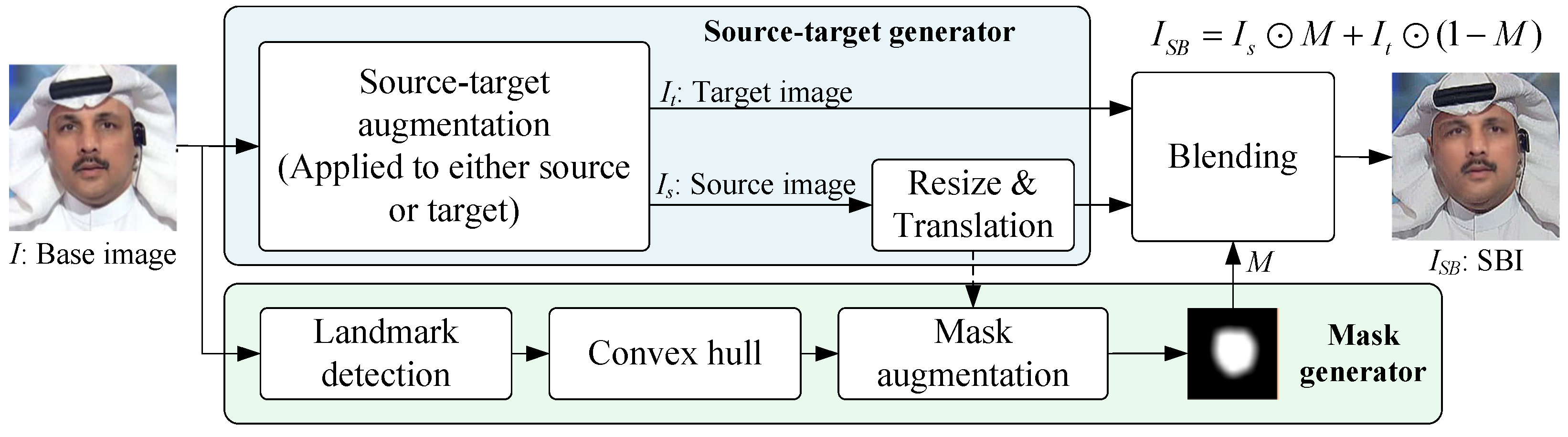

4.1. Spatial Features-Based

4.1.1. Space Domain Information-Based

4.1.2. Frequency Domain Information-Based

4.1.3. Multi-Domain Information Fusion-Based

4.2. Spatio-Temporal Features-Based

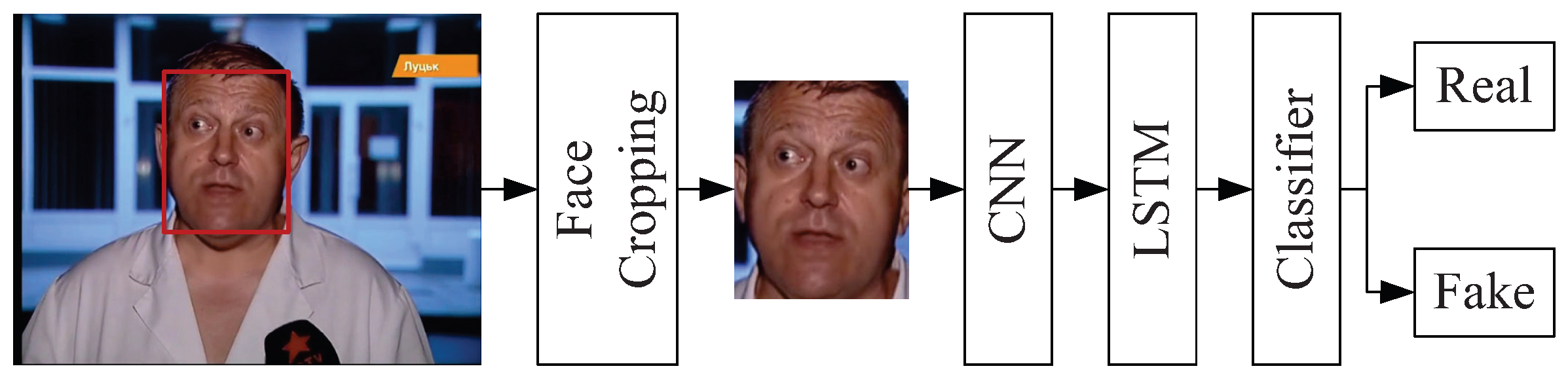

4.2.1. CNN-Based

4.2.2. CNN+RNN-Based

4.2.3. Transformer-Based

4.3. Biological Features-Based

4.4. Identity Features-Based

5. Future Research Directions and Conclusions

5.1. Future Research Directions

5.2. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Yadav, A.; Vishwakarma, D.K. Datasets, clues and state-of-the-arts for multimedia forensics: An extensive review. Expert Syst. Appl. 2024, 249, 123756. [Google Scholar] [CrossRef]

- Hu, B.; Wang, J. Deep learning for distinguishing computer generated images and natural images: A survey. J. Inf. Hiding Privacy Protection 2020, 2, 37–47. [Google Scholar] [CrossRef]

- Deng, J.; Lin, C.; Zhao, Z.; Liu, S.; Wang, Q.; Shen, C. A survey of defenses against AI-generated visual media: Detection, disruption, and authentication. arXiv 2024, arXiv:2407.10575. [Google Scholar]

- Guo, M.; Hu, Y.; Jiang, Z.; Li, Z. AI-generated image detection: Passive or watermark? arXiv 2024, arXiv:2411.13553. [Google Scholar]

- Lin, L.; Gupta, N.; Zhang, Y.; Ren, H.; Liu, C.H.; Ding, F.; Wang, X.; Li, X.; Verdoliva, L.; Hu, S. Detecting multimedia generated by large AI models: A survey. arXiv 2024, arXiv:2402.00045. [Google Scholar]

- Rana, M.S.; Nobi, M.N.; Murali, B.; Sung, A.H. Deepfake detection: A systematic literature review. IEEE Access 2022, 10, 25494–25513. [Google Scholar] [CrossRef]

- Seow, J.W.; Lim, M.K.; Phan, R.C.W.; Liu, J.K. A comprehensive overview of deepfake: Generation, detection, datasets, and opportunities. Neurocomputing 2022, 513, 351–371. [Google Scholar] [CrossRef]

- Gong, L.Y.; Li, X.J. A contemporary survey on deepfake detection: Datasets, algorithms, and challenges. Electronics 2024, 13, 585. [Google Scholar] [CrossRef]

- Heidari, A.; Navimipour, N.J.; Dag, H.; Unal, M. Deepfake detection using deep learning methods: A systematic and comprehensive review. Wiley Interdiscip. Rev.-Data Mining Knowl. Discov. 2024, 14, 1–45. [Google Scholar] [CrossRef]

- Sandotra, N.; Arora, B. A comprehensive evaluation of feature-based AI techniques for deepfake detection. Neural Comput. Appl. 2024, 36, 3859–3887. [Google Scholar] [CrossRef]

- Kaur, A.; Hoshyar, A.N.; Saikrishna, V.; Firmin, S.; Xia, F. Deepfake video detection: Challenges and opportunities. Artif. Intell. Rev. 2024, 57, 159. [Google Scholar] [CrossRef]

- Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; Xu, B. Generative adversarial nets. In Proceedings of the Advances in Neural Information Processing Systems, Montreal, Canada, 8–11 December 2014; pp. 2672–2680. [Google Scholar]

- Chollet, F. Xception: Deep learning with depthwise separable convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 1251–1258. [Google Scholar]

- Zhu, J.Y.; Park, T.; Isola, P.; Efros, A.A. Unpaired image-to-image translation using cycle-consistent adversarial networks. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 2223–2232. [Google Scholar]

- Bellemare, M.G.; Danihelka, I.; Dabney, W.; Mohamed, S.; Lakshminarayanan, B.; Hoyer, S.; Munos, R. The Cramer distance as a solution to biased Wasserstein gradients. In Proceedings of the International Conference on Learning Representations, Toulon, France, 24–26 April 2017; pp. 1–23. [Google Scholar]

- Karras, T.; Aila, T.; Laine, S.; Lehtinen, J. Progressive growing of GANs for improved quality, stability, and variation. In Proceedings of the International Conference on Learning Representations, Vancouver, Canada, 30 April–3 May 2018; pp. 1–26. [Google Scholar]

- Choi, Y.; Choi, M.; Kim, M.; Ha, J.W.; Kim, S.; Choo, J. StarGAN: Unified generative adversarial networks for multi-domain image-to-image translation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 8789–8797. [Google Scholar]

- Brock, A.; Donahue, J.; Simonyan, K. Large scale GAN training for high fidelity natural image synthesis. In Proceedings of the International Conference on Learning Representations, New Orleans, LA, USA, 6–9 May 2019; pp. 1–35. [Google Scholar]

- He, Z.; Zuo, W.; Kan, M.; Shan, S.; Chen, X. AttGAN: Facial attribute editing by only changing what you want. IEEE Trans. Image Process. 2019, 28, 5464–5478. [Google Scholar] [CrossRef] [PubMed]

- Wu, P.W.; Lin, Y.J.; Chang, C.H.; Chang, E.Y.; Liao, S.W. RelGAN: Multi-domain image-to-image translation via relative attributes. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Korea (South), 27 October–2 November 2019; pp. 5914–5922. [Google Scholar]

- Park, T.; Liu, M.Y.; Wang, T.C.; Zhu, J.Y. Semantic image synthesis with spatially-adaptive normalization. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019; pp. 2337–2346. [Google Scholar]

- Karras, T.; Laine, S.; Aila, T. A style-based generator architecture for generative adversarial networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019; pp. 4401–4410. [Google Scholar]

- Karras, T.; Laine, S.; Aittala, M.; Hellsten, J.; Lehtinen, J.; Aila, T. Analyzing and improving the image quality of StyleGAN. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 16–20 June 2020; pp. 8110–8119. [Google Scholar]

- Lee, K.S.; Tran, N.T.; Cheung, N.M. Infomax-GAN: Improved adversarial image generation via information maximization and contrastive learning. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, Waikoloa, HI, USA, 5–9 January 2021; pp. 3942–3952. [Google Scholar]

- Ho, J.; Jain, A.; Abbeel, P. Denoising diffusion probabilistic models. In Proceedings of the Advances in Neural Information Processing Systems, Online, Canada, 6–12 December 2020; pp. 6840–6851. [Google Scholar]

- Ramesh, A.; Pavlov, M.; Goh, G.; Gray, S.; Voss, C.; Radford, A.; Chen, M.; Sutskever, I. Zero-shot text-to-image generation. In Proceedings of the International Conference on Machine Learning, Vienna, Austria, 18–24 July 2021; pp. 8821–8831. [Google Scholar]

- Dhariwal, P.; Nichol, A. Diffusion models beat GANs on image synthesis. In Proceedings of the Advances in Neural Information Processing Systems, Online, Canada, 6–14 December 2021; pp. 8780–8794. [Google Scholar]

- Nichol, A.; Dhariwal, P.; Ramesh, A.; Shyam, P.; Mishkin, P.; McGrew, B.; Sutskever, I.; Chen, M. Glide: Towards photorealistic image generation and editing with text-guided diffusion models. In Proceedings of the International Conference on Machine Learning, Baltimore, MD, USA, 17–23 July 2022; pp. 16784–16804. [Google Scholar]

- Saharia, C.; Chan, W.; Saxena, S.; Li, L.; Whang, J.; Denton, E.L.; Ghasemipour, K.; Gontijo, L.R.; Karagol, A.B.; Salimans, T.; Ho, J.; Fleet, D.J.; Norouzi, M. Photorealistic text-to-image diffusion models with deep language understanding. In Proceedings of the Advances in Neural Information Processing Systems, New Orleans, LA, USA, 28 November–9 December 2022; pp. 36479–36494. [Google Scholar]

- Rombach, R.; Blattmann, A.; Lorenz, D.; Esser, P.; Ommer, B. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 10684–10695. [Google Scholar]

- Gu, S.; Chen, D.; Bao, J.; Wen, F.; Zhang, B.; Chen, D.D.; Yuan, L.; Guo, B. Vector quantized diffusion model for text-to-image synthesis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 10696–10706. [Google Scholar]

- Midjourney. Available online: https://www.midjourney.com/home (accessed on 12 July 2022).

- Wukong. Available online: https://xihe.mindspore.cn/modelzoo/wukong (accessed on 14 September 2023).

- Shirakawa, T.; Uchida, S. NoiseCollage: A layout-aware text-to-image diffusion model based on noise cropping and merging. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 8921–8930. [Google Scholar]

- Shiohara, K.; Yamasaki, T. Face2Diffusion for fast and editable face personalization. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 6850–6859. [Google Scholar]

- Cao, C.; Cai, Y.; Dong, Q.; Wang, Y.; Fu, Y. LeftRefill: Filling right canvas based on left reference through generalized text-to-image diffusion model. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 7705–7715. [Google Scholar]

- Zhou, D.; Li, Y.; Ma, F.; Zhang, X.; Yang, Y. Migc: Multi-instance generation controller for text-to-image synthesis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 6818–6828. [Google Scholar]

- Hoe, J.T.; Jiang, X.; Chan, C.S.; Tan, Y.P.; Hu, W. InteractDiffusion: Interaction Control in Text-to-Image Diffusion Models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 6180–6189. [Google Scholar]

- Höllein, L.; Božič, A.; Müller, N.; Novotny, D.; Tseng, H.Y.; Richardt, C.; Zollhöfer, M.; Nießner, M. Viewdiff: 3d-consistent image generation with text-to-image models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 5043–5052. [Google Scholar]

- Wang, Z.J.; Montoya, E.; Munechika, D.; Yang, H. Diffusiondb: A large-scale prompt gallery dataset for text-to-image generative models. arXiv 2022, arXiv:2210.14896. [Google Scholar]

- Rahman, M.A.; Paul, B.; Sarker, N.H.; Hakim, Z.I.A.; Fattah, S.A. Artifact: A large-scale dataset with artificial and factual images for generalizable and robust synthetic image detection. In Proceedings of the IEEE International Conference on Image Processing, Kuala Lumpur, Malaysia, 8–11 October 2023; pp. 2200–2204. [Google Scholar]

- Bird, J.J.; Lotfi, A. Cifake: Image classification and explainable identification of AI-generated synthetic images. IEEE Access 2024, 12, 15642–15650. [Google Scholar] [CrossRef]

- Zhu, M.; Chen, H.; Yan, Q.; Huang, X.; Lin, G.; Li, W.; Tu, Z.; Hu, H.; Wang, Y. Genimage: A million-scale benchmark for detecting AI-generated image. In Proceedings of the 37th International Conference on Neural Information Processing Systems, New Orleans, LA, USA, 10–16 December 2023; pp. 77771–77782. [Google Scholar]

- Lu, Z.; Huang, D.; Bai, L.; Qu, J.; Wu, C.; Liu, X.; Ouyang, W. Seeing is not always believing: Benchmarking human and model perception of AI-generated images. In Proceedings of the 37th International Conference on Neural Information Processing Systems, New Orleans, LA, USA, 10–16 December 2023; pp. 25435–25447. [Google Scholar]

- Hong, Y.; Zhang, J. Wildfake: A large-scale challenging dataset for AI-generated images detection. arXiv 2024, arXiv:2402.11843. [Google Scholar]

- Edwards, P.; Nebel, J.-C.; Greenhill, D.; Liang, X. A review of deepfake techniques: Architecture, detection, and datasets. IEEE Access 2024, 12, 154718–154742. [Google Scholar] [CrossRef]

- Deepfakes github. Available online: https://github.com/deepfakes/faceswap (accessed on 29 October 2018).

- Thies, J.; Zollhöfer, M.; Stamminger, M.; Theobalt, C.; Nießner, M. Face2face: Real-time face capture and reenactment of RGB videos. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 2387–2395. [Google Scholar]

- Korshunov, P.; Marcel, S. Deepfakes: A new threat to face recognition? Assessment and detection. arXiv 2018, arXiv:1812.08685. [Google Scholar]

- Li, Y.; Chang, M.-C.; Lyu, S. In ictu oculi: Exposing AI created fake videos by detecting eye blinking. In Proceedings of the IEEE International Workshop on Information Forensics and Security, Hong Kong, China, 10–13 December 2018; pp. 1–7. [Google Scholar]

- Rossler, A.; Cozzolino, D.; Verdoliva, L.; Riess, C.; Thies, J.; Niessner, M. FaceForensics++: Learning to Detect Manipulated Facial Images. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Korea (South), 27 October–2 November 2019; pp. 1–11. [Google Scholar]

- Zi, B.; Chang, M.; Chen, J.; Ma, X.; Jiang, Y.-G. Wilddeepfake: A challenging real-world dataset for deepfake detection. In Proceedings of the 28th ACM International Conference on Multimedia, Seattle, WA, USA, 12–16 October 2020; pp. 2382–2390. [Google Scholar]

- Dolhansky, B.; Bitton, J.; Pflaum, B.; Lu, J.; Howes, R.; Wang, M.; Ferrer, C.C. The deepfake detection challenge (DFDC) dataset. arXiv 2020, arXiv:2006.07397. [Google Scholar]

- Contributing Data to Deepfake Detection Research. Available online: https://research.google/blog/contributing-data-to-deepfake-detection-research (accessed on 24 September 2019).

- Jiang, L.; Li, R.; Wu, W.; Qian, C.; Loy, C.C. Deeperforensics-1.0: A large-scale dataset for real-world face forgery detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 2886–2895. [Google Scholar]

- Li, Y.; Yang, X.; Sun, P.; Qi, H.; Lyu, S. Celeb-df: A large-scale challenging dataset for deepfake forensics. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 3204–3213. [Google Scholar]

- Kwon, P.; You, J.; Nam, G.; Park, S.; Chae, G. Kodf: A large-scale korean deepfake detection dataset. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, Canada, 10–17 October 2021; pp. 10724–10733. [Google Scholar]

- Trung-Nghia, L.; Nguyen, H.H.; Yamagishi, J.; Echizen, I. Openforensics: Large-scale challenging dataset for multi-face forgery detection and segmentation in-the-wild. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, Canada, 10–17 October 2021; pp. 10097–10107. [Google Scholar]

- Zhou, T.; Wang, W.; Liang, Z.; Shen, J. Face forensics in the wild. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 5774–5784. [Google Scholar]

- Jia, S.; Li, X.; Lyu, S. Model attribution of face-swap deepfake videos. In Proceedings of the IEEE International Conference on Image Processing, Bordeaux, France, 16–19 October 2022; pp. 2356–2360. [Google Scholar]

- Narayan, K.; Agarwal, H.; Thakral, K.; Mittal, S.; Vatsa, M.; Singh, R. Df-platter: Multi-face heterogeneous deepfake dataset. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, Canada, 18–22 June 2023; pp. 9739–9748. [Google Scholar]

- Bhattacharyya, C.; Wang, H.; Zhang, F.; Kim, S.; Zhu, X. Diffusion deepfake. arXiv 2024, arXiv:2404.01579. [Google Scholar]

- Marra, F.; Gragnaniello, D.; Cozzolino, D.; Verdoliva, L. Detection of GAN-generated fake images over social networks. In Proceedings of the IEEE Conference on Multimedia Information Processing and Retrieval, Miami, FL, USA, 10–12 April 2018; pp. 384–389. [Google Scholar]

- Hsu, C.C.; Lee, C.Y.; Zhuang, Y.X. Learning to detect fake face images in the wild. In Proceedings of the International Symposium on Computer, Consumer and Control, Taiwan, China, 6–8 December 2018; pp. 388–391. [Google Scholar]

- Yu, N.; Davis, L.S.; Fritz, M. Attributing fake images to GANs: Learning and analyzing GAN fingerprints. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Korea (South), 27 October–2 November 2019; pp. 7556–7566. [Google Scholar]

- Zhuang, Y.X.; Hsu, C.C. Detecting generated image based on a coupled network with two-step pairwise learning. In Proceedings of the IEEE International Conference on Image Processing, Taiwan, China, 22–25 September 2019; pp. 3212–3216. [Google Scholar]

- Marra, F.; Gragnaniello, D.; Verdoliva, L.; Poggi, G. Do GANs leave artificial fingerprints? In Proceedings of the IEEE Conference on Multimedia Information Processing and Retrieval, San Jose, CA, USA, 28–30 March 2019; pp. 506–511. [Google Scholar]

- Marra, F.; Saltori, C.; Boato, G.; Verdoliva, L. Incremental learning for the detection and classification of GAN-generated images. In Proceedings of the IEEE International Workshop on Information Forensics and Security, Delft, The Netherlands, 9–12 December 2019; pp. 1–6. [Google Scholar]

- Wang, S.Y.; Wang, O.; Zhang, R.; Owens, A.; Efros, A.A. CNN-generated images are surprisingly easy to spot... for now. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 8695–8704. [Google Scholar]

- Jeon, H.; Bang, Y.; Kim, J.; Woo, S.S. T-GD: Transferable GAN-generated images detection framework. In Proceedings of the International Conference on Machine Learning, Vienna, Austria, 12–18 July 2020; pp. 4746–4761. [Google Scholar]

- Chai, L.; Bau, D.; Lim, S.N.; Isola, P. What makes fake images detectable? Understanding properties that generalize. In Proceedings of the European Conference on Computer Vision, Glasgow, UK, 23–28 August 2020; pp. 103–120. [Google Scholar]

- Mi, Z.; Jiang, X.; Sun, T.; Xu, K. GAN-generated image detection with self-attention mechanism against GAN generator defect. IEEE J. Sel. Top. Signal Process. 2020, 14, 969–981. [Google Scholar] [CrossRef]

- He, Y.; Yu, N.; Keuper, M.; Fritz, M. Beyond the spectrum: Detecting deepfakes via re-synthesis. In Proceedings of the International Joint Conference on Artificial Intelligence, Montreal, Canada, 21–26 August 2021; pp. 2534–2541. [Google Scholar]

- Li, W.; He, P.; Li, H.; Wang, H.; Zhang, R. Detection of GAN-generated images by estimating artifact similarity. IEEE Signal Process. Lett. 2021, 29, 862–866. [Google Scholar] [CrossRef]

- Girish, S.; Suri, S.; Rambhatla, S.S.; Shrivastava, A. Towards discovery and attribution of open-world GAN generated images. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, Canada, 11–17 October 2021; pp. 14094–14103. [Google Scholar]

- Liu, B.; Yang, F.; Bi, X.; Xiao, B.; Li, W.; Gao, X. Detecting generated images by real images. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 23–27 October 2022; pp. 95–110. [Google Scholar]

- Jeong, Y.; Kim, D.; Ro, Y.; Kim, P.; Choi, J. Fingerprintnet: Synthesized fingerprints for generated image detection. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 23–27 October 2022; pp. 76–94. [Google Scholar]

- Zhang, M.; Wang, H.; He, P.; Malik, A.; Liu, H. Improving GAN-generated image detection generalization using unsupervised domain adaptation. In Proceedings of the IEEE International Conference on Multimedia and Expo, Taiwan, China, 18–22 July 2022; pp. 1–6. [Google Scholar]

- Ju, Y.; Jia, S.; Ke, L.; Xue, H.; Nagano, K.; Lyu, S. Fusing global and local features for generalized AI-synthesized image detection. In Proceedings of the IEEE International Conference on Image Processing, Bordeaux, France, 16–19 October 2022; pp. 3465–3469. [Google Scholar]

- Mandelli, S.; Bonettini, N.; Bestagini, P.; Tubaro, S. Detecting GAN-generated images by orthogonal training of multiple CNNs. In Proceedings of the IEEE International Conference on Image Processing, Bordeaux, France, 16–19 October 2022; pp. 3091–3095. [Google Scholar]

- Tan, C.; Zhao, Y.; Wei, S.; Gu, G.; Wei, Y. Learning on gradients: Generalized artifacts representation for GAN-generated images detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, Canada, 17–24 June 2023; pp. 12105–12114. [Google Scholar]

- Ojha, U.; Li, Y.; Lee, Y.J. Towards universal fake image detectors that generalize across generative models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, Canada, 17–24 June 2023; pp. 24480–24489. [Google Scholar]

- Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; Krueger, G.; Sutskever, I. Learning transferable visual models from natural language supervision. In Proceedings of the International Conference on Machine Learning, Vienna, Austria, 18–24 July 2021; pp. 8748–8763. [Google Scholar]

- Wang, Z.; Bao, J.; Zhou, W.; Wang, W.; Hu, H.; Chen, H.; Li, H. Dire for diffusion-generated image detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Paris, France, 1–6 October 2023; pp. 22445–22455. [Google Scholar]

- Xu, Q.; Wang, H.; Meng, L.; Mi, Z.; Yuan, J.; Yan, H. Exposing fake images generated by text-to-image diffusion models. Pattern Recognit. Lett. 2023, 176, 76–82. [Google Scholar] [CrossRef]

- Ju, Y.; Jia, S.; Cai, J.; Guan, H.; Lyu, S. GLFF: Global and local feature fusion for AI-synthesized image detection. IEEE Trans. Multimed. 2024, 26, 4073–4085. [Google Scholar] [CrossRef]

- Zhang, L.; Chen, H.; Hu, S.; Zhu, B.; Lin, C.S.; Wu, X.; Hu, J.R.; Wang, X. X-Transfer: A transfer learning-based framework for GAN-generated fake image detection. In Proceedings of the International Joint Conference on Neural Networks, Yokohama, Japan, 30 June–5 July 2024; pp. 1–8. [Google Scholar]

- Tan, C.; Zhao, Y.; Wei, S.; Gu, G.; Liu, P.; Wei, Y. Rethinking the up-sampling operations in CNN-based generative network for generalizable deepfake detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 28130–28139. [Google Scholar]

- Lim, Y.; Lee, C.; Kim, A.; Etzioni, O. DistilDIRE: A small, fast, cheap and lightweight diffusion synthesized deepfake detection. In Proceedings of the International Conference on Machine Learning, Vienna, Austria, 21–27 July 2024; pp. 1–6. [Google Scholar]

- Yan, Z.; Luo, Y.; Lyu, S.; Liu, Q.; Wu, B. Transcending forgery specificity with latent space augmentation for generalizable deepfake detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 8984–8994. [Google Scholar]

- Wang, Z.; Sehwag, V.; Chen, C.; Lyu, L.; Metaxas, D.N.; Ma, S. How to trace latent generative model generated images without artificial watermark? In Proceedings of the International Conference on Machine Learning, Vienna, Austria, 21–27 July 2024; pp. 51396–51414. [Google Scholar]

- Chen, B.; Zeng, J.; Yang, J.; Yang, R. DRCT: Diffusion reconstruction contrastive training towards universal detection of diffusion generated images. In Proceedings of the International Conference on Machine Learning, Vienna, Austria, 21–27 July 2024; pp. 7621–7639. [Google Scholar]

- Sinitsa, S.; Fried, O. Deep image fingerprint: Towards low budget synthetic image detection and model lineage analysis. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, Waikoloa, HI, USA, 3–8 January 2024; pp. 4067–4076. [Google Scholar]

- He, Z.; Chen, P.Y.; Ho, T.Y. RIGID: A training-free and model-agnostic framework for robust AI-generated image detection. arXiv 2024, arXiv:2405.20112. [Google Scholar]

- Liang, Z.; Wang, R.; Liu, W.; Zhang, Y.; Yang, W.; Wang, L.; Wang, X. Let real images be as a judger, spotting fake images synthesized with generative models. arXiv 2024, arXiv:2403.16513. [Google Scholar]

- Chen, J.; Yao, J.; Niu, L. A single simple patch is all you need for AI-generated image detection. arXiv 2024, arXiv:2402.01123. [Google Scholar]

- Zhang, Y.; Xu, X. Diffusion noise feature: Accurate and fast generated image detection. arXiv 2023, arXiv:2312.02625. [Google Scholar]

- Yang, Y.; Qian, Z.; Zhu, Y.; Wu, Y.D. Scaling up deepfake detection by learning from discrepancy. arXiv 2024, arXiv:2404.04584. [Google Scholar]

- He, P.; Li, H.; Wang, H. Detection of fake images via the ensemble of deep representations from multi color spaces. In Proceedings of the IEEE International Conference on Image Processing, Taiwan, China, 22–25 September 2019; pp. 2299–2303. [Google Scholar]

- Chandrasegaran, K.; Tran, N.T.; Binder, A.; Cheung, N.M. Discovering transferable forensic features for CNN-generated images detection. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 23–27 October 2022; pp. 671–689. [Google Scholar]

- Uhlenbrock, L.; Cozzolino, D.; Moussa, D.; Verdoliva, L.; Riess, C.; Cheung, N.M. Did you note my palette? Unveiling synthetic images through color statistics. In Proceedings of the ACM Workshop on Information Hiding and Multimedia Security, Baiona, Spain, 24–26 June 2024; pp. 47–52. [Google Scholar]

- Qiao, T.; Chen, Y.; Zhou, X.; Shi, R.; Shao, H.; Shen, K.; Luo, X. Csc-net: Cross-color spatial co-occurrence matrix network for detecting synthesized fake images. IEEE Trans. Cognit. Dev. Syst. 2024, 16, 369–379. [Google Scholar] [CrossRef]

- Liu, Z.; Qi, X.; Torr, P.H.S. Global texture enhancement for fake face detection in the wild. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 8060–8069. [Google Scholar]

- Yang, J.; Li, A.; Xiao, S.; Lu, W.; Gao, X. MTD-Net: Learning to detect deepfakes images by multi-scale texture difference. IEEE Trans. Inf. Forensic Secur. 2021, 16, 4234–4245. [Google Scholar] [CrossRef]

- Zhong, N.; Xu, Y.; Qian, Z.; Zhang, X. Rich and poor texture contrast: A simple yet effective approach for AI-generated image detection. arXiv 2023, arXiv:2311.12397. [Google Scholar]

- Zhang, Y.; Zhu, N.; Zhang, X.; Wang, K. Computer-generated image detection based on deep LBP network. In Proceedings of the International Conference on Computer Application and Information Security, Wuhan, China, 20–22 December 2024; pp. 932–939. [Google Scholar]

- Lorenz, P.; Durall, R.L.; Keuper, J. Detecting images generated by deep diffusion models using their local intrinsic dimensionality. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Paris, France, 2–6 October 2023; pp. 448–459. [Google Scholar]

- Lin, M.; Shang, L.; Gao, X. Enhancing interpretability in AI-generated image detection with genetic programming. In Proceedings of the IEEE International Conference on Data Mining Workshops, Shanghai, China, 1–4 December 2023; pp. 371–378. [Google Scholar]

- Sarkar, A.; Mai, H.; Mahapatra, A.; Lazebnik, S.; Forsyth, D.A.; Bhattad, A. Shadows don’t lie and lines can’t bend! Generative models don’t know projective geometry…for now. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 28140–28149. [Google Scholar]

- Cozzolino, D.; Poggi, G.; Nießner, M.; Verdoliva, L. Zero-shot detection of AI-generated images. In Proceedings of the European Conference on Computer Vision, Milan, Italy, 29 September–4 October 2024; pp. 54–72. [Google Scholar]

- Tan, C.; Liu, P.; Tao, R.; Liu, H.; Zhao, Y.; Wu, B.; Wei, Y. Data-independent operator: A training-free artifact representation extractor for generalizable deepfake detection. arXiv 2024, arXiv:2403.06803. [Google Scholar]

- Zhang, X.; Karaman, S.; Chang, S.F. Detecting and simulating artifacts in GAN fake images. In Proceedings of the IEEE International Workshop on Information Forensics and Security, Delft, The Netherlands, 9–12 December 2019; pp. 1–6. [Google Scholar]

- Frank, J.; Eisenhofer, T.; Schönherr, L.; Fischer, A.; Kolossa, D.; Holz, T. Leveraging frequency analysis for deep fake image recognition. In Proceedings of the International Conference on Machine Learning, Vienna, Austria, 12–18 July 2020; pp. 3247–3258. [Google Scholar]

- Durall, R.; Keuper, M.; Keuper, J. Watch your up-convolution: CNN based generative deep neural networks are failing to reproduce spectral distributions. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 7890–7899. [Google Scholar]

- Dzanic, T.; Shah, K.; Witherden, F. Fourier spectrum discrepancies in deep network generated images. In Proceedings of the 34th International Conference on Neural Information Processing Systems, Online, 6–12 December 2020; pp. 3022–3032. [Google Scholar]

- Bonettini, N.; Bestagini, P.; Milani, S.; Tubaro, S. On the use of Benford’s law to detect GAN-generated images. In Proceedings of the International Conference on Pattern Recognition, Taiwan, China, 18–21 July 2021; pp. 5495–5502. [Google Scholar]

- Chandrasegaran, K.; Tran, N.T.; Cheung, N.M. A closer look at Fourier spectrum discrepancies for CNN-generated images detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 19–25 June 2021; pp. 7200–7209. [Google Scholar]

- Dong, C.; Kumar, A.; Liu, E. Think twice before detecting GAN-generated fake images from their spectral domain imprints. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 7865–7874. [Google Scholar]

- Corvi, R.; Cozzolino, D.; Zingarini, G.; Poggi, G.; Nagano, K.; Verdoliva, L. On the detection of synthetic images generated by diffusion models. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing, Rhodes Island, Greece, 4–10 June 2023; pp. 1–5. [Google Scholar]

- Corvi, R.; Cozzolino, D.; Poggi, G.; Nagano, K.; Verdoliva, L. Intriguing properties of synthetic images: From generative adversarial networks to diffusion models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Vancouver, Canada, 18–22 June 2023; pp. 973–982. [Google Scholar]

- Bammey, Q. Synthbuster: Towards detection of diffusion model generated images. IEEE Open J. Signal Process. 2024, 5, 1–9. [Google Scholar] [CrossRef]

- Pontorno, O.; Guarnera, L.; Battiato, S. On the exploitation of DCT-traces in the generative-AI domain. In Proceedings of the IEEE International Conference on Image Processing, Abu Dhabi, United Arab Emirates, 27–30 October 2024; pp. 3806–3812. [Google Scholar]

- Tan, C.; Zhao, Y.; Wei, S.; Gu, G.; Liu, P.; Wei, Y. Frequency-aware deepfake detection: Improving generalizability through frequency space learning. In Proceedings of the AAAI Conference on Artificial Intelligence, Vancouver, Canada, 20–27 February 2024; Volume 38, pp. 5052–5060. [Google Scholar]

- Doloriel, C.T.; Cheung, N.-M. Frequency masking for universal deepfake detection. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing, Seoul, Korea (South), 14–19 April 2024; pp. 13466–13470. [Google Scholar]

- Weng, J. Local frequency analysis for diffusion-generated image detection. In Proceedings of the International Conference on Image Processing and Artificial Intelligence, Suzhou, China, 19–21 April 2024; pp. 66–73. [Google Scholar]

- Yu, Y.; Ni, R.; Li, W.; Zhao, Y. Detection of AI-manipulated fake faces via mining generalized features. ACM Trans. Multimed. Comput. Commun. Appl. 2022, 18, 94. [Google Scholar] [CrossRef]

- Liu, C.; Zhu, T.; Zhao, Y.; Zhang, J.; Zhou, W. Disentangling different levels of GAN fingerprints for task-specific forensics. Comput. Stand. Interfaces 2024, 89, 103825. [Google Scholar] [CrossRef]

- Luo, Y.; Du, J.; Yan, K.; Ding, S. LaRE2: Latent reconstruction error based method for diffusion-generated image detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 17006–17015. [Google Scholar]

- Lanzino, R.; Fontana, F.; Diko, A.; Marini, M.R.; Cinque, L. Faster than lies: Real-time deepfake detection using binary neural networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 3771–3780. [Google Scholar]

- Wißmann, A.; Zeiler, S.; Nickel, R.M.; Kolossa, D. Whodunit: Detection and attribution of synthetic images by leveraging model-specific fingerprints. In Proceedings of the ACM International Workshop on Multimedia AI against Disinformation, Phuket, Thailand, 10–13 June 2024; pp. 65–72. [Google Scholar]

- Gallagher, J.; Pugsley, W. Development of a dual-input neural model for detecting AI-generated imagery. arXiv 2024, arXiv:2406.13688. [Google Scholar]

- Meng, Z.; Peng, B.; Dong, J.; Tan, T.; Cheng, H. Artifact feature purification for cross-domain detection of AI-generated images. Comput. Vis. Image Underst. 2024, 247, 104078. [Google Scholar] [CrossRef]

- Xu, Q.; Jiang, X.; Sun, T.; Wang, H.; Meng, L.; Yan, H. Detecting artificial intelligence-generated images via deep trace representations and interactive feature fusion. Inf. Fusion 2024, 112, 102578. [Google Scholar] [CrossRef]

- Leporoni, G.; Maiano, L.; Papa, L.; Amerini, I. A guided-based approach for deepfake detection: RGB-depth integration via features fusion. Pattern Recognit. Lett. 2024, 112, 99–105. [Google Scholar] [CrossRef]

- Yan, S.; Li, O.; Cai, J.; Hao, Y.; Jiang, X.; Hu, Y.; Xie, W. A sanity check for AI-generated image detection. arXiv 2024, arXiv:2406.19435. [Google Scholar]

- Sha, Z.; Li, Z.; Yu, N.; Zhang, Y. DE-FAKE: Detection and attribution of fake images generated by text-to-image generation models. In Proceedings of the ACM SIGSAC Conference on Computer and Communications Security, Copenhagen, Denmark, 26–30 November 2023; pp. 3418–3432. [Google Scholar]

- Wu, H.; Zhou, J.; Zhang, S. Generalizable synthetic image detection via language-guided contrastive learning. arXiv 2023, arXiv:2305.13800. [Google Scholar]

- Liu, H.; Tan, Z.; Tan, C.; Wei, Y.; Wang, J.; Zhao, Y. Forgery-aware adaptive transformer for generalizable synthetic image detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 10770–10780. [Google Scholar]

- Cazenavette, G.; Sud, A.; Leung, T.; Usman, B. FakeInversion: Learning to detect images from unseen text-to-image models by inverting stable diffusion. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 10759–10769. [Google Scholar]

- Khan, S.A.; Dang-Nguyen, D.-T. CLIPping the deception: Adapting vision-language models for universal deepfake detection. In Proceedings of the International Conference on Multimedia Retrieval, New York, NY, USA, 10–14 June 2024; pp. 1006–1015. [Google Scholar]

- Keita, M.; Hamidouche, W.; Eutamene, H.B.; Hadid, A.; Taleb-Ahmed, A. Bi-LORA: A vision-language approach for synthetic image detection. arXiv 2024, arXiv:2404.01959. [Google Scholar] [CrossRef]

- Keita, M.; Hamidouche, W.; Bougueffa, H.; Hadid, A.; Taleb-Ahmed, A. Harnessing the power of large vision language models for synthetic image detection. arXiv 2024, arXiv:2404.02726. [Google Scholar]

- Sha, Z.; Tan, Y.; Li, M.; Backes, M.; Zhang, Y. ZeroFake: Zero-shot detection of fake images generated and edited by text-to-image generation models. In Proceedings of the ACM SIGSAC Conference on Computer and Communications Security, Salt Lake City, UT, USA, 14–18 October 2024; pp. 4852–4866. [Google Scholar]

- Afchar, D.; Nozick, V.; Yamagishi, J.; Echizen, I. MesoNet: A compact facial video forgery detection network. In Proceedings of the IEEE International Workshop on Information Forensics and Security, Hong Kong, China, 10–13 December 2018; pp. 1–7. [Google Scholar]

- Li, L.; Bao, J.; Zhang, T.; Yang, H.; Chen, D.; Wen, F.; Guo, B. Face x-ray for more general face forgery detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 5000–5009. [Google Scholar]

- Bonettini, N.; Cannas, E.D.; Mandelli, S.; Bondi, L.; Bestagini, P.; Tubaro, S. Video face manipulation detection through ensemble of CNNs. In Proceedings of the 25th International Conference on Pattern Recognition, Milan, Italy, 10–15 January 2021; pp. 5012–5019. [Google Scholar]

- Shiohara, K.; Yamasaki, T. Detecting deepfakes with self-blended images. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 18699–18708. [Google Scholar]

- Chen, L.; Zhang, Y.; Song, Y.; Liu, L.; Wang, J. Self-supervised learning of adversarial example: Towards good generalizations for deepfake detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 18689–18698. [Google Scholar]

- Lin, Y.; Song, W.; Li, B.; Li, Y.; Ni, J.; Chen, H.; Li, Q. Fake it till you make it: Curricular dynamic forgery augmentations towards general deepfake detection. In Proceedings of the European Conference on Computer Vision, Milan, Italy, 29 September–4 October 2024; pp. 104–122. [Google Scholar]

- Guan, W.; Wang, W.; Dong, J.; Peng, B. Improving generalization of deepfake detectors by imposing gradient regularization. IEEE Trans. Inf. Forensic Secur. 2024, 19, 5345–5356. [Google Scholar] [CrossRef]

- Gao, J.; Micheletto, M.; Orru, G.; Concas, S.; Feng, X.; Marcialis, G.L.; Roli, F. Texture and artifact decomposition for improving generalization in deep-learning-based deepfake detection. Eng. Appl. Artif. Intell. 2024, 133, 108450. [Google Scholar] [CrossRef]

- Lu, W.; Liu, L.; Zhang, B.; Luo, J.; Zhao, X.; Zhou, Y.; Huang, J. Detection of deepfake videos using long-distance attention. IEEE Trans. Neural Netw. Learn. Syst. 2024, 35, 9366–9379. [Google Scholar] [CrossRef] [PubMed]

- Zheng, J.; Zhou, Y.; Hu, X.; Tang, Z. Deepfake detection with combined unsupervised-supervised contrastive learning. In Proceedings of the IEEE International Conference on Image Processing, Bordeaux, France, 27–30 October 2024; pp. 787–793. [Google Scholar]

- Bai, W.; Liu, Y.; Zhang, Z.; Zhang, X.; Wang, B.; Peng, C.; Hu, W.; Li, B. Learn from noise: Detecting deepfakes via regional noise consistency. In Proceedings of the International Joint Conference on Neural Networks, Yokohama, Japan, 30 June–5 July 2024; pp. 1–8. [Google Scholar]

- Ma, X.; Tian, J.; Cai, Y.; Chai, Y.; Li, Z.; Dai, J.; Zang, L.; Han, J. HIDD: Human-perception-centric incremental deepfake detection. In Proceedings of the International Joint Conference on Neural Networks, Yokohama, Japan, 30 June–5 July 2024; pp. 1–8. [Google Scholar]

- Lu, L.; Wang, Y.; Zhuo, W.; Zhang, L.; Gao, G.; Guo, Y. Deepfake detection via separable self-consistency learning. In Proceedings of the IEEE International Conference on Image Processing, Abu Dhabi, UAE, 27–30 October 2024; pp. 3264–3270. [Google Scholar]

- Dolhansky, B.; Howes, R.; Pflaum, B.; Baram, N.; Ferrer, C.C. The deepfake detection challenge (DFDC) preview dataset. arXiv 2019, arXiv:1910.08854. [Google Scholar]

- Peng, S.; Cai, M.; Ma, R.; Liu, X. Deepfake detection algorithm for high-frequency components of shallow features. Laser Optoelectron. Prog. 2023, 60, 1015001. [Google Scholar]

- Qian, Y.; Yin, G.; Sheng, L.; Chen, Z.; Shao, J. Thinking in frequency: Face forgery detection by mining frequency-aware clues. In Proceedings of the European Conference on Computer Vision, Glasgow, UK, 23–28 August 2020; pp. 86–103. [Google Scholar]

- Li, J.; Xie, H.; Li, J.; Wang, Z.; Zhang, Y. Frequency-aware discriminative feature learning supervised by single-center loss for face forgery detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 6454–6463. [Google Scholar]

- Gao, J.; Xia, Z.; Marcialis, G.L.; Dang, C.; Dai, J.; Feng, X. Deepfake detection based on high-frequency enhancement network for highly compressed content. Expert Syst. Appl. 2024, 249, 123732. [Google Scholar] [CrossRef]

- Miao, C.; Tan, Z.; Chu, Q.; Liu, H.; Hu, H.; Yu, N. F2Trans: High-frequency fine-grained transformer for face forgery detection. IEEE Trans. Inf. Forensic Secur. 2023, 18, 1039–1051. [Google Scholar] [CrossRef]

- Li, Y.; Zhang, Y.; Yang, H.; Chen, B.; Huang, D. SA3WT: Adaptive wavelet-based transformer with self-paced auto augmentation for face forgery detection. Int. J. Comput. Vis. 2024, 132, 4417–4439. [Google Scholar] [CrossRef]

- Hasanaath, A.A.; Luqman, H.; Katib, R.; Anwar, S. FSBI: Deepfakes detection with frequency enhanced self-blended images. arXiv 2024, arXiv:2406.08625. [Google Scholar] [CrossRef]

- Zhao, Y.; Li, J.; Wang, L. Harmonizing dynamic frequency analysis with attention mechanisms for efficient facial image authenticity detection. In Proceedings of the International Conference on Computer Science and Automation Technology, Shanghai, China, 6–8 October 2023; pp. 348–352. [Google Scholar]

- Wang, B.; Wu, X.; Tang, Y.; Ma, Y.; Shan, Z.; Wei, F. Frequency domain filtered residual network for deepfake detection. Mathematics 2023, 11, 816. [Google Scholar] [CrossRef]

- Wang, F.; Chen, Q.; Jing, B.; Tang, Y.; Song, Z.; Wang, B. Deepfake detection based on the adaptive fusion of spatial-frequency features. Int. J. Intell. Syst. 2024, 2024, 7578036. [Google Scholar] [CrossRef]

- Zhou, J.; Zhao, X.; Xu, Q.; Zhang, P.; Zhou, Z. MDCF-Net: Multi-scale dual-branch network for compressed face forgery detection. IEEE Access 2024, 12, 58740–58749. [Google Scholar] [CrossRef]

- Le, M.B.; Woo, S. ADD: Frequency attention and multi-view based knowledge distillation to detect low-quality compressed deepfake images. In Proceedings of the AAAI Conference on Artificial Intelligence, Vancouver, Canada, 22 February–1 March 2022; pp. 122–130.

- Wang, B.; Wu, X.; Wang, F.; Zhang, Y.; Wei, F.; Song, Z. Spatial-frequency feature fusion based deepfake detection through knowledge distillation. Eng. Appl. Artif. Intell. 2024, 133, 108341. [Google Scholar] [CrossRef]

- Guo, Z.; Jia, Z.; Wang, L.; Wang, D.; Yang, G.; Kasabov, N. Constructing new backbone networks via space-frequency interactive convolution for deepfake detection. IEEE Trans. Inf. Forensic Secur. 2024, 19, 401–413. [Google Scholar] [CrossRef]

- Luo, Y.; Zhang, Y.; Yan, J.; Liu, W. Generalizing face forgery detection with high-frequency features. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 16312–16321. [Google Scholar]

- Zhang, D.; Chen, J.; Liao, X.; Li, F.; Chen, J.; Yang, G. Face forgery detection via multi-feature fusion and local enhancement. IEEE Trans. Circuits Syst. Video Technol. 2024, 34, 8972–8977. [Google Scholar] [CrossRef]

- Fei, J.; Dai, Y.; Yu, P.; Shen, T.; Xia, Z.; Weng, J. Learning second order local anomaly for general face forgery detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 20238–20248. [Google Scholar]

- Dong, F.; Zou, X.; Wang, J.; Liu, X. Contrastive learning-based general deepfake detection with multi-scale RGB frequency clues. J. King Saud Univ.-Comput. Inf. Sci. 2023, 35, 90–99. [Google Scholar] [CrossRef]

- Liu, Z.; Wang, H.; Wang, S. Cross-domain local characteristic enhanced deepfake video detection. In Proceedings of the 16th Asian Conference on Computer Vision, Macau SAR, China, 4–8 December 2023; pp. 196–214. [Google Scholar]

- Concas, S.; La Cava, S.M.; Casula, R.; Orrù, G.; Puglisi, G.; Marcialis, G.L. Quality-based artifact modeling for facial deepfake detection in videos. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Seattle, WA, USA, 17–21 June 2024; pp. 3845–3854. [Google Scholar]

- Pang, G.; Zhang, B.; Teng, Z.; Qi, Z.; Fan, J. MRE-Net: Multi-rate excitation network for deepfake video detection. IEEE Trans. Circuits Syst. Video Technol. 2023, 33, 3663–3676. [Google Scholar] [CrossRef]

- Zhang, R.; He, P.; Li, H.; Wang, S.; Cao, Y. Temporal diversified self-contrastive learning for generalized face forgery detection. IEEE Trans. Circuits Syst. Video Technol. 2024, 34, 12782–12795. [Google Scholar] [CrossRef]

- Yu, Y.; Ni, R.; Yang, S.; Ni, Y.; Zhao, Y.; Kot, A.C. Mining generalized multi-timescale inconsistency for detecting deepfake videos. Int. J. Comput. Vis. 2024, 1–17. [Google Scholar] [CrossRef]

- Wang, Y.; Peng, C.; Liu, D.; Wang, N.; Gao, X. Spatial-temporal frequency forgery clue for video forgery detection in VIS and NIR scenario. IEEE Trans. Circuits Syst. Video Technol. 2023, 33, 7943–7956. [Google Scholar] [CrossRef]

- Wu, J.; Zhang, B.; Li, Z.; Pang, G.; Teng, Z.; Fan, J. Interactive two-stream network across modalities for deepfake detection. IEEE Trans. Circuits Syst. Video Technol. 2023, 33, 6418–6430. [Google Scholar] [CrossRef]

- Güera, D.; Delp, E.J. Deepfake video detection using recurrent neural networks. In Proceedings of the 15th IEEE International Conference on Advanced Video and Signal Based Surveillance, Auckland, New Zealand, 27–30 November 2018; pp. 127–132. [Google Scholar]

- Saikia, P.; Dholaria, D.; Yadav, P.; Patel, V.; Roy, M. A hybrid CNN-LSTM model for video deepfake detection by leveraging optical flow features. In Proceedings of the International Joint Conference on Neural Networks, Padua, Italy, 18–23 July 2022; pp. 1–7. [Google Scholar]

- Chen, B.; Li, T.; Ding, W. Detecting deepfake videos based on spatiotemporal attention and convolutional LSTM. Inf. Sci. 2022, 601, 58–70. [Google Scholar] [CrossRef]

- K, J.; M, A. Safeguarding media integrity: A hybrid optimized deep feature fusion based deepfake detection in videos. Comput. Secur. 2024, 142, 103860. [Google Scholar] [CrossRef]

- Amerini, I.; Caldelli, R. Exploiting prediction error inconsistencies through LSTM-based classifiers to detect deepfake videos. In Proceedings of the ACM Workshop on Information Hiding and Multimedia Security, Denver, CO, USA, 22–24 June 2020; pp. 97–102. [Google Scholar]

- Masi, I.; Killekar, A.; Mascarenhas, R.M.; Gurudatt, S.P.; AbdAlmageed, W. Two-branch recurrent network for isolating deepfakes in videos. In Proceedings of the European Conference on Computer Vision, Glasgow, UK, 23–28 August 2020; pp. 667–684. [Google Scholar]

- Ciamarra, A.; Caldelli, R.; Bimbo, A.D. Temporal surface frame anomalies for deepfake video detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Seattle, WA, USA, 17–21 June 2024; pp. 3837–3844. [Google Scholar]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is all you need. In Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; Volume 30, pp. 1–11. [Google Scholar]

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; Uszkoreit, J.; Houlsby, N. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv 2021, arXiv:2010.11929. [Google Scholar]

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, Canada, 10–17 October 2021; pp. 9992–10002. [Google Scholar]

- Yu, Y.; Ni, R.; Zhao, Y.; Yang, S.; Xia, F.; Jiang, N.; Zhao, G. MSVT: Multiple spatiotemporal views transformer for deepfake video detection. IEEE Trans. Circuits Syst. Video Technol. 2023, 33, 4462–4471. [Google Scholar] [CrossRef]

- Huang, D.; Zhang, Y. Learning meta model for strong generalization deepfake detection. In Proceedings of the International Joint Conference on Neural Networks, Yokohama, Japan, 30 June–5 July 2024; pp. 1–8. [Google Scholar]

- Zhao, C.; Wang, C.; Hu, G.; Chen, H.; Liu, C.; Tang, J. ISTVT: Interpretable spatial-temporal video transformer for deepfake detection. IEEE Trans. Inf. Forensic Secur. 2023, 18, 1335–1348. [Google Scholar] [CrossRef]

- Liu, B.; Liu, B.; Ding, M.; Zhu, T. MeST-former: Motion-enhanced spatiotemporal transformer for generalizable deepfake detection. Neurocomputing 2024, 610, 128588. [Google Scholar] [CrossRef]

- Yue, P.; Chen, B.; Fu, Z. Local region frequency guided dynamic inconsistency network for deepfake video detection. Big Data Min. Anal. 2024, 7, 889–904. [Google Scholar] [CrossRef]

- Zhu, Y.; Zhang, C.; Gao, J.; Sun, X.; Rui, Z.; Zhou, X. High-compressed deepfake video detection with contrastive spatiotemporal distillation. Neurocomputing 2024, 565, 126872. [Google Scholar] [CrossRef]

- Zhang, D.; Xiao, Z.; Li, S.; Lin, F.; Li, J.; Ge, S. Learning natural consistency representation for face forgery video detection. In Proceedings of the European Conference on Computer Vision, Milan, Italy, 29 September–4 October 2024; pp. 407–424. [Google Scholar]

- Choi, J.; Kim, T.; Jeong, Y.; Baek, S.; Choi, J. Exploiting style latent flows for generalizing deepfake video detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 1133–1143. [Google Scholar]

- Tian, K.; Chen, C.; Zhou, Y.; Hu, X. Illumination enlightened spatial-temporal inconsistency for deepfake video detection. In Proceedings of the IEEE International Conference on Multimedia and Expo, Niagara Falls, ON, Canada, 15–19 July 2024; pp. 1–6. [Google Scholar]

- Tu, Y.; Wu, J.; Lu, L.; Gao, S.; Li, M. Face forgery video detection based on expression key sequences. J. King Saud Univ.-Comput. Inf. Sci. 2024, 36, 102142. [Google Scholar] [CrossRef]

- Xu, Y.; Liang, J.; Sheng, L.; Zhang, X.-Y. Learning spatiotemporal inconsistency via thumbnail layout for face deepfake detection. Int. J. Comput. Vis. 2024, 132, 5663–5680. [Google Scholar] [CrossRef]

- Yang, X.; Li, Y.; Lyu, S. Exposing deep fakes using inconsistent head poses. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing, Brighton, UK, 12–17 May 2019; pp. 8261–8265. [Google Scholar]

- Haliassos, A.; Vougioukas, K.; Petridis, S.; Pantic, M. Lips don’t lie: A generalisable and robust approach to face forgery detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 5037–5047. [Google Scholar]

- Demir, I.; Ciftci, U.A. Where do deep fakes look? Synthetic face detection via gaze tracking. In Proceedings of the ACM Symposium on Eye Tracking Research and Applications, Stuttgart, Germany, 25–27 May 2021; pp. 1–6. [Google Scholar]

- Peng, C.; Miao, Z.; Liu, D.; Wang, N.; Hu, R.; Gao, X. Where deepfakes gaze at? Spatial–temporal gaze inconsistency analysis for video face forgery detection. IEEE Trans. Inf. Forensic Secur. 2024, 19, 4507–4517. [Google Scholar] [CrossRef]

- He, Q.; Peng, C.; Liu, D.; Wang, N.; Gao, X. GazeForensics: Deepfake detection via gaze-guided spatial inconsistency learning. Neural Networks 2024, 180, 106636. [Google Scholar] [CrossRef] [PubMed]

- Qi, H.; Guo, Q.; Juefei-Xu, F.; Xie, X.; Ma, L.; Feng, W.; Liu, Y.; Zhao, J. DeepRhythm: Exposing deepfakes with attentional visual heartbeat rhythms. In Proceedings of the 28th ACM International Conference on Multimedia, Seattle, WA, USA, 12–16 October 2020; pp. 4318–4327. [Google Scholar]

- Wu, J.; Zhu, Y.; Jiang, X.; Liu, Y.; Lin, J. Local attention and long-distance interaction of rPPG for deepfake detection. Visual Comput. 2024, 40, 1083–1094. [Google Scholar] [CrossRef]

- Yang, J.; Sun, Y.; Mao, M.; Bai, L.; Zhang, S.; Wang, F. Model-agnostic method: Exposing deepfake using pixel-wise spatial and temporal fingerprints. IEEE Trans. Big Data 2023, 9, 1496–1509. [Google Scholar] [CrossRef]

- Saif, S.; Tehseen, S.; Ali, S.S. Fake news or real? Detecting deepfake videos using geometric facial structure and graph neural network. Technol. Forecast. Soc. Chang. 2024, 205, 123471. [Google Scholar] [CrossRef]

- Zhang, R.; Wang, H.; Liu, H.; Zhou, Y.; Zeng, Q. Generalized face forgery detection with self-supervised face geometry information analysis network. Appl. Soft. Comput. 2024, 166, 112143. [Google Scholar] [CrossRef]

- Guan, H.; Kozak, M.; Robertson, E.; Lee, Y.; Yates, A.N.; Delgado, A.; Zhou, D.; Kheyrkhah, T.; Smith, J.; Fiscus, J. MFC datasets: Large-scale benchmark datasets for media forensic challenge evaluation. In Proceedings of the IEEE Winter Applications of Computer Vision Workshops, Waikoloa, HI, USA, 7–11 January 2019; pp. 63–72. [Google Scholar]

- Ciftci, U.A.; Demir, I.; Yin, L. FakeCatcher: Detection of synthetic portrait videos using biological signals. IEEE Trans. Pattern Anal. Mach. Intell. 2020, 1–17. [Google Scholar] [CrossRef]

- Kong, C.; Chen, B.; Yang, W.; Li, H.; Chen, P.; Wang, S. Appearance matters, so does audio: Revealing the hidden face via cross-modality transfer. IEEE Trans. Circuits Syst. Video Technol. 2022, 32, 423–436. [Google Scholar] [CrossRef]

- Wang, Y.; Peng, C.; Liu, D.; Wang, N.; Gao, X. ForgeryNIR: Deep face forgery and detection in near-infrared scenario. IEEE Trans. Inf. Forensic Secur. 2022, 17, 500–515. [Google Scholar] [CrossRef]

- Agarwal, S.; Farid, H.; El-Gaaly, T.; Lim, S.-N. Detecting deep-fake videos from appearance and behavior. In Proceedings of the IEEE International Workshop on Information Forensics and Security, New York City, NY, USA, 6–11 December 2020; pp. 1–6. [Google Scholar]

- Cozzolino, D.; Rössler, A.; Thies, J.; Nießner, M.; Verdoliva, L. ID-Reveal: Identity-aware deepfake video detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, Canada, 10–17 October 2021; pp. 15088–15097. [Google Scholar]

- Ramachandran, S.; Nadimpalli, A.V.; Rattani, A. An experimental evaluation on deepfake detection using deep face recognition. In Proceedings of the International Carnahan Conference on Security Technology, Pune, India, 11–15 October 2021; pp. 1–6. [Google Scholar]

- Fang, M.; Yu, L.; Song, Y.; Zhang, Y.; Xie, H. IEIRNet: Inconsistency exploiting based identity rectification for face forgery detection. IEEE Trans. Multimed. 2024, 26, 11232–11245. [Google Scholar] [CrossRef]

- Fang, M.; Yu, L.; Xie, H.; Tan, Q.; Tan, Z.; Hussain, A.; Wang, Z.; Li, J.; Tian, Z. STIDNet: Identity-aware face forgery detection with spatiotemporal knowledge distillation. IEEE Trans. Comput. Soc. Syst. 2024, 11, 5354–5366. [Google Scholar] [CrossRef]

- Yu, C.; Zhang, X.; Duan, Y.; Yan, S.; Wang, Z.; Xiang, Y.; Ji, S.; Chen, W. Diff-ID: An explainable identity difference quantification framework for deepfake detection. IEEE Trans. Dependable Secur. Comput. 2024, 21, 5029–5045. [Google Scholar] [CrossRef]

| Dataset | Year | Generator | Fake images (k) | Real images (k) |

|---|---|---|---|---|

| CNNSpot [69] | 2020 | GANs | 362 | 262 |

| DiffusionDB [40] | 2022 | DMs | 14,000 | 0 |

| DE-FAKE [136] | 2023 | DMs | 20 | 60 |

| Artifact [41] | 2023 | GANs, DMs | 1522 | 962 |

| Cifake [42] | 2024 | DMs | 60 | 60 |

| Genimage [43] | 2024 | GANs, DMs, Others | 133 | 1350 |

| Fake2M [44] | 2024 | GANs, DMs, Others | 2000 | 0 |

| Wildfake [45] | 2024 | GANs, DMs, Others | 2577 | 1313 |

| Dataset | Year | Modality | Real/Fake | Source | Generation Technique |

|---|---|---|---|---|---|

| UADFV | 2018 | Video | 49 / 49 | YouTube | FakeApp |

| Deepfake TIMIT | 2018 | Video | 320 / 640 | VidTIMIT | FaceSwap |

| FF++ | 2019 | Video | 1000 / 5000 | YouTube | Deepfakes, Face2Face, NeuralTextures, FaceSwap, FaceShifter |

| DFD [54] | 2019 | Video | 363 / 3068 | Live Action | Deepfakes |

| DFDC | 2020 | Video | 23,654 / 104,500 | Live Action | FaceSwap, NTH, FSGAN, StyleGAN |

| DFo [55] | 2020 | Video | 11,000 / 48,475 | YouTube | FaceSwap |

| CDF-(v1, v2) [56] | 2020 | Video | 590 / 5639 | YouTube | DeepFake |

| WDF | 2020 | Video | 3805 / 3509 | Internet | Internet |

| KoDF [57] | 2021 | Image | 175,776 / 62,166 | Live Action | FaceSwap, DeepFaceLab, FSGAN, FOMM, ATFHP, Wav2Lip |

| OpenForensics [58] | 2021 | Image | 45,473 / 70,325 | Google Open Images | GAN |

| FFIW10k [59] | 2021 | Video | 10,000 / 10,000 | Live Action | FaceSwap, FSGAN, DeepFaceLab |

| DFDM [60] | 2022 | Video | 590 / 6450 | YouTube | FaceSwap |

| DF-Platter [61] | 2023 | Video | 764 / 132,496 | YouTube | FSGAN, FaceSwap, FaceShifter |

| Diffusion Deepfake [62] | 2024 | Image | 94,120 / 112,627 | DiffusionDB | Diffusion Model |

| True Label (rows) / Prediction Label (columns) | Positive | Negative |

|---|---|---|

| Positive | True Positive (TP) | False Negative (FN) |

| Negative | False Positive (FP) | True Negative (TN) |
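
The four confusion-matrix counts above (true positives, false negatives, false positives, true negatives) are usually combined into scalar scores. The sketch below uses the standard textbook definitions of accuracy, precision, recall, and F1. It is an illustration only: the function name `detection_metrics` and the convention of returning 0.0 for an empty denominator are assumptions, and the survey may report additional metrics that are not shown here.

```python
def detection_metrics(tp, fn, fp, tn):
    """Compute standard scores from confusion-matrix counts.

    tp, fn, fp, tn are non-negative integers. Treating "fake" as the
    positive class is an assumption made for this illustration.
    """
    total = tp + fn + fp + tn
    # Fraction of all samples that were classified correctly.
    accuracy = (tp + tn) / total if total else 0.0
    # Of the samples predicted positive, how many really are positive.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Of the truly positive samples, how many were detected.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # Harmonic mean of precision and recall.
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


if __name__ == "__main__":
    # Hypothetical counts, chosen only to exercise the function.
    print(detection_metrics(tp=8, fn=2, fp=1, tn=9))
```

Returning 0.0 when a denominator is zero avoids a division error on degenerate inputs, such as a detector that never predicts the positive class. Other conventions, such as returning NaN, are equally defensible.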

| Ref. | Year | Method | Advantage | Deficiency |

|---|---|---|---|---|

| Yu et al. [65] | 2019 | Fingerprint attribution | Trace the image back to the specific generative model | More complex computation when there are many models |

| Wang et al. [69] | 2020 | Data augmentation | Generalizes well across GAN-generated images | Poor generalization ability on diffusion models |

| Jeon et al. [70] | 2020 | Teacher-student model | Transferable model and good detection accuracy | Poor generalization ability on diffusion models |

| Chai et al. [71] | 2020 | Image patch | Extracting local features of the image | Ignoring global information |

| Mi et al. [72] | 2020 | Self-attention mechanism | Focus on the artifact regions of the features | Some generative models do not use upsampling operations |

| Girish et al. [75] | 2021 | Fingerprint attribution | Good generalization to unseen GANs | As the number of generative models increases, the computational cost grows |

| Liu et al. [76] | 2022 | Noise pattern | Good generalization | The noise information in compressed images affects detection performance |

| Jeong et al. [77] | 2022 | Fingerprint recognition | Only real images are needed for training, avoiding data dependency | As the number of generative models increases, the computational load grows |

| Tan et al. [81] | 2023 | Gradient feature | Excellent detection performance on GAN-generated images | Poor detection performance on non-GAN generated images |

| Ojha et al. [82] | 2023 | CLIP model | CLIP demonstrates good generalization capability in detecting generated images | The method is simple, and the accuracy is not high |

| Wang et al. [84] | 2023 | Reconstruction error | Performs well on images from diffusion models | Performs poorly on non-diffusion models |

| Tan et al. [88] | 2024 | Pixel correlation | Simple to compute, with good generalization | Relies on upsampling operations, with limitations |

| Lim et al. [89] | 2024 | Reconstruction error | Lightweight network, faster computation | Has limitations for diffusion models |

| Yan et al. [90] | 2024 | Data augmentation | The method can be combined with other networks to improve generalization | It causes the computation time of other networks to increase |

| Chen et al. [92] | 2024 | Image reconstruction | The method can be combined with other detectors | Additional reconstruction dataset is required |

| Ref. | Year | Method | Advantage | Deficiency |

|---|---|---|---|---|

| He et al. [99] | 2019 | Chrominance components | Strong robustness | Limited generalization |

| Chandrasegaran et al. [100] | 2022 | Relevance statistic | Discover that color is a critical feature in universal detectors | Images generated by diffusion models are similar to real images in terms of color |

| Uhlenbrock et al. [101] | 2024 | Color statistics | High accuracy | Not tested on GAN datasets |

| Qiao et al. [102] | 2024 | Co-occurrence matrix | Exhibits strong robustness | The experiment is simple |

| Ref. | Year | Method | Advantage | Deficiency |

|---|---|---|---|---|

| Liu et al. [103] | 2019 | Texture differences | Using texture differences for generated image detection | Limited generalization |

| Yang et al. [104] | 2021 | Multi-scale texture | Extract multi-scale and deep texture information from the image | The network is complex and computationally intensive |

| Zhong et al. [105] | 2023 | Texture contrast | Good generalization ability | Dependent on the high-frequency components of the image |

| Zhang et al. [106] | 2024 | Deep LBP network | Extract depth texture information | The experiment is simple and unable to validate the performance of the method |

| Ref. | Year | Method | Advantage | Deficiency |

|---|---|---|---|---|

| Lorenz et al. [107] | 2023 | Local intrinsic dimensionality | Good performance in diffusion models | Dependent on data augmentation |

| Lin et al. [108] | 2023 | Genetic programming | Can improve accuracy to some extent | Limited generalization ability |

| Sarkar et al. [109] | 2024 | Projective geometry | Shows some degree of generalization | Lacks effective defense against some attacks |

| Cozzolino et al. [110] | 2024 | Coding cost | Good generalization ability | Weak robustness |

| Ref. | Year | Method | Advantage | Deficiency |

|---|---|---|---|---|

| Zhang et al. [112] | 2019 | Frequency artifacts | Detect frequency differences between real and fake images | Limited generalization |

| Frank et al. [113] | 2020 | 2D-DCT | Discover that color is a critical feature in universal detectors | Images generated by diffusion models are similar to real images in terms of color |

| Durall et al. [114] | 2020 | High-frequency Fourier modes | Transferable model and good detection accuracy | Poor generalization ability on diffusion models |

| Corvi et al. [119] | 2023 | Training on DM images | Improves detection of diffusion-model images | Requires continual updating as new generative models emerge |

| Tan et al. [123] | 2024 | Frequency learning | It can learn features unrelated to the generative model, enhancing the model’s generalization ability | High computational cost and time-consuming |

| Doloriel et al. [124] | 2024 | Frequency mask | Less dependence on detector data | The mask size affects the performance of the detector |

| Ref. | Year | Method | Advantage | Deficiency |

|---|---|---|---|---|

| Yu et al. [126] | 2022 | Channel and spectrum difference | Effectively mine intrinsic features | Limited generalization ability |

| Luo et al. [128] | 2024 | Reconstruction error | Able to extract refined features from images | Poor performance on non-diffusion models |

| Lanzino et al. [129] | 2024 | Three types of feature fusion | Capture multiple features of the image with a simple network | Weak resistance to adversarial attacks |

| Xu et al. [133] | 2024 | Deep trace feature fusion | Good generalization performance | Complex network with long computation time |

| Leporoni et al. [134] | 2024 | RGB-depth integration | RGB features capable of extracting depth | Weak resistance to adversarial attacks |

| Ref. | Year | Method | Advantage | Deficiency |
|---|---|---|---|---|
| Wu et al. [137] | 2024 | Contrastive learning | Transforms the synthetic image detection problem into a recognition problem | Text description affects the performance of the detector |
| Liu et al. [138] | 2024 | Forgery-aware adaptive | Strong generalization ability | Computationally intensive and time-consuming |
| Cazenavette et al. [139] | 2024 | Inverting Stable Diffusion | Good detection accuracy | Limited to the context of Stable Diffusion |
| Keita et al. [141] | 2024 | Technical optimizations | Combines BLIP and LoRA to enhance accuracy | Computationally intensive and time-consuming |
| Sha et al. [143] | 2024 | Image reconstruction | Requires no large training data and has good robustness | Mainly focused on diffusion models, with certain limitations |

| Ref. | Year | Key Idea | Backbone | Dataset |
|---|---|---|---|---|
| Shiohara et al. [147] | 2022 | Synthetic data | EfficientNetB4 | FF++, CDF, DFD, DFDCp [157], DFDC, FFIW10k |
| Bai et al. [154] | 2024 | Regional noise inconsistency | Xception | FF++, CDF, DFDC |
| Gao et al. [151] | 2024 | Feature decomposition | Convolution layer | FF++, WDF, CDF, DFDC |
| Lu et al. [152] | 2024 | Long-distance attention | Xception | FF++, CDF |
| Lin et al. [149] | 2024 | Synthetic data, curriculum learning | Transformer | FF++, CDF, DFDCp, DFDC, WDF |

| Ref. | Year | Transform Type | Backbone | Dataset |
|---|---|---|---|---|
| Qian et al. [159] | 2020 | DCT | Xception | FF++ |
| Li et al. [160] | 2021 | DCT | Xception | FF++ |
| Miao et al. [162] | 2023 | DWT | Transformer | FF++, CDF, DFDC, Deepfake TIMIT |
| Zhao et al. [165] | 2023 | FFT | Convolution and attention layer | FF++-Deepfakes, FFHQ, CelebA |
| Gao et al. [161] | 2024 | DCT, DWT | Convolution and fusion layer | FF++, CDF, OpenForensics |
| Hasanaath et al. [164] | 2024 | DWT | EfficientNetB5 | FF++, CDF |
| Li et al. [163] | 2024 | DWT | Transformer | FF++, CDF, DFDC, Deepfake TIMIT, DFo |

| Ref. | Year | Information Source | Fusion Method | Backbone | Dataset |
|---|---|---|---|---|---|
| Luo et al. [172] | 2021 | RGB, SRM noise | Concatenation | Xception | FF++, DFD, DFDC, CDF, DFo |
| Fei et al. [174] | 2022 | RGB, SRM noise | Attention-guided | ResNet-18 | FF++, CDF, DFD |
| Wang et al. [166] | 2023 | RGB, DWT | Concatenation | Xception | FF++, CDF, UADFV |
| Guo et al. [171] | 2024 | RGB, High-frequency | Interaction, concatenation | ResNet-26 | HFF, FF++, DFDC, CDF |
| Wang et al. [170] | 2024 | RGB, DCT | Attention-guided | Xception | FF++, CDF |
| Wang et al. [167] | 2024 | RGB, DWT, Residual feature | Attention-guided | ResNet-34 | FF++, CDF, UADFV, DFD |
| Zhang et al. [173] | 2024 | RGB, SRM noise | Attention-guided | EfficientNet | FF++, DFDC, CDF, WDF |
| Zhou et al. [168] | 2024 | RGB, FFT | Multihead-attention | EfficientNetB4 | FF++, CDF, WDF |

| Ref. | Year | Improved Method | Backbone | Dataset |
|---|---|---|---|---|
| Liu et al. [176] | 2023 | Local attention augmentation | 3D ResNet-50 | FF++, CDF, DFDC |
| Pang et al. [178] | 2023 | Sampling strategy | ResNet-34 | FF++, CDF, DFDC, WDF |
| Wang et al. [181] | 2023 | Attention augmentation | ResNet-50 | FF++, CDF, WDF, DeepfakeNIR |
| Concas et al. [177] | 2024 | Quality feature | Convolution layer | FF++ |
| Yu et al. [180] | 2024 | Multilevel spatio-temporal features | ResNet-50, GCN | FF++, DFD, DFDC, CDF, DFo |
| Zhang et al. [179] | 2024 | Sampling strategy | EfficientNetB3 | FF++, CDF, DFDC, WDF |

| Ref. | Year | Network Architecture Design | Input Design | Dataset |
|---|---|---|---|---|
| Yu et al. [193] | 2023 | ✕ | ✔ | FF++, DFD, DFDC, DFo, CDF, WDF |
| Zhao et al. [195] | 2023 | ✔ | ✕ | FF++, CDF, DFDC |
| Choi et al. [200] | 2024 | ✕ | ✔ | FF++, DFo, CDF, DFD |
| Liu et al. [196] | 2024 | ✕ | ✔ | DFGC, FF++, DFo, CDF, DFD, UADFV |
| Tian et al. [201] | 2024 | ✕ | ✔ | FF++, CDF, DFDC |
| Tu et al. [202] | 2024 | ✕ | ✔ | FF++ |
| Xu et al. [203] | 2024 | ✔ | ✔ | FF++, CDF, DFDC, DFo, WDF, KoDF, DLB |
| Yue et al. [197] | 2024 | ✕ | ✔ | FF++, CDF, DFDC, DiffFace, DiffSwap |

| Ref. | Year | Biosignal Type | Backbone | Dataset |
|---|---|---|---|---|
| Yang et al. [204] | 2019 | Head poses | SVM | UADFV, DARPA GAN [214] |
| Qi et al. [209] | 2020 | rPPG | DNN, GRU | FF++, DFDCp |
| Demir and Ciftci [206] | 2021 | Eye, gaze | DNN | FF++, DF Datasets [215], CDF, DFo |
| Haliassos et al. [205] | 2021 | Mouth movements | ResNet-18, MS-TCN | FF++, CDF, DFDC |
| Yang et al. [211] | 2023 | CPPG | ACBlock-based DenseNet | FF++, FF, DFDC, CDF, FakeAVCeleb [216] |
| Peng et al. [207] | 2024 | Gaze | ResNet-34, ResNet-50, Res2Net-101 | FF++, WDF, CDF, DFDCp |
| He et al. [208] | 2024 | Gaze | ResNet-18 | FF++, WDF, CDF |
| Saif et al. [212] | 2024 | Facial landmarks | GCN | FF++, CDF, DFDC |
| Wu et al. [210] | 2024 | rPPG | MLA, Transformer | FF++, CDF |
| Zhang et al. [213] | 2024 | Facial landmarks, informative regions | GCN | FF++, CDF, WDF, DFDCp, DFD, DFo, ForgeryNIR [217] |

| Ref. | Year | Key Idea | FF++ | CDF | DFDCp |
|---|---|---|---|---|---|
| Cozzolino et al. [219] | 2021 | Adversarial training | - | 0.840 | 0.910 |
| Fang et al. [221] | 2024 | Identity bias rectification | 0.996 | 0.945 | 0.983 |
| Fang et al. [222] | 2024 | Multi-modal knowledge distillation | 0.958 | 0.921 | 0.994 |
| Yu et al. [223] | 2024 | Attribute alignment | 0.991 | 0.911 | - |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).