Submitted: 17 January 2025

Posted: 20 January 2025


Abstract

Keywords:

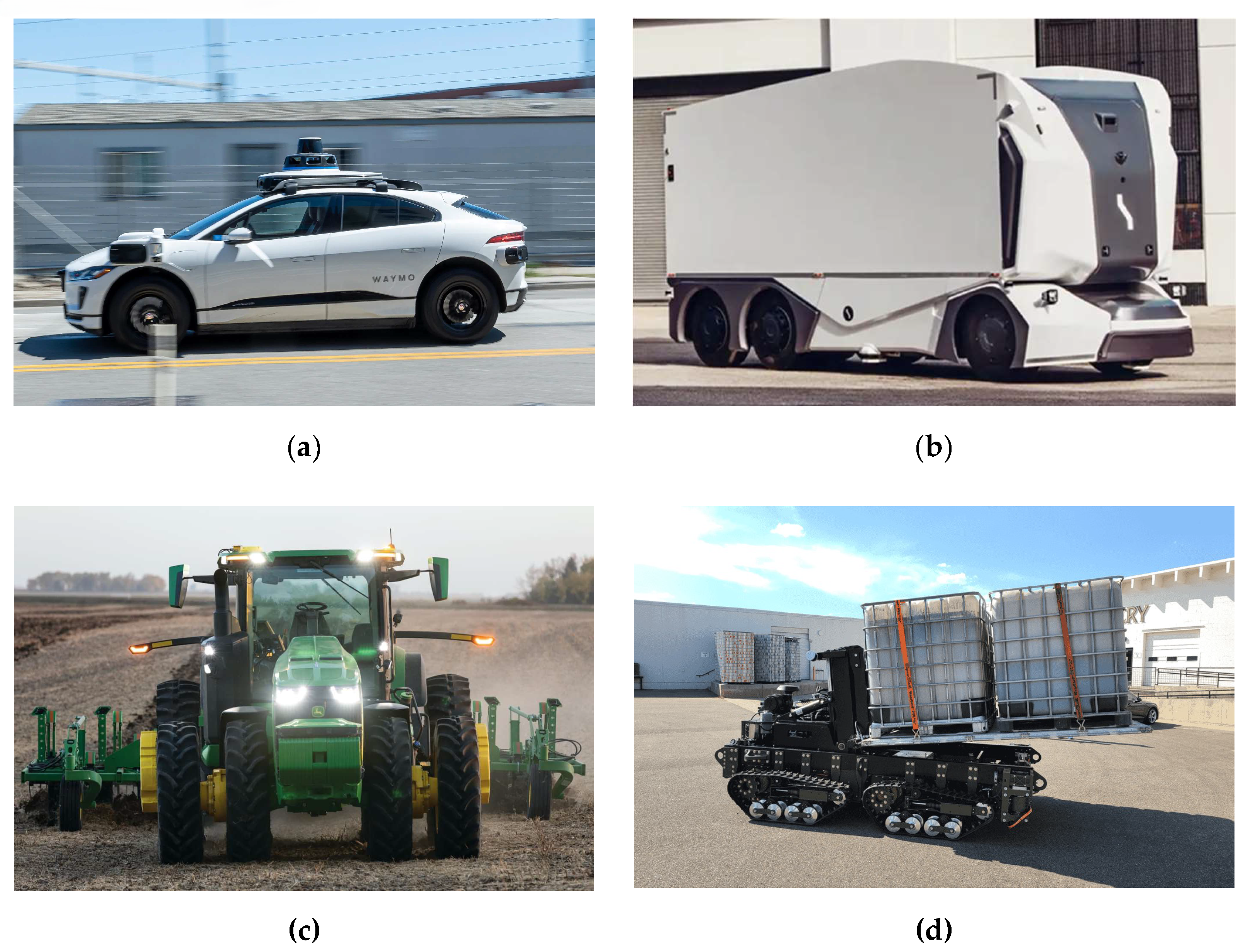

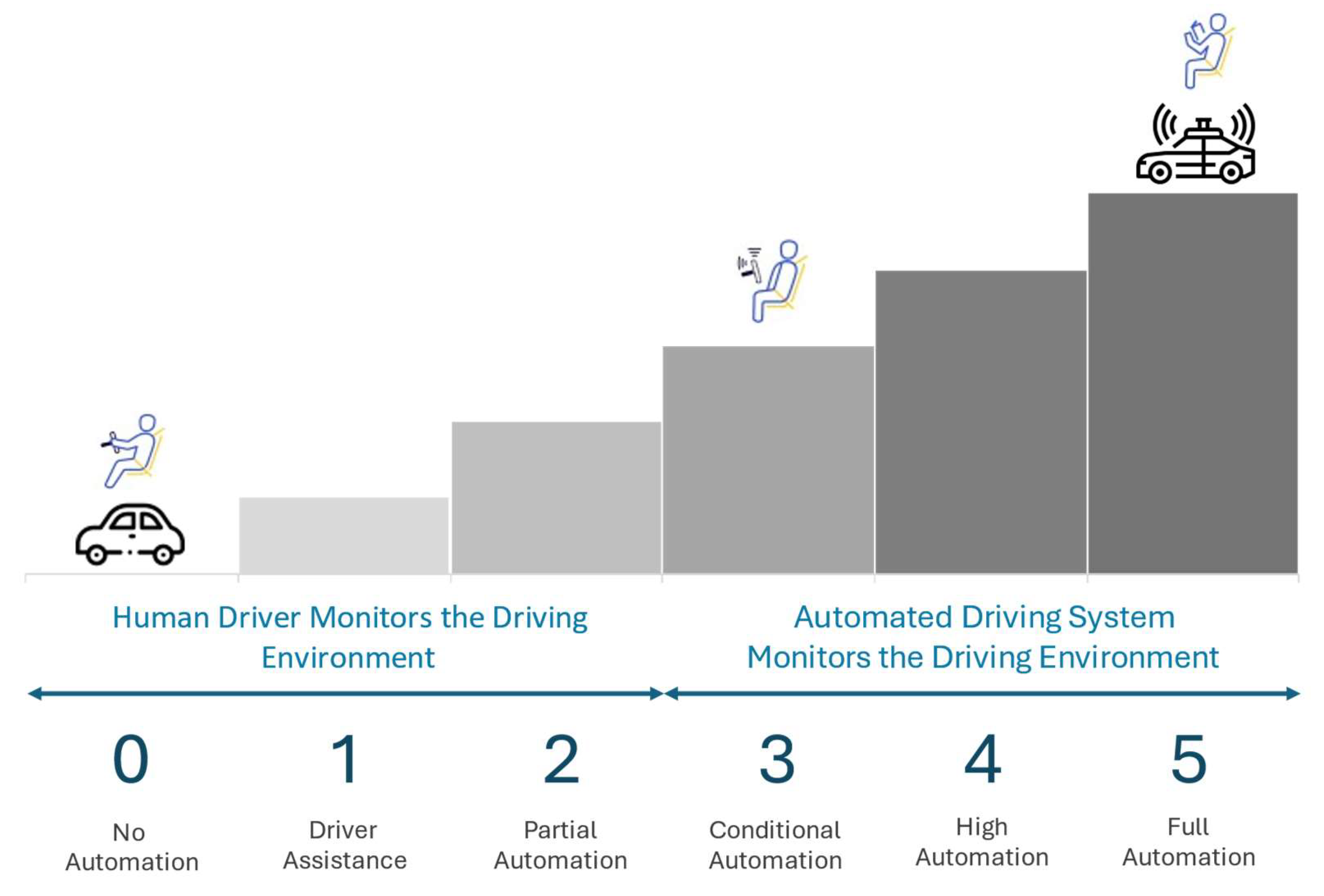

1. Introduction

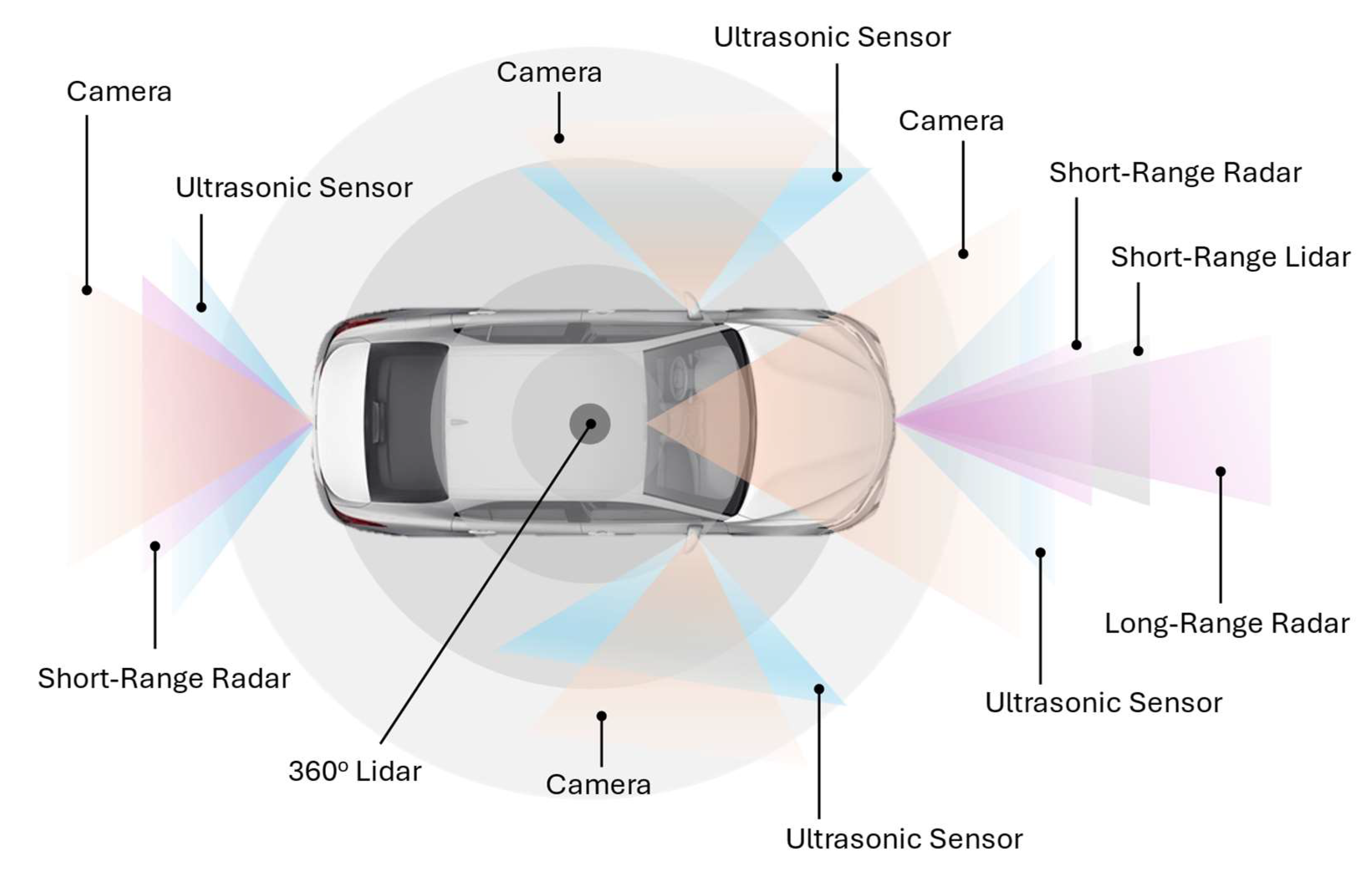

2. Multi-Sensor Fusion in Autonomous Vehicles

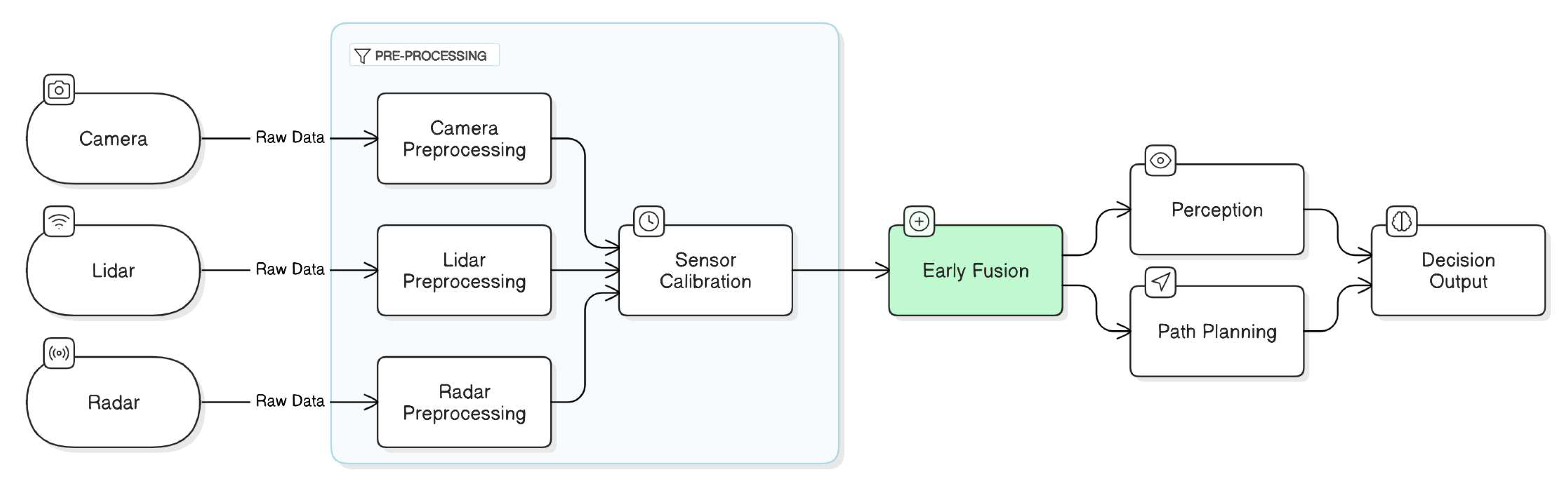

2.1. Multi-Sensor Fusion Approaches

2.1.1. Low-Level Fusion

2.1.2. Mid-Level Fusion

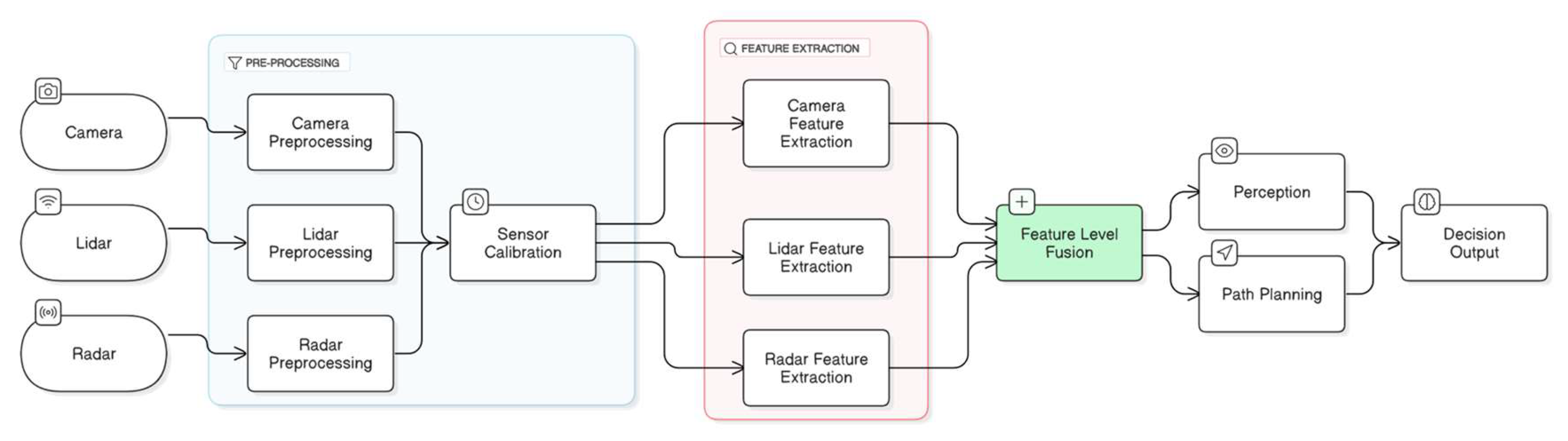

2.1.3. High-Level Fusion

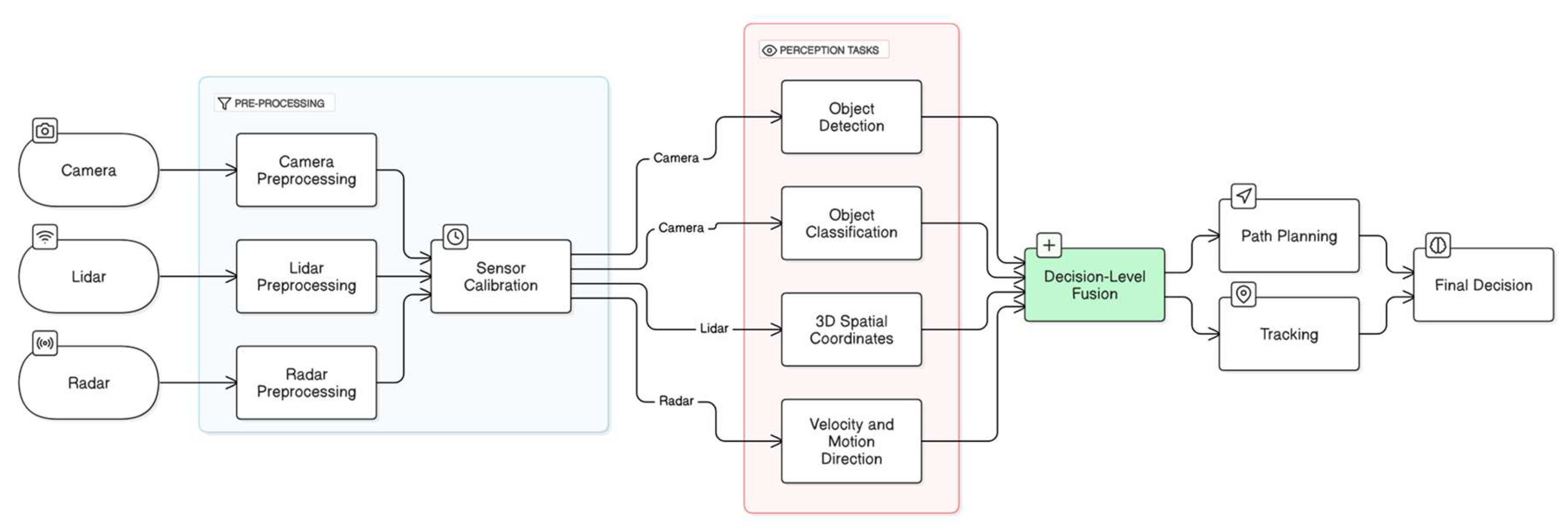

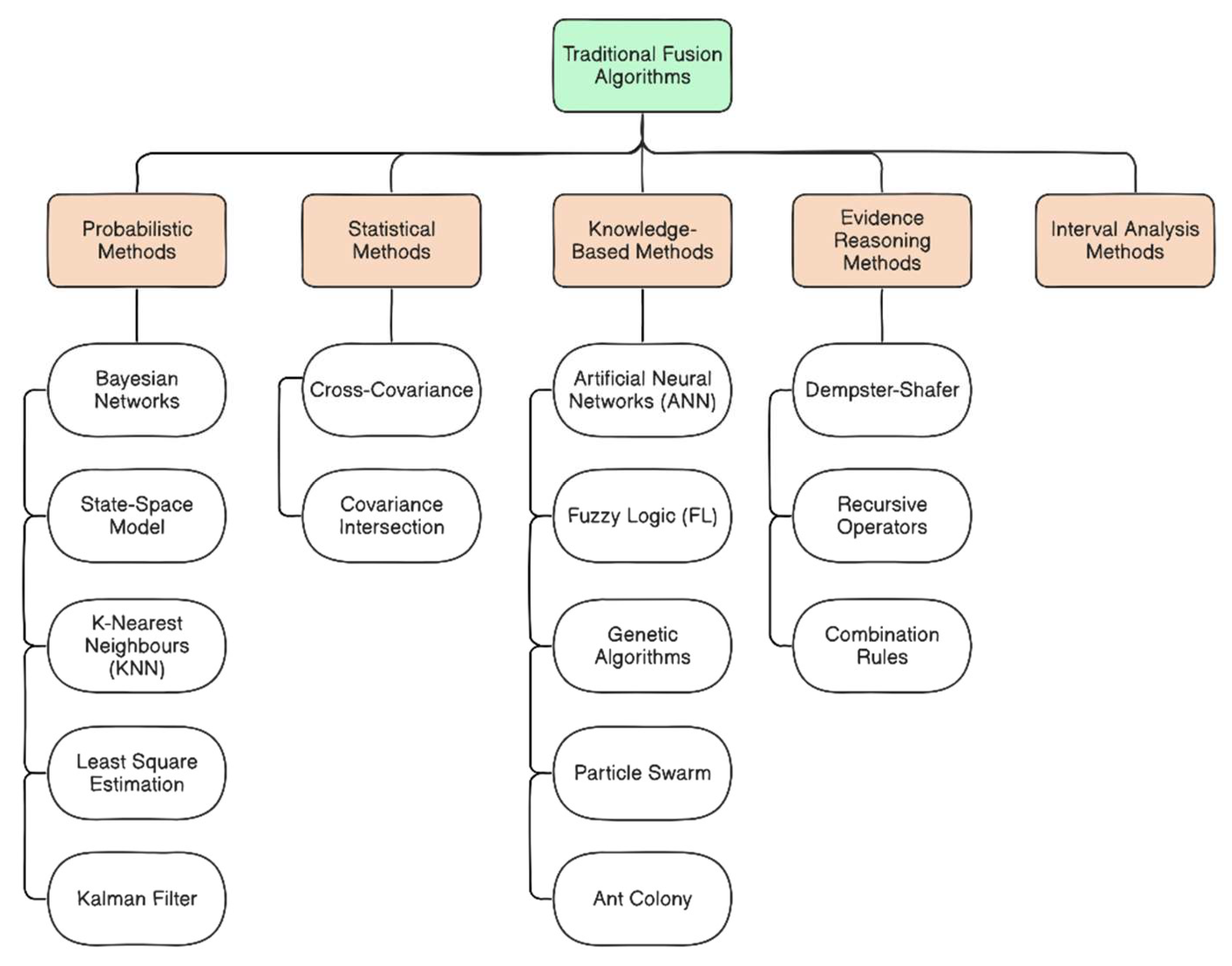

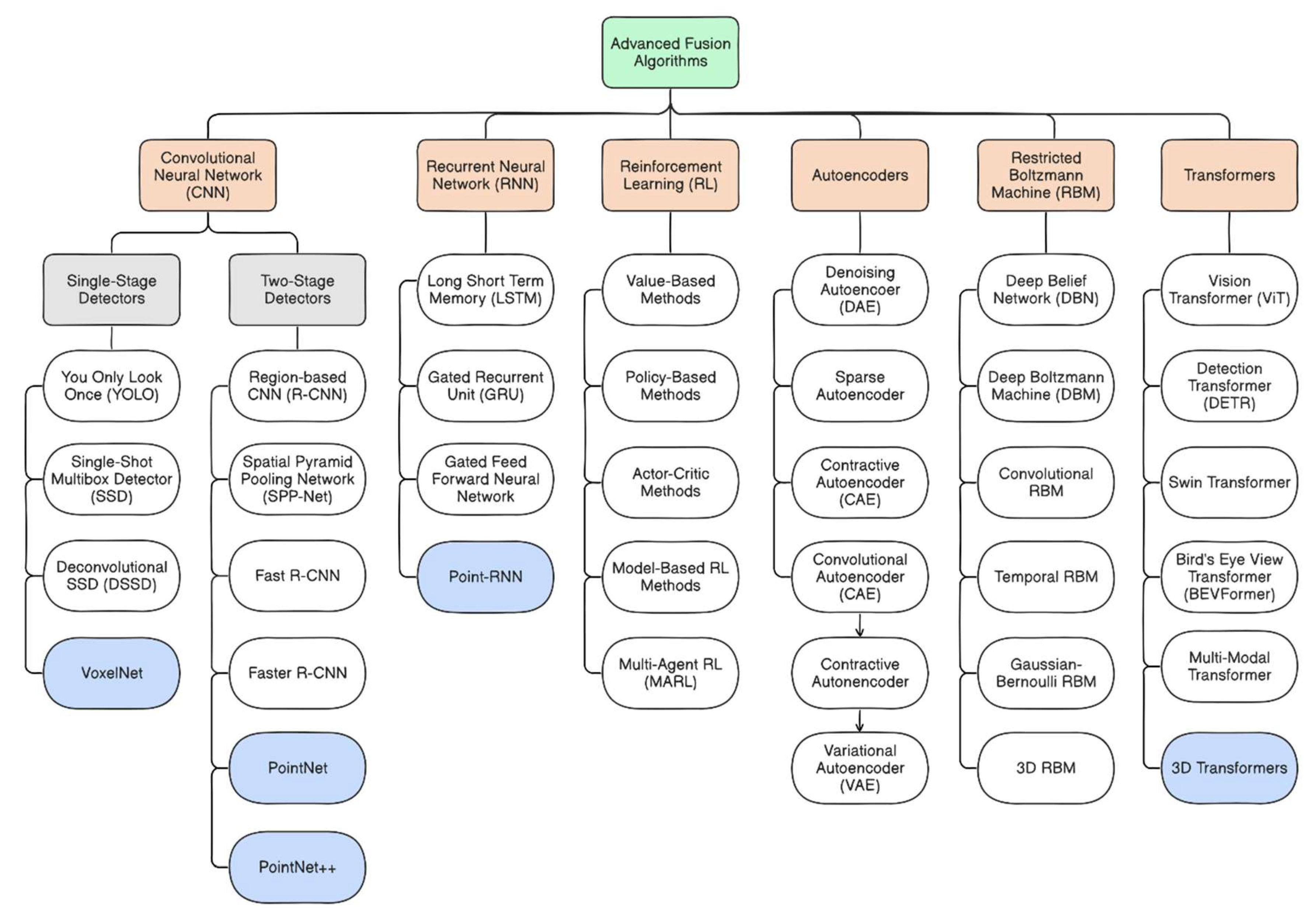

2.2. Fusion Techniques and Algorithms

2.3. Challenges in Multi-Sensor Fusion

3. Explainable Artificial Intelligence (XAI)

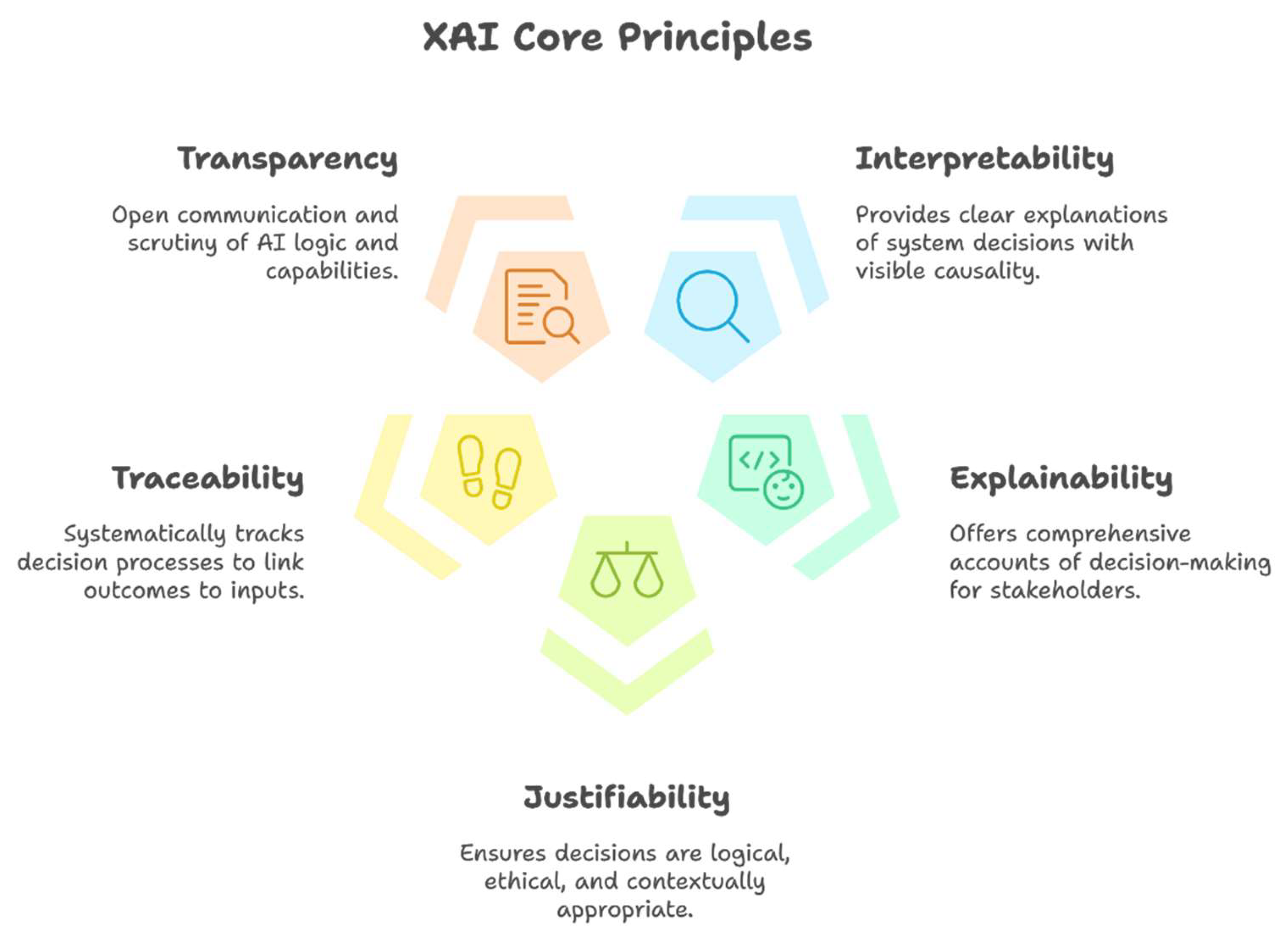

- Interpretability. It is defined as the ability to explain, or to provide clear and comprehensible explanations of, the actions and decisions made by the autonomous driving system to relevant stakeholders. It is often argued that interpretable systems are more suitable for safety-critical applications, as such systems provide a clear and observable chain of causality that explains the decision-making process [173].

- Explainability. It is associated with the concept of explanation as an interface between humans and a decision-making system that is both an accurate representation of the decision-making process and comprehensible to stakeholders [174]. In essence, explainable systems can provide a clear and detailed account of how and why a decision was made.

- Justifiability. It signifies the capability of an artificial intelligence (AI) system to provide logical, ethical, and contextually appropriate reasons for its decisions (outcomes), ensuring alignment with ethical guidelines, user trust, and accountability [175]. In essence, justifiability ensures that the decisions made by an AI system are justifiable and reasonable given the available data and context. Several approaches can be used to achieve justifiability, including utilizing interpretable models, incorporating post-hoc explanation tools, and involving human experts to review and validate AI decisions [175].

- Traceability. It refers to the systematic tracking and documentation of the entire decision-making process of an AI system, ensuring that each action or outcome can be traced back to its corresponding inputs, processing steps, and reasoning. As a result, any anomalies or errors can be precisely identified and addressed, which is particularly essential in critical situations such as collisions or near-miss events.

- Transparency. It involves designing and developing an AI system in which the underlying logic, rules, and algorithms governing the decision-making process can be scrutinized and comprehended by all stakeholders. It also involves open and clear communication with stakeholders about the decision-making criteria, functions, capabilities, and limitations of an AI system, such as an autonomous driving system.
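Traceability is easiest to picture as a data structure. The Python sketch below is a minimal, hypothetical illustration: it assumes a pipeline that records every processing step (name, inputs, outputs, rationale) in order, so that a final action can be followed back to the sensor readings that produced it. The class names, field names, sensor values, and the 2.0 s time-to-collision threshold are illustrative assumptions, not part of any specific autonomous driving stack.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any


@dataclass
class TraceStep:
    """One processing step: what it consumed, what it produced, and why."""
    name: str
    inputs: dict[str, Any]
    outputs: dict[str, Any]
    rationale: str


@dataclass
class DecisionTrace:
    """Ordered record linking a final decision back to every step behind it.

    Hypothetical structure, for illustration only.
    """
    decision_id: str
    steps: list[TraceStep] = field(default_factory=list)
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def record(self, name: str, inputs: dict[str, Any],
               outputs: dict[str, Any], rationale: str = "") -> dict[str, Any]:
        # Store copies so later changes to the caller's dicts do not alter the log.
        self.steps.append(TraceStep(name, dict(inputs), dict(outputs), rationale))
        return outputs

    def explain(self) -> list[str]:
        """Render the chain of steps in order, e.g. for post-incident review."""
        return [
            f"{i}. {s.name}: {s.inputs} -> {s.outputs} ({s.rationale})"
            for i, s in enumerate(self.steps, start=1)
        ]


# Hypothetical braking decision for one frame, traced step by step.
ego_speed_mps = 10.0
trace = DecisionTrace(decision_id="frame-000123")
det = trace.record(
    "detect",
    {"lidar_range_m": 12.0, "camera_label": "pedestrian"},
    {"object": "pedestrian", "range_m": 12.0},
    "camera label confirmed by LiDAR return",
)
ttc = trace.record(
    "time_to_collision",
    {"range_m": det["range_m"], "ego_speed_mps": ego_speed_mps},
    {"ttc_s": det["range_m"] / ego_speed_mps},
    "range divided by ego speed",
)
trace.record(
    "plan",
    ttc,
    {"action": "brake" if ttc["ttc_s"] < 2.0 else "continue"},
    "brake when TTC < 2.0 s (assumed threshold)",
)

for line in trace.explain():
    print(line)
```

Because each step stores its own inputs and outputs, a reviewer can walk the printed chain backwards from `brake` to the LiDAR range and camera label that triggered it.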

- Simulatability denotes the ability to simulate the behavior of an ML model through interactive experimentation or human understanding. It enables users to replicate or anticipate the model's decisions without requiring in-depth technical knowledge of its underlying mechanisms or internal architecture. In this respect, a model is considered simulatable if it can be effectively presented to stakeholders using text, visualizations, or other accessible representations. Furthermore, a simulatable model enables users to reasonably anticipate its outputs for a given set of inputs, fostering a more intuitive grasp of its decision-making processes [187].
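As a hedged illustration of simulatability, the hypothetical decision list below is small enough for a person to evaluate by hand: the rules are checked top to bottom and the first one that fires determines the action, loosely in the style of rule-list models such as BRL. The rules, field names, and thresholds are invented for illustration and are not calibrated driving policies.

```python
# Hypothetical decision list: (readable condition, predicate, action).
# Rules are checked top to bottom and the first one that fires decides.
RULES = [
    ("traffic light is red",
     lambda s: s["light"] == "red", "stop"),
    ("pedestrian closer than 15 m",
     lambda s: s["pedestrian_m"] is not None and s["pedestrian_m"] < 15.0, "stop"),
    ("headway to lead vehicle below 2 s",
     lambda s: s["headway_s"] < 2.0, "slow_down"),
]
DEFAULT_ACTION = "proceed"


def decide(scene):
    """Return (action, reason) so a person can check each step by hand."""
    for reason, predicate, action in RULES:
        if predicate(scene):
            return action, reason
    return DEFAULT_ACTION, "no rule fired"


print(decide({"light": "green", "pedestrian_m": 9.0, "headway_s": 3.0}))
# → ('stop', 'pedestrian closer than 15 m')
print(decide({"light": "green", "pedestrian_m": None, "headway_s": 1.5}))
# → ('slow_down', 'headway to lead vehicle below 2 s')
```

With only three rules, a reader can predict the output for any scene without running the code, which is the property the definition above describes.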

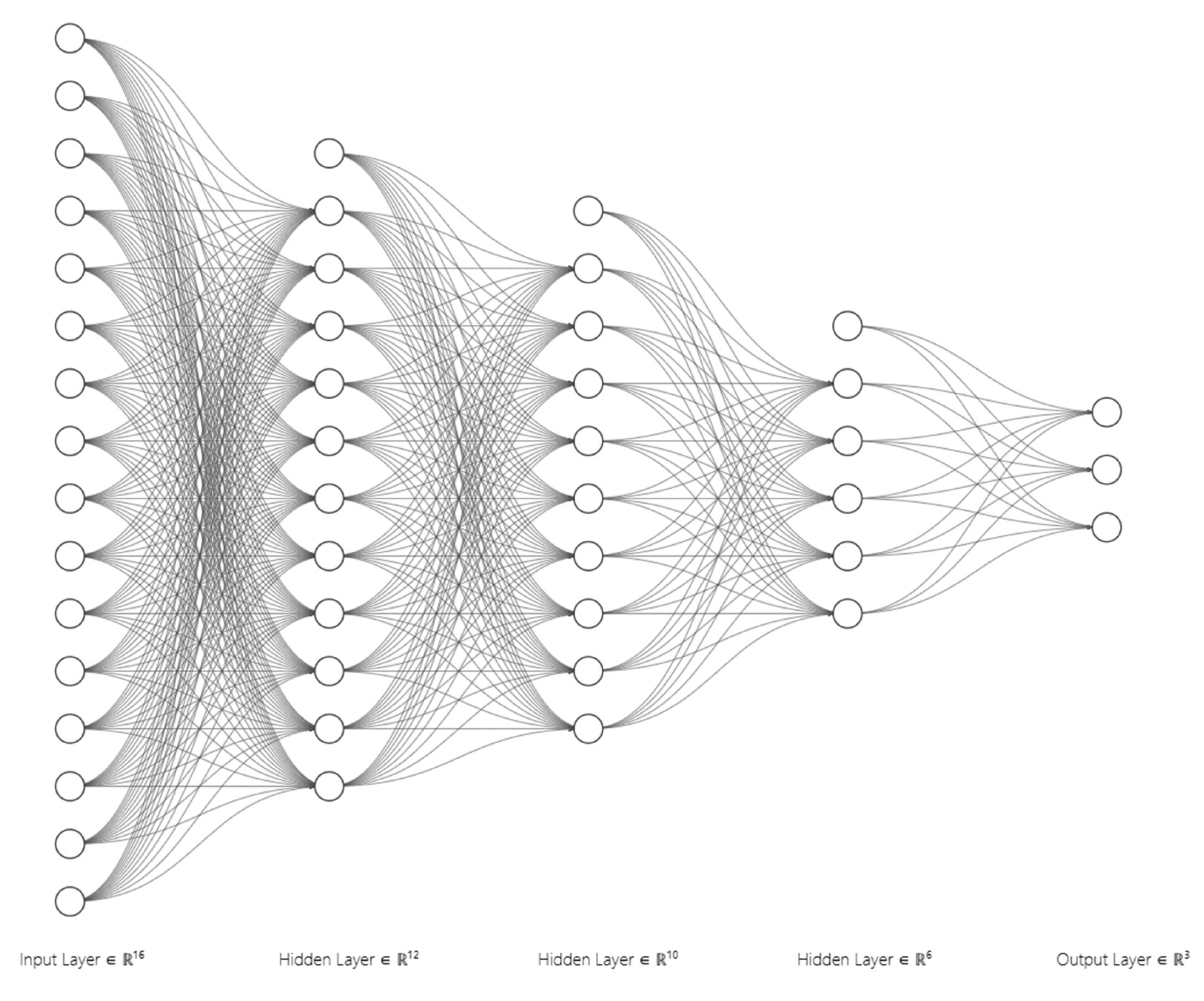

- Decomposability refers to the ability to disaggregate an ML model into smaller and interpretable components, such as inputs, parameters, and computations. In essence, decomposability signifies the capability to explain the functioning of a model by examining its constituent elements, providing clarity about how specific inputs influence the outputs, how parameters are optimized, and how intermediate calculations are carried out to reach a final decision. For example, decomposability enables engineers to isolate and explain the contribution of individual subcomponents in autonomous driving, including object detection, trajectory planning, and control systems, which is critical for technical debugging, model refinement, and ensuring compliance with legal and ethical standards. However, in practice, achieving decomposability in intricate ML models, such as DNNs, can be challenging due to their non-linear relationships and the distributed nature of their data representations [169,188].

- Algorithmic transparency, as the name suggests, pertains to the extent to which the internal workings and decision-making processes of an algorithm can be clearly understood, elucidated, and scrutinized. In essence, it emphasizes the visibility of how an algorithm operates, from its initial design through to its decision outputs. In practical terms, algorithmic transparency ensures that the reasoning behind an algorithm's decisions can be traced back to its underlying mathematical or computational principles, which is indispensable for identifying and rectifying embedded biases and for uncovering unintended behaviors that could compromise the precision and integrity of an ML system. In autonomous driving, understanding the decision-making processes of algorithms, such as how a vehicle decides when to stop or how it identifies and avoids obstacles, is vital for ensuring safety and adherence to regulatory standards. However, the main limitation of algorithmically transparent models is that they must be fully explorable through mathematical analysis, which is challenging for deep architectures because their many interconnected hidden layers produce highly complex and opaque loss landscapes [169,189,190,191,192].
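Decomposability and algorithmic transparency can both be illustrated with a model whose output is an explicit sum. The Python sketch below assumes a hypothetical logistic "brake" score with hand-set weights (not learned from any dataset): each feature's contribution w_i·x_i can be read off individually (decomposability), and the decision rule, brake when b + Σ w_i·x_i > 0, is a hyperplane that can be analyzed mathematically (algorithmic transparency). Feature names, weights, and bias are illustrative assumptions.

```python
import math

# Hypothetical, hand-set parameters; a real model would be fitted to data.
WEIGHTS = {
    "closing_speed_mps": 0.35,  # faster approach pushes toward braking
    "inv_range_per_m": 20.0,    # 1 / distance to obstacle; larger when closer
    "pedestrian": 1.5,          # 1.0 if the obstacle is classified as a pedestrian
}
BIAS = -4.0


def brake_score(features):
    """Return (probability, logit, per-feature contributions).

    logit = BIAS + sum_i WEIGHTS[i] * features[i]; probability = sigmoid(logit).
    """
    contributions = {name: w * features[name] for name, w in WEIGHTS.items()}
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    return probability, logit, contributions


features = {"closing_speed_mps": 8.0, "inv_range_per_m": 1 / 10.0, "pedestrian": 1.0}
p, logit, parts = brake_score(features)

# Decomposition: the bias plus the listed contributions sums to the logit.
print(f"bias: {BIAS:+.2f}")
for name, value in sorted(parts.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {value:+.2f}")
print(f"logit: {logit:+.2f} -> P(brake) = {p:.3f}")
```

Here the closing-speed term contributes most to the decision, and that statement follows directly from the parameters. Post-hoc attribution methods such as SHAP and LIME aim to produce a comparable per-feature breakdown for models, such as DNNs, that lack this additive structure.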

3.1. XAI Strategies and Techniques

3.2. Roles of XAI in Autonomous Vehicles and its Challenges

4. Conclusions and Future Research Recommendations

- Sensor noise, which relates to the inaccuracies, inconsistencies, or irrelevant data introduced by individual sensors due to a combination of hardware limitations, external interference, or environmental conditions.

- Heterogeneity of sensor modalities in AVs and the resulting system complexity.

- Achieving an optimal balance between accuracy and computational efficiency.

- Multi-sensor fusion systems are susceptible to malicious attacks, which pose a significant risk to the integrity and reliability of their autonomous operation.

- Lack of transparency, explainability, and interpretability in black-box AI models, especially in advanced DNN algorithms.
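To make the sensor-noise challenge concrete, the sketch below shows the simplest way fusion counters independent noise: inverse-variance weighting of redundant measurements, which coincides with a single scalar Kalman filter measurement update when the first reading is treated as the prior. It assumes unbiased, independent noise with known variances; the LiDAR and radar values are hypothetical. Correlated errors, biased sensors, or adversarial inputs violate these assumptions and require more robust methods.

```python
def fuse(measurements):
    """Fuse independent, unbiased scalar measurements by inverse-variance weighting.

    measurements: iterable of (value, variance) pairs from different sensors.
    Returns (fused_value, fused_variance). The fused variance is never larger
    than the smallest input variance.
    """
    pairs = list(measurements)
    if not pairs:
        raise ValueError("need at least one measurement")
    if any(variance <= 0 for _, variance in pairs):
        raise ValueError("variances must be positive")
    information = sum(1.0 / variance for _, variance in pairs)  # total precision
    value = sum(x / variance for x, variance in pairs) / information
    return value, 1.0 / information


# Hypothetical range to the same obstacle: LiDAR (sigma 0.1 m), radar (sigma 0.3 m).
lidar = (20.0, 0.1 ** 2)
radar = (20.6, 0.3 ** 2)
fused_value, fused_variance = fuse([lidar, radar])
print(f"fused range: {fused_value:.2f} m, std: {fused_variance ** 0.5:.3f} m")
```

The fused estimate lies closer to the more precise LiDAR reading, and its standard deviation falls below that of either sensor alone.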

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| 3D | Three-Dimensional |
| AI | Artificial Intelligence |
| AI HLEG | High-Level Expert Group on AI |
| AV | Autonomous Vehicles |
| BEV | Bird’s-Eye View |
| BRL | Bayesian Rule Lists |
| CNN | Convolutional Neural Networks |
| DeepLIFT | Deep Learning Important Features |
| DL | Deep Learning |
| DNN | Deep Neural Network |
| DST | Dempster-Shafer Theory |
| EC | European Commission |
| EM | Expectation-Maximization |
| Faster R-CNN | Faster Region-Based Convolutional Neural Network |
| GAM | Generalized Additive Model |
| GNSS | Global Navigation Satellite System |
| GPS | Global Positioning System |
| GPU | Graphics Processing Unit |
| Grad-CAM | Gradient-weighted Class Activation Mapping |
| HD | High-Definition |
| HLF | High-Level Fusion |
| IMU | Inertial Measurement Unit |
| IoU | Intersection over Union |
| KF | Kalman Filter |
| KNN | K-Nearest Neighbors |
| LIME | Local Interpretable Model-Agnostic Explanations |
| LLF | Low-Level Fusion |
| LLM | Large Language Model |
| ML | Machine Learning |
| MLF | Mid-Level Fusion |
| MMLF | Multi-Modal Multi-Class Late Fusion |
| NMS | Non-Maximum Suppression |
| PDP | Partial Dependence Plots |
| PF | Particle Filter |
| RBM | Restricted Boltzmann Machine |
| RL | Reinforcement Learning |
| RMG | Rail Mounted Gantry |
| RNN | Recurrent Neural Networks |
| RPN | Region Proposal Network |
| SAE | Society of Automotive Engineers |
| SCFT | Spatio-Contextual Fusion Transformer |
| SHAP | Shapley Additive Explanations |
| SPA | Soft Polar Association |
| TPU | Tensor Processing Unit |
| UKF | Unscented Kalman Filter |
| XAI | Explainable Artificial Intelligence |

References

- Vemoori, V. Towards Safe and Equitable Autonomous Mobility: A Multi-Layered Framework Integrating Advanced Safety Protocols, Data-Informed Road Infrastructure, and Explainable AI for Transparent Decision-Making in Self-Driving Vehicles. Human-Computer Interaction Persp. 2022, 2, 10–41.

- Olayode, I.O.; Du, B.; Severino, A.; Campisi, T.; Alex, F.J. Systematic Literature Review on the Applications, Impacts, and Public Perceptions of Autonomous Vehicles in Road Transportation System. J. Traffic Transp. Eng. Engl. Ed. 2023, 10, 1037–1060.

- Velasco-Hernandez, G.; Yeong, D.J.; Barry, J.; Walsh, J. Autonomous Driving Architectures, Perception and Data Fusion: A Review. In Proceedings of the 2020 IEEE 16th International Conference on Intelligent Computer Communication and Processing (ICCP); September 2020; pp. 315–321.

- Rondelli, V.; Franceschetti, B.; Mengoli, D. A Review of Current and Historical Research Contributions to the Development of Ground Autonomous Vehicles for Agriculture. Sustainability 2022, 14, 9221.

- Chen, L.; Li, Y.; Silamu, W.; Li, Q.; Ge, S.; Wang, F.-Y. Smart Mining With Autonomous Driving in Industry 5.0: Architectures, Platforms, Operating Systems, Foundation Models, and Applications. IEEE Trans. Intell. Veh. 2024, 9, 4383–4393.

- Molina, A.A.; Huang, Y.; Jiang, Y. A Review of Unmanned Aerial Vehicle Applications in Construction Management: 2016–2021. Standards 2023, 3, 95–109.

- Olapoju, O.M. Autonomous Ships, Port Operations, and the Challenges of African Ports. Marit. Technol. Res. 2023, 5, 260194.

- Naeem, D.; Gheith, M.; Eltawil, A. A Comprehensive Review and Directions for Future Research on the Integrated Scheduling of Quay Cranes and Automated Guided Vehicles and Yard Cranes in Automated Container Terminals. Comput. Ind. Eng. 2023, 179, 109149.

- Negash, N.M.; Yang, J. Driver Behavior Modeling Toward Autonomous Vehicles: Comprehensive Review. IEEE Access 2023, 11, 22788–22821.

- Zhao, J.; Zhao, W.; Deng, B.; Wang, Z.; Zhang, F.; Zheng, W.; Cao, W.; Nan, J.; Lian, Y.; Burke, A.F. Autonomous Driving System: A Comprehensive Survey. Expert Syst. Appl. 2024, 242, 122836.

- Yeong, D.J.; Velasco-Hernandez, G.; Barry, J.; Walsh, J. Sensor and Sensor Fusion Technology in Autonomous Vehicles: A Review. Sensors 2021, 21, 2140.

- Zhang, Y.; Carballo, A.; Yang, H.; Takeda, K. Perception and Sensing for Autonomous Vehicles under Adverse Weather Conditions: A Survey. ISPRS J. Photogramm. Remote Sens. 2023, 196, 146–177.

- Waymo - Self-Driving Cars - Autonomous Vehicles - Ride-Hail. Available online: https://waymo.com/ (accessed on 25 October 2024).

- Einride - Intelligent Movement. Available online: https://einride.tech/ (accessed on 21 October 2024).

- Automated RMG (ARMG) System | Konecranes. Available online: https://www.konecranes.com/port-equipment-services/container-handling-equipment/automated-rmg-armg-system (accessed on 21 October 2024).

- Autonomous Tractor | John Deere US. Available online: https://www.deere.com/en/autonomous/ (accessed on 21 October 2024).

- Autonomous Pallet Loader - Stratom. Available online: https://www.stratom.com/apl/ (accessed on 21 October 2024).

- FLIR Thermal Imaging Enables Autonomous Inspections of Mining Vehicles; 2020.

- Ludlow, E. Waymo Sets Its Sights on ‘Premium’ Robotaxi Passengers. Bloomberg.com 2024.

- SAE J3016 Automated-Driving Graphic. Available online: https://www.sae.org/site/news/2019/01/sae-updates-j3016-automated-driving-graphic (accessed on 22 October 2024).

- J3016_202104: Taxonomy and Definitions for Terms Related to Driving Automation Systems for On-Road Motor Vehicles - SAE International. Available online: https://www.sae.org/standards/content/j3016_202104/ (accessed on 22 October 2024).

- Infographic: Cars Increasingly Ready for Autonomous Driving. Available online: https://www.statista.com/chart/25754/newly-registered-cars-by-autonomous-driving-level (accessed on 22 October 2024).

- Ackerman, E. What Full Autonomy Means for the Waymo Driver - IEEE Spectrum. Available online: https://spectrum.ieee.org/full-autonomy-waymo-driver (accessed on 22 October 2024).

- SAE Levels of Driving Automation™ Refined for Clarity and International Audience. Available online: https://www.sae.org/site/blog/sae-j3016-update (accessed on 22 October 2024).

- Press, R. SAE Levels of Automation in Cars Simply Explained (+Image). Available online: https://www.rambus.com/blogs/driving-automation-levels/ (accessed on 28 October 2024).

- Raciti, M.; Bella, G. A Threat Model for Soft Privacy on Smart Cars. 2023.

- Senel, N.; Kefferpütz, K.; Doycheva, K.; Elger, G. Multi-Sensor Data Fusion for Real-Time Multi-Object Tracking. Processes 2023, 11, 501.

- Xiang, C.; Feng, C.; Xie, X.; Shi, B.; Lu, H.; Lv, Y.; Yang, M.; Niu, Z. Multi-Sensor Fusion and Cooperative Perception for Autonomous Driving: A Review. IEEE Intell. Transp. Syst. Mag. 2023, 15, 36–58.

- Dong, J.; Chen, S.; Miralinaghi, M.; Chen, T.; Li, P.; Labi, S. Why Did the AI Make That Decision? Towards an Explainable Artificial Intelligence (XAI) for Autonomous Driving Systems. Transp. Res. Part C Emerg. Technol. 2023, 156, 104358.

- Atakishiyev, S.; Salameh, M.; Yao, H.; Goebel, R. Towards Safe, Explainable, and Regulated Autonomous Driving. 2023.

- Data Analysis: Self-Driving Car Accidents [2019-2024]. Craft Law Firm.

- Muhammad, K.; Ullah, A.; Lloret, J.; Ser, J.D.; de Albuquerque, V.H.C. Deep Learning for Safe Autonomous Driving: Current Challenges and Future Directions. IEEE Trans. Intell. Transp. Syst. 2021, 22, 4316–4336.

- Port & Terminal Automation | Quanergy Solutions, Inc. | LiDAR Sensors and Smart Perception Solutions. Available online: https://quanergy.com/port-terminal-automation/ (accessed on 8 November 2024).

- Autonomous Vehicles Factsheet | Center for Sustainable Systems. Available online: https://css.umich.edu/publications/factsheets/mobility/autonomous-vehicles-factsheet (accessed on 8 November 2024).

- Odukha, O. How Sensor Fusion for Autonomous Cars Helps Avoid Deaths on the Road. Available online: https://intellias.com/sensor-fusion-autonomous-cars-helps-avoid-deaths-road/ (accessed on 8 November 2024).

- Introduction to Autonomous Driving Sensors [BLOG] | News | ECOTRONS. Available online: https://ecotrons.com/news/introduction-to-autonomous-driving-sensors-blog/ (accessed on 8 November 2024).

- Grey, T. The Anatomy of an Autonomous Vehicle | Ouster. Available online: https://ouster.com/insights/blog/the-anatomy-of-an-autonomous-vehicle (accessed on 8 November 2024).

- Dreissig, M.; Scheuble, D.; Piewak, F.; Boedecker, J. Survey on LiDAR Perception in Adverse Weather Conditions. 2023.

- Kim, J.; Park, B.; Kim, J. Empirical Analysis of Autonomous Vehicle’s LiDAR Detection Performance Degradation for Actual Road Driving in Rain and Fog. Sensors 2023, 23, 2972.

- Park, J.; Cho, J.; Lee, S.; Bak, S.; Kim, Y. An Automotive LiDAR Performance Test Method in Dynamic Driving Conditions. Sensors 2023, 23, 3892.

- Wang, Z.; Wu, Y.; Niu, Q. Multi-Sensor Fusion in Automated Driving: A Survey. IEEE Access 2020, 8, 2847–2868.

- Cao, Y.; Wang, N.; Xiao, C.; Yang, D.; Fang, J.; Yang, R.; Chen, Q.A.; Liu, M.; Li, B. Invisible for Both Camera and LiDAR: Security of Multi-Sensor Fusion Based Perception in Autonomous Driving Under Physical-World Attacks. 2021.

- Hafeez, F.; Sheikh, U.U.; Alkhaldi, N.; Garni, H.Z.A.; Arfeen, Z.A.; Khalid, S.A. Insights and Strategies for an Autonomous Vehicle With a Sensor Fusion Innovation: A Fictional Outlook. IEEE Access 2020, 8, 135162–135175.

- Hasanujjaman, M.; Chowdhury, M.Z.; Jang, Y.M. Sensor Fusion in Autonomous Vehicle with Traffic Surveillance Camera System: Detection, Localization, and AI Networking. Sensors 2023, 23, 3335.

- Marti, E.; de Miguel, M.A.; Garcia, F.; Perez, J. A Review of Sensor Technologies for Perception in Automated Driving. IEEE Intell. Transp. Syst. Mag. 2019, 11, 94–108.

- Butt, F.A.; Chattha, J.N.; Ahmad, J.; Zia, M.U.; Rizwan, M.; Naqvi, I.H. On the Integration of Enabling Wireless Technologies and Sensor Fusion for Next-Generation Connected and Autonomous Vehicles. IEEE Access 2022, 10, 14643–14668.

- Yoon, K.; Choi, J.; Huh, K. Adaptive Decentralized Sensor Fusion for Autonomous Vehicle: Estimating the Position of Surrounding Vehicles. IEEE Access 2023, 11, 90999–91008.

- Thakur, A.; Mishra, S.K. An In-Depth Evaluation of Deep Learning-Enabled Adaptive Approaches for Detecting Obstacles Using Sensor-Fused Data in Autonomous Vehicles. Eng. Appl. Artif. Intell. 2024, 133, 108550.

- Argese, S. Sensors Selection for Obstacle Detection: Sensor Fusion and YOLOv4 for an Autonomous Surface Vehicle in Venice Lagoon. Laurea Thesis, Politecnico di Torino, 2023.

- Matos, F.; Bernardino, J.; Durães, J.; Cunha, J. A Survey on Sensor Failures in Autonomous Vehicles: Challenges and Solutions. Sensors 2024, 24, 5108.

- Brena, R.F.; Aguileta, A.A.; Trejo, L.A.; Molino-Minero-Re, E.; Mayora, O. Choosing the Best Sensor Fusion Method: A Machine-Learning Approach. Sensors 2020, 20, 2350.

- Dong, H.; Gu, W.; Zhang, X.; Xu, J.; Ai, R.; Lu, H.; Kannala, J.; Chen, X. SuperFusion: Multilevel LiDAR-Camera Fusion for Long-Range HD Map Generation. In Proceedings of the 2024 IEEE International Conference on Robotics and Automation (ICRA); May 2024; pp. 9056–9062.

- Ku, J.; Harakeh, A.; Waslander, S.L. In Defense of Classical Image Processing: Fast Depth Completion on the CPU. In Proceedings of the 2018 15th Conference on Computer and Robot Vision (CRV); May 2018; pp. 16–22.

- Kim, Y.; Kim, S.; Choi, J.W.; Kum, D. CRAFT: Camera-Radar 3D Object Detection with Spatio-Contextual Fusion Transformer. Proc. AAAI Conf. Artif. Intell. 2023, 37, 1160–1168.

- nuScenes. Available online: https://www.nuscenes.org/ (accessed on 10 November 2024).

- Srivastav, A.; Mandal, S. Radars for Autonomous Driving: A Review of Deep Learning Methods and Challenges. IEEE Access 2023, 11, 97147–97168.

- Fawole, O.A.; Rawat, D.B. Recent Advances in 3D Object Detection for Self-Driving Vehicles: A Survey. AI 2024, 5, 1255–1285.

- Brabandere, B.D. Late vs Early Sensor Fusion for Autonomous Driving. Segments.ai 2024.

- Shi, K.; He, S.; Shi, Z.; Chen, A.; Xiong, Z.; Chen, J.; Luo, J. Radar and Camera Fusion for Object Detection and Tracking: A Comprehensive Survey. 2024.

- Pandharipande, A.; Cheng, C.-H.; Dauwels, J.; Gurbuz, S.Z.; Ibanez-Guzman, J.; Li, G.; Piazzoni, A.; Wang, P.; Santra, A. Sensing and Machine Learning for Automotive Perception: A Review. IEEE Sens. J. 2023, 23, 11097–11115.

- Sural, S.; Sahu, N.; Rajkumar, R. ContextualFusion: Context-Based Multi-Sensor Fusion for 3D Object Detection in Adverse Operating Conditions. 2024.

- Huch, S.; Sauerbeck, F.; Betz, J. DeepSTEP - Deep Learning-Based Spatio-Temporal End-To-End Perception for Autonomous Vehicles. In Proceedings of the 2023 IEEE Intelligent Vehicles Symposium (IV); IEEE, June 2023; pp. 1–8.

- Visteon | Current Sensor Data Fusion Architectures: Visteon’s Approach. Available online: https://www.visteon.com/current-sensor-data-fusion-architectures-visteons-approach/ (accessed on 18 November 2024).

- Singh, A. Vision-RADAR Fusion for Robotics BEV Detections: A Survey. 2023.

- Jahn, L.L.F.; Park, S.; Lim, Y.; An, J.; Choi, G. Enhancing Lane Detection with a Lightweight Collaborative Late Fusion Model. Robot. Auton. Syst. 2024, 175, 104680.

- Yang, Q.; Zhao, Y.; Cheng, H. MMLF: Multi-Modal Multi-Class Late Fusion for Object Detection with Uncertainty Estimation. 2024.

- Geiger, A.; Lenz, P.; Stiller, C.; Urtasun, R. Vision Meets Robotics: The KITTI Dataset. Int. J. Robot. Res. 2013, 32, 1231–1237.

- Park, J.; Thota, B.K.; Somashekar, K. Sensor-Fused Nighttime System for Enhanced Pedestrian Detection in ADAS and Autonomous Vehicles. Sensors 2024, 24, 4755.

- Felzenszwalb, P.F.; Girshick, R.B.; McAllester, D.; Ramanan, D. Object Detection with Discriminatively Trained Part-Based Models. IEEE Trans. Pattern Anal. Mach. Intell. 2010, 32, 1627–1645.

- 9 Types of Sensor Fusion Algorithms. Available online: https://www.thinkautonomous.ai/blog/9-types-of-sensor-fusion-algorithms/ (accessed on 29 November 2024).

- Hasanujjaman, M.; Chowdhury, M.Z.; Jang, Y.M. Sensor Fusion in Autonomous Vehicle with Traffic Surveillance Camera System: Detection, Localization, and AI Networking. Sensors 2023, 23, 3335.

- Anisha, A.M.; Abdel-Aty, M.; Abdelraouf, A.; Islam, Z.; Zheng, O. Automated Vehicle to Vehicle Conflict Analysis at Signalized Intersections by Camera and LiDAR Sensor Fusion. Transp. Res. Rec. J. Transp. Res. Board 2023, 2677, 117–132.

- Parida, B. Sensor Fusion: The Ultimate Guide to Combining Data for Enhanced Perception and Decision-Making. Available online: https://www.wevolver.com/article/sensor-fusion (accessed on 30 November 2024).

- Dorlecontrols. Sensor Fusion. Medium 2023.

- Thakur, A.; Mishra, S.K. An In-Depth Evaluation of Deep Learning-Enabled Adaptive Approaches for Detecting Obstacles Using Sensor-Fused Data in Autonomous Vehicles. Eng. Appl. Artif. Intell. 2024, 133, 108550.

- Fayyad, J.; Jaradat, M.A.; Gruyer, D.; Najjaran, H. Deep Learning Sensor Fusion for Autonomous Vehicle Perception and Localization: A Review. Sensors 2020, 20, 4220.

- Stateczny, A.; Wlodarczyk-Sielicka, M.; Burdziakowski, P. Sensors and Sensor’s Fusion in Autonomous Vehicles. Sensors 2021, 21, 6586.

- Tian, C.; Liu, H.; Liu, Z.; Li, H.; Wang, Y. Research on Multi-Sensor Fusion SLAM Algorithm Based on Improved Gmapping. IEEE Access 2023, 11, 13690–13703.

- Ding, Z.; Sun, Y.; Xu, S.; Pan, Y.; Peng, Y.; Mao, Z. Recent Advances and Perspectives in Deep Learning Techniques for 3D Point Cloud Data Processing. Robotics 2023, 12, 100.

- Park, J.; Kim, C.; Kim, S.; Jo, K. PCSCNet: Fast 3D Semantic Segmentation of LiDAR Point Cloud for Autonomous Car Using Point Convolution and Sparse Convolution Network. Expert Syst. Appl. 2023, 212, 118815.

- Wang, Y.; Mao, Q.; Zhu, H.; Deng, J.; Zhang, Y.; Ji, J.; Li, H.; Zhang, Y. Multi-Modal 3D Object Detection in Autonomous Driving: A Survey. Int. J. Comput. Vis. 2023, 131, 2122–2152.

- Mao, J.; Shi, S.; Wang, X.; Li, H. 3D Object Detection for Autonomous Driving: A Comprehensive Survey. Int. J. Comput. Vis. 2023, 131, 1909–1963.

- Appiah, E.O.; Mensah, S. Object Detection in Adverse Weather Condition for Autonomous Vehicles. Multimed. Tools Appl. 2024, 83, 28235–28261.

- Alaba, S.Y.; Gurbuz, A.C.; Ball, J.E. Emerging Trends in Autonomous Vehicle Perception: Multimodal Fusion for 3D Object Detection. World Electr. Veh. J. 2024, 15, 20.

- Schmidhuber, J. Deep Learning in Neural Networks: An Overview. Neural Netw. 2015, 61, 85–117.

- Gurney, K. An Introduction to Neural Networks; 1st ed.; CRC Press: London, 2018; ISBN 978-1-315-27357-0.

- Fan, F.; Xiong, J.; Li, M.; Wang, G. On Interpretability of Artificial Neural Networks: A Survey. 2021.

- Alaba, S.Y. GPS-IMU Sensor Fusion for Reliable Autonomous Vehicle Position Estimation. 2024.

- Tian, K.; Radovnikovich, M.; Cheok, K. Comparing EKF, UKF, and PF Performance for Autonomous Vehicle Multi-Sensor Fusion and Tracking in Highway Scenario. In Proceedings of the 2022 IEEE International Systems Conference (SysCon); April 2022; pp. 1–6.

- Aamir, M.; Nawi, N.M.; Wahid, F.; Mahdin, H. An Efficient Normalized Restricted Boltzmann Machine for Solving Multiclass Classification Problems. Int. J. Adv. Comput. Sci. Appl. IJACSA 2019, 10.

- Li, L.; Sheng, X.; Du, B.; Wang, Y.; Ran, B. A Deep Fusion Model Based on Restricted Boltzmann Machines for Traffic Accident Duration Prediction. Eng. Appl. Artif. Intell. 2020, 93, 103686.

- Kiran, B.R.; Sobh, I.; Talpaert, V.; Mannion, P.; Sallab, A.A.A.; Yogamani, S.; Pérez, P. Deep Reinforcement Learning for Autonomous Driving: A Survey. 2021.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin Transformer: Hierarchical Vision Transformer Using Shifted Windows. 2021.

- Lu, D.; Xie, Q.; Wei, M.; Gao, K.; Xu, L.; Li, J. Transformers in 3D Point Clouds: A Survey. 2022.

- Li, P.; Pei, Y.; Li, J. A Comprehensive Survey on Design and Application of Autoencoder in Deep Learning. Appl. Soft Comput. 2023, 138, 110176.

- Zhong, J.; Liu, Z.; Chen, X. Transformer-Based Models and Hardware Acceleration Analysis in Autonomous Driving: A Survey. 2023.

- Ding, Z.; Sun, Y.; Xu, S.; Pan, Y.; Peng, Y.; Mao, Z. Recent Advances and Perspectives in Deep Learning Techniques for 3D Point Cloud Data Processing. Robotics 2023, 12, 100.

- Liang, L.; Ma, H.; Zhao, L.; Xie, X.; Hua, C.; Zhang, M.; Zhang, Y. Vehicle Detection Algorithms for Autonomous Driving: A Review. Sensors 2024, 24, 3088.

- Zhu, M.; Gong, Y.; Tian, C.; Zhu, Z. A Systematic Survey of Transformer-Based 3D Object Detection for Autonomous Driving: Methods, Challenges and Trends. Drones 2024, 8, 412.

- El Natour, G.; Bresson, G.; Trichet, R. Multi-Sensors System and Deep Learning Models for Object Tracking. Sensors 2023, 23, 7804.

- Vennerød, C.B.; Kjærran, A.; Bugge, E.S. Long Short-Term Memory RNN. 2021.

- Taye, M.M. Understanding of Machine Learning with Deep Learning: Architectures, Workflow, Applications and Future Directions. Computers 2023, 12, 91.

- Reda, M.; Onsy, A.; Haikal, A.Y.; Ghanbari, A. Path Planning Algorithms in the Autonomous Driving System: A Comprehensive Review. Robot. Auton. Syst. 2024, 174, 104630.

- Jin, X.-B.; Chen, W.; Ma, H.-J.; Kong, J.-L.; Su, T.-L.; Bai, Y.-T. Parameter-Free State Estimation Based on Kalman Filter with Attention Learning for GPS Tracking in Autonomous Driving System. Sensors 2023, 23, 8650.

- Brownlee, J. A Gentle Introduction to Expectation-Maximization (EM Algorithm). MachineLearningMastery.com 2019.

- Li, Y.; Li, S.; Du, H.; Chen, L.; Zhang, D.; Li, Y. YOLO-ACN: Focusing on Small Target and Occluded Object Detection. IEEE Access 2020, 8, 227288–227303.

- Redmon, J.; Farhadi, A. YOLOv3: An Incremental Improvement. 2018.

- Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You Only Look Once: Unified, Real-Time Object Detection. 2016.

- Bodla, N.; Singh, B.; Chellappa, R.; Davis, L.S. Soft-NMS — Improving Object Detection with One Line of Code. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV); October 2017; pp. 5562–5570.

- Singh, A. Selecting the Right Bounding Box Using Non-Max Suppression (with Implementation). Anal. Vidhya 2020.

- Wan, E.A.; Van Der Merwe, R. The Unscented Kalman Filter for Nonlinear Estimation. In Proceedings of the IEEE 2000 Adaptive Systems for Signal Processing, Communications, and Control Symposium (Cat. No.00EX373); October 2000; pp. 153–158.

- Hao, Y.; Xiong, Z.; Sun, F.; Wang, X. Comparison of Unscented Kalman Filters. In Proceedings of the 2007 International Conference on Mechatronics and Automation; August 2007; pp. 895–899.

- Liu, D.; Zhang, J.; Jin, J.; Dai, Y.; Li, L. A New Approach of Obstacle Fusion Detection for Unmanned Surface Vehicle Using Dempster-Shafer Evidence Theory. Appl. Ocean Res. 2022, 119, 103016.

- Wibowo, A.; Trilaksono, B.R.; Hidayat, E.M.I.; Munir, R. Object Detection in Dense and Mixed Traffic for Autonomous Vehicles With Modified Yolo. IEEE Access 2023, 11, 134866–134877.

- Wong, C.-C.; Feng, H.-M.; Kuo, K.-L. Multi-Sensor Fusion Simultaneous Localization Mapping Based on Deep Reinforcement Learning and Multi-Model Adaptive Estimation. Sensors 2024, 24, 48.

- Charroud, A.; Moutaouakil, K.E.; Yahyaouy, A. Fast and Accurate Localization and Mapping Method for Self-Driving Vehicles Based on a Modified Clustering Particle Filter. Multimed. Tools Appl. 2023, 82, 18435–18457.

- Kang, D.; Kum, D. Camera and Radar Sensor Fusion for Robust Vehicle Localization via Vehicle Part Localization. IEEE Access 2020, 8, 75223–75236.

- Stroescu, A.; Daniel, L.; Gashinova, M. Combined Object Detection and Tracking on High Resolution Radar Imagery for Autonomous Driving Using Deep Neural Networks and Particle Filters. In Proceedings of the 2020 IEEE Radar Conference (RadarConf20); September 2020; pp. 1–6.

- Kim, S.; Jang, M.; La, H.; Oh, K. Development of a Particle Filter-Based Path Tracking Algorithm of Autonomous Trucks with a Single Steering and Driving Module Using a Monocular Camera. Sensors 2023, 23, 3650.

- Kusenbach, M.; Luettel, T.; Wuensche, H.-J. Fast Object Classification for Autonomous Driving Using Shape and Motion Information Applying the Dempster-Shafer Theory. In Proceedings of the 2020 IEEE 23rd International Conference on Intelligent Transportation Systems (ITSC); September 2020; pp. 1–6.

- Srivastava, R.P. Dempster-Shafer Theory of Belief Functions: A Language for Managing Uncertainties in the Real-World Problems. Int. J. Finance Entrep. Sustain. 2022, 2.

- Terven, J.; Córdova-Esparza, D.-M.; Romero-González, J.-A. A Comprehensive Review of YOLO Architectures in Computer Vision: From YOLOv1 to YOLOv8 and YOLO-NAS. Mach. Learn. Knowl. Extr. 2023, 5, 1680–1716.

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 1137–1149.

- Yang, W.; Li, Z.; Wang, C.; Li, J. A Multi-Task Faster R-CNN Method for 3D Vehicle Detection Based on a Single Image. Appl. Soft Comput. 2020, 95, 106533.

- Cortés Gallardo Medina, E.; Velazquez Espitia, V.M.; Chípuli Silva, D.; Fernández Ruiz de las Cuevas, S.; Palacios Hirata, M.; Zhu Chen, A.; González González, J.Á.; Bustamante-Bello, R.; Moreno-García, C.F. Object Detection, Distributed Cloud Computing and Parallelization Techniques for Autonomous Driving Systems. Appl. Sci. 2021, 11, 2925.

- Li, Y.; Ma, L.; Zhong, Z.; Liu, F.; Chapman, M.A.; Cao, D.; Li, J. Deep Learning for LiDAR Point Clouds in Autonomous Driving: A Review. IEEE Trans. Neural Netw. Learn. Syst. 2021, 32, 3412–3432.

- Kang, D.; Wong, A.; Lee, B.; Kim, J. Real-Time Semantic Segmentation of 3D Point Cloud for Autonomous Driving. Electronics 2021, 10, 1960.

- Paigwar, A.; Erkent, Ö.; Sierra-Gonzalez, D.; Laugier, C. GndNet: Fast Ground Plane Estimation and Point Cloud Segmentation for Autonomous Vehicles. In Proceedings of the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS); October 2020; pp. 2150–2156.

- Lu, W.; Zhou, Y.; Wan, G.; Hou, S.; Song, S. L3-Net: Towards Learning Based LiDAR Localization for Autonomous Driving; 2019; pp. 6389–6398.

- Cui, Y.; Chen, R.; Chu, W.; Chen, L.; Tian, D.; Li, Y.; Cao, D. Deep Learning for Image and Point Cloud Fusion in Autonomous Driving: A Review. IEEE Trans. Intell. Transp. Syst. 2022, 23, 722–739. [Google Scholar] [CrossRef]

- Cheng, W.; Yin, J.; Li, W.; Yang, R.; Shen, J. Language-Guided 3D Object Detection in Point Cloud for Autonomous Driving. 2023.

- Alaba, S.Y.; Gurbuz, A.C.; Ball, J.E. Emerging Trends in Autonomous Vehicle Perception: Multimodal Fusion for 3D Object Detection. World Electr. Veh. J. 2024, 15, 20. [Google Scholar] [CrossRef]

- Wang, Y.; Mao, Q.; Zhu, H.; Deng, J.; Zhang, Y.; Ji, J.; Li, H.; Zhang, Y. Multi-Modal 3D Object Detection in Autonomous Driving: A Survey. 2023.

- Mao, J.; Shi, S.; Wang, X.; Li, H. 3D Object Detection for Autonomous Driving: A Comprehensive Survey. Int. J. Comput. Vis. 2023, 131, 1909–1963. [Google Scholar] [CrossRef]

- Zhu, M.; Gong, Y.; Tian, C.; Zhu, Z. A Systematic Survey of Transformer-Based 3D Object Detection for Autonomous Driving: Methods, Challenges and Trends. Drones 2024, 8, 412. [Google Scholar] [CrossRef]

- Wang, X.; Li, K.; Chehri, A. Multi-Sensor Fusion Technology for 3D Object Detection in Autonomous Driving: A Review. IEEE Trans. Intell. Transp. Syst. 2024, 25, 1148–1165. [Google Scholar] [CrossRef]

- Khanam, R.; Hussain, M. YOLOv11: An Overview of the Key Architectural Enhancements. 2024.

- Qi, C.; Yi, L.; Su, H.; Guibas, L. PointNet++: Deep Hierarchical Feature Learning on Point Sets in a Metric Space. ArXiv 2017. [Google Scholar]

- Srivastav, A.; Mandal, S. Radars for Autonomous Driving: A Review of Deep Learning Methods and Challenges. IEEE Access 2023, 11, 97147–97168. [Google Scholar] [CrossRef]

- Liu, C.; Liu, S.; Zhang, C.; Huang, Y.; Wang, H. Multipath Propagation Analysis and Ghost Target Removal for FMCW Automotive Radars. In Proceedings of the IET International Radar Conference (IET IRC 2020); November 2020; Vol. 2020, pp. 330–334.

- Hasirlioglu, S.; Riener, A. Challenges in Object Detection Under Rainy Weather Conditions. In Proceedings of the Intelligent Transport Systems, From Research and Development to the Market Uptake; Ferreira, J.C., Martins, A.L., Monteiro, V., Eds.; Springer International Publishing: Cham, 2019; pp. 53–65.

- Zhang, Y.; Liu, K.; Bao, H.; Qian, X.; Wang, Z.; Ye, S.; Wang, W. AFTR: A Robustness Multi-Sensor Fusion Model for 3D Object Detection Based on Adaptive Fusion Transformer. Sensors 2023, 23, 8400. [Google Scholar] [CrossRef] [PubMed]

- Liu, W.; Zhu, J.; Zhuo, G.; Fu, W.; Meng, Z.; Lu, Y.; Hua, M.; Qiao, F.; Li, Y.; He, Y.; et al. UniMSF: A Unified Multi-Sensor Fusion Framework for Intelligent Transportation System Global Localization. 2024.

- Dickert, C. Network Overload? Adding Up the Data Produced By Connected Cars. Available online: https://www.visualcapitalist.com/network-overload/ (accessed on 21 December 2024).

- Vakulov, A. Addressing Data Processing Challenges in Autonomous Vehicles. Available online: https://www.iotforall.com/addressing-data-processing-challenges-in-autonomous-vehicles (accessed on 21 December 2024).

- Griggs, T.; Wakabayashi, D. How a Self-Driving Uber Killed a Pedestrian in Arizona. N. Y. Times 2018.

- Parekh, D.; Poddar, N.; Rajpurkar, A.; Chahal, M.; Kumar, N.; Joshi, G.P.; Cho, W. A Review on Autonomous Vehicles: Progress, Methods and Challenges. Electronics 2022, 11, 2162. [Google Scholar] [CrossRef]

- Oh, C.; Yoon, J. Hardware Acceleration Technology for Deep-Learning in Autonomous Vehicles. In Proceedings of the 2019 IEEE International Conference on Big Data and Smart Computing (BigComp); February 2019; pp. 1–3.

- Singh, J. AI-Driven Path Planning in Autonomous Vehicles: Algorithms for Safe and Efficient Navigation in Dynamic Environments. J. AI-Assist. Sci. Discov. 2024, 4, 48–88. [Google Scholar]

- Islayem, R.; Alhosani, F.; Hashem, R.; Alzaabi, A.; Meribout, M. Hardware Accelerators for Autonomous Cars: A Review. 2024.

- Narain, S.; Ranganathan, A.; Noubir, G. Security of GPS/INS Based On-Road Location Tracking Systems. In Proceedings of the 2019 IEEE Symposium on Security and Privacy (SP); May 2019; pp. 587–601.

- Kloukiniotis, A.; Papandreou, A.; Lalos, A.; Kapsalas, P.; Nguyen, D.-V.; Moustakas, K. Countering Adversarial Attacks on Autonomous Vehicles Using Denoising Techniques: A Review. IEEE Open J. Intell. Transp. Syst. 2022, 3, 61–80. [Google Scholar] [CrossRef]

- Hataba, M.; Sherif, A.; Mahmoud, M.; Abdallah, M.; Alasmary, W. Security and Privacy Issues in Autonomous Vehicles: A Layer-Based Survey. IEEE Open J. Commun. Soc. 2022, 3, 811–829. [Google Scholar] [CrossRef]

- Hamad, M.; Steinhorst, S. Security Challenges in Autonomous Systems Design. 2023.

- Yan, X.; Wang, H. Survey on Zero-Trust Network Security. In Proceedings of the Artificial Intelligence and Security; Sun, X., Wang, J., Bertino, E., Eds.; Springer: Singapore, 2020; pp. 50–60.

- Pham, M.; Xiong, K. A Survey on Security Attacks and Defense Techniques for Connected and Autonomous Vehicles. Comput. Secur. 2021, 109, 102269. [Google Scholar] [CrossRef]

- Girdhar, M.; Hong, J.; Moore, J. Cybersecurity of Autonomous Vehicles: A Systematic Literature Review of Adversarial Attacks and Defense Models. IEEE Open J. Veh. Technol. 2023, 4, 417–437. [Google Scholar] [CrossRef]

- Giannaros, A.; Karras, A.; Theodorakopoulos, L.; Karras, C.; Kranias, P.; Schizas, N.; Kalogeratos, G.; Tsolis, D. Autonomous Vehicles: Sophisticated Attacks, Safety Issues, Challenges, Open Topics, Blockchain, and Future Directions. J. Cybersecurity Priv. 2023, 3, 493–543. [Google Scholar] [CrossRef]

- Bendiab, G.; Hameurlaine, A.; Germanos, G.; Kolokotronis, N.; Shiaeles, S. Autonomous Vehicles Security: Challenges and Solutions Using Blockchain and Artificial Intelligence. IEEE Trans. Intell. Transp. Syst. 2023, 24, 3614–3637. [Google Scholar] [CrossRef]

- Wang, Z.; Wei, H.; Wang, J.; Zeng, X.; Chang, Y. Security Issues and Solutions for Connected and Autonomous Vehicles in a Sustainable City: A Survey. Sustainability 2022, 14, 12409. [Google Scholar] [CrossRef]

- Ahmad, U.; Han, M.; Mahmood, S. Enhancing Security in Connected and Autonomous Vehicles: A Pairing Approach and Machine Learning Integration. Appl. Sci. 2024, 14, 5648. [Google Scholar] [CrossRef]

- Zablocki, É.; Ben-Younes, H.; Pérez, P.; Cord, M. Explainability of Deep Vision-Based Autonomous Driving Systems: Review and Challenges. Int. J. Comput. Vis. 2022, 130, 2425–2452. [Google Scholar] [CrossRef]

- Nastjuk, I.; Herrenkind, B.; Marrone, M.; Brendel, A.B.; Kolbe, L.M. What Drives the Acceptance of Autonomous Driving? An Investigation of Acceptance Factors from an End-User’s Perspective. Technol. Forecast. Soc. Change 2020, 161, 120319. [Google Scholar] [CrossRef]

- Lees, M.; Uys, W.; Oosterwyk, G.; Van Belle, J.-P. Factors Influencing the User Acceptance of Autonomous Vehicle AI Technologies in South Africa; 2022.

- Mu, J.; Zhou, L.; Yang, C. Research on the Behavior Influence Mechanism of Users’ Continuous Usage of Autonomous Driving Systems Based on the Extended Technology Acceptance Model and External Factors. Sustainability 2024, 16, 9696. [Google Scholar] [CrossRef]

- Langer, M.; Oster, D.; Speith, T.; Hermanns, H.; Kästner, L.; Schmidt, E.; Sesing, A.; Baum, K. What Do We Want from Explainable Artificial Intelligence (XAI)? – A Stakeholder Perspective on XAI and a Conceptual Model Guiding Interdisciplinary XAI Research. Artif. Intell. 2021, 296, 103473. [Google Scholar] [CrossRef]

- Dwivedi, R.; Dave, D.; Naik, H.; Singhal, S.; Omer, R.; Patel, P.; Qian, B.; Wen, Z.; Shah, T.; Morgan, G.; et al. Explainable AI (XAI): Core Ideas, Techniques, and Solutions. ACM Comput. Surv. 2023, 55, 194. [Google Scholar] [CrossRef]

- Hussain, F.; Hussain, R.; Hossain, E. Explainable Artificial Intelligence (XAI): An Engineering Perspective. 2021.

- Barredo Arrieta, A.; Díaz-Rodríguez, N.; Del Ser, J.; Bennetot, A.; Tabik, S.; Barbado, A.; Garcia, S.; Gil-Lopez, S.; Molina, D.; Benjamins, R.; et al. Explainable Artificial Intelligence (XAI): Concepts, Taxonomies, Opportunities and Challenges toward Responsible AI. Inf. Fusion 2020, 58, 82–115. [Google Scholar] [CrossRef]

- Kuznietsov, A.; Gyevnar, B.; Wang, C.; Peters, S.; Albrecht, S.V. Explainable AI for Safe and Trustworthy Autonomous Driving: A Systematic Review. IEEE Trans. Intell. Transp. Syst. 2024, 25, 19342–19364. [Google Scholar] [CrossRef]

- Ali, S.; Abuhmed, T.; El-Sappagh, S.; Muhammad, K.; Alonso-Moral, J.M.; Confalonieri, R.; Guidotti, R.; Del Ser, J.; Díaz-Rodríguez, N.; Herrera, F. Explainable Artificial Intelligence (XAI): What We Know and What Is Left to Attain Trustworthy Artificial Intelligence. Inf. Fusion 2023, 99, 101805. [Google Scholar] [CrossRef]

- Napkin AI - The Visual AI for Business Storytelling. Available online: https://www.napkin.ai (accessed on 27 December 2024).

- Rudin, C. Stop Explaining Black Box Machine Learning Models for High Stakes Decisions and Use Interpretable Models Instead. Nat. Mach. Intell. 2019, 1, 206–215. [Google Scholar] [CrossRef] [PubMed]

- Guidotti, R.; Monreale, A.; Ruggieri, S.; Turini, F.; Giannotti, F.; Pedreschi, D. A Survey of Methods for Explaining Black Box Models. ACM Comput. Surv. 2018, 51, 93. [Google Scholar] [CrossRef]

- Derrick, A. The 4 Key Principles of Explainable AI Applications. Available online: https://virtualitics.com/the-4-key-principles-of-explainable-ai-applications/ (accessed on 26 December 2024).

- Optimus - Gen 2 | Tesla; 2023.

- Biba, J.; Urwin, M. Tesla’s Robot, Optimus: Everything We Know. Available online: https://builtin.com/robotics/tesla-robot (accessed on 27 December 2024).

- LeNail, A. NN-SVG: Publication-Ready Neural Network Architecture Schematics. J. Open Source Softw. 2019, 4, 747. [Google Scholar] [CrossRef]

- Xiong, H.; Li, X.; Zhang, X.; Chen, J.; Sun, X.; Li, Y.; Sun, Z.; Du, M. Towards Explainable Artificial Intelligence (XAI): A Data Mining Perspective. 2024.

- Wang, S.; Zhou, T.; Bilmes, J. Bias Also Matters: Bias Attribution for Deep Neural Network Explanation; May 24 2019.

- Perteneder, F. Understanding Black-Box ML Models with Explainable AI. Available online: https://www.dynatrace.com/news/blog/explainable-ai/ (accessed on 29 December 2024).

- Buhrmester, V.; Münch, D.; Arens, M. Analysis of Explainers of Black Box Deep Neural Networks for Computer Vision: A Survey. Mach. Learn. Knowl. Extr. 2021, 3, 966–989. [Google Scholar] [CrossRef]

- Hassija, V.; Chamola, V.; Mahapatra, A.; Singal, A.; Goel, D.; Huang, K.; Scardapane, S.; Spinelli, I.; Mahmud, M.; Hussain, A. Interpreting Black-Box Models: A Review on Explainable Artificial Intelligence. Cogn. Comput. 2024, 16, 45–74. [Google Scholar] [CrossRef]

- Xu, B.; Yang, G. Interpretability Research of Deep Learning: A Literature Survey. Inf. Fusion 2025, 115, 102721. [Google Scholar] [CrossRef]

- Lyssenko, M.; Pimplikar, P.; Bieshaar, M.; Nozarian, F.; Triebel, R. A Safety-Adapted Loss for Pedestrian Detection in Automated Driving. 2024.

- Loyola-González, O. Black-Box vs. White-Box: Understanding Their Advantages and Weaknesses From a Practical Point of View. IEEE Access 2019, 7, 154096–154113. [Google Scholar] [CrossRef]

- Murdoch, W.J.; Singh, C.; Kumbier, K.; Abbasi-Asl, R.; Yu, B. Interpretable Machine Learning: Definitions, Methods, and Applications. Proc. Natl. Acad. Sci. 2019, 116, 22071–22080. [Google Scholar] [CrossRef] [PubMed]

- Molnar, C. 8.4 Functional Decomposition | Interpretable Machine Learning; 2024; ISBN 978-0-244-76852-2.

- Kawaguchi, K. Deep Learning without Poor Local Minima. 2016.

- Datta, A.; Sen, S.; Zick, Y. Algorithmic Transparency via Quantitative Input Influence: Theory and Experiments with Learning Systems. In Proceedings of the 2016 IEEE Symposium on Security and Privacy (SP); May 2016; pp. 598–617.

- Liu, D.Y. Explainable AI Techniques for Transparency in Autonomous Vehicle Decision-Making. J. AI Healthc. Med. 2023, 3, 114–134. [Google Scholar]

- Chaudhary, G. Unveiling the Black Box: Bringing Algorithmic Transparency to AI. Masaryk Univ. J. Law Technol. 2024, 18, 93–122. [Google Scholar] [CrossRef]

- Alicioglu, G.; Sun, B. A Survey of Visual Analytics for Explainable Artificial Intelligence Methods. Comput. Graph. 2022, 102, 502–520. [Google Scholar] [CrossRef]

- Rjoub, G.; Bentahar, J.; Wahab, O.A.; Mizouni, R.; Song, A.; Cohen, R.; Otrok, H.; Mourad, A. A Survey on Explainable Artificial Intelligence for Cybersecurity. IEEE Trans. Netw. Serv. Manag. 2023, 20, 5115–5140. [Google Scholar] [CrossRef]

- Basheer, K.C.S. Understanding Generalized Additive Models (GAMs): A Comprehensive Guide. Anal. Vidhya 2023.

- Espinoza, J.; Delpiano, R. Statistical Models of Interactions between Vehicles during Overtaking Maneuvers. Transp. Res. Rec. J. Transp. Res. Board 2023, 2678. [Google Scholar] [CrossRef]

- Selvaraju, R.R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; Batra, D. Grad-CAM: Visual Explanations From Deep Networks via Gradient-Based Localization; 2017; pp. 618–626.

- Yamauchi, T.; Ishikawa, M. Spatial Sensitive GRAD-CAM: Visual Explanations for Object Detection by Incorporating Spatial Sensitivity. In Proceedings of the 2022 IEEE International Conference on Image Processing (ICIP); October 2022; pp. 256–260.

- Quattrocchio, L. Integration of Uncertainty into Explainability Methods to Enhance AI Transparency in Brain MRI Classification. laurea, Politecnico di Torino, 2024.

- Letham, B.; Rudin, C.; McCormick, T.H.; Madigan, D. Interpretable Classifiers Using Rules and Bayesian Analysis: Building a Better Stroke Prediction Model. Ann. Appl. Stat. 2015, 9, 1350–1371. [Google Scholar] [CrossRef]

- Rjoub, G.; Bentahar, J.; Wahab, O.A. Explainable Trust-Aware Selection of Autonomous Vehicles Using LIME for One-Shot Federated Learning. In Proceedings of the 2023 International Wireless Communications and Mobile Computing (IWCMC); June 2023; pp. 524–529.

- Adadi, A.; Berrada, M. Peeking Inside the Black-Box: A Survey on Explainable Artificial Intelligence (XAI). IEEE Access 2018, 6, 52138–52160. [Google Scholar] [CrossRef]

- Lundberg, S.M.; Lee, S.-I. A Unified Approach to Interpreting Model Predictions. In Proceedings of the 31st International Conference on Neural Information Processing Systems; Curran Associates Inc.: Red Hook, NY, USA, December 4 2017; pp. 4768–4777.

- Onyekpe, U.; Lu, Y.; Apostolopoulou, E.; Palade, V.; Eyo, E.U.; Kanarachos, S. Explainable Machine Learning for Autonomous Vehicle Positioning Using SHAP. In Explainable AI: Foundations, Methodologies and Applications; Mehta, M., Palade, V., Chatterjee, I., Eds.; Springer International Publishing: Cham, 2023; pp. 157–183; ISBN 978-3-031-12807-3.

- Simonyan, K.; Vedaldi, A.; Zisserman, A. Deep Inside Convolutional Networks: Visualising Image Classification Models and Saliency Maps. 2014.

- Karatsiolis, S.; Kamilaris, A. A Model-Agnostic Approach for Generating Saliency Maps to Explain Inferred Decisions of Deep Learning Models. 2022.

- Cooper, J.; Arandjelović, O.; Harrison, D.J. Believe the HiPe: Hierarchical Perturbation for Fast, Robust, and Model-Agnostic Saliency Mapping. Pattern Recognit. 2022, 129, 108743. [Google Scholar] [CrossRef]

- Yang, S.; Berdine, G. Interpretable Artificial Intelligence (AI) – Saliency Maps. Southwest Respir. Crit. Care Chron. 2023, 11, 31–37. [Google Scholar] [CrossRef]

- Ding, N.; Zhang, C.; Eskandarian, A. SalienDet: A Saliency-Based Feature Enhancement Algorithm for Object Detection for Autonomous Driving. IEEE Trans. Intell. Veh. 2024, 9, 2624–2635. [Google Scholar] [CrossRef]

- Salih, A.; Raisi-Estabragh, Z.; Galazzo, I.B.; Radeva, P.; Petersen, S.E.; Menegaz, G.; Lekadir, K. A Perspective on Explainable Artificial Intelligence Methods: SHAP and LIME. Adv. Intell. Syst. 2024, 2400304. [Google Scholar] [CrossRef]

- Dieber, J.; Kirrane, S. Why Model Why? Assessing the Strengths and Limitations of LIME. 2020.

- Tursunalieva, A.; Alexander, D.L.J.; Dunne, R.; Li, J.; Riera, L.; Zhao, Y. Making Sense of Machine Learning: A Review of Interpretation Techniques and Their Applications. Appl. Sci. 2024, 14, 496. [Google Scholar] [CrossRef]

- Retzlaff, C.O.; Angerschmid, A.; Saranti, A.; Schneeberger, D.; Röttger, R.; Müller, H.; Holzinger, A. Post-Hoc vs Ante-Hoc Explanations: xAI Design Guidelines for Data Scientists. Cogn. Syst. Res. 2024, 86, 101243. [Google Scholar] [CrossRef]

- Linardatos, P.; Papastefanopoulos, V.; Kotsiantis, S. Explainable AI: A Review of Machine Learning Interpretability Methods. Entropy 2021, 23, 18. [Google Scholar] [CrossRef]

- Tahir, H.A.; Alayed, W.; Hassan, W.U.; Haider, A. A Novel Hybrid XAI Solution for Autonomous Vehicles: Real-Time Interpretability Through LIME–SHAP Integration. Sensors 2024, 24, 6776. [Google Scholar] [CrossRef]

- Ortigossa, E.S.; Gonçalves, T.; Nonato, L.G. EXplainable Artificial Intelligence (XAI)—From Theory to Methods and Applications. IEEE Access 2024, 12, 80799–80846. [Google Scholar] [CrossRef]

- Basheer, K.C.S. Understanding Generalized Additive Models (GAMs): A Comprehensive Guide. Anal. Vidhya 2023.

- Y, S.; Challa, M. A Comparative Analysis of Explainable AI Techniques for Enhanced Model Interpretability. In Proceedings of the 2023 3rd International Conference on Pervasive Computing and Social Networking (ICPCSN); June 2023; pp. 229–234. [Google Scholar]

- Vignesh. Everything You Need to Know about LIME. Anal. Vidhya 2022.

- Santos, M.R.; Guedes, A.; Sanchez-Gendriz, I. SHapley Additive exPlanations (SHAP) for Efficient Feature Selection in Rolling Bearing Fault Diagnosis. Mach. Learn. Knowl. Extr. 2024, 6, 316–341. [Google Scholar] [CrossRef]

- Slack, D.; Hilgard, S.; Jia, E.; Singh, S.; Lakkaraju, H. Fooling LIME and SHAP: Adversarial Attacks on Post Hoc Explanation Methods. In Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society; Association for Computing Machinery: New York, NY, USA, February 7 2020; pp. 180–186.

- Ghorbani, A.; Abid, A.; Zou, J. Interpretation of Neural Networks Is Fragile. Proc. AAAI Conf. Artif. Intell. 2019, 33, 3681–3688. [Google Scholar] [CrossRef]

- Chattopadhyay, A.; Sarkar, A.; Howlader, P.; Balasubramanian, V.N. Grad-CAM++: Improved Visual Explanations for Deep Convolutional Networks. In Proceedings of the 2018 IEEE Winter Conference on Applications of Computer Vision (WACV); March 2018; pp. 839–847.

- Ahern, I.; Noack, A.; Guzman-Nateras, L.; Dou, D.; Li, B.; Huan, J. NormLime: A New Feature Importance Metric for Explaining Deep Neural Networks. 2019.

- Ribeiro, M.T.; Singh, S.; Guestrin, C. Anchors: High-Precision Model-Agnostic Explanations. Proc. AAAI Conf. Artif. Intell. 2018, 32. [Google Scholar] [CrossRef]

- Montavon, G.; Lapuschkin, S.; Binder, A.; Samek, W.; Müller, K.-R. Explaining Nonlinear Classification Decisions with Deep Taylor Decomposition. Pattern Recognit. 2017, 65, 211–222. [Google Scholar] [CrossRef]

- Huang, J.; Wang, Z.; Li, D.; Liu, Y. The Analysis and Development of an XAI Process on Feature Contribution Explanation. In Proceedings of the 2022 IEEE International Conference on Big Data (Big Data); December 2022; pp. 5039–5048.

- Agarwal, G. Explainable AI (XAI): Permutation Feature Importance. Medium 2022.

- Majella. XAI Problems Part 1 of 3: Feature Importances Are Not Good Enough. Elula 2021.

- Cambria, E.; Malandri, L.; Mercorio, F.; Mezzanzanica, M.; Nobani, N. A Survey on XAI and Natural Language Explanations. Inf. Process. Manag. 2023, 60, 103111. [Google Scholar] [CrossRef]

- Andres, A.; Martinez-Seras, A.; Laña, I.; Del Ser, J. On the Black-Box Explainability of Object Detection Models for Safe and Trustworthy Industrial Applications. Results Eng. 2024, 24, 103498. [Google Scholar] [CrossRef]

- Shylenok, D.Y. Explainable AI for Transparent Decision-Making in Autonomous Vehicle Systems. Afr. J. Artif. Intell. Sustain. Dev. 2023, 3, 320–341. [Google Scholar]

- Moradi, M.; Yan, K.; Colwell, D.; Samwald, M.; Asgari, R. Model-Agnostic Explainable Artificial Intelligence for Object Detection in Image Data. Eng. Appl. Artif. Intell. 2024, 137, 109183. [Google Scholar] [CrossRef]

- Chamola, V.; Hassija, V.; Sulthana, A.R.; Ghosh, D.; Dhingra, D.; Sikdar, B. A Review of Trustworthy and Explainable Artificial Intelligence (XAI). IEEE Access 2023, 11, 78994–79015. [Google Scholar] [CrossRef]

- Greifenstein, M. Factors Influencing the User Behaviour of Shared Autonomous Vehicles (SAVs): A Systematic Literature Review. Transp. Res. Part F Traffic Psychol. Behav. 2024, 100, 323–345. [Google Scholar] [CrossRef]

- Liao, Q.V.; Gruen, D.; Miller, S. Questioning the AI: Informing Design Practices for Explainable AI User Experiences. Proc. 2020 CHI Conf. Hum. Factors Comput. Syst. 2020, 1–15. [Google Scholar] [CrossRef]

- Shin, D. The Effects of Explainability and Causability on Perception, Trust, and Acceptance: Implications for Explainable AI. Int. J. Hum.-Comput. Stud. 2021, 146, 102551. [Google Scholar] [CrossRef]

- SmythOS - Explainable AI in Autonomous Vehicles: Building Transparency and Trust on the Road. 2024.

- Ethics Guidelines for Trustworthy AI | Shaping Europe’s Digital Future. Available online: https://digital-strategy.ec.europa.eu/en/library/ethics-guidelines-trustworthy-ai (accessed on 11 January 2025).

- Nasim, M.A.A.; Biswas, P.; Rashid, A.; Biswas, A.; Gupta, K.D. Trustworthy XAI and Application. 2024.

- Gunning, D.; Vorm, E.; Wang, Y.; Turek, M. DARPA’s Explainable AI (XAI) Program: A Retrospective. 2021.

- Cugny, R.; Aligon, J.; Chevalier, M.; Roman Jimenez, G.; Teste, O. AutoXAI: A Framework to Automatically Select the Most Adapted XAI Solution. In Proceedings of the 31st ACM International Conference on Information & Knowledge Management; Association for Computing Machinery: New York, NY, USA, October 17 2022; pp. 315–324.

- Gadekallu, T.R.; Maddikunta, P.K.R.; Boopathy, P.; Deepa, N.; Chengoden, R.; Victor, N.; Wang, W.; Wang, W.; Zhu, Y.; Dev, K. XAI for Industry 5.0 - Concepts, Opportunities, Challenges and Future Directions. IEEE Open J. Commun. Soc. 2024, 1–1. [CrossRef]

- Lundberg, S.M.; Nair, B.; Vavilala, M.S.; Horibe, M.; Eisses, M.J.; Adams, T.; Liston, D.E.; Low, D.K.-W.; Newman, S.-F.; Kim, J.; et al. Explainable Machine-Learning Predictions for the Prevention of Hypoxaemia during Surgery. Nat. Biomed. Eng. 2018, 2, 749–760. [Google Scholar] [CrossRef] [PubMed]

- Holzinger, A.; Malle, B.; Saranti, A.; Pfeifer, B. Towards Multi-Modal Causability with Graph Neural Networks Enabling Information Fusion for Explainable AI. Inf. Fusion 2021, 71, 28–37. [Google Scholar] [CrossRef]

- European Approach to Artificial Intelligence | Shaping Europe’s Digital Future. Available online: https://digital-strategy.ec.europa.eu/en/policies/european-approach-artificial-intelligence (accessed on 14 January 2025).

- Chen, L.; Sinavski, O.; Hünermann, J.; Karnsund, A.; Willmott, A.J.; Birch, D.; Maund, D.; Shotton, J. Driving with LLMs: Fusing Object-Level Vector Modality for Explainable Autonomous Driving. 2023.

- Greyling, C. Using LLMs For Autonomous Vehicles. Medium 2024.

- Mankodiya, H.; Obaidat, M.S.; Gupta, R.; Tanwar, S. XAI-AV: Explainable Artificial Intelligence for Trust Management in Autonomous Vehicles. In Proceedings of the 2021 International Conference on Communications, Computing, Cybersecurity, and Informatics (CCCI); October 2021; pp. 1–5.

- Salehi, J. Explainable AI for Real-Time Threat Analysis in Autonomous Vehicle Networks. Afr. J. Artif. Intell. Sustain. Dev. 2023, 3, 294–315. [Google Scholar]

| Sensor Type | Definition | Examples |
|---|---|---|
| Exteroceptive Sensor | It perceives the external environment, detecting objects, obstacles, light intensity, and other relevant features essential for safe navigation. | |
| Proprioceptive Sensor | It measures internal values and gathers information about the dynamic state of a self-driving vehicle, such as its position, speed, and acceleration, which is essential for maintaining stability and ensuring precise control of the vehicle motion. | |

| Exteroceptive Sensors | Advantages | Disadvantages |
|---|---|---|
| Camera | | |
| Lidar | | |
| Radar | | |
| Ultrasonic | | |

| Fusion Architecture | Advantages | Disadvantages |
|---|---|---|
| Centralized Fusion | | |
| Decentralized Fusion | | |
| Distributed Fusion | | |

| Algorithms | Descriptions | Applications | Ref. |
|---|---|---|---|
| UKF | The Unscented Kalman Filter (UKF) is an advanced adaptation of the Kalman Filter (KF) algorithm for nonlinear state estimation. It propagates a deterministic set of sigma points through the nonlinear models instead of relying on the Jacobian-based linearization of the Extended Kalman Filter (EKF), which typically yields more accurate estimates. Its strengths and limitations include: | | [111] [112] [115] |
| Particle Filter (PF) | PF is a recursive algorithm that estimates the state of a system by representing its probability distribution with a set of random samples (particles), making it well suited to nonlinear and non-Gaussian problems. Its strengths and limitations include: | | [116] [117] [119] |
| Dempster-Shafer Theory (DST) | DST is a mathematical framework for modeling uncertainty in real-world problems. It combines evidence from different sources, even when that evidence is uncertain or incomplete, into a degree of belief in a hypothesis that can then support decision-making. Its strengths and limitations include: | | [113] [120] [121] |
| YOLO | You Only Look Once (YOLO) is a real-time object detection algorithm that uses a single CNN (single-stage detector) to predict bounding boxes and class probabilities directly from an image. Several versions of YOLO have been released, each offering improved precision; the most recent at the time of writing is YOLOv11 [137]. Its strengths and limitations include: | | [11] [108] [114] [122] |
| Faster R-CNN | Faster Region-based Convolutional Neural Network (Faster R-CNN) is a two-stage object detection algorithm: a Region Proposal Network (RPN) first generates candidate object regions, and a CNN then classifies and refines them to detect and localize objects in complex real-world images. Its strengths and limitations include: | | [76] [114] [123] [124] [125] |
| PointNet | PointNet is a deep neural network that learns global features directly from unordered point clouds, without the need for voxelization. It is permutation-invariant because per-point features are aggregated with a symmetric function (max pooling), and it is commonly used as the feature-extraction backbone of two-stage 3D object detectors. Its strengths and limitations include: | | [126] [127] [128] [129] |
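
To make the Dempster-Shafer Theory entry in the table above more concrete, the following Python sketch implements Dempster's rule of combination for two evidence sources. The scenario is hypothetical: a camera and a radar each assign basic probability masses to the hypotheses "car", "pedestrian", and "either" (which expresses ignorance). The mass values, the function names `combine_dempster` and `belief`, and the two-class frame of discernment are illustrative assumptions, not the setup of any cited work.

```python
from itertools import product


def combine_dempster(m1, m2):
    """Fuse two mass functions with Dempster's rule of combination.

    Each mass function maps a hypothesis (a frozenset of class labels) to its
    basic probability mass; the masses of each source sum to 1.
    Returns the fused mass function and the conflict K between the sources.
    """
    fused = {}
    conflict = 0.0
    for (a, mass_a), (b, mass_b) in product(m1.items(), m2.items()):
        common = a & b
        if common:
            # Both sources support the hypotheses contained in `common`
            fused[common] = fused.get(common, 0.0) + mass_a * mass_b
        else:
            # Disjoint hypotheses: this product counts as conflicting evidence
            conflict += mass_a * mass_b
    if conflict >= 1.0:
        raise ValueError("total conflict: Dempster's rule is undefined")
    # Renormalize by (1 - K) so that the fused masses sum to 1 again
    return {h: m / (1.0 - conflict) for h, m in fused.items()}, conflict


def belief(masses, hypothesis):
    """Bel(A): total mass committed to subsets of hypothesis A."""
    return sum(m for h, m in masses.items() if h <= hypothesis)


# Hypothetical camera and radar evidence about one detected object
CAR, PED = frozenset({"car"}), frozenset({"pedestrian"})
EITHER = CAR | PED  # mass on EITHER means "cannot tell which"
camera = {CAR: 0.6, PED: 0.1, EITHER: 0.3}
radar = {CAR: 0.5, PED: 0.2, EITHER: 0.3}

fused, conflict = combine_dempster(camera, radar)
print(f"conflict K = {conflict:.2f}")
for hypothesis, mass in fused.items():
    print(sorted(hypothesis), round(mass, 3))
print("Bel(car) =", round(belief(fused, CAR), 3))
```

With these illustrative masses the conflict is K = 0.17 and the fused belief in "car" rises to about 0.759, above either source alone (0.6 and 0.5), because both sensors lean the same way. When K approaches 1, the renormalization by (1 - K) can produce counterintuitive results (Zadeh's well-known example), which is one reason alternative combination rules have been proposed.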

| Techniques | Explanation Level: Global | Explanation Level: Local | Implementation Level: Ante-hoc | Implementation Level: Post-hoc | Model Dependency: Agnostic | Model Dependency: Specific | Data Type: Tabular | Data Type: Image | Data Type: Textual |
|---|---|---|---|---|---|---|---|---|---|
| Decision Tree | ● | ● | ● | - | ● | - | ● | - | - |
| Linear Model | ● | - | ● | - | ● | - | ● | - | - |
| BRL | ● | - | ● | - | - | ● | ● | - | - |
| GAM | ● | - | ● | - | - | ● | ● | - | - |
| LIME | - | ● | - | ● | ● | - | ● | ● | ● |
| SHAP | ● | ● | - | ● | ● | - | ● | ● | ● |
| Saliency Maps * | - | ● | - | ● | ● | ● | - | ● | - |
| Grad-CAM | - | ● | - | ● | ● | - | - | ● | - |
| Anchors | - | ● | - | ● | ● | - | ● | ● | ● |
| DeepLIFT | ● | ● | - | ● | ● | - | - | ● | ● |
| Counterfactuals | - | ● | - | ● | ● | - | ● | ● | ● |
| Sensitivity Analysis * | ● | - | - | ● | ● | ● | ● | - | - |
| Distillation | ● | - | - | ● | - | ● | ● | ● | ● |
| PDP | ● | ● | - | - | ● | - | ● | - | - |
| Feature Importance | ● | ● | - | ● | ● | - | ● | ● | ● |
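
The SHAP row in the table above rests on Shapley values from cooperative game theory, which split a prediction fairly among the input features. As a minimal sketch, assuming a single baseline input represents "absent" features, the exact values can be computed by enumerating every feature coalition. The `shap` library instead typically averages over a background dataset and relies on approximations such as KernelSHAP or TreeSHAP; the toy model and all names below are hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values of model(x), measured against a baseline input.

    The value of a coalition S is the model output when the features in S take
    their values from x and all other features take the baseline value.
    Every coalition is enumerated, so this is only practical for a few features.
    """
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):  # coalition sizes 0 .. n-1
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i when it joins coalition s
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical scoring model with an interaction between features 0 and 2
def toy_model(z):
    return 2.0 * z[0] + 3.0 * z[1] + z[0] * z[2]


x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
print(phi)
# Efficiency property: contributions add up to f(x) - f(baseline)
print(sum(phi), toy_model(x) - toy_model(baseline))
```

Feature 1 enters the model linearly and receives 3 × 2 = 6, while the interaction term x0·x2 = 3 is split equally between features 0 and 2, giving 3.5 and 1.5. The three values sum to f(x) - f(baseline) = 11, as the efficiency property requires. Because the number of coalitions grows as 2^n, exact enumeration is feasible only for a handful of features, which is why practical SHAP tools approximate it.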

| Techniques | Strengths | Limitations |
|---|---|---|
| Decision Tree | | |
| Linear Model | | |
| BRL | | |
| GAM | | |
| LIME | | |
| SHAP | | |
| Saliency Maps | | |
| Grad-CAM | | |
| Anchors | | |
| DeepLIFT | | |
| Counterfactuals | | |
| Sensitivity Analysis | | |
| Distillation | | |
| PDP | | |
| Feature Importance | | |
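
The Feature Importance technique listed above covers several methods. One widely used, model-agnostic variant is permutation feature importance, which measures how much a model's error grows when the values of a single feature are randomly shuffled, breaking that feature's link to the target while preserving its distribution. The stdlib-only sketch below assumes a regression model scored with mean squared error, a hypothetical linear model, and synthetic data; scikit-learn provides a fuller implementation as `sklearn.inspection.permutation_importance`.

```python
import random


def mean_squared_error(y_true, y_pred):
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def permutation_importance(model, X, y, n_repeats=5, seed=0):
    """Mean increase in MSE when each feature column is randomly shuffled.

    A large increase marks a feature the model relies on; a value near zero
    means the predictions barely depend on that feature.
    """
    rng = random.Random(seed)
    base_error = mean_squared_error(y, [model(row) for row in X])
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # shuffle feature j only
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            perm_error = mean_squared_error(y, [model(row) for row in X_perm])
            increases.append(perm_error - base_error)
        importances.append(sum(increases) / n_repeats)
    return importances


# Hypothetical data: the target depends only on feature 0; feature 1 is noise
X = [[float(i), float(i % 7)] for i in range(50)]


def linear_model(row):
    return 0.5 * row[0]


y = [linear_model(row) for row in X]
print(permutation_importance(linear_model, X, y))
```

Because the model never reads feature 1, shuffling it leaves every prediction unchanged and its importance is exactly 0, whereas shuffling feature 0 increases the error. Permutation importance can be misleading when features are strongly correlated, since shuffling one of them then produces unrealistic input combinations.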

| Criteria | Explanations |
|---|---|
| Human Agency and Oversight | AI systems should enhance human decision-making and support fundamental rights while ensuring adequate oversight, rather than restricting or misleading human autonomy. This can be achieved through human-in-the-loop, human-on-the-loop, and human-in-command approaches. |
| Technical Robustness and Safety | AI systems must be resilient, secure, and safe, with contingency plans in place to address system failures or malfunctions. They must also be accurate, reliable, and reproducible to minimize and prevent unintentional harm. |
| Privacy and Data Governance | In addition to safeguarding privacy and data protection, effective data governance mechanisms must be established, ensuring data quality, integrity, and authorized access. End-users should also maintain full control over their personal information, ensuring that such data is not used in ways that could be detrimental or harmful to their interests. |
| Transparency | Data, systems, and AI business models must be transparent, with traceability mechanisms ensuring accountability. Moreover, AI systems and their decisions should be explained in a way that is tailored to the relevant stakeholders, and users must be aware that they are interacting with AI and be informed of its capabilities and limitations. |
| Diversity, Non-Discrimination, and Fairness | Unfair bias must be eliminated to prevent negative outcomes such as the marginalization of vulnerable groups and the reinforcement of prejudice. AI systems should be accessible to all, regardless of disability, and should involve relevant stakeholders throughout their lifecycle to promote inclusivity. |
| Societal and Environmental Well-Being | AI systems must be designed to benefit all humanity, including future generations, while prioritizing sustainability and environmental responsibility. Additionally, their impact on the environment, other living beings, and society must be thoroughly evaluated and considered. |
| Accountability | Mechanisms must be established to ensure accountability for AI systems and their outcomes. Auditability, which allows for the evaluation of algorithms, data, and design processes, is essential, particularly in critical applications. In addition, accessible avenues for compensation should be provided. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).