1. Introduction and Scope of the Current Study

To date, there are numerous supervised machine learning algorithms, each with its own strengths and weaknesses. For example, K-Nearest Neighbor (KNN), one of the earliest and perhaps most straightforward algorithms for supervised learning, can train itself in constant time, i.e., as soon as a labelled input is provided, the model can learn it instantly without further processing. However, it has a testing complexity of $O(nd)$, where $n$ is the total number of training data points and $d$ is the dimensionality of the feature space. This is a rather daunting task, especially when we have to deal with a large set of training data, as we need to calculate the distance between the new point and all previously classified points ([1]). Using techniques like KD Tree and Ball Tree, the average running time in the testing phase can be improved up to $O(d \log n)$ at the expense of a costlier time complexity in the training phase ([2, 3]). However, the worst-case time complexity is still $O(nd)$ ([2, 3]).
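The brute-force search described above can be sketched as follows. This is a minimal illustration rather than the implementation used in this study: the function name `knn_predict` and the parameter `k_neighbors` are hypothetical, and Euclidean distance with majority voting is assumed.

```python
import heapq
import math
from collections import Counter


def knn_predict(train_X, train_y, query, k_neighbors=3):
    """Classify `query` by majority vote among its nearest training points."""
    # One distance per stored point: n computations, each costing O(d).
    distances = [
        (math.dist(x, query), label) for x, label in zip(train_X, train_y)
    ]
    # Keep only the k_neighbors smallest distances.
    nearest = heapq.nsmallest(k_neighbors, distances, key=lambda pair: pair[0])
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


if __name__ == "__main__":
    X = [(0.0, 0.0), (0.0, 1.0), (5.0, 5.0), (6.0, 5.0)]
    y = ["a", "a", "b", "b"]
    print(knn_predict(X, y, (0.2, 0.1)))
```

"Training" here amounts to appending a point to `train_X` and `train_y`, whereas every prediction touches all stored points, which is where the linear dependence on the training set size comes from.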

Apart from KNN, another widely studied supervised learning algorithm is the Support Vector Machine (SVM), which aims to construct maximum-margin hyperplanes amongst the training data points ([4]). The time complexity of the SVM method depends, among other things, upon the algorithm used for optimization (e.g., quadratic programming or gradient-based methods), the dimensionality of the data, the type of kernel function used, the number of support vectors, and the size of the training dataset. The worst-case training time complexities of linear and non-linear SVMs are expressed in terms of the number of iterations $T$, the size of the training sample $n$ and the dimensionality of the feature space $d$ ([5, 6]). The time complexities of the testing phase of SVMs are found to be $O(d)$ for a linear kernel and $O(sd)$ for a non-linear kernel, where $s$ is the number of support vectors.

Another algorithm that is frequently used in the classification of labelled data is Random Forest (RF), an ensemble learning technique that works by constructing multiple decision trees in the training phase, where each tree is trained with a subset of the total data ([7]). To predict the final output of the RF in the testing phase, majority voting is used to combine the results of the individual decision trees. The time complexity of RF depends upon the number of trees in the forest ($t$), the sample size ($n$), the dimensionality of the feature space ($d$) and the tree height ($h$), among other things. The training time complexity of RF is a function of these quantities, while the testing complexity is $O(th)$ per sample, since each of the $t$ trees is traversed to a depth of at most $h$.

Another important algorithm for classification is Logistic Regression (LR), which is used primarily for binary classification, although the algorithm can be easily adapted to handle multiclass classification problems as well. The training time complexity of LR depends, among other things, on the number of training samples, the number of iterations and the dimensionality of the feature space, where the number of iterations depends further on the choice of optimization algorithm (stochastic gradient descent, batch gradient descent, or the like) ([8]). In a nutshell, the total training time complexity of LR can be summarized as $O(End)$, where $E$ is the number of iterations, $n$ is the size of the training sample and $d$ is the dimensionality of the input space. On the other hand, the testing time complexity of LR per sample is $O(d)$, as it simply involves computing the dot product of the weight vector ($w$) and the feature vector ($x$) ([8, 9]).
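To make the per-sample prediction cost concrete, the dot product mentioned above can be sketched as below. This is an illustrative sketch only: the function name `lr_predict_proba` is hypothetical, and the bias term `b` is an assumption added for completeness (the text mentions only $w$ and $x$).

```python
import math


def lr_predict_proba(w, b, x):
    """Return the predicted probability of the positive class."""
    # Dot product of weights and features: d multiply-adds.
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    # Logistic (sigmoid) function maps the score to (0, 1).
    return 1.0 / (1.0 + math.exp(-z))


if __name__ == "__main__":
    print(lr_predict_proba([1.0, -1.0], 0.0, [2.0, 2.0]))
```

The loop runs once per feature, so the work per prediction grows with $d$ only and is independent of the number of training samples.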

Perhaps one of the most popular and widely used supervised learning algorithms is the Neural Network (NN), which is inspired by the networks of biological neurons that comprise the human brain and is presently used extensively in image and video processing, natural language processing, healthcare, autonomous vehicle routing, finance, robotics, gaming and entertainment, marketing and customer service, anomaly detection, etc. The performance of an NN depends upon the number of hidden layers, the number of neurons per layer, the number of epochs, the input size, the input dimensions, etc. If there are $L$ hidden layers each having $M$ neurons, then the training time complexity of the NN can be expressed in terms of $L$, $M$, the number of epochs/iterations $E$, the sample size $n$ and the dimensionality of the input space $d$, while the testing time complexity per sample depends on $L$, $M$ and $d$ ([10, 11]). The choices of the number of hidden layers $L$, the number of neurons per layer $M$ and the number of epochs $E$ are somewhat arbitrary, i.e., we can choose any value for $L$, $M$ and $E$ from a seemingly infinite range.
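As a rough illustration of how $L$, $M$ and $d$ enter the cost, consider a fully connected network with a single output unit. Both of these are assumptions made here for illustration, and the resulting expression is not necessarily the form given in ([10, 11]). Counting multiply-add operations in one forward pass gives:

$$
\underbrace{dM}_{\text{input to first hidden layer}} + \underbrace{(L-1)M^{2}}_{\text{between hidden layers}} + \underbrace{M}_{\text{last hidden layer to output}} = O\!\left(dM + LM^{2}\right)
$$

Under the same assumptions, backpropagation costs a constant multiple of the forward pass per sample, so training over $E$ epochs on $n$ samples would take on the order of $E \cdot n \cdot (dM + LM^{2})$ operations.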

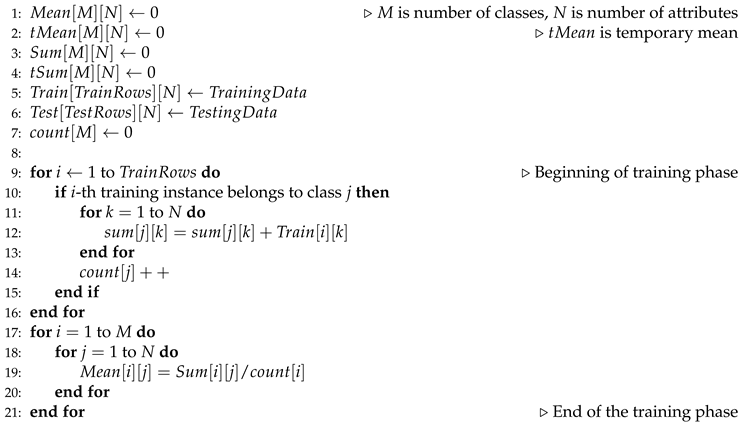

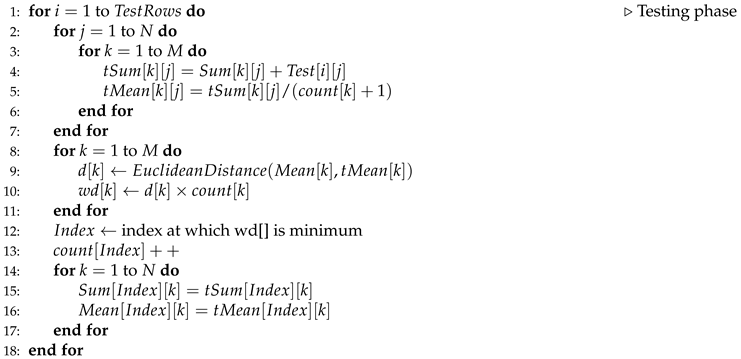

In fact, all of the above algorithms apart from KNN have one or more arbitrary parameters to be set, e.g., the number of iterations, the number of trees, the choice of optimization algorithm, the choice of kernel, the number of hidden layers, the number of nodes in each hidden layer, the choice of activation function, etc. Although KNN has deterministic training and testing complexities, which can be anticipated beforehand, its testing time complexity is linear in the size of the training set, which makes classification very time-consuming and renders KNN impractical for large training data. Here, we propose a new supervised learning algorithm that has a deterministic running time: its training time depends only on the number of inputs $n$, the dimensionality of the input space $d$ and the number of classes $k$ under consideration, and its per-sample classification time depends only on $d$ and $k$. For a specific problem, the dimensionality of the input space $d$ and the number of classes $k$ are fixed. Thus, the training time complexity of our proposed algorithm is linear in the number of inputs and, unlike KNN, the testing time complexity per sample is constant. So, whenever we need a lightweight deterministic algorithm like KNN that, unlike KNN, can classify new instances in constant time, we can use our proposed algorithm, which does not involve solving a complex quadratic programming problem (as in SVM), operations that require matrix multiplication (as in NN), or the like.

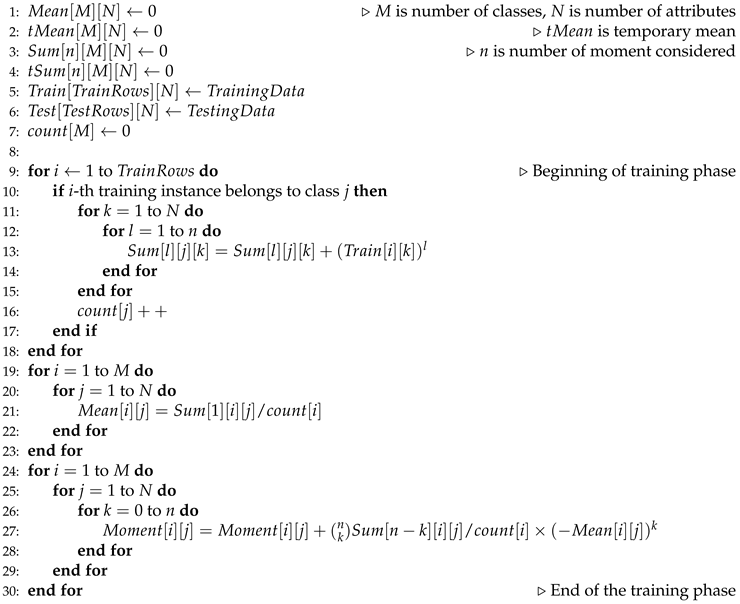

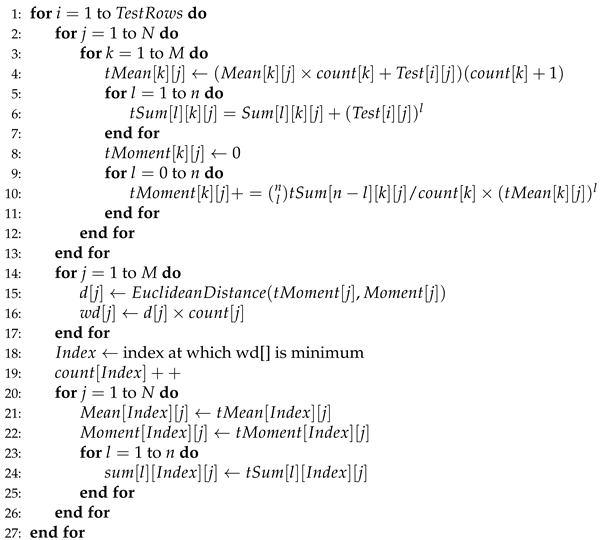

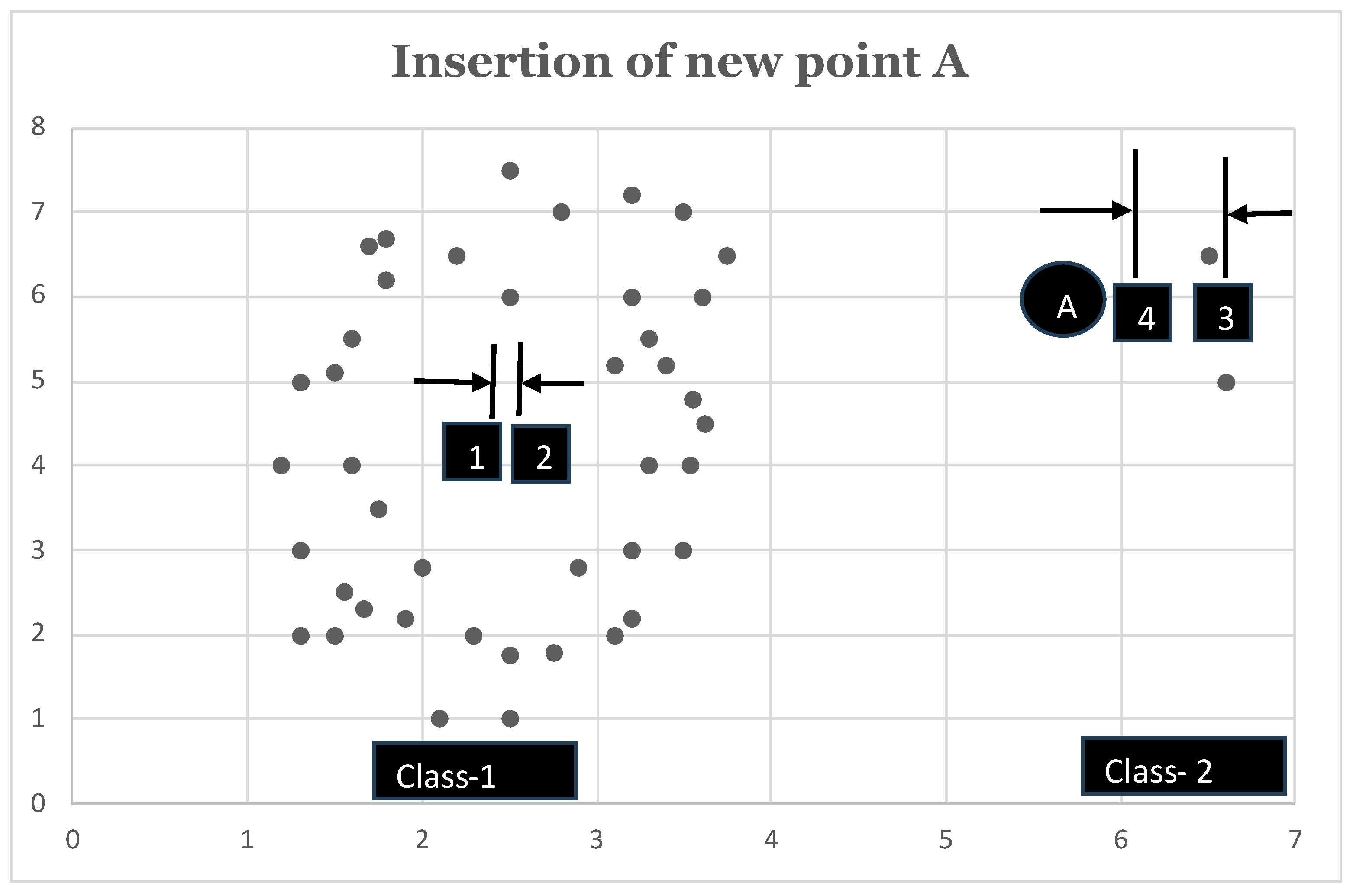

In the training phase, our proposed algorithm computes the n-th moment (raw or central) of each attribute of every class. In the testing phase, the algorithm temporarily includes the new input in each of the k classes and computes the new, temporary n-th moment of each attribute of each class resulting from such temporary inclusion. The new input is then classified into the class for which such inclusion causes the minimum displacement in the existing n-th moment of the underlying class attributes. Once the new input is classified, the n-th moments of the attributes of the respective class are updated to reflect the change, while the moments of all other classes are left unchanged. Thus, apart from classifying new inputs in constant time, our algorithm also evolves incrementally after the inclusion of every new data point, which makes the model dynamic in nature.
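The procedure described above can be sketched in a few lines of Python. This is a hedged illustration under stated assumptions, not the algorithm as specified later in the article: the class name `MomentClassifier` and its methods are hypothetical, only raw moments of a fixed order (`order`) are used, and the per-attribute displacements are combined by summing their absolute values, which is an aggregation rule assumed here.

```python
class MomentClassifier:
    """Hypothetical sketch: classify by minimum displacement of raw moments."""

    def __init__(self, order=1):
        self.order = order   # order of the raw moment (assumed fixed)
        self.counts = {}     # class label -> number of points absorbed
        self.moments = {}    # class label -> per-attribute raw moments

    def _with_point(self, label, x):
        # Raw moments of the class if x were included:
        # (N * m + x_i ** order) / (N + 1), an O(1) update per attribute.
        n = self.counts[label]
        return [
            (n * m + xi ** self.order) / (n + 1)
            for m, xi in zip(self.moments[label], x)
        ]

    def learn(self, x, label):
        """Training step: fold one labelled point into its class moments."""
        if label not in self.counts:
            self.counts[label] = 0
            self.moments[label] = [0.0] * len(x)
        self.moments[label] = self._with_point(label, x)
        self.counts[label] += 1

    def classify(self, x):
        """Testing step: pick the class whose moments shift the least."""
        best_label, best_shift, best_moments = None, float("inf"), None
        for label in self.counts:
            trial = self._with_point(label, x)  # temporary inclusion
            # Assumed aggregation: sum of absolute per-attribute shifts.
            shift = sum(abs(t - m) for t, m in zip(trial, self.moments[label]))
            if shift < best_shift:
                best_label, best_shift, best_moments = label, shift, trial
        # Only the winning class keeps the updated moments.
        self.moments[best_label] = best_moments
        self.counts[best_label] += 1
        return best_label


if __name__ == "__main__":
    clf = MomentClassifier(order=1)
    for point, label in [((1.0, 1.0), "a"), ((1.2, 0.8), "a"),
                         ((5.0, 5.0), "b"), ((5.2, 4.8), "b")]:
        clf.learn(point, label)
    print(clf.classify((1.1, 1.0)))
```

In this sketch each training point updates one moment per attribute of its own class, and each classification evaluates one moment per attribute for each of the k classes, so the per-sample testing cost does not depend on how many points have been seen.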

The rest of the article is organized as follows: Section 2 provides the definitions of raw and central moments as used in our analysis; Section 3 presents the new algorithm for supervised learning based upon the Minimum Displacement in Existing Moment (MDEM) technique proposed here; Section 4 discusses the time complexities of the proposed algorithm; Section 5 presents the methodology used for the empirical analysis; Section 6 describes the data; Section 7 presents the preprocessing techniques used for data cleansing; Section 8 discusses the empirical results and compares the performance of our proposed algorithm to that of various state-of-the-art supervised learning techniques, including K-Nearest Neighbor (KNN), Support Vector Machine (SVM), Random Forest (RF), Logistic Regression (LR) and Neural Network (NN); and finally, Section 9 concludes the article.