Submitted:

11 January 2025

Posted:

13 January 2025

Abstract

Keywords:

1. Introduction

- Tensor-sensitive NPU performance: Although NPUs can exhibit superior performance under optimal conditions, their efficiency depends heavily on tensor factors such as order, size, and shape. If tensors do not align with the NPU’s hardware structure, the performance benefit of ‘weight-stall’ computation cannot be fully realized, and performance may regress to GPU levels.

- Static NPU graph with a high generation cost: Existing mobile NPUs support only static computation graphs, which are incompatible with the dynamic workloads of LLMs. Due to inherent constraints in the NPU architecture, generating an optimal graph for the NPU is more complex than for the GPU. Moreover, the overhead of graph generation is non-negligible and grows with the size of each tensor, making it impractical to generate the NPU graph at runtime.

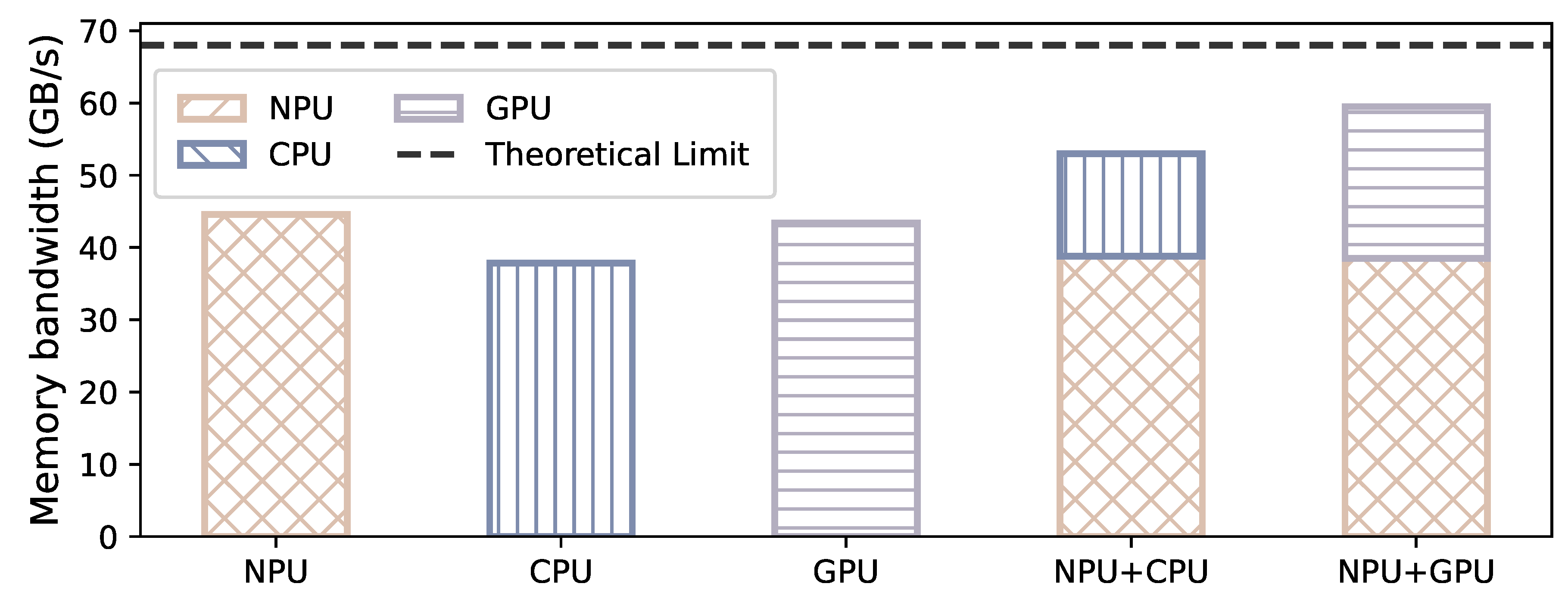

- Memory bandwidth restriction for a single processor: A single processing unit is insufficient to saturate the SoC’s memory bandwidth. For example, the GPU alone can utilize only about 40 to 45 GB/s of memory bandwidth in memory-intensive workloads. In contrast, employing two processing units concurrently can achieve a memory bandwidth of about 60 GB/s (the maximum memory bandwidth of the SoC is 68 GB/s).

2. Background & Related Work

| Vendor | SoC | GPU | GPU FP16 | NPU | NPU INT8 | NPU FP16 |
|---|---|---|---|---|---|---|
| Qualcomm | 8 Gen 3 | Adreno 750 | 2.8 TFLOPS | Hexagon | 73 TOPS | 36 TFLOPS |
| MTK | K9300 | Mali-G720 | 4.0 TFLOPS | APU 790 | 48 TOPS | 24 TFLOPS |
| Apple | A18 | Bionic GPU | 1.8 TFLOPS | Neural Engine | 35 TOPS | 17 TFLOPS |
| Nvidia | Orin | Ampere GPU | 10 TFLOPS | DLA | 87 TOPS | None |
| Tesla | FSD | FSD GPU | 0.6 TFLOPS | FSD D1 | 73 TOPS | None |

2.1. LLM Inference

2.2. Mobile-Side Heterogeneous SoC

| Framework | CPU INT | CPU FP | GPU INT | GPU FP | NPU INT | NPU FP | NPU GEMM Type | Independence from Sparse Activation | Accuracy | Performance |
|---|---|---|---|---|---|---|---|---|---|---|
| MLLM-NPU [50] | INT4 | FP16/32 | / | / | INT8 | / | INT | ✗ | Depend on activation | High |
| Qualcomm-AI [51] | INT4/8 | W4A16 | / | FP16 | INT4/8 | / | INT | ✓ | Decrease | High |
| MLC [34] | / | W4A16 | / | W4A16 | / | / | / | ✓ | ✓ | Low |
| Llama.cpp [52] | INT4/8 | W4A16 | / | W4A16 | / | / | / | ✓ | ✓ | Low |
| Onnxruntime [53] | / | FP16/32 | / | / | INT8/16 | / | INT | ✓ | Decrease | Medium |
| MNN [35] | INT8 | W4A16 | / | W4A16 | / | / | / | ✓ | ✓ | Medium |
| Ours | INT8 | W4A16 | INT8 | W4A16 | INT4/8 | W4A16 | FLOAT | ✓ | ✓ | High |

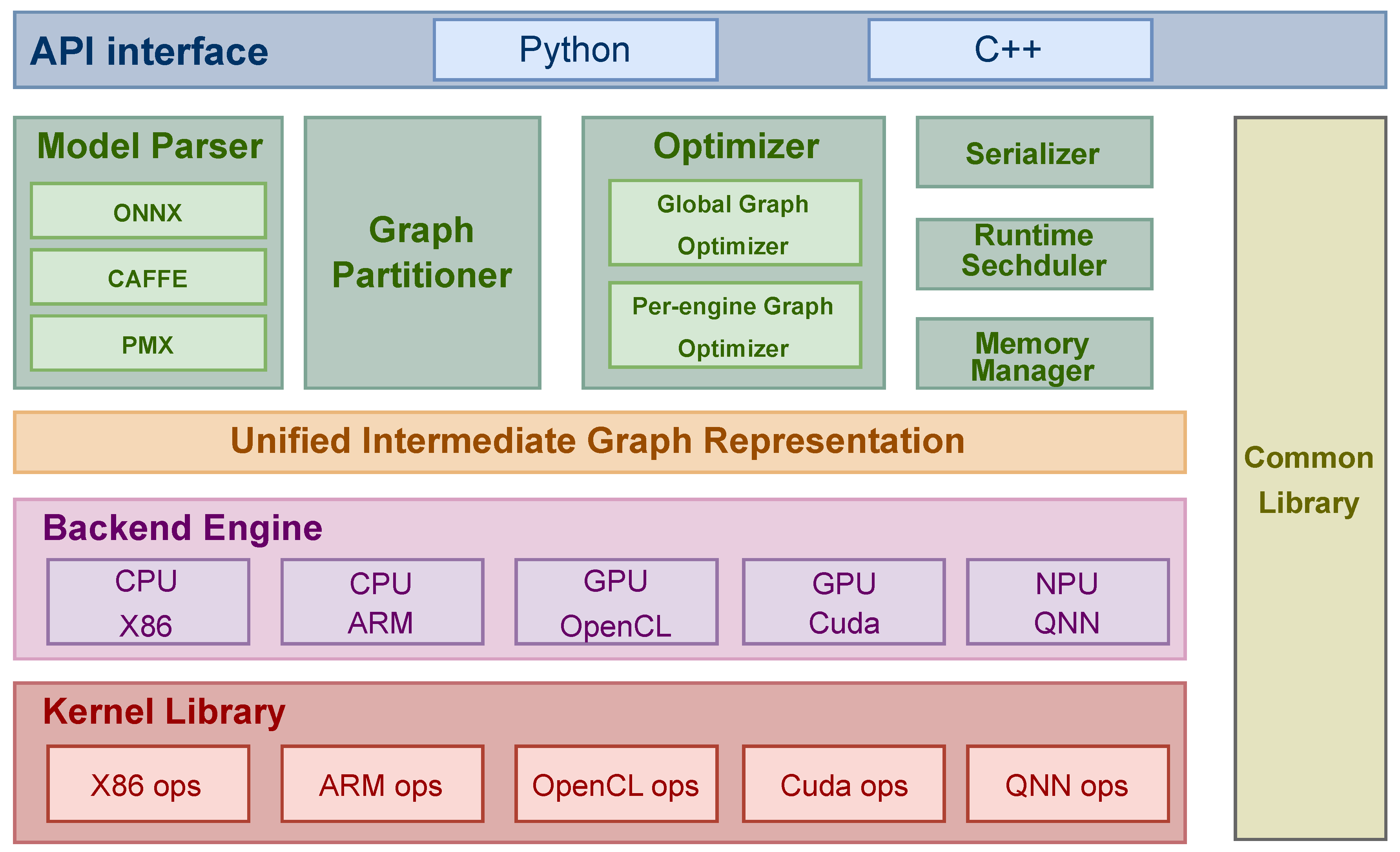

2.3. Mobile-Side Inference Engine

3. Performance Characteristic

3.1. GPU Characteristics

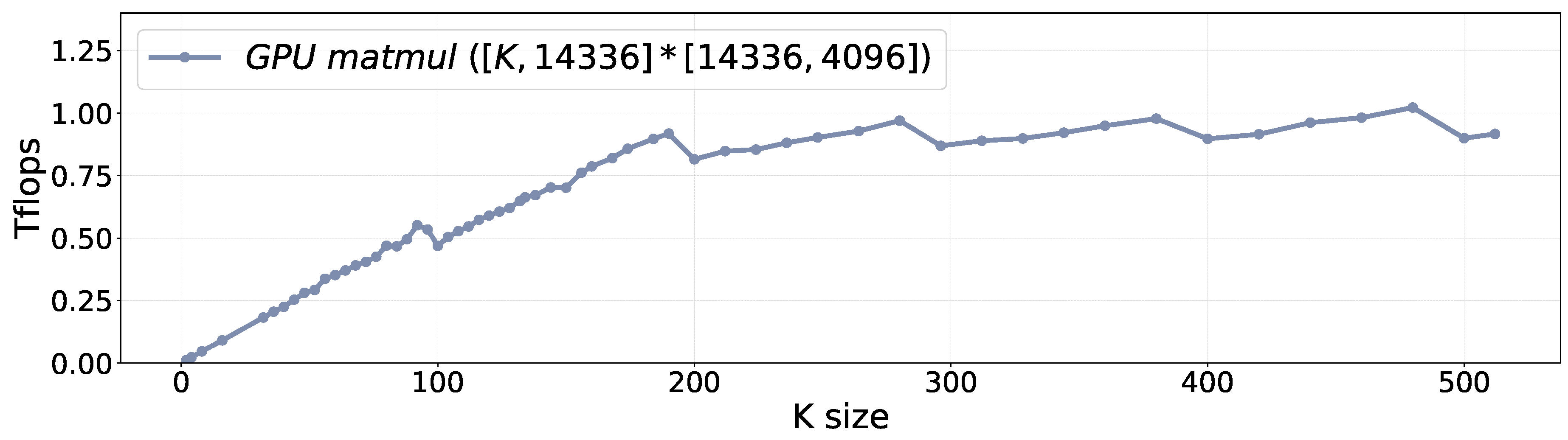

- Characteristic ❶: Linear Performance. Figure 2 illustrates the performance of mobile GPUs with varying tensor sizes. When the tensor is small, GPU computation is memory-bound, and the achieved FLOPS increases linearly with the tensor size. Once the size surpasses a certain threshold, GPU computation becomes compute-bound and the achieved FLOPS remains stable.

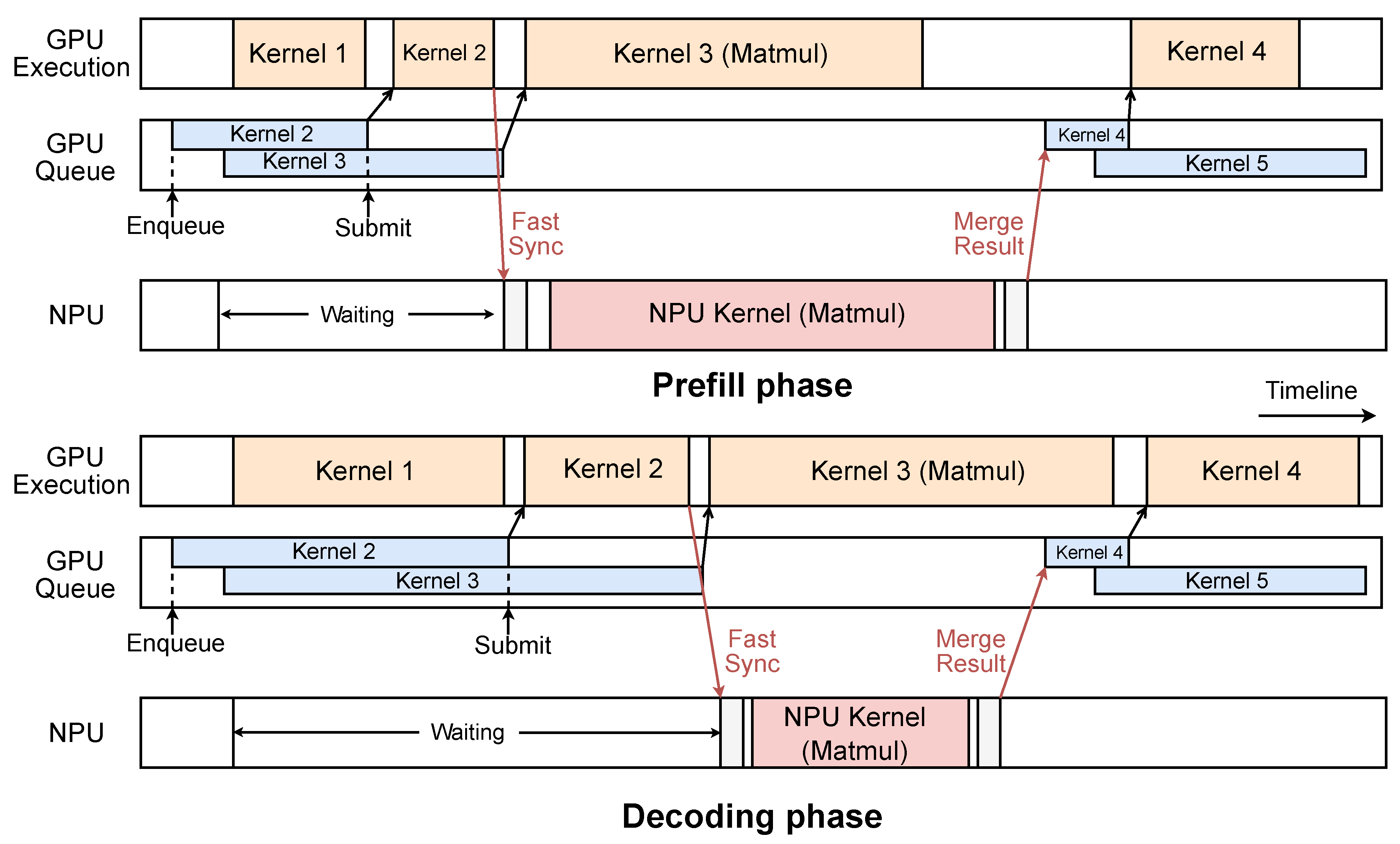

- Characteristic ❷: High-cost Synchronization. There are two primary types of synchronization overheads associated with mobile GPUs. The first type arises from the data copy. Since existing GPU frameworks still maintain a separate memory space for mobile GPUs, developers must utilize APIs such as ‘clEnqueueWriteBuffer’ to transfer data from the CPU-side buffer to GPU memory. Unfortunately, this transfer process incurs a fixed latency, approximately 400 microseconds on our platform, regardless of data size. The second type of overhead is related to kernel submission. As GPUs adopt the asynchronous programming model, subsequent kernels can be queued while the current one is executing, making the submission overhead negligible (about 10 to 20 microseconds). However, after synchronization, the GPU queue becomes empty, which causes an additional latency of 50 to 100 microseconds due to the overhead of kernel queueing and submission.
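The two GPU characteristics above can be illustrated with a rough roofline-style sketch. It is not the authors' measurement code. The peak rate of 2.8 TFLOPS is taken from the Adreno 750 row of the SoC table, the 43 GB/s bandwidth is an assumed value inside the 40 to 45 GB/s range reported for a single processor, and the function names are hypothetical.

```python
COPY_LATENCY_US = 400     # fixed buffer-copy latency quoted in the text
RESUBMIT_LATENCY_US = 75  # assumed midpoint of the 50 to 100 us quoted in the text


def matmul_time_us(m, k, n, peak_tflops, mem_bw_gbps, bytes_per_elem=2):
    """Estimate the time of an [m, k] x [k, n] FP16 matmul as the slower of
    pure compute time and pure memory-traffic time (a roofline assumption)."""
    flops = 2 * m * k * n
    traffic = bytes_per_elem * (m * k + k * n + m * n)
    compute_us = flops / (peak_tflops * 1e12) * 1e6
    memory_us = traffic / (mem_bw_gbps * 1e9) * 1e6
    return max(compute_us, memory_us)


def achieved_tflops(m, k, n, peak_tflops=2.8, mem_bw_gbps=43.0):
    """Throughput actually reached under the model above."""
    flops = 2 * m * k * n
    # flops per microsecond divided by 1e6 gives TFLOPS
    return flops / matmul_time_us(m, k, n, peak_tflops, mem_bw_gbps) / 1e6


def sync_overhead_us(num_copies, num_syncs):
    """Extra latency from explicit copies and from refilling an empty queue."""
    return num_copies * COPY_LATENCY_US + num_syncs * RESUBMIT_LATENCY_US


if __name__ == "__main__":
    for m in (1, 2, 16, 64, 256, 1024):
        print(m, round(achieved_tflops(m, 4096, 4096), 3))
    print(sync_overhead_us(num_copies=1, num_syncs=2))
```

Under these assumptions the achieved throughput grows roughly in proportion to the sequence dimension while the kernel is memory-bound and then flattens at the peak rate, which is the shape described for Characteristic ❶.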

3.2. NPU Characteristics

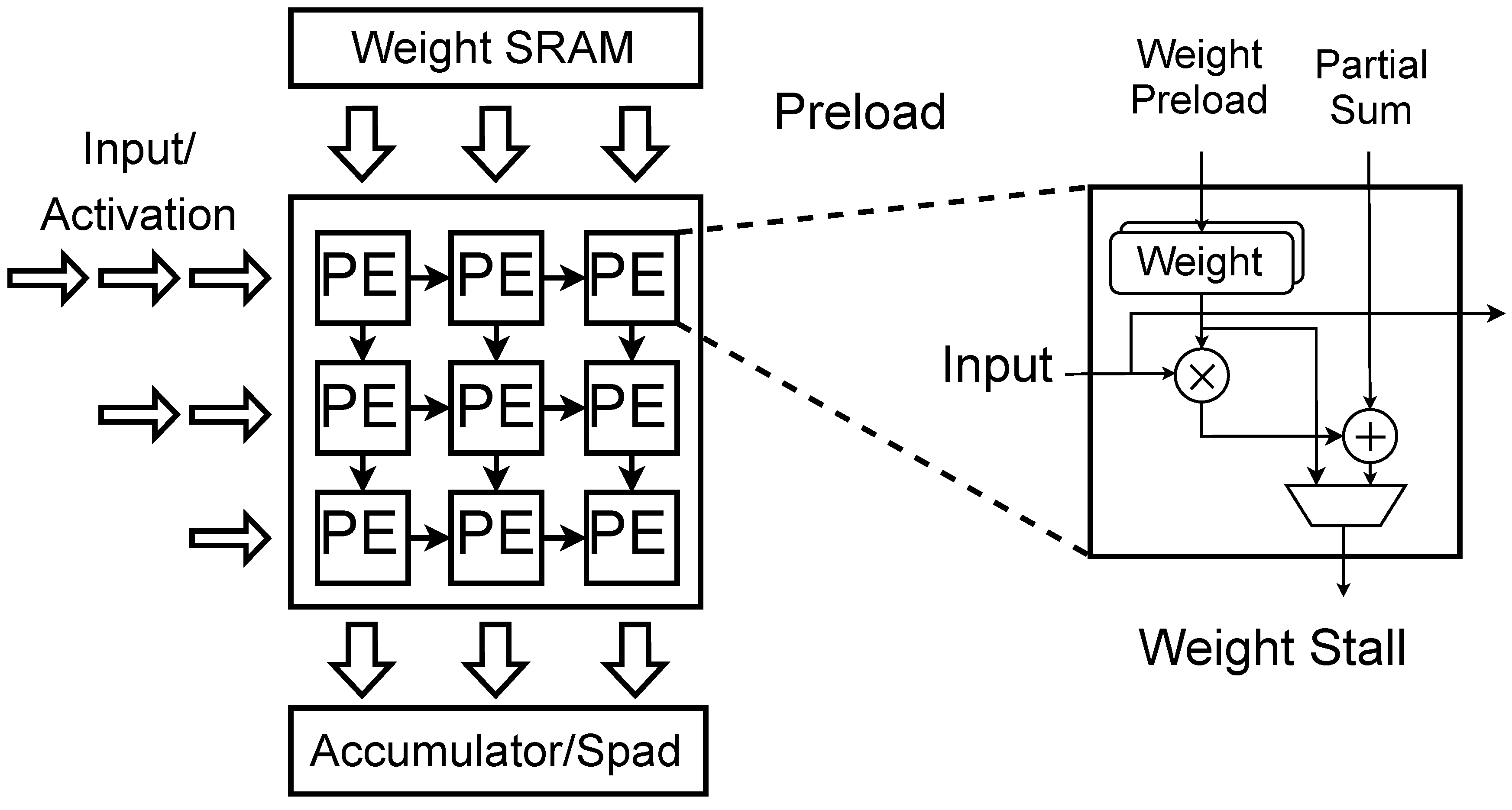

- Characteristic ❶: Stage Performance. Due to the fixed size of the hardware computing array (e.g., systolic array) within NPUs, the dimensions of the tensor used for the Matmul operator may not align with the size of the hardware computing units, which can lead to inefficient use of computational resources. As shown in Figure 4, this misalignment results in a phenomenon referred to as stage performance across different tensor sizes. For instance, considering an NPU with a matrix computation unit utilizing systolic arrays, any computing tensor with dimensions smaller than 32 will exhibit the same computational latency, leading to significant performance degradation for certain tensor shapes. To fully utilize the NPU’s computational resources, the compiler partitions tensors into tiles that align with the hardware configuration of the matrix computation unit. When tensor dimensions are not divisible by the size of the matrix computation unit, the NPU compiler must introduce internal padding to align with the required computation size. This alignment results in a stage performance effect during NPU calculations.

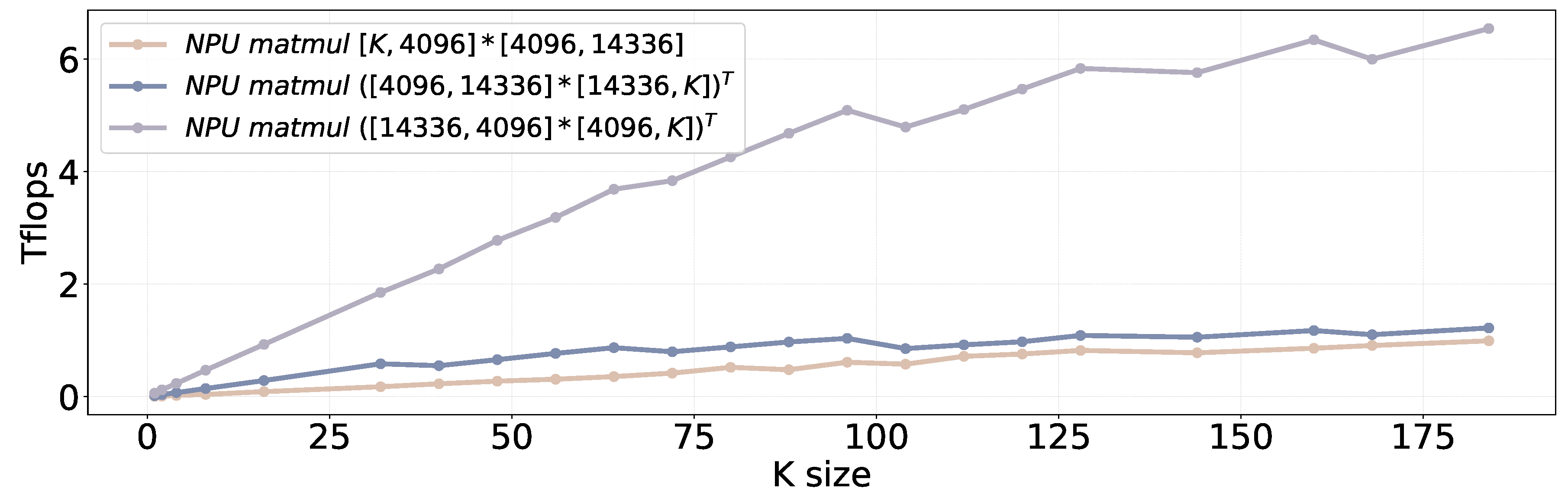

- Characteristic ❷: Order-sensitive Performance. In addition to stage performance, NPUs also exhibit order-sensitive computation behavior. Consider two tensors with dimensions $[M, N]$ and $[N, K]$, where $M > K$. A conventional matrix multiplication (MatMul) operation requires $2MNK$ operations. If we reverse the order of these tensors, i.e., $[K, N] \times [N, M]$, the total number of computation operations remains unchanged. However, this can lead to significant performance degradation for the NPU, a phenomenon we refer to as order-sensitive performance. Figure 5 presents a specific example in which one ordering achieves a 6× performance improvement over the reversed ordering.

- Characteristic ❸: Shape-sensitive Performance. In addition to order-sensitive performance, NPUs also exhibit a shape-sensitive performance characteristic. Even when the input tensor is larger than the weight tensor, the NPU’s efficiency is influenced by the ratio between row and column sizes. More specifically, when the row size of the input tensor exceeds the column size, the NPU performs better (compare the blue line with the purple line in Figure 5). This is primarily because the column size of the input tensor equals the row size of the weight tensor. A larger input column size therefore implies a larger weight tensor, undermining the advantages of the weight-stall computation paradigm.
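The stage performance effect can be sketched as a padding calculation. The tile size of 32 comes from the example in the text. Padding every dimension to a multiple of the tile, and the helper names `padded` and `npu_utilization`, are simplifying assumptions for illustration rather than a description of any particular NPU compiler.

```python
import math

TILE = 32  # tile edge from the text's example; real hardware may differ


def padded(dim, tile=TILE):
    """Round a dimension up to the next multiple of the tile size."""
    return math.ceil(dim / tile) * tile


def npu_utilization(m, n, k, tile=TILE):
    """Fraction of executed multiply-accumulates that are useful work,
    assuming (hypothetically) that all three dimensions are padded."""
    useful = m * n * k
    executed = padded(m, tile) * padded(n, tile) * padded(k, tile)
    return useful / executed


if __name__ == "__main__":
    for m in (1, 16, 32, 33, 64):
        print(m, padded(m), npu_utilization(m, 4096, 4096))
```

Under this model every sequence length from 1 to 32 is executed as 32 rows, so latency stays flat within a stage and jumps when the length crosses a multiple of the tile size.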

3.3. SoC Memory Bandwidth

- Characteristic ❶: Underutilized Memory Bandwidth with Single Processor. Although mobile SoCs employ a unified memory address space for multiple heterogeneous processors, we have observed that no single processor can fully utilize the total memory bandwidth of the SoC under decoding workloads. As shown in Figure 6, on the Qualcomm Snapdragon 8 Gen 3 platform, the maximum available SoC memory bandwidth is approximately 68 GB/s (the black dotted line in the figure). However, a single processor (e.g., CPU, GPU or NPU) can achieve only 40 to 45 GB/s under decoding workloads. When NPU and GPU tasks are executed concurrently, the combined memory bandwidth utilization increases to approximately 60 GB/s, which is close to the theoretical bandwidth limit. Therefore, NPU-GPU parallelism presents a new opportunity to accelerate the decoding phase of LLMs, given that the token generation rate is linearly correlated with the available memory bandwidth.
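The linear relation between bandwidth and decoding speed can be made concrete with a back-of-the-envelope bound. The sketch assumes that each generated token reads every weight exactly once and ignores KV-cache and activation traffic. The 8e9 parameter count is a hypothetical stand-in for an 8B model with 4-bit weights, and `decode_tokens_per_s` is an illustrative name.

```python
def decode_tokens_per_s(bandwidth_gbps, n_params, bits_per_weight=4):
    """Upper bound on decoding rate when weight reads dominate memory traffic."""
    bytes_per_token = n_params * bits_per_weight / 8
    return bandwidth_gbps * 1e9 / bytes_per_token


if __name__ == "__main__":
    n_params = 8e9  # assumed model size, for illustration only
    for label, bw in (("single processor", 40), ("GPU + NPU", 60), ("SoC peak", 68)):
        print(label, decode_tokens_per_s(bw, n_params))
```

Because the bound is proportional to bandwidth, moving from about 40 GB/s to about 60 GB/s raises the ceiling by the same factor of 1.5, independent of the assumed model size.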

4. Design

4.1. Tensor Partition Strategies

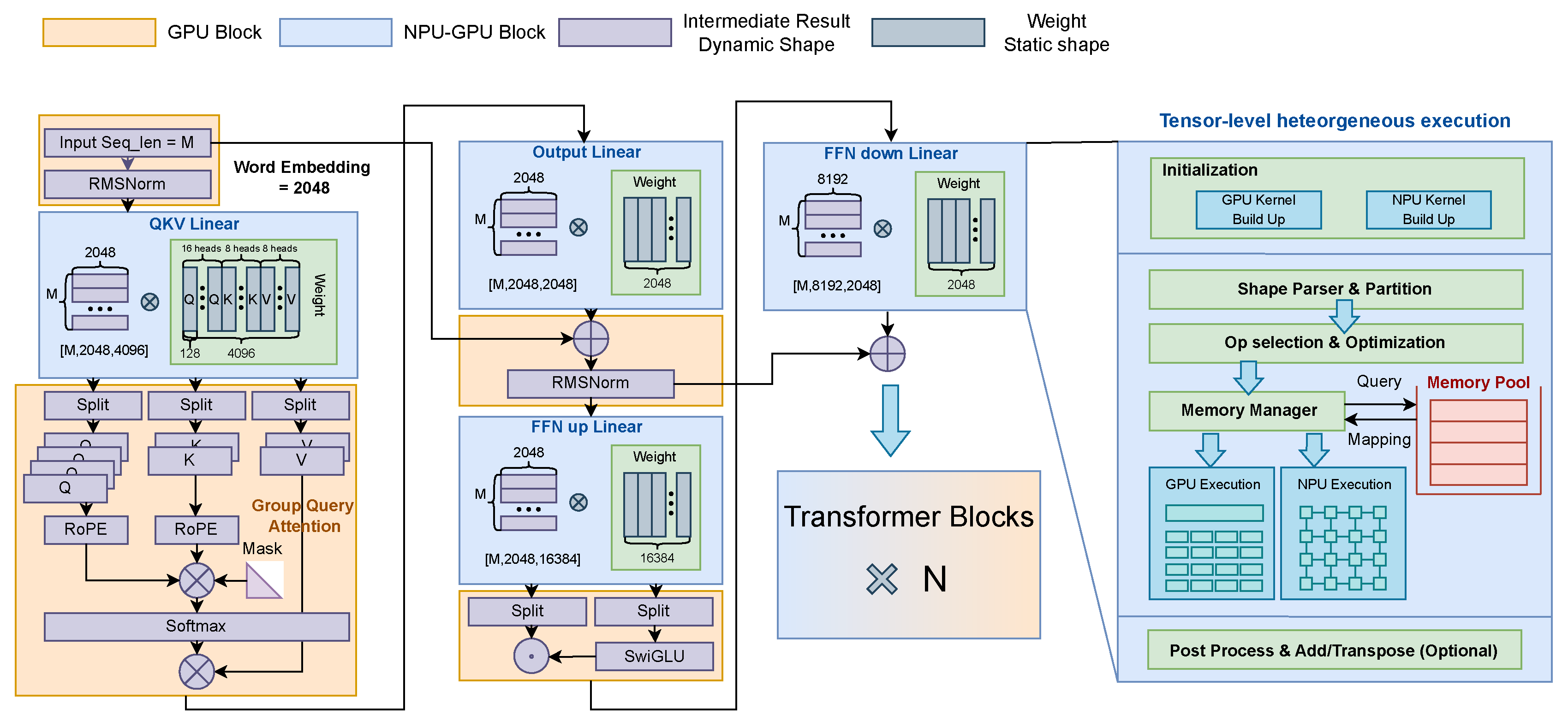

4.1.1. Tensor Partition During the Prefill Phase

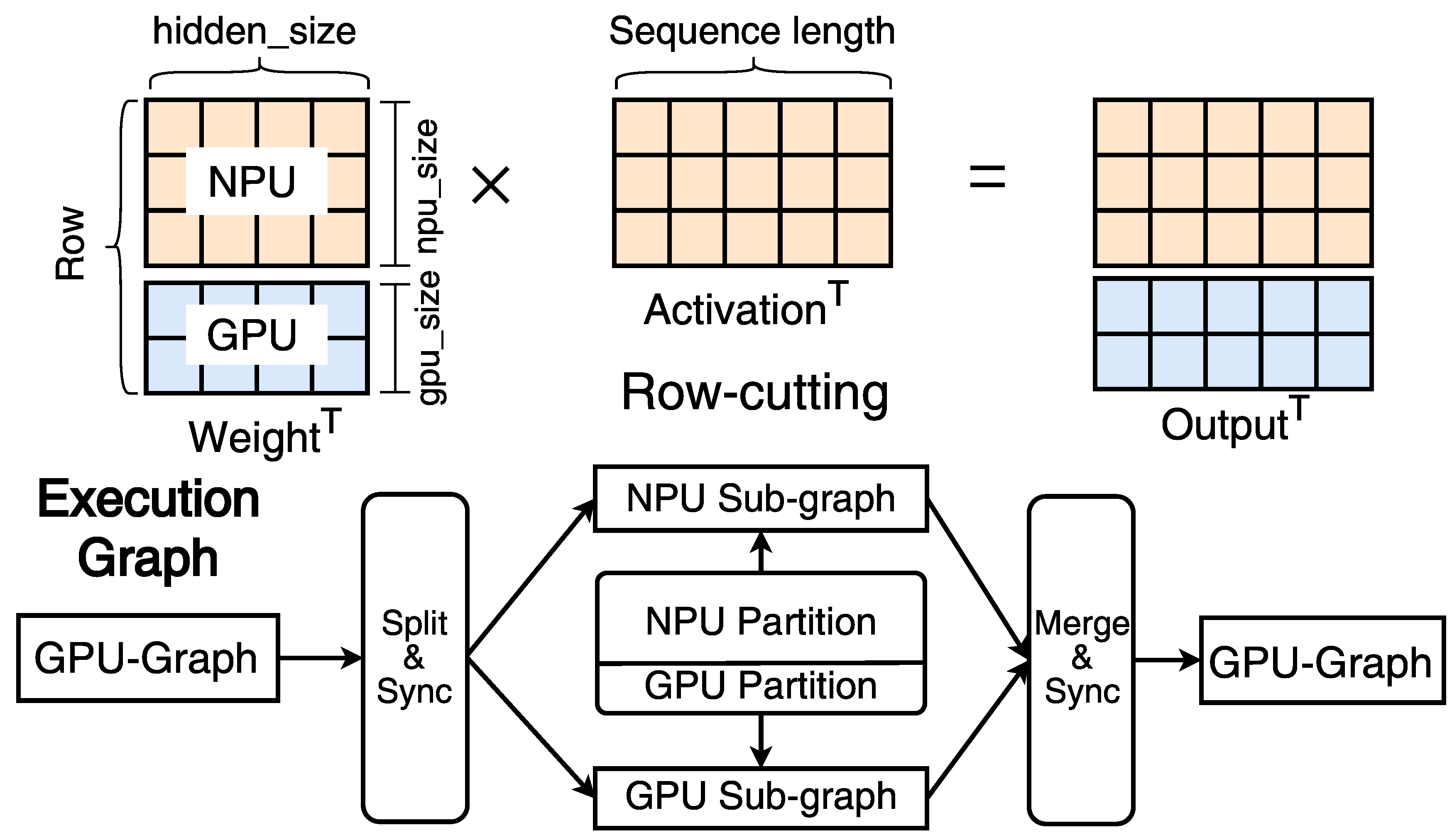

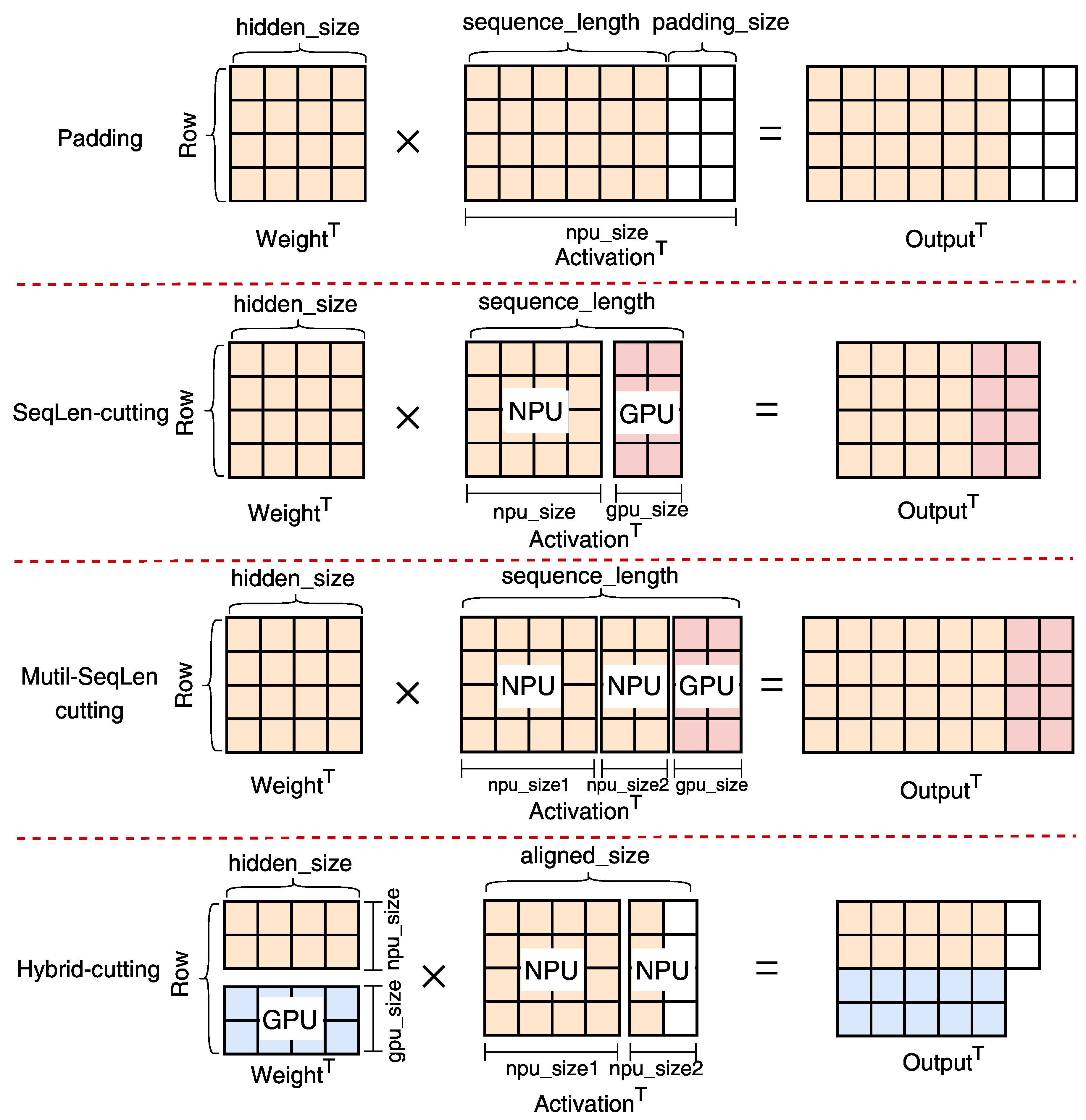

- Row-cutting. In the prefill phase, although the NPU can outperform the GPU by an order of magnitude in ideal scenarios, its performance is significantly influenced by the shape of the input and weight tensors. First, when the sequence length is short, NPU cannot exploit all available computational resources due to NPU-❶: stage performance, resulting in a similar performance compared to the GPU. Second, due to the dimensionality reduction matrix inherent in the FFN-down, the column size of this matrix is larger than the row size (after transposition). This configuration is suboptimal for NPU execution even with large sequence lengths, owing to NPU-❸: shape-sensitive performance. In such scenarios, the NPU exhibits only 0.5× to 1.5× performance improvement over the GPU, due to the NPU’s exceptionally low computational efficiency on this tensor shape. The Matmul on this tensor accounts for nearly half of the total prefill execution time.

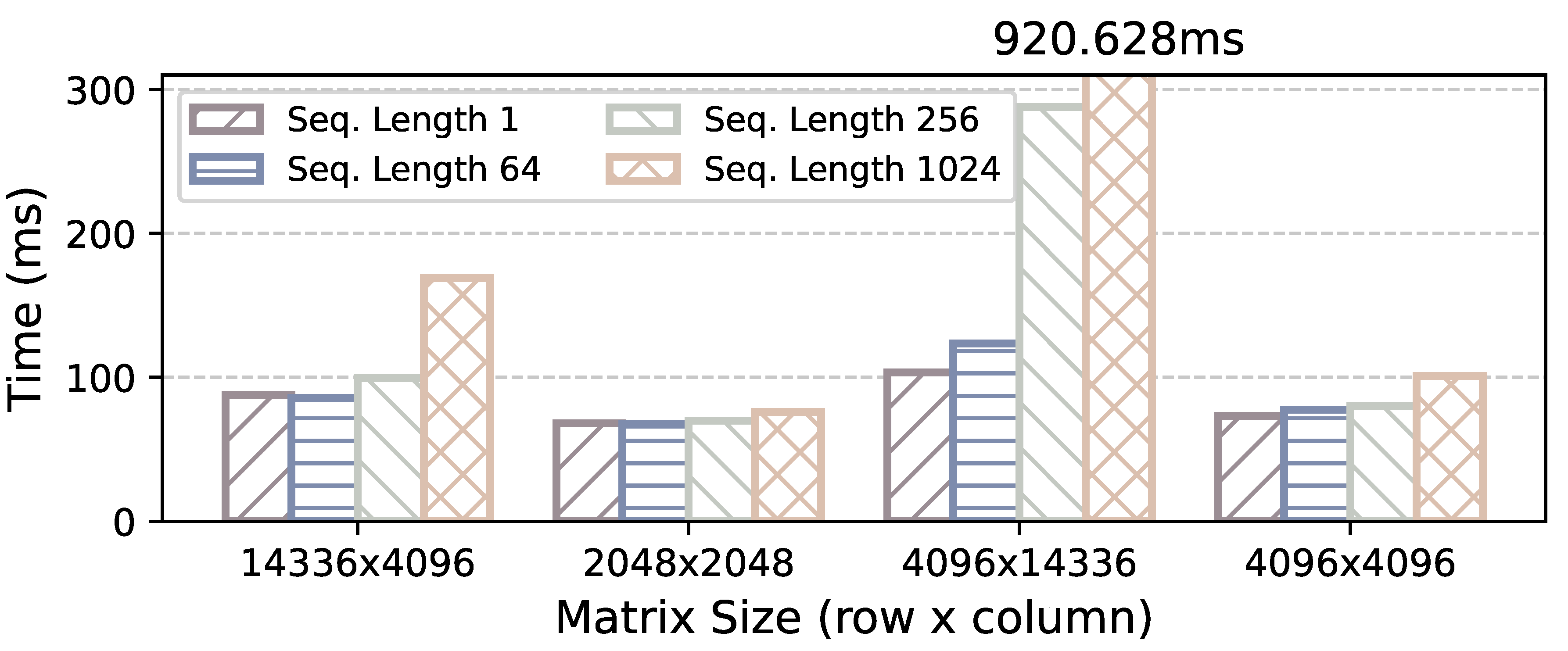

- Sequence-length cutting. In addition to NPU’s fluctuating performance, the mobile-side NPUs present another constraint: they only support static graph execution. The shape and size of tensors at runtime need to be ascertained during the kernel initialization phase. This limitation stems from the dataflow graph compilation [61,62,63], a method widely adopted by current mobile NPUs [33,64]. Furthermore, as shown in Figure 9, the cost of kernel optimization for the NPU is highly dependent on tensor size, as larger tensors expand the search space for optimization [65,66,67]. In contrast, the GPU framework provides a set of kernel implementations, each of which is adaptable to a variety of tensor shapes. This facilitates the dynamic-shape kernel execution at runtime.

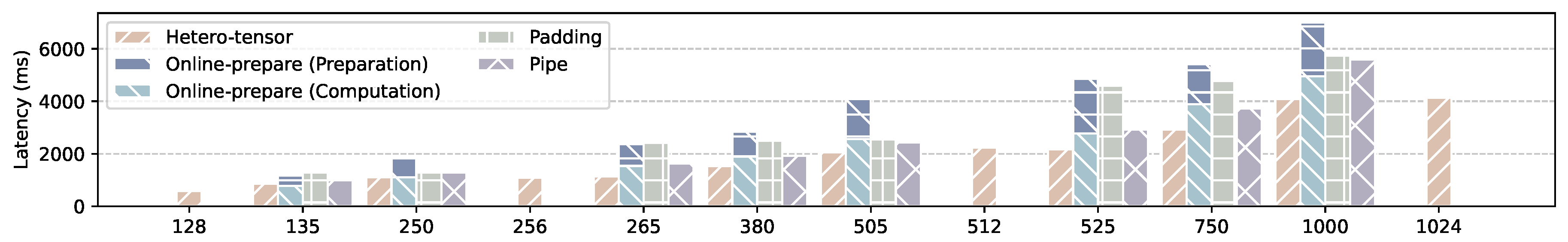

- Multi-sequence-length cutting and Hybrid-cutting. As the GPU’s performance is generally weaker than the NPU, its computations will become a bottleneck when the sequence length surpasses a certain threshold. To mitigate this, we further partition the input tensor along the sequence length dimension into multiple sub-tensors, each with predefined shapes, and one additional sub-tensor with an arbitrary shape (Multi-sequence-length cutting). All sub-tensors with predefined shapes are executed sequentially on the NPU. For instance, if the input tensor’s sequence length is 600, it can be divided into sub-tensors with sizes of 512, 32 and 56. 512 and 32 are the pre-defined tensor shapes, which can be executed sequentially on the NPU, while the sub-tensor with a dynamic size of 56 is offloaded to the GPU. In addition to multi-sequence-length cutting, HeteroLLM can also employ a hybrid approach, which combines row-cutting and sequence-length cutting (hybrid-cutting). In this configuration, HeteroLLM continues to use padding for NPU computation while offloading a portion of the computational load to the GPU backend based on the row dimension. Through these elaborate tensor partition approaches, HeteroLLM can overlap the execution time between the GPU and NPU, and further select the optimal partition strategies according to the different sequence lengths.
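Multi-sequence-length cutting can be sketched as a small decomposition routine. This version takes each predefined NPU shape at most once, largest first, and sends the remainder to the GPU. That selection rule and the name `split_sequence` are assumptions chosen to reproduce the 600-token example in the text; in HeteroLLM the actual choice is made by the partition solver.

```python
def split_sequence(seq_len, npu_shapes):
    """Split a sequence into NPU chunks with predefined static shapes plus
    one dynamically shaped remainder for the GPU.

    Each predefined shape is used at most once (an illustrative assumption).
    Returns (npu_chunks, gpu_remainder).
    """
    npu_chunks = []
    remaining = seq_len
    for shape in sorted(npu_shapes, reverse=True):
        if shape <= remaining:
            npu_chunks.append(shape)
            remaining -= shape
    return npu_chunks, remaining


if __name__ == "__main__":
    print(split_sequence(600, [512, 32]))  # ([512, 32], 56)
```

A remainder of zero means the whole input fits the static NPU graphs and no GPU work is needed for that layer.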

4.1.2. Tensor Partition During the Decoding Phase

4.2. Fast Synchronization

4.3. Putting It All Together

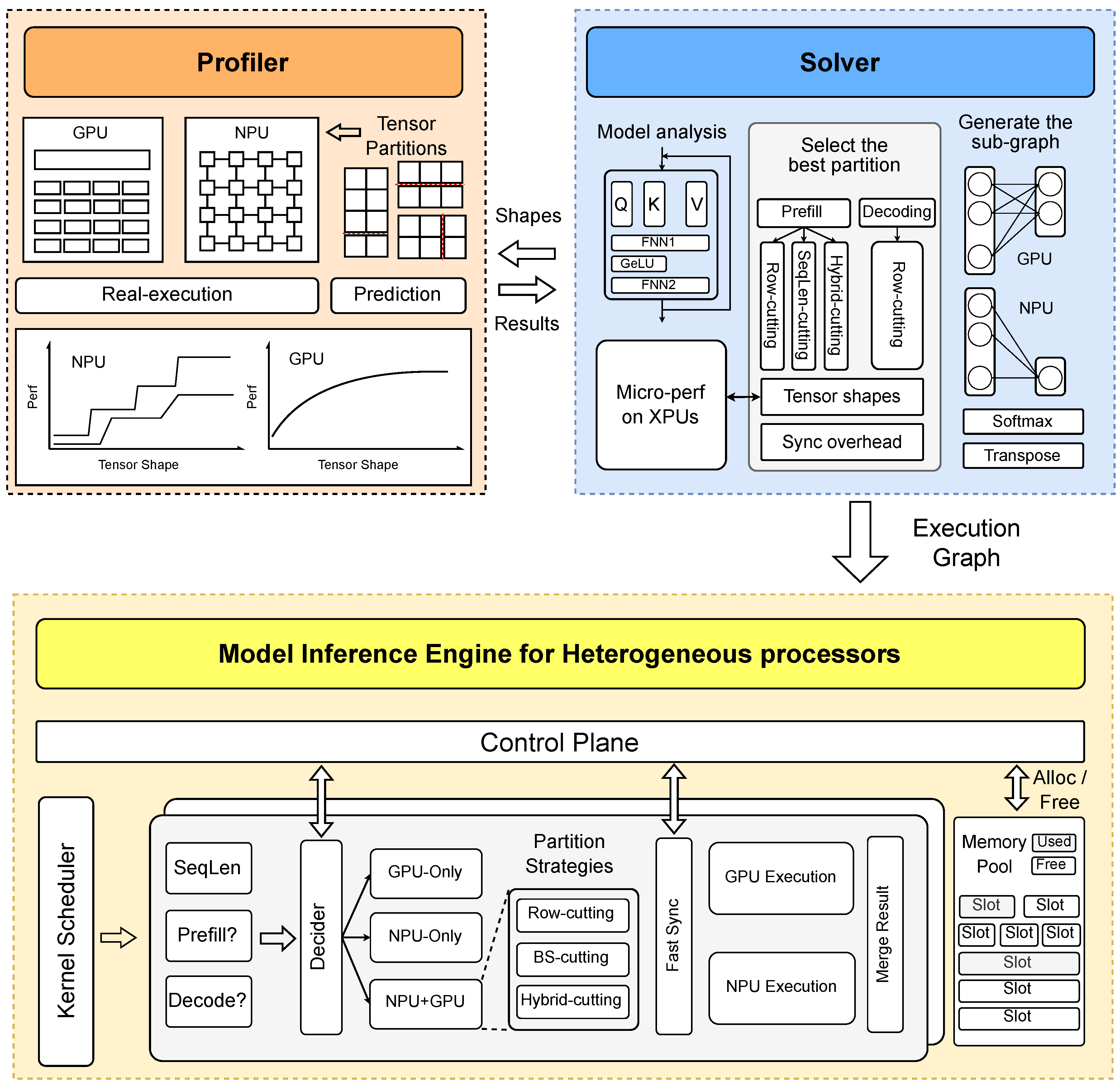

- Performance Profiler. To determine the optimal partition solution, the solver works in conjunction with a performance profiler tailored for heterogeneous processors. Our profiler operates in two modes: real-execution and prediction. In the real-execution mode, the profiler executes the target operator with various tensor shapes on actual hardware, gathering precise performance metrics for both GPUs and NPUs. Although this mode is time-consuming, it can be conducted offline. Moreover, the NPU’s stage performance characteristic facilitates effective pruning of the tensor partition search space: row partitions must be aligned to 256 and sequence length partitions to 32, which reduces the number of candidate partitions. In addition to the real-execution mode, we provide a prediction mode. Due to the inherent fluctuation in hardware performance, minor inaccuracies in performance results across different backends are tolerable for our solver. Using traditional machine learning techniques, such as decision tree regression, we can predict NPU performance across different tensor shapes. Conversely, given that GPU performance is more stable and less dependent on tensor shapes, we can easily estimate GPU execution time in compute-intensive scenarios using a fixed TFLOPS rate.

- Tensor Partition Solver. After obtaining the hardware performance results across various tensor shapes, the solver must determine the optimal partition strategy, taking into account GPU-only, NPU-only and GPU-NPU parallelism. The objective function to be optimized by the solver is illustrated in the following equation.

- Inference Engine. During execution, a control plane decider determines whether a kernel is executed on the NPU backend, the GPU backend, or using NPU-GPU parallelism, based on the inference phases and sequence length. When two adjacent kernels are allocated to different backends, our HeteroLLM engine employs a fast synchronization mechanism to ensure data consistency. Upon completion of kernel execution on both backends, it merges intermediate results as needed. Besides, the HeteroLLM engine also manages a memory pool for host-device shared buffers, which are allocated or reclaimed as input and output tensors for each GPU/NPU kernel, bypassing the organization of the device driver.

5. Evaluation

5.1. Experimental Setup

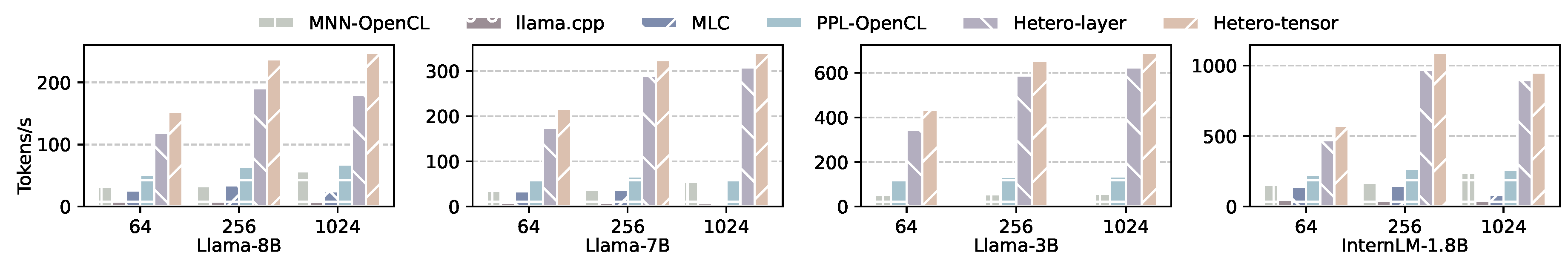

5.2. Prefill Performance

5.2.1. Aligned Sequence Length

5.2.2. Misaligned Sequence Length

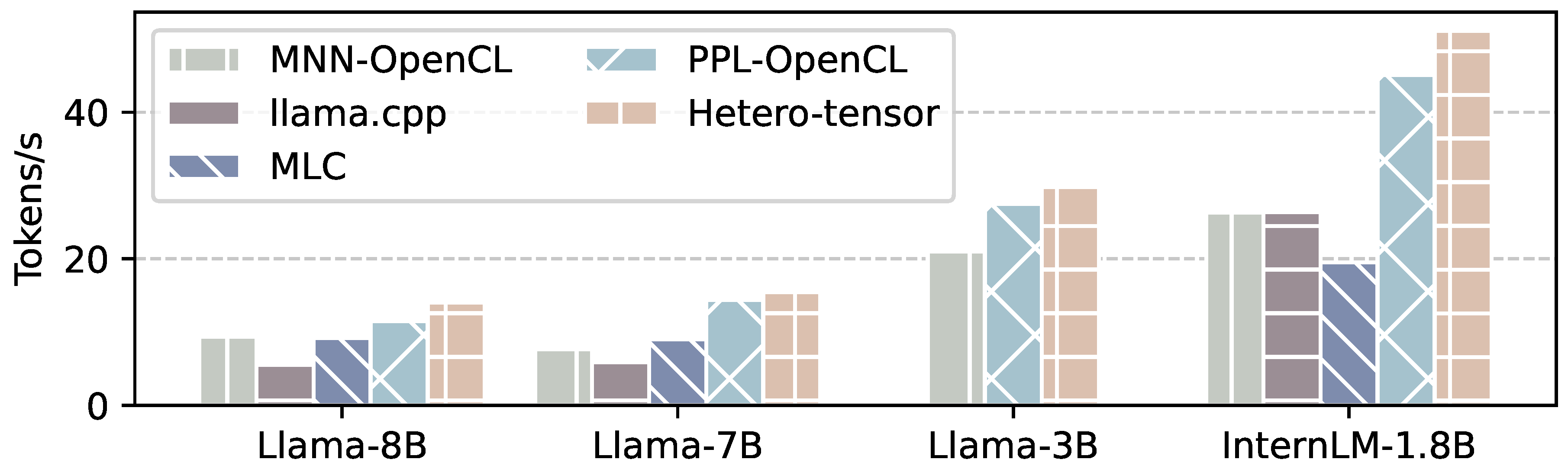

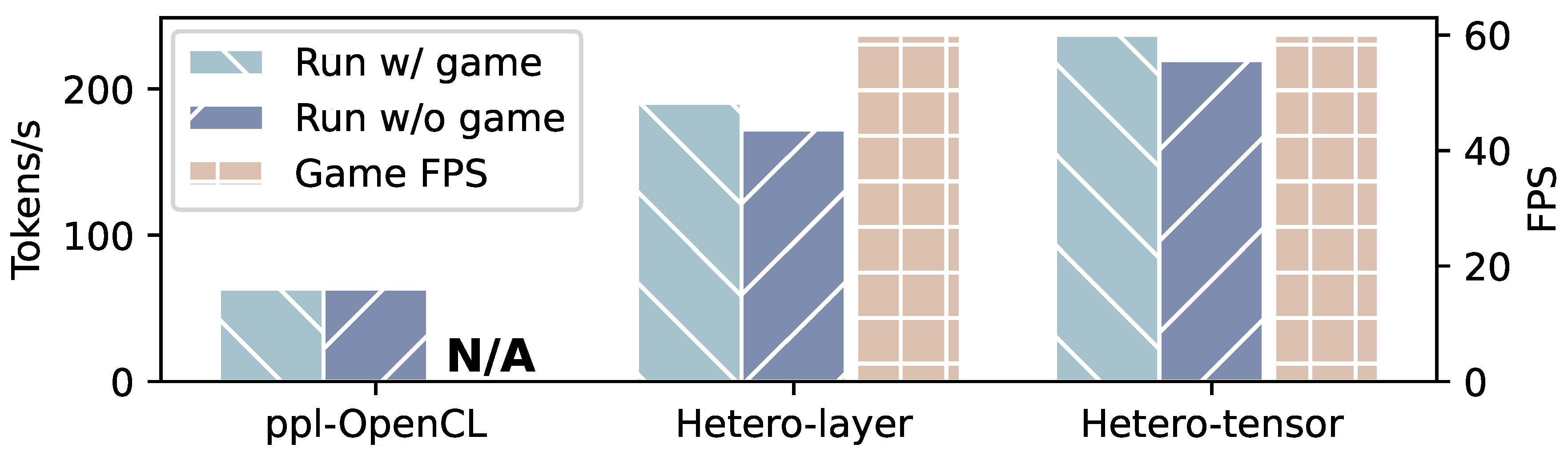

5.3. Decoding Performance

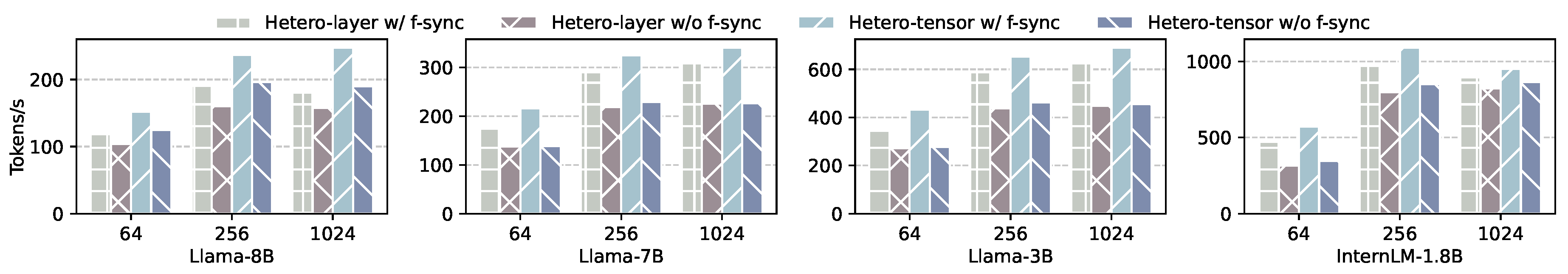

5.4. Effect of Fast Synchronization

- Prefill Performance: Figure 15 presents the prefill performance of Hetero-layer and Hetero-tensor with and without fast synchronization on various models, under sequence lengths of 64, 256 and 1024. On Llama-8B, fast synchronization improves the performance of Hetero-layer and Hetero-tensor by 15.8% and 24.3% on average, respectively. Under a sequence length of 256, the prefill speed of Hetero-tensor increases from 196.44 tokens/s to 236.92 tokens/s. On Llama-7B and InternLM-1.8B, fast synchronization yields improvements of 49.0% and 34.5% with Hetero-tensor and 31.7% and 26.7% with Hetero-layer, respectively. Hetero-tensor is more susceptible to the synchronization cost, which may disrupt the computational balance between the GPU and NPU.

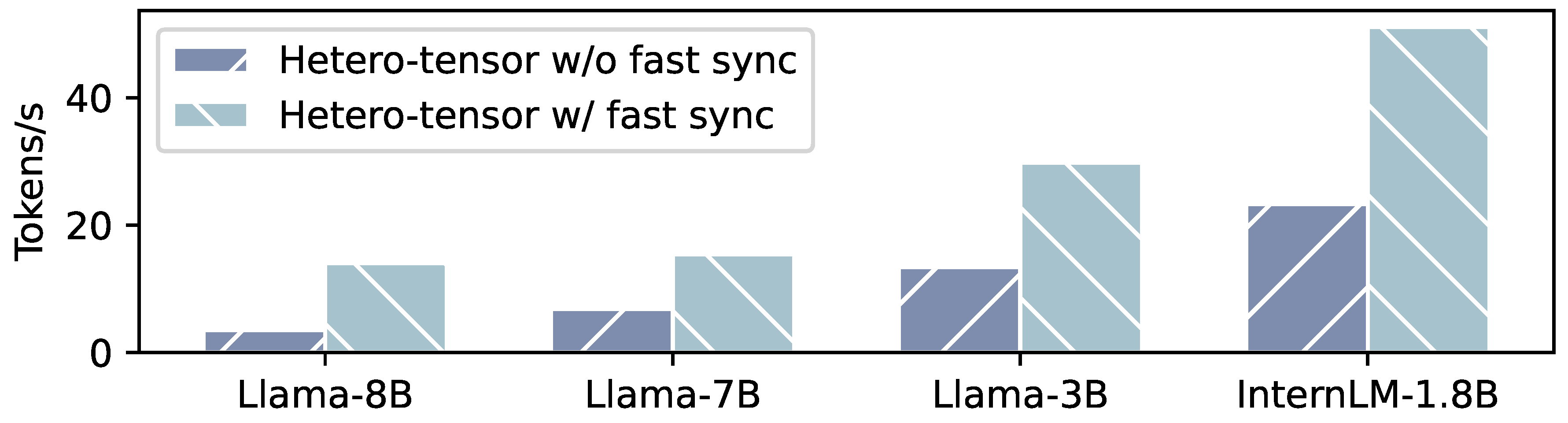

- Decoding Performance: Figure 17 presents the decoding performance of Hetero-tensor with and without fast synchronization. On Llama-8B, fast synchronization speeds up the decoding rate of Hetero-tensor by 4.01×. On other models, we observe a comparable speedup of 2.2×. The speedup in the decoding phase is much higher than that in the prefill phase, because the execution time of each kernel in the decoding phase is much shorter. Thus, the overhead of synchronization and GPU kernel submission is non-negligible.
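The reason short kernels benefit more can be shown with a simple ratio. The sketch assumes an idealized case in which fast synchronization removes the per-kernel synchronization cost entirely. The 400 microsecond cost echoes the fixed copy latency reported earlier, while the kernel durations are made-up illustrative values rather than measurements.

```python
def speedup_from_removing_sync(kernel_us, sync_us):
    """Ratio of (kernel + sync) time to kernel-only time for one kernel."""
    return (kernel_us + sync_us) / kernel_us


if __name__ == "__main__":
    sync_us = 400.0  # per-kernel synchronization cost (assumed)
    print(speedup_from_removing_sync(100.0, sync_us))   # short decoding-like kernel
    print(speedup_from_removing_sync(2000.0, sync_us))  # long prefill-like kernel
```

The same fixed cost is a large multiple of a short decoding kernel but only a fraction of a long prefill kernel, so eliminating it helps decoding far more.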

5.5. GPU Performance Interference

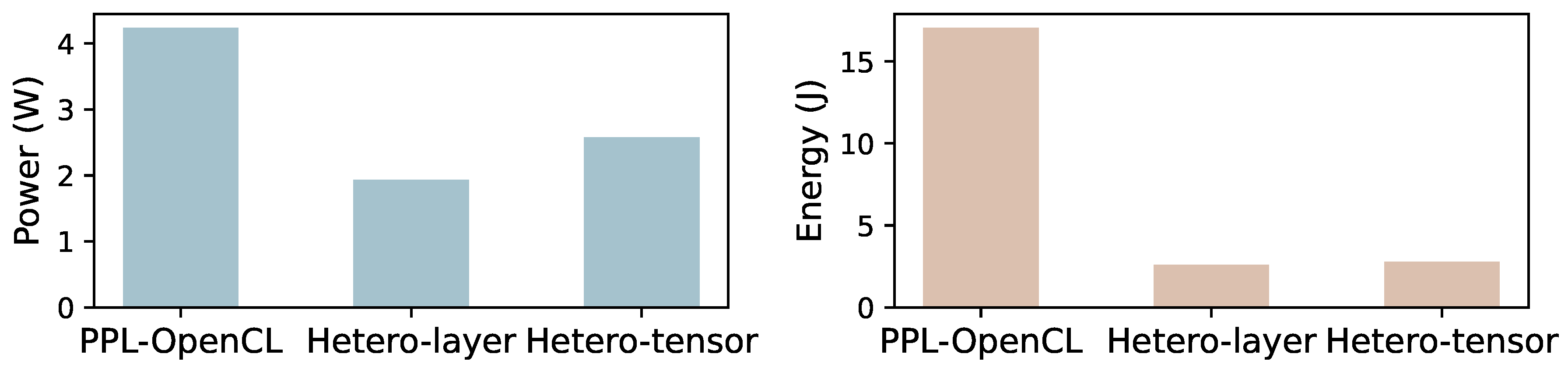

5.6. Energy Consumption

6. Discussion

- Model Quantization. HeteroLLM employs W4A16 (weight-only) quantization, striking a balance between memory footprint and computational accuracy. W4A16 quantization is the most widely used approach in real-world deployments. W4A16 quantizes model weights during storage and dequantizes them to FLOAT for computation. In contrast, other approaches [26,29,51] require quantization of both activations and weights from FLOAT to INT, which may compromise inference accuracy.

- Shared Memory Between GPUs and NPUs. Current mobile SoCs (e.g., Apple M/A series, Qualcomm Snapdragon series) support a unified address space for CPU, GPU and NPU. In our implementation, we have successfully established shared memory between the CPU and GPU using OpenCL, and between the CPU and NPU using QNN APIs. Additionally, by employing the ‘CL_MEM_USE_HOST_PTR’ flag, we can also map the NPU’s shared memory to the GPU, thereby establishing shared memory between the GPU and NPU. We recommend that mobile SoC vendors provide native APIs to allocate unified memory for all heterogeneous processors.
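The W4A16 idea from the Model Quantization item, storing weights in 4 bits and dequantizing them to floating point before the matrix product, can be sketched in plain Python. Symmetric per-group scaling to the range [-8, 7] is one common scheme and is assumed here; the text does not specify HeteroLLM's exact quantization format, and all function names are illustrative.

```python
def quantize_w4(weights):
    """Quantize one group of float weights to 4-bit integers in [-8, 7].

    Returns (quantized_values, scale). Symmetric scaling is an assumption.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from 4-bit values and a scale."""
    return [v * scale for v in q]


def matvec_w4a16(q_rows, scales, activations):
    """Weight-only quantized matrix-vector product: weights are dequantized
    to float, and the activations stay in floating point throughout."""
    out = []
    for q, scale in zip(q_rows, scales):
        w = dequantize(q, scale)
        out.append(sum(wi * ai for wi, ai in zip(w, activations)))
    return out


if __name__ == "__main__":
    q, scale = quantize_w4([1.75, -0.5, 0.0, 0.25])
    print(q, scale)
    print(matvec_w4a16([q], [scale], [1.0, 2.0, 3.0, 4.0]))
```

Because only the weights are rounded, the activations never pass through an integer representation, which is the property the discussion contrasts with schemes that quantize both activations and weights.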

7. Conclusions

| 1 | only utilizes NPU’s TOPS during the decoding phase, as NPU currently does not support W4A16 for decoding. |

References

- OpenAI. Introducing ChatGPT. https://openai.com/index/chatgpt/, 2024. Referenced January 2024.

- McIntosh, T.R.; Susnjak, T.; Liu, T.; Watters, P.; Halgamuge, M.N. From google gemini to openai q*(q-star): A survey of reshaping the generative artificial intelligence (ai) research landscape. arXiv preprint arXiv:2312.10868 2023.

- Anthropic. Claude - Right-sized for any task, the Claude family of models offers the best combination of speed and performance. https://www.anthropic.com/, 2024. Referenced December 2024.

- GLM, T.; Zeng, A.; Xu, B.; Wang, B.; Zhang, C.; Yin, D.; Rojas, D.; Feng, G.; Zhao, H.; Lai, H.; et al. ChatGLM: A Family of Large Language Models from GLM-130B to GLM-4 All Tools, 2024, [arXiv:cs.CL/2406.12793].

- Hong, W.; Wang, W.; Lv, Q.; Xu, J.; Yu, W.; Ji, J.; Wang, Y.; Wang, Z.; Dong, Y.; Ding, M.; et al. Cogagent: A visual language model for gui agents. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 14281–14290.

- You, K.; Zhang, H.; Schoop, E.; Weers, F.; Swearngin, A.; Nichols, J.; Yang, Y.; Gan, Z. Ferret-ui: Grounded mobile ui understanding with multimodal llms. In Proceedings of the European Conference on Computer Vision. Springer, 2025, pp. 240–255.

- Wang, J.; Xu, H.; Jia, H.; Zhang, X.; Yan, M.; Shen, W.; Zhang, J.; Huang, F.; Sang, J. Mobile-Agent-v2: Mobile Device Operation Assistant with Effective Navigation via Multi-Agent Collaboration. arXiv preprint arXiv:2406.01014 2024.

- Wang, J.; Xu, H.; Ye, J.; Yan, M.; Shen, W.; Zhang, J.; Huang, F.; Sang, J. Mobile-Agent: Autonomous Multi-Modal Mobile Device Agent with Visual Perception. arXiv preprint arXiv:2401.16158 2024.

- Yang, Z.; Teng, J.; Zheng, W.; Ding, M.; Huang, S.; Xu, J.; Yang, Y.; Hong, W.; Zhang, X.; Feng, G.; et al. CogVideoX: Text-to-Video Diffusion Models with An Expert Transformer. arXiv preprint arXiv:2408.06072 2024.

- Wang, P.; Bai, S.; Tan, S.; Wang, S.; Fan, Z.; Bai, J.; Chen, K.; Liu, X.; Wang, J.; Ge, W.; et al. Qwen2-VL: Enhancing Vision-Language Model’s Perception of the World at Any Resolution. arXiv preprint arXiv:2409.12191 2024.

- Bai, J.; Bai, S.; Yang, S.; Wang, S.; Tan, S.; Wang, P.; Lin, J.; Zhou, C.; Zhou, J. Qwen-VL: A Versatile Vision-Language Model for Understanding, Localization, Text Reading, and Beyond. arXiv preprint arXiv:2308.12966 2023.

- Hong, W.; Ding, M.; Zheng, W.; Liu, X.; Tang, J. CogVideo: Large-scale Pretraining for Text-to-Video Generation via Transformers. arXiv preprint arXiv:2205.15868 2022.

- Zhang, P.; Dong, X.; Zang, Y.; Cao, Y.; Qian, R.; Chen, L.; Guo, Q.; Duan, H.; Wang, B.; Ouyang, L.; et al. Internlm-xcomposer-2.5: A versatile large vision language model supporting long-contextual input and output. arXiv preprint arXiv:2407.03320 2024.

- Xue, Y.; Liu, Y.; Nai, L.; Huang, J. V10: Hardware-Assisted NPU Multi-tenancy for Improved Resource Utilization and Fairness. In Proceedings of the 50th Annual International Symposium on Computer Architecture, New York, NY, USA, 2023; ISCA ’23. [CrossRef]

- Xue, Y.; Liu, Y.; Huang, J. System Virtualization for Neural Processing Units. In Proceedings of the 19th Workshop on Hot Topics in Operating Systems, New York, NY, USA, 2023; HOTOS ’23, pp. 80–86. [CrossRef]

- Kwon, H.; Lai, L.; Pellauer, M.; Krishna, T.; Chen, Y.H.; Chandra, V. Heterogeneous dataflow accelerators for multi-DNN workloads. In Proceedings of the 2021 IEEE International Symposium on High-Performance Computer Architecture (HPCA). IEEE, 2021, pp. 71–83.

- Odema, M.; Chen, L.; Kwon, H.; Al Faruque, M.A. SCAR: Scheduling Multi-Model AI Workloads on Heterogeneous Multi-Chiplet Module Accelerators. In Proceedings of the 2024 57th IEEE/ACM International Symposium on Microarchitecture (MICRO), 2024, pp. 565–579. [CrossRef]

- Jouppi, N.P.; Young, C.; Patil, N.; Patterson, D.; Agrawal, G.; Bajwa, R.; Bates, S.; Bhatia, S.; Boden, N.; Borchers, A.; et al. In-Datacenter Performance Analysis of a Tensor Processing Unit. In Proceedings of the 44th Annual International Symposium on Computer Architecture, New York, NY, USA, 2017; ISCA ’17, pp. 1–12. [CrossRef]

- Hsu, K.C.; Tseng, H.W. Accelerating applications using edge tensor processing units. In Proceedings of the International Conference for High Performance Computing, Networking, Storage and Analysis, New York, NY, USA, 2021; SC ’21. [CrossRef]

- Xue, Y.; Liu, Y.; Nai, L.; Huang, J. Hardware-Assisted Virtualization of Neural Processing Units for Cloud Platforms. arXiv preprint arXiv:2408.04104 2024.

- George, B.; Omer, O.J.; Choudhury, Z.; V, A.; Subramoney, S. A Unified Programmable Edge Matrix Processor for Deep Neural Networks and Matrix Algebra. ACM Trans. Embed. Comput. Syst. 2022, 21. [CrossRef]

- Jiang, Y.; Zhu, Y.; Lan, C.; Yi, B.; Cui, Y.; Guo, C. A unified architecture for accelerating distributed {DNN} training in heterogeneous {GPU/CPU} clusters. In Proceedings of the 14th USENIX Symposium on Operating Systems Design and Implementation (OSDI 20), 2020, pp. 463–479.

- Hsu, K.C.; Tseng, H.W. Simultaneous and Heterogenous Multithreading. In Proceedings of the 56th Annual IEEE/ACM International Symposium on Microarchitecture, 2023, pp. 137–152.

- Hsu, K.C.; Tseng, H.W. SHMT: Exploiting Simultaneous and Heterogeneous Parallelism in Accelerator-Rich Architectures. IEEE Micro 2024.

- Qualcomm. Snapdragon 8Gen3 - Mobile Platform ignites endless possibilities. https://www.qualcomm.com/products/mobile/snapdragon/smartphones, 2023-2024. Referenced December 2024.

- Xu, D.; Zhang, H.; Yang, L.; Liu, R.; Huang, G.; Xu, M.; Liu, X. Empowering 1000 tokens/second on-device llm prefilling with mllm-npu. arXiv preprint arXiv:2407.05858 2024.

- Xu, D.; Xu, M.; Wang, Q.; Wang, S.; Ma, Y.; Huang, K.; Huang, G.; Jin, X.; Liu, X. Mandheling: Mixed-precision on-device dnn training with dsp offloading. In Proceedings of the 28th Annual International Conference on Mobile Computing And Networking, 2022, pp. 214–227.

- Gerogiannis, G.; Aananthakrishnan, S.; Torrellas, J.; Hur, I. HotTiles: Accelerating SpMM with Heterogeneous Accelerator Architectures. In Proceedings of the 2024 IEEE International Symposium on High-Performance Computer Architecture (HPCA), 2024, pp. 1012–1028. [CrossRef]

- Xue, Z.; Song, Y.; Mi, Z.; Chen, L.; Xia, Y.; Chen, H. PowerInfer-2: Fast Large Language Model Inference on a Smartphone. arXiv preprint arXiv:2406.06282 2024.

- Kumar, T.; Ankner, Z.; Spector, B.F.; Bordelon, B.; Muennighoff, N.; Paul, M.; Pehlevan, C.; Ré, C.; Raghunathan, A. Scaling laws for precision. arXiv preprint arXiv:2411.04330 2024.

- Xiao, C.; Cai, J.; Zhao, W.; Zeng, G.; Han, X.; Liu, Z.; Sun, M. Densing Law of LLMs, 2024, [arXiv:cs.AI/2412.04315].

- Sensetime. PPL - High-performance deep-learning inference engine for efficient AI inferencing. https://github.com/OpenPPL/ppl.nn, 2018. Referenced December 2024.

- Qualcomm. QNN. https://www.qualcomm.com/developer/software/qualcomm-ai-engine-direct-sdk, 2024. Referenced December 2024.

- MLC team. MLC-LLM - Universal LLM Deployment Engine with ML Compilation. https://github.com/mlc-ai/mlc-llm, 2023-2024. Referenced December 2024.

- Lv, C.; Niu, C.; Gu, R.; Jiang, X.; Wang, Z.; Liu, B.; Wu, Z.; Yao, Q.; Huang, C.; Huang, P.; et al. Walle: An End-to-End, General-Purpose, and Large-Scale Production System for Device-Cloud Collaborative Machine Learning. In Proceedings of the 16th USENIX Symposium on Operating Systems Design and Implementation (OSDI 22), Carlsbad, CA, 2022; pp. 249–265.

- Nanoreview. Online. https://nanoreview.net/en/soc-list/rating, 2023-2024. Referenced December 2024.

- Liu, Y.; Li, H.; Cheng, Y.; Ray, S.; Huang, Y.; Zhang, Q.; Du, K.; Yao, J.; Lu, S.; Ananthanarayanan, G.; et al. CacheGen: KV Cache Compression and Streaming for Fast Large Language Model Serving. In Proceedings of the ACM SIGCOMM 2024 Conference, New York, NY, USA, 2024; ACM SIGCOMM ’24, pp. 38–56. [CrossRef]

- Lee, W.; Lee, J.; Seo, J.; Sim, J. InfiniGen: Efficient Generative Inference of Large Language Models with Dynamic KV Cache Management. In Proceedings of the 18th USENIX Symposium on Operating Systems Design and Implementation (OSDI 24), Santa Clara, CA, 2024; pp. 155–172.

- Ainslie, J.; Lee-Thorp, J.; de Jong, M.; Zemlyanskiy, Y.; Lebrón, F.; Sanghai, S. Gqa: Training generalized multi-query transformer models from multi-head checkpoints. arXiv preprint arXiv:2305.13245 2023.

- Kwon, W.; Li, Z.; Zhuang, S.; Sheng, Y.; Zheng, L.; Yu, C.H.; Gonzalez, J.; Zhang, H.; Stoica, I. Efficient memory management for large language model serving with pagedattention. In Proceedings of the 29th Symposium on Operating Systems Principles, 2023, pp. 611–626.

- Yu, G.I.; Jeong, J.S.; Kim, G.W.; Kim, S.; Chun, B.G. Orca: A distributed serving system for {Transformer-Based} generative models. In Proceedings of the 16th USENIX Symposium on Operating Systems Design and Implementation (OSDI 22), 2022, pp. 521–538.

- Fu, Y.; Xue, L.; Huang, Y.; Brabete, A.O.; Ustiugov, D.; Patel, Y.; Mai, L. ServerlessLLM: Low-Latency Serverless Inference for Large Language Models. In Proceedings of the 18th USENIX Symposium on Operating Systems Design and Implementation (OSDI 24), Santa Clara, CA, 2024; pp. 135–153.

- Li, Z.; Zheng, L.; Zhong, Y.; Liu, V.; Sheng, Y.; Jin, X.; Huang, Y.; Chen, Z.; Zhang, H.; Gonzalez, J.E.; et al. AlpaServe: Statistical Multiplexing with Model Parallelism for Deep Learning Serving. In Proceedings of the 17th USENIX Symposium on Operating Systems Design and Implementation (OSDI 23), Boston, MA, 2023; pp. 663–679.

- Zhang, C.; Yu, M.; Wang, W.; Yan, F. MArk: Exploiting Cloud Services for Cost-Effective, SLO-Aware Machine Learning Inference Serving. In Proceedings of the 2019 USENIX Annual Technical Conference (USENIX ATC 19), Renton, WA, 2019; pp. 1049–1062.

- Zhuang, D.; Zheng, Z.; Xia, H.; Qiu, X.; Bai, J.; Lin, W.; Song, S.L. MonoNN: Enabling a New Monolithic Optimization Space for Neural Network Inference Tasks on Modern GPU-Centric Architectures. In Proceedings of the 18th USENIX Symposium on Operating Systems Design and Implementation (OSDI 24), Santa Clara, CA, 2024; pp. 989–1005.

- Zhong, Y.; Liu, S.; Chen, J.; Hu, J.; Zhu, Y.; Liu, X.; Jin, X.; Zhang, H. DistServe: Disaggregating Prefill and Decoding for Goodput-optimized Large Language Model Serving. In Proceedings of the 18th USENIX Symposium on Operating Systems Design and Implementation (OSDI 24), Santa Clara, CA, 2024; pp. 193–210.

- Shubha, S.S.; Shen, H. AdaInf: Data Drift Adaptive Scheduling for Accurate and SLO-guaranteed Multiple-Model Inference Serving at Edge Servers. In Proceedings of the ACM SIGCOMM 2023 Conference, 2023, pp. 473–485.

- Patel, P.; Choukse, E.; Zhang, C.; Shah, A.; Goiri, Í.; Maleki, S.; Bianchini, R. Splitwise: Efficient Generative LLM Inference Using Phase Splitting. In Proceedings of the 2024 ACM/IEEE 51st Annual International Symposium on Computer Architecture (ISCA), 2024, pp. 118–132. [CrossRef]

- Agrawal, A.; Kedia, N.; Panwar, A.; Mohan, J.; Kwatra, N.; Gulavani, B.; Tumanov, A.; Ramjee, R. Taming Throughput-Latency Tradeoff in LLM Inference with Sarathi-Serve. In Proceedings of the 18th USENIX Symposium on Operating Systems Design and Implementation (OSDI 24), Santa Clara, CA, 2024; pp. 117–134.

- Yi, R.; Li, X.; Qiu, Q.; Lu, Z.; Zhang, H.; Xu, D.; Yang, L.; Xie, W.; Wang, C.; Xu, M. mllm: fast and lightweight multimodal LLM inference engine for mobile and edge devices, 2023.

- Qualcomm. Llama-v3.1-8B-Chat on Qualcomm 8 Elite. https://aihub.qualcomm.com/mobile/models/llama_v3_1_8b_chat_quantized?searchTerm=llama, 2024. Referenced December 2024.

- Ggerganov. llama.cpp - LLM inference with minimal setup and state-of-the-art performance on a wide range of hardware. https://github.com/ggerganov/llama.cpp, 2023. Referenced December 2024.

- Microsoft. Accelerated Edge Machine Learning. https://onnxruntime.ai/, 2020. Referenced April 2023.

- Apple. A18. https://nanoreview.net/en/soc/apple-a18, 2024. Referenced December 2024.

- Huawei. Kirin-9000. https://www.hisilicon.com/en/products/Kirin/Kirin-flagship-chips/Kirin-9000, 2020. Referenced December 2024.

- Tencent. NCNN - High-performance neural network inference computing framework optimized for mobile platforms. https://github.com/Tencent/ncnn, 2017. Referenced December 2024.

- Intel. OpenVino - Open-source toolkit for optimizing and deploying deep learning models from cloud to edge. https://docs.openvino.ai/2024/index.html, 2018. Referenced December 2024.

- Google. TensorFlow Lite - Google’s high-performance runtime for on-device AI. https://tensorflow.google.cn/lite, 2017. Referenced December 2024.

- Liu, Y.; Wang, Y.; Yu, R.; Li, M.; Sharma, V.; Wang, Y. Optimizing CNN Model Inference on CPUs. In Proceedings of the 2019 USENIX Annual Technical Conference (USENIX ATC 19), Renton, WA, 2019; pp. 1025–1040.

- Iyer, V.; Lee, S.; Lee, S.; Kim, J.J.; Kim, H.; Shin, Y. Automated Backend Allocation for Multi-Model, On-Device AI Inference. Proceedings of the ACM on Measurement and Analysis of Computing Systems 2023, 7, 1–33.

- Abadi, M.; Barham, P.; Chen, J.; Chen, Z.; Davis, A.; Dean, J.; Devin, M.; Ghemawat, S.; Irving, G.; Isard, M.; et al. TensorFlow: A System for Large-Scale Machine Learning. In Proceedings of the 12th USENIX Symposium on Operating Systems Design and Implementation (OSDI 16), Savannah, GA, 2016; pp. 265–283.

- Team, T.T.D.; Al-Rfou, R.; Alain, G.; Almahairi, A.; Angermueller, C.; Bahdanau, D.; Ballas, N.; Bastien, F.; Bayer, J.; Belikov, A.; et al. Theano: A Python framework for fast computation of mathematical expressions. arXiv preprint arXiv:1605.02688 2016.

- Chen, T.; Moreau, T.; Jiang, Z.; Zheng, L.; Yan, E.; Shen, H.; Cowan, M.; Wang, L.; Hu, Y.; Ceze, L.; et al. TVM: An Automated End-to-End Optimizing Compiler for Deep Learning. In Proceedings of the 13th USENIX Symposium on Operating Systems Design and Implementation (OSDI 18), Carlsbad, CA, 2018; pp. 578–594.

- Huawei. HUAWEI HiAI Engine - About the Service. https://developer.huawei.com/consumer/en/doc/hiai-References/ir-overview-0000001052569365, 2024. Referenced December 2024.

- Zhu, H.; Wu, R.; Diao, Y.; Ke, S.; Li, H.; Zhang, C.; Xue, J.; Ma, L.; Xia, Y.; Cui, W.; et al. ROLLER: Fast and Efficient Tensor Compilation for Deep Learning. In Proceedings of the 16th USENIX Symposium on Operating Systems Design and Implementation (OSDI 22), Carlsbad, CA, 2022; pp. 233–248.

- Zheng, L.; Jia, C.; Sun, M.; Wu, Z.; Yu, C.H.; Haj-Ali, A.; Wang, Y.; Yang, J.; Zhuo, D.; Sen, K.; et al. Ansor: Generating High-Performance Tensor Programs for Deep Learning. In Proceedings of the 14th USENIX Symposium on Operating Systems Design and Implementation (OSDI 20). USENIX Association, 2020, pp. 863–879.

- Ragan-Kelley, J.; Barnes, C.; Adams, A.; Paris, S.; Durand, F.; Amarasinghe, S. Halide: a language and compiler for optimizing parallelism, locality, and recomputation in image processing pipelines. Acm Sigplan Notices 2013, 48, 519–530.

- Miao, X.; Oliaro, G.; Zhang, Z.; Cheng, X.; Wang, Z.; Zhang, Z.; Wong, R.Y.Y.; Zhu, A.; Yang, L.; Shi, X.; et al. Specinfer: Accelerating large language model serving with tree-based speculative inference and verification. In Proceedings of the 29th ACM International Conference on Architectural Support for Programming Languages and Operating Systems, Volume 3, 2024, pp. 932–949.

- Frantar, E.; Ashkboos, S.; Hoefler, T.; Alistarh, D. Gptq: Accurate post-training quantization for generative pre-trained transformers. arXiv preprint arXiv:2210.17323 2022.

- Lin, J.; Tang, J.; Tang, H.; Yang, S.; Chen, W.M.; Wang, W.C.; Xiao, G.; Dang, X.; Gan, C.; Han, S. AWQ: Activation-aware Weight Quantization for On-Device LLM Compression and Acceleration. Proceedings of Machine Learning and Systems 2024, 6, 87–100.

- Li, J.; Xu, J.; Huang, S.; Chen, Y.; Li, W.; Liu, J.; Lian, Y.; Pan, J.; Ding, L.; Zhou, H.; et al. Large language model inference acceleration: A comprehensive hardware perspective. arXiv preprint arXiv:2410.04466 2024.

- Cheng, W.; Cai, Y.; Lv, K.; Shen, H. Teq: Trainable equivalent transformation for quantization of llms. arXiv preprint arXiv:2310.10944 2023.

- Park, G.; Park, B.; Kim, M.; Lee, S.; Kim, J.; Kwon, B.; Kwon, S.J.; Kim, B.; Lee, Y.; Lee, D. Lut-gemm: Quantized matrix multiplication based on luts for efficient inference in large-scale generative language models. arXiv preprint arXiv:2206.09557 2022.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).