Submitted: 20 December 2024

Posted: 23 December 2024


Abstract

Keywords:

1. Introduction

1.1. Importance of Ethical Considerations in AI

1.2. Research Purpose

2. Related Works

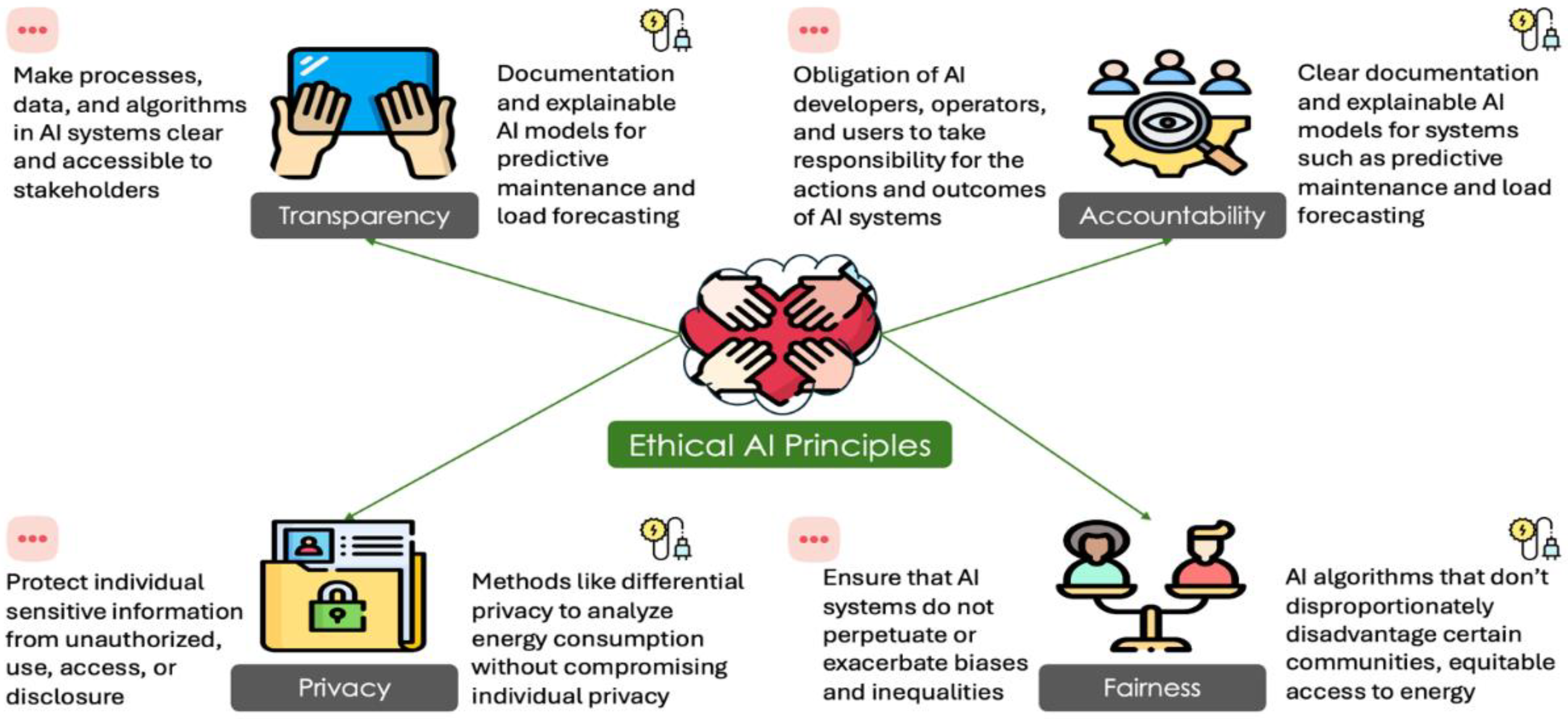

3. Ethical Principles in AI

3.1. Definition of Ethics

3.2. Core Principles

4. AI Transparency in Smart Grid

- Trust and Acceptance: Transparent technology fosters trust among regulators, operators, and the public. The more transparent and comprehensible the decisions made by AI systems are, the easier it is for stakeholders to accept and support the technology [32].

- Error Detection and Correction: Even after extensive training, AI systems may still contain errors or biases. Transparency makes such errors much easier to detect and correct.

- Compliance with Regulations: Algorithmic transparency is often governed by regulations. Making AI systems transparent helps an organization meet its legal and ethical requirements; breaching them can result in fines as well as reputational damage [31].

4.1. Methods to Enhance Transparency

4.1.1. Explainable AI (XAI)

4.1.2. Documentation

4.1.3. Audits

4.2. Transparency Issues Case Studies

4.2.1. Case Study 1: Predictive Maintenance in Smart Grids

4.2.2. Case Study 2: Load Forecasting and Energy Management

4.2.3. Case Study 3: Fault Detection and Isolation

5. AI Accountability in Smart Grid Systems

5.1. Stakeholder Responsibilities

5.2. Methods to Ensure Accountability

5.3. Examples of Accountability Measures

6. AI Bias and Fairness in Smart Grid Systems

6.1. Identifying and Mitigating Biases in AI Applications for Smart Grids

- Data Preprocessing: This ensures that the training data is balanced and representative of all relevant groups. It involves techniques such as data augmentation, re-sampling, and the removal of biased data points [47].

- Algorithmic Adjustments: Modifying algorithms can lead to the correction of identified biases. This includes adjusting the weighting of certain features, incorporating fairness constraints into the optimization process, and using bias mitigation algorithms [48].

- Post-processing Corrections: This involves applying adjustments to the outputs of AI models to ensure fairer outcomes. Techniques such as re-ranking or recalibrating predictions to align with fairness criteria [49] can improve AI outputs.
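As one illustration of the data preprocessing step above, the following minimal sketch re-weights training samples so that every group carries the same total weight. The function name `balanced_sample_weights` and the urban/rural service-area labels are hypothetical and chosen only for this example; the source does not prescribe a specific re-weighting scheme.

```python
from collections import Counter


def balanced_sample_weights(groups):
    """Return one weight per sample so that all groups have equal total weight.

    `groups` is a list of group labels, one per training sample.
    (Hypothetical helper, not taken from the source.)
    """
    counts = Counter(groups)
    n_samples = len(groups)
    n_groups = len(counts)
    # Each group's weights sum to n_samples / n_groups, so the overall
    # weight mass still equals the number of samples.
    return [n_samples / (n_groups * counts[g]) for g in groups]


# Hypothetical training records labelled by service area.
areas = ["urban", "urban", "urban", "rural"]
weights = balanced_sample_weights(areas)
for area, weight in zip(areas, weights):
    print(area, round(weight, 3))
```

Weights of this kind can typically be passed to a learner that accepts per-sample weights; whether re-weighting, re-sampling, or augmentation is most suitable depends on the data set.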

6.2. Strategies for Ensuring Equitable AI Outcomes

- Stakeholder Involvement: Diverse stakeholders must be involved in the design and deployment of AI systems so that the viewpoints and requirements of different groups are considered, leading to fair and inclusive AI solutions [50].

- Auditing Fairness: Regular fairness audits of AI systems help assess their impact on different groups and locate probable biases. These audits must be independent to be credible and objective [37].

- Transparency and Accountability: It is important to have in place processes and mechanisms that ensure transparency and hold developers and operators responsible for fairness in AI systems. This includes clear documentation, explainability of decisions, and avenues for redress in case of perceived unfair outcomes [51].

- Regulatory Compliance: Conformity with legal and ethical standards that call for fairness in AI applications, among them anti-discrimination laws, data protection regulations, and sector-specific regulations, is essential [52].
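A fairness audit needs some measurable quantity to compare across groups. The sketch below computes one possible metric, the demographic parity gap (the difference between the highest and lowest rate of a given decision across groups). The choice of metric, the function names, and the curtailment example are assumptions made for illustration; an actual audit would likely combine several metrics.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Fraction of positive decisions (1) per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += int(decision)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(decisions, groups):
    """Largest difference in positive-decision rate between any two groups."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit data: 1 means a household was selected for load curtailment.
curtailed = [1, 0, 0, 0, 1, 1, 0, 1]
areas = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap = demographic_parity_gap(curtailed, areas)
print(selection_rates(curtailed, areas), gap)
```

A gap near zero suggests the decision is applied at similar rates across groups; what threshold counts as acceptable is a policy decision rather than something the metric itself determines.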

6.3. Case Studies on Fairness Issues

6.3.1. Case Study 1: Smart Grid Load Distribution

6.3.2. Case Study 2: Predictive Maintenance and Worker Safety

6.3.3. Case Study 3: Renewable Energy Allocation

7. Privacy Concerns in Smart Grids

7.1. Best Practices

- Data Minimization: Collect only the data that is strictly necessary for the AI system to function effectively. This reduces the risk of sensitive information being exposed or misused [55].

- Anonymization and Pseudonymization: Transform personal data into anonymous or pseudonymous forms, ensuring that individuals cannot be easily identified from the data sets used in AI models [56].

- Encryption: Implement robust encryption techniques for data at rest and in transit to protect it from unauthorized access and breaches [57].

- Access Controls: Establish strict access controls to limit who can view or manipulate sensitive data. This includes role-based access and regular audits to ensure compliance [58].

- Transparency: Maintain transparency with consumers about what data is being collected, how it is used, and with whom it is shared. Providing clear privacy policies and obtaining informed consent are essential components of this practice [59].

- Data Governance: Develop and enforce comprehensive data governance policies that outline procedures for data handling, storage, and sharing [60]. This ensures consistency and compliance with privacy standards across the organization.
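Two of the practices above, data minimization and pseudonymization, can be sketched in a few lines. The example below assumes a keyed hash (HMAC-SHA256) as the pseudonymization method and uses hypothetical field names (`meter_id`, `kwh`, and so on); neither is specified by the source, and the hard-coded key is for illustration only.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256).

    The same identifier and key always give the same pseudonym, so records
    can still be linked, but the mapping cannot be recomputed without the key.
    """
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, allowed_fields: set) -> dict:
    """Keep only the fields the downstream model actually needs."""
    return {k: v for k, v in record.items() if k in allowed_fields}


# Hypothetical smart-meter record; all field names are assumptions.
record = {
    "meter_id": "MTR-000123",
    "customer_name": "Jane Doe",
    "address": "1 Example Street",
    "timestamp": "2024-12-20T10:00:00Z",
    "kwh": 1.42,
}

key = b"example-key-store-in-a-secrets-manager"  # illustration only
reduced = minimize(record, {"meter_id", "timestamp", "kwh"})
reduced["meter_id"] = pseudonymize(reduced["meter_id"], key)
print(reduced)
```

Pseudonymized data can still be re-identified by whoever holds the key or by linking with other data sets, so this step complements rather than replaces encryption and access controls.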

7.2. Legal and Regulatory Frameworks Governing Data Privacy

- California Consumer Privacy Act (CCPA): In the United States, CCPA grants consumers rights over their personal data, including the right to access, delete, and opt out of the sale of their data [62].

- Smart Grid Privacy Policies: Various jurisdictions have specific policies aimed at protecting consumer privacy within smart grid systems [63]. These policies mandate secure data handling practices and consumer rights protection.

- Industry Standards: Organizations must also comply with industry-specific standards and guidelines, such as those from the National Institute of Standards and Technology (NIST) [64] or the International Organization for Standardization (ISO) [65], which provide frameworks for data protection and privacy.

7.3. Privacy-Preserving Methods

- Differential Privacy: This technique introduces noise into data sets to prevent the identification of individuals, ensuring that AI models can learn from the data without compromising privacy [66].

- Federated Learning: Instead of centralizing data, federated learning allows AI models to be trained across decentralized devices or servers holding local data samples [67]. This approach keeps personal data on local devices, reducing privacy risks.

- Homomorphic Encryption: This advanced encryption method allows computations to be performed on encrypted data without decrypting it first [68]. This ensures that sensitive data remains protected even during processing.

- Secure Multi-party Computation (SMPC): SMPC enables multiple parties to jointly compute a function over their inputs while keeping those inputs private [69]. This is particularly useful for collaborative AI projects involving multiple stakeholders.

- Data Masking: This technique obfuscates specific data within a data set to protect sensitive information [70]. It is useful for sharing data with third parties while preserving privacy.
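Two of the methods listed above can be illustrated with short, simplified sketches: a Laplace mechanism for a differentially private sum, and additive secret sharing as a basic form of secure multi-party summation. Both are minimal illustrations under stated assumptions, not production implementations. The function names, the clipping bounds, the modulus, and the meter readings are hypothetical, and the sensitivity calculation assumes neighbouring data sets differ by adding or removing one household.

```python
import random
import secrets


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_sum(values, lower, upper, epsilon, rng):
    """Differentially private sum using the Laplace mechanism.

    Values are clipped to [lower, upper] (bounds must not both be zero).
    Assumption: neighbouring data sets differ by one added or removed record,
    so the sensitivity of the sum is max(|lower|, |upper|).
    """
    clipped = [min(max(v, lower), upper) for v in values]
    sensitivity = max(abs(lower), abs(upper))
    return sum(clipped) + laplace_noise(sensitivity / epsilon, rng)


MODULUS = 2**61 - 1  # hypothetical modulus, larger than any realistic total


def make_shares(value: int, n_parties: int) -> list:
    """Split an integer into n additive shares that sum to it modulo MODULUS."""
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % MODULUS)
    return shares


def secure_sum(all_shares) -> int:
    """Party j adds up the j-th share from every input; partial sums are combined."""
    partials = [sum(column) % MODULUS for column in zip(*all_shares)]
    return sum(partials) % MODULUS


# Differential privacy: noisy total of hypothetical household readings in kWh.
rng = random.Random(0)  # seeded only to make the illustration repeatable
noisy_total = private_sum([3.2, 4.1, 2.7], 0.0, 10.0, epsilon=1.0, rng=rng)

# Secret sharing: three households share integer readings in Wh; no single
# party sees another household's reading, yet the total is recovered exactly.
readings_wh = [3200, 4100, 2700]
shares = [make_shares(r, n_parties=3) for r in readings_wh]
total = secure_sum(shares)  # 10000
```

Smaller values of `epsilon` add more noise and give stronger privacy at the cost of accuracy. A real deployment would use a vetted differential privacy library rather than the standard `random` module, and a full SMPC protocol would also need authenticated channels between parties.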

8. Ethical Challenges in AI Integration

8.1. Ethical Dilemmas and Societal Implications

8.2. Recommendations for AI Applications in Smart Grid Systems

9. Conclusion

Funding


Contributions

Data Availability Statement

Conflicts of Interest

References

- Z. Shi et al., “Artificial intelligence techniques for stability analysis and control in smart grids: Methodologies, applications, challenges and future directions,” Appl. Energy, vol. 278, p. 115733, Nov. 2020. [CrossRef]

- T. Mazhar et al., “Analysis of Challenges and Solutions of IoT in Smart Grids Using AI and Machine Learning Techniques: A Review,” Electronics, vol. 12, no. 1, Art. no. 1, Jan. 2023. [CrossRef]

- J. Ramesh, S. Shahriar, A. R. Al-Ali, A. Osman, and M. F. Shaaban, “Machine Learning Approach for Smart Distribution Transformers Load Monitoring and Management System,” Energies, vol. 15, no. 21, Art. no. 21, Jan. 2022. [CrossRef]

- M. A. Mahmoud, N. R. Md Nasir, M. Gurunathan, P. Raj, and S. A. Mostafa, “The Current State of the Art in Research on Predictive Maintenance in Smart Grid Distribution Network: Fault’s Types, Causes, and Prediction Methods—A Systematic Review,” Energies, vol. 14, no. 16, Art. no. 16, Jan. 2021. [CrossRef]

- U. Assad et al., “Smart Grid, Demand Response and Optimization: A Critical Review of Computational Methods,” Energies, vol. 15, no. 6, Art. no. 6, Jan. 2022. [CrossRef]

- M. Selim, R. Zhou, W. Feng, and P. Quinsey, “Estimating Energy Forecasting Uncertainty for Reliable AI Autonomous Smart Grid Design,” Energies, vol. 14, no. 1, Art. no. 1, Jan. 2021. [CrossRef]

- A. Chakraborty, M. Alam, V. Dey, A. Chattopadhyay, and D. Mukhopadhyay, “A survey on adversarial attacks and defences,” CAAI Trans. Intell. Technol., vol. 6, no. 1, pp. 25–45, 2021. [CrossRef]

- T. T. Nguyen et al., “Manipulating Recommender Systems: A Survey of Poisoning Attacks and Countermeasures,” ACM Comput Surv, Jul. 2024. [CrossRef]

- V. Franki, D. Majnarić, and A. Višković, “A Comprehensive Review of Artificial Intelligence (AI) Companies in the Power Sector,” Energies, vol. 16, no. 3, Art. no. 3, Jan. 2023. [CrossRef]

- Y. S. Afridi, K. Ahmad, and L. Hassan, “Artificial intelligence based prognostic maintenance of renewable energy systems: A review of techniques, challenges, and future research directions,” Int. J. Energy Res., vol. 46, no. 15, pp. 21619–21642, 2022. [CrossRef]

- S. Shahriar, A. R. Al-Ali, A. H. Osman, S. Dhou, and M. Nijim, “Machine Learning Approaches for EV Charging Behavior: A Review,” IEEE Access, vol. 8, pp. 168980–168993, 2020. [CrossRef]

- K. Hayawi, S. Shahriar, and H. Hacid, “Climate Data Imputation and Quality Improvement Using Satellite Data,” J. Data Sci. Intell. Syst., Jun. 2024. [CrossRef]

- M. Bahrami, R. Sonoda, and R. Srinivasan, “LLM Diagnostic Toolkit: Evaluating LLMs for Ethical Issues,” in 2024 International Joint Conference on Neural Networks (IJCNN), Jun. 2024, pp. 1–8. [CrossRef]

- B. K. Konidena, J. N. A. Malaiyappan, and A. Tadimarri, “Ethical Considerations in the Development and Deployment of AI Systems,” Eur. J. Technol., vol. 8, no. 2, Art. no. 2, Mar. 2024. [CrossRef]

- A. Nassar and M. Kamal, “Ethical Dilemmas in AI-Powered Decision-Making: A Deep Dive into Big Data-Driven Ethical Considerations,” Int. J. Responsible Artif. Intell., vol. 11, no. 8, Art. no. 8, Aug. 2021.

- C. B. Head, P. Jasper, M. McConnachie, L. Raftree, and G. Higdon, “Large language model applications for evaluation: Opportunities and ethical implications,” New Dir. Eval., vol. 2023, no. 178–179, pp. 33–46, 2023. [CrossRef]

- A. B. Brendel, M. Mirbabaie, T.-B. Lembcke, and L. Hofeditz, “Ethical Management of Artificial Intelligence,” Sustainability, vol. 13, no. 4, Art. no. 4, Jan. 2021. [CrossRef]

- N. M. Safdar, J. D. Banja, and C. C. Meltzer, “Ethical considerations in artificial intelligence,” Eur. J. Radiol., vol. 122, p. 108768, Jan. 2020. [CrossRef]

- A. Čartolovni, A. Tomičić, and E. Lazić Mosler, “Ethical, legal, and social considerations of AI-based medical decision-support tools: A scoping review,” Int. J. Med. Inf., vol. 161, p. 104738, May 2022. [CrossRef]

- C. Wang, S. Liu, H. Yang, J. Guo, Y. Wu, and J. Liu, “Ethical Considerations of Using ChatGPT in Health Care,” J. Med. Internet Res., vol. 25, no. 1, p. e48009, Aug. 2023. [CrossRef]

- T. Dave, S. A. Athaluri, and S. Singh, “ChatGPT in medicine: an overview of its applications, advantages, limitations, future prospects, and ethical considerations,” Front. Artif. Intell., vol. 6, May 2023. [CrossRef]

- R. Dara, S. M. Hazrati Fard, and J. Kaur, “Recommendations for ethical and responsible use of artificial intelligence in digital agriculture,” Front. Artif. Intell., vol. 5, Jul. 2022. [CrossRef]

- M. J. Reiss, “The Use of AI in Education: Practicalities and Ethical Considerations,” Lond. Rev. Educ., vol. 19, no. 1, 2021, Accessed: Dec. 15, 2024. [Online]. Available: https://eric.ed.gov/?id=EJ1297682.

- D. Schiff, “Education for AI, not AI for Education: The Role of Education and Ethics in National AI Policy Strategies,” Int. J. Artif. Intell. Educ., vol. 32, no. 3, pp. 527–563, Sep. 2022. [CrossRef]

- R. Watkins, “Guidance for researchers and peer-reviewers on the ethical use of Large Language Models (LLMs) in scientific research workflows,” AI Ethics, vol. 4, no. 4, pp. 969–974, Nov. 2024. [CrossRef]

- L. Floridi et al., “AI4People—An Ethical Framework for a Good AI Society: Opportunities, Risks, Principles, and Recommendations,” Minds Mach., vol. 28, no. 4, pp. 689–707, Dec. 2018. [CrossRef]

- A. Zainab, A. Ghrayeb, D. Syed, H. Abu-Rub, S. S. Refaat, and O. Bouhali, “Big Data Management in Smart Grids: Technologies and Challenges,” IEEE Access, vol. 9, pp. 73046–73059, 2021. [CrossRef]

- U. Ehsan, Q. V. Liao, M. Muller, M. O. Riedl, and J. D. Weisz, “Expanding Explainability: Towards Social Transparency in AI systems,” in Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, in CHI ’21. New York, NY, USA: Association for Computing Machinery, May 2021, pp. 1–19. [CrossRef]

- C. Novelli, M. Taddeo, and L. Floridi, “Accountability in artificial intelligence: what it is and how it works,” AI Soc., Feb. 2023. [CrossRef]

- L. Devillers, F. Fogelman-Soulié, and R. Baeza-Yates, “AI & Human Values,” in Reflections on Artificial Intelligence for Humanity, B. Braunschweig and M. Ghallab, Eds., Cham: Springer International Publishing, 2021, pp. 76–89. [CrossRef]

- S. Shahriar, S. Allana, S. M. Hazratifard, and R. Dara, “A survey of privacy risks and mitigation strategies in the artificial intelligence life cycle,” IEEE Access, vol. 11, pp. 61829–61854, 2023. [CrossRef]

- H. Felzmann, E. F. Villaronga, C. Lutz, and A. Tamò-Larrieux, “Transparency you can trust: Transparency requirements for artificial intelligence between legal norms and contextual concerns,” Big Data Soc., vol. 6, no. 1, p. 2053951719860542, Jan. 2019. [CrossRef]

- A. Barredo Arrieta et al., “Explainable Artificial Intelligence (XAI): Concepts, taxonomies, opportunities and challenges toward responsible AI,” Inf. Fusion, vol. 58, pp. 82–115, Jun. 2020. [CrossRef]

- A. Gramegna and P. Giudici, “SHAP and LIME: an evaluation of discriminative power in credit risk,” Front. Artif. Intell., vol. 4, p. 752558, 2021.

- G. Falco et al., “Governing AI safety through independent audits,” Nat. Mach. Intell., vol. 3, no. 7, pp. 566–571, Jul. 2021. [CrossRef]

- A. S. Albahri et al., “A systematic review of trustworthy and explainable artificial intelligence in healthcare: Assessment of quality, bias risk, and data fusion,” Inf. Fusion, vol. 96, pp. 156–191, Aug. 2023. [CrossRef]

- I. D. Raji et al., “Closing the AI accountability gap: defining an end-to-end framework for internal algorithmic auditing,” in Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, in FAT* ’20. New York, NY, USA: Association for Computing Machinery, Jan. 2020, pp. 33–44. [CrossRef]

- F. Bayram, B. S. Ahmed, and A. Kassler, “From concept drift to model degradation: An overview on performance-aware drift detectors,” Knowl.-Based Syst., vol. 245, p. 108632, Jun. 2022. [CrossRef]

- A. Walz and K. Firth-Butterfield, “Implementing Ethics into Artificial Intelligence: A Contribution, from a Legal Perspective, to the Development of an AI Governance Regime,” Duke Law Technol. Rev., vol. 18, p. 176, 2019–2020.

- A. Lior, “Insuring AI: The Role of Insurance in Artificial Intelligence Regulation,” Harv. J. Law Technol., vol. 35, p. 467, 2021–2022.

- N. A. Smuha, “From a ‘race to AI’ to a ‘race to AI regulation’: regulatory competition for artificial intelligence,” Law Innov. Technol., vol. 13, no. 1, pp. 57–84, Jan. 2021. [CrossRef]

- P. Boddington, Towards a Code of Ethics for Artificial Intelligence. in Artificial Intelligence: Foundations, Theory, and Algorithms. Cham: Springer International Publishing, 2017. [CrossRef]

- P. Cihon, “Standards for AI governance: international standards to enable global coordination in AI research & development,” 2019.

- R. Schwartz et al., Towards a standard for identifying and managing bias in artificial intelligence, vol. 3. US Department of Commerce, National Institute of Standards and Technology, 2022.

- E. Ferrara, “Fairness and Bias in Artificial Intelligence: A Brief Survey of Sources, Impacts, and Mitigation Strategies,” Sci, vol. 6, no. 1, Art. no. 1, Mar. 2024. [CrossRef]

- N. Shahbazi, Y. Lin, A. Asudeh, and H. V. Jagadish, “Representation Bias in Data: A Survey on Identification and Resolution Techniques,” ACM Comput Surv, vol. 55, no. 13s, p. 293:1-293:39, Jul. 2023. [CrossRef]

- Y. Li and N. Vasconcelos, “REPAIR: Removing Representation Bias by Dataset Resampling,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019, pp. 9572–9581. Accessed: Jul. 03, 2024. [Online]. Available: https://openaccess.thecvf.com/content_CVPR_2019/html/Li_REPAIR_Removing_Representation_Bias_by_Dataset_Resampling_CVPR_2019_paper.html.

- Z. Wang et al., “Towards Fairness in Visual Recognition: Effective Strategies for Bias Mitigation,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 8919–8928. Accessed: Jul. 03, 2024. [Online]. Available: https://openaccess.thecvf.com/content_CVPR_2020/html/Wang_Towards_Fairness_in_Visual_Recognition_Effective_Strategies_for_Bias_Mitigation_CVPR_2020_paper.html.

- A. Ashokan and C. Haas, “Fairness metrics and bias mitigation strategies for rating predictions,” Inf. Process. Manag., vol. 58, no. 5, p. 102646, Sep. 2021. [CrossRef]

- A. Ashokan and, C. Haas, “Fairness metrics and bias mitigation strategies for rating predictions,” Inf. Process. Manag., vol. 58, no. 5, p. 102646, Sep. 2021. [Google Scholar] [CrossRef]

- M. Madaio, L. Egede, H. Subramonyam, J. Wortman Vaughan, and H. Wallach, “Assessing the Fairness of AI Systems: AI Practitioners’ Processes, Challenges, and Needs for Support,” Proc ACM Hum-Comput Interact, vol. 6, no. CSCW1, p. 52:1-52:26, Apr. 2022. [CrossRef]

- J. A. Kroll, “Outlining Traceability: A Principle for Operationalizing Accountability in Computing Systems,” in Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, in FAccT ’21. New York, NY, USA: Association for Computing Machinery, Mar. 2021, pp. 758–771. [CrossRef]

- D. Kumar and N. Suthar, “Ethical and legal challenges of AI in marketing: an exploration of solutions,” J. Inf. Commun. Ethics Soc., vol. 22, no. 1, pp. 124–144, Jan. 2024. [CrossRef]

- Z. Zhang et al., “Vulnerability of Machine Learning Approaches Applied in IoT-Based Smart Grid: A Review,” IEEE Internet Things J., vol. 11, no. 11, pp. 18951–18975, Jun. 2024. [CrossRef]

- T. Jakobi, S. Patil, D. Randall, G. Stevens, and V. Wulf, “It Is About What They Could Do with the Data: A User Perspective on Privacy in Smart Metering,” ACM Trans Comput-Hum Interact, vol. 26, no. 1, p. 2:1-2:44, Jan. 2019. [CrossRef]

- R. Mukta, H. Paik, Q. Lu, and S. S. Kanhere, “A survey of data minimisation techniques in blockchain-based healthcare,” Comput. Netw., vol. 205, p. 108766, Mar. 2022. [CrossRef]

- Z. Zuo, M. Watson, D. Budgen, R. Hall, C. Kennelly, and N. A. Moubayed, “Data Anonymization for Pervasive Health Care: Systematic Literature Mapping Study,” JMIR Med. Inform., vol. 9, no. 10, p. e29871, Oct. 2021. [CrossRef]

- S. Shukla, J. P. George, K. Tiwari, and J. V. Kureethara, “Data Security,” in Data Ethics and Challenges, S. Shukla, J. P. George, K. Tiwari, and J. V. Kureethara, Eds., Singapore: Springer, 2022, pp. 41–59. [CrossRef]

- G. Cederquist, R. Corin, M. A. C. Dekker, S. Etalle, J. I. den Hartog, and G. Lenzini, “Audit-based compliance control,” Int. J. Inf. Secur., vol. 6, no. 2, pp. 133–151, Mar. 2007. [CrossRef]

- Rossi and G. Lenzini, “Transparency by design in data-informed research: A collection of information design patterns,” Comput. Law Secur. Rev., vol. 37, p. 105402, Jul. 2020. [CrossRef]

- J. Kuzio, M. Ahmadi, K.-C. Kim, M. R. Migaud, Y.-F. Wang, and J. Bullock, “Building better global data governance,” Data Policy, vol. 4, p. e25, Jan. 2022. [CrossRef]

- “Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data, and repealing Directive 95/46/EC (General Data Protection Regulation).” 2016. [Online]. Available: https://eur-lex.europa.eu/eli/reg/2016/679/oj.

- “California consumer privacy act of 2018.” 2018. [Online]. Available: https://oag.ca.gov/privacy/ccpa.

- M. A. Brown, S. Zhou, and M. Ahmadi, “Smart grid governance: An international review of evolving policy issues and innovations,” WIREs Energy Environ., vol. 7, no. 5, p. e290, 2018. [CrossRef]

- National Institute of Standards and Technology, “National institute of standards and technology.” [Online]. Available: https://www.nist.gov/.

- International Organization for Standardization, “International organization for standardization.” [Online]. Available: https://www.iso.org/.

- C. Dwork, F. McSherry, K. Nissim, and A. Smith, “Calibrating noise to sensitivity in private data analysis,” in Proceedings of the Third Conference on Theory of Cryptography (TCC), Berlin, Heidelberg: Springer-Verlag, 2006, pp. 265–284. [CrossRef]

- H. B. McMahan, E. Moore, D. Ramage, S. Hampson, and B. A. y Arcas, “Communication-efficient learning of deep networks from decentralized data,” Proc. 20th Int. Conf. Artif. Intell. Stat. AISTATS, pp. 1273–1282, 2017.

- C. Gentry, “Fully homomorphic encryption using ideal lattices,” Ph.D. dissertation, Stanford University, 2009. [Online]. Available: https://crypto.stanford.edu/craig/craig-thesis.pdf.

- A. C.-C. Yao, “Protocols for secure computations,” Proc. 23rd Annu. Symp. Found. Comput. Sci. FOCS, pp. 160–164, 1982.

- S. Wohlgemuth, I. Echizen, and N. Sonehara, “Privacy protection by context-sensitive data masking,” Proc. 2007 ACM Workshop Priv. Electron. Soc. WPES, pp. 105–108, 2007.

- N. Díaz-Rodríguez, J. Del Ser, M. Coeckelbergh, M. López de Prado, E. Herrera-Viedma, and F. Herrera, “Connecting the dots in trustworthy Artificial Intelligence: From AI principles, ethics, and key requirements to responsible AI systems and regulation,” Inf. Fusion, vol. 99, p. 101896, Nov. 2023. [CrossRef]

- N. R. Mannuru et al., “Artificial intelligence in developing countries: The impact of generative artificial intelligence (AI) technologies for development,” Inf. Dev., p. 02666669231200628, Sep. 2023. [CrossRef]

- C. Burr and D. Leslie, “Ethical assurance: a practical approach to the responsible design, development, and deployment of data-driven technologies,” AI Ethics, vol. 3, no. 1, pp. 73–98, Feb. 2023. [CrossRef]

- M. Fengchun, H. Wayne, R. Huang, H. Zhang, and UNESCO, AI and education: A guidance for policymakers. UNESCO Publishing, 2021.

- B. Shneiderman, “Bridging the Gap Between Ethics and Practice: Guidelines for Reliable, Safe, and Trustworthy Human-centered AI Systems,” ACM Trans Interact Intell Syst, vol. 10, no. 4, p. 26:1-26:31, Oct. 2020. [CrossRef]

- E. Eryurek, U. Gilad, V. Lakshmanan, A. Kibunguchy-Grant, and J. Ashdown, Data Governance: The Definitive Guide. O’Reilly Media, Inc., 2021.

- S. Yanisky-Ravid and S. K. Hallisey, “Equality and Privacy by Design: A New Model of Artificial Intelligence Data Transparency via Auditing, Certification, and Safe Harbor Regimes,” Fordham Urban Law J., vol. 46, p. 428, 2019.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).