Submitted:

31 December 2025

Posted:

01 January 2026

You are already at the latest version

Abstract

This paper describes a new auto-associative network called a Unit-Merge Network. It is so-called because novel compound keys are used to link 2 nodes in 1 layer, with 1 node in the next layer. Unit nodes at the base store integer values that can represent binary words. The word size is critical and specific to the dataset and it also provides a first level of consistency over the input patterns. A second cohesion network then links the unit nodes list, through novel compound keys that create layers of decreasing dimension, until the top layer contains only 1 node for any pattern. Thus, a pattern can be found using a search and compare technique through the memory network. The Unit-Merge network is compared to a Hopfield network and a Sparse Distributed Memory (SDM). It is shown that the memory requirements are not unreasonable and that it has a much larger capacity than a discrete Hopfield network, for example. It can store sparse data, deal with noisy input and a complexity of O(log n) compares favourably with these networks. This is demonstrated with test results for 4 benchmark datasets. Apart from the unit size, the rest of the configuration is automatic, and its simplistic design could make it an attractive option for some applications.

Keywords:

1. Introduction

2. Related Work

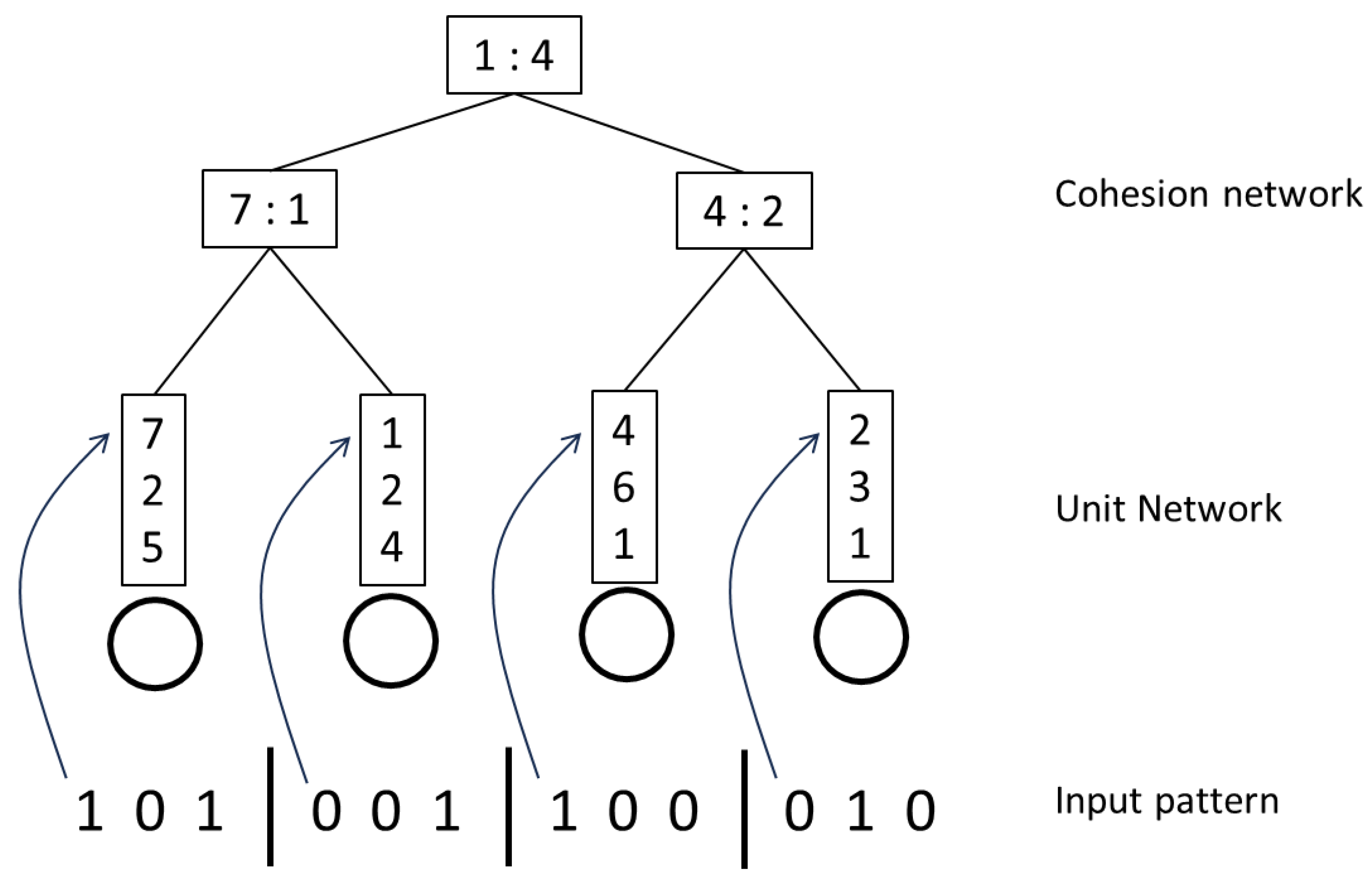

3. The Unit-Merge Network

3.1. Network Construction

3.2. Network Use

3.3. Train Algorithm

3.3.1. Unit List

- Create a unit list, where each node stores integer values relating to an indicated number x of base binary values.

-

For each input pattern, split it up into words of the indicated unit size x. Note that the unit size is important and will separate out the values (and features) differently.

- ○

- Convert a list of base words, representing a unit, from binary to decimal.

- ○

- Store the integer number only once in a value list, for the corresponding unit.

3.3.2. Cohesion Network

-

Link and add all decimal numbers for the current pattern to the cohesion network.

- ○

- Create a layer of base compound keys by combining the newly added unit node values, using 2 adjacent nodes each time.

- ○

- Then to reduce the dimensions, take the second compound value of the first node and the first compound value of the second node and create a new compound value and node from it, in a new layer.

- ○

- Repeat until there is only 1 node in the top layer.

-

This creates a path which if followed, will lead to the input pattern at the end.

- ○

- The paths are not unique however and because some information has been lost, there can be several instances at each node. But the numbers will be much smaller than for a flat list.

- ○

- Thus, when there are several choices, include all possibilities and leave the final decision to a comparison metric at the end.

3.4. Test Algorithm

3.4.1. Unit List

- Split the test input pattern into unit sizes and create the corresponding unit values.

-

Compare each number directly with the corresponding unit node value list.

- ○

- If the value list contains the number, then keep the value for that position.

- ○

- If the value list does not contain the number, then add a ‘null’ value for the position.

- This produces a list of integer or null values that represent the test pattern in the trained network.

3.4.2. Cohesion Network

- Create a 1-instance cohesion network for the test pattern only, using the test unit values.

-

Traverse the trained cohesion network using these values.

- ○

- If there is a null value at a node, then any key that includes the other value can be selected.

- ○

- While there will be multiple options, the final set of patterns will be much smaller than the train dataset.

- Retrieve all the selected base patterns and convert them back into binary patterns.

-

Use a Hamming distance or some other similarity count, to select the pattern that is closest to the test input pattern.

- ○

- If an appropriate match is not available, the current result pattern can be convoluted once and the test run again on the convoluted pattern.

- The finally selected pattern can thus have different values to the test pattern and therefore might remove noise from it.

4. Theory and Complexity

5. Testing

5.1. Test Strategy

-

If testing the row accuracy, then the test result was compared with the rows in the same category only. An exact match with any row was then required to indicate a positive score.

- ○

- If there was not an exact match, the current result pattern would be convoluted only once, and the test run again using the convoluted pattern.

- If testing the category accuracy, then the test result was compared with every row in each category. A percentage match was calculated, with the best score from any row determining the selected category.

- The metric was a basic count of how many (binary) pattern elements were the same.

5.2. Row and Category Test Results

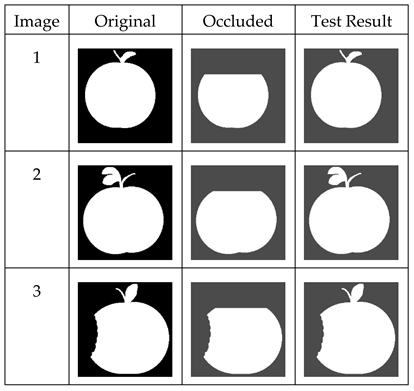

5.3. Occlusion Test Results

5.4. Comparison with Other Classifier Types

5.5. Limitations of the Unit-Merge Network

6. Conclusions

References

- Alonso, N.; Krichmar, J.L. A sparse quantized hopfield network for online-continual memory. Nature Communications 2024, 15, 3722. [Google Scholar] [CrossRef] [PubMed]

- Bouaguel, W.; Ben NCir, C.E. Distributed Evolutionary Feature Selection for Big Data Processing. Vietnam Journal of Computer Science 2022, 1–20. [Google Scholar] [CrossRef]

- Buscema, M. MetaNet: The Theory of Independent Judges. Substance Use & Misuse 1998, Vol. 33(No. 2), 439–461. [Google Scholar]

- Burns, T.E.; Fukao, T. Simplical Hopfield Networks. 2023. Available online: https://arxiv.org/abs/2305.05179.

- Deng, L. The MNIST Database of Handwritten Digit Images for Machine Learning Research [Best of the Web]. IEEE Signal Processing Magazine 2012, vol. 29(no. 6), 141–142. [Google Scholar] [CrossRef]

- Hopfield, J.J. Neural networks and physical systems with emergent collective computational abilities. Proc. Nat. Acad. Sci. 1982, 79(8), 2554–2558. [Google Scholar] [CrossRef] [PubMed]

- Hu, J.Y.C.; Wu, D.; Liu, H. Provably optimal memory capacity for modern hopfield models: Transformer-compatible dense associative memories as spherical codes. Advances in Neural Information Processing Systems 2024, 37, 70693–70729. [Google Scholar]

- Hu, J.Y-C.; Yang, D.; Wu, D.; Xu, C.; Chen, B-Y.; Liu, H. On Sparse Modern Hopfield Model. 37th Conference on Neural Information Processing Systems (NeurIPS 2023), 2023. [Google Scholar]

- Joshi, S.A., Prashanth, G. and Bazhenov, M. (2023). Modern Hopfield Network with Local Learning Rules for Class Generalization. In Associative Memory {\&} Hopfield Networks in 2023.

- Kanerva, P. Sparse Distributed Memory and Related Models, NASA Technological Report, Or. In Associative Neural Memories: Theory and Implementation; Hassoun, M.H., Ed.; Oxford University Press: New York, 1992; pp. 50–76. [Google Scholar]

- Krotov, D.; Hopfield, J. Dense Associative Memory Is Robust to Adversarial Inputs. Neural Computation 2018, 30(12), 3151–3167. [Google Scholar] [CrossRef] [PubMed]

- Marlin, B.; Swersky, K.; Chen, B.; Freitas, N. Inductive principles for restricted Boltzmann machine learning. In Proceedings of the thirteenth international conference on artificial intelligence and statistics, JMLR Workshop and Conference Proceedings, 2010; pp. 509–516. [Google Scholar]

- Mendes, M.; Coimbra, A.P.; Crisostomo, M. Assessing a Sparse Distributed Memory Using Different Encoding Methods. In Proceedings of the World Congress on Engineering 2009 Vol I, WCE 2009, London, U.K, July 1 - 3, 2009; 2009. [Google Scholar]

- Millidge, B.; Salvatori, T.; Song, Y.; Lukasiewicz, T.; Bogacz, R. Universal hopfield networks: A general framework for single-shot associative memory models. International Conference on Machine Learning, 2022, June; PMLR; pp. 15561–15583. [Google Scholar]

- Shape data for the MPEG-7 core experiment CE-Shape-1. Available online: http://www.cis.temple.edu/~latecki/TestData/mpeg7shapeB.tar.gz. (accessed on 30/8/25).

- Snaider, J.; Franklin, S. Integer sparse distributed memory. Twenty-fifth international flairs conference, 2012. [Google Scholar]

- The Chars74K dataset. Available online: http://www.ee.surrey.ac.uk/CVSSP/demos/chars74k/ (accessed on 30/8/25).

- UCI Machine Learning Repository. Available online: http://archive.ics.uci.edu/ml/ (accessed on 30/8/25).

- Unit-Merge Source Code. 2025. Available online: https://github.com/discompsys/Unit-Merge-Network.

| Dataset | Dimensions | Unit Size |

|---|---|---|

| Chars74 | 32 x 32 | 12 |

| Semeion | 16 x 16 | 16 |

| Silhouettes | 64 x 64 | 4 |

| Apples | 256x256 | 24 |

| Dataset | Row Accuracy for Noise % | Category Accuracy for Noise % | ||||||||

|---|---|---|---|---|---|---|---|---|---|---|

| 0% | 10% | 20% | 30% | 40% | 0% | 10% | 20% | 30% | 40% | |

| Chars74 | 100 | 98.7 | 91 | 75.9 | 60 | 100 | 98.9 | 91.5 | 76.1 | 59.3 |

| Semeion | 100 | 96.2 | 90.2 | 85.1 | 83.4 | 99.7 | 95.8 | 90 | 84.9 | 83.5 |

| Silhouettes | 100 | 98.4 | 95.3 | 87.4 | 77.2 | 100 | 98.5 | 94.9 | 87.2 | 78.9 |

| Apples | 100 | 100 | 100 | 100 | 100 | n/a | n/a | n/a | n/a | n/a |

|

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).