Submitted: 05 December 2024

Posted: 06 December 2024

Abstract

Keywords:

1. Introduction

- Easy: The standard cart-pole problem with a mission time of up to 15,000 timesteps, averaged over 1,000 random starting positions (i.e., 1,000 different episodes).

- Medium: The Easy problem with an additional action: “do-nothing”. The agent must employ “do-nothing” for at least 75 percent of its actions. This is more challenging and also more attractive, as agents focus more on putting the pole into a naturally balanced state, rather than keeping it constantly perturbed.

- Hard: The Medium problem with only two observations instead of the standard four – just the position of the cart and the angle of the pole. There are no velocities. Unlike the Medium benchmark, there are no restrictions on the actions chosen. This is a very challenging benchmark, because it encourages the agent to maintain some system state in its “memory.”

- Hardest: This is the same as the Hard problem, but the only actions are to push the cart left or right.
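The four benchmark definitions above can be summarized as a small configuration table. The following is a minimal sketch, not the authors' implementation: the names `Benchmark`, `BENCHMARKS`, and `satisfies_action_rule`, and the string labels for observations and actions, are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class Benchmark:
    """Hypothetical container for one benchmark's observation and action sets."""
    name: str
    observations: Tuple[str, ...]
    actions: Tuple[str, ...]
    # Minimum fraction of "do_nothing" actions; None means unrestricted.
    min_do_nothing_fraction: Optional[float] = None


FULL_OBS = ("cart_position", "cart_velocity", "pole_angle", "pole_velocity")
NO_VELOCITIES = ("cart_position", "pole_angle")
PUSH = ("left", "right")

BENCHMARKS = {
    "Easy": Benchmark("Easy", FULL_OBS, PUSH),
    "Medium": Benchmark("Medium", FULL_OBS, PUSH + ("do_nothing",), 0.75),
    "Hard": Benchmark("Hard", NO_VELOCITIES, PUSH + ("do_nothing",)),
    "Hardest": Benchmark("Hardest", NO_VELOCITIES, PUSH),
}


def satisfies_action_rule(bench: Benchmark, actions: Sequence[str]) -> bool:
    """Check that every action is legal and the do-nothing rule (if any) holds."""
    if any(a not in bench.actions for a in actions):
        return False
    if bench.min_do_nothing_fraction is None or not actions:
        return True
    fraction = sum(a == "do_nothing" for a in actions) / len(actions)
    return fraction >= bench.min_do_nothing_fraction
```

For example, a Medium agent that chooses "do_nothing" three times out of four exactly meets the 75 percent requirement, while the same sequence is illegal under Hardest because "do_nothing" is not an available action.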

2. Contributions of This Paper

- We codify four ways in which to employ the cart-pole problem as a benchmark for AI agents, along with target goals for agents to achieve on each benchmark.

- We perform an experiment to determine the best way to encode observations into spikes when the AI agent is a neuroprocessor running a spiking neural network.

- We perform an experiment to demonstrate how various neuroprocessor parameter settings affect the performance of the neuroprocessor on each of the benchmarks. Along with this, we highlight how to improve the performance by paying attention to how parameter settings interact with the way the observations are encoded.

- We highlight features of SNNs that are useful in neural network design. For example, it is sometimes advantageous to arrange for multiple spikes to arrive at a neuron at different times, so that their effect is additive. Other times, it is advantageous to arrange for the spikes to arrive at the same time, so that their effect is not additive. In such a way, simple SNN constructs may implement, for example, a max() function that helps solve the benchmark.

3. The Cart-Pole Problem

- Observations: Cart position, cart velocity, pole angle, pole velocity.

- Track extent: -2.4 to 2.4 meters. If the cart moves beyond this, it is a failure.

- Pole angle: -0.2095 to 0.2095 radians (12 degrees). If the pole falls beyond these angles, it is a failure.

- Timestep duration: 1/50 second.

- Reward: +1 for each non-failed timestep.

- Actions: push left and push right.

- Mission length: 15,000 timesteps, or five simulated minutes. This is much longer than OpenAI’s length of 200 timesteps (4 seconds) in v0, and 500 timesteps (10 seconds) in v1.

- Starting cart position: between -1.2 and 1.2 on the track. OpenAI’s state is between -0.05 and 0.05.

- Starting pole angle: between -0.10475 and 0.10475 radians.

- Optional action: do-nothing.

- Optional restriction: require 0.75 of actions to be “do-nothing.”

- Optional restriction: remove cart velocity and pole velocity observations.
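The numeric parameters in the list above translate directly into a failure test and a timestep-to-seconds conversion. The sketch below is illustrative only: the constant and function names are hypothetical, and uniform sampling of the starting state is an assumption, since the list gives only the ranges.

```python
import random

TRACK_LIMIT = 2.4             # meters, either side of center
ANGLE_LIMIT = 0.2095          # radians (about 12 degrees)
TIMESTEPS_PER_SECOND = 50     # timestep duration is 1/50 second
MISSION_TIMESTEPS = 15_000
START_POSITION_LIMIT = 1.2
START_ANGLE_LIMIT = 0.10475


def is_failure(cart_position: float, pole_angle: float) -> bool:
    """An episode fails when the cart leaves the track or the pole falls too far."""
    return abs(cart_position) > TRACK_LIMIT or abs(pole_angle) > ANGLE_LIMIT


def mission_seconds(timesteps: int = MISSION_TIMESTEPS) -> float:
    """Convert a number of timesteps into simulated seconds."""
    return timesteps / TIMESTEPS_PER_SECOND


def random_start(rng: random.Random) -> tuple:
    """Sample a starting (position, angle); uniform sampling is an assumption."""
    position = rng.uniform(-START_POSITION_LIMIT, START_POSITION_LIMIT)
    angle = rng.uniform(-START_ANGLE_LIMIT, START_ANGLE_LIMIT)
    return position, angle
```

With these constants, `mission_seconds()` gives 300 seconds (five simulated minutes), and `mission_seconds(200)` and `mission_seconds(500)` give the 4-second and 10-second lengths quoted for OpenAI's v0 and v1.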

4. The Benchmarks and Performance Goals

4.1. The Easy Benchmark – Too Easy

4.2. The Medium Benchmark – More Natural

- Let the number of timesteps that the agent survives be t.

- Let the number of do-nothing actions be d.

- Define an “activity threshold”, a, to be a number between 0 and 1.

- If , then the fitness is equal to t.

- If , then the fitness is equal to .

4.3. The Hard Benchmark – Removing Velocities, but Retaining “Do-Nothing”

4.4. The Hardest Benchmark – Removing Velocities, and Only Two Actions

5. Spiking Neural Networks

5.1. Spike Encoding

5.1.1. Values versus Spikes versus Time

- •

- Values: The magnitude or weight of the value added to the neuron’s potential is scaled by the value being encoded.

- •

- Spikes: Values are converted into a number of spikes applied to the input neuron periodically. Higher values mean more spikes. The inter-spike duration is the same, regardless of the number of spikes, and each spike has the same weight (typically the maximum weight).

- •

- Time: A single spike is applied to the neuron, with maximum weight. The timing of the spike is determined by the value being encoded. One can either have higher values correspond to earlier spikes or to later spikes.
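The three encoding styles above can be contrasted with a short sketch. It assumes the value has already been normalized to the range 0 to 1, represents a spike as a hypothetical `(time, weight)` pair, and picks an eight-spike maximum and an eight-timestep window purely for illustration; none of these names or defaults come from the TENNLab software.

```python
from typing import List, Tuple

Spike = Tuple[int, float]  # (timestep, weight); a hypothetical representation
MAX_WEIGHT = 1.0


def encode_value(v: float) -> List[Spike]:
    """Values: one spike whose weight is scaled by the encoded value."""
    return [(0, MAX_WEIGHT * v)]


def encode_spikes(v: float, max_spikes: int = 8, interval: int = 1) -> List[Spike]:
    """Spikes: higher values give more spikes, evenly spaced, all at full weight."""
    count = round(v * max_spikes)
    return [(i * interval, MAX_WEIGHT) for i in range(count)]


def encode_time(v: float, window: int = 8, higher_is_earlier: bool = True) -> List[Spike]:
    """Time: a single full-weight spike whose timing depends on the value."""
    slot = round(v * (window - 1))
    time = (window - 1 - slot) if higher_is_earlier else slot
    return [(time, MAX_WEIGHT)]
```

For a normalized value of 0.5, the first encoder produces one spike of weight 0.5, the second produces four full-weight spikes at timesteps 0 through 3, and the third produces one full-weight spike partway through the window.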

5.1.2. Binning

5.1.3. Six Highlighted Spike Encoders

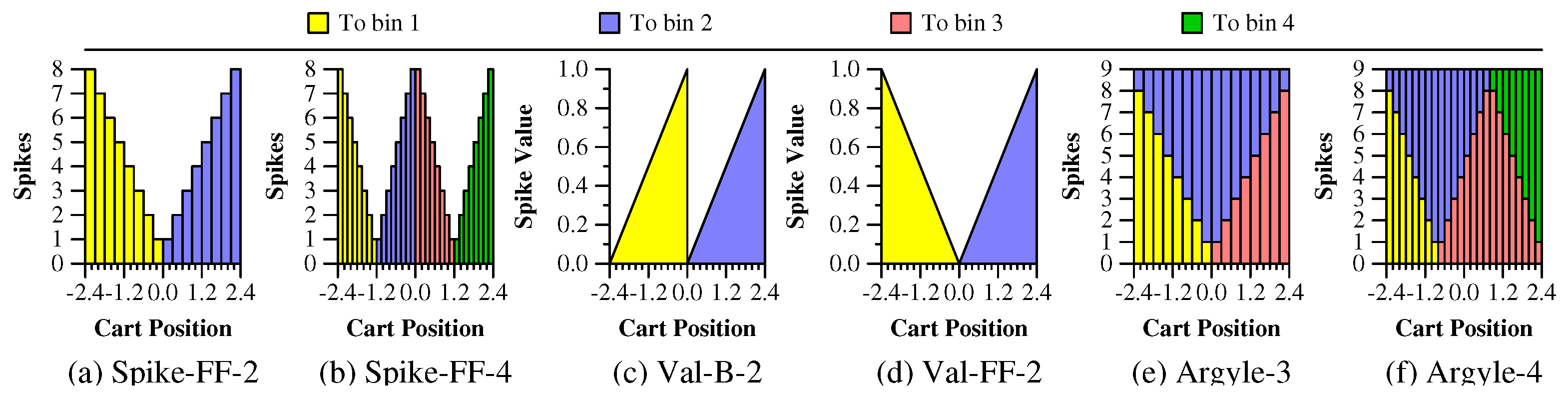

- Spike-FF-2: There are two bins, which, for all cart-pole observations, partition the observations into negative values for the first bin and positive values for the second bin. The number of spikes applied to each bin is proportional to the absolute value of the observation, up to a maximum of eight spikes. The “FF” stands for “Flip Flop” [16]. The spikes all have a maximum charge value, and therefore cause their corresponding neurons to fire. Figure 1(a) illustrates how the cart positions are mapped to spikes with Spike-FF-2.

- Spike-FF-4: Instead of two bins, there are four bins. Figure 1(b) illustrates how the cart positions are mapped to spikes with Spike-FF-4.

- Val-B-2: There are two bins, and each value corresponds to a single spike into one of the bins. The spike’s value is proportional to the cart’s position within the interval for the bin, with a maximum value of 1.0. Figure 1(c) illustrates how the cart positions are mapped to bins and values with Val-B-2.

- Val-FF-2: This is the same as Val-B-2, except the value of the spike to the first bin ranges from 1 to 0 rather than from 0 to 1. Figure 1(d) illustrates how the cart positions are mapped to bins and values with Val-FF-2.

- Argyle-3: With the Argyle spike encoders, each value puts a total of nine spikes into two bins. With Argyle-3, the first and third bins are identical to the first and second bins of Spike-FF-2. The second bin receives spikes such that the total count of spikes for each value is nine. The name “argyle” comes from the spike encoder documentation within the TENNLab software [12,13,28]. Figure 1(e) illustrates how the cart positions are mapped to bins and spikes with Argyle-3.

- Argyle-4: This continues the “argyle” pattern, but with four bins instead of three. Figure 1(f) illustrates how the cart positions are mapped to bins and spikes with Argyle-4.
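As a rough illustration of the descriptions above, the sketch below maps an observation to per-bin spike counts for Spike-FF-2 and Argyle-3. It is an assumption-laden sketch rather than the TENNLab encoder code: the function names are hypothetical, `limit` stands for the largest magnitude the observation can take (for example 2.4 for cart position), and rounding to the nearest spike count is a guess at how proportionality is discretized.

```python
MAX_SPIKES = 8     # Spike-FF-2 applies at most eight spikes to a bin
ARGYLE_TOTAL = 9   # Argyle encoders always emit nine spikes in total


def spike_ff_2(value: float, limit: float) -> tuple:
    """Return (spikes to bin 0, spikes to bin 1) for one observation.

    Negative values go to the first bin and positive values to the second,
    with a spike count proportional to the absolute value.
    """
    fraction = min(abs(value) / limit, 1.0)
    count = int(round(fraction * MAX_SPIKES))  # rounding rule is an assumption
    return (count, 0) if value < 0 else (0, count)


def argyle_3(value: float, limit: float) -> tuple:
    """Return spike counts for three bins.

    The outer bins match Spike-FF-2; the middle bin receives whatever is
    needed to bring the total to nine spikes.
    """
    low, high = spike_ff_2(value, limit)
    middle = ARGYLE_TOTAL - low - high
    return (low, middle, high)
```

Under these assumptions, a cart at the left edge of the track (-2.4) yields `(8, 0)` with Spike-FF-2 and `(8, 1, 0)` with Argyle-3, while a centered cart yields `(0, 0)` and `(0, 9, 0)` respectively.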

5.2. Spike Decoding

- Voting: Here the number of spikes in each neuron is counted, and the one with the most spikes determines the action. Ties go to the “lower” neuron.

- Temporal: The first (or last) neuron to spike is the winner.
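The two decoding rules above can be sketched as follows. This is a minimal illustration with hypothetical function names: it reads the “lower” neuron as the one with the lower index, and it applies the same tie-break to temporal decoding, which the text does not specify.

```python
from typing import List, Optional, Sequence


def decode_voting(spike_counts: Sequence[int]) -> int:
    """Return the index of the output neuron with the most spikes.

    Ties go to the lower index, because a later neuron only wins
    when its count is strictly greater.
    """
    best = 0
    for index, count in enumerate(spike_counts):
        if count > spike_counts[best]:
            best = index
    return best


def decode_temporal(spike_times: Sequence[List[int]], first_wins: bool = True) -> Optional[int]:
    """Return the neuron that spiked first (or last); None if nothing spiked.

    spike_times[i] holds the spike times of output neuron i.
    The lower-index tie-break here is an assumption.
    """
    best, best_time = None, None
    for index, times in enumerate(spike_times):
        if not times:
            continue
        t = min(times) if first_wins else max(times)
        if best_time is None or (t < best_time if first_wins else t > best_time):
            best, best_time = index, t
    return best
```

For example, `decode_voting([3, 5, 5])` selects neuron 1, and `decode_temporal([[4, 9], [2], []])` also selects neuron 1 because it fires earliest.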

5.3. Neuroprocessor

6. Training

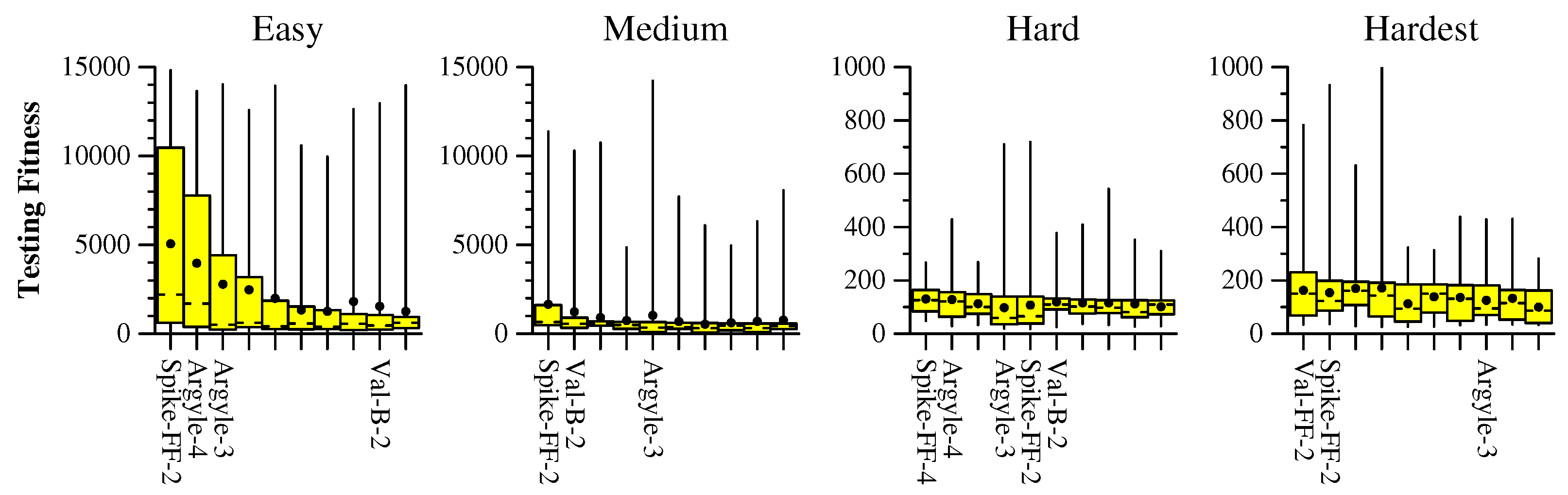

7. Spike Encoding and Decoding Experiment

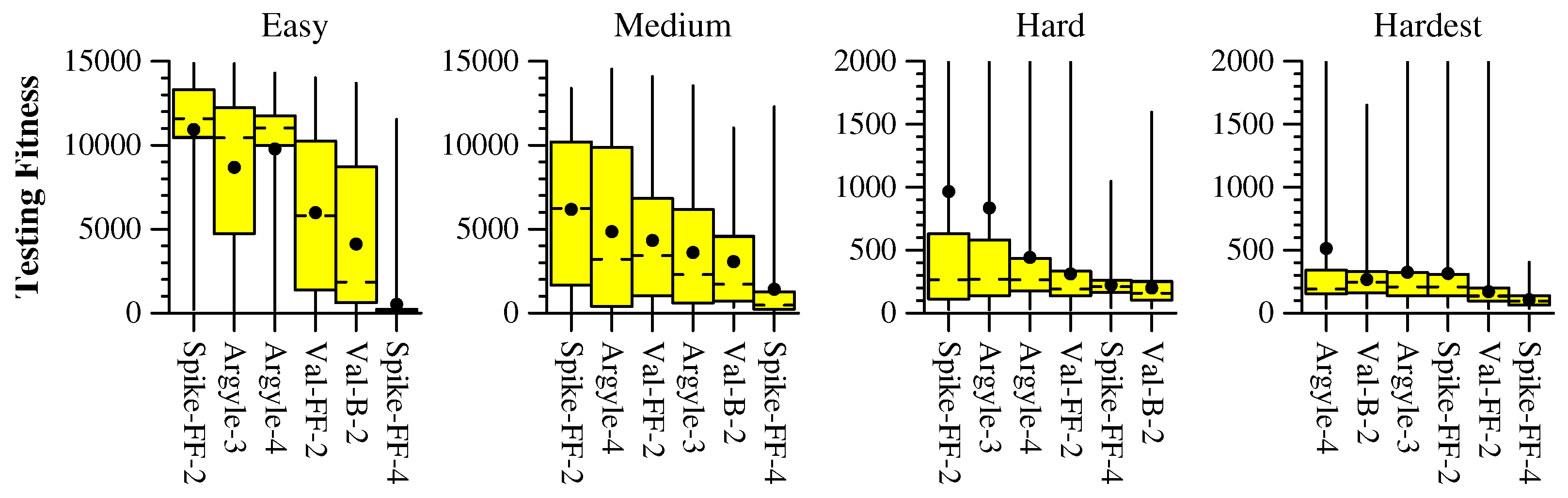

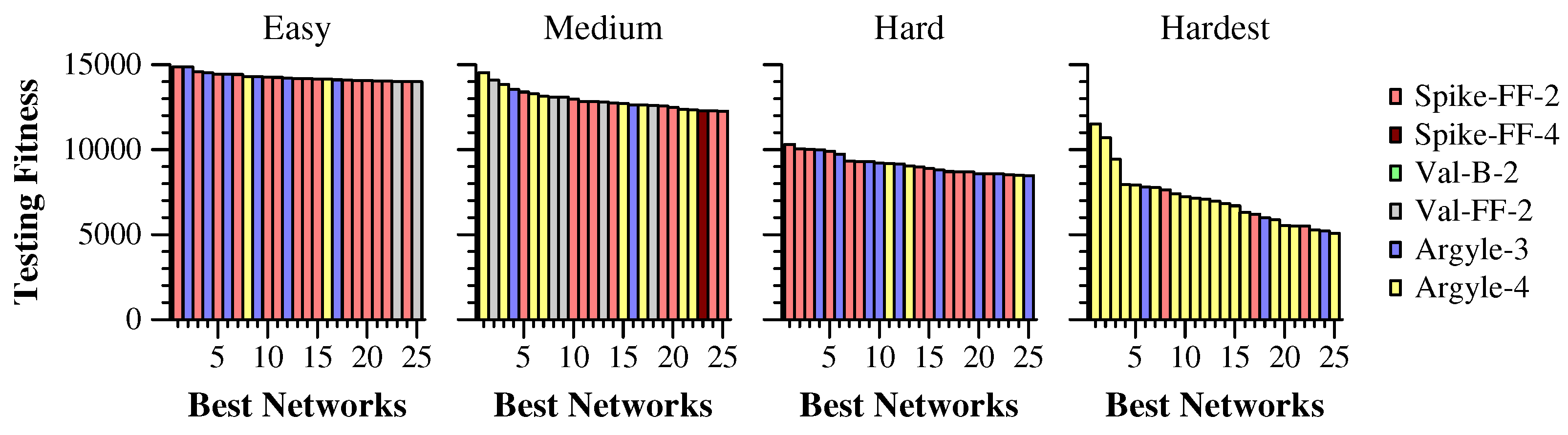

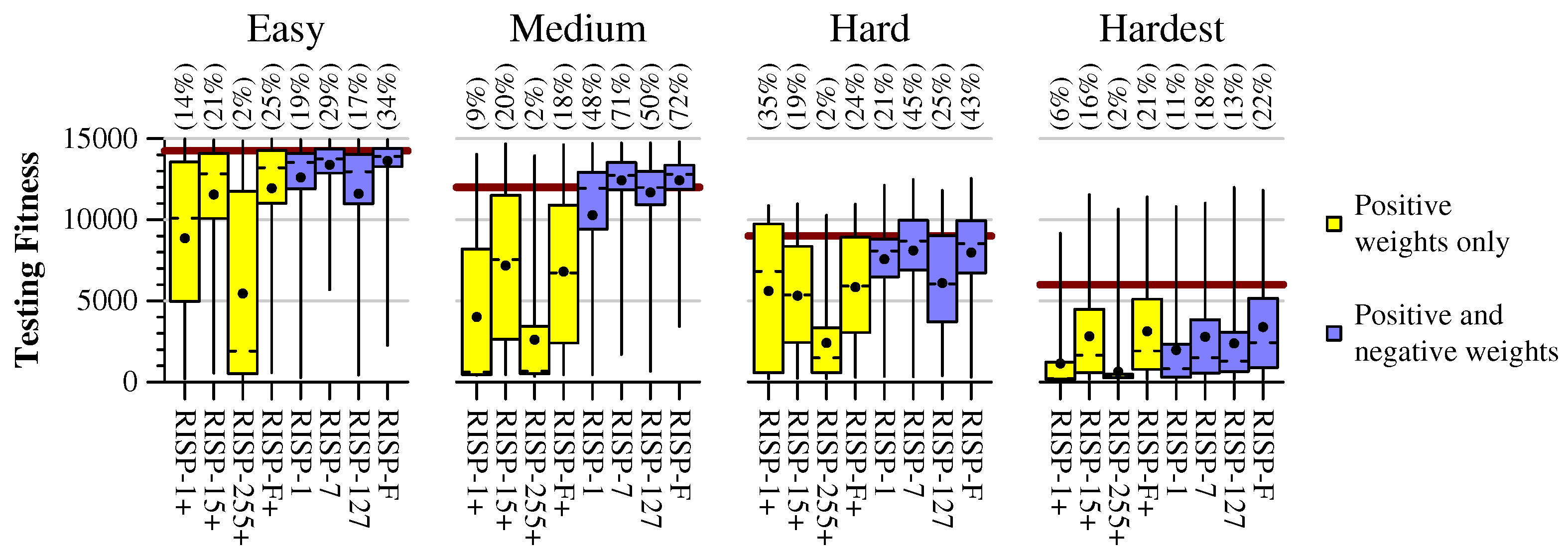

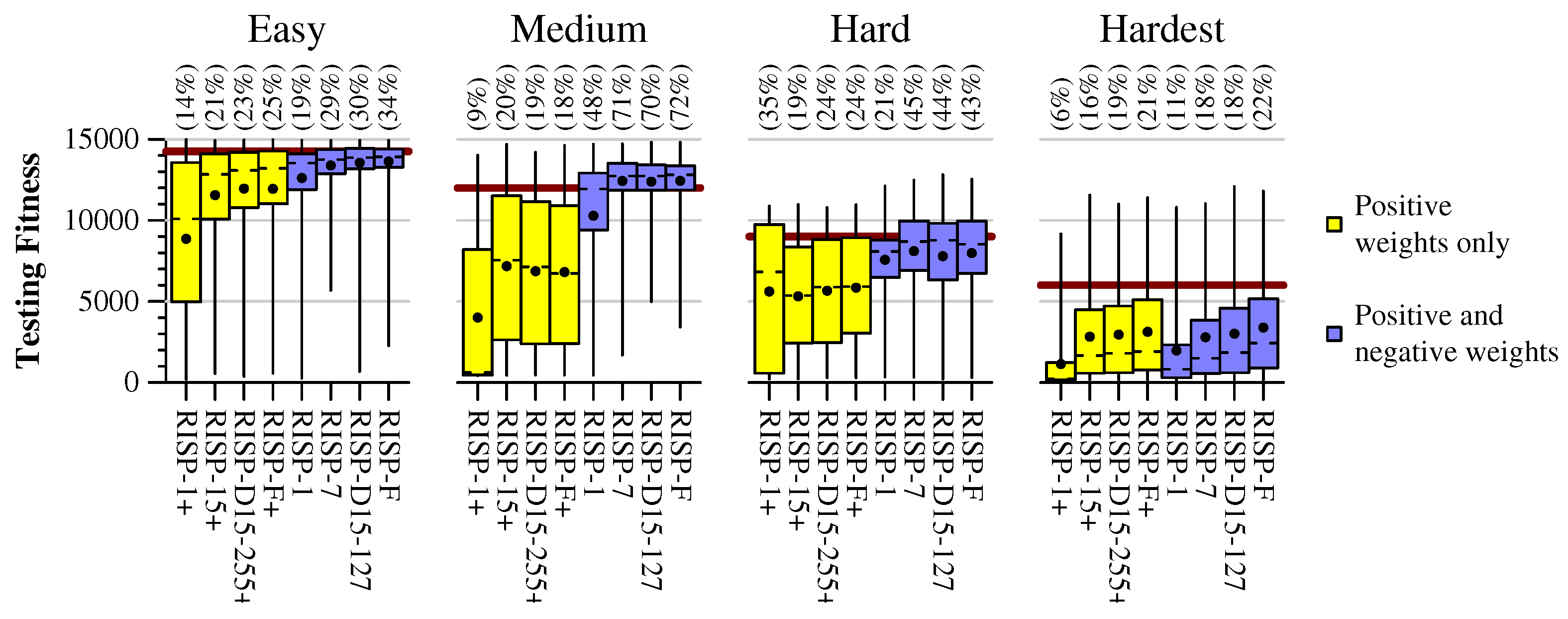

8. Neuroprocessor Experiment - RISP

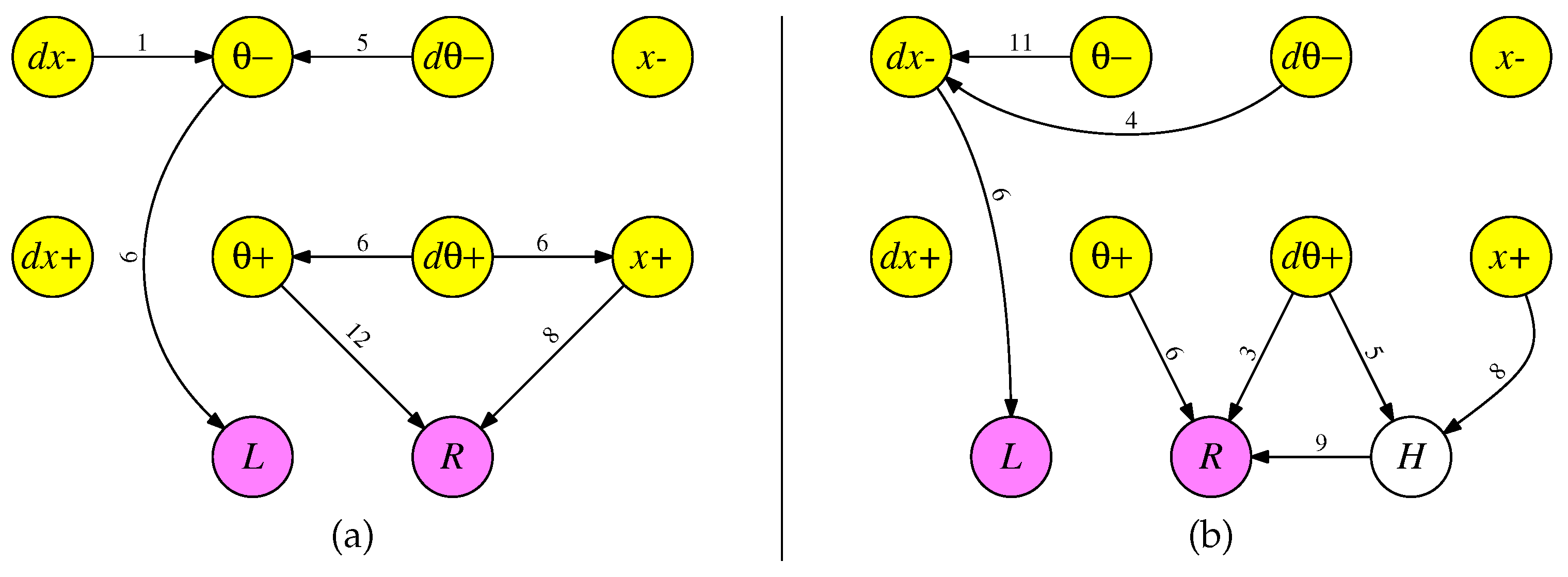

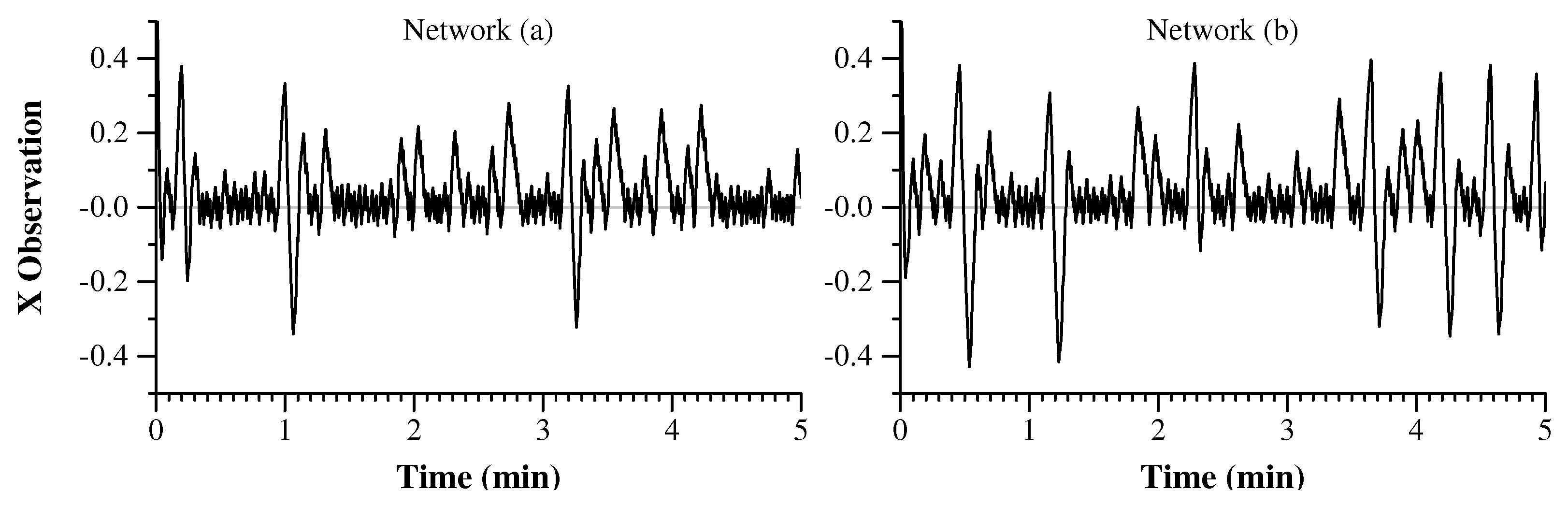

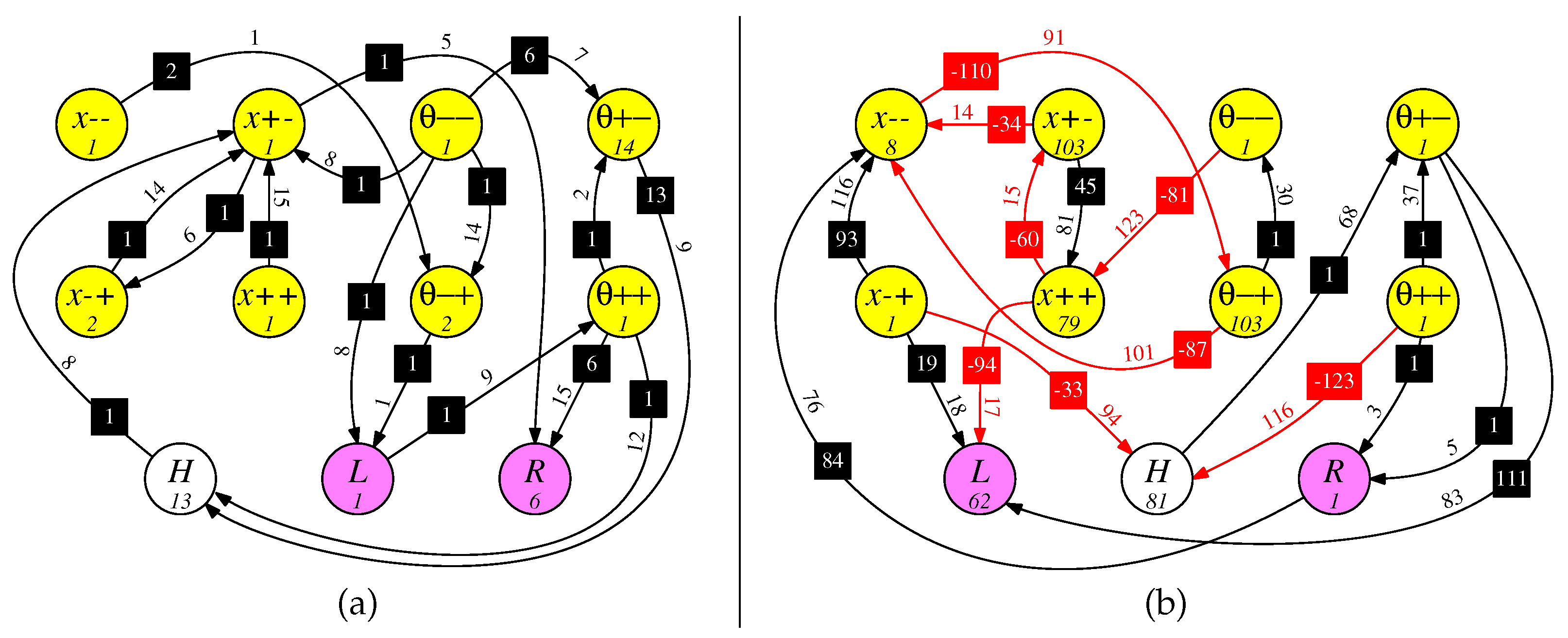

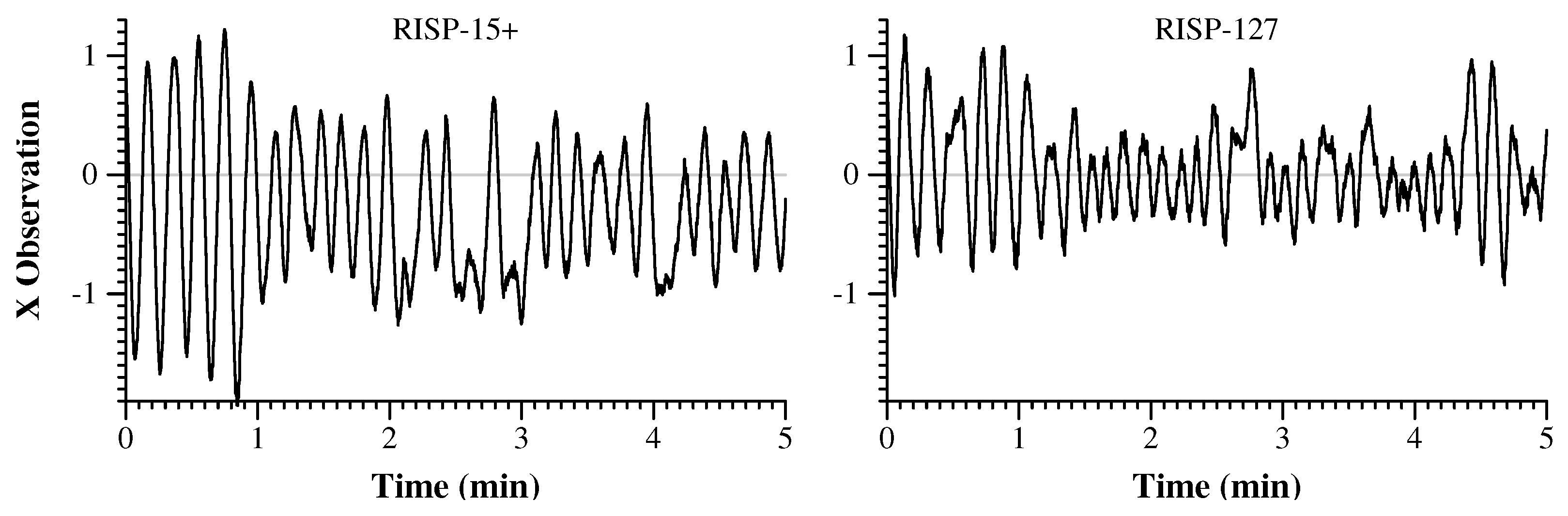

9. Example Networks

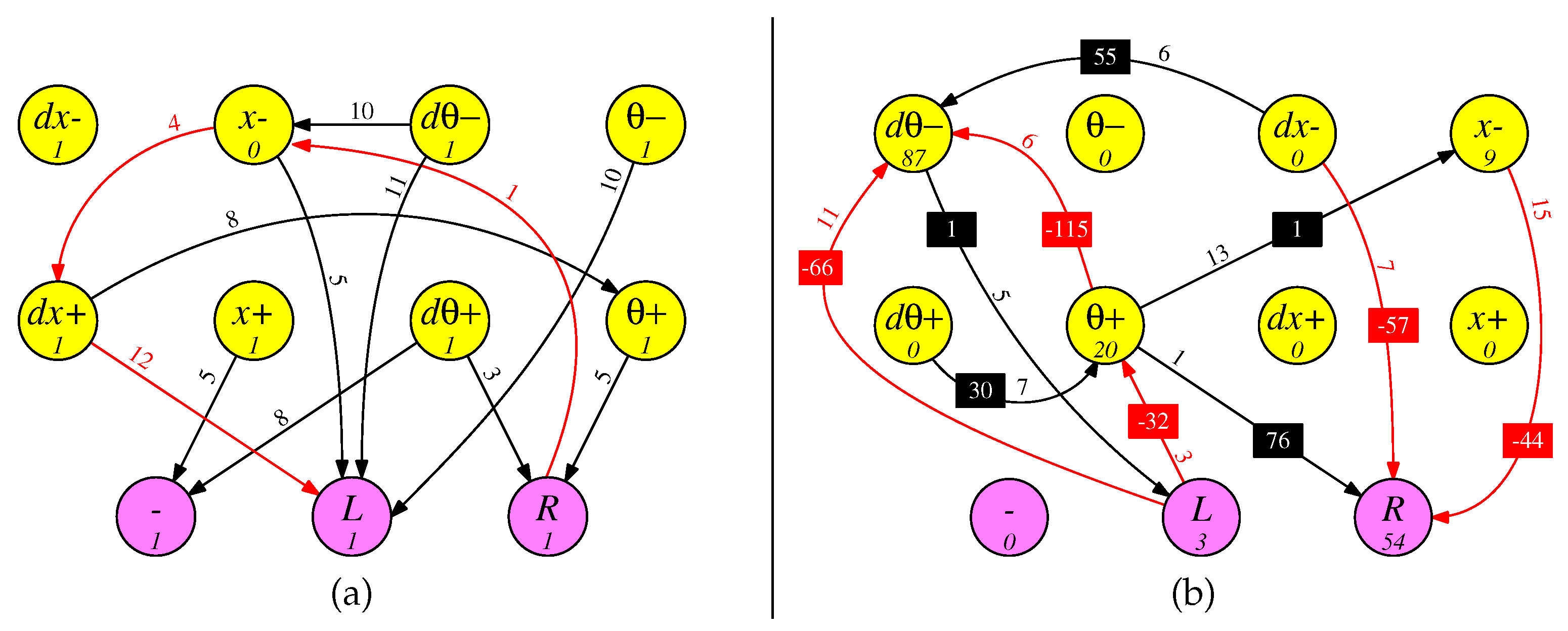

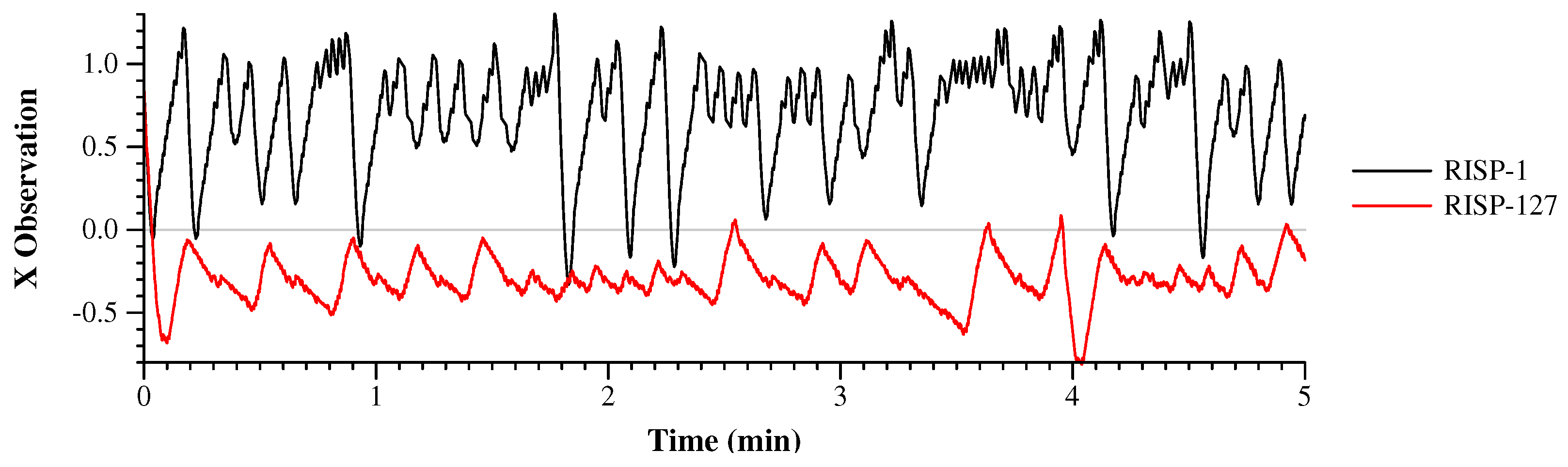

9.1. Two Example Easy Networks

9.2. Two Example Medium Networks

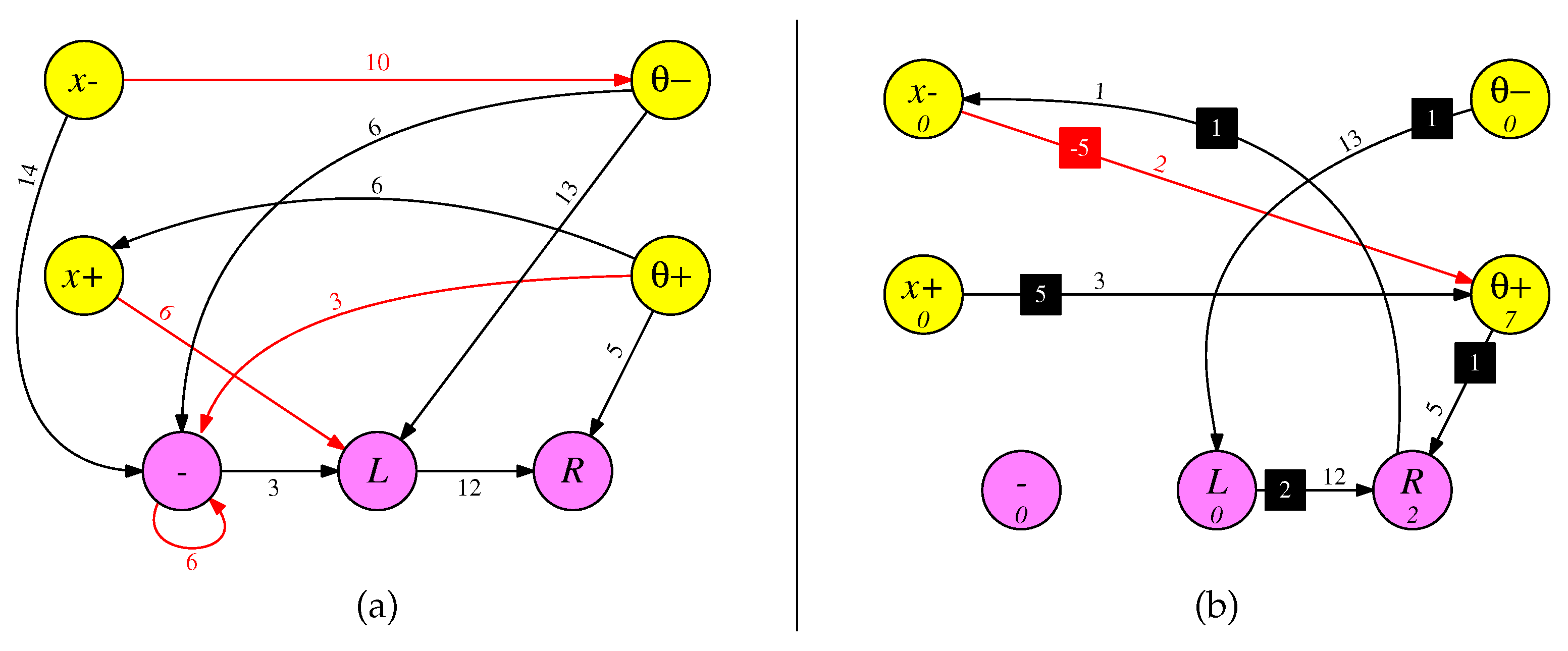

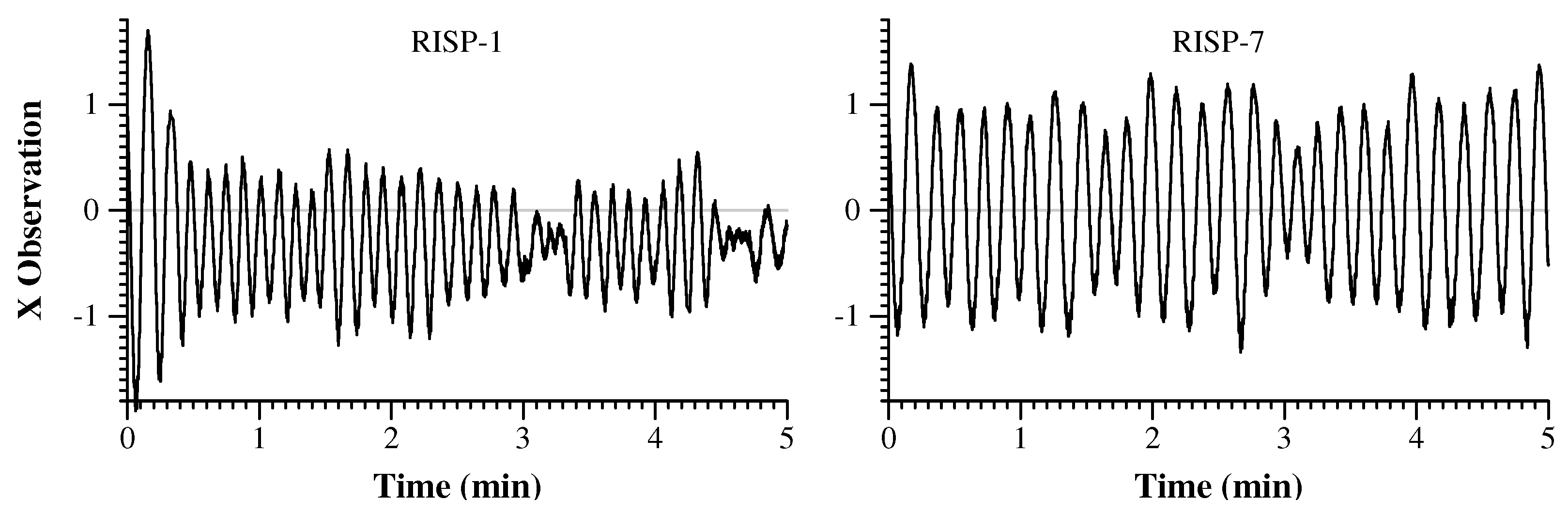

9.3. Two Example Hard Networks

9.4. Two Example Hardest Networks

10. Related Work

11. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A. Experiment to Determine Training Hyperparameters

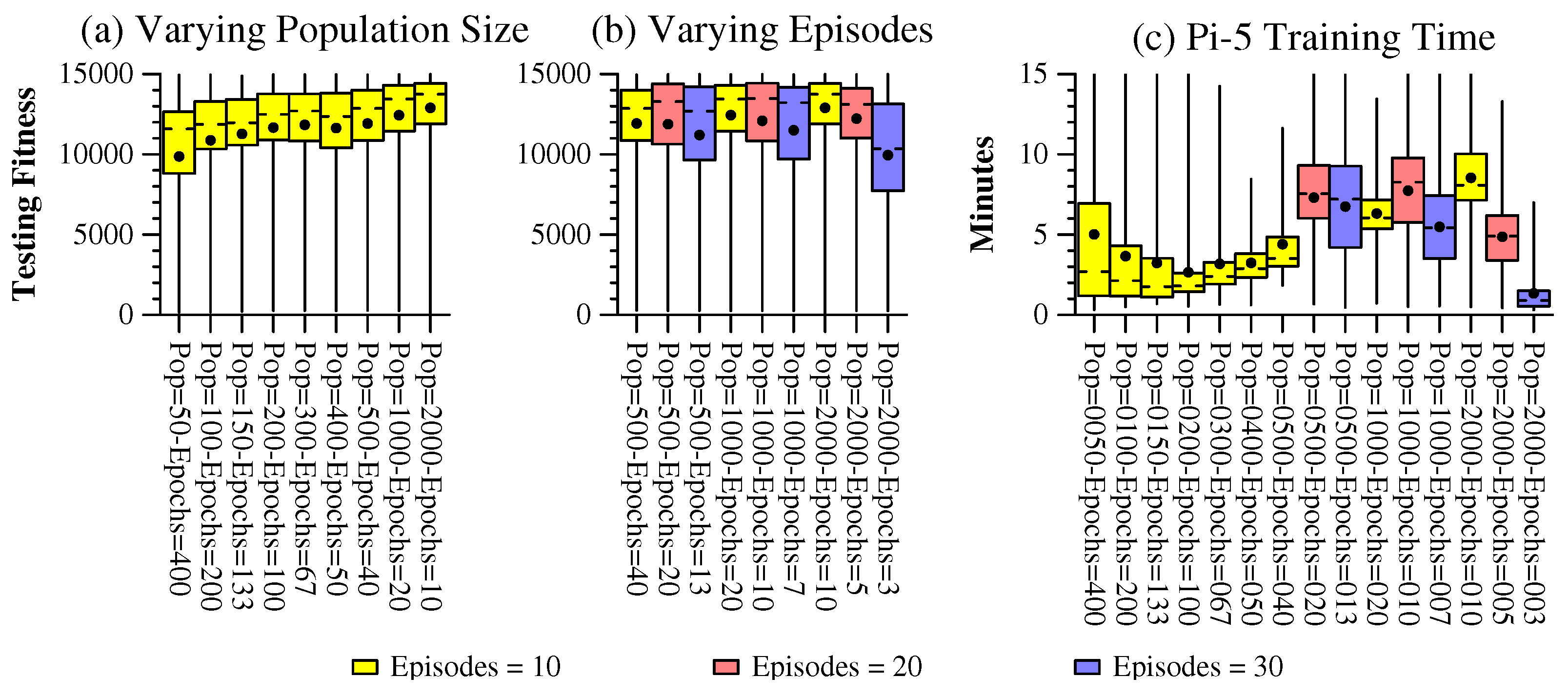

Appendix A.1. Parameters for Easy

- Runs with better fitnesses take longer, because the application must simulate more timesteps.

- We stop execution when the training fitness stalls at a maximum value for eight successive epochs. Therefore, runs with higher populations and more epochs are likely to truncate earlier. Runs with more episodes are likely to truncate later.

- Some SNNs have more activity than others and take longer to simulate.
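The stopping rule mentioned in the list above can be sketched as a simple check on the training history. This is one possible reading, not the authors' code: it assumes the best training fitness is recorded once per epoch and treats “stalls at a maximum value” as the last eight recorded values all being equal to the highest value seen so far. The function name `should_stop` and the `patience` parameter are hypothetical.

```python
from typing import Sequence


def should_stop(best_fitness_per_epoch: Sequence[float], patience: int = 8) -> bool:
    """Return True when fitness has sat at its maximum for `patience` epochs.

    best_fitness_per_epoch holds one value per completed epoch, oldest first.
    """
    if len(best_fitness_per_epoch) < patience:
        return False
    peak = max(best_fitness_per_epoch)
    recent = best_fitness_per_epoch[-patience:]
    return all(fitness == peak for fitness in recent)
```

Under this reading, a run whose fitness reaches its peak early stops eight epochs later, which is consistent with the observation that larger populations and epoch budgets tend to truncate earlier.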

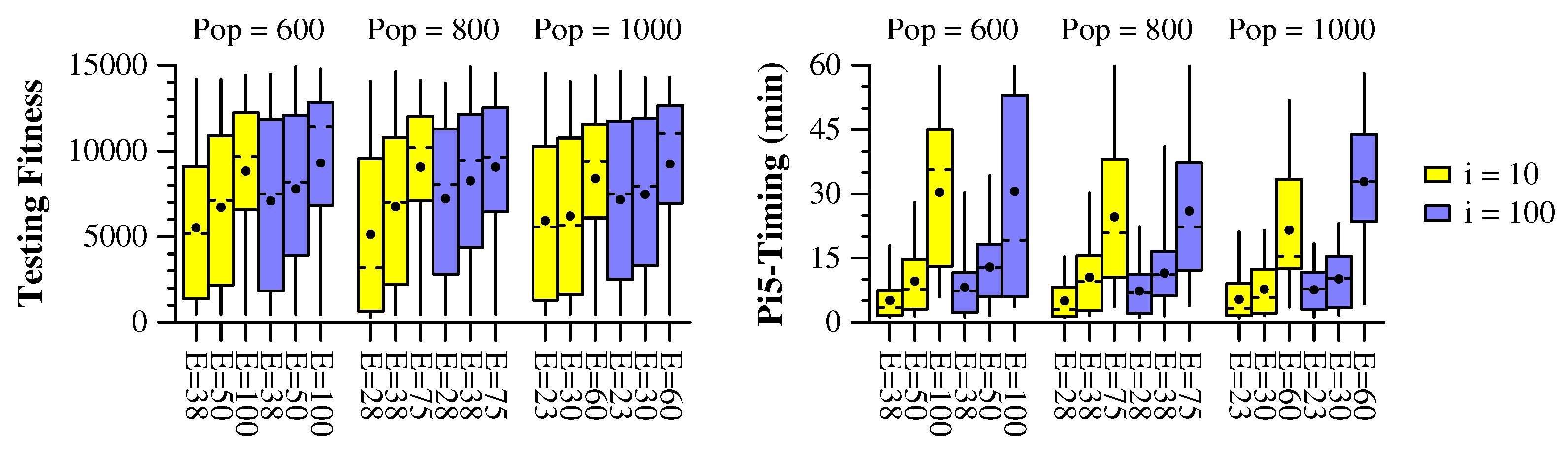

Appendix B. Parameters for Medium

- Vary the population size in .

- Vary the epochs so that the product of epochs and population size is a constant .

- Perform i initial passes to create the first population. At each pass, insert the best networks into the first population. Vary .

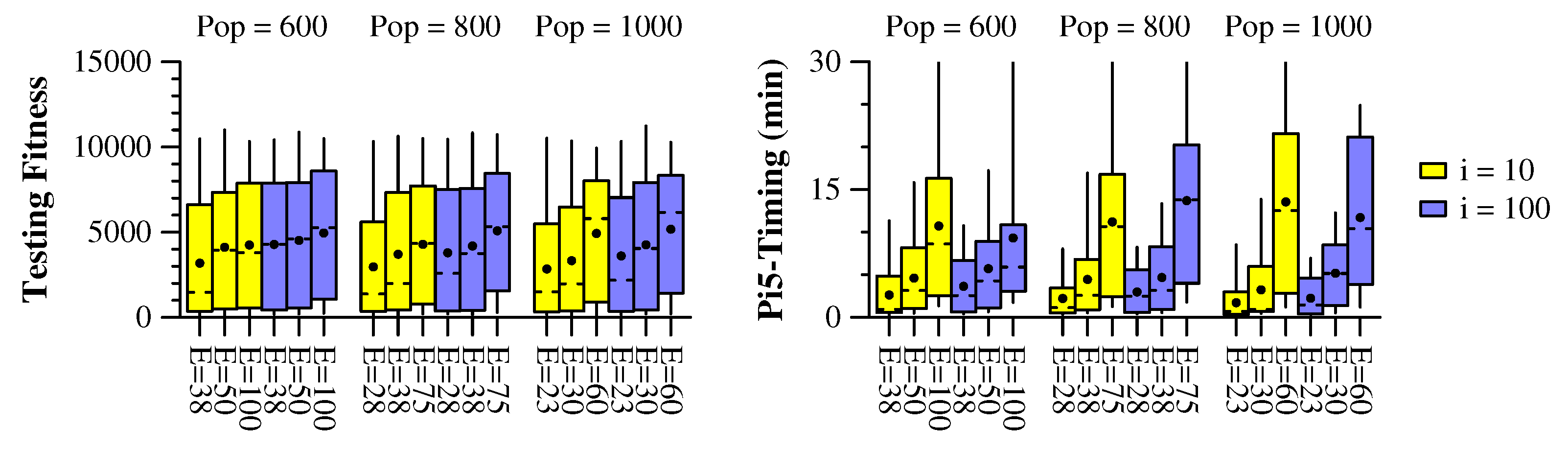

Appendix C. Parameters for Hard

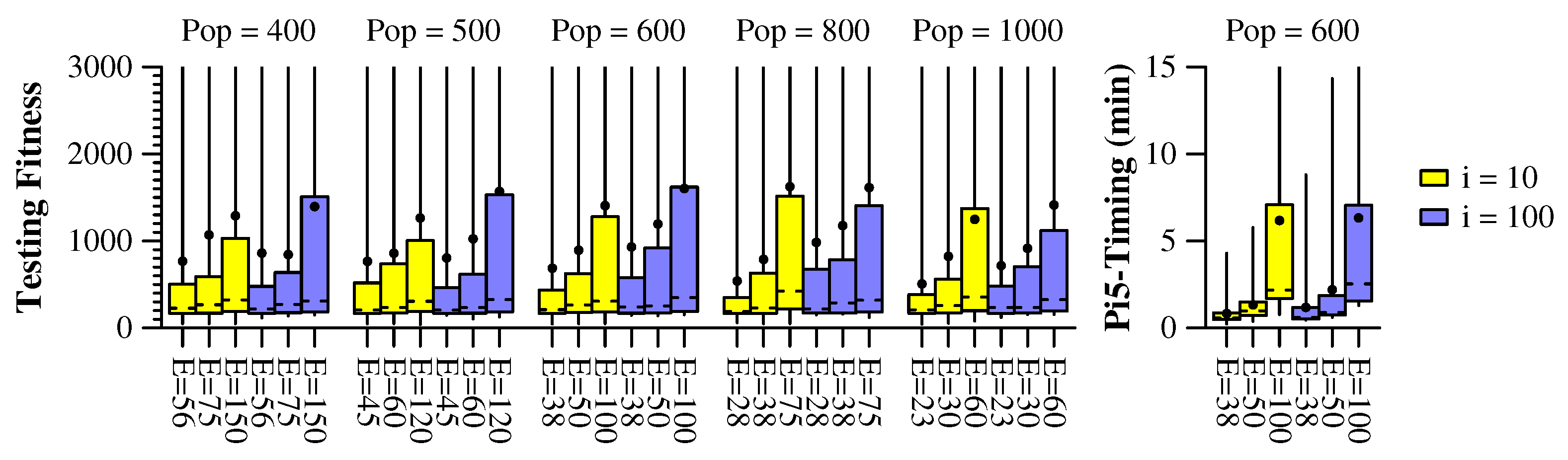

Appendix D. Parameters for Hardest

Appendix E. Summary

References

- Roy, K.; Jaiswal, A.; Panda, P. Towards spike-based machine intelligence with neuromorphic computing. Nature 2019, 575, 607–617.

- Stroobants, S.; Dupeyroux, J.; De Croon, G. Design and implementation of a parsimonious neuromorphic PID for onboard altitude control for MAVs using neuromorphic processors. International Conference on Neuromorphic Computing Systems (ICONS). ACM, 2022.

- Plank, J.S.; Rizzo, C.; Shahat, K.; Bruer, G.; Dixon, T.; Goin, M.; Zhao, G.; Anantharaj, J.; Schuman, C.D.; Dean, M.E.; Rose, G.S.; Cady, N.C.; Van Nostrand, J. The TENNLab Suite of LIDAR-Based Control Applications for Recurrent, Spiking, Neuromorphic Systems. 44th Annual GOMACTech Conference, 2019.

- Rizzo, C.P.; Schuman, C.D.; Plank, J.S. Neuromorphic Downsampling of Event-Based Camera Output. NICE: Neuro-Inspired Computational Elements Workshop. ACM, 2023.

- Barto, A.G.; Sutton, R.S.; Anderson, C.W. Neuronlike adaptive elements that can solve difficult learning control problems. IEEE Transactions on Systems, Man, and Cybernetics 1983, SMC-13, 834–846.

- Anderson, C.W. Learning to control an inverted pendulum using neural networks. Control Systems Magazine 1989, 9, 31–37.

- Florian, R.V. Correct equations for the dynamics of the cart-pole system. Center for Cognitive and Neural Studies (Coneural), Romania, 2007.

- Brockman, G.; Cheung, V.; Pettersson, L.; Schneider, J.; Schulman, J.; Tang, J.; Zaremba, W. OpenAI Gym. arXiv:1606.01540, 2016.

- Kumar, S. Balancing a CartPole System with Reinforcement Learning - A Tutorial. CoRR 2020, abs/2006.04938.

- Galarnyk, M.; Mika, S. An Introduction to Reinforcement Learning with OpenAI Gym, RLlib, and Google Colab. https://www.anyscale.com/blog/an-introduction-to-reinforcement-learning-with-openai-gymrllib-and-google, 2021.

- Ding, Y.; Zhang, Y.; Zhang, X.; Chen, P.; Zhang, Z.; Yang, Y.; Cheng, L.; Mu, C.; Wang, M.; Xiang, D.; Wu, G.; Zhou, K.; Yuan, Z.; Liu, Q. Engineering Spiking Neurons Using Threshold Switching Devices for High-Efficient Neuromorphic Computing. Frontiers in Neuroscience 2022, 15.

- Plank, J.S.; Schuman, C.D.; Bruer, G.; Dean, M.E.; Rose, G.S. The TENNLab Exploratory Neuromorphic Computing Framework. IEEE Letters of the Computer Society 2018, 1, 17–20.

- Schuman, C.D.; Plank, J.S.; Parsa, M.; Kulkarni, S.R.; Skuda, N.; Mitchell, J.P. A Software Framework for Comparing Training Approaches for Spiking Neuromorphic Systems. IJCNN: The International Joint Conference on Neural Networks, 2021, pp. 1–10.

- Parsa, M.; Mitchell, J.P.; Schuman, C.D.; Patton, R.M.; Potok, T.E.; Roy, K. Bayesian-Based Hyperparameter Optimization for Spiking Neuromorphic Systems. IEEE International Conference on Big Data, 2019, pp. 4472–4478.

- Schuman, C.D.; Disney, A.; Singh, S.P.; Bruer, G.; Mitchell, J.P.; Klibisz, A.; Plank, J.S. Parallel Evolutionary Optimization for Neuromorphic Network Training. Proceedings of the Workshop on Machine Learning in High Performance Computing Environments. IEEE Press, 2016, pp. 36–46.

- Schuman, C.D.; Plank, J.S.; Bruer, G.; Anantharaj, J. Non-Traditional Input Encoding Schemes for Spiking Neuromorphic Systems. IJCNN: The International Joint Conference on Neural Networks, 2019, pp. 1–10.

- Plank, J.S.; Dent, K.E.M.; Gullett, B.; Rizzo, C.P.; Schuman, C.D. The RISP Neuroprocessor - Open Source Support for Embedded Neuromorphic Computing. IEEE International Conference on Rebooting Computing (ICRC), 2024.

- Schuman, C.D.; Potok, T.E.; Patton, R.M.; Birdwell, J.D.; Dean, M.E.; Rose, G.S.; Plank, J.S. A Survey of Neuromorphic Computing and Neural Networks in Hardware. arXiv:1705.06963, 2017.

- Cabessa, J.; Siegelmann, H.T. The Super-Turing Computational Power of Plastic Recurrent Neural Networks. International Journal of Neural Systems 2014, 24.

- Akopyan, F.; Sawada, J.; Cassidy, A.; Alvarez-Icaza, R.; Arthur, J.; Merolla, P.; Imam, N.; Nakamura, Y.; Datta, P.; Nam, G.J.; Taba, B.; Beakes, M.; Brezzo, B.; Kuang, J.B.; Manohar, R.; Risk, W.P.; Jackson, B.; Modha, D.S. TrueNorth: Design and Tool Flow of a 65 mW 1 Million Neuron Programmable Neurosynaptic Chip. IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems 2015, 34, 1537–1557.

- Lehmann, H.M.; Julian, J.H.; Grassmann, C.; Issakov, V. Leaky integrate-and-fire neuron with a refractory period mechanism for invariant spikes. 17th conference on Ph.D research in microelectronics and electronics (PRIME), 2022, pp. 365–368.

- Davies, M.; Srinivasa, N.; Lin, T.H.; Chinya, G.; Cao, Y.; Choday, S.H.; Dimou, G.; Joshi, P.; Imam, N.; Jain, S.; Liao, Y.; Lin, C.K.; Lines, A.; Liu, R.; Mathaikutty, D.; McCoy, S.; Paul, A.; Tse, J.; Venkataramanan, G.; Weng, Y.H.; Wild, A.; Yang, Y.; Wang, H. Loihi: A Neuromorphic Manycore Processor with On-Chip Learning. IEEE Micro 2018, 38, 82–99.

- Azghadi, M.R.; Moradi, S.; Fasnacht, D.B.; Ozdas, M.S.; Indiveri, G. Programmable spike-timing dependent plasticity learning circuits in neuromorphic VLSI architectures. ACM Journal on Emerging Technologies in Computing Systems 2015, 12.

- Masquelier, T.; Guyonneau, R.; Thorpe, S.J. Spike Timing Dependent Plasticity Finds the Start of Repeating Patterns in Continuous Spike Trains. PLoS ONE 2008, 3.

- Schuman, C.D.; Rizzo, C.; McDonald-Carmack, J.; Skuda, N.; Plank, J.S. Evaluating Encoding and Decoding Approaches for Spiking Neuromorphic Systems. International Conference on Neuromorphic Computing Systems (ICONS). ACM, 2022, pp. 1–10.

- Dupeyroux, J. A Toolbox for Neuromorphic Sensing in Robotics. arXiv:2103.02751v1, 2021.

- Tang, G.; Kumar, N.; Yoo, R.; Michmizos, K. Deep Reinforcement Learning with Population-Coded Spiking Neural Network for Continuous Control. Conference on Robot Learning (CoRL), 2020.

- Plank, J.S.; Schuman, C.D.; Rizzo, C.P. Framework-Open: Open-source part of the TENNLab Exploratory Neuromorphic Framework. https://github.com/TENNLab-UTK/framework-open, 2024.

- Plank, J.S.; Zheng, C.; Gullett, B.; Skuda, N.; Rizzo, C.; Schuman, C.D.; Rose, G.S. The Case for RISP: A Reduced Instruction Spiking Processor. arXiv:2206.14016, 2022.

- Dent, K.; Gullett, B. Neuromorphic FPGA. https://github.com/TENNLab-UTK/fpga, 2024.

- Schuman, C.D.; Mitchell, J.P.; Patton, R.M.; Potok, T.E.; Plank, J.S. Evolutionary Optimization for Neuromorphic Systems. NICE: Neuro-Inspired Computational Elements Workshop, 2020.

- Li, C.; Chen, R.; Moutafis, C.; Furber, S. Robustness to Noisy Synaptic Weights in Spiking Neural Networks. IJCNN: The International Joint Conference on Neural Networks, 2020.

- Fonseca Guerra, G.A.; Furber, S.B. Using stochastic spiking neural networks on SpiNNaker to solve constraint satisfaction problems. Frontiers in Neuroscience 2017, 11, 714.

- Dampfhoffer, M.; Lopez, J.M.; Mesquida, T.; Valentian, A.; Anghel, L. Improving the Robustness of Neural Networks to Noisy Multi-Level Non-Volatile Memory-based Synapses. IJCNN: The International Joint Conference on Neural Networks, 2023.

- Schaefer, A.M.; Udluft, S.; Zimmermann, H.G. A Recurrent Control Neural Network for Data Efficient Reinforcement Learning. IEEE International Symposium on Approximate Dynamic Programming and Reinforcement Learning, 2007.

- Nagendra, S.; Podila, N.; Ugarakhod, R.; George, K. Comparison of Reinforcement Learning algorithms applied to the Cart Pole problem. International Conference on Advances in Computing, Communications and Informatics (ICACCI), 2017, pp. 26–32.

- Chang, O.; Kwiatkowski, R.; Chen, S.; Lipson, H. Agent Embeddings: A Latent Representation for Pole-Balancing Networks. Proceedings of the 18th International Conference on Autonomous Agents and MultiAgent Systems. ACM, 2019, pp. 656–664.

- Buvanesh, P.V. DDPG Agent to Swing Up and Balance Cart-Pole System. International Journal of Advanced Research in Science, Communication and Technology (IJARSCT) 2021, 4.

- Variengien, A.; Pontes-Filho, S.; Glover, T.E.; Nichele, S. Towards Self-organized Control: Using Neural Cellular Automata to Robustly Control a Cart-pole Agent. Innovations in Machine Intelligence (IMI) 2021, 1, 1–14.

- Rafe, A.W.; Garcia, J.A.; Raffe, W.L. Exploration Of Encoding And Decoding Methods For Spiking Neural Networks On The Cart Pole And Lunar Lander Problems Using Evolutionary Training. IEEE Congress on Evolutionary Computation (CEC), 2021.

- Shin, S.S.; Kim, Y.H. A surrogate model-based genetic algorithm for the optimal policy in cart-pole balancing environments. Genetic and Evolutionary Computation Conference, 2022, pp. 503–505.

- Tran, D.D.; Le, T.T.; Duong, M.T.; Phan, M.Q.; Nguyen, M.S. FPGA Design for Deep Q-Network: a case study in cartpole environment. International Conference on Multimedia Analysis and Pattern Recognition (MAPR). IEEE, 2022.

- Han, B.C.; Kim, H.C.; Kang, M.J. Comparison of value-based Reinforcement Learning Algorithms in Cart-Pole Environment. International Journal of Internet, Broadcasting and Communication 2023, 15, 166–175.

- Xu, J. How to Beat the CartPole Game in 5 Lines – A Simple Solution without Artificial Intelligence. TowardsDataScience, https://towardsdatascience.com/how-to-beat-the-cartpole-game-in-5-lines-5ab4e738c93f, 2021.

| Label | Discrete Values | Threshold Range | Potential Range | Weight Range | Delay Range |
|---|---|---|---|---|---|
| RISP-F | No | | | | |
| RISP-F+ | No | | | | |
| RISP-1 | Yes | | | | |
| RISP-1+ | Yes | | | | |
| RISP-7 | Yes | | | | |
| RISP-15+ | Yes | | | | |
| RISP-127 | Yes | | | | |
| RISP-255+ | Yes | | | | |

| Benchmark | Easy | Medium | Hard | Hardest |
|---|---|---|---|---|
| Target Fitness | 14,250 | 12,000 | 9,000 | 6,000 |
| Encoder | Spike-FF-2 | Spike-FF-2 | Spike-FF-2 | Argyle-4 |
| Population Size | 500 | 600 | 1000 | 600 |
| Epochs | 40 | 38 | 30 | 100 |
| Episodes | 10 | 10 | 10 | 10 |
| Initial Passes | 10 | 100 | 100 | 100 |
| % Exceeding Target | 19.4 | 23.6 | 9.2 | 8.9 |
| 3rd-Quartile Pi5 Timing (m) | 4.9 | 11.6 | 8.5 | 7.1 |

| Easy | Medium | Hard | Hardest |
|---|---|---|---|
| 3 | 7 | 4 | 11 |

| Year | Citation | Data Structure | ML Technique | Estimated Parameters | Measure of Success (sec) |
|---|---|---|---|---|---|
| 2007 | Schaefer et al [35] | RNN/RCNN | BPTT | 240 | 2,000 |
| 2017 | Nagendra et al [36] | Various | Various | 44 | 2,000 |
| 2017 | Chang et al [37] | ANN | Generational | 212 | 4 |
| 2020 | Kumar [9] | DNN | Q-learning | ∼800 | 3.9 |
| 2021 | Buvanesh [38] | DNN | Actor-critic/DeepQ | 130,000 | 4,000 |
| 2021 | Variengien et al [39] | Neural Cellular Automata | DeepQ | 1,854 | 200 |
| 2021 | Rafe et al [40] | Izhikevich Neuron | Evolutionary | 8-256 | 3.9 |
| 2022 | Shin & Kim [41] | DNN | Genetic | 67 | 10 |
| 2022 | Ding et al [11] | Leaky Integrate & Fire SNN | Gradient Descent | 4096 | 9 |
| 2022 | Tran et al [42] | ANN/DNN on FPGA | DeepQ | 64 | 4 |
| 2023 | Han et al [43] | FNN/CNN | Q-Learning, Sarsa | ? | 4 |
| 2024 | This paper | Integrate & Fire SNN | Genetic | 25 | 300 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).