Submitted: 20 November 2024

Posted: 21 November 2024

Abstract

Keywords:

1. Introduction

2. Related Work

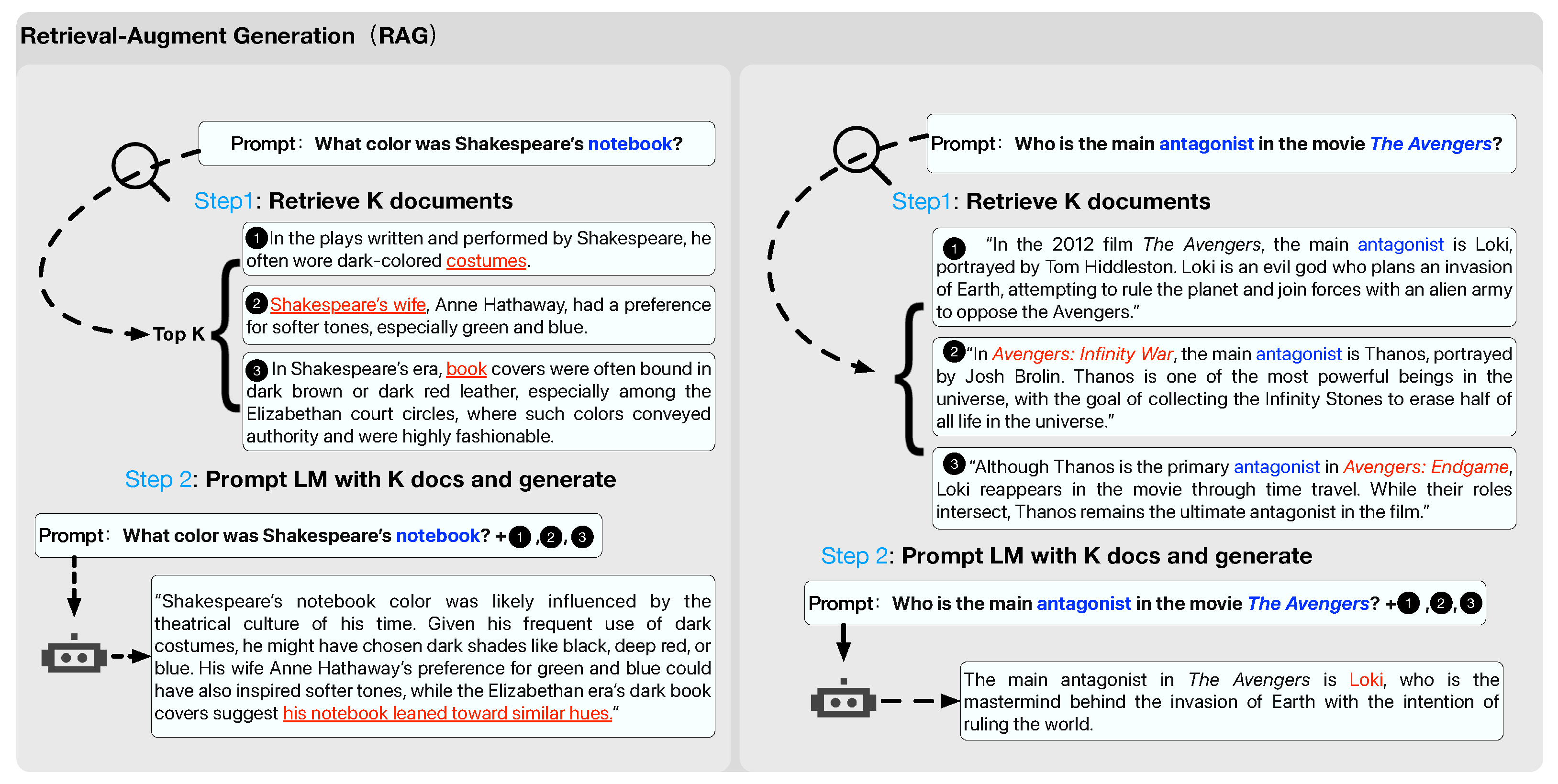

2.1. Retrieval Augmented Generation Based on Knowledge Structure

2.2. Retrieval Augmented Generation Based on Question Decomposition

2.3. Thinking and Planning in Retrieval Augmented Generation

2.4. Reasoning Structure of LLMs

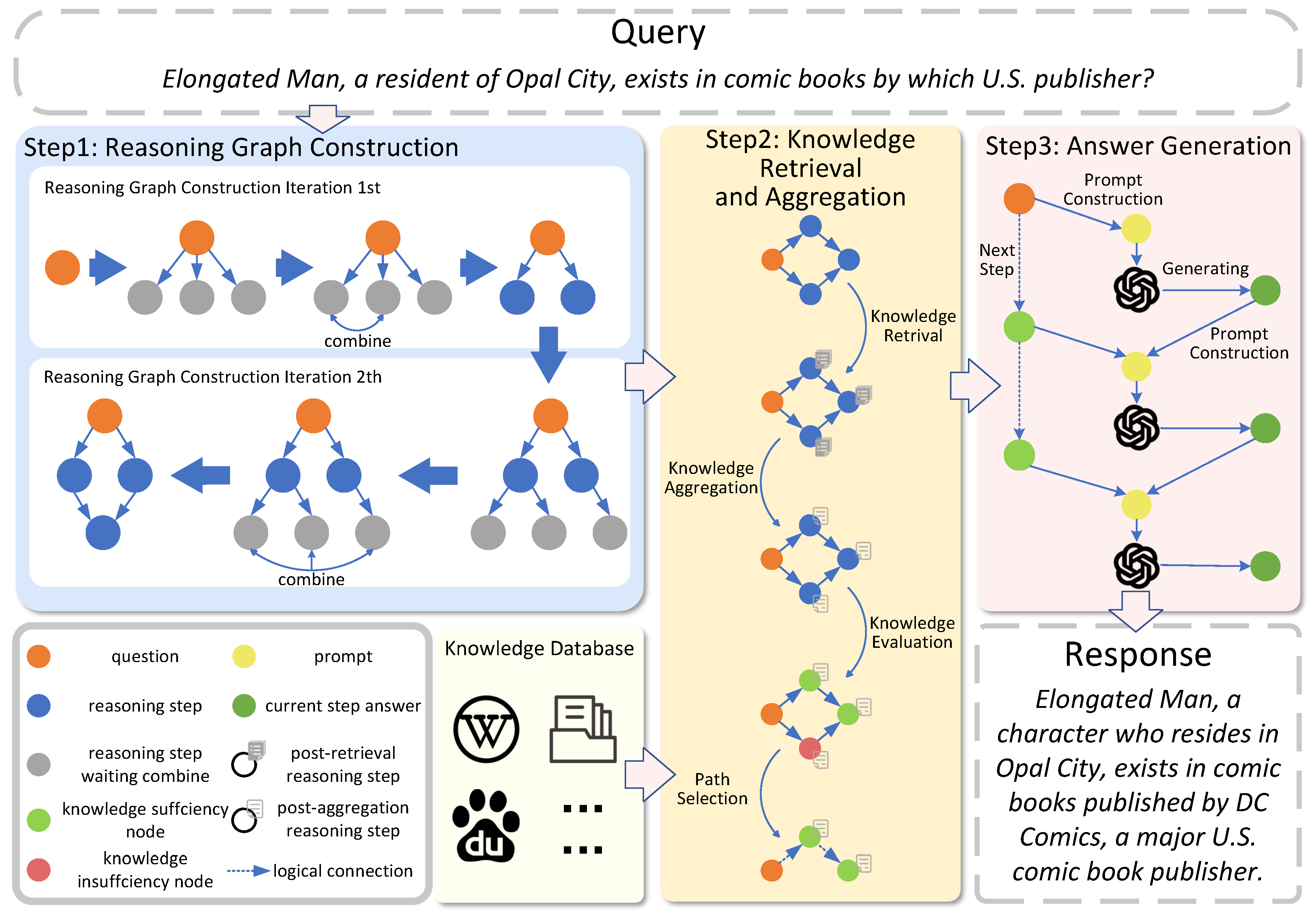

3. Method

3.1. Preliminary

3.2. Reasoning Graph Construction

3.3. Knowledge Retrieval and Aggregation

3.4. Answer Generation

4. Experiments

4.1. Dataset

4.2. Baselines and Metrics

4.3. Implementation Details

4.4. Main Results

5. Discussion

5.1. Ablation Study

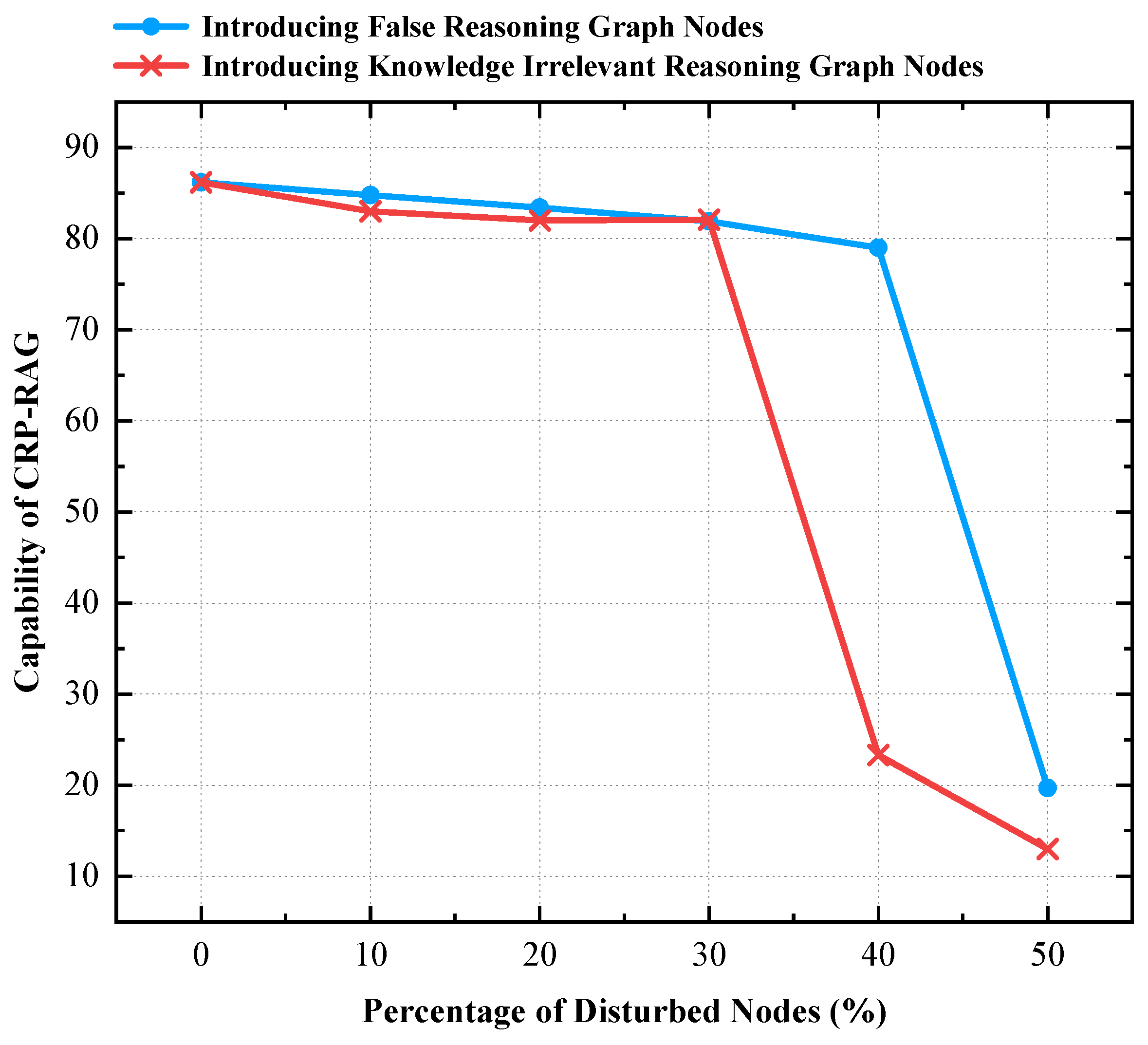

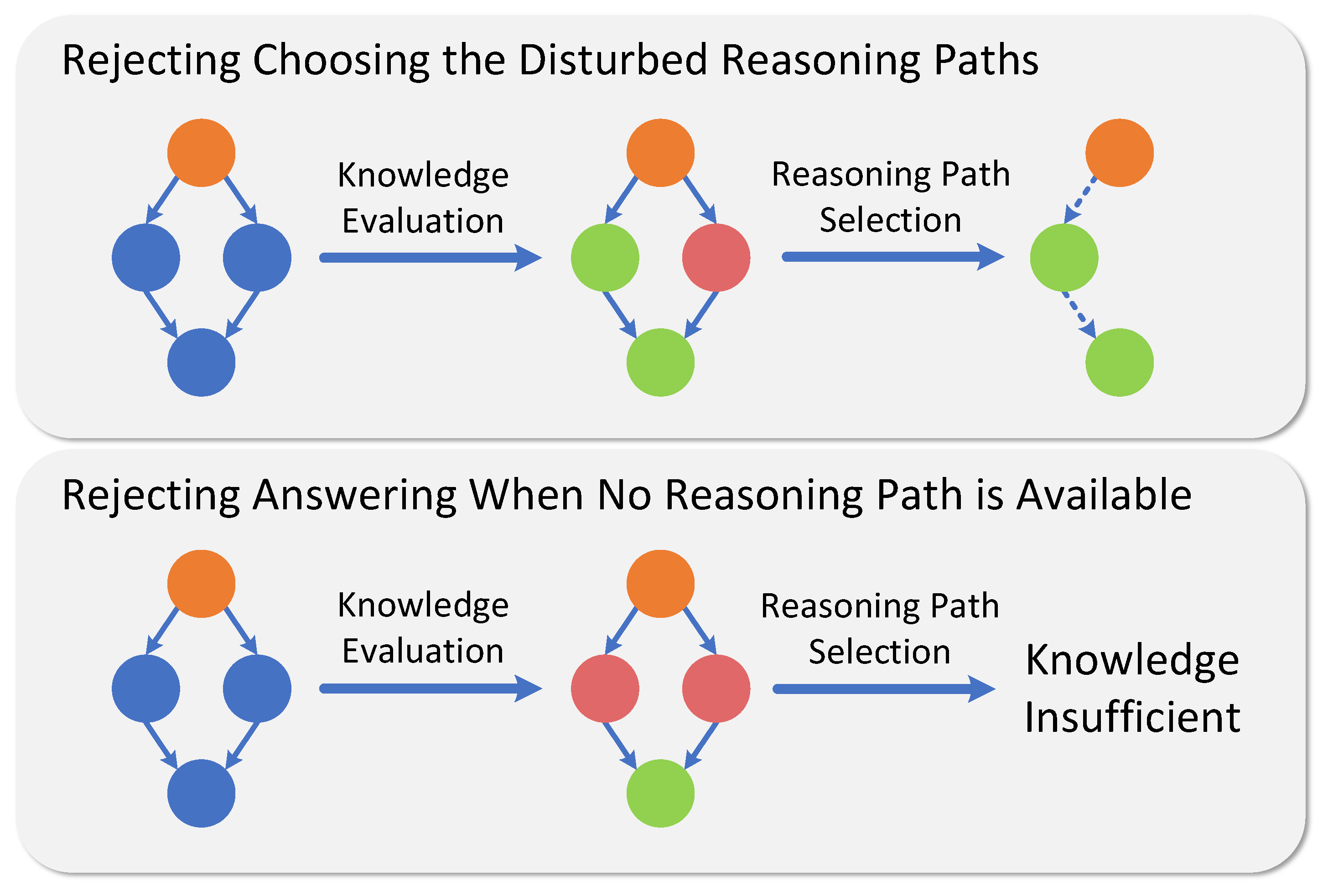

5.2. Robustness Analysis of CRP-RAG

5.3. Perplexity and Retrieval Faithfulness Analysis of CRP-RAG

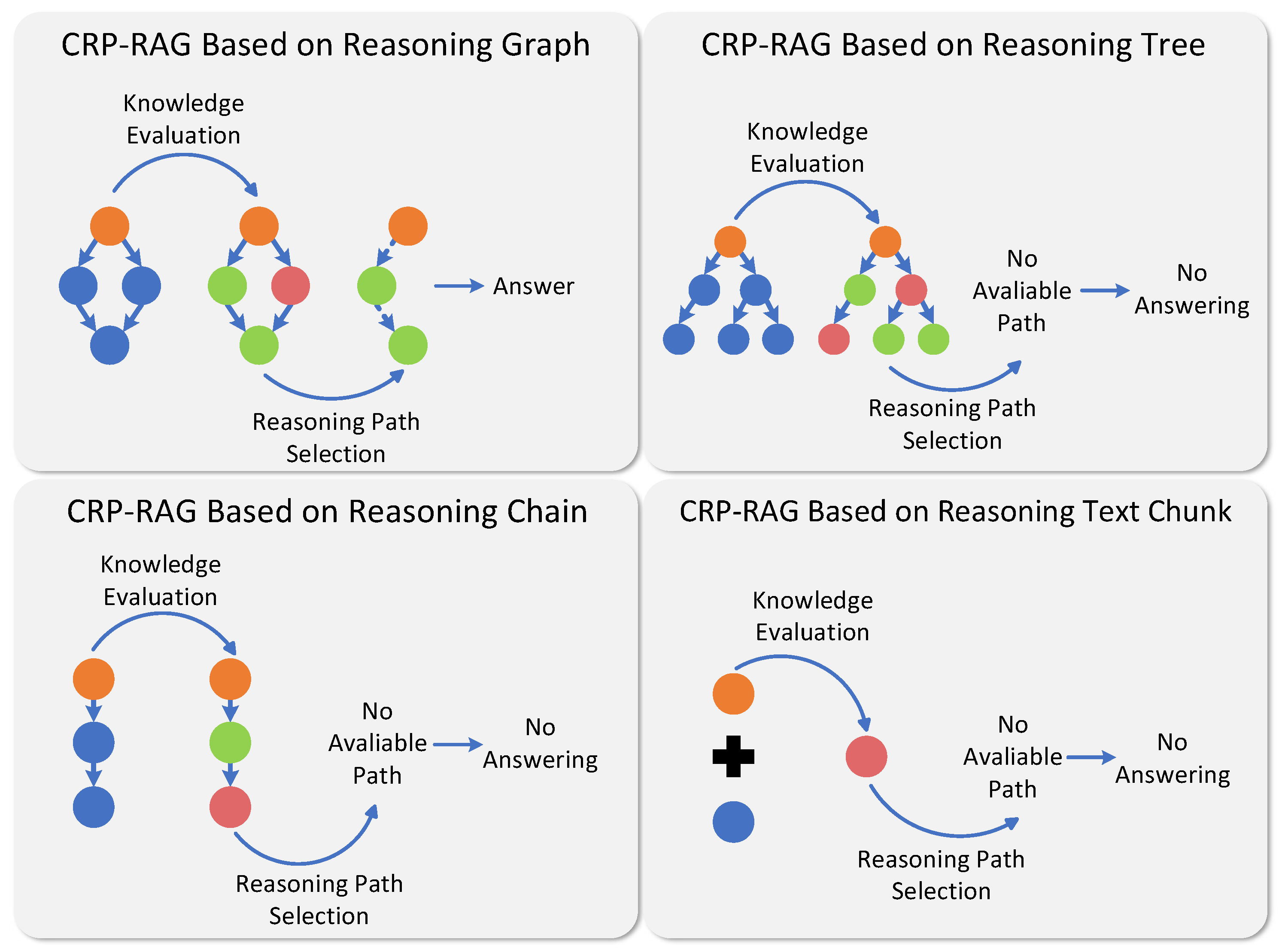

5.4. Analysis of the Effectiveness of Graph Structure for Reasoning

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A

Appendix A.1. New Node Generation

Appendix A.2. Fusion Based on Similar Nodes

Appendix A.3. Knowledge Integration

Appendix A.4. Knowledge Sufficiency Evaluation

Appendix A.5. Answer Abstracting

Task groups: Open-Domain Question Answering (NQ, TriviaQA (TQA), WebQuestions (WQA)); Multi-Hop Reasoning Question Answering (HotPotQA, 2WikiMultiHopQA); Fact Verification (FEVER).

| Method | NQ EM | NQ F1 | NQ Acc-LM | TQA EM | TQA F1 | TQA Acc-LM | WQA EM | WQA F1 | WQA Acc-LM | HotPotQA EM | HotPotQA F1 | HotPotQA Acc-LM | 2WikiMultiHopQA EM | 2WikiMultiHopQA F1 | 2WikiMultiHopQA Acc-LM | FEVER Acc-LM |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| **Vanilla LLMs** | | | | | | | | | | | | | | | | |
| GLM-4-Plus | 33.0 | 44.2 | 55.4 | 68.2 | 78.9 | 83.2 | 14.4 | 24.1 | 31.2 | 20.4 | 38.9 | 51.1 | 27.3 | 36.7 | 50.4 | 67.1 |
| GLM-4-Plus w/ ToT | 39.0 | 50.9 | 59.3 | 72.1 | 85.8 | 85.0 | 25.3 | 37.3 | 48.3 | 34.0 | 47.8 | 57.2 | 28.1 | 40.5 | 53.6 | 68.4 |
| **RALMs Framework** | | | | | | | | | | | | | | | | |
| RALMs | 44.5 | 54.0 | 58.2 | 69.9 | 77.0 | 80.6 | 45.2 | 61.0 | 73.6 | 37.2 | 53.4 | 62.0 | 31.7 | 49.0 | 56.7 | 72.0 |
| **Query Decomposition RALMs Framework** | | | | | | | | | | | | | | | | |
| IRCoT | 50.0 | 58.2 | 68.8 | 70.2 | 81.9 | 80.8 | 51.4 | 65.4 | 76.8 | 48.2 | 60.7 | 71.3 | 46.8 | 58.0 | 68.4 | 72.9 |
| ITER-RETGEN | 56.4 | 66.8 | 71.4 | 72.6 | 86.0 | 84.4 | 60.2 | 75.8 | 81.2 | 45.8 | 61.1 | 73.4 | 36.0 | 47.4 | 58.5 | 71.5 |
| **Knowledge Structure RALMs Framework** | | | | | | | | | | | | | | | | |
| RAPTOR | 60.1 | 68.5 | 77.8 | 73.6 | 80.9 | 83.9 | 57.8 | 65.2 | 79.1 | 60.3 | 73.1 | 81.5 | 39.6 | 55.3 | 66.8 | 66.6 |
| GraphRAG | 42.6 | 51.6 | 62.1 | 72.1 | 83.0 | 81.6 | 51.5 | 60.4 | 75.5 | 56.0 | 68.9 | 76.3 | 38.7 | 51.8 | 60.9 | 71.6 |
| **Self-Planning RALMs Framework** | | | | | | | | | | | | | | | | |
| Think-then-Act | 56.0 | 65.7 | 69.9 | 74.7 | 80.7 | 84.8 | 55.9 | 69.5 | 79.0 | 56.9 | 65.8 | 79.8 | 52.6 | 68.7 | 76.6 | 76.9 |
| Self-RAG | 59.2 | 66.3 | 70.0 | 76.3 | 80.1 | 79.3 | 58.2 | 69.0 | 77.4 | 67.4 | 80.1 | 86.0 | 57.6 | 69.4 | 79.1 | 80.8 |
| **Ours** | | | | | | | | | | | | | | | | |
| CRP-RAG | 63.2 | 71.1 | 82.3 | 79.7 | 86.4 | 87.0 | 62.5 | 75.6 | 85.2 | 81.0 | 87.6 | 87.4 | 69.3 | 77.9 | 81.0 | 85.0 |
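The EM and F1 columns above are reported without a definition in this excerpt. As a minimal sketch, assuming the widely used SQuAD-style convention (lowercasing, stripping punctuation and English articles, then comparing tokens), per-example scores could be computed as follows. The function names `normalize`, `exact_match`, and `token_f1` are illustrative and are not taken from the paper; Acc-LM is not covered because its judging procedure is not described here.

```python
import re
import string
from collections import Counter


def normalize(text: str) -> str:
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, gold: str) -> float:
    """Return 1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize(prediction) == normalize(gold))


def token_f1(prediction: str, gold: str) -> float:
    """Harmonic mean of token-level precision and recall after normalization."""
    pred_tokens = normalize(prediction).split()
    gold_tokens = normalize(gold).split()
    if not pred_tokens or not gold_tokens:
        # Assumed convention: both empty counts as a match, one empty as a miss.
        return float(pred_tokens == gold_tokens)
    # Multiset intersection counts each shared token at most min(count) times.
    overlap = sum((Counter(pred_tokens) & Counter(gold_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(gold_tokens)
    return 2 * precision * recall / (precision + recall)
```

Under this assumption, a dataset-level score would be the mean of the per-example values multiplied by 100, which matches the percentage scale used in the table.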

| Method | HotPotQA | FEVER |
|---|---|---|
| CRP-RAG | 87.4 | 85.0 |
| CRP-RAG w/o Knowledge Integration | 84.9 | 83.4 |
| CRP-RAG w/o Knowledge Evaluation | 62.9 | 64.1 |
| CRP-RAG w/o Iterative Reasoning | 74.5 | 72.6 |

| Method | HotPotQA | FEVER |
|---|---|---|
| **Vanilla LLMs** | | |
| GLM-4-Plus | 786.2 | 771.9 |
| GLM-4-Plus w/ ToT | 247.7 | 883.4 |
| **RALMs Framework** | | |
| RALMs | 201.1 | 558.8 |
| **Query Decomposition RALMs Framework** | | |
| IRCoT | 208.2 | 608.6 |
| ITER-RETGEN | 593.9 | 1094.8 |
| **Knowledge Structure RALMs Framework** | | |
| RAPTOR | 124.0 | 477.5 |
| GraphRAG | 236.0 | 794.6 |
| **Self-Planning RALMs Framework** | | |
| Think-then-Act | 156.8 | 330.6 |
| Self-RAG | 112.0 | 116.5 |
| **Ours** | | |
| CRP-RAG | 21.4 | 8.1 |

| Method | HotPotQA | FEVER |
|---|---|---|
| **RALMs Framework** | | |
| RALMs | 66.7 | 69.5 |
| **Query Decomposition RALMs Framework** | | |
| IRCoT | 72.1 | 75.5 |
| ITER-RETGEN | 74.2 | 75.6 |
| **Knowledge Structure RALMs Framework** | | |
| RAPTOR | 78.8 | 71.9 |
| GraphRAG | 79.0 | 73.4 |
| **Self-Planning RALMs Framework** | | |
| Think-then-Act | 81.9 | 80.7 |
| Self-RAG | 85.2 | 83.1 |
| **Ours** | | |
| CRP-RAG | 92.5 | 91.8 |

| Method | HotPotQA | FEVER |
|---|---|---|
| CRP-RAG | 87.4 | 85.0 |
| CRP-RAG (Tree) | 75.8 | 78.0 |
| CRP-RAG (Chain) | 69.0 | 69.8 |
| CRP-RAG (Text chunk) | 65.7 | 67.6 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).