Submitted: 20 November 2024

Posted: 21 November 2024

Abstract

Keywords:

1. Introduction

- We propose TEM, a deep multi-task architecture developed for table detection, column detection, and table structure recognition. It achieves state-of-the-art performance on the Marmot benchmark dataset [29].

- We demonstrate the efficacy of transfer learning: fine-tuning a pre-trained TEM model on a novel dataset improves the model's performance.

2. Literature Review

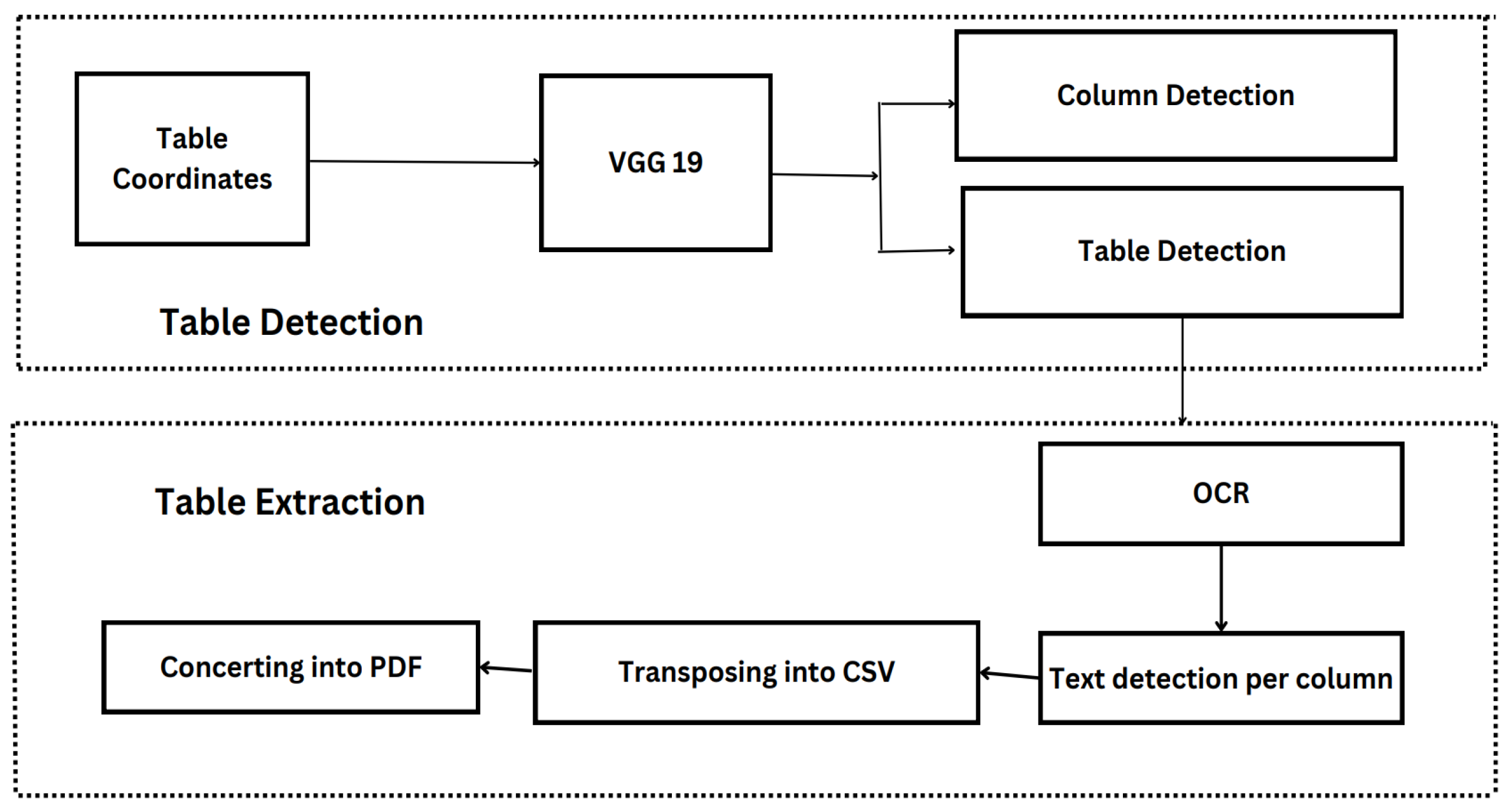

3. Methodology

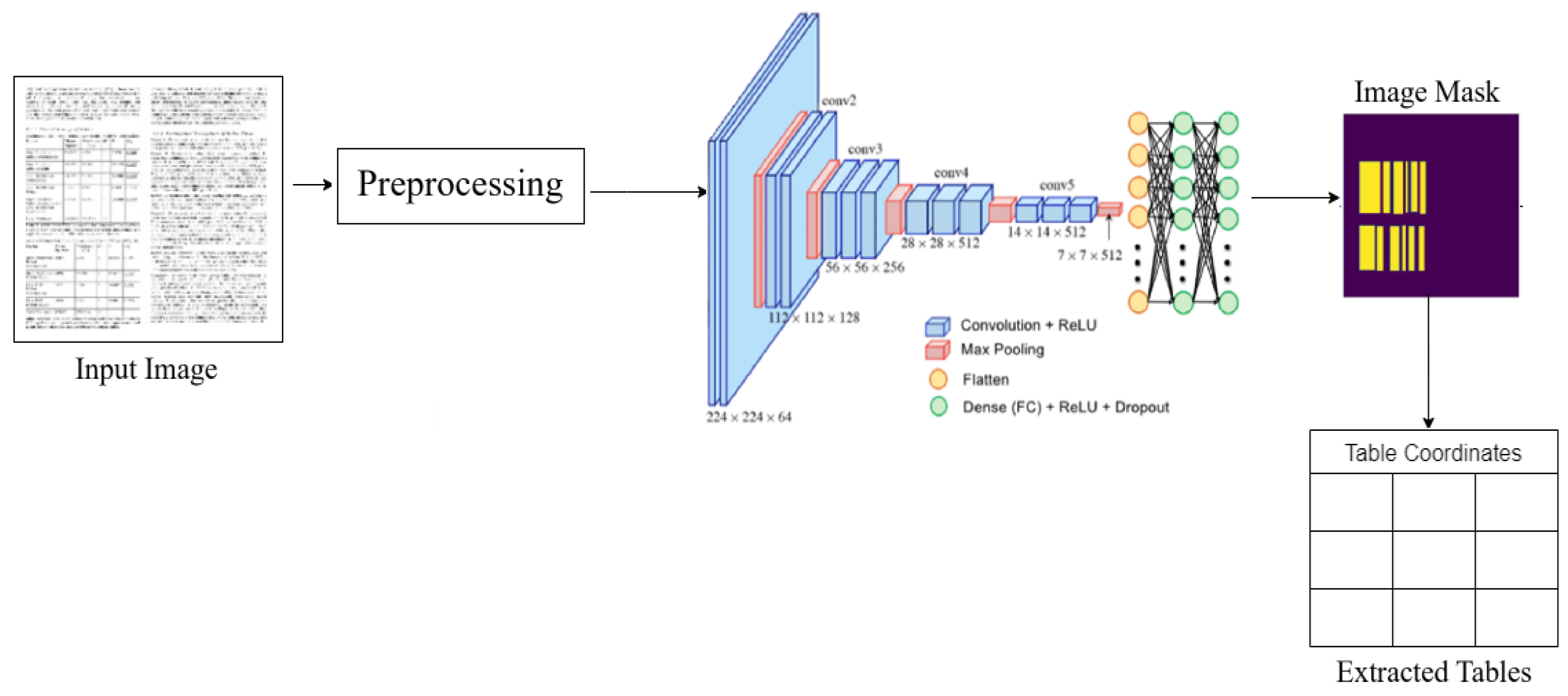

3.1. TEM: VGG19 for Table and Column Detection

3.2. Table Data Extraction

- In tables with visible ruling lines, these lines usually separate the rows within each column. To find candidate row boundaries, we examine each gap between two vertically aligned words in a column and apply a Radon transform [31] to test whether the gap contains a horizontal line. A detected horizontal line marks a boundary between two rows.

- When a row extends across several lines, a new row starts at the lines that contain the highest number of non-blank entries. In a multi-column table, some columns may hold items that fit on a single line, such as an amount, while others hold data that span several lines, such as a description. Consequently, each new row begins only after all columns have been populated with entries.

- When all columns are fully populated and there are no ruling lines, each line (level) is treated as an individual row.
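The row-segmentation rules above can be illustrated with a minimal sketch. It is not the implementation used in this work: the function names, the `min_coverage` threshold, and the representation of a text line as a list of cell strings are all assumptions made for illustration. The line test uses only the single Radon projection taken parallel to the x-axis (the sum of each pixel row), a simplification of the full transform [31], and it assumes the gap has already been binarised with ink pixels set to 1.

```python
def has_horizontal_line(gap, min_coverage=0.8):
    """Return True if a binarised gap strip (ink = 1) contains a horizontal rule.

    Summing each pixel row gives the Radon projection for the direction
    parallel to the x-axis. A ruled line appears as a peak that covers
    most of the strip width. `min_coverage` is an assumed threshold.
    """
    if not gap or not gap[0]:
        return False
    width = len(gap[0])
    projection = [sum(row) for row in gap]  # one line integral per pixel row
    return max(projection) >= min_coverage * width


def group_lines_into_rows(lines):
    """Group text lines into table rows.

    Each line is a list of cell strings of equal length ("" means blank).
    A new row starts at every line whose number of non-blank cells equals
    the maximum found in the table; any other line is treated as a
    continuation of the row above it.
    """
    counts = [sum(1 for cell in line if cell.strip()) for line in lines]
    if not counts:
        return []
    full = max(counts)
    rows = []
    for line, count in zip(lines, counts):
        if count == full or not rows:
            rows.append([cell.strip() for cell in line])
        else:
            # Continuation line: append its text to the matching columns.
            for i, cell in enumerate(line):
                if cell.strip():
                    rows[-1][i] = (rows[-1][i] + " " + cell.strip()).strip()
    return rows


if __name__ == "__main__":
    ruled_gap = [[0] * 10, [1] * 10, [0] * 10]
    print(has_horizontal_line(ruled_gap))
    table_lines = [["Widget", "10.00"], ["blue, large", ""], ["Gadget", "5.50"]]
    print(group_lines_into_rows(table_lines))
```

When every line is fully populated, each line has the maximum count of non-blank cells, so the grouping reduces to the third rule: one row per line.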

3.3. Dataset Preparation

3.4. Image Pre-Processing

3.5. Providing Semantics Information

4. Evaluation

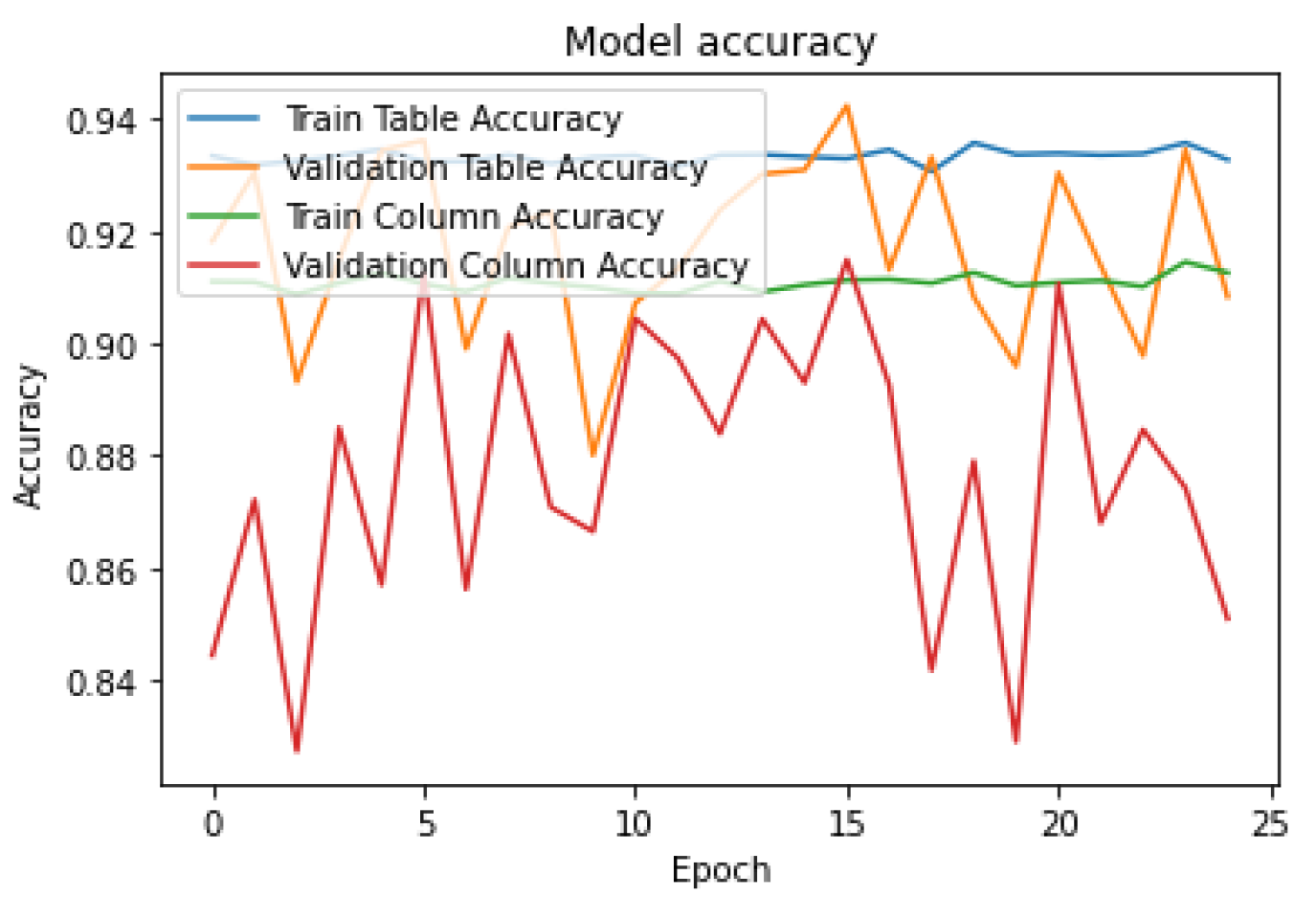

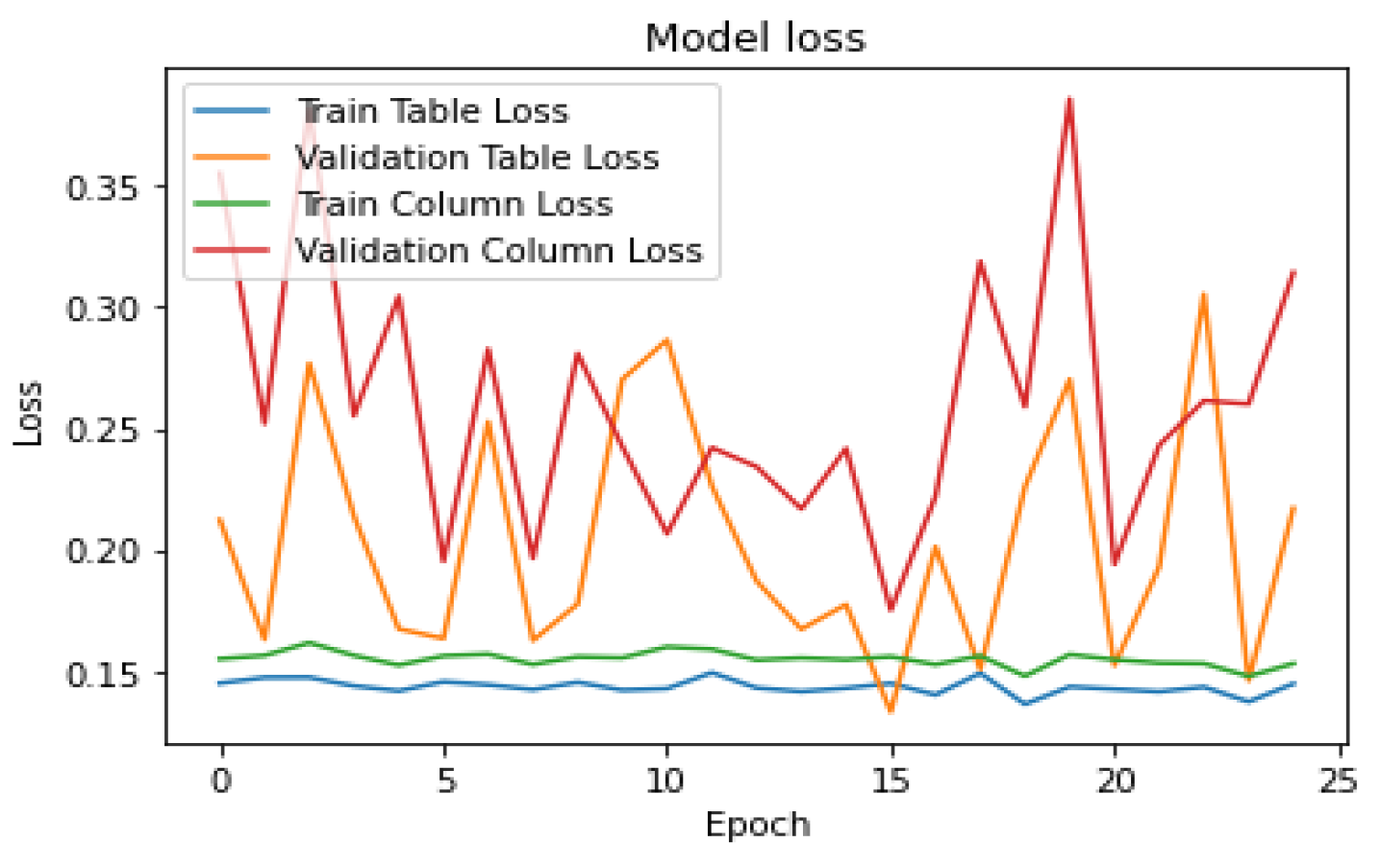

4.1. Training of Table Data

5. Experiment and Results

5.1. Experiments

6. Conclusion

References

- S. Schreiber, S. Agne, I. Wolf, A. Dengel, and S. Ahmed, “Deepdesrt: Deep learning for detection and structure recognition of tables in document images,” in Fourteenth International Conference on Document Analysis and Recognition, vol. 1, pp. 1162–1167, 2017. [CrossRef]

- T. Kieninger and A. Dengel, “A paper-to-html table converting system,” in Proceedings of document analysis systems, pp. 356–365, 1998.

- T. Kieninger and A. Dengel, “Applying the T-RECS table recognition system to the business letter domain,” in International Conference on Document Analysis and Recognition, p. 0518, 2001. [CrossRef]

- T. Kieninger and A. Dengel, “The T-Recs table recognition and analysis system,” in Document Analysis Systems: Theory and Practice, pp. 255–270, 1999. [CrossRef]

- P. Pyreddy and W. B. Croft, “Tintin: A system for retrieval in text tables,” in Proceedings of the second ACM international conference on Digital libraries. ACM, 1997, pp. 193–200.

- F. Cesarini, S. Marinai, L. Sarti, and G. Soda, “Trainable table location in document images,” in Pattern Recognition, 2002. Proceedings. 16th International Conference on, vol. 3. IEEE, 2002, pp. 236–240. [CrossRef]

- T. Kasar, P. Barlas, S. Adam, C. Chatelain, and T. Paquet, “Learning to detect tables in scanned document”.

- A. C. e Silva, “Learning rich hidden markov models in document analysis: Table location,” in Document Analysis and Recognition, 2009. ICDAR’09. 10th International Conference on. IEEE, 2009, pp. 843–847. [CrossRef]

- J. Fang, P. Mitra, Z. Tang, and C. L. Giles, “Table header detection and classification.” in AAAI, 2012, pp. 599–605. [CrossRef]

- M. Raskovic, N. Bozidarevic, and M. Sesum, “Borderless table detection engine,” Jun. 5 2018, US Patent 9,990,347.

- Y. Wang, I. T. Phillips, and R. M. Haralick, “Table structure understanding and its performance evaluation,” Pattern Recognition, vol. 37, pp. 1479–1497, 2004. [CrossRef]

- F. Shafait, D. Keysers, and T. M. Breuel, “Performance evaluation and benchmarking of six-page segmentation algorithms,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 30, no. 6, pp. 941–954, 2008. [CrossRef]

- A. Shigarov, A. Mikhailov, and A. Altaev, “Configurable table structure recognition in untagged pdf documents,” in ACM Symposium on Document Engineering, 2016. [CrossRef]

- S. Schreiber, S. Agne, I. Wolf, A. Dengel, and S. Ahmed, “Deepdesrt: Deep learning for detection and structure recognition of tables in document images,” in Document Analysis and Recognition (ICDAR), 2017 14th IAPR International Conference on, vol. 1. IEEE, 2017, pp. 1162–1167. [CrossRef]

- I. Kavasidis, S. Palazzo, C. Spampinato, C. Pino, D. Giordano, D. Giuffrida, and P. Messina, “A saliency-based convolutional neural network for table and chart detection in digitized documents,” arXiv preprint arXiv:1804.06236, 2018. [CrossRef]

- D. N. Tran, T. A. Tran, A. Oh, S. H. Kim, and I. S. Na, “Table detection from document image using vertical arrangement of text blocks,” International Journal of Contents, vol. 11, no. 4, pp. 77–85, 2015. [CrossRef]

- T. Kieninger and A. Dengel, “The t-recs table recognition and analysis system,” in International Workshop on Document Analysis Systems. Springer, 1998, pp. 255–270. [CrossRef]

- Y. Wang, I. T. Phillips, and R. M. Haralick, “Table structure understanding and its performance evaluation,” Pattern recognition, vol. 37, no. 7, pp. 1479–1497, 2004. [CrossRef]

- A. Tengli, Y. Yang, and N. L. Ma, “Learning table extraction from examples,” in Proceedings of the 20th international conference on Computational Linguistics. Association for Computational Linguistics, 2004, p. 987.

- P. Singh, S. Varadarajan, A. N. Singh, and M. M. Srivastava, “Multidomain document layout understanding using few shot object detection,” arXiv preprint arXiv:1808.07330, 2018. [CrossRef]

- R. Zanibbi, D. Blostein, and R. Cordy, “A survey of table recognition: Models, observations, transformations, and inferences,” International Journal on Document Analysis and Recognition, vol. 7, no. 1, pp. 1–16, 2004. [CrossRef]

- J. Hu, R. S. Kashi, D. P. Lopresti, and G. Wilfong, “Table structure recognition and its evaluation,” in Document Recognition and Retrieval, pp. 44–55, 2001. [CrossRef]

- J. Long, E. Shelhamer, and T. Darrell, “Fully convolutional networks for semantic segmentation,” CoRR, vol. abs/1411.4038, 2014.

- R. Smith, “An overview of the tesseract ocr engine,” in Document Analysis and Recognition, 2007. ICDAR 2007. Ninth International Conference on, vol. 2. IEEE, 2007, pp. 629–633. [CrossRef]

- J. Long, E. Shelhamer, and T. Darrell, “Fully convolutional networks for semantic segmentation,” CoRR, vol. abs/1411.4038, 2014.

- “Parts that add up to a whole: a framework for the analysis of tables,” Ph.D. dissertation, University of Edinburgh, Edinburgh, Scotland, UK, 2010.

- M. C. Göbel, T. Hassan, E. Oro, and G. Orsi, “ICDAR 2013 Table Competition.” IEEE Computer Society, 2013, pp. 1449–1453. [CrossRef]

- B. Yildiz, K. Kaiser, and S. Miksch, “pdf2table: A Method to Extract Table Information from PDF Files,” in Proceedings of the 2nd Indian International Conference on Artificial Intelligence (IICAI) 2005, B. Prasad, Ed., 2005, pp. 1773–1785.

- https://www.icst.pku.edu.cn/cpdp/sjzy/.

- Khan, Saqib Ali, et al. “Table structure extraction with bi-directional gated recurrent unit networks.” 2019 International Conference on Document Analysis and Recognition (ICDAR). IEEE, 2019. [CrossRef]

- Toft, Peter. “The Radon transform: Theory and implementation.” Ph.D. dissertation, Technical University of Denmark, Copenhagen, 1996. [CrossRef]

- Smith, Ray. “An overview of the Tesseract OCR engine.” Ninth international conference on document analysis and recognition (ICDAR 2007). Vol. 2. IEEE, 2007. [CrossRef]

- Khan, Saqib Ali, et al. “Table structure extraction with bi-directional gated recurrent unit networks.” 2019 International Conference on Document Analysis and Recognition (ICDAR). IEEE, 2019. [CrossRef]

| Study | Method | Input | Accuracy |
|---|---|---|---|
| Table extraction using GRU | Khan et al. [33] | Images | 0.55 |
| ICDAR Table comparison | Hsu et al. [27] | | 0.5220 |
| Extract Table Information from PDF Files | Yildiz [28] | | 0.7313 |
| Table detection and extraction with labels | TEM (Proposed) | Images | 0.876 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).