Submitted: 06 November 2024

Posted: 07 November 2024


Abstract

Keywords:

1. Introduction

- The high degree of generalization eliminates the need to retrain the model with real data;

- If further training (fine-tuning) is still necessary, very few images of the real data are needed until the desired accuracy is reached;

- The specially designed encoder is trained directly on the task of locating a searched object, which differs from the standard approach of using a network trained on another task, most often classification or a more general one;

- The integrated correlation operator we use provides real information about the location of the searched object, rather than being a separate network layer [12,13] that has to be trained further and whose result has to be decoded by one or more neural networks. To our knowledge, this approach has not appeared in the literature so far;

- Hierarchical propagation of activations from the last to the first layer leads to precise localization and removes the need for an additional decoder that has to be trained. The result is a greatly simplified, lightweight architecture that still provides high accuracy. To our knowledge, this approach has not appeared in the literature so far;

- Two-step self-supervised training involving two types of data augmentation: color augmentations, and combined color and geometric augmentations;

- Self-TM is rotationally invariant within its training range. In this work, the Self-TM model family is trained on rotations over the entire interval from -90 to +90 degrees.

2. Related Work

2.1. Hand-Crafted Methods

2.2. Learnable Methods

2.3. Foundation Models

3. Theoretical Formulation of the Proposed Method

3.1. Model

3.2. Data

3.3. Training

1. An input image I is taken from an unannotated image database; a random crop is applied to it and the result is resized to 189 × 189 pixels, yielding a “query” image (see Figure 1);

2. Another random crop is taken from the “query” image, and random image augmentations (color and/or geometric) are then applied to obtain a “template” image (see Figure 1). This step can produce one or many different templates; Self-TM training uses only two templates;

3. The ground-truth position of the resulting “template” on the “query” image is stored (the coordinates of the center of the red rectangle on the “query”, see Figure 1);

4. The “query” and the two templates are fed as input to the Self-TM network, and the resulting feature maps from all layers are stored. The current architecture has 3 layers, denoted first, mid and last;

5. A correlation operator is applied to each pair of corresponding feature maps, sequentially, starting from the deepest layer. The position of the maximum value of its output is then taken as the predicted position (see “hierarchical activations propagation” in Figure 1);

6. A mean squared error is calculated on each pair of corresponding feature maps over the region of summation, i.e. the intersection of the definition domains of the “template” feature map and the “query” feature map. Multiplication is scalar, i.e. elementwise;

7. Parameter optimization (gradient descent): the parameters are updated by minimizing the mean squared errors together with the offset of the predicted positions relative to the ground-truth positions of the template, which is possible because both positions are expressed in pixels.
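The correlation and peak-finding steps above can be illustrated with a minimal sketch. It assumes plain (unnormalized) cross-correlation on single-channel maps stored as nested lists; the names `correlate` and `argmax_center` are hypothetical, and the operator actually used in Self-TM may differ in normalization and in how channels are combined.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def correlate(query: Matrix, template: Matrix) -> Matrix:
    """Slide `template` over `query` and return the map of summed products."""
    qh, qw = len(query), len(query[0])
    th, tw = len(template), len(template[0])
    response = []
    for y in range(qh - th + 1):
        row = []
        for x in range(qw - tw + 1):
            # Elementwise products summed over the overlap region.
            row.append(sum(
                query[y + dy][x + dx] * template[dy][dx]
                for dy in range(th)
                for dx in range(tw)
            ))
        response.append(row)
    return response


def argmax_center(response: Matrix, template: Matrix) -> Tuple[float, float]:
    """Return the (x, y) center, on the query, of the best-matching window."""
    _, x, y = max(
        ((v, x, y) for y, r in enumerate(response) for x, v in enumerate(r)),
        key=lambda item: item[0],
    )
    th, tw = len(template), len(template[0])
    return x + (tw - 1) / 2, y + (th - 1) / 2


# Toy data (assumed): the template is pasted at column 1, row 2 of the query.
template = [[1.0, 2.0],
            [3.0, 4.0]]
query = [[0.0] * 5 for _ in range(5)]
for dy in range(2):
    for dx in range(2):
        query[2 + dy][1 + dx] = template[dy][dx]

predicted = argmax_center(correlate(query, template), template)
ground_truth = (1.5, 2.5)  # center of the pasted window
print(predicted, ground_truth)
```

Because the predicted and ground-truth positions live in the same pixel coordinate system, their offset can be used directly as an error term, which is the property the last training step relies on.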

3.4. Augmentation

3.4.1. Color augmentations

- To obtain the “query” image, the following are applied sequentially:
  - Random crop: scale from 0.1 to 0.9
  - Rescale: 189 × 189 pixels
  - Normalization: mean = [0.485, 0.456, 0.406], std = [0.228, 0.224, 0.225], computed on ImageNet
- To obtain the two “templates” from the “query”, the following are applied sequentially:
  - Random color jitter with independent probability* of 80%: brightness = 0.4, contrast = 0.4, saturation = 0.2, hue = 0.1
  - Random grayscale with independent probability* of 20%
  - For “template” 1:
    - Random Gaussian blur: radius from 0.1 to 0.2
  - For “template” 2:
    - Random Gaussian blur with independent probability* of 10%: radius from 0.1 to 2.0
    - Random invert of all pixel values above a given threshold (Solarization) with independent probability* of 20%: threshold = 128
  - Normalization: same as in “query”
  - Random crop: scale from 0.14 to 0.85, ratio from 0.2 to 5.0
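The independent probabilities listed above can be read as separate random draws per operation. The sketch below only samples which color operations apply to each template and shows the normalization and solarization arithmetic on single values; it does not process images. The function names are hypothetical, the blur for template 1 is assumed to be always applied because no probability is listed for it, and the solarization boundary (value equal to the threshold) is also an assumption.

```python
import random
from typing import List

IMAGENET_MEAN = [0.485, 0.456, 0.406]
IMAGENET_STD = [0.228, 0.224, 0.225]  # values as listed in the text


def normalize_pixel(rgb: List[float]) -> List[float]:
    """Normalize one RGB pixel (channels in [0, 1]) channel by channel."""
    return [(c - m) / s for c, m, s in zip(rgb, IMAGENET_MEAN, IMAGENET_STD)]


def solarize_value(value: int, threshold: int = 128) -> int:
    """Invert an 8-bit value at or above the threshold (boundary assumed)."""
    return 255 - value if value >= threshold else value


def sample_template_ops(template_id: int, rng: random.Random) -> List[str]:
    """Return the names of the color operations drawn for one template."""
    ops = []
    if rng.random() < 0.8:   # color jitter, independent probability 80%
        ops.append("color_jitter")
    if rng.random() < 0.2:   # grayscale, independent probability 20%
        ops.append("grayscale")
    if template_id == 1:
        ops.append("gaussian_blur")  # no probability listed: assumed always on
    else:
        if rng.random() < 0.1:       # blur, independent probability 10%
            ops.append("gaussian_blur")
        if rng.random() < 0.2:       # solarization, independent probability 20%
            ops.append("solarize")
    ops.append("normalize")          # same normalization as the query
    return ops


rng = random.Random(0)
print(sample_template_ops(1, rng))
print(sample_template_ops(2, rng))
```

Each `rng.random()` call is a separate draw, so any combination of jitter, grayscale, blur and solarization can occur for the same template.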

3.4.2. Geometric Augmentations

- Random square crop: scale from 0.14 to 0.45
- A randomly selected geometric augmentation is applied with a 50% probability:
  - Random perspective transformation: distortion scale = 0.5
  - Random rotation: degrees from -90 to +90
  - Random rescale: factor from 0.7 to 1.3
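The selection rule for geometric augmentations can be sketched as a two-stage draw: first decide whether any geometric augmentation is applied, then pick one of the three. This is a minimal sketch under assumptions: the function name is hypothetical, the three augmentations are assumed to be equally likely, and rescale factors are taken as positive (0.7 to 1.3), since a negative scale factor has no obvious meaning.

```python
import random
from typing import Optional, Tuple


def sample_geometric(rng: random.Random) -> Optional[Tuple[str, float]]:
    """Draw at most one geometric augmentation and its parameter."""
    if rng.random() >= 0.5:
        return None  # no geometric augmentation for this template
    # Uniform choice among the three augmentations is an assumption.
    kind = rng.choice(["perspective", "rotation", "rescale"])
    if kind == "perspective":
        return kind, 0.5                       # fixed distortion scale
    if kind == "rotation":
        return kind, rng.uniform(-90.0, 90.0)  # angle in degrees
    return kind, rng.uniform(0.7, 1.3)         # positive scale factor (assumed)


rng = random.Random(42)
draws = [sample_geometric(rng) for _ in range(1000)]
applied = [d for d in draws if d is not None]
print(len(applied), "of", len(draws), "templates received a geometric augmentation")
```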

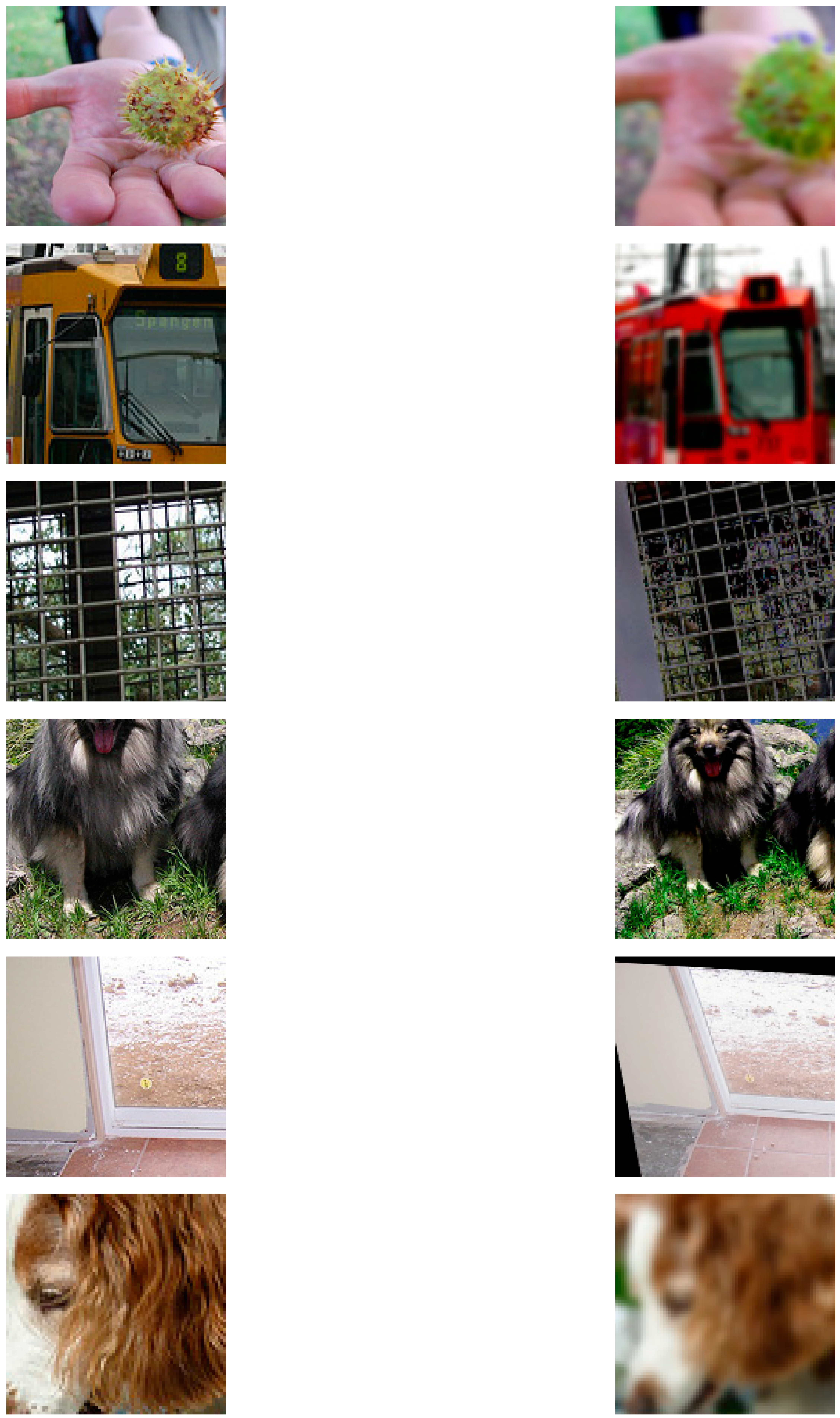

Figure panels: image crop; color and geometric augmentation.

3.5. Hierarchical Activations Propagation

3.6. Correlation

3.7. Loss

3.8. Hyperparameters/Optimization

3.9. Prediction

4. Experiments

- ImageNet-1K Test [53], showing the accuracy of template localization on data having the same modality as the training data;

- HPatches [43], evaluating the properties of feature maps as local descriptors for finding matches between different image patches in corresponding images;

- MegaDepth [44], evaluating image matching accuracy on outdoor scenes having different modality from the training data;

- ScanNet [45], evaluating image matching accuracy on indoor scenes having different modality from the training data.

4.1. ImageNet-1K Test

- An input image is taken from an unannotated dataset (ImageNet-1K Test), from which a “template” is obtained. For each pair of corresponding feature maps, the position of the maximum value of the correlation operator’s output is then computed (see training steps 1 to 5 in Section 3.3).

- For each pair of corresponding feature maps (i.e., for each layer), the displacement in pixels of the predicted position relative to the actual template position is computed as a Euclidean distance and averaged over all images in the dataset. Both positions are two-dimensional vectors defined for each image, and the average is computed separately for the currently selected layer of the network. For clarity, the layers are referred to here by index rather than by the names first, mid and last; the value for the last layer is the average Euclidean distance for the deepest layer.
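The displacement metric described above amounts to a mean Euclidean distance between predicted and actual positions for one layer. In assumed notation (not the paper's own symbols), this is $D_l = \frac{1}{N}\sum_{i=1}^{N} \lVert \hat{p}_{i,l} - p_i \rVert_2$, where $N$ is the number of images. A minimal sketch with a hypothetical function name and made-up positions:

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def mean_displacement(predicted: Sequence[Point], actual: Sequence[Point]) -> float:
    """Average Euclidean distance, in pixels, between paired positions."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("need two non-empty sequences of equal length")
    total = sum(
        math.hypot(p[0] - a[0], p[1] - a[1])
        for p, a in zip(predicted, actual)
    )
    return total / len(predicted)


# Illustrative positions for one layer (made-up values, not from the paper).
predicted_positions = [(3.0, 4.0), (10.0, 10.0)]
actual_positions = [(0.0, 0.0), (10.0, 10.0)]
print(mean_displacement(predicted_positions, actual_positions))  # 2.5
```

Computing this once per layer gives one value per layer, which is how the per-layer displacement columns in the results tables can be read.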

4.2. HPatches

4.3. Image Matching

5. Implementation Details

5.1. Two Step Training

- ImageNet training (Table 8 row 5 vs. row 4) results in increased model accuracy: Patch Verification +31.77%; Image Matching +81.76%; Patch Retrieval +66.63%;

- HPatches training (Table 9 row 3 vs. row 1) results in increased model accuracy: Patch Verification +9.23%; Image Matching +33.89%; Patch Retrieval +18.18%.
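The percentages above are relative gains, i.e. the difference divided by the baseline value. As a quick check, the sketch below recomputes the ImageNet figures from the mAP values reported for rows 4 and 5 of the Self-TM Base ablation table; the function and variable names are illustrative.

```python
def relative_gain(baseline: float, improved: float) -> float:
    """Relative change of `improved` over `baseline`, in percent."""
    return (improved - baseline) / baseline * 100.0


# mAP values (%) from rows 4 and 5 of the Self-TM Base ablation table.
ROW_4 = {"Patch Verification": 65.19, "Image Matching": 21.33, "Patch Retrieval": 37.01}
ROW_5 = {"Patch Verification": 85.90, "Image Matching": 38.77, "Patch Retrieval": 61.67}

gains = {task: round(relative_gain(ROW_4[task], ROW_5[task]), 2) for task in ROW_4}
print(gains)
# {'Patch Verification': 31.77, 'Image Matching': 81.76, 'Patch Retrieval': 66.63}
```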

5.2. Model Architecture

- Standard two-dimensional convolution (Conv2D) using a certain number of filters performing scalar multiplication on their input data;

- Linear layer (Linear), representing a fully connected layer where each neuron is connected to each neuron from the previous layer;

- Non-linear activation function GELU [47], widely used in modern transformers.
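Two of the building blocks listed above are simple enough to write out directly. The sketch below uses the exact (erf-based) definition of GELU and a plain fully connected layer on Python lists; whether Self-TM uses the exact form or the tanh approximation of GELU is not stated here, and the function names and toy weights are illustrative.

```python
import math
from typing import List


def gelu(x: float) -> float:
    """Exact GELU: x times the standard normal CDF evaluated at x."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def linear(x: List[float], weights: List[List[float]], bias: List[float]) -> List[float]:
    """Fully connected layer: every output depends on every input."""
    return [
        sum(w * v for w, v in zip(row, x)) + b
        for row, b in zip(weights, bias)
    ]


# Toy example: 2 inputs, 3 outputs, followed by the activation.
hidden = linear([1.0, 2.0], [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]], [0.0, 0.0, 1.0])
activated = [gelu(h) for h in hidden]
print(hidden, activated)
```

Unlike ReLU, GELU is smooth around zero and lets small negative inputs through with a small negative output, which is the main practical difference between the two activations.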

6. Conclusions

Author Contributions

Funding

Informed Consent Statement

Conflicts of Interest

References

1. Chen, T.; Kornblith, S.; Norouzi, M.; Hinton, G. A simple framework for contrastive learning of visual representations. International Conference on Machine Learning 2020, 1, 1597–1607.
2. He, K.; Fan, H.; Wu, Y.; Xie, S.; Girshick, R. Momentum contrast for unsupervised visual representation learning. IEEE/CVF Conference on Computer Vision and Pattern Recognition 2019.
3. He, K.; Chen, X.; Xie, S.; Li, Y.; Dollar, P.; Girshick, R. Masked autoencoders are scalable vision learners. 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2022.
4. Bertinetto, L.; Valmadre, J.; Henriques, J.F.; Vedaldi, A.; Torr, P.H.S. Fully-Convolutional Siamese networks for object tracking. In Lecture notes in computer science; 2016; pp 850–865.
5. Li, B.; Yan, J.; Wu, W.; Zhu, Z.; Hu, X. High performance visual tracking with Siamese Region Proposal network. IEEE Conference on Computer Vision and Pattern Recognition 2018.
6. He, A.; Luo, C.; Tian, X.; Zeng, W. A twofold siamese network for real-time object tracking. IEEE Conference on Computer Vision and Pattern Recognition 2018.
7. Valmadre, J.; Bertinetto, L.; Henriques, J.; Vedaldi, A.; Torr, P.H.S. End-to-end representation learning for correlation filter based tracking. IEEE Conference on Computer Vision and Pattern Recognition 2017.
8. Li, B.; Wu, W.; Wang, Q.; Zhang, F.; Xing, J.; Yan, J. SiamRPN++: Evolution of Siamese Visual Tracking with Very Deep Networks. arXiv (Cornell University) 2018.
9. Zhu, Z.; Wang, Q.; Li, B.; Wu, W.; Yan, J.; Hu, W. Distractor-Aware Siamese networks for visual object tracking. In Lecture notes in computer science; 2018; pp 103–119.
10. Fan, H.; Ling, H. Siamese cascaded region proposal networks for real-time visual tracking. IEEE/CVF Conference on Computer Vision and Pattern Recognition 2018.
11. Song, Y.; Ma, C.; Gong, L.; Zhang, J.; Lau, R.; Yang, M.-H. CREST: Convolutional Residual Learning for Visual Tracking. IEEE International Conference on Computer Vision 2017.
12. Guo, D.; Wang, J.; Cui, Y.; Wang, Z.; Chen, S. SiamCAR: Siamese Fully Convolutional Classification and Regression for visual tracking. IEEE/CVF Conference on Computer Vision and Pattern Recognition 2020.
13. Hu, W.; Wang, Q.; Zhang, L.; Bertinetto, L.; Torr, P.H.S. SiamMask: a framework for fast online object tracking and segmentation. IEEE Transactions on Pattern Analysis and Machine Intelligence 2023, 45(3), 3072–3089.
14. Liu, Z.; Mao, H.; Wu, C.-Y.; Feichtenhofer, C.; Darrell, T.; Xie, S. A ConvNet for the 2020s. 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2022.
15. Steiner, A.; Kolesnikov, A.; Zhai, X.; Wightman, R.; Uszkoreit, J.; Beyer, L. How to train your ViT? Data, Augmentation, and Regularization in Vision Transformers. arXiv (Cornell University) 2021.
16. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. IEEE Conference on Computer Vision and Pattern Recognition 2016.
17. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is All you Need. arXiv (Cornell University) 2017, 30, 5998–6008.
18. Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; Uszkoreit, J.; Houlsby, N. An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. arXiv (Cornell University) 2020.
19. Touvron, H.; Cord, M.; Douze, M.; Massa, F.; Sablayrolles, A.; Jégou, H. Training data-efficient image transformers & distillation through attention. International Conference on Machine Learning 2020.
20. Hisham, M.B.; Yaakob, S.N.; Raof, R.A.A.; Nazren, A.B.A.; Wafi, N.M. Template matching using sum of squared difference and normalized cross correlation. IEEE Student Conference on Research and Development (SCOReD) 2015.
21. Niitsuma, H.; Maruyama, T. Sum of absolute difference implementations for image processing on FPGAs. International Conference on Field Programmable Logic and Applications 2010, 33, 167–170.
22. Papageorgiou, C.P.; Oren, M.; Poggio, T. A general framework for object detection. Sixth International Conference on Computer Vision (IEEE Cat. No. 98CH36271) 1998.
23. Di Stefano, L.; Mattoccia, S.; Tombari, F. ZNCC-based template matching using bounded partial correlation. Pattern Recognition Letters 2005, 26(14), 2129–2134.
24. Lowe, D.G. Distinctive Image Features from Scale-Invariant Keypoints. International Journal of Computer Vision 2004, 60(2), 91–110.
25. Bay, H.; Tuytelaars, T.; Van Gool, L. SURF: Speeded up robust features. In European Conference on Computer Vision; 2006; pp 404–417.
26. Rublee, E.; Rabaud, V.; Konolige, K.; Bradski, G. ORB: An efficient alternative to SIFT or SURF. International Conference on Computer Vision 2011.
27. DeTone, D.; Malisiewicz, T.; Rabinovich, A. SuperPoint: Self-supervised interest point detection and description. IEEE Conference on Computer Vision and Pattern Recognition Workshops 2018.
28. Sarlin, P.-E.; DeTone, D.; Malisiewicz, T.; Rabinovich, A. SuperGlue: Learning feature matching with graph neural networks. IEEE/CVF Conference on Computer Vision and Pattern Recognition 2020.
29. Cuturi, M. Sinkhorn Distances: Lightspeed computation of optimal transport. Neural Information Processing Systems 2013, 26, 2292–2300.
30. Lindenberger, P.; Sarlin, P.-E.; Pollefeys, M. LightGlue: Local feature matching at light speed. International Conference on Computer Vision 2023.
31. Jiang, H.; Karpur, A.; Cao, B.; Huang, Q.; Araujo, A. OmniGlue: Generalizable feature matching with foundation model guidance. IEEE/CVF Conference on Computer Vision and Pattern Recognition 2024, 19865–19875.
32. Caron, M.; Touvron, H.; Misra, I.; Jegou, H.; Mairal, J.; Bojanowski, P.; Joulin, A. Emerging Properties in Self-Supervised Vision Transformers. 2021 IEEE/CVF International Conference on Computer Vision (ICCV) 2021.
33. Chen, H.; Luo, Z.; Zhou, L.; Tian, Y.; Zhen, M.; Fang, T.; McKinnon, D.; Tsin, Y.; Quan, L. ASpanFormer: Detector-Free Image Matching with Adaptive Span Transformer. In Lecture notes in computer science; 2022; pp 20–36.
34. Sun, J.; Shen, Z.; Wang, Y.; Bao, H.; Zhou, X. LoFTR: Detector-Free local feature matching with transformers. IEEE/CVF Conference on Computer Vision and Pattern Recognition 2021.
35. Edstedt, J.; Athanasiadis, I.; Wadenbäck, M.; Felsberg, M. DKM: Dense Kernelized Feature Matching for geometry estimation. IEEE/CVF Conference on Computer Vision and Pattern Recognition 2023.
36. Truong, P.; Danelljan, M.; Timofte, R.; Van Gool, L. PDC-Net+: Enhanced Probabilistic Dense Correspondence Network. IEEE Transactions on Pattern Analysis and Machine Intelligence 2023, 45(8), 10247–10266.
37. Oquab, M.; Darcet, T.; Moutakanni, T.; Vo, H.; Szafraniec, M.; Khalidov, V.; Fernandez, P.; Haziza, D.; Massa, F.; El-Nouby, A.; Assran, M.; Ballas, N.; Galuba, W.; Howes, R.; Huang, P.-Y.; Li, S.-W.; Misra, I.; Rabbat, M.; Sharma, V.; Synnaeve, G.; Xu, H.; Jegou, H.; Mairal, J.; Labatut, P.; Joulin, A.; Bojanowski, P. DINOv2: Learning Robust Visual Features without Supervision. arXiv (Cornell University) 2023.
38. Van Den Oord, A.; Li, Y.; Vinyals, O. Representation Learning with Contrastive Predictive Coding. arXiv (Cornell University) 2018.
39. Kirillov, A.; Mintun, E.; Ravi, N.; Mao, H.; Rolland, C.; Gustafson, L.; Xiao, T.; Whitehead, S.; Berg, A.C.; Lo, W.-Y.; Dollár, P.; Girshick, R. Segment anything. IEEE/CVF International Conference on Computer Vision 2023.
40. Ravi, N.; Gabeur, V.; Hu, Y.-T.; Hu, R.; Ryali, C.; Ma, T.; Khedr, H.; Rädle, R.; Rolland, C.; Gustafson, L.; Mintun, E.; Pan, J.; Alwala, K.V.; Carion, N.; Wu, C.-Y.; Girshick, R.; Dollár, P.; Feichtenhofer, C. SAM 2: Segment anything in images and videos. arXiv (Cornell University) 2024.
41. Wang, X.; Zhang, X.; Cao, Y.; Wang, W.; Shen, C.; Huang, T. SegGPT: towards segmenting everything in context. IEEE/CVF International Conference on Computer Vision 2023.
42. Wang, X.; Wang, W.; Cao, Y.; Shen, C.; Huang, T. Images speak in images: A generalist painter for in-context visual Learning. IEEE/CVF Conference on Computer Vision and Pattern Recognition 2023.
43. Balntas, V.; Lenc, K.; Vedaldi, A.; Mikolajczyk, K. HPatches: a benchmark and evaluation of handcrafted and learned local descriptors. IEEE Conference on Computer Vision and Pattern Recognition 2017.
44. Li, Z.; Snavely, N. MegaDepth: Learning single-view depth prediction from internet photos. IEEE Conference on Computer Vision and Pattern Recognition 2018.
45. Dai, A.; Chang, A.X.; Savva, M.; Halber, M.; Funkhouser, T.; Niessner, M. ScanNet: Richly-annotated 3D reconstructions of indoor scenes. IEEE Conference on Computer Vision and Pattern Recognition 2017.
46. Ba, J.L.; Kiros, J.R.; Hinton, G.E. Layer normalization. arXiv (Cornell University) 2016.
47. Nair, V.; Hinton, G.E. Rectified linear units improve restricted Boltzmann machines. International Conference on Machine Learning 2010, 807–814.
48. Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin Transformer: Hierarchical Vision Transformer using Shifted Windows. 2021 IEEE/CVF International Conference on Computer Vision (ICCV) 2021.
49. Tan, M.; Le, Q.V. EfficientNet: Rethinking model scaling for convolutional neural networks. International Conference on Machine Learning 2019.
50. Radosavovic, I.; Kosaraju, R.P.; Girshick, R.; He, K.; Dollar, P. Designing network design spaces. IEEE/CVF Conference on Computer Vision and Pattern Recognition 2020.
51. Tan, M.; Le, Q. EfficientNetV2: Smaller models and faster training. International Conference on Machine Learning 2021.
52. Kolesnikov, A.; Beyer, L.; Zhai, X.; Puigcerver, J.; Yung, J.; Gelly, S.; Houlsby, N. Big Transfer (BiT): General visual representation learning. In European Conference on Computer Vision; 2020; pp 491–507.
53. Deng, J.; Dong, W.; Socher, R.; Li, L.-J.; Li, K.; Fei-Fei, L. ImageNet: A large-scale hierarchical image database. 2009 IEEE Conference on Computer Vision and Pattern Recognition 2009.
54. Assran, M.; Duval, Q.; Misra, I.; Bojanowski, P.; Vincent, P.; Rabbat, M.; LeCun, Y.; Ballas, N. Self-Supervised learning from images with a joint-embedding predictive architecture. IEEE/CVF Conference on Computer Vision and Pattern Recognition 2023.
55. Bardes, A.; Ponce, J.; LeCun, Y. VICRegL: Self-supervised learning of local visual features. Advances in Neural Information Processing Systems 2022.
56. Bridle, J.S. Training stochastic model recognition algorithms as networks can lead to maximum mutual information estimation of parameters. Advances in Neural Information Processing Systems 1989, 2, 211–217.
57. Loshchilov, I.; Hutter, F. Decoupled weight decay regularization. arXiv (Cornell University) 2017.
58. Irshad, A.; Hafiz, R.; Ali, M.; Faisal, M.; Cho, Y.; Seo, J. Twin-Net descriptor: twin negative mining with quad loss for Patch-Based matching. IEEE Access 2019, 7, 136062–136072.
59. Schonberger, J.L.; Frahm, J.-M. Structure-from-motion revisited. IEEE Conference on Computer Vision and Pattern Recognition 2016.
60. Fischler, M.A.; Bolles, R.C. Random Sample Consensus: A Paradigm for Model Fitting with Applications to Image Analysis and Automated Cartography. In Elsevier eBooks; 1987; pp 726–740.
61. Ioffe, S. Batch renormalization: towards reducing minibatch dependence in Batch-Normalized models. Advances in Neural Information Processing Systems 2017.

| Model | Num of params |
|---|---|
| Self-TM Small | 13M |
| DeiT-S [19], ViT-S [15], Swin-T [48] | 22-28M |
| ConvNeXt-T [14] | 29M |
| Self-TM Base | 40M |
| ConvNeXt-S [14], Swin-S [48] | 50M |
| EffNet-B7 [49], RegNetY-16G [50], DeiT-B [19], ViT-B [15], Swin-B [48] | 66-88M |
| ConvNeXt-B [14] | 89M |
| EffNetV2-L [51] | 120M |
| Self-TM Large | 130M |
| ConvNeXt-L [14] | 198M |
| ViT-L [15] | 304M |
| ConvNeXt-XL [14] | 350M |
| R-101x3 [52], R-152x4 [52] | 388-937M |

Figure panels: image crop; color augmentation.

| Model size | Applied augmentation | Average displacement (pixels) | Average displacement (pixels) | Average displacement (pixels) |
|---|---|---|---|---|
| Self-TM Small | color | 0.579 | 0.176 | 0.156 |
| Self-TM Base | color | 0.577 | 0.173 | 0.156 |
| Self-TM Large | color | 0.572 | 0.171 | 0.153 |
| Self-TM Small | color and geometric | 2.214 | 0.767 | 0.409 |
| Self-TM Base | color and geometric | 1.752 | 0.602 | 0.338 |
| Self-TM Large | color and geometric | 1.331 | 0.452 | 0.273 |

Figure panels: template; result.

| Category | Method | MegaDepth-1500 AUC@5° / 10° / 20° | ScanNet AUC@5° / 10° / 20° |
|---|---|---|---|
| Descriptors with hand-crafted rules | SIFT [24] + MNN | 25.8 / 41.5 / 54.2 | 1.7 / 4.8 / 10.3 |
| | SuperPoint [27] + MNN | 31.7 / 46.8 / 60.1 | 7.7 / 17.8 / 30.6 |
| Sparse methods | SuperGlue [28] | 42.2 / 61.2 / 76.0 | 10.4 / 22.9 / 37.2 |
| | LightGlue [30] | 47.6 / 64.8 / 77.9 | 15.1 / 32.6 / 50.3 |
| | OmniGlue [31] | 47.4 / 65.0 / 77.8 | 14.0 / 28.9 / 44.3 |
| | OmniGlue + Self-TM Small | 48.2 / 64.7 / 73.8 | 15.8 / 29.4 / 43.4 |
| | Relative gain (in %) over OmniGlue | +1.8 / -0.4 / -5.1 | +13.0 / +1.8 / -2.0 |
| | OmniGlue + Self-TM Base | 56.7 / 69.4 / 78.1 | 22.0 / 34.8 / 47.0 |
| | Relative gain (in %) over OmniGlue | +19.6 / +6.7 / +0.3 | +57.1 / +20.5 / +6.2 |
| | OmniGlue + Self-TM Large | 59.8 / 70.6 / 78.4 | 26.6 / 37.7 / 48.4 |
| | Relative gain (in %) over OmniGlue | +26.2 / +8.7 / +0.8 | +90.1 / +30.3 / +9.2 |

| Model | Num of parameters | Inference speed at 238 pixels | Inference speed at 490 pixels | Inference speed at 994 pixels |
|---|---|---|---|---|
| Self-TM (Small) | 13M | 212 ms | 659 ms | 2 481 ms |
| Self-TM (Base) | 40M | 244 ms | 914 ms | 3 432 ms |
| DINOv2 (ViT-14-base) | 87M | 445 ms | 3 065 ms | 38 709 ms |
| Self-TM (Large) | 130M | 377 ms | 1 268 ms | 4 706 ms |

| Model | # | Initial weights | Dataset | Augmentations | Patch Verification mAP % | Image Matching mAP % | Patch Retrieval mAP % |
|---|---|---|---|---|---|---|---|
| Self-TM Base | 1 | Random init | HPatches | color | 64.15 | 8.32 | 25.74 |
| Self-TM Base | 2 | Random init | HPatches | color and geometric | 65.04 | 9.95 | 28.86 |
| Self-TM Base | 3 | HPatches (color) | HPatches | color and geometric | 70.07 | 11.14 | 30.42 |
| Self-TM Base | 4 | Random init | ImageNet | color | 65.19 | 21.33 | 37.01 |
| Self-TM Base | 5 | ImageNet (color) | ImageNet | color and geometric | 85.90 | 38.77 | 61.67 |
| Self-TM Base | 6 | ImageNet (color) | HPatches | color | 66.09 | 21.97 | 37.79 |
| Self-TM Base | 7 | ImageNet (color) | HPatches | color and geometric | 78.97 | 29.85 | 50.30 |
| Self-TM Base | 8 | ImageNet (color and geometric) | HPatches | color and geometric | 86.89 | 40.35 | 64.01 |

| Model | Num of parameters | Dataset | Augmentations | Average displacement (pixels) | Average displacement (pixels) |
|---|---|---|---|---|---|
| ConvNeXt-S [14] | 50M | ImageNet | color | 1.119 | 0.418 |
| ConvNeXt-S [14] | 50M | ImageNet | color and geometric | 2.515 | 0.542 |
| Self-TM Base (relative gain over ConvNeXt-S [14]) | 40M (-20.00%) | ImageNet | color | 0.577 (-48.44%) | 0.156 (-62.68%) |
| Self-TM Base (relative gain over ConvNeXt-S [14]) | 40M (-20.00%) | ImageNet | color and geometric | 1.752 (-30.39%) | 0.338 (-37.64%) |

| Model | Initial weights | Dataset | Augmentations | Patch Verification mAP % | Image Matching mAP % | Patch Retrieval mAP % |
|---|---|---|---|---|---|---|
| ConvNeXt-S [14] | Random init | ImageNet | color | 63.00 | 16.99 | 33.49 |
| ConvNeXt-S [14] | ImageNet (color) | ImageNet | color and geometric | 83.31 | 32.94 | 58.42 |
| ConvNeXt-S [14] | ImageNet (color and geometric) | HPatches | color and geometric | 84.39 | 34.88 | 60.61 |
| Self-TM Base (relative gain over ConvNeXt-S [14]) | Random init | ImageNet | color | 65.19 (+3.48%) | 21.33 (+25.54%) | 37.01 (+10.51%) |
| Self-TM Base (relative gain over ConvNeXt-S [14]) | ImageNet (color) | ImageNet | color and geometric | 85.90 (+3.11%) | 38.77 (+17.70%) | 61.67 (+5.56%) |
| Self-TM Base (relative gain over ConvNeXt-S [14]) | ImageNet (color and geometric) | HPatches | color and geometric | 86.89 (+2.96%) | 40.35 (+15.68%) | 64.01 (+5.61%) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).