Submitted: 05 November 2024

Posted: 07 November 2024


Abstract

Keywords:

1. Introduction

- Which DL models are best suited for multi-class classification of cyberbullying using multi-modal data?

- Do DL models perform better than state-of-the-art algorithms?

2. Related Work

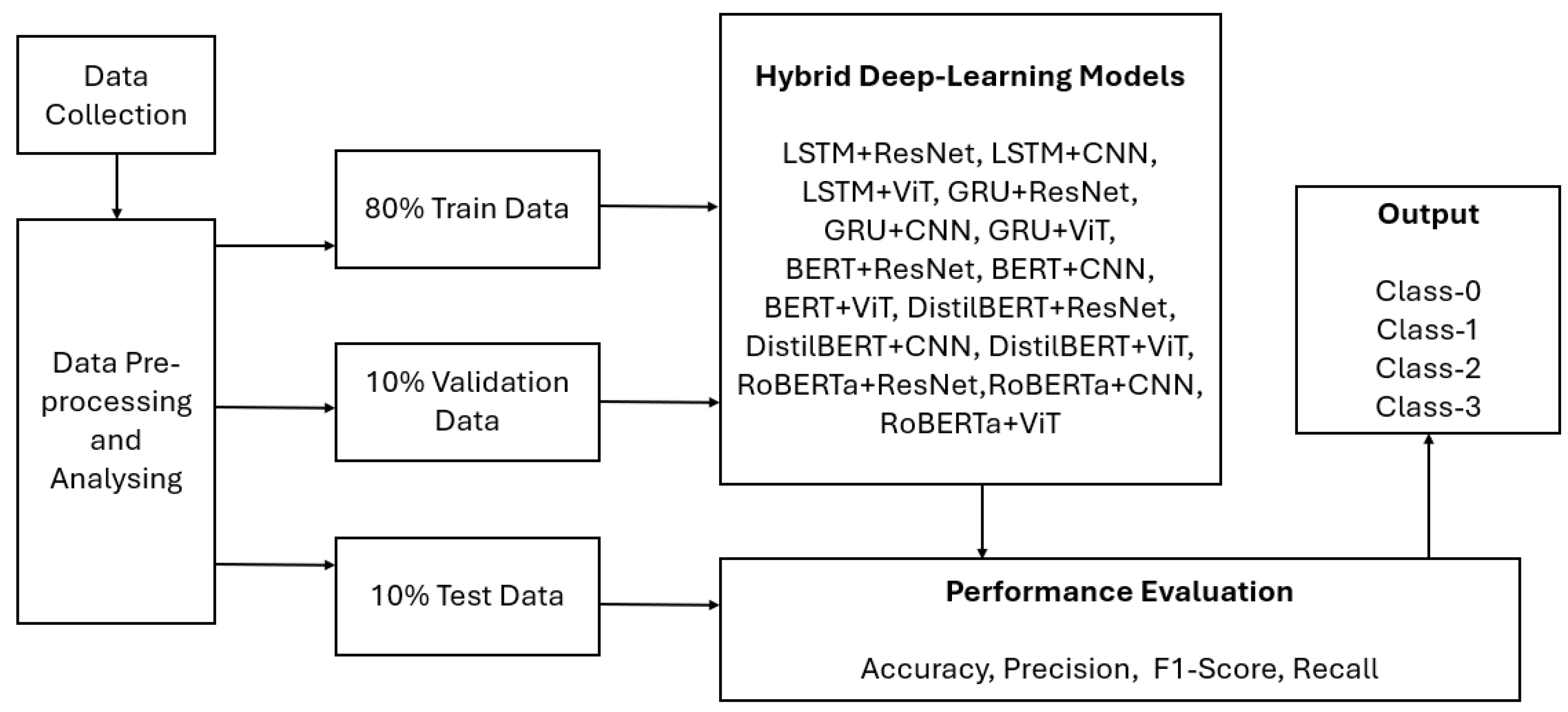

3. Materials and Methods

3.1. Proposed Solution

3.2. Datasets Collection

3.3. Data Pre-Processing and Analysis

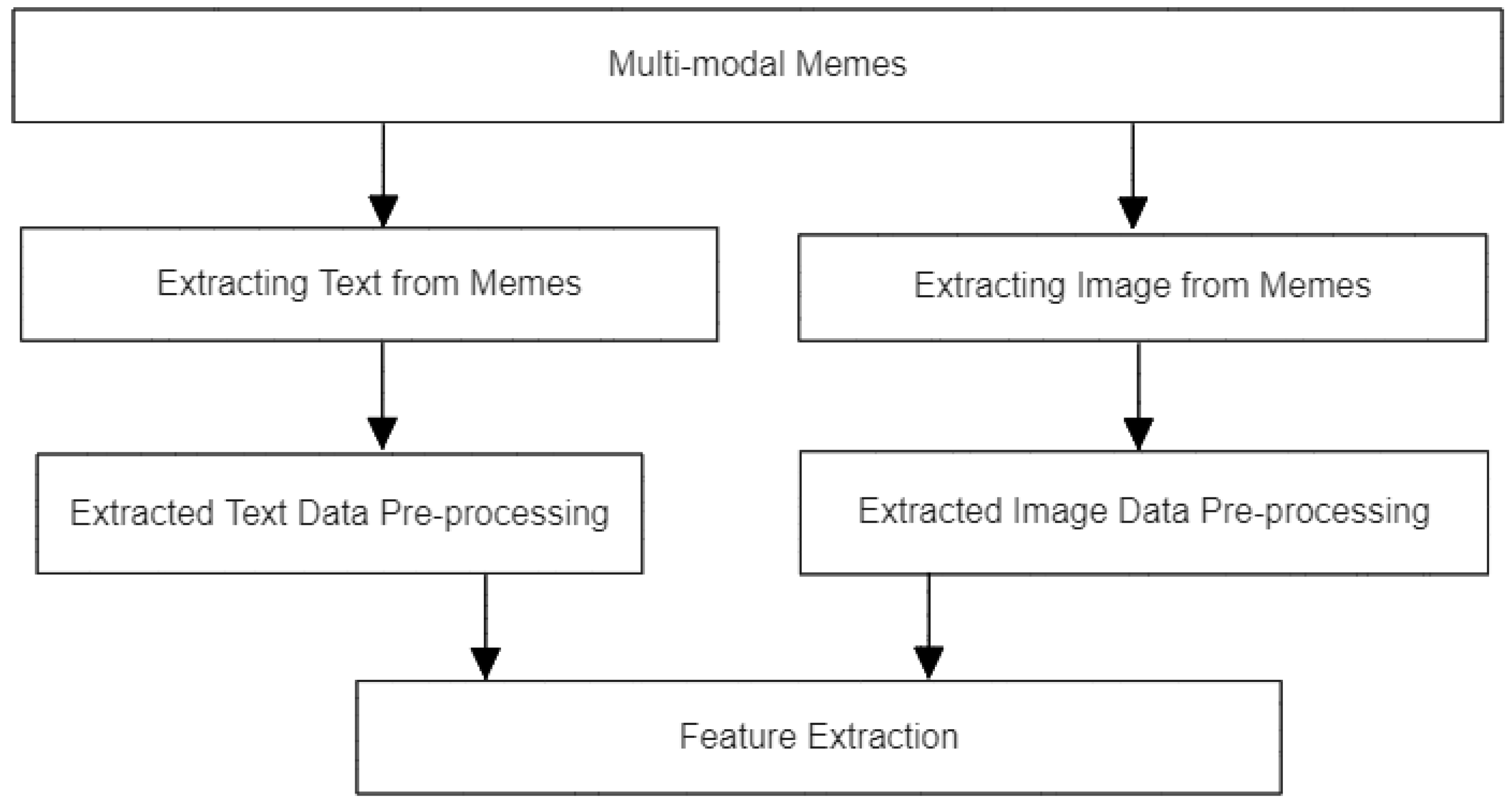

3.3.1. Extracting Text and Images from Memes

3.3.2. Data Categorization

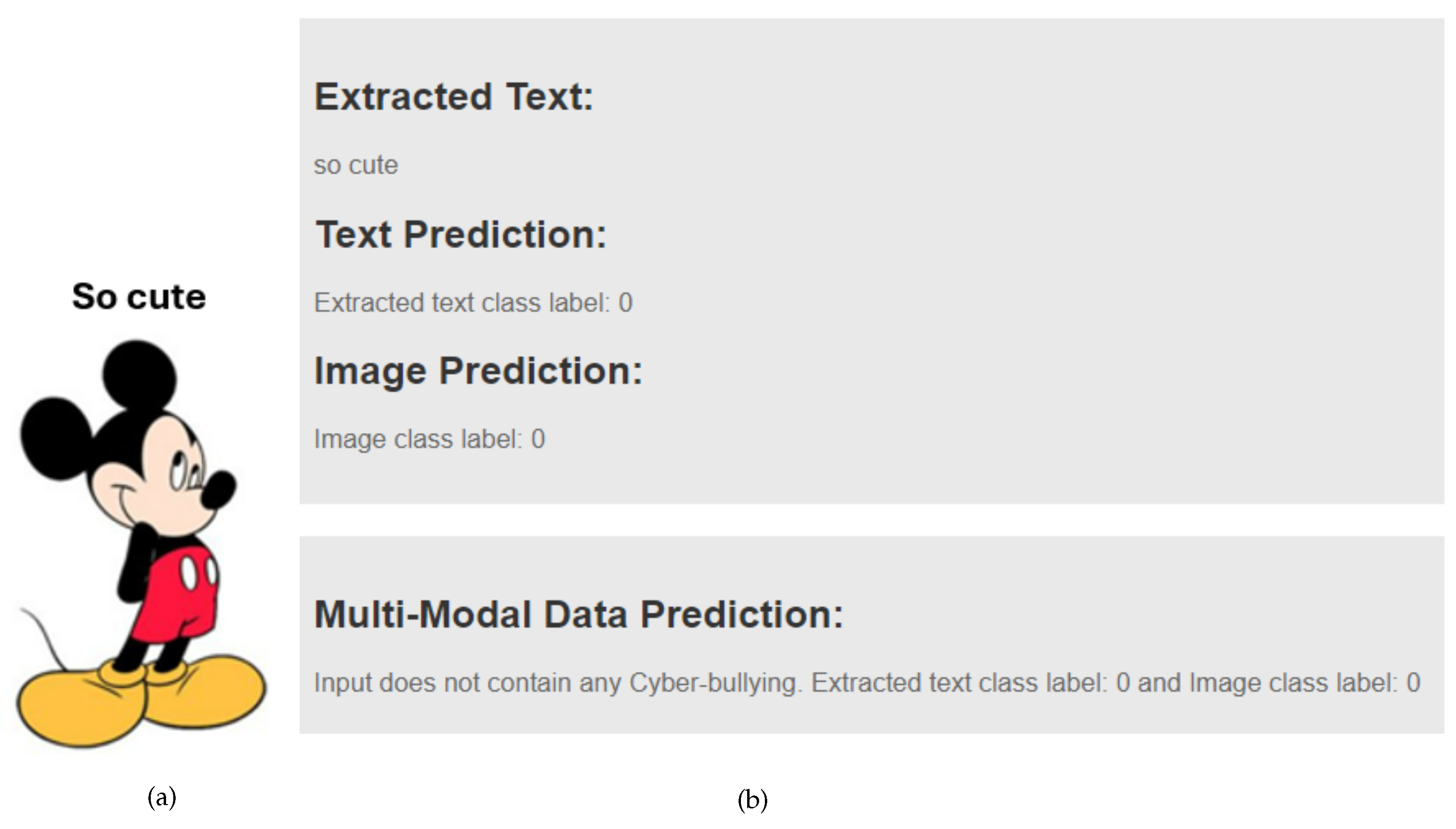

- Non-Bullying (class-0): Data that contains no insulting, defamatory, offensive, or aggressive language, as defined in [33].

- Non-Bullying (class-0): Images that do not feature defaming, sexual, offensive, or aggressive content.

- Defaming (class-1): Images that contain sexual or nudity-related content.

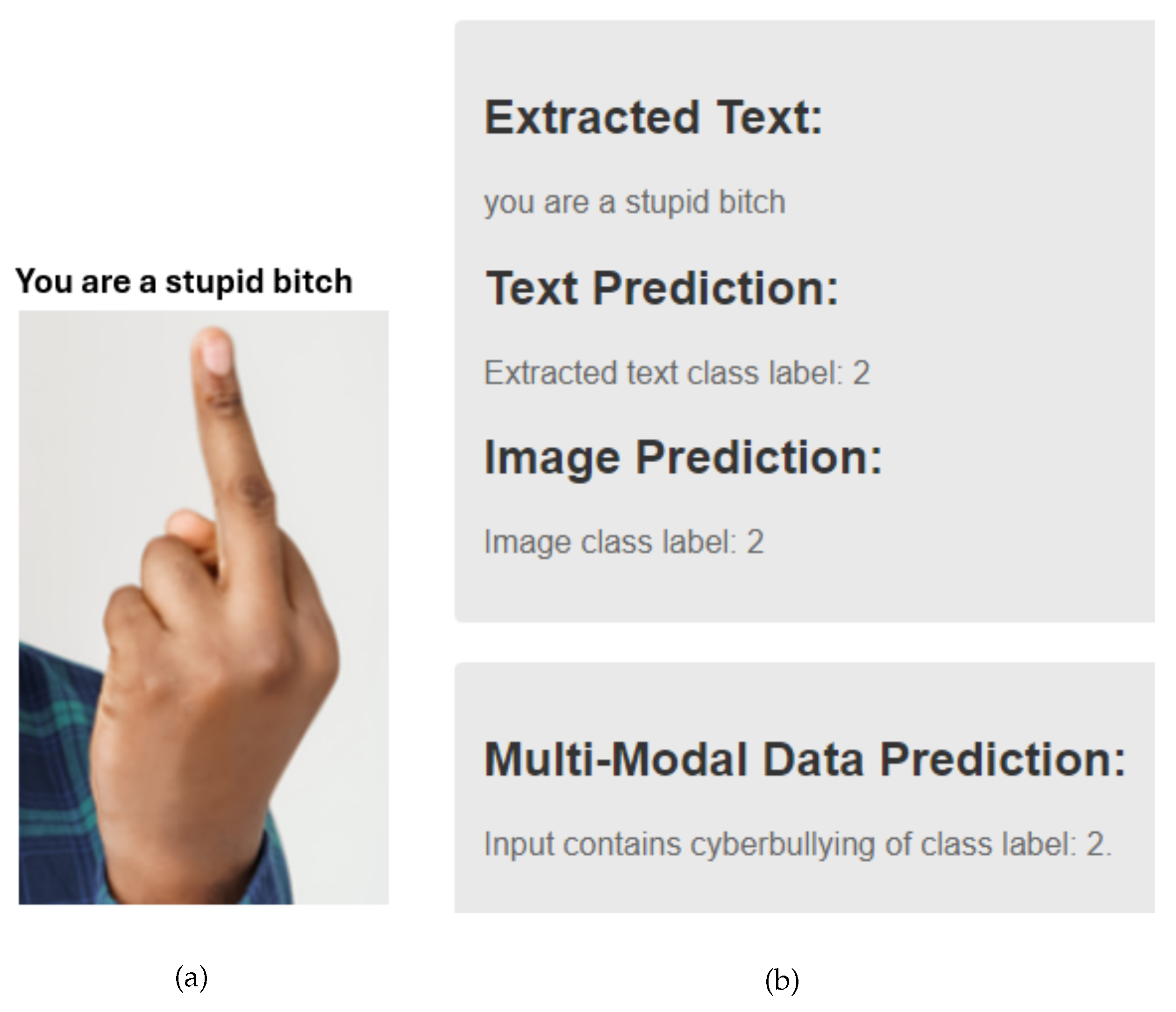

- Offensive (class-2): In the Private-dataset, this class includes images such as those showing a middle finger or combining human faces with animal faces. Because the Public-dataset lacks the latter type, class-2 there covers only content showing a middle finger.

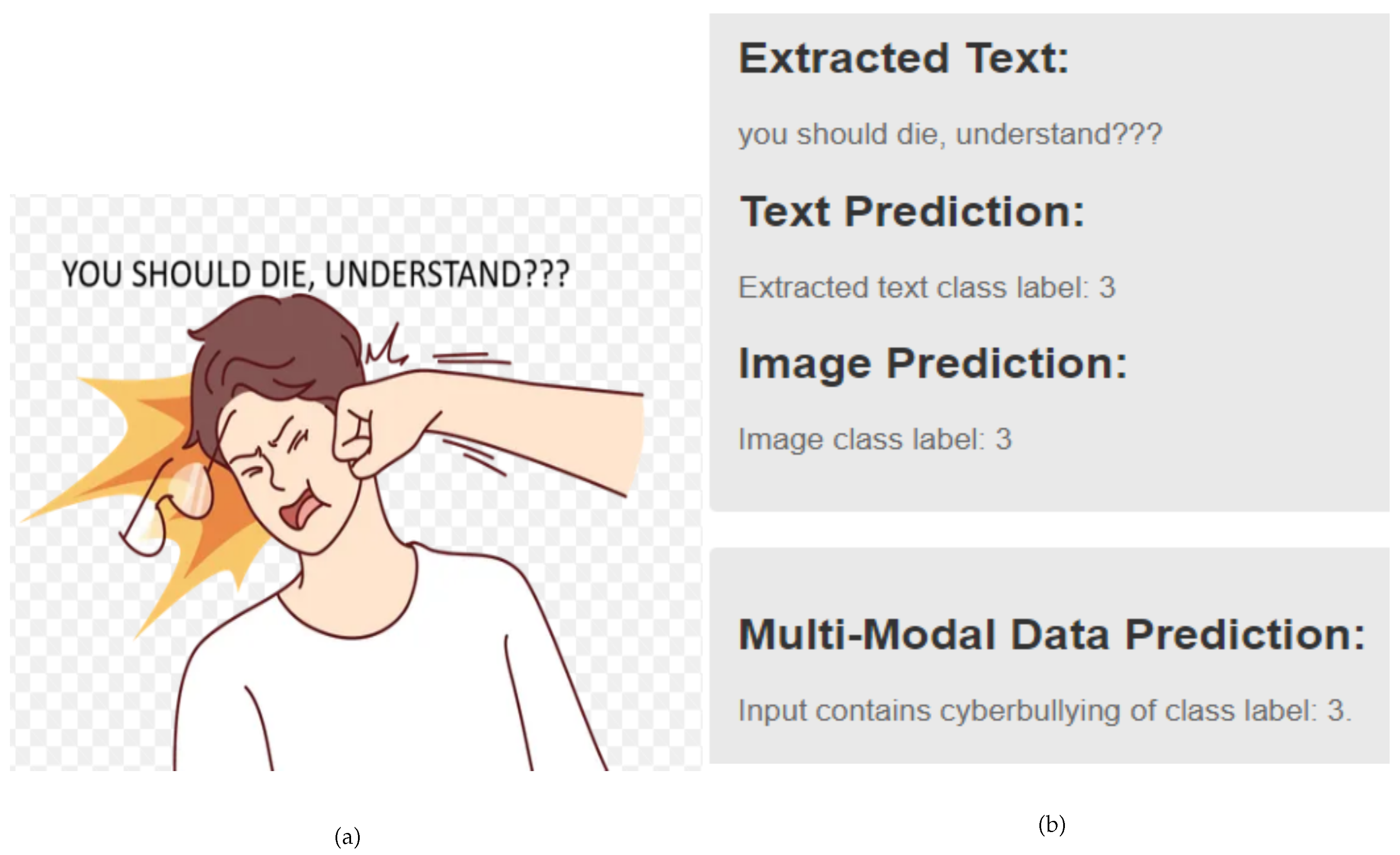

- Aggressive (class-3): Depictions of violence, such as beating someone or brandishing weapons, fall into this category.

3.3.3. Data Cleaning

3.3.4. Data Augmentation and Sampling

3.4. Feature Extraction of Multi-modal Data

3.5. Splitting Data into Training, Validation and Testing Datasets

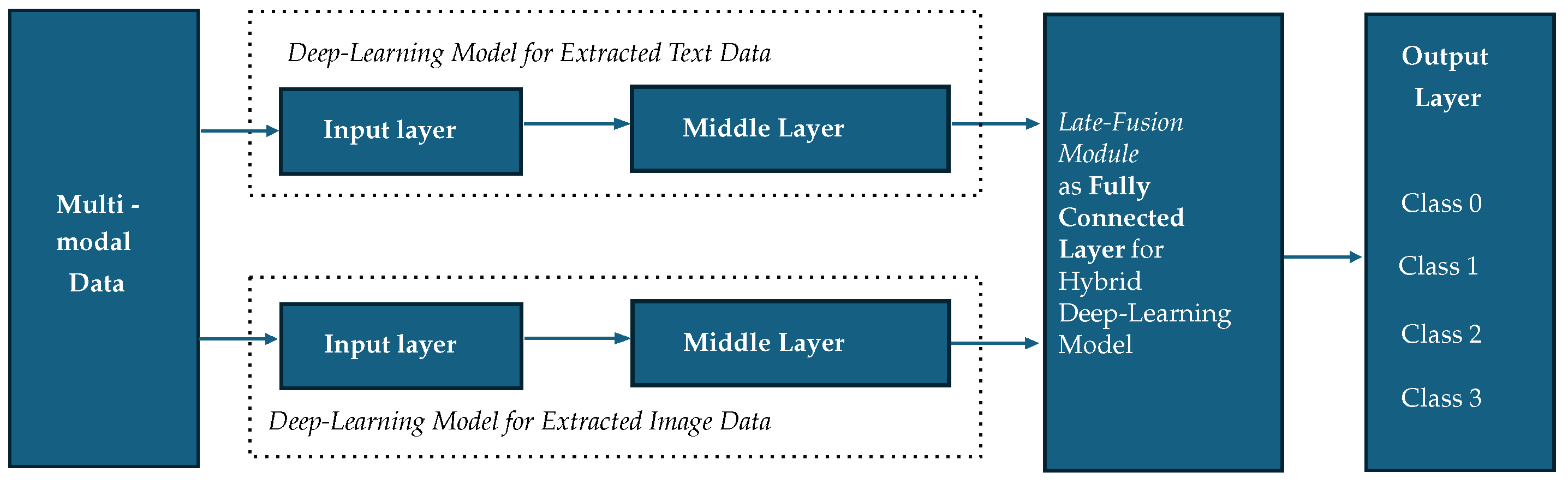

3.6. Network Architecture

3.7. Fusion Module

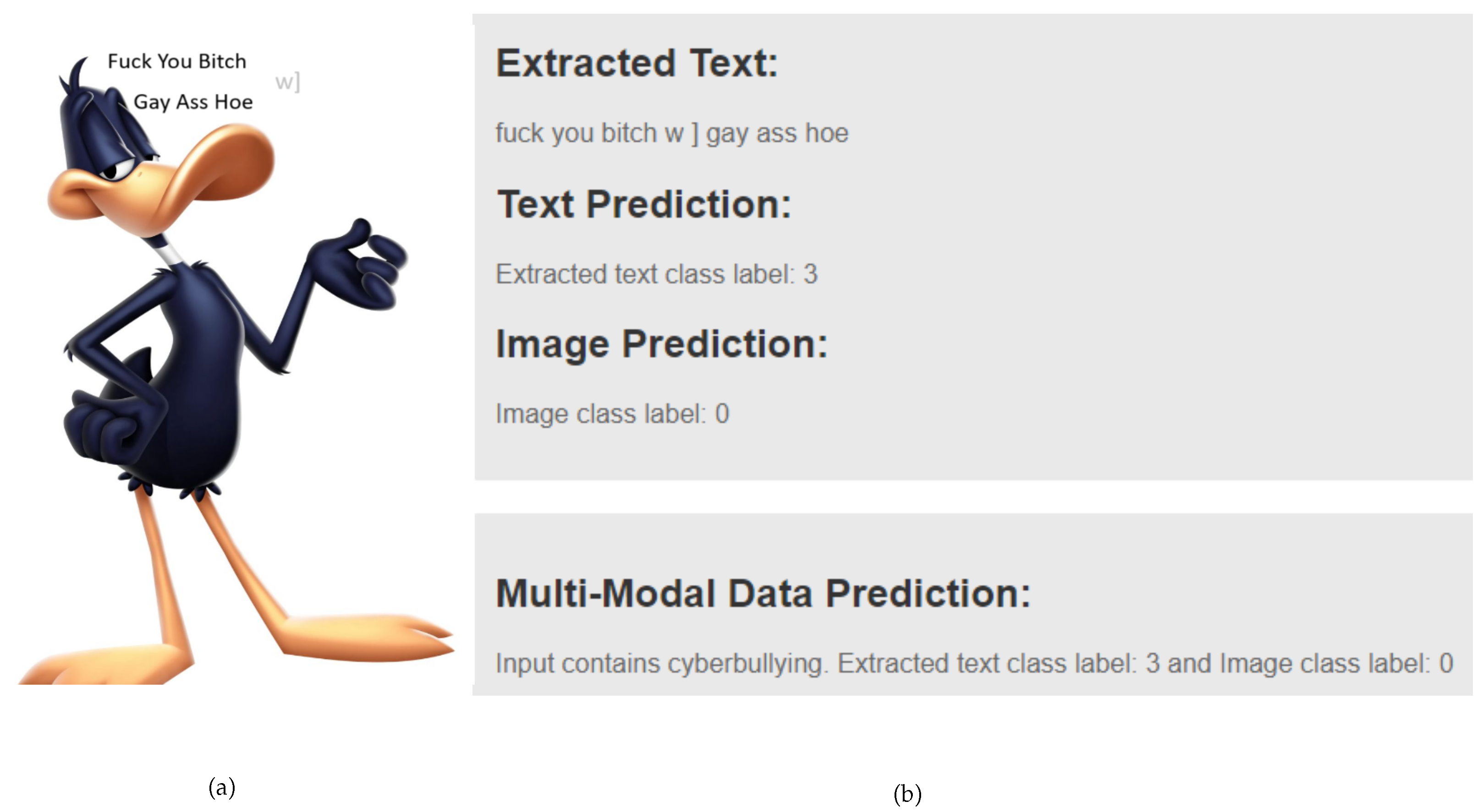

- Integration: Combines results from independent classification of extracted text and extracted image data.

- Decision-Making: Both the extracted-text and the extracted-image analyses are taken into account when deciding the final output. The module first checks whether both a text classification and an image classification are available, which indicates multi-modal data. If both classifiers agree that there is no cyberbullying, a message states that the input is not cyberbullying. If both agree on the same type of cyberbullying, that specific class is reported. If the text and image classifications disagree, the module acknowledges that cyberbullying is present but labels it differently. If either classification is missing, the system concludes that the input is not multi-modal data and displays an appropriate message. The final message is determined from these conditions, as presented in Algorithm 1.

Algorithm 1: Fusion Decision-Making Logic for Multi-modal Data

- Output Synthesis: It produces a single, coherent message that communicates the cyberbullying classification result to the user.
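Because the body of Algorithm 1 is not reproduced here, the following Python function is only a sketch of the decision rules described in the Decision-Making item above. It assumes that each classifier returns a class index from Section 3.3.2 (0 to 3) or `None` when that modality is absent. The function name `fuse_decisions`, the message strings, and the per-modality label used on disagreement are hypothetical, since the text only says such inputs are "labelled differently".

```python
from typing import Optional

# Class indices follow Section 3.3.2 (0 = non-bullying, 1-3 = bullying types).
CLASS_NAMES = {0: "Non-Bullying", 1: "Defaming", 2: "Offensive", 3: "Aggressive"}


def fuse_decisions(text_label: Optional[int], image_label: Optional[int]) -> str:
    """Late-fusion decision rule sketched from the description of Algorithm 1.

    text_label / image_label are predicted class indices from the text and
    image classifiers, or None when that modality is unavailable.
    The message wording is a hypothetical placeholder.
    """
    # Both modalities must be present for the input to count as multi-modal.
    if text_label is None or image_label is None:
        return "Input is not multi-modal data"
    # Both classifiers agree that there is no cyberbullying.
    if text_label == 0 and image_label == 0:
        return "No cyberbullying detected"
    # Both classifiers agree on the same cyberbullying class.
    if text_label == image_label:
        return f"Cyberbullying detected: {CLASS_NAMES[text_label]}"
    # Disagreement: cyberbullying is acknowledged but labelled per modality
    # (assumption: the exact label used in this case is not specified).
    return (f"Cyberbullying detected (text: {CLASS_NAMES[text_label]}, "
            f"image: {CLASS_NAMES[image_label]})")


if __name__ == "__main__":
    print(fuse_decisions(2, 2))
    print(fuse_decisions(1, 3))
    print(fuse_decisions(None, 1))
```

Keeping the rule as a pure function of the two predicted labels makes it independent of which text and image models produced them, which matches the description of the fusion module as combining independent classifications.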

3.8. Model Performance Metrics

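- Accuracy: It measures how often the model makes correct predictions. It is calculated as follows: $\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$

- Precision: It measures the quality of positive predictions, i.e., the fraction of predicted positives that are actually positive. It is calculated as: $\mathrm{Precision} = \frac{TP}{TP + FP}$

- Recall: It measures how well the model finds actual positive cases, i.e., the fraction of actual positives that are correctly identified. It is calculated as: $\mathrm{Recall} = \frac{TP}{TP + FN}$

- F1 Score: It combines precision and recall into one metric, their harmonic mean. It is calculated as: $F_1 = 2 \cdot \frac{\mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}$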
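In the four-class setting used here, TP, TN, FP and FN are counted per class in a one-vs-rest fashion, and the per-class scores are then averaged. The section does not state which averaging was used, so the sketch below assumes macro averaging (an unweighted mean over classes) and takes overall accuracy as the fraction of correctly classified samples. The function names are illustrative, not taken from the authors' code.

```python
from typing import Dict, List, Sequence


def one_vs_rest_counts(y_true: Sequence[int], y_pred: Sequence[int],
                       cls: int) -> Dict[str, int]:
    """TP, TN, FP and FN for one class, treating every other class as negative."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == cls and p == cls)
    fp = sum(1 for t, p in pairs if t != cls and p == cls)
    fn = sum(1 for t, p in pairs if t == cls and p != cls)
    tn = len(pairs) - tp - fp - fn
    return {"TP": tp, "TN": tn, "FP": fp, "FN": fn}


def safe_div(num: float, den: float) -> float:
    """Return 0.0 instead of raising when the denominator is zero."""
    return num / den if den else 0.0


def macro_metrics(y_true: Sequence[int], y_pred: Sequence[int],
                  classes: Sequence[int] = (0, 1, 2, 3)) -> Dict[str, float]:
    """Accuracy plus macro-averaged precision, recall and F1 (assumed averaging)."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    accuracy = safe_div(correct, len(y_true))
    precisions: List[float] = []
    recalls: List[float] = []
    f1s: List[float] = []
    for c in classes:
        k = one_vs_rest_counts(y_true, y_pred, c)
        precision = safe_div(k["TP"], k["TP"] + k["FP"])
        recall = safe_div(k["TP"], k["TP"] + k["FN"])
        precisions.append(precision)
        recalls.append(recall)
        # Harmonic mean of precision and recall for this class.
        f1s.append(safe_div(2 * precision * recall, precision + recall))
    n = len(classes)
    return {"accuracy": accuracy,
            "precision": sum(precisions) / n,
            "recall": sum(recalls) / n,
            "f1": sum(f1s) / n}


if __name__ == "__main__":
    y_true = [0, 0, 1, 1, 2, 3]
    y_pred = [0, 1, 1, 1, 2, 0]
    print(macro_metrics(y_true, y_pred))
```

If micro averaging were used instead, precision, recall and F1 would all equal accuracy for single-label multi-class data, because every prediction contributes exactly one TP or one FP.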

3.9. Models Deployment

Algorithm 2: Process Input through GUI

4. Results

4.1. Experimental Setup and Hyper-Parameter Tuning

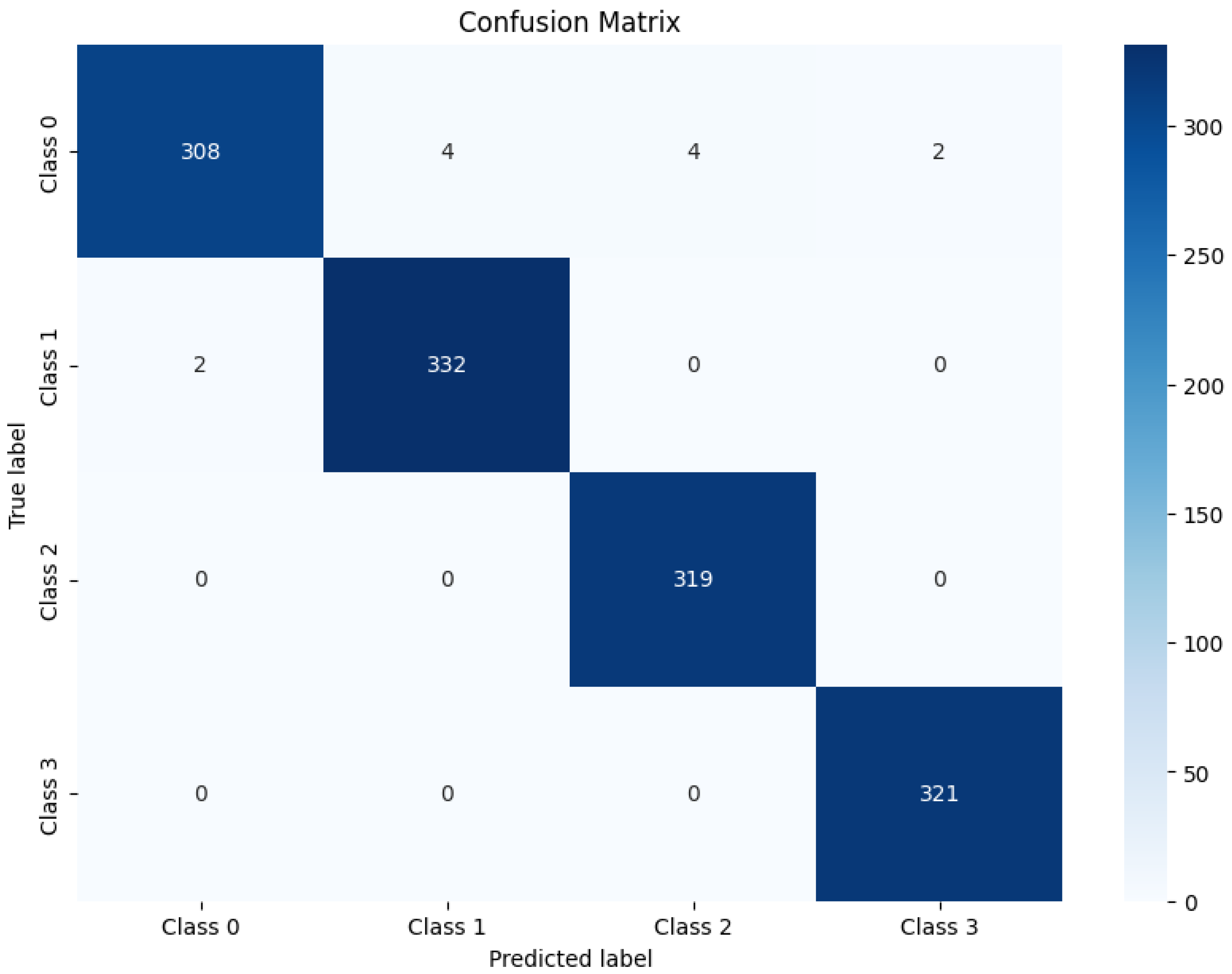

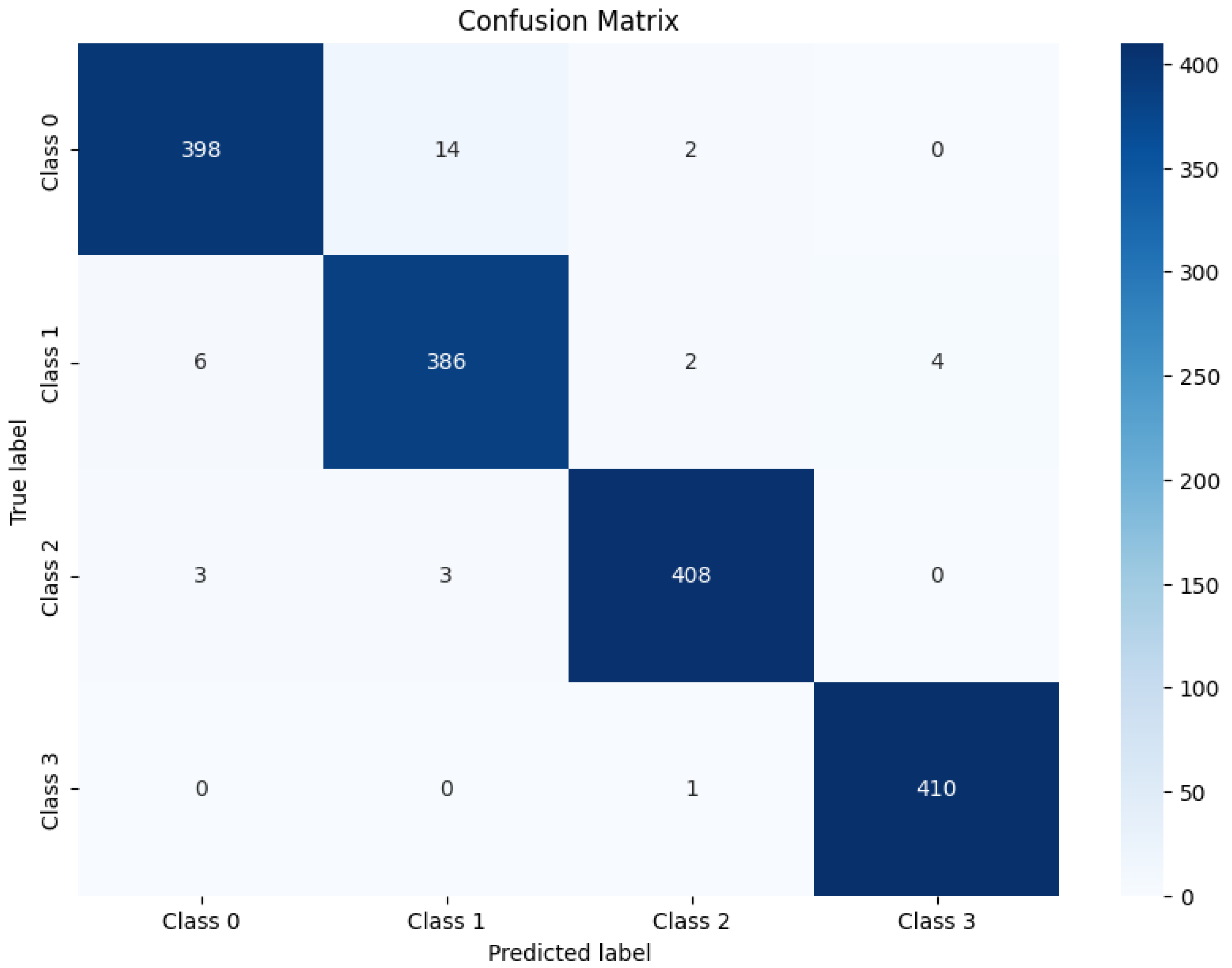

4.2. Experimental Results on Public-Dataset

4.3. Experimental Results on Private-Dataset

4.4. Results of Model Deployment

5. Discussion

6. Conclusions

6.1. Future Work

- Use multi-label classification to represent the problem more realistically. For example, if a comment contains both aggressive and bullying content, the result would be displayed under both the aggressive and the bullying classification types.

- In this study, we have focused only on English-language data. As future work, we will collect data in multiple languages for multi-class classification of multi-modal data, so that cyberbullying can be classified in languages such as Bengali, Hindi, Urdu, and Norwegian.

- Use stickers and GIF data along with our existing data to classify multi-class cyberbullying. This would allow the system to handle the full range of content found in the comment sections of short social media videos.

- Develop other models, such as Swin Transformers (Shifted Window Transformers), Multi-scale Vision Transformers, and BLIP-V2 (Bootstrapping Language-Image Pre-training Version 2).
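The first future-work item contrasts the current single-label, multi-class output with a multi-label output. The sketch below illustrates that difference on one vector of raw scores (logits): multi-class prediction keeps only the arg-max class (equivalent to the arg-max of a softmax), whereas multi-label prediction applies an independent sigmoid to each label and keeps every label above a threshold. The label set reuses the four classes of Section 3.3.2, and the 0.5 threshold and function names are assumptions for illustration.

```python
import math
from typing import List, Sequence

# Assumed label set: the four classes of Section 3.3.2.
LABELS = ["Non-Bullying", "Defaming", "Offensive", "Aggressive"]


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def multi_class_predict(logits: Sequence[float]) -> str:
    """Current setting: exactly one label, the arg-max class
    (the same class a softmax over the logits would pick)."""
    best = max(range(len(logits)), key=lambda i: logits[i])
    return LABELS[best]


def multi_label_predict(logits: Sequence[float],
                        threshold: float = 0.5) -> List[str]:
    """Future-work setting: each label is scored independently with a sigmoid,
    so a single input may receive several labels (or none)."""
    return [name for name, z in zip(LABELS, logits) if sigmoid(z) >= threshold]


if __name__ == "__main__":
    scores = [-3.0, -2.0, 1.5, 2.0]
    print(multi_class_predict(scores))
    print(multi_label_predict(scores))
```

With these example scores, the multi-class head reports only the strongest class, while the multi-label head reports both classes whose sigmoid score exceeds the threshold, which is the behaviour the future-work item describes.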

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| SM | Social Media |
| DL | Deep Learning |
| CNN | Convolutional Neural Network |
| LSTM | Long Short-Term Memory |
| GRU | Gated Recurrent Unit |
| RoBERTa | Robustly Optimized BERT Pre-training Approach |
| ViT | Vision Transformer |
| ResNet | Residual Network |
| BERT | Bidirectional Encoder Representations from Transformers |
| DistilBERT | Distilled BERT |
| TP | True Positive |
| TN | True Negative |
| FP | False Positive |
| FN | False Negative |
| OCR | Optical Character Recognition |
| GUI | Graphical User Interface |

References

1. Mayfield, A. What is social media; 2008.

2. Le Compte, D.; Klug, D. “It’s Viral!” - A Study of the Behaviors, Practices, and Motivations of TikTok Users and Social Activism. In Proceedings of the Companion Publication of the 2021 Conference on Computer Supported Cooperative Work and Social Computing; 2021; pp. 108–111.

3. Kaye, D.B.V.; Zeng, J.; Wikstrom, P. TikTok: Creativity and culture in short video; John Wiley & Sons, 2022.

4. Edwards, L.; Kontostathis, A.E.; Fisher, C. Cyberbullying, race/ethnicity and mental health outcomes: A review of the literature. Media and Communication 2016, 4, 71–78.

5. Collantes, L.H.; Martafian, Y.; Khofifah, S.N.; Fajarwati, T.K.; Lassela, N.T.; Khairunnisa, M. The impact of cyberbullying on mental health of the victims. In Proceedings of the 2020 4th International Conference on Vocational Education and Training (ICOVET); IEEE, 2020; pp. 30–35.

6. Livingstone, S.; Haddon, L.; Hasebrink, U.; Ólafsson, K.; O’Neill, B.; Smahel, D.; Staksrud, E. EU kids online: Findings, methods, recommendations; EU Kids Online, LSE: London, 2014. Available online: http://lsedesignunit.com/EUKidsOnline.

7. Tokunaga, R.S. Following you home from school: A critical review and synthesis of research on cyberbullying victimization. Computers in Human Behavior 2010, 26, 277–287.

8. Qiu, J.; Moh, M.; Moh, T.S. Multi-modal detection of cyberbullying on Twitter. In Proceedings of the 2022 ACM Southeast Conference; 2022; pp. 9–16.

9. Chen, Y.; Zhou, Y.; Zhu, S.; Xu, H. Detecting offensive language in social media to protect adolescent online safety. In Proceedings of the 2012 International Conference on Privacy, Security, Risk and Trust and 2012 International Conference on Social Computing; IEEE, 2012; pp. 71–80.

10. Van der Zwaan, J.; Dignum, V.; Jonker, C. Simulating peer support for victims of cyberbullying. In Proceedings of the 22nd Benelux Conference on Artificial Intelligence (BNAIC 2010); 2010.

11. Dingming Yang, Yanrong Cui, Z. Y.; Yuan, H. Deep Learning Based Steel Pipe Weld Defect Detection. Applied Artificial Intelligence 2021, 35, 1237–1249.

12. Mabrook, S. Al-Rakhami, S.A.A.; Alawwad, A. Effective Skin Cancer Diagnosis Through Federated Learning and Deep Convolutional Neural Networks. Applied Artificial Intelligence 2024, 38, 2364145.

13. Nunavath, V.; Goodwin, M. The Use of Artificial Intelligence in Disaster Management - A Systematic Literature Review. In Proceedings of the 2019 International Conference on Information and Communication Technologies for Disaster Management (ICT-DM); 2019; pp. 1–8.

14. Sang, S.; Li, L. A Stock Prediction Method Based on Heterogeneous Bidirectional LSTM. Applied Sciences 2024, 14, 9158.

15. Chandrasekaran, S.; Singh Pundir, A.K.; Lingaiah, T.B.; et al. Deep learning approaches for cyberbullying detection and classification on social media. Computational Intelligence and Neuroscience 2022, 2022.

16. Dadvar, M.; Eckert, K. Cyberbullying detection in social networks using deep learning based models. In Big Data Analytics and Knowledge Discovery: 22nd International Conference, DaWaK 2020, Bratislava, Slovakia, 14–17 September 2020, Proceedings 22; Springer, 2020; pp. 245–255.

17. Singh, N.K.; Singh, P.; Chand, S. Deep Learning based Methods for Cyberbullying Detection on Social Media. In Proceedings of the 2022 International Conference on Computing, Communication, and Intelligent Systems (ICCCIS); IEEE, 2022; pp. 521–525.

18. Alotaibi, M.; Alotaibi, B.; Razaque, A. A multichannel deep learning framework for cyberbullying detection on social media. Electronics 2021, 10, 2664.

19. Faraj, A.; Utku, S. Comparative Analysis of Word Embeddings for Multiclass Cyberbullying Detection. UHD Journal of Science and Technology 2024, 8, 55–63.

20. Ahmadinejad, M.; Shahriar, N.; Fan, L. Self-Training for Cyberbully Detection: Achieving High Accuracy with a Balanced Multi-Class Dataset. PhD thesis, Faculty of Graduate Studies and Research, University of Regina, 2023.

21. Maity, K.; Jha, P.; Saha, S.; Bhattacharyya, P. A multitask framework for sentiment, emotion and sarcasm aware cyberbullying detection from multi-modal code-mixed memes. In Proceedings of the 45th International ACM SIGIR Conference on Research and Development in Information Retrieval; 2022; pp. 1739–1749.

22. Titli, S.R.; Paul, S. Automated Bengali abusive text classification: Using Deep Learning Techniques. In Proceedings of the 2023 International Conference on Advances in Electronics, Communication, Computing and Intelligent Information Systems (ICAECIS); IEEE, 2023; pp. 1–6.

23. Romim, N.; Ahmed, M.; Talukder, H.; Saiful Islam, M. Hate speech detection in the Bengali language: A dataset and its baseline evaluation. In Proceedings of International Joint Conference on Advances in Computational Intelligence: IJCACI 2020; Springer, 2021; pp. 457–468.

24. Karim, M.R.; Dey, S.K.; Islam, T.; Shajalal, M.; Chakravarthi, B.R. Multimodal hate speech detection from Bengali memes and texts. In Proceedings of the International Conference on Speech and Language Technologies for Low-resource Languages; Springer, 2022; pp. 293–308.

25. Haque, R.; Islam, N.; Tasneem, M.; Das, A.K. Multi-class sentiment classification on Bengali social media comments using machine learning. International Journal of Cognitive Computing in Engineering 2023, 4, 21–35.

26. Kumari, K.; Singh, J.P.; Dwivedi, Y.K.; Rana, N.P. Multi-modal aggression identification using convolutional neural network and binary particle swarm optimization. Future Generation Computer Systems 2021, 118, 187–197.

27. Barse, S.; Bhagat, D.; Dhawale, K.; Solanke, Y.; Kurve, D. Cyber-Trolling Detection System. Available at SSRN 4340372, 2023.

28. Mollas, I.; Chrysopoulou, Z.; Karlos, S.; Tsoumakas, G. ETHOS: a multi-label hate speech detection dataset. Complex & Intelligent Systems 2022, 8, 4663–4678.

29. Hossain, E.; Sharif, O.; Hoque, M.M.; Dewan, M.A.A.; Siddique, N.; Hossain, M.A. Identification of Multilingual Offense and Troll from Social Media Memes Using Weighted Ensemble of Multimodal Features. Journal of King Saud University - Computer and Information Sciences 2022, 34, 6605–6623.

30. Van Hee, C.; Lefever, E.; Verhoeven, B.; Mennes, J.; Desmet, B.; De Pauw, G.; Daelemans, W.; Hoste, V. Detection and fine-grained classification of cyberbullying events. In Proceedings of the International Conference Recent Advances in Natural Language Processing; 2015; pp. 672–680.

31. Van Hee, C.; Jacobs, G.; Emmery, C.; Desmet, B.; Lefever, E.; Verhoeven, B.; De Pauw, G.; Daelemans, W.; Hoste, V. Automatic detection of cyberbullying in social media text. PLoS ONE 2018, 13, e0203794.

32. Hamza, A.; Javed, A.R.; Iqbal, F.; Yasin, A.; Srivastava, G.; Połap, D.; Gadekallu, T.R.; Jalil, Z. Multimodal Religiously Hateful Social Media Memes Classification based on Textual and Image Data. ACM Transactions on Asian and Low-Resource Language Information Processing, 2023.

33. Hasan, M.T.; Hossain, M.A.E.; Mukta, M.S.H.; Akter, A.; Ahmed, M.; Islam, S. A review on deep-learning-based cyberbullying detection. Future Internet 2023, 15, 179.

34. Dewani, A.; Memon, M.A.; Bhatti, S. Cyberbullying detection: advanced preprocessing techniques & deep learning architecture for Roman Urdu data. Journal of Big Data 2021, 8, 160.

35. Ahsan, S.; Hossain, E.; Sharif, O.; Das, A.; Hoque, M.M.; Dewan, M. A Multimodal Framework to Detect Target Aware Aggression in Memes. In Proceedings of the 18th Conference of the European Chapter of the Association for Computational Linguistics (Volume 1: Long Papers); 2024; pp. 2487–2500.

36. Paciello, M.; D’Errico, F.; Saleri, G.; Lamponi, E. Online sexist meme and its effects on moral and emotional processes in social media. Computers in Human Behavior 2021, 116, 106655.

37. Sharma, S.; Alam, F.; Akhtar, M.S.; Dimitrov, D.; Martino, G.D.S.; Firooz, H.; Halevy, A.; Silvestri, F.; Nakov, P.; Chakraborty, T. Detecting and understanding harmful memes: A survey. arXiv 2022, arXiv:2205.04274.

38. Pandeya, Y.R.; Lee, J. Deep learning-based late fusion of multimodal information for emotion classification of music video. Multimedia Tools and Applications 2021, 80, 2887–2905.

39. Gupta, P.; Gupta, H.; Sinha, A. DSC IIT-ISM at SemEval-2020 Task 8: Bi-fusion techniques for deep meme emotion analysis. arXiv 2020, arXiv:2008.00825.

40. Cai, Y.; Cai, H.; Wan, X. Multi-modal sarcasm detection in Twitter with hierarchical fusion model. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics; 2019; pp. 2506–2515.

41. Room, C. Confusion matrix. Mach. Learn. 2019, 6, 27.

42. Yue, T.; Mao, R.; Wang, H.; Hu, Z.; Cambria, E. KnowleNet: Knowledge fusion network for multimodal sarcasm detection. Information Fusion 2023, 100, 101921.

43. Aggarwal, S.; Pandey, A.; Vishwakarma, D.K. Modelling Visual Semantics via Image Captioning to extract Enhanced Multi-Level Cross-Modal Semantic Incongruity Representation with Attention for Multimodal Sarcasm Detection. arXiv 2024, arXiv:2408.02595.


| Study | Dataset Collection Sources | Model Name | Accuracy | Label Type | Limitation |
|---|---|---|---|---|---|
| [21] | Twitter and Reddit memes | BERT, ResNet, GRU | Text accuracy: 61.14%, image accuracy: 63.36% | Multi-label | Low accuracy |
| [22] | YouTube comments | Bengali BERT | Accuracy: 70.6%, F1 score: 0.705 | Multi-class | Textual data only |
| [26] | Facebook, Twitter, Instagram | CNN, BPSO | F1 score: 0.74 | Multi-class | Focused on aggression level |
| [29] | MultiOFF and TamilMemes | VGG19 and m-DistilBERT | Weighted F1 scores: 66.73% and 58.59% | Multi-class | Focused on aggression level |
| [27] | YouTube, TikTok, Twitter, and other social sites | Random Forest | Accuracy: 96.50% | Multi-class | Textual data only |
| [28] | Reddit and YouTube | BiLSTM | Accuracy: 80.36% | Multi-label | Textual data only |
| [20] | | RoBERTa | Accuracy: 99.80% (multi-class classification) | Multi-class | Textual data only |

| Model type | Input Layer | Middle Layers | Fully-Connected Layer | Output Layer |
|---|---|---|---|---|
| LSTM for extracted text data + ResNet for extracted image data | Extracted text tokens as embeddings + Extracted images (e.g., 224x224x3 RGB) | 2 LSTM layers + 50 Convolutional layers (with residual connections) | Combining LSTM and ResNet features as hybrid (LSTM+ResNet) model | Softmax dense layer for four output classes |
| LSTM for extracted text data + CNN for extracted image data | Extracted text tokens as embeddings + Extracted images (e.g., 224x224x3 RGB) | 2 LSTM layers + 1 Convolutional layer + 1 Activation layer + Additional layers (e.g., Pooling) | Combining LSTM and CNN features as hybrid (LSTM+CNN) model | Softmax dense layer for four output classes |
| GRU for extracted text data + ResNet for extracted image data | Extracted text tokens as embeddings + Extracted images (e.g., 224x224x3 RGB) | 2 GRU layers + 50 Convolutional layers (with residual connections) | Combining GRU and ResNet features as hybrid (GRU+ResNet) model | Softmax dense layer for four output classes |
| GRU for extracted text data + CNN for extracted image data | Extracted text tokens as embeddings + Extracted images (e.g., 224x224x3 RGB) | 2 GRU layers + 1 Convolutional layer + 1 Activation layer + Additional layers (e.g., Pooling) | Combining GRU and CNN features as hybrid (GRU+CNN) model | Softmax dense layer for four output classes |
| BERT for extracted text data + ResNet for extracted image data | Extracted text tokens with positional embeddings + Extracted images (e.g., 224x224x3 RGB) | 12 Transformer layers + 50 Convolutional layers (with residual connections) | Combining BERT and ResNet features as hybrid (BERT+ResNet) model | Softmax dense layer for four output classes |
| BERT for extracted text data + CNN for extracted image data | Extracted text tokens with positional embeddings + Extracted images (e.g., 224x224x3 RGB) | 12 Transformer layers + 1 Convolutional layer + 1 Activation layer + Additional layers (e.g., Pooling) | Combining BERT and CNN features as hybrid (BERT+CNN) model | Softmax dense layer for four output classes |
| LSTM for extracted text data + ViT for extracted image data | Extracted text tokens as embeddings + Extracted images divided into patches (e.g., 16x16) | 2 LSTM layers + 12 Transformer layers | Combining LSTM and ViT features as hybrid (LSTM+ViT) model | Softmax dense layer for four output classes |
| GRU for extracted text data + ViT for extracted image data | Extracted text tokens as embeddings + Extracted images divided into patches (e.g., 16x16) | 2 GRU layers + 12 Transformer layers | Combining GRU and ViT features as hybrid (GRU+ViT) model | Softmax dense layer for four output classes |
| BERT for extracted text data + ViT for extracted image data | Extracted text tokens with positional embeddings + Extracted images divided into patches (e.g., 16x16) | 12 Transformer layers + 12 Transformer layers | Combining BERT and ViT features as hybrid (BERT+ViT) model | Softmax dense layer for four output classes |
| DistilBERT for extracted text data + ResNet for extracted image data | Extracted text tokens with positional embeddings + Extracted images (e.g., 224x224x3 RGB) | 6 Transformer layers + 50 Convolutional layers (with residual connections) | Combining DistilBERT and ResNet features as hybrid (DistilBERT+ResNet) model | Softmax dense layer for four output classes |
| DistilBERT for extracted text data + CNN for extracted image data | Extracted text tokens with positional embeddings + Extracted images (e.g., 224x224x3 RGB) | 6 Transformer layers + 1 Convolutional layer + 1 Activation layer + Additional layers (e.g., Pooling) | Combining DistilBERT and CNN features as hybrid (DistilBERT+CNN) model | Softmax dense layer for four output classes |
| DistilBERT for extracted text data + ViT for extracted image data | Extracted text tokens with positional embeddings + Extracted images divided into patches (e.g., 16x16) | 6 Transformer layers + 12 Transformer layers | Combining DistilBERT and ViT features as hybrid (DistilBERT+ViT) model | Softmax dense layer for four output classes |
| RoBERTa for extracted text data + ResNet for extracted image data | Extracted text tokens with positional embeddings + Extracted images (e.g., 224x224x3 RGB) | 12 Transformer layers + 50 Convolutional layers (with residual connections) | Combining RoBERTa and ResNet features as hybrid (RoBERTa+ResNet) model | Softmax dense layer for four output classes |
| RoBERTa for extracted text data + CNN for extracted image data | Extracted text tokens with positional embeddings + Extracted images (e.g., 224x224x3 RGB) | 12 Transformer layers + 1 Convolutional layer + 1 Activation layer + Additional layers (e.g., Pooling) | Combining RoBERTa and CNN features as hybrid (RoBERTa+CNN) model | Softmax dense layer for four output classes |
| RoBERTa for extracted text data + ViT for extracted image data | Extracted text tokens with positional embeddings + Extracted images divided into patches (e.g., 16x16) | 12 Transformer layers + 12 Transformer layers | Combining RoBERTa and ViT features as hybrid (RoBERTa+ViT) model | Softmax dense layer for four output classes |
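The architecture table describes every hybrid as two modality-specific encoders whose features are combined in a fully-connected layer and passed to a four-way softmax. The pure-Python sketch below shows only that final fusion head, under stated assumptions: the encoders (for example RoBERTa and ViT) are treated as black boxes that have already produced pooled feature vectors, fusion is plain concatenation, and the weights are randomly initialised rather than trained. `HybridHead` is a hypothetical name, not the authors' implementation.

```python
import math
import random
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax: subtract the max logit before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class HybridHead:
    """Hypothetical fusion head: concat(text, image) -> dense layer -> softmax."""

    def __init__(self, text_dim: int, image_dim: int,
                 num_classes: int = 4, seed: int = 0) -> None:
        rng = random.Random(seed)  # fixed seed so the sketch is reproducible
        self.text_dim, self.image_dim = text_dim, image_dim
        in_dim = text_dim + image_dim
        # Untrained weights drawn from N(0, 0.02^2); training is out of scope here.
        self.weights = [[rng.gauss(0.0, 0.02) for _ in range(in_dim)]
                        for _ in range(num_classes)]
        self.bias = [0.0] * num_classes

    def __call__(self, text_feat: Sequence[float],
                 image_feat: Sequence[float]) -> List[float]:
        if len(text_feat) != self.text_dim or len(image_feat) != self.image_dim:
            raise ValueError("feature sizes do not match the head's dimensions")
        fused = list(text_feat) + list(image_feat)  # feature-level fusion by concatenation
        logits = [sum(w * x for w, x in zip(row, fused)) + b
                  for row, b in zip(self.weights, self.bias)]
        return softmax(logits)  # one probability per output class


if __name__ == "__main__":
    head = HybridHead(text_dim=8, image_dim=8)
    probs = head([0.1] * 8, [0.2] * 8)
    print(len(probs), round(sum(probs), 6))
```

With base-size encoders such as RoBERTa-base and ViT-Base, `text_dim` and `image_dim` would typically both be 768; small dimensions are used above only to keep the example light.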

| Hybrid Deep-Learning Model | Test Accuracy | Recall | F1-Score | Precision |
|---|---|---|---|---|
| LSTM+ResNet | 0.7085 | 0.71 | 0.665 | 0.665 |
| LSTM+CNN | 0.7285 | 0.73 | 0.685 | 0.685 |
| LSTM+ViT | 0.7335 | 0.735 | 0.69 | 0.69 |
| GRU+ResNet | 0.723 | 0.715 | 0.655 | 0.63 |
| GRU+CNN | 0.743 | 0.735 | 0.675 | 0.65 |
| GRU+ViT | 0.748 | 0.74 | 0.68 | 0.655 |
| BERT+ResNet | 0.9585 | 0.9585 | 0.9585 | 0.9585 |
| BERT+CNN | 0.9785 | 0.9785 | 0.9785 | 0.9785 |
| BERT+ViT | 0.9835 | 0.9835 | 0.9835 | 0.9835 |
| DistilBERT+ResNet | 0.9655 | 0.9655 | 0.9655 | 0.9655 |
| DistilBERT+CNN | 0.9855 | 0.9855 | 0.9855 | 0.9855 |
| DistilBERT+ViT | 0.9905 | 0.9905 | 0.9905 | 0.9905 |
| RoBERTa+ResNet | 0.966 | 0.966 | 0.966 | 0.966 |
| RoBERTa+CNN | 0.986 | 0.986 | 0.986 | 0.986 |
| RoBERTa+ViT | 0.9924 | 0.9924 | 0.9924 | 0.9924 |

| Hybrid Deep-Learning Model | Test Accuracy | Recall | F1-Score | Precision | ROC-AUC |
|---|---|---|---|---|---|
| RoBERTa+ViT | 0.961 | 0.9599 | 0.9599 | 0.960 | 0.99 |

| Study | Dataset | Labels | Accuracy | Model Used |
|---|---|---|---|---|
| [32] | Dataset-1 | Hateful, Non-hateful | 70.60% | ResNeXt-152-based Masked R-CNN, BERT |
| [21] | Dataset-2 | Bullies, Attitude, Emotion, and Sarcasm Recognition | Text: 59.72%, Image: 59.39% | Text: BERT-GRU, Image: ResNet |
| [42] | Dataset-2 | Bullies, Attitude, Emotion, and Sarcasm Recognition | 64.35% | BERT, ResNet |
| [43] | Dataset-2 | Sarcasm Identification | Text: 63.83%, Image: 62.91% | Text: Cross-lingual Language Model, Image: Self-regulated ConvNet + Lightweight Attention |
| Our Result | Public-dataset (Dataset-1 + Dataset-2) | Non-bullying, Defaming, Offensive, Aggressive | 99.24% | Hybrid (RoBERTa + ViT) |
| Our Result | Private-dataset | Non-bullying, Defaming, Offensive, Aggressive | 96.1% | Hybrid (RoBERTa + ViT) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).