Submitted: 28 October 2024

Posted: 30 October 2024


Abstract

Keywords:

1. Introduction

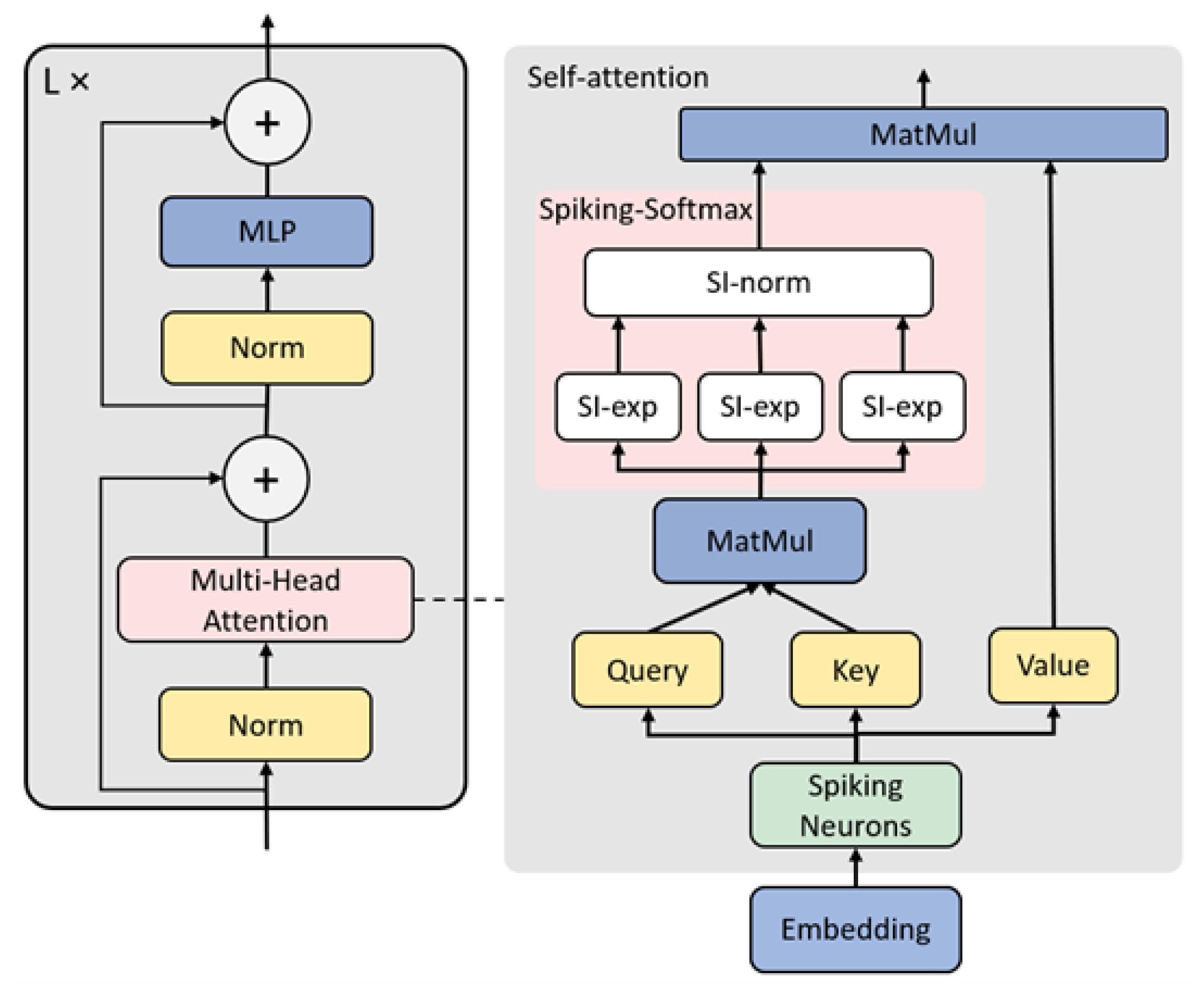

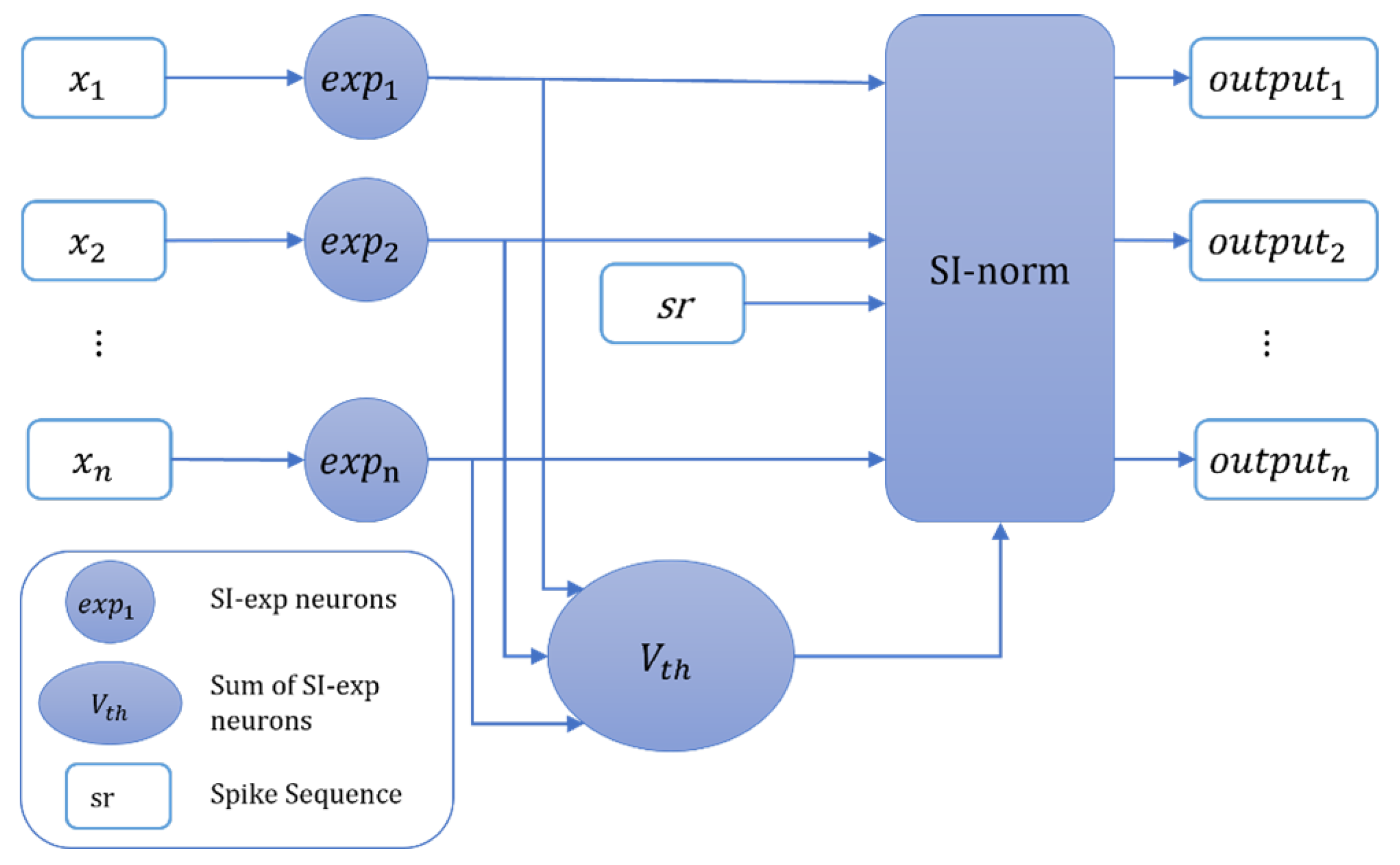

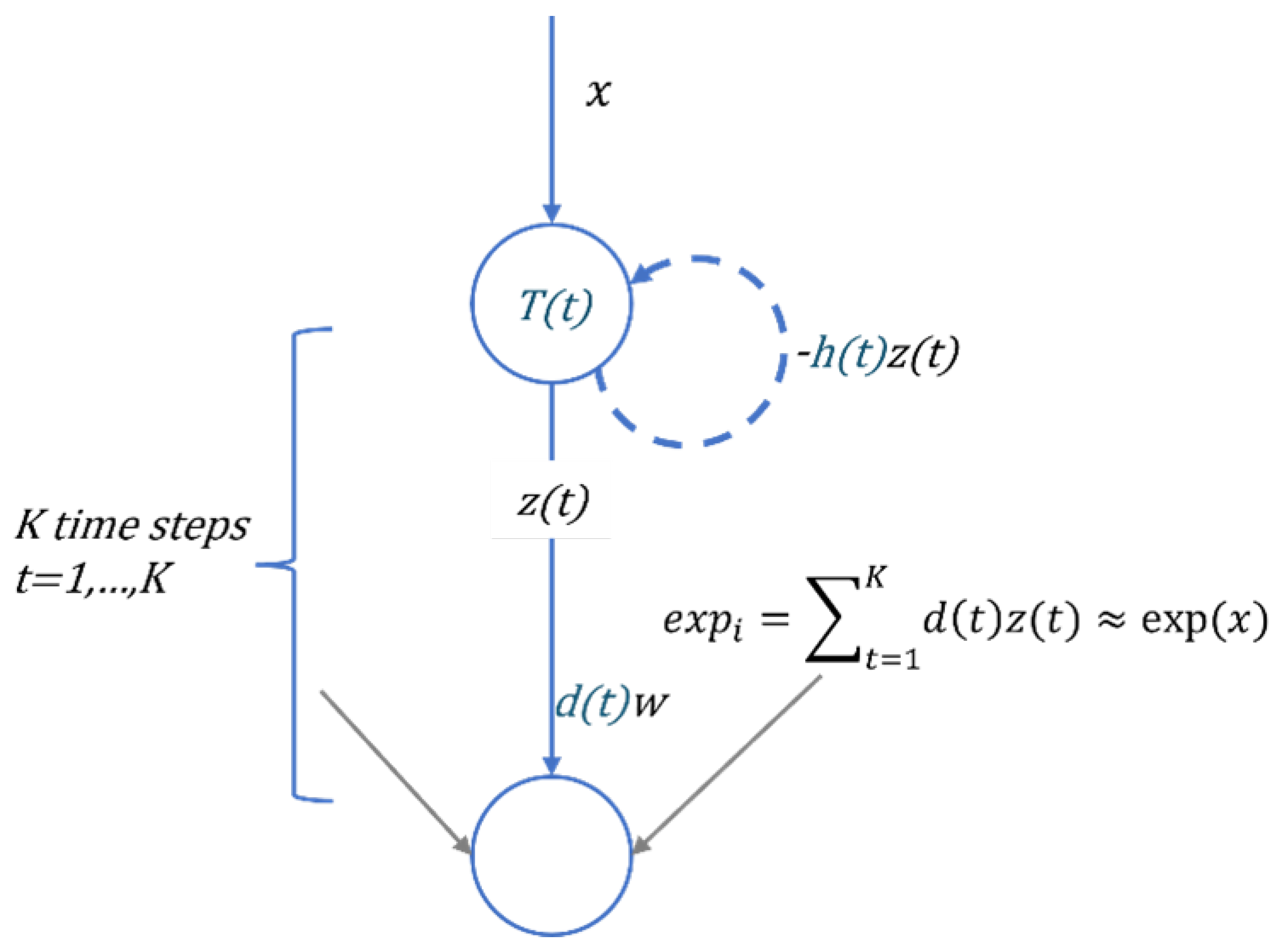

- We design a novel Spiking Exponential Neuron (SI-exp) and a Spiking Collaboration Normalized Neuron (SI-norm), which use the computational rules of spiking neurons to realize the exponential and normalization operations of the Softmax activation function. Integrating SI-exp and SI-norm yields the Spiking-Softmax method, which simulates the Softmax function in SNNs. This marks the first implementation of the Softmax activation function for the self-attention mechanism in SNNs. Building on these neurons, we propose the SIT-conversion method, which applies the full Transformer architecture to SNNs.

- We evaluate SIT-conversion on static datasets using various Transformer structures and show that the SNNs it generates from Transformer models achieve nearly identical accuracy to their ANN counterparts, that is, nearly lossless ANN-to-SNN conversion.
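The two-stage view of Softmax described in the first contribution (an exponential stage followed by a normalization stage) can be illustrated with a small numerical sketch. This is not the SI-exp or SI-norm neuron model, whose equations are not reproduced in this text. It is a plain NumPy stand-in that assumes the exponential stage emits a spike count between 0 and `time_steps` and the normalization stage divides each count by the row total. The function names and the rounding-based rate coding are hypothetical choices made only for illustration.

```python
import numpy as np

def softmax_reference(scores):
    """Standard Softmax along the last axis (numerically stabilized)."""
    shifted = scores - scores.max(axis=-1, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=-1, keepdims=True)

def softmax_rate_coded(scores, time_steps):
    """Two-stage Softmax with the exponential stage quantized to spike counts.

    Assumption: rate coding is modelled by rounding exp(.) to an integer
    count in [0, time_steps]; this is an illustrative stand-in only.
    """
    shifted = scores - scores.max(axis=-1, keepdims=True)
    e = np.exp(shifted)                      # values in (0, 1]
    # Stage 1: exponential stage, expressed as a spike count per element.
    counts = np.round(e * time_steps)
    # Stage 2: normalization stage, dividing by the total count of the row.
    # The row maximum always maps to `time_steps`, so the total is nonzero.
    total = counts.sum(axis=-1, keepdims=True)
    return counts / total

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    scores = rng.normal(size=(4, 8))         # toy attention scores
    exact = softmax_reference(scores)
    for t in (4, 12, 64, 1024):
        err = np.max(np.abs(softmax_rate_coded(scores, t) - exact))
        print(f"time_steps={t:5d}  max abs error={err:.5f}")
```

Under these assumptions the approximation error shrinks as the number of time steps grows, which is the kind of trade-off Section 4.2 examines for the actual Spiking-Softmax method.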

2. Related Works

3. Methods

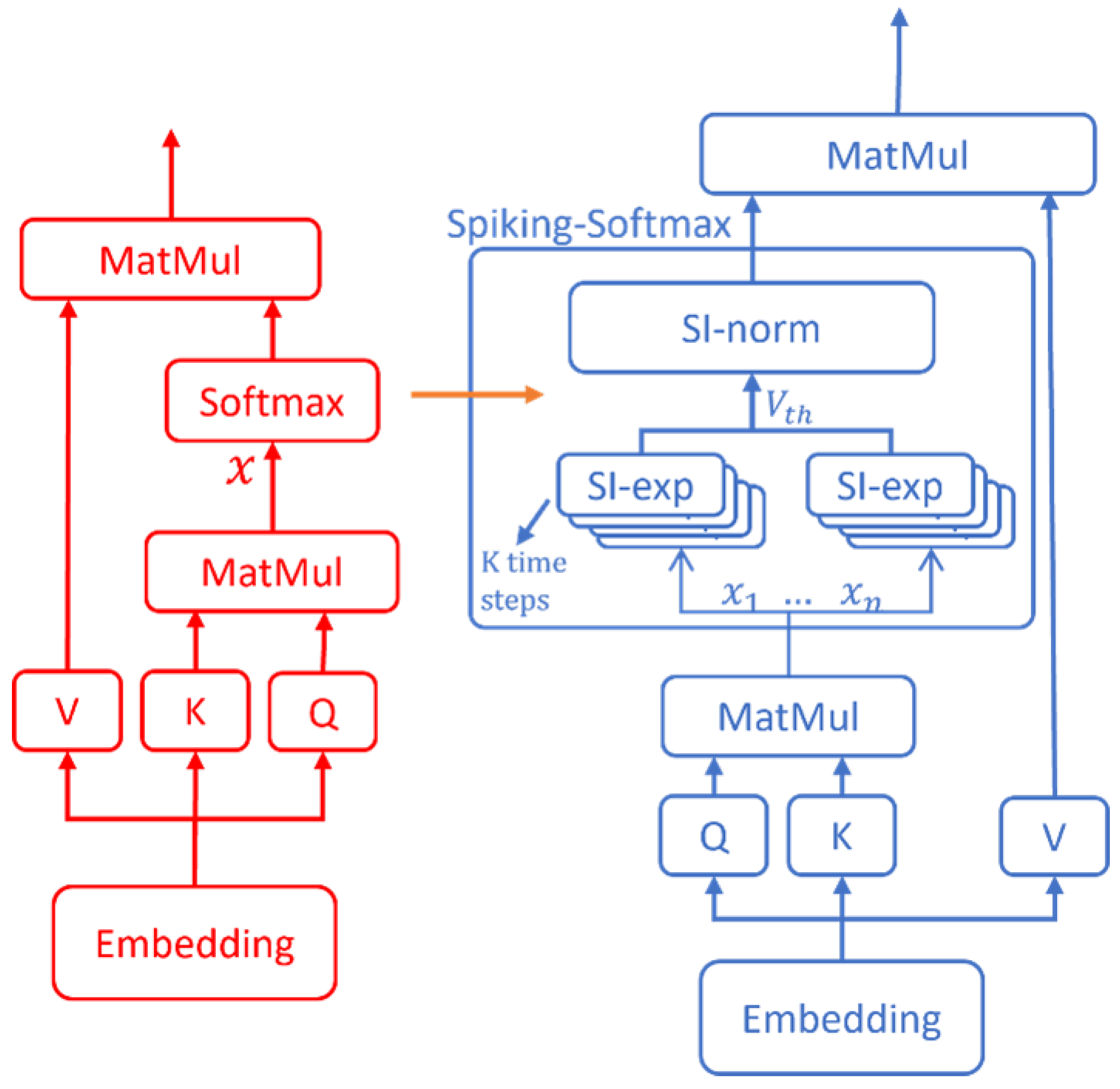

3.1. Structure of Spike Integrated Transformer

3.2. Spiking-Softmax Method

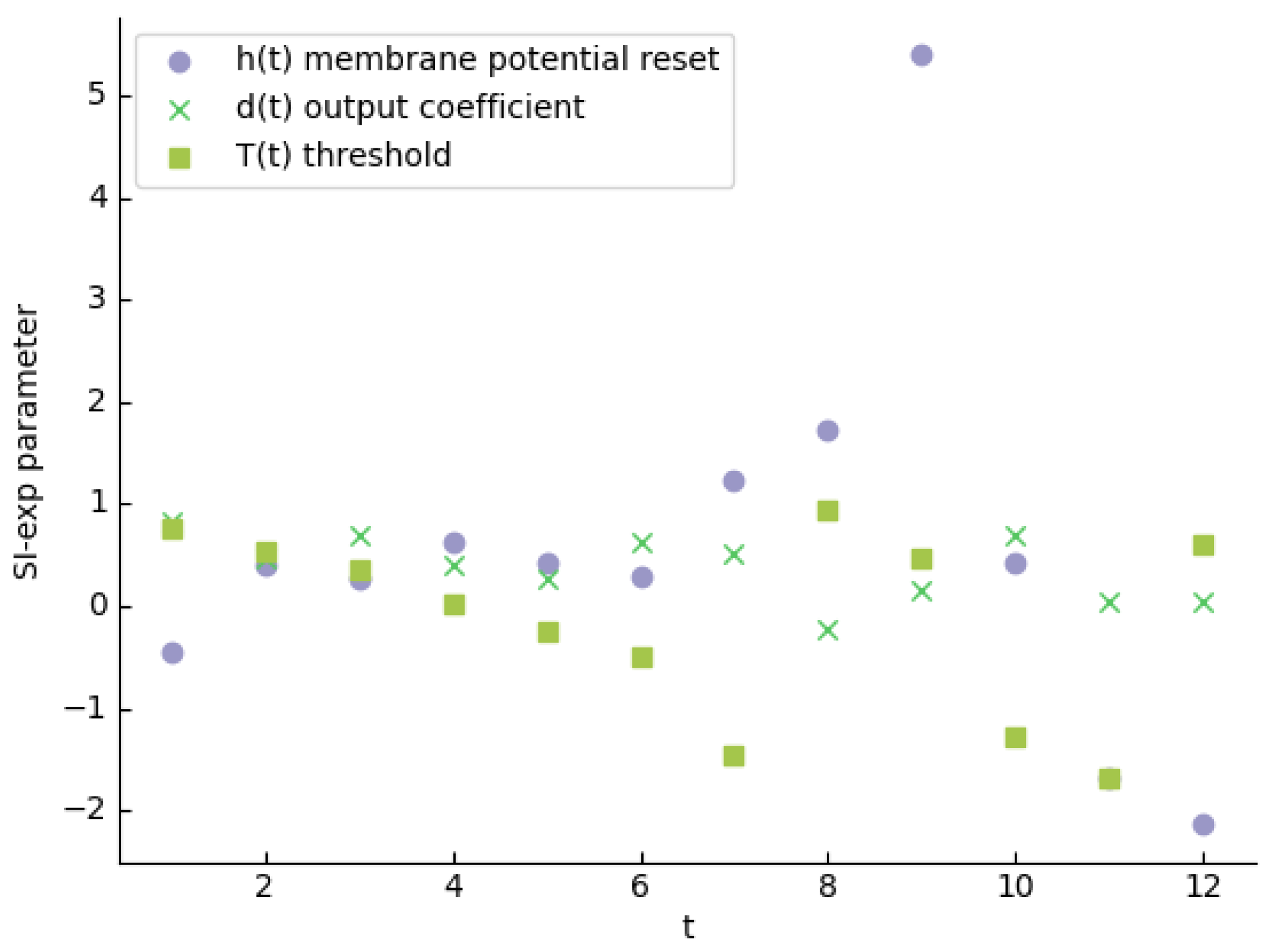

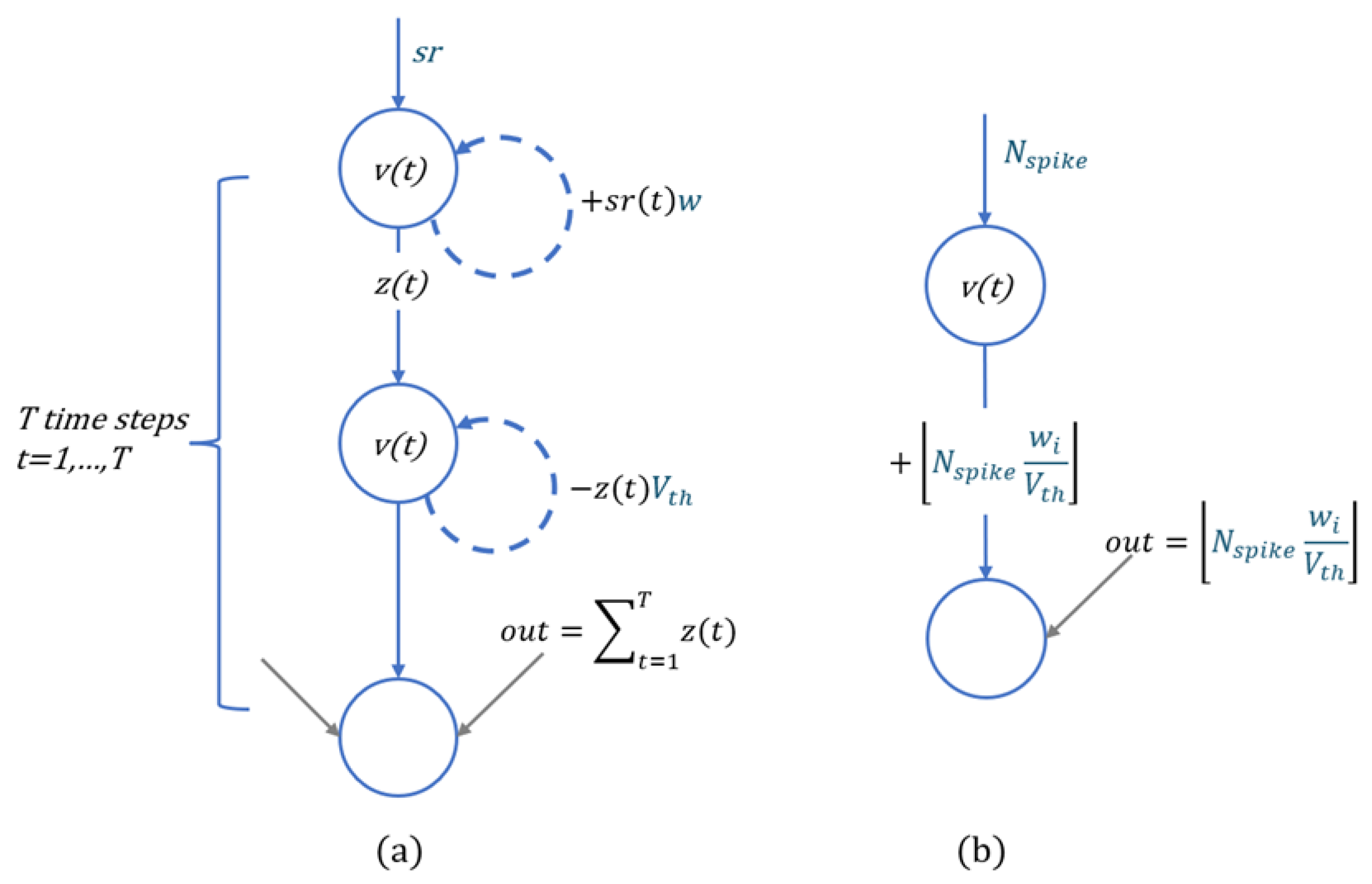

3.3. Spiking Exponential Neuron

3.4. Spiking Collaboration Normalized Neuron

3.5. ANN-to-SNN Conversion Method

4. Experiment and Results

4.1. Experiment Setup

4.1.1. Datasets and Parameter Setup

4.1.2. Calculation of Energy Efficiency

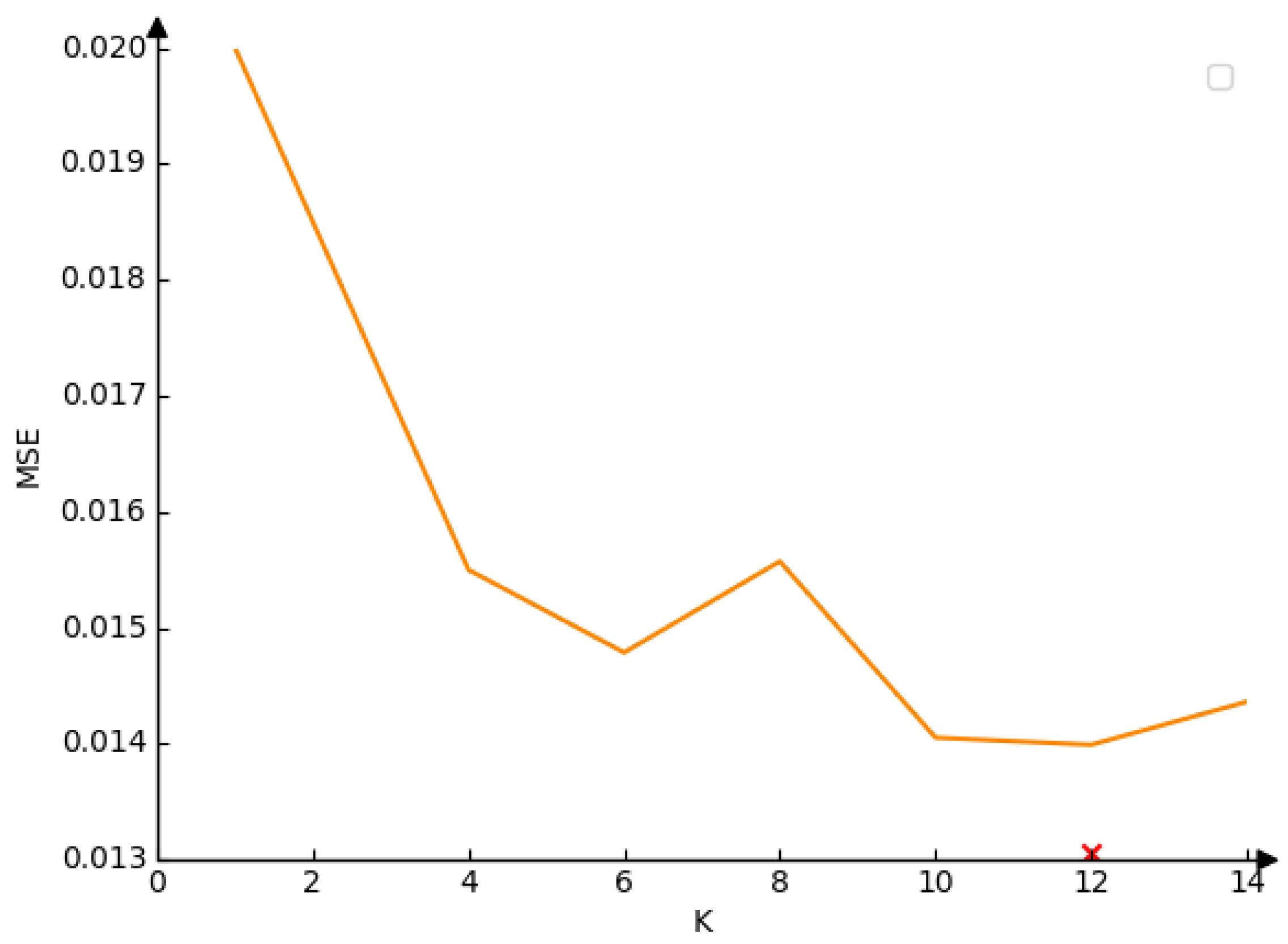

4.2. Loss of the Spiking-Softmax Method in Different Time Steps

4.3. Performance on Different Transformer Models

4.3.1. Performance on EdgeNeXt Model

4.3.2. Performance on Next-ViT Model

4.3.3. Performance on UniFormer Model

4.3.4. Comparison of Different Transformer Models

4.4. Comparison with SOTA SNN Models

5. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Maass, W. Networks of spiking neurons: the third generation of neural network models. Neural Networks 1997, 10, 1659–1671.

- Roy, K.; Jaiswal, A.; Panda, P. Towards spike-based machine intelligence with neuromorphic computing. Nature 2019, 575, 607–617.

- Wang, X.; Lin, X.; Dang, X. Supervised learning in spiking neural networks: A review of algorithms and evaluations. Neural Networks 2020, 125, 258–280.

- Fang, W.; Yu, Z.; Chen, Y.; Huang, T.; Masquelier, T.; Tian, Y. Deep residual learning in spiking neural networks. Advances in Neural Information Processing Systems 2021, 34, 21056–21069.

- Hu, Y.; Tang, H.; Pan, G. Spiking deep residual networks. IEEE Transactions on Neural Networks and Learning Systems 2021, 34, 5200–5205.

- Zheng, H.; Wu, Y.; Deng, L.; Hu, Y.; Li, G. Going deeper with directly-trained larger spiking neural networks. Proceedings of the AAAI conference on artificial intelligence, 2021, Vol. 35, pp. 11062–11070.

- Lotfi Rezaabad, A.; Vishwanath, S. Long short-term memory spiking networks and their applications. International Conference on Neuromorphic Systems 2020, 2020, pp. 1–9.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Advances in Neural Information Processing Systems 2017, 30.

- Zhao, W.X.; Zhou, K.; Li, J.; Tang, T.; Wang, X.; Hou, Y.; Min, Y.; Zhang, B.; Zhang, J.; Dong, Z.; others. A survey of large language models. arXiv preprint arXiv:2303.18223 2023.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; others. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint arXiv:2010.11929 2020.

- Yuan, L.; Chen, Y.; Wang, T.; Yu, W.; Shi, Y.; Jiang, Z.H.; Tay, F.E.; Feng, J.; Yan, S. Tokens-to-token vit: Training vision transformers from scratch on imagenet. Proceedings of the IEEE/CVF international conference on computer vision, 2021, pp. 558–567.

- Carion, N.; Massa, F.; Synnaeve, G.; Usunier, N.; Kirillov, A.; Zagoruyko, S. End-to-end object detection with transformers. European conference on computer vision. Springer, 2020, pp. 213–229.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin transformer: Hierarchical vision transformer using shifted windows. Proceedings of the IEEE/CVF international conference on computer vision, 2021, pp. 10012–10022.

- Zhu, X.; Su, W.; Lu, L.; Li, B.; Wang, X.; Dai, J. Deformable detr: Deformable transformers for end-to-end object detection. arXiv preprint arXiv:2010.04159 2020.

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; others. Language models are few-shot learners. Advances in Neural Information Processing Systems 2020, 33, 1877–1901.

- Zhou, Z.; Che, K.; Fang, W.; Tian, K.; Zhu, Y.; Yan, S.; Tian, Y.; Yuan, L. Spikformer V2: Join the High Accuracy Club on ImageNet with an SNN Ticket. arXiv preprint arXiv:2401.02020 2024.

- Zhou, C.; Yu, L.; Zhou, Z.; Zhang, H.; Ma, Z.; Zhou, H.; Tian, Y. Spikingformer: Spike-driven Residual Learning for Transformer-based Spiking Neural Network. arXiv preprint arXiv:2304.11954 2023.

- Zhou, Z.; Zhu, Y.; He, C.; Wang, Y.; Yan, S.; Tian, Y.; Yuan, L. Spikformer: When spiking neural network meets transformer. arXiv preprint arXiv:2209.15425 2022.

- Mueller, E.; Studenyak, V.; Auge, D.; Knoll, A. Spiking transformer networks: A rate coded approach for processing sequential data. 2021 7th International Conference on Systems and Informatics (ICSAI). IEEE, 2021, pp. 1–5.

- Wang, Z.; Fang, Y.; Cao, J.; Zhang, Q.; Wang, Z.; Xu, R. Masked spiking transformer. Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 1761–1771.

- Ho, N.D.; Chang, I.J. TCL: an ANN-to-SNN conversion with trainable clipping layers. 2021 58th ACM/IEEE Design Automation Conference (DAC). IEEE, 2021, pp. 793–798.

- Jiang, H.; Anumasa, S.; De Masi, G.; Xiong, H.; Gu, B. A unified optimization framework of ANN-SNN conversion: towards optimal mapping from activation values to firing rates. International Conference on Machine Learning. PMLR, 2023, pp. 14945–14974.

- Cordonnier, J.B.; Loukas, A.; Jaggi, M. On the relationship between self-attention and convolutional layers. arXiv preprint arXiv:1911.03584 2019.

- Siddique, A.; Vai, M.I.; Pun, S.H. A low cost neuromorphic learning engine based on a high performance supervised SNN learning algorithm. Scientific Reports 2023, 13, 6280.

- Guo, L.; Gao, Z.; Qu, J.; Zheng, S.; Jiang, R.; Lu, Y.; Qiao, H. Transformer-based Spiking Neural Networks for Multimodal Audio-Visual Classification. IEEE Transactions on Cognitive and Developmental Systems 2023.

- Zhang, J.; Dong, B.; Zhang, H.; Ding, J.; Heide, F.; Yin, B.; Yang, X. Spiking transformers for event-based single object tracking. Proceedings of the IEEE/CVF conference on Computer Vision and Pattern Recognition, 2022, pp. 8801–8810.

- Stöckl, C.; Maass, W. Optimized spiking neurons can classify images with high accuracy through temporal coding with two spikes. Nature Machine Intelligence 2021, 3, 230–238.

- Zhang, J.; Zhang, L. Spiking Neural Network Implementation on FPGA for Multiclass Classification. 2023 IEEE International Systems Conference (SysCon). IEEE, 2023, pp. 1–8.

- LeCun, Y.; Bottou, L.; Bengio, Y.; Haffner, P. Gradient-based learning applied to document recognition. Proceedings of the IEEE 1998, 86, 2278–2324.

- Krizhevsky, A.; Hinton, G.; others. Learning multiple layers of features from tiny images. 2009.

- Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Fei-Fei, L. Imagenet: A large-scale hierarchical image database. 2009 IEEE conference on computer vision and pattern recognition. IEEE, 2009, pp. 248–255.

- Rathi, N.; Roy, K. Diet-snn: A low-latency spiking neural network with direct input encoding and leakage and threshold optimization. IEEE Transactions on Neural Networks and Learning Systems 2021, 34, 3174–3182.

- Horowitz, M. 1.1 computing’s energy problem (and what we can do about it). 2014 IEEE international solid-state circuits conference digest of technical papers (ISSCC). IEEE, 2014, pp. 10–14.

- Maaz, M.; Shaker, A.; Cholakkal, H.; Khan, S.; Zamir, S.W.; Anwer, R.M.; Shahbaz Khan, F. Edgenext: efficiently amalgamated cnn-transformer architecture for mobile vision applications. European Conference on Computer Vision. Springer, 2022, pp. 3–20.

- Li, J.; Xia, X.; Li, W.; Li, H.; Wang, X.; Xiao, X.; Wang, R.; Zheng, M.; Pan, X. Next-vit: Next generation vision transformer for efficient deployment in realistic industrial scenarios. arXiv preprint arXiv:2207.05501 2022.

- Li, K.; Wang, Y.; Zhang, J.; Gao, P.; Song, G.; Liu, Y.; Li, H.; Qiao, Y. Uniformer: Unifying convolution and self-attention for visual recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence 2023, 45, 12581–12600.

- Loshchilov, I. Decoupled weight decay regularization. arXiv preprint arXiv:1711.05101 2017.

- Wang, Y.; Zhang, M.; Chen, Y.; Qu, H. Signed neuron with memory: Towards simple, accurate and high-efficient ann-snn conversion. International Joint Conference on Artificial Intelligence, 2022.

- Bu, T.; Fang, W.; Ding, J.; Dai, P.; Yu, Z.; Huang, T. Optimal ANN-SNN conversion for high-accuracy and ultra-low-latency spiking neural networks. arXiv preprint arXiv:2303.04347 2023.

| Model | Dataset | lr | weight-decay | epoch |
| --- | --- | --- | --- | --- |
| EdgeNeXt | CIFAR10 | 6e-3 | 0.05 | 100 |
| EdgeNeXt | CIFAR100 | 6e-3 | 0.05 | 100 |
| EdgeNeXt | ImageNet-1K | / | / | / |
| Next-ViT | CIFAR10 | 5e-4 | 0.1 | 100 |
| Next-ViT | CIFAR100 | 5e-4 | 0.1 | 100 |
| Next-ViT | ImageNet-1K | 5e-6 | 1e-8 | 20 |
| UniFormer | CIFAR10 | 5e-4 | 0.05 | 100 |
| UniFormer | CIFAR100 | 5e-4 | 0.05 | 100 |
| UniFormer | ImageNet-1K | 4e-5 | 0.05 | 25 |
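For readers who want to reuse the training settings listed in the table above, they can be captured as a small lookup structure. The sketch below is only one possible encoding: the names `TrainConfig`, `CONFIGS`, and `get_config` are hypothetical, and entries marked "/" in the table are stored as `None` because no value is given for them.

```python
from typing import NamedTuple, Optional

class TrainConfig(NamedTuple):
    """One row of the hyperparameter table (hypothetical container)."""
    lr: Optional[float]
    weight_decay: Optional[float]
    epochs: Optional[int]

# Values copied from the table; "/" entries are kept as None.
CONFIGS = {
    ("EdgeNeXt", "CIFAR10"): TrainConfig(6e-3, 0.05, 100),
    ("EdgeNeXt", "CIFAR100"): TrainConfig(6e-3, 0.05, 100),
    ("EdgeNeXt", "ImageNet-1K"): TrainConfig(None, None, None),
    ("Next-ViT", "CIFAR10"): TrainConfig(5e-4, 0.1, 100),
    ("Next-ViT", "CIFAR100"): TrainConfig(5e-4, 0.1, 100),
    ("Next-ViT", "ImageNet-1K"): TrainConfig(5e-6, 1e-8, 20),
    ("UniFormer", "CIFAR10"): TrainConfig(5e-4, 0.05, 100),
    ("UniFormer", "CIFAR100"): TrainConfig(5e-4, 0.05, 100),
    ("UniFormer", "ImageNet-1K"): TrainConfig(4e-5, 0.05, 25),
}

def get_config(model: str, dataset: str) -> TrainConfig:
    """Return the table row for a (model, dataset) pair."""
    return CONFIGS[(model, dataset)]

if __name__ == "__main__":
    for (model, dataset), cfg in CONFIGS.items():
        print(f"{model:10s} {dataset:12s} {cfg}")
```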

| Layers | ImageNet-1K (%) | CIFAR100 (%) | CIFAR10 (%) |
| --- | --- | --- | --- |
| 0 | 79.42 | 85.40 | 97.69 |
| 1 | 79.30 (-0.10) | 85.37 (-0.03) | 97.69 (0.00) |
| 2 | 79.30 (-0.10) | 85.42 (+0.02) | 97.68 (-0.01) |
| 3 | 79.18 (-0.24) | 85.37 (-0.03) | 97.69 (0.00) |

| Layers | ImageNet-1K (%) | CIFAR100 (%) | CIFAR10 (%) |
| --- | --- | --- | --- |
| 0 | 81.71 | 86.54 | 97.62 |
| 1 | 81.71 (0.00) | 86.55 (+0.01) | 97.61 (-0.01) |
| 2 | 81.72 (+0.01) | 86.55 (+0.01) | 97.61 (-0.01) |
| 3 | 81.72 (+0.01) | 86.53 (-0.01) | 97.61 (-0.01) |
| 4 | 81.72 (+0.01) | 86.55 (+0.01) | 97.61 (-0.01) |

| Layers | ImageNet-1K (%) | CIFAR100 (%) | CIFAR10 (%) |
| --- | --- | --- | --- |
| 0 | 77.16 | 85.70 | 97.46 |
| 1 | 77.16 (0.00) | 85.68 (-0.02) | 97.46 (0.00) |
| 2 | 77.18 (+0.02) | 85.75 (+0.05) | 97.45 (-0.01) |
| 3 | 77.18 (+0.02) | 85.72 (+0.02) | 97.47 (+0.01) |
| 4 | 77.18 (+0.02) | 85.68 (-0.02) | 97.45 (-0.01) |
| 5 | 77.20 (+0.04) | 85.69 (-0.01) | 97.45 (-0.01) |
| 6 | 77.19 (+0.03) | 85.70 (0.00) | 97.47 (+0.01) |
| 7 | 77.18 (+0.02) | 85.68 (-0.02) | 97.46 (0.00) |
| 8 | 77.15 (-0.01) | 85.72 (+0.02) | 97.48 (+0.02) |
| 9 | 77.17 (+0.01) | 85.70 (0.00) | 97.48 (+0.02) |
| 10 | 77.11 (-0.05) | 85.77 (+0.07) | 97.59 (+0.13) |

| Model | ANN Acc | SIT-conversion Acc | Conversion Loss | Blocks | Params | FLOPs | Energy (mJ) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| ImageNet-1K | | | | | | | |
| EdgeNeXt | 79.42% | 79.18% | -0.24% | 3 | 5.59M | 1.26G | 3.19 (-44.96%) |
| Next-ViT | 81.71% | 81.71% | 0.00% | 4 | 31.76M | 5.8G | 21.83 (-18.18%) |
| UniFormer | 77.16% | 77.11% | -0.05% | 10 | 10.21M | 1.3G | 4.49 (-24.92%) |
| CIFAR100 | | | | | | | |
| EdgeNeXt | 85.40% | 85.37% | -0.03% | 3 | 5.59M | 1.26G | 3.22 (-44.22%) |
| Next-ViT | 86.54% | 86.55% | +0.01% | 4 | 31.76M | 5.8G | 21.32 (-20.09%) |
| UniFormer | 85.70% | 85.77% | +0.07% | 10 | 10.21M | 1.3G | 4.48 (-25.08%) |
| CIFAR10 | | | | | | | |
| EdgeNeXt | 97.69% | 97.69% | 0.00% | 3 | 5.59M | 1.26G | 3.18 (-45.13%) |
| Next-ViT | 97.62% | 97.61% | -0.01% | 4 | 31.76M | 5.8G | 21.56 (-19.19%) |
| UniFormer | 97.46% | 97.59% | +0.13% | 10 | 10.21M | 1.3G | 4.50 (-24.75%) |
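The derived columns in the table above can be checked with simple arithmetic. The sketch below assumes that conversion loss is the SNN accuracy minus the ANN accuracy, and that the percentage next to each energy value is the change relative to an ANN baseline of FLOPs multiplied by a fixed energy per operation. The value of 4.6 pJ per operation is an assumption (a commonly used 45 nm estimate for a 32-bit multiply-accumulate); it is not stated in this text, but it reproduces the ImageNet-1K percentages in the table. All function names are hypothetical.

```python
E_MAC_PJ = 4.6  # ASSUMPTION: energy per ANN operation in picojoules

def ann_energy_mj(flops: float) -> float:
    """Estimated ANN energy in millijoules: FLOPs x energy per operation."""
    return flops * E_MAC_PJ * 1e-12 * 1e3

def energy_change_percent(snn_energy_mj: float, flops: float) -> float:
    """Relative change of SNN energy versus the estimated ANN baseline."""
    baseline = ann_energy_mj(flops)
    return (snn_energy_mj - baseline) / baseline * 100.0

def conversion_loss(snn_acc: float, ann_acc: float) -> float:
    """Accuracy difference in percentage points (negative means a drop)."""
    return snn_acc - ann_acc

# ImageNet-1K rows: (model, FLOPs, SNN energy in mJ, ANN acc, SNN acc)
ROWS = [
    ("EdgeNeXt", 1.26e9, 3.19, 79.42, 79.18),
    ("Next-ViT", 5.8e9, 21.83, 81.71, 81.71),
    ("UniFormer", 1.3e9, 4.49, 77.16, 77.11),
]

if __name__ == "__main__":
    for name, flops, e_snn, acc_ann, acc_snn in ROWS:
        print(
            f"{name:10s} ANN energy={ann_energy_mj(flops):6.3f} mJ  "
            f"change={energy_change_percent(e_snn, flops):+.2f}%  "
            f"loss={conversion_loss(acc_snn, acc_ann):+.2f}"
        )
```

The same functions apply to the CIFAR rows, since the FLOPs and parameter counts listed for each model are identical across datasets.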

| Model | Method | Architecture | Time Steps | Acc (%) | Conversion Loss (%) |
| --- | --- | --- | --- | --- | --- |
| CIFAR10 | | | | | |
| Spikformer [18] | Direct Training | Spikformer-4-384 | 4 | 95.19 | / |
| Spikingformer [17] | Direct Training | Spikingformer-4-384 | 4 | 95.61 | / |
| SNM [38] | ANN-to-SNN | ResNet-18 | 128 | 95.19 | -0.02 |
| QCFS [39] | ANN-to-SNN | ResNet-18 | 32 | 96.08 | +0.04 |
| MST [20] | ANN-to-SNN | Swin-T (BN) | 256 | 97.27 | -1.01 |
| SIT-EdgeNeXt (ours) | ANN-to-SNN | EdgeNeXt | 12 | 97.69 | 0.00 |
| SIT-Next-ViT (ours) | ANN-to-SNN | Next-ViT | 12 | 97.61 | -0.01 |
| SIT-UniFormer (ours) | ANN-to-SNN | UniFormer | 12 | 97.59 | +0.13 |
| CIFAR100 | | | | | |
| Spikformer [18] | Direct Training | Spikformer-4-384 | 4 | 77.86 | / |
| Spikingformer [17] | Direct Training | Spikingformer-4-384 | 4 | 79.09 | / |
| SNM [38] | ANN-to-SNN | ResNet-18 | 128 | 77.97 | -0.29 |
| QCFS [39] | ANN-to-SNN | ResNet-18 | 512 | 79.61 | +0.81 |
| MST [20] | ANN-to-SNN | Swin-T (BN) | 256 | 86.91 | -1.81 |
| SIT-EdgeNeXt (ours) | ANN-to-SNN | EdgeNeXt | 12 | 85.37 | -0.03 |
| SIT-Next-ViT (ours) | ANN-to-SNN | Next-ViT | 12 | 86.55 | +0.01 |
| SIT-UniFormer (ours) | ANN-to-SNN | UniFormer | 12 | 85.77 | +0.07 |
| ImageNet-1K | | | | | |
| Spikformer [18] | Direct Training | Spikformer-8-768 | 4 | 74.81 | / |
| Spikingformer [17] | Direct Training | Spikingformer-8-768 | 4 | 75.85 | / |
| SNM [38] | ANN-to-SNN | VGG16 | 1024 | 73.16 | -0.02 |
| QCFS [39] | ANN-to-SNN | ResNet-18 | 1024 | 74.32 | +0.03 |
| MST [20] | ANN-to-SNN | Swin-T (BN) | 512 | 78.51 | -2 |
| SIT-EdgeNeXt (ours) | ANN-to-SNN | EdgeNeXt | 12 | 79.18 | -0.24 |
| SIT-Next-ViT (ours) | ANN-to-SNN | Next-ViT | 12 | 81.71 | 0.00 |
| SIT-UniFormer (ours) | ANN-to-SNN | UniFormer | 12 | 77.11 | -0.05 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).