Submitted: 24 October 2024

Posted: 24 October 2024


Abstract

Keywords:

1. Introduction

2. Background Study

2.1. Traditional Collaborative Filtering

2.2. Recurrent Neural Networks (RNN)

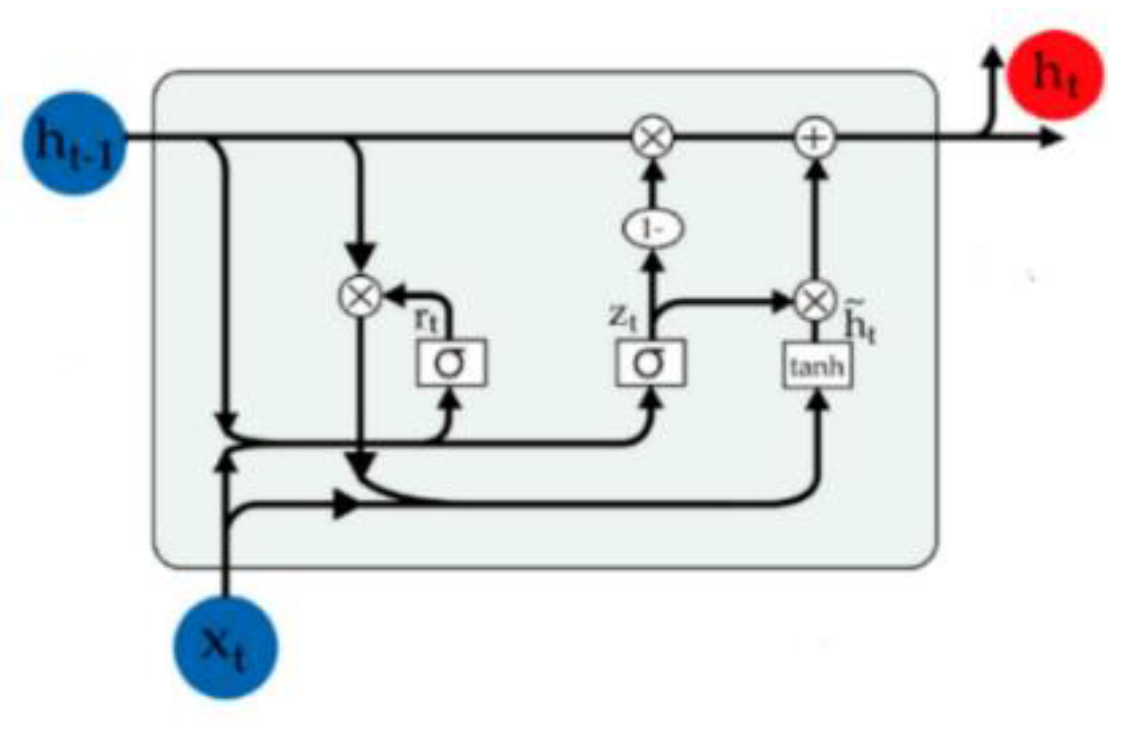

2.2.1. Gated Recurrent Unit (GRU)

3. Related Works

3.1. Beyond Matrix Factorization: Exploring New Avenues

3.2. Utilising User Reviews for Recommendations

3.3. Deep Learning and Recommendation Systems

4. Proposed Approach

4.1. Phase I: Deep Learning Bi-GRU Model

4.1.1. Data Pre-Processing

- Tokenization: Tokenization breaks text down into individual words, or tokens, by splitting it on whitespace and punctuation marks.

- Stop word removal: Stop words are common words (such as "the", "is" and "and") that add little meaning to the text. They are removed to improve the efficiency of the analysis.

- Stemming: Stemming reduces words to their base or root form by stripping affixes, most commonly suffixes.

- Lemmatization: Lemmatization groups the inflected forms of a word under a single base form (the lemma). Unlike stemming, it takes the contextual meaning of the word into account.

- Case normalization: Case normalization converts all words to either lowercase or uppercase, so that the same word written in different cases is treated as a single token.

- Removal of extra white spaces: Any additional spaces present in the text are removed so that the text is consistently formatted.

- Removal of URLs: URLs are web addresses that link to external websites. They are removed from the text to improve the efficiency of the analysis.

- Removal of hashtags: Hashtags tag text with keywords. They are removed so that only the review content itself is analysed.

- Removal of date and time references: Date and time references are removed from the text to improve the efficiency of the analysis.
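The pre-processing steps above can be sketched as a small pipeline. This is a minimal illustration using only the Python standard library, not the authors' implementation: the stop-word list, the date and time patterns, and the `naive_stem` helper are all hypothetical stand-ins. Lemmatization is left out because it needs a dictionary or part-of-speech information that a short sketch cannot provide.

```python
import re

# Hypothetical, deliberately tiny stop-word list; a real pipeline would use a fuller one.
STOP_WORDS = {"a", "an", "and", "the", "is", "it", "this", "to", "of", "on", "in", "was", "at"}

URL_RE = re.compile(r"https?://\S+|www\.\S+")
HASHTAG_RE = re.compile(r"#\w+")
# Assumed date/time shapes only: 24/10/2024, 2024-10-24, 10:30, 10:30pm.
DATE_TIME_RE = re.compile(
    r"\b\d{1,4}[/-]\d{1,2}[/-]\d{1,4}\b|\b\d{1,2}:\d{2}(?::\d{2})?\s?(?:am|pm)?\b",
    re.IGNORECASE,
)
TOKEN_RE = re.compile(r"[a-z0-9']+")


def naive_stem(token):
    """Very rough suffix stripping; a stand-in for a real stemmer such as Porter's."""
    for suffix in ("ing", "ed", "es", "s"):
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def preprocess(review):
    """Apply the cleaning steps in order and return a list of stemmed tokens."""
    text = review.lower()                      # case normalization
    text = URL_RE.sub(" ", text)               # removal of URLs
    text = HASHTAG_RE.sub(" ", text)           # removal of hashtags
    text = DATE_TIME_RE.sub(" ", text)         # removal of date and time references
    text = re.sub(r"\s+", " ", text).strip()   # removal of extra white spaces
    tokens = TOKEN_RE.findall(text)            # tokenization on whitespace/punctuation
    tokens = [t for t in tokens if t not in STOP_WORDS]  # stop word removal
    return [naive_stem(t) for t in tokens]     # stemming
```

For example, `preprocess("Loved this CAMERA!   Bought on 24/10/2024 at 10:30pm, see https://example.com #photography")` returns `['lov', 'camera', 'bought', 'see']`. The truncated stem `lov` shows why stemming output is not always a dictionary word.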

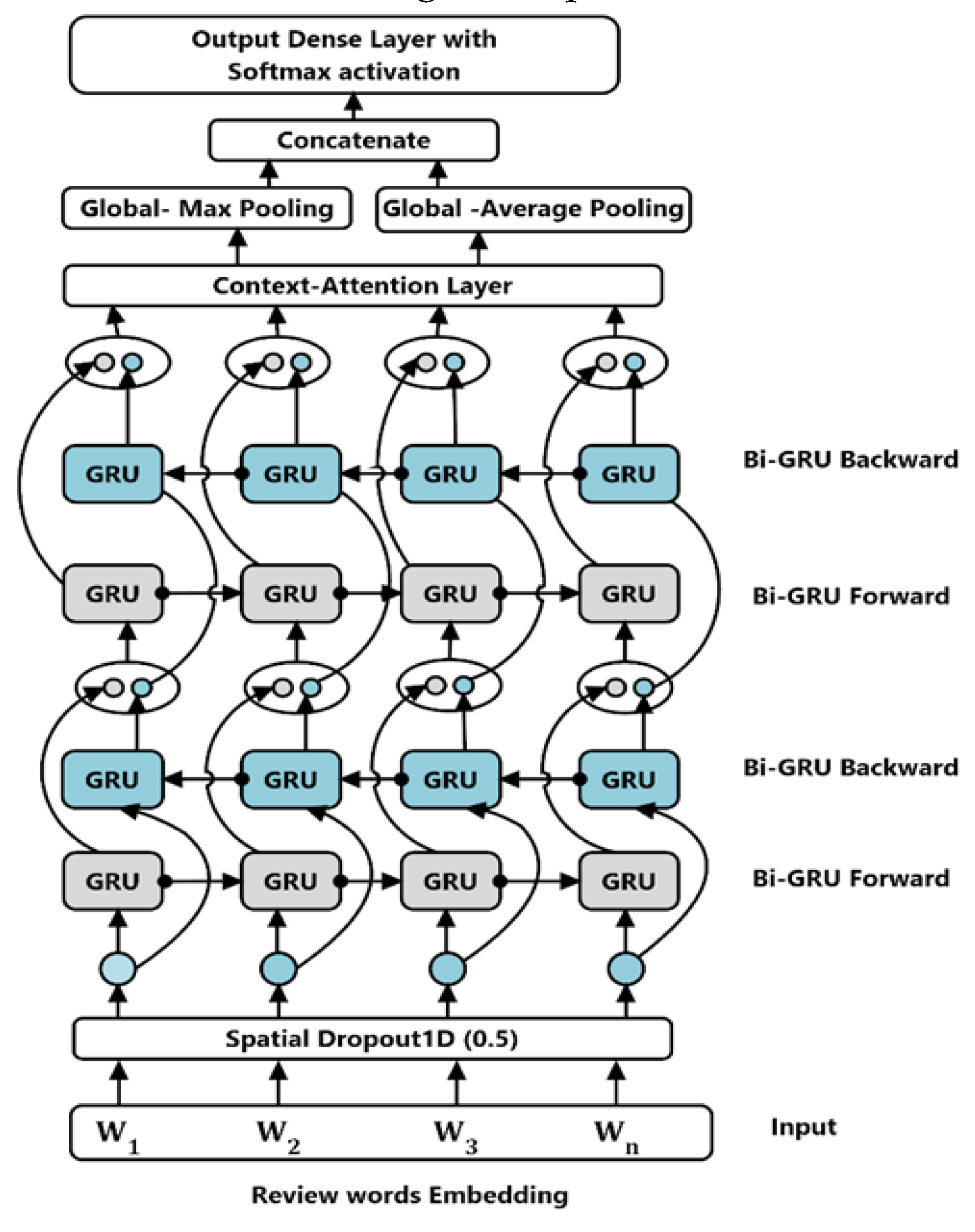

4.1.2. Bi-GRU Model

- Review Word Embedding Layer: The word embedding layer represents words as dense vectors. These vectors typically have 100 to 300 dimensions and are trained on large text corpora. Because they capture the semantic meaning of words, they improve the accuracy of natural language processing tasks. In the proposed CF system, the Review Word Embedding Layer accepts a fixed-length sequence of 200 words as input and maps each word to its 300-dimensional GloVe word vector [37]. These embedding vectors are then fed into the Bi-GRU layers, which learn the long-term dependencies across the time steps of the user review text.

- Drop-out layer: Input words can be encoded either as numerical indices or as one-hot vectors, that is, vectors with a single position set to one and all other positions set to zero. However, an optimized embedding matrix can cause overfitting, which can be prevented by applying dropout to the one-hot vectors [38]. A 1D spatial dropout layer with a dropout rate of 0.5 keeps the activations from becoming strongly correlated, which leads to better generalization and helps prevent overfitting of the model.

- Context Attention layer: The context attention layer is applied to predict the rating class of a given review more effectively, since words do not contribute equally to the meaning or theme of a sentence. The attention layer picks out the words that carry most of that meaning. This model uses the attention mechanism of [39]. The mechanism introduces a context vector, which can be viewed as a fixed query that helps to extract the informative words. The context vector is randomly initialized and learned jointly with the rest of the attention layer weights. Formally, in the notation of [39], let $H = [h_1, h_2, \dots, h_T]$ be the matrix of output vectors produced by the last Bi-GRU layer, where $T$ is the length of the sentence. The context attention $r$ of the sentence is the weighted sum of these vectors, as given by Eqs. (11), (12) and (13):

  $$M = \tanh(H) \tag{11}$$

  $$\alpha = \operatorname{softmax}(w^{\top} M) \tag{12}$$

  $$r = H \alpha^{\top} \tag{13}$$

  where $H \in \mathbb{R}^{d^w \times T}$, $d^w$ is the dimension of the word vectors, $w$ is a trained parameter vector and $w^{\top}$ is its transpose. Hence the dimensions of $w$, $\alpha$ and $r$ are $d^w$, $T$ and $d^w$, respectively. The output of this layer is given by Eq. (14):

  $$h^{*} = \tanh(r) \tag{14}$$

- Concatenation layer: The concatenation layer performs global max pooling and global average pooling to generate one feature map for each class in the classification task. The use of global average pooling requires no parameter optimization, which helps prevent overfitting. Additionally, global average pooling ignores spatial information, making it more robust against spatial variations in the input data. After applying global max pooling and global average pooling to each Bi-GRU output, the resulting vectors are concatenated into a 1D array. Finally, this concatenated output is passed through a dense output layer with a sigmoid function to provide the rating prediction class.
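The context attention and concatenation layers described above can be illustrated with a small pure-Python sketch. It assumes the attention formulation of [39] (scores from a trained vector applied to the `tanh` of each hidden state, a softmax over time steps, then a weighted sum) and is not the authors' code. The function names and the list-of-lists layout for the Bi-GRU outputs are hypothetical, and the sketch does not show how the two layers are wired together in the full model.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def context_attention(H, w):
    """H: list of T hidden vectors (each of length d); w: trained vector of length d.

    Returns (alpha, h_star): one attention weight per time step and the
    attended sentence representation.
    """
    # Score each time step with w^T tanh(h_t).
    scores = [sum(wi * math.tanh(hi) for wi, hi in zip(w, h_t)) for h_t in H]
    alpha = softmax(scores)
    d = len(w)
    # r is the attention-weighted sum of the hidden vectors.
    r = [sum(a * h_t[j] for a, h_t in zip(alpha, H)) for j in range(d)]
    h_star = [math.tanh(x) for x in r]
    return alpha, h_star


def pooled_features(H):
    """Concatenate global max pooling and global average pooling over time."""
    d = len(H[0])
    max_pool = [max(h_t[j] for h_t in H) for j in range(d)]
    avg_pool = [sum(h_t[j] for h_t in H) / len(H) for j in range(d)]
    return max_pool + avg_pool


# Toy example: T = 3 time steps, d = 2 hidden units (values are made up).
H = [[0.2, -0.1], [0.9, 0.4], [-0.3, 0.8]]
alpha, h_star = context_attention(H, w=[1.0, 0.5])
features = pooled_features(H)
```

Neither pooling operation has trainable parameters, which is the property the text relies on when it says that global average pooling requires no parameter optimization.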

4.2. Phase II: Recommender System

5. Experiments

5.1. Experimental Parameter Settings

5.2. Datasets

| Dataset | Users | Items | Reviews | Sparsity (%) |
|---|---|---|---|---|
| Electronics | 192,102 | 63,001 | 1,660,038 | 98.62 |
| CDs & Vinyl | 75,127 | 64,443 | 1,077,845 | 97.77 |
| Movies & TV | 123,633 | 50,052 | 1,665,265 | |

5.3. Evaluation Protocol and Metrics

5.3.1. Accuracy Metrics

5.3.2. Classification Metrics

5.4. Comparisons

- SVD: Singular Value Decomposition (SVD) is a sophisticated collaborative filtering (CF) technique that uses matrix factorization to transform users and items into a latent factor space. This method, widely recognized as a benchmark, effectively predicts ratings even for users with sparse data by leveraging the latent factors [26,44,45].

- NMFRS: Non-negative Matrix Factorization for Recommender Systems with Dynamic Bias (NMFRS) enhances traditional matrix factorization by incorporating non-negative updates and dynamic bias matrices, which improves interpretability and accuracy [25]. It focuses on probabilistic distributions and minimizes the difference between observed and predicted ratings.

- Word2Vec: This approach captures semantic relationships between items from reviews, which helps alleviate data sparsity issues. It improves over traditional matrix factorization techniques by considering semantic relationships, although it primarily focuses on item relationships [27].

- RNN (LSTM): Recurrent Neural Network (RNN) architectures such as Long Short-Term Memory (LSTM) are effective for sequential learning tasks involving textual data. They process and retain information over sequences, making them suitable for capturing user preferences in recommendation systems [31].

- CNN: Convolutional Neural Networks (CNNs) excel in extracting features from complex data, such as reviews or images. When integrated with CF techniques, they enhance the understanding of user preferences and item characteristics, leading to more accurate recommendations. Recent studies have demonstrated the potential of CNNs in improving CF methods for personalized recommendations [33].
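To make the latent factor idea behind the SVD baseline concrete, the following is a minimal sketch of matrix factorization trained with stochastic gradient descent. It is a generic illustration rather than the exact SVD variant used in the comparison, which is not specified here. The function names, hyperparameters and toy ratings are all assumptions.

```python
import random


def train_mf(ratings, n_users, n_items, k=2, lr=0.05, reg=0.02, epochs=500, seed=0):
    """Learn k latent factors per user and item from (user, item, rating) triples."""
    rng = random.Random(seed)
    P = [[rng.uniform(-0.1, 0.1) for _ in range(k)] for _ in range(n_users)]
    Q = [[rng.uniform(-0.1, 0.1) for _ in range(k)] for _ in range(n_items)]
    mu = sum(r for _, _, r in ratings) / len(ratings)  # global mean rating
    for _ in range(epochs):
        for u, i, r in ratings:
            pred = mu + sum(P[u][f] * Q[i][f] for f in range(k))
            err = r - pred
            for f in range(k):
                pu, qi = P[u][f], Q[i][f]
                # Gradient step on the squared error with L2 regularization.
                P[u][f] += lr * (err * qi - reg * pu)
                Q[i][f] += lr * (err * pu - reg * qi)
    return mu, P, Q


def predict(mu, P, Q, u, i):
    """Predicted rating: global mean plus the user-item factor dot product."""
    return mu + sum(pf * qf for pf, qf in zip(P[u], Q[i]))


# Toy data (made up): 3 users, 3 items, 6 observed ratings out of 9 possible.
toy_ratings = [(0, 0, 5), (0, 1, 3), (1, 0, 4), (1, 2, 1), (2, 1, 2), (2, 2, 5)]
mu, P, Q = train_mf(toy_ratings, n_users=3, n_items=3)
unseen = predict(mu, P, Q, u=0, i=2)  # estimate for a user-item pair with no rating
```

Because every user and item gets a factor vector, the model can score pairs that never appear in the training data, which is how matrix factorization copes with sparse rating matrices.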

5.5. Results and Discussion

5.5.1. Performance Comparison on Sparse Data

| Dataset | Sparsity (%) | Method | MAE | RMSE |
|---|---|---|---|---|
| Electronics | 98.62 | SVD | 0.88 | 1.22 |
| | | NMFRS | 0.94 | 1.25 |
| | | CNN | 1.72 | 2.27 |
| | | Word2Vec | 1.71 | 2.26 |
| | | MCNN | 1.71 | 2.26 |
| | | Bi-GRUCF | 0.37 | 0.81 |
| Movies & TV | 97.77 | SVD | 0.78 | 1.08 |
| | | NMFRS | 0.79 | 1.07 |
| | | CNN | 2.21 | 2.86 |
| | | Word2Vec | 2.21 | 2.86 |
| | | CNN | 2.20 | 2.86 |
| | | Bi-GRUCF | 0.38 | 0.80 |
| CDs & Vinyl | 97.3 | SVD | 0.71 | 1.01 |
| | | NMFRS | 0.84 | 1.14 |
| | | CNN | 1.86 | 1.82 |
| | | Word2Vec | 1.86 | 1.81 |
| | | CNN | 1.85 | 1.81 |
| | | Bi-GRUCF | 0.42 | 0.84 |
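For reference, the two error measures reported in the table above can be computed as in the following sketch. It uses the standard definitions of mean absolute error (MAE) and root mean squared error (RMSE). The function names and the toy ratings are illustrative assumptions.

```python
import math


def mae(actual, predicted):
    """Mean absolute error between true and predicted ratings."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Root mean squared error; penalizes large errors more heavily than MAE."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


# Toy ratings (made up): absolute errors are 1, 0, 1, 2.
actual = [5, 3, 4, 1]
predicted = [4, 3, 5, 3]
toy_mae = mae(actual, predicted)    # (1 + 0 + 1 + 2) / 4 = 1.0
toy_rmse = rmse(actual, predicted)  # sqrt((1 + 0 + 1 + 4) / 4) = sqrt(1.5)
```

On any given set of predictions, RMSE is never smaller than MAE. The two coincide only when every absolute error is the same.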

5.5.2. Evaluation Using Classification Accuracy Metrics

| Dataset | SVD | NMFRS | Word2Vec | RNN | CNN | Bi-GRUCF |
|---|---|---|---|---|---|---|
| Electronics | 83.25 | 81.79 | 83.14 | 92.64 | 93.28 | 97.31 |
| Movies & TV | 87.16 | 81.88 | 87.05 | 90.70 | 92.39 | 96.82 |
| CDs & Vinyl | 84.71 | 82.28 | 84.60 | 90.89 | 92.65 | 95.95 |

| Dataset | SVD | NMFRS | Word2Vec | RNN | CNN | Bi-GRUCF |
|---|---|---|---|---|---|---|
| Electronics | 84.84 | 83.52 | 84.73 | 93.11 | 93.44 | 95.04 |
| Movies & TV | 87.72 | 85.02 | 87.61 | 92.79 | 93.46 | 94.91 |
| CDs & Vinyl | 85.59 | 83.80 | 85.48 | 92.90 | 92.65 | 94.05 |

| Dataset | SVD | NMFRS | Word2Vec | RNN | CNN | Bi-GRUCF |
|---|---|---|---|---|---|---|
| Electronics | 86.49 | 85.32 | 86.38 | 93.23 | 93.46 | 92.87 |
| Movies & TV | 88.28 | 88.41 | 87.05 | 93.62 | 93.56 | 93.08 |
| CDs & Vinyl | 86.49 | 85.37 | 85.48 | 92.41 | 92.40 | 92.23 |

| Symbol | Description | Category |
|---|---|---|
| R | User-item rating matrix | Variable |
| U | Set of registered users | Set |
| I | Set of all possible items | Set |
| u | User ID | Variable |
| i | Item ID | Variable |
| r_ui | Rating of item i by user u | Variable |
| N(u) | Neighborhood of user u (similar users) | Set |
| K | Number of nearest neighbors | Variable |
| pred_rui | Predicted rating of item i for user u | Variable |
| W | Weight matrix in Bi-GRU layer | Variable |
| b | Bias vector in Bi-GRU layer | Variable |
| H | Hidden state vector in Bi-GRU layer | Variable |
| x(t) | Input word vector at time step t | Variable |
| f | Forget gate function in GRU unit | Function |
| i | Input gate function in GRU unit | Function |
| o | Output gate function in GRU unit | Function |
| σ | Sigmoid activation function | Function |
| c(t) | Cell state vector in GRU unit at time step t | Variable |
| γ | Context vector in Attention layer | Variable |
| α(t) | Attention weight at time step t | Variable |
| φ | Embedding function (maps item/user ID to embedding vector) | Function |

6. Conclusions

Author Contributions

Acknowledgments

Conflicts of Interest

References

- X. Wang and S. Kadıoğlu, "Modeling uncertainty to improve personalized recommendations via Bayesian deep learning," International Journal of Data Science and Analytics, vol. 16, no. 2, pp. 191-201, 2023. [CrossRef]

- K. Wang, Y. Zhu, H. Liu, T. Zang, and C. Wang, "Learning aspect-aware high-order representations from ratings and reviews for recommendation," ACM Transactions on Knowledge Discovery from Data, vol. 17, no. 1, pp. 1-22, 2023. [CrossRef]

- Y. Li, K. Liu, R. Satapathy, S. Wang, and E. Cambria, "Recent developments in recommender systems: A survey," IEEE Computational Intelligence Magazine, vol. 19, no. 2, pp. 78-95, 2024. [CrossRef]

- M. Srifi, A. Oussous, A. Ait Lahcen, and S. Mouline, "Recommender systems based on collaborative filtering using review texts—a survey," Information, vol. 11, no. 6, p. 317, 2020. [CrossRef]

- U. A. Bhatti, H. Tang, G. Wu, S. Marjan, and A. Hussain, "Deep learning with graph convolutional networks: An overview and latest applications in computational intelligence," International Journal of Intelligent Systems, vol. 2023, pp. 1-28, 2023. [CrossRef]

- S.-H. Noh, "Analysis of gradient vanishing of RNNs and performance comparison," Information, vol. 12, no. 11, p. 442, 2021. [CrossRef]

- G. Van Houdt, C. Mosquera, and G. Nápoles, "A review on the long short-term memory model," Artificial Intelligence Review, vol. 53, no. 8, pp. 5929-5955, 2020. [CrossRef]

- H. Israr, S. A. Khan, M. A. Tahir, M. K. Shahzad, M. Ahmad, and J. M. Zain, "Neural Machine Translation Models with Attention-Based Dropout Layer," Computers, Materials & Continua, vol. 75, no. 2, 2023. [CrossRef]

- C. Zhang et al., "Multi-aspect enhanced graph neural networks for recommendation," Neural Networks, vol. 157, pp. 90-102, 2023. [CrossRef]

- K. Bi, Q. Ai, and W. B. Croft, "Learning a fine-grained review-based transformer model for personalized product search," in Proceedings of the 44th International ACM SIGIR Conference on Research and Development in Information Retrieval, 2021, pp. 123-132.

- C. Liu, T. Jin, S. C. H. Hoi, P. Zhao, and J. Sun, "Collaborative topic regression for online recommender systems: an online and Bayesian approach," Machine Learning, vol. 106, no. 5, pp. 651-670, 2017. [CrossRef]

- Y. Bao, H. Fang, and J. Zhang, "TopicMF: simultaneously exploiting ratings and reviews for recommendation," presented at the Twenty-Eighth AAAI Conference on Artificial Intelligence, Québec City, Québec, Canada, 2014, pp. 2-8.

- C. Gao et al., "A survey of graph neural networks for recommender systems: Challenges, methods, and directions," ACM Transactions on Recommender Systems, vol. 1, no. 1, pp. 1-51, 2023. [CrossRef]

- M. Ibrahim, I. S. Bajwa, N. Sarwar, F. Hajjej, and H. A. Sakr, "An intelligent hybrid neural collaborative filtering approach for true recommendations," IEEE Access, 2023. [CrossRef]

- V. R. Yannam, J. Kumar, K. S. Babu, and B. Sahoo, "Improving group recommendation using deep collaborative filtering approach," International Journal of Information Technology, vol. 15, no. 3, pp. 1489-1497, 2023. [CrossRef]

- N. Alharbe, M. A. Rakrouki, and A. Aljohani, "A collaborative filtering recommendation algorithm based on embedding representation," Expert Systems with Applications, vol. 215, p. 119380, 2023. [CrossRef]

- L. Huang, C.-R. Guan, Z.-W. Huang, Y. Gao, C.-D. Wang, and C. P. Chen, "Broad recommender system: An efficient nonlinear collaborative filtering approach," IEEE Transactions on Emerging Topics in Computational Intelligence, 2024. [CrossRef]

- F. Abbasi and A. Khadivar, "Collaborative filtering recommendation system through sentiment analysis," Turkish Journal of Computer and Mathematics Education, vol. 12, no. 14, pp. 1843-1853, 2021.

- G. Adomavicius and A. Tuzhilin, "Toward the Next Generation of Recommender Systems: A Survey of the State-of-the-Art and Possible Extensions," IEEE Trans. on Knowl. and Data Eng., vol. 17, no. 6, pp. 734-749, 2005. [CrossRef]

- M. Srifi, A. Oussous, A. A. Lahcen, and S. Mouline, "Recommender Systems Based on Collaborative Filtering Using Review Texts—A Survey," Information, vol. 11, no. 6, p. 317, 2020. [CrossRef]

- J. Schmidhuber, "Deep learning in neural networks: An overview," Neural Networks, vol. 61, pp. 85-117, 2015. [CrossRef]

- Y. Bengio, P. Simard, and P. Frasconi, "Learning long-term dependencies with gradient descent is difficult," IEEE transactions on neural networks, vol. 5, no. 2, pp. 157-166, 1994. [CrossRef]

- S. Hochreiter and J. Schmidhuber, "Long Short-Term Memory," Neural computation, vol. 9, no. 8, pp. 1735-1780, 1997. [CrossRef]

- K. Cho et al., "Learning phrase representations using RNN encoder-decoder for statistical machine translation," arXiv preprint arXiv:1406.1078, 2014.

- W. Song and X. Li, "A Non-Negative Matrix Factorization for Recommender Systems Based on Dynamic Bias," in International Conference on Modeling Decisions for Artificial Intelligence, 2019: Springer, pp. 151-163.

- S. Wang, G. Sun, and Y. Li, "SVD++ Recommendation Algorithm Based on Backtracking," Information, vol. 11, no. 7, 2020. [CrossRef]

- L. Vuong Nguyen, T.-H. Nguyen, J. J. Jung, and D. Camacho, "Extending collaborative filtering recommendation using word embedding: A hybrid approach," Concurrency and Computation: Practice and Experience, vol. 35, no. 16, p. e6232, 2023. [CrossRef]

- R. M. D'Addio and M. G. Manzato, "A sentiment-based item description approach for kNN collaborative filtering," presented at the Proceedings of the 30th Annual ACM Symposium on Applied Computing, Salamanca, Spain, 2015. [CrossRef]

- Ayman S. Ghabayen and Basem H. Ahmed, "Enhancing collaborative filtering recommendation using review text clustering," Jordanian Journal of Computers and Information Technology (JJCIT), vol. 7, no. 9, 2021. [CrossRef]

- S. Zhang, L. Yao, A. Sun, and Y. Tay, "Deep learning based recommender system: A survey and new perspectives," ACM Computing Surveys, vol. 52, no. 1, pp. 1-38, 2019. [CrossRef]

- Nilla and E. Setiawan, "Film Recommendation System Using Content-Based Filtering and the Convolutional Neural Network (CNN) Classification Methods," Jurnal Ilmiah Teknik Elektro Komputer dan Informatika, vol. 10, p. 17, 2024. [CrossRef]

- D. Kim, C. Park, J. Oh, S. Lee, and H. Yu, "Convolutional matrix factorization for document context-aware recommendation," in Proceedings of the 10th ACM conference on recommender systems, 2016, pp. 233-240. [CrossRef]

- L. Zheng, V. Noroozi, and P. S. Yu, "Joint deep modeling of users and items using reviews for recommendation," in Proceedings of the Tenth ACM International Conference on Web Search and Data Mining, Cambridge, United Kingdom, 2017, in WSDM '17, pp. 425-434. [CrossRef]

- R.-C. Chen and Hendry, "User Rating Classification via Deep Belief Network Learning and Sentiment Analysis," IEEE Transactions on Computational Social Systems, vol. 6, no. 3, pp. 535-546, 2019. [CrossRef]

- B. H. Ahmed and A. S. Ghabayen, "Review rating prediction framework using deep learning," Journal of Ambient Intelligence and Humanized Computing, vol. 13, no. 7, pp. 3423-3432, 2022. [CrossRef]

- J. H. Wang, M. Norouzi, and S. M. Tsai, "Multimodal Content Veracity Assessment with Bidirectional Transformers and Self-Attention-based Bi-GRU Networks," in 2022 IEEE Eighth International Conference on Multimedia Big Data (BigMM), 5-7 Dec. 2022, pp. 133-137. [CrossRef]

- J. Pennington, R. Socher, and C. D. Manning, "Glove: Global vectors for word representation," in Proceedings of the 2014 conference on empirical methods in natural language processing (EMNLP), 2014, pp. 1532-1543.

- Y. Gal and Z. Ghahramani, "A theoretically grounded application of dropout in recurrent neural networks," presented at the Proceedings of the 30th International Conference on Neural Information Processing Systems, Barcelona, Spain, 2016.

- P. Zhou et al., "Attention-based bidirectional long short-term memory networks for relation classification," in Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), Berlin, Germany, 2016: Association for Computational Linguistics, pp. 207-212. [CrossRef]

- J. McAuley, "Recommender Systems and Personalization Datasets," 2021. [Online]. Available: http://jmcauley.ucsd.edu/data/amazon/.

- J. Ni, J. Li, and J. McAuley, "Justifying recommendations using distantly-labeled reviews and fine-grained aspects," in Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), Hong Kong, China, 2019: Association for Computational Linguistics, pp. 188-197. [CrossRef]

- M. R. McLaughlin and J. L. Herlocker, "A collaborative filtering algorithm and evaluation metric that accurately model the user experience," presented at the Proceedings of the 27th annual international ACM SIGIR conference on Research and development in information retrieval, Sheffield, United Kingdom, 2004. [CrossRef]

- N. Good et al., "Combining collaborative filtering with personal agents for better recommendations," presented at the Proceedings of the sixteenth national conference on Artificial intelligence and the eleventh Innovative applications of artificial intelligence conference, Orlando, Florida, USA, 1999.

- B. Sarwar, G. Karypis, J. Konstan, and J. Riedl, "Application of dimensionality reduction in recommender system-a case study," Minnesota University Minneapolis Department of Computer Science, In Proceedings of the ACM WebKDD 2000 Web Mining for E-Commerce Workshop, 2000.

- P. Symeonidis and A. Zioupos, Matrix and Tensor Factorization Techniques for Recommender Systems. Springer International Publishing, 2016.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).