Submitted: 18 October 2024

Posted: 22 October 2024

Abstract

Keywords:

1. Introduction

2. Dataset

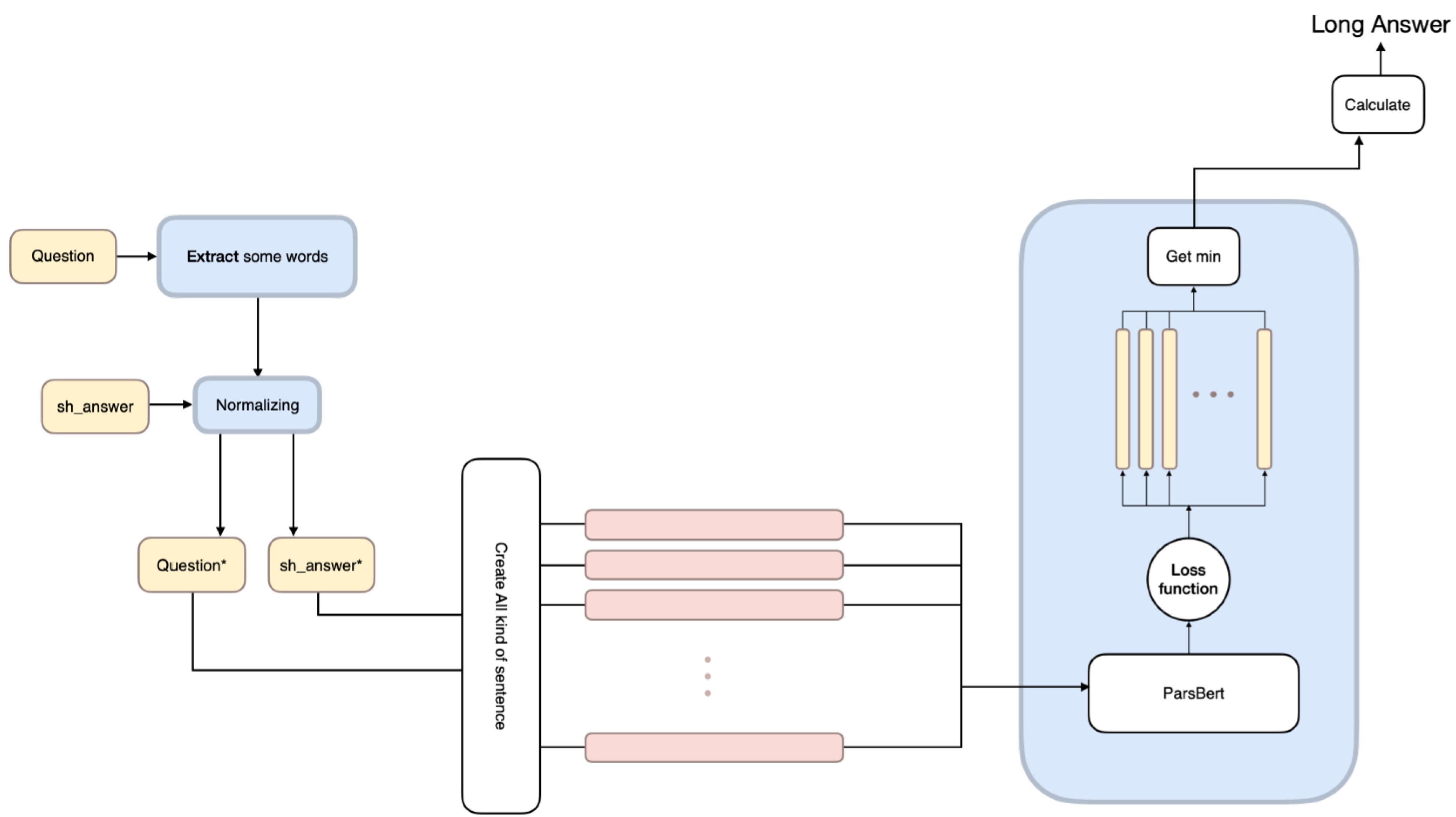

2.1. Model Workflow for Dataset Generation

2.2. Model Structure

- Removing extra particles from the words in the question. In Persian, the letter ی can be attached to the end of a word, where it serves several purposes. In questions, the word چه ("what") is typically followed by a word that carries this suffix. In the corresponding statement, the suffix should be removed for readability and clarity.

- Removing question words. To build a complete answer from the question text, the question words must be removed from it. These words include:

  - چند (how many)

  - چندم (which, ordinal)

  - چندمين (which, ordinal)

  - کجا (where)

  - کی (when / who)

  - چگونه (how)

  - چرا (why)

  - آيا (yes/no question marker)

  - چی (what)

  - کدام (which)

  - چه (what)

  - چطور (how)

- Converting words from plural to singular. In some cases, Persian answers use the singular form of a word even though the question uses the plural. This occurs in questions that contain the phrase "Which one." If the word following that phrase is plural, it is converted to its singular form.

Table 1. Transformation of question text after removing redundant parts.

| Question | Question (after processing) | English gloss of the question |
|---|---|---|
| چه تيمی قهرمان شد؟ | چه تيم قهرمان شد؟ | Which team became champion? |
| چه کشوری بزرگترين کشور است؟ | چه کشور بزرگترين کشور است؟ | Which country is the largest country? |
| چه غذايی در يخچال است؟ | چه غذا در يخچال است؟ | Which food is in the refrigerator? |
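The first two preprocessing steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names are hypothetical, whitespace tokenization is assumed, Arabic yeh (ي) is mapped to Persian yeh (ی) before matching, and the rule that a doubled yeh is dropped entirely is inferred only from the غذايی row of Table 1. The plural-to-singular step is left out because the text does not give the Persian form of the "Which one" phrase.

```python
ARABIC_YEH = "\u064a"      # ي, frequently present in scraped Persian text
PERSIAN_YEH = "\u06cc"     # ی
PUNCT = "\u061f?\u060c.!"  # ؟ ? ، . ! ignored when matching question words
WHAT = "چه"                # "what"


def normalize_yeh(text: str) -> str:
    """Map Arabic yeh to Persian yeh so suffix checks see one code point."""
    return text.replace(ARABIC_YEH, PERSIAN_YEH)


# Question words listed in the text, normalized the same way as the input.
QUESTION_WORDS = {
    normalize_yeh(w)
    for w in ["چند", "چندم", "چندمين", "کجا", "کی", "چگونه",
              "چرا", "آيا", "چی", "کدام", "چه", "چطور"]
}


def strip_particle_after_what(question: str) -> str:
    """Drop the trailing yeh particle from the word that follows چه.

    Naive heuristic (assumption): any trailing yeh is treated as the
    particle, so words that genuinely end in ی would be damaged.
    """
    tokens = normalize_yeh(question).split()
    for i in range(len(tokens) - 1):
        if tokens[i] != WHAT:
            continue
        word = tokens[i + 1]
        if word.endswith(PERSIAN_YEH * 2):
            tokens[i + 1] = word[:-2]   # e.g. غذایی -> غذا
        elif word.endswith(PERSIAN_YEH) and len(word) > 2:
            tokens[i + 1] = word[:-1]   # e.g. تیمی -> تیم
    return " ".join(tokens)


def remove_question_words(question: str) -> str:
    """Drop every token that is a listed question word."""
    tokens = normalize_yeh(question).split()
    kept = [t for t in tokens if t.strip(PUNCT) not in QUESTION_WORDS]
    return " ".join(kept)
```

Applied in sequence, `remove_question_words(strip_particle_after_what(q))` turns the first question of Table 1 into a statement stem that begins with the bare noun. A production version would likely need a morphological analyzer rather than suffix matching.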

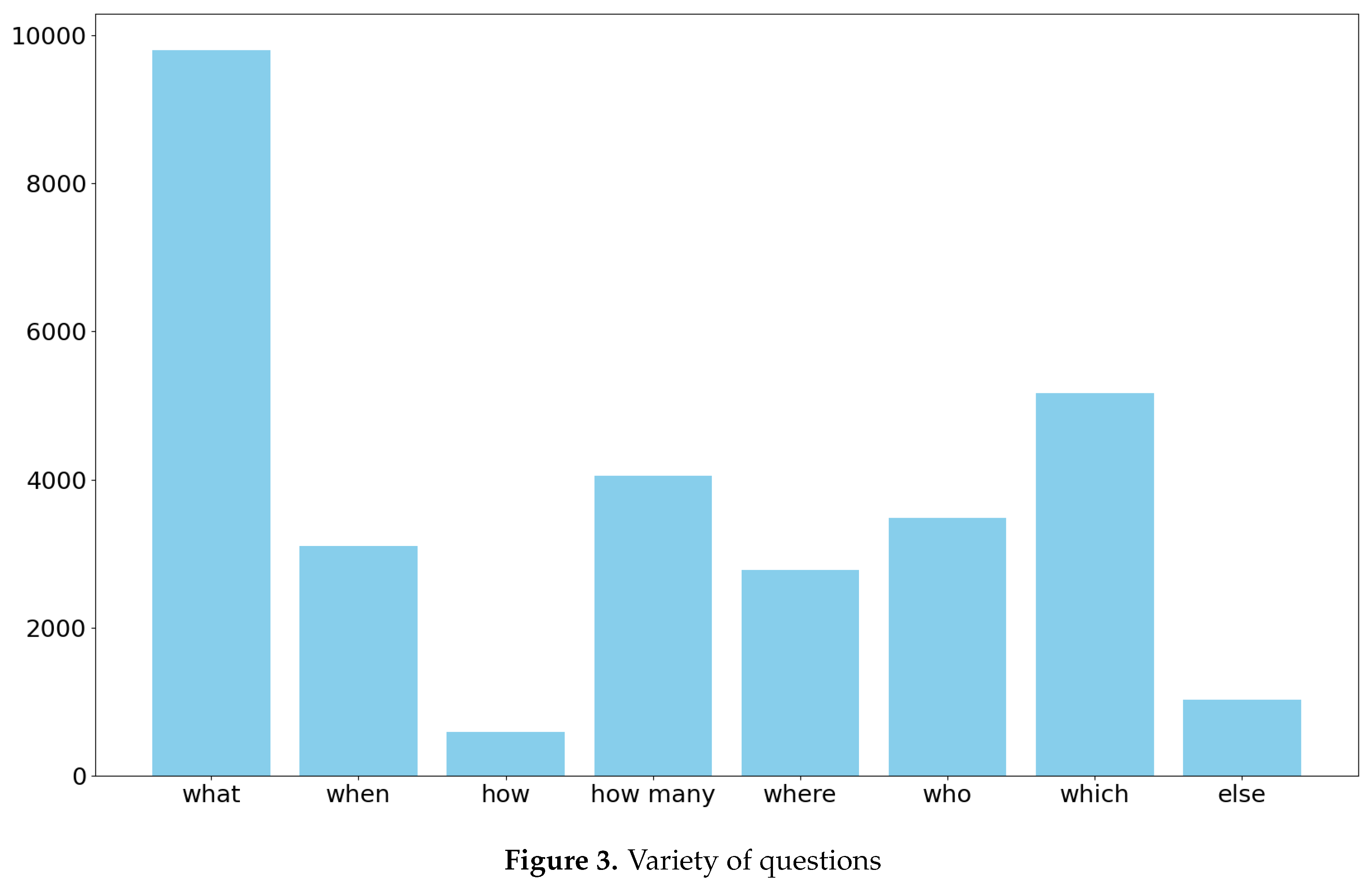

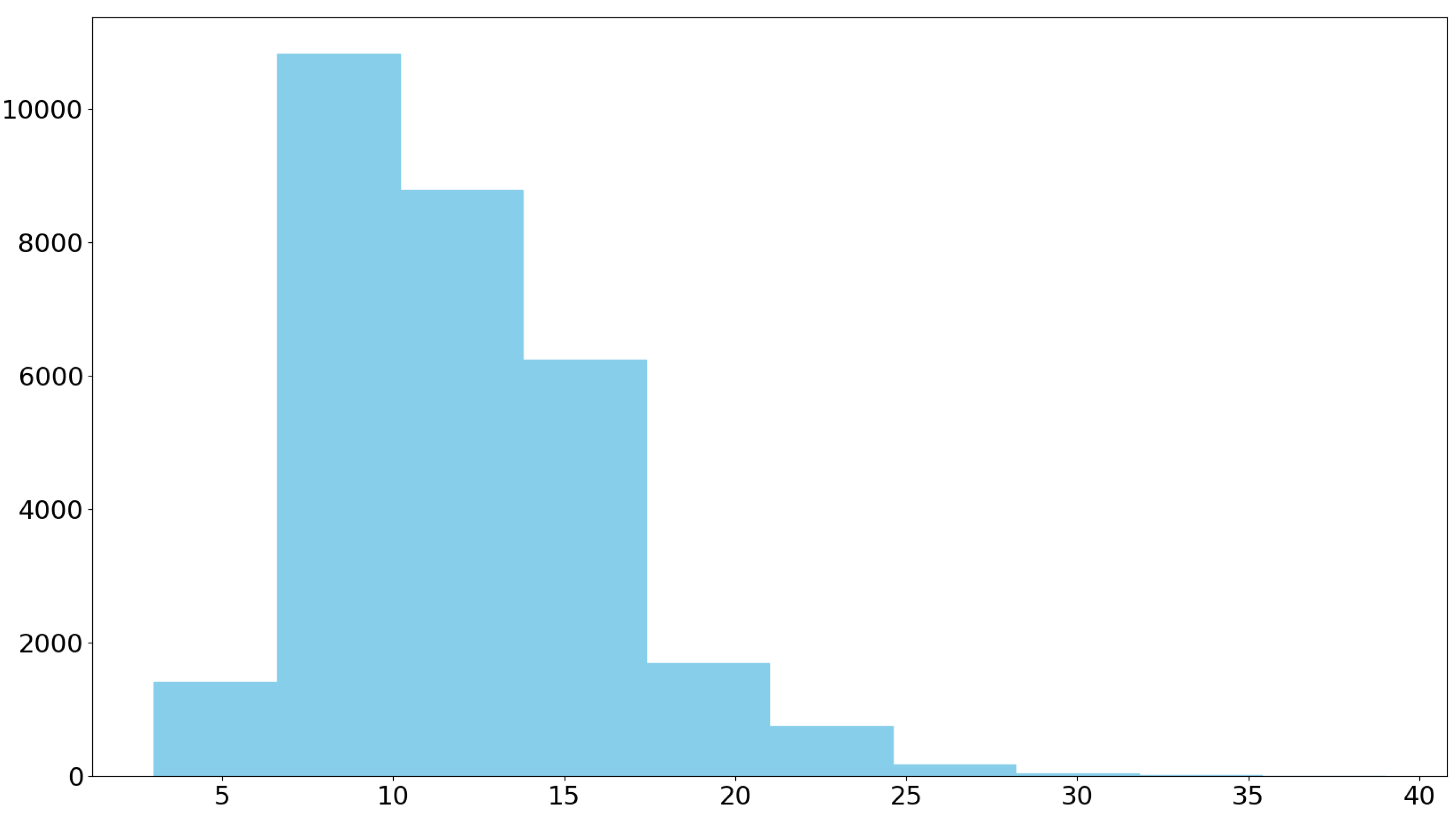

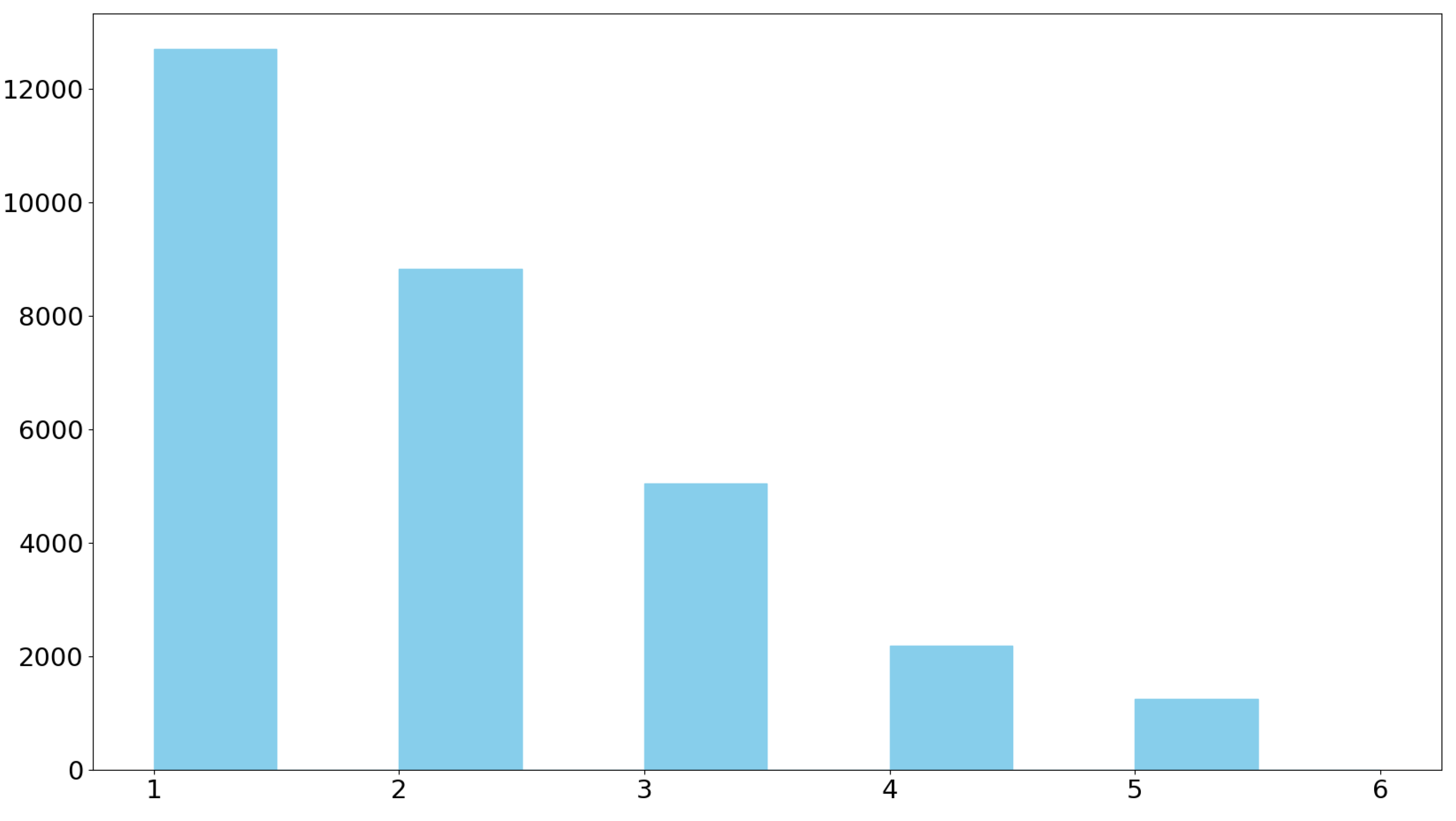

2.3. Statistics

3. Model

3.1. Why Create a Model?

- How much of

- How many of

- Which one of

- How many times

- What year

3.2. Model Setup

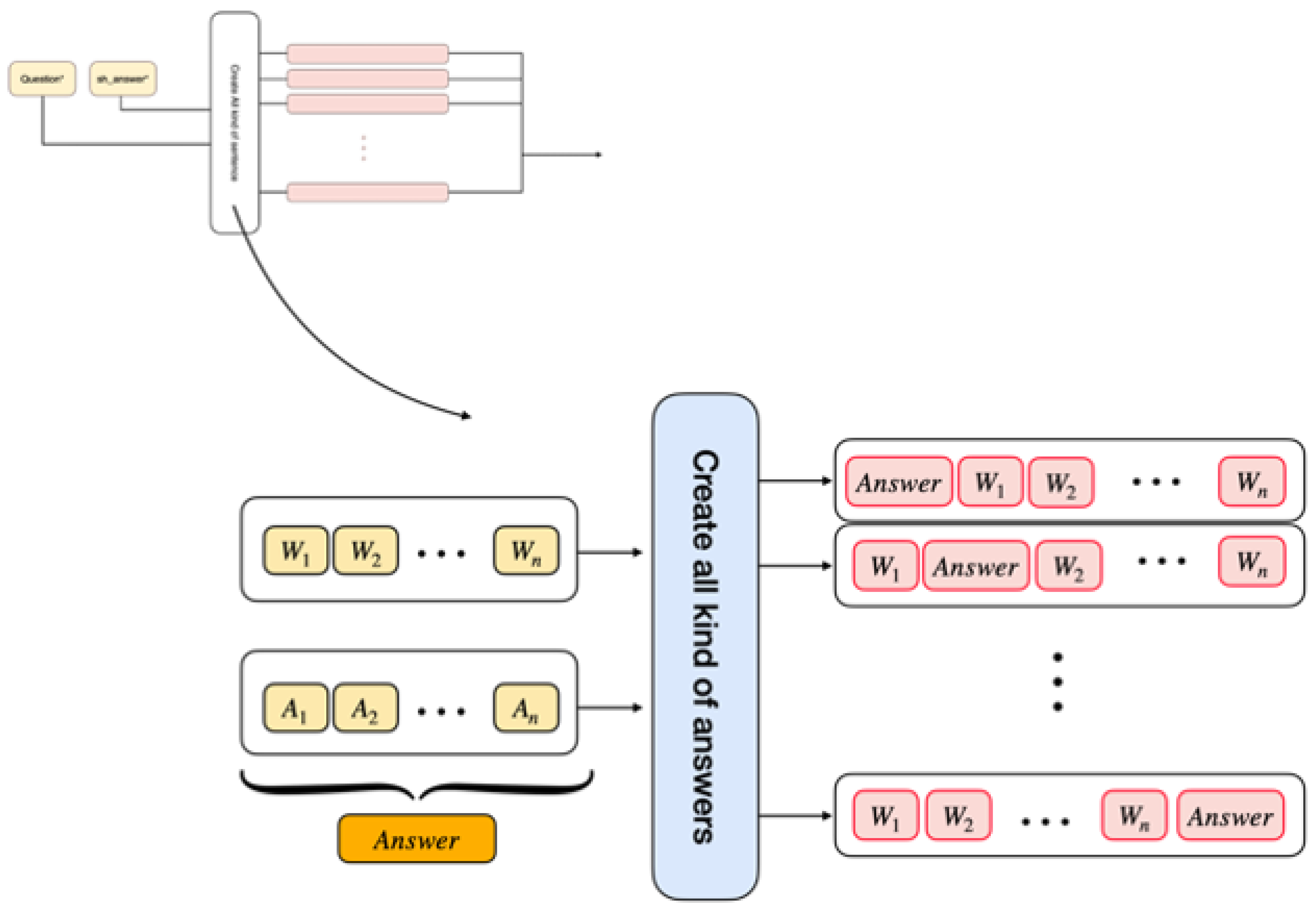

3.3. How to Integrate Inputs

4. Results

5. Applications

6. Suggestions

7. Conclusions

| Integration Method | Loss (after 5 epochs) |
|---|---|
| | 0.127 |
| | 0.103 |
| | 0.109 |

| BLEU-1 | BLEU-2 | BLEU-3 | BLEU-4 | METEOR | ROUGE-1 | ROUGE-2 | ROUGE-L |
|---|---|---|---|---|---|---|---|
| 0.823 | 0.745 | 0.672 | 0.612 | 0.911 | 0.850 | 0.800 | 0.872 |
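BLEU-n and ROUGE are n-gram overlap measures between a generated answer and a reference answer. The sketch below shows the core quantities in plain Python, as an illustration only: it is not the evaluation code behind the reported scores, the function names are hypothetical, and it omits BLEU's brevity penalty, corpus-level aggregation, and METEOR entirely.

```python
from collections import Counter


def ngrams(tokens, n):
    """All contiguous n-grams of a token list, as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def clipped_precision(candidate, reference, n):
    """Modified n-gram precision, the building block of BLEU-n."""
    cand = Counter(ngrams(candidate, n))
    ref = Counter(ngrams(reference, n))
    total = sum(cand.values())
    if total == 0:
        return 0.0
    # Each candidate n-gram counts at most as often as it occurs in the reference.
    overlap = sum(min(count, ref[gram]) for gram, count in cand.items())
    return overlap / total


def rouge_n_recall(candidate, reference, n):
    """Share of reference n-grams that also appear in the candidate."""
    cand = Counter(ngrams(candidate, n))
    ref = Counter(ngrams(reference, n))
    total = sum(ref.values())
    if total == 0:
        return 0.0
    overlap = sum(min(count, cand[gram]) for gram, count in ref.items())
    return overlap / total


def lcs_length(a, b):
    """Length of the longest common subsequence, the basis of ROUGE-L."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]
```

For example, with the candidate `"the cat sat on the mat".split()` and the reference `"the cat is on the mat".split()`, five of the six candidate unigrams are matched, three of the five bigrams are matched, and the longest common subsequence has five tokens.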

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).