Submitted: 21 October 2024

Posted: 22 October 2024

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Drug Development and Federated Learning

2.2. Key Features of Federated Learning

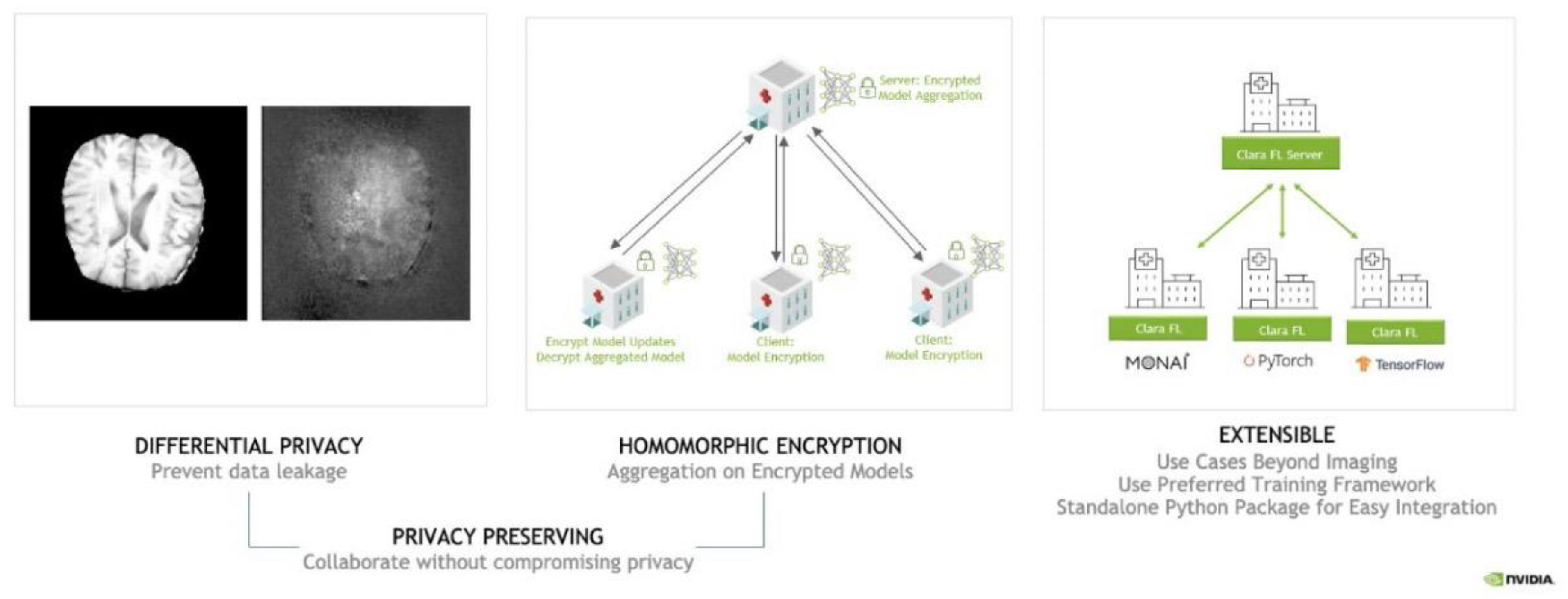

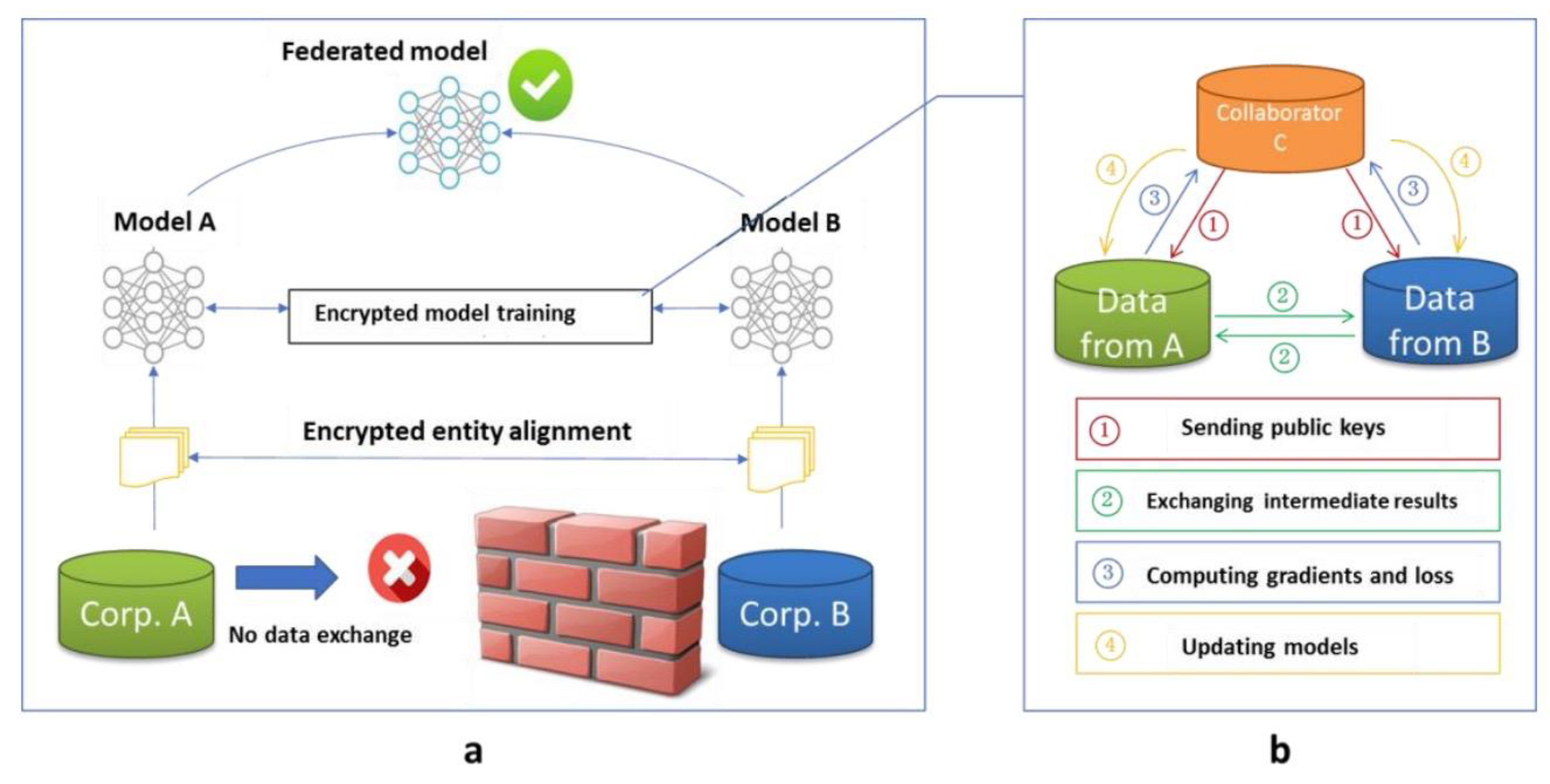

2.3. Federated Learning Integration for Patient Data Privacy

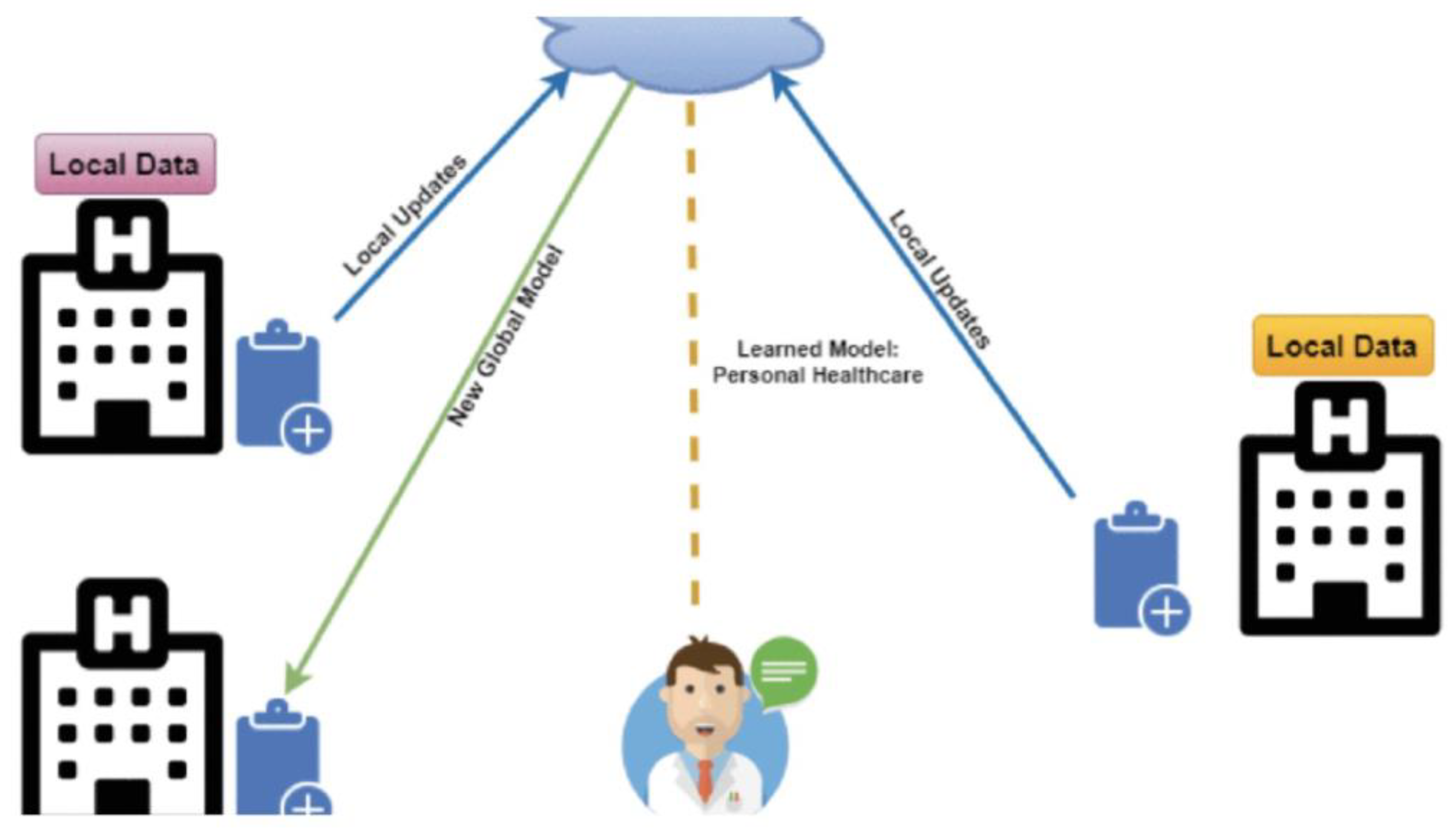

2.4. How Federated Learning (FL) Works in Healthcare

3. Methodology

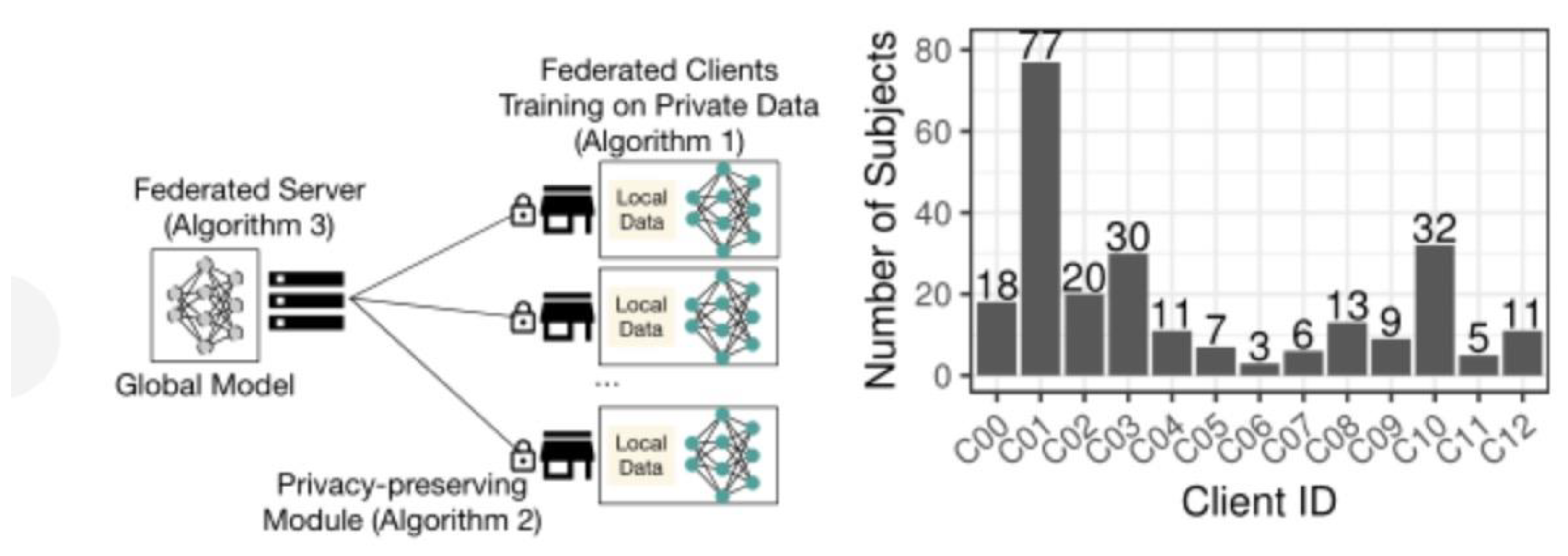

3.1. Federated Learning Framework

3.2. Patient Data Model Training Process

3.3. Patient Data Privacy Protection Model

3.4. Server Data Model Aggregation

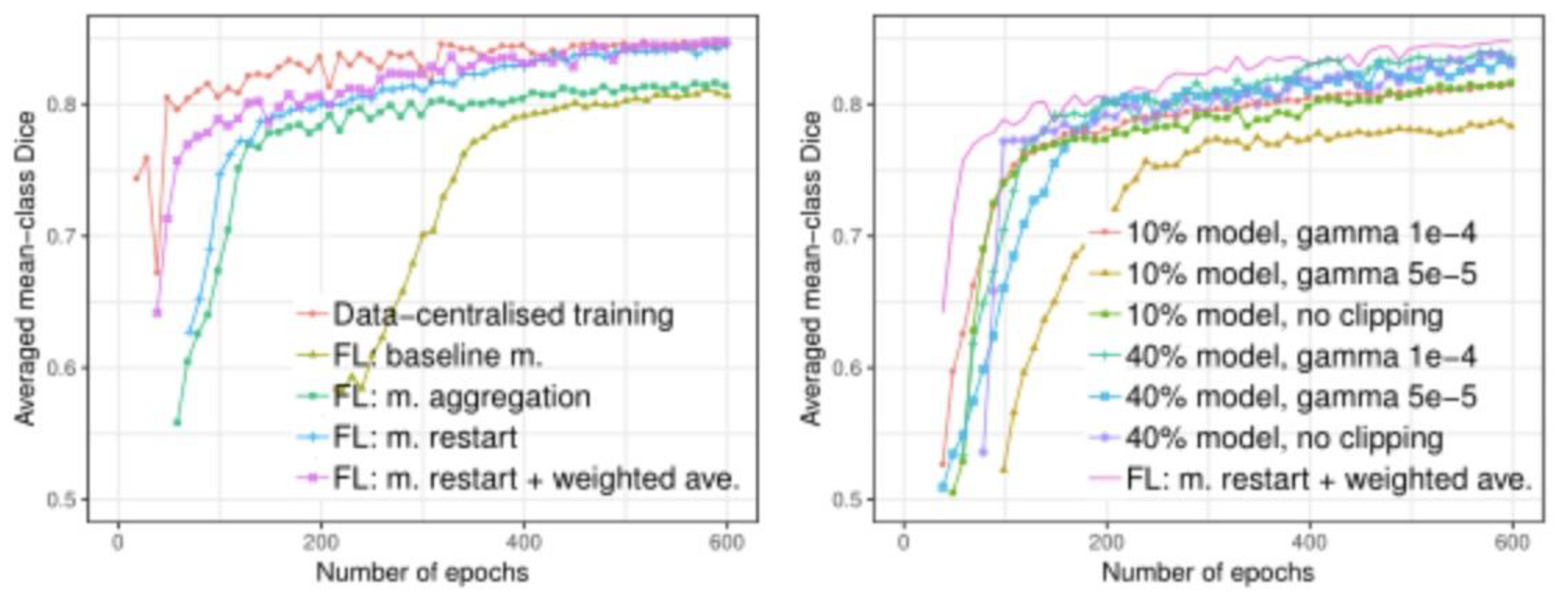

3.5. Experimental Data

3.6. Experimental Results

4. Conclusions and Discussion

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).