Submitted: 18 October 2024

Posted: 21 October 2024


Abstract

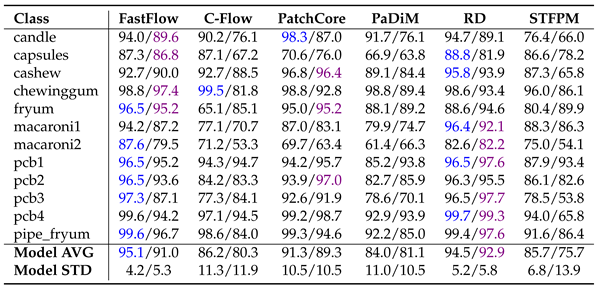

Keywords:

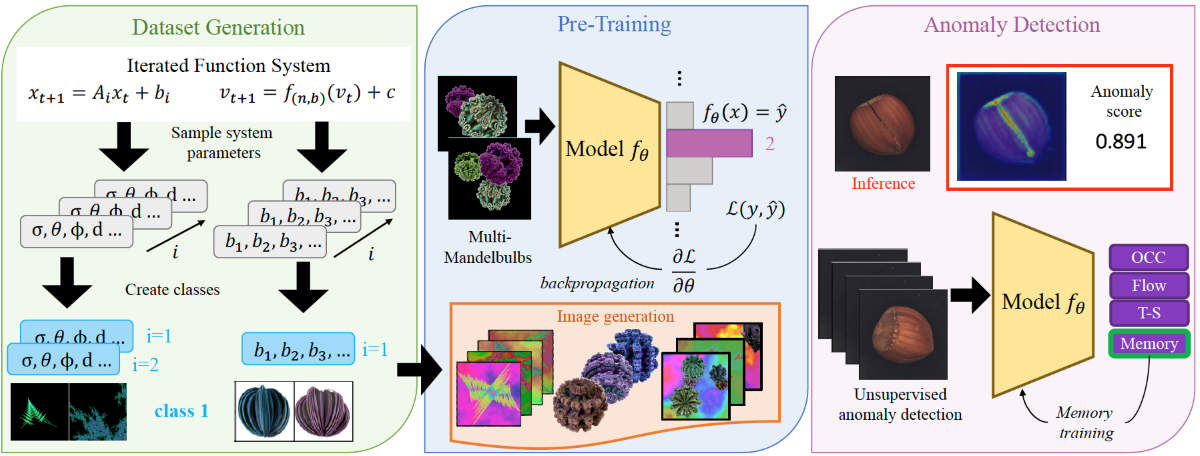

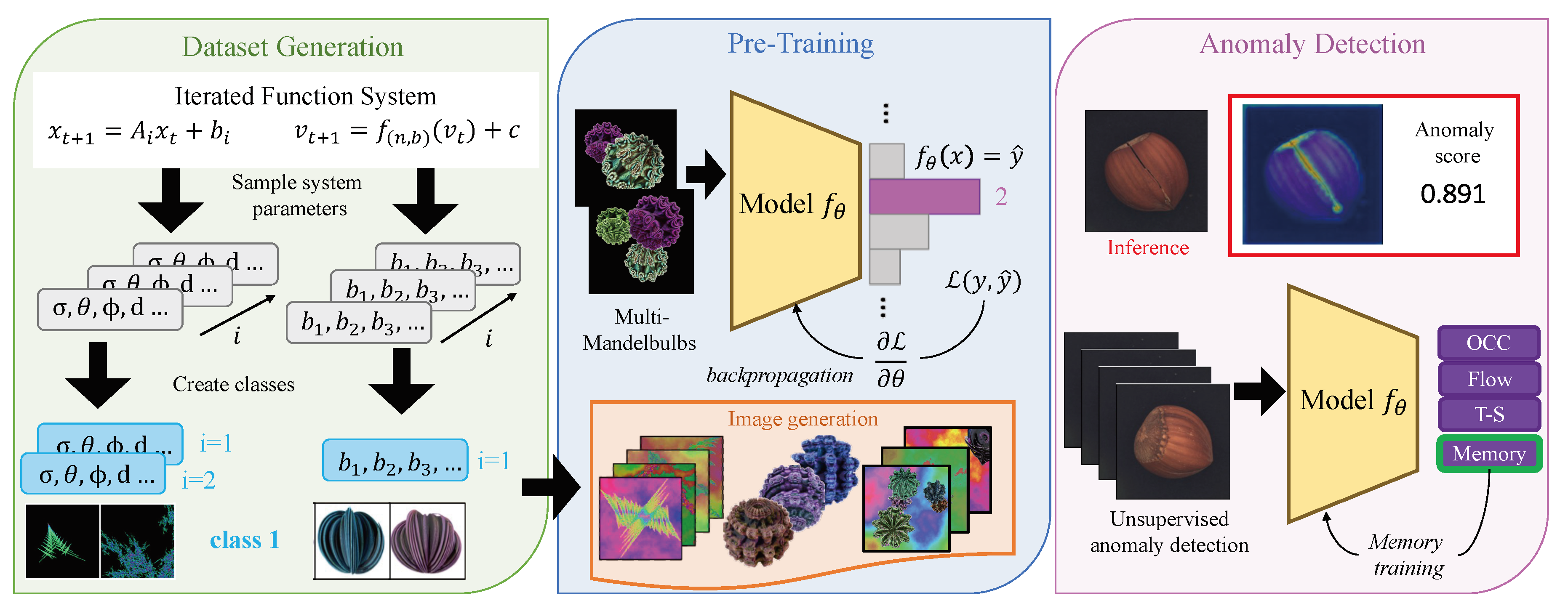

1. Introduction

- We conduct the first systematic analysis comparing the performance of eleven anomaly detection (AD) models pre-trained with fractals against the same models pre-trained with ImageNet on three benchmark datasets designed for real-world industrial inspection scenarios, demonstrating that synthetically generated abstract images can be a valid alternative for defect detection.

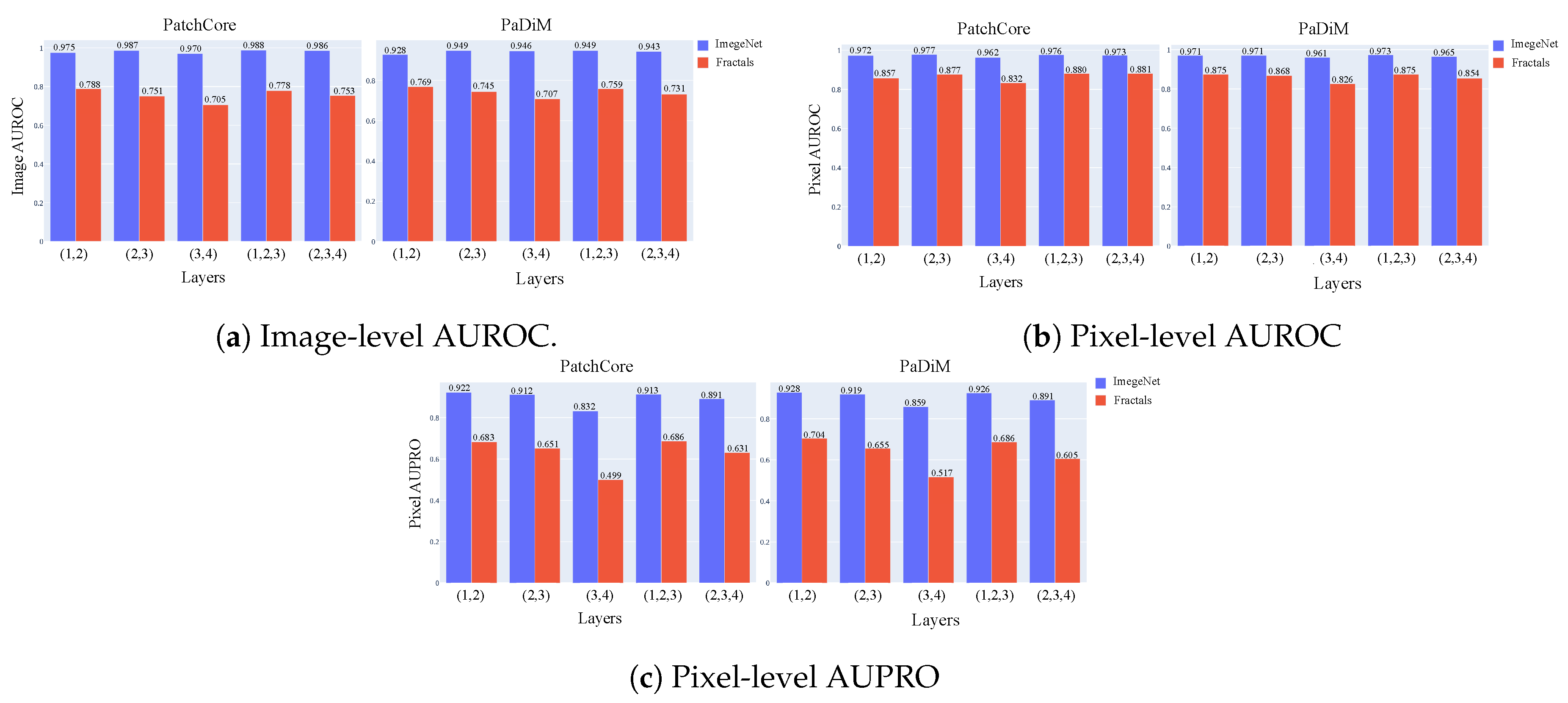

- We analyze the influence of feature hierarchy and object categories in addressing the anomaly detection (AD) task, demonstrating that the effectiveness of fractal-based features is closely tied to the type of anomaly. Nevertheless, we found that low-level fractal features performed better than high-level ones.

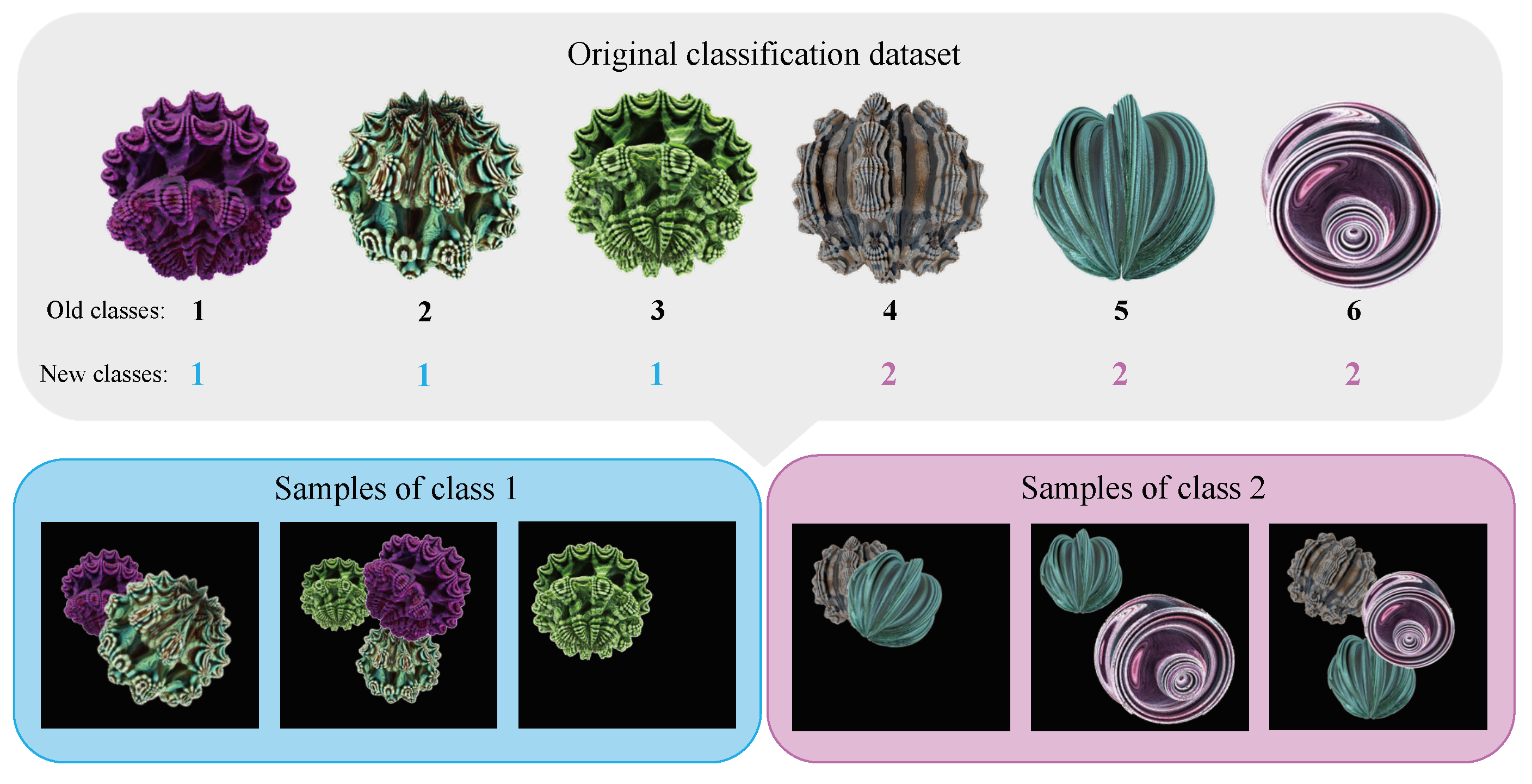

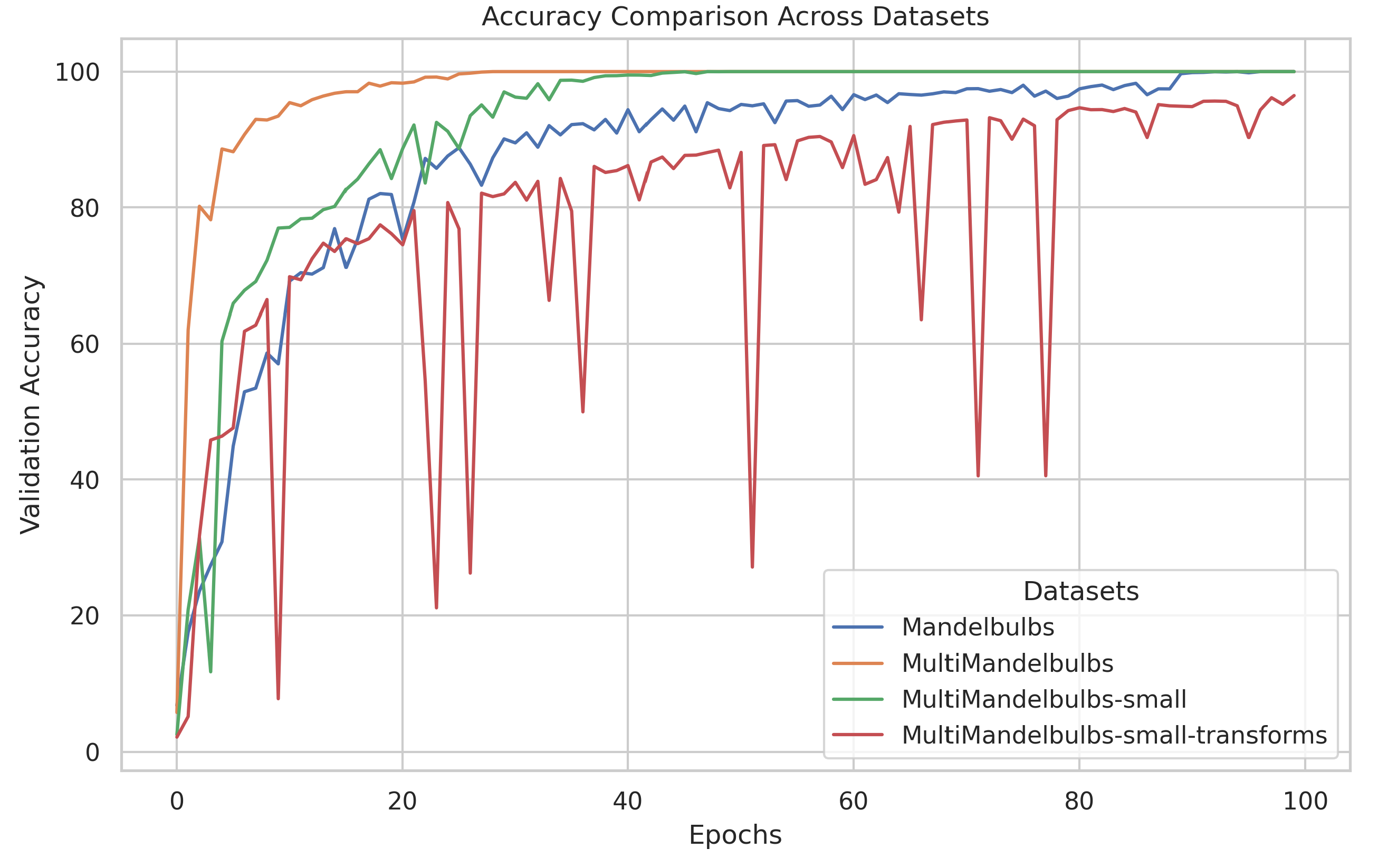

- We introduce a novel procedure for generating classification datasets, dubbed “Multi-Formula”, that integrates multiple fractals, increasing the number of characteristics for each class, and show that this strategy improves performance over the standard (“Single-Formula”) classification dataset under the same training conditions.

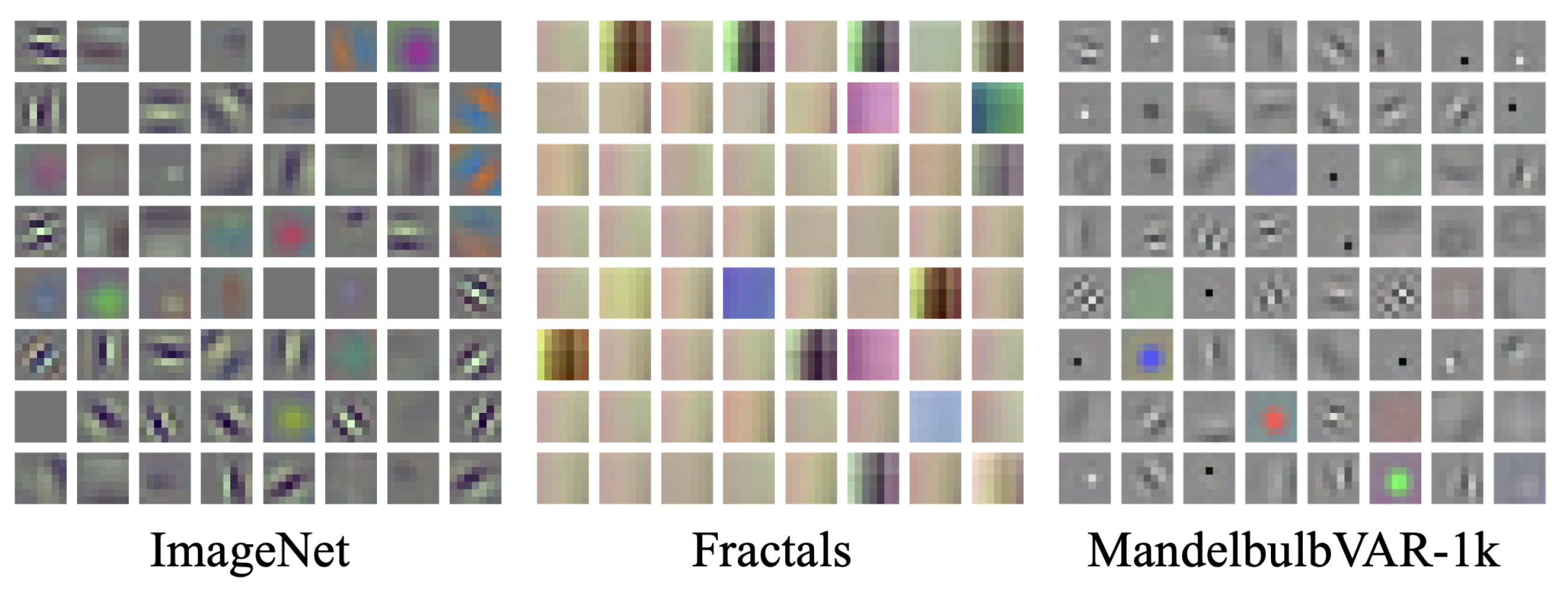

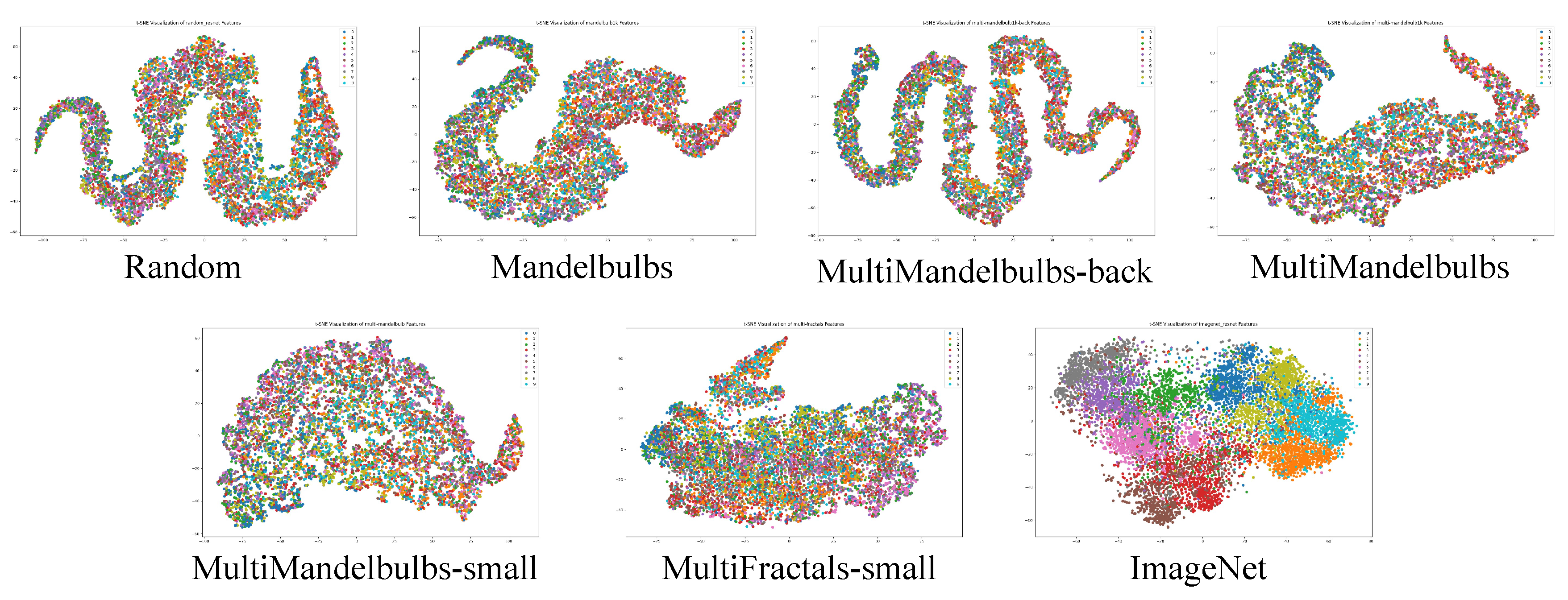

- We demonstrate that the learned weights are influenced by the specific fractals used during pre-training, showing that the presence of filters with complex patterns in the early network layers does not necessarily reflect well-learned weights across the entire architecture. On the contrary, higher-level weights may still be poorly optimized. Thus, we emphasize the importance of conducting a comprehensive analysis of the latent space structure to accurately assess the quality of the learned weights.

- We evaluate how performance varies when a model is trained under different dataset configurations, such as fractal structure type, number of samples, and training settings. We also observe that careful model selection is crucial and that reducing the number of samples and classes improves anomaly detection performance.
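The difference between a “Single-Formula” and a “Multi-Formula” sample mentioned in the contributions above can be illustrated with a minimal sketch. It assumes that each fractal is an Iterated Function System (IFS) rendered with the chaos game and that a Multi-Formula sample overlays several fractals of the same class by a pixel-wise maximum. Both choices, the sampling ranges, and the names `render_ifs`, `random_ifs`, and `multi_formula_sample` are illustrative assumptions, not the procedure used in the paper.

```python
import numpy as np


def render_ifs(maps, n_points=5000, size=64, seed=0):
    """Render one IFS fractal as a binary image using the chaos game.

    Each row (a, b, c, d, e, f) of `maps` encodes the affine map
    (x, y) -> (a*x + b*y + e, c*x + d*y + f).
    """
    rng = np.random.default_rng(seed)
    maps = np.asarray(maps, dtype=float)
    pts = np.empty((n_points, 2))
    x, y = 0.0, 0.0
    for i in range(n_points):
        a, b, c, d, e, f = maps[rng.integers(len(maps))]
        x, y = a * x + b * y + e, c * x + d * y + f
        pts[i] = (x, y)
    pts = pts[100:]  # drop the first points, which may lie off the attractor
    lo, hi = pts.min(axis=0), pts.max(axis=0)
    span = np.where(hi - lo > 0, hi - lo, 1.0)
    idx = ((pts - lo) / span * (size - 1)).astype(int)
    img = np.zeros((size, size), dtype=np.uint8)
    img[idx[:, 1], idx[:, 0]] = 1
    return img


def random_ifs(rng, k=3):
    """Sample k contractive affine maps (hypothetical sampling scheme)."""
    linear = rng.uniform(-0.45, 0.45, size=(k, 4))  # keeps every map contractive
    shift = rng.uniform(-1.0, 1.0, size=(k, 2))
    return np.hstack([linear, shift])


def multi_formula_sample(formulas, seed=0, size=64):
    """Assumed Multi-Formula sample: overlay several fractals of one class."""
    imgs = [render_ifs(f, size=size, seed=seed + j) for j, f in enumerate(formulas)]
    return np.maximum.reduce(imgs)


rng = np.random.default_rng(0)
class_formulas = [random_ifs(rng) for _ in range(3)]  # formulas of one class

single = render_ifs(class_formulas[0], seed=0)        # Single-Formula sample
multi = multi_formula_sample(class_formulas, seed=0)  # Multi-Formula sample
print(single.sum(), multi.sum())  # lit pixels in each sample
```

In this sketch the Multi-Formula image contains every pixel of the Single-Formula image plus the structure of the other fractals, which is one way to read "increasing the number of characteristics for each class".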

2. Related Works

2.1. Formula-Driven Supervised Learning

2.2. Anomaly Detection

3. Dataset Generation Methods

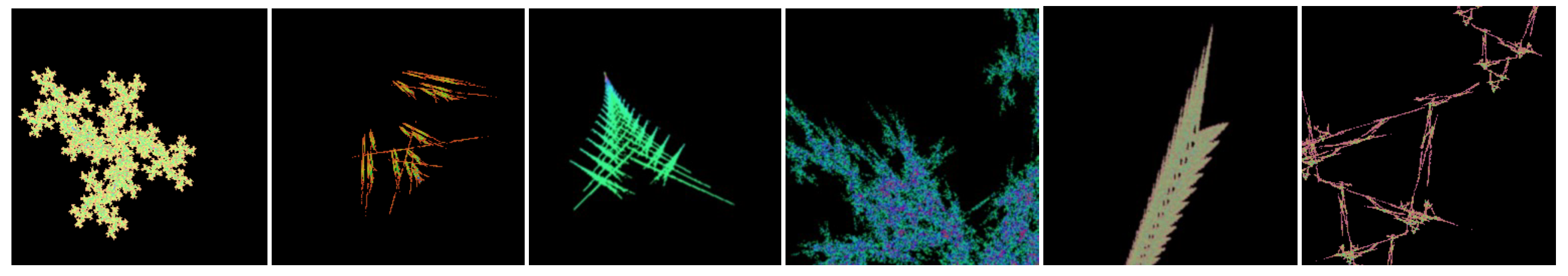

3.1. Fractals Images

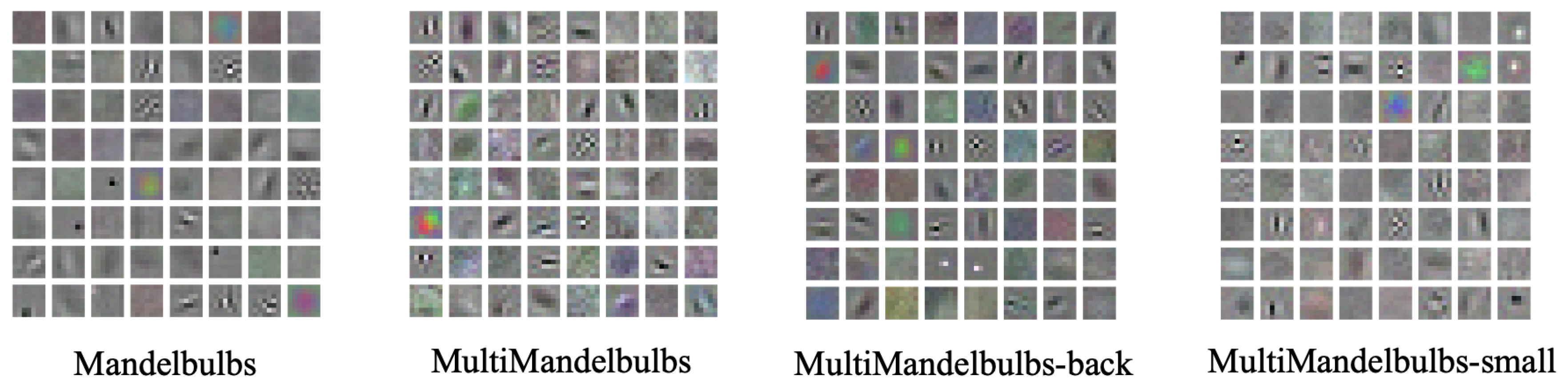

3.2. Mandelbulb Variations

3.3. Multi-Formula Dataset

4. Implementation Details

4.1. Datasets

4.2. Anomaly Detection Methods

4.3. Evaluation Metrics

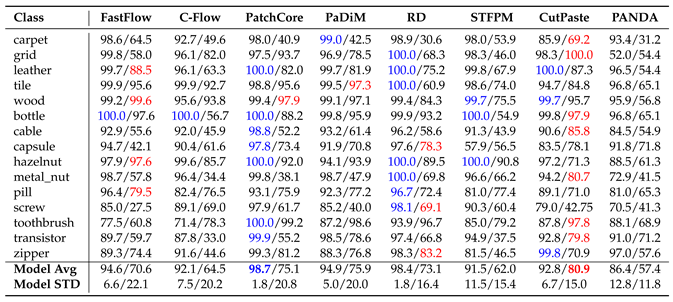

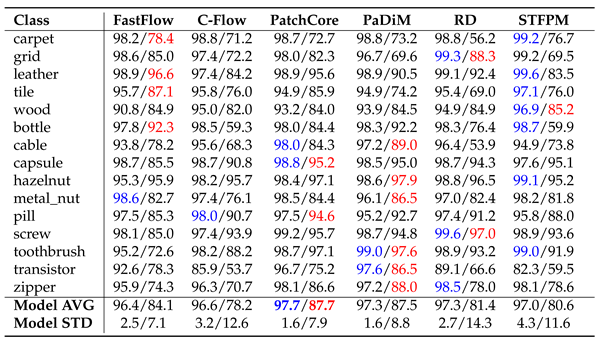

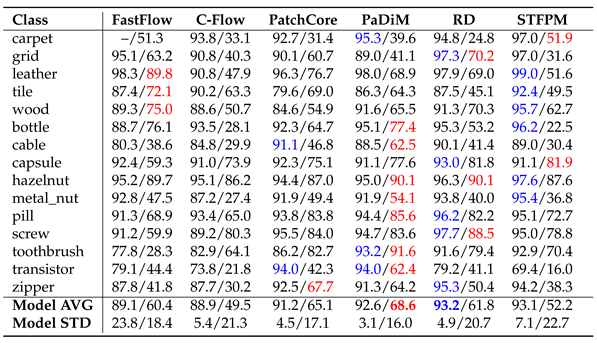

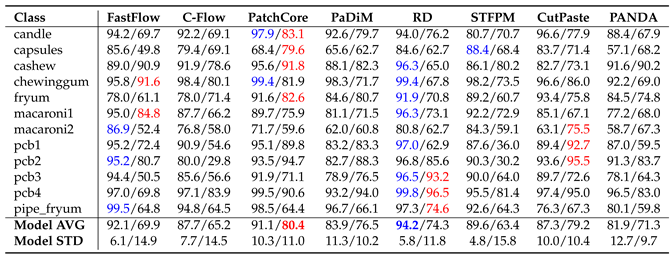

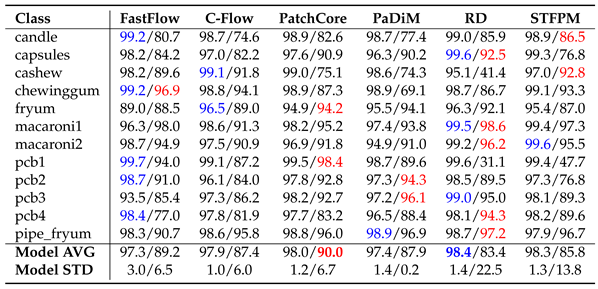

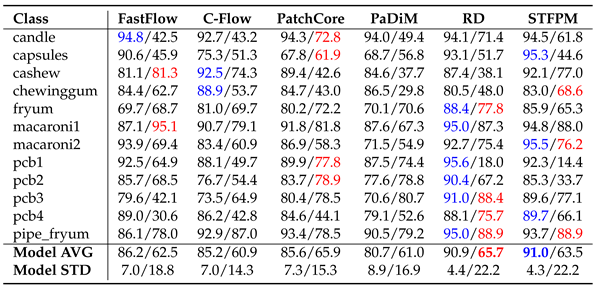

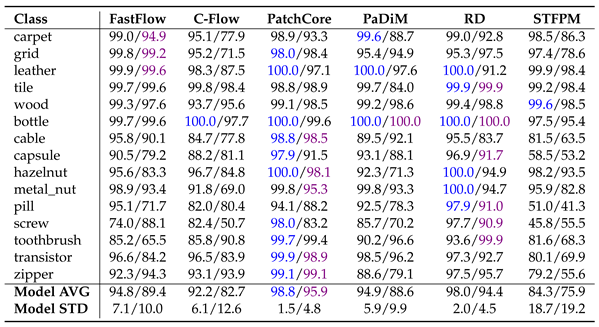

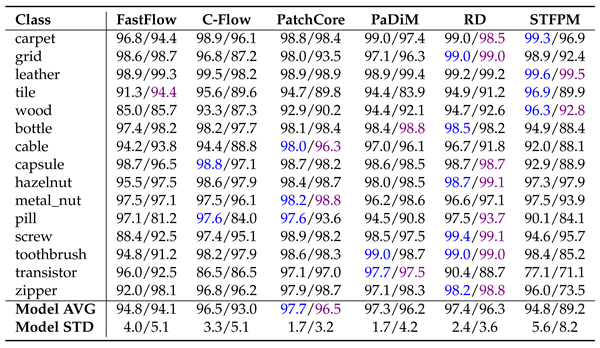

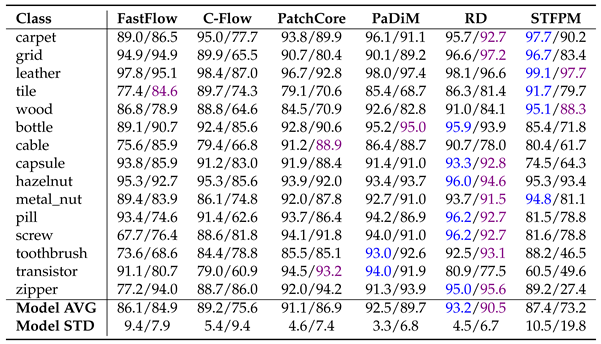

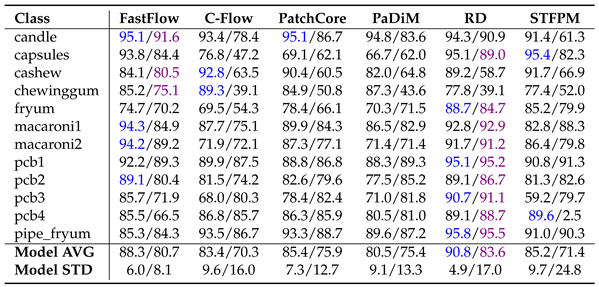

5. Performance of Different Anomaly Detection Methods on Fractals Dataset

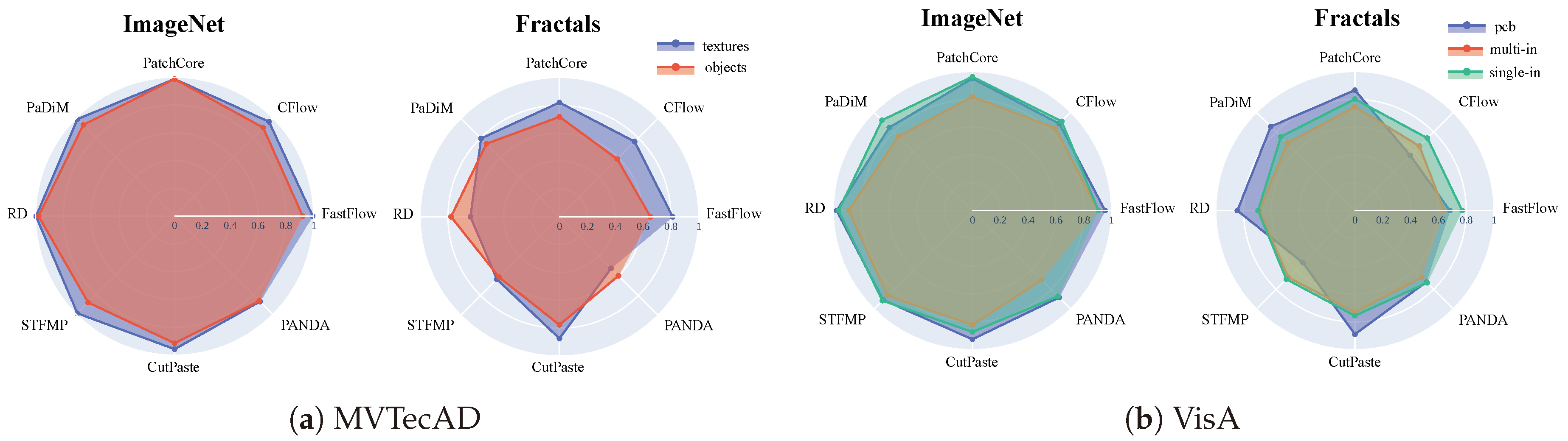

5.1. Comparison between Object Categories

5.2. Impact of Feature Hierarchy

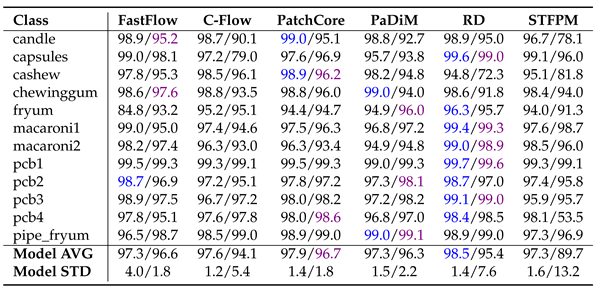

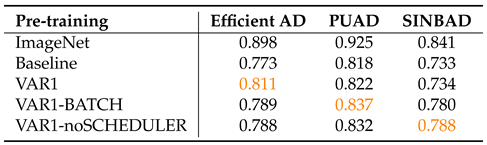

6. Performance of Different Anomaly Detection Methods on MandelbulbVAR-1k Dataset

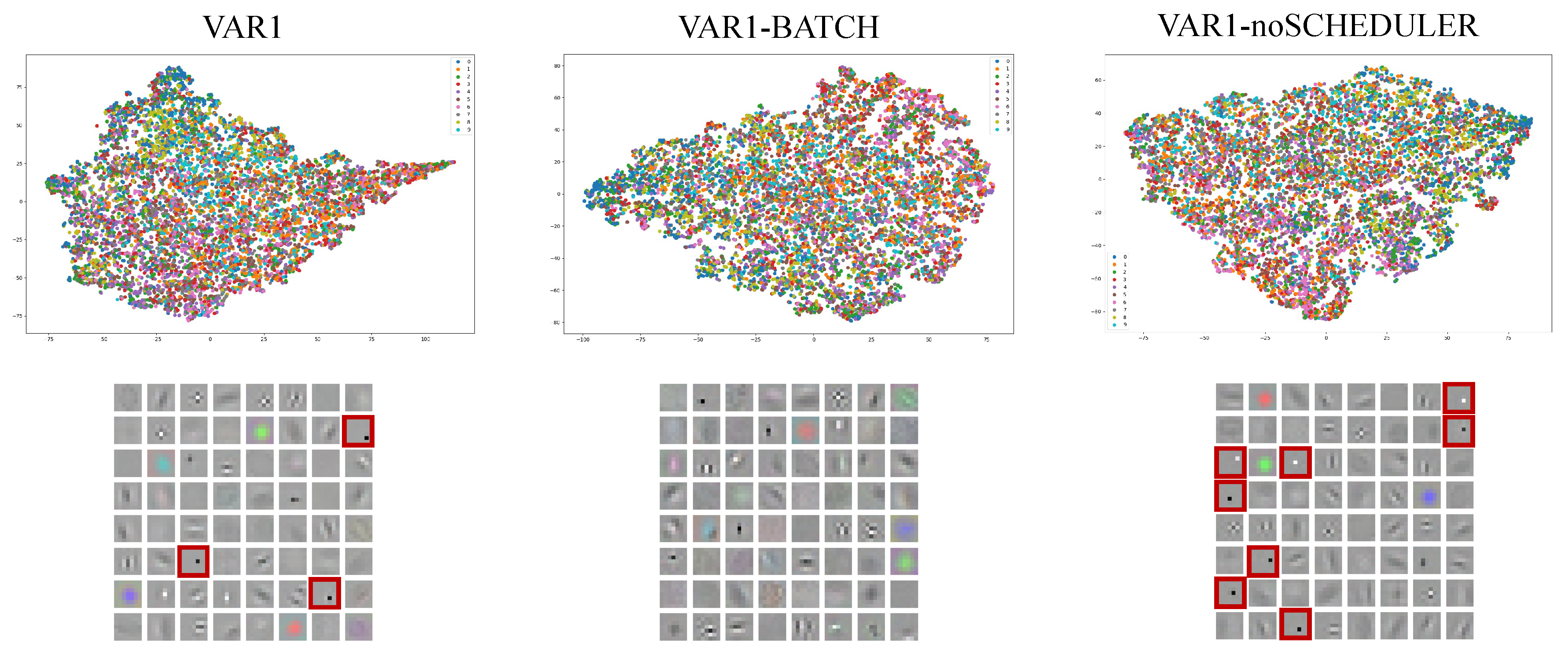

6.1. Visualization of Learned Low-Level Filters

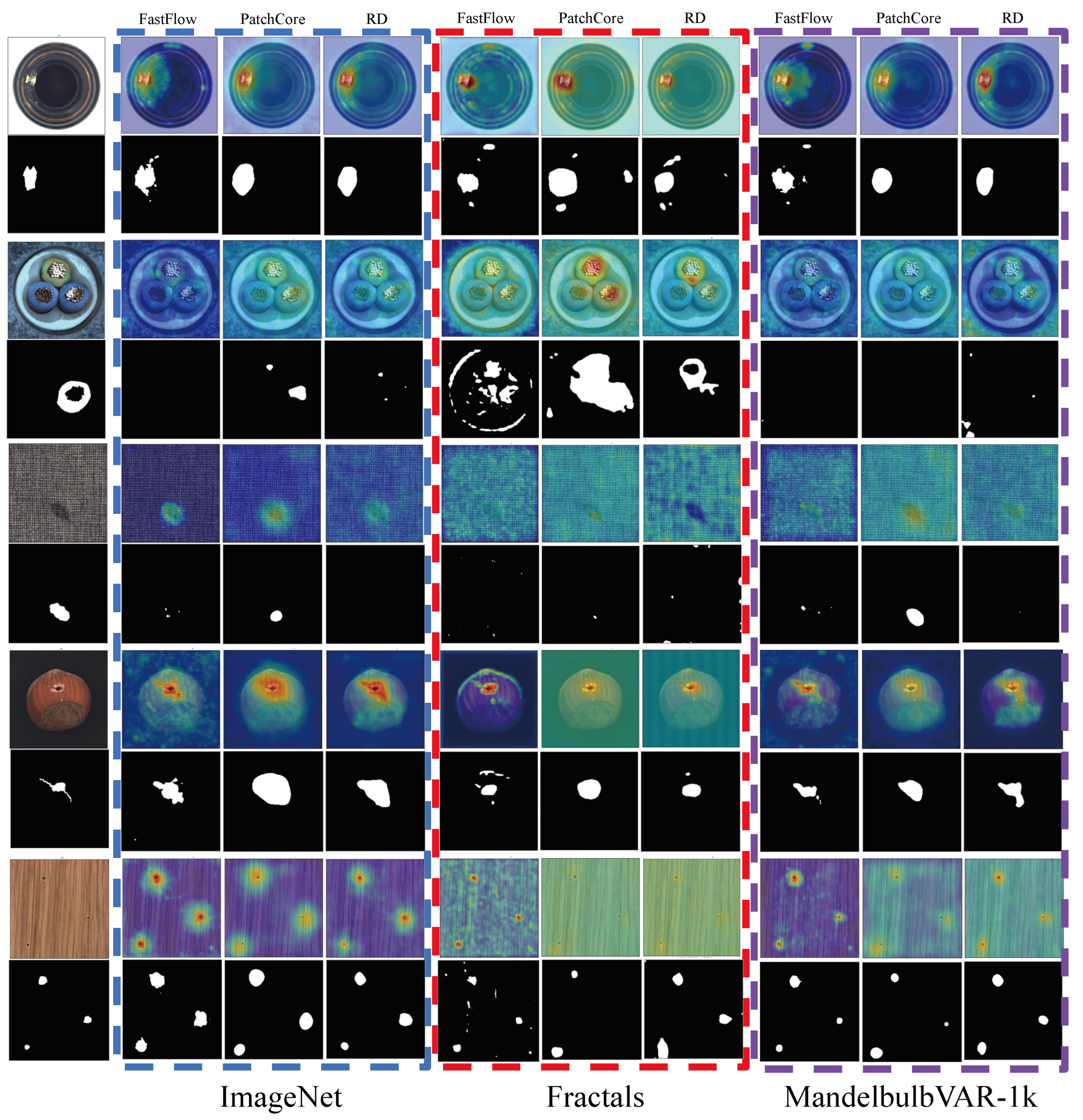

6.2. Qualitative Results

7. Ablation Study

| Pre-training | Image AUROC | Pixel AUROC |
|---|---|---|
| Random initialization | 0.772 | 0.860 |
| ImageNet (1000 cl.) | 0.991 | 0.981 |
| Mandelbulbs (1000 cl.) | 0.678 | 0.784 |
| MultiMandelbulbs (1000 cl.) | 0.809 | 0.919 |
| MultiMandelbulbs-back (1000 cl.) | 0.719 | 0.833 |
| Fractals (200 cl.) | 0.720 | 0.823 |
| MultiFractals (200 cl.) | 0.771 | 0.900 |
| Mandelbulbs (200 cl.) | 0.695 | 0.802 |
| MultiMandelbulbs (200 cl.) | 0.817 | 0.921 |
| MultiMandelbulbs-back (200 cl.) | 0.699 | 0.781 |
| MultiMandelbulbs-transforms (200 cl.) | 0.791 | 0.912 |
| MultiMandelbulbs-gray (200 cl.) | 0.793 | 0.908 |
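The two metric columns in the table above can be read with the help of a small sketch. It computes a rank-based AUROC (the Mann-Whitney formulation, with average ranks for ties) once per image and once per pixel. Using the maximum of the anomaly map as the image-level score is a common convention and an assumption here, since the source does not state how image scores are derived; the helper names `auroc` and `image_and_pixel_auroc` and the toy data are hypothetical.

```python
import numpy as np


def auroc(scores, labels):
    """Rank-based AUROC; tied scores receive their average rank."""
    scores = np.asarray(scores, dtype=float).ravel()
    labels = np.asarray(labels).ravel().astype(bool)
    _, inverse, counts = np.unique(scores, return_inverse=True, return_counts=True)
    avg_rank = np.cumsum(counts) - (counts - 1) / 2.0
    ranks = avg_rank[inverse]
    n_pos = labels.sum()
    n_neg = labels.size - n_pos
    u_stat = ranks[labels].sum() - n_pos * (n_pos + 1) / 2.0
    return float(u_stat / (n_pos * n_neg))


def image_and_pixel_auroc(anomaly_maps, gt_masks):
    """Return (image AUROC, pixel AUROC) for a stack of anomaly maps."""
    maps = np.asarray(anomaly_maps, dtype=float)
    masks = np.asarray(gt_masks).astype(bool)
    # Assumption: an image is scored by its most anomalous pixel.
    image_scores = maps.reshape(len(maps), -1).max(axis=1)
    image_labels = masks.reshape(len(masks), -1).any(axis=1)
    return auroc(image_scores, image_labels), auroc(maps, masks)


# Toy data: two defect-free images and two images with one defective pixel.
toy_maps = np.zeros((4, 4, 4))
toy_masks = np.zeros((4, 4, 4), dtype=bool)
for i in (2, 3):
    toy_maps[i, 0, 0] = 1.0
    toy_masks[i, 0, 0] = True

image_auc, pixel_auc = image_and_pixel_auroc(toy_maps, toy_masks)
check = auroc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1])
print(image_auc, pixel_auc, check)
```

Image AUROC measures whether defective images are ranked above defect-free ones, while Pixel AUROC measures the same ranking over individual pixels, so it reflects localization quality.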

7.1. Convergence Speed in FDSL Training

7.2. Low- and High-Level Features Analysis

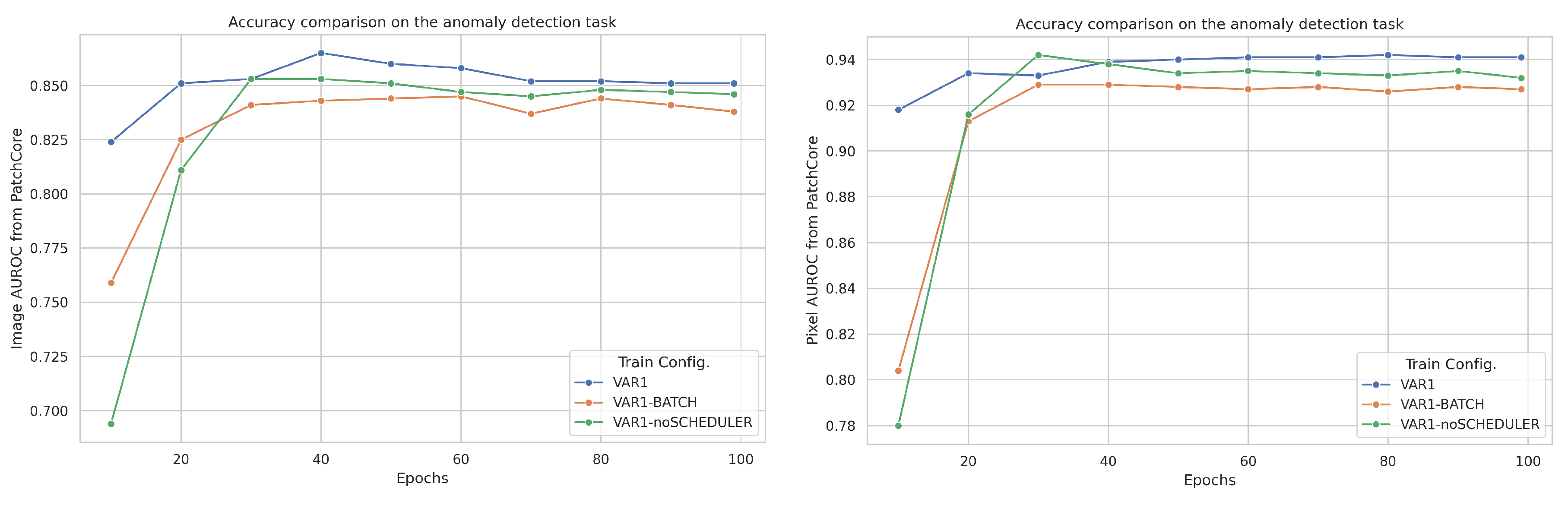

7.3. Impact of Training Configuration

| Train Config. | Best Epoch | Best Val. Acc. | Image AUROC | Pixel AUROC |
|---|---|---|---|---|
| VAR1 | 83 | 9.33 | 0.857 | 0.941 |
| VAR1-BATCH | 25 | 10.01 | 0.836 | 0.932 |
| VAR1-noSCHEDULER | 97 | 16.69 | 0.844 | 0.931 |

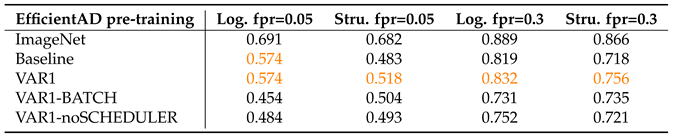

7.4. Results on MVTec LOCO AD

8. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AD | Anomaly Detection |
| FDSL | Formula-Driven Supervised Learning |
| IFS | Iterated Function System |



Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).