6. The Spacetime Interface: Mass and Spin

In the last section we showed how the energy and momentum of a free particle in spacetime can be the projection of a Markovian RCC, and are intimately connected to the number of asymptotic sets in the RCC. What about mass and spin? Can these also be seen as projections of properties of an RCC? That is the topic of this section.

We begin by modifying the RCC of Example 1 from the last section.

Example 2: Q-harmonic Functions and Wavefunctions. Consider the Markovian kernel on eight states,

, given by

L has one absorbing set, containing all 8 states, so

. This absorbing set has 4 asymptotic events, viz., the 4 sets of states

, so

. It has exactly the same form for the 4 eigenvalues, eigenfunctions, Q-harmonic functions, and wavefunctions as Example 1.

So what is the difference? A key difference is that the entropy rate of Example 1 is 0, but the entropy rate of Example 2 is greater than 0. We propose that

and thus that Examples 1 and 2 differ in the masses of their particles.

We briefly review the definition of entropy rate [22]. A stochastic process $X = (X_1, X_2, \ldots)$ is a sequence of random variables. If $X$ is generated by a stationary Markov chain, then asymptotically the joint entropy $H(X_1, \ldots, X_N)$ grows linearly with $N$ at rate $H(X)$, which is called the entropy rate of the process. An irreducible, or ergodic, chain is one for which there is a non-zero probability of going from any given state to any other state in some finite number of steps. Such chains possess a unique stationary measure. The entropy rate of an ergodic Markov chain with stationary measure $\mu$ and transition probability $P$ is given by

$$H(P) = -\sum_{i} \mu_i \sum_{j} P_{ij} \log_2 P_{ij}.$$

This says that the entropy rate of P is the weighted sum of the entropies of the probability measures constituting its rows, where the weighting is the stationary measure of the Markov kernel. Notice that a row of a Markov kernel with a single unit entry (and therefore all other entries 0) has zero entropy. So a Markov kernel all of whose rows are of this type will have a 0 entropy rate. Such kernels constitute the periodic kernels [18]: periodic chains have 0 entropy rate.
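The entropy rate just described can be sketched numerically. The following is a minimal illustration, assuming base-2 logarithms and an irreducible kernel; the function names `stationary_measure` and `entropy_rate` and the two test kernels are ours, not part of the original text.

```python
import numpy as np

def stationary_measure(P):
    """Solve mu P = mu with sum(mu) = 1 for an irreducible kernel P."""
    P = np.asarray(P, dtype=float)
    n = P.shape[0]
    # Stack the balance equations (P^T - I) mu = 0 with a normalization row.
    A = np.vstack([P.T - np.eye(n), np.ones((1, n))])
    b = np.zeros(n + 1)
    b[-1] = 1.0
    mu, *_ = np.linalg.lstsq(A, b, rcond=None)
    return mu

def entropy_rate(P):
    """Entropy rate in bits: -sum_i mu_i sum_j P_ij log2 P_ij."""
    P = np.asarray(P, dtype=float)
    mu = stationary_measure(P)
    # Treat 0 * log 0 as 0 by only taking logs of positive entries.
    logP = np.zeros_like(P)
    mask = P > 0
    logP[mask] = np.log2(P[mask])
    return float(-np.sum(mu[:, None] * P * logP))

# Illustrative kernels (assumptions): the period-2 NOT kernel and a fair-coin kernel.
NOT = [[0.0, 1.0], [1.0, 0.0]]
UNIFORM = [[0.5, 0.5], [0.5, 0.5]]
print(entropy_rate(NOT))      # every row has a single unit entry
print(entropy_rate(UNIFORM))  # every row is a fair coin
```

The periodic kernel gives an entropy rate of zero, while the fair-coin kernel gives one bit per step, which matches the row-by-row reading of the formula.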

So if the physical mass of a particle is a projection of the entropy rate of a communicating class of CAs, then Examples 1 and 2 differ in the mass of the particle they represent. We can continue, by making similar examples whose 4 asymptotic events each contain more and more states, and thus potentially greater entropy rates, i.e., greater masses. As we said before, we think of this communicating class as arising from a sampled trace chain, so that the communicating class and the particle to which it projects are both results of an observation, and are not taken as an objective reality independent of observation.

Why should we posit this connection between mass and entropy rate? Intuitively, the more internal interactions an object has, the less it is affected by outside influences, i.e., the greater its “inertia.” Similarly, the more connections a given state of a communicating class has with its other states, the wider influence it has with other states. If a state has only one connection, then its row in the Markov kernel is all 0’s except for a single 1: the entropy of its row is 0. The more connections a state has, i.e., the more nonzero entries it has in its row, the greater its entropy will tend to be. Another way to say this is that the entropy rate is the minimum expected codeword length per symbol of a stationary stochastic process [22]. Greater entropy rate thus leads to “weightier” descriptions.

A physical analogy gives insight into how an intrinsic ‘mass’ of a particle can arise from sampling and trace-chaining a Markovian dynamics: the Langevin equation for the Brownian motion of a pollen particle [37,38]. Considering n interacting entities, one can ‘trace’ the coupled motion of the entire system onto only a subset of the entities, with the ignored entities leaving their trace on the resulting dynamics of the fewer entities. For the Brownian particle, that subset is the pollen grain under consideration, incessantly bombarded by and interacting with its environmental molecules. In our language, we can say that the Brownian particle ‘observes’ (i.e., samples) the whole system via the ‘trace-chaining’ operation. The equation of motion for the Brownian particle is then described by the Langevin equation, which is typically characterized by three properties: (i) a fluctuation term representing the initial conditions of the neglected degrees of motion; (ii) a ‘damping’ or dissipation term representing ‘friction’ or viscous drag in the soup of the entities of the environmental bath; and (iii) a memory of previous motion, as feedback from sampling, i.e., tracing. Statistical mechanics connects the ‘fluctuation’ to ‘dissipation’ and ‘memory’ via the ‘fluctuation-dissipation’ theorem. Friction implies an inertial ‘mass’ experiencing ‘damping’, or resistance, as ‘dissipation’. This dissipation is nothing but thermodynamic ‘heat’, connected with the loss of information from the whole system onto the Brownian particle: the ‘entropy flow or rate’ within the tracing/sampling process. This supports the conjecture connecting the ‘entropy rate’ of a sampled trace chain to the ‘mass’ of a particle.

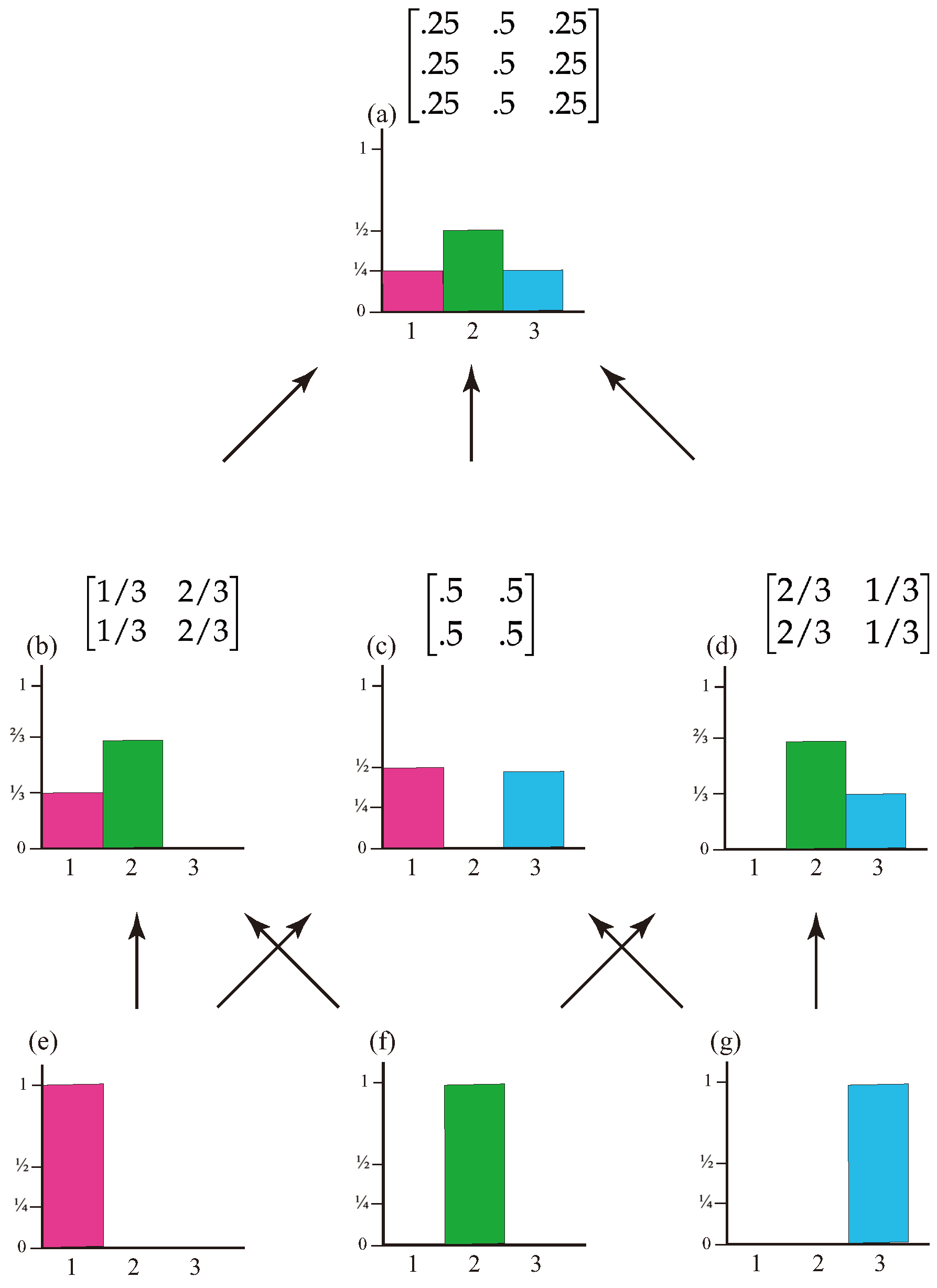

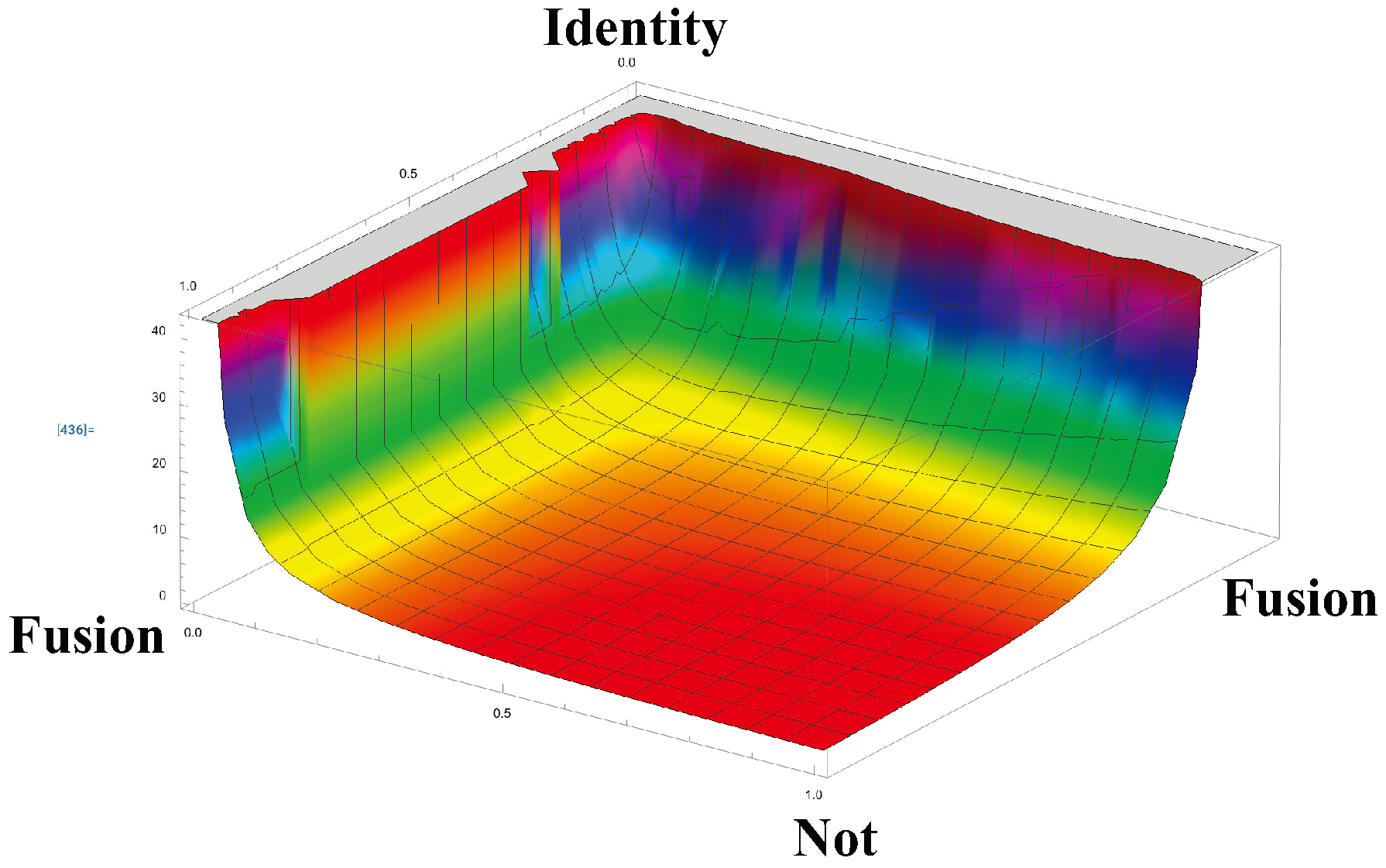

Figure 6 plots the entropy rate for each kernel in the Markov polytope of $2 \times 2$ Markov matrices. The maximum entropy rate in this polytope is 1. The maximum entropy rate for matrices in the Markov polytope of $n \times n$ Markov matrices is $\log_2 n$. Thus enormous matrices are required to get large masses. For instance, the maximum mass for Markov kernels of dimension $10^{552}$ is about 1834, which is roughly the proton-electron mass ratio. Now $10^{552}$ is a lot of elementary conscious agents. To compare, the number of particles in the observable universe is roughly $10^{80}$.
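The arithmetic behind this scaling can be checked with a short sketch. It assumes entropy is measured in bits, so that the uniform kernel on n states (every row equal to 1/n) attains the maximum entropy rate of log2 n, consistent with the maximum of 1 for two states. The helper names are hypothetical.

```python
import math

def row_entropy_bits(row):
    """Shannon entropy (bits) of one probability row, with 0 log 0 = 0."""
    return -sum(p * math.log2(p) for p in row if p > 0)

def max_entropy_rate(n):
    """Entropy rate of the uniform n-state kernel.

    Its stationary measure is uniform and every row has the same entropy,
    so the entropy rate equals the entropy of a single row, log2(n).
    """
    return row_entropy_bits([1.0 / n] * n)

def dimension_for_mass(h_bits):
    """Smallest power-of-two dimension n with log2(n) >= h_bits."""
    return 2 ** math.ceil(h_bits)

print(max_entropy_rate(2))   # 1.0 for two states
n = dimension_for_mass(1834)
print(len(str(n)))           # number of decimal digits of that dimension
```

Because the entropy rate grows only logarithmically in the number of states, a target of about 1834 bits per step requires a dimension with several hundred decimal digits.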

This claimed link between mass and entropy rate of an RCC faces two obvious challenges. First, quantum theory dictates that massless particles have spin 1, with helicities $+1$ or $-1$. (There is the theoretical possibility of a massless spin 2 particle for gravity, but no experimental evidence yet.) Second, relativity dictates that massless particles always move at the speed of light. Can entropy rate meet these challenges? Quite nicely, it appears.

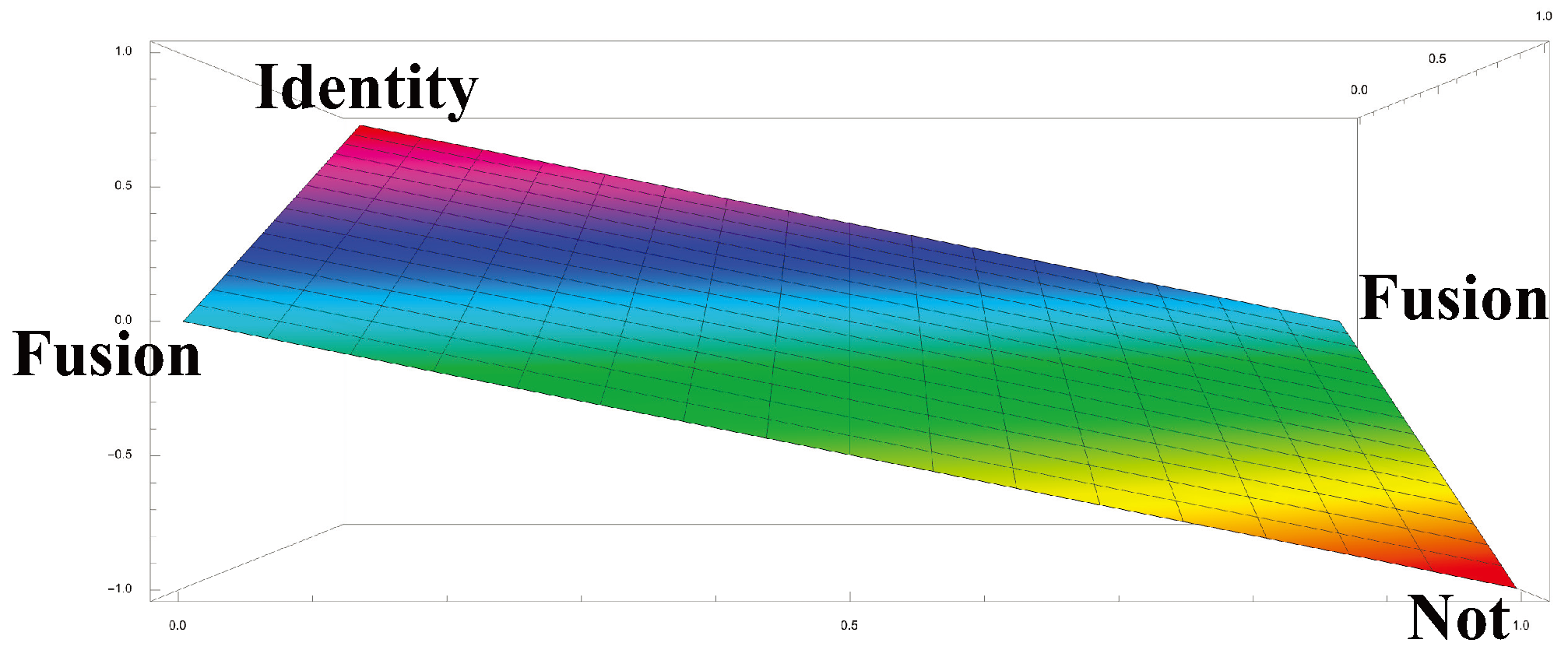

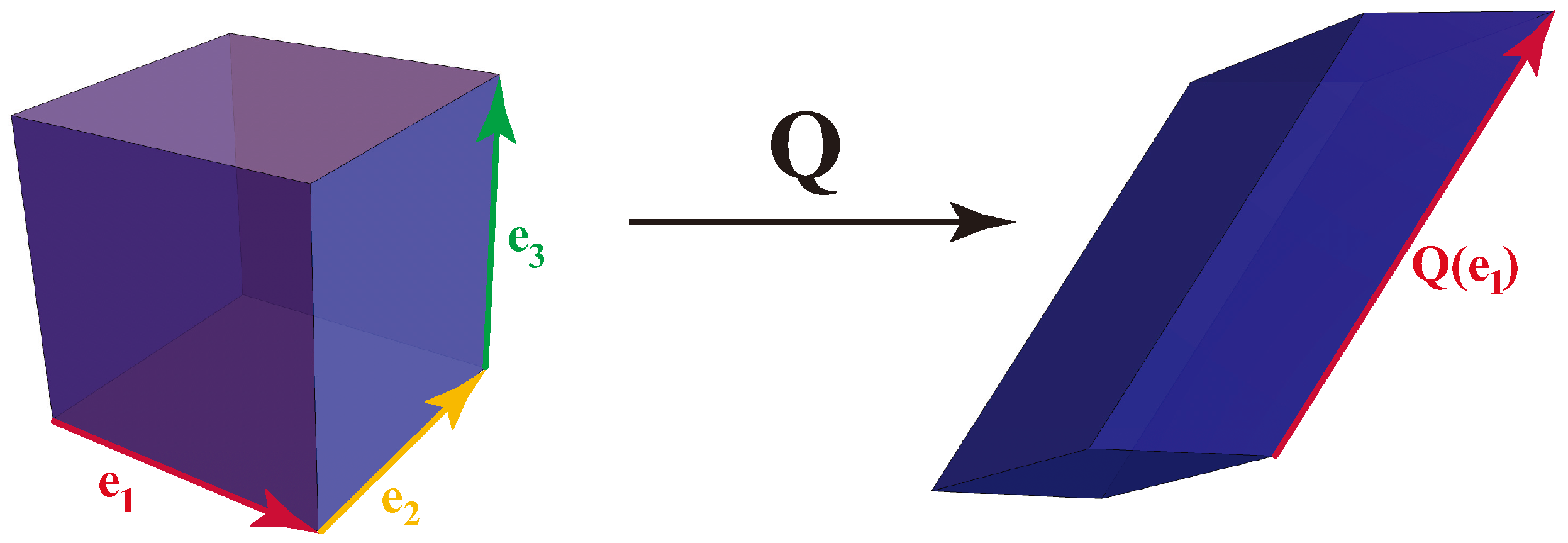

To meet the spin challenge, we first need to propose what property of an RCC projects to spin. An obvious candidate is the determinant of the Markov matrix of the RCC, which can take any real value between 1 and $-1$ inclusive.

Figure 8 shows the determinants for each matrix in the Markov polytope of $2 \times 2$ Markov matrices. The identity matrix, at the corner labelled Identity, has determinant 1. The Not matrix, $\begin{pmatrix} 0 & 1 \\ 1 & 0 \end{pmatrix}$, in the corner labelled Not, has determinant $-1$. Fusion matrices, which have identical rows and so the form $\begin{pmatrix} p & 1-p \\ p & 1-p \end{pmatrix}$, have determinant 0 and lie on the line between the two corners labelled Fusion (roughly following the light blue streak) [18]. The determinants between this line and the Identity corner are positive, $0 < d \le 1$, while determinants between this line and the Not corner are negative, $-1 \le d < 0$.

While the determinants can take any real value between 1 and $-1$ inclusive, the only possible spin values are 0,

, and

. A natural projection from determinant

d to spin

is

We see from

Figure 6 that the matrices in

with zero entropy rate lie along the lines

and

. However, the only one of these that is irreducible (i.e., has a two-state RCC), is the NOT matrix: a periodic matrix with period 2. All other matrices with zero entropy rate have an absorbing state, so none of them are irreducible. Matrices arbitrarily close to the lines

and

do have a communicating class of two states and an entropy rate close to 0, but never exactly 0. This is reminiscent of neutrino masses, which are close to 0, but not exactly 0. The behavior of these matrices, which contain states that are nearly absorbing, is also reminiscent of neutrino behavior: they rarely interact.

The projection from determinant to spin is defined not just for $2 \times 2$ Markov matrices, but also for $n \times n$ Markov matrices, for any n. In each case, the communicating classes of size n that have determinant $\pm 1$ are periodic with period n, and these are the only matrices with full-size communicating classes having an entropy rate of 0. If n is even, these periodic classes have determinant $-1$, whereas if n is odd they have determinant $+1$. This may be related to the hyperfine structure of energy states.
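The parity rule for periodic kernels can be checked directly. The sketch below builds the cyclic kernel that sends state i to state i + 1 (mod n), which is one representative period-n kernel chosen here for illustration, and compares its determinant with $(-1)^{n-1}$. The function name `cyclic_kernel` is ours.

```python
import numpy as np

def cyclic_kernel(n):
    """Deterministic kernel sending state i to state (i + 1) mod n."""
    # Rolling the identity's columns by one places the unit entry of
    # row i in column (i + 1) mod n.
    return np.roll(np.eye(n), 1, axis=1)

for n in range(2, 7):
    P = cyclic_kernel(n)
    det = int(round(np.linalg.det(P)))
    # An n-cycle is a product of n - 1 transpositions, so its sign is (-1)^(n-1).
    print(n, det, (-1) ** (n - 1))
```

Every row of such a kernel has a single unit entry, so its entropy rate is zero, and the determinant alternates between $-1$ for even n and $+1$ for odd n.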

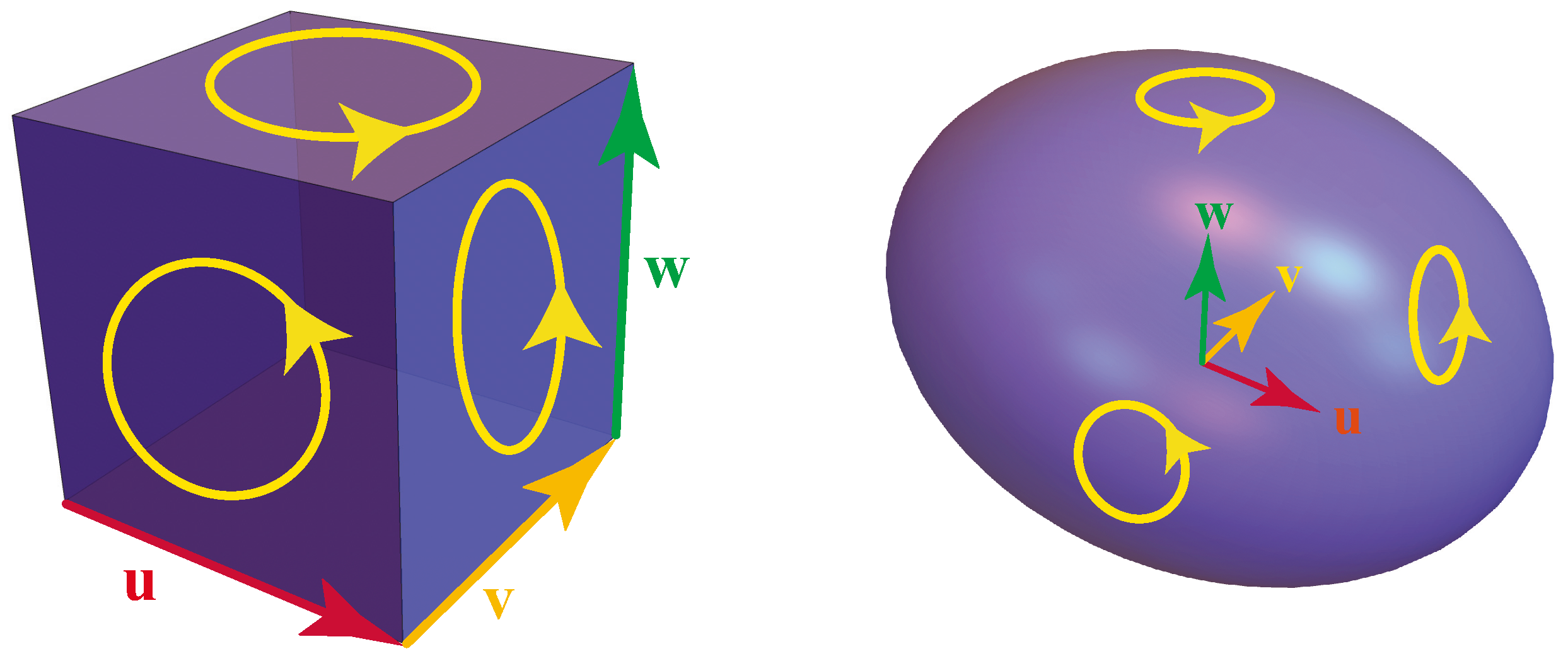

Another physical analogy can give insight as to how ‘spin’, or intrinsic angular momentum of a particle, can arise from the sampling of trace-chains in a Markovian dynamic. In the Ising model, which uses magnetic dipoles as a model of interacting ‘spins’, the phase transition from a ‘disordered’ state of magnetization (i.e. randomly distributed spins at high temperature) to a perfectly ‘ordered’ state of magnetization (i.e. aligned spins at low temperature) occurs below a positive, finite temperature (the Curie temperature), but only in dimensions higher than 1. In one dimension the total magnetization or ‘net spin’ continuously and monotonically decreases, as the temperature increases, from 1 (but only at the lowest temperature of absolute zero degrees), to zero at infinite temperature. But in 2- or more dimensions, a ‘disorder-order’ transition takes place at a non-zero temperature, separating a state of total magnetization (perfect spin alignment) from randomly distributed magnetic dipoles (random spin directions).

In statistical mechanics, the thermodynamic variables of a system can be derived from its partition function, which has been calculated for a ‘magnetic domain’ of interacting spins [39,40,41,42]. Typically, it is the trace of an N-fold product of individual spin-spin transfer matrices, and for antisymmetric transfer matrices it simply turns out to be a power of the highest eigenvalue. In two and higher dimensions, where a phase transition is a reality, it is shown to be given by the square root of the determinant of the associated antisymmetric spin-spin transfer matrix. The interaction between two such magnetic domains can then be described by the product of the corresponding partition functions, and (if the interaction between domains is identical in all other aspects) the total partition function is then proportional to the determinant of the associated transfer matrices. Given two magnetic/spin domains where aggregate spins can be all aligned (normalized spin +1) or all anti-aligned (normalized spin -1), this determinant can range from -1 to +1.

Thus the Ising model of ‘interacting spins’, described by concatenated spin-coupling transfer matrices, expresses the trace of the resultant matrix as a determinant of the associated matrix, which represents the statistical-mechanical ‘partitioning’ between aligned and anti-aligned spin states. This supports our conjecture that the determinant of the sampled and trace-chained Markov chain dynamics is a measure, in some sense, of the fundamental ‘spin’ of a particle. We intend to explore this further in future investigations.

So the proposal that mass is a projection of entropy rate passes the challenge of quantum theory that massless particles have spin 1, with helicities $+1$ or $-1$. How does it do with the challenge of relativity that massless particles travel at the maximum possible speed, the speed of light?

To investigate that challenge, we need to propose a property of Markov kernels that projects to speed. A natural candidate is related to the commute time, between two states of a Markov chain [27]. is the expected time, starting at a, to go to b and return to a. We have , and . This makes look like a distance between a and b, but it is more natural to view as the squared distance, .

We propose:

We briefly review how to compute mean commute times [31]. Let $P$ be an $n \times n$ ergodic Markovian tp and let $w$ be its unique stationary measure. Let $W$ be the $n \times n$ matrix all of whose rows are $w$. Set

$$Z = (I - P + W)^{-1}.$$

Then the matrix $M$ of expected first passage times, where $m_{ij}$ is the expected time to start at $i$ and arrive at $j$ for the first time (with $m_{ii} = 0$), is given by

$$m_{ij} = \frac{z_{jj} - z_{ij}}{w_j}.$$

(Recall that, since $P$ is ergodic, all $w_j > 0$.) Then the expected commute time is given by

$$c_{ij} = m_{ij} + m_{ji}.$$

The total expected commute time for a Markov chain governed by $P$ is then

$$C(P) = \sum_{i < j} c_{ij},$$

and has $n(n-1)/2$ terms.
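The computation of commute times reviewed above can be sketched as follows. This is a minimal illustration of the standard fundamental-matrix method, $Z = (I - P + W)^{-1}$ with first passage times $(z_{jj} - z_{ij})/w_j$; the function names and the test kernels are our assumptions, not taken from the original text.

```python
import numpy as np

def stationary_measure(P):
    """Solve w P = w with sum(w) = 1 for an irreducible kernel P."""
    n = P.shape[0]
    A = np.vstack([P.T - np.eye(n), np.ones((1, n))])
    b = np.zeros(n + 1)
    b[-1] = 1.0
    w, *_ = np.linalg.lstsq(A, b, rcond=None)
    return w

def commute_times(P):
    """Matrix of expected commute times c_ij = m_ij + m_ji."""
    P = np.asarray(P, dtype=float)
    n = P.shape[0]
    w = stationary_measure(P)
    W = np.tile(w, (n, 1))                # every row equals w
    Z = np.linalg.inv(np.eye(n) - P + W)  # fundamental matrix
    # m_ij = (z_jj - z_ij) / w_j, with zeros on the diagonal.
    M = (np.diag(Z)[None, :] - Z) / w[None, :]
    return M + M.T

def total_commute_time(P):
    """Sum of c_ij over the n(n-1)/2 unordered pairs i < j."""
    return float(np.sum(np.triu(commute_times(P), k=1)))

NOT = [[0.0, 1.0], [1.0, 0.0]]                      # period-2 kernel
CYCLE3 = [[0, 1, 0], [0, 0, 1], [1, 0, 0]]          # period-3 kernel
print(total_commute_time(NOT))
print(total_commute_time(CYCLE3))
print(total_commute_time([[0.75, 0.25], [0.5, 0.5]]))
```

For the period-2 kernel the single commute time is 2, and for the period-3 cycle each of the three pairs has commute time 3, since a round trip always takes one full cycle.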

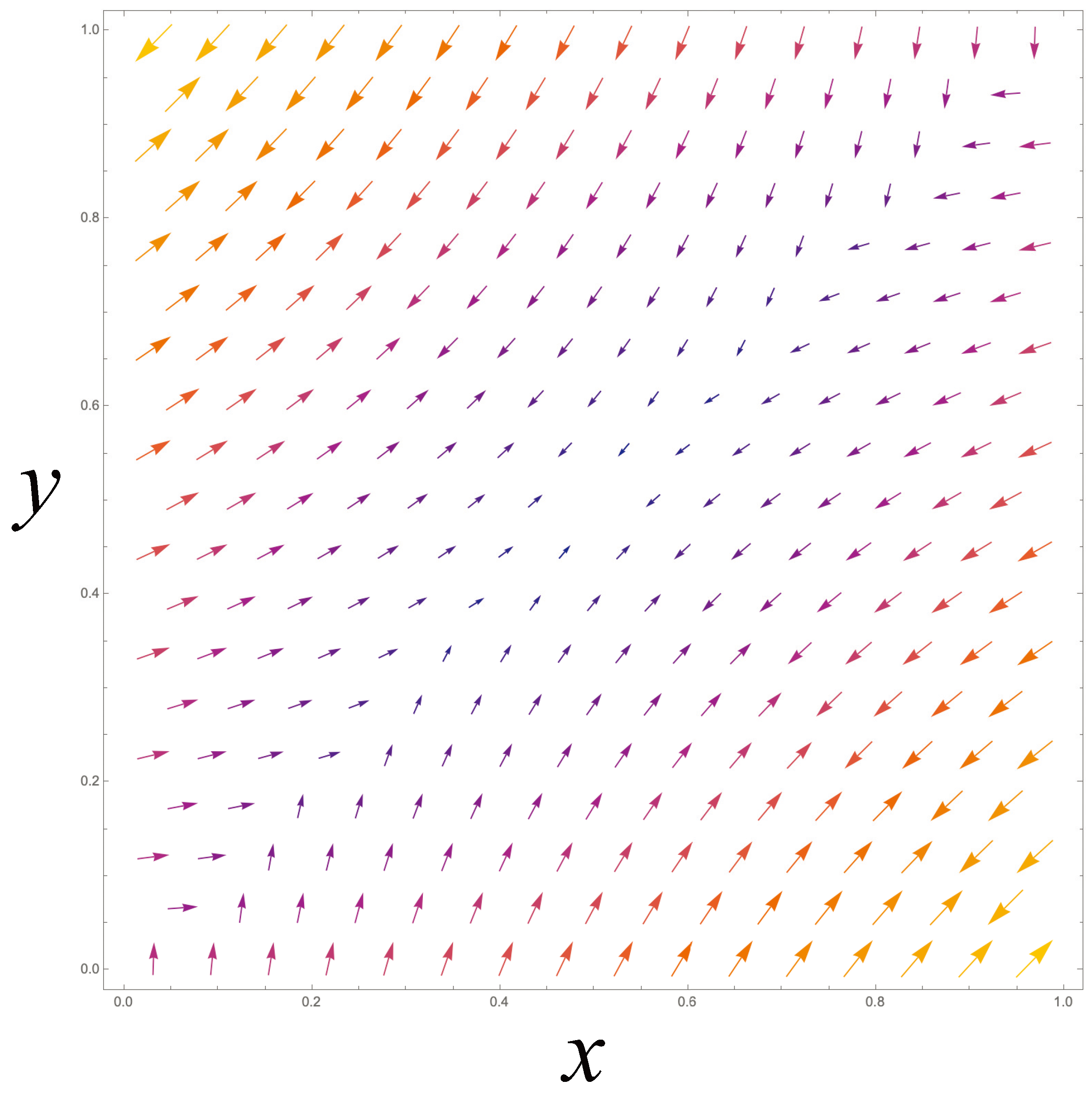

Example:

The typical Markov kernel in

is

The domain

E of ergodic

P consists of the interior of the unit square, together with the NOT operator at

. At these points, the stationary measure is

We have

Now (56) and (58) applied to (59) give us the total expected commute time for a Markov chain governed by

P is

Note that for $2 \times 2$ kernels the total commute time has only one term; for $n \times n$ kernels there will be $n(n-1)/2$ terms.

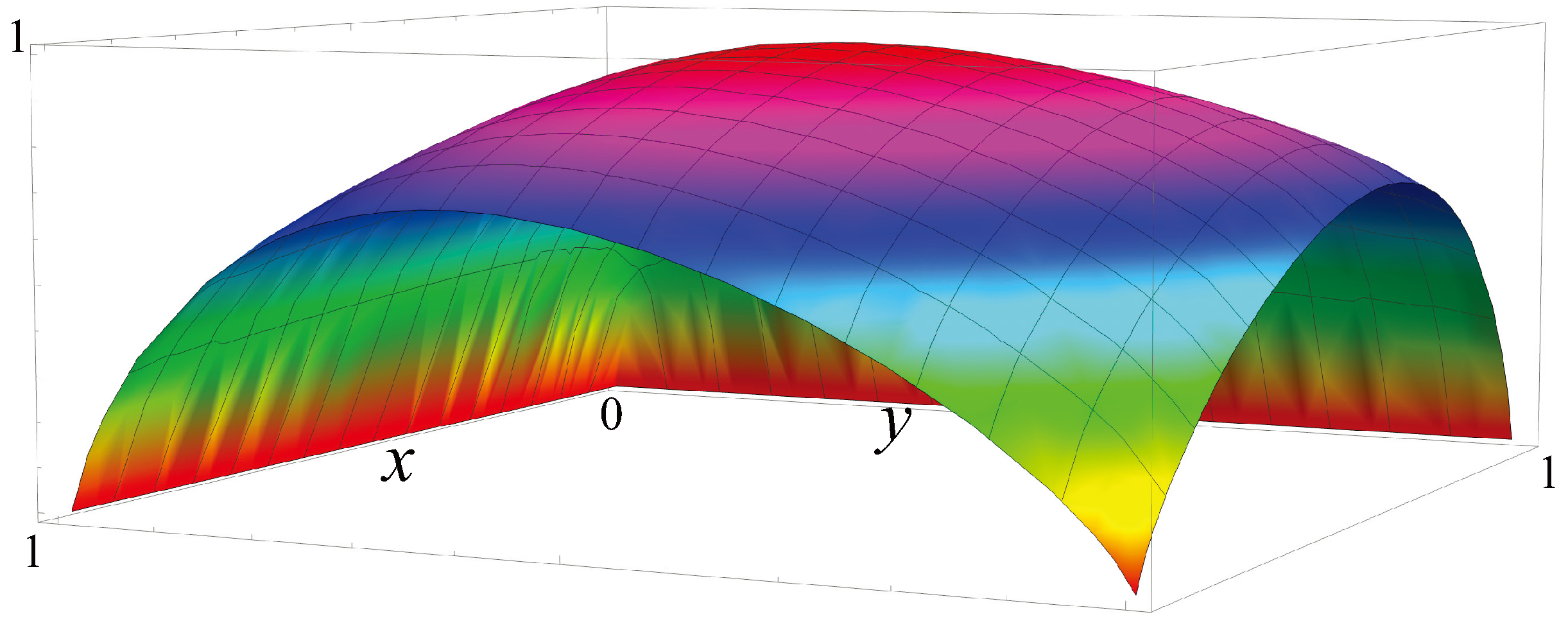

The commute times for Markov chains on two states are shown in Figure 9. The minimum commute time is 2, and is obtained by the Not matrix, the only periodic kernel of period 2 among the $2 \times 2$ Markov matrices.
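The minimum of 2 can be checked with a small grid scan. The sketch assumes a particular parametrization, $P = \begin{pmatrix} 1-a & a \\ b & 1-b \end{pmatrix}$ with off-diagonal probabilities a and b, which may differ from the coordinates used in the figure. Under this assumption the time to leave state 1 is geometric with mean 1/a and the time to return is geometric with mean 1/b.

```python
def commute_time_2x2(a, b):
    """Expected commute time for P = [[1-a, a], [b, 1-b]], a and b in (0, 1].

    Hypothetical parametrization: a and b are the off-diagonal entries.
    Mean time from state 1 to state 2 is 1/a; mean time back is 1/b.
    """
    return 1.0 / a + 1.0 / b

steps = 20
grid = [k / steps for k in range(1, steps + 1)]  # 0.05, 0.10, ..., 1.0
best = min((commute_time_2x2(a, b), a, b) for a in grid for b in grid)
print(best)  # (2.0, 1.0, 1.0): the Not matrix
```

The commute time grows without bound as either off-diagonal entry approaches 0, that is, as a state becomes nearly absorbing, and it is smallest at a = b = 1.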

This example illustrates that when the entropy rate of a CC is 0, the commute times between states of the chain are the shortest possible; i.e., the speed of transition among states of the CC is the maximum speed possible: 1 state per step of the chain. This is achieved by periodic Markov chains, whose entropy rate is 0. Note that such periodic kernels correspond to permutations on the state space that are complete derangements. We make the following conjecture (which holds true in Figure 9).

Conjecture:

An ergodic Markov chain on n states has a minimal total expected commute time between states if and only if it is periodic with period n.

For periodic CCs, which have zero entropy rate, the expected “speed” of transition between states is maximal, in the sense that the commute time between states is minimal. If we propose that the mass of a particle is a projection of the entropy rate of a CC of CA dynamics, and that the speed of the particle is a projection of the expected speed of transitions between states of that CC, then we satisfy the demand of relativity that massless particles always move at the speed of light, the maximum possible speed. This is encouraging. The proposal that mass is a projection of entropy rate passes the initial challenges posed by quantum theory and relativity.

Suppose, however, that we consider two distinct CCs, say CC1 and CC2, corresponding to two distinct particles. The commute time between any state, i, of CC1 and any state, j, of CC2 is infinite, simply because the probability of getting from i to j is 0. This entails that there is no possible causal interaction between the two particles. In relativistic language we would say that the two particles are separated by a spacelike interval. So clearly we need to generalize our proposal, so that we can also describe particles that are separated by timelike and lightlike intervals.

We still propose that free particles are projections of communicating classes. But particles are not always free. Particles can be bound together, such as when an electron and proton bind to form a hydrogen atom. The strengths of binding vary across combinations of particles. The binding of quarks is so strong that they are said to be “confined.” Quarks are never free particles at normal temperatures, but are always grouped together into hadrons, such as protons, neutrons, and pions. This property of quarks is called “quark confinement.” Only if the temperature exceeds the Hagedorn temperature, on the order of $10^{12}$ K, do quarks become free, forming a quark-gluon plasma.

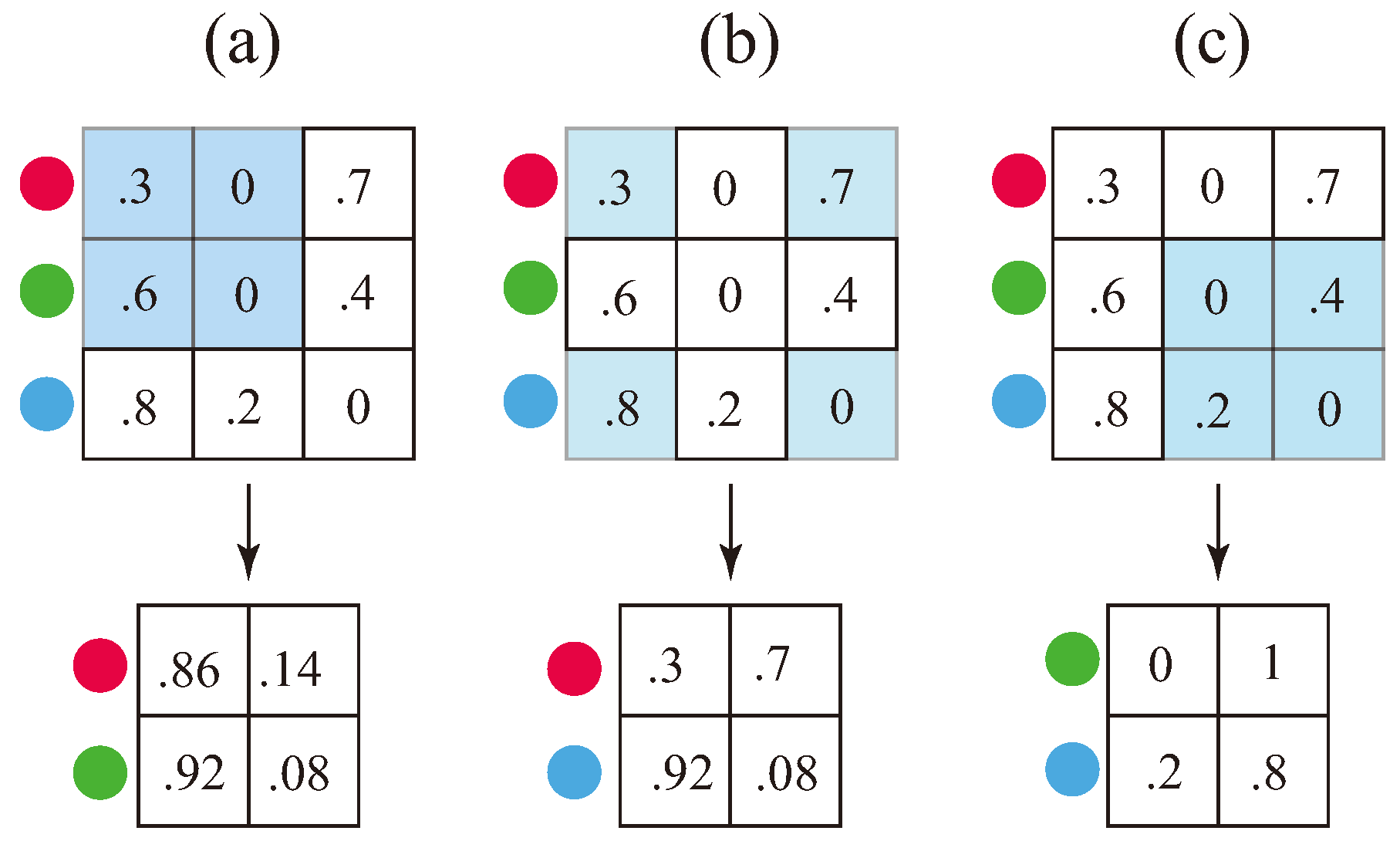

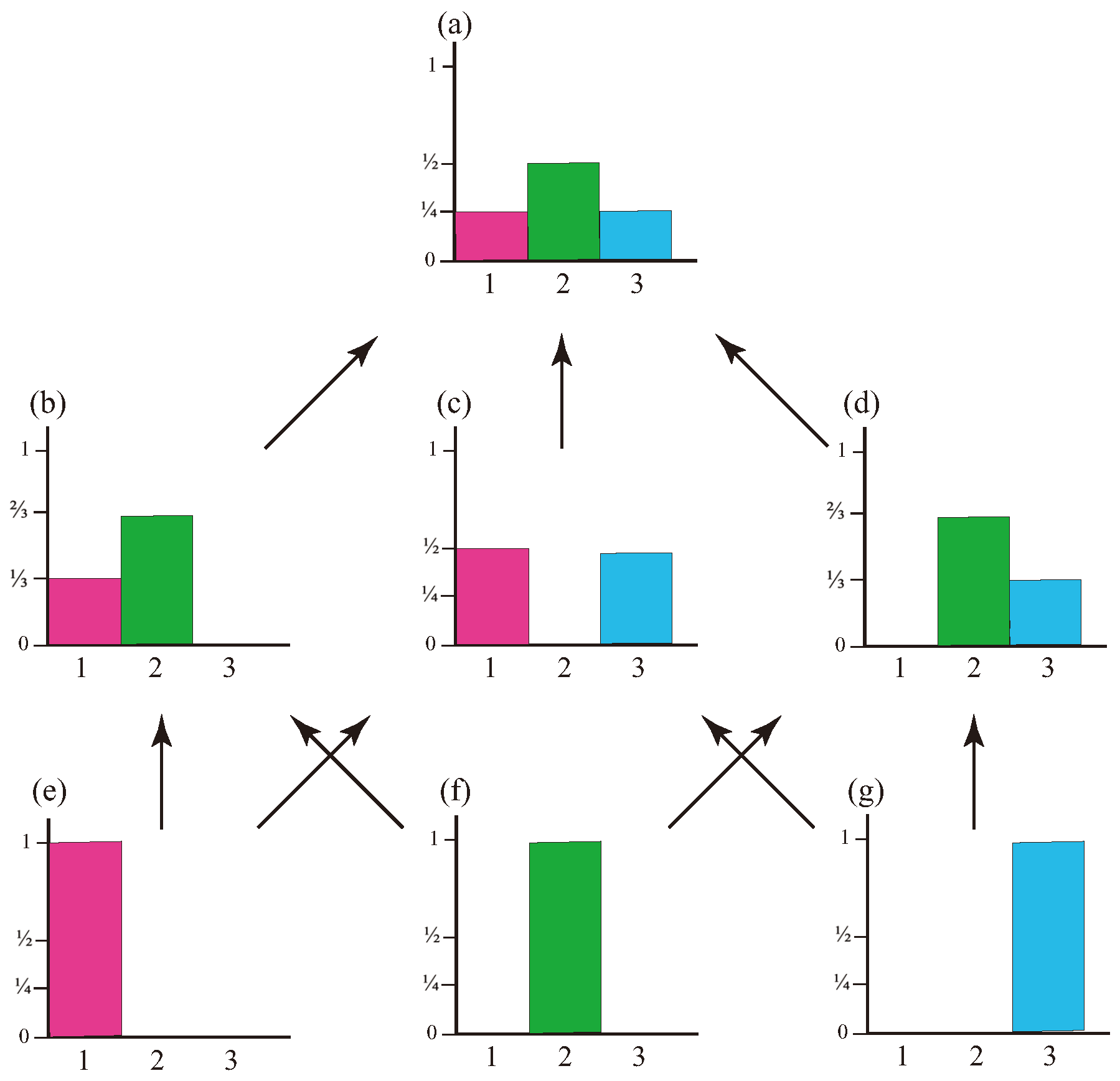

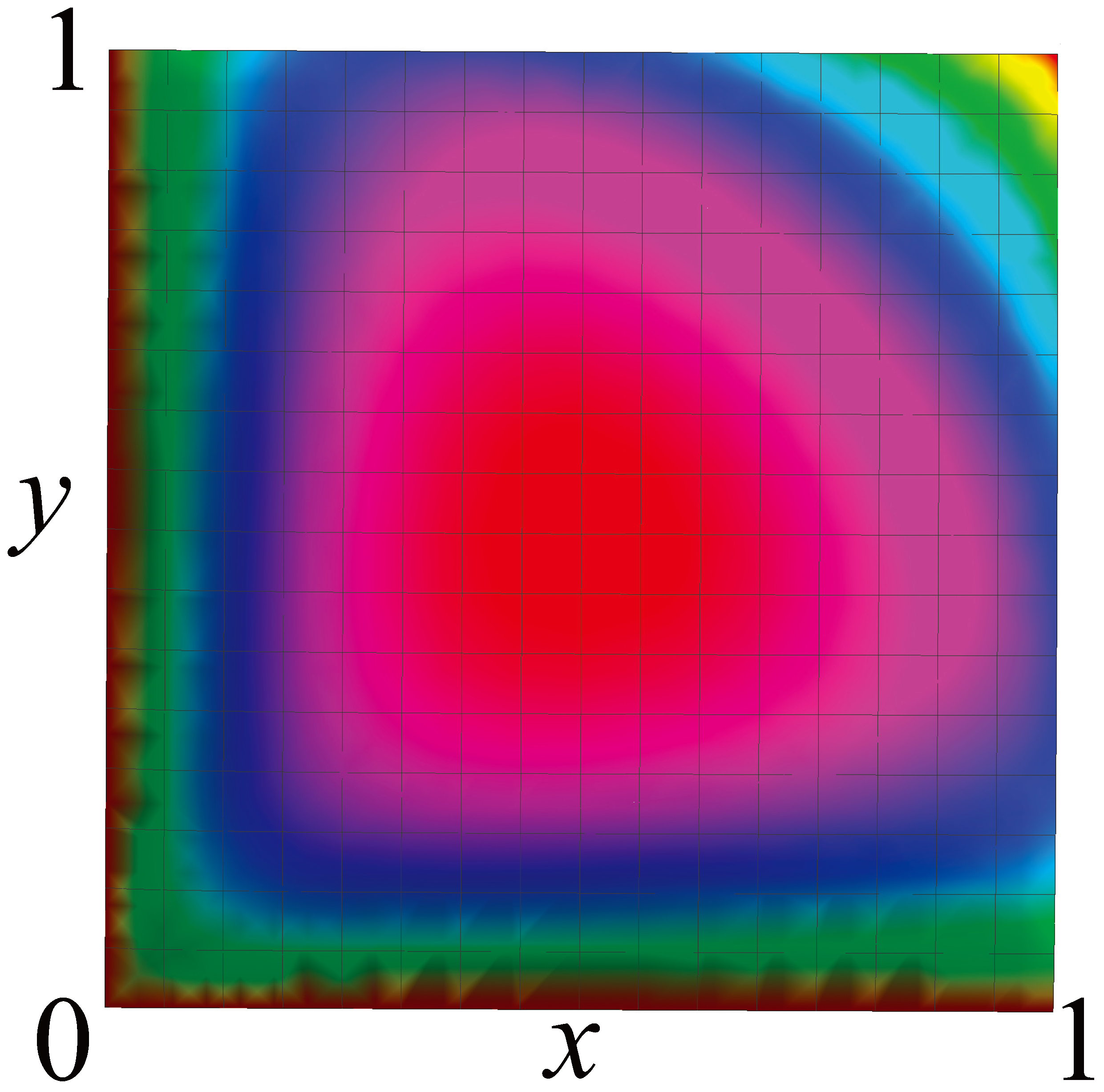

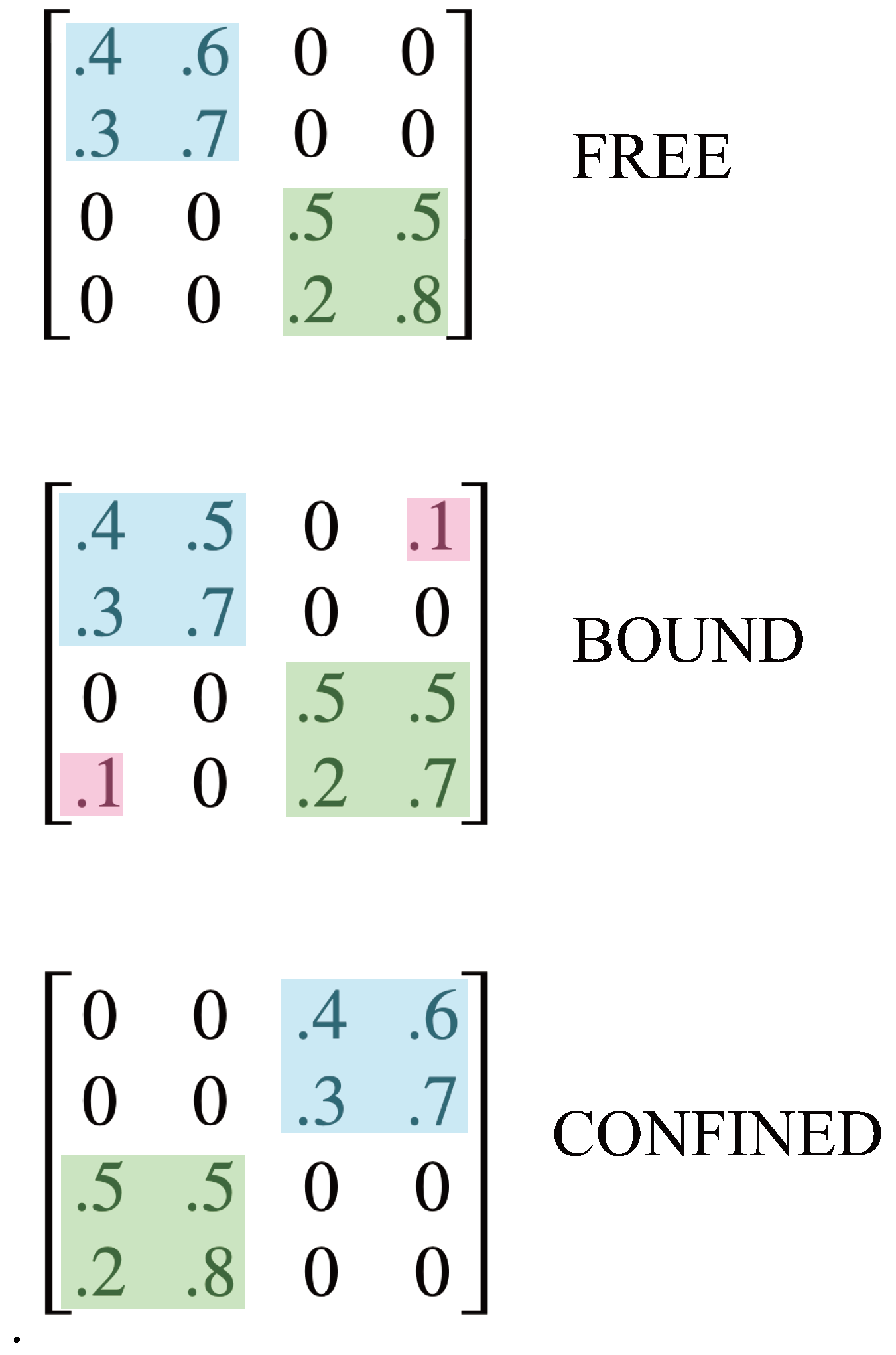

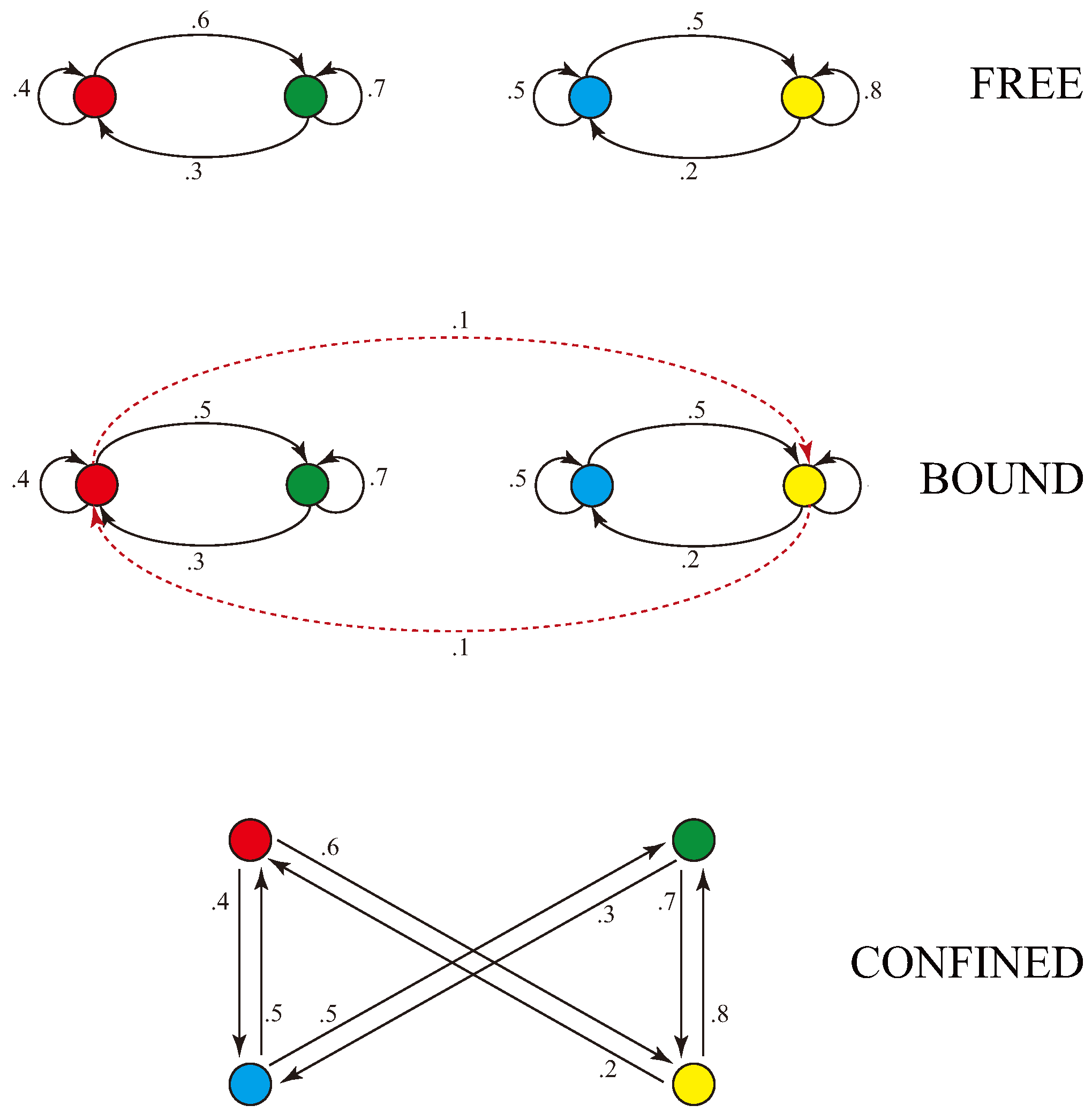

So the relationship between particles can vary on a continuum from free to bound to confined. We can model this variation using Markovian kernels, as illustrated in Figure 11. The first kernel has two communicating classes, one highlighted in blue and one in green. There is no interaction between the two classes, and so this models free particles. The mass of the blue class is the entropy rate of states 1 and 2; the mass of the green is the entropy rate of states 3 and 4.

The second kernel in the figure has just one communicating class, which consists of all four states of the kernel. However, this kernel is almost identical to the kernel above it, with just the addition of small interaction terms, highlighted in red. So this models the case where the two particles are weakly bound and represent, together, a compound particle. The binding strength between particles can be varied from weak to strong, depending on the size of the interaction terms. We can quantify this by dividing the entropy rate of states 1 and 2 into two parts: the kinetic entropy rate (), which is the part colored blue and corresponds to the mass of the particle, and the potential entropy rate (), corresponding to the binding mass in red. Similarly for states 3 and 4 in green. We will say, suggestively, that a particle is “free” if , “bound” if , and “confined” otherwise.

With stronger binding, particles are confined, as shown in the third kernel. In this kernel the dominant terms are the interaction terms, highlighted in blue and green. In this case the entropy rate of states 1 and 2 is primarily binding mass, as is the entropy rate of states 3 and 4. The masses of the three valence quarks of a proton constitute about 2% of the mass of the proton. We can model this by placing 98% of the entropy rate in the bindings.

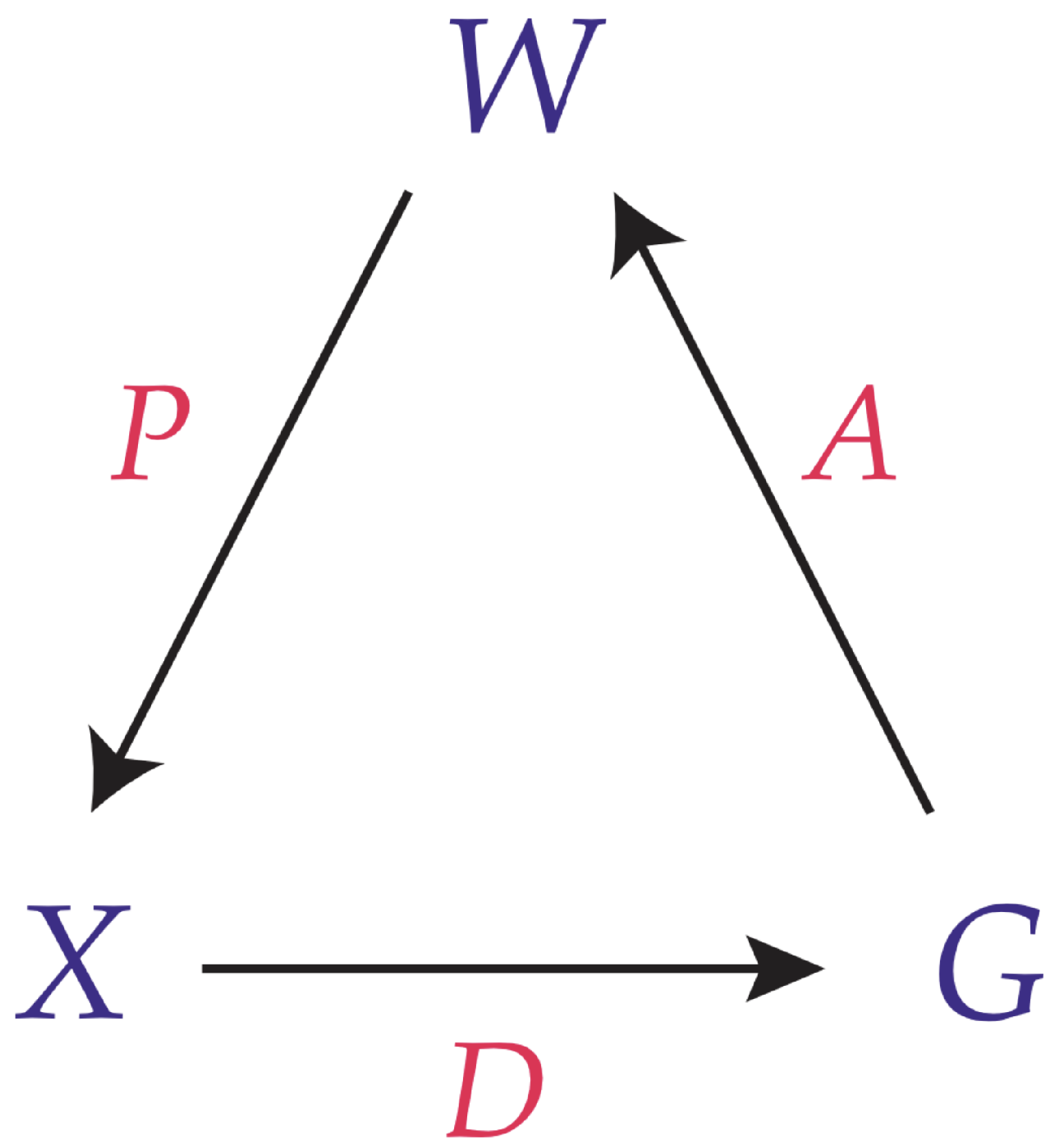

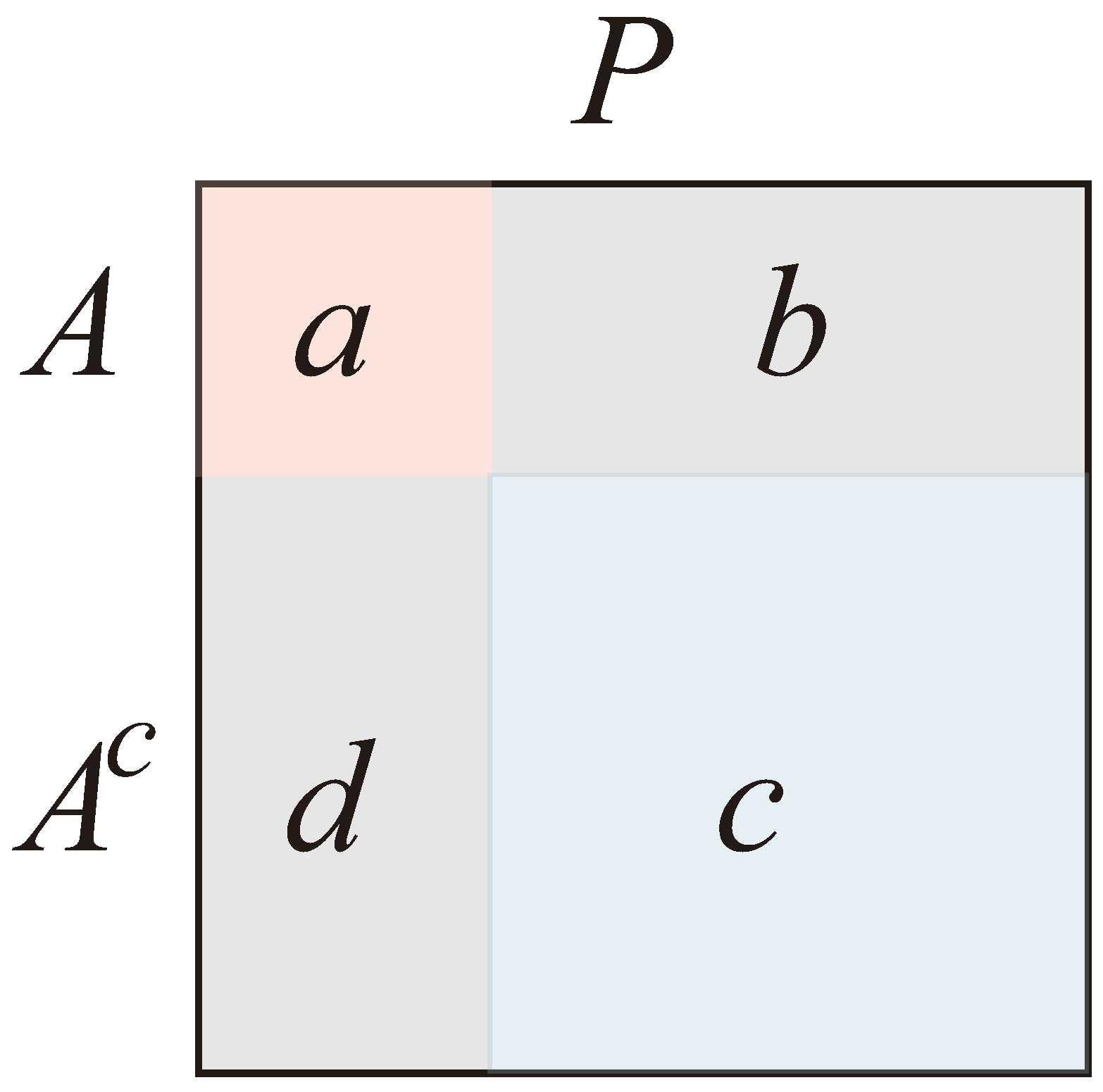

A way to model bound particles is to generalize from CCs to communities, and to propose that bound particles in spacetime are projections of communities in Markov dynamics of CAs. The notion of communities naturally arises as follows. To each Markov matrix we can associate a weighted, directed graph: each node of the graph represents a state of the Markov chain, and each directed link from a node A to a node B is weighted by the corresponding transition probability from the state represented by A to the state represented by B [30]. The graph associated to a Markov chain is called its diagram. Figure 10 shows the diagram associated to each matrix of Figure 10. Red, green, blue and yellow nodes denote, respectively, states 1, 2, 3, and 4 of the matrix.

The diagram labelled “free” is a disconnected graph, with two connected components that correspond to the two communicating classes.

The diagram labelled “bound” is a connected graph, and corresponds to one communicating class involving all four states. However it has a natural division into two communities, one composed of the red and green states, and another composed of the blue and yellow states. The community of red and green states is highly interconnected: most of its transition probabilities stay within the group. Similarly, the community of blue and yellow states is highly interconnected. But the connection between the two communities is weak, accounting for a small fraction of the transition probabilities. There are various approaches to segregating a graph into communities, including infomap, spectral clustering, and modularity maximization [30].

Of particular interest is the diagram of the confined matrix. Note that the pair consisting of the red and green nodes connects only to the pair consisting of yellow and blue nodes, and vice versa. The red and green nodes have no connections between them, and the blue and yellow nodes have no connections between them. This is an example of a “bipartite graph” with two “partite sets.” The first partite set contains the red and green nodes, and the second partite set contains the blue and yellow nodes. This example happens to be a “complete” bipartite graph, in which every node of one partite set connects with every node of each other partite set. This illustrates an important connection between particle confinement and partite sets.
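The three regimes can be illustrated with a small sketch that counts communicating classes and measures how much transition probability leaves a candidate community. The three kernels below are hypothetical stand-ins with the qualitative structure described in the text, not the matrices of the figure, and `cross_weight` is our own simple measure rather than one of the cited community-detection methods.

```python
def reachable(P, start):
    """States reachable from `start` along positive-probability transitions."""
    seen, stack = {start}, [start]
    while stack:
        i = stack.pop()
        for j, p in enumerate(P[i]):
            if p > 0 and j not in seen:
                seen.add(j)
                stack.append(j)
    return seen

def communicating_classes(P):
    """Partition the states into classes of mutually reachable states."""
    reach = [reachable(P, i) for i in range(len(P))]
    classes = []
    for i in range(len(P)):
        cls = frozenset(j for j in reach[i] if i in reach[j])
        if cls not in classes:
            classes.append(cls)
    return classes

def cross_weight(P, group):
    """Average one-step probability of leaving `group`, over states in it."""
    leaving = sum(p for i in group for j, p in enumerate(P[i]) if j not in group)
    return leaving / len(group)

# Hypothetical kernels on states 0..3 (states 1..4 in the text).
FREE = [[0.5, 0.5, 0, 0], [0.5, 0.5, 0, 0], [0, 0, 0.5, 0.5], [0, 0, 0.5, 0.5]]
BOUND = [[0.45, 0.45, 0.05, 0.05], [0.45, 0.45, 0.05, 0.05],
         [0.05, 0.05, 0.45, 0.45], [0.05, 0.05, 0.45, 0.45]]
CONFINED = [[0, 0, 0.5, 0.5], [0, 0, 0.5, 0.5], [0.5, 0.5, 0, 0], [0.5, 0.5, 0, 0]]

for name, P in [("free", FREE), ("bound", BOUND), ("confined", CONFINED)]:
    print(name, len(communicating_classes(P)), cross_weight(P, {0, 1}))
```

In this sketch the free kernel has two communicating classes and no crossing probability, the bound kernel has one class with a small crossing probability, and the confined kernel has one class in which all probability crosses between the two partite sets.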

Graphs can also be tripartite, having three distinct partite sets. These may be critical for computational experiments testing our theory against the internal structure of the proton, since the proton, at large values of Bjorken x (i.e., at coarser temporal scales), consists primarily of three valence quarks that are confined within the proton. The proton itself might be modeled by a Markov matrix with a single communicating class, and the quarks confined within the proton might be modeled by having the states of the communicating class be divided into three partite sets. This suggests that for a computational experiment to successfully model the experimentally determined momentum distributions of quarks and gluons at all values of $Q^2$ (i.e., at all spatial scales within the proton) and Bjorken x, the master matrix from which all sampled trace matrices are derived may itself need to have a tripartite graph. It may turn out that the master matrix only needs to have three sets of nodes that are strongly bound rather than completely confined. We shall see. That is one of the points of doing a computational experiment.

We proposed in this section that the mass of a particle in spacetime is a projection of a specific property of the Markov dynamics of conscious agents beyond spacetime. In particular, we proposed that mass is a projection of the entropy rate of a communicating class or, more generally, a community of conscious agents.

This proposal suggests an interesting way to think about black holes in spacetime. Recall that black holes form when too much mass is concentrated into too small a volume of space. Recall also that, in information theory, entropy rate is a measure of the average information produced by a source per unit time. It quantifies the uncertainty about the next symbol to be generated. Channel capacity is the maximum rate at which information can be reliably transmitted over a communication channel. When the entropy rate of a source exceeds the channel capacity, it implies that the source generates information faster than the channel can handle. This mismatch leads to a fundamental limitation in communication.

When the source entropy outpaces channel capacity, the system faces a trade-off between data rate, error rate, and delay. Optimal strategies involve balancing these factors based on the specific application requirements. For example, consider streaming a high-definition video over a low-bandwidth network. The video source generates a high entropy rate due to the rich visual information. If the channel capacity is insufficient, the video quality will degrade, resulting in artifacts, pixelation, or buffering.

We are proposing that spacetime is just a headset that some conscious agents use to simplify their interactions with the vast network of conscious agents. This headset is a communication channel from the vast network to the specific agent that is using the headset. Different portions of this vast network will have different entropy rates for their Markov dynamics. Where this entropy rate exceeds the channel capacity of the spacetime headset, the headset quality will degrade. Black holes may be the result. Perhaps the size of a black hole is a measure of the degree to which the corresponding entropy rate exceeds the channel capacity of the spacetime headset. And perhaps the distortions of spacetime in the vicinity of the black hole offer clues to channel-theoretic properties of the spacetime headset, clues that may help us to reverse engineer the headset and better understand its construction.

Another intriguing possibility we can speculate on is the appearance of special-relativistic reference frames, in the following way. Consider a periodic Markov kernel with period n, and consider its traces on subsets of size which include the 1st and the states. Recall that if v is the relative velocity of a frame, we define $\gamma$ to be $1/\sqrt{1 - v^2/c^2}$. Then we propose that such a trace kernel will have, relative to the original kernel, a $\gamma$ of the order of . Note that for , $\gamma$ will tend to be large, i.e., v close to c, while for we get . We expect to explore this idea of frames further in the future.