Submitted: 09 December 2024

Posted: 10 December 2024

Abstract

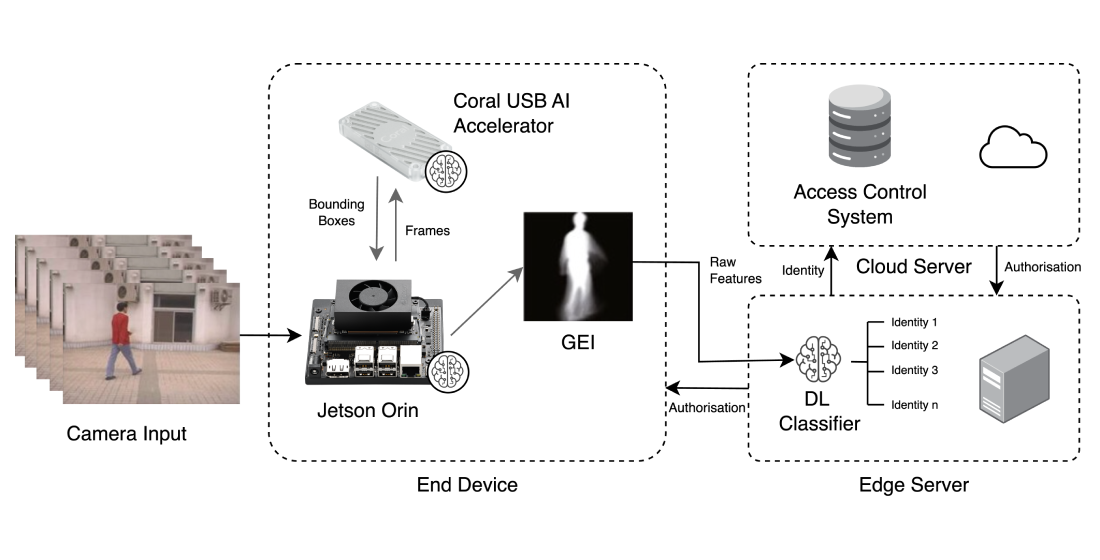

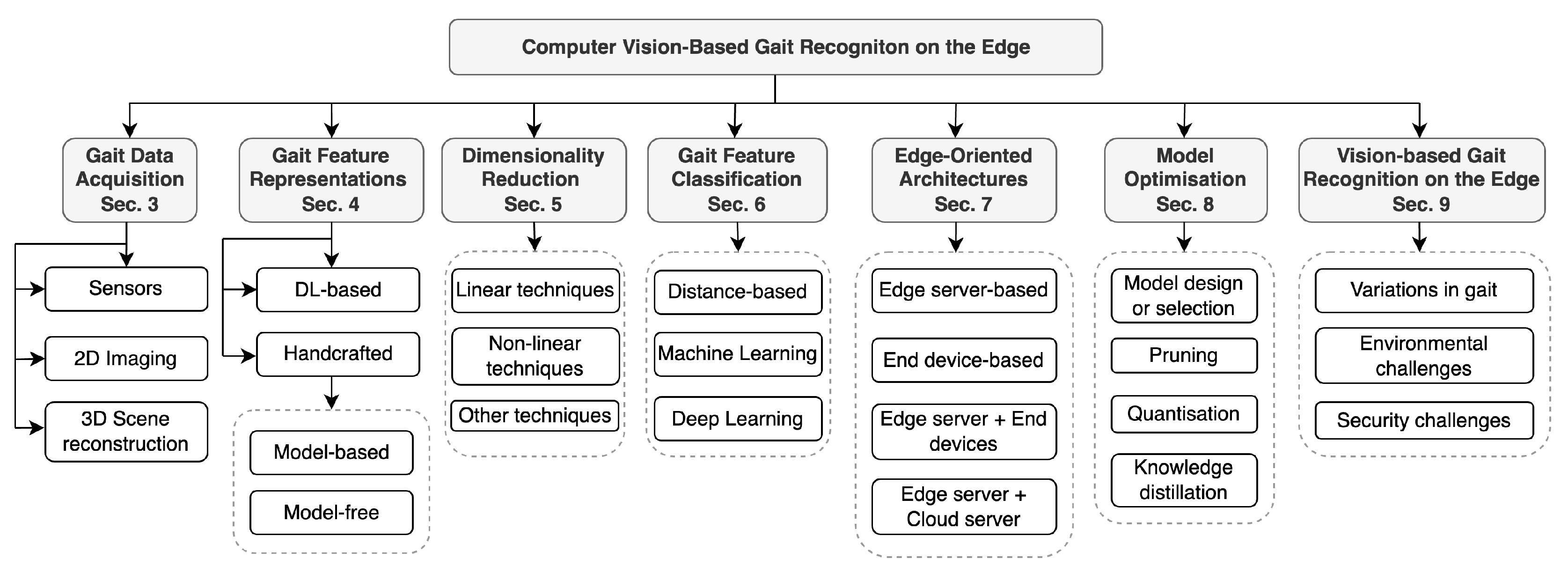

Computer vision-based gait recognition (CVGR) is a technology that has gained considerable attention in recent years due to its non-invasive, unobtrusive, and difficult-to-conceal nature. Beyond its applications in biometrics, CVGR holds significant potential for healthcare and human-computer interaction. Current CVGR systems often transmit collected data to a cloud server for machine-learning-based gait pattern recognition. While effective, this cloud-centric approach can result in increased system response times. Alternatively, the emerging paradigm of edge computing, which involves moving computational processes to local devices, offers the potential to reduce latency, enable real-time surveillance, and eliminate reliance on internet connectivity. Furthermore, recent advancements in low-cost, compact microcomputers capable of handling complex inference tasks (e.g., Jetson Orin Nano, Jetson Xavier NX, and Khadas VIM4) have created exciting opportunities for deploying CVGR systems at the edge. This paper reports the state of the art in gait data acquisition modalities, feature representations, models, and architectures for CVGR systems suitable for edge computing. Additionally, this paper addresses the general limitations and highlights new avenues for future research in the promising intersection of CVGR and edge computing.

Keywords:

1. Introduction

2. Survey Methodology

2.1. Research Questions

- RQ1: What are the primary sensors for gait data acquisition utilised in CVGR systems?

- RQ2: What are the known gait feature extraction, reduction, and representation approaches and their associated challenges in CVGR systems?

- RQ3: Which gait feature classification framework outperforms others, and what techniques can be used to mitigate the challenges associated with these frameworks?

- RQ4: What are the architecture options, challenges, and good practices when it comes to deploying CVGR systems on the edge?

- RQ5: What are the current limitations of CVGR systems based on edge computing, and what are the potential areas for future improvement?

2.2. Search Strategy and Inclusion/Exclusion Criteria

- Included only articles published in English.

- Prioritised peer-reviewed papers based on relevance to the CVGR and edge computing fields, excluding those with weaker or less pertinent contributions.

- Prioritised papers indexed in Scopus that explored the use of CVGR systems in biometric applications, where low latency is crucial.

- Prioritised papers presenting novel methodologies for CVGR systems on the edge.

- Excluded papers lacking sufficient experimental results or methodological details.

- While focused primarily on CVGR, we also included studies on gait recognition using other sensor modalities for comparative analysis.

- Selectively included papers published before 2020, focusing on those that introduced original ideas or made significant advancements in gait recognition.

3. Data Acquisition Methods for Gait Recognition

3.1. Sensor-Based Methods

3.2. 2D Imaging-Based Methods

- Noise reduction to minimise the impact of noise or artifacts in the video data.

- Background subtraction and silhouette extraction to isolate the moving human subject (foreground) from the static background.

- Gait cycle detection and normalisation to identify and standardise the repetitive pattern of gait cycles.
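The background subtraction and silhouette extraction step listed above can be sketched as follows. This is a minimal illustration, not a method taken from any surveyed paper: it assumes a static camera, greyscale frames stored as nested lists, a per-pixel temporal median as the background model, and a hypothetical intensity threshold of 30. Plain lists keep the sketch dependency-free; a real pipeline would typically use an array library and add noise filtering.

```python
from statistics import median

Frame = list[list[int]]  # greyscale image: rows of 0-255 intensities


def estimate_background(frames: list[Frame]) -> Frame:
    """Per-pixel temporal median over all frames (assumes a static camera)."""
    height, width = len(frames[0]), len(frames[0][0])
    return [
        [int(median(frame[r][c] for frame in frames)) for c in range(width)]
        for r in range(height)
    ]


def extract_silhouette(frame: Frame, background: Frame, threshold: int = 30) -> Frame:
    """Binary mask: 1 where a pixel differs from the background by more than threshold."""
    return [
        [1 if abs(pixel - bg) > threshold else 0 for pixel, bg in zip(frame_row, bg_row)]
        for frame_row, bg_row in zip(frame, background)
    ]


def make_frame(subject_col: int) -> Frame:
    """Toy 2x3 frame: uniform background (10) with one bright 'subject' pixel (200)."""
    frame = [[10, 10, 10], [10, 10, 10]]
    frame[0][subject_col] = 200
    return frame


# The subject moves one column per frame, so the median recovers the background.
frames = [make_frame(col) for col in range(3)]
background = estimate_background(frames)
silhouette = extract_silhouette(frames[1], background)
```

The threshold trades missed body parts against background noise; in practice it would be tuned per camera and lighting condition.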

3.3. 3D Scene Reconstruction-Based Methods

3.3.1. Depth Sensing

3.3.2. 3D Lasers

3.3.3. Stereo Vision

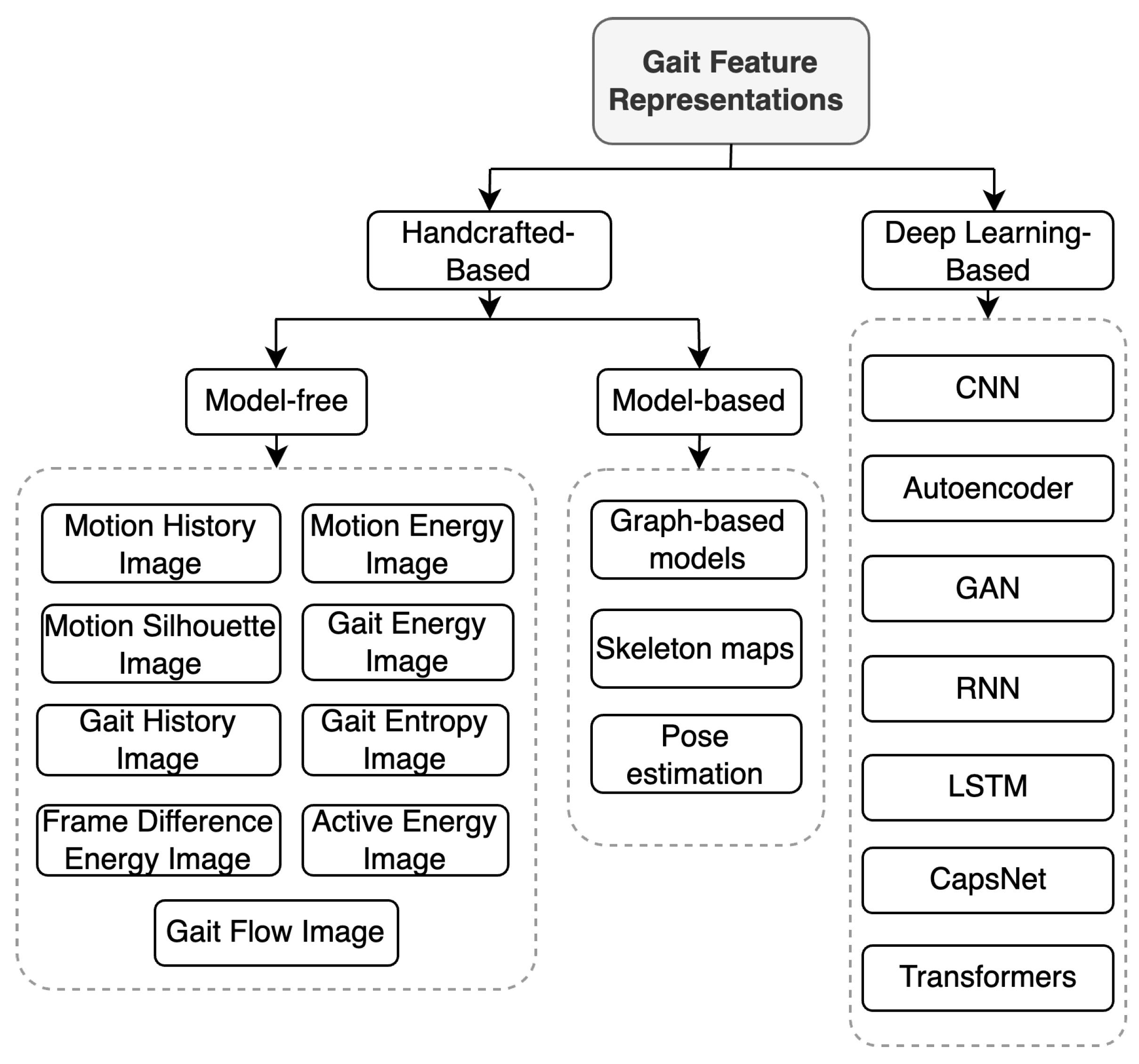

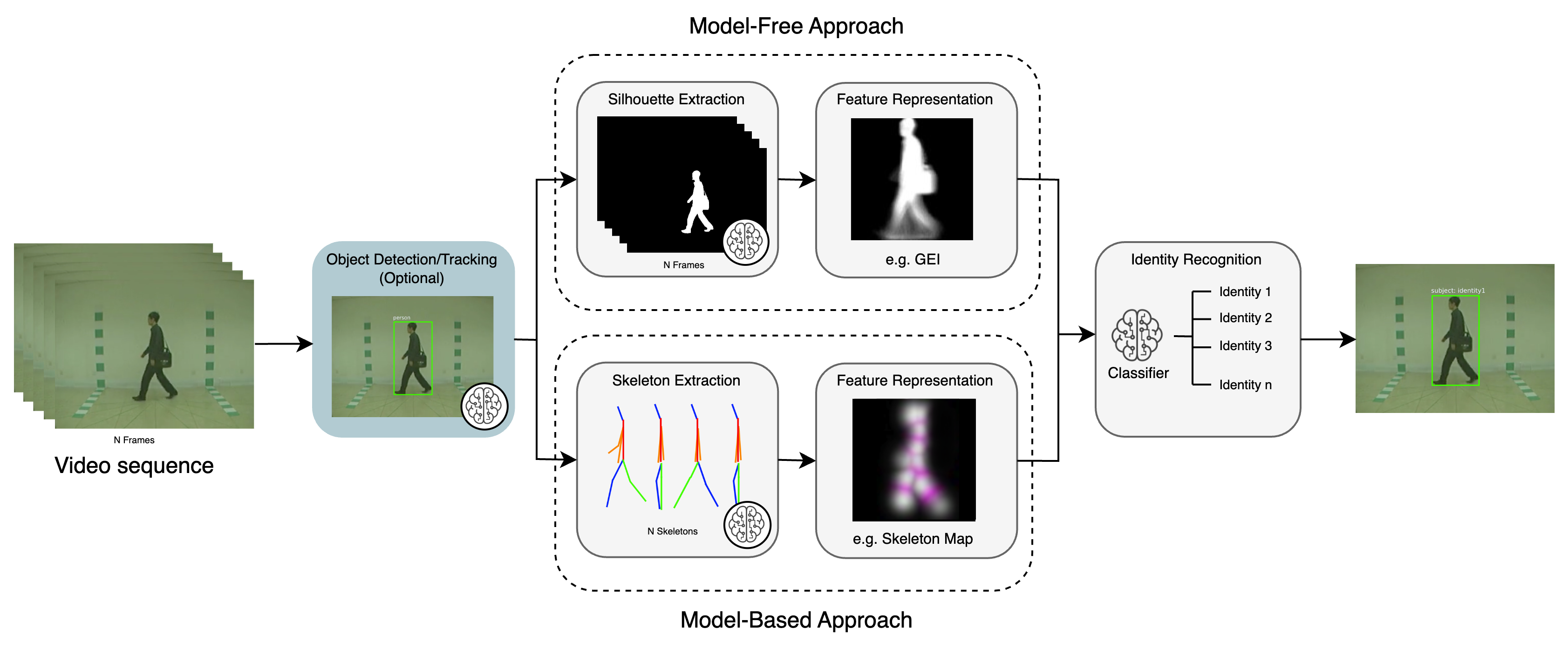

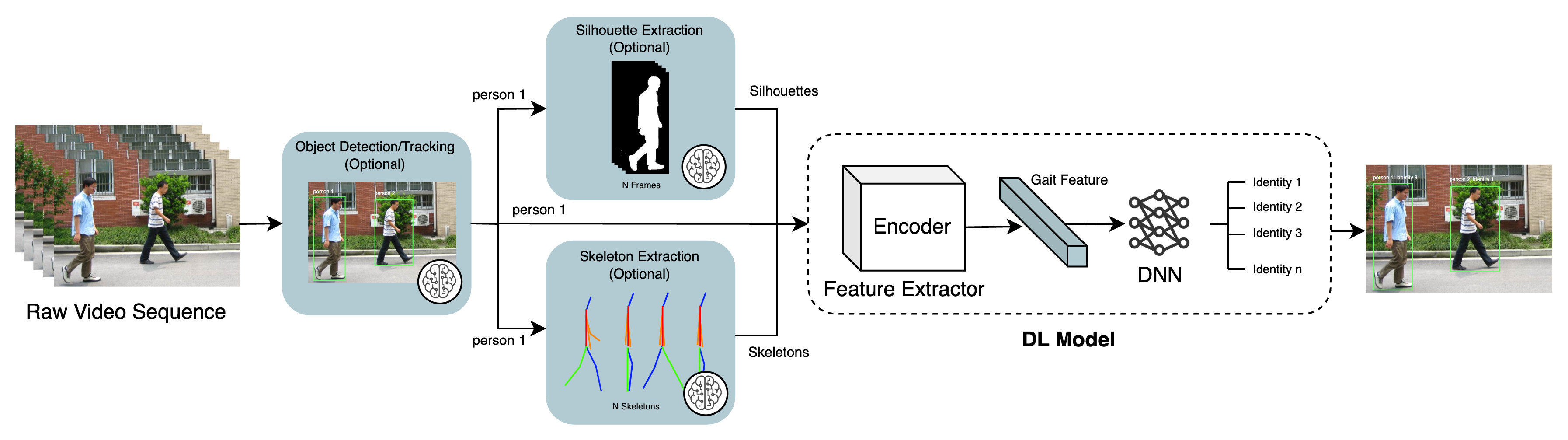

4. Feature Representations for Computer Vision-Based Gait Recognition

4.1. Model-Based Representations

4.1.1. Pose Estimation

4.1.2. Skeleton Maps

4.1.3. Graph-Based Models

4.2. Model-Free-Based Representations

4.2.1. Motion Energy Images and Motion History Images

4.2.2. Gait Energy Images and Gait History Images

4.2.3. Gait Entropy Images

4.2.4. Gait Flow Image

4.2.5. Other Model-Free Representation Methods

- To address the issue of poor image segmentation in gait recognition frameworks, Chen et al. [60] proposed the Frame Difference Energy Image (FDEI) representation. This is a robust representation designed to mitigate the impact of incomplete silhouettes.

- Wang et al. [57] proposed the Chrono-Gait Image (CGI), which encodes the temporal information by assigning different colours to the silhouettes based on their position in the gait cycle, generating a single CGI with richer information.

- To tackle the issue of variations in clothing and carried objects during walking, Zhang et al. [58] proposed the Active Energy Image (AEI) representation. This approach focuses on the dynamic body parts, discarding the static ones, by calculating the difference between consecutive silhouettes in a gait sequence.

- He et al. [53] proposed the Period Energy Image (PEI), a multichannel gait template designed as a generalisation of GEI. It aims to enrich spatial and temporal information in cross-view gait recognition, maintaining more of this information compared to other templates.

- The frame-by-frame Gait Energy Image (ff-GEI) presented in [52] effectively expresses available gait data, relaxes the gait cycle segmentation constraints imposed by existing algorithms, and is better suited to the requirements of DL models.
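To make the template idea concrete, the sketch below computes two of the representations named in this section from a sequence of binary silhouettes. It assumes one common formulation: the GEI as the per-pixel mean of aligned silhouettes over a gait cycle, and the AEI as the per-pixel mean of absolute differences between consecutive silhouettes. The function names and the nested-list image format are illustrative choices, and alignment and size normalisation are assumed to have been done beforehand.

```python
Image = list[list[float]]  # rows of pixel values; binary silhouettes use 0 and 1


def pixelwise_mean(images: list[Image]) -> Image:
    """Average a stack of equally sized images pixel by pixel."""
    count = len(images)
    height, width = len(images[0]), len(images[0][0])
    return [
        [sum(img[r][c] for img in images) / count for c in range(width)]
        for r in range(height)
    ]


def gait_energy_image(silhouettes: list[Image]) -> Image:
    """GEI: mean silhouette over the cycle; static parts stay bright."""
    return pixelwise_mean(silhouettes)


def active_energy_image(silhouettes: list[Image]) -> Image:
    """AEI: mean absolute difference of consecutive silhouettes; static parts vanish."""
    differences = [
        [[abs(a - b) for a, b in zip(cur_row, prev_row)]
         for cur_row, prev_row in zip(current, previous)]
        for previous, current in zip(silhouettes, silhouettes[1:])
    ]
    return pixelwise_mean(differences)


# Toy 1x3 sequence: column 0 is always foreground, columns 1 and 2 alternate.
sequence = [[[1, 1, 0]], [[1, 0, 1]], [[1, 1, 0]]]
gei = gait_energy_image(sequence)
aei = active_energy_image(sequence)
```

In the toy sequence the always-on column keeps full energy in the GEI but drops to zero in the AEI, which is the property that makes the AEI less sensitive to static appearance such as carried objects.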

5. Gait Representation Dimensionality Reduction

5.1. Linear Techniques

5.2. Non-Linear Techniques

5.3. Other Dimensionality Reduction Methods for Gait Recognition

6. Classification of Gait Feature Representations

6.1. Traditional Models

6.1.1. Distance-Based Classification

6.1.2. Machine Learning

6.2. Deep Learning

6.2.1. Convolutional Neural Networks

- In 2022, Ambika et al. [100] proposed an approach based on a CNN-MLP (Multilayer Perceptron) for gait classification, aiming to be robust to subjects’ velocity variations and appearance covariates such as carrying a backpack.

- Researchers in [97] (2021) and [101] (2023) proposed CNN-based methods that split the gait sequence vertically into multiple parts and extract sequential, hierarchical gait features using 3D convolutional layers. Both methods achieved state-of-the-art results, demonstrating the effectiveness of CNNs for gait feature extraction.

6.2.2. Autoencoders

- In 2023, Guo et al. [107] introduced a physics-augmented autoencoder (PAA) that integrates a physics-based decoder. By incorporating physics, the learned 3D skeleton representations become more compact and physically plausible.

- In the same year, Li et al. [108] proposed a gait recognition method that combines Koopman operator theory with invertible autoencoders. It learns a low-dimensional, physically meaningful representation of gait dynamics that captures the complex kinematic features of gait cycles, which improves interpretability and reduces computational cost.

6.2.3. Generative Adversarial Networks

- Yu et al. [109] proposed a GAN to tackle gait recognition limitations related to appearance changes by generating realistic-looking gait images. The results demonstrated excellent performance on large datasets, where a canonical side-view gait image was generated without needing prior knowledge of the subject’s view angle or clothing.

- To further address the challenge of view variations in gait recognition, Zhang et al. [110] proposed the View Transformation GAN (VT-GAN). This model translates gaits between any two views using an identity preserver module to prevent the loss of personal identity information during transformations.

6.2.4. Capsule Neural Networks

6.2.5. Recurrent Neural Networks

- Wang and Yan [52] combined LSTM with convolutional layers (namely ConvLSTM) and trained the resulting model using ff-GEIs. Their method outperformed several state-of-the-art methods in multi-view angle gait recognition.

- To address the challenge of occlusions in gait recognition, Sepas-Moghaddam et al. [120] proposed a method for learning invariant convolutional gait energy maps with an attention-based recurrent model. Their network structure utilised partial representations by decomposing learned gait representations into convolutional energy maps. A recurrent learning module composed of bidirectional gated recurrent units (BGRU) was then used to exploit relationships between these partial spatiotemporal representations, coupled with an attention mechanism to focus on crucial information.

6.2.6. Graph Neural Networks

6.2.7. Transformers and Attention Mechanisms

- To enhance discrimination between different classes of gait features, Wang and Yan [126] (2021) presented a self-attention-based gait classification model that combines non-local and regionalised features. This combination helps identify relevant non-local features, which are then refined by a two-channel network.

- Jia et al. [127] (2021) proposed a CNN Joint Attention Mechanism (CJAM) for identifying the most crucial pixels in a gait sequence. Their workflow uses a CNN to extract feature vectors from the initial gait frames and feeds them into an attention model comprising encoder and decoder layers, followed by linear operations for information transformation and a softmax function for classification. Twelve experiments demonstrated that this attention model outperforms others in terms of reducing errors, with the CJAM model achieving accuracy improvements of 8.44%, 2.94%, and 1.45% over 3D-CNN, CNN-LSTM, and a simple CNN, respectively.

- Mogan et al. [128] (2022) converted GEI representations into 2D flattened patches and passed them into a Vision Transformer model consisting of an embedding layer (with patch embedding applied to the sequence of patches), a transformer encoder for achieving a final representation, and a multi-layer perceptron that performs the classification based on the first token of the sequence. Experiments on small and large datasets demonstrated the scalability of the Vision Transformer model, showcasing its robustness against noisy and incomplete silhouettes and its remarkable subject identification capability regardless of the different covariates. In 2023, the same team proposed assembling a similar Transformer model with two other architectures: a DenseNet-201 and a VGG-16 [129]. The experimental results showed substantial improvement using the CASIA-B [23] and the OU-ISIR [18] datasets.

- Li et al. [125] (2023) proposed TransGait, a novel gait recognition framework that utilises a transformer to effectively fuse silhouette and pose information, leveraging the strengths of both feature representations.
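The patching step that precedes a Vision Transformer, as described above for GEI inputs, can be illustrated with a short sketch. It only shows how an image is cut into non-overlapping square patches and flattened into a sequence of vectors; the patch size, the function name, and the nested-list image format are assumptions for illustration, and the embedding layer, transformer encoder, and classification head are deliberately omitted.

```python
Image = list[list[float]]


def to_flat_patches(image: Image, patch_size: int) -> list[list[float]]:
    """Split an image into non-overlapping square patches, each flattened row-major.

    Patches are emitted left to right, top to bottom, which is the order in
    which they would be fed to the embedding layer as a token sequence.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be multiples of patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patches.append([
                image[r][c]
                for r in range(top, top + patch_size)
                for c in range(left, left + patch_size)
            ])
    return patches


# Toy 4x4 "GEI" whose pixel values are 0..15, cut into four 2x2 patches.
toy_gei = [[float(4 * r + c) for c in range(4)] for r in range(4)]
tokens = to_flat_patches(toy_gei, patch_size=2)
```

Each of the four resulting vectors has `patch_size * patch_size` entries; a learned linear projection would then map every vector to the model dimension before position information is added.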

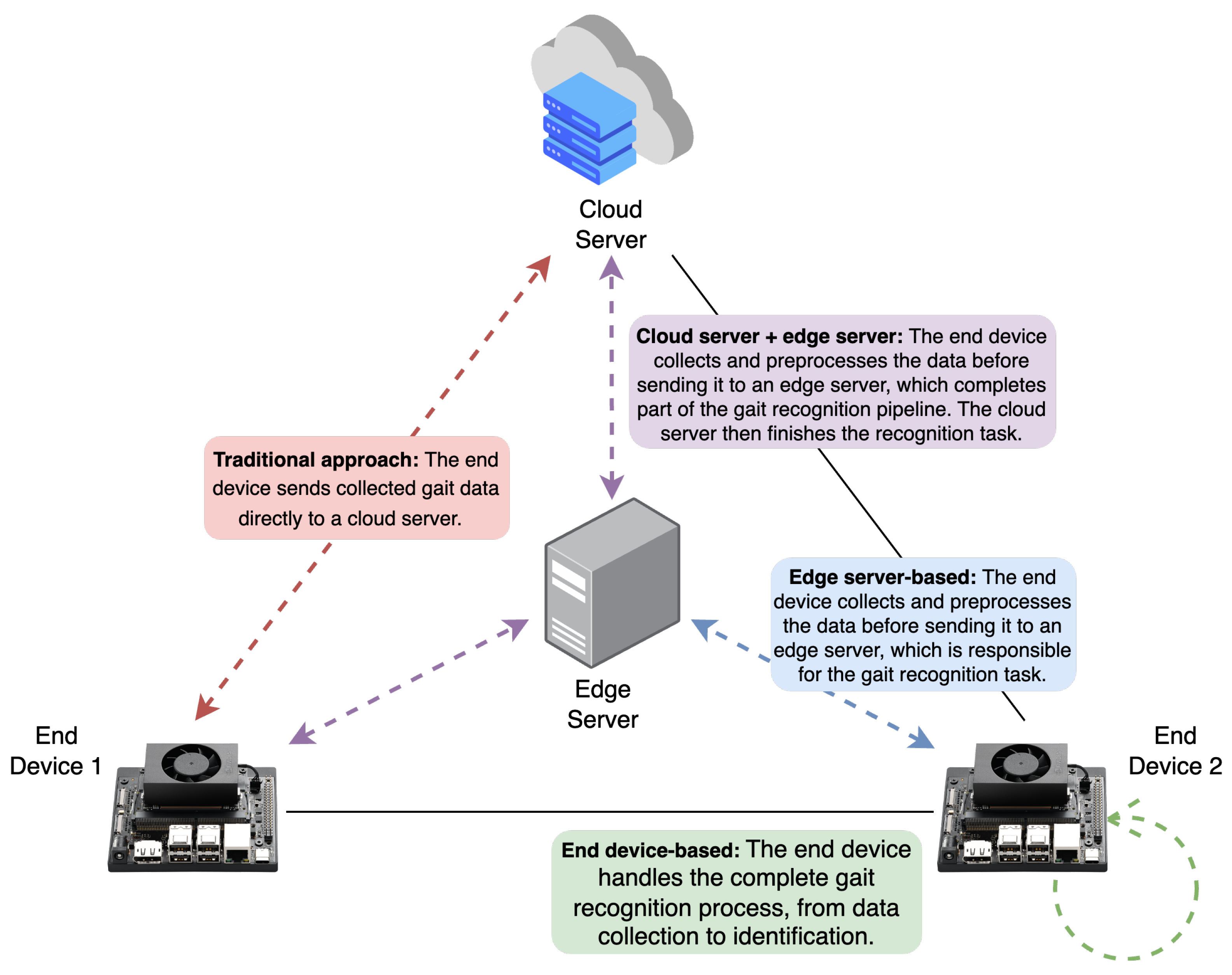

7. Edge-Oriented Inference Architectures

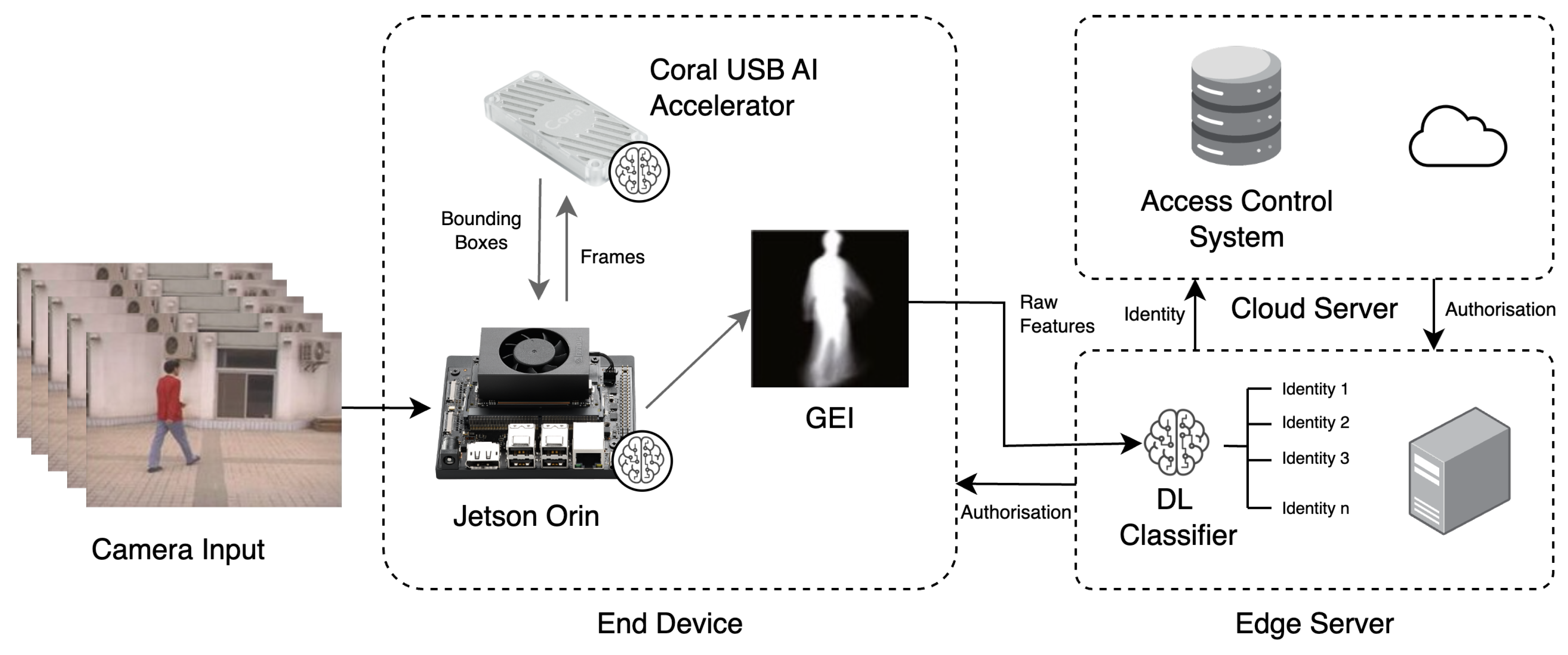

7.1. Edge Server-Based

7.2. End Device-Based

7.3. Edge Server + End Devices

7.4. Edge Server + Cloud Server

8. Model Optimisation for Edge Computing

- Latency: This measures the time taken to process and recognise gait patterns, crucial for real-time applications.

- Energy consumption: This quantifies power usage, highlighting sustainability and device longevity, particularly for battery-powered systems.

- Model accuracy: This evaluates the effectiveness of gait recognition under constrained conditions, using metrics such as precision, recall, and F1-score (detailed in [142]).

- Model size: This refers to the storage footprint of the model.

- Throughput: This is the number of frames processed per second (FPS).
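Several of the metrics above can be computed with a few lines of code. The sketch below is one possible way to do it, under stated assumptions: precision, recall, and F1-score are calculated for a single identity treated as the positive class, and latency and throughput are measured by timing a hypothetical `infer` callable over a batch of frames. Energy consumption and model size depend on the target hardware and file format, so they are not covered here.

```python
import time
from typing import Callable, Sequence


def precision_recall_f1(y_true: Sequence, y_pred: Sequence, positive) -> tuple[float, float, float]:
    """Precision, recall, and F1 for one class, with 0.0 when a ratio is undefined."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def measure_latency_and_throughput(infer: Callable, frames: Sequence) -> tuple[float, float]:
    """Return (mean seconds per frame, frames per second) for sequential inference."""
    start = time.perf_counter()
    for frame in frames:
        infer(frame)
    elapsed = time.perf_counter() - start
    latency = elapsed / len(frames)
    fps = len(frames) / elapsed if elapsed > 0 else float("inf")
    return latency, fps


# Toy identities: subject "b" is predicted three times but is correct only twice.
labels = ["a", "a", "b", "b"]
predictions = ["a", "b", "b", "b"]
precision, recall, f1 = precision_recall_f1(labels, predictions, positive="b")

# Stand-in for a model: a function that does a small, fixed amount of work per frame.
latency, fps = measure_latency_and_throughput(lambda frame: sum(range(10_000)), range(50))
```

On an edge device, measurements of this kind are usually repeated after a warm-up phase, since the first few inferences often include one-off initialisation costs.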

8.1. Model Design or Selection

8.2. Pruning

8.3. Quantisation

8.4. Knowledge Distillation

9. Gait Recognition on the Edge

9.1. Variations in Gait

9.2. Environmental Challenges

9.3. Security Challenges

9.3.1. Person Physical Impersonation

9.3.2. Spoofing via Synthetic Data Generation

9.3.3. Silhouette Poisoning for Misclassification

10. Discussion and Future Work

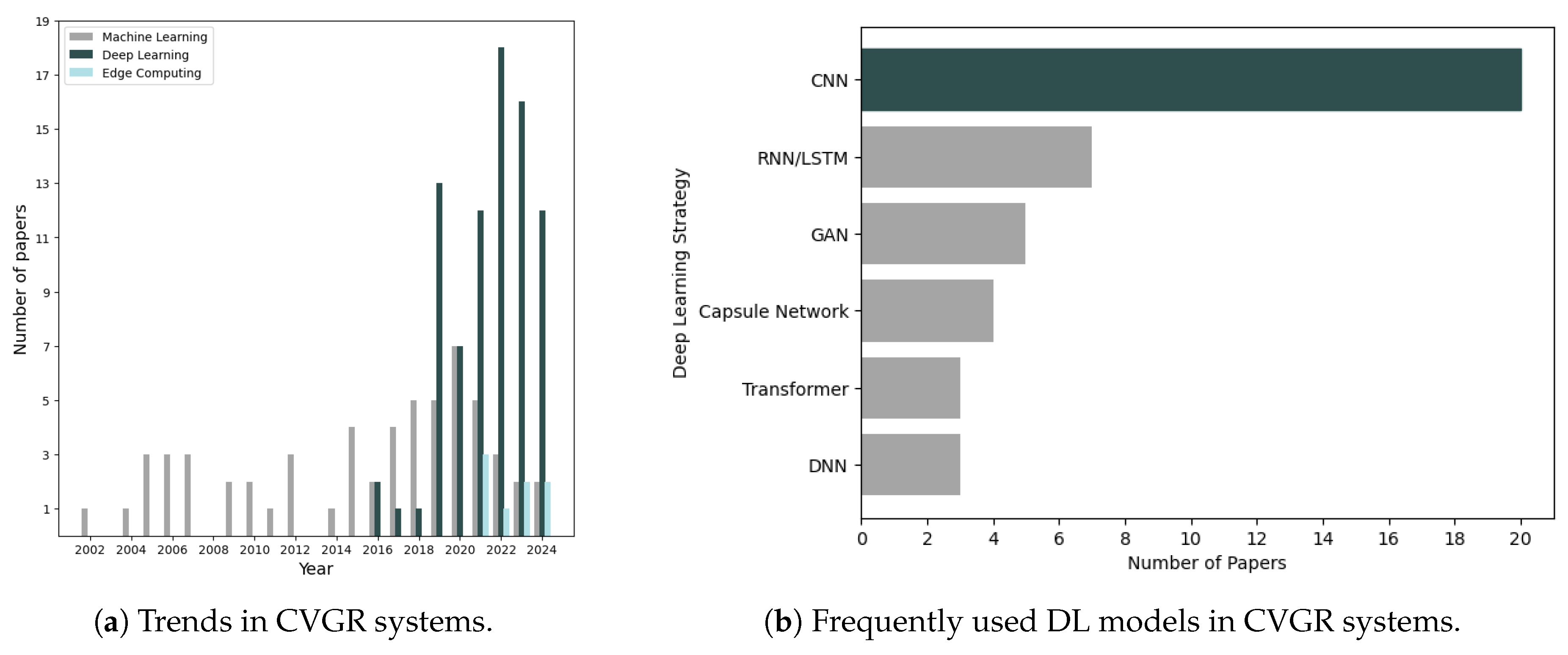

10.1. Trends and Challenges in Computer Vision-Based Gait Recognition Systems

10.2. Edge Computing for CVGR Systems

10.3. Applications of CVGR Systems on the Edge

11. Conclusions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
| --- | --- |
| ANN | Artificial Neural Networks |
| CNN | Convolutional Neural Network |
| CVGR | Computer Vision-based Gait Recognition |
| DCT | Discrete Cosine Transform |
| DL | Deep Learning |
| DNN | Deep Neural Network |
| DR | Dimensionality Reduction |
| DWT | Discrete Wavelet Transform |
| ff-GEI | frame-by-frame Gait Energy Image |
| GAN | Generative Adversarial Networks |
| GEI | Gait Energy Images |
| GEnI | Gait Entropy Images |
| GFI | Gait Flow Image |
| GHI | Gait History Image |
| GNN | Graph Neural Networks |
| GPU | Graphics Processing Unit |
| IoT | Internet of Things |
| LDA | Linear Discriminant Analysis |
| LiDAR | Light Detection and Ranging |
| LSTM | Long Short-Term Memory |
| MEI | Motion-Energy Image |
| MHI | Motion-History Image |
| MSI | Motion Silhouettes Image |
| PCA | Principal Component Analysis |
| ML | Machine Learning |
| RNN | Recurrent Neural Networks |
| t-SNE | t-distributed Stochastic Neighbor Embedding |
| TFLOPS | Tera Floating-point Operations Per Second |
| TOPS | Tera Operations Per Second |
| TPU | Tensor Processing Unit |
| VPU | Vision Processing Unit |

References

- Singh, J.P.; Jain, S.; Arora, S.; Singh, U.P. Vision-based gait recognition: A survey. IEEE Access 2018, 6, 70497–70527. [Google Scholar] [CrossRef]

- Marsico, M.D.; Mecca, A. A Survey on Gait Recognition via Wearable Sensors. ACM Comput. Surv. 2019, 52, 1–39. [Google Scholar] [CrossRef]

- Wan, C.; Wang, L.; Phoha, V.V. A Survey on Gait Recognition. ACM Comput. Surv. 2018, 51, 1–35. [Google Scholar] [CrossRef]

- Li, R.; Yun, L.; Zhang, M.; Yang, Y.; Cheng, F. Cross-View Gait Recognition Method Based on Multi-Teacher Joint Knowledge Distillation. Sensors 2023, 23, 9289. [Google Scholar] [CrossRef] [PubMed]

- Zheng, J.; Liu, X.; Liu, W.; He, L.; Yan, C.; Mei, T. Gait recognition in the wild with dense 3d representations and a benchmark. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 20228–20237.

- Xu, C.; Tsuji, S.; Makihara, Y.; Li, X.; Yagi, Y. Occluded Gait Recognition via Silhouette Registration Guided by Automated Occlusion Degree Estimation. Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 3199–3209.

- Fu, Y.; Meng, S.; Hou, S.; Hu, X.; Huang, Y. Gpgait: Generalized pose-based gait recognition. Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 19595–19604.

- Shen, C.; Yu, S.; Wang, J.; Huang, G.Q.; Wang, L. A comprehensive survey on deep gait recognition: algorithms, datasets and challenges. arXiv preprint arXiv:2206.13732, 2022.

- Güner Şahan, P.; Şahin, S.; Kaya Gülağız, F. A survey of appearance-based approaches for human gait recognition: techniques, challenges, and future directions. The Journal of Supercomputing.

- Ahmed, D.M.; Mahmood, B.S. Survey: gait recognition based on image energy. 2023 IEEE 14th Control and System Graduate Research Colloquium (ICSGRC). IEEE, 2023, pp. 51–56.

- Alharthi, A.S.; Casson, A.J.; Ozanyan, K.B. Spatiotemporal Analysis by Deep Learning of Gait Signatures From Floor Sensors. IEEE Sens. J. 2021, 21, 16904–16914. [Google Scholar] [CrossRef]

- Li, J.; Wang, Z.; Zhao, Z.; Jin, Y.; Yin, J.; Huang, S.L.; Wang, J. TriboGait: A deep learning enabled triboelectric gait sensor system for human activity recognition and individual identification. In Adjunct Proceedings of the 2021 ACM International Joint Conference on Pervasive and Ubiquitous Computing and Proceedings of the 2021 ACM International Symposium on Wearable Computers; Association for Computing Machinery: New York, NY, USA, 2021; pp. 643–648. [Google Scholar]

- Venkatachalam, S.; Nair, H.; Vellaisamy, P.; Zhou, Y.; Youssfi, Z.; Shen, J.P. Realtime Person Identification via Gait Analysis. arXiv preprint arXiv:2404.15312, 2024.

- Song, C.; Huang, Y.; Wang, W.; Wang, L. CASIA-E: a large comprehensive dataset for gait recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence 2022, 45, 2801–2815. [Google Scholar] [CrossRef] [PubMed]

- Mu, Z.; Castro, F.M.; Marin-Jimenez, M.J.; Guil, N.; Li, Y.R.; Yu, S. Resgait: The real-scene gait dataset. 2021 IEEE International Joint Conference on Biometrics (IJCB). IEEE, 2021, pp. 1–8.

- Zhu, Z.; Guo, X.; Yang, T.; Huang, J.; Deng, J.; Huang, G.; Du, D.; Lu, J.; Zhou, J. Gait recognition in the wild: A benchmark. Proceedings of the IEEE/CVF international conference on computer vision, 2021, pp. 14789–14799.

- Dou, H.; Zhang, W.; Zhang, P.; Zhao, Y.; Li, S.; Qin, Z.; Wu, F.; Dong, L.; Li, X. Versatilegait: a large-scale synthetic gait dataset with fine-grained attributes and complicated scenarios. arXiv preprint arXiv:2101.01394, 2021.

- An, W.; Yu, S.; Makihara, Y.; Wu, X.; Xu, C.; Yu, Y.; Liao, R.; Yagi, Y. Performance evaluation of model-based gait on multi-view very large population database with pose sequences. IEEE transactions on biometrics, behavior, and identity science 2020, 2, 421–430. [Google Scholar] [CrossRef]

- Iwashita, Y.; Ogawara, K.; Kurazume, R. Identification of people walking along curved trajectories. Pattern Recognition Letters 2014, 48, 60–69. [Google Scholar] [CrossRef]

- Samangooei, S.; Bustard, J.; Nixon, M.; Carter, J. On acquisition and analysis of a dataset comprising of gait, ear and semantic data. Multibiometrics for Human Identification.

- Sivapalan, S.; Chen, D.; Denman, S.; Sridharan, S.; Fookes, C. Gait energy volumes and frontal gait recognition using depth images. 2011 International Joint Conference on Biometrics (IJCB). IEEE, 2011, pp. 1–6.

- Tan, D.; Huang, K.; Yu, S.; Tan, T. Efficient night gait recognition based on template matching. 18th international conference on pattern recognition (ICPR’06). IEEE, 2006, Vol. 3, pp. 1000–1003.

- Yu, S.; Tan, D.; Tan, T. A Framework for Evaluating the Effect of View Angle, Clothing and Carrying Condition on Gait Recognition. 18th International Conference on Pattern Recognition (ICPR’06), 2006, Vol. 4, pp. 441–444.

- Wang, L.; Tan, T.; Ning, H.; Hu, W. Silhouette analysis-based gait recognition for human identification. IEEE transactions on pattern analysis and machine intelligence 2003, 25, 1505–1518. [Google Scholar] [CrossRef]

- Huang, P.S.; Harris, C.J.; Nixon, M.S. Recognising humans by gait via parametric canonical space. Artificial Intelligence in Engineering 1999, 13, 359–366. [Google Scholar] [CrossRef]

- Sadeghzadehyazdi, N.; Batabyal, T.; Acton, S.T. Modeling spatiotemporal patterns of gait anomaly with a CNN-LSTM deep neural network. Expert Syst. Appl. 2021, 185, 115582. [Google Scholar] [CrossRef]

- Chattopadhyay, P.; Roy, A.; Sural, S.; Mukhopadhyay, J. Pose Depth Volume extraction from RGB-D streams for frontal gait recognition. J. Vis. Commun. Image Represent. 2014, 25, 53–63. [Google Scholar] [CrossRef]

- Bari, A.S.M.H.; Gavrilova, M.L. Artificial Neural Network Based Gait Recognition Using Kinect Sensor. IEEE Access 2019, 7, 162708–162722. [Google Scholar] [CrossRef]

- Albert, J.A.; Owolabi, V.; Gebel, A.; Brahms, C.M.; Granacher, U.; Arnrich, B. Evaluation of the Pose Tracking Performance of the Azure Kinect and Kinect v2 for Gait Analysis in Comparison with a Gold Standard: A Pilot Study. Sensors 2020, 20. [Google Scholar] [CrossRef] [PubMed]

- Wang, J.; She, M.; Nahavandi, S.; Kouzani, A. A Review of Vision-Based Gait Recognition Methods for Human Identification. 2010 International Conference on Digital Image Computing: Techniques and Applications, 2010, pp. 320–327.

- Liao, R.; Yu, S.; An, W.; Huang, Y. A model-based gait recognition method with body pose and human prior knowledge. Pattern Recognit. 2020, 98. [Google Scholar] [CrossRef]

- Shen, C.; Fan, C.; Wu, W.; Wang, R.; Huang, G.Q.; Yu, S. Lidargait: Benchmarking 3d gait recognition with point clouds. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 1054–1063.

- Ma, C.; Liu, Z. A Novel Spatial–Temporal Network for Gait Recognition Using Millimeter-Wave Radar Point Cloud Videos. Electronics 2023, 12, 4785. [Google Scholar] [CrossRef]

- Li, Y.; Zhang, P.; Zhang, Y.; Miyazaki, K. Gait analysis using stereo camera in daily environment. 2019 41st Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC). IEEE, 2019, pp. 1471–1475.

- Liu, H.; Cao, Y.; Wang, Z. Automatic gait recognition from a distance. 2010 Chinese Control and Decision Conference. IEEE, 2010, pp. 2777–2782.

- Kok, K.Y.; Rajendran, P. A review on stereo vision algorithm: Challenges and solutions. ECTI Transactions on Computer and Information Technology (ECTI-CIT) 2019, 13, 112–128. [Google Scholar] [CrossRef]

- Dai, J.; Li, Q.; Wang, H.; Liu, L. Understanding images of surveillance devices in the wild. Knowledge-Based Systems 2024, 284, 111226. [Google Scholar] [CrossRef]

- Stenum, J.; Rossi, C.; Roemmich, R.T. Two-dimensional video-based analysis of human gait using pose estimation. PLoS computational biology 2021, 17, e1008935. [Google Scholar] [CrossRef] [PubMed]

- Hii, C.S.T.; Gan, K.B.; Zainal, N.; Mohamed Ibrahim, N.; Azmin, S.; Mat Desa, S.H.; van de Warrenburg, B.; You, H.W. Automated gait analysis based on a marker-free pose estimation model. Sensors 2023, 23, 6489. [Google Scholar] [CrossRef] [PubMed]

- Teepe, T.; Khan, A.; Gilg, J.; Herzog, F.; Hörmann, S.; Rigoll, G. Gaitgraph: Graph convolutional network for skeleton-based gait recognition. 2021 IEEE international conference on image processing (ICIP). IEEE, 2021, pp. 2314–2318.

- Teepe, T.; Gilg, J.; Herzog, F.; Hörmann, S.; Rigoll, G. Towards a deeper understanding of skeleton-based gait recognition. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 1569–1577.

- Konz, L.; Hill, A.; Banaei-Kashani, F. ST-DeepGait: A spatiotemporal deep learning model for human gait recognition. Sensors 2022, 22, 8075. [Google Scholar] [CrossRef] [PubMed]

- Fan, C.; Ma, J.; Jin, D.; Shen, C.; Yu, S. SkeletonGait: Gait Recognition Using Skeleton Maps. Proceedings of the AAAI Conference on Artificial Intelligence, 2024, Vol. 38, pp. 1662–1669.

- Takemura, N.; Makihara, Y.; Muramatsu, D.; Echigo, T.; Yagi, Y. Multi-view large population gait dataset and its performance evaluation for cross-view gait recognition. IPSJ transactions on Computer Vision and Applications 2018, 10, 1–14. [Google Scholar] [CrossRef]

- Bobick, A.F.; Davis, J.W. The recognition of human movement using temporal templates. IEEE Trans. Pattern Anal. Mach. Intell. 2001, 23, 257–267. [Google Scholar] [CrossRef]

- Lam, T.H.W.; Lee, R.S.T. A New Representation for Human Gait Recognition: Motion Silhouettes Image (MSI). Advances in Biometrics. Springer Berlin Heidelberg, 2005, pp. 612–618.

- Han, J.; Bhanu, B. Individual recognition using gait energy image. IEEE Trans. Pattern Anal. Mach. Intell. 2006, 28, 316–322. [Google Scholar] [CrossRef] [PubMed]

- Liu, J.; Zheng, N. Gait history image: A novel temporal template for gait recognition. 2007 IEEE international conference on multimedia and expo. IEEE Computer Society, 2007, pp. 663–666.

- Zebhi, S.; AlModarresi, S.M.T.; Abootalebi, V. Human activity recognition using pre-trained network with informative templates. International Journal of Machine Learning and Cybernetics 2021, 12, 3449–3461. [Google Scholar] [CrossRef]

- Wang, M.; Guo, X.; Lin, B.; Yang, T.; Zhu, Z.; Li, L.; Zhang, S.; Yu, X. DyGait: Exploiting dynamic representations for high-performance gait recognition. Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 13424–13433.

- Bashir, K.; Xiang, T.; Gong, S. Gait recognition using Gait Entropy Image. 3rd International Conference on Imaging for Crime Detection and Prevention (ICDP 2009), 2009, pp. 1–6.

- Wang, X.; Yan, W.Q. Human Gait Recognition Based on Frame-by-Frame Gait Energy Images and Convolutional Long Short-Term Memory. Int. J. Neural Syst. 2020, 30, 1950027. [Google Scholar] [CrossRef]

- He, Y.; Zhang, J.; Shan, H.; Wang, L. Multi-Task GANs for view-specific feature learning in gait recognition. IEEE Trans. Inf. Forensics Secur. 2019, 14, 102–113. [Google Scholar] [CrossRef]

- Wang, K.; Liu, L.; Lee, Y.; Ding, X.; Lin, J. Nonstandard Periodic Gait Energy Image for Gait Recognition and Data Augmentation. Pattern Recognition and Computer Vision. Springer International Publishing, 2019, pp. 197–208.

- Lam, T.H.; Cheung, K.H.; Liu, J.N. Gait flow image: A silhouette-based gait representation for human identification. Pattern recognition 2011, 44, 973–987. [Google Scholar] [CrossRef]

- Ye, H.; Sun, T.; Xu, K. Gait Recognition Based on Gait Optical Flow Network with Inherent Feature Pyramid. Applied Sciences 2023, 13, 10975. [Google Scholar] [CrossRef]

- Wang, C.; Zhang, J.; Pu, J.; Yuan, X.; Wang, L. Chrono-gait image: A novel temporal template for gait recognition. Computer Vision–ECCV 2010: 11th European Conference on Computer Vision, Heraklion, Crete, Greece, September 5–11, 2010, Proceedings, Part I. Springer, 2010, pp. 257–270.

- Zhang, E.; Zhao, Y.; Xiong, W. Active energy image plus 2DLPP for gait recognition. Signal Processing 2010, 90, 2295–2302. [Google Scholar] [CrossRef]

- Bharadwaj, S.V.; Chanda, K. Person Re-Identification by Analyzing Dynamic Variations in Gait Sequences. Evolving Technologies for Computing, Communication and Smart World. Springer Singapore, 2021, pp. 399–410.

- Chen, C.; Liang, J.; Zhao, H.; Hu, H.; Tian, J. Frame difference energy image for gait recognition with incomplete silhouettes. Pattern Recognit. Lett. 2009, 30, 977–984. [Google Scholar] [CrossRef]

- Luo, J.; Zhang, J.; Zi, C.; Niu, Y.; Tian, H.; Xiu, C. Gait recognition using GEI and AFDEI. International Journal of Optics 2015, 2015, 763908. [Google Scholar] [CrossRef]

- Thomas, K.; Pushpalatha, K. A comparative study of the performance of gait recognition using gait energy image and shannon’s entropy image with CNN. Data Science and Security: Proceedings of IDSCS 2021. Springer, 2021, pp. 191–202.

- Filipi Gonçalves dos Santos, C.; Oliveira, D.d.S.; A. Passos, L.; Gonçalves Pires, R.; Felipe Silva Santos, D.; Pascotti Valem, L.; P. Moreira, T.; Cleison S. Santana, M.; Roder, M.; Paulo Papa, J.; Colombo, D. Gait Recognition Based on Deep Learning: A Survey. ACM Comput. Surv. 2022, 55, 1–34. [Google Scholar] [CrossRef]

- Wattanapanich, C.; Wei, H.; Petchkit, W. Investigation of robust gait recognition for different appearances and camera view angles. Int. J. Elect. Computer Syst. Eng. 2021, 11, 3977–3987. [Google Scholar] [CrossRef]

- Khalifa, O.O.; Jawed, B.; Bhuiyn, S.S.N. Principal component analysis for human gait recognition system. Bulletin of Electrical Engineering and Informatics 2019, 8, 569–576. [Google Scholar] [CrossRef]

- Hasan, B.M.S.; Abdulazeez, A.M. A Review of Principal Component Analysis Algorithm for Dimensionality Reduction. jscdm 2021, 2, 20–30. [Google Scholar]

- Gupta, S.K.; Sultaniya, G.M.; Chattopadhyay, P. An Efficient Descriptor for Gait Recognition Using Spatio-Temporal Cues. Emerging Technology in Modelling and Graphics. Springer Singapore, 2020, pp. 85–97.

- Guo, H.; Li, B.; Zhang, Y.; Zhang, Y.; Li, W.; Qiao, F.; Rong, X.; Zhou, S. Gait Recognition Based on the Feature Extraction of Gabor Filter and Linear Discriminant Analysis and Improved Local Coupled Extreme Learning Machine. Math. Probl. Eng. 2020, 2020. [Google Scholar] [CrossRef]

- Wang, H.; Fan, Y.; Fang, B.; Dai, S. Generalized linear discriminant analysis based on euclidean norm for gait recognition. International Journal of Machine Learning and Cybernetics 2018, 9, 569–576. [Google Scholar] [CrossRef]

- Li, W.; Kuo, C.C.J.; Peng, J. Gait recognition via GEI subspace projections and collaborative representation classification. Neurocomputing 2018, 275, 1932–1945. [Google Scholar] [CrossRef]

- Yu, S.; Tan, D.; Tan, T. A framework for evaluating the effect of view angle, clothing and carrying condition on gait recognition. 18th international conference on pattern recognition (ICPR’06). IEEE, 2006, Vol. 4, pp. 441–444.

- Honggui, L.; Xingguo, L. Gait analysis using LLE. Proceedings of the 7th International Conference on Signal Processing (ICSP’04). IEEE, 2004, Vol. 2, pp. 1423–1426.

- Pataky, T.C.; Mu, T.; Bosch, K.; Rosenbaum, D.; Goulermas, J.Y. Gait recognition: highly unique dynamic plantar pressure patterns among 104 individuals. Journal of The Royal Society Interface 2012, 9, 790–800. [Google Scholar] [CrossRef] [PubMed]

- Che, L.; Kong, Y. Gait recognition based on DWT and t-SNE. In Proceedings of the Third International Conference on Cyberspace Technology (CCT 2015). IET; 2015; pp. 1423–1426. [Google Scholar]

- Huang, X.; Zhu, D.; Wang, H.; Wang, X.; Yang, B.; He, B.; Liu, W.; Feng, B. Context-sensitive temporal feature learning for gait recognition. Proceedings of the IEEE/CVF international conference on computer vision, 2021, pp. 12909–12918.

- Pan, Z.; Rust, A.G.; Bolouri, H. Image redundancy reduction for neural network classification using discrete cosine transforms. Proceedings of the IEEE-INNS-ENNS International Joint Conference on Neural Networks. IJCNN 2000. Neural Computing: New Challenges and Perspectives for the New Millennium. IEEE, 2000, Vol. 3, pp. 149–154.

- Fan, Z.; Jiang, J.; Weng, S.; He, Z.; Liu, Z. Human gait recognition based on Discrete Cosine Transform and Linear Discriminant Analysis. 2016 IEEE International Conference on Signal Processing, Communications and Computing (ICSPCC), 2016, pp. 1–6. [CrossRef]

- Chhatrala, R.; Jadhav, D.V. Multilinear Laplacian discriminant analysis for gait recognition. IET Comput. Vision 2017, 11, 153–160. [Google Scholar] [CrossRef]

- Shivani; Singh, N. Gait Recognition Using DWT and DCT Techniques. Proceedings of International Conference on Communication and Artificial Intelligence. Springer Singapore, 2021, pp. 473–481.

- Wen, J.; Wang, X. Gait recognition based on sparse linear subspace. IET Image Proc. 2021, 15, 2761–2769. [Google Scholar] [CrossRef]

- Liu, Z.; Sarkar, S. Simplest representation yet for gait recognition: averaged silhouette. Proceedings of the 17th International Conference on Pattern Recognition (ICPR 2004), 2004, Vol. 4, pp. 211–214.

- Chi, L.; Dai, C.; Yan, J.; Liu, X. An Optimized Algorithm on Multi-view Transform for Gait Recognition. Communications and Networking. Springer International Publishing, 2019, pp. 166–174.

- Das Choudhury, S.; Tjahjadi, T. Robust view-invariant multiscale gait recognition. Pattern Recognit. 2015, 48, 798–811. [Google Scholar] [CrossRef]

- Pratama, F.I.; Budianita, A. Optimization of K-Nn Classification In Human Gait Recognition. 2020 Fifth International Conference on Informatics and Computing (ICIC), 2020, pp. 1–5.

- Premalatha, G.; Chandramani, P.V. Improved gait recognition through gait energy image partitioning. Comput. Intell. 2020, 36, 1261–1274. [Google Scholar] [CrossRef]

- Suthaharan, S. Support Vector Machine. In Machine Learning Models and Algorithms for Big Data Classification: Thinking with Examples for Effective Learning; Suthaharan, S., Ed.; Springer US: Boston, MA, 2016; pp. 207–235. [Google Scholar]

- Wang, X.; Wang, J.; Yan, K. Gait recognition based on Gabor wavelets and (2D)2PCA. Multimed. Tools Appl. 2018, 77, 12545–12561. [Google Scholar] [CrossRef]

- Chen, K.; Wu, S.; Li, Z. Gait Recognition Based on GFHI and Combined Hidden Markov Model. 2020 13th International Congress on Image and Signal Processing, BioMedical Engineering and Informatics (CISP-BMEI). IEEE, 2020, pp. 287–292.

- Lu, H.; Plataniotis, K.N.; Venetsanopoulos, A.N. Gait Recognition Through MPCA Plus LDA. 2006 Biometrics Symposium: Special Session on Research at the Biometric Consortium Conference. IEEE, 2006, pp. 1–6.

- Isaac, E.R.H.P.; Elias, S.; Rajagopalan, S.; Easwarakumar, K.S. Template-based gait authentication through Bayesian thresholding. IEEE/CAA Journal of Automatica Sinica 2019, 6, 209–219. [Google Scholar] [CrossRef]

- Venkat, I.; De Wilde, P. Robust Gait Recognition by Learning and Exploiting Sub-gait Characteristics. Int. J. Comput. Vis. 2011, 91, 7–23. [Google Scholar] [CrossRef]

- Yousef, R.N.; Khalil, A.T.; Samra, A.S.; Ata, M.M. Model-based and model-free deep features fusion for high performed human gait recognition. The Journal of Supercomputing 2023, 79, 12815–12852. [Google Scholar] [CrossRef] [PubMed]

- Chao, H.; He, Y.; Zhang, J.; Feng, J. GaitSet: Regarding Gait as a Set for Cross-View Gait Recognition. Proceedings of the AAAI Conference on Artificial Intelligence 2019, 33, 8126–8133. [Google Scholar] [CrossRef]

- Zhang, S.; Wang, Y.; Li, A. Cross-view gait recognition with deep universal linear embeddings. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2021, pp. 9095–9104.

- Wang, L.; Shi, J.; Song, G.; Shen, I.F. Object detection combining recognition and segmentation. Asian conference on computer vision. Springer, 2007, pp. 189–199.

- Gul, S.; Malik, M.I.; Khan, G.M.; Shafait, F. Multi-view gait recognition system using spatio-temporal features and deep learning. Expert Syst. Appl. 2021, 179, 115057.

- Huang, Z.; Xue, D.; Shen, X.; Tian, X.; Li, H.; Huang, J.; Hua, X.S. 3d local convolutional neural networks for gait recognition. Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 14920–14929.

- Teuwen, J.; Moriakov, N. Chapter 20 - Convolutional neural networks. In Handbook of Medical Image Computing and Computer Assisted Intervention; Zhou, S.K.; Rueckert, D.; Fichtinger, G., Eds.; Academic Press, 2020; pp. 481–501.

- Yunardi, R.T.; Sardjono, T.A.; Mardiyanto, R. Skeleton-Based Gait Recognition Using Modified Deep Convolutional Neural Networks and Long Short-Term Memory for Person Recognition. IEEE Access 2024.

- Ambika, K.; Radhika, K.R. Speed Invariant Human Gait Authentication Based on CNN. Second International Conference on Image Processing and Capsule Networks. Springer International Publishing, 2022, pp. 801–809.

- Wang, L.; Liu, B.; Liang, F.; Wang, B. Hierarchical spatio-temporal representation learning for gait recognition. 2023 IEEE/CVF International Conference on Computer Vision (ICCV). IEEE, 2023, pp. 19582–19592.

- Min, P.P.; Sayeed, S.; Ong, T.S. Gait Recognition Using Deep Convolutional Features. 2019 7th International Conference on Information and Communication Technology (ICoICT), 2019, pp. 1–5.

- Takemura, N.; Makihara, Y.; Muramatsu, D.; Echigo, T.; Yagi, Y. On Input/Output Architectures for Convolutional Neural Network-Based Cross-View Gait Recognition. IEEE Trans. Circuits Syst. Video Technol. 2019, 29, 2708–2719.

- Lopez Pinaya, W.H.; Vieira, S.; Garcia-Dias, R.; Mechelli, A. Chapter 11 - Autoencoders. In Machine Learning; Mechelli, A.; Vieira, S., Eds.; Academic Press, 2020; pp. 193–208.

- Yu, S.; Wang, Q.; Shen, L.; Huang, Y. View invariant gait recognition using only one uniform model. 2016 23rd International Conference on Pattern Recognition (ICPR), 2016, pp. 889–894.

- Babaee, M.; Li, L.; Rigoll, G. Person identification from partial gait cycle using fully convolutional neural networks. Neurocomputing 2019, 338, 116–125.

- Guo, H.; Ji, Q. Physics-augmented autoencoder for 3d skeleton-based gait recognition. Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 19627–19638.

- Li, F.; Liang, D.; Lian, J.; Liu, Q.; Zhu, H.; Liu, J. Invka: Gait recognition via invertible koopman autoencoder. arXiv preprint arXiv:2309.14764, 2023.

- Yu, S.; Liao, R.; An, W.; Chen, H.; García, E.B.; Huang, Y.; Poh, N. GaitGANv2: Invariant gait feature extraction using generative adversarial networks. Pattern Recognit. 2019, 87, 179–189.

- Zhang, P.; Wu, Q.; Xu, J. VT-GAN: View Transformation GAN for Gait Recognition across Views. 2019 international joint conference on neural networks (IJCNN). Institute of Electrical and Electronics Engineers Inc., 2019, Vol. 2019-July, pp. 1–8.

- Hinton, G.E.; Krizhevsky, A.; Wang, S.D. Transforming Auto-Encoders. Artificial Neural Networks and Machine Learning – ICANN 2011. Springer Berlin Heidelberg, 2011, pp. 44–51.

- Sepas-Moghaddam, A.; Ghorbani, S.; Troje, N.F.; Etemad, A. Gait recognition using multi-scale partial representation transformation with capsules. 2020 25th international conference on pattern recognition (ICPR). Institute of Electrical and Electronics Engineers Inc., 2020, pp. 8045–8052.

- Zhao, A.; Li, J.; Ahmed, M. SpiderNet: A spiderweb graph neural network for multi-view gait recognition. Knowledge-Based Systems 2020, 206.

- Xu, Z.; Lu, W.; Zhang, Q.; Yeung, Y.; Chen, X. Gait recognition based on capsule network. J. Vis. Commun. Image Represent. 2019, 59, 159–167.

- Wu, Y.; Hou, J.; Su, Y.; Wu, C.; Huang, M.; Zhu, Z. Gait Recognition Based on Feedback Weight Capsule Network. 2020 IEEE 4th Information Technology, Networking, Electronic and Automation Control Conference (ITNEC), 2020, Vol. 1, pp. 155–160.

- DiPietro, R.; Hager, G.D. Chapter 21 - Deep learning: RNNs and LSTM. In Handbook of Medical Image Computing and Computer Assisted Intervention; Zhou, S.K.; Rueckert, D.; Fichtinger, G., Eds.; Academic Press, 2020; pp. 503–519.

- Santos, C.F.G.d.; Oliveira, D.D.S.; Passos, L.A.; Pires, R.G.; Santos, D.F.S.; Valem, L.P.; Moreira, T.P.; Santana, M.C.S.; Roder, M.; Papa, J.P.; others. Gait recognition based on deep learning: a survey. arXiv preprint arXiv:2201.03323, 2022.

- Björnsson, H.H.; Kaldal, J. Exploration and Evaluation of RNN Models on Low-Resource Embedded Devices for Human Activity Recognition, 2023.

- Wang, J.; Chen, Y.; Hao, S.; Peng, X.; Hu, L. Deep learning for sensor-based activity recognition: A survey. Pattern recognition letters 2019, 119, 3–11.

- Sepas-Moghaddam, A.; Etemad, A. View-Invariant Gait Recognition with Attentive Recurrent Learning of Partial Representations. IEEE Transactions on Biometrics, Behavior, and Identity Science 2021, 3, 124–137.

- Auten, A.; Tomei, M.; Kumar, R. Hardware acceleration of graph neural networks. 2020 57th ACM/IEEE Design Automation Conference (DAC). IEEE, 2020, pp. 1–6.

- Lan, T.; Shi, Z.; Wang, K.; Yin, C. Gait Recognition Algorithm based on Spatial-temporal Graph Neural Network. 2022 International Conference on Big Data, Information and Computer Network (BDICN). IEEE, 2022, pp. 55–58.

- Ma, K.; Fu, Y.; Zheng, D.; Cao, C.; Hu, X.; Huang, Y. Dynamic aggregated network for gait recognition. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 22076–22085.

- Wang, Y.; Sun, Y.; Liu, Z.; Sarma, S.E.; Bronstein, M.M.; Solomon, J.M. Dynamic graph cnn for learning on point clouds. ACM Transactions on Graphics (TOG) 2019, 38, 1–12.

- Li, G.; Guo, L.; Zhang, R.; Qian, J.; Gao, S. Transgait: Multimodal-based gait recognition with set transformer. Applied Intelligence 2023, 53, 1535–1547.

- Wang, X.; Yan, W.Q. Non-local gait feature extraction and human identification. Multimed. Tools Appl. 2021, 80, 6065–6078.

- Jia, P.; Zhao, Q.; Li, B.; Zhang, J. CJAM: Convolutional Neural Network Joint Attention Mechanism in Gait Recognition. IEICE Trans. Inf. Syst. 2021, E104.D, 1239–1249.

- Mogan, J.N.; Lee, C.P.; Lim, K.M.; Muthu, K.S. Gait-ViT: Gait Recognition with Vision Transformer. Sensors 2022, 22.

- Mogan, J.N.; Lee, C.P.; Lim, K.M.; Ali, M.; Alqahtani, A. Gait-CNN-ViT: Multi-model gait recognition with convolutional neural networks and vision transformer. Sensors 2023, 23, 3809.

- Bilal, M.; Jianbiao, H.; Mushtaq, H.; Asim, M.; Ali, G.; ElAffendi, M. GaitSTAR: Spatial–Temporal Attention-Based Feature-Reweighting Architecture for Human Gait Recognition. Mathematics 2024, 12, 2458.

- Jung, V.J.; Burrello, A.; Scherer, M.; Conti, F.; Benini, L. Optimizing the Deployment of Tiny Transformers on Low-Power MCUs. arXiv preprint arXiv:2404.02945, 2024.

- Dayal, A.; Paluru, N.; Cenkeramaddi, L.R.; J., S.; Yalavarthy, P.K. Design and Implementation of Deep Learning Based Contactless Authentication System Using Hand Gestures. Electronics 2021, 10, 182.

- Tiñini Alvarez, I.R.; Sahonero-Alvarez, G.; Menacho, C.; Suarez, J. Exploring Edge Computing for Gait Recognition. 2021 4th International Conference on Bio-Engineering for Smart Technologies (BioSMART), 2021, pp. 1–4.

- Isik, M.; Oldland, M.; Zhou, L. An energy-efficient reconfigurable autoencoder implementation on fpga. Proceedings of SAI Intelligent Systems Conference. Springer, 2023, pp. 212–222.

- Chen, Z.; Peng, L.; Hu, A.; Fu, H. Generative adversarial network-based rogue device identification using differential constellation trace figure. EURASIP Journal on Wireless Communications and Networking 2021, 2021, 72.

- Costa, M.; Costa, D.; Gomes, T.; Pinto, S. Shifting capsule networks from the cloud to the deep edge. ACM Transactions on Intelligent Systems and Technology (TIST) 2022, 13, 1–25.

- Wardana, I.N.K.; Gardner, J.W.; Fahmy, S.A. Optimising Deep Learning at the Edge for Accurate Hourly Air Quality Prediction. Sensors 2021, 21.

- Jeziorek, K.; Wzorek, P.; Blachut, K.; Pinna, A.; Kryjak, T. Embedded Graph Convolutional Networks for Real-Time Event Data Processing on SoC FPGAs. arXiv preprint arXiv:2406.07318, 2024.

- Wang, Y.; Yang, C.; Lan, S.; Zhu, L.; Zhang, Y. End-edge-cloud collaborative computing for deep learning: A comprehensive survey. IEEE Communications Surveys & Tutorials.

- Galanopoulos, A.; Ayala-Romero, J.A.; Leith, D.J.; Iosifidis, G. AutoML for Video Analytics with Edge Computing. IEEE INFOCOM 2021 - IEEE Conference on Computer Communications, 2021, pp. 1–10.

- Salcedo, E.; Peñaloza, P. Edge AI-Based Vein Detector for Efficient Venipuncture in the Antecubital Fossa. Mexican International Conference on Artificial Intelligence. Springer, 2023, pp. 297–314.

- Rojas, W.; Salcedo, E.; Sahonero, G. ADRAS: airborne disease risk assessment system for closed environments. Annual International Conference on Information Management and Big Data. Springer, 2022, pp. 96–112.

- Salcedo, E.; Uchani, Y.; Mamani, M.; Fernandez, M. Towards Continuous Floating Invasive Plant Removal Using Unmanned Surface Vehicles and Computer Vision. IEEE Access 2024.

- Kang, Y.; Hauswald, J.; Gao, C.; Rovinski, A.; Mudge, T.; Mars, J.; Tang, L. Neurosurgeon: Collaborative Intelligence Between the Cloud and Mobile Edge. SIGARCH Comput. Archit. News 2017, 45, 615–629.

- Fernandez-Testa, S.; Salcedo, E. Distributed Intelligent Video Surveillance for Early Armed Robbery Detection based on Deep Learning. 2024 37th SIBGRAPI Conference on Graphics, Patterns and Images (SIBGRAPI). IEEE, 2024, pp. 1–6.

- Han, Q.; Ren, X.; Zhao, P.; Wang, Y.; Wang, L.; Zhao, C.; Yang, X. ECCVideo: A Scalable Edge Cloud Collaborative Video Analysis System. IEEE Intelligent Systems 2023, 38, 34–44.

- Chen, J.; Ran, X. Deep Learning With Edge Computing: A Review. Proc. IEEE 2019, 107, 1655–1674.

- Gorospe, J.; Mulero, R.; Arbelaitz, O.; Muguerza, J.; Antón, M.Á. A Generalization Performance Study Using Deep Learning Networks in Embedded Systems. Sensors 2021, 21.

- Iandola, F.N.; Han, S.; Moskewicz, M.W.; Ashraf, K.; Dally, W.J.; Keutzer, K. SqueezeNet: AlexNet-level accuracy with 50x fewer parameters and <0.5MB model size. arXiv preprint arXiv:1602.07360, 2016.

- Fang, W.; Wang, L.; Ren, P. Tinier-YOLO: A Real-Time Object Detection Method for Constrained Environments. IEEE Access 2020, 8, 1935–1944.

- Karnin, E.D. A simple procedure for pruning back-propagation trained neural networks. IEEE Trans. Neural Netw. 1990, 1, 239–242.

- Yu, F.; Cui, L.; Wang, P.; Han, C.; Huang, R.; Huang, X. EasiEdge: A Novel Global Deep Neural Networks Pruning Method for Efficient Edge Computing. IEEE Internet of Things Journal 2021, 8, 1259–1271.

- Woo, Y.; Kim, D.; Jeong, J.; Ko, Y.W.; Lee, J.G. Zero-Keep Filter Pruning for Energy/Power Efficient Deep Neural Networks. Electronics 2021, 10, 1238.

- Zebin, T.; Scully, P.J.; Peek, N.; Casson, A.J.; Ozanyan, K.B. Design and Implementation of a Convolutional Neural Network on an Edge Computing Smartphone for Human Activity Recognition. IEEE Access 2019, 7, 133509–133520.

- Hinton, G.; Vinyals, O.; Dean, J. Distilling the Knowledge in a Neural Network. arXiv preprint arXiv:1503.02531, 2015.

- Watrix. Watrix. WATRIX.ai, 2024. Accessed: Sept. 3, 2024.

- NtechLab. NtechLab. NTECHLAB.com, 2024. Accessed: Sept. 3, 2024.

- Ruiz-Barroso, P.; Castro, F.M.; Delgado-Escaño, R.; Ramos-Cózar, J.; Guil, N. High performance inference of gait recognition models on embedded systems. Sustainable Computing: Informatics and Systems 2022, 36, 100814.

- Conchari, C.; Sahonero-Alvarez, G.; Mollocuaquira, R.; Salazar, E. Distributed Edge Computing for Appearance-Based Gait Recognition. 2024 IEEE ANDESCON. IEEE, 2024, pp. 1–6.

- Zeng, X.; Zhang, X.; Yang, S.; Shi, Z.; Chi, C. Gait-based implicit authentication using edge computing and deep learning for mobile devices. Sensors 2021, 21, 4592.

- Yoshino, K.; Nakashima, K.; Ahn, J.; Iwashita, Y.; Kurazume, R. Gait recognition using identity-aware adversarial data augmentation. 2022 IEEE/SICE International Symposium on System Integration (SII). IEEE, 2022, pp. 596–601.

- Li, Z.; Li, Y.R.; Yu, S. FedGait: A Benchmark for Federated Gait Recognition. 2022 26th International Conference on Pattern Recognition (ICPR). IEEE, 2022, pp. 1371–1377.

- Das, D.; Agarwal, A.; Chattopadhyay, P. Gait recognition from occluded sequences in surveillance sites. European Conference on Computer Vision. Springer, 2022, pp. 703–719.

- Sepas-Moghaddam, A.; Etemad, A. View-invariant gait recognition with attentive recurrent learning of partial representations. IEEE Transactions on Biometrics, Behavior, and Identity Science 2020, 3, 124–137.

- Hadid, A.; Ghahramani, M.; Kellokumpu, V.; Pietikäinen, M.; Bustard, J.; Nixon, M. Can gait biometrics be Spoofed? Proceedings of the 21st International Conference on Pattern Recognition (ICPR2012), 2012, pp. 3280–3283.

- Hadid, A.; Ghahramani, M.; Kellokumpu, V.; Feng, X.; Bustard, J.; Nixon, M. Gait biometrics under spoofing attacks: an experimental investigation. Journal of Electronic Imaging 2015, 24, 63022.

- Jia, M.; Yang, H.; Huang, D.; Wang, Y. Attacking Gait Recognition Systems via Silhouette Guided GANs. Proceedings of the 27th ACM International Conference on Multimedia; Association for Computing Machinery: New York, NY, USA, 2019.

- Hirose, Y.; Nakamura, K.; Nitta, N.; Babaguchi, N. Discrimination between genuine and cloned gait silhouette videos via autoencoder-based training data generation. IEICE Trans. Inf. Syst. 2019, E102D, 2535–2546.

- Maqsood, M.; Ghazanfar, M.A.; Mehmood, I.; Hwang, E.; Rho, S. A Meta-Heuristic Optimization Based Less Imperceptible Adversarial Attack on Gait Based Surveillance Systems. Journal of Signal Processing Systems 2022.

- Mirjalili, S.; Mirjalili, S.M.; Lewis, A. Grey Wolf Optimizer. Advances in Engineering Software 2014, 69, 46–61.

- Hirose, Y.; Nakamura, K.; Nitta, N.; Babaguchi, N. Anonymization of Human Gait in Video Based on Silhouette Deformation and Texture Transfer. IEEE Transactions on Information Forensics and Security 2022, 17, 3375–3390.

- Qin, Y.; Zhang, H.; Qing, L.; Liu, Q.; Jiang, H.; Xu, S.; Liu, Y.; He, X. Machine vision-based gait scan method for identifying cognitive impairment in older adults. Frontiers in Aging Neuroscience 2024, 16, 1341227.

- Chen, B.; Chen, C.; Hu, J.; Sayeed, Z.; Qi, J.; Darwiche, H.F.; Little, B.E.; Lou, S.; Darwish, M.; Foote, C.; others. Computer vision and machine learning-based gait pattern recognition for flat fall prediction. Sensors 2022, 22, 7960.

- Freire-Obregón, D.; Lorenzo-Navarro, J.; Santana, O.J.; Hernández-Sosa, D.; Castrillón-Santana, M. A large-scale re-identification analysis in sporting scenarios: the Betrayal of Reaching a Critical Point. 2023 IEEE International Joint Conference on Biometrics (IJCB). IEEE, 2023, pp. 1–9.

- Chi, W.; Wang, J.; Meng, M.Q.H. A gait recognition method for human following in service robots. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2017, 48, 1429–1440.

- Xu, S.; Fang, J.; Hu, X.; Ngai, E.; Wang, W.; Guo, Y.; Leung, V.C. Emotion recognition from gait analyses: Current research and future directions. IEEE Transactions on Computational Social Systems 2022, 11, 363–377.

| Name & Reference | Year | Subjects | Sequences | Views | Variations | Environment |
|---|---|---|---|---|---|---|
| CASIA-E [14] | 2022 | 1,014 | 778,752 | 26 | Dressing, Carrying, Walking Style, Gender, Age | Outdoor |
| ReSGait [15] | 2021 | 172 | 870 | 1 | Clothing, Carrying, Trajectories, Gender | Indoor |
| GREW [16] | 2021 | 26,345 | 128,671 | 882 | Clothing, Carrying, Occlusion, Viewpoint, Background | Outdoor |
| VersatileGait [17] | 2021 | 11,000 | 1,320,000 | 44 | Age, Gender, Walking Style | Unity3D |
| OU-MVLP * [18] | 2020 | 10,307 | 268,086 | 14 | Viewpoint | Indoor |
| KY4D * [19] | 2014 | 42 | 84 | 16 | Curve | Indoor |
| SOTON * [20] | 2011 | 300 | 5,000 | 12 | Viewpoint | Indoor |
| SAIVT-DGD [21] | 2011 | 35 | 700 | 1 | Speed, Carrying, Shoes | Indoor |
| CASIA-C [22] | 2006 | 153 | 1,530 | 1 | Speed, Walking Surface | Outdoor |
| CASIA-B [23] | 2006 | 124 | 13,640 | 11 | Clothing, Carrying, Walking Surface | Indoor |
| CASIA-A [24] | 2003 | 20 | 240 | 1 | Walking Direction | Outdoor |
| UCSD [25] | 1999 | 6 | 42 | 1 | Clothing, Carrying | Outdoor |

| Aspect | Handcrafted Features | Deep Learning Features |
|---|---|---|
| Feature Design | Manually designed using domain knowledge. | Automatically learned from data. |
| Computational Complexity | Generally lower complexity, faster to compute. | Higher computational complexity, requires more resources. |
| Generalisation Ability | Limited generalisation, often tailored to specific conditions. | Better generalisation, more robust to diverse conditions. |
| Adaptability | Requires redesign for new tasks or environments. | Learns features adaptively from data. |
| Data Requirement | Can work with smaller datasets. | Requires large amounts of labelled data for training. |
| Interpretability | Easier to interpret due to human design. | Harder to interpret, features are learned in black-box fashion. |

| Gait Feature Representation | Year | Pros | Cons | Frequency of Use | Recent Applications |
|---|---|---|---|---|---|
| Graph-based Models | 2021 | Effective for capturing relationships between joints, capable of encoding spatial and temporal dependencies. | Computationally intensive, requires large datasets, can be sensitive to noise in joint detection. | Emerging, increasingly popular | [40,41,42] |
| Pose Estimation | 2016 (Deep Learning-based) | Captures human joint movements with high granularity, robust to appearance changes, clothing, and background noise. | Sensitive to inaccuracies in joint detection, requires high-quality input, limited in occlusion cases. | Increasingly frequent | [7,31,43] |
| Skeleton Maps | 2010s | Simple representation of body joints, efficient for machine learning models, invariant to appearance changes. | Can miss subtle gait dynamics, reliant on accurate joint detection, struggles in occlusions or missing joint data. | Moderately frequent | [16,43] |

| Gait Feature Representation | Year | Pros | Cons | Frequency of Use | Recent Applications |
|---|---|---|---|---|---|
| ff-GEI [52] | 2020 | Captures energy per frame, useful for detailed gait dynamics. | Computationally expensive, large data requirements. | Rare | No recent works found. |
| PEI [53] | 2019 | Highlights periodic gait motion, useful for recognising consistent gait patterns. | Sensitive to changes in walking speed and conditions. | Less frequent | [54] |
| GFI [55] | 2011 | Captures velocity and flow of movement, sensitive to gait dynamics. | Computationally complex, sensitive to noise and illumination changes. | Less frequent | [8,56] |
| CGI [57] | 2010 | Combines motion with temporal encoding, captures both spatial and temporal information. | Computationally complex, sensitive to frame rate and noise. | Less frequent | No recent works found. |
| AEI [58] | 2010 | Captures active energy regions, good for detecting dynamic motion. | Complex to compute, requires high-quality input for effectiveness. | Less frequent | [59] |
| FDEI [60] | 2009 | Highlights regions of change between frames, simple to compute. | Misses subtle motions, highly sensitive to noise. | Moderately frequent | [61] |
| GEnI [51] | 2009 | Encodes gait variability and randomness, useful for capturing subtle dynamics. | Sensitive to noise, more complex to compute. | Less frequent | [62] |
| GHI [48] | 2007 | Captures both spatial and temporal aspects of movement. | More computationally expensive, sensitive to noise. | Less frequent | [49] |
| GEI [47] | 2006 | Robust to clothing and carrying conditions, captures averaged body silhouettes. | Loses fine temporal details, less effective in occlusion scenarios. | Very frequent | [50] |
| MSI [46] | 2005 | Simpler and easier to implement than MHI. | Primarily focuses on shape information without incorporating the temporal dynamics of the gait. | Moderately frequent | No recent works found. |
| MEI [45] | 2001 | Simple and efficient, captures where motion has occurred. | Lacks detailed temporal motion information, sensitive to noise. | Moderately frequent | No recent works found. |
| MHI [45] | 2001 | Captures temporal motion patterns, simple to compute, compact representation. | Sensitive to noise, cannot capture subtle variations in movement. | Less frequent | No recent works found. |

| Model | Frequency of Use in CVGR Systems | Suitability for Edge Computing | Recent Applications for Edge Computing |
|---|---|---|---|
| CNNs | High | Moderate, requires optimisation. | [132,133] |
| Autoencoders | Moderate | High, compact representations. | [134] |
| GANs | Moderate | Low, resource-intensive. | [135] |
| CapsNets | Low | Low, high complexity. | [136] |
| RNNs | High | Moderate, LSTM/GRU optimisations required. | [137] |
| GNNs | Moderate | Low, requires significant optimisation. | [121] |
| GCNs | Moderate | Low, optimisation needed for real-time deployment. | [138] |
| Transformers | Increasing | Low, requires optimisation for edge devices. | [131] |

| Device | Release Year | GPU | CPU | RAM (GB) | Storage (GB) | Performance (TFLOPS) |
|---|---|---|---|---|---|---|
| Qualcomm Snapdragon AI Dev Kit | 2024 | Adreno 740 | Qualcomm 8-core Kryo | 16 | 512 NVMe SSD | 4.6 |
| Jetson Orin Nano | 2023 | 1024-core Ampere + 32 Tensor Cores | 6-core ARM Cortex-A78AE v8.2 | 8 | 16 eMMC / Expandable | 10 |
| Khadas VIM4 | 2022 | ARM Mali-G52 MP8 | Hexa-core Amlogic A311D2 SoC | 8 | 16 eMMC / Expandable | 3.2 |
| Jetson Orin AGX | 2022 | 2048-core Ampere + 64 Tensor Cores | 12-core ARM Cortex-A78AE | 32 | 64 eMMC / Expandable | 40 |
| Jetson Orin NX | 2022 | 1024-core Ampere + 32 Tensor Cores | 6-core ARM Cortex-A78AE | 16 | 128 eMMC / Expandable | 20 |
| Xilinx Kria KV260 | 2021 | No GPU (integrated FPGA) | Quad-core ARM Cortex-A53 | 4 | 16 eMMC | 1.4 |
| Jetson Xavier NX | 2020 | 384-core Volta + 48 Tensor Cores | 6-core ARM Carmel | 8 | 16 eMMC / Expandable | 1.3 |
| Jetson Nano | 2019 | 128-core Maxwell | Quad-core ARM Cortex-A57 | 4 | 16 eMMC / Expandable | 0.5 |
| Khadas VIM3 | 2019 | 3-core ARM Mali-G52 MP2 | Hexa-core Amlogic A311D | 4 | 16 eMMC / Expandable | 0.8 |
| Google Coral Dev Board | 2019 | Integrated GC7000 Lite Graphics | Quad-core ARM Cortex-A53 | 4 | 16 eMMC / Expandable | 0.25 |

| Device Name | Release Year | Type | AI Accelerator Type | Performance (TOPS) | Power (W) |
|---|---|---|---|---|---|
| Gyrfalcon | 2022 | USB Module | MPE | 16 | 2 |
| Intel Edge AI Box | 2021 | Prebuilt Box | Intel Movidius Myriad X VPU | 4 | 20 |
| Hailo-8 | 2021 | USB Module | Hailo-8 Neural Processor | 26 | 2.5 |
| OAK-D | 2020 | Camera | VPU | 1.4 | 5 |
| Coral USB Accelerator | 2019 | USB Module | Edge TPU | 4 | 2 |

| Year & Reference | Sensor | Gait Representation/Preprocessing | End Device | Gait Feature Classification Model | Metrics |
|---|---|---|---|---|---|
| 2024 [159] | OAK-D Camera | GEI & Semantic Segmentation | Jetson Nano | CNN | 29 FPS |
| 2024 [13] | Accelerometer/Gyro | 2D FFT | B. Akida/Arduino | CNN | 0.97 accuracy |
| 2023 [33] | FMCW-MIMO Radar | 4D Point cloud videos | Laptop | Transformer | 0.91 accuracy |
| 2022 [158] | RGB Camera | GEI | Jetson Nano/AGX | 2D/3D CNN | ↓ 4.2 secs per inference |
| 2022 [160] | Inertial sensor | Gait Feature Map | Cellphone | CNN+LSTM | 0.97 accuracy |
| 2021 [133] | OAK-D Camera | MGEI & PCA | Jetson Nano | MobileNetv2, CNN | 0.96 accuracy |
| 2021 [11] | Floor sensors | Spatiotemporal signals | Raspberry Pi | CNN | 0.91 F1-score |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).