Submitted: 04 September 2024

Posted: 09 September 2024

Abstract

Keywords:

MSC: 68T07

1. Introduction

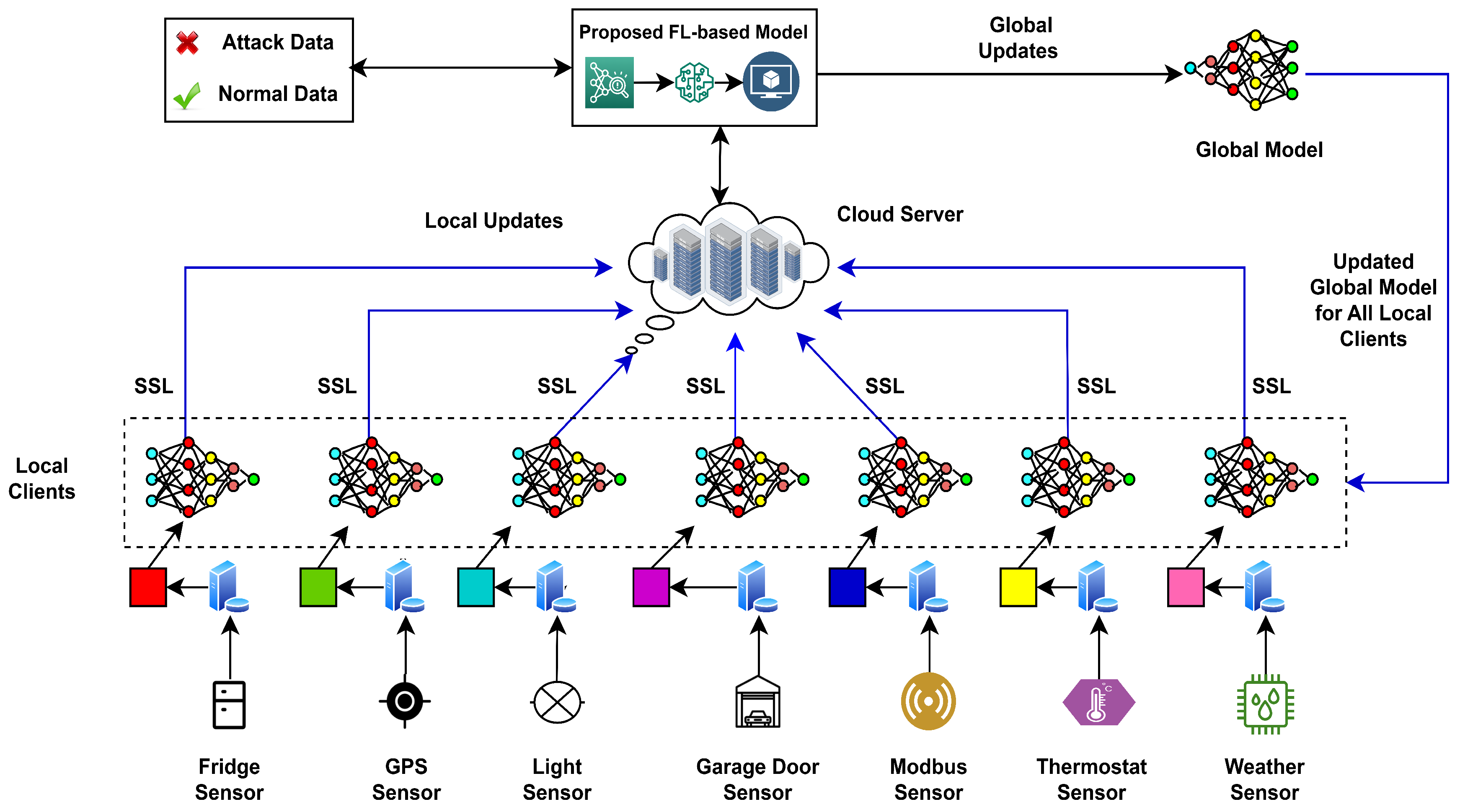

- This work proposes a deep learning architecture based on federated learning for efficient intrusion detection. The model identifies a variety of attacks against heterogeneous IoT networks, including denial of service (DoS), distributed denial of service (DDoS), data injection, man-in-the-middle (MITM), backdoor, password cracking attacks (PCA), scanning, cross-site scripting (XSS), and ransomware attacks.

- The experiments use real-world data from seven different IoT sensors, providing a diverse and realistic training dataset. By analyzing these sensor data streams, we identified optimal features for our supervised learning framework, which speeds up training and improves performance metrics.

- Finally, five deep learning architectures (LeNet, DNN, LSTM, GRU, and FCN) have been employed in the performance evaluation, demonstrating that the proposed model is more sustainable and effective than similar models developed previously.

2. Privacy-Preserving FL

3. Related Work

| Ref. | Detection Category | Accuracy | Dataset | ML/DL Models | FL Implementation | Aggregation Function | Validation Strategy |
|---|---|---|---|---|---|---|---|
| [31] | Intrusion Detection System | >85% | NF-UNSW-NB15-v2 | LSTM, DNN | TensorFlow | FedAvg | Empirical (2 devices), 9 categories |
| [30] | Cyberattack Detection System | >93% | Edge-IIoTset | CNN, LSTM, FSL | - | FedProx | Empirical (4 devices), 7 categories |
| [32] | DDoS Attack Detection | >96% | CIC-DDoS2019 | MLP | TensorFlow | FedAvg, FLAD, FLDDOS | Empirical (13 devices), 13 categories |
| [29] | Intrusion Detection System | >83% | SAT20, TER20 | CNN | OpenStack, TensorFlow | FedSGD | Simulation |
| [22] | Intrusion Detection System | >88% | NSL-KDD | KNN, RF, MLP | - | FedStacking | Empirical (4 devices), 4 categories |
| [21] | Intrusion Detection System | >88% | TON_IoT | Multinomial Logistic Regression | - | FedAvg, Fed+ | Empirical (7 devices), 9 categories |
| Proposed | Intrusion Detection System | >90% | TON_IoT | DNN, LSTM, GRU, FCN, LeNet | Flower, TensorFlow | FedAvg | Empirical (7 devices), 9 categories |

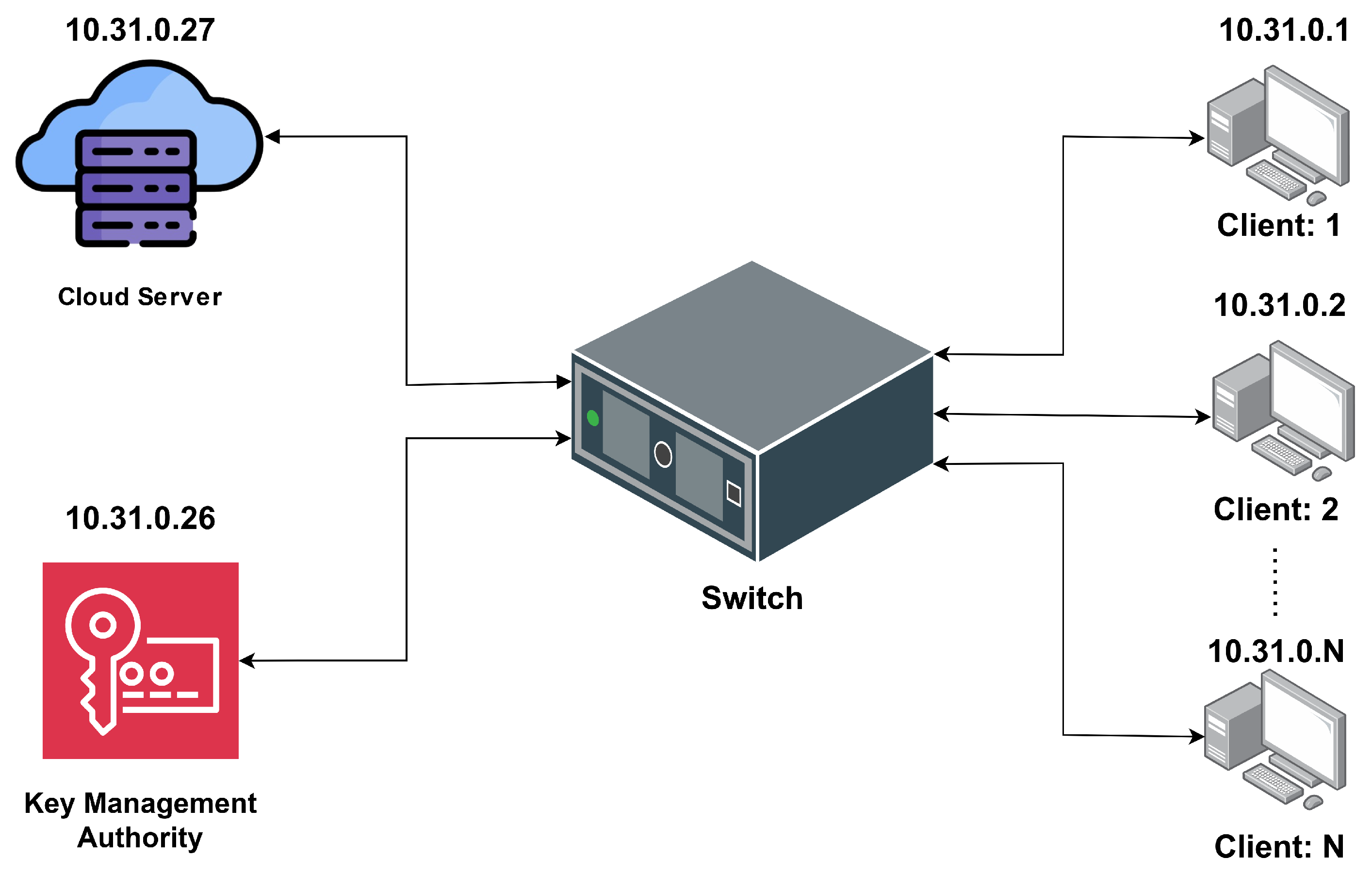

4. Proposed Privacy-Preserving FL-Enabled IDS for IoT/IIoT

4.1. Problem Statement

4.2. Federated Averaging (FedAvg)

4.3. SSL Mechanism

4.4. Workflow of the Proposed Model

4.4.1. Model Initialization

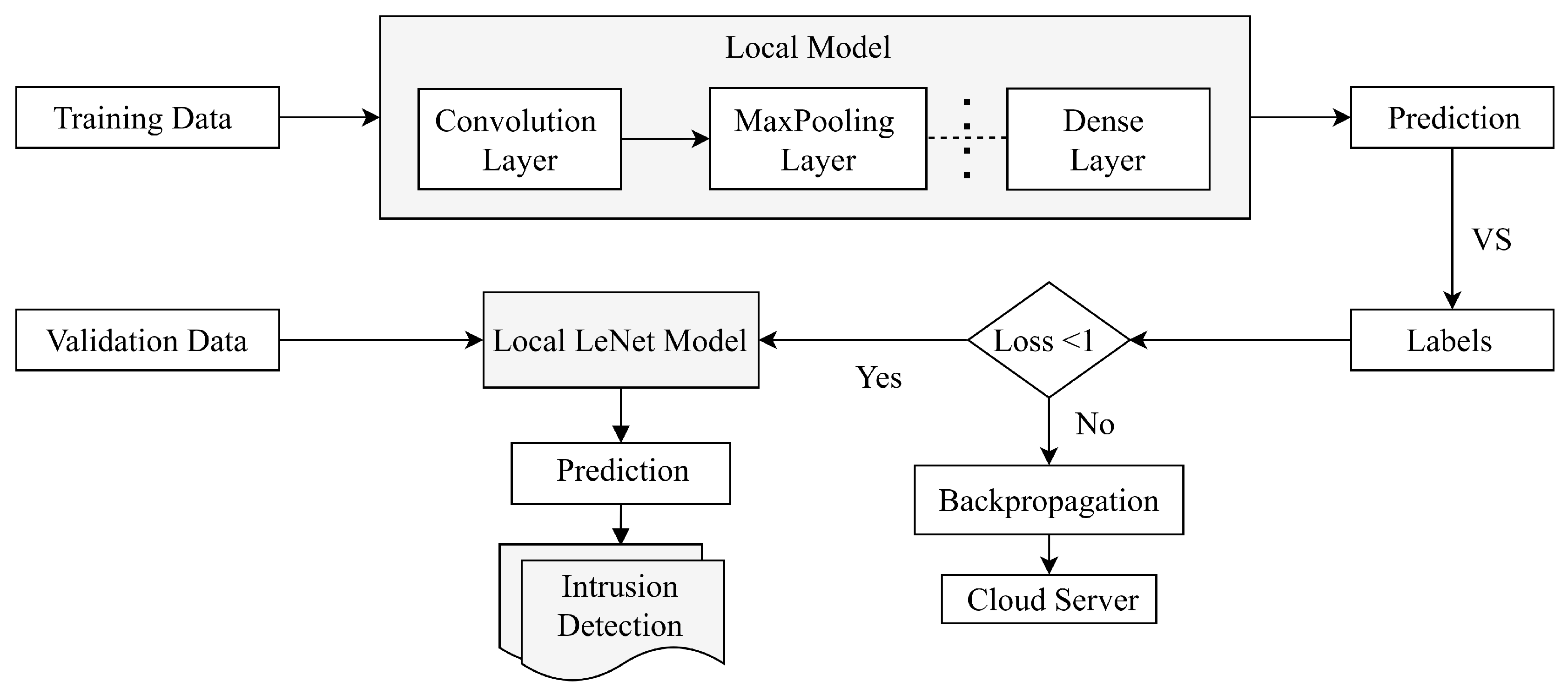

4.4.2. Local Model Training by Sensor Clients

4.4.3. Encryption of Model Parameters by Sensor Clients

4.4.4. Aggregation of Model Parameters by Cloud Server

4.4.5. Local Model Updating by Sensor Clients

4.4.6. Overall Computational Complexity of the Model

4.5. Proposed Architecture

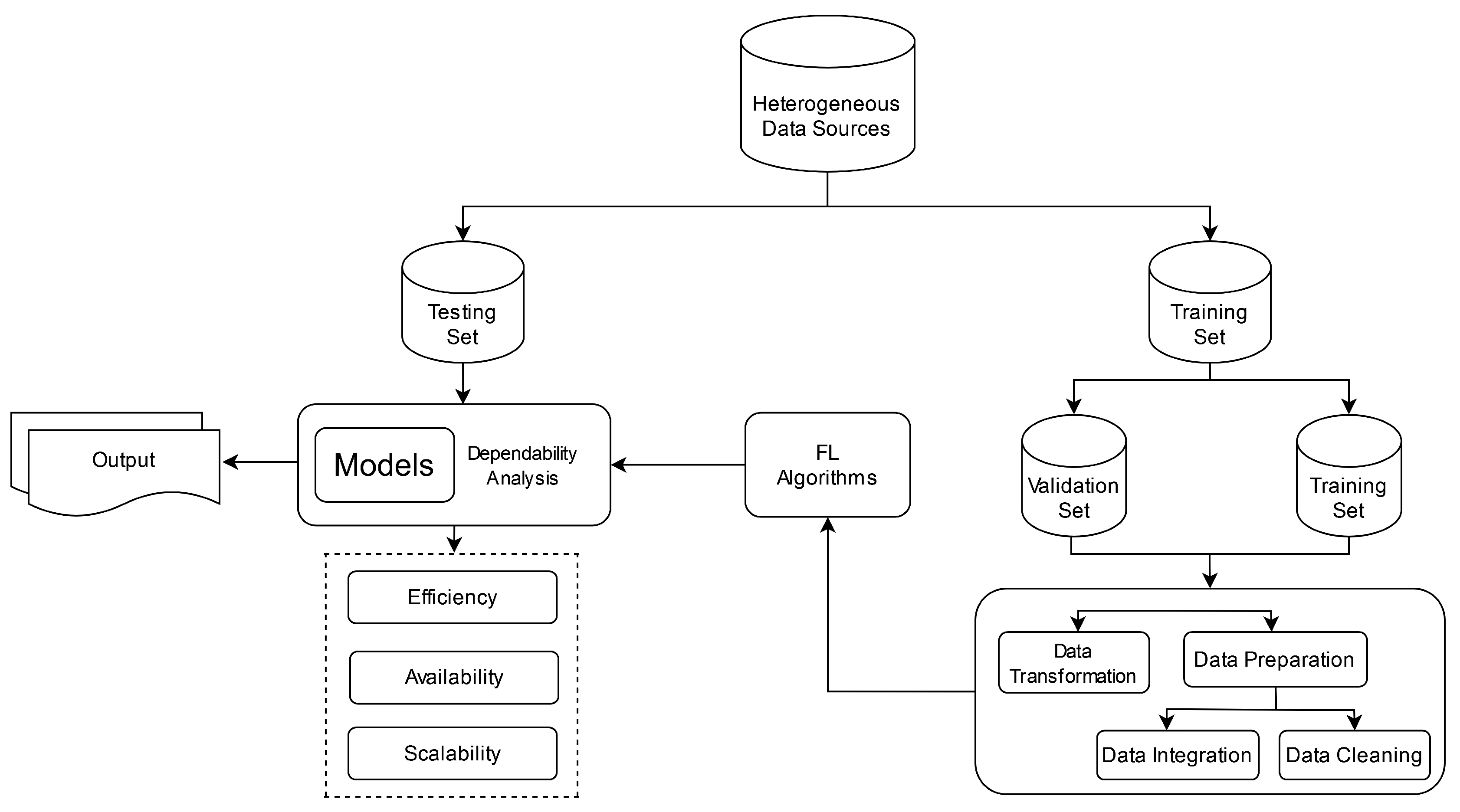

5. Model Implementation and Evaluation

5.1. Environment Setup

5.2. Implementation Procedure

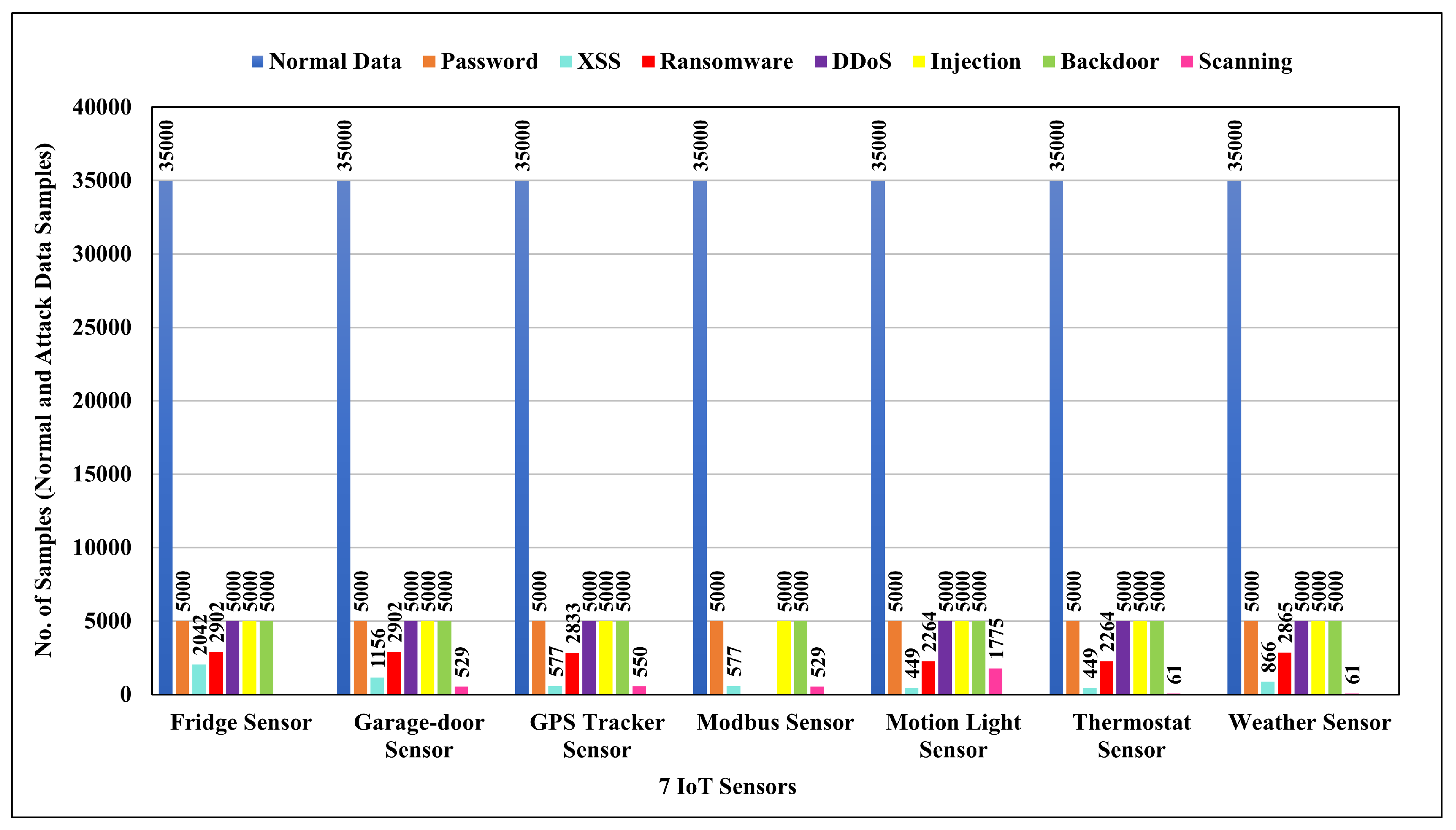

5.3. Data Description

5.4. Data Preparation

5.4.1. Data Cleaning and Preprocessing

- Handling Null Values: To handle null values in the dataset, we apply the predictive mean matching method to impute missing values. This technique predicts each missing value from its relationships with other variables and then matches the prediction to observed values, so that every imputed value is one that actually occurs in the data.

- Handling Noise and Outlier Values: To address noise and outliers in the dataset, Rosner’s test (the generalized extreme Studentized deviate test) is applied.

- Removing White Spaces from Target Class: The ‘strip()’ string method is used to remove leading and trailing whitespace from a string, ensuring that values do not start or end with spaces. For example, the ‘temperature condition’ feature in the fridge sensor dataset, which contains values with trailing spaces such as ‘high ’ and ‘low ’, has been converted into ‘high’ and ‘low’.

- Checking Data Balance: A class distribution summary is used to check whether the data are balanced, and a bar chart of the class counts provides a visual confirmation. Each individual sensor dataset has been confirmed to be balanced.
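The whitespace removal and balance check described above can be sketched in plain Python. This is a minimal illustration rather than the authors' code: the sample rows, the `label` column name, and the helpers `strip_column` and `class_distribution` are assumptions introduced here, and the imputation and outlier steps are not shown.

```python
from collections import Counter

# Hypothetical rows in the style of the fridge sensor data (values are made up).
rows = [
    {"temperature condition": "high ", "label": 0},
    {"temperature condition": "low ", "label": 1},
    {"temperature condition": "high", "label": 1},
    {"temperature condition": "low", "label": 0},
]


def strip_column(rows, column):
    """Return copies of the rows with whitespace stripped from one string column."""
    return [{**row, column: row[column].strip()} for row in rows]


def class_distribution(rows, target):
    """Return the share of each class in the target column."""
    counts = Counter(row[target] for row in rows)
    total = sum(counts.values())
    return {cls: n / total for cls, n in counts.items()}


cleaned = strip_column(rows, "temperature condition")
categories = sorted({row["temperature condition"] for row in cleaned})
balance = class_distribution(cleaned, "label")

print(categories)  # ['high', 'low']
print(balance)     # {0: 0.5, 1: 0.5}
```

After stripping, ‘high ’ and ‘high’ collapse into a single category, and equal class shares indicate a balanced dataset.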

5.4.2. Feature Engineering

- Eliminating Unnecessary Features: This step involves determining which features are relevant to the FL model and removing the rest, thus improving the quality of the feature set. For instance, the ‘time_stamp’, ‘time’, ‘date’, and ‘type’ features in the fridge sensor dataset are dropped to enhance the model’s performance.

- Encoding Categorical Data: Categorical values were converted into numerical format. For instance, the ‘light_status’ feature in the motion light sensor dataset, which has the qualitative states ‘on’ and ‘off’, has been transformed into the respective numeric values ‘1’ and ‘0’. This transformation was achieved using the library, which applies numerical conversion for label encoding [4].

- Replacing Boolean Data with Binary Values: Boolean values were converted into binary format. For instance, the ‘sphone_signal’ feature in the garage door sensor dataset, which takes the values ‘True’ and ‘False’, has been converted into ‘1’ and ‘0’.

- Normalizing Different Range of Values: Normalization ensures that numerical features have consistent scales, which can improve the performance of our FL model. Some models are sensitive to feature magnitude, so features with much greater values than others can dominate training and cause erroneous performance. Min-max data normalization has therefore been used to scale the chosen feature values to the interval [0.0, 1.0] without affecting the properties of the data [44], as indicated by the following equation:

  $$X' = \frac{X - X_{\min}}{X_{\max} - X_{\min}}$$

  where $X$ is the feature’s initial value, $X'$ is its normalized value, and $X_{\max}$ and $X_{\min}$ are its maximum and minimum values, respectively.

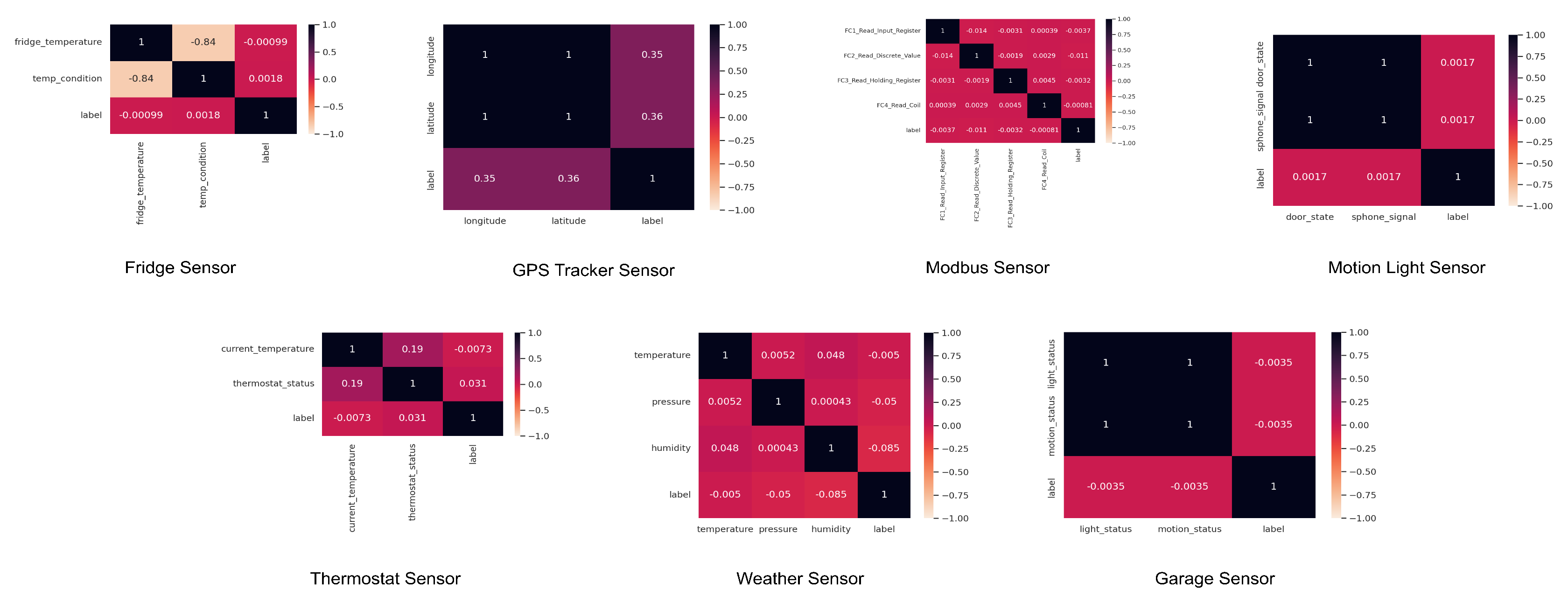

- Correlation Checking: Correlation analysis is a statistical method for measuring the strength of the linear relationship between two variables. It quantifies the degree to which a change in one variable is associated with a change in another.
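The feature engineering steps listed above can be sketched as follows. This is a hedged illustration using only the Python standard library, not the authors' pipeline: the sample records, the `reading` column, and the helper names are assumptions, and features from different sensors are placed in one record only to keep the example short. The `label_encode` helper assigns integers in sorted category order, which happens to reproduce the ‘off’ to 0 and ‘on’ to 1 mapping described in the text.

```python
import math

# Hypothetical records: column names follow the text, values are made up.
records = [
    {"time": "10:00:01", "light_status": "on", "sphone_signal": True, "reading": 2.0},
    {"time": "10:00:02", "light_status": "off", "sphone_signal": False, "reading": 4.0},
    {"time": "10:00:03", "light_status": "on", "sphone_signal": True, "reading": 10.0},
]


def drop_features(rows, names):
    """Remove the named features from every row."""
    return [{k: v for k, v in row.items() if k not in names} for row in rows]


def label_encode(values):
    """Map each category to an integer, assigned in sorted category order."""
    mapping = {cat: idx for idx, cat in enumerate(sorted(set(values)))}
    return [mapping[v] for v in values]


def min_max(values):
    """Scale values to [0.0, 1.0]; a constant column maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def pearson(xs, ys):
    """Pearson correlation coefficient; both inputs must be non-constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


rows = drop_features(records, {"time"})
light = label_encode([r["light_status"] for r in rows])
signal = [int(r["sphone_signal"]) for r in rows]  # True -> 1, False -> 0
reading = min_max([r["reading"] for r in rows])
r_light_signal = pearson(light, signal)

print(light)    # [1, 0, 1]
print(signal)   # [1, 0, 1]
print(reading)  # [0.0, 0.25, 1.0]
```

In this toy data the two binary columns are identical, so their correlation coefficient is 1 up to floating-point error; a pair this strongly correlated would be a candidate for keeping only one of the two features.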

5.5. Training Process

5.5.1. Model Selection and Initialization

5.5.2. Training Rounds

6. Result Analysis

6.1. Model Performance Analysis

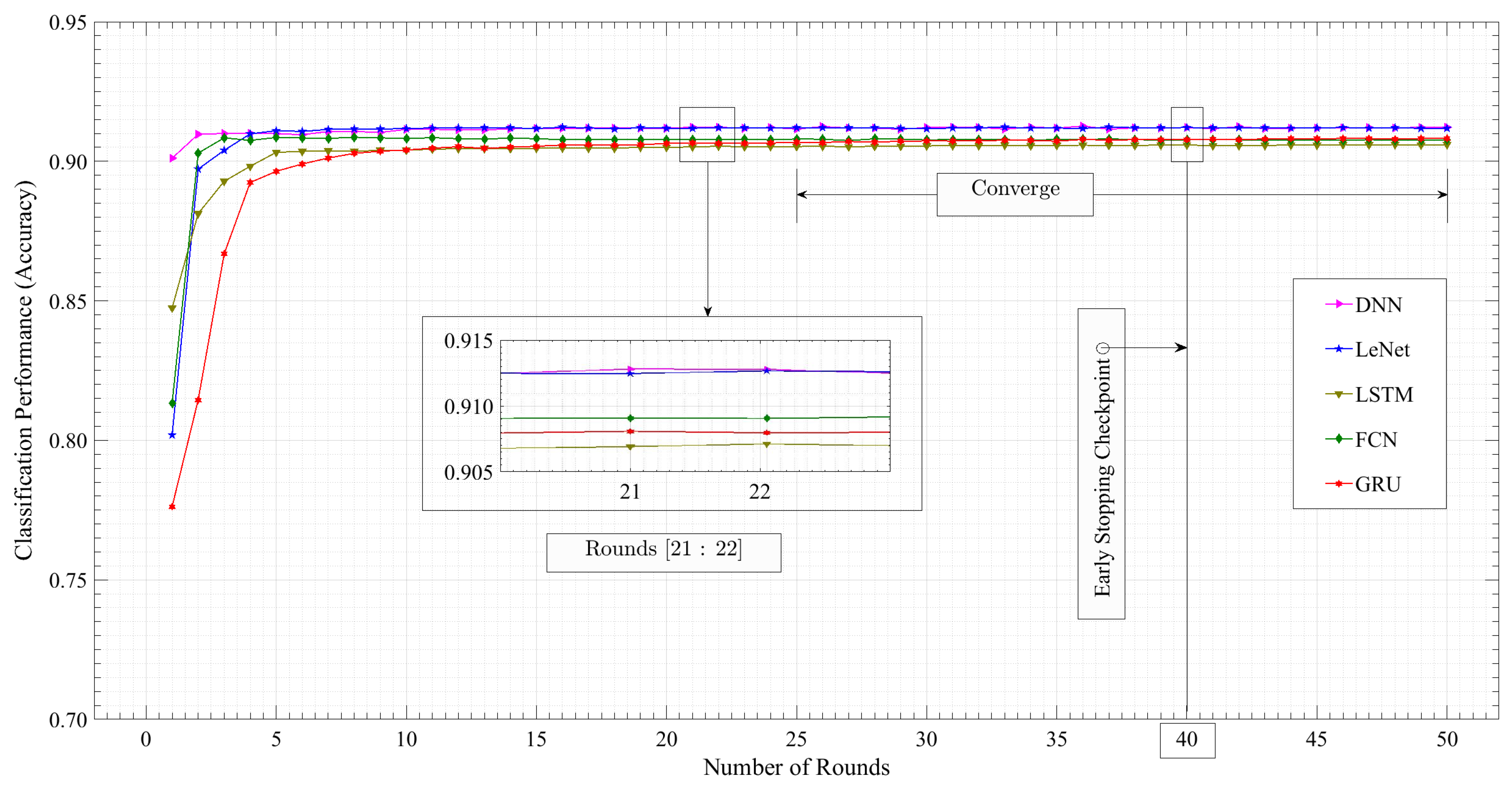

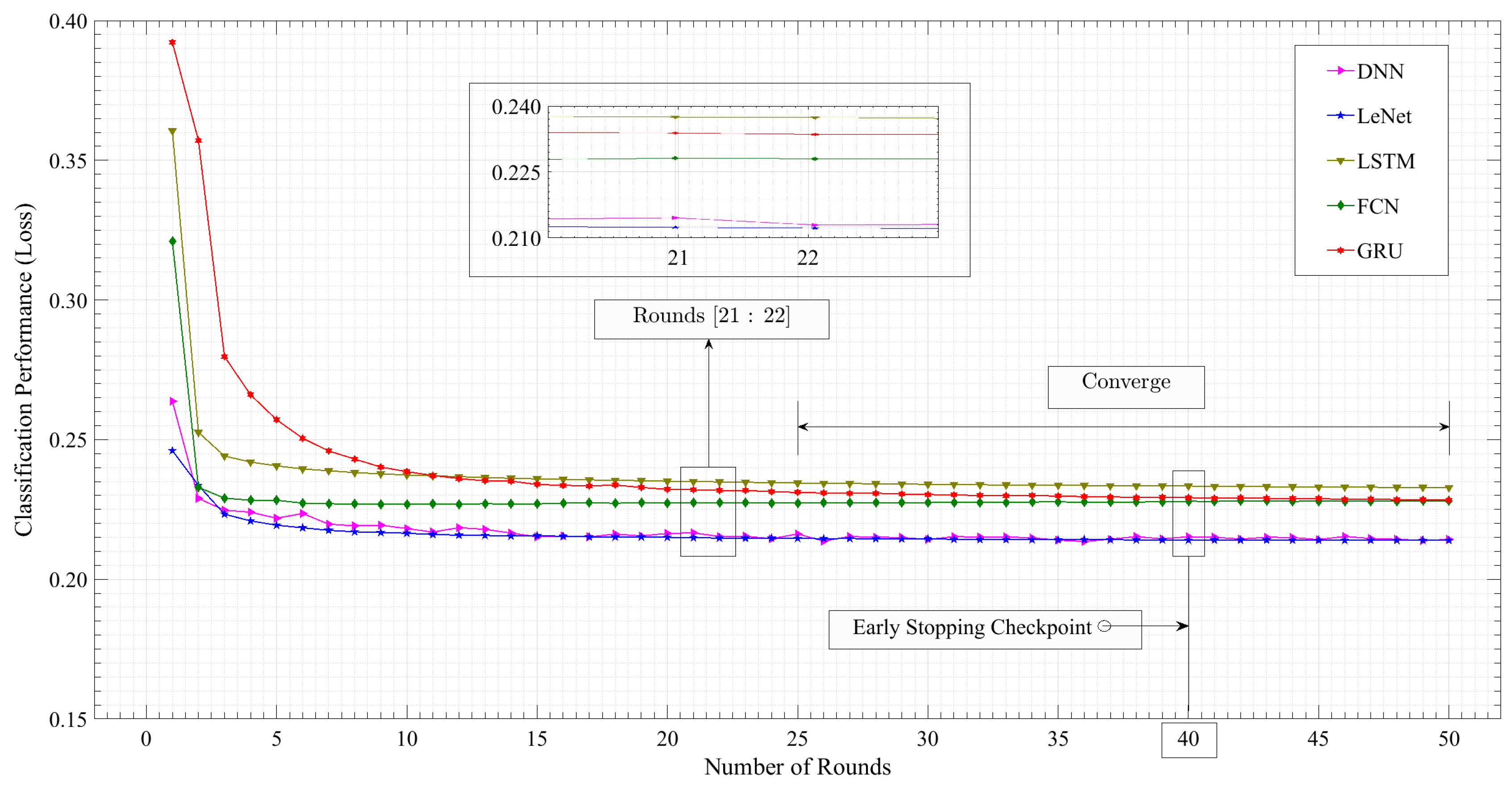

6.2. Server Accuracy and Loss Trajectory

7. Conclusion

Author Contributions

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ANN | Artificial Neural Network |
| CDL | Centralized Deep Learning |
| CNN | Convolutional Neural Network |
| CPU | Central Processing Unit |
| CPS | Cyber-Physical Systems |
| DDL | Distributed Deep Learning |
| DDoS | Distributed Denial of Service |
| DL | Deep Learning |
| DNN | Deep Neural Network |
| DoS | Denial of Service |
| FCL | Fully Connected Layer |
| FCN | Fully Convolutional Network |
| FedAGRU | Federated Learning-based Attention Gated Recurrent Unit |
| FedAvg | Federated Averaging |
| FDL | Federated Deep Learning |
| FL | Federated Learning |
| FSL | Federated Sequence Learning |
| GRU | Gated Recurrent Unit |
| GPU | Graphics Processing Unit |
| ICS | Industrial Control Systems |
| IDS | Intrusion Detection System |
| IIoT | Industrial Internet of Things |
| IoT | Internet of Things |
| JC | Jungle Computing |
| KMA | Key Management Authority |
| LDL | Localized Deep Learning |
| LeNet | LeCun Network |
| LSTM | Long Short-Term Memory |
| ML | Machine Learning |
| MITM | Man-in-the-Middle |
| NIDS | Network Intrusion Detection System |
| PCA | Password Cracking Attack |
| PHEC | Probabilistic Hybrid Ensemble Classification |
| ReLU | Rectified Linear Unit |
| SSL | Secure Sockets Layer |
| STIN | Satellite Terrestrial Integrated Networks |
| SVDD | Support Vector Data Description |
| VAE | Variational Autoencoder |
| XAI | Explainable Artificial Intelligence |
| XSS | Cross-Site Scripting |

References

1. Mehedi, S.; Anwar, A.; Rahman, Z.; Ahmed, K.; Islam, R. Dependable Intrusion Detection System for IoT: A Deep Transfer Learning-Based Approach. IEEE Trans. Ind. Inf. 19, 1006–1017.
2. Alsaedi, A.; Moustafa, N.; Tari, Z.; Mahmood, A.; Anwar, A. TON_IoT Telemetry Dataset: A New Generation Dataset of IoT and IIoT for Data-Driven Intrusion Detection Systems. IEEE Access 8, 165130–165150.
3. Konečný, J.; McMahan, H.; Yu, F.; Richtárik, P.; Suresh, A.; Bacon, D.L. Federated Learning: Strategies for Improving Communication Efficiency.
4. Géron, A. Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow, 3rd ed.; O’Reilly Media, Inc.: United States of America, 2022.
5. Lim, W.; Luong, N.; Hoang, D.; Jiao, Y.; Liang, Y.C.; Yang, Q.; Niyato, D.; Miao, C. Federated Learning in Mobile Edge Networks: A Comprehensive Survey. IEEE Commun. Surv. Tutorials 22, 2031–2063.
6. Zhao, Y.; Li, M.; Lai, L.; Suda, N.; Civin, D.; Chandra, V. Federated Learning with Non-IID Data.
7. Bhuiyan, M.; Kuo, S.; Lyons, D.; Shao, Z. Dependability in Cyber-Physical Systems and Applications. ACM Trans. Cyber-Phys. Syst. 3, 1–4.
8. Aledhari, M.; Razzak, R.; Parizi, R.; Saeed, F. Federated Learning: A Survey on Enabling Technologies, Protocols, and Applications. IEEE Access 8, 140699–140725.
9. Li, T.; Sahu, A.; Talwalkar, A.; Smith, V. Federated Learning: Challenges, Methods, and Future Directions. IEEE Signal Process. Mag. 37, 50–60.
10. Xu, J.; Glicksberg, B.; Su, C.; Walker, P.; Bian, J.; Wang, F. Federated Learning for Healthcare Informatics. J. Healthc. Inform. Res. 5, 1–19.
11. Wu, Q.; He, K.; Chen, X. Personalized Federated Learning for Intelligent IoT Applications: A Cloud-Edge Based Framework. IEEE Open J. Comput. Soc. 1, 35–44.
12. Li, B.; Wu, Y.; Song, J.; Lu, R.; Li, T.; Zhao, L. Federated Deep Learning for Intrusion Detection in Industrial Cyber–Physical Systems. IEEE Trans. Ind. Inf. 17, 5615–5624.
13. Yuan, B.; Ge, S.; Xing, W. A Federated Learning Framework for Healthcare IoT Devices.
14. Billah, M.; Mehedi, S.; Anwar, A.; Rahman, Z.; Islam, R. A Systematic Literature Review on Blockchain Enabled Federated Learning Framework for Internet of Vehicles.
15. Lo, S.; Lu, Q.; Wang, C.; Paik, H.Y.; Zhu, L. A Systematic Literature Review on Federated Machine Learning: From a Software Engineering Perspective. ACM Comput. Surv. 54, 1–39.
16. Bonawitz, K.; Eichner, H.; Grieskamp, W.; Huba, D.; Ingerman, A.; Ivanov, V.; Kiddon, C.; Konečný, J.; Mazzocchi, S.; McMahan, H. Towards Federated Learning at Scale: System Design. 2019.
17. Li, Z.; Sharma, V.; Mohanty, S.P. Preserving Data Privacy via Federated Learning: Challenges and Solutions. IEEE Consumer Electron. Mag. 9, 8–16.
18. Neranjan Thilakarathne, N.; Muneeswari, G.; Parthasarathy, V.; Alassery, F.; Hamam, H.; Kumar Mahendran, R.; Shafiq, M. Federated Learning for Privacy-Preserved Medical Internet of Things. Intelligent Automation & Soft Computing 33, 157–172.
19. Khan, L.; Saad, W.; Han, Z.; Hossain, E.; Hong, C. Federated Learning for Internet of Things: Recent Advances, Taxonomy, and Open Challenges.
20. Fang, H.; Qian, Q. Privacy Preserving Machine Learning with Homomorphic Encryption and Federated Learning. Future Internet 13, 94.
21. Ruzafa-Alcazar, P.; Fernandez-Saura, P.; Marmol-Campos, E.; Gonzalez-Vidal, A.; Hernandez-Ramos, J.; Bernal-Bernabe, J.; Skarmeta, A. Intrusion Detection Based on Privacy-Preserving Federated Learning for the Industrial IoT. IEEE Trans. Ind. Inf. 19, 1145–1154.
22. Chatterjee, S.; Hanawal, M. Federated Learning for Intrusion Detection in IoT Security: A Hybrid Ensemble Approach. IJITCA 2, 62.
23. Lazzarini, R.; Tianfield, H.; Charissis, V. Federated Learning for IoT Intrusion Detection. AI 4, 509–530.
24. Zhang, T.; He, C.; Ma, T.; Gao, L.; Ma, M.; Avestimehr, S. Federated Learning for Internet of Things. In Proceedings of the 19th ACM Conference on Embedded Networked Sensor Systems; ACM: Coimbra, Portugal, 2021; pp. 413–419.
25. Chen, Z.; Lv, N.; Liu, P.; Fang, Y.; Chen, K.; Pan, W. Intrusion Detection for Wireless Edge Networks Based on Federated Learning. IEEE Access 8, 217463–217472.
26. Aashmi, R.S.; Jaya, T. Intrusion Detection Using Federated Learning for Computing. Computer Systems Science and Engineering 45, 1295–1308.
27. Huong, T.; Bac, T.; Ha, K.; Hoang, N.; Hoang, N.; Hung, N.; Tran, K. Federated Learning-Based Explainable Anomaly Detection for Industrial Control Systems. IEEE Access 10, 53854–53872.
28. Popoola, S.; Ande, R.; Adebisi, B.; Gui, G.; Hammoudeh, M.; Jogunola, O. Federated Deep Learning for Zero-Day Botnet Attack Detection in IoT-Edge Devices. IEEE Internet Things J. 9, 3930–3944.
29. Li, K.; Zhou, H.; Tu, Z.; Wang, W.; Zhang, H. Distributed Network Intrusion Detection System in Satellite-Terrestrial Integrated Networks Using Federated Learning. IEEE Access 8, 214852–214865.
30. Li, F.; Lin, J.; Han, H. FSL: Federated Sequential Learning-Based Cyberattack Detection for Industrial Internet of Things. Industrial Artificial Intelligence 1, 4.
31. Sarhan, M.; Layeghy, S.; Moustafa, N.; Portmann, M. Cyber Threat Intelligence Sharing Scheme Based on Federated Learning for Network Intrusion Detection. J. Netw. Syst. Manage. 31, 3.
32. Doriguzzi-Corin, R.; Siracusa, D. FLAD: Adaptive Federated Learning for DDoS Attack Detection. Computers & Security 137, 103597.
33. Jurcut, A.; Niculcea, T.; Ranaweera, P.; Le-Khac, N.A. Security Considerations for Internet of Things: A Survey. SN Comput. Sci. 1, 193.
34. Sisinni, E.; Saifullah, A.; Han, S.; Jennehag, U.; Gidlund, M. Industrial Internet of Things: Challenges, Opportunities, and Directions. IEEE Trans. Ind. Inf. 14, 4724–4734.
35. Xu, L.; He, W.; Li, S. Internet of Things in Industries: A Survey. IEEE Trans. Ind. Inf. 10, 2233–2243.
36. Moshawrab, M.; Adda, M.; Bouzouane, A.; Ibrahim, H.; Raad, A. Reviewing Federated Learning Aggregation Algorithms; Strategies, Contributions, Limitations and Future Perspectives. Electronics 12, 2287.
37. Zhang, C.; Chen, Y.; Meng, Y.; Ruan, F.; Chen, R.; Li, Y.; Yang, Y. A Novel Framework Design of Network Intrusion Detection Based on Machine Learning Techniques. Security and Communication Networks 1–15.
38. Dastres, R.; Soori, M. Secure Socket Layer (SSL) in the Network and Web Security. International Journal of Computer and Information Engineering 14, 330–333, in press.
39. Singh, A.; Loar, R. Web Security and Enhancement Using SSL: A Review. International Journal of Scientific Research in Science and Technology 4.
40. Das, M.; Samdaria, N. On the Security of SSL/TLS-Enabled Applications. Applied Computing and Informatics 10, 68–81.
41. Roy, P.; Singh, J.; Banerjee, S. Deep Learning to Filter SMS Spam. Future Generation Computer Systems 102, 524–533.
42. Nisioti, A.; Mylonas, A.; Yoo, P.; Katos, V. From Intrusion Detection to Attacker Attribution: A Comprehensive Survey of Unsupervised Methods. IEEE Commun. Surv. Tutorials 20, 3369–3388.
43. Tampuu, A.; Bzhalava, Z.; Dillner, J.; Vicente, R. ViraMiner: Deep Learning on Raw DNA Sequences for Identifying Viral Genomes in Human Samples.
44. Li, X.; Hu, Z.; Xu, M.; Wang, Y.; Ma, J. Transfer Learning Based Intrusion Detection Scheme for Internet of Vehicles. Information Sciences 547, 119–135.
45. Guyon, I. A Scaling Law for the Validation-Set Training-Set Size Ratio. AT & T Bell Laboratories 1.

| Hyper-parameters | Value/Function |
|---|---|
| Number of Hidden Layers | 3 |
| Units in Hidden Layers | 32, 64, 256 |
| Batch Size | 512 |
| Epochs | 5 |
| Hidden Layer Activation Function | ReLU |
| Output Layer Activation Function | Softmax |
| Dropout | N/A |
| Optimizer | Adam |
| Learning Rate | 0.01 |
| Decay | 0.005 |
| Loss Function | Categorical Cross-entropy |

| Parameters | LeNet | DNN | LSTM | GRU | FCN |
|---|---|---|---|---|---|
| Number of Hidden Layers | 3 | 3 | 4 | 2 | 3 |
| Units in Hidden Layers | 32, 64, 256 | 128, 64, 64 | 128, 256, 512, 256 | 32, 64 | 128, 256, 128 |
| Batch Size | 512 | 512 | 512 | 512 | 512 |
| Epochs | 5 | 5 | 5 | 5 | 5 |
| Hidden Layer Activation Function | ReLU | ReLU | PReLU | ReLU | ReLU |
| Output Layer Activation Function | Softmax | Softmax | Softmax | Softmax | Softmax |
| Dropout | N/A | 3 | 3 | 1 | N/A |
| Optimizer | Adam | Adam | Adam | Adam | Adam |
| Loss Function | Categorical Cross-entropy | Categorical Cross-entropy | Categorical Cross-entropy | Categorical Cross-entropy | Categorical Cross-entropy |

| Algorithm | Round No. | Client | Accuracy | Precision | Recall | F1-Score | Loss |
|---|---|---|---|---|---|---|---|
| LeNet | 50 | C=1 | 0.9168 | 0.8775 | 0.8776 | 0.8775 | 0.2032 |
| LeNet | 50 | C=2 | 0.9167 | 0.8774 | 0.8775 | 0.8774 | 0.2031 |
| LeNet | 50 | C=3 | 0.9166 | 0.8775 | 0.8777 | 0.8775 | 0.2032 |
| LeNet | 50 | C=4 | 0.9166 | 0.8777 | 0.8779 | 0.8778 | 0.2031 |
| LeNet | 50 | C=5 | 0.9165 | 0.8774 | 0.8776 | 0.8775 | 0.2031 |
| LeNet | 50 | C=6 | 0.9166 | 0.8775 | 0.8777 | 0.8776 | 0.2031 |
| LeNet | 50 | C=7 | 0.9167 | 0.8776 | 0.8778 | 0.8777 | 0.2032 |
| DNN | 50 | C=1 | 0.9130 | 0.8704 | 0.8706 | 0.8705 | 0.2158 |
| DNN | 50 | C=2 | 0.9128 | 0.8706 | 0.8708 | 0.8707 | 0.2157 |
| DNN | 50 | C=3 | 0.9127 | 0.8709 | 0.8710 | 0.8709 | 0.2157 |
| DNN | 50 | C=4 | 0.9131 | 0.8709 | 0.8711 | 0.8710 | 0.2152 |
| DNN | 50 | C=5 | 0.9126 | 0.8707 | 0.8708 | 0.8708 | 0.2153 |
| DNN | 50 | C=6 | 0.9129 | 0.8709 | 0.8711 | 0.8710 | 0.2151 |
| DNN | 50 | C=7 | 0.9127 | 0.8709 | 0.8711 | 0.8710 | 0.2157 |
| LSTM | 50 | C=1 | 0.9061 | 0.8584 | 0.8586 | 0.8585 | 0.2380 |
| LSTM | 50 | C=2 | 0.9061 | 0.8587 | 0.8589 | 0.8588 | 0.2376 |
| LSTM | 50 | C=3 | 0.9062 | 0.8588 | 0.8589 | 0.8589 | 0.2386 |
| LSTM | 50 | C=4 | 0.9061 | 0.8591 | 0.8592 | 0.8591 | 0.2379 |
| LSTM | 50 | C=5 | 0.9061 | 0.8586 | 0.8589 | 0.8587 | 0.2379 |
| LSTM | 50 | C=6 | 0.9061 | 0.8585 | 0.8586 | 0.8586 | 0.2381 |
| LSTM | 50 | C=7 | 0.9062 | 0.8586 | 0.8589 | 0.8587 | 0.2380 |
| GRU | 50 | C=1 | 0.9069 | 0.8619 | 0.8620 | 0.8620 | 0.2329 |
| GRU | 50 | C=2 | 0.9073 | 0.8618 | 0.8620 | 0.8619 | 0.2328 |
| GRU | 50 | C=3 | 0.9072 | 0.8618 | 0.8620 | 0.8619 | 0.2329 |
| GRU | 50 | C=4 | 0.9071 | 0.8617 | 0.8619 | 0.8618 | 0.2326 |
| GRU | 50 | C=5 | 0.9070 | 0.8616 | 0.8618 | 0.8617 | 0.2329 |
| GRU | 50 | C=6 | 0.9071 | 0.8613 | 0.8615 | 0.8614 | 0.2327 |
| GRU | 50 | C=7 | 0.9070 | 0.8615 | 0.8616 | 0.8616 | 0.2327 |
| FCN | 50 | C=1 | 0.9154 | 0.8741 | 0.8747 | 0.8744 | 0.2093 |
| FCN | 50 | C=2 | 0.9150 | 0.8741 | 0.8747 | 0.8744 | 0.2093 |
| FCN | 50 | C=3 | 0.9147 | 0.8742 | 0.8744 | 0.8741 | 0.2093 |
| FCN | 50 | C=4 | 0.9151 | 0.8740 | 0.8746 | 0.8743 | 0.2092 |
| FCN | 50 | C=5 | 0.9148 | 0.8743 | 0.8749 | 0.8746 | 0.2093 |
| FCN | 50 | C=6 | 0.9147 | 0.8742 | 0.8747 | 0.8745 | 0.2093 |
| FCN | 50 | C=7 | 0.9153 | 0.8743 | 0.8749 | 0.8746 | 0.2092 |

| Algorithm | Accuracy | Precision | Recall | F1-Score | Loss |
|---|---|---|---|---|---|
| LeNet | 0.9168 | 0.8777 | 0.8779 | 0.8778 | 0.2031 |
| DNN | 0.9131 | 0.8709 | 0.8711 | 0.8710 | 0.2151 |
| LSTM | 0.9062 | 0.8591 | 0.8592 | 0.8591 | 0.2376 |
| GRU | 0.9073 | 0.8619 | 0.8620 | 0.8620 | 0.2326 |
| FCN | 0.9153 | 0.8743 | 0.8749 | 0.8746 | 0.2092 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).