Submitted:

29 August 2024

Posted:

30 August 2024

Abstract

Keywords:

1. Introduction

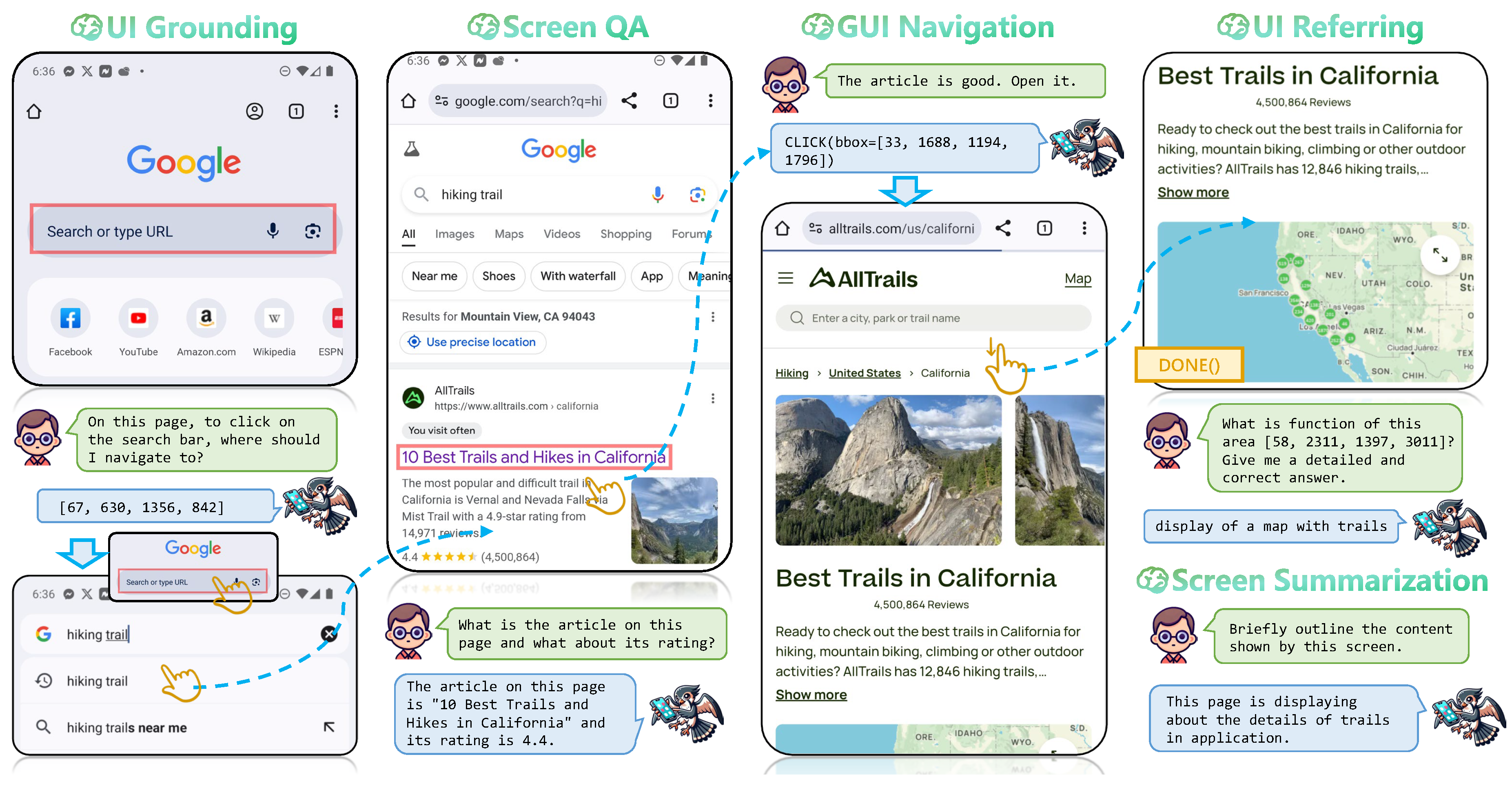

- We introduce UI-Hawk, a unified GUI agent that combines a history-aware visual encoder and an efficient resampler with an LLM to effectively process streams of screens.

- We identify four fundamental tasks for screen stream understanding and validate the effectiveness of the proposed tasks for episodic GUI navigation.

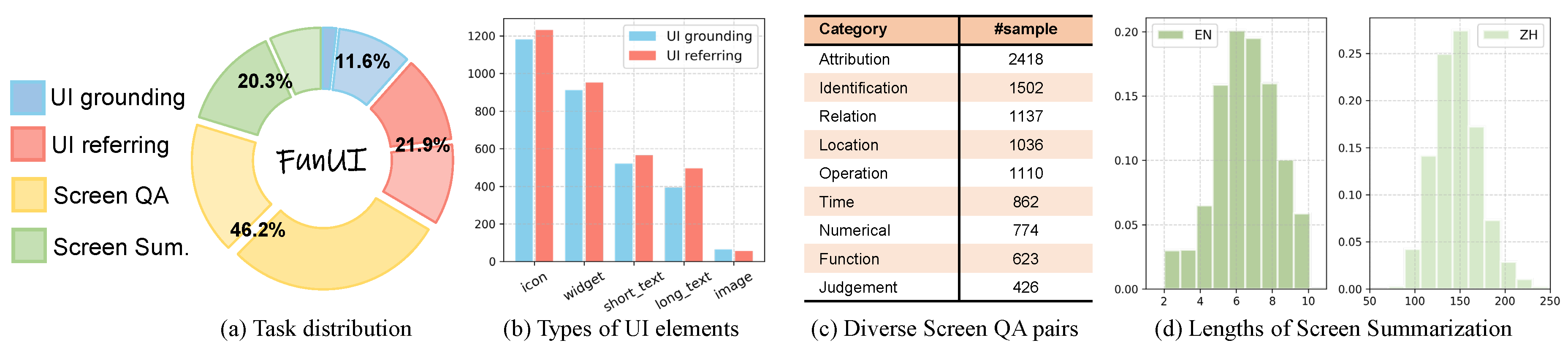

- We construct FunUI, a comprehensive bilingual screen understanding benchmark encompassing 32k samples with over 120 types of UI elements.

- Experimental results demonstrate that the screen stream understanding capability is key to improving GUI navigation performance.

2. Related Works

3. Methodology

3.1. Model Architecture

3.2. Model Training

4. Dataset and Task Formulation

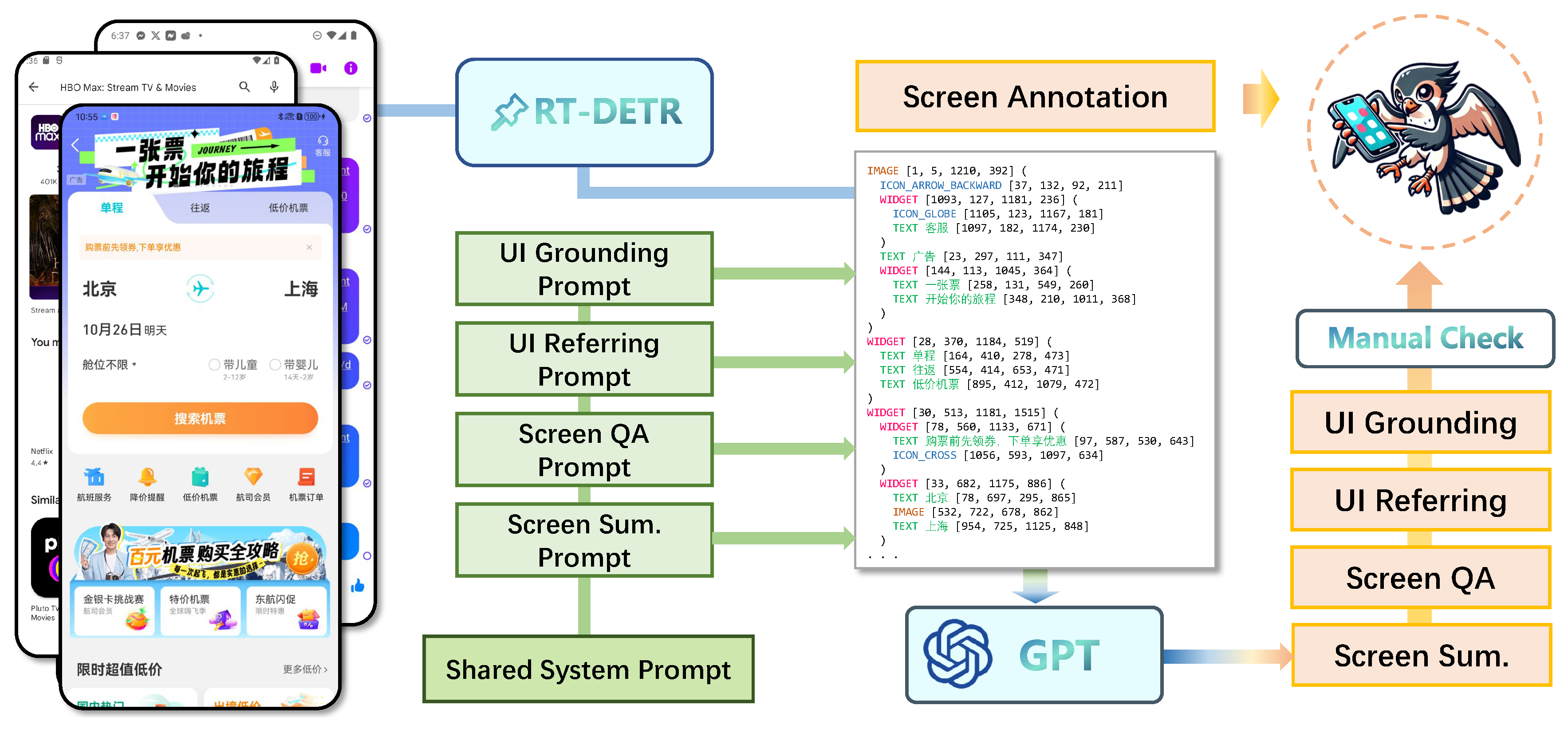

4.1. Data Collection

Mobile Screens

Screen Annotations

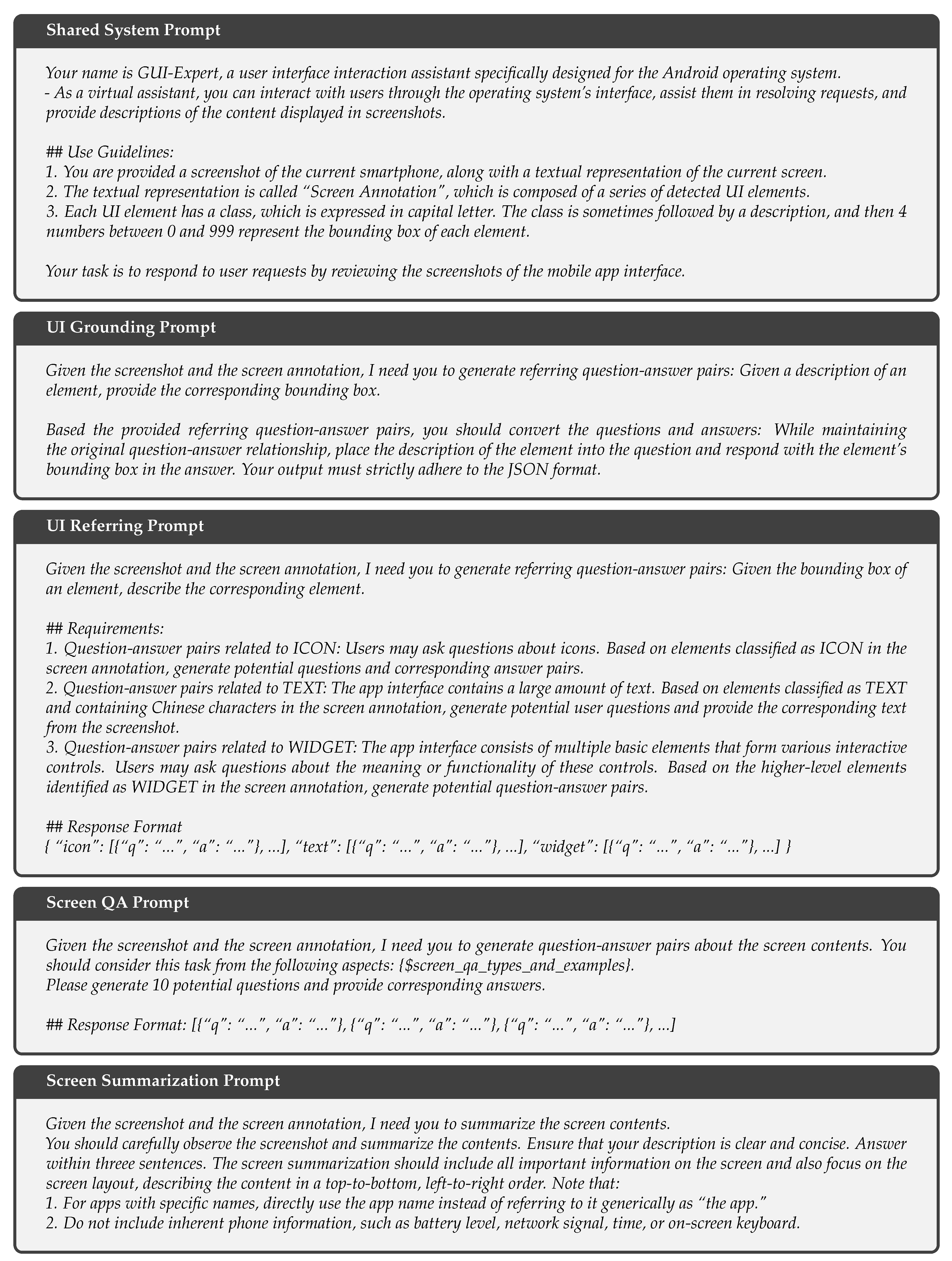

4.2. Fundamental Tasks

UI Referring

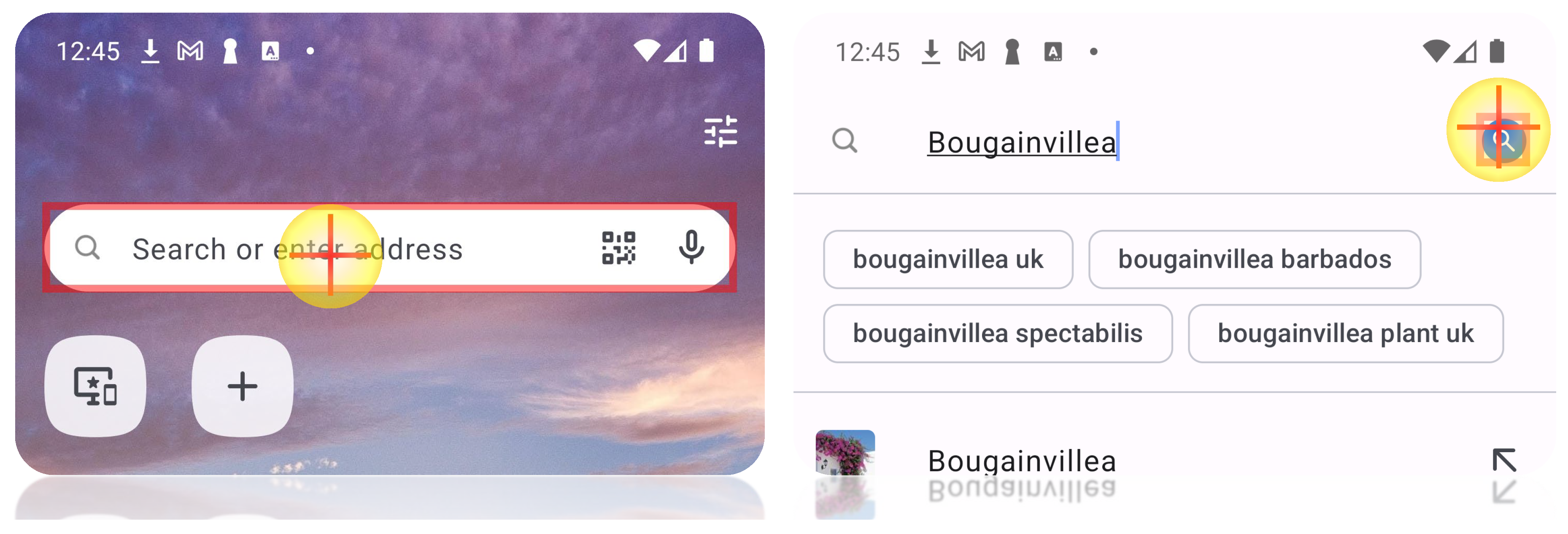

UI Grounding

Screen Question Answering

Screen Summarization

4.3. GUI Navigation Tasks

GUI-Odyssey+

GUI-Zouwu

5. FunUI Benchmark

6. Experiments

6.1. Experimental Setup

6.1.1. Baselines

6.1.2. Evaluation Metrics

6.2. Main Results

Screen Understanding

6.3. Ablation Studies

The Impact of Fundamental Screen Understanding

The Impact of Screen Streaming

7. Conclusion

Appendix A. Data Collection

Appendix A.1. Screen Annotation

Screen Filtering

Screen Annotation

Appendix A.2. Fundamental Tasks

- Identification Questions: These ask what something is, such as “What is the doctor’s name?” when the screen shows a doctor’s information without stating explicitly that the person is a doctor.

- Attribution Questions: These ask about attributes associated with screen elements, such as “What is the rating of the xxx?” and “Who is the author of the book yyy?”

- Relationship Questions: These compare two or more screen elements, such as “Which one has a higher price, A or B?” or “Which shopping market is located farthest away?”

- Localization Questions: These give a detailed description of a specific screen element and then ask about its location on the screen, such as “Where is the 2019 MacBook Air product located on the screen?”

- Operation Questions: These ask how to perform operations on the screen, such as “How to open the shopping cart?”

- Temporal Questions: Any question related to time or date falls into this category, such as “What is the current time on the screen?” or “What are the departure and arrival dates of the flight?”

- Numerical Questions: These cover any question related to numbers or calculations, such as “How many items are there in the cart?” or “What is the lowest price of the science fiction books on this page?”

- Judgement Questions: These require a yes/no or true/false determination, for example, “Is it possible to upgrade to VIP on this page?”

Appendix A.3. GUI-Zouwu

| Model | Content | Structure | Fluency | Authenticity | GPT-Score |
|---|---|---|---|---|---|
| GPT-4V* | 5.52 | 5.83 | 7.64 | 5.34 | 60.8 |
| Qwen-VL | 4.13 | 4.32 | 6.44 | 4.02 | 47.3 |
| InternVL2-8B | 7.34 | 7.50 | 8.41 | 7.91 | 77.9 |
| UI-Hawk-Minus | 7.45 | 7.71 | 8.53 | 7.79 | 78.7 |
| UI-Hawk | 7.51 | 7.84 | 8.60 | 7.85 | 79.5 |

Appendix B. Training Details

Pre-Training

Supervised Fine-Tuning

| (en) | GPT-4V | SeeClick | UI-Hawk |
|---|---|---|---|
| IoU=0.1 | 27.4 | 62.1 | 85.5 |
| IoU=0.5 | 2.3 | 29.6 | 63.9 |
| | 25.13 | 32.57 | 21.59 |
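The table above reports results at IoU thresholds of 0.1 and 0.5. As a minimal sketch, assuming the standard intersection-over-union definition for axis-aligned boxes in `[x1, y1, x2, y2]` format and assuming the reported numbers are accuracies (percentage of predictions whose IoU with the ground-truth box reaches the threshold), the computation could look as follows. The names `iou` and `accuracy_at_iou` are illustrative and do not come from the paper.

```python
from typing import Sequence

# A box is [x1, y1, x2, y2] with x1 <= x2 and y1 <= y2 (assumed convention).
Box = Sequence[float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    # Corners of the overlapping rectangle (may be empty).
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)

    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def accuracy_at_iou(preds: Sequence[Box], targets: Sequence[Box],
                    threshold: float) -> float:
    """Percentage of predictions with IoU >= threshold against their target."""
    if not preds:
        return 0.0
    hits = sum(iou(p, t) >= threshold for p, t in zip(preds, targets))
    return 100.0 * hits / len(preds)
```

A looser threshold such as 0.1 rewards predictions that land roughly on the right element, while 0.5 requires the predicted box to match the element's extent much more closely.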

Appendix C. Evaluation Details

Appendix C.1. Fundamental Tasks

UI Grounding

UI Referring

Screen QA

Screen Summarization

Appendix C.2. GUI Navigation

Action Space

- CLICK(bbox=[x1, y1, x2, y2]): click (or long-press) the on-screen element within the box.

- SCROLL(direction="up|down|left|right"): swipe the screen in the specified direction.

- TYPE(text="..."): type text with the keyboard.

- PRESS(button="home|back|recent"): press one of the system-level shortcut buttons provided by Android OS. “Press home” goes to the home screen, “press back” goes to the previous screen, and “press recent” goes to the previous app.

- DONE(status="complete|impossible"): stop and judge whether the task has been completed.
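The action space above maps naturally onto structured data. The following is a minimal sketch, assuming the model emits each action as a plain string in exactly the notation listed above. The `Action` dataclass, the `parse_action` function, and the regular expressions are hypothetical illustrations, not the authors' implementation.

```python
import re
from dataclasses import dataclass
from typing import Optional, Tuple

# Allowed keyword and values per action, copied from the action space above.
ALLOWED = {
    "SCROLL": ("direction", {"up", "down", "left", "right"}),
    "PRESS": ("button", {"home", "back", "recent"}),
    "DONE": ("status", {"complete", "impossible"}),
}


@dataclass(frozen=True)
class Action:
    """Hypothetical container for one parsed action."""
    name: str
    bbox: Optional[Tuple[float, ...]] = None  # set only for CLICK
    value: Optional[str] = None               # set for all other actions


_CALL = re.compile(r"^\s*([A-Z]+)\((.*)\)\s*$", re.DOTALL)
_BBOX = re.compile(r"^bbox=\[([^\]]*)\]$")
_KWARG = re.compile(r'^(\w+)="(.*)"$', re.DOTALL)


def parse_action(text: str) -> Action:
    """Parse one action string; raise ValueError if it is malformed."""
    call = _CALL.match(text)
    if call is None:
        raise ValueError(f"not an action call: {text!r}")
    name, arg = call.group(1), call.group(2).strip()

    if name == "CLICK":
        box = _BBOX.match(arg)
        if box is None:
            raise ValueError(f"CLICK expects bbox=[x1, y1, x2, y2]: {text!r}")
        coords = tuple(float(c) for c in box.group(1).split(","))
        if len(coords) != 4:
            raise ValueError(f"bbox needs four coordinates: {text!r}")
        return Action(name, bbox=coords)

    kwarg = _KWARG.match(arg)
    if kwarg is None:
        raise ValueError(f'expected key="value" argument: {text!r}')
    key, value = kwarg.group(1), kwarg.group(2)

    if name == "TYPE":
        if key != "text":
            raise ValueError(f"TYPE expects text=...: {text!r}")
        return Action(name, value=value)

    if name in ALLOWED:
        expected_key, choices = ALLOWED[name]
        if key != expected_key or value not in choices:
            raise ValueError(f"invalid argument for {name}: {text!r}")
        return Action(name, value=value)

    raise ValueError(f"unknown action: {name}")
```

For example, `parse_action('SCROLL(direction="up")')` yields an `Action` named `SCROLL` with value `up`, while `parse_action('PRESS(button="menu")')` raises `ValueError` because `menu` is not among the listed buttons. Rejecting malformed strings early keeps invalid predictions from being silently scored as valid actions.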

Metrics

References

1. OpenAI. 2023. GPT-4V.

2. Yang, Z.; Liu, J.; Han, Y.; Chen, X.; Huang, Z.; Fu, B.; Yu, G. Appagent: Multimodal agents as smartphone users. arXiv 2023, arXiv:2312.13771.

3. Wang, J.; Xu, H.; Ye, J.; Yan, M.; Shen, W.; Zhang, J.; Huang, F.; Sang, J. Mobile-Agent: Autonomous Multi-Modal Mobile Device Agent with Visual Perception. arXiv 2024, arXiv:2401.16158.

4. Zhang, C.; Li, L.; He, S.; Zhang, X.; Qiao, B.; Qin, S.; Ma, M.; Kang, Y.; Lin, Q.; Rajmohan, S.; et al. Ufo: A ui-focused agent for windows os interaction. arXiv 2024, arXiv:2402.07939.

5. Zhan, Z.; Zhang, A. You only look at screens: Multimodal chain-of-action agents. arXiv 2023, arXiv:2309.11436.

6. Hong, W.; Wang, W.; Lv, Q.; Xu, J.; Yu, W.; Ji, J.; Wang, Y.; Wang, Z.; Dong, Y.; Ding, M.; et al. Cogagent: A visual language model for gui agents. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2024; pp. 14281–14290.

7. Zhang, J.; Wu, J.; Teng, Y.; Liao, M.; Xu, N.; Xiao, X.; Wei, Z.; Tang, D. Android in the zoo: Chain-of-action-thought for gui agents. arXiv 2024, arXiv:2403.02713.

8. Bai, J.; Bai, S.; Yang, S.; Wang, S.; Tan, S.; Wang, P.; Lin, J.; Zhou, C.; Zhou, J. Qwen-vl: A frontier large vision-language model with versatile abilities. arXiv 2023, arXiv:2308.12966.

9. Yang, A.; Yang, B.; Hui, B.; Zheng, B.; Yu, B.; Zhou, C.; Li, C.; Li, C.; Liu, D.; Huang, F.; et al. Qwen2 Technical Report. arXiv 2024, arXiv:2407.10671.

10. Cheng, K.; Sun, Q.; Chu, Y.; Xu, F.; Li, Y.; Zhang, J.; Wu, Z. Seeclick: Harnessing gui grounding for advanced visual gui agents. arXiv 2024, arXiv:2401.10935.

11. Fan, Y.; Ding, L.; Kuo, C.C.; Jiang, S.; Zhao, Y.; Guan, X.; Yang, J.; Zhang, Y.; Wang, X.E. Read Anywhere Pointed: Layout-aware GUI Screen Reading with Tree-of-Lens Grounding. arXiv 2024, arXiv:2406.19263.

12. Shi, T.; Karpathy, A.; Fan, L.; Hernandez, J.; Liang, P. World of bits: An open-domain platform for web-based agents. In Proceedings of the International Conference on Machine Learning; PMLR, 2017; pp. 3135–3144.

13. Deka, B.; Huang, Z.; Franzen, C.; Hibschman, J.; Afergan, D.; Li, Y.; Nichols, J.; Kumar, R. Rico: A mobile app dataset for building data-driven design applications. In Proceedings of the 30th annual ACM symposium on user interface software and technology; 2017; pp. 845–854.

14. Liu, E.Z.; Guu, K.; Pasupat, P.; Shi, T.; Liang, P. Reinforcement Learning on Web Interfaces using Workflow-Guided Exploration. In Proceedings of the International Conference on Learning Representations; 2018.

15. Burns, A.; Arsan, D.; Agrawal, S.; Kumar, R.; Saenko, K.; Plummer, B.A. Mobile app tasks with iterative feedback (motif): Addressing task feasibility in interactive visual environments. arXiv 2021, arXiv:2104.08560.

16. Sun, L.; Chen, X.; Chen, L.; Dai, T.; Zhu, Z.; Yu, K. META-GUI: Towards Multi-modal Conversational Agents on Mobile GUI. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing; 2022; pp. 6699–6712.

17. Deng, X.; Gu, Y.; Zheng, B.; Chen, S.; Stevens, S.; Wang, B.; Sun, H.; Su, Y. Mind2web: Towards a generalist agent for the web. Advances in Neural Information Processing Systems 2024, 36.

18. Lu, Q.; Shao, W.; Liu, Z.; Meng, F.; Li, B.; Chen, B.; Huang, S.; Zhang, K.; Qiao, Y.; Luo, P. GUI Odyssey: A Comprehensive Dataset for Cross-App GUI Navigation on Mobile Devices. arXiv 2024, arXiv:2406.08451.

19. Rawles, C.; Li, A.; Rodriguez, D.; Riva, O.; Lillicrap, T. Androidinthewild: A large-scale dataset for android device control. Advances in Neural Information Processing Systems 2024, 36.

20. Chen, D.; Huang, Y.; Wu, S.; Tang, J.; Chen, L.; Bai, Y.; He, Z.; Wang, C.; Zhou, H.; Li, Y.; et al. GUI-WORLD: A Dataset for GUI-oriented Multimodal LLM-based Agents. arXiv 2024, arXiv:2406.10819.

21. Yan, A.; Yang, Z.; Zhu, W.; Lin, K.; Li, L.; Wang, J.; Yang, J.; Zhong, Y.; McAuley, J.; Gao, J.; et al. Gpt-4v in wonderland: Large multimodal models for zero-shot smartphone gui navigation. arXiv 2023, arXiv:2311.07562.

22. Zheng, B.; Gou, B.; Kil, J.; Sun, H.; Su, Y. Gpt-4v (ision) is a generalist web agent, if grounded. arXiv 2024, arXiv:2401.01614.

23. Kim, G.; Baldi, P.; McAleer, S. Language models can solve computer tasks. Advances in Neural Information Processing Systems 2024, 36.

24. Wen, H.; Li, Y.; Liu, G.; Zhao, S.; Yu, T.; Li, T.J.J.; Jiang, S.; Liu, Y.; Zhang, Y.; Liu, Y. Empowering llm to use smartphone for intelligent task automation. arXiv 2023, arXiv:2308.15272.

25. Bai, C.; Zang, X.; Xu, Y.; Sunkara, S.; Rastogi, A.; Chen, J.; et al. Uibert: Learning generic multimodal representations for ui understanding. arXiv 2021, arXiv:2107.13731.

26. Zhang, X.; De Greef, L.; Swearngin, A.; White, S.; Murray, K.; Yu, L.; Shan, Q.; Nichols, J.; Wu, J.; Fleizach, C.; et al. Screen recognition: Creating accessibility metadata for mobile applications from pixels. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems; 2021; pp. 1–15.

27. Venkatesh, S.G.; Talukdar, P.; Narayanan, S. Ugif: Ui grounded instruction following. arXiv 2022, arXiv:2211.07615.

28. Li, G.; Li, Y. Spotlight: Mobile ui understanding using vision-language models with a focus. arXiv 2022, arXiv:2209.14927.

29. Jiang, Y.; Schoop, E.; Swearngin, A.; Nichols, J. ILuvUI: Instruction-tuned LangUage-Vision modeling of UIs from Machine Conversations. arXiv 2023, arXiv:2310.04869.

30. Yu, Y.Q.; Liao, M.; Wu, J.; Liao, Y.; Zheng, X.; Zeng, W. TextHawk: Exploring Efficient Fine-Grained Perception of Multimodal Large Language Models. arXiv 2024, arXiv:2404.09204.

31. Zhai, X.; Mustafa, B.; Kolesnikov, A.; Beyer, L. Sigmoid loss for language image pre-training. In Proceedings of the IEEE/CVF International Conference on Computer Vision; 2023; pp. 11975–11986.

32. Ye, J.; Hu, A.; Xu, H.; Ye, Q.; Yan, M.; Xu, G.; Li, C.; Tian, J.; Qian, Q.; Zhang, J.; et al. Ureader: Universal ocr-free visually-situated language understanding with multimodal large language model. arXiv 2023, arXiv:2310.05126.

33. Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; Wang, L.; Chen, W. Lora: Low-rank adaptation of large language models. arXiv 2021, arXiv:2106.09685.

34. Li, Y.; Li, G.; He, L.; Zheng, J.; Li, H.; Guan, Z. Widget Captioning: Generating Natural Language Description for Mobile User Interface Elements. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP); 2020; pp. 5495–5510.

35. Hsiao, Y.C.; Zubach, F.; Wang, M.; et al. Screenqa: Large-scale question-answer pairs over mobile app screenshots. arXiv 2022, arXiv:2209.08199.

36. Wang, B.; Li, G.; Zhou, X.; Chen, Z.; Grossman, T.; Li, Y. Screen2words: Automatic mobile UI summarization with multimodal learning. In Proceedings of the 34th Annual ACM Symposium on User Interface Software and Technology; 2021; pp. 498–510.

37. Wu, J.; Krosnick, R.; Schoop, E.; Swearngin, A.; Bigham, J.P.; Nichols, J. Never-ending learning of user interfaces. In Proceedings of the 36th Annual ACM Symposium on User Interface Software and Technology; 2023; pp. 1–13.

38. Feiz, S.; Wu, J.; Zhang, X.; Swearngin, A.; Barik, T.; Nichols, J. Understanding screen relationships from screenshots of smartphone applications. In Proceedings of the 27th International Conference on Intelligent User Interfaces; 2022; pp. 447–458.

39. Baechler, G.; Sunkara, S.; Wang, M.; Zubach, F.; Mansoor, H.; Etter, V.; Cărbune, V.; Lin, J.; Chen, J.; Sharma, A. Screenai: A vision-language model for ui and infographics understanding. arXiv 2024, arXiv:2402.04615.

40. You, K.; Zhang, H.; Schoop, E.; Weers, F.; Swearngin, A.; Nichols, J.; Yang, Y.; Gan, Z. Ferret-UI: Grounded Mobile UI Understanding with Multimodal LLMs. arXiv 2024, arXiv:2404.05719.

41. Zhao, Y.; Lv, W.; Xu, S.; Wei, J.; Wang, G.; Dang, Q.; Liu, Y.; Chen, J. Detrs beat yolos on real-time object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2024; pp. 16965–16974.

42. Sunkara, S.; Wang, M.; Liu, L.; Baechler, G.; Hsiao, Y.C.; Sharma, A.; Stout, J.; et al. Towards better semantic understanding of mobile interfaces. arXiv 2022, arXiv:2210.02663.

43. Chen, Z.; Wang, W.; Tian, H.; Ye, S.; Gao, Z.; Cui, E.; Tong, W.; Hu, K.; Luo, J.; Ma, Z.; et al. How Far Are We to GPT-4V? Closing the Gap to Commercial Multimodal Models with Open-Source Suites. arXiv 2024, arXiv:2404.16821.

44. Li, L.H.; Zhang, P.; Zhang, H.; Yang, J.; Li, C.; Zhong, Y.; Wang, L.; Yuan, L.; Zhang, L.; Hwang, J.N.; et al. Grounded language-image pre-training. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2022; pp. 10965–10975.

45. Zhang, Z.; Xie, W.; Zhang, X.; Lu, Y. Reinforced ui instruction grounding: Towards a generic ui task automation api. arXiv 2023, arXiv:2310.04716.

46. Everingham, M.; Van Gool, L.; Williams, C.K.; Winn, J.; Zisserman, A. The pascal visual object classes (voc) challenge. International journal of computer vision 2010, 88, 303–338.

47. Vedantam, R.; Lawrence Zitnick, C.; Parikh, D. Cider: Consensus-based image description evaluation. In Proceedings of the IEEE conference on computer vision and pattern recognition; 2015; pp. 4566–4575.

| Task | # Samples (ZH) | # Samples (EN) | Data Source (ZH) | Data Source (EN) |
|---|---|---|---|---|
| UI Grounding | 580k | 16k | Ours | [25] |
| UI Referring | 600k | 109k | Ours | [34] |
| Screen QA | 1200k | 288k | Ours | [35] |
| Screen Sum. | 50k | 78k | Ours | [36] |
| GUI Navigation | 55k | 87k | Ours | [18] |

| ID | Grounding EN | Grounding CN | Referring EN | Referring CN | ScreenQA EN | ScreenQA CN | Screen Sum. EN | Screen Sum. CN | Visual History | GUI-Odyssey+ Overall | GUI-Odyssey+ ClickAcc | GUI-Zouwu Overall | GUI-Zouwu ClickAcc |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| (1) | | | | | | | | | × | 71.7 | 66.9 | 41.2 | 49.3 |
| (2) | | | | | | | | | ✓ | 75.7 | 71.9 | 44.8 | 56.5 |
| (3) | 32 | 32 | | | | | | | ✓ | 77.8 | 74.5 | 46.1 | 59.2 |
| (4) | | | 32 | 32 | | | | | ✓ | 77.7 | 73.9 | 46.0 | 58.4 |
| (5) | | | | | 32 | 32 | | | ✓ | 77.6 | 73.6 | 46.5 | 58.4 |
| (6) | | | | | | | 32 | 32 | ✓ | 77.3 | 73.1 | 45.6 | 57.7 |
| (7) | 32 | 32 | 32 | 32 | 32 | 32 | 32 | 32 | × | 72.7 | 68.1 | 43.3 | 55.6 |
| (8) | 32 | 32 | 32 | 32 | 32 | 32 | 32 | 32 | ✓ | 78.3 | 75.1 | 45.9 | 58.4 |
| (9) | Full Data | Full Data | Full Data | Full Data | Full Data | Full Data | Full Data | Full Data | ✓ | 79.4 | 76.3 | 47.9 | 61.4 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).