Submitted: 17 August 2024

Posted: 19 August 2024

Abstract

Keywords:

1. Introduction

- (1)

- This paper introduces a lightweight and efficient LFNSB model that utilizes a deep convolutional neural network to capture both detailed and global features of facial images while maintaining high computational efficiency.

- (2)

- This paper introduces a new loss function called Cosine-Harmony Loss. It utilizes adjusted cosine distance to optimize the computation of class centers, balancing intra-class compactness and inter-class separation.

- (3)

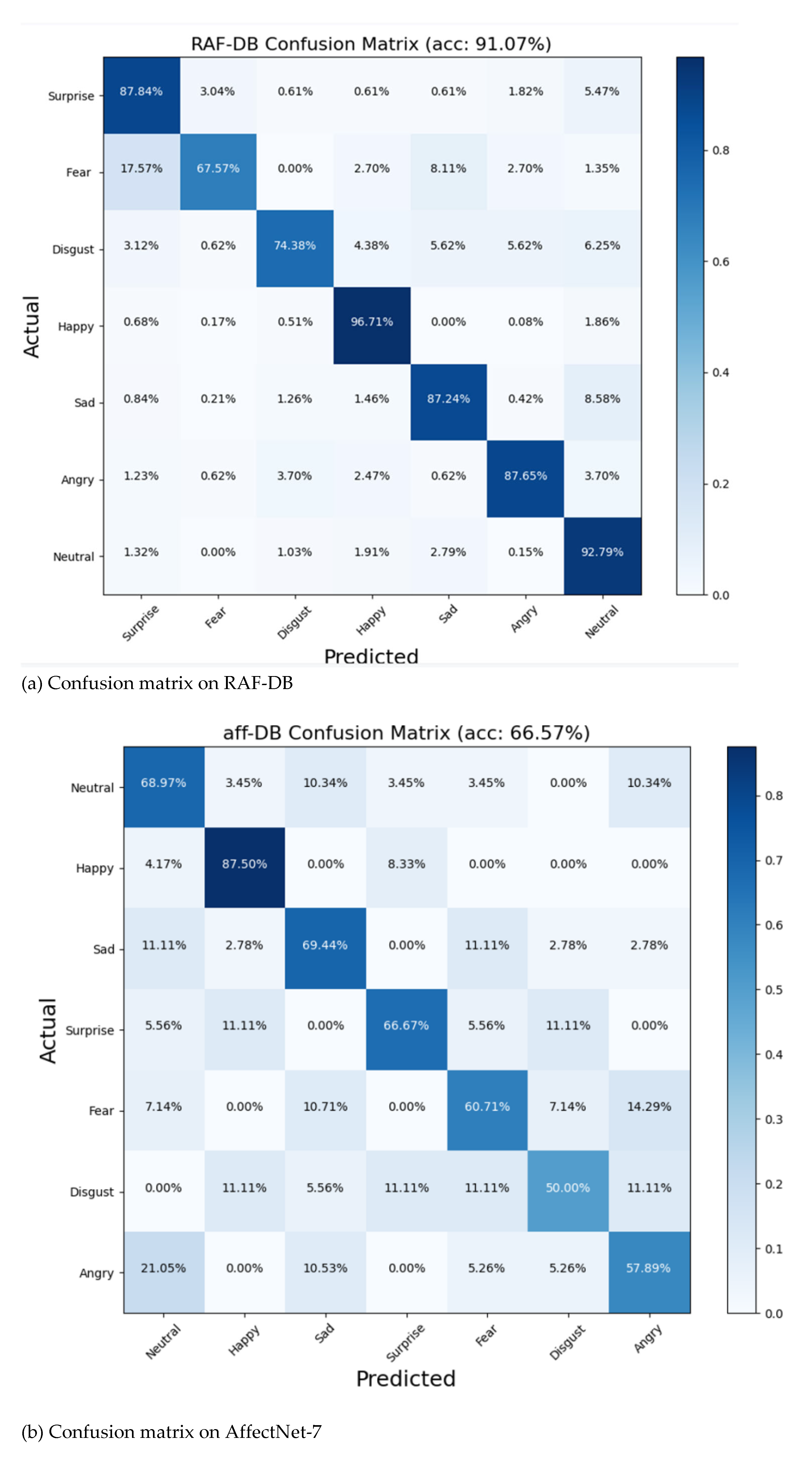

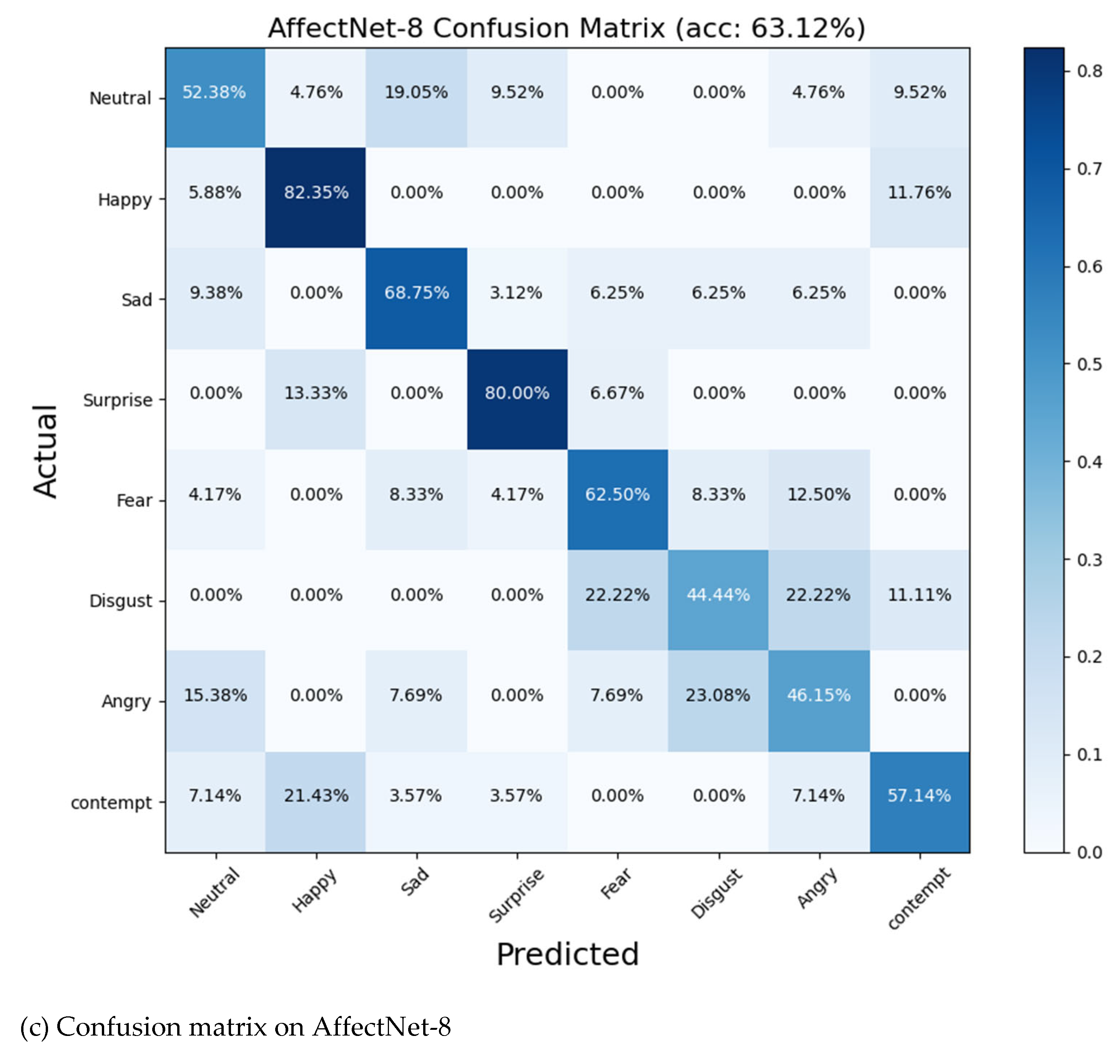

- Experimental results show that the proposed LFNSB method achieves an accuracy of 63.12% on AffectNet-8, 66.57% on AffectNet-7, and 91.07% on RAF-DB.
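To make the idea behind contribution (2) more concrete, the following is a minimal sketch of a generic cosine-distance, center-based loss. It is not the Cosine-Harmony Loss itself, whose exact formula is not given in this text. The function name `cosine_center_loss`, the `weight` trade-off factor, and the specific form of both terms are assumptions chosen only to illustrate how an intra-class compactness term and an inter-class separation term can be balanced.

```python
import numpy as np

def l2_normalize(v, eps=1e-12):
    """Scale each row of v to unit length."""
    return v / np.maximum(np.linalg.norm(v, axis=1, keepdims=True), eps)

def cosine_center_loss(features, labels, centers, weight=0.1):
    """Illustrative loss (hypothetical form, not the paper's definition).

    features: (N, D) embeddings, labels: (N,) integer class ids,
    centers: (K, D) class centers. `weight` is an assumed trade-off factor.
    """
    f = l2_normalize(features)
    c = l2_normalize(centers)
    # Intra-class term: cosine distance between each sample and its own center.
    intra = np.mean(1.0 - np.sum(f * c[labels], axis=1))
    # Inter-class term: mean cosine similarity between distinct centers.
    sim = c @ c.T
    off_diagonal = sim[~np.eye(len(c), dtype=bool)]
    inter = np.mean(off_diagonal)
    return intra + weight * inter

# Three orthogonal centers; samples either sit on their own center or on a wrong one.
centers = np.eye(3)
labels = np.array([0, 1, 2])
loss_aligned = cosine_center_loss(centers.copy(), labels, centers)
loss_shuffled = cosine_center_loss(centers[[1, 2, 0]], labels, centers)
```

In this toy setup the aligned samples incur no penalty, while samples placed on the wrong center are penalized through the intra-class term.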

2. Materials and Methods

2.1. Related Work

2.1.1. FER

2.1.2. Attention Mechanism

2.1.3. Loss Function

2.2. Method

2.2.1. LFN

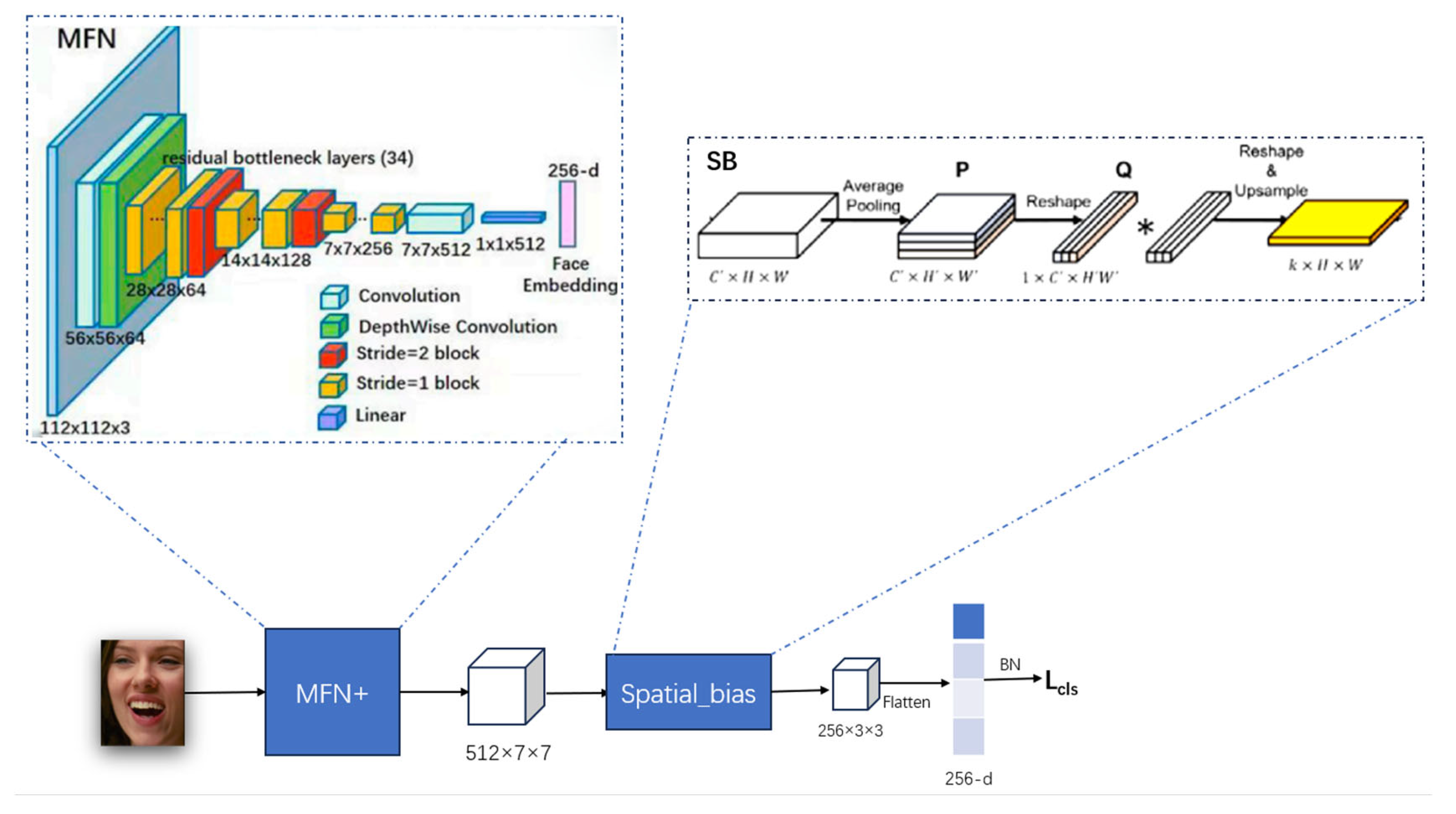

Improved Facial Expression Recognition Network LFN Based on MFN

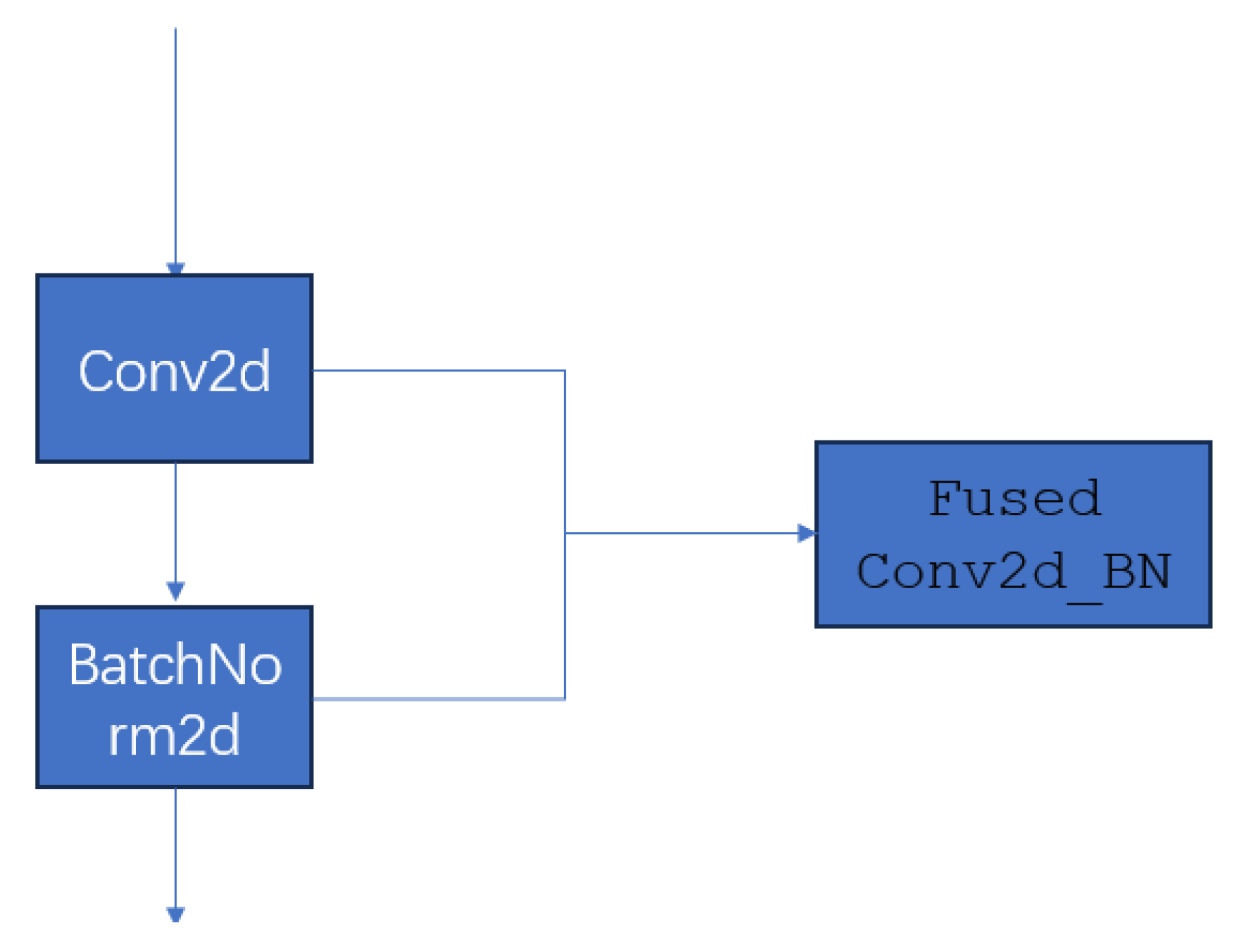

2.2.1.2. Conv2d_BN

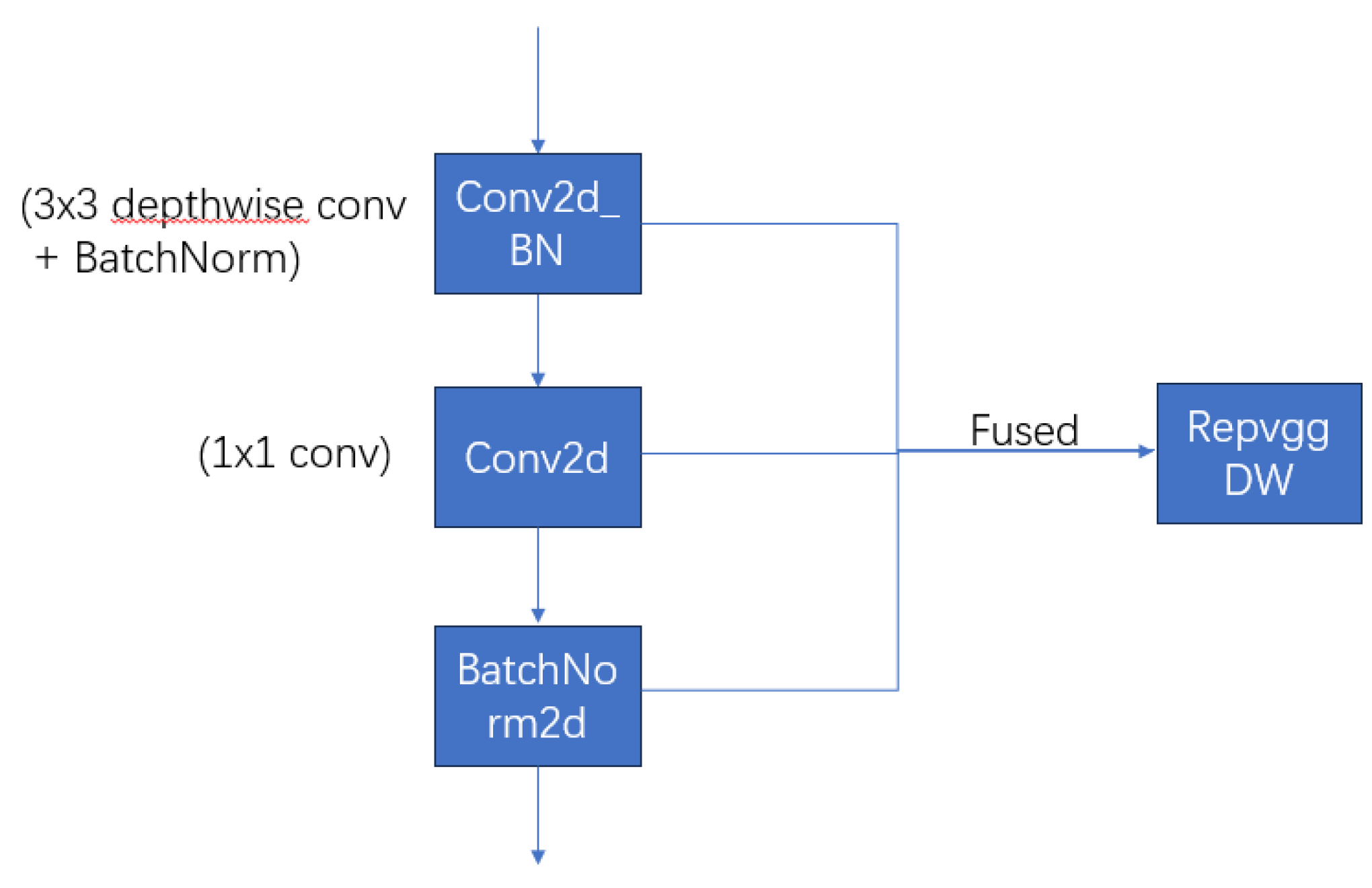

2.2.1.3. RepVGGDW

2.2.2. Spatial Bias

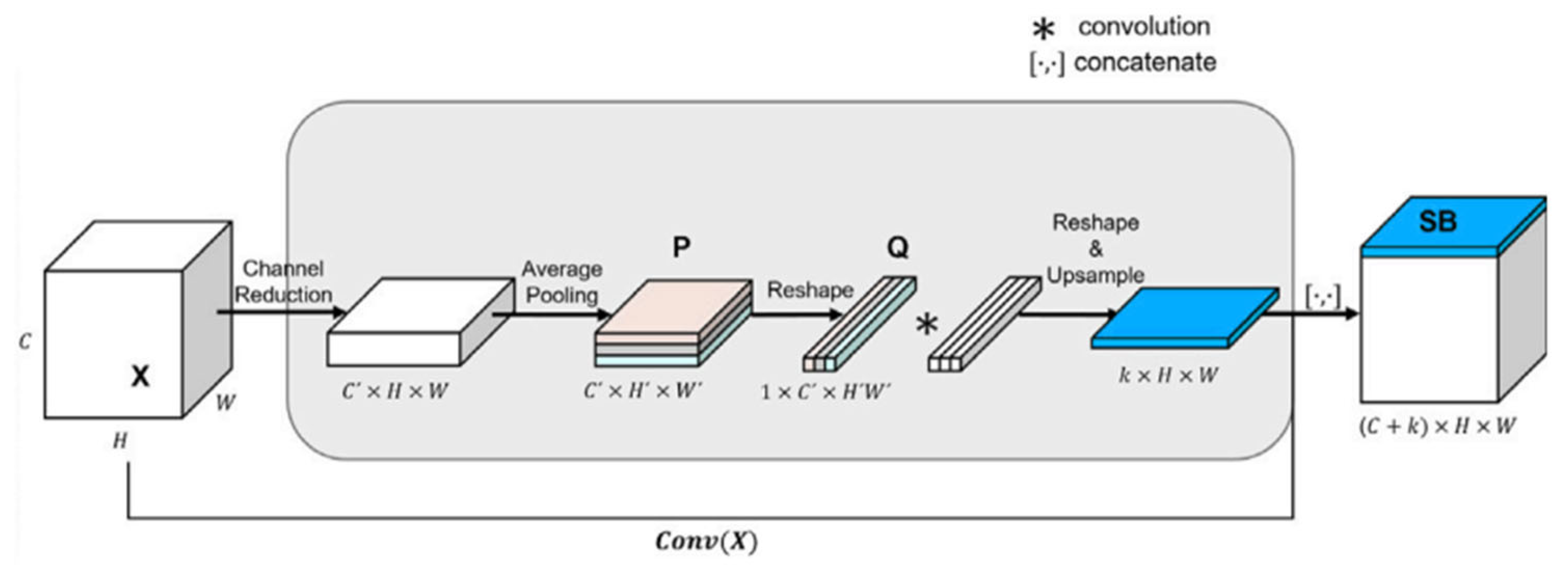

- Input Feature Map Compression: The input feature map is first compressed through a 1×1 convolution, resulting in a feature map with fewer channels. Then, an adaptive average pooling layer is used to spatially compress the feature map, producing a smaller feature map.

- Feature Map Flattening: The feature map for each channel is flattened into a one-dimensional vector, resulting in a transformed feature map.

- Global Knowledge Aggregation: A 1D convolution is applied to the flattened feature map to encode global knowledge, capturing global dependencies and generating the spatial bias map.

- Upsampling and Concatenation: The spatial bias map is upsampled to the same size as the original convolutional feature map using bilinear interpolation, and then concatenated with the convolutional feature map along the channel dimension.
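The four steps above can be sketched in NumPy for a single image. This is a shape-level illustration under stated assumptions, not the authors' implementation: the function names, the weight shapes, the choice to slide the 1D convolution along the channel axis while mixing all flattened spatial positions, and the endpoint-aligned bilinear interpolation are all assumptions. The pooling helper also assumes the spatial size is divisible by the pooled size `s`.

```python
import numpy as np

def adaptive_avg_pool2d(x, s):
    """Average-pool (C, H, W) to (C, s, s); assumes H and W are divisible by s."""
    c, h, w = x.shape
    assert h % s == 0 and w % s == 0, "sketch assumes H and W divisible by s"
    return x.reshape(c, s, h // s, s, w // s).mean(axis=(2, 4))

def bilinear_resize(x, out_h, out_w):
    """Bilinear interpolation of (C, h, w) to (C, out_h, out_w), endpoints aligned."""
    c, h, w = x.shape
    ys = np.linspace(0, h - 1, out_h)
    xs = np.linspace(0, w - 1, out_w)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, h - 1)
    x1 = np.minimum(x0 + 1, w - 1)
    wy = (ys - y0)[None, :, None]
    wx = (xs - x0)[None, None, :]
    top = x[:, y0][:, :, x0] * (1 - wx) + x[:, y0][:, :, x1] * wx
    bottom = x[:, y1][:, :, x0] * (1 - wx) + x[:, y1][:, :, x1] * wx
    return top * (1 - wy) + bottom * wy

def spatial_bias(x, conv_feat, w_reduce, w_1d, s):
    """x: (C, H, W) input; conv_feat: (C_out, H, W) output of the regular conv.

    w_reduce: (C', C) weights of the 1x1 convolution (assumed shape).
    w_1d: (s*s, s*s, k) weights of the 1D convolution (assumed layout).
    """
    # Step 1: 1x1 convolution (a per-pixel linear map over channels), then pooling.
    reduced = np.einsum("oc,chw->ohw", w_reduce, x)
    pooled = adaptive_avg_pool2d(reduced, s)               # (C', s, s)
    # Step 2: flatten each channel into a vector.
    flat = pooled.reshape(pooled.shape[0], s * s)          # (C', s*s)
    # Step 3: 1D convolution along the channel axis; every output value
    # mixes all s*s positions, so it carries global context.
    k = w_1d.shape[2]
    n_out = flat.shape[0] - k + 1
    windows = np.stack([flat[j:j + k] for j in range(n_out)])  # (n_out, k, s*s)
    bias = np.einsum("jkp,qpk->jq", windows, w_1d)             # (n_out, s*s)
    bias_map = bias.reshape(n_out, s, s)
    # Step 4: upsample to the conv feature size and concatenate on channels.
    up = bilinear_resize(bias_map, conv_feat.shape[1], conv_feat.shape[2])
    return np.concatenate([conv_feat, up], axis=0)

rng = np.random.default_rng(0)
x = rng.standard_normal((16, 8, 8))
conv_feat = rng.standard_normal((32, 8, 8))
w_reduce = rng.standard_normal((4, 16))
w_1d = rng.standard_normal((16, 16, 3))
out = spatial_bias(x, conv_feat, w_reduce, w_1d, s=4)
```

With these toy sizes, the 32 convolutional channels are kept unchanged and two spatial bias channels are appended, so `out` has 34 channels at the original 8 × 8 resolution.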

2.2.3. Cosine-Harmony Loss

3. Results

3.1. Datasets

3.2. Implementation Details

3.3. Ablation Studies

3.3.1. Effectiveness of the Cosine-Harmony Loss

3.3.2. Effectiveness of the Cosine-Harmony Loss

3.3.3. Effectiveness of the LFNSB

3.4. Quantitative Performance Comparisons

3.5. K-Fold Cross-Validation

3.6. Confusion Matrix

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- [1] Banerjee, R., De, S., & Dey, S. (2023). A survey on various deep learning algorithms for an efficient facial expression recognition system. International Journal of Image and Graphics, 23(03), 2240005.
- [2] Sajjad, M., Ullah, F. U. M., Ullah, M., Christodoulou, G., Cheikh, F. A., Hijji, M., ... & Rodrigues, J. J. (2023). A comprehensive survey on deep facial expression recognition: challenges, applications, and future guidelines. Alexandria Engineering Journal, 68, 817-840.
- [3] Adyapady, R. R., & Annappa, B. (2023). A comprehensive review of facial expression recognition techniques. Multimedia Systems, 29(1), 73-103.
- [4] Zhang, S., Zhang, Y., Zhang, Y., et al. (2023). A dual-direction attention mixed feature network for facial expression recognition. Electronics, 12(17), 3595.
- [5] Tan, M., & Le, Q. V. (2019). MixConv: Mixed depthwise convolutional kernels. In Proceedings of the 30th British Machine Vision Conference 2019, Cardiff, UK, 9–12 September 2019.
- [6] Go, J., & Ryu, J. (2024). Spatial bias for attention-free non-local neural networks. Expert Systems with Applications, 238, 122053.
- [7] Deep Facial Expression Recognition: A Survey.
- [8] Deng, J., Guo, J., Xue, N., & Zafeiriou, S. (2019). ArcFace: Additive angular margin loss for deep face recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 4690-4699).
- [9] Wang, H., Wang, Y., Zhou, Z., Ji, X., Gong, D., Zhou, J., ... & Liu, W. (2018). CosFace: Large margin cosine loss for deep face recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (pp. 5265-5274).
- [10] Simonyan, K., & Zisserman, A. (2015). Very deep convolutional networks for large-scale image recognition. In Proceedings of the International Conference on Learning Representations (ICLR), San Diego, CA, USA, 7–9 May 2015 (pp. 1–14).
- [11] He, K., Zhang, X., Ren, S., & Sun, J. (2016). Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016 (pp. 770–778).
- [12] Altaher, A., Salekshahrezaee, Z., Abdollah Zadeh, A., Rafieipour, H., & Altaher, A. (2020). Using multi-inception CNN for face emotion recognition. Journal of Bioengineering Research, 3(1), 1-12.
- [13] Wang, A., Chen, H., Lin, Z., et al. (2024). RepViT: Revisiting mobile CNN from ViT perspective. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 15909-15920).
- [14] Chen, S., Liu, Y., Gao, X., & Han, Z. (2018). MobileFaceNets: Efficient CNNs for accurate real-time face verification on mobile devices. In Biometric Recognition: 13th Chinese Conference, CCBR 2018, Urumqi, China, August 11-12, 2018, Proceedings 13 (pp. 428-438). Springer International Publishing.
- [15] Tan, M., & Le, Q. V. (2019). MixConv: Mixed depthwise convolutional kernels. In Proceedings of the 30th British Machine Vision Conference 2019, Cardiff, UK, 9–12 September 2019.
- [16] Hou, Q., Zhou, D., & Feng, J. (2021). Coordinate attention for efficient mobile network design. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 19–25 June 2021 (pp. 13713–13722).
- [17] You, Q., Jin, H., & Luo, J. (2017). Visual sentiment analysis by attending on local image regions. In Proceedings of the AAAI Conference on Artificial Intelligence (pp. 231–237).
- [18] Zhao, S., Jia, Z., Chen, H., Li, L., Ding, G., & Keutzer, K. (2019). PDANet: Polarity-consistent deep attention network for fine-grained visual emotion regression. In Proceedings of the 27th ACM International Conference on Multimedia (pp. 192–201).
- [19] Farzaneh, A. H., & Qi, X. (2021). Facial expression recognition in the wild via deep attentive center loss. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (pp. 2402–2411).
- [20] Li, Y., Lu, Y., Li, J., & Lu, G. (2019). Separate loss for basic and compound facial expression recognition in the wild. In Asian Conference on Machine Learning (pp. 897-911). PMLR.
- [21] Wen, Y., Zhang, K., Li, Z., & Qiao, Y. (2016). A discriminative feature learning approach for deep face recognition. In European Conference on Computer Vision (pp. 499–515). Springer.
- [22] Wen, Z., Lin, W., Wang, T., & Xu, G. (2023). Distract your attention: Multi-head cross attention network for facial expression recognition. Biomimetics, 8, 199.
- [23] Liu, W., Wen, Y., Yu, Z., Li, M., Raj, B., & Song, L. (2017). SphereFace: Deep hypersphere embedding for face recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (pp. 212–220).
- [24] Liu, Y., Li, H., & Wang, X. (2017). Learning deep features via congenerous cosine loss for person recognition. arXiv preprint arXiv:1702.06890.
- [25] Dhall, A., Goecke, R., Lucey, S., & Gedeon, T. (2012). Collecting large, richly annotated facial expression databases from movies. IEEE MultiMedia, 19(03), 34–41.
- [26] Li, S., & Deng, W. (2018). Reliable crowdsourcing and deep locality-preserving learning for unconstrained facial expression recognition. IEEE Transactions on Image Processing, 28(1), 356–370.
- [27] Guo, Y., Zhang, L., Hu, Y., He, X., & Gao, J. (2016). MS-Celeb-1M: A dataset and benchmark for large-scale face recognition. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 11–14 October 2016 (pp. 87–102).
- [28] Wang, K., Peng, X., Yang, J., Meng, D., & Qiao, Y. (2020). Region attention networks for pose and occlusion robust facial expression recognition. IEEE Transactions on Image Processing, 29, 4057–4069.
- [29] Wang, K., Peng, X., Yang, J., Lu, S., & Qiao, Y. (2020). Suppressing uncertainties for large-scale facial expression recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 6897–6906).
- [30] Farzaneh, A. H., & Qi, X. (2021). Facial expression recognition in the wild via deep attentive center loss. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, Virtual, 5–9 January 2021 (pp. 2402–2411).
- [31] Xue, F., Wang, Q., Tan, Z., et al. (2022). Vision transformer with attentive pooling for robust facial expression recognition. IEEE Transactions on Affective Computing, 14(4), 3244-3256.
- [32] Vo, T.-H., Lee, G.-S., Yang, H.-J., & Kim, S.-H. (2020). Pyramid with super resolution for in-the-wild facial expression recognition. IEEE Access, 8, 131988–132001.
- [33] Savchenko, A. V., Savchenko, L. V., & Makarov, I. (2022). Classifying emotions and engagement in online learning based on a single facial expression recognition neural network. IEEE Transactions on Affective Computing, 13(4), 2132-2143.
- [34] Wagner, N., Mätzler, F., Vossberg, S. R., et al. (2024). CAGE: Circumplex Affect Guided Expression Inference. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 4683-4692).
- [35] Li, H., Sui, M., Zhao, F., Zha, Z., & Wu, F. (2021). MVT: Mask vision transformer for facial expression recognition in the wild. arXiv preprint arXiv:2106.04520.
- [36] Zhao, Z., Liu, Q., & Wang, S. (2021). Learning deep global multi-scale and local attention features for facial expression recognition in the wild. IEEE Transactions on Image Processing, 30, 6544-6556.
- [37] Chen, Y., Wang, J., Chen, S., Shi, Z., & Cai, J. (2019). Facial motion prior networks for facial expression recognition. In 2019 IEEE Visual Communications and Image Processing (VCIP) (pp. 1–4). IEEE.
- [38] Farzaneh, A. H., & Qi, X. (2020). Discriminant distribution-agnostic loss for facial expression recognition in the wild. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (pp. 406–407).
- [39] Zhang, W., Ji, X., Chen, K., Ding, Y., & Fan, C. (2021). Learning a facial expression embedding disentangled from identity. In Proceedings of the 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 19–25 June 2021 (pp. 6755–6764).

| Input | Operator | t | c | n | s |
| --- | --- | --- | --- | --- | --- |
| 112 × 112 × 3 | Conv2d_BN | - | 64 | 1 | 2 |
| 56 × 56 × 64 | depthwiseConv2d_BN | - | 64 | 1 | 1 |
| 56 × 56 × 64 | bottleneck (MixConv 3 × 3, 5 × 5) | 2 | 64 | 1 | 2 |
| 28 × 28 × 64 | bottleneck (MixConv 3 × 3) | 2 | 128 | 9 | 1 |
| 28 × 28 × 128 | bottleneck (MixConv 3 × 3, 5 × 5) | 4 | 128 | 1 | 2 |
| 14 × 14 × 128 | bottleneck (MixConv 3 × 3) | 2 | 128 | 16 | 1 |
| 14 × 14 × 128 | bottleneck (MixConv 3 × 3, 5 × 5, 7 × 7) | 8 | 256 | 1 | 2 |
| 7 × 7 × 256 | bottleneck (MixConv 3 × 3, 5 × 5) | 2 | 256 | 6 | 1 |
| 7 × 7 × 256 | RepVGGDW | - | 256 | 1 | 1 |
| 7 × 7 × 256 | linear GDConv 7 × 7 | - | 256 | 1 | 1 |
| 1 × 1 × 256 | Linear | - | 256 | 1 | 1 |
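As a sanity check on the table above, the short sketch below traces the feature-map shape through the listed layers. It assumes the MobileNetV2-style reading of the columns (c = output channels, n = number of repeats, s = stride of the first repeat), "same"-style padding for strided layers, and an unpadded 7 × 7 kernel for the global depthwise convolution. None of these conventions is stated in this text, and the helper name `trace_shapes` is hypothetical.

```python
# Rows of the table as (operator, output channels c, repeats n, stride s).
LAYERS = [
    ("Conv2d_BN", 64, 1, 2),
    ("depthwiseConv2d_BN", 64, 1, 1),
    ("bottleneck", 64, 1, 2),
    ("bottleneck", 128, 9, 1),
    ("bottleneck", 128, 1, 2),
    ("bottleneck", 128, 16, 1),
    ("bottleneck", 256, 1, 2),
    ("bottleneck", 256, 6, 1),
    ("RepVGGDW", 256, 1, 1),
    ("linear GDConv7x7", 256, 1, 1),
    ("Linear", 256, 1, 1),
]

def trace_shapes(size=112, channels=3):
    """Return the (H, W, C) shape before the first layer and after every row."""
    shapes = [(size, size, channels)]
    for op, c, n, s in LAYERS:
        if op.startswith("linear GDConv"):
            # Global depthwise conv: 7x7 kernel without padding, so 7 - 7 + 1 = 1.
            size = size - 7 + 1
        else:
            # Assumed "same" padding: only the stride changes the spatial size.
            size = size // s
        channels = c
        shapes.append((size, size, channels))
    return shapes

shapes = trace_shapes()
for (h, w, c), (op, _, n, s) in zip(shapes, LAYERS):
    print(f"{h:>3} x {w:>3} x {c:>3} -> {op} (n={n}, s={s})")
```

Under these assumptions, the shape entering each layer matches the Input column of the table, ending with a 1 × 1 × 256 feature.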

| Methods | Accuracy (%) | Params | FLOPs |
| --- | --- | --- | --- |
| MobileFaceNet | 87.52 | 1.148M | 230.34M |
| MFN | 90.32 | 3.973M | 550.74M |
| LFNSB (ours) | 91.07 | 2.676M | 397.35M |
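The trade-off in the table above is easier to read as relative changes. The sketch below only redoes the arithmetic on the reported numbers; the dictionary layout and helper names are hypothetical.

```python
# Values copied from the table: accuracy in %, parameters and FLOPs in millions.
MODELS = {
    "MobileFaceNet": {"acc": 87.52, "params_m": 1.148, "flops_m": 230.34},
    "MFN": {"acc": 90.32, "params_m": 3.973, "flops_m": 550.74},
    "LFNSB": {"acc": 91.07, "params_m": 2.676, "flops_m": 397.35},
}

def relative_change(new, old):
    """Percentage change from old to new (negative means a reduction)."""
    return 100.0 * (new - old) / old

def compare(name, baseline):
    """Accuracy gain in points and relative cost change of `name` vs `baseline`."""
    a, b = MODELS[name], MODELS[baseline]
    return {
        "acc_gain_pts": round(a["acc"] - b["acc"], 2),
        "params_change_pct": round(relative_change(a["params_m"], b["params_m"]), 1),
        "flops_change_pct": round(relative_change(a["flops_m"], b["flops_m"]), 1),
    }

print(compare("LFNSB", "MFN"))
print(compare("LFNSB", "MobileFaceNet"))
```

Relative to MFN, LFNSB gains 0.75 accuracy points with about 32.6% fewer parameters and about 27.9% fewer FLOPs. Relative to MobileFaceNet, it gains 3.55 points at roughly 2.3 times the parameters and 1.7 times the FLOPs.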

| Methods | RAF-DB | AffectNet-7 |
| --- | --- | --- |
| CrossEntropyLoss | 89.57 | 64.26 |
| CrossEntropyLoss + Cosine-Harmony Loss | 90.22 | 65.45 |

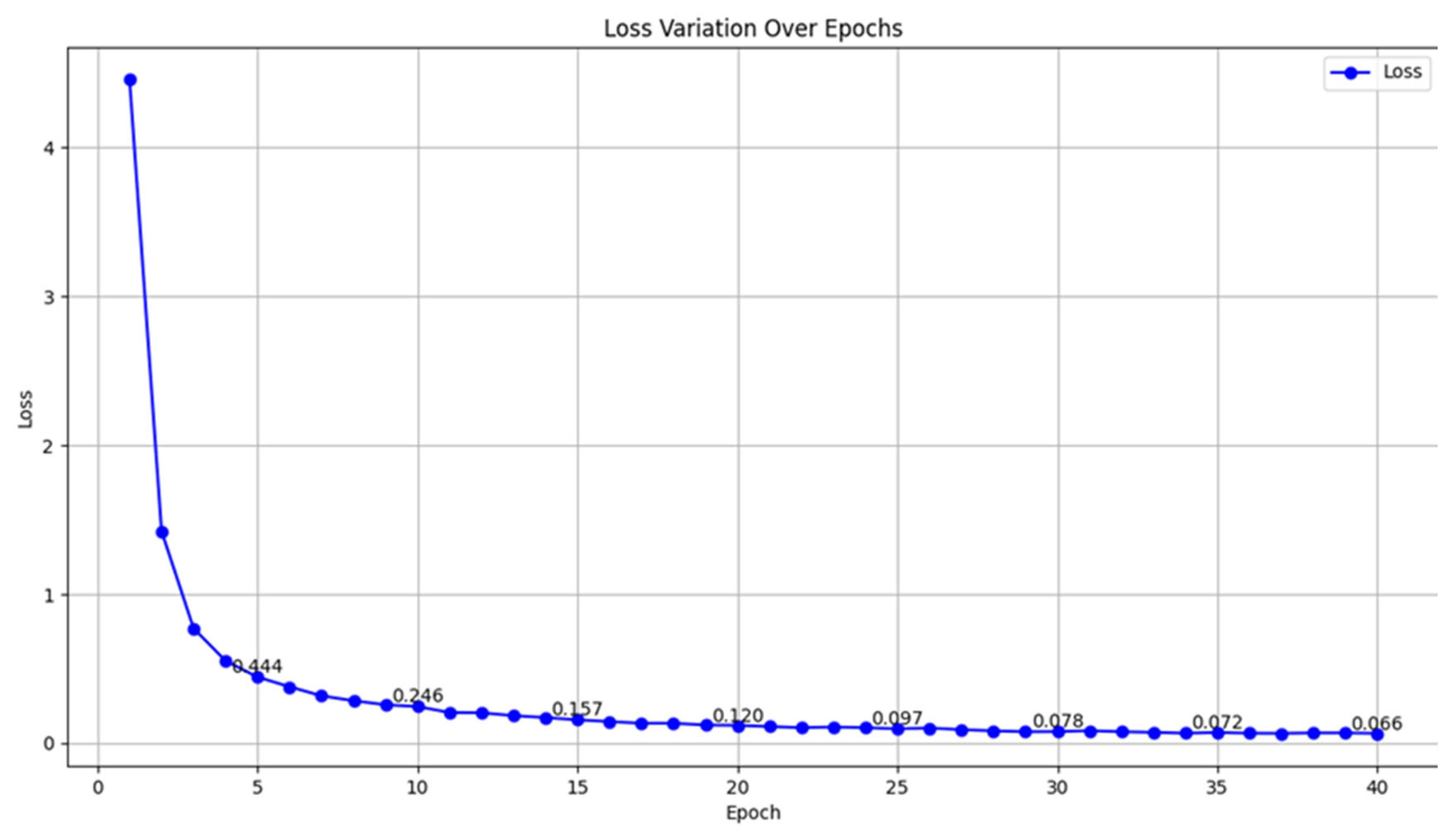

|  | Accuracy | Loss |
| --- | --- | --- |
| 0.1 | 90.22% | 0.066 |
| 0.2 | 90.12% | 0.083 |
| 0.3 | 89.96% | 0.137 |
| 0.4 | 90.03% | 0.096 |
| 0.5 | 89.86% | 0.161 |

| LFN | LFNSB | RAF-DB | AffectNet-7 |
| --- | --- | --- | --- |
| √ | - | 90.22 | 65.45 |
| √ | √ | 91.07 | 66.57 |

| Methods | Accuracy (%) |
| --- | --- |
| Separate-Loss [20] | 86.38 |
| RAN [28] | 86.90 |
| SCN [29] | 87.03 |
| DACL [30] | 87.78 |
| APViT [31] | 91.98 |
| DDAMFN [4] | 91.34 |
| DAN [22] | 89.70 |
| LFNSB (ours) | 91.07 |

| Methods | Accuracy (%) |
| --- | --- |
| PSR [32] | 60.68 |
| Multi-task EfficientNet-B0 [33] | 61.32 |
| DAN [22] | 62.09 |
| CAGE [34] | 62.3 |
| MViT [35] | 61.40 |
| MA-Net [36] | 60.29 |
| DDAMFN [4] | 64.25 |
| LFNSB (ours) | 63.12 |

| Methods | Accuracy (%) |
| --- | --- |
| Separate-Loss [20] | 58.89 |
| FMPN [37] | 61.25 |
| DDA-Loss [38] | 62.34 |
| DLN [39] | 63.7 |
| CAGE [34] | 67.62 |
| DAN [22] | 65.69 |
| DDAMFN [4] | 67.03 |
| LFNSB (ours) | 66.57 |

| Dataset | Fold 1 | Fold 2 | Fold 3 | Fold 4 | Fold 5 | Fold 6 | Fold 7 | Fold 8 | Fold 9 | Fold 10 | Average |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| RAF-DB | 90.48 | 90.22 | 90.61 | 90.35 | 90.48 | 90.12 | 91.07 | 90.65 | 90.32 | 90.16 | 90.34 |
| AffectNet-7 | 65.71 | 66.57 | 66.11 | 64.69 | 65.65 | 65.45 | 65.61 | 65.25 | 65.12 | 66.03 | 65.72 |
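The per-fold accuracies in the table above can be summarized with a mean and a sample standard deviation. The sketch below uses only the Python standard library on the listed fold values; the variable and function names are hypothetical.

```python
import statistics

# Per-fold accuracies (%) copied from the table, folds 1 to 10.
FOLDS = {
    "RAF-DB": [90.48, 90.22, 90.61, 90.35, 90.48, 90.12, 91.07, 90.65, 90.32, 90.16],
    "AffectNet-7": [65.71, 66.57, 66.11, 64.69, 65.65, 65.45, 65.61, 65.25, 65.12, 66.03],
}

def summarize(scores):
    """Return (mean, sample standard deviation, best fold) for one dataset."""
    return statistics.mean(scores), statistics.stdev(scores), max(scores)

for name, scores in FOLDS.items():
    mean, std, best = summarize(scores)
    print(f"{name}: mean={mean:.2f}, std={std:.2f}, best={best:.2f}")
```

With the fold values as listed, the recomputed means are about 90.45 for RAF-DB and 65.62 for AffectNet-7, which differ slightly from the Average column (90.34 and 65.72). This text does not say how that column was computed. The best folds, 91.07 and 66.57, match the headline accuracies reported for LFNSB.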

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).